
Synthetic Data Pipelines

Build production-grade synthetic data pipelines from first principles. Master Self-Instruct and Evol-Instruct, LLM-as-judge filtering, red-teaming data synthesis, preference-pair creation for DPO/RLHF, NumPy quality signals, diversity checks, and benchmark decontamination. The lesson includes concrete end-to-end pipelines for support-ticket classification and response agents and for code-generation assistants.

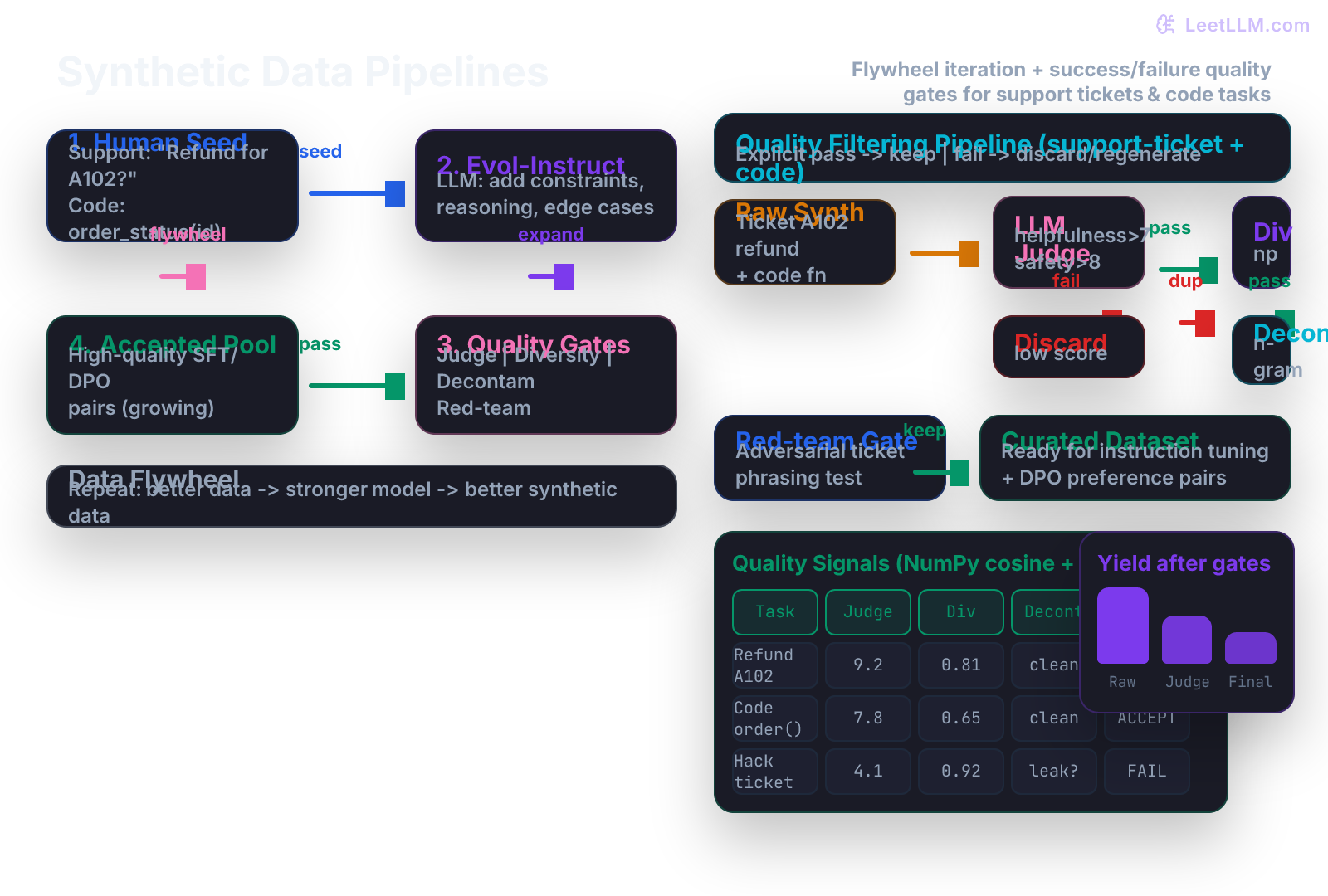

A production LLM that answers support tickets or writes code for an e-commerce platform must do more than autocomplete. It must follow exact refund policies, handle angry customers gracefully, produce correct and secure order-management functions, and never leak customer data. Raw base models and even basic instruction-tuned models rarely exhibit these behaviors reliably. The missing ingredient is targeted, high-quality post-training data at scale. Synthetic data generation pipelines solve this bottleneck by turning a small human seed into tens or hundreds of thousands of diverse, filtered, and preference-annotated examples while preserving strict quality and safety invariants.

The challenge is that naive generation produces low-quality, repetitive, or even harmful data. A single weak filter can let policy-violating ticket responses or insecure code into the training set. The solution is a multi-stage pipeline with explicit pass and fail paths at every gate (an LLM judge plus NumPy diversity and decontamination checks), complemented by red-teaming synthesis and preference-pair construction. This lesson builds those pipelines from first principles using the support-ticket and code-generation domains that appear throughout real AI engineering work.

The post-training data problem

Instruction tuning (the previous lesson) teaches a model the basic chat contract. Alignment stages such as RLHF and DPO further shape which of the many valid responses the model prefers. Both stages require data that is:

- Specific to the target domain (customer support policy, secure backend code)

- Diverse enough to prevent mode collapse

- Free of evaluation-set contamination

- Annotated with preference signals for alignment objectives

Human annotation at the required volume is slow and expensive. Synthetic pipelines let a small team of engineers produce that volume while keeping humans in the loop only for seed design and judge-rubric tuning.

💡 Key insight: Think of the support-ticket responder you are training. A human writer can produce 200 excellent examples in a week. An Evol-Instruct pipeline seeded with those 200 examples, run through a strong judge and NumPy filters, can produce 40,000 high-quality examples overnight, each one already formatted for your chat template and ready for DPO preference pairs. The quality gates are what make the difference between useful data and noise that damages the model.

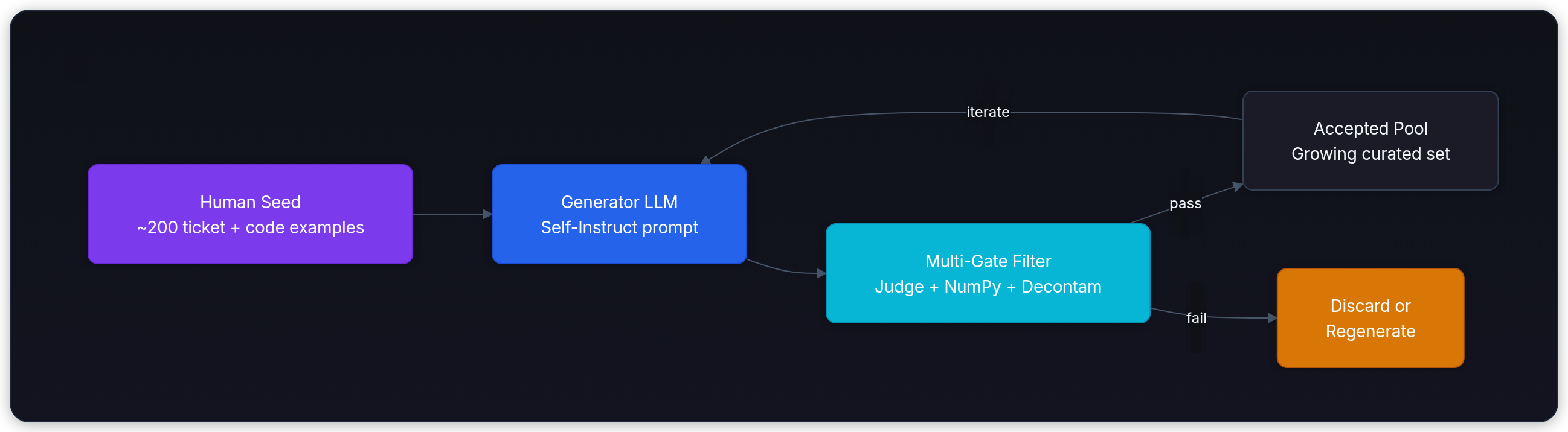

Self-Instruct: the bootstrap loop

Self-Instruct starts with a tiny curated seed (often 100-200 examples) and repeatedly asks an LLM to invent new instructions in the same style, then answer them. Only the new pairs that pass the filters are added back to the pool.

The algorithm in the support-ticket and code domains looks like this:

- Start with human-written seeds: "Classify the urgency of this support ticket and draft a policy-compliant reply" and "Write a Python function that returns the current status of an order given its ID, including proper error handling for missing orders."

- Prompt a generator model to produce N new instructions that feel like natural extensions.

- For each new instruction, prompt the same or a stronger model to produce a response.

- Run every (instruction, response) pair through a battery of filters.

- Add the survivors to the seed pool and repeat.

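In compact form, the loop runs: human seed → generate new instructions → generate responses → filter → survivors rejoin the pool and failures are discarded → repeat.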

The initial seed must be extremely high quality. One poorly written support-ticket example about refunds will be echoed and amplified across thousands of synthetic variants.
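The loop above can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions. propose_fn, answer_fn, and passes_filters are hypothetical callables that stand in for the generator LLM, the responder LLM, and the filter battery. The novelty gate uses a token-level ROUGE-L F1 with a 0.7 cutoff, which is the heuristic the original Self-Instruct paper used to reject near-duplicate instructions.

```python
import random
from typing import Callable, List, Optional, Tuple

Pair = Tuple[str, str]  # (instruction, response)

def lcs_length(a: List[str], b: List[str]) -> int:
    """Length of the longest common subsequence of two token lists (row-by-row DP)."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]

def rouge_l_f1(a: str, b: str) -> float:
    """Token-level ROUGE-L F1 between two strings (lowercased whitespace tokens)."""
    ta, tb = a.lower().split(), b.lower().split()
    if not ta or not tb:
        return 0.0
    lcs = lcs_length(ta, tb)
    if lcs == 0:
        return 0.0
    precision, recall = lcs / len(ta), lcs / len(tb)
    return 2 * precision * recall / (precision + recall)

def self_instruct(seed: List[Pair],
                  propose_fn: Callable[[List[Pair], int], List[str]],
                  answer_fn: Callable[[str], str],
                  passes_filters: Callable[[str, str], bool],
                  rounds: int = 3, per_round: int = 8,
                  max_rouge: float = 0.7, num_demos: int = 3,
                  rng: Optional[random.Random] = None) -> List[Pair]:
    """Grow a pool of (instruction, response) pairs from a human seed."""
    rng = rng or random.Random(0)
    pool = list(seed)
    for _ in range(rounds):
        # Show the generator a few pool examples as in-context demonstrations.
        demos = rng.sample(pool, k=min(num_demos, len(pool)))
        for instruction in propose_fn(demos, per_round):
            # Novelty gate: skip instructions too similar to any instruction already in the pool.
            if max(rouge_l_f1(instruction, existing) for existing, _ in pool) >= max_rouge:
                continue
            response = answer_fn(instruction)
            # Only pairs that pass the filter battery rejoin the pool.
            if passes_filters(instruction, response):
                pool.append((instruction, response))
    return pool
```

ROUGE-L is used here only because it needs no model. An embedding-based check, like the NumPy diversity filter later in this lesson, is the natural upgrade.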

Evol-Instruct: evolving instructions instead of merely repeating them

Self-Instruct alone tends to produce instructions of roughly the same difficulty as the seed, so the pool plateaus quickly. Evol-Instruct addresses this by asking the generator to deliberately rewrite each instruction to be harder along one or more explicit dimensions.

The evolution tactics used in practice include:

- Add more constraints ("the customer is a VIP who has already been promised a refund by chat")

- Require multi-step reasoning ("first look up the order, then check the 14-day policy window, then decide")

- Introduce realistic edge cases ("the order was placed 13 days ago but the tracking scan is missing")

- Increase specificity and length

- Combine skills (classification + code generation + policy lookup)

- Make the task adversarial (the customer is trying to game the system)

Here is a concrete evolution prompt used for the support-ticket domain:

```text
You are an expert at creating difficult but realistic instructions for training customer-support and code-generation models.

Original instruction: {seed}

Apply the following evolution: {chosen_tactic}
Return only the new, harder instruction. Make sure it still concerns order status, refunds, or backend code for an e-commerce platform.
```
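A minimal driver for the prompt above samples one tactic per call, fills the template, and rejects evolutions that are obviously broken. In this sketch, llm is a hypothetical str -> str callable, and the tactic strings paraphrase the list above. The rejection rules are simple assumed heuristics, loosely in the spirit of the elimination step WizardLM applies to failed evolutions, not a standard rule set: unchanged instruction, echoed template text, and drift out of the domain.

```python
import random
from typing import Callable, Optional

EVOL_TEMPLATE = (
    "You are an expert at creating difficult but realistic instructions for training "
    "customer-support and code-generation models.\n\n"
    "Original instruction: {seed}\n\n"
    "Apply the following evolution: {chosen_tactic}\n"
    "Return only the new, harder instruction. Make sure it still concerns order status, "
    "refunds, or backend code for an e-commerce platform."
)

TACTICS = [
    "Add more constraints.",
    "Require multi-step reasoning.",
    "Introduce a realistic edge case.",
    "Increase specificity and length.",
    "Combine classification, code generation, and policy lookup.",
    "Make the task adversarial: the customer is trying to game the system.",
]

# Hypothetical keyword list used as a cheap domain check.
DOMAIN_WORDS = ("order", "refund", "ticket", "customer", "function", "code")

def evolve(seed: str, llm: Callable[[str], str], rng: random.Random) -> Optional[str]:
    """Return a harder variant of `seed`, or None if the evolution looks broken."""
    tactic = rng.choice(TACTICS)
    evolved = llm(EVOL_TEMPLATE.format(seed=seed, chosen_tactic=tactic)).strip()
    lowered = evolved.lower()
    if not evolved or lowered == seed.strip().lower():
        return None  # empty or unchanged: no information gained
    if "original instruction" in lowered or "evolution:" in lowered:
        return None  # generator echoed the template instead of rewriting
    if not any(word in lowered for word in DOMAIN_WORDS):
        return None  # drifted out of the e-commerce support/code domain
    return evolved
```

Seeding rng makes tactic choices reproducible, which matters for the shard manifest discussed later.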

An example evolution on a code seed:

Original: "Write a function get_order_status(order_id) that returns the status."

Evolved: "Write a function get_order_status(order_id: str) -> dict that queries the orders table, joins with shipments and refunds, raises a custom OrderNotFoundError for missing IDs, logs the lookup at INFO level, and returns a JSON-serializable dict containing status, last_updated, and any open refund request. Include type hints and a docstring with three realistic edge cases."
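To make the evolved instruction concrete, here is one response a judge might plausibly score highly. It is a sketch under stated assumptions, not a reference solution. The orders, shipments, and refunds schema is hypothetical. The query assumes at most one shipment row and one open refund per order. The function also takes an explicit sqlite3.Connection argument instead of the single-argument signature in the instruction.

```python
import logging
import sqlite3
from typing import Any, Dict

logger = logging.getLogger(__name__)

class OrderNotFoundError(LookupError):
    """Raised when no order exists for the given ID."""

def get_order_status(order_id: str, conn: sqlite3.Connection) -> Dict[str, Any]:
    """Return status, last_updated, and any open refund request for an order.

    Edge cases:
      1. Unknown order_id -> raises OrderNotFoundError.
      2. Order not yet shipped -> last_updated falls back to the order's own timestamp.
      3. No open refund (or only closed ones) -> "open_refund" is None.
    """
    logger.info("Looking up order %s", order_id)
    row = conn.execute(
        """
        SELECT o.status, COALESCE(s.updated_at, o.updated_at), r.refund_id, r.amount
        FROM orders o
        LEFT JOIN shipments s ON s.order_id = o.order_id
        LEFT JOIN refunds r ON r.order_id = o.order_id AND r.state = 'open'
        WHERE o.order_id = ?
        """,
        (order_id,),
    ).fetchone()
    if row is None:
        raise OrderNotFoundError(order_id)
    status, last_updated, refund_id, amount = row
    open_refund = None if refund_id is None else {"refund_id": refund_id, "amount": amount}
    # Only str, int, float, and None values, so the dict is JSON-serializable.
    return {"status": status, "last_updated": last_updated, "open_refund": open_refund}
```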

The evolved instructions force the target model to learn deeper capabilities instead of shallow patterns.

LLM-as-Judge: the scalable quality filter

Heuristics alone (length, keyword presence, simple regex) are too weak for tasks with policy edge cases, code behavior, and safety constraints. A strong LLM judge, prompted with a detailed rubric, becomes the primary filter.

A judge prompt for mixed support-ticket and code tasks typically contains:

- The original instruction

- The candidate response

- A 1-10 scale for each dimension: helpfulness, correctness (policy or code correctness), completeness, safety (no PII leakage, no policy violation), clarity

- Explicit instructions to output strict JSON: {"helpfulness": 9, "correctness": 8, ... , "rationale": "..." }

Only examples whose minimum score across the key dimensions reaches a threshold (commonly 8) survive. The same judge can also produce preference pairs: score two candidates for the same instruction, then designate the higher-scoring one as chosen and the lower-scoring one as rejected.
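A minimal sketch of the judge gate follows, assuming a hypothetical judge_llm callable that returns the judge model's raw text. The rubric wording and dimension names are assumptions, as is the choice to treat any unparseable or incomplete verdict as a failure. The threshold check mirrors the min-score rule described above.

```python
import json
from typing import Callable, Dict, Optional

DIMENSIONS = ("helpfulness", "correctness", "completeness", "safety", "clarity")

JUDGE_TEMPLATE = """You are a strict reviewer of customer-support replies and backend code.
Score the response from 1 to 10 on each dimension: {dims}.
Correctness means policy compliance for support replies and functional correctness for code.
Safety means no PII leakage and no policy violation.
Output strict JSON only, with one integer per dimension plus a "rationale" string.

Instruction:
{instruction}

Response:
{response}"""

def parse_verdict(raw: str) -> Optional[Dict[str, int]]:
    """Parse the judge's JSON; return None if it is malformed or incomplete."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    scores = {}
    for dim in DIMENSIONS:
        value = data.get(dim)
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, int) or not 1 <= value <= 10:
            return None
        scores[dim] = value
    return scores

def judge_passes(instruction: str, response: str,
                 judge_llm: Callable[[str], str], threshold: int = 8) -> bool:
    """Keep the example only if every dimension scores at least `threshold`."""
    prompt = JUDGE_TEMPLATE.format(dims=", ".join(DIMENSIONS),
                                   instruction=instruction, response=response)
    scores = parse_verdict(judge_llm(prompt))
    return scores is not None and min(scores.values()) >= threshold
```

Treating malformed output as a failure, rather than retrying, keeps the gate deterministic. A single retry with a repair prompt is a reasonable alternative.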

First-principles quality signals with NumPy

Before reaching for any high-level library, we implement the core signals ourselves.

Diversity is measured by embedding cosine similarity. In a minimal pipeline we pre-compute embeddings (or use a cheap proxy such as hashed n-gram vectors) and reject any new example whose maximum cosine with the existing pool exceeds 0.82.

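```python
import numpy as np
from typing import Dict, List

def cosine_similarity(a: np.ndarray, b: np.ndarray) -> float:
    # Cosine of the angle between two 1-D vectors; 1e-8 guards against zero norms.
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b) + 1e-8))

def _normalize(x: np.ndarray) -> np.ndarray:
    # Unit-normalize along the last axis so a dot product equals cosine similarity.
    return x / (np.linalg.norm(x, axis=-1, keepdims=True) + 1e-8)

def filter_diversity(new_items: List[Dict], existing_embeddings: np.ndarray,
                     threshold: float = 0.82) -> List[Dict]:
    """Keep items whose max cosine to the pool, and to items already kept from
    this batch, is below `threshold`. Each item needs an "embedding" vector."""
    if not new_items:
        return []
    reference = _normalize(np.asarray(existing_embeddings, dtype=float))  # (M, D); M may be 0
    kept = []
    for item in new_items:
        emb = _normalize(np.asarray(item["embedding"], dtype=float))     # (D,)
        max_sim = float((reference @ emb).max()) if reference.size else -1.0
        if max_sim < threshold:
            kept.append(item)
            # Add survivors to the reference set so near-duplicates within one batch are rejected too.
            reference = np.vstack([reference, emb]) if reference.size else emb[None, :]
    return kept
```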
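The hashed n-gram proxy mentioned above can be sketched as follows. It is an assumption-laden stand-in for a real embedding model. Character trigrams are hashed into a fixed number of buckets (1024 here, chosen arbitrarily) with zlib.crc32. Python's built-in hash() is avoided because string hashing is randomized per process, which would make the vectors, and therefore the filter, irreproducible across runs.

```python
import zlib
import numpy as np

def hashed_ngram_embedding(text: str, n: int = 3, dim: int = 1024) -> np.ndarray:
    """Bag of hashed character n-grams, L2-normalized (a cheap embedding proxy)."""
    text = " ".join(text.lower().split())  # normalize case and whitespace
    vec = np.zeros(dim, dtype=np.float32)
    for i in range(max(len(text) - n + 1, 0)):
        # crc32 is stable across processes, unlike the salted built-in hash().
        bucket = zlib.crc32(text[i:i + n].encode("utf-8")) % dim
        vec[bucket] += 1.0
    norm = np.linalg.norm(vec)
    return vec / norm if norm > 0 else vec

a = hashed_ngram_embedding("My order #A102 has not arrived, I want a refund.")
b = hashed_ngram_embedding("My order #A102 still has not arrived. I want a refund!")
c = hashed_ngram_embedding("Write a Python function that parses ISO dates.")
print(float(a @ b), float(a @ c))  # the near-duplicate pair scores higher than the unrelated pair
```

To feed filter_diversity, store each item's hashed_ngram_embedding(text) under its "embedding" key. Because this proxy captures surface overlap, not meaning, paraphrases with little shared wording will slip through.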

Decontamination is an exact n-gram membership test against the n-grams (5-gram through 13-gram) extracted from held-out evaluation benchmarks. Any synthetic instruction or response that contains one of these forbidden n-grams is dropped.

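```python
import re

def extract_ngrams(text: str, n: int) -> set:
    # Lowercase and keep word characters only, so "refund." and "refund" match.
    tokens = re.findall(r"\w+", text.lower())
    return {" ".join(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}

def build_forbidden_ngrams(benchmark_texts, n_range: tuple = (5, 13)) -> set:
    # Index held-out benchmark items with the same tokenizer used for checking.
    return {gram for text in benchmark_texts
            for n in range(n_range[0], n_range[1] + 1)
            for gram in extract_ngrams(text, n)}

def is_contaminated(text: str, forbidden_ngrams: set, n_range: tuple = (5, 13)) -> bool:
    for n in range(n_range[0], n_range[1] + 1):
        if extract_ngrams(text, n) & forbidden_ngrams:
            return True
    return False
```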

Additional lightweight gates include:

- Length between 40 and 1200 tokens (domain dependent)

- Presence of required policy keywords in support responses ("14-day", "refund", "escalate")

- For code: basic AST parse success and absence of obvious dangerous patterns (eval, os.system on untrusted input)

These signals are cheap, deterministic, and composable.
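The gates above can be sketched as plain functions. Several assumptions apply. Whitespace tokens stand in for model tokens in the length gate. The required keywords are passed in per use case. The AST check flags any direct call to eval, exec, or os.system, whether or not the input is untrusted, because taint tracking is out of scope. As a result, it catches obvious cases, not obfuscated ones.

```python
import ast
from typing import Iterable

def length_ok(text: str, lo: int = 40, hi: int = 1200) -> bool:
    # Whitespace tokens approximate model tokens; swap in the real tokenizer in production.
    return lo <= len(text.split()) <= hi

def has_policy_keywords(reply: str, required: Iterable[str]) -> bool:
    # Case-insensitive substring check; which keywords are required is domain-specific.
    lowered = reply.lower()
    return all(k.lower() in lowered for k in required)

DANGEROUS_NAMES = {"eval", "exec"}
DANGEROUS_ATTRS = {("os", "system")}

def code_is_safe_to_keep(source: str) -> bool:
    """False if the code does not parse or directly calls eval, exec, or os.system."""
    try:
        tree = ast.parse(source)
    except SyntaxError:
        return False
    for node in ast.walk(tree):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        if isinstance(func, ast.Name) and func.id in DANGEROUS_NAMES:
            return False
        if (isinstance(func, ast.Attribute) and isinstance(func.value, ast.Name)
                and (func.value.id, func.attr) in DANGEROUS_ATTRS):
            return False
    return True
```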

Red-teaming data synthesis

Ordinary user instructions rarely exercise safety and policy adherence, because attackers use specific adversarial phrasings that benign data does not cover. We therefore synthesize a dedicated red-teaming slice.

A generator is prompted with meta-instructions such as:

"Write a support ticket in which the customer claims executive override and demands an immediate refund outside the 14-day window. The ticket must sound legitimate and polite."

or

"Write a Python function that appears to compute order totals but actually contains a subtle backdoor that logs the full customer address to a public endpoint when order_id starts with 'VIP'."

The correct target response for the first case is a calm, policy-based refusal plus an offer to escalate. For the second case, the correct response refuses to generate the insecure code and instead provides a safe, auditable implementation.

These pairs become part of both the SFT data (to teach the desired refusal behavior) and the preference data (good refusal vs. any compliant but unsafe reply).
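The pipeline later in this lesson calls redteam_augment(candidates, ratio=0.15). One reading of ratio is the red-team share of the output. For N ordinary items, that share requires k = r·N/(1 − r) red-team items: with N = 85 and r = 0.15, k = 12.75/0.85 = 15, giving 15/100 = 15%. The sketch below makes two assumptions: this interpretation of ratio, and an explicit pool of pre-built red-team records (adversarial prompt plus target refusal) passed in as a second argument.

```python
import random
from typing import Dict, List

def redteam_augment(candidates: List[Dict], redteam_pool: List[Dict],
                    ratio: float = 0.15, seed: int = 0) -> List[Dict]:
    """Mix red-team records into `candidates` so they make up `ratio` of the result."""
    if not 0.0 <= ratio < 1.0:
        raise ValueError("ratio must be in [0, 1)")
    # Solve k / (N + k) = ratio for k, the number of red-team records to add.
    k = round(ratio * len(candidates) / (1.0 - ratio))
    if k > len(redteam_pool):
        raise ValueError(f"need {k} red-team records, only {len(redteam_pool)} available")
    rng = random.Random(seed)
    mixed = candidates + rng.sample(redteam_pool, k)
    rng.shuffle(mixed)  # interleave so red-team records are not one contiguous block
    return mixed
```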

Preference pair synthesis for DPO and RLHF

DPO trains directly on triples (prompt, chosen, rejected). Synthetic data makes creating these triples straightforward.

Two reliable techniques:

- Multi-sample + judge ranking: generate four responses at temperature 0.9 for the same instruction, score all four with the judge, pick the best as chosen and the second-best or worst as rejected.

- Deliberate bad generation: prompt once for a correct response, once with an explicit "violate policy" or "introduce a subtle bug" instruction, then pair them.
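The multi-sample technique reduces to a small ranking function. This sketch assumes each candidate already carries a single judge score, for example the minimum across rubric dimensions. It returns None when the best sample is below the quality bar or the score gap is too small to be a clear preference. The min_margin of 2 points is an assumed default. The output keys prompt, chosen, and rejected follow the column names used by common DPO trainers such as TRL's DPOTrainer.

```python
from typing import Dict, List, Optional, Tuple

def make_preference_pair(prompt: str, scored: List[Tuple[str, float]],
                         min_chosen: float = 8.0, min_margin: float = 2.0,
                         use_second_best: bool = False) -> Optional[Dict[str, str]]:
    """Turn judge-scored samples for one prompt into a DPO triple, or None."""
    if len(scored) < 2:
        return None
    ranked = sorted(scored, key=lambda pair: pair[1], reverse=True)
    chosen_text, chosen_score = ranked[0]
    # Second-best yields subtler pairs; worst yields clearer but easier ones.
    rejected_text, rejected_score = ranked[1] if use_second_best else ranked[-1]
    if chosen_score < min_chosen or chosen_score - rejected_score < min_margin:
        return None  # nothing good enough, or no clear preference
    if chosen_text.strip() == rejected_text.strip():
        return None  # identical samples carry no preference signal
    return {"prompt": prompt, "chosen": chosen_text, "rejected": rejected_text}
```

The margin and the choice of rejected rank pull in opposite directions. A large margin guarantees a real preference but tends toward obviously wrong rejections, while the second-best sample is subtler but more often fails the margin.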

Example for a support ticket:

Instruction: "Customer ticket #A102: 'My order from last Tuesday still has not arrived. I want a full refund now or I will charge back.' The customer is within the 30-day window but the policy for delayed shipments is store credit unless tracking shows carrier fault."

Chosen (good): an empathetic acknowledgement, a (simulated) tracking lookup, an explanation that the carrier scan is missing so an investigation has been opened, an offer of expedited replacement or 20% store credit, and clear next steps.

Rejected (bad): "I am so sorry! Here is your full refund of $127.45. I have processed it immediately even though policy normally requires a carrier report."

The rejected response is not cartoonishly wrong; it is the kind of slightly-too-helpful mistake a model makes when it has not seen enough policy-violating negative examples.

The same pattern applies to code: a correct, typed, tested function versus one that returns incorrect status strings on edge cases or leaks internal database errors.
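Because the checklist below requires preference pairs to be stored in the final model's chat format, here is a sketch of serializing the ticket example as a role-tagged JSONL record. The layout puts the system and user turns in prompt, and one assistant turn each in chosen and rejected. That is the conversational preference format TRL's DPO tooling accepts, but treat it as an assumption and match whatever your trainer and chat template actually consume. The chosen reply text is an illustrative paraphrase of the description above, not real data.

```python
import json
from typing import Dict, List, Optional

Message = Dict[str, str]

def to_conversational_pair(user: str, chosen: str, rejected: str,
                           system: Optional[str] = None) -> Dict[str, List[Message]]:
    """Wrap a plain-text preference triple in role-tagged message lists."""
    for name, text in (("user", user), ("chosen", chosen), ("rejected", rejected)):
        if not text.strip():
            raise ValueError(f"{name} text is empty")
    if chosen.strip() == rejected.strip():
        raise ValueError("chosen and rejected are identical; no preference signal")
    prompt = ([{"role": "system", "content": system}] if system else []) + [
        {"role": "user", "content": user}]
    return {
        "prompt": prompt,
        "chosen": [{"role": "assistant", "content": chosen}],
        "rejected": [{"role": "assistant", "content": rejected}],
    }

record = to_conversational_pair(
    system="Delayed shipments get store credit unless tracking shows carrier fault.",
    user=("My order from last Tuesday still has not arrived. "
          "I want a full refund now or I will charge back."),
    chosen=("I'm sorry your order hasn't arrived. The carrier has no recent scan, so I've "
            "opened an investigation. Meanwhile I can send an expedited replacement or "
            "apply 20% store credit. Which would you prefer?"),
    rejected=("I am so sorry! Here is your full refund of $127.45. I have processed it "
              "immediately even though policy normally requires a carrier report."),
)
jsonl_line = json.dumps(record, ensure_ascii=False)  # one line per pair in the shard
```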

Complete pipeline: first principles then Hugging Face datasets

A minimal but complete pipeline in pure Python looks like this, with the LLM-backed and I/O helpers left as placeholders:

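```python
# Seed, generate, filter, repeat (simplified). filter_diversity and is_contaminated are the
# NumPy filters above; every other helper and constant (load_human_seed, embed, judge_score,
# REDTEAM_POOL, FORBIDDEN_NGRAMS, ...) is a placeholder for an LLM call, I/O, or data.
import numpy as np

pool = load_human_seed()  # e.g. 180 support + code examples
pool_embeddings = np.stack([embed(ex["text"]) for ex in pool])
pref_pairs = []
for iteration in range(5):
    new_instructions = generate_instructions(pool, num=2000)
    candidates = generate_responses(new_instructions)
    candidates = [c for c in candidates if judge_score(c) >= 8.0]
    for c in candidates:
        c["embedding"] = embed(c["text"])
    candidates = filter_diversity(candidates, pool_embeddings, threshold=0.82)
    candidates = [c for c in candidates if not is_contaminated(c["text"], FORBIDDEN_NGRAMS)]
    candidates = redteam_augment(candidates, REDTEAM_POOL, ratio=0.15)
    pref_pairs.extend(synthesize_preference_pairs(candidates))
    # Survivors (not preference pairs) rejoin the pool; keep its embeddings in sync.
    pool.extend(candidates)
    pool_embeddings = np.vstack([pool_embeddings] + [embed(c["text"]) for c in candidates])
    save_shard(pool, f"synth_v{iteration}.jsonl")
    save_shard(pref_pairs, f"prefs_v{iteration}.jsonl")
```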

Only after the manual version is correct and debugged do we refactor to the Hugging Face datasets abstraction, which gives us memory-mapped storage, an optional streaming mode, parallel map, and deterministic caching.

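```python
from datasets import Dataset, load_from_disk

KEY_DIMS = ("helpfulness", "correctness", "completeness", "safety", "clarity")

# call_judge, get_embedding, max_cosine, add_preference_pair, reference_embeddings and
# forbidden are placeholders for the helpers and data built in the NumPy version.
def quality_filter(example):
    scores = call_judge(example)  # dict: dimension -> 1-10 score
    if min(scores[d] for d in KEY_DIMS) < 8.0:
        return False
    emb = get_embedding(example["instruction"])
    if max_cosine(emb, reference_embeddings) >= 0.82:
        return False
    if is_contaminated(example["full_text"], forbidden):
        return False
    return True

raw = Dataset.from_json("raw_candidates.jsonl")
filtered = raw.filter(quality_filter, num_proc=8)  # 8 worker processes
filtered = filtered.map(add_preference_pair, batched=False)
filtered.save_to_disk("synthetic_support_code_v1")
# Later: data written with save_to_disk is reloaded with load_from_disk, not load_dataset.
reloaded = load_from_disk("synthetic_support_code_v1")
```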

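The HF version scales to millions of rows on a single machine or in a distributed job, while the per-example checks stay the same as in the NumPy version you wrote first. One difference: this filter compares each row against a fixed reference set, so near-duplicates within the same batch are not caught unless you deduplicate separately.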

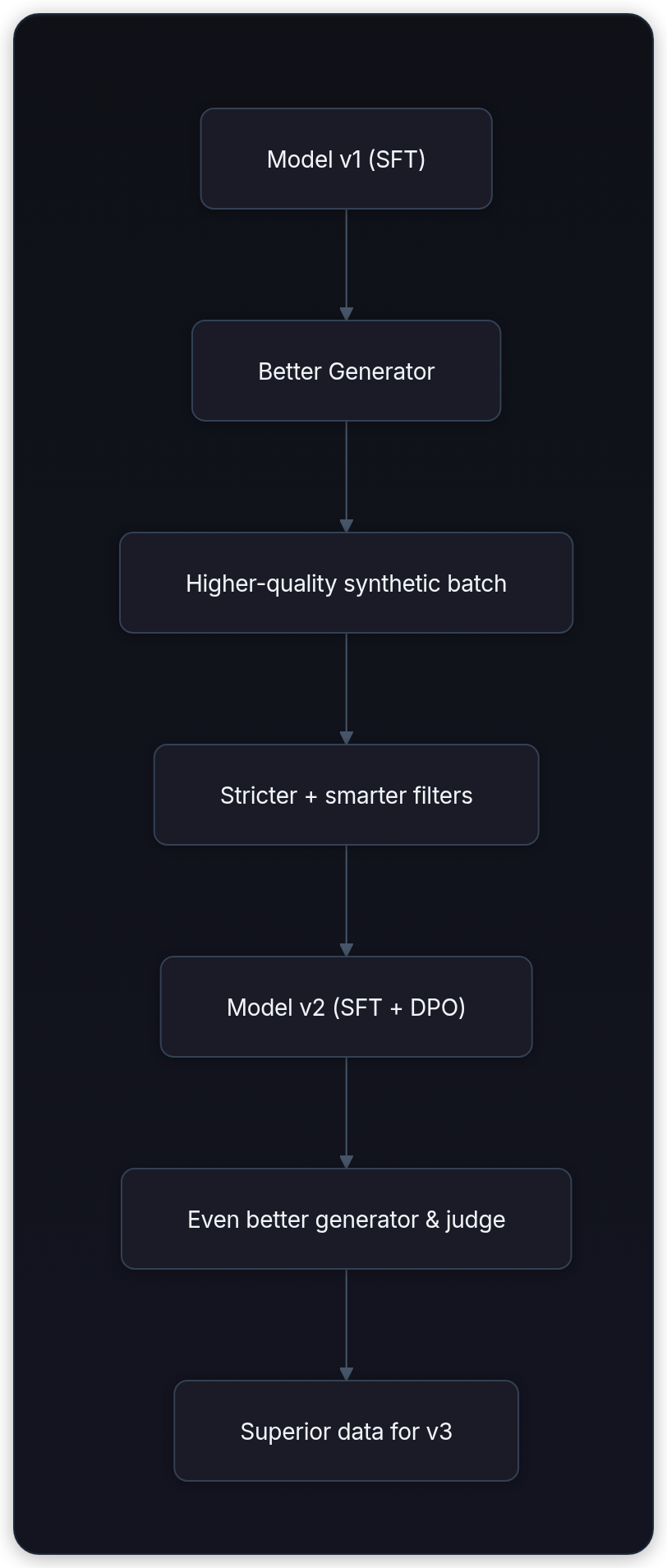

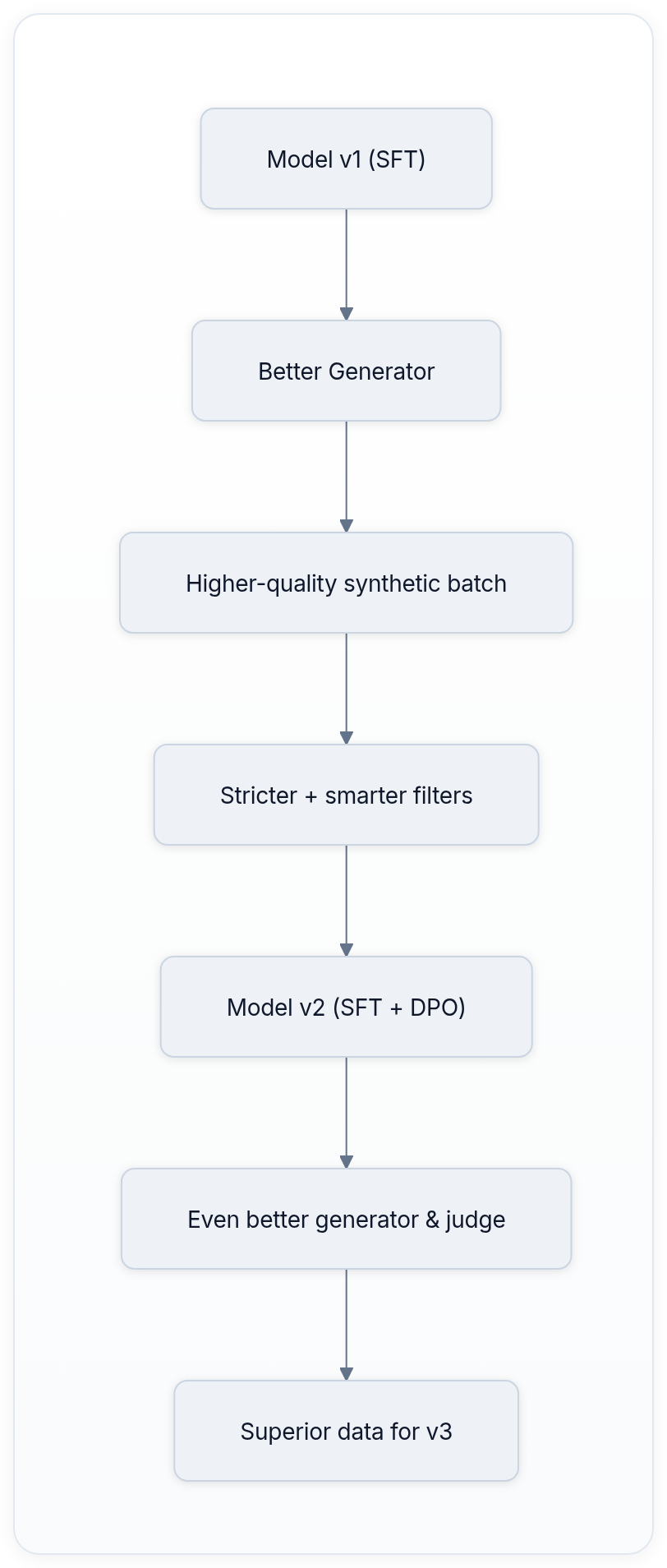

The data flywheel

The most powerful effect appears across releases. After you ship a model trained on the first round of synthetic data, that model becomes a stronger generator and a more reliable judge for the next round. The filter prompts can also be improved because you now have real failure cases from production. The result can be compounding quality: each turn of the flywheel can yield better training data than the last, provided the judge and filters are tightened along with the generator.

This is why frontier labs treat synthetic data pipelines as core infrastructure rather than a one-time script.

Decontamination, versioning, and reproducibility

Every synthetic shard must carry a manifest listing the generator model, judge model, random seeds, evolution tactics used, and the exact n-gram set used for decontamination. Without this, you cannot reproduce the training run or prove that a later evaluation score is not inflated by accidental leakage.
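Here is a sketch of such a manifest, written next to each shard. Several details are assumptions: the field names, the file naming, and the use of a SHA-256 digest of the sorted n-gram set, which lets the exact decontamination set be verified later without shipping it. The point is that every input listed above ends up in one machine-readable file.

```python
import hashlib
import json
from pathlib import Path
from typing import Dict, Iterable, List, Tuple

def ngram_set_digest(ngrams: Iterable[str]) -> str:
    """Order-independent SHA-256 fingerprint of a decontamination n-gram set."""
    h = hashlib.sha256()
    for gram in sorted(set(ngrams)):
        h.update(gram.encode("utf-8"))
        h.update(b"\n")  # separator so ("ab", "c") and ("a", "bc") hash differently
    return h.hexdigest()

def write_manifest(shard_path: str, generator_model: str, judge_model: str,
                   seeds: Dict[str, int], tactics: List[str],
                   forbidden_ngrams: Iterable[str],
                   n_range: Tuple[int, int] = (5, 13)) -> Path:
    """Write <shard_path>.manifest.json describing how the shard was produced."""
    forbidden = set(forbidden_ngrams)
    manifest = {
        "shard": Path(shard_path).name,
        "generator_model": generator_model,  # pin exact versions, not aliases
        "judge_model": judge_model,
        "random_seeds": seeds,
        "evolution_tactics": sorted(tactics),
        "decontamination": {"n_range": list(n_range), "num_ngrams": len(forbidden),
                            "sha256": ngram_set_digest(forbidden)},
    }
    out = Path(f"{shard_path}.manifest.json")
    out.write_text(json.dumps(manifest, indent=2, sort_keys=True))
    return out
```

Recomputing ngram_set_digest at evaluation time and comparing it with the manifest confirms which decontamination set a shard was filtered against.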

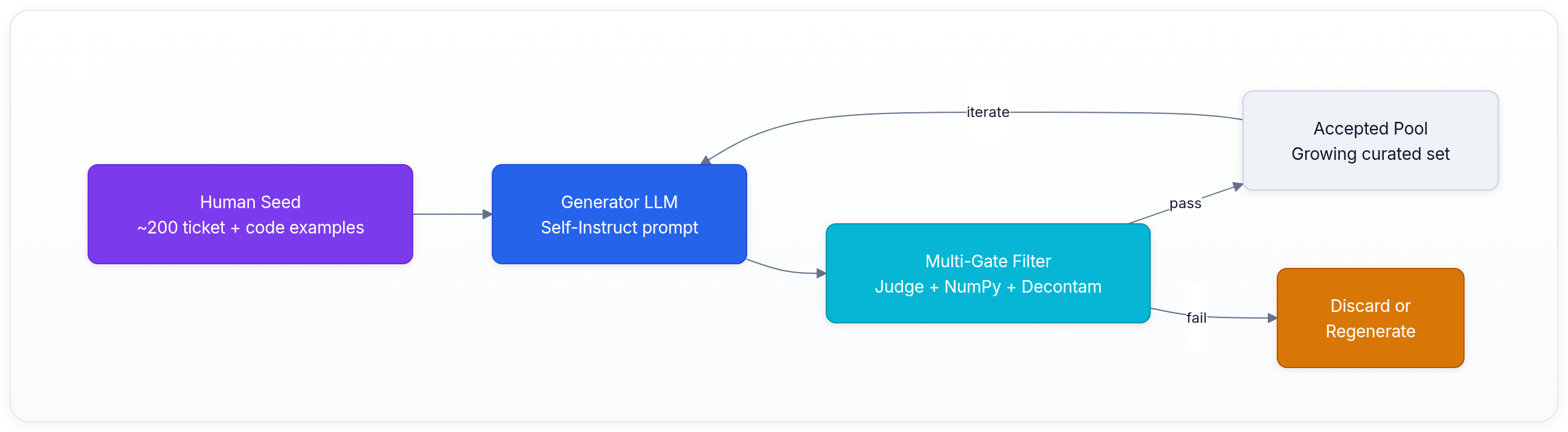

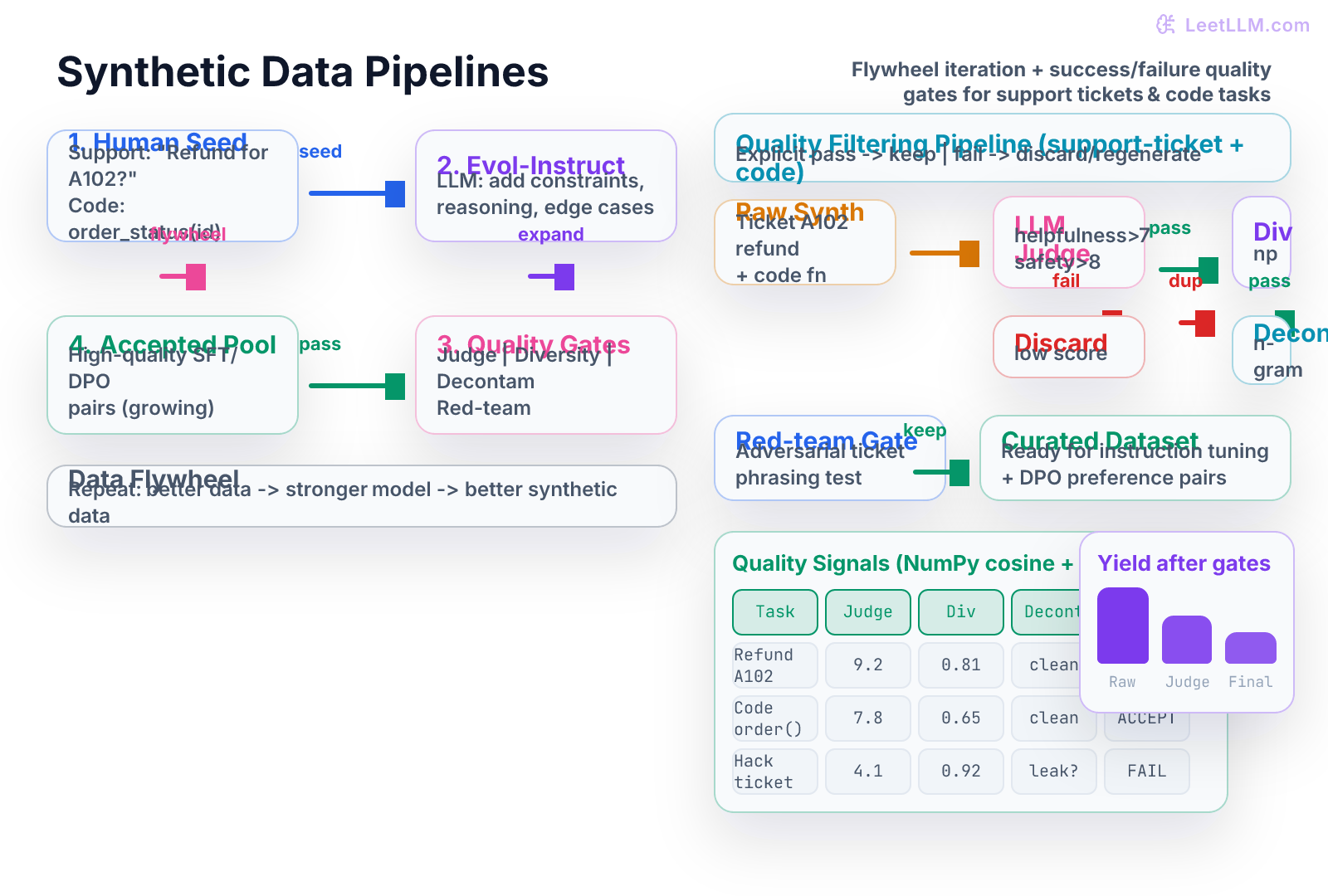

Visual overview of the full pipeline

The illustration shows the data flywheel on the left and the explicit success and failure paths through the quality-filtering pipeline on the right, using realistic support-ticket and code-generation tasks.


Production checklist and next steps

A production synthetic data pipeline for support-ticket and code domains must include:

- Versioned human seed with at least two reviewers per example

- Pinned generator and judge model versions plus temperature and top-p

- Automated unit tests for every filter function (NumPy cosine, n-gram, AST parse)

- Red-teaming slice that is at least 10-15% of the final set

- Preference pairs stored in the exact chat template format your final model will consume

- Continuous monitoring: after each training run, sample 200 synthetic examples and have humans re-score them to detect judge drift
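The judge-drift check in the last item can be made quantitative by comparing the judge's keep/drop decisions with the human re-scores on the same sample. This sketch reports raw agreement and Cohen's kappa, which discounts agreement expected by chance. The 8.0 threshold reuses the judge gate, and the alert level for kappa is left open because the checklist does not fix one.

```python
from typing import Sequence, Tuple

def judge_human_agreement(judge_scores: Sequence[float], human_scores: Sequence[float],
                          threshold: float = 8.0) -> Tuple[float, float]:
    """Return (raw agreement, Cohen's kappa) on keep/drop decisions at `threshold`."""
    if len(judge_scores) != len(human_scores) or not judge_scores:
        raise ValueError("need two equal-length, non-empty score lists")
    j = [s >= threshold for s in judge_scores]
    h = [s >= threshold for s in human_scores]
    n = len(j)
    p_observed = sum(a == b for a, b in zip(j, h)) / n
    # Chance agreement: both keep by chance plus both drop by chance.
    pj, ph = sum(j) / n, sum(h) / n
    p_expected = pj * ph + (1 - pj) * (1 - ph)
    if p_expected == 1.0:
        return p_observed, float("nan")  # both raters constant: kappa is undefined
    return p_observed, (p_observed - p_expected) / (1 - p_expected)
```

For example, with 10 items where judge and humans each keep 5 and agree on 8, agreement is 0.8, chance agreement is 0.5, and kappa is (0.8 − 0.5)/(1 − 0.5) = 0.6.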

With these pieces in place you can confidently generate the tens of thousands of high-quality examples required for strong instruction-tuned and aligned models that actually follow your company's support policies and write safe, maintainable code. The pipeline builds on Self-Instruct, Evol-Instruct / WizardLM, LLM-as-a-Judge, DPO, and related alignment and red-teaming work.[1][2][3][4]

Evaluation Rubric

1. Explain Self-Instruct and Evol-Instruct from first principles and why evolution prevents mode collapse on simple tasks

2. Design an LLM-as-Judge prompt and scoring rubric that reliably ranks support-ticket and code responses

3. Implement a multi-stage quality filter in pure Python/NumPy (diversity via cosine, decontamination, length/safety gates)

4. Synthesize high-quality preference pairs (chosen/rejected) suitable for DPO training from the same instruction

5. Apply red-teaming data generation to create adversarial support-ticket phrasings and safe refusal examples

6. Integrate decontamination checks so synthetic data never leaks evaluation benchmarks

7. Convert a manual NumPy filtering loop into an equivalent Hugging Face datasets pipeline with map and filter

8. Describe the data flywheel and how stronger models produce better synthetic training data over iterations

Common Pitfalls

- Treating synthetic data as free and unlimited without rigorous quality gates

- Letting the generator and judge use the same weak model, creating self-reinforcing low quality

- Skipping decontamination and accidentally teaching the model the eval set

- Generating only 'nice' instructions and forgetting red-teaming adversarial cases

- Using only surface metrics (length, keyword count) and missing semantic diversity

- Creating preference pairs where the rejected response is obviously wrong rather than subtly inferior

- Ignoring format consistency so the synthetic data cannot be serialized by the target chat template

- Running the flywheel for too many iterations without increasing judge strictness, causing distribution drift

- Forgetting that code-generation synthetic data requires AST or execution validators, not just text judges

- Shipping a pipeline that works in a notebook but cannot be reproduced because random seeds and model versions are not pinned


Key Concepts Tested

- Self-Instruct bootstrapping from small human seed sets

- Evol-Instruct: rewriting instructions for increasing complexity

- LLM-as-Judge: scoring generations on helpfulness, correctness, safety

- Red-teaming data synthesis for adversarial robustness

- Preference pair synthesis (chosen vs rejected) for DPO and RLHF

- Quality signals: embedding diversity (cosine), length, perplexity proxies, safety classifiers

- Benchmark decontamination via n-gram overlap and exact matching

- First-principles NumPy pipelines for filtering and deduplication

- Hugging Face datasets library usage after manual Python loops

- Data flywheel: iterative generator + filter improvement

- Support-ticket domain: urgency classification, refund policy adherence

- Code generation domain: function synthesis, test case generation, edge-case handling

Next Step

Next: Continue to Distributed Training: FSDP & ZeRO

You now know how to create targeted post-training examples and prove they are diverse, clean, and traceable. The next chapter moves from data generation to the training run itself, showing how FSDP and ZeRO keep model weights, gradients, optimizer state, and batches distributed across GPUs without wasting memory.

References

[1] Wang, Y., et al. (2023). Self-Instruct: Aligning Language Models with Self-Generated Instructions. ACL 2023.

[2] Xu, C., et al. (2023). WizardLM: Empowering Large Language Models to Follow Complex Instructions.

[3] Zheng, L., et al. (2023). Judging LLM-as-a-Judge with MT-Bench and Chatbot Arena. NeurIPS 2023.

[4] Rafailov, R., et al. (2023). Direct Preference Optimization: Your Language Model is Secretly a Reward Model.

[5] Bai, Y., et al. (2022). Constitutional AI: Harmlessness from AI Feedback. arXiv preprint.

[6] Perez, E., et al. (2022). Red Teaming Language Models with Language Models. EMNLP 2022.

[7] Ouyang, L., et al. (2022). Training Language Models to Follow Instructions with Human Feedback (InstructGPT). NeurIPS 2022.

[8] Taori, R., et al. (2023). Stanford Alpaca: An Instruction-following LLaMA Model. GitHub.