⚙️EasyMLOps & Deployment

Data Structures for AI

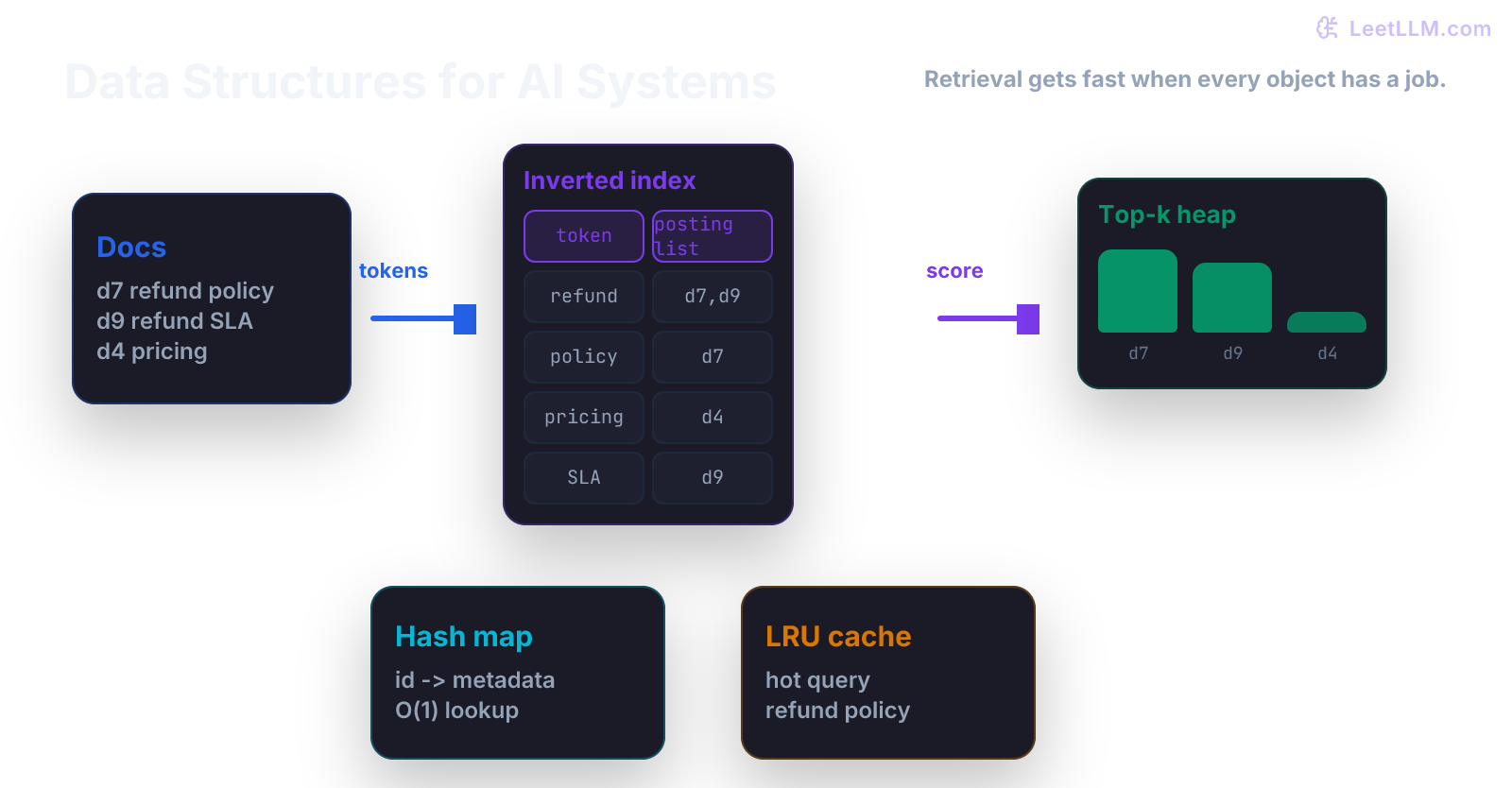

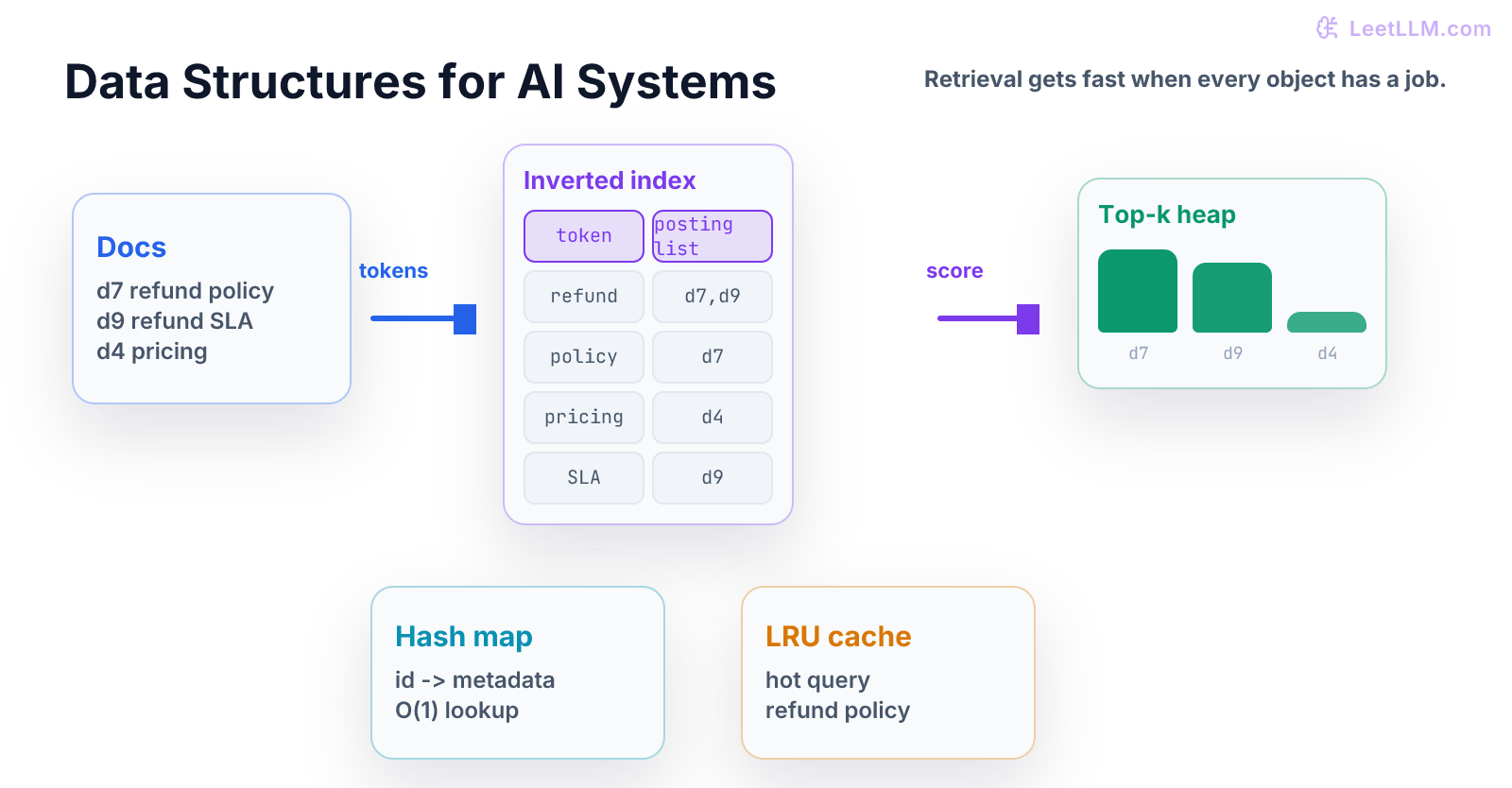

A beginner-first data-structures chapter that traces one support query through an inverted index, heap ranking, set membership, and cache.

35 min readOpenAI, Anthropic, Google +17 key concepts

Data structures decide what an AI system can find quickly.

Models get attention, but storage choices often decide whether a product feels instant or slow. A RAG system with a strong model and a bad index can still miss the right document. An agent with no queue can still lose work. A cache with no invalidation rule can still serve stale answers.

What You Learn

This chapter teaches data structures as job tools. By the end, a beginner should be able to choose a list, dictionary, set, heap, inverted index, or cache because of the operation they need to make fast.[1][2][3]

refund from raw documents into posting lists, then into a top-k heap and cache hit path.Step Map

| Step | Question | What you should be able to do |

|---|---|---|

| 1 | What operation matters? | Say lookup, membership, ranking, ordering, or invalidation. |

| 2 | What structure fits? | Pick the smallest structure that makes that operation clear. |

| 3 | What does one example do? | Trace one query through the structure by hand. |

| 4 | What can fail? | Catch duplicates, empty input, stale cache, and wrong ranking. |

| 5 | What ships? | Add tests for the structure, not only the model. |

Analogy: a data structure is a labeled shelf, a waiting line, or a scoreboard. Pick the physical shape that matches the job.

Tiny Story

Imagine three support documents:

| doc id | text |

|---|---|

| 0 | refund policy details |

| 1 | account password reset |

| 2 | refund status email |

A user asks:

text1refund email

You could scan every document. With three documents, that is fine. With three million documents, it isn't fine.

An inverted index lets you jump from a word to the documents that contain it.

Vocabulary

- list: ordered sequence. Good for small scans and stable order.

- dictionary: key-value table. Good for exact lookup.

- set: unique items. Good for membership checks and intersections.

- queue: first-in, first-out line. Good for work that should happen in order.

- heap: structure that keeps best or smallest items easy to pop.

- inverted index: mapping from term to document IDs.

- cache: saved result for repeated work.

- invalidation: rule for when cached data must be removed or refreshed.

Each structure answers a different speed question.

Worked Example: Build An Index By Hand

Split each document into terms:

| term | document IDs |

|---|---|

refund | {0, 2} |

policy | {0} |

details | {0} |

account | {1} |

password | {1} |

reset | {1} |

status | {2} |

email | {2} |

Now trace the query refund email:

| query term | candidate IDs |

|---|---|

refund | {0, 2} |

email | {2} |

Document 2 appears twice across query terms. Document 0 appears once. So document 2 should rank first.

That is the whole mental model behind a tiny lexical retriever.

Code Lab

Put this in data_structures_demo.py:

python1from collections import defaultdict 2import heapq 3 4docs = [ 5 "refund policy details", 6 "account password reset", 7 "refund status email", 8] 9 10index: dict[str, set[int]] = defaultdict(set) 11for doc_id, text in enumerate(docs): 12 for term in text.split(): 13 index[term].add(doc_id) 14 15def search(query: str, k: int = 2) -> list[int]: 16 scores: dict[int, int] = defaultdict(int) 17 for term in query.split(): 18 for doc_id in index.get(term, set()): 19 scores[doc_id] += 1 20 21 ranked = heapq.nlargest(k, [(score, doc_id) for doc_id, score in scores.items()]) 22 return [doc_id for score, doc_id in ranked] 23 24print("refund docs", sorted(index["refund"])) 25print("search", search("refund email"))

Expected output:

text1refund docs [0, 2] 2search [2, 0]

Read the code as data movement:

| Data movement | Structure |

|---|---|

| term to document IDs | dictionary of sets |

| count query matches | dictionary of integers |

| keep best documents | heap |

The model isn't involved yet. You are building the retrieval skeleton.

Second Worked Example: Membership

A set answers one question quickly:

Have I seen this item before?

Use that to remove duplicate document IDs:

python1seen: set[int] = set() 2for doc_id in [2, 0, 2, 1]: 3 seen.add(doc_id) 4 5print(sorted(seen)) 6assert seen == {0, 1, 2}

Expected output:

text1[0, 1, 2]

Sets aren't "better lists." They are for membership and uniqueness.

Third Worked Example: Cache

If users repeat queries, a cache can save work.

Cache Lookup

The smallest cache is a dictionary:

python1cache: dict[str, list[int]] = {} 2 3query = "refund email" 4if query not in cache: 5 cache[query] = search(query) 6 7print("cached", cache[query]) 8assert cache["refund email"] == [2, 0]

Expected output:

text1cached [2, 0]

Invalidation

This is useful, but incomplete. A real cache needs an invalidation rule.

If document 2 changes, the cached answer for refund email might be stale.

Common Trap

The common trap is using the structure that feels familiar instead of the one the operation needs.

| Need | Weak first instinct | Better structure |

|---|---|---|

| exact document lookup | scan a list | dictionary |

| avoid duplicate IDs | append to list | set |

| best k results | sort every item | heap |

| repeated query result | recompute every time | cache |

| text term lookup | scan every doc | inverted index |

Lists aren't bad. They are great when the data is small or order matters. The mistake is pretending every operation is a list scan forever.

Mini Test

Add tests like these:

python1def test_refund_lookup_has_two_docs(): 2 assert index["refund"] == {0, 2} 3 4def test_empty_query_returns_empty_list(): 5 assert search("") == [] 6 7def test_refund_email_ranks_doc_2_first(): 8 assert search("refund email")[0] == 2

These tests protect the structure:

- index terms point to expected document IDs

- empty input is predictable

- ranking follows match counts

Practice

- Add a fourth document:

email support refund. - Predict the posting list for

refund. - Predict the ranking for

refund email. - Add a query with a word that never appears.

- Make sure it returns an empty list instead of crashing.

- Write one sentence that starts: "A dictionary fits here because..."

Production Check

Before trusting a data structure in an AI system, write down:

- operation that must be fast

- expected data size

- key type

- value type

- duplicate behavior

- empty-input behavior

- update behavior

- cache invalidation rule, if any

- test that proves the structure still means what it says

For the tiny retriever:

Operation is term lookup. Key is a term string. Value is a set of document IDs. Empty queries return

[]. Updating documents requires rebuilding or updating affected posting lists and clearing stale cache entries.

That note isn't academic. It tells another engineer where latency and stale data can enter the product.

Next, continue to Probability for Machine Learning. Data structures taught you how to retrieve candidates. Probability teaches you how to reason when signals are uncertain.

Evaluation Rubric

- 1Chooses lists, dictionaries, sets, heaps, indexes, or caches based on the operation that must be fast

- 2Builds a tiny inverted index and top-k searcher with visible output and tests

- 3Tests empty input, duplicate IDs, ranking order, update behavior, and cache staleness risks

Common Pitfalls

- A list scan is fine for ten examples and useless for ten million. Picking the wrong structure turns a model problem into a latency problem.

- Using a list scan for every operation hides latency until data grows.

- A cache without an invalidation rule can make an answer fast and wrong.

Follow-up Questions to Expect

Key Concepts Tested

hash mapsqueuesheapstriesgraphsindexescaches

References

Designing Data-Intensive Applications.

Kleppmann, M. · 2017

Efficient and Robust Approximate Nearest Neighbor Using Hierarchical Navigable Small World Graphs.

Malkov, Y. A., & Yashunin, D. A. · 2018 · IEEE Transactions on Pattern Analysis and Machine Intelligence

Billion-scale similarity search with GPUs.

Johnson, J., Douze, M., & Jégou, H. · 2017 · arXiv preprint