
Clustering and PCA

Hands-on chapter for Clustering and PCA, with first-principles mechanics, runnable code, failure modes, and production checks.


Clustering and PCA help you inspect structure in data without labels. They are beginner-friendly ways to see what representations contain. This chapter starts from zero and builds toward one concrete job skill: run k-means and PCA on embeddings, then inspect whether clusters line up with labels or artifacts. [1][2][3]

Step map

| Stage | Beginner action | Checkpoint |
|---|---|---|
| Concept | Represent each item as a vector before grouping. | Reader can say input, operation, and output without naming a library. |
| Build | Cluster toy points and project them into two dimensions. | Code prints or asserts one result the reader predicted first. |
| Failure | Bad scaling changes clusters, making preprocessing visible. | The common beginner mistake has a visible symptom and guard. |
| Ship | Saved plot, cluster summary, and limitations explain result. | Artifact is small enough for another engineer to rerun. |

Start here

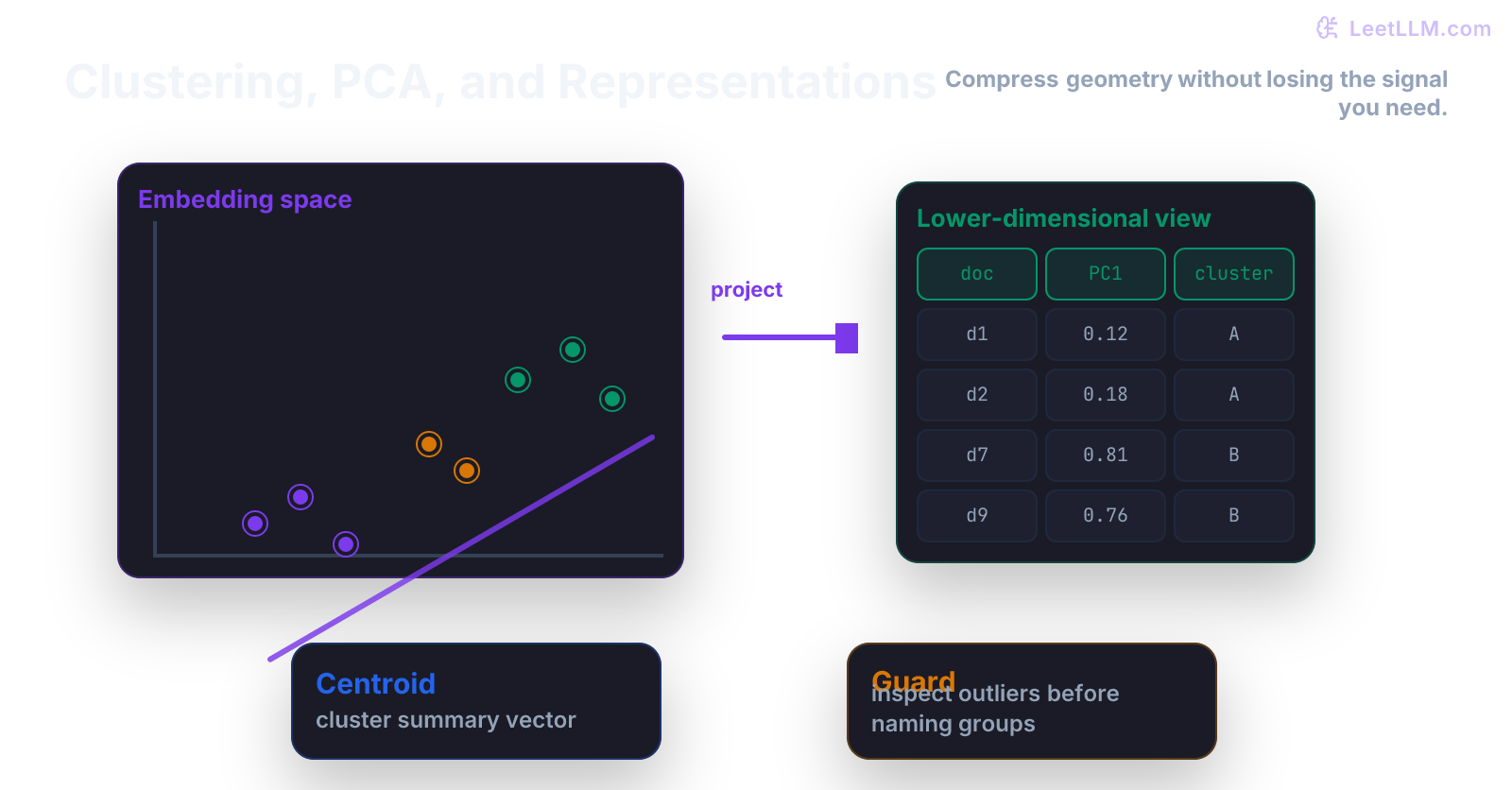

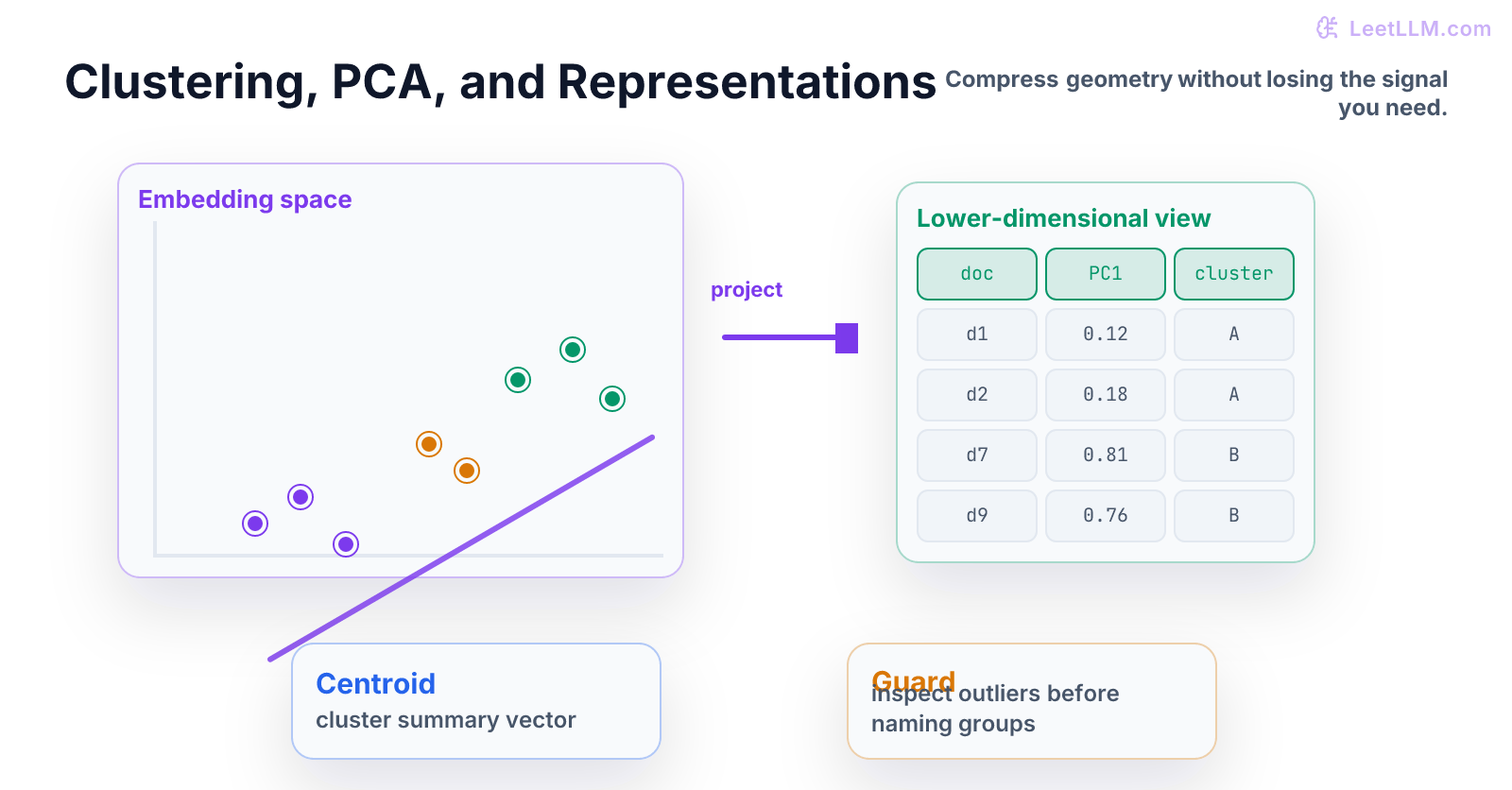

Start with points in space. Clustering asks which points belong together. PCA asks which directions explain most variation. Representation learning is the broader habit of turning raw objects into useful vectors.

Read this chapter once for the idea, then run the demo and change one value. For Clustering and PCA, progress means you can name the input, explain the operation, and say what result would prove the idea worked.

By the end, you should be able to explain Clustering and PCA with a worked example, not a library name. Keep one runnable file and one short note with the result you expected before you ran it.

Why this chapter matters

Clustering and PCA matter because later LLM work assumes this habit already exists. You will use them when you inspect data, debug model behavior, compare evaluations, or explain why a result should be trusted.

The job skill here is to run k-means and PCA on embeddings, then inspect whether clusters line up with labels or artifacts. Treat the snippet as lab equipment: run it, change one input, and write down what changed before you move on.

Beginner mental model

Imagine 100 examples, each represented by eight numbers. PCA compresses each example to two numbers so you can plot or inspect structure while keeping as much variation as possible.
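To make "keeping as much variation as possible" measurable, the sketch below computes the share of total variance that the first two directions retain. It assumes the same toy setup as the mental model (100 random examples with eight features); the names `explained_ratio` and `kept` are illustrative, not part of any library.

```python
import numpy as np

# Toy data matching the mental model: 100 examples, 8 numbers each.
rng = np.random.default_rng(0)
X = rng.normal(size=(100, 8))

# PCA works on variation around the mean, so center each feature first.
X_centered = X - X.mean(axis=0)

# Singular values come back sorted from largest to smallest.
_, s, vt = np.linalg.svd(X_centered, full_matrices=False)

# Variance along each principal direction is s**2 / (n - 1).
explained_variance = s**2 / (X.shape[0] - 1)
explained_ratio = explained_variance / explained_variance.sum()

# Share of the total variance that survives compression to two numbers.
kept = explained_ratio[:2].sum()
print(f"share of variance kept by 2 components: {kept:.2f}")
```

Because this toy data is pure noise with no dominant direction, two components keep only a modest share. On data with real structure the share is usually higher, which is worth checking before trusting a 2D plot.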

A useful beginner checklist for Clustering and PCA:

- What object enters the system?

- What transformation happens to it?

- What evidence says the result is correct?

Keep the answer concrete. If you can't point to the value, shape, row, metric, or test that proves the point, the Clustering and PCA concept is still fuzzy.

Vocabulary in plain English

- cluster: group of nearby examples according to a distance rule.

- centroid: representative center of a cluster.

- PCA: principal component analysis, a linear method that finds directions of maximum variance.

- SVD: singular value decomposition, a matrix factorization that makes PCA easy to compute.

- embedding: vector representation of an item.

- dimensionality reduction: compressing vectors to fewer dimensions while trying to preserve useful structure.

Use these definitions while reading the demo. Each term should map to a variable, an assertion, or a decision you could explain in review.
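The terms cluster and centroid can be mapped to variables with a from-scratch k-means. This is a minimal sketch of Lloyd's algorithm under simple assumptions (random initialization from data points, squared Euclidean distance, two well-separated toy blobs); the helper names `assign` and `kmeans` are made up for this example.

```python
import numpy as np

def assign(X, centroids):
    """Label each point with the index of its nearest centroid."""
    # Squared Euclidean distances, shape (n_points, k).
    dists = ((X[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
    return dists.argmin(axis=1)

def kmeans(X, k, n_iters=50, seed=0):
    """Lloyd's algorithm: alternate assignment and centroid updates."""
    rng = np.random.default_rng(seed)
    # Start from k distinct data points.
    centroids = X[rng.choice(len(X), size=k, replace=False)]
    for _ in range(n_iters):
        labels = assign(X, centroids)
        # Move each centroid to the mean of its points; keep it if the cluster is empty.
        new_centroids = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
            for j in range(k)
        ])
        if np.allclose(new_centroids, centroids):
            break  # centroids stopped moving
        centroids = new_centroids
    return assign(X, centroids), centroids

# Two toy blobs of 50 points each, centered near (0, 0) and (10, 10).
rng = np.random.default_rng(0)
blob_a = rng.normal(loc=0.0, scale=1.0, size=(50, 2))
blob_b = rng.normal(loc=10.0, scale=1.0, size=(50, 2))
X = np.vstack([blob_a, blob_b])

labels, centroids = kmeans(X, k=2)
print("cluster sizes:", np.bincount(labels, minlength=2))
print("centroids:\n", centroids.round(2))
```

Here `labels` holds the cluster of each example and `centroids` holds one representative center per cluster. Which blob receives label 0 depends on the initialization, so compare groupings rather than label numbers.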

Build it

Start with the smallest version that can run from a terminal. The goal for this Clustering and PCA demo is visibility: one file, one output, and no hidden notebook state.

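```python
import numpy as np

# 100 examples with 8 features each; the seed keeps the run reproducible.
X = np.random.default_rng(0).normal(size=(100, 8))
# Center each feature so PCA measures variation around the mean.
X_centered = X - X.mean(axis=0)
# Rows of vt are directions of variation, ordered from largest to smallest.
_, _, vt = np.linalg.svd(X_centered, full_matrices=False)
# Keep the top two directions and project every example onto them.
projected = X_centered @ vt[:2].T
assert projected.shape == (100, 2)
```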

Read the code in this order:

- `X` is 100 examples with 8 features each.

- `X_centered` subtracts feature means because PCA looks at variation around the center.

- `np.linalg.svd` finds ordered directions of variation.

- `vt[:2].T` keeps two directions, so `projected` has shape `(100, 2)`.

After it runs, make three small edits. Add a normal-case test, add an edge-case test, then log the intermediate value a beginner would most likely misunderstand. That turns Clustering and PCA from a reading exercise into an engineering exercise.
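One possible shape for those three edits is sketched below. It wraps the demo in a hypothetical `pca_project` helper (the name and its error messages are assumptions for illustration), adds a normal-case test and an edge-case test as plain functions, and prints the feature means as the logged intermediate value.

```python
import numpy as np

def pca_project(X, n_components=2):
    """Project rows of X onto the top principal directions; also return the feature means."""
    X = np.asarray(X, dtype=float)
    if X.ndim != 2 or X.shape[0] < 2:
        raise ValueError("need a 2-D array with at least two rows")
    if n_components > min(X.shape):
        raise ValueError("n_components exceeds the available directions")
    mean = X.mean(axis=0)
    X_centered = X - mean
    _, _, vt = np.linalg.svd(X_centered, full_matrices=False)
    return X_centered @ vt[:n_components].T, mean

def test_normal_case():
    X = np.random.default_rng(0).normal(size=(100, 8))
    projected, mean = pca_project(X)
    assert projected.shape == (100, 2)
    # Centered input gives centered output.
    assert np.allclose(projected.mean(axis=0), 0.0)
    # The first component carries at least as much variance as the second.
    variances = projected.var(axis=0)
    assert variances[0] >= variances[1]
    # Logged intermediate value: one mean per feature, not one mean overall.
    print("feature means shape:", mean.shape)

def test_edge_case_single_row():
    # A single example has no variation, so the helper should refuse it.
    try:
        pca_project(np.ones((1, 8)))
    except ValueError:
        return
    raise AssertionError("expected ValueError for a single-row input")

test_normal_case()
test_edge_case_single_row()
print("all tests passed")
```

The functions follow the `test_` naming convention, so a test runner such as pytest could collect them from a test file, though the sketch also runs as a plain script.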

For Clustering and PCA, a strong submission includes a runnable command, one test file, and notes for any assumptions. If data, randomness, training, or evaluation appears, save the split rule, seed, config, and metric definition.

Beginner failure case

A beginner may plot two PCA dimensions and treat the picture as truth, even when scaling or outliers shaped the view.

For Clustering and PCA, make the failure visible before adding the fix. Write the symptom in plain English, then add the smallest guard that would catch it next time.

Good guards for Clustering and PCA are concrete: assertions, fixture rows, duplicate checks, seed control, metric intervals, or release checks. Pick the guard that makes the hidden assumption executable.
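As an illustration of making a hidden assumption executable, the sketch below first shows the scaling symptom and then adds a guard. The threshold `MAX_SPREAD` and the helper names are hypothetical choices for this example, and standardization is one common fix, not the only one.

```python
import numpy as np

def first_direction(X):
    """Return the first principal direction (a unit vector) of X."""
    X_centered = X - X.mean(axis=0)
    _, _, vt = np.linalg.svd(X_centered, full_matrices=False)
    return vt[0]

def scale_spread(X):
    """Ratio of the largest to the smallest per-feature standard deviation."""
    std = X.std(axis=0)
    return std.max() / std.min()

def standardize(X):
    """Rescale each feature to zero mean and unit variance (assumes no constant feature)."""
    return (X - X.mean(axis=0)) / X.std(axis=0)

rng = np.random.default_rng(0)
X = rng.normal(size=(100, 8))
X_bad = X.copy()
X_bad[:, 3] *= 100.0  # one feature recorded in much larger units

# Symptom: the first direction is almost entirely feature 3.
loading = abs(first_direction(X_bad)[3])
print(f"weight of feature 3 in the first direction: {loading:.3f}")

# Guard: flag inputs whose feature scales differ wildly, then standardize.
MAX_SPREAD = 10.0  # hypothetical threshold; tune it for your data
needs_scaling = scale_spread(X_bad) > MAX_SPREAD
X_fixed = standardize(X_bad) if needs_scaling else X_bad
assert scale_spread(X_fixed) <= MAX_SPREAD
print("spread after guard:", round(float(scale_spread(X_fixed)), 3))
```

The symptom in plain English: one feature with large units decides the whole picture. The guard turns that into a check that fails loudly instead of a plot that quietly misleads.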

Practice ladder

- Run the snippet exactly as written and save the output.

- Change one input value and predict the output before running it again.

- Add one assertion that would catch a beginner mistake.

- Scale one feature by 100 before PCA, observe the projection change, then explain why feature scaling matters.

- Write a two-line README: one command to run the demo, one command to run the test.

Keep this ladder small. Clustering and PCA should feel runnable before it feels impressive. The capstones later reuse the same habit at product scale.

Production check

Quantify cluster stability, inspect examples per cluster, and compare against metadata slices before naming a cluster.
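One way to quantify cluster stability is to rerun k-means with different seeds and measure how often pairs of points are grouped the same way. The sketch below uses the Rand index for that comparison; the toy blobs, the compact `kmeans_labels` helper, and the choice of five seeds are assumptions for illustration.

```python
import numpy as np

def kmeans_labels(X, k, seed, n_iters=50):
    """Compact Lloyd's k-means with random initialization; returns labels only."""
    rng = np.random.default_rng(seed)
    centroids = X[rng.choice(len(X), size=k, replace=False)]
    for _ in range(n_iters):
        dists = ((X[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
        labels = dists.argmin(axis=1)
        centroids = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
            for j in range(k)
        ])
    # Final assignment against the final centroids.
    dists = ((X[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
    return dists.argmin(axis=1)

def rand_index(a, b):
    """Fraction of point pairs on which two labelings agree (same vs. different cluster)."""
    same_a = a[:, None] == a[None, :]
    same_b = b[:, None] == b[None, :]
    upper = np.triu_indices(len(a), k=1)  # each unordered pair once
    return float((same_a[upper] == same_b[upper]).mean())

rng = np.random.default_rng(0)
X = np.vstack([
    rng.normal(0.0, 1.0, size=(50, 2)),
    rng.normal(10.0, 1.0, size=(50, 2)),
])

# Rerun with several seeds and compare every run against the first.
runs = [kmeans_labels(X, k=2, seed=s) for s in range(5)]
scores = [rand_index(runs[0], r) for r in runs[1:]]
print("Rand index vs. run 0:", np.round(scores, 3))
```

The Rand index ignores label numbering, so two runs that find the same groups with swapped ids still score 1.0. Scores well below 1.0 across seeds suggest the clusters are not stable enough to name.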

A production check for Clustering and PCA is proof another engineer can trust the result. At foundation level that means a reproducible command and tests. At capstone level it also means a design note, eval evidence, cost or latency notes, and rollback criteria.

Before moving on, answer four Clustering and PCA questions: What input does this accept? What output or metric proves it worked? What failure would fool you? What test catches that failure?
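A failure that can fool you is a cluster that tracks an artifact instead of the label you care about. The sketch below cross-tabulates clusters against two metadata fields on toy data. The `label` and `source` fields, the way they shift the features, and the helper names are all invented for this example.

```python
import numpy as np

def kmeans_labels(X, k, seed=0, n_iters=50):
    """Compact Lloyd's k-means with random initialization; returns labels only."""
    rng = np.random.default_rng(seed)
    centroids = X[rng.choice(len(X), size=k, replace=False)]
    for _ in range(n_iters):
        dists = ((X[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
        labels = dists.argmin(axis=1)
        centroids = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
            for j in range(k)
        ])
    dists = ((X[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
    return dists.argmin(axis=1)

def contingency(clusters, field, k=2, n_values=2):
    """Count items for every (cluster, field value) pair."""
    table = np.zeros((k, n_values), dtype=int)
    np.add.at(table, (clusters, field), 1)
    return table

def purity(table):
    """Share of items that match the majority field value of their cluster."""
    return table.max(axis=1).sum() / table.sum()

rng = np.random.default_rng(0)
n = 200
label = rng.integers(0, 2, size=n)   # hypothetical task label
source = rng.integers(0, 2, size=n)  # hypothetical data source (the artifact)

# Toy "embeddings": the source shifts feature 0 strongly, the label shifts feature 1 weakly.
X = rng.normal(size=(n, 8))
X[:, 0] += 10.0 * source
X[:, 1] += 0.5 * label

clusters = kmeans_labels(X, k=2)
by_label = contingency(clusters, label)
by_source = contingency(clusters, source)
print("clusters x label:\n", by_label, "purity:", round(float(purity(by_label)), 2))
print("clusters x source:\n", by_source, "purity:", round(float(purity(by_source)), 2))
```

In this constructed case the clusters follow `source`, not `label`, and the contingency tables make that visible before anyone names a cluster after the label.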

What to ship

Ship a small Clustering and PCA folder with code, tests, and notes. Make it boring to run: install dependencies, run tests, run the demo. That boring path is what makes the artifact useful in a portfolio.

Clustering and PCA feed later LLM engineering work directly. Retrieval, fine-tuning, agents, evals, and serving all depend on small foundations like this being clear before systems get large.

Evaluation Rubric

1. Explains the core mental model behind Clustering and PCA without hiding behind library calls

2. Implements the central idea in runnable Python, NumPy, PyTorch, or scikit-learn code

3. Identifies realistic failure modes and adds tests or production checks that catch them

Common Pitfalls

- A nice 2D plot can be misleading. PCA preserves high-variance directions, not necessarily useful semantic directions.

- Skipping the from-scratch version and reaching for a library before the mechanics are clear.

- Treating a clean demo as proof that the implementation will survive bad inputs, drift, or scale.
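The first pitfall can be reproduced on purpose. In this constructed example, one feature is loud but unrelated to the label and another is quiet but carries the label, so the first principal component misses the distinction that matters. The data and the `separation` score are assumptions made for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 200
label = np.repeat([0, 1], n // 2)

X = np.column_stack([
    rng.normal(scale=10.0, size=n),                         # high variance, no label signal
    rng.normal(loc=2.0 * label - 1.0, scale=0.2, size=n),   # low variance, carries the label
])

X_centered = X - X.mean(axis=0)
_, _, vt = np.linalg.svd(X_centered, full_matrices=False)
scores = X_centered @ vt.T  # column 0 is the first component, column 1 the second

def separation(values, label):
    """Gap between class means, in units of the pooled within-class standard deviation."""
    a, b = values[label == 0], values[label == 1]
    pooled_std = np.sqrt((a.var() + b.var()) / 2)
    return abs(a.mean() - b.mean()) / pooled_std

sep = [separation(scores[:, j], label) for j in range(2)]
print(f"label separation on component 1: {sep[0]:.2f}")
print(f"label separation on component 2: {sep[1]:.2f}")
```

A plot of only the first component would show no label structure here, even though the second component separates the classes cleanly. High variance and usefulness are different properties.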


Key Concepts Tested

k-means, PCA, SVD, embedding projection, cluster inspection, representation failure

References