From GPT to Modern LLMs

A brief history of modern AI: tracing the evolution from the original Transformer to GPT-3, the open-source explosion, and the rise of frontier reasoning models.

The Transformer was introduced in 2017 for machine translation.[1] But how did we get from translating French to AI assistants that can write code, answer questions, and call tools?

This article traces the evolution of large language models (LLMs), focusing on the architectural decisions that made modern systems possible.

💡 Key insight: The defining realization of the last decade was scaling laws: language-model performance improves predictably as you increase model size, data, and compute, as long as those ingredients stay in balance.[2][3]

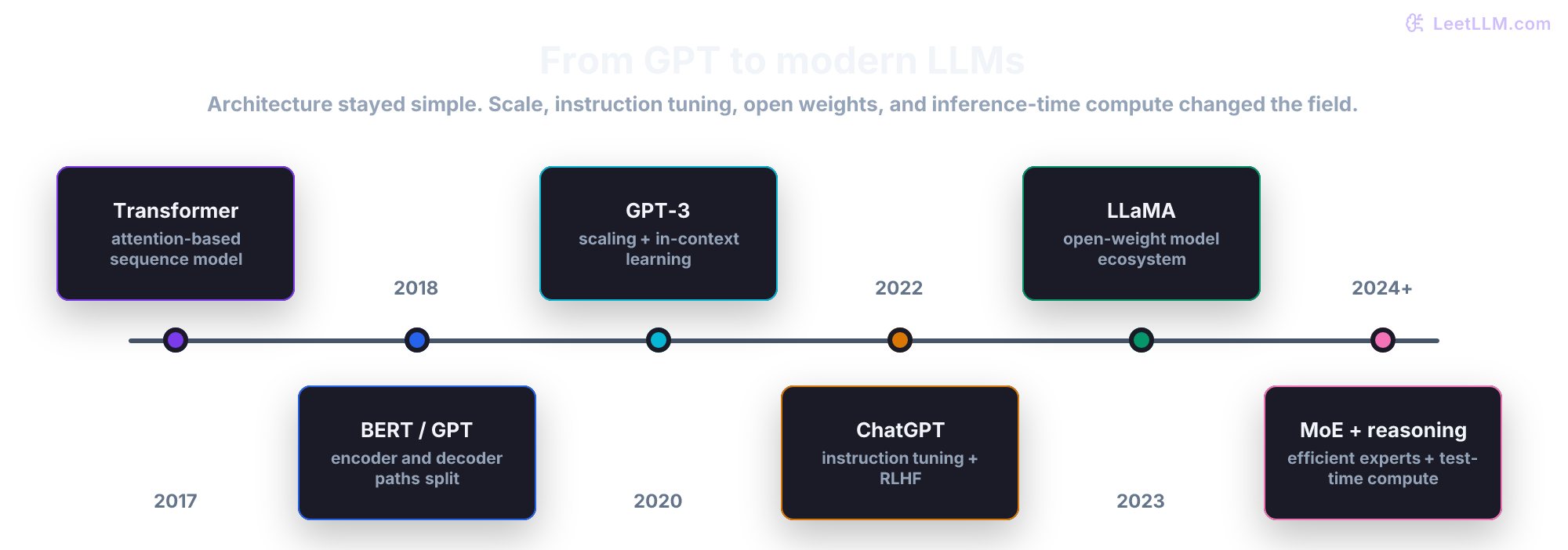

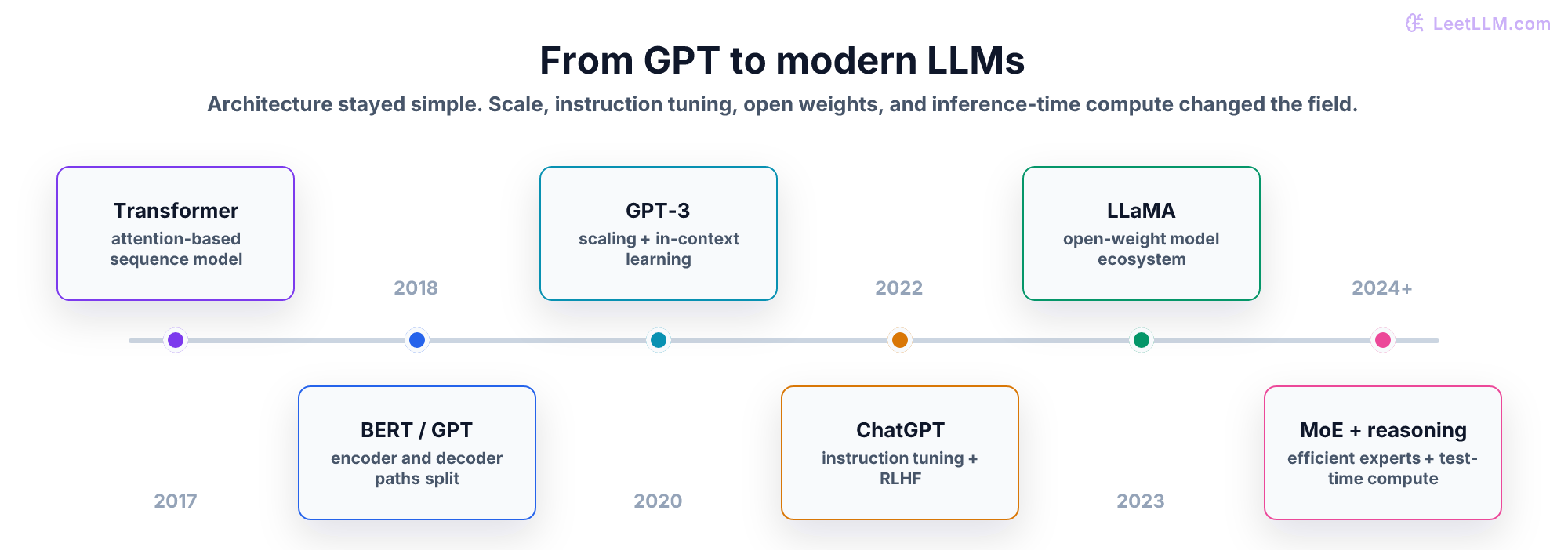

Evolution map

| Era | What changed | Why it matters for engineers |
|---|---|---|
| Transformer | Attention replaced recurrent sequence processing. | Long-range token relationships became easier to learn. |
| GPT path | Decoder-only models made generation the main interface. | One prompt format can express many tasks. |
| Scaling laws | Size, data, and compute became predictable levers. | Model quality became an engineering budget problem. |
| Instruction tuning | Human-preferred answers replaced raw document continuation. | Chat APIs became useful products, not only research demos. |

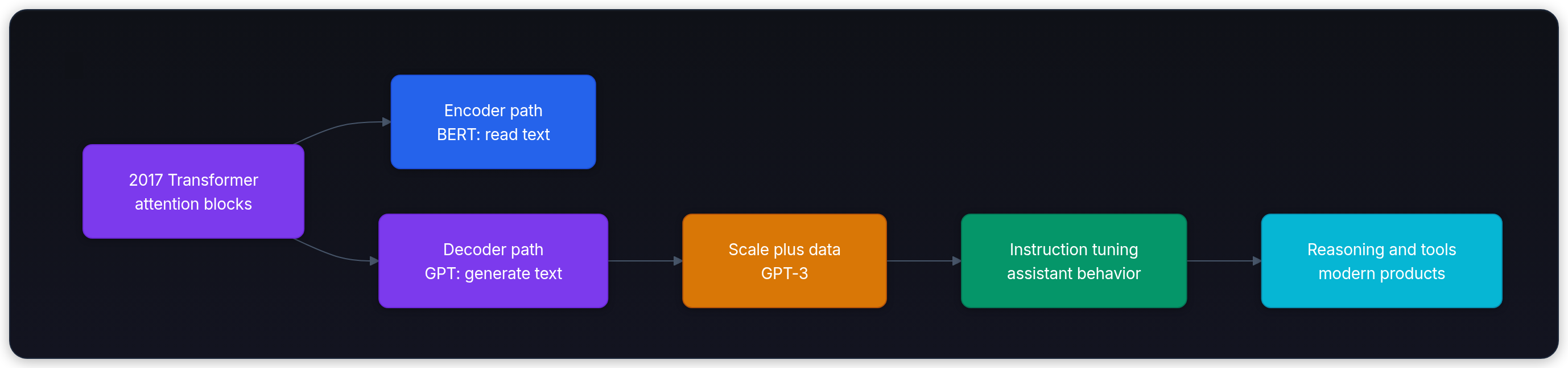

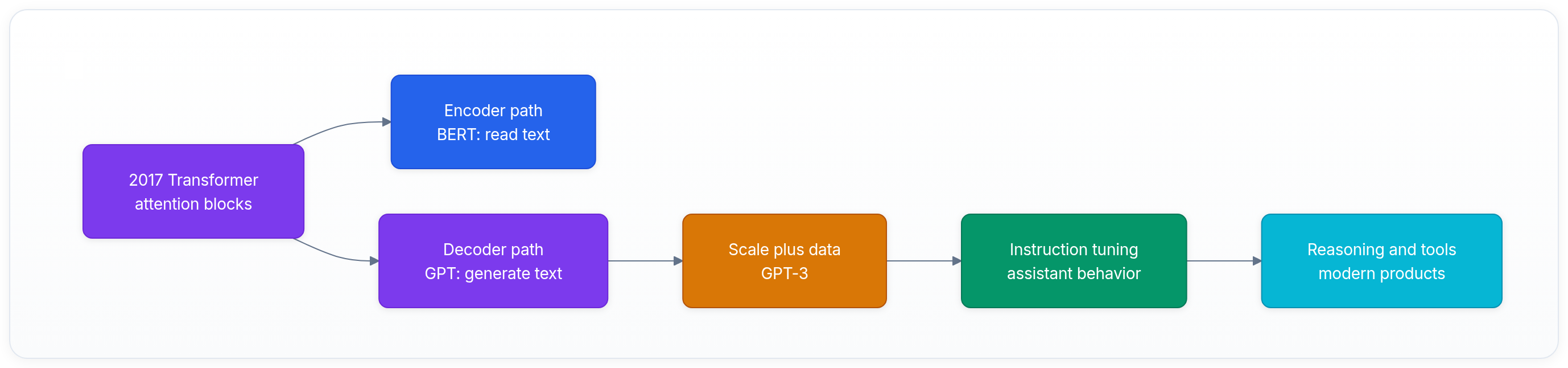

Use this diagram as your anchor for the rest of the article: modern LLMs followed the decoder-only path, then became useful products after scale and instruction tuning.

2017-2018: the fork in the road

The original 2017 Transformer had two parts: an encoder (to read the input) and a decoder (to generate the output). Soon after, researchers realized that each half could be used on its own.

- The encoder-only path (BERT): Google released BERT in 2018.[4] It used the reading side of the Transformer. It looked at a whole sentence at once and became very good at classification, retrieval, and extraction. But it wasn't built to write long answers token by token.

- The decoder-only path (GPT): OpenAI released GPT-1 in 2018.[5] It used the generating side of the Transformer. It read strictly left to right and learned to predict the next token. It could write because generation was baked into the training objective.
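
The practical difference between the two paths is which positions each token is allowed to attend to. Here is a minimal sketch in plain Python; `bidirectional_mask` and `causal_mask` are illustrative helper names, not any library's API, and a real model would apply these masks inside attention rather than build them as nested lists.

```python
def bidirectional_mask(n: int) -> list[list[int]]:
    """Encoder-style (BERT-like) mask: every token may attend to every position."""
    return [[1] * n for _ in range(n)]


def causal_mask(n: int) -> list[list[int]]:
    """Decoder-style (GPT-like) mask: token i may attend only to positions 0..i."""
    return [[1 if j <= i else 0 for j in range(n)] for i in range(n)]


if __name__ == "__main__":
    # Each printed row is one token; a 1 marks a position it can see.
    for row in causal_mask(4):
        print(row)
```

The causal mask is lower-triangular, so no token can see what comes after it. That restriction is what lets a decoder-only model be trained on next-token prediction and then generate text one token at a time.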

For a few years, BERT-style models dominated many NLP benchmarks. Decoder-only models nevertheless won the general assistant track, because generation is a flexible interface. If a model can generate text, you can ask for translation, summarization, coding, planning, or classification in the same format: prompt in, text out.

2020: scaling laws and GPT-3

In 2020, OpenAI published work on scaling laws for neural language models.[2] The core finding: loss improves predictably with three factors.

- Parameter count (how big the model is)

- Dataset size (how much text it reads)

- Compute (how many training FLOPs you spend)
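
To make "improves predictably" concrete, here is a small sketch of a power-law loss curve in parameter count, the functional form used in [2]. The constants `n_c` and `alpha` are illustrative assumptions, chosen to be roughly in the range reported there; do not treat them as exact. The sketch also assumes data and compute are not the bottleneck.

```python
def power_law_loss(n_params: float, n_c: float = 8.8e13, alpha: float = 0.076) -> float:
    """Loss as a power law in parameter count: L(N) = (N_c / N) ** alpha.

    n_c and alpha are illustrative placeholder constants (assumptions).
    """
    return (n_c / n_params) ** alpha


if __name__ == "__main__":
    for n in (1e8, 1e9, 1e10, 1e11):
        print(f"{n:.0e} params -> loss {power_law_loss(n):.3f}")
```

Under this form, every 10x increase in parameters multiplies the loss by the same factor, `10 ** -alpha`. That constant ratio is what turns model quality into something you can budget for in advance.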

OpenAI then trained GPT-3, a 175-billion-parameter decoder-only model.[6] That made it more than 100 times larger than GPT-2, which had 1.5 billion parameters.

GPT-3 changed the field because it demonstrated in-context learning. You didn't need to retrain the model for every new task. You could show a few examples in the prompt, and the model often inferred the pattern on the fly. This was the practical birth of prompt engineering.
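
A few-shot prompt is just text: worked examples followed by an unanswered query. The sketch below shows one possible layout. The `Input:` / `Output:` template and the `build_few_shot_prompt` helper are assumptions made for illustration, not a format prescribed by GPT-3.

```python
def build_few_shot_prompt(examples: list[tuple[str, str]], query: str) -> str:
    """Format worked examples, then leave the final output blank for the model."""
    blocks = [f"Input: {source}\nOutput: {target}" for source, target in examples]
    blocks.append(f"Input: {query}\nOutput:")
    return "\n\n".join(blocks)


if __name__ == "__main__":
    demos = [("cheese", "fromage"), ("house", "maison")]
    print(build_few_shot_prompt(demos, "cat"))
```

The model sees no instruction to translate. It has to infer the task from the pattern in the examples and continue the text, with no weight updates involved.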

2022: InstructGPT and ChatGPT

GPT-3 was powerful, but it was still a document completer. If you prompted it with "Write a recipe for cake", it might continue with another prompt instead of answering directly, because the base objective was "continue the text."

To fix this, researchers used RLHF (Reinforcement Learning from Human Feedback). Humans compared model responses, and the model was trained to prefer the answers people rated higher.[7] This turned a raw autocomplete engine into a more helpful conversational assistant.
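
The comparison step can be sketched as a pairwise loss for a reward model, a common formulation in the style of [7]. The function below is a hypothetical helper that takes two scalar reward scores as plain floats. It covers only the preference-learning step; the later reinforcement-learning stage that updates the assistant itself is omitted.

```python
import math


def pairwise_preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Return -log(sigmoid(r_chosen - r_rejected)) in a numerically stable form."""
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(m)) equals log(1 + exp(-m)); branch so exp() never overflows.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


if __name__ == "__main__":
    print(pairwise_preference_loss(2.0, 0.0))  # small: ranking agrees with the human
    print(pairwise_preference_loss(0.0, 2.0))  # large: ranking disagrees
```

The loss shrinks as the reward model scores the human-preferred answer further above the rejected one, so minimizing it teaches the reward model to rank answers the way people did.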

In late 2022, OpenAI wrapped an instruction-tuned model in a chat interface and called it ChatGPT. For most users, that was the moment LLMs became a product instead of a research demo.

2023: the open-source explosion (LLaMA)

While other labs built proprietary model families, Meta took a different approach.

In early 2023, Meta released LLaMA, a family of capable foundation models.[8] Its weights quickly spread through the research and developer community.

Researchers realized you didn't always need 175 billion parameters to get useful performance. Chinchilla-style scaling had shown that many large models were undertrained for their size: for a fixed compute budget, a smaller model trained on more tokens could match or beat a larger one.[3] That insight helped drive fine-tuning, quantization, and local inference engines.
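
A back-of-the-envelope version of this trade-off is sketched below. It relies on two assumptions that are widely used approximations, not exact constants: training cost is about 6 FLOPs per parameter per token, and the compute-optimal ratio is about 20 training tokens per parameter. The helper name `compute_optimal_split` is hypothetical.

```python
import math

TOKENS_PER_PARAM = 20      # assumed rule-of-thumb ratio, not an exact constant
FLOPS_PER_PARAM_TOKEN = 6  # assumed approximation: training FLOPs ~ 6 * N * D


def compute_optimal_split(flops_budget: float) -> tuple[float, float]:
    """Return (params, tokens) that spend the budget at the assumed ratio."""
    params = math.sqrt(flops_budget / (FLOPS_PER_PARAM_TOKEN * TOKENS_PER_PARAM))
    tokens = TOKENS_PER_PARAM * params
    return params, tokens


if __name__ == "__main__":
    params, tokens = compute_optimal_split(5.88e23)
    print(f"params ~ {params:.2e}, tokens ~ {tokens:.2e}")
```

With a budget of 5.88e23 FLOPs, this returns roughly 70 billion parameters and 1.4 trillion tokens. Under these assumptions, a model far larger than that at the same budget would be trained on too few tokens.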

2024 and beyond: efficiency and reasoning

The field then shifted from "make every model bigger" to "spend compute more intelligently."

Mixture of Experts (MoE)

Mixture-of-Experts (MoE) models activate only part of the network for each token. Mixtral, for example, routes each token to a small subset of expert feed-forward networks.[9] This gives the model more total capacity while keeping per-token compute well below that of a dense model with the same total parameter count.
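
Routing can be sketched as a top-k selection over per-expert scores. In the example below, the eight scores and `k=2` are chosen to resemble the configuration described in [9]. The function name and the plain-list representation are assumptions made for illustration; a real router works on tensors and is trained jointly with the experts.

```python
import math


def top_k_route(router_logits: list[float], k: int = 2) -> dict[int, float]:
    """Pick the k highest-scoring experts and softmax their logits into weights."""
    ranked = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)
    top = ranked[:k]
    peak = max(router_logits[i] for i in top)  # subtract the max for stability
    exps = {i: math.exp(router_logits[i] - peak) for i in top}
    total = sum(exps.values())
    return {i: value / total for i, value in exps.items()}


if __name__ == "__main__":
    logits = [0.1, 2.0, -1.0, 0.5, 1.5, 0.0, -0.3, 0.2]  # one score per expert
    print(top_k_route(logits))  # maps expert index -> mixing weight
```

The token's output is then the weighted sum of only the selected experts' outputs. The remaining experts do no work for that token, which is where the compute saving comes from.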

Reasoning models (System 2 thinking)

Classic LLMs spend roughly the same forward-pass budget per output token, whether they're greeting you or solving a hard proof. Reasoning models add extra test-time compute before producing the final answer. OpenAI's o1-preview made this approach visible in product form in 2024.[10]
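
One simple way to picture extra test-time compute is to sample several candidate answers and keep the most common one. This is a generic stand-in technique, not a description of how o1-preview works internally. Here `sample_answer` is a hypothetical callable that represents one model call.

```python
from collections import Counter
from typing import Callable


def majority_vote(sample_answer: Callable[[], str], n_samples: int) -> str:
    """Draw n_samples candidate answers and return the most frequent one."""
    answers = [sample_answer() for _ in range(n_samples)]
    return Counter(answers).most_common(1)[0][0]


if __name__ == "__main__":
    # Stand-in for a model: replays a fixed list of sampled answers.
    fake_samples = iter(["42", "41", "42", "42", "7"])
    print(majority_vote(lambda: next(fake_samples), n_samples=5))
```

Raising `n_samples` spends more compute on the same question without changing the model's weights, which is the core trade the paragraph above describes.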

Summary

- The field split into encoder-only (BERT) for understanding and decoder-only (GPT) for generation. Generation became the broader interface.

- Scaling laws showed why model size, data, and compute all matter, leading to GPT-3 and in-context learning.

- RLHF turned raw text predictors into helpful chat assistants like ChatGPT.

- LLaMA helped popularize open-weight development, local inference, and aggressive fine-tuning.

- Newer architectures use MoE for efficiency and extra test-time compute for harder reasoning.

Next, continue to Prompt Engineering Fundamentals, where these models become tools you can steer.

Evaluation Rubric

1. Differentiates between the original Transformer (Encoder-Decoder) and the GPT architecture (Decoder-only)

2. Explains the significance of OpenAI's Scaling Laws

3. Describes how GPT-3 demonstrated that large models can perform zero-shot and few-shot tasks without fine-tuning

4. Identifies the impact of Meta's LLaMA release on the open-source AI ecosystem

Common Pitfalls

- Assuming every frontier model is just a larger dense GPT-3-style model

- Assuming open-weight models are useful only because they're small, rather than because they changed fine-tuning, local inference, and deployment workflows

Key Concepts Tested

- The split between Encoder-only (BERT) and Decoder-only (GPT) models

- Scaling laws: why making models bigger actually worked

- The shift from GPT-3 to in-context learning

- The open-source explosion: LLaMA and the democratization of AI

- Modern architectural shifts: MoE (Mixture of Experts) and reasoning models

References

[1] Attention Is All You Need. Vaswani, A., et al. · 2017

[2] Scaling Laws for Neural Language Models. Kaplan, J., et al. · 2020

[3] Training Compute-Optimal Large Language Models. Hoffmann, J., et al. · 2022 · NeurIPS 2022

[4] BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding. Devlin, J., et al. · 2019 · NAACL 2019

[5] Improving Language Understanding by Generative Pre-Training. Radford, A., et al. · 2018

[6] Language Models are Few-Shot Learners. Brown, T., et al. · 2020 · NeurIPS 2020

[7] Training Language Models to Follow Instructions with Human Feedback (InstructGPT). Ouyang, L., et al. · 2022 · NeurIPS 2022

[8] LLaMA: Open and Efficient Foundation Language Models. Touvron, H., et al. · 2023

[9] Mixtral of Experts. Jiang, A. Q., et al. · 2024

[10] o1 Preview Model. OpenAI · 2024

[11] Language Models are Unsupervised Multitask Learners. Radford, A., et al. · 2019