Training & Backpropagation

Understand how neural networks actually learn: the training loop, gradient descent, backpropagation, and how learning rates control the update steps.

25 min read · Google, Meta, OpenAI +1 · 6 key concepts

In the previous article, we saw how a neural network uses its parameters (weights and biases) to turn inputs into predictions. But we skipped the most important question: how does the network know what values those parameters should have?

A fresh neural network starts completely ignorant. Its parameters are initialized with random numbers. If you ask a random network to classify an image, it will just guess. The process of adjusting those random numbers until the network makes accurate predictions is called training.

This article explains how neural networks learn. It covers the loss function, gradient descent, backpropagation, and the delicate balance between learning patterns and memorizing data.

💡 Key insight: Training a neural network is an optimization problem. The goal is to find the combination of parameter values (billions of them in large models) that minimizes the loss, a single number that measures how wrong the network's predictions are.

The training loop

Training happens in a continuous loop. The network looks at data, makes a guess, checks how wrong it was, and adjusts its parameters to be slightly less wrong next time. This loop has four main steps:

- Forward Pass: The network makes a prediction based on its current parameters.

- Calculate Loss: A mathematical function measures how far off the prediction was from the correct answer.

- Backward Pass (Backpropagation): The network calculates how much each parameter contributed to the error.

- Update Weights (Gradient Descent): The parameters are adjusted slightly in the direction that reduces the error.

Training loop map

| Step | What the model does | Visible beginner proof |
|---|---|---|
| Forward pass | Turns input into a prediction. | Printed prediction changes when weights change. |
| Loss | Measures prediction error. | Wrong answers have larger loss than right answers. |
| Backward pass | Computes gradients for parameters. | Each trainable parameter has a gradient value. |
| Optimizer step | Updates parameters to reduce future loss. | Next loss usually moves downward on the toy example. |
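
The four steps can be sketched in plain Python for a one-parameter model. This is a minimal illustration, not a real training setup: the model `y = w * x`, the data, and the learning rate are all assumptions, and the gradient is derived by hand because the model is so small.

```python
# Toy training loop: fit y = w * x to data generated with w = 2.
# All names and values here are illustrative assumptions.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]

w = 0.0               # starting parameter (arbitrary)
learning_rate = 0.01
losses = []

for step in range(200):
    # 1. Forward pass: predictions from the current parameter.
    preds = [w * x for x in xs]
    # 2. Loss: mean squared error between predictions and targets.
    loss = sum((p - y) ** 2 for p, y in zip(preds, ys)) / len(xs)
    losses.append(loss)
    # 3. Backward pass: d(loss)/dw, derived by hand for this model.
    grad_w = sum(2 * (p - y) * x for p, y, x in zip(preds, ys, xs)) / len(xs)
    # 4. Update: step against the gradient.
    w -= learning_rate * grad_w

print(f"learned w = {w:.4f}, first loss = {losses[0]:.2f}, last loss = {losses[-1]:.6f}")
```

Each pass through the loop body is one trip around the training loop. The recorded `losses` list is the "visible proof" from the table: it should shrink as `w` moves toward 2.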

Let's break down each concept.

The Loss Function: measuring mistakes

Before a model can improve, it needs to know how badly it's doing. This is the job of the loss function (sometimes called the cost function or objective function).

The loss function takes the model's prediction and the actual correct answer (the "ground truth") and outputs a single number representing the error.

- If the prediction is perfect, the loss is 0.

- If the prediction is completely wrong, the loss is very high.

Different tasks use different loss functions. If you're predicting a continuous number (like house prices), you might use Mean Squared Error (MSE). If you're classifying data or predicting the next word, you typically use Cross-Entropy Loss, which penalizes the model heavily when it's confident but wrong. (We'll cover this in detail in the next article.)
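
To make the two losses concrete, here is a small sketch using only the standard library. The function names and the example numbers are made up for illustration. The cross-entropy shown is the single-example form: the negative log of the probability the model gave to the correct class.

```python
import math

def mean_squared_error(predictions, targets):
    """Average squared difference, for continuous targets."""
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)

def cross_entropy(probabilities, true_index):
    """Negative log of the probability assigned to the correct class."""
    return -math.log(probabilities[true_index])

# Regression: predicted vs. actual values (made-up numbers).
mse = mean_squared_error([310.0, 190.0], [300.0, 200.0])

# Classification over three classes; the correct class is index 0.
confident_right = cross_entropy([0.90, 0.05, 0.05], 0)
unsure = cross_entropy([0.40, 0.30, 0.30], 0)
confident_wrong = cross_entropy([0.01, 0.98, 0.01], 0)

print(f"MSE: {mse:.1f}")
print(f"cross-entropy: right={confident_right:.3f}, "
      f"unsure={unsure:.3f}, wrong={confident_wrong:.3f}")
```

The loss grows much faster than the probability shrinks: the confident-but-wrong prediction is penalized far more than the unsure one.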

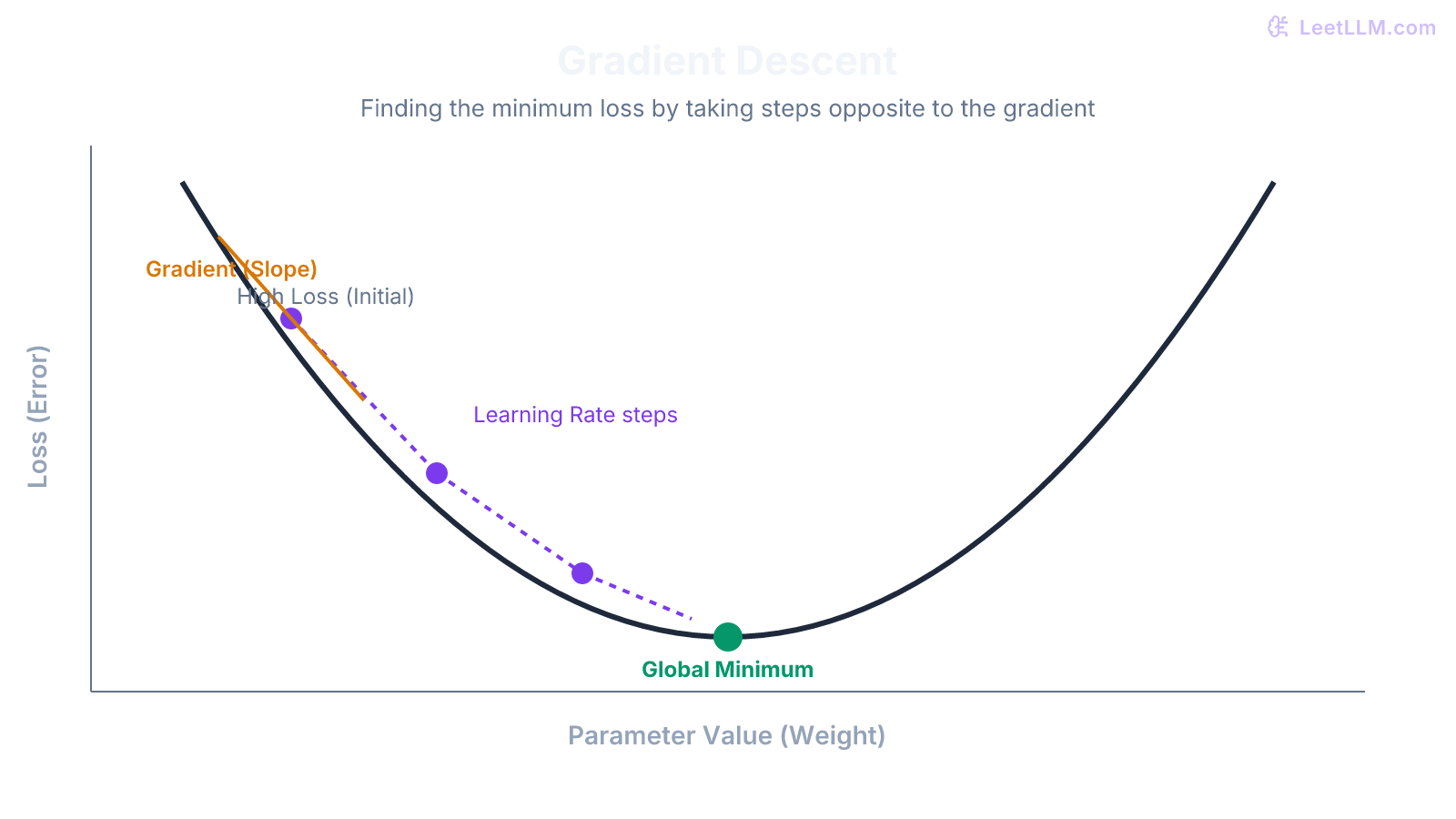

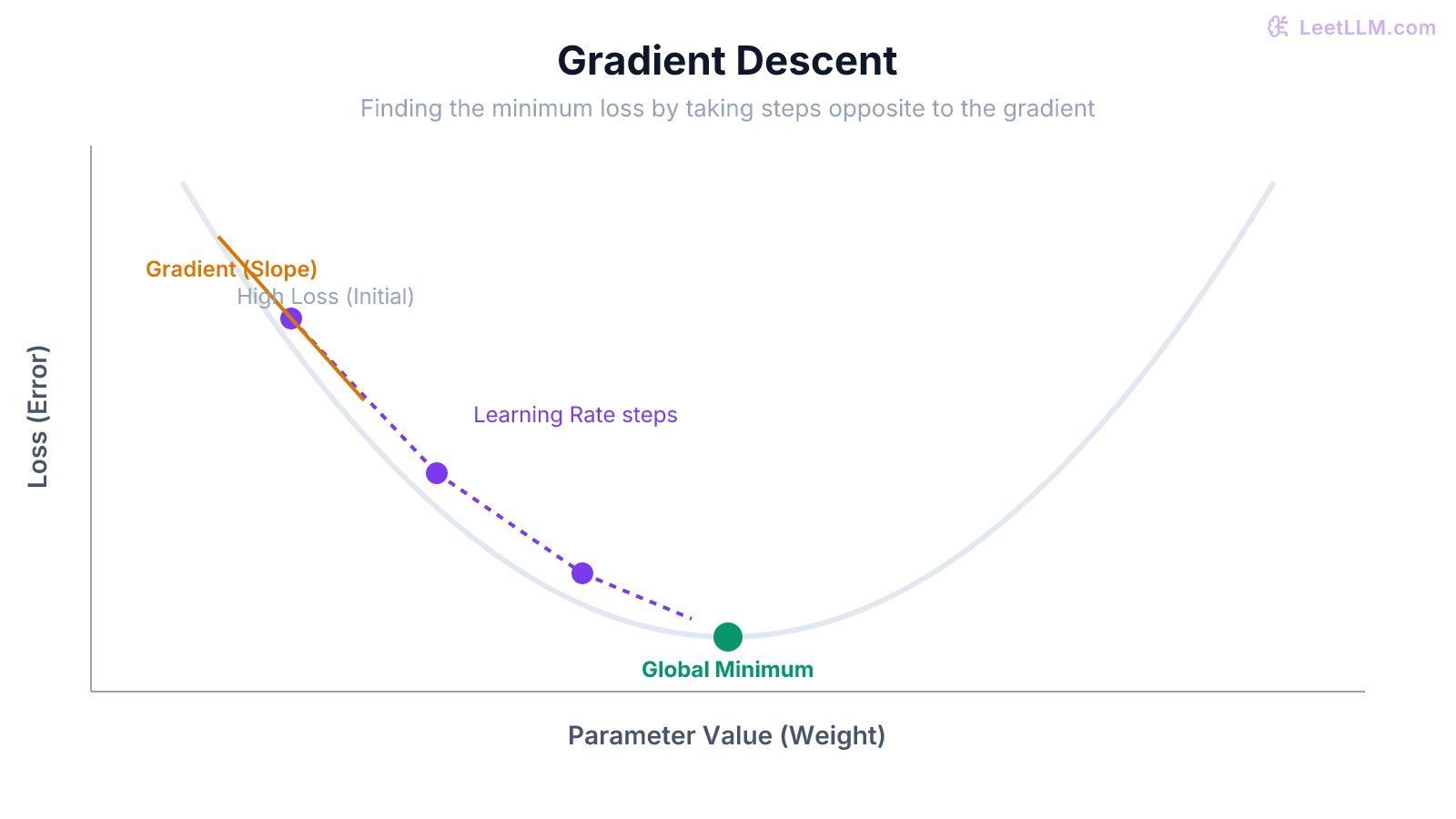

Gradient Descent: walking down the mountain

Imagine you're blindfolded on a rugged mountain, and your goal is to find the lowest valley. You can't see the whole mountain, but you can feel the slope of the ground directly beneath your feet. To get to the bottom, you take a step in the direction that slopes downward the steepest. Once you take a step, you feel the slope again, and take another step.

This is exactly how Gradient Descent works.[1]

- The "mountain" is the loss surface, defined by all the possible combinations of parameter values.

- Your "altitude" is the loss. You want to get to the lowest altitude (minimum loss).

- The "slope under your feet" is the gradient: the vector of partial derivatives of the loss with respect to each parameter. The gradient points uphill, so gradient descent steps in the opposite direction.
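
The analogy can be sketched for a single parameter. The loss surface `(w - 3) ** 2`, the starting point, and the step size are illustrative assumptions. The slope is estimated numerically here to mimic "feeling the ground"; real frameworks compute it analytically.

```python
def loss(w):
    # Illustrative one-parameter loss surface with its minimum at w = 3.
    return (w - 3.0) ** 2

def slope(w, h=1e-6):
    # "Feel the slope under your feet": central finite-difference estimate.
    return (loss(w + h) - loss(w - h)) / (2 * h)

w = -4.0           # arbitrary starting position on the mountain
step_size = 0.1    # how far to move per step

path = [w]
for _ in range(100):
    w = w - step_size * slope(w)   # step downhill, against the slope
    path.append(w)

print(f"start={path[0]}, end={w:.4f}, loss at end={loss(w):.8f}")
```

Each iteration repeats the same two actions as the blindfolded hiker: measure the local slope, then take a step in the downhill direction.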

The Learning Rate

When you take a step down the mountain, how big should that step be? This is controlled by a hyperparameter called the learning rate.

- If the learning rate is too small: The model takes tiny baby steps. Progress is slow, so training might take far longer than necessary, and the model can settle in a shallow, local dip instead of a deeper valley.

- If the learning rate is too large: The model takes giant leaps. It might overshoot the valley entirely, bouncing back and forth on the mountain walls, and the loss might actually go up instead of down.

Choosing the right learning rate is one of the most critical parts of training an AI model.
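
A quick experiment shows both failure modes. This sketch assumes the simple loss `w ** 2` (gradient `2 * w`), a starting loss of 100, and three hand-picked learning rates. The specific values are only for illustration.

```python
def run(learning_rate, steps=20, start=10.0):
    """Gradient descent on loss(w) = w**2, whose gradient is 2 * w."""
    w = start
    for _ in range(steps):
        w -= learning_rate * 2 * w
    return w * w   # final loss

too_small = run(0.001)   # barely moves from the starting loss of 100
reasonable = run(0.1)    # steadily approaches the minimum
too_large = run(1.1)     # overshoots further on every step

print(f"too small:  {too_small:.2f}")
print(f"reasonable: {reasonable:.4f}")
print(f"too large:  {too_large:.1f}")
```

With the largest rate, every step lands farther from the minimum than the last, so the loss ends up above where it started.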

🎯 Production tip: Modern training rarely uses plain gradient descent. It typically uses adaptive optimizers like Adam (Adaptive Moment Estimation) or AdamW. These algorithms scale the step size for each individual parameter using running averages of past gradients and squared gradients, which often makes training faster and more stable.[2]
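
For intuition, here is a single-parameter sketch of the Adam update rule. `adam_minimize` is a hypothetical helper written for this article, not a library function. The `beta1`, `beta2`, and `eps` values are the commonly cited defaults, while the learning rate of 0.1 and the toy loss are assumptions chosen so the example converges quickly. AdamW's weight decay is left out.

```python
import math

def adam_minimize(grad_fn, w, learning_rate=0.1, beta1=0.9, beta2=0.999,
                  eps=1e-8, steps=500):
    """Single-parameter Adam: step size adapts to the gradient history."""
    m = 0.0   # running average of gradients
    v = 0.0   # running average of squared gradients
    for t in range(1, steps + 1):
        g = grad_fn(w)
        m = beta1 * m + (1 - beta1) * g
        v = beta2 * v + (1 - beta2) * g * g
        m_hat = m / (1 - beta1 ** t)   # bias correction for the early steps
        v_hat = v / (1 - beta2 ** t)
        w -= learning_rate * m_hat / (math.sqrt(v_hat) + eps)
    return w

# Toy loss (w - 3) ** 2, whose gradient is 2 * (w - 3).
w_final = adam_minimize(lambda w: 2 * (w - 3.0), w=-4.0)
print(f"w after Adam: {w_final:.4f}")
```

In a real model, `m` and `v` are tracked separately for every parameter, which is what gives each parameter its own effective step size.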

Backpropagation: finding the slope efficiently

We know we need the gradient (the slope) to update our parameters. But how do we find the gradient for a specific weight buried deep in layer 2 of a 100-layer network?

This was a major obstacle to training multi-layer networks until a technique called backpropagation was popularized in the 1980s.[3]

Backpropagation uses the chain rule from calculus. It works backwards from the output:

- First, it calculates how much the final prediction needs to change to reduce the loss.

- Then, it looks at the last layer and calculates how much its weights and inputs need to change to fix the output.

- It passes this "blame" backward to the previous layer, calculating how its weights need to change.

- It repeats this all the way back to the first layer.
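
Here are those steps worked by hand on the smallest possible "deep" network: two weights with a ReLU between them. The network shape, the input, the target, and the weight values are all assumptions chosen to keep the arithmetic easy to follow.

```python
# Tiny network: h = w1 * x, a = max(0, h) (ReLU), y_hat = w2 * a
# Loss: L = (y_hat - y) ** 2. All values are illustrative assumptions.
x, y = 2.0, 10.0
w1, w2 = 1.5, 2.0

# Forward pass, keeping intermediate values for reuse.
h = w1 * x                # 3.0
a = max(0.0, h)           # 3.0
y_hat = w2 * a            # 6.0
loss = (y_hat - y) ** 2   # 16.0

# Backward pass: apply the chain rule from the output toward the input.
d_y_hat = 2 * (y_hat - y)              # dL/dy_hat = -8.0
d_w2 = d_y_hat * a                     # last layer's weight: -24.0
d_a = d_y_hat * w2                     # "blame" passed back: -16.0
d_h = d_a * (1.0 if h > 0 else 0.0)    # through the ReLU: -16.0
d_w1 = d_h * x                         # first layer's weight: -32.0

print(d_w1, d_w2)   # -32.0 -24.0
```

Both gradients are negative, so a gradient descent step would increase both weights, pushing the prediction of 6 up toward the target of 10.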

Why backprop scales

Because it reuses calculations as it moves backward, backprop can find the gradients for billions of parameters in a single, highly efficient sweep. Without backpropagation, modern deep learning would be impossible.

| Backprop checkpoint | Beginner proof |
|---|---|
| Final layer gets gradients first. | Output error has a direct path to final weights. |
| Earlier layers get gradients through the chain rule. | Each layer receives a reusable blame signal. |
| Optimizer sees all parameter gradients. | One update can improve the whole network. |
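
To see the reuse in action, this sketch compares backprop with the naive alternative of nudging each weight separately and re-running the network. The scalar `tanh` chain, the weight values, and the function names are assumptions made for illustration.

```python
import math

def forward(weights, x):
    """Scalar chain a_i = tanh(w_i * a_{i-1}); returns every activation."""
    activations = [x]
    for w in weights:
        activations.append(math.tanh(w * activations[-1]))
    return activations

def loss_fn(weights, x, y):
    return 0.5 * (forward(weights, x)[-1] - y) ** 2

def backprop(weights, x, y):
    """All gradients from one forward pass and one backward sweep."""
    acts = forward(weights, x)
    grads = [0.0] * len(weights)
    blame = acts[-1] - y                        # dL/d(output)
    for i in reversed(range(len(weights))):
        dz = blame * (1.0 - acts[i + 1] ** 2)   # through tanh
        grads[i] = dz * acts[i]                 # gradient for this layer's weight
        blame = dz * weights[i]                 # reusable signal for the layer before
    return grads

def finite_differences(weights, x, y, h=1e-6):
    """Slow alternative: two extra forward passes per weight."""
    grads = []
    for i in range(len(weights)):
        up = weights[:i] + [weights[i] + h] + weights[i + 1:]
        down = weights[:i] + [weights[i] - h] + weights[i + 1:]
        grads.append((loss_fn(up, x, y) - loss_fn(down, x, y)) / (2 * h))
    return grads

weights = [0.9, -1.1, 0.8, 1.2, -0.7]
fast = backprop(weights, x=0.5, y=0.3)
slow = finite_differences(weights, x=0.5, y=0.3)
max_gap = max(abs(f - s) for f, s in zip(fast, slow))
print(f"largest difference between the two methods: {max_gap:.2e}")
```

Both methods agree on the gradients, but the nudging approach needs two full forward passes per weight, while backprop gets every gradient from a single backward sweep by passing `blame` from layer to layer.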

Batches and Epochs

In practice, we rarely update the weights after looking at just one example. Each update would be noisy, because the model would adjust itself to the quirks of every single picture or sentence it sees.

Instead, we group data into batches (or mini-batches).

- A batch might contain 32, 256, or even thousands of examples.

- The model runs a forward pass on the entire batch.

- It calculates the average loss across the batch.

- It calculates the gradients based on that average loss, and performs one weight update.

Using batches smooths out the gradient updates and allows GPUs to process data massively in parallel, making training dramatically faster.

An epoch is when the model has seen every single example in the training dataset exactly once. Smaller models often train for many epochs. Massive LLM pre-training runs are usually discussed in tokens processed, not repeated passes over a small dataset, because the dataset itself is enormous.
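
Batches and epochs fit together as two nested loops. The sketch below assumes a synthetic dataset (`y = 3x + 1` plus noise), a linear model, and hand-picked hyperparameters. None of these come from a real training run.

```python
import random

random.seed(0)  # fixed seed so the sketch is reproducible

# Synthetic dataset: y = 3x + 1 plus a little noise (an illustrative assumption).
data = [(x / 100, 3 * (x / 100) + 1 + random.gauss(0, 0.05)) for x in range(200)]

w, b = 0.0, 0.0
learning_rate = 0.1
batch_size = 32
epochs = 200

for epoch in range(epochs):                  # one epoch = one full pass over the data
    random.shuffle(data)                     # new batch composition every epoch
    for start in range(0, len(data), batch_size):
        batch = data[start:start + batch_size]
        # Forward pass on the whole batch, then gradients of the average loss.
        errors = [(w * x + b) - y for x, y in batch]
        grad_w = sum(2 * e * x for e, (x, _) in zip(errors, batch)) / len(batch)
        grad_b = sum(2 * e for e in errors) / len(batch)
        # One weight update per batch.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b

updates_per_epoch = -(-len(data) // batch_size)   # ceiling division
print(f"w={w:.2f}, b={b:.2f}, updates per epoch={updates_per_epoch}")
```

With 200 examples and a batch size of 32, each epoch performs 7 weight updates: six full batches and one smaller final batch.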

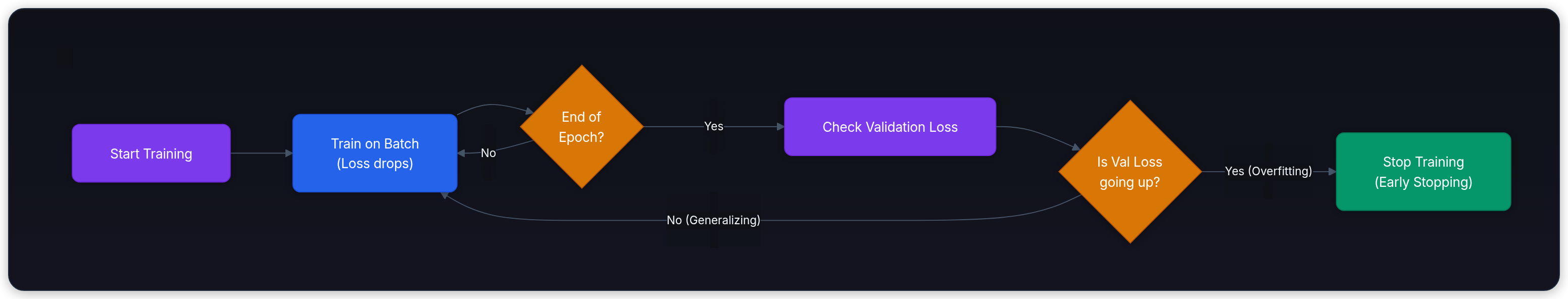

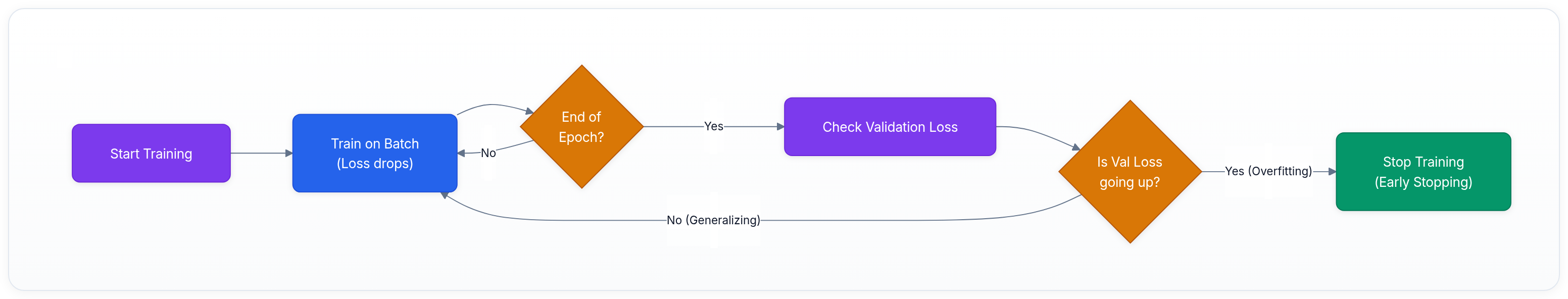

The fundamental tension: Overfitting vs Generalization

The goal of training isn't just to get the loss to zero. If that were the goal, the network could just memorize the exact answers to the training data.

Imagine a student studying for a math test by memorizing the answers to a specific practice worksheet without learning the underlying formulas. If the final exam is exactly the same worksheet, they'll score 100%. But if the teacher changes the numbers, they'll fail.

This is called overfitting. The model has memorized the noise in the training data instead of learning the underlying patterns.

Train vs validation split

To monitor this, we split our data into two sets:

- Training Set: The data the model learns from (where backpropagation happens).

- Validation Set: Hold-out data the model has never seen, used only to evaluate its performance.

If the training loss is going down, but the validation loss starts going up, the model is overfitting. It's memorizing the training data at the expense of generalizing to new data. This is why techniques like regularization and vast datasets are so important in modern AI.
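
Here is a minimal sketch of the split and of spotting the overfitting pattern. `train_val_split` and `best_epoch` are hypothetical helpers, and the two loss curves are made-up numbers that only illustrate the shape described above. They are not results from a real model.

```python
import random

def train_val_split(examples, val_fraction=0.2, seed=0):
    """Shuffle a copy of the data and hold out a fraction for validation."""
    shuffled = examples[:]
    random.Random(seed).shuffle(shuffled)
    n_val = int(len(shuffled) * val_fraction)
    return shuffled[n_val:], shuffled[:n_val]

def best_epoch(val_losses):
    """Index of the lowest validation loss; later epochs may be overfitting."""
    return min(range(len(val_losses)), key=lambda i: val_losses[i])

train, val = train_val_split(list(range(100)))

# Made-up loss curves, only to illustrate the pattern.
train_losses = [1.00, 0.70, 0.50, 0.35, 0.25, 0.18, 0.12]
val_losses = [1.05, 0.80, 0.65, 0.60, 0.62, 0.68, 0.75]

stop_at = best_epoch(val_losses)
print(f"{len(train)} train / {len(val)} validation examples")
print(f"validation loss is lowest at epoch index {stop_at}; "
      f"training loss keeps falling after that")
```

Stopping training near the epoch where validation loss bottoms out is a common response to this pattern, often called early stopping.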

Summary

Training a neural network is an iterative optimization process:

- A Forward Pass generates a prediction.

- A Loss Function measures the error.

- Backpropagation efficiently calculates the gradients using the chain rule.

- Gradient Descent updates the parameters to step toward a lower loss.

- Learning Rate controls the size of those steps.

- We monitor Validation Loss to ensure the model is actually learning general patterns, not just memorizing the training data.

Next, continue to Softmax, Cross-Entropy & Optimization, where training learns from probabilities instead of raw scores.

Evaluation Rubric

1. Explains the four steps of the standard training loop

2. Uses the mountain/valley analogy to explain gradient descent and the role of the learning rate

3. Defines backpropagation as an efficient way to compute gradients using the chain rule

4. Contrasts training loss with validation loss to explain overfitting

5. Explains why training is done in mini-batches rather than updating after every single example

Common Pitfalls

- Confusing the forward pass (making predictions) with the backward pass (learning)

- Thinking backpropagation and gradient descent are the same thing (backprop computes the gradients, gradient descent uses them to update weights)

- Assuming zero loss on the training set means the model is perfectly trained (it often means the model has overfit and may perform poorly on new data)

Key Concepts Tested

- The training loop: forward pass, calculate loss, backward pass, update weights

- Gradient descent: following the loss surface toward lower error

- Backpropagation: applying the chain rule to compute gradients efficiently

- Learning rate: the step size of optimization and what happens if it's too big or small

- Overfitting vs Underfitting: balancing memorization and generalization

- Batches and epochs in the training process

References