Softmax, Cross-Entropy & Optimization

Understand how neural networks turn raw math into probabilities (Softmax) and how they measure their own mistakes (Cross-Entropy Loss).

So far, we've built a neural network that passes numbers through layers to learn patterns, and we've seen how training updates those parameters to minimize loss. But we skipped one crucial transition.

When a language model predicts the next word, it has to choose from a vocabulary of roughly 50,000 to 100,000 possible tokens. The network's final layer outputs one number per token, so for a 50,000-token vocabulary that is an array of 50,000 numbers.

How do we turn those 50,000 raw numbers into a probability for each token? And how do we mathematically measure how "wrong" that probability distribution is so the model can learn?

| Beginner question | Mechanism | Result |
|---|---|---|
| Which token is most likely? | Compare logits. | Raw preference, not a probability yet. |
| How confident is the model? | Apply softmax. | Probabilities between 0 and 1 that sum to 1. |
| How wrong was the prediction? | Apply cross-entropy. | One loss number for backpropagation. |

That transition is handled by softmax and cross-entropy loss.[1]

💡 Key insight: Softmax is the translator that turns raw neural network math into human-readable probabilities. Cross-Entropy is the harsh teacher that grades those probabilities so the model can learn.

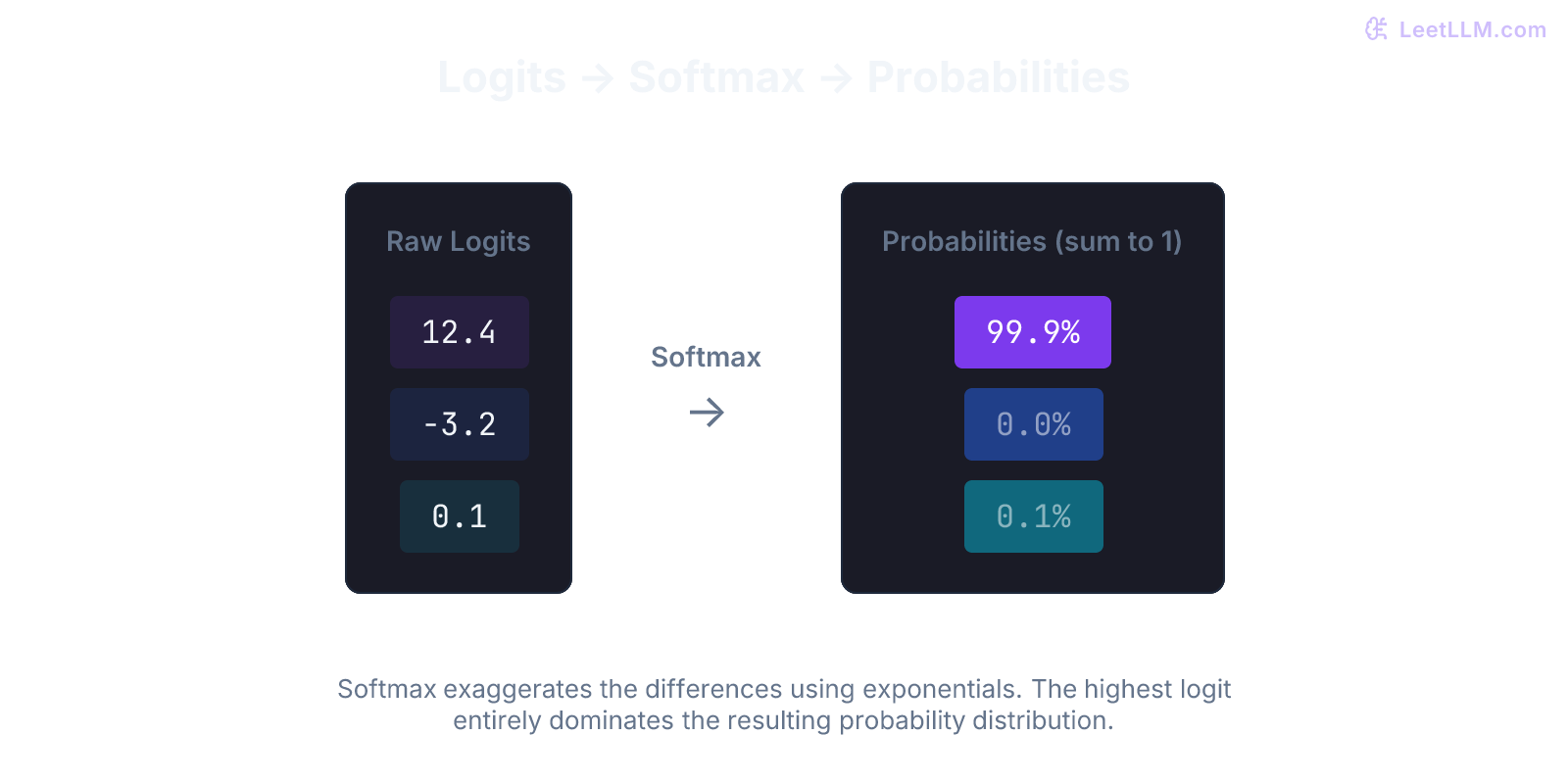

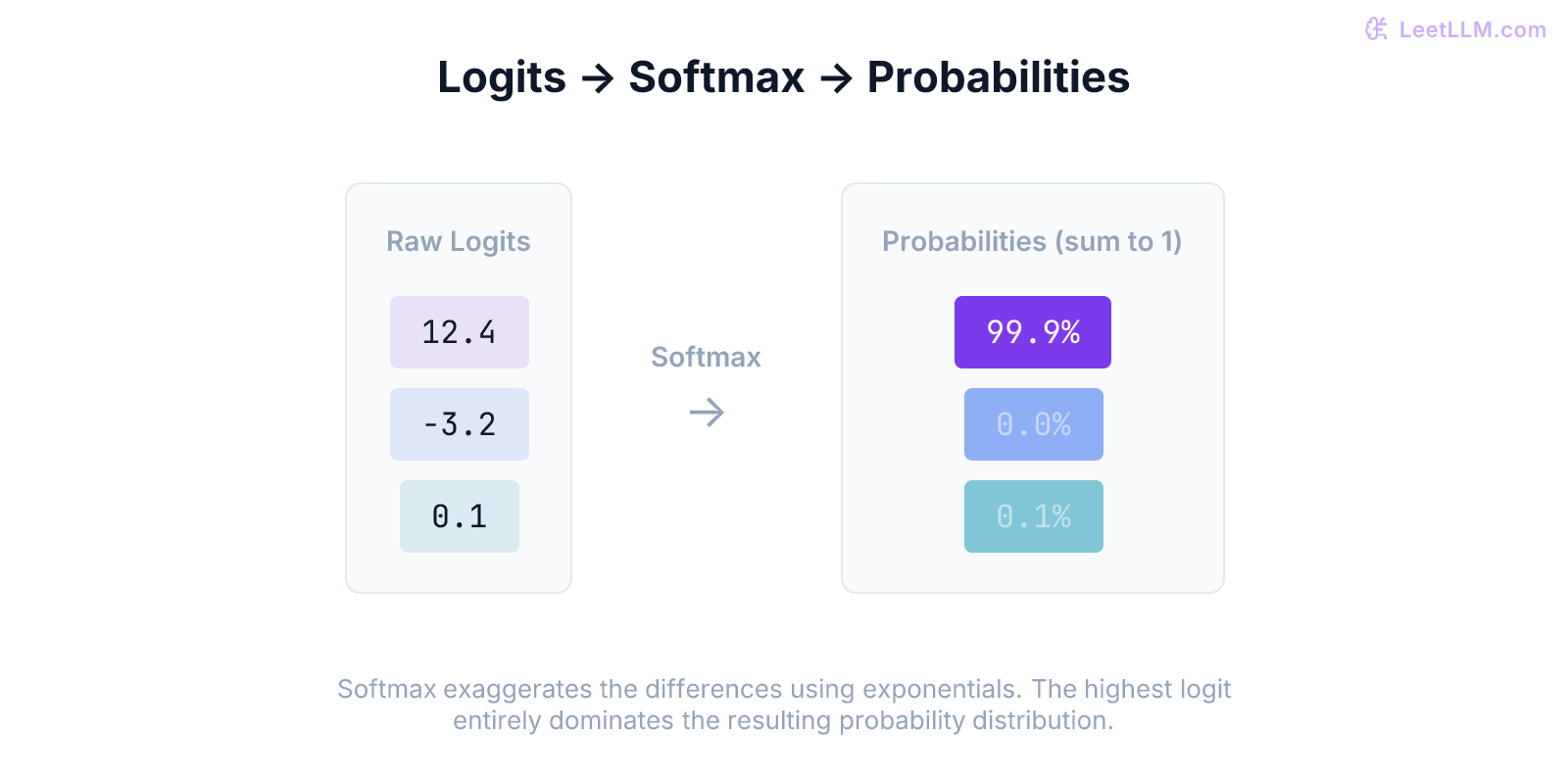

Logits: the raw scores

At the very end of a neural network, the last layer performs a final matrix multiplication. The output is a vector of numbers, one for every possible class or token.

These raw numbers are called logits.

Logits are completely unconstrained. They can be positive, negative, or zero. They don't sum up to anything in particular.

- Logit for "cat": 12.4

- Logit for "dog": -3.2

- Logit for "apple": 0.1

We can clearly see the model prefers "cat", but we can't say "there is a 95% chance of cat" because these numbers aren't probabilities. To get probabilities, we need a function that guarantees two things:

- Every output must be between 0 and 1 (no negative probabilities).

- All outputs must sum exactly to 1 (100%).

Worked probability map

| Raw score | Softmax role | Cross-entropy role |
|---|---|---|
| cat: 12.4 | Dominates because exponentials amplify large logits. | Low loss if cat is correct. |
| dog: -3.2 | Becomes a tiny positive probability. | High loss if dog is correct. |
| apple: 0.1 | Stays possible but unlikely. | Loss depends only on its probability when apple is target. |

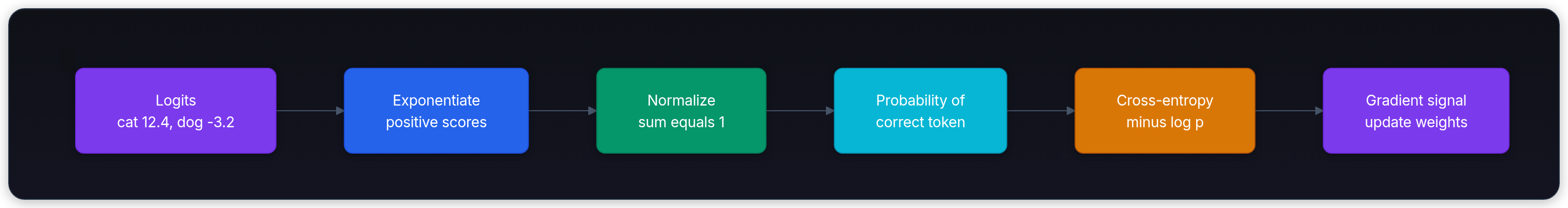

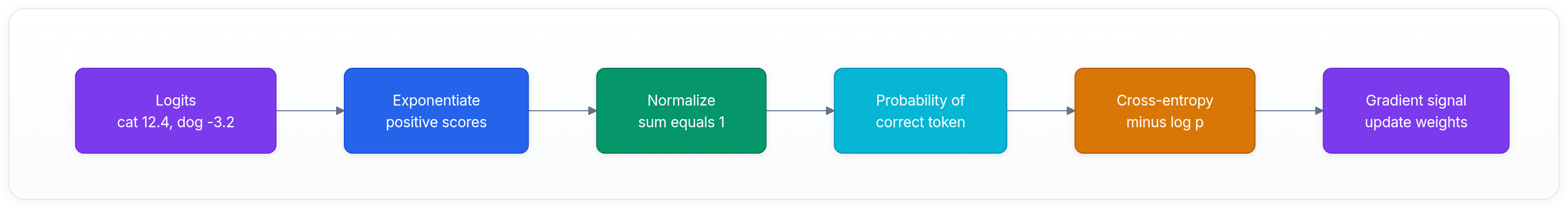

Keep this flow in mind before reading the formulas. Logits are raw preferences, softmax makes them probabilities, and cross-entropy turns the correct token's probability into a training signal.

The softmax function

The softmax function takes an array of logits and turns them into a valid probability distribution.[1]

Here is the formula for the probability $p_i$ of token $i$:

$$p_i = \frac{e^{z_i}}{\sum_{j=1}^{N} e^{z_j}}$$

Where $z_i$ is the logit for token $i$, and $N$ is the total number of tokens.

Let's break down why this works:

- $e^{z_i}$ (Exponentiation): By raising $e$ (Euler's number, $\approx 2.718$) to the power of the logit, we guarantee the result is always positive. Even a negative logit like -3.2 becomes positive ($e^{-3.2} \approx 0.04$).

- Division by the sum: By dividing each exponentiated value by the sum of all exponentiated values, we guarantee that the final fractions will sum exactly to 1.

```python
import numpy as np

# Our raw logits from the network
logits = np.array([12.4, -3.2, 0.1])

# Step 1: Exponentiate
exp_logits = np.exp(logits)
print(f"Exp: {exp_logits}")
# approx. [2.43e+05, 4.08e-02, 1.11e+00]

# Step 2: Normalize (divide by sum)
probabilities = exp_logits / np.sum(exp_logits)
print(f"Probabilities: {probabilities}")
# approx. [0.999995, 1.7e-07, 4.6e-06]
```

Why exponentiate? The winner-takes-all effect

Notice what happened above. The logit 12.4 wasn't just slightly bigger than 0.1. It completely dominated.

The exponential function exaggerates differences: a logit that is higher by $d$ receives $e^d$ times the probability. Once one logit leads the rest by more than a few units, softmax pushes its probability close to 100% and crushes the others toward 0%. It's called a "soft" max because it acts like a maximum function (picking the single highest value), but still leaves a small, non-zero probability for the runners-up. That keeps the function smooth and differentiable for training.
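To make the amplification concrete, the sketch below compares two competing tokens while only the gap between their logits changes. The `softmax` helper and the chosen gap values are illustrative assumptions, not code from this lesson. The helper subtracts the largest logit before exponentiating, a common trick that leaves the probabilities unchanged while avoiding overflow.

```python
import numpy as np

def softmax(logits):
    """Illustrative helper: convert a 1-D array of logits into probabilities."""
    # Subtracting the max does not change the result but avoids overflow in exp.
    shifted = logits - np.max(logits)
    exp_shifted = np.exp(shifted)
    return exp_shifted / np.sum(exp_shifted)

# Two competing tokens; only the gap between their logits changes.
for gap in [0.1, 1.0, 3.0, 10.0]:
    probs = softmax(np.array([gap, 0.0]))
    # The probability ratio equals e**gap, so it grows exponentially with the gap.
    print(f"gap={gap:>4}: p_top={probs[0]:.4f}, ratio={probs[0] / probs[1]:.1f}")
```

A gap of 0.1 gives roughly a 52/48 split, while a gap of 10 gives the top token more than 99.99% of the probability.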

Temperature: controlling the LLM's confidence

If you've used an LLM API, you've seen the temperature setting. Temperature is a math trick applied directly to the softmax function.[2]

Before we exponentiate the logits, we divide them by the temperature ($T$).

- $T = 1$ (default): The standard softmax.

- $T \to 0$ (very low): Division by a tiny number makes the logits massive. The highest logit dominates. Softmax becomes nearly deterministic, assigning almost all probability to the top choice. This is useful for coding or math.

- $T > 1$ (high): Division shrinks the logits closer to zero. The differences between logits shrink too. Softmax flattens out, giving lower-ranked tokens a higher probability. The model becomes more random.
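The three temperature regimes above can be sketched in a few lines. The function name `softmax_with_temperature` and the example logits are hypothetical, chosen only for illustration. This is a minimal sketch of dividing logits by the temperature before softmax, not the implementation of any particular LLM API.

```python
import numpy as np

def softmax_with_temperature(logits, temperature=1.0):
    """Illustrative helper: divide logits by the temperature, then apply softmax."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = np.asarray(logits, dtype=float) / temperature
    scaled = scaled - np.max(scaled)  # stability shift; does not change the result
    exp_scaled = np.exp(scaled)
    return exp_scaled / np.sum(exp_scaled)

logits = [2.0, 1.0, 0.1]  # hypothetical logits for three tokens

# Low temperature sharpens the distribution; high temperature flattens it.
for t in [0.1, 1.0, 5.0]:
    probs = softmax_with_temperature(logits, temperature=t)
    print(f"T={t}: {np.round(probs, 3)}")
```

With these logits, the top token gets about 66% at a temperature of 1, nearly 100% at 0.1, and about 40% at 5.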

Cross-entropy loss: grading the model

Now the model has output a probability distribution. How do we grade it during training?

First, we represent the correct answer (the ground truth) as a probability distribution. Since the correct next token is exactly one specific token, such as "cat", its distribution is 100% for "cat" and 0% for everything else. This is called a one-hot vector.

- Prediction: [0.80 (cat), 0.15 (dog), 0.05 (apple)]

- Ground Truth: [1.00 (cat), 0.00 (dog), 0.00 (apple)]
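The ground-truth vector above can be built directly from the position of the correct token in the vocabulary. The three-word `vocab` list is the toy vocabulary from this example, and the variable names are illustrative assumptions.

```python
import numpy as np

vocab = ["cat", "dog", "apple"]  # toy vocabulary from the example above
target_token = "cat"

# One-hot vector: 1.0 at the index of the correct token, 0.0 everywhere else.
one_hot = np.zeros(len(vocab))
one_hot[vocab.index(target_token)] = 1.0

print(one_hot)  # [1. 0. 0.]
```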

Cross-Entropy Loss measures how different these two distributions are. The formula is:

$$L = -\sum_{i=1}^{N} y_i \log(p_i)$$

Where $y_i$ is the ground truth probability of token $i$, $p_i$ is the predicted probability, and $\log$ is the natural logarithm.

Because the ground truth is 0 for every incorrect word, all those terms vanish. The formula simplifies dramatically. We only care about the log probability of the correct word:

$$L = -\log(p_{\text{correct}})$$

If the model predicted 0.80 for the correct word:

$$L = -\log(0.80) \approx 0.223$$

If the model was very wrong and predicted 0.01 for the correct word:

$$L = -\log(0.01) \approx 4.605$$
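The two cases above (0.80 and 0.01 assigned to the correct word) can be checked numerically. The `cross_entropy` helper below is an illustrative sketch that assumes the natural logarithm, and `bad_prediction` is a made-up distribution in which the model favors "dog".

```python
import numpy as np

def cross_entropy(true_dist, pred_dist):
    """Illustrative helper: -sum(y_i * log(p_i)) using the natural log."""
    true_dist = np.asarray(true_dist, dtype=float)
    pred_dist = np.asarray(pred_dist, dtype=float)
    # Terms with y_i = 0 contribute nothing, so skip them.
    # This also avoids log(0) when a wrong token has zero predicted probability.
    mask = true_dist > 0
    return -np.sum(true_dist[mask] * np.log(pred_dist[mask]))

ground_truth = [1.0, 0.0, 0.0]        # one-hot: "cat" is correct
good_prediction = [0.80, 0.15, 0.05]  # prediction from the example above
bad_prediction = [0.01, 0.90, 0.09]   # hypothetical: model favors "dog"

print(f"{cross_entropy(ground_truth, good_prediction):.3f}")  # 0.223
print(f"{cross_entropy(ground_truth, bad_prediction):.3f}")   # 4.605
```

Note that the probabilities assigned to "dog" and "apple" never enter the result: with a one-hot target, only the correct token's probability matters.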

Why use logarithms?

Why not just use simple subtraction (e.g., $1 - 0.80 = 0.20$ error)?

Because logarithms severely penalize overconfidence. If the model is 99% confident in the wrong answer, the probability assigned to the correct answer is near zero. The negative log of a tiny probability is huge. Cross-entropy pushes the model to be right and calibrated, not merely confident.
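A quick comparison shows the difference in scale. The sketch below contrasts a simple subtraction error, assumed here to be one minus the probability of the correct token, with the negative log. The probability values are arbitrary illustrations.

```python
import math

# Probability the model assigned to the correct token,
# ordered from confidently right to confidently wrong.
probs_for_correct = [0.99, 0.80, 0.50, 0.10, 0.01, 0.001]

linear_errors = [1.0 - p for p in probs_for_correct]    # bounded: never reaches 1
log_losses = [-math.log(p) for p in probs_for_correct]  # unbounded as p approaches 0

for p, lin, ce in zip(probs_for_correct, linear_errors, log_losses):
    print(f"p={p:<6} linear={lin:.3f}  cross-entropy={ce:.3f}")
```

Dropping from 0.01 to 0.001 barely moves the subtraction error (0.990 to 0.999) but raises the cross-entropy from about 4.6 to about 6.9, so the worst mistakes keep producing a stronger training signal.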

Summary

- The neural network outputs raw, unconstrained numbers called logits.

- Softmax uses exponentials to turn those logits into a valid probability distribution (0 to 1, summing to 1).

- Temperature divides the logits before softmax to make the distribution flatter (more random) or sharper (more deterministic).

- Cross-entropy loss grades the prediction by taking the negative logarithm of the probability assigned to the correct answer. This loss is what backpropagation uses to update the weights.

Next, continue to The Transformer Architecture End-to-End, where probabilities sit at the end of the full LLM pipeline.

Evaluation Rubric

1. Defines logits as raw, unconstrained output scores

2. Explains how Softmax transforms logits into a valid probability distribution using exponentials

3. Calculates cross-entropy loss for a simple prediction and explains why we take the negative log

4. Connects softmax and cross-entropy to the concept of next-token prediction

5. Explains the math behind 'Temperature' in LLM APIs and how it flattens or sharpens softmax outputs

Common Pitfalls

- Confusing logits with probabilities (logits can be negative and don't sum to 1)

- Thinking cross-entropy loss is just measuring the difference in percentages (it specifically measures the surprise/information difference using logarithms)

- Assuming temperature is applied *after* softmax (it happens *before*, dividing the raw logits)

Key Concepts Tested

- Logits: the raw, unnormalized scores output by the final layer

- Softmax: converting logits into a probability distribution that sums to 1

- Cross-Entropy Loss: measuring the distance between the predicted probabilities and the true answer

- One-hot encoding: representing the ground truth as probabilities

- Why temperature scaling affects model confidence and generation

References