
The Transformer Architecture End-to-End

See how all the pieces fit together: tokens, embeddings, attention, feed-forward layers, and residual connections assembled into the complete Transformer model.


When ChatGPT writes a poem, when Google Translate converts a paragraph from English to Japanese, when a code assistant autocompletes your function, they're usually running the same kind of engine under the hood: a Transformer. It's the architecture behind much of modern language AI.

You've already seen individual pieces of the Transformer in earlier articles: tokenization breaks text into tokens, embeddings turn those tokens into vectors, and attention lets tokens focus on each other. But how do all these pieces actually fit together into one working model? That's what this article answers.

By the end, you'll be able to trace a sentence through the entire Transformer pipeline, understand what every component does and why it's there, and recognize the three major architecture variants used by modern language models.

💡 Key insight: The Transformer is an assembly of components you can understand individually. The power comes from how they're combined: attention routes information between tokens, feed-forward networks transform each token's representation, residual connections keep gradients flowing in deep networks, and layer normalization stabilizes training. Stacking these four elements N times gives you a model that can learn language, code, math, and more.

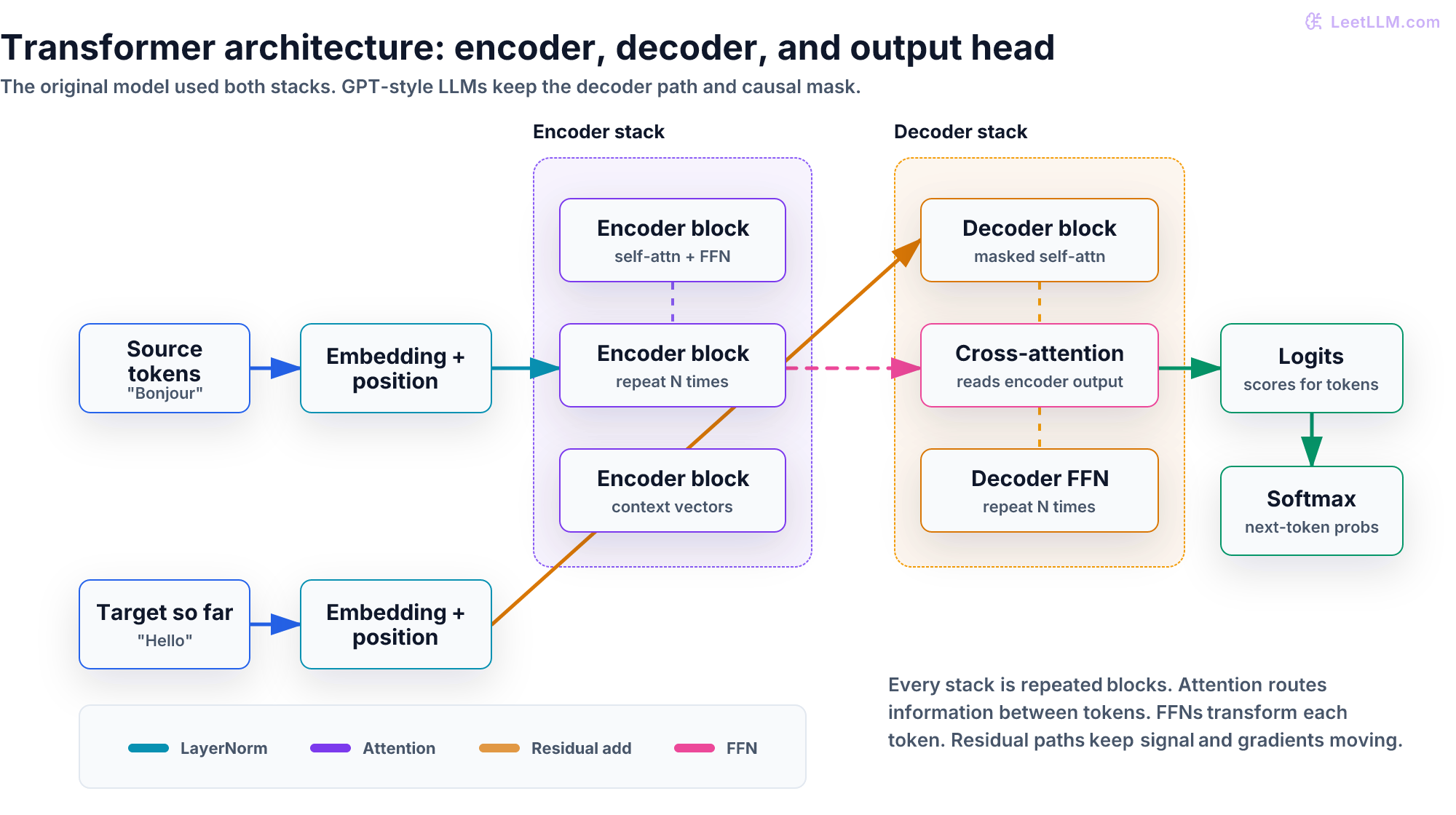

The big picture: what does a Transformer do?

At the highest level, a Transformer is a function that takes a sequence of tokens and produces a new sequence of tokens. For a language model like GPT, it takes all the tokens so far and predicts the next one. For a translation model like the original Transformer, it takes a source sentence and generates the translation.

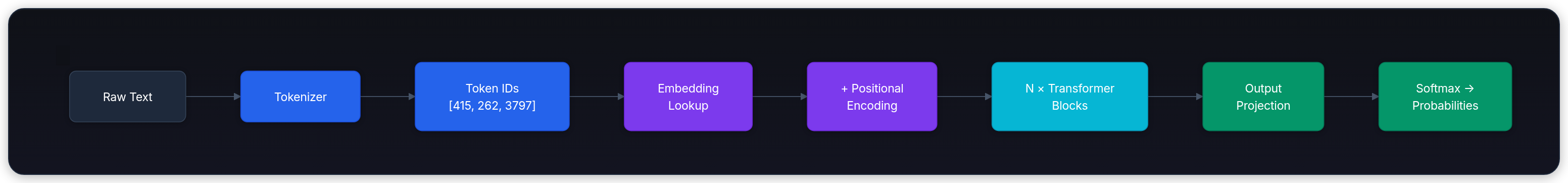

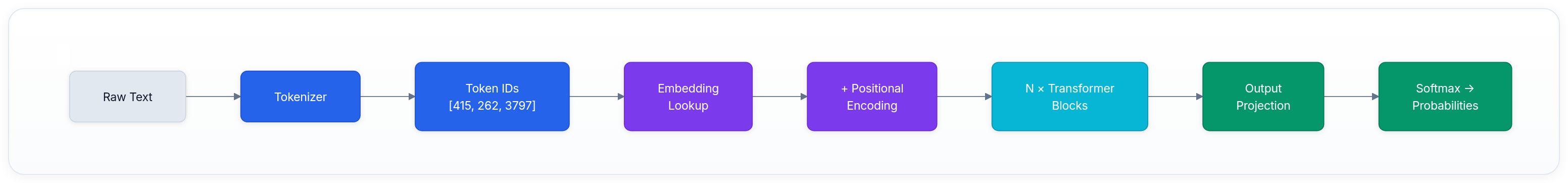

Here's the full data flow from raw text to output:

Each step:

1. Tokenize: Split "The cat sat" into token IDs like [464, 3797, 3332]

2. Embed: Look up each token ID in a learned embedding table, producing a vector (say, 768 numbers) per token

3. Add position: Since attention has no inherent sense of word order, add positional encodings to tell the model where each token sits in the sequence

4. Transform: Pass through N identical transformer blocks (the core of the architecture)

5. Project: Multiply the final hidden state by a weight matrix to produce a score (logit) for every token in the vocabulary

6. Predict: Apply softmax to get a probability distribution over the vocabulary, and sample the next token

Steps 4 through 6 are where the magic happens. Let's zoom into the transformer block.

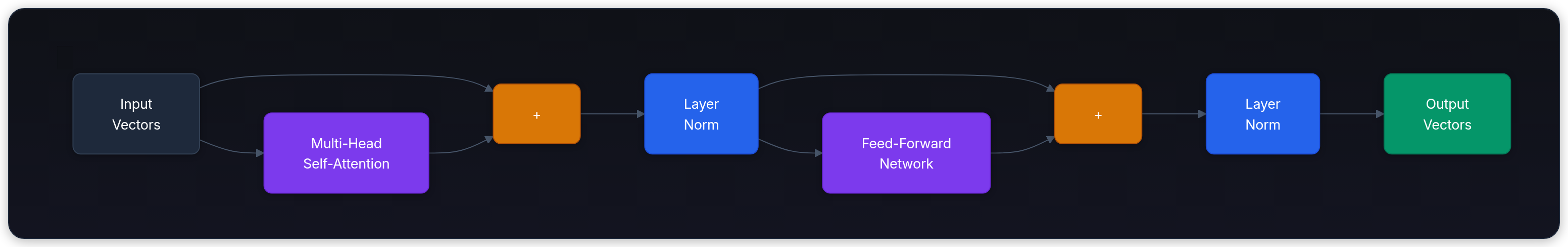

The transformer block: the repeating unit

The Transformer is built from stacking identical blocks (also called layers). Each block has the same internal structure, but its own set of learned parameters. A typical LLM might have 32, 64, or even 128 of these blocks stacked sequentially.

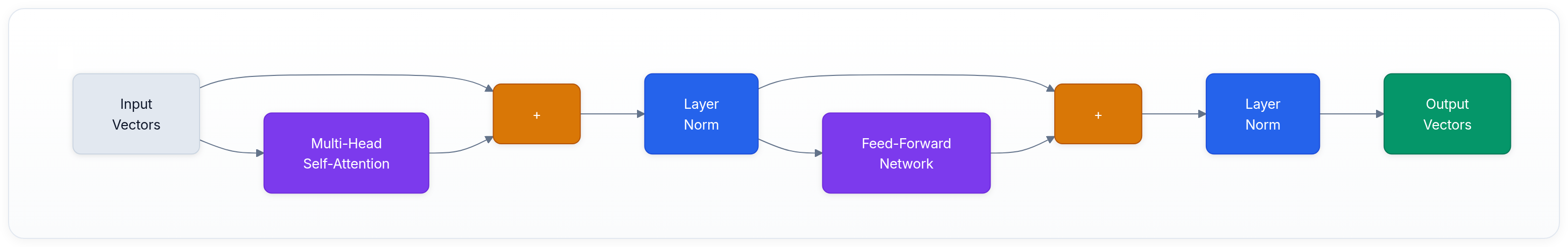

Each block has four components, always in the same order:

Component 1: multi-head self-attention

This is where tokens communicate with each other. Every token looks at every other token (or every previous token, in a decoder) and decides which ones are relevant.

The input enters as a sequence of vectors. Each vector is projected into Query, Key, and Value representations. The attention mechanism computes which tokens should attend to which, and produces a weighted combination of values. Multiple "heads" do this in parallel, each learning to focus on different types of relationships (syntactic, semantic, positional).[1]

For a full walkthrough of the math, see the attention article.
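The projection, scoring, and weighted-sum steps described above can be sketched in a few lines of NumPy. This is a minimal illustration, not a reference implementation: the weight names (`W_q`, `W_k`, `W_v`, `W_o`), the random initialization, and the toy sizes are all assumptions made for the example, and no masking is applied.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def multi_head_self_attention(x, W_q, W_k, W_v, W_o, n_heads):
    """x: [seq_len, d_model]; every weight matrix: [d_model, d_model]."""
    seq_len, d_model = x.shape
    d_head = d_model // n_heads

    def split_heads(t):
        # [seq_len, d_model] -> [n_heads, seq_len, d_head]
        return t.reshape(seq_len, n_heads, d_head).transpose(1, 0, 2)

    q = split_heads(x @ W_q)
    k = split_heads(x @ W_k)
    v = split_heads(x @ W_v)

    # Each head scores every query against every key, scaled by sqrt(d_head).
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(d_head)  # [n_heads, seq_len, seq_len]
    weights = softmax(scores, axis=-1)                   # each row sums to 1
    heads = weights @ v                                  # [n_heads, seq_len, d_head]

    # Concatenate the heads and mix them with the output projection.
    merged = heads.transpose(1, 0, 2).reshape(seq_len, d_model)
    return merged @ W_o, weights

# Toy sizes (hypothetical): 3 tokens, model width 8, 2 heads.
rng = np.random.default_rng(0)
seq_len, d_model, n_heads = 3, 8, 2
x = rng.normal(size=(seq_len, d_model))
W_q, W_k, W_v, W_o = (rng.normal(size=(d_model, d_model)) * 0.1 for _ in range(4))

out, weights = multi_head_self_attention(x, W_q, W_k, W_v, W_o, n_heads)
print(out.shape, weights.shape)
```

The output has the same shape as the input, and `weights` holds one attention matrix per head, which is what lets different heads specialize in different relationships.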

Component 2: add & layer norm (first residual connection)

After attention, two things happen:

- Add (residual connection): The attention output is added to the original input. This means the block's input can flow directly to the output, giving gradients a highway to travel during training.[2]

- Layer normalization: Normalize the values to prevent them from growing too large or too small as they pass through many layers.[3]

$$\text{output} = \text{LayerNorm}(x + \text{Attention}(x))$$

This formula shows the original Post-LN pattern: apply the sub-layer, add the residual connection, then normalize. Many modern models use Pre-LN instead, where normalization comes before attention or the FFN. See Layer Normalization for the full story.
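The difference between the two orderings is easiest to see side by side. The sketch below assumes a simplified `layer_norm` without the learned scale and shift, and uses a hypothetical `toy_sublayer` (a single matrix multiply) as a stand-in for attention or the FFN.

```python
import numpy as np

def layer_norm(x, eps=1e-5):
    # Normalize each token vector to zero mean and unit variance.
    # The learned scale and shift parameters are omitted for brevity.
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def post_ln(x, sublayer):
    # Original Transformer ordering: sub-layer, add residual, then normalize.
    return layer_norm(x + sublayer(x))

def pre_ln(x, sublayer):
    # Pre-LN ordering: normalize first, then add the sub-layer output
    # to the untouched residual stream.
    return x + sublayer(layer_norm(x))

rng = np.random.default_rng(0)
x = rng.normal(size=(3, 8)) * 10.0   # deliberately large activations
W = rng.normal(size=(8, 8)) * 0.1
toy_sublayer = lambda h: h @ W       # hypothetical stand-in for attention or the FFN

y_post = post_ln(x, toy_sublayer)
y_pre = pre_ln(x, toy_sublayer)
print(y_post.std(axis=-1))  # close to 1 for every token
print(y_pre.std(axis=-1))   # still on the scale of the input
```

In Post-LN the output of every block is normalized. In Pre-LN the residual stream itself is never normalized inside the block; only the input to each sub-layer is.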

Component 3: feed-forward network (FFN)

After attention lets tokens share information, the FFN processes each token independently. It's a simple two-layer neural network (exactly the kind described in Neural Networks from Scratch):

$$\text{FFN}(x) = W_2\,\text{GELU}(W_1 x + b_1) + b_2$$

The FFN typically expands the dimension by 4× (e.g., 768 → 3072), applies GELU activation, then projects back down. Why? Research suggests the FFN acts like a key-value memory store where the first layer selects input patterns and the second layer contributes output-token information.[4]

🔬 Research insight: The FFN has more parameters than the attention mechanism in each block. In a standard transformer with hidden size 768, and ignoring biases, the attention mechanism has roughly 2.4M parameters per block (four 768 × 768 projections for Q, K, V, and the output), while the FFN has roughly 4.7M (a 768 × 3072 expansion and a 3072 × 768 projection back down). The FFN is thought to be where much of the model's "knowledge" is stored, yet it gets far less attention (pun intended) in popular explanations.
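The parameter counts above follow from simple arithmetic. The helper names below are made up for this example, and the calculation assumes four square attention projections (Q, K, V, output) and a 4× FFN expansion, with biases left out by default.

```python
def attention_params(d_model, bias=False):
    # Four d_model x d_model projections: Q, K, V, and the output projection.
    n = 4 * d_model * d_model
    if bias:
        n += 4 * d_model
    return n

def ffn_params(d_model, expansion=4, bias=False):
    # One up-projection (d_model -> d_ff) and one down-projection (d_ff -> d_model).
    d_ff = expansion * d_model
    n = d_model * d_ff + d_ff * d_model
    if bias:
        n += d_ff + d_model
    return n

d_model = 768
attn = attention_params(d_model)
ffn = ffn_params(d_model)
print(f"attention: {attn:,}")        # attention: 2,359,296
print(f"ffn:       {ffn:,}")         # ffn:       4,718,592
print(f"ratio:     {ffn / attn}")    # ratio:     2.0
```

With a 4× expansion the FFN always has twice as many weights as the attention projections, regardless of the hidden size.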

Component 4: add & layer norm (second residual connection)

Same pattern as before: add the FFN output to its input, then normalize.

Putting it all together

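Here's one complete transformer block (in the original Post-LN form):

$$h = \text{LayerNorm}(x + \text{MultiHeadAttention}(x))$$

$$\text{output} = \text{LayerNorm}(h + \text{FFN}(h))$$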

The input vectors go through attention (tokens exchange information), get added back (residual connection), get normalized, go through the FFN (each token is transformed independently), get added back again, and get normalized again. The output vectors have the same shape as the input vectors, so they can be fed directly into the next block.
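As a rough sketch of that sequence, here is a Post-LN block in NumPy, stacked a few times. To keep it short it assumes single-head attention with no mask, a `layer_norm` without learned scale and shift, the tanh approximation of GELU, and a hypothetical `init_block` helper with toy sizes; none of these choices come from a specific model.

```python
import numpy as np

def softmax(x):
    x = x - x.max(axis=-1, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=-1, keepdims=True)

def layer_norm(x, eps=1e-5):
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def gelu(x):
    # Tanh approximation of GELU.
    return 0.5 * x * (1.0 + np.tanh(np.sqrt(2.0 / np.pi) * (x + 0.044715 * x**3)))

def self_attention(x, p):
    # Single head, no mask: tokens exchange information.
    q, k, v = x @ p["W_q"], x @ p["W_k"], x @ p["W_v"]
    weights = softmax(q @ k.T / np.sqrt(q.shape[-1]))
    return (weights @ v) @ p["W_o"]

def ffn(x, p):
    # Expand, apply the nonlinearity, project back: each token on its own.
    return gelu(x @ p["W_1"] + p["b_1"]) @ p["W_2"] + p["b_2"]

def transformer_block(x, p):
    h = layer_norm(x + self_attention(x, p))  # attention, add, norm
    return layer_norm(h + ffn(h, p))          # FFN, add, norm

def init_block(rng, d_model, d_ff):
    # Hypothetical initializer: small random weights, zero biases.
    w = lambda *shape: rng.normal(size=shape) * 0.02
    return {
        "W_q": w(d_model, d_model), "W_k": w(d_model, d_model),
        "W_v": w(d_model, d_model), "W_o": w(d_model, d_model),
        "W_1": w(d_model, d_ff), "b_1": np.zeros(d_ff),
        "W_2": w(d_ff, d_model), "b_2": np.zeros(d_model),
    }

rng = np.random.default_rng(0)
d_model, d_ff, n_blocks = 16, 64, 4
blocks = [init_block(rng, d_model, d_ff) for _ in range(n_blocks)]  # separate weights per block

x = rng.normal(size=(3, d_model))  # 3 tokens
h = x
for p in blocks:
    h = transformer_block(h, p)    # same shape in, same shape out
print(h.shape)  # (3, 16)
```

Because every block maps `[seq_len, d_model]` to `[seq_len, d_model]`, stacking is just a loop.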

This block repeats N times. Each repetition has its own set of weights. As data passes through more blocks, the representations become increasingly abstract and contextual.

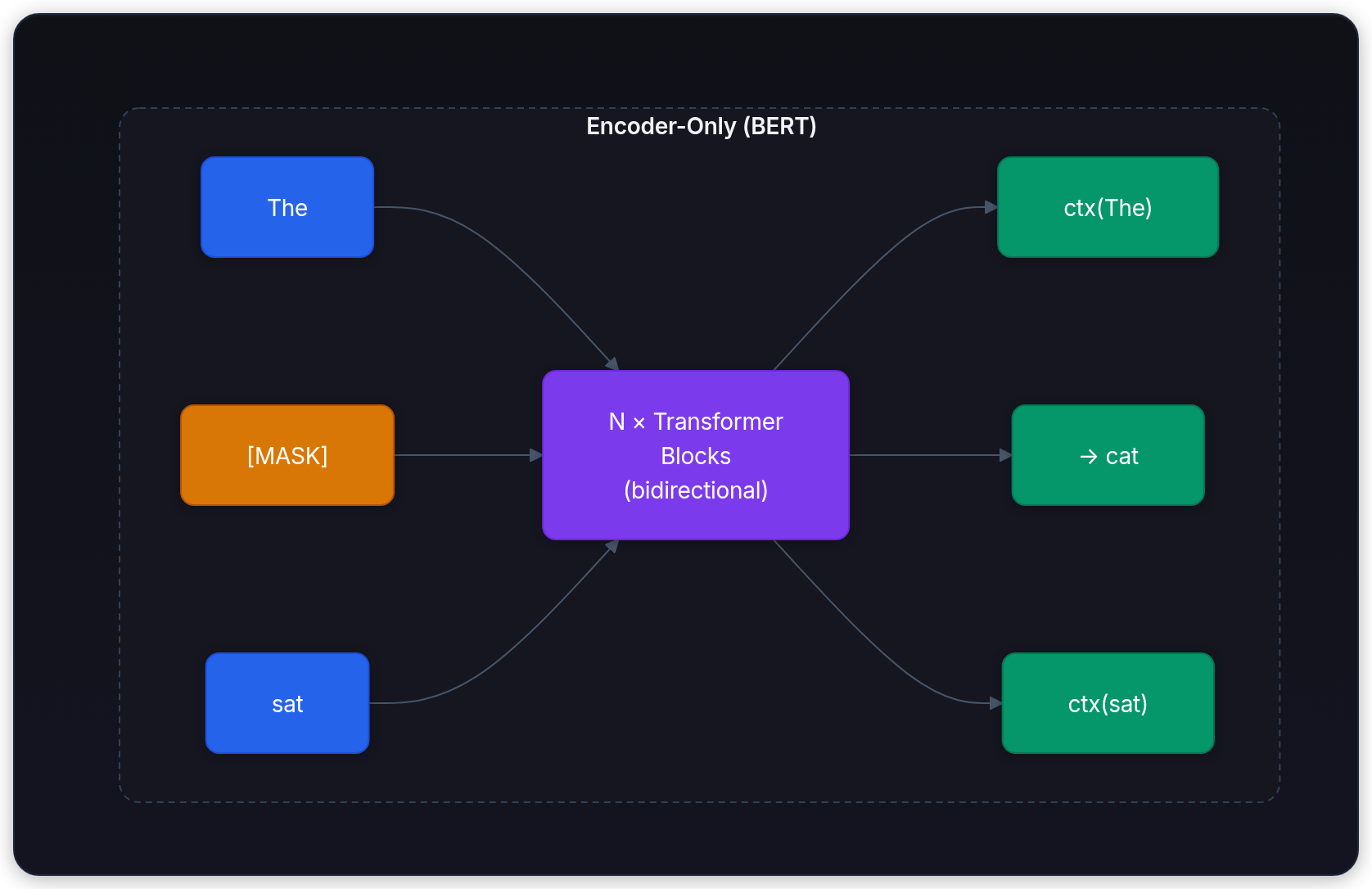

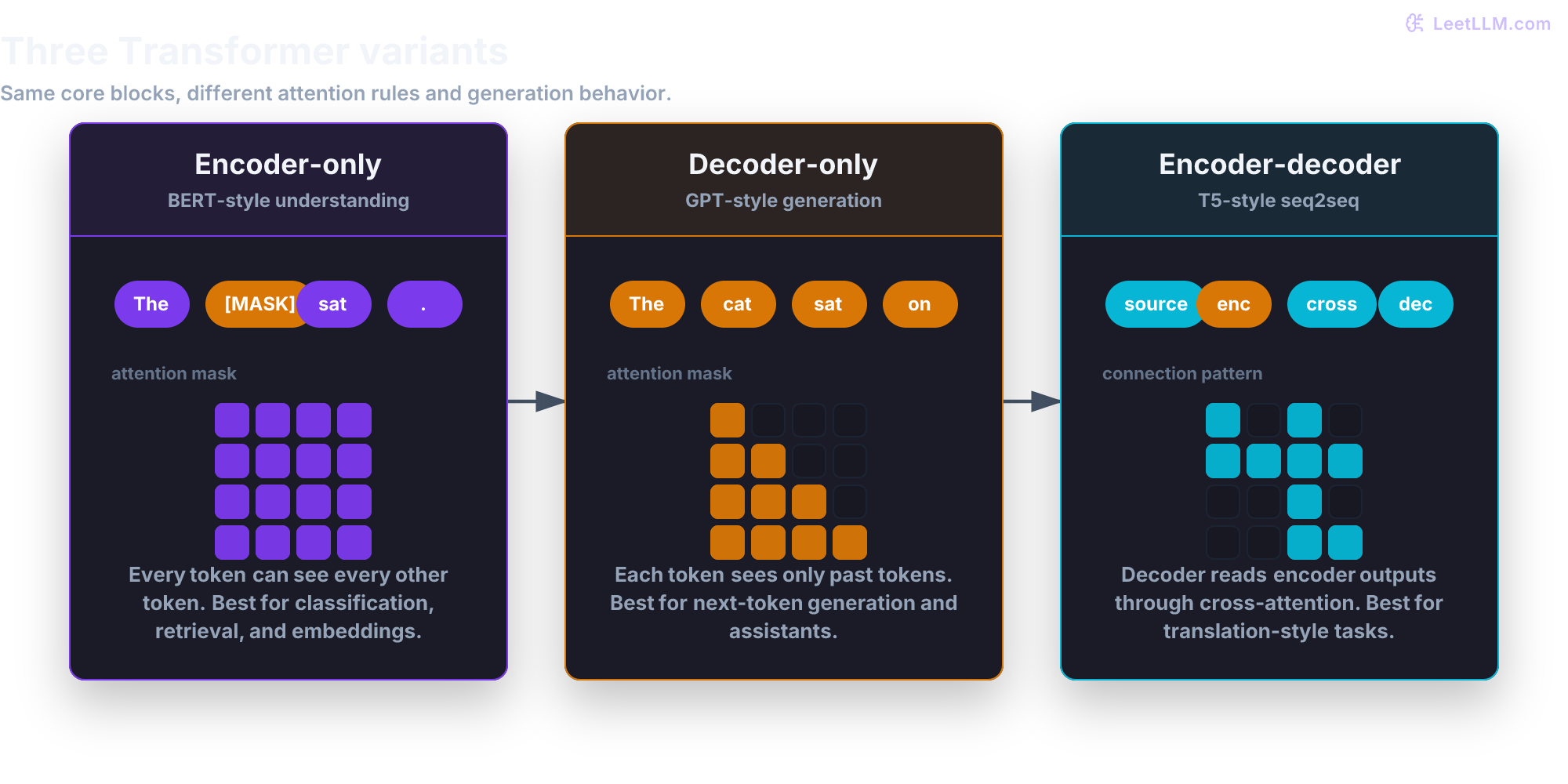

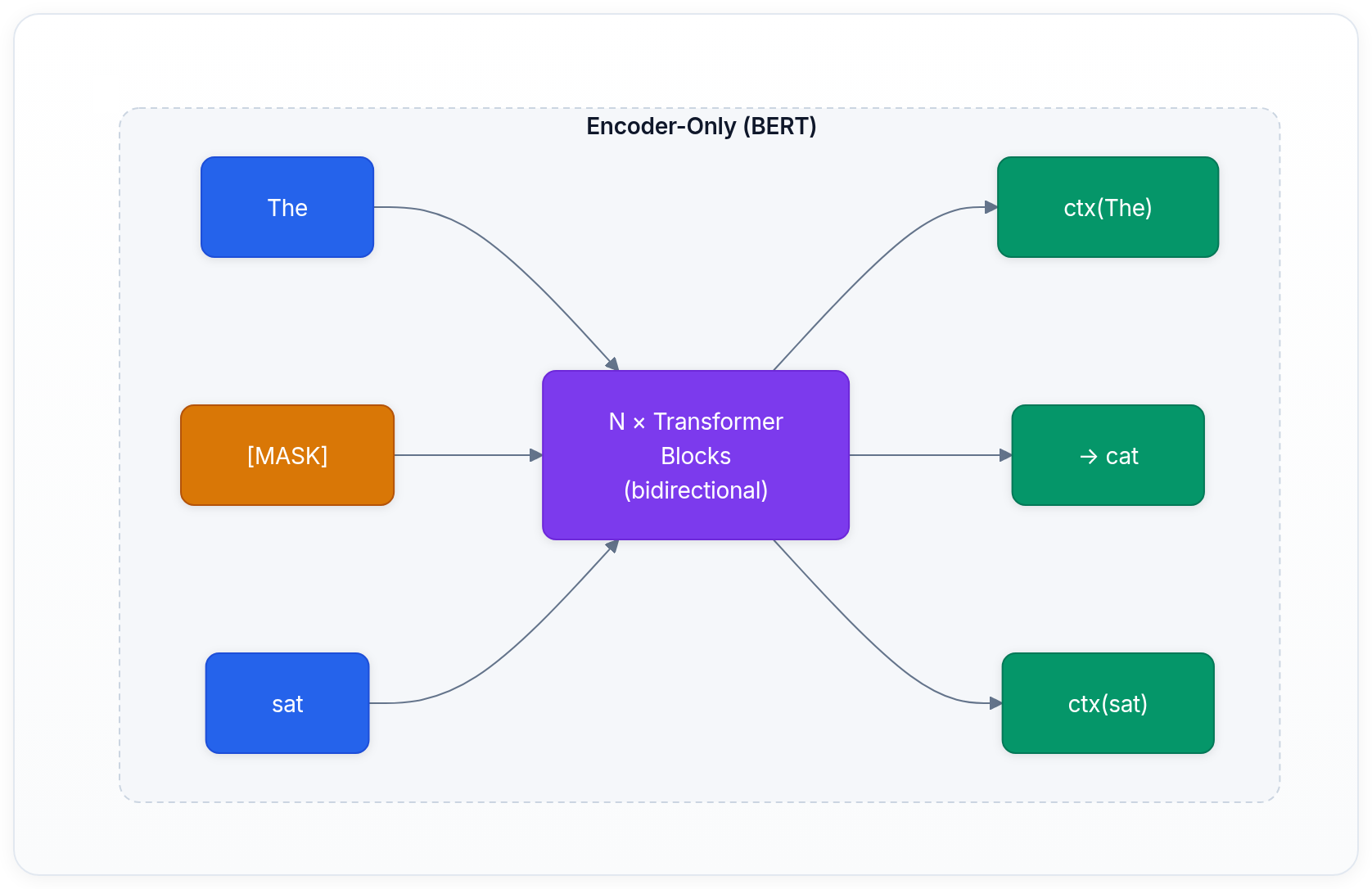

The encoder: bidirectional understanding

The encoder processes the entire input sequence simultaneously and bidirectionally. Every token can attend to every other token, in both directions. This is ideal for tasks where you need to understand the full input before producing an output, like classification, named entity recognition, or creating a semantic representation of text.

The encoder stack is N identical transformer blocks, as described above. The output is a sequence of contextualized vectors, one per input token, where each vector captures the meaning of that token in the context of the entire sequence.

BERT: the encoder-only model

BERT (Bidirectional Encoder Representations from Transformers) showed that an encoder-only model, trained with a masked language modeling objective ("fill in the blank"), could learn powerful representations that transfer to many downstream tasks.[5]

BERT sees all tokens at once. During training, it masks random tokens and learns to predict them using the surrounding context. This bidirectional understanding makes it excellent for classification and information extraction, but it can't generate text token-by-token.

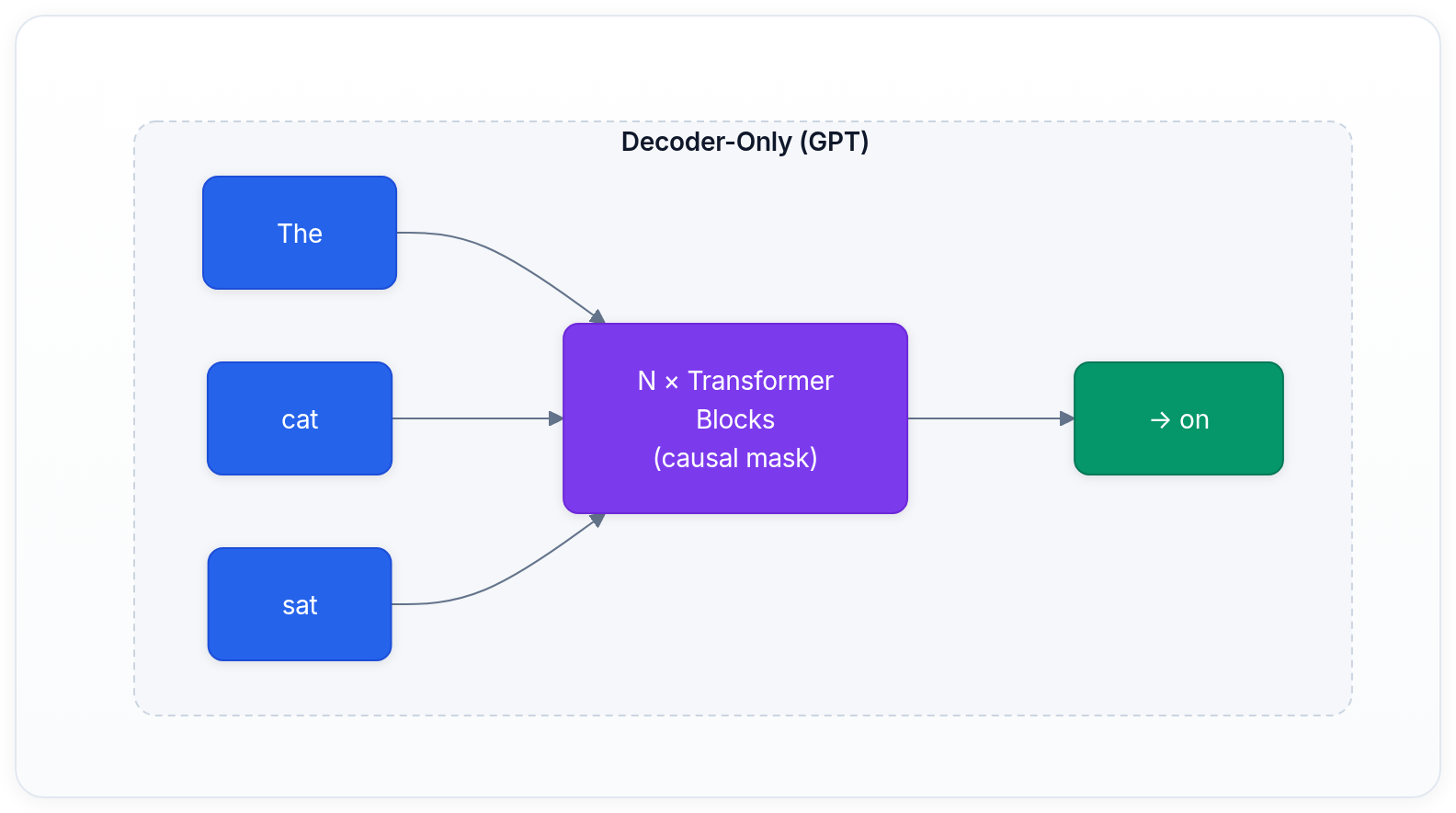

The decoder: autoregressive generation

The decoder generates output one token at a time, left to right. At each step, it can only see the tokens that came before the current position. This is enforced by a causal mask in the attention layer that blocks future positions.

Causal masking: can't peek at the future

In the attention computation, the causal mask sets the scores for all positions to the right of the current token to $-\infty$ before the softmax. This zeroes out their attention weights, ensuring the model predicts each token based only on preceding context:

| | The | cat | sat | on |
|---|---|---|---|---|
| The | ✅ | ❌ | ❌ | ❌ |
| cat | ✅ | ✅ | ❌ | ❌ |
| sat | ✅ | ✅ | ✅ | ❌ |
| on | ✅ | ✅ | ✅ | ✅ |

✅ = can attend, ❌ = masked (set to $-\infty$ before softmax)

When predicting the token after "sat", the model can attend to "The", "cat", and "sat", but not "on." This is what makes generation possible: the model learns to predict the next token given only the preceding tokens.
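The mask can be reproduced directly. The sketch below uses random numbers in place of real attention scores (an assumption for illustration) and shows that masked positions end up with a weight of exactly zero after the softmax.

```python
import numpy as np

tokens = ["The", "cat", "sat", "on"]
n = len(tokens)

rng = np.random.default_rng(0)
scores = rng.normal(size=(n, n))  # stand-in for Q @ K.T / sqrt(d_k)

# True strictly above the diagonal marks "future" positions.
future = np.triu(np.ones((n, n), dtype=bool), k=1)
masked_scores = np.where(future, -np.inf, scores)

# Row-wise softmax; exp(-inf) is exactly 0, so masked positions get zero weight.
shifted = masked_scores - masked_scores.max(axis=-1, keepdims=True)
exp_scores = np.exp(shifted)
weights = exp_scores / exp_scores.sum(axis=-1, keepdims=True)

np.set_printoptions(precision=2, suppress=True)
print(weights)
```

The first row puts all of its weight on "The", since that is the only visible token, and each later row spreads its weight over one more position.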

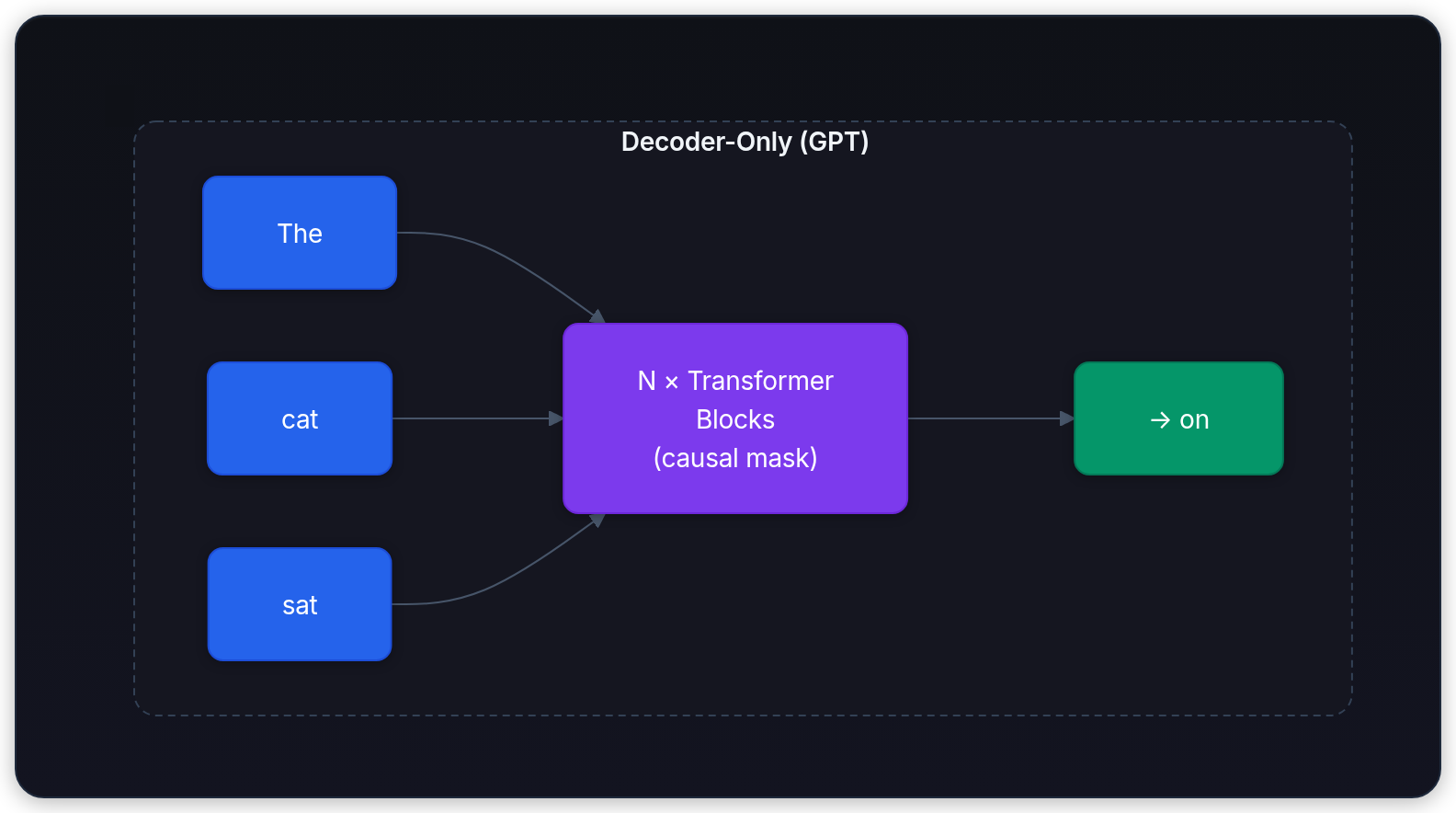

GPT: the decoder-only model

GPT (Generative Pre-trained Transformer) is the decoder-only architecture family that made large-scale autoregressive language modeling practical.[6][7][8]

The decoder-only approach is simpler (one stack instead of two), easier to scale, and naturally supports open-ended generation. This is why it became the dominant architecture for general-purpose large language models.

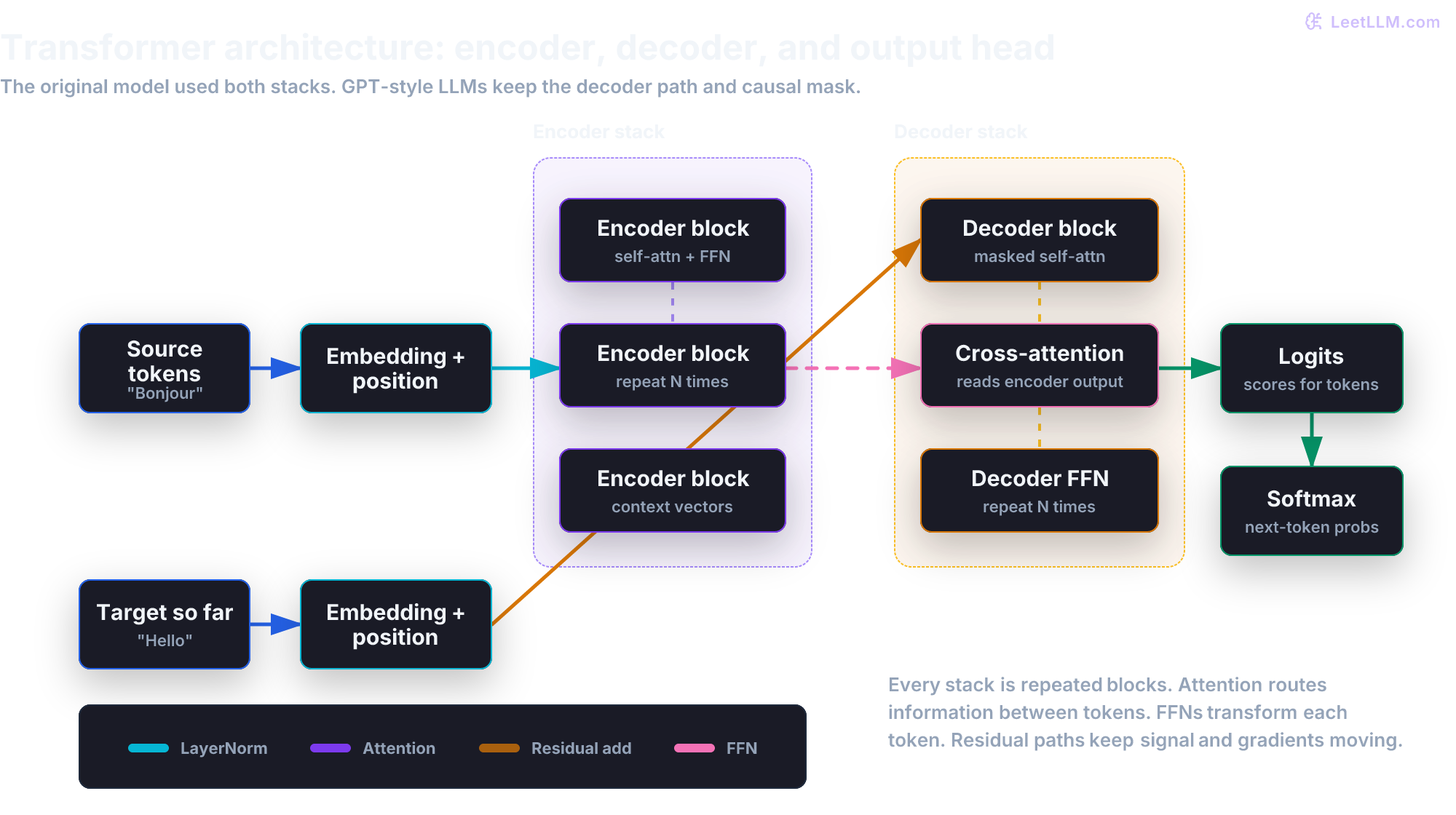

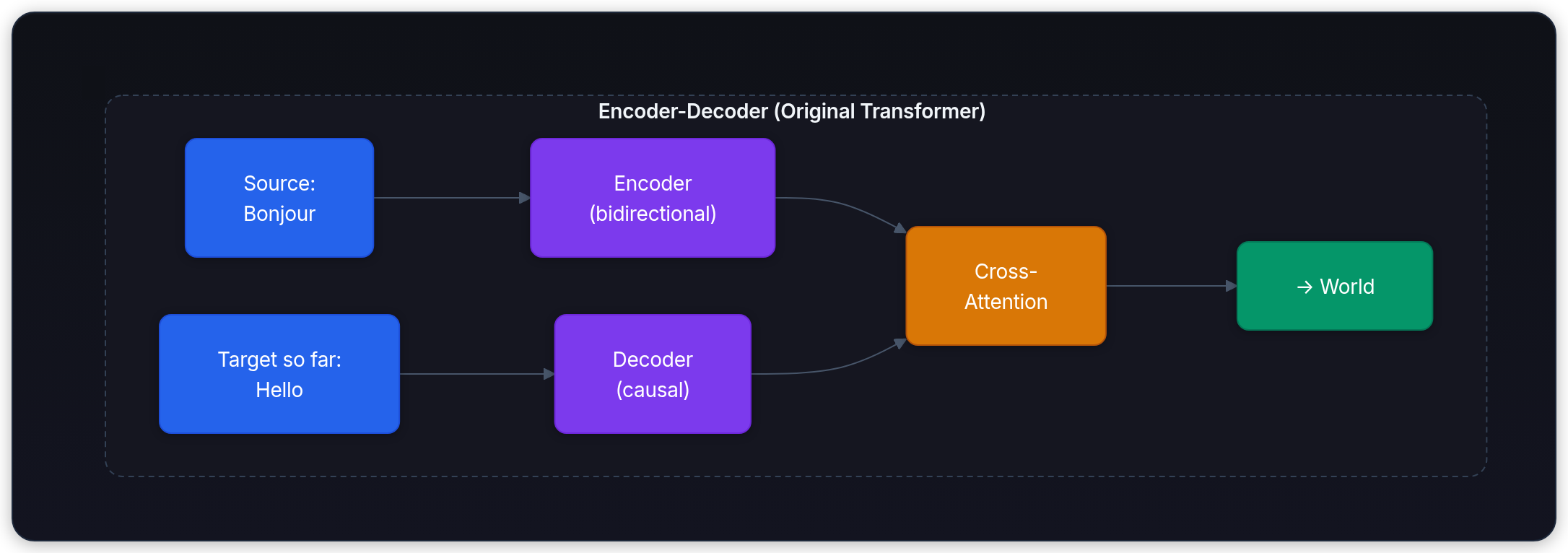

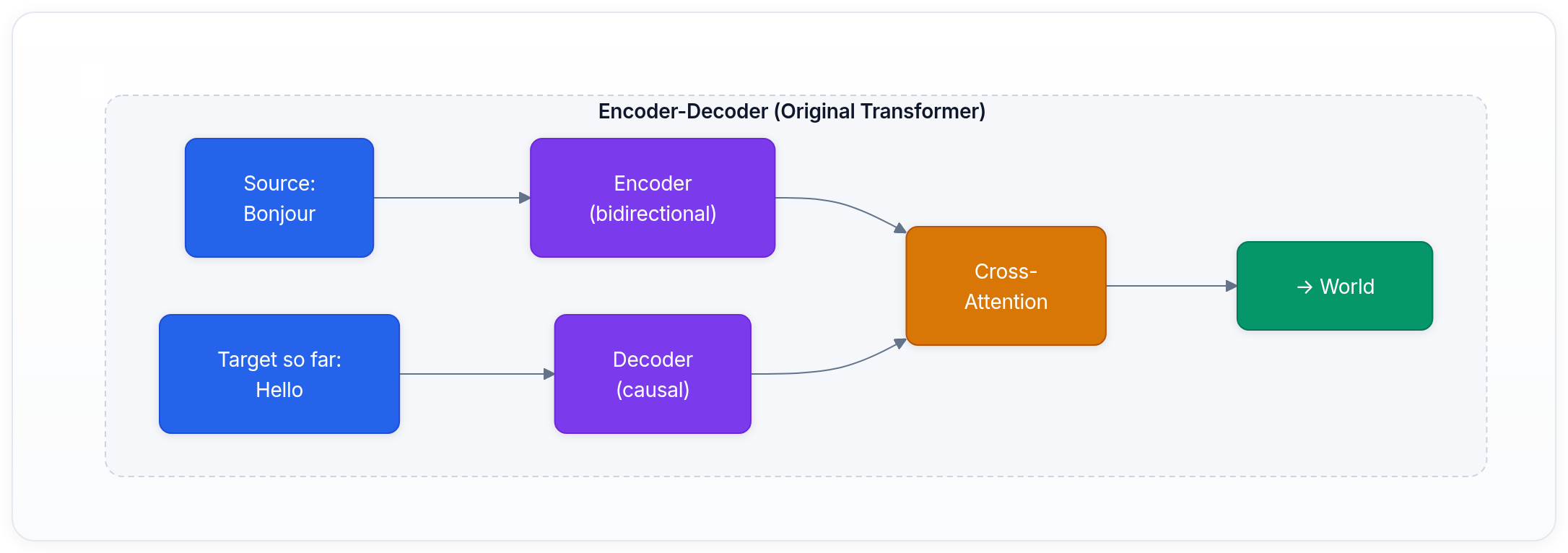

The original encoder-decoder: the full Transformer

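The original Transformer paper introduced both an encoder and decoder working together for machine translation.[1] The key addition is cross-attention, which lets the decoder "read" the encoder's output:

$$\text{CrossAttention}(Q_{\text{dec}}, K_{\text{enc}}, V_{\text{enc}}) = \text{softmax}\left(\frac{Q_{\text{dec}} K_{\text{enc}}^\top}{\sqrt{d_k}}\right) V_{\text{enc}}$$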

In cross-attention, the queries come from the decoder (what the decoder is looking for), while the keys and values come from the encoder (what the source sentence contains). This lets each generated word in the target language attend to the most relevant words in the source language.
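A minimal single-head sketch makes the asymmetry concrete: queries are computed from decoder states, keys and values from encoder states. The function name, weight names, and toy sizes are assumptions for illustration.

```python
import numpy as np

def softmax(x):
    x = x - x.max(axis=-1, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=-1, keepdims=True)

def cross_attention(dec_states, enc_states, W_q, W_k, W_v):
    q = dec_states @ W_q  # queries: what the decoder is looking for
    k = enc_states @ W_k  # keys: what the source sentence contains
    v = enc_states @ W_v  # values: the source information to copy over
    weights = softmax(q @ k.T / np.sqrt(q.shape[-1]))  # [tgt_len, src_len]
    return weights @ v, weights

rng = np.random.default_rng(0)
src_len, tgt_len, d_model = 5, 2, 8  # 5 source tokens, 2 target tokens so far
enc_states = rng.normal(size=(src_len, d_model))
dec_states = rng.normal(size=(tgt_len, d_model))
W_q, W_k, W_v = (rng.normal(size=(d_model, d_model)) * 0.1 for _ in range(3))

out, weights = cross_attention(dec_states, enc_states, W_q, W_k, W_v)
print(out.shape)      # (2, 8): one vector per target position
print(weights.shape)  # (2, 5): each target position distributes weight over the source
```

No causal mask is needed here, because the whole source sentence is already available when the decoder runs.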

T5 and sequence-to-sequence models

T5 (Text-to-Text Transfer Transformer) by Google unified many NLP tasks into a single encoder-decoder format: every task is framed as "input text → output text."[9] Summarization, translation, question answering, and classification all use the same architecture with different input/output prefixes.

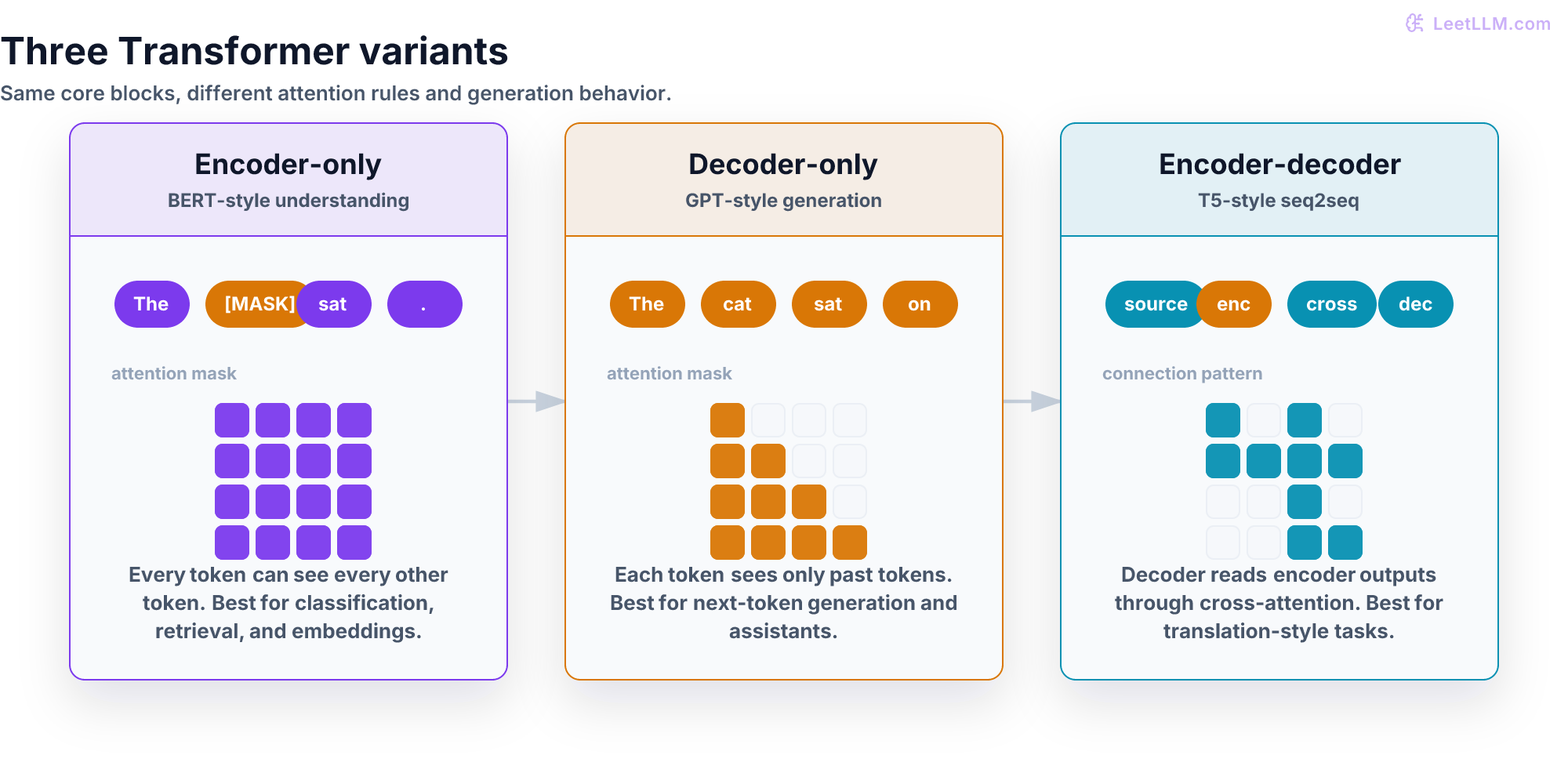

The three architecture variants: when to use which

| Variant | Representative Models | Attention Type | Strength | Weakness |
|---|---|---|---|---|
| Encoder-only | BERT, RoBERTa, DeBERTa | Bidirectional | Understanding, classification, embeddings | Can't generate text |
| Decoder-only | GPT, Llama, Qwen | Causal (left-to-right) | Text generation, general-purpose AI | Doesn't see future context |
| Encoder-decoder | T5, BART, mBART | Both + cross-attention | Translation, summarization with clear input→output | More complex, harder to scale |

For general-purpose text generation, the field has moved heavily toward decoder-only models. The simplicity and scalability of this approach won out, and in-context learning reduced the need for separate encoder representations.

Residual connections: the secret to going deep

We mentioned residual connections briefly, but they deserve more attention because they're what makes deep transformers possible at all.

The idea, from He et al.'s ResNet paper, is simple: instead of learning the full transformation $H(x)$, each layer learns the residual $F(x) = H(x) - x$, and the output is $F(x) + x$.[2]

Why does this matter? Without residual connections, training a 96-layer network is nearly impossible. Gradients either vanish (become too small to update early layers) or explode (become so large they destabilize training). The residual connection gives gradients a direct shortcut path from the output all the way back to the input, regardless of how many layers are in between.
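A scalar toy model shows the effect. Differentiating $y = x + F(x)$ gives $1 + F'(x)$, so every layer contributes at least the identity to the gradient. The sketch below assumes, purely for illustration, that every layer has the same small local slope of 0.01; real networks have matrix Jacobians, and normalization and initialization also play a part.

```python
def input_gradient(n_layers, local_slope, residual):
    """Gradient of the output with respect to the input for a chain of scalar layers.

    Without a residual connection each layer multiplies the gradient by F'(x).
    With one, each layer multiplies it by 1 + F'(x).
    """
    grad = 1.0
    for _ in range(n_layers):
        layer_grad = local_slope + (1.0 if residual else 0.0)
        grad *= layer_grad
    return grad

n_layers, local_slope = 96, 0.01  # hypothetical values for illustration
plain = input_gradient(n_layers, local_slope, residual=False)
with_skip = input_gradient(n_layers, local_slope, residual=True)

print(f"without residuals: {plain:.3e}")   # vanishingly small
print(f"with residuals:    {with_skip:.3f}")  # stays on the order of 1
```

The same identity term can also let gradients grow when the local slopes are large, which is one reason residual connections are paired with normalization.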

| Path | What it carries | Why it helps |
|---|---|---|
| Identity path | Original input vector | Preserves information and gradient flow. |
| Attention path | Token-to-token context | Adds information from relevant positions. |
| FFN path | Per-token transformation | Adds learned knowledge and nonlinear features. |

There's also an intuitive benefit: each transformer block doesn't need to learn the entire representation from scratch. It only needs to learn a small refinement of its input. The identity path carries the original signal forward, and the attention + FFN path adds corrections. This makes each block's job easier.

💡 Key insight: Residual connections give each block a conservative option: keep the input and learn a small correction. That shortcut is why very deep LLMs can train without forcing every layer to relearn the whole representation from scratch.

End-to-end walkthrough: tracing "The cat sat"

Let's trace the sentence "The cat sat" through a complete decoder-only transformer, step by step.

Step 1: tokenization

The tokenizer splits "The cat sat" into token IDs. Using a BPE tokenizer, this might produce [464, 3797, 3332].

Step 2: embedding lookup

Each token ID is used to look up a learned vector from the embedding table. If our model uses a hidden dimension of 768, we now have a matrix of shape [3, 768], where each row is a 768-dimensional vector representing one token.
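In code, the lookup is just row indexing into a matrix. The sketch below uses a randomly initialized table and a deliberately small toy vocabulary of 5,000 rows to keep memory low (a GPT-2-sized table would have 50,257 rows); in a trained model the rows are learned.

```python
import numpy as np

vocab_size, d_model = 5000, 768  # toy vocabulary size, assumed for this example
rng = np.random.default_rng(0)
embedding_table = rng.normal(scale=0.02, size=(vocab_size, d_model))

token_ids = np.array([464, 3797, 3332])  # "The cat sat"
x = embedding_table[token_ids]           # pick one row per token ID

print(x.shape)  # (3, 768)
```

Each row of `x` is simply a copy of the table row for that token, so two occurrences of the same token start from the same vector before position and context are mixed in.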

Step 3: positional encoding

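Since self-attention on its own is order-agnostic (permuting the input tokens just permutes the outputs in the same way), we need to inject positional information. Many modern models use RoPE, which encodes position directly into the attention computation by rotating the query and key vectors rather than adding a position vector to the embeddings.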
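A minimal sketch of the rotation idea behind RoPE, assuming the interleaved-pair formulation and the commonly used base of 10000: each pair of dimensions is rotated by an angle proportional to the token's position. The function name `rope` and the toy vectors are illustrative, and production implementations differ in layout and caching.

```python
import numpy as np

def rope(x, base=10000.0):
    """Rotate each (even, odd) pair of dimensions by a position-dependent angle.

    x: [seq_len, d] with d even; row m is treated as position m.
    """
    seq_len, d = x.shape
    pos = np.arange(seq_len)[:, None]          # [seq_len, 1]
    freqs = base ** (-np.arange(0, d, 2) / d)  # [d/2], one frequency per pair
    angles = pos * freqs                       # [seq_len, d/2]
    cos, sin = np.cos(angles), np.sin(angles)

    x_even, x_odd = x[:, 0::2], x[:, 1::2]
    out = np.empty_like(x)
    out[:, 0::2] = x_even * cos - x_odd * sin  # standard 2D rotation
    out[:, 1::2] = x_even * sin + x_odd * cos
    return out

# Put the same query and key vector at every position to isolate the effect of position.
rng = np.random.default_rng(0)
q_vec, k_vec = rng.normal(size=8), rng.normal(size=8)
q = rope(np.tile(q_vec, (4, 1)))
k = rope(np.tile(k_vec, (4, 1)))
scores = q @ k.T

# Entries with the same offset between query and key position are equal.
print(scores[1, 0], scores[2, 1], scores[3, 2])
```

Because rotations preserve length, the vectors keep their norms, and the query-key dot product depends only on the relative offset between the two positions.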

Step 4: transformer blocks (×N)

The [3, 768] matrix enters the first transformer block:

- Self-attention: Each token computes Q, K, V projections. Token "sat" attends to "The" and "cat" (but not future tokens, thanks to causal masking). The attention output captures contextual relationships.

- Add & norm: The attention output is added back to the input and normalized.

- FFN: Each token's 768-dim vector is expanded to 3072 dimensions, transformed with GELU, and projected back to 768. This is where the model applies learned knowledge.

- Add & norm: The FFN output is added back and normalized.

This repeats for every block. After 32 blocks, the initial token representations have been refined 32 times, each time incorporating more context and knowledge.

Step 5: output projection

The final hidden state (shape [3, 768]) is multiplied by the output projection matrix (shape [768, vocab_size]). This produces logits: one score per vocabulary token. For a vocabulary of 50,257 tokens, the output is [3, 50257].

Step 6: next-token prediction

We take the logits for the last position (corresponding to "sat") and apply softmax to get a probability distribution over the vocabulary. The model might assign high probability to tokens like "on", "down", "there", and "quietly." We sample from this distribution to get the next token.

Then we append that token to the sequence, and the whole process repeats for the next position. This is autoregressive generation.
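The projection, softmax, sampling, and append steps can be sketched as a loop. Here `toy_model` is a hypothetical stand-in that skips the transformer blocks entirely (embedding lookup followed by the output projection), and the vocabulary size, weights, and prompt IDs are made up; the point is only the shape of the generation loop.

```python
import numpy as np

rng = np.random.default_rng(0)
vocab_size, d_model = 100, 16  # toy sizes
embedding = rng.normal(size=(vocab_size, d_model))
W_out = rng.normal(size=(d_model, vocab_size))

def toy_model(token_ids):
    """Stand-in for the full stack: returns logits of shape [seq_len, vocab_size]."""
    hidden = embedding[token_ids]  # the N transformer blocks would go here
    return hidden @ W_out

def softmax(z):
    z = z - z.max()
    e = np.exp(z)
    return e / e.sum()

def generate(prompt_ids, n_new, rng):
    token_ids = list(prompt_ids)
    for _ in range(n_new):
        logits = toy_model(np.array(token_ids))
        probs = softmax(logits[-1])  # only the last position predicts the next token
        next_id = int(rng.choice(vocab_size, p=probs))
        token_ids.append(next_id)    # feed the new token back in on the next step
    return token_ids

out = generate([4, 37, 33], n_new=5, rng=rng)
print(out)
```

Swapping `rng.choice` for `int(np.argmax(probs))` would turn this into greedy decoding.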

Where each component lives in the curriculum

This article gives you the map. Here's where to go deep on each piece:

| Component | Deep-dive article |
|---|---|
| Tokenization | BPE, WordPiece, and SentencePiece |
| Embeddings | Static to Contextual Embeddings |
| Attention mechanism | Scaled Dot-Product Attention |
| Multi-head / grouped-query attention | Multi-Query & Grouped-Query Attention |
| Positional encoding | Positional Encoding: RoPE & ALiBi |
| Layer normalization | Layer Normalization: Pre-LN vs Post-LN |
| Decoding / sampling | Decoding Strategies: Greedy to Nucleus |
| Scaling the architecture | Scaling Laws & Compute-Optimal Training |
| Efficient attention | FlashAttention & Memory Efficiency |
| Training the model | Pre-training Data at Scale |

Key takeaways

- The Transformer is built from four core components stacked into repeating blocks: multi-head attention, residual connections, layer normalization, and feed-forward networks

- The FFN (feed-forward network) has more parameters than attention and acts as each block's knowledge store

- Residual connections enable training networks 100+ layers deep by giving gradients a direct shortcut path

- Encoder-only models (BERT) understand bidirectionally but can't generate; decoder-only models (GPT-style models) generate left-to-right with causal masking; encoder-decoder models (T5) use cross-attention for sequence-to-sequence tasks

- General-purpose LLMs are now mostly decoder-only: simpler, more scalable, and effective for open-ended generation

- Every token flows through the same pipeline: tokenize → embed → add position → N × (attend + FFN) → project → softmax → predict

- This article is the map; each component has its own deep-dive article in the curriculum

Next, continue to Language Modeling & Next Tokens, where this architecture becomes an autoregressive generator.

Evaluation Rubric

1. Traces a complete input sentence through every stage of a transformer, from raw text to output probabilities

2. Explains the transformer block as attention + residual + layer norm + FFN + residual + layer norm

3. Differentiates encoder (bidirectional) from decoder (causal, autoregressive) and their masking strategies

4. Explains why residual connections solve the degradation problem in deep networks

5. Describes the role of the FFN as the per-token knowledge transformation step between attention layers

6. Maps the three major architecture variants (encoder-only, decoder-only, encoder-decoder) to their use cases and representative models

7. Connects each component to its dedicated deep-dive article in the curriculum

Common Pitfalls

- Thinking the transformer is just attention (it's attention + FFN + residual connections + layer norm, and the FFN has more parameters than attention)

- Not knowing that GPT-style LLMs are decoder-only, meaning they dropped the encoder entirely

- Confusing the number of attention heads with the number of layers (heads are parallel within one layer; layers are sequential)

- Forgetting that residual connections are essential, not optional, for training deep transformers

- Assuming BERT and GPT have the same architecture (BERT is encoder-only with bidirectional attention; GPT is decoder-only with causal masking)


Key Concepts Tested

- Complete data flow: tokens to embeddings to transformer blocks to output logits

- The transformer block: attention, add & norm, FFN, add & norm

- Residual connections and why they're essential for deep networks

- Encoder vs decoder: what each does and when you need which

- Cross-attention: how encoder outputs inform decoder predictions

- Encoder-only (BERT), decoder-only (GPT), and encoder-decoder (T5) variants

- The FFN as the knowledge store within each transformer block

References

Attention Is All You Need.

Vaswani, A., et al. · 2017

BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding.

Devlin, J., et al. · 2019 · NAACL 2019

Improving Language Understanding by Generative Pre-Training.

Radford, A., et al. · 2018

Language Models are Unsupervised Multitask Learners.

Radford, A., et al. · 2019

Language Models are Few-Shot Learners.

Brown, T., et al. · 2020 · NeurIPS 2020

Exploring the Limits of Transfer Learning with a Unified Text-to-Text Transformer.

Raffel, C., et al. · 2020 · JMLR

Deep Residual Learning for Image Recognition.

He, K., Zhang, X., Ren, S., Sun, J. · 2015 · CVPR 2016

Layer Normalization.

Ba, J. L., Kiros, J. R., & Hinton, G. E. · 2016

Deep Learning.

Goodfellow, I., Bengio, Y., Courville, A. · 2016

Transformer Feed-Forward Layers Are Key-Value Memories.

Geva, M., Schuster, R., Berant, J., Levy, O. · 2021 · EMNLP 2021