
Statistics and Uncertainty

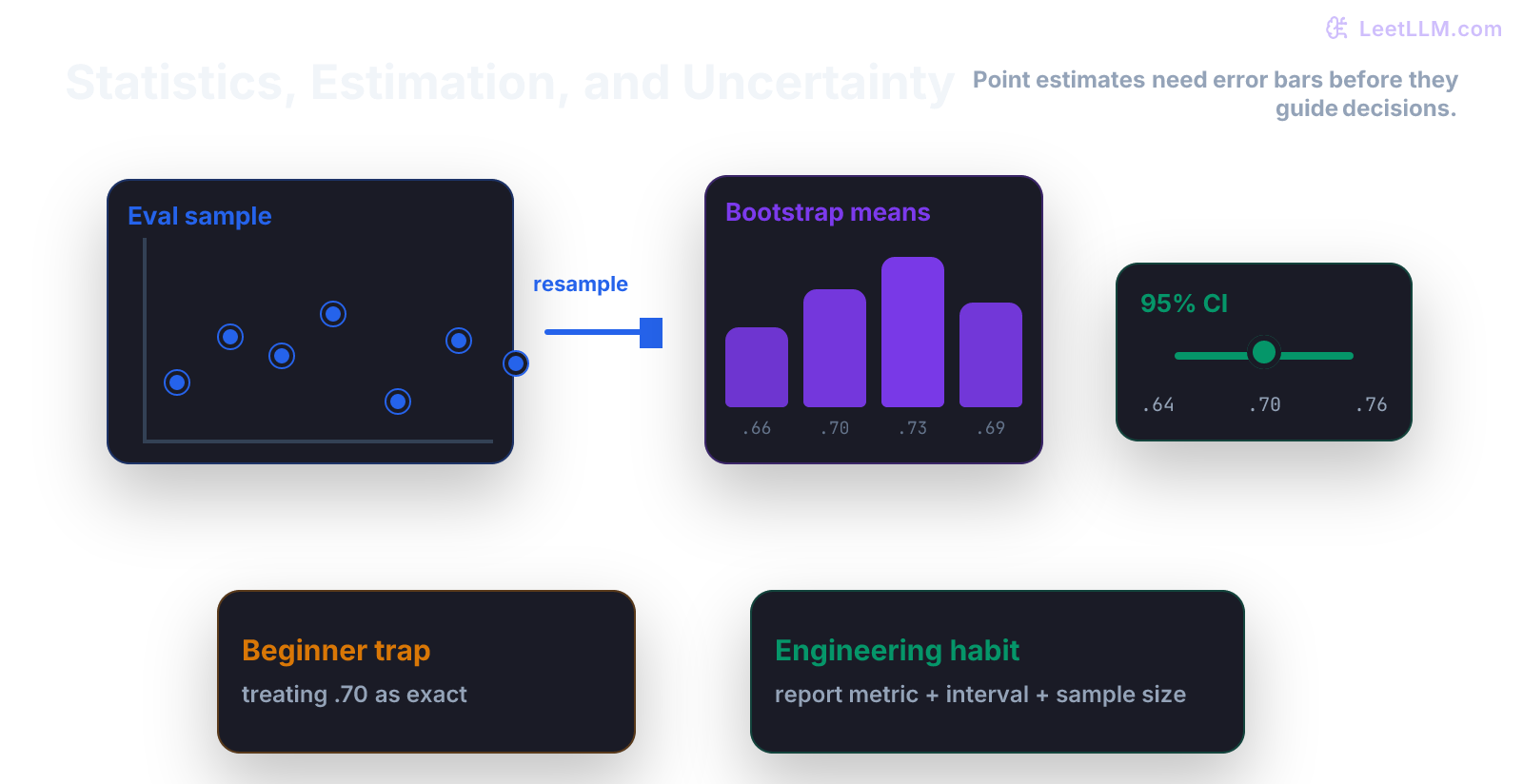

A math-book style chapter on estimates, sample size, standard error, and confidence intervals using model accuracy as the running example.


Statistics starts with a humbling idea: your dataset is only a sample.

If an evaluation says a model got 84 out of 100 examples right, the model's observed accuracy is 84 percent. But that doesn't mean the model's true accuracy is exactly 84 percent on future traffic.

The sample is a spoonful, not the whole pot.

What You Learn

This chapter teaches how to separate a score from the uncertainty around that score. That habit is the difference between "the metric went up" and "the evidence is strong enough to launch."[1][2][3]

Step Map

| Step | Question | What you should be able to do |
|---|---|---|
| 1 | What did we observe? | Compute a sample metric. |
| 2 | What are we trying to know? | Name the unknown true value. |
| 3 | How noisy is the estimate? | Compute standard error or bootstrap spread. |
| 4 | What range is plausible? | Report an interval, not just one score. |
| 5 | What decision follows? | Compare the interval to the launch threshold. |

Probability taught you how to reason about uncertain events. Statistics teaches you how much to trust estimates from finite data.

Tiny Story

You evaluate an answer classifier on 100 held-out examples.

It gets 84 correct.

| Quantity | Value |
|---|---|
| Correct answers | 84 |
| Total examples | 100 |
| Observed accuracy | 0.84 |

The point estimate is:

$$\hat{p} = \frac{84}{100} = 0.84$$

The hat in $\hat{p}$ means "estimate." It isn't the exact truth. It is your best guess from this sample.

Worked Example

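A useful first approximation for accuracy uncertainty is the standard error:

$$SE(\hat{p}) = \sqrt{\frac{\hat{p}(1 - \hat{p})}{n}}$$

For 84 correct out of 100:

$$SE(\hat{p}) = \sqrt{\frac{0.84 \times 0.16}{100}} \approx 0.0367$$

A rough 95 percent interval uses about two standard errors (1.96, to be precise) on each side:

$$\hat{p} \pm 1.96 \cdot SE(\hat{p})$$

That gives approximately:

$$0.84 \pm 0.072 \quad\Rightarrow\quad [0.768,\ 0.912]$$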

Plain English:

The observed score is 84 percent, but with only 100 examples, a plausible range is roughly 77 percent to 91 percent.

That range is wide. A one-point improvement isn't convincing when the uncertainty band is this large.
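Step 3 of the Step Map lists bootstrap spread as an alternative to the standard error formula. The sketch below is one minimal way to compute it, using only the standard library. The function name `bootstrap_standard_error`, the 2,000 resamples, and the fixed seed are illustrative choices, not part of the chapter.

```python
import math
import random


def bootstrap_standard_error(outcomes, n_resamples=2000, seed=0):
    """Estimate the standard error of accuracy by resampling with replacement."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    n = len(outcomes)
    resampled_accuracies = []
    for _ in range(n_resamples):
        resample = rng.choices(outcomes, k=n)  # draw n outcomes with replacement
        resampled_accuracies.append(sum(resample) / n)
    mean = sum(resampled_accuracies) / n_resamples
    variance = sum((a - mean) ** 2 for a in resampled_accuracies) / (n_resamples - 1)
    return math.sqrt(variance)


# 84 correct (1) and 16 incorrect (0), matching the worked example.
outcomes = [1] * 84 + [0] * 16
p_hat = sum(outcomes) / len(outcomes)
formula_se = math.sqrt(p_hat * (1 - p_hat) / len(outcomes))
bootstrap_se = bootstrap_standard_error(outcomes)

print(f"formula SE:   {formula_se:.4f}")
print(f"bootstrap SE: {bootstrap_se:.4f}")
```

The two numbers should land close to each other. The bootstrap becomes more useful for metrics that have no simple closed-form standard error.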

Same Score, Different Evidence

Now compare two evaluations:

| Result | Observed accuracy | What changes? |
|---|---|---|
| 84 / 100 | 0.84 | Small sample, wide interval |
| 8400 / 10000 | 0.84 | Large sample, narrow interval |

Both have the same center. They don't have the same evidence strength.

That is one of the most important lessons in applied ML evaluation:

A metric without sample size isn't a conclusion.

What Sample Size Buys You

Use the same formula again, but slow down the story.

The estimate stays at 0.84:

$$\hat{p} = 0.84$$

The part that changes is the denominator:

$$SE(\hat{p}) = \sqrt{\frac{0.84 \times 0.16}{n}}$$

When n grows, the fraction gets smaller. The square root gets smaller too. That means the interval gets tighter.

| Evaluation | Standard error | Rough 95 percent interval |
|---|---|---|
| 84 / 100 | about 0.0367 | 0.768 to 0.912 |
| 840 / 1000 | about 0.0116 | 0.817 to 0.863 |
| 8400 / 10000 | about 0.0037 | 0.833 to 0.847 |

This table is the bridge from math to judgment.

With 100 examples, 84 percent could still mean the true performance is in the high 70s. With 10,000 examples, the score is pinned much closer to 84 percent.

That doesn't make big evals automatically honest. Bad sampling can still fool you. It only means random wobble got smaller.
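The rows of the sample-size table can be reproduced with a few lines of Python. This is a sketch under the same rough approximation used throughout the chapter. The helper name `rough_interval` is made up for this example.

```python
import math


def rough_interval(successes, n, z=1.96):
    """Return (standard_error, low, high) for an observed proportion."""
    p_hat = successes / n
    standard_error = math.sqrt(p_hat * (1 - p_hat) / n)
    return standard_error, p_hat - z * standard_error, p_hat + z * standard_error


# The three evaluations from the table: same accuracy, growing sample size.
rows = [(84, 100), (840, 1000), (8400, 10000)]
for successes, n in rows:
    se, low, high = rough_interval(successes, n)
    print(f"{successes:>5} / {n:<6} SE={se:.4f}  interval={low:.3f} to {high:.3f}")
```

Note the rate of improvement: each tenfold increase in n shrinks the standard error by a factor of about 3.16 (the square root of 10), not by a factor of 10.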

A Report That Reads Like Evidence

A useful evaluation note has four parts:

| Part | Example |
|---|---|
| Metric | accuracy on held-out answer labels |
| Estimate | 84 correct out of 100, so 0.84 |
| Uncertainty | rough 95 percent interval from 0.768 to 0.912 |
| Decision | not enough evidence for a 1-point launch threshold |

That form is plain, but it prevents metric theater.

When someone asks "is the new model better?", you can answer with evidence:

We saw a one-point lift, but the interval is wider than that. The honest answer is that we need a larger or better-targeted eval before launch.

Vocabulary

- sample: the data you actually observed.

- population: the larger world you want to make a claim about.

- estimator: a rule that calculates a value from data, such as sample accuracy.

- estimate: the number produced by the estimator.

- bias: systematic error that pushes estimates in one direction.

- variance: how much an estimate moves from sample to sample.

- standard error: rough size of that sample-to-sample wobble.

- confidence interval: a range produced by a repeatable procedure. Under its assumptions, the procedure captures the true value at the chosen long-run rate.

Don't memorize these as vocabulary cards. Tie them to the table above.

Code Lab

Put this in statistics_demo.py:

```python
import math


def accuracy_interval(successes: int, n: int):
    """Return (estimate, low, high) for a rough 95 percent interval."""
    p = successes / n
    standard_error = math.sqrt(p * (1 - p) / n)
    half_width = 1.96 * standard_error  # about two standard errors
    return p, p - half_width, p + half_width


# Same observed accuracy, different sample sizes.
for successes, n in [(84, 100), (8400, 10000)]:
    estimate, low, high = accuracy_interval(successes, n)
    print(successes, n, round(estimate, 3), round(low, 3), round(high, 3))
```

Read the output as:

```text
successes total estimate low high
```

The two rows should have the same estimate and different interval widths.

Why Intervals Matter

Suppose your current model scores 84 percent and a new model scores 85 percent.

With 100 examples, that difference is probably not enough evidence. With 100,000 examples, it might be.

Statistics doesn't remove judgment. It makes judgment harder to fake.
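To make the 84 versus 85 percent comparison concrete, here is a rough sketch that puts an interval around the difference itself. It assumes the two scores behave like independent samples of the same size. When both models are scored on the same examples, a paired comparison would usually be more appropriate, and this sketch ignores that. The function name `difference_interval` is hypothetical.

```python
import math


def difference_interval(correct_a, correct_b, n, z=1.96):
    """Rough interval for accuracy(B) - accuracy(A), each scored on n examples.

    Assumption: the two scores are treated as independent samples.
    """
    p_a = correct_a / n
    p_b = correct_b / n
    # Variances of independent estimates add.
    se_diff = math.sqrt(p_a * (1 - p_a) / n + p_b * (1 - p_b) / n)
    diff = p_b - p_a
    return diff, diff - z * se_diff, diff + z * se_diff


# 84 vs 85 percent at two sample sizes.
for correct_a, correct_b, n in [(84, 85, 100), (84_000, 85_000, 100_000)]:
    diff, low, high = difference_interval(correct_a, correct_b, n)
    verdict = "includes 0" if low <= 0 <= high else "excludes 0"
    print(f"n={n}: diff={diff:.3f}, interval={low:.4f} to {high:.4f} ({verdict})")
```

At 100 examples the interval for the difference straddles zero, so the data cannot distinguish "B is better" from "B is worse." At 100,000 examples the same one-point gap sits clearly above zero.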

MLE And MAP

You will see MLE (maximum likelihood estimation) and MAP (maximum a posteriori estimation) later.

At foundation level, keep the mental model simple:

- MLE chooses the parameter that makes the observed data most likely.

- MAP does the same thing, but also includes prior belief.

For the accuracy example, the MLE for the success probability is just the observed proportion:

$$\hat{p}_{\text{MLE}} = \frac{\text{successes}}{n} = \frac{84}{100} = 0.84$$

Later, when models have millions or billions of parameters, the idea is still familiar: choose parameters that explain the data well.
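For the accuracy example, the difference between MLE and MAP can be sketched in a few lines. The MAP version below assumes a Beta prior on the success probability, which is a common textbook choice but is not something this chapter specifies. The prior values `prior_a=2.0` and `prior_b=2.0` are purely illustrative.

```python
def mle_accuracy(successes, n):
    """MLE of a success probability: the observed proportion."""
    return successes / n


def map_accuracy(successes, n, prior_a=2.0, prior_b=2.0):
    """MAP estimate under a Beta(prior_a, prior_b) prior (the posterior mode).

    Assumption: the Beta prior and its default values are illustrative only.
    """
    post_a = prior_a + successes
    post_b = prior_b + (n - successes)
    if post_a <= 1 or post_b <= 1:
        raise ValueError("posterior mode is not interior for these parameters")
    return (post_a - 1) / (post_a + post_b - 2)


print(mle_accuracy(84, 100))
print(round(map_accuracy(84, 100), 4))      # prior pulls the estimate slightly
print(round(map_accuracy(8400, 10000), 4))  # more data, less pull from the prior
```

With a flat Beta(1, 1) prior the MAP estimate equals the MLE. With more data, the prior matters less and the two estimates converge.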

Common Trap

The common trap is treating the metric as the truth.

Bad report:

Model B is better. It got 85 percent and Model A got 84 percent.

Better report:

Model B scored 85 percent on 100 examples. Model A scored 84 percent. The interval is wider than the difference, so this isn't enough evidence to launch.

The second report is less flashy. It is also much more useful.

Mini Test

Add this test:

```python
def test_more_examples_narrows_interval():
    _, low_small, high_small = accuracy_interval(84, 100)
    _, low_big, high_big = accuracy_interval(8400, 10000)

    small_width = high_small - low_small
    big_width = high_big - low_big

    assert big_width < small_width
```

This test protects the core idea: more data should reduce uncertainty when the observed proportion stays the same.

Practice

- Compute 84 / 100 by hand.

- Explain why 84 / 100 and 8400 / 10000 aren't equally strong evidence.

- Change the code to evaluate 52 / 60.

- Write one sentence that starts: "I wouldn't launch yet because..."

- Add a guard that rejects `n = 0`.

Production Check

Every evaluation report should include:

- sample size

- metric definition

- data split or sampling rule

- estimate

- uncertainty interval

- decision threshold

- slices where the model might fail differently

For LLM systems, slices matter. A model can look good overall and still fail on long documents, non-English prompts, or code-heavy answers.
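A per-slice report can be sketched as follows. The slice names and the records are made up for illustration. The point is only that each slice gets its own sample size and its own interval. The helper name `slice_report` is hypothetical.

```python
import math
from collections import defaultdict


def slice_report(records, z=1.96):
    """Group (slice_name, is_correct) records and summarize each slice."""
    counts = defaultdict(lambda: [0, 0])  # slice -> [correct, total]
    for slice_name, is_correct in records:
        counts[slice_name][0] += int(is_correct)
        counts[slice_name][1] += 1

    report = {}
    for slice_name, (correct, total) in counts.items():
        p_hat = correct / total
        half_width = z * math.sqrt(p_hat * (1 - p_hat) / total)
        report[slice_name] = {
            "n": total,
            "accuracy": p_hat,
            # Clamp to [0, 1]; the rough interval can overshoot on small slices.
            "low": max(0.0, p_hat - half_width),
            "high": min(1.0, p_hat + half_width),
        }
    return report


# Made-up records for illustration only: 100 examples, 84 correct overall.
records = (
    [("short_english", True)] * 74 + [("short_english", False)] * 6
    + [("long_document", True)] * 10 + [("long_document", False)] * 10
)

for name, row in slice_report(records).items():
    print(f"{name}: n={row['n']}, accuracy={row['accuracy']:.2f}, "
          f"interval={row['low']:.2f} to {row['high']:.2f}")
```

In this toy data the overall score is still 84 percent, but the long-document slice sits at 50 percent on only 20 examples, with a very wide interval. Both facts belong in the report.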

Next, continue to Distributions and Sampling. You now know a sample can wobble. The next chapter shows how to simulate that wobble before production traffic exists.

Evaluation Rubric

1. Explains why a sample score is an estimate, not the exact future score

2. Computes standard error and a confidence interval by hand and in Python

3. Reports sample size, interval width, and slice uncertainty before making launch claims

Common Pitfalls

- A single score without an interval hides risk. 84 percent on 100 examples and 84 percent on 10,000 examples shouldn't drive the same launch decision.

- A small slice can look great or terrible by luck. Report slice sample size before trusting the slice.

- A confidence interval doesn't prove the true value is inside. It describes the behavior of the estimation process.
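The last pitfall can be checked by simulation. The sketch below pretends the true accuracy is known (it never is in practice), runs many simulated evaluations, and counts how often the rough interval captures the true value. The true accuracy of 0.84, the 5,000 trials, and the function names are all assumptions made for this illustration.

```python
import math
import random


def interval_covers(true_p, n, rng, z=1.96):
    """Simulate one evaluation and check whether its interval contains true_p."""
    successes = sum(rng.random() < true_p for _ in range(n))
    p_hat = successes / n
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / n)
    return p_hat - half_width <= true_p <= p_hat + half_width


def coverage_rate(true_p=0.84, n=100, trials=5000, seed=0):
    """Fraction of simulated evaluations whose interval captured true_p."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    hits = sum(interval_covers(true_p, n, rng) for _ in range(trials))
    return hits / trials


print(f"coverage at n=100: {coverage_rate():.3f}")
```

Any single interval either contains the true value or it doesn't. The "95 percent" describes how often the procedure succeeds across repeated evaluations, and because the formula is an approximation, the simulated rate is typically near 95 percent rather than exactly equal to it.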


Key Concepts Tested

estimators, bias, variance, MLE, MAP, confidence intervals, calibration

References