📝EasyNLP Fundamentals

Prompt Engineering Fundamentals

Learn how to steer language models using System messages, Zero-Shot vs Few-Shot prompting, and Chain of Thought reasoning.

20 min readGoogle, Meta, OpenAI +14 key concepts

We now know that modern LLMs are autoregressive models trained on massive text corpora and fine-tuned with human feedback. But how do you use them effectively as a developer?

Prompt Engineering is the art and science of communicating with LLMs to get reliable, high-quality, and structured outputs.

💡 Key insight: An LLM is a brilliant but amnesiac intern. It has absorbed broad patterns from training data, but it knows nothing about your current project unless you put that context in the prompt.

The chat API structure

When you use ChatGPT in the browser, you just type a message. But under the hood (and when you use the API), the conversation is structured into distinct roles.

A typical API request looks like this:

json1[ 2 {"role": "system", "content": "You are a senior Python engineer. Always reply with working code and no explanations."}, 3 {"role": "user", "content": "Write a function to reverse a string."}, 4 {"role": "assistant", "content": "def reverse_string(s):\n return s[::-1]"}, 5 {"role": "user", "content": "Now do it without slicing."} 6]

- System Message: The persistent "rules of the game". It sets the persona, formatting rules, and constraints. It heavily anchors the model's behavior.

- User Message: The specific request or question from the human.

- Assistant Message: The model's previous replies. You pass these back in so the model has memory of the conversation.

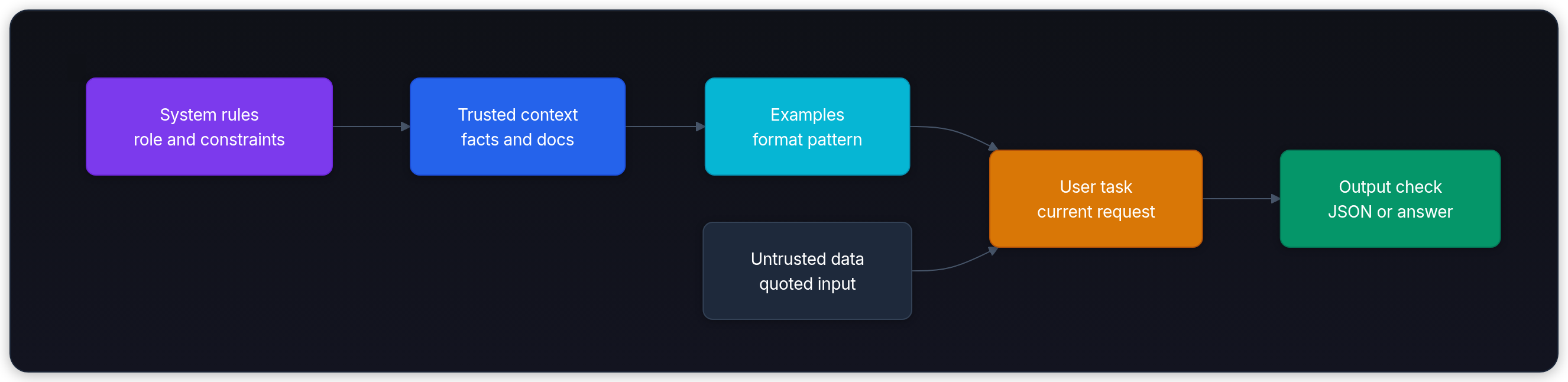

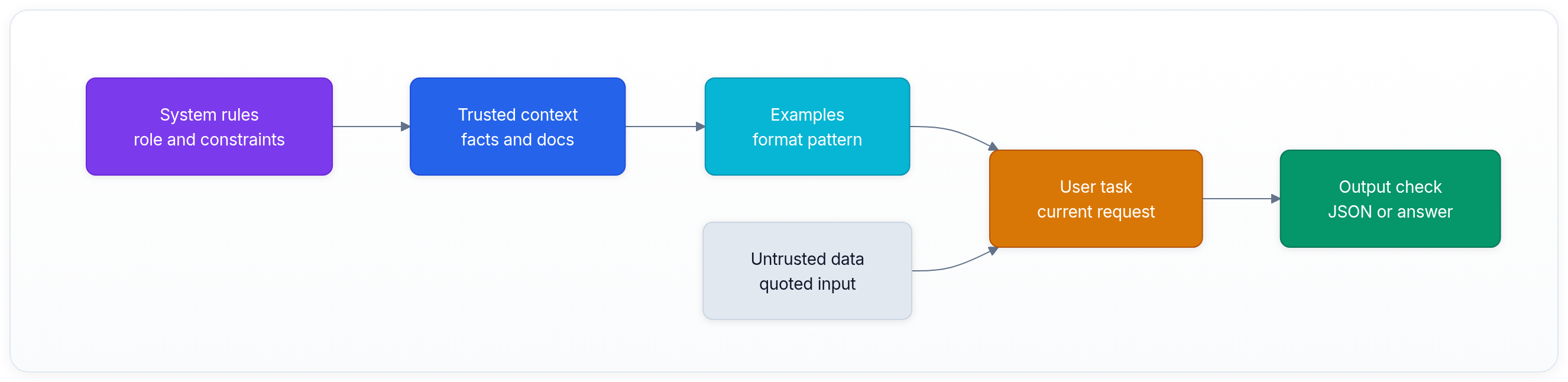

Prompting map

| Pattern | Add to context | Best beginner use | Failure to watch |

|---|---|---|---|

| Zero-shot | Task instruction only | Common tasks with obvious format | Model guesses hidden requirements. |

| Few-shot | Two or more examples | Exact output shape or style | Bad examples teach the wrong pattern. |

| Structured output | Schema and delimiters | JSON, extraction, tool inputs | User data leaks into instructions. |

| Reasoning prompt | Visible intermediate work when appropriate | Multi-step math or planning | Extra reasoning can be unnecessary for reasoning-specific models. |

Reliable prompts are built in layers. Put stable rules first, add trusted context and examples, isolate untrusted user data, then check the output shape.

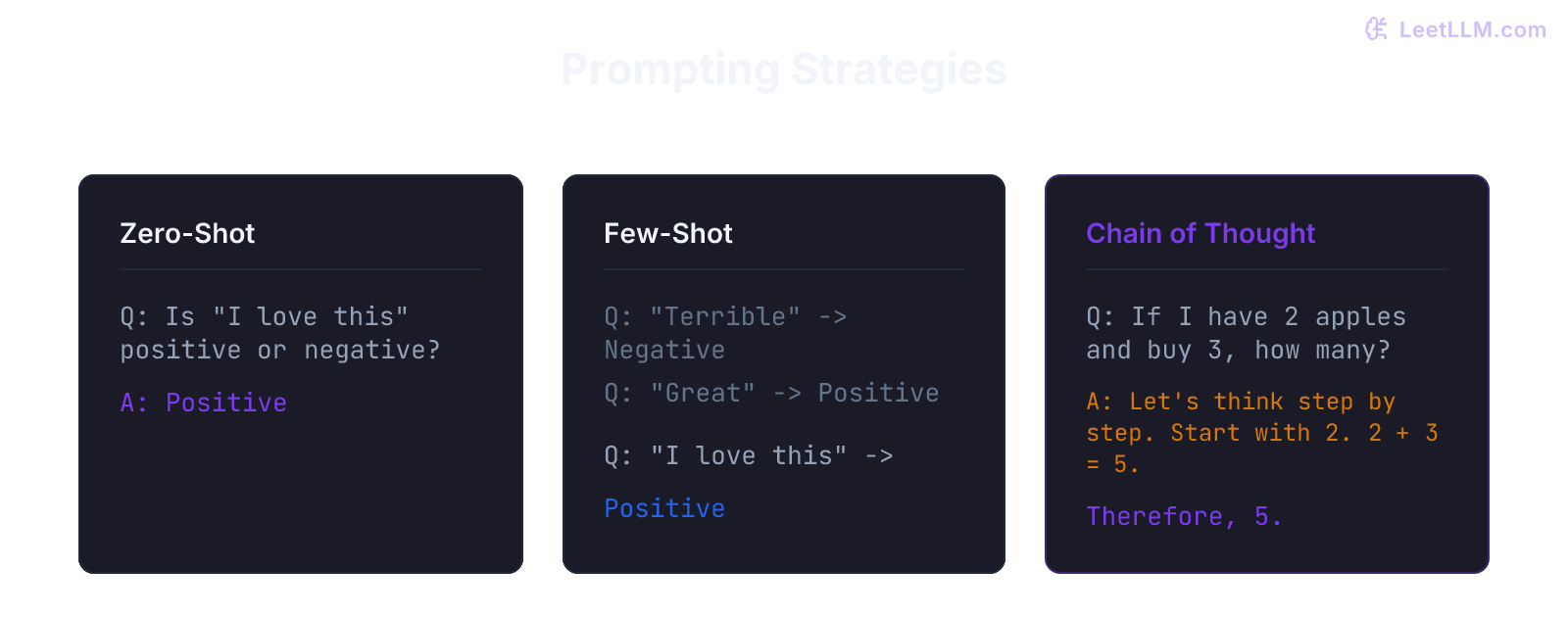

Zero-shot vs few-shot prompting

A zero-shot prompt asks the model to do something without examples. It relies entirely on its pre-training.

User: Translate "hello" to French.

A few-shot prompt provides examples in the prompt to teach the model the exact format or logic you want. This uses the model's in-context learning ability, made famous by GPT-3.[1]

User: English: cat -> French: chat English: dog -> French: chien English: bird -> French:

Few-shot prompting is incredibly powerful for forcing the model to output a specific JSON structure or adopt a weird, non-standard style.

Chain-of-thought prompting

One important prompt engineering discovery is chain-of-thought prompting.[2]

If you ask an LLM a complex math question and demand the answer immediately, it will often fail. Why? Because of autoregressive generation. The model has to output the final number immediately based only on the prompt.

Standard prompt

Q: If John has 5 apples, gives 2 to Mary, buys 3 more, and cuts them all in half, how many apple halves does he have? A:

For standard models, one fix is to make intermediate reasoning visible: add a phrase like "Let's think step by step", or provide a few-shot example that includes reasoning.

Chain-of-thought prompt

Q: If John has 5 apples, gives 2 to Mary, buys 3 more, and cuts them all in half, how many apple halves does he have? A: Let's think step by step. First, John starts with 5 apples. He gives 2 away, leaving him with 3. He buys 3 more, giving him 6. He cuts 6 apples in half, yielding 12 halves. The answer is 12.

By generating the intermediate steps, the model adds them to its context window. It uses its output as a scratchpad, making the final answer easier to predict.

Some reasoning models do extra hidden reasoning internally before outputting the final answer, so explicit chain-of-thought prompts aren't always the right interface.[3]

Best Practices for Developers

- Be specific and use delimiters: Use markdown, XML tags (

<data>...</data>), or triple quotes (""") to clearly separate your instructions from the user's data. This prevents prompt injection and confuses the model less. - Specify the output format: If you want JSON, ask for JSON and provide a schema.

- Give the model an "out": Tell it: "If you don't know the answer based on the provided text, say 'I don't know'." This reduces hallucinations.

- Repeat critical instructions near the end: Models can miss information buried in the middle of long contexts.[4] If you have a 10-page document, put the core instruction close to the final user request.

Next, continue to The LLM Lifecycle, where prompting fits into training, alignment, and deployment.

Evaluation Rubric

- 1Explains the difference between System, User, and Assistant message roles

- 2Differentiates between Zero-shot and Few-shot prompting

- 3Demonstrates how Chain of Thought (CoT) prompting forces the model to reason before answering

Common Pitfalls

- Treating the LLM like a human that can read your mind (if it's not in the prompt, the model doesn't know it)

- Putting complex instructions at the top of a very long prompt (models suffer from 'lost in the middle' syndrome; put crucial instructions at the very end)

Follow-up Questions to Expect

Key Concepts Tested

The Chat completions API structure (System, User, Assistant messages)Zero-shot vs One-shot vs Few-shot promptingChain of Thought (CoT) prompting to improve reasoningClear instructions, delimiters, and specifying output formats

References

Language Models are Few-Shot Learners.

Brown, T., et al. · 2020 · NeurIPS 2020

Chain-of-Thought Prompting Elicits Reasoning in Large Language Models.

Wei, J., et al. · 2022 · NeurIPS

Lost in the Middle: How Language Models Use Long Contexts

Liu, N.F., et al. · 2023 · TACL 2023

Reasoning models

OpenAI · 2026