
Distributions and Sampling

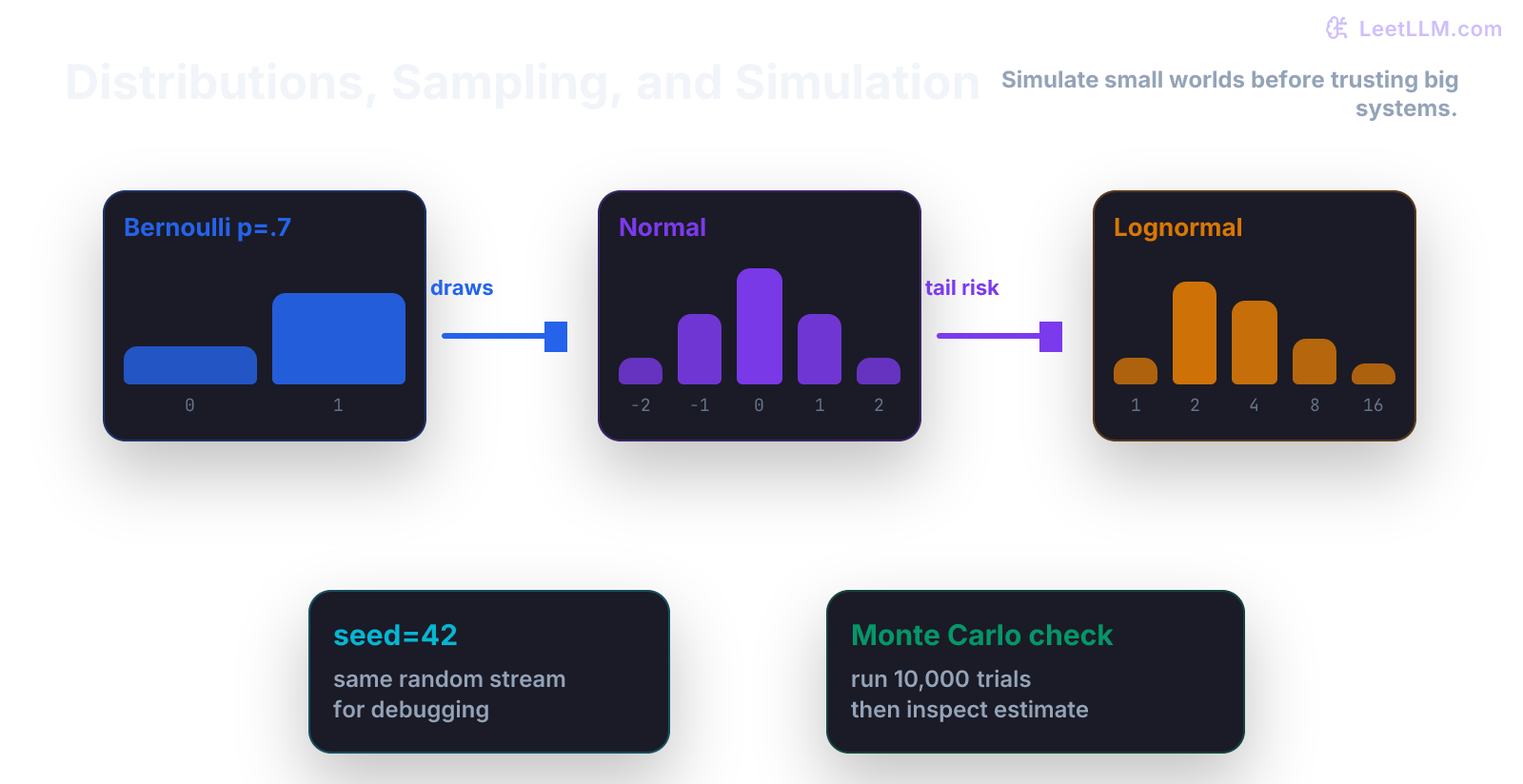

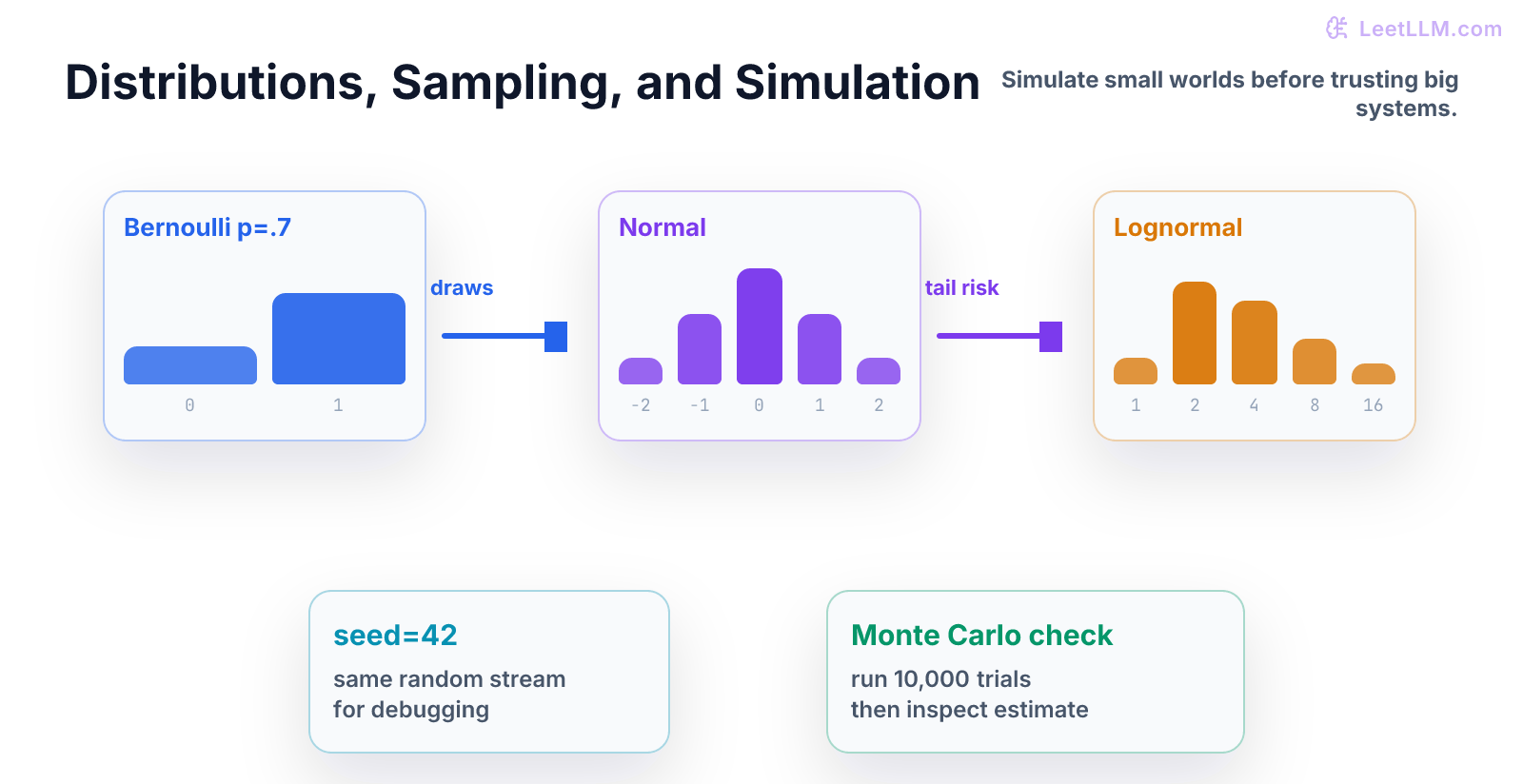

A beginner-first guide to distributions as recipes for randomness, with seeded NumPy simulations for clicks, latency, counts, and tail behavior.


A distribution is a recipe for randomness.

That sounds abstract, so start with product behavior. Some events are yes/no: a user clicked or didn't click. Some values are counts: a request used 14 tool calls. Some values are positive and skewed: latency is usually small, but occasionally huge.

Those shapes are different. If you simulate them all with the same bell curve, your code will teach you the wrong lesson.

What You Learn

This chapter teaches how to choose a simple distribution, draw samples, and use simulation as a safe practice field before real traffic arrives.[1][2][3]

Step Map

| Step | Question | What you should be able to do |
|---|---|---|
| 1 | What kind of value is this? | Match value type to distribution shape. |
| 2 | What parameters control it? | Name probability, mean, spread, or rate. |
| 3 | Can we sample it? | Generate repeatable fake data. |
| 4 | What summary matters? | Compare mean, percentile, or count. |
| 5 | What would break? | Notice wrong distribution choices. |

Statistics taught you that samples wobble. Distributions explain the shape of that wobble.

Tiny Story

Imagine you are designing an LLM support bot.

Before launch, you want fake traffic for a small load test.

You need to simulate:

| Product behavior | Value type | Good beginner distribution |
|---|---|---|
| User clicked "thumbs up" | yes/no | Bernoulli |
| User intent | category | Categorical |
| Number of tool calls | count | Poisson |
| Model latency | positive skewed number | Lognormal |
| Average eval score noise | centered continuous number | Normal |

This chapter isn't trying to turn you into a probability theorist. It teaches one habit: stop pretending all randomness has one shape.

Vocabulary

- random variable: a quantity whose value is uncertain before observation.

- distribution: a rule for which values can happen and how often.

- sample: one draw from a distribution.

- parameter: a number that controls the distribution, such as click probability.

- seed: a number that makes a random run repeatable.

- Monte Carlo: estimating behavior by running many random samples.

Analogy: a distribution is like a vending machine with rules. You don't know the next item, but you know which items are possible and how likely each one is.

Worked Example

For a thumbs-up click, the value is yes/no.

Use a Bernoulli distribution:

| Outcome | Numeric value | Probability |
|---|---|---|
| no click | 0 | 0.92 |
| click | 1 | 0.08 |

If you simulate 1,000 users, the click rate won't be exactly 0.08 every time. It should land near 0.08.

For latency, the value can't be negative. A normal distribution can generate negative numbers, so it's a poor first choice for latency. A lognormal distribution is often a better teaching example because it stays positive and creates a right tail.

That tail matters. Users often feel the 95th percentile, not the average.
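To make the "can't be negative" point concrete, here is a minimal sketch that counts impossible values in two simulated latency samples. The normal parameters (`loc=8.0`, `scale=5.0`) are assumptions chosen only for illustration; the lognormal parameters match the ones used later in this chapter.

```python
import numpy as np

rng = np.random.default_rng(7)

# Assumed illustrative parameters: a normal centered at 8 with a wide spread,
# and a lognormal with mean=2.0, sigma=0.4 on the log scale.
normal_latency = rng.normal(loc=8.0, scale=5.0, size=10_000)
lognormal_latency = rng.lognormal(mean=2.0, sigma=0.4, size=10_000)

# Share of simulated "latencies" that are impossible (below zero).
normal_negative_share = (normal_latency < 0).mean()
lognormal_negative_share = (lognormal_latency < 0).mean()

print("normal_negative_share", round(normal_negative_share, 3))
print("lognormal_negative_share", round(lognormal_negative_share, 3))
```

With these assumed parameters the normal sample contains some negative "latencies", while the lognormal sample contains none, because every lognormal draw is the exponential of a normal draw.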

Code Lab

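Put this in `distributions_demo.py`:

```python
import numpy as np

# A seeded generator makes every run produce the same draws.
rng = np.random.default_rng(7)

# 1,000 yes/no clicks: a binomial with n=1 is a Bernoulli trial.
clicks = rng.binomial(n=1, p=0.08, size=1000)
# 1,000 positive, right-skewed latency-like values.
# mean and sigma describe log(latency), not latency itself.
latency = rng.lognormal(mean=2.0, sigma=0.4, size=1000)

print("click_rate", round(clicks.mean(), 3))
print("mean_latency", round(latency.mean(), 2))
print("p95_latency", round(np.percentile(latency, 95), 2))
```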

Read it line by line:

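- `default_rng(7)` creates repeatable randomness.

- `binomial(n=1, p=0.08, size=1000)` creates 1,000 yes/no clicks. A binomial with `n=1` is a Bernoulli trial.

- `lognormal(...)` creates positive latency-like values. Its `mean` and `sigma` describe the logarithm of the values, so `mean=2.0` doesn't mean an average latency of 2.

- `percentile(latency, 95)` returns the latency that 95 percent of requests stay at or below, which is where the slowest 5 percent begin.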

The exact numbers matter less than the pattern:

```text
click_rate near 0.08
p95_latency greater than mean_latency
```

Second Worked Example: Categories

Now simulate routing for the same support bot.

Each request has one intent:

| Intent | Probability |
|---|---|
| billing | 0.25 |
| bug | 0.35 |
| account | 0.30 |
| other | 0.10 |

Use a categorical sample:

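```python
# Continues distributions_demo.py: reuses np and rng from above.
intents = np.array(["billing", "bug", "account", "other"])
probabilities = np.array([0.25, 0.35, 0.30, 0.10])  # must sum to 1

# Draw 20 requests, one intent each, with the given long-run frequencies.
sampled = rng.choice(intents, size=20, p=probabilities)
print(sampled[:10])
```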

This isn't a classifier yet. It is fake traffic with a controlled shape.

Read the line `p=probabilities` as a contract: each sampled request must choose one of the listed intents, and the long-run frequencies should match the probabilities.
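One way to check that contract is to draw a larger sample and compare observed shares with the target probabilities. This is a sketch, not a required step; the sample size of 10,000 is an assumption chosen so the shares settle close to their targets.

```python
import numpy as np

rng = np.random.default_rng(7)

intents = np.array(["billing", "bug", "account", "other"])
probabilities = np.array([0.25, 0.35, 0.30, 0.10])

# rng.choice raises a ValueError if the probabilities do not sum to 1.
assert np.isclose(probabilities.sum(), 1.0)

# Assumed sample size: large enough for long-run frequencies to show up.
sampled = rng.choice(intents, size=10_000, p=probabilities)

# Observed share of each intent in the sample.
observed = {str(intent): float((sampled == intent).mean()) for intent in intents}
for intent, target in zip(intents, probabilities):
    print(intent, "target", target, "observed", round(observed[str(intent)], 3))
```

With only 20 draws the shares can be far from the targets; the match is a long-run property, not a guarantee for small samples.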

Third Worked Example: Counts

Tool calls are counts: 0, 1, 2, 3, and so on.

A Poisson distribution is a common first model for counts in a fixed window:

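```python
# Continues distributions_demo.py: reuses np and rng from above.
# lam is the expected number of tool calls per request.
tool_calls = rng.poisson(lam=2.5, size=1000)

print("mean_tool_calls", round(tool_calls.mean(), 2))
print("max_tool_calls", tool_calls.max())
```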

Read The Parameter

The parameter `lam=2.5` is the expected count. It doesn't mean every request has 2.5 tool calls. Counts are whole numbers, and individual requests still wobble.

Use the output to ask product questions:

| Question | Summary to inspect |
|---|---|
| What is normal load? | mean tool calls |
| How often are requests expensive? | percentage above 5 calls |
| What should rate limits protect? | max or high percentile count |

Here is one more useful line:

```python
# Share of simulated requests that used more than 5 tool calls.
print("share_above_5", round((tool_calls > 5).mean(), 3))
```

That line turns simulation into a planning tool. It estimates how often a request crosses an operational threshold.
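For a Poisson model, the simulated share can be sanity-checked against the exact tail probability. The sketch below assumes the same `lam=2.5` and uses a larger sample (100,000, an assumption) so the two numbers should agree closely.

```python
import math

import numpy as np

rng = np.random.default_rng(7)
lam = 2.5
tool_calls = rng.poisson(lam=lam, size=100_000)

# Monte Carlo estimate of P(X > 5).
simulated_share = (tool_calls > 5).mean()

# Exact Poisson tail: P(X > 5) = 1 - sum over k = 0..5 of exp(-lam) * lam**k / k!
exact_share = 1.0 - sum(
    math.exp(-lam) * lam**k / math.factorial(k) for k in range(6)
)

print("simulated_share_above_5", round(simulated_share, 4))
print("exact_share_above_5", round(exact_share, 4))
```

If the two numbers disagree by much, that usually points to a bug in the simulation or a sample that is too small. Real traffic has no exact formula to fall back on, which is why the simulated estimate is the habit worth building.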

Why Seeded Randomness Matters

Random code without a seed is hard to debug.

If your teammate can't reproduce your sample, they can't tell whether a behavior changed because of your code or because randomness picked a different draw.
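A small sketch of what the seed buys you: two generators built from the same seed produce identical draws, while a different seed (8 here, chosen arbitrarily) produces a different sample from the same distribution.

```python
import numpy as np

# Same seed, same distribution, same size: identical arrays.
first = np.random.default_rng(7).binomial(n=1, p=0.08, size=1000)
second = np.random.default_rng(7).binomial(n=1, p=0.08, size=1000)

# A different seed gives a different draw from the same distribution.
other = np.random.default_rng(8).binomial(n=1, p=0.08, size=1000)

print("same_seed_identical", np.array_equal(first, second))
print("different_seed_identical", np.array_equal(first, other))
print("click rates", first.mean(), other.mean())
```

The two click rates differ slightly even though nothing in the code changed except the seed. That difference is the wobble a teammate can't separate from a real code change without knowing the seed.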

Named Generator Pattern

Use a named generator:

```python
import numpy as np

rng = np.random.default_rng(7)  # one named, seeded generator
print(rng.integers(0, 10, size=3))  # three integers from 0 to 9
```

Then pass `rng` around instead of calling global random functions from everywhere.
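Passing the generator around might look like the sketch below. The function names (`simulate_clicks`, `simulate_latency`, `run_simulation`) are hypothetical helpers invented for this example, not part of NumPy.

```python
import numpy as np


def simulate_clicks(rng, n_users, p_click=0.08):
    """Return an array of 0/1 clicks drawn from the generator passed in."""
    return rng.binomial(n=1, p=p_click, size=n_users)


def simulate_latency(rng, n_requests, mean=2.0, sigma=0.4):
    """Return positive latency-like values drawn from the generator passed in."""
    return rng.lognormal(mean=mean, sigma=sigma, size=n_requests)


def run_simulation(seed):
    # One generator is created here and handed to every helper.
    rng = np.random.default_rng(seed)
    clicks = simulate_clicks(rng, 1000)
    latency = simulate_latency(rng, 1000)
    return float(clicks.mean()), float(np.percentile(latency, 95))


print(run_simulation(7))
print(run_simulation(7))  # same seed, same result
```

Because no helper touches hidden global state, the whole run is determined by the single seed given to `run_simulation`.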

Draw The Shape Before Trusting It

Before using a distribution, write a tiny shape card:

| Value | Can be negative? | Can have a long tail? | Example distribution |
|---|---|---|---|
| Click | no | no | Bernoulli |
| Intent | no | no | Categorical |
| Tool calls | no | sometimes | Poisson |
| Latency | no | yes | Lognormal |
| Score noise | yes | usually no | Normal |

This table is more useful than memorizing names. It forces the key question:

What values are possible?

If the distribution can produce impossible values, the model is already teaching you nonsense.
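A shape card can also be turned into a quick automated check. The helper below, `check_shape`, is a hypothetical function written for this example; it covers only two of the card's questions (negative values and whole numbers), and the normal parameters are assumptions chosen for illustration.

```python
import numpy as np


def check_shape(samples, allow_negative, whole_numbers_only):
    """Return a list of problems; an empty list means the sample fits the card."""
    problems = []
    if not allow_negative and (samples < 0).any():
        problems.append("contains negative values")
    if whole_numbers_only and not np.all(samples == np.round(samples)):
        problems.append("contains non-integer values")
    return problems


rng = np.random.default_rng(7)

# Tool calls should be non-negative whole numbers.
poisson_calls = rng.poisson(lam=2.5, size=1000)
normal_calls = rng.normal(loc=2.5, scale=1.6, size=1000)  # assumed parameters

poisson_problems = check_shape(poisson_calls, allow_negative=False, whole_numbers_only=True)
normal_problems = check_shape(normal_calls, allow_negative=False, whole_numbers_only=True)

print("poisson as tool calls:", poisson_problems)
print("normal as tool calls:", normal_problems)
```

The Poisson sample passes both checks, while the normal sample fails both: it produces fractional and negative "tool calls".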

Common Distributions

| Distribution | Use when | Example |
|---|---|---|
| Bernoulli | one yes/no trial | one answer passed or failed |
| Binomial | count successes across trials | 17 passes out of 100 tasks |
| Categorical | one label from many labels | route to billing, bug, or account |
| Poisson | count events in a window | number of tool calls in a request |
| Normal | symmetric noise around a mean | average embedding score noise |
| Lognormal | positive value with long tail | latency or cost per request |
| Beta | uncertain probability between 0 and 1 | estimated click rate after few samples |

The table is a starting point, not a law. Real data can be messier. Always compare simulated summaries against observed summaries when you have real data.
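The Beta row is the only one without a simulation elsewhere in this chapter, so here is a minimal sketch. It assumes a hypothetical small sample (4 clicks from 50 users) and a flat Beta(1, 1) starting point; both are assumptions made for illustration, not recommendations.

```python
import numpy as np

rng = np.random.default_rng(7)

clicks, users = 4, 50  # hypothetical small sample

# Assumption: start from a flat Beta(1, 1), so after the data the
# distribution over the click rate is Beta(1 + clicks, 1 + non-clicks).
plausible_rates = rng.beta(a=1 + clicks, b=1 + (users - clicks), size=10_000)

# Middle 95 percent of the plausible click rates.
low, high = np.percentile(plausible_rates, [2.5, 97.5])

print("observed_rate", clicks / users)
print("plausible_range", round(low, 3), "to", round(high, 3))
```

Every draw is a probability between 0 and 1, and the range is wide because 50 users isn't much evidence. With more users the same code should produce a narrower range.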

Common Trap

The common trap is using averages everywhere.

For latency, the average can hide user pain.

Example:

| Metric | Meaning |
|---|---|
| Mean latency | average time per request, which drives total load on the system |
| p95 latency | latency that the slowest 5 percent of requests exceed |
| Max latency | worst observed request |

If the p95 is high, users can feel a bad product even when the mean looks fine.
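The sketch below builds two latency samples with roughly the same mean but very different tails. The target mean of 8 and the two `sigma` values are assumptions chosen for illustration, and `lognormal_with_mean` is a hypothetical helper. It relies on the fact that a lognormal's mean is `exp(mu + sigma**2 / 2)`.

```python
import numpy as np

rng = np.random.default_rng(7)
target_mean = 8.0  # assumed mean latency, in arbitrary units


def lognormal_with_mean(rng, target_mean, sigma, size):
    # A lognormal's mean is exp(mu + sigma**2 / 2), so solve for mu.
    mu = np.log(target_mean) - sigma**2 / 2
    return rng.lognormal(mean=mu, sigma=sigma, size=size)


steady = lognormal_with_mean(rng, target_mean, sigma=0.2, size=100_000)
spiky = lognormal_with_mean(rng, target_mean, sigma=0.9, size=100_000)

for name, latency in [("steady", steady), ("spiky", spiky)]:
    print(
        name,
        "mean", round(latency.mean(), 2),
        "p95", round(np.percentile(latency, 95), 2),
    )
```

Both means land near 8, but the p95 of the spiky sample is much higher. A dashboard that shows only the mean would rate these two systems as equal.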

Mini Test

Add tests like these:

```python
import numpy as np


def test_click_rate_is_near_probability():
    rng = np.random.default_rng(7)
    clicks = rng.binomial(n=1, p=0.08, size=1000)
    # Wide bounds: roughly 3.5 standard errors either side of 0.08.
    assert 0.05 < clicks.mean() < 0.11


def test_latency_is_positive():
    rng = np.random.default_rng(7)
    latency = rng.lognormal(mean=2.0, sigma=0.4, size=1000)
    assert latency.min() > 0
```

These tests are intentionally wide. Random samples wobble. The test should catch broken assumptions, not demand exact values.
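One way to pick a "wide" tolerance without guessing is to derive it from the standard error of a proportion, `sqrt(p * (1 - p) / n)`. The helper `click_rate_bounds` and the width of 4 standard errors are assumptions for this sketch; other widths are equally defensible.

```python
import numpy as np


def click_rate_bounds(p, n, width=4.0):
    """Return (low, high) bounds that sit `width` standard errors around p."""
    standard_error = np.sqrt(p * (1 - p) / n)
    return float(p - width * standard_error), float(p + width * standard_error)


low, high = click_rate_bounds(p=0.08, n=1000)
print("bounds", round(low, 3), round(high, 3))

# Larger samples wobble less, so the same rule gives tighter bounds.
tight_low, tight_high = click_rate_bounds(p=0.08, n=100_000)
print("bounds_large_sample", round(tight_low, 4), round(tight_high, 4))
```

For `p=0.08` and `n=1000` this gives bounds close to the hand-picked `0.05` and `0.11`, and it shows how the tolerance should shrink as the sample grows.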

Practice

- Change click probability from `0.08` to `0.12`.

- Predict whether `click_rate` should move up or down.

- Increase `sigma` in the lognormal sample.

- Compare mean latency and p95 latency.

- Write one sentence that starts: "Averages hide tail behavior when..."

Production Check

For simulations, record:

- distribution choice

- parameter values

- random seed

- sample size

- summary metrics

- real data used for comparison, if available

If the simulation drives a launch decision, also record what would make it invalid. For example: "This latency simulation is invalid if production requests include file uploads, because file uploads weren't sampled."
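The checklist can be captured as a small record saved next to the results. The field names below are hypothetical, chosen only to mirror the list; any structure that keeps the same facts would do.

```python
import json

import numpy as np

seed, sample_size = 7, 1000
params = {"mean": 2.0, "sigma": 0.4}

rng = np.random.default_rng(seed)
latency = rng.lognormal(size=sample_size, **params)

# Hypothetical record layout: one entry per item on the checklist.
record = {
    "distribution": "lognormal",
    "parameters": params,
    "seed": seed,
    "sample_size": sample_size,
    "summary": {
        "mean_latency": round(float(latency.mean()), 2),
        "p95_latency": round(float(np.percentile(latency, 95)), 2),
    },
    "compared_against": None,  # no real data available yet
    "invalid_if": "production requests include file uploads",
}

print(json.dumps(record, indent=2))
```

Since the record includes the seed, parameters, and sample size, anyone can rerun the simulation and confirm the summary numbers.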

Next, continue to Hypothesis Tests and pass@k. You can now simulate noisy results. The next step is deciding whether one noisy result is evidence of a real improvement.

Evaluation Rubric

- Explains distributions as rules for generating possible outcomes

- Uses seeded NumPy simulations to compare Bernoulli, categorical, Poisson, beta, and lognormal behavior

- Connects distribution choice to product behavior like clicks, classes, counts, and latency tails

Common Pitfalls

- Sampling without a seed makes debugging hard. Sampling from the wrong distribution makes the simulation answer the wrong question.

- A normal distribution can generate negative values, so it's a poor first model for latency or token counts.

- A simulation that matches the mean can still miss the tail behavior that breaks production.


Key Concepts Tested

Bernoulli, categorical, Gaussian, Poisson, beta, Dirichlet, Monte Carlo

References