
Probability for ML

A beginner-first probability chapter that starts with counts, then teaches conditional probability, Bayes rule, and base-rate mistakes through a moderation-filter example.


Probability is what you use when you don't have perfect information.

In ordinary programming, a branch is often clean:

```python
if user_is_admin:
    print("show admin panel")
    show_admin_panel()
```

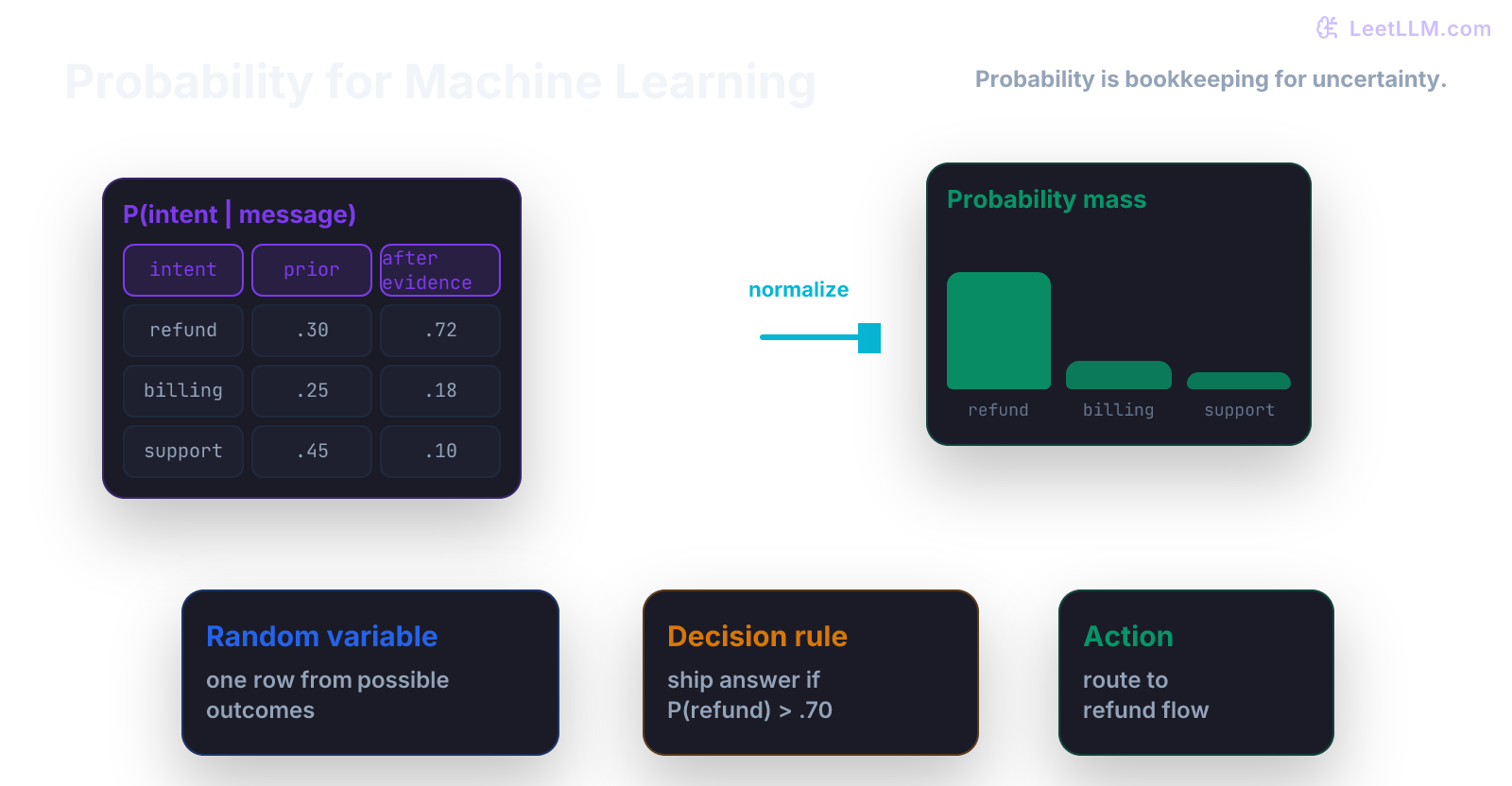

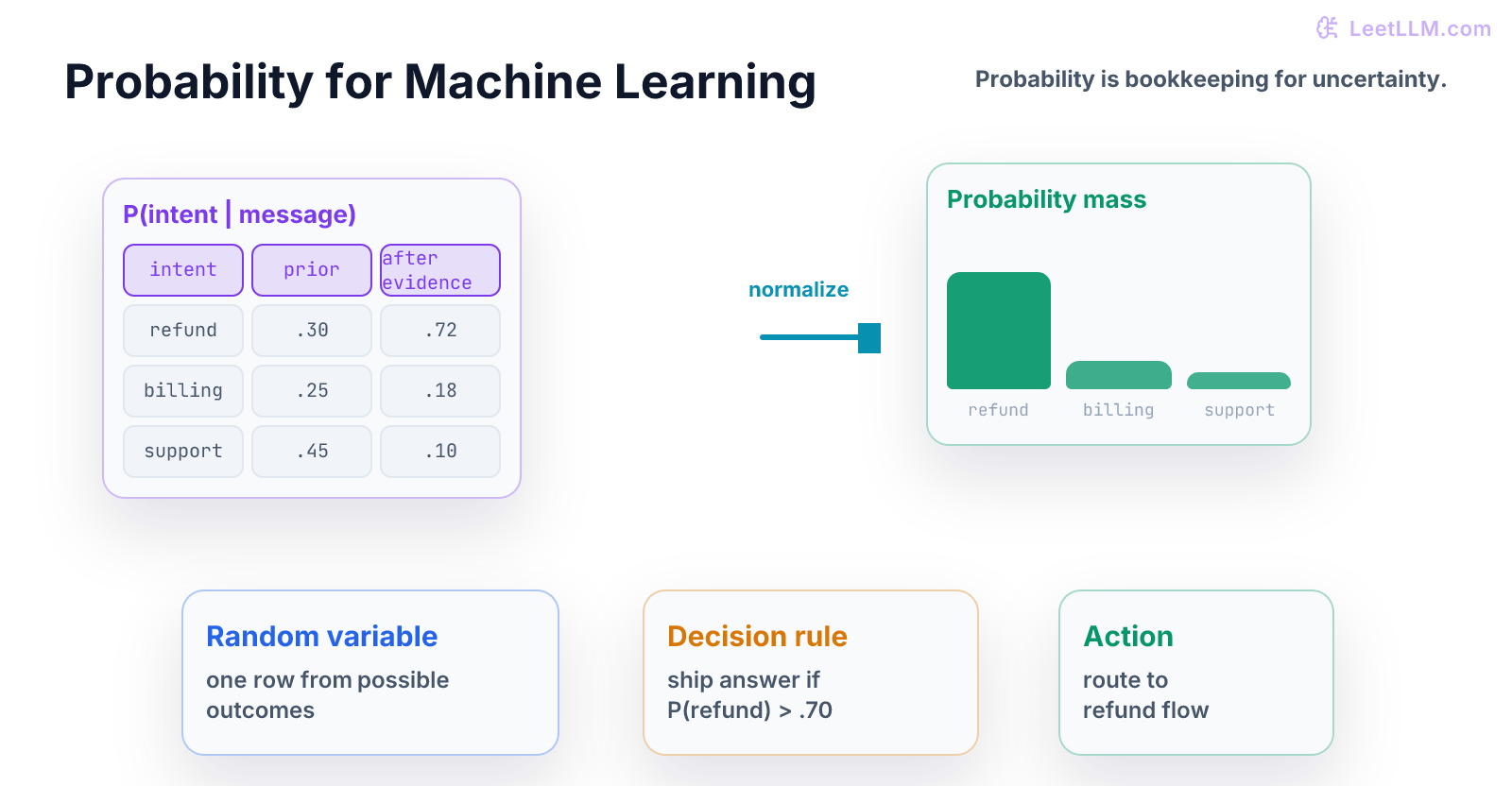

Machine learning is messier. A model says "this looks 82 percent likely." A safety filter says "this message looks risky." A retrieval system says "this document seems relevant." Those aren't facts. They are uncertain claims about events.

What You Learn

This chapter teaches the first habit of probability: name the event, count the cases, then update when new evidence arrives.[1][2][3]

Step Map

| Step | Question | What you should be able to do |
|---|---|---|
| 1 | What can happen? | Define the event in plain English. |
| 2 | How often does it happen? | Turn counts into a probability. |
| 3 | What evidence did we see? | Use conditional probability. |
| 4 | How should belief change? | Apply Bayes rule. |
| 5 | What can go wrong? | Spot base-rate mistakes before shipping. |

You already practiced Python and arrays. Now the same habit becomes statistical: make the invisible assumption visible.

Tiny Story

Imagine an LLM moderation filter.

Out of 1,000 messages:

| Message type | Count |
|---|---|
| Unsafe | 10 |
| Safe | 990 |
| Total | 1,000 |

Before the filter says anything, unsafe messages are rare:

$$P(\text{unsafe}) = \frac{10}{1000} = 0.01$$

That number is called the prior. It is the probability before new evidence.
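The prior is just a ratio of counts. Here is a minimal sketch of that calculation, assuming the counts from the message table; the variable names are illustrative and not part of any library.

```python
# Counts from the message table (1,000 messages in total).
counts = {"unsafe": 10, "safe": 990}

total = sum(counts.values())

# Prior: the share of unsafe messages before the filter says anything.
prior_unsafe = counts["unsafe"] / total

print(total)         # 1000
print(prior_unsafe)  # 0.01
```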

Now suppose the filter is pretty good:

| If message is... | Filter flags it how often? |
|---|---|
| Unsafe | 95 percent |
| Safe | 5 percent |

Beginner guess: "If the filter flags a message, it is probably unsafe."

Careful answer: not necessarily. Safe messages are so common that false alarms can dominate the flagged pile.

Worked Example

Let's count the flagged messages out of the same 1,000 messages.

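Unsafe messages:

$$10 \times 0.95 = 9.5 \text{ flagged}$$

Safe messages:

$$990 \times 0.05 = 49.5 \text{ flagged}$$

So the filter flags about 59 messages:

$$9.5 + 49.5 = 59$$

Only 9.5 of those flagged messages are actually unsafe:

$$P(\text{unsafe} \mid \text{flagged}) = \frac{9.5}{59} \approx 0.161$$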

The result is about 16 percent, not 95 percent.

That is the base-rate lesson. A strong signal can still be less convincing than it feels when the event is rare.
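The same counting can be sketched in a few lines of Python. This is an illustration under the story's numbers (10 unsafe, 990 safe, 95 percent and 5 percent flag rates); the variable names are made up for this example, and `Fraction` is used only to keep the arithmetic exact.

```python
from fractions import Fraction

total = 1000
unsafe = 10
safe = total - unsafe

# Flag rates from the story, stored as exact fractions to avoid float noise.
flag_rate_if_unsafe = Fraction(95, 100)
flag_rate_if_safe = Fraction(5, 100)

true_flags = unsafe * flag_rate_if_unsafe  # unsafe messages that get flagged
false_flags = safe * flag_rate_if_safe     # safe messages that get flagged
all_flags = true_flags + false_flags       # the whole flagged pile

# Share of the flagged pile that is actually unsafe.
share_unsafe = true_flags / all_flags

print(float(true_flags), float(false_flags), float(all_flags))  # 9.5 49.5 59.0
print(round(float(share_unsafe), 3))                            # 0.161
```

The false alarms (49.5) outnumber the true flags (9.5) by roughly five to one, which is why the flagged pile is mostly safe messages.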

Vocabulary

- event: something that can happen, such as `message is unsafe`.
- sample space: the full set of possible outcomes you are considering.
- prior: probability before new evidence.
- evidence: something you observe, such as `filter flagged the message`.
- likelihood: probability of seeing the evidence if a hypothesis were true.
- posterior: updated probability after evidence.
- conditional probability: probability of one event after you know another event happened.

Keep every word tied to the moderation story. If a term can't point to a row, count, or event, it is still floating.

Formula

Conditional probability asks:

$$P(A \mid B) = \frac{P(A \text{ and } B)}{P(B)}$$

Read it as:

Among the cases where B happened, what fraction also had A?

Bayes rule turns the question around:

$$P(A \mid B) = \frac{P(B \mid A)\,P(A)}{P(B)}$$

For the moderation filter:

- $A$ means "message is unsafe"
- $B$ means "filter flagged it"
- $P(A)$ is the base rate
- $P(B \mid A)$ is the true-positive rate
- $P(B)$ includes both true flags and false alarms

This isn't a trick formula. It is just careful counting.
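To see the counting inside the formula, it may help to plug in the moderation numbers, with A as "message is unsafe" and B as "filter flagged it". The sketch below expands the denominator into true flags plus false alarms (the law of total probability); the notation $\neg A$ for "message is safe" is introduced here for illustration.

$$P(A \mid B) = \frac{P(B \mid A)\,P(A)}{P(B \mid A)\,P(A) + P(B \mid \neg A)\,P(\neg A)} = \frac{0.95 \times 0.01}{0.95 \times 0.01 + 0.05 \times 0.99} = \frac{0.0095}{0.059} \approx 0.161$$

Multiplying the numerator and denominator by 1,000 gives 9.5 over 59, the same ratio as counting flagged messages directly.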

Code Lab

Put this in probability_demo.py:

```python
def flagged_posterior(prior, true_positive, false_positive):
    """Return P(unsafe | flagged) from the base rate and the two flag rates."""
    real_flags = true_positive * prior            # unsafe and flagged
    false_flags = false_positive * (1 - prior)    # safe but flagged
    all_flags = real_flags + false_flags          # everything the filter flags
    return real_flags / all_flags

posterior = flagged_posterior(
    prior=0.01,
    true_positive=0.95,
    false_positive=0.05,
)

print(round(posterior, 3))
```

Expected output:

```text
0.161
```

Read the code like a word problem:

- `prior=0.01` means 1 percent of messages are unsafe before filtering.
- `true_positive=0.95` means unsafe messages are usually flagged.
- `false_positive=0.05` means safe messages are sometimes flagged.
- `real_flags / all_flags` means "of flagged messages, how many are truly unsafe?"

Now change only prior:

```python
for prior in [0.01, 0.10, 0.50]:
    print(prior, round(flagged_posterior(prior, 0.95, 0.05), 3))
```

The detector didn't change. Only the base rate changed. The answer should move a lot.

Second Pass: Change One Number

Keep the filter quality fixed:

| Setting | Value |
|---|---|
| True-positive rate | 0.95 |
| False-positive rate | 0.05 |

Now change only the world the filter lives in:

| Unsafe base rate | Posterior after flag |
|---|---|
| 1 percent | about 16 percent |
| 10 percent | about 68 percent |
| 50 percent | about 95 percent |

Same detector. Different population. Different answer.

This is why product context matters. A fraud model, safety filter, and spam classifier can share the same math but make different decisions because their base rates differ.

Common Trap

The common trap is mixing up these two questions:

| Question | Meaning |
|---|---|
| If the message is unsafe, how often does the filter catch it? | $P(\text{flagged} \mid \text{unsafe})$ |
| If the filter flagged it, how likely is it unsafe? | $P(\text{unsafe} \mid \text{flagged})$ |

They look similar, but they answer opposite questions.
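One way to keep the two questions apart is to compute both from the same four counts. The sketch below assumes the expected counts per 1,000 messages from the moderation story; the variable names are illustrative.

```python
# Expected counts per 1,000 messages in the moderation story.
unsafe_flagged = 9.5    # unsafe and caught
unsafe_missed = 0.5     # unsafe but not flagged
safe_flagged = 49.5     # safe but flagged (false alarms)
safe_passed = 940.5     # safe and not flagged

# P(flagged | unsafe): look only at unsafe messages.
p_flagged_given_unsafe = unsafe_flagged / (unsafe_flagged + unsafe_missed)

# P(unsafe | flagged): look only at flagged messages.
p_unsafe_given_flagged = unsafe_flagged / (unsafe_flagged + safe_flagged)

print(round(p_flagged_given_unsafe, 3))  # 0.95
print(round(p_unsafe_given_flagged, 3))  # 0.161
```

The numerator is identical in both lines. Only the denominator changes, because each question conditions on a different group of messages.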

This mistake appears constantly in LLM products:

- treating model confidence as probability of truth

- treating a retrieval score as probability of relevance

- treating one judge score as proof an answer is correct

- treating a rare safety event as common after seeing one alert

Mini Test

Add a guard before you trust this function:

```python
def test_flagged_posterior():
    result = flagged_posterior(0.01, 0.95, 0.05)
    assert 0.15 < result < 0.17
```

Then add input checks:

```python
def check_probability(x, name):
    assert 0 <= x <= 1, f"{name} must be between 0 and 1"
```

Probability code should fail loudly when a value like 1.4 enters the system. Silent invalid probabilities create fake certainty.
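A sketch of how the check might be wired into the posterior function follows. Two things here are assumptions rather than part of the chapter's code: the wrapper name `checked_flagged_posterior` is hypothetical, and the check raises `ValueError` instead of using `assert`, since assert statements are skipped when Python runs with `-O`.

```python
def check_probability(x, name):
    # Variant of the chapter's check that raises instead of asserting,
    # so it still runs when Python is started with -O.
    if not 0 <= x <= 1:
        raise ValueError(f"{name} must be between 0 and 1, got {x}")


def checked_flagged_posterior(prior, true_positive, false_positive):
    """Hypothetical wrapper: validate every input, then apply Bayes rule."""
    check_probability(prior, "prior")
    check_probability(true_positive, "true_positive")
    check_probability(false_positive, "false_positive")

    real_flags = true_positive * prior
    false_flags = false_positive * (1 - prior)
    all_flags = real_flags + false_flags
    if all_flags == 0:
        # A filter that never flags anything has no flagged pile to reason about.
        raise ValueError("posterior is undefined when nothing is ever flagged")
    return real_flags / all_flags


try:
    checked_flagged_posterior(1.4, 0.95, 0.05)
except ValueError as err:
    print(err)  # prior must be between 0 and 1, got 1.4
```

The extra zero check covers the one case where valid inputs would still cause a division by zero.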

Practice

- Compute `P(unsafe)` from the table without code.
- Compute how many safe messages get falsely flagged.
- Change the false-positive rate from `0.05` to `0.01`.
- Explain why the posterior changes.

- Write one sentence that starts: "A flagged message isn't automatically unsafe because..."

Production Check

Before using probability in a product decision, write down:

- event: what exactly is being predicted

- population: which users, requests, documents, or tasks count

- base rate: how common the event is before evidence

- evidence: what signal was observed

- decision: what threshold turns probability into action

For an LLM system, this might be:

Event: answer contains a policy violation. Population: support-chat answers in English. Evidence: safety classifier score above threshold. Decision: send answer to human review if posterior risk is above 20 percent.

That sentence is boring, but it is what makes probability useful.
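The checklist can also be written down as data plus one decision rule. The sketch below is only an illustration: `ReviewPolicy` and its method names are hypothetical, and the base rate and classifier rates are placeholders borrowed from the moderation story, since the example sentence states only the 20 percent threshold.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewPolicy:
    """Hypothetical container for the checklist items."""
    event: str
    population: str
    base_rate: float         # placeholder; measure this on the real population
    true_positive: float     # placeholder classifier quality
    false_positive: float    # placeholder classifier quality
    review_threshold: float  # 0.20 comes from the example sentence

    def posterior_if_flagged(self) -> float:
        # Bayes rule: true flags divided by all flags.
        real_flags = self.true_positive * self.base_rate
        false_flags = self.false_positive * (1 - self.base_rate)
        return real_flags / (real_flags + false_flags)

    def needs_human_review(self) -> bool:
        # Decision: act only when posterior risk is above the threshold.
        return self.posterior_if_flagged() > self.review_threshold


policy = ReviewPolicy(
    event="answer contains a policy violation",
    population="support-chat answers in English",
    base_rate=0.01,
    true_positive=0.95,
    false_positive=0.05,
    review_threshold=0.20,
)

print(round(policy.posterior_if_flagged(), 3))  # 0.161
print(policy.needs_human_review())              # False
```

With these placeholder numbers a flagged answer stays below the 20 percent threshold. If the base rate were 2 percent, the posterior would be about 28 percent and the same rule would send the answer to review.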

Next, continue to Statistics and Uncertainty. Probability gave you a way to reason about uncertain events. Statistics asks how much you should trust a probability estimated from data.

Evaluation Rubric

1. Explains events, priors, evidence, likelihoods, and posteriors using counts before formulas
2. Computes Bayes rule by hand and in runnable Python
3. Spots base-rate and reversed-conditional mistakes in ML product decisions

Common Pitfalls

- People often treat model confidence as probability of truth. A calibrated probability needs data, a target event, and a population.

- People often reverse P(unsafe | flagged) and P(flagged | unsafe). Those are different questions.

- Ignoring the population makes the same percentage mean different things in different products.


Key Concepts Tested

events, conditional probability, independence, Bayes rule, expectation, variance

References