
Vectors, Matrices & Tensors

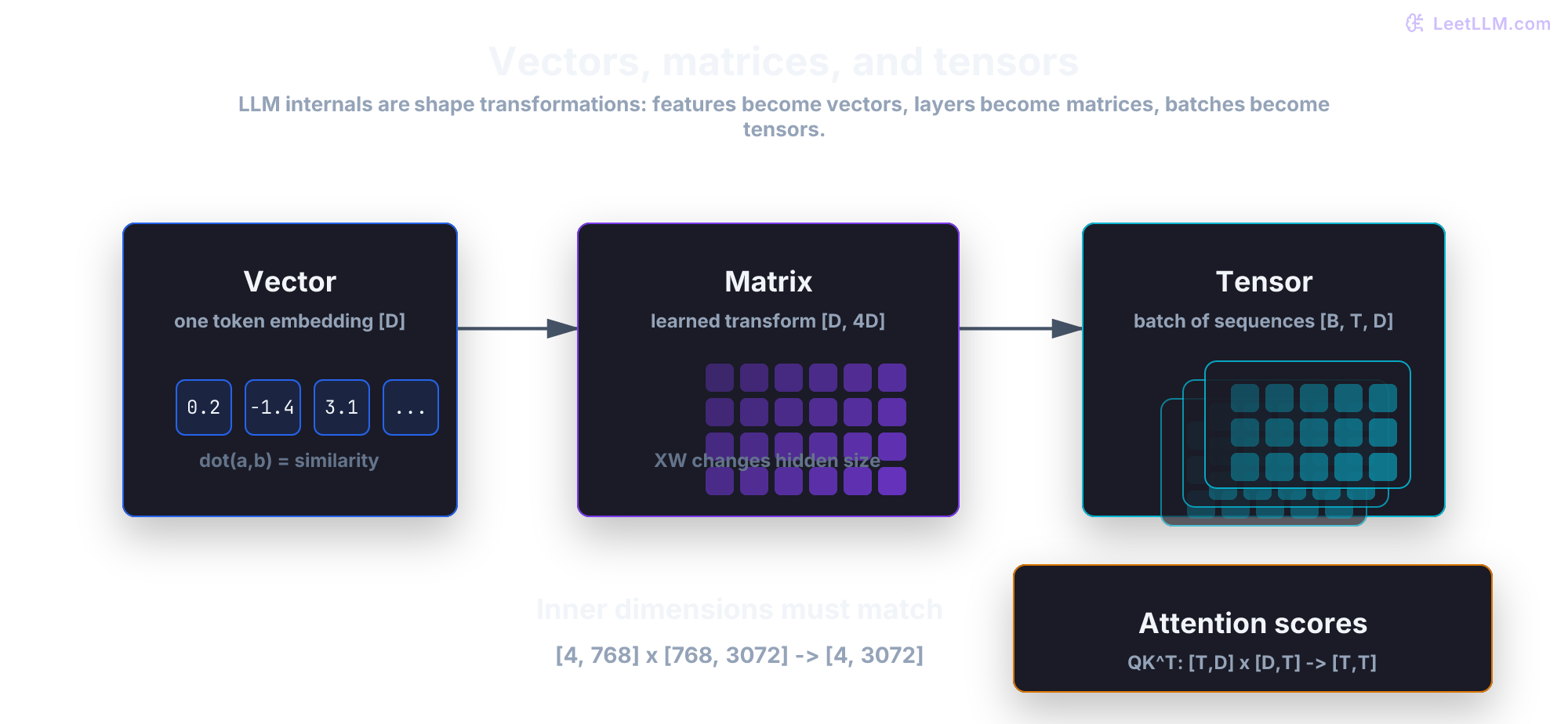

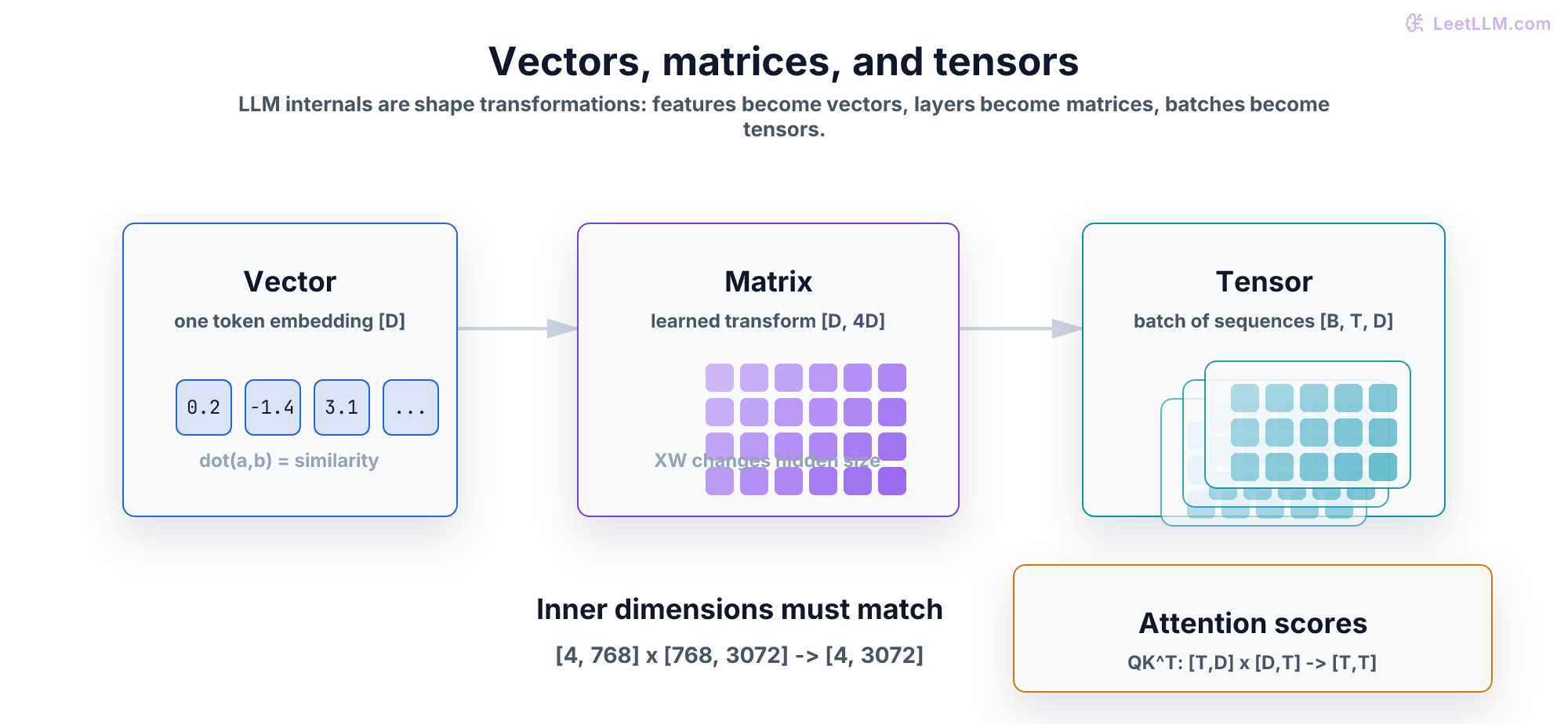

Learn the linear algebra vocabulary behind LLMs: vectors, matrices, tensors, dot products, matrix multiplication, and shape reasoning.

25 min read · Google, Meta, OpenAI +4 · 7 key concepts

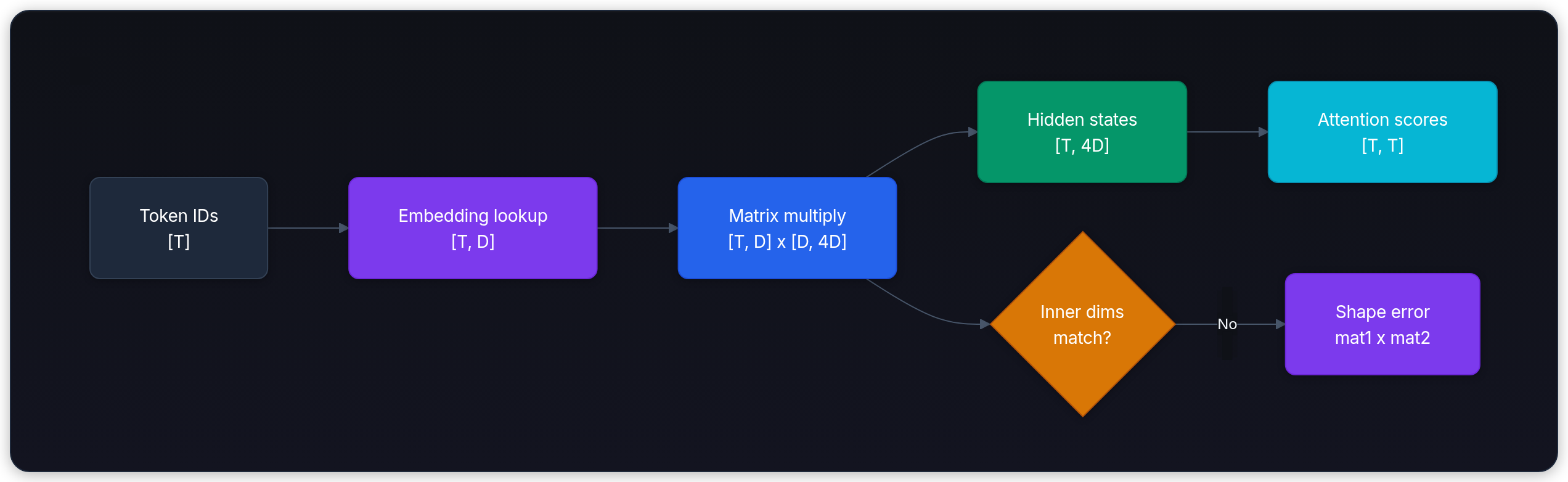

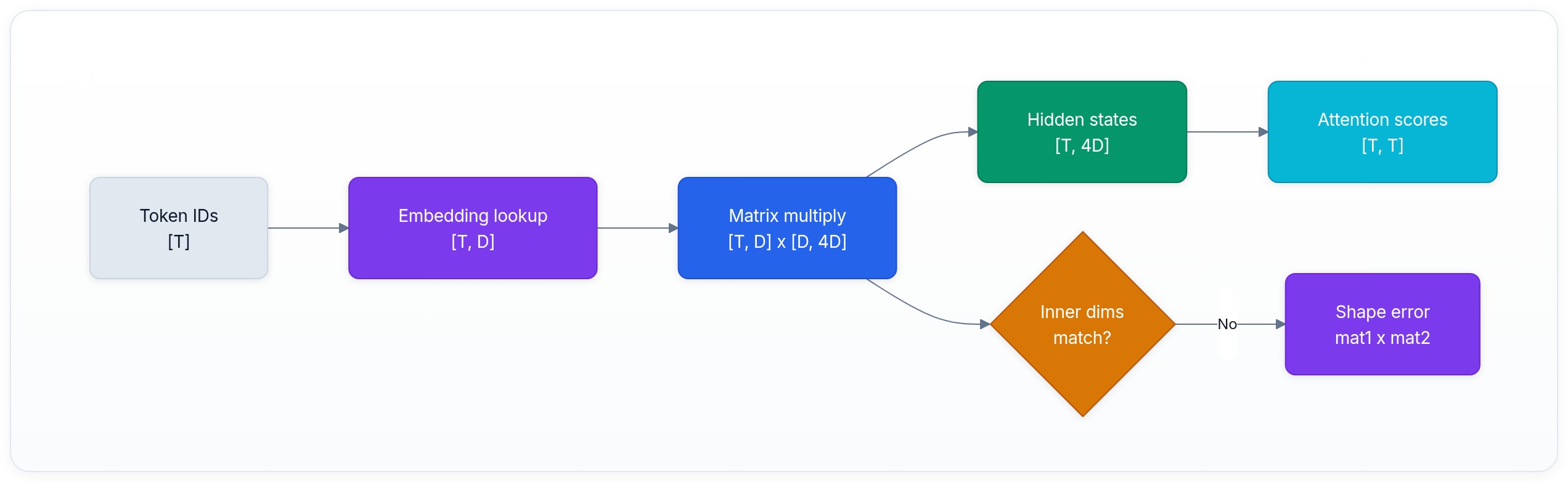

If you've ever seen a PyTorch error like `mat1 and mat2 shapes cannot be multiplied` while working with an LLM, you've touched the math layer underneath modern AI. Transformers, embeddings, attention, and neural networks all run on the same small set of linear algebra ideas.

The good news: you don't need a math degree to follow LLM internals. You need a practical mental model for vectors, matrices, tensors, and shapes. Once those click, later articles on neural networks and attention become much easier.

This article gives you the minimum math toolkit for the rest of LeetLLM. We'll focus on what each object means, how dimensions flow through a model, and how to debug the most common shape mistakes.

💡 Key insight: Deep learning is mostly learned linear algebra plus nonlinearities. Vectors store features, matrices transform those features, and tensors batch the whole computation so GPUs can process many tokens at once.[1]

Step map

| Step | Object | Beginner check |
|---|---|---|
| 1 | Vector | One token or item becomes one list of features. |
| 2 | Dot product | Two vectors produce one alignment score. |
| 3 | Matrix | A learned grid transforms many features at once. |
| 4 | Tensor | Batch and sequence axes keep many examples organized. |

The main habit is shape tracing. Read each operation from left to right and check which dimensions are allowed to multiply.

Vectors: lists of meaningful numbers

A vector is a list of numbers. That's it.

In geometry, a vector is an arrow pointing somewhere in space. In machine learning, a vector is a compact representation of features. The numbers might describe a token, an image patch, a user profile, or an internal model state.

For LLMs, the most important vector is the embedding. After tokenization, each token ID gets mapped to a learned vector:

| Token | Token ID | Embedding shape |
|---|---|---|
| "cat" | 3797 | [768] |
| "sat" | 3332 | [768] |
| "mat" | 2603 | [768] |

The shape [768] means the token is represented by 768 numbers. Bigger models often use much larger hidden dimensions, but the idea is the same: each token becomes a point in a learned space.
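The lookup itself is plain row indexing into a table. The sketch below assumes NumPy and uses a randomly filled table as a stand-in for learned weights; the 5,000-token vocabulary and the name `embedding_table` are illustrative assumptions, not values from any particular model.

```python
import numpy as np

# Hypothetical sizes: a 5,000-token vocabulary, 768 features per token.
vocab_size, hidden_dim = 5000, 768

# Stand-in for a learned embedding table (random here, learned in a real model).
rng = np.random.default_rng(0)
embedding_table = rng.normal(size=(vocab_size, hidden_dim))

# Token IDs from the table above: "cat", "sat", "mat".
token_ids = np.array([3797, 3332, 2603])

# Lookup is row indexing: one row of the table per token ID.
cat_vector = embedding_table[token_ids[0]]
sequence = embedding_table[token_ids]

print(cat_vector.shape)  # (768,)
print(sequence.shape)    # (3, 768)
```

Indexing with a single ID returns one vector; indexing with a list of IDs returns one row per token, which is already the sequence matrix used later in this article.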

Why vectors? Because numbers can be compared, transformed, and optimized. Strings can't be multiplied by a neural network. Vectors can.

Dot products: alignment between vectors

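The dot product takes two vectors of the same length and returns one number:

$$a \cdot b = \sum_{i=1}^{n} a_i b_i$$

Example:

$$[1, 2, 3] \cdot [4, 5, 6] = 1 \cdot 4 + 2 \cdot 5 + 3 \cdot 6 = 32$$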

Intuitively, the dot product measures alignment. If two vectors point in similar directions, their dot product is high. If they point in opposite directions, it's low or negative. If they're unrelated, it's near zero.

This is why dot products appear everywhere in LLMs:

- Retrieval systems compare query vectors with document vectors.

- Attention compares query vectors with key vectors.

- Output layers compare hidden states with vocabulary vectors.

Cosine similarity is a normalized version of the dot product:

$$\cos(a, b) = \frac{a \cdot b}{\lVert a \rVert \, \lVert b \rVert}$$

The denominator divides out vector length, so cosine focuses on direction. Raw dot product mixes direction and magnitude.
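To make the difference concrete, here is a small pure-Python sketch of both measures. The helper names `dot` and `cosine_similarity` and the sample vectors are chosen for illustration only.

```python
import math

def dot(a, b):
    """Sum of element-wise products; both vectors must have the same length."""
    if len(a) != len(b):
        raise ValueError(f"length mismatch: {len(a)} vs {len(b)}")
    return sum(x * y for x, y in zip(a, b))

def cosine_similarity(a, b):
    """Dot product divided by the product of the two vector lengths."""
    return dot(a, b) / (math.sqrt(dot(a, a)) * math.sqrt(dot(b, b)))

a = [1.0, 2.0, 3.0]
b = [2.0, 4.0, 6.0]     # same direction as a, twice as long
c = [-1.0, -2.0, -3.0]  # opposite direction to a

print(dot(a, b))  # 28.0
print(dot(a, c))  # -14.0

# Cosine ignores length: close to 1 for b, close to -1 for c.
print(cosine_similarity(a, b))
print(cosine_similarity(a, c))
```

Doubling the length of `b` doubles its dot product with `a`, but leaves the cosine similarity unchanged.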

Matrices: transformations for vectors

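A matrix is a rectangular grid of numbers. For example, this matrix has 2 rows and 3 columns, so its shape is [2, 3]:

$$W = \begin{bmatrix} 1 & 0 & -1 \\ 0.5 & 0.5 & 0.5 \end{bmatrix}$$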

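You can think of a matrix as a machine that transforms one vector into another vector. If the input vector has 3 numbers and the matrix has shape [2, 3], the output vector has 2 numbers:

$$y = Wx, \qquad W \in \mathbb{R}^{2 \times 3},\; x \in \mathbb{R}^{3},\; y \in \mathbb{R}^{2}$$

Shape rule:

$$[m, n] \times [n, p] \rightarrow [m, p]$$

The inner dimensions must match. If they don't, multiplication is undefined. In the example above, the vector acts as a [3, 1] column, so [2, 3] x [3, 1] gives [2, 1].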

Neural network layers are built from this operation:

$$y = Wx + b$$

- $x$ is the input vector.

- $W$ is the learned weight matrix.

- $b$ is the learned bias vector.

- $y$ is the transformed output vector.

That one equation is the backbone of feed-forward networks, output projections, and many internal Transformer operations.
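A minimal NumPy sketch of one such layer, mapping a 3-number input to a 2-number output. The values in `W`, `b`, and `x` are made up for illustration; in a real layer the weights and bias are learned during training.

```python
import numpy as np

# Illustrative (not learned) parameters for a layer that maps 3 features to 2.
W = np.array([[1.0, 0.0, -1.0],
              [0.5, 0.5, 0.5]])  # weight matrix, shape (2, 3)
b = np.array([0.1, -0.1])         # bias vector, shape (2,)

x = np.array([2.0, 4.0, 6.0])     # input vector, shape (3,)

# Output = weight matrix times input, plus bias.
y = W @ x + b

print(y.shape)  # (2,)
print(y)        # approximately [-3.9, 5.9]
```

Each output number is the dot product of one row of `W` with `x`, plus the matching bias entry.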

Matrix multiplication: many vectors at once

In practice, models don't process one vector at a time. They process a whole sequence of token vectors.

Suppose a sentence has 4 tokens, and each token has a 768-dimensional embedding. The sequence is a matrix:

$$X \in \mathbb{R}^{4 \times 768}$$

That means:

- 4 rows, one per token position.

- 768 columns, one per embedding feature.

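If a layer projects hidden size 768 to hidden size 3072, its weight matrix has shape:

$$W \in \mathbb{R}^{768 \times 3072}$$

Then, writing the [4, 768] token matrix as $X$:

$$Y = XW$$

Shape rule:

$$[4, 768] \times [768, 3072] \rightarrow [4, 3072]$$

Because tokens are stored as rows here, the weight matrix multiplies on the right.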

Every token gets transformed by the same weight matrix. That's why neural networks can run efficiently on GPUs: one matrix multiplication applies the same learned operation across many tokens in parallel.
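The sketch below, assuming NumPy and random stand-in values, runs that projection and checks that each output row depends only on the matching input row.

```python
import numpy as np

rng = np.random.default_rng(0)

X = rng.normal(size=(4, 768))     # 4 token vectors, one per row
W = rng.normal(size=(768, 3072))  # stand-in for a learned projection

# One matrix multiplication transforms all 4 tokens at once.
Y = X @ W
print(Y.shape)  # (4, 3072)

# Transforming token 0 on its own gives the same result as row 0 of Y.
row_0 = X[0] @ W
print(np.allclose(Y[0], row_0))  # True
```

The single `X @ W` call does the same work as looping over tokens, which is what lets hardware process the rows in parallel.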

Tensors: matrices with more axes

A tensor is a generalization of vectors and matrices:

| Object | Rank | Example shape | Meaning |
|---|---|---|---|
| Scalar | 0 | [] | One number |
| Vector | 1 | [768] | One token embedding |
| Matrix | 2 | [128, 768] | One sequence of 128 token embeddings |
| Tensor | 3+ | [8, 128, 768] | Batch of 8 sequences |

LLMs usually carry hidden states as a 3D tensor:

$$H \in \mathbb{R}^{B \times T \times D}$$

Where:

- $B$ = batch size (how many examples at once)

- $T$ = sequence length (how many token positions)

- $D$ = hidden dimension (how many numbers per token)

Example:

`hidden_states.shape == [8, 128, 768]`

This means: 8 examples, 128 tokens each, 768 numbers per token.

Once you can read shapes, model internals become less mysterious. A tensor shape tells you what the model is storing and which dimensions can interact.
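A short NumPy sketch of reading such a tensor axis by axis. The zero-filled arrays and the 3072-wide projection are placeholders chosen for illustration.

```python
import numpy as np

B, T, D = 8, 128, 768  # batch size, sequence length, hidden dimension
hidden_states = np.zeros((B, T, D), dtype=np.float32)

print(hidden_states.ndim)         # 3
print(hidden_states.shape)        # (8, 128, 768)
print(hidden_states[0].shape)     # (128, 768): one sequence
print(hidden_states[0, 5].shape)  # (768,): one token in that sequence

# A [D, 3072] weight matrix acts on the last axis only;
# the batch and sequence axes are carried through unchanged.
W = np.zeros((D, 3072), dtype=np.float32)
out = hidden_states @ W
print(out.shape)  # (8, 128, 3072)
```

Indexing away one axis at a time walks back down the table above: tensor, then matrix, then vector.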

Attention preview: shapes tell the story

Attention is covered deeply later, but the shape story is useful now.[2]

Each token vector gets projected into three new vectors:

- Query (Q): what this token is looking for

- Key (K): what this token offers for matching

- Value (V): what information this token will contribute

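For one sequence:

$$Q, K, V \in \mathbb{R}^{T \times D}$$

Attention scores come from:

$$\text{scores} = QK^\top$$

Shape check:

$$[T, D] \times [D, T] \rightarrow [T, T]$$

The result is a square matrix: every token is compared with every token in the sequence, itself included. If the sequence has 128 tokens, attention produces a [128, 128] score matrix. Because the score matrix has T x T entries, its size grows quadratically with sequence length, which is the core reason attention gets expensive for long context windows.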
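The shape story can be traced in a few lines of NumPy. This is a sketch of the raw score computation only: the dimension of 64, the random weights, and the names `W_q`, `W_k`, `W_v` are assumptions for illustration, and the scaling and softmax steps that full attention applies are left out.

```python
import numpy as np

T, D = 128, 64  # sequence length; D = 64 is a hypothetical size
rng = np.random.default_rng(0)

X = rng.normal(size=(T, D))  # one sequence of token vectors

# Three separate projections (random stand-ins for learned weights).
W_q, W_k, W_v = (rng.normal(size=(D, D)) for _ in range(3))
Q, K, V = X @ W_q, X @ W_k, X @ W_v
print(Q.shape, K.shape, V.shape)  # (128, 64) (128, 64) (128, 64)

# [T, D] x [D, T] -> [T, T]: one score per pair of token positions.
scores = Q @ K.T
print(scores.shape)  # (128, 128)

# The number of scores grows with the square of the sequence length.
for t in (128, 1024, 8192):
    print(t, t * t)
```

Entry `scores[i, j]` is the dot product of token i's query with token j's key.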

Shape debugging: the skill that saves hours

When a model crashes with a shape error, don't guess. Write down the dimensions.

Common mistake 1: wrong matrix order

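Matrix multiplication isn't commutative:

$$AB \neq BA$$

If:

$$X \in \mathbb{R}^{4 \times 768}, \qquad W \in \mathbb{R}^{768 \times 3072}$$

Then:

$$XW \rightarrow [4, 3072]$$

But:

$$WX$$

is invalid, because [768, 3072] x [4, 768] has inner dimensions 3072 and 4, which don't match.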
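A NumPy sketch that reproduces this failure on purpose. NumPy raises `ValueError` for mismatched matrix shapes; other libraries word the error differently, so the printed message here is specific to NumPy.

```python
import numpy as np

X = np.ones((4, 768))     # 4 tokens, 768 features each
W = np.ones((768, 3072))  # projection from 768 to 3072

# Correct order: inner dimensions are 768 and 768.
print((X @ W).shape)  # (4, 3072)

# Swapped order: inner dimensions are 3072 and 4, so NumPy refuses.
try:
    W @ X
except ValueError as err:
    print("shape error:", err)
```

When you hit an error like this, write both shapes next to each other and compare the two inner numbers before changing any code.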

Common mistake 2: forgetting the transpose

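Attention needs $QK^\top$, not $QK$.

If both $Q$ and $K$ are [T, D], then:

$$QK: \; [T, D] \times [T, D]$$

is invalid, because the inner dimensions D and T don't match. (If T happens to equal D, the product runs but computes the wrong thing.) Transpose first:

$$QK^\top: \; [T, D] \times [D, T] \rightarrow [T, T]$$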
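The transpose fix can be checked on paper or with a tiny shape tracer. The pure-Python sketch below works on shapes only, with no actual data; `matmul_shape` and `transpose_shape` are hypothetical helper names, and T = 128, D = 64 are example sizes.

```python
def matmul_shape(left, right):
    """Return the result shape of a 2D matrix product, or raise if invalid."""
    (m, n), (n2, p) = left, right
    if n != n2:
        raise ValueError(f"inner dimensions differ: {list(left)} x {list(right)}")
    return (m, p)

def transpose_shape(shape):
    """Swap the two axes of a 2D shape."""
    rows, cols = shape
    return (cols, rows)

T, D = 128, 64
q_shape = k_shape = (T, D)

# With the transpose: [T, D] x [D, T] -> [T, T].
print(matmul_shape(q_shape, transpose_shape(k_shape)))  # (128, 128)

# Without the transpose: [T, D] x [T, D] is rejected.
try:
    matmul_shape(q_shape, k_shape)
except ValueError as err:
    print(err)  # inner dimensions differ: [128, 64] x [128, 64]
```

Tracing shapes this way costs nothing to run, so it is a cheap first step before touching real tensors.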

Common mistake 3: mixing hidden size and vocabulary size

Inside the Transformer, most tensors use hidden dimension $D$:

`hidden_states.shape == [batch, sequence, hidden_dim]`

Only the final output projection maps hidden states to vocabulary logits:

`logits.shape == [batch, sequence, vocab_size]`

Confusing these two dimensions leads to broken mental models. Hidden dimension is the model's internal workspace. Vocabulary size is the set of tokens it can predict.
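A NumPy sketch of that final projection with deliberately tiny sizes. All four sizes and the name `W_vocab` are illustrative assumptions; real models use far larger hidden and vocabulary dimensions.

```python
import numpy as np

# Tiny illustrative sizes so the shapes are easy to read.
batch, sequence, hidden_dim, vocab_size = 2, 4, 8, 50
rng = np.random.default_rng(0)

hidden_states = rng.normal(size=(batch, sequence, hidden_dim))

# Output projection: one column of scores per vocabulary entry.
W_vocab = rng.normal(size=(hidden_dim, vocab_size))
logits = hidden_states @ W_vocab

print(hidden_states.shape)  # (2, 4, 8)
print(logits.shape)         # (2, 4, 50)

# Picking the highest-scoring token at each position removes the vocab axis.
predicted_ids = logits.argmax(axis=-1)
print(predicted_ids.shape)  # (2, 4)
```

Only the last axis changes: it is `hidden_dim` before the projection and `vocab_size` after it.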

Key takeaways

- A vector is a list of numbers that represents features, such as one token embedding.

- A dot product measures alignment between two vectors and powers similarity, attention, and logits.

- A matrix is a learned transformation that maps vectors from one space to another.

- Matrix multiplication only works when inner dimensions match.

- A tensor is a vector or matrix with extra axes, such as [batch, sequence, hidden].

- Shape reasoning is the fastest way to debug neural networks and understand LLM internals.

Next, continue to Neural Networks from Scratch, where these shapes become trainable layers.

Evaluation Rubric

1. Defines vectors, matrices, and tensors using concrete LLM examples

2. Explains dot product intuition and why it measures alignment or similarity

3. Checks matrix multiplication shapes and explains why incompatible shapes fail

4. Maps embeddings, hidden states, and attention scores to tensor shapes

5. Explains how weight matrices transform vectors inside neural network layers

6. Uses shape notation to debug attention computations like QK^T

Common Pitfalls

- Treating tensor shapes as bookkeeping instead of core model behavior

- Forgetting that matrix multiplication order matters: AB and BA are usually different and may not both be valid

- Confusing dot product similarity with cosine similarity without checking vector length

- Ignoring batch and sequence dimensions when reasoning about LLM hidden states

- Assuming a tensor's last dimension is always vocabulary size, when it's usually the hidden dimension

Key Concepts Tested

- Vectors as lists of features and directions in space

- Dot product as a similarity score

- Matrices as transformations that move vectors between spaces

- Matrix multiplication and shape compatibility

- Tensors as batched stacks of vectors and matrices

- Common LLM tensor shapes: batch, sequence, hidden dimension

- Shape debugging for attention and neural network layers

References