
Neural Networks from Scratch

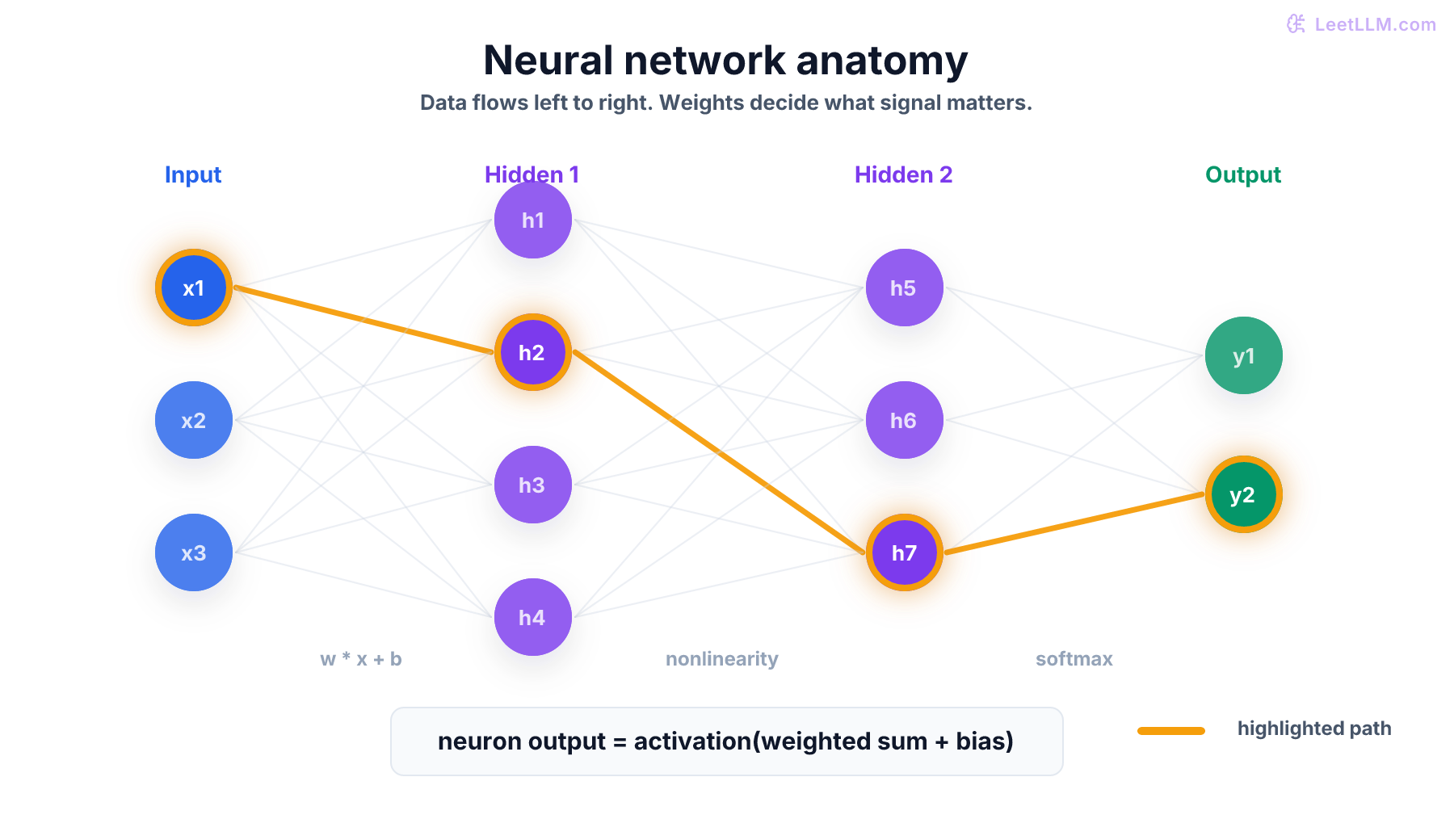

Build your mental model of neural networks from the ground up: neurons, layers, weights, activations, and the forward pass that turns inputs into predictions.


You've probably heard that AI systems like ChatGPT, image generators, and self-driving cars all use "neural networks." But what actually is a neural network? Strip away the buzzwords and you get something surprisingly simple: a mathematical function that takes numbers in, transforms them through a series of steps, and produces numbers out. The magic is that the steps are learned from data, not programmed by hand.

Think of a spam filter. A traditional programmer would write rules: "if the email contains 'free money,' flag it." A neural network takes a different approach: show it thousands of emails labeled "spam" or "not spam," and it figures out the rules on its own. It might discover that emails with certain word combinations, sent at unusual hours, from new addresses, are likely spam. It learns patterns no human thought to code.

This article builds your mental model of neural networks from scratch. No prior machine learning knowledge needed. By the end, you'll understand how data flows through a network, what "parameters" are, why "layers" matter, and how a pile of simple math operations can learn to do remarkable things.

💡 Key insight: A neural network is just a function: input → math → output. The "intelligence" comes from the fact that the math operations have tunable knobs (called parameters) that are adjusted during training to make the outputs match what you want. That's it. Everything in modern AI, from chatbots to protein folders, is built on this idea.

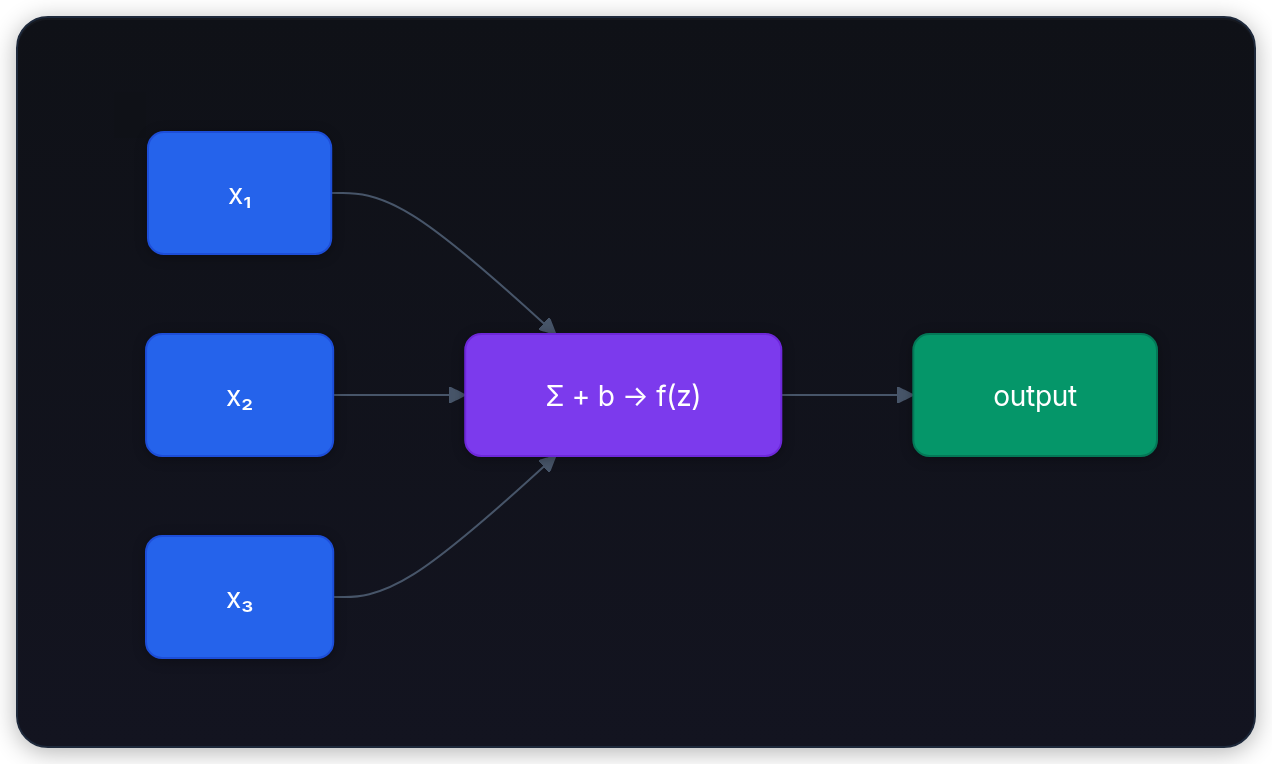

A single neuron: the smallest building block

Every neural network is built from a simple unit called a neuron (also called a node or unit). A neuron takes in some numbers, does basic math, and produces one number as output.

Here's the analogy: imagine a hiring manager evaluating a job candidate. They look at several factors: years of experience, test score, and number of references. Each factor matters a different amount. Experience might be very important (high weight), while the number of references matters less (low weight). The manager mentally weighs everything, adds a personal threshold ("I generally set a high bar"), and makes a decision: hire or not.

A neuron does exactly this:

- Multiply each input by a weight and add up the results: input_1 × weight_1 + input_2 × weight_2 + ...

- Add a bias: a constant offset that shifts the threshold

- Apply an activation function: a non-linear twist that controls the output

Mathematically, a neuron computes:

$$y = f\left(\sum_{i=1}^{n} w_i x_i + b\right)$$

where $x_i$ are the inputs, $w_i$ are the weights, $b$ is the bias, and $f$ is the activation function. The weights and bias are the neuron's parameters: the numbers it learns during training.

🔬 Research insight: The trainable artificial neuron dates back to 1958, when Frank Rosenblatt introduced the Perceptron,[1] building on the fixed-weight neuron model that McCulloch and Pitts proposed in 1943. The Perceptron could only learn linearly separable patterns (like drawing a straight line between two groups of points), but it established the core concept: multiply inputs by weights, sum them up, and threshold the result.

A concrete walkthrough

Let's trace through a single neuron with real numbers. Suppose we're predicting whether a student passes an exam based on two inputs: hours studied ($x_1$) and hours slept ($x_2$).

The neuron has learned these parameters:

- $w_1 = 0.7$ (studying matters a lot)

- $w_2 = 0.3$ (sleep matters, but less)

- $b = -4.0$ (the bar is moderately high)

For a student who studied 6 hours and slept 8 hours:

$$z = w_1 x_1 + w_2 x_2 + b = 0.7 \times 6 + 0.3 \times 8 - 4.0 = 2.6$$

After applying an activation function like the sigmoid (which squashes any number to between 0 and 1):

$$\sigma(2.6) = \frac{1}{1 + e^{-2.6}} \approx 0.93$$

The neuron outputs 0.93, which we can interpret as a 93% chance the student passes. The weights encode what the neuron has learned: studying is 2.3× more important than sleeping, and you need a combined score above 4.0 to likely pass.
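The walkthrough above can be reproduced in a few lines of Python. This is a minimal sketch, not a reference implementation: the names `sigmoid` and `neuron` are illustrative, and the parameter values (0.7, 0.3, and -4.0) are assumed values chosen to be consistent with the 2.3× ratio, the 4.0 threshold, and the 0.93 output described in the text.

```python
import math

def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron(inputs, weights, bias, activation=sigmoid):
    """Weighted sum of the inputs, plus a bias, passed through an activation."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return activation(z)

# Assumed parameters, consistent with the walkthrough in the text.
weights = [0.7, 0.3]   # hours studied, hours slept
bias = -4.0

p_pass = neuron([6, 8], weights, bias)   # studied 6 hours, slept 8 hours
print(f"P(pass) = {p_pass:.2f}")          # P(pass) = 0.93
```

Changing the inputs (for example, 2 hours studied and 5 hours slept) shows how the same fixed parameters produce a different prediction for a different student.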

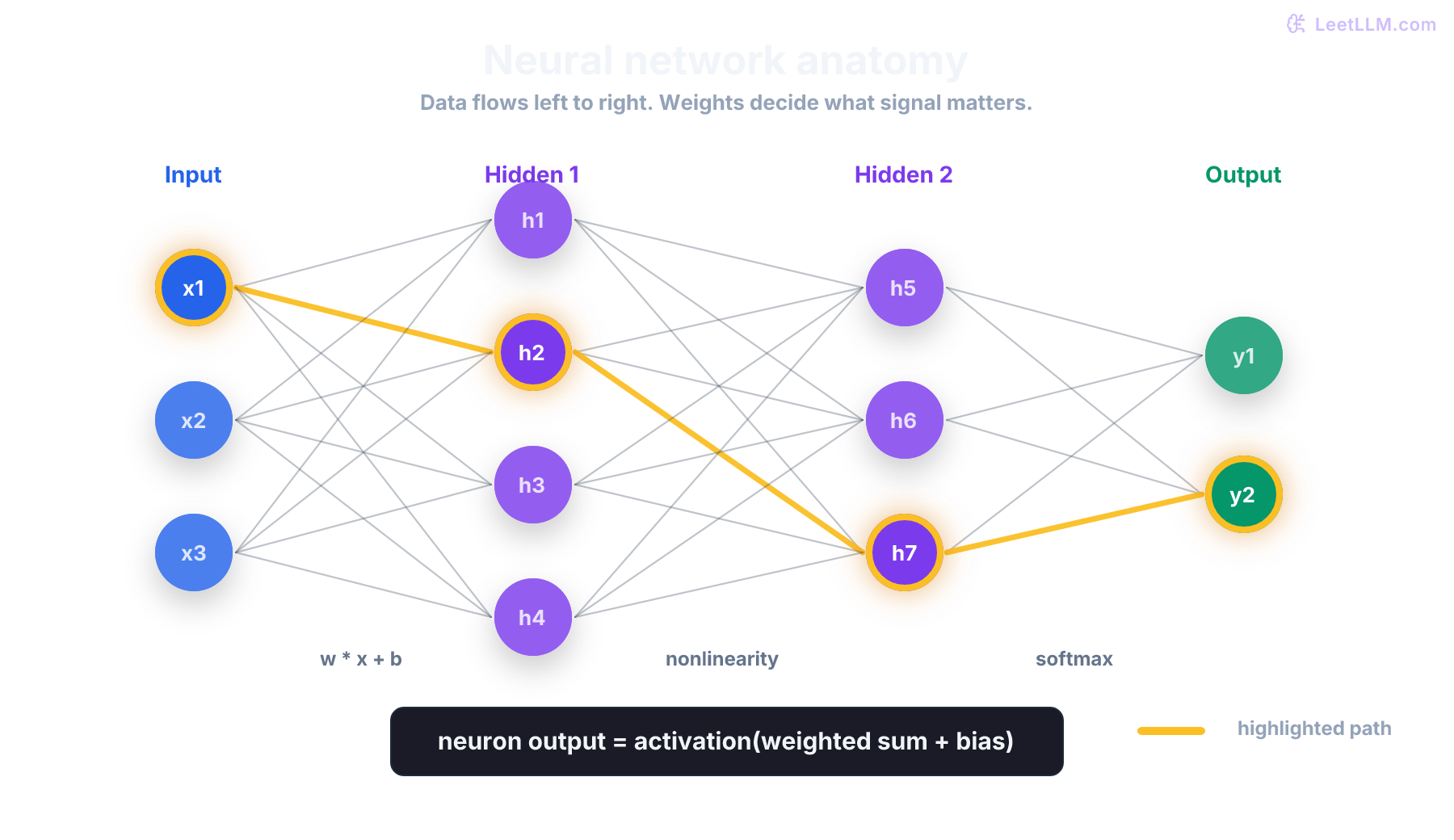

Layers: where the power comes from

A single neuron can only draw a straight line between "yes" and "no." That's not enough for anything interesting. Real-world patterns, like recognizing faces, understanding language, or predicting stock prices, are far more complex than a single line can capture.

The solution? Stack neurons into layers, and stack layers into a network. Each layer transforms its inputs, and the next layer works with those transformed values. This lets the network learn increasingly abstract patterns.
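A classic illustration of the single-line limit is XOR ("exactly one of the two inputs is on"): no single straight line separates its yes and no cases, yet a tiny two-layer network handles it. The sketch below uses hand-picked weights (an assumption for illustration, not learned values) purely to show that stacking layers adds expressive power.

```python
def relu(z):
    """ReLU activation: pass positive values through, clip negatives to zero."""
    return max(0.0, z)

def xor_network(x1, x2):
    # Hidden layer: two ReLU neurons that read the same two inputs.
    h1 = relu(1.0 * x1 + 1.0 * x2 + 0.0)   # grows with the number of active inputs
    h2 = relu(1.0 * x1 + 1.0 * x2 - 1.0)   # non-zero only when both inputs are on
    # Output neuron: a weighted sum of the two hidden values.
    return 1.0 * h1 - 2.0 * h2

for a in (0, 1):
    for b in (0, 1):
        print(a, b, "->", xor_network(a, b))
# 0 0 -> 0.0
# 0 1 -> 1.0
# 1 0 -> 1.0
# 1 1 -> 0.0
```

The second hidden neuron is what makes this work: it detects the "both on" case so the output neuron can subtract it back out.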

The three types of layers

| Layer | Role | Example |
|---|---|---|
| Input layer | Receives raw data | Pixel values of an image, word embeddings, sensor readings |
| Hidden layer(s) | Transforms and learns patterns | Detects edges, groups similar words, finds correlations |
| Output layer | Produces the final answer | Class probabilities, next-word prediction, a regression value |

The hidden layers are where learning happens. "Hidden" just means they're between input and output; there's nothing mysterious about them. Each hidden layer takes the previous layer's output, multiplies by new weights, adds new biases, and applies an activation function.
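To make the "weights, biases, activation" recipe concrete, here is a minimal NumPy sketch of one fully connected layer. The helper name `dense_layer` and the example numbers are illustrative assumptions. The point is that a layer is just several neurons evaluated at once, which is why it can be written as a single matrix multiplication.

```python
import numpy as np

def dense_layer(x, W, b):
    """One fully connected layer with ReLU; row i of W holds neuron i's weights."""
    return np.maximum(0.0, W @ x + b)

# Hypothetical layer: 2 neurons, each reading 3 inputs.
x = np.array([1.0, 2.0, 3.0])
W = np.array([[0.5, -1.0,  0.5],
              [1.0,  0.0, -1.0]])
b = np.array([0.5, 0.0])

# The same computation done one neuron at a time.
one_at_a_time = np.array(
    [max(0.0, float(W[i] @ x + b[i])) for i in range(len(b))]
)

print(dense_layer(x, W, b))   # matrix form
print(one_at_a_time)          # loop form, same values
```

Both lines print the same two numbers (0.5 and 0.0): the first neuron's weighted sum is positive, and the second is negative, so ReLU clips it to zero.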

Why depth matters

Why not just use one massive hidden layer? Because depth lets the network learn hierarchical features. In image recognition, for example:

- Layer 1 learns to detect edges (simple patterns)

- Layer 2 combines edges into textures and corners (medium patterns)

- Layer 3 combines those into shapes like eyes and noses (complex patterns)

- Layer 4 combines shapes into faces (high-level concepts)

Each layer builds on the previous one, creating increasingly abstract representations. A single wide layer would have to learn all of these patterns simultaneously, which is much harder. Deep networks decompose problems into layers of simpler sub-problems.[2]

💡 Key insight: The term "deep learning" literally means using neural networks with many layers (depth). When someone says "deep learning," they're talking about neural networks with more than a couple of hidden layers. Modern transformer-based LLMs have dozens to hundreds of layers.

The forward pass: how data flows through the network

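When a neural network processes an input, data flows through every layer in order, from input to output (left to right in the usual diagrams). This is called the forward pass. Here's the complete process for a network with two hidden layers, where $f$ is the activation function:

$$h_1 = f(W_1 x + b_1)$$

$$h_2 = f(W_2 h_1 + b_2)$$

$$\hat{y} = \text{softmax}(W_3 h_2 + b_3)$$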

Step-by-step example

Consider a tiny network that classifies handwritten digits (0-9). The input is a small 4-pixel image represented as 4 numbers.

Network architecture: 4 inputs → 3 hidden neurons → 3 hidden neurons → 10 outputs

Step 1: Input to first hidden layer

Each of the 3 neurons in hidden layer 1 computes a weighted sum of all 4 inputs, adds its bias, and applies an activation function:

```python
import numpy as np

# Input: 4 pixel values
x = np.array([0.2, 0.8, 0.1, 0.9])

# Layer 1: weights (3 neurons, 4 inputs each) and biases
W1 = np.array([[ 0.5, -0.3,  0.7,  0.1],
               [-0.2,  0.8, -0.1,  0.4],
               [ 0.3, -0.5,  0.6, -0.2]])
b1 = np.array([0.1, -0.2, 0.05])

# Forward pass through layer 1
z1 = W1 @ x + b1          # weighted sum + bias
h1 = np.maximum(0, z1)    # ReLU activation: max(0, z)

print(f"z1 = {z1}")  # z1 ≈ [0.12, 0.75, -0.41]
print(f"h1 = {h1}")  # h1 ≈ [0.12, 0.75, 0.00] (ReLU zeroes the negative third value)
```

Continue in the same file.

Step 2: First hidden layer to second hidden layer

The process repeats. The 3 outputs from layer 1 become the 3 inputs to layer 2:

```python
# Layer 2: weights (3 neurons, 3 inputs each) and biases
W2 = np.array([[ 0.4, -0.6,  0.2],
               [ 0.1,  0.5, -0.3],
               [-0.7,  0.3,  0.8]])
b2 = np.array([0.0, 0.1, -0.1])

z2 = W2 @ h1 + b2
h2 = np.maximum(0, z2)  # ReLU activation again
print(f"h2 = {h2}")
```

Continue in the same file.

Step 3: Second hidden layer to output

The final layer produces 10 numbers (one per digit). We apply softmax (covered in a later article) to convert them into probabilities that sum to 1:

```python
# Output layer: weights (10 classes, 3 inputs) and biases.
# These weights are random, untrained stand-ins, so the prediction is arbitrary.
W3 = np.random.randn(10, 3) * 0.5
b3 = np.zeros(10)

logits = W3 @ h2 + b3

# Softmax: convert raw scores to probabilities
exp_logits = np.exp(logits - logits.max())  # subtract max for stability
probs = exp_logits / exp_logits.sum()

predicted_digit = np.argmax(probs)
print(f"Predicted digit: {predicted_digit}")
print(f"Confidence: {probs[predicted_digit]:.1%}")
```

That's the entire forward pass. Raw pixels flow through two hidden layers of transformation, and the output layer produces a probability distribution over the 10 possible digits. The network's weights encode everything it has learned about what patterns of pixels correspond to which digits.
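The three steps follow one pattern, so they can be folded into a loop. The sketch below is one way to do it under stated assumptions: the function names `forward` and `softmax` are illustrative, ReLU is applied to every layer except the last, and all weights are random stand-ins rather than trained values, so the resulting probabilities carry no meaning.

```python
import numpy as np

def softmax(logits):
    """Convert raw scores to probabilities that sum to 1."""
    exp = np.exp(logits - logits.max())   # subtract max for numerical stability
    return exp / exp.sum()

def forward(x, layers):
    """Run x through a list of (W, b) pairs: ReLU on hidden layers, softmax on the last."""
    h = x
    for W, b in layers[:-1]:
        h = np.maximum(0.0, W @ h + b)
    W_out, b_out = layers[-1]
    return softmax(W_out @ h + b_out)

rng = np.random.default_rng(0)            # fixed seed so runs are repeatable
sizes = [4, 3, 3, 10]                     # the 4 -> 3 -> 3 -> 10 architecture
layers = [(rng.normal(0.0, 0.5, (n_out, n_in)), np.zeros(n_out))
          for n_in, n_out in zip(sizes[:-1], sizes[1:])]

probs = forward(np.array([0.2, 0.8, 0.1, 0.9]), layers)
print(probs.shape)   # one probability per digit
print(probs.sum())   # probabilities sum to 1 (up to rounding)
```

Writing the pass as a loop over `(W, b)` pairs makes it clear that adding depth means adding entries to the list, with no change to the logic.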

Activation functions: the non-linearity you can't skip

Remember the activation function from the single-neuron formula? It turns out to be critical. Without it, a neural network is just a fancy linear equation, no matter how many layers you add.

Why non-linearity matters

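Here's a key mathematical fact: the composition of linear functions is still linear. If layer 1 computes $h = W_1 x + b_1$ and layer 2 computes $y = W_2 h + b_2$, then:

$$y = W_2 (W_1 x + b_1) + b_2 = (W_2 W_1)\,x + (W_2 b_1 + b_2) = W' x + b'$$

which is just a single linear layer with weights $W' = W_2 W_1$ and bias $b' = W_2 b_1 + b_2$.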

A 100-layer linear network has the same expressive power as a single layer. All those extra layers buy you nothing. Adding a non-linear activation function between layers breaks this collapse and lets each layer learn something new.[3]
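The collapse is easy to check numerically. The sketch below uses small hand-picked matrices (illustrative values, not from any trained model) to show that two stacked linear layers equal one merged layer, and that inserting ReLU between them breaks the equivalence.

```python
import numpy as np

# Hypothetical layer 1 (2 inputs -> 3 units) and layer 2 (3 units -> 2 outputs).
W1 = np.array([[ 1.0, -1.0],
               [ 0.5,  2.0],
               [-1.0,  0.0]])
b1 = np.array([0.0, -1.0, 0.5])
W2 = np.array([[1.0, 1.0,  1.0],
               [2.0, 0.0, -1.0]])
b2 = np.array([0.5, -0.5])
x = np.array([1.0, 2.0])

# Two linear layers applied one after the other (no activation).
two_layers = W2 @ (W1 @ x + b1) + b2

# The single layer they collapse into.
W_merged = W2 @ W1
b_merged = W2 @ b1 + b2
one_layer = W_merged @ x + b_merged

print(np.allclose(two_layers, one_layer))   # True

# With ReLU between the layers, the merged layer no longer reproduces the output.
with_relu = W2 @ np.maximum(0.0, W1 @ x + b1) + b2
print(np.allclose(with_relu, one_layer))    # False
```

For this input, two of the three hidden values are negative, so ReLU zeroes them and the output changes from [2.5, -2.0] to [4.0, -0.5].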

The activation function zoo

| Activation | Formula | Range | Used in |
|---|---|---|---|
| Sigmoid | $\sigma(x) = \frac{1}{1 + e^{-x}}$ | (0, 1) | Output layers for binary classification; historically in hidden layers |
| Tanh | $\tanh(x) = \frac{e^{x} - e^{-x}}{e^{x} + e^{-x}}$ | (-1, 1) | Centered alternative to sigmoid; used in some RNN architectures |
| ReLU | $\max(0, x)$ | [0, ∞) | The modern default for hidden layers[4] |
| GELU | $x \cdot \Phi(x)$, where $\Phi$ is the standard normal CDF | ≈(-0.17, ∞) | Used in transformers (BERT, GPT)[5] |

Sigmoid was the classic activation in early neural networks. It squashes any input to between 0 and 1, which is intuitive for probabilities. But it has a serious flaw in deep networks: for large positive or large negative inputs the curve flattens out, so its gradient approaches zero. Training updates each weight in proportion to these gradients, so when they vanish, layers stop learning. This is called the vanishing gradient problem, and it's why sigmoid fell out of favor for hidden layers.
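A quick numerical check makes the problem visible. The sketch below evaluates the sigmoid's derivative at a few inputs and then shows a simplified best-case picture of stacking ten sigmoid layers. It ignores the weights, which also enter the real gradient, so treat it as an illustration rather than a full account of backpropagation.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def sigmoid_grad(z):
    """Derivative of sigmoid: s(z) * (1 - s(z)); its maximum is 0.25 at z = 0."""
    s = sigmoid(z)
    return s * (1.0 - s)

for z in (0.0, 2.0, 5.0, 10.0):
    print(f"z = {z:5.1f}   gradient = {sigmoid_grad(z):.6f}")

# Each sigmoid layer contributes one such factor to the gradient.
# Even at the best case of 0.25 per layer, ten layers shrink it drastically.
best_case_after_10_layers = 0.25 ** 10
print(f"{best_case_after_10_layers:.2e}")   # 9.54e-07
```

The gradient is largest at zero and drops off quickly in both directions, so a neuron pushed far from zero barely responds to training.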

ReLU (Rectified Linear Unit) fixes this by being dead simple: if the input is positive, pass it through unchanged. If it's negative, output zero. It's computationally fast and doesn't suffer from vanishing gradients for positive inputs, which made deep networks practical.[4]

GELU (Gaussian Error Linear Unit) is a smoother version of ReLU that's used in most modern transformers. Instead of a hard cutoff at zero, it gradually dampens negative values. GPT and BERT both use GELU.[5]
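To compare these functions side by side, the sketch below implements them with Python's standard `math` module and prints their values at a few sample inputs. The GELU here is the exact form $x \cdot \Phi(x)$ computed with `math.erf`; some implementations use a tanh-based approximation instead, which gives slightly different values.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def relu(x):
    return max(0.0, x)

def gelu(x):
    """Exact GELU: x * Phi(x), with Phi the standard normal CDF."""
    return x * 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

print(f"{'x':>5} {'sigmoid':>8} {'tanh':>8} {'relu':>8} {'gelu':>8}")
for x in (-3.0, -1.0, 0.0, 1.0, 3.0):
    print(f"{x:5.1f} {sigmoid(x):8.3f} {math.tanh(x):8.3f} {relu(x):8.3f} {gelu(x):8.3f}")
```

Reading the negative rows shows the difference between the last two columns: ReLU outputs exactly zero, while GELU lets a small negative value through.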

⚠️ Common mistake: Don't think of activation functions as optional. They're what give neural networks the ability to learn anything beyond straight lines. Removing them makes the entire network collapse into a single linear operation, regardless of depth.

Parameters: the knowledge stored in a network

Everything a neural network "knows" is stored in its parameters: the weights and biases across all layers. When people say a model has "70 billion parameters," they mean it has 70 billion learned numbers that collectively encode all its knowledge.

Counting parameters

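Counting parameters is straightforward. For a fully connected layer connecting $n_{\text{in}}$ inputs to $n_{\text{out}}$ outputs:

$$\text{parameters} = n_{\text{in}} \times n_{\text{out}} + n_{\text{out}}$$

The first term is the weight matrix; the second is the bias vector. Let's count parameters for a concrete network: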

| Layer | Shape | Weights | Biases | Total |
|---|---|---|---|---|
| Input → Hidden 1 | 784 → 256 | 200,704 | 256 | 200,960 |
| Hidden 1 → Hidden 2 | 256 → 128 | 32,768 | 128 | 32,896 |
| Hidden 2 → Output | 128 → 10 | 1,280 | 10 | 1,290 |
| Total | | | | 235,146 |
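The arithmetic in the table can be reproduced with a short helper. This is a minimal sketch: the function name `count_parameters` is illustrative, and it assumes every layer is fully connected with one bias per output neuron.

```python
def count_parameters(layer_sizes):
    """Total weights + biases for a stack of fully connected layers."""
    total = 0
    for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:]):
        weights = n_in * n_out   # one weight per (input, output) pair
        biases = n_out           # one bias per output neuron
        print(f"{n_in:>4} -> {n_out:<4} weights={weights:>7,} "
              f"biases={biases:>4} total={weights + biases:>7,}")
        total += weights + biases
    return total

total = count_parameters([784, 256, 128, 10])
print(f"Total: {total:,}")   # Total: 235,146
```

Almost all of the parameters sit in the first layer, because it connects the largest input (784 values) to the widest hidden layer.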

This network for classifying handwritten digits has about 235K parameters. That's tiny next to common language-model checkpoints:

| Model | Parameters | Scale |
|---|---|---|
| Digit classifier (above) | 235K | Fits on a microcontroller |
| BERT Base (2018)[6] | 110M | Fits on a single GPU |
| GPT-2 XL (2019)[7] | 1.5B | Needs larger GPU memory |
| GPT-3 (2020)[8] | 175B | Requires distributed training and serving |

The progression from 235K to hundreds of billions is the story of modern AI. More parameters means more capacity to store patterns, but also more data, memory, and compute needed to train them.[3]
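A rough back-of-the-envelope calculation shows why scale changes the hardware story. The sketch below estimates the memory needed just to hold the weights, assuming 4 bytes per parameter for 32-bit floats and 2 bytes for 16-bit floats. It uses the rounded parameter counts from the table and ignores everything else a real system needs (activations, optimizer state, and so on), so the numbers are lower bounds.

```python
def weight_memory_gb(num_params, bytes_per_param):
    """Memory for the weights alone, in gigabytes (1 GB = 10**9 bytes)."""
    return num_params * bytes_per_param / 1e9

# Rounded parameter counts taken from the table above.
models = {
    "Digit classifier": 235_146,
    "BERT Base": 110e6,
    "GPT-2 XL": 1.5e9,
    "GPT-3": 175e9,
}

for name, n in models.items():
    fp32 = weight_memory_gb(n, 4)   # 32-bit floats
    fp16 = weight_memory_gb(n, 2)   # 16-bit floats
    print(f"{name:<17} fp32: {fp32:10.4f} GB   fp16: {fp16:10.4f} GB")
```

Under these assumptions the digit classifier needs under a megabyte, while GPT-3's weights alone take about 350 GB at 16-bit precision.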

The universal approximation theorem: why this works at all

Here's the deep reason neural networks are so powerful: in theory, a neural network with a single hidden layer and enough neurons can approximate any continuous function on a bounded input range to arbitrary precision.[9][10]

Think about what that means. Any well-behaved mapping from inputs to outputs, be it recognizing cats in photos, translating between languages, or predicting weather, can in principle be represented by a neural network with enough neurons.

The catch? "Enough neurons" might mean an impractically large number. In practice, deeper networks (more layers, fewer neurons per layer) tend to learn the same patterns much more efficiently than shallow, wide networks. This is why modern AI uses deep networks with many layers rather than one enormous hidden layer.
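The "enough neurons" idea can be seen in a small experiment. The sketch below hand-constructs (rather than trains) a one-hidden-layer ReLU network that approximates $f(x) = x^2$ on $[0, 1]$ by joining straight-line segments, then measures how the worst-case error shrinks as neurons are added. The function name `build_relu_interpolant` and the choice of target function are illustrative assumptions. This is a demonstration of the idea, not the construction used in the theorem's proofs.

```python
import numpy as np

def relu(z):
    return np.maximum(0.0, z)

def build_relu_interpolant(f, n_neurons, lo=0.0, hi=1.0):
    """Hand-build a one-hidden-layer ReLU network that linearly
    interpolates f at n_neurons + 1 evenly spaced points on [lo, hi]."""
    knots = np.linspace(lo, hi, n_neurons + 1)
    values = f(knots)
    slopes = np.diff(values) / np.diff(knots)     # slope of each segment
    out_weights = np.diff(slopes, prepend=0.0)    # change in slope at each knot
    hidden_biases = -knots[:-1]                   # neuron i computes relu(x - knot_i)
    out_bias = values[0]

    def network(x):
        hidden = relu(x[:, None] + hidden_biases[None, :])   # (len(x), n_neurons)
        return hidden @ out_weights + out_bias
    return network

target = lambda x: x ** 2
xs = np.linspace(0.0, 1.0, 1001)

errors = {}
for n in (2, 8, 32):
    net = build_relu_interpolant(target, n)
    errors[n] = float(np.max(np.abs(net(xs) - target(xs))))
    print(f"{n:>2} hidden neurons -> max error {errors[n]:.6f}")
```

Each added neuron contributes one more bend in the curve, so the error keeps falling as the hidden layer widens. It never reaches exactly zero, which is why the theorem speaks of approximation.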

🎯 Production tip: The universal approximation theorem tells you that neural networks can represent any function. It says nothing about whether training will actually find that function. Successful training depends on having enough data, choosing the right architecture, and using proper optimization, all of which are covered in the training and backpropagation article.

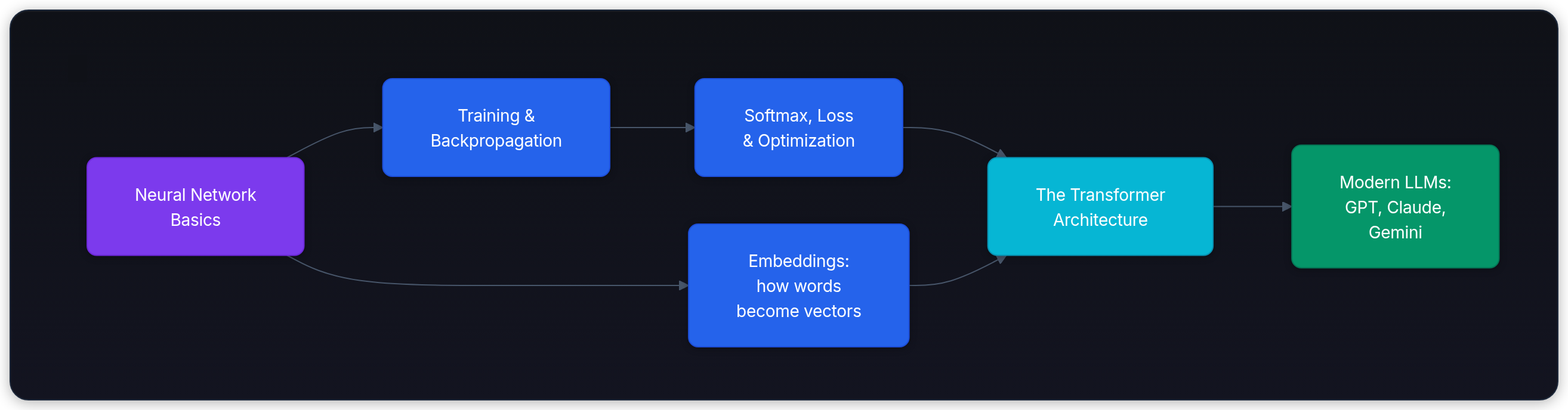

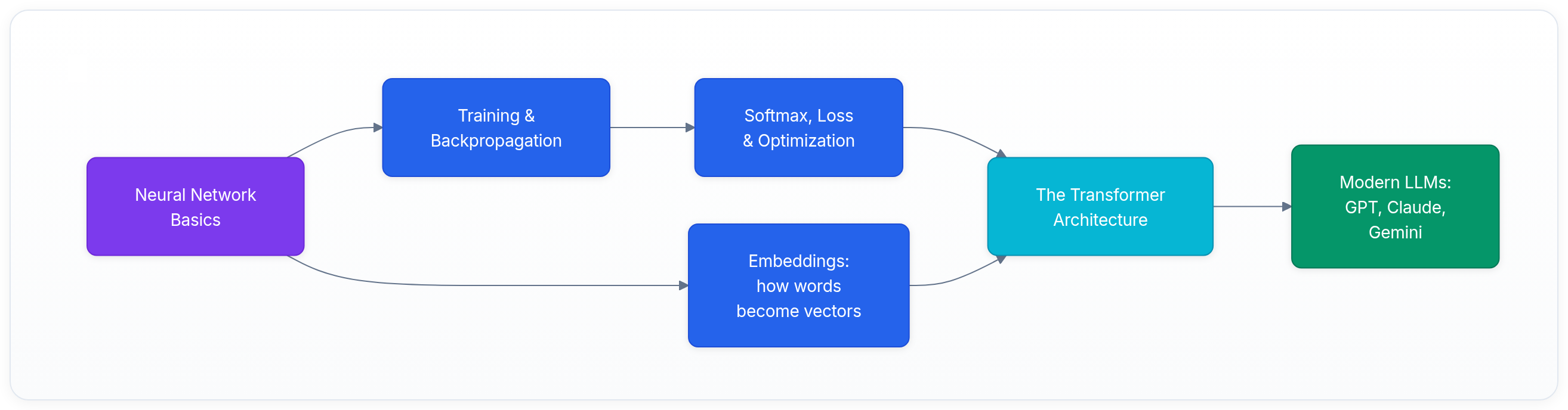

From neurons to modern AI: connecting the dots

You now understand the building blocks of every modern AI system. Here's how they connect to what's coming next in this curriculum:

| Concept from this article | Where it goes next |
|---|---|
| Weights and biases | Training and Backpropagation: how these parameters are learned from data |
| Activation functions (softmax) | Softmax, Loss & Optimization: the math behind classification and model training |
| Layers and forward pass | Transformer Architecture: how attention layers process language |
| Feed-forward networks | The FFN block inside every transformer layer is exactly the kind of network described in this article |
| Parameter counting | Scaling Laws: why bigger models need more data and compute |

The key ideas to take with you

- A neural network is a mathematical function built from layers of simple units (neurons)

- Each neuron computes: weighted sum → add bias → apply activation

- Parameters (weights and biases) are the numbers the network learns during training

- Activation functions add non-linearity, which is what gives networks the power to learn complex patterns

- Depth (more layers) lets networks learn hierarchical features more efficiently

- Everything from spam filters to ChatGPT is built on these same building blocks, just at wildly different scales

Key takeaways

- Neural networks are functions built from layers of neurons, where each neuron computes a weighted sum of its inputs, adds a bias, and applies a non-linear activation

- Without activation functions, any depth of linear layers collapses to a single linear transformation

- Parameters (weights and biases) encode everything the network has learned; modern LLMs have hundreds of billions of them

- The forward pass is data flowing through layers: input → hidden transformations → output

- The universal approximation theorem guarantees that neural networks can, in principle, represent any continuous function given enough neurons; it does not guarantee that training will find it

- Deep networks learn hierarchical features more efficiently than wide, shallow ones

- Everything in this curriculum, from tokenization to transformer architecture, builds on these foundations

Next, continue to Training & Backpropagation, which shows how those parameters are learned by minimizing a loss.

Evaluation Rubric

1. Explains a single neuron as weighted sum + bias + non-linear activation

2. Describes how stacking layers enables learning hierarchical features

3. Traces data flow through a complete forward pass with concrete numbers

4. Explains why non-linear activations are necessary (linear collapse)

5. Contrasts sigmoid, ReLU, and GELU activations with practical tradeoffs

6. Connects the universal approximation theorem to why depth and width matter

7. Calculates parameter counts for a multi-layer network and relates to LLM scale

Common Pitfalls

- Thinking artificial neurons work like biological neurons (they were loosely inspired by them and share the name, but are mathematically very different)

- Assuming more layers always means better performance (without proper training techniques, deep networks fail to learn)

- Forgetting that without non-linear activations, any deep network collapses to a single linear layer

- Confusing parameters (weights and biases the model learns) with hyperparameters (settings humans choose, like learning rate)

- Not realizing that the same neural network architecture can perform completely different tasks depending on what data it's trained on


Key Concepts Tested

- Single neuron: weighted sum, bias, and activation function

- Feed-forward architecture: input, hidden, and output layers

- Parameters (weights and biases) as learned knowledge

- Activation functions: sigmoid, ReLU, GELU

- The forward pass: how data flows through the network

- Universal approximation theorem intuition

- Parameter counting and scale (from hundreds to billions)

References

The Perceptron: A Probabilistic Model for Information Storage and Organization in the Brain.

Rosenblatt, F. · 1958 · Psychological Review, 65(6)

Learning Representations by Back-Propagating Errors.

Rumelhart, D. E., Hinton, G. E., Williams, R. J. · 1986 · Nature, 323

Multilayer Feedforward Networks are Universal Approximators.

Hornik, K., Stinchcombe, M., White, H. · 1989 · Neural Networks, 2(5)

Approximation by Superpositions of a Sigmoidal Function.

Cybenko, G. · 1989 · Mathematics of Control, Signals and Systems, 2(4)

Deep Learning.

Goodfellow, I., Bengio, Y., Courville, A. · 2016

Rectified Linear Units Improve Restricted Boltzmann Machines.

Nair, V., Hinton, G. E. · 2010 · ICML 2010

Gaussian Error Linear Units (GELUs).

Hendrycks, D., Gimpel, K. · 2016

BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding.

Devlin, J., et al. · 2019 · NAACL 2019

Language Models are Unsupervised Multitask Learners.

Radford, A., et al. · 2019

Language Models are Few-Shot Learners.

Brown, T., et al. · 2020 · NeurIPS 2020

ImageNet Classification with Deep Convolutional Neural Networks.

Krizhevsky, A., et al. · 2012 · NeurIPS 2012

Gradient-Based Learning Applied to Document Recognition.

LeCun, Y., Bottou, L., Bengio, Y., Haffner, P. · 1998