
Python for AI Engineering

A beginner-first Python chapter that turns one JSONL eval row into a typed object, exact-match metric, failing test, and reproducible command.


Python is the first workshop tool in this curriculum.

Before you train models, build agents, or deploy services, you need one simple habit: turn messy AI work into small Python functions that another engineer can run.

Imagine a folder with model answers in a JSONL file. Each line has a prompt, an expected answer, and a prediction. Your job isn't to build a chatbot yet. Your job is to load the rows, score them, print a metric, and fail loudly when the data is wrong.

What You Learn

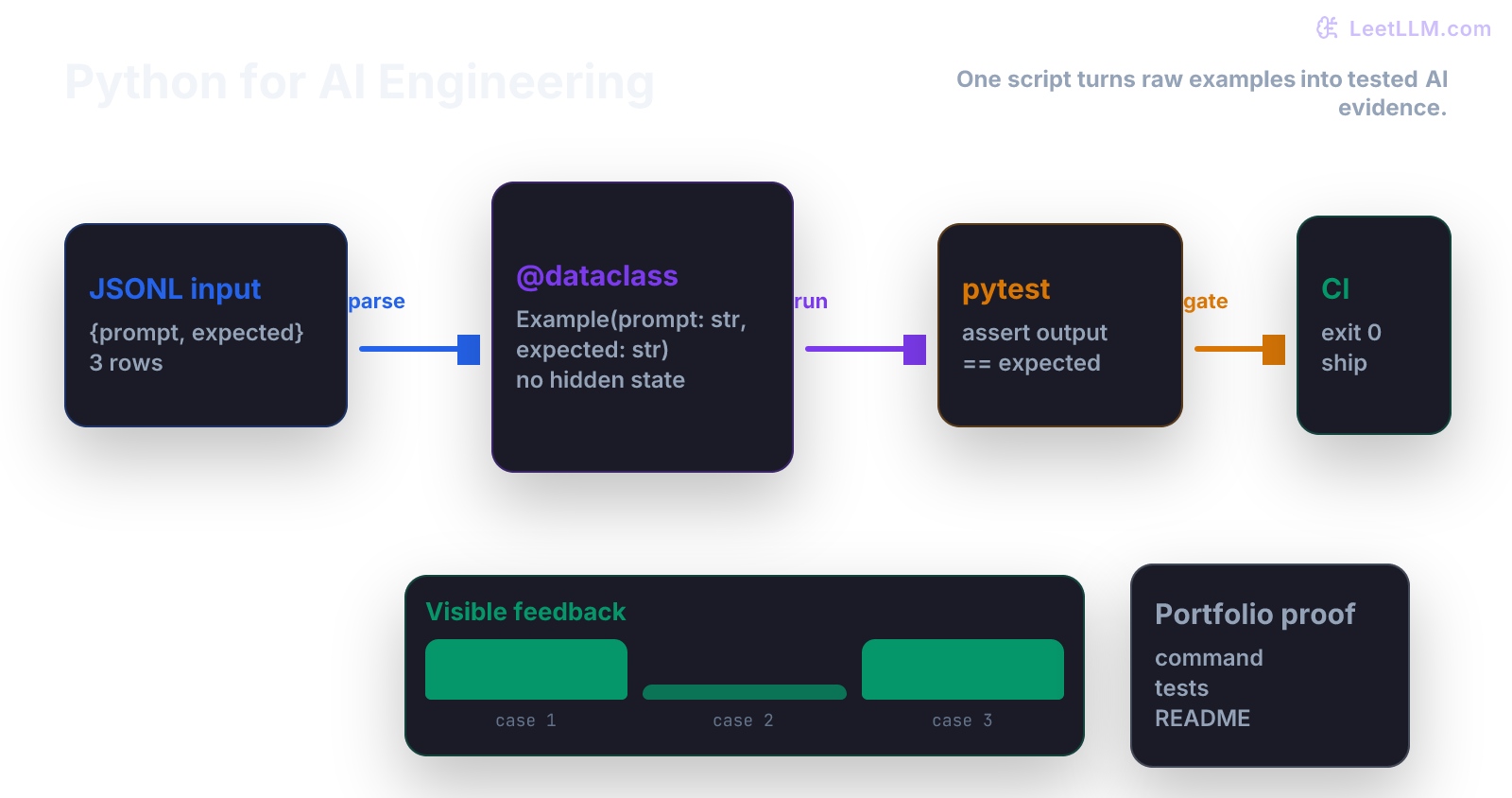

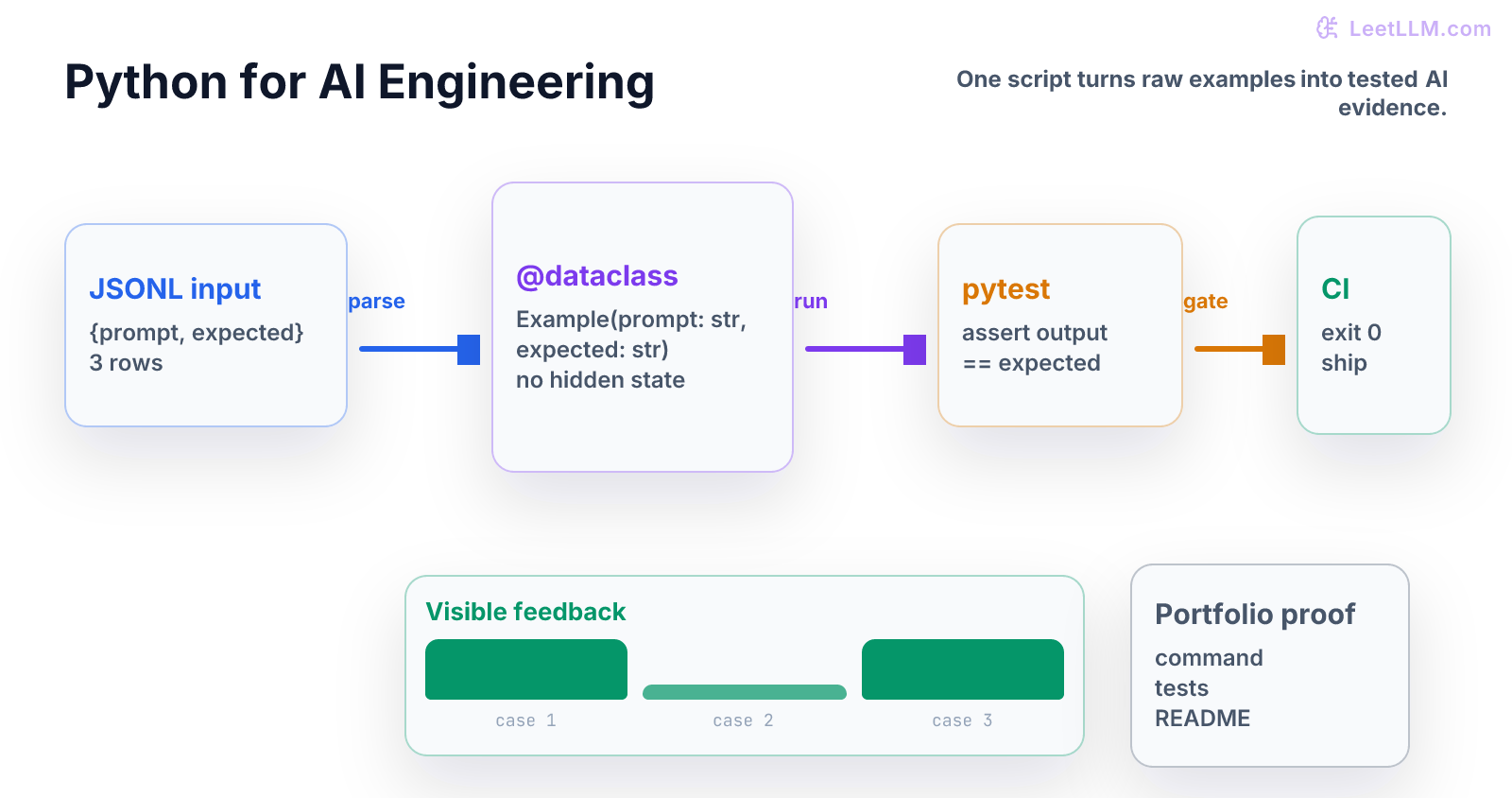

This chapter teaches Python as a repeatable eval tool. By the end, a beginner should be able to explain how one JSONL row becomes a typed object, how one prediction becomes a score, and how one failing assertion prevents a broken script from shipping.[1][2][3]

Step Map

| Step | Question | What you should be able to do |
|---|---|---|
| 1 | What data enters? | Name the fields in one eval row. |
| 2 | What shape should it have? | Store the row in a small typed object. |
| 3 | What operation happens? | Score one prediction against one expected answer. |
| 4 | What can fail? | Catch missing fields and wrong types early. |
| 5 | What ships? | Run one command and get one clear metric. |

Think of the script like a lab scale. It doesn't make the experiment interesting. It makes the measurement trustworthy.

Tiny Story

Start with three examples from a fictional geography eval:

| prompt | expected | prediction |
|---|---|---|
| Capital of France? | Paris | Paris |
| Capital of Spain? | Madrid | Barcelona |
| Capital of Italy? | Rome | Rome |

Two predictions are correct. One is wrong.

Before using any AI framework, you can already answer the important question:

What fraction of rows matched the expected answer?

The answer is 2 matching rows out of 3, or 2 / 3 ≈ 0.667.

That is a metric. Small, plain, and useful.
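The same arithmetic fits in a few lines of plain Python. This is only a sketch of the calculation above; the names `rows`, `matches`, and `accuracy` are illustrative, and the Code Lab later builds the fuller version with parsing.

```python
# (expected, prediction) pairs copied from the table above.
rows = [
    ("Paris", "Paris"),
    ("Madrid", "Barcelona"),
    ("Rome", "Rome"),
]

# Count the rows where the prediction equals the expected answer.
matches = sum(1 for expected, prediction in rows if expected == prediction)
accuracy = matches / len(rows)

print(matches, "of", len(rows), "matched")  # 2 of 3 matched
print("accuracy", round(accuracy, 3))       # accuracy 0.667
```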

Vocabulary

- function: named operation with inputs and an output.

- dataclass: small Python class for storing named fields.

- type hint: label that says what kind of value a function expects or returns.

- JSONL: file format with one JSON object per line.

- metric: number that summarizes behavior, such as exact-match accuracy.

- test: small check that protects an assumption.

- exit code: process result where `0` means success and nonzero means failure.

Each word should point to something in the eval story. If it can't point to a row, field, function, score, or failure, it is floating.
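Of these terms, exit code is the easiest to leave abstract, so here is a minimal sketch of how a script might produce one. The function `check_rows` and the rule it enforces are made up for this illustration; the only standard convention it relies on is that `0` means success and any other value means failure.

```python
import json

# Field names taken from the eval story in this chapter.
REQUIRED_FIELDS = ("prompt", "expected", "prediction")


def check_rows(lines: list[str]) -> int:
    """Return 0 if every line has all required fields, otherwise 1."""
    for number, line in enumerate(lines, start=1):
        row = json.loads(line)
        missing = [field for field in REQUIRED_FIELDS if field not in row]
        if missing:
            print(f"line {number}: missing {missing}")
            return 1  # nonzero tells the shell or CI that the run failed
    return 0  # zero means success


good = ['{"prompt": "Q", "expected": "A", "prediction": "A"}']
bad = ['{"prompt": "Q", "expected": "A"}']

print(check_rows(good))  # 0
print(check_rows(bad))   # prints the missing field, then 1

# A real script would hand this value to the operating system with:
#     sys.exit(check_rows(lines))
```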

Worked Example

One row is a dictionary:

```text
{"prompt": "Capital of France?", "expected": "Paris", "prediction": "Paris"}
```

A beginner might keep it as a dictionary forever. That works for one file, then gets fragile.

A typed object gives the row a name and stable fields:

| Field | Meaning |
|---|---|
| prompt | input question |
| expected | answer we wanted |
| prediction | answer the model gave |

The object isn't fancy. It is a little box with labels.
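To make "fragile" concrete, the sketch below compares a misspelled field name on a dictionary and on a dataclass. The typo `predicton` is deliberate, and the `Example` class mirrors the three fields in the table above.

```python
from dataclasses import dataclass


@dataclass
class Example:
    prompt: str
    expected: str
    prediction: str


row = {"prompt": "Capital of France?", "expected": "Paris", "prediction": "Paris"}
example = Example(**row)

# With a dictionary, a misspelled key passed to .get() quietly returns None,
# so the mistake can travel all the way into a metric.
print(row.get("predicton"))

# With the dataclass, the same misspelling fails at the line that made it.
try:
    example.predicton
except AttributeError:
    print("AttributeError raised")
```

The dictionary version keeps running with a `None`; the dataclass version stops where the mistake was made.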

Code Lab

Put this in python_eval_demo.py:

```python
from dataclasses import dataclass
import json


@dataclass
class Example:
    """One eval row with stable, named fields."""

    prompt: str
    expected: str
    prediction: str


def parse_example(line: str) -> Example:
    """Turn one JSONL line into an Example; a missing field raises KeyError."""
    row = json.loads(line)
    return Example(
        prompt=row["prompt"],
        expected=row["expected"],
        prediction=row["prediction"],
    )


def exact_match(example: Example) -> bool:
    """Compare prediction and expected answer after stripping whitespace."""
    return example.prediction.strip() == example.expected.strip()


raw_rows = [
    '{"prompt": "Capital of France?", "expected": "Paris", "prediction": "Paris"}',
    '{"prompt": "Capital of Spain?", "expected": "Madrid", "prediction": "Barcelona"}',
    '{"prompt": "Capital of Italy?", "expected": "Rome", "prediction": "Rome"}',
]

examples = [parse_example(row) for row in raw_rows]
# True counts as 1 and False as 0, so the mean of the booleans is the accuracy.
score = sum(exact_match(example) for example in examples) / len(examples)

print("rows", len(examples))
print("exact_match", round(score, 3))
```

Expected output:

```text
rows 3
exact_match 0.667
```

Read the code as a pipeline:

| Line of work | Meaning |
|---|---|
| parse JSON | turn text into data |
| build `Example` | give fields stable names |
| compare strings | score one row |
| average booleans | score the whole file |

Python is doing ordinary bookkeeping. That bookkeeping is what makes later model work inspectable.
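The Code Lab keeps its rows in a Python list so it runs without any files. Reading the same rows from a JSONL file on disk might look like the sketch below. The helper name `load_examples`, the file name `eval.jsonl`, and the decision to skip blank lines are assumptions for illustration, not part of the chapter's script.

```python
from dataclasses import dataclass
import json
import os
import tempfile


@dataclass
class Example:
    prompt: str
    expected: str
    prediction: str


def load_examples(path: str) -> list[Example]:
    """Read one Example per non-blank line of a JSONL file."""
    examples = []
    with open(path, encoding="utf-8") as handle:
        for line in handle:
            if not line.strip():
                continue  # assumption: blank lines are tolerated, not errors
            row = json.loads(line)
            # A missing field still raises KeyError, as in the Code Lab.
            examples.append(
                Example(row["prompt"], row["expected"], row["prediction"])
            )
    return examples


# Write a tiny JSONL file to a temporary folder, then read it back.
with tempfile.TemporaryDirectory() as folder:
    path = os.path.join(folder, "eval.jsonl")
    with open(path, "w", encoding="utf-8") as handle:
        handle.write('{"prompt": "Capital of France?", "expected": "Paris", "prediction": "Paris"}\n')
        handle.write('{"prompt": "Capital of Spain?", "expected": "Madrid", "prediction": "Barcelona"}\n')
    examples = load_examples(path)

print("rows", len(examples))  # rows 2
```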

Second Worked Example: Fail Early

Now break one row:

```python
bad_row = '{"prompt": "Capital of France?", "expected": "Paris"}'

try:
    parse_example(bad_row)
except KeyError as error:
    print("missing", error.args[0])
```

Expected output:

```text
missing prediction
```

Read The Failure

That failure is good.

It tells you the input is wrong before a metric report lies to you. In real AI systems, silent bad data is worse than a loud crash.
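A missing field is only one way a row can be wrong. Dataclass annotations are not checked at runtime, so a row with `"prediction": 42` would parse without complaint and only fail later, when `strip()` is called on an integer. One possible guard, sketched here with a hypothetical `parse_example_strict`, checks each field's type while parsing.

```python
from dataclasses import dataclass
import json


@dataclass
class Example:
    prompt: str
    expected: str
    prediction: str


def parse_example_strict(line: str) -> Example:
    """Parse one JSONL line, rejecting missing fields and non-string values."""
    row = json.loads(line)
    values = {}
    for field in ("prompt", "expected", "prediction"):
        value = row[field]  # still raises KeyError when the field is missing
        if not isinstance(value, str):
            raise TypeError(f"{field} must be a string, got {type(value).__name__}")
        values[field] = value
    return Example(**values)


wrong_type_row = '{"prompt": "Capital of France?", "expected": "Paris", "prediction": 42}'

try:
    parse_example_strict(wrong_type_row)
except TypeError as error:
    print("wrong type:", error)  # wrong type: prediction must be a string, got int
```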

Mini Test

Add tests beside the demo:

```python
# Assumes this test file sits next to python_eval_demo.py.
from python_eval_demo import Example, exact_match, parse_example


def test_exact_match_strips_whitespace():
    example = Example("Q", "Paris", " Paris ")
    assert exact_match(example)


def test_parse_example_requires_prediction():
    bad_row = '{"prompt": "Q", "expected": "A"}'
    try:
        parse_example(bad_row)
    except KeyError as error:
        # The KeyError carries the name of the missing field.
        assert error.args[0] == "prediction"
    else:
        raise AssertionError("missing prediction should fail")
```

These tests protect two beginner lessons:

- string cleanup belongs in the metric

- missing required fields should fail before scoring

Common Trap

The common trap is notebook-only code.

Notebook-only code usually has hidden state:

| Hidden thing | Why it hurts |
|---|---|
| variable from an old cell | rerun order changes the answer |
| local file path | teammate can't reproduce the script |
| copied metric code | bugs drift between notebooks |
| no tests | broken input still prints a score |

A small script is less glamorous, but it is easier to trust.

From Script To Command

The next step is a command that accepts paths:

```python
def main(input_path: str, output_path: str) -> None:
    print("input", input_path)
    print("output", output_path)
```

This tiny shape matters:

| Input | Output |
|---|---|
| path to examples | metric file |
| path to predictions | error log |
| config value | exit code |

Later chapters will use the same pattern for NumPy arrays, PyTorch tensors, RAG evals, and deployment checks.
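Filled in, that shape might look like the sketch below: paths go in, a metric file and an exit code come out. Several details are assumptions rather than the chapter's own design: the `cli` wrapper, the two positional arguments, the JSON layout of the metric file, and the choice to treat an empty input as a failure. Only `argparse` and `json` from the standard library are used.

```python
import argparse
import json
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Example:
    prompt: str
    expected: str
    prediction: str


def parse_example(line: str) -> Example:
    row = json.loads(line)
    return Example(row["prompt"], row["expected"], row["prediction"])


def exact_match(example: Example) -> bool:
    return example.prediction.strip() == example.expected.strip()


def main(input_path: str, output_path: str) -> int:
    """Score a JSONL file, write the metric as JSON, and return an exit code."""
    try:
        with open(input_path, encoding="utf-8") as handle:
            examples = [parse_example(line) for line in handle if line.strip()]
    except (OSError, KeyError, json.JSONDecodeError) as error:
        print("error", repr(error))
        return 1  # bad input: fail before any score is printed
    if not examples:
        print("error no rows found")
        return 1
    score = sum(exact_match(example) for example in examples) / len(examples)
    with open(output_path, "w", encoding="utf-8") as handle:
        json.dump({"rows": len(examples), "exact_match": round(score, 3)}, handle)
    print("exact_match", round(score, 3))
    return 0


def cli(argv: Optional[Sequence[str]] = None) -> int:
    """Parse command-line arguments (hypothetical names) and run main."""
    parser = argparse.ArgumentParser(description="Exact-match eval over a JSONL file.")
    parser.add_argument("input_path", help="JSONL file with prompt, expected, prediction")
    parser.add_argument("output_path", help="where to write the metric as JSON")
    args = parser.parse_args(argv)
    return main(args.input_path, args.output_path)


# To expose this as a command, a script would typically end with:
#     if __name__ == "__main__":
#         raise SystemExit(cli())
```

Returning the exit code from `main` instead of calling `sys.exit` inside it keeps the function easy to test: a test can call `main` with two paths and assert on the integer it gets back.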

Practice

- Add a fourth row where prediction equals expected.

- Predict the new exact-match score before running code.

- Add a row with extra whitespace around the prediction.

- Confirm `exact_match` still treats it as correct.

- Add a row missing `expected` and make sure it fails loudly.

- Write one sentence that starts: "This script is trustworthy because..."

Production Check

Before you trust a Python eval script, write down:

- input file format

- required fields

- metric definition

- behavior on empty files

- behavior on missing fields

- command used to run it

- test command

- expected output example

For example:

Input is JSONL with `prompt`, `expected`, and `prediction`. Metric is exact match after stripping whitespace. Missing fields raise `KeyError`. Tests cover whitespace and missing fields.

That note is small. It is also the difference between "I ran some code" and "another engineer can reproduce this result."
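One item on that checklist, behavior on empty files, is easy to overlook: the Code Lab expression `sum(...) / len(examples)` raises `ZeroDivisionError` when there are no rows. A possible way to make that case explicit is sketched below. The function name `exact_match_accuracy` and the choice to raise `ValueError` are assumptions; exiting with a nonzero code would be equally defensible.

```python
from dataclasses import dataclass


@dataclass
class Example:
    prompt: str
    expected: str
    prediction: str


def exact_match(example: Example) -> bool:
    return example.prediction.strip() == example.expected.strip()


def exact_match_accuracy(examples: list[Example]) -> float:
    """Return the fraction of matching rows, refusing to score an empty list."""
    if not examples:
        # Fail with a readable message instead of a bare ZeroDivisionError.
        raise ValueError("no examples to score; is the input file empty?")
    return sum(exact_match(example) for example in examples) / len(examples)


try:
    exact_match_accuracy([])
except ValueError as error:
    print("refused:", error)
```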

Next, continue to NumPy and Tensor Shapes. Python gave you named functions and testable rows. NumPy turns rows into arrays, and arrays need shape discipline.

Evaluation Rubric

1. Explains Python eval scripts as repeatable data pipelines from row to object to metric

2. Builds a dataclass parser, exact-match scorer, and tests with visible output

3. Catches missing fields, hidden notebook state, and unreproducible inputs before scoring

Common Pitfalls

- The common failure is notebook-only code. It works once, then breaks when another person changes paths, environment variables, or input shape.

- Hidden notebook state makes a result hard to reproduce. Prefer a small script with inputs, outputs, and tests.

- A metric is only useful if malformed rows fail before the score is printed.


Key Concepts Tested

- functions and modules

- type hints

- file IO

- JSONL datasets

- testable CLI scripts

- error handling

References