
Language Modeling & Next Tokens

Discover how the simple objective of predicting the next word leads to complex reasoning, and understand the concept of autoregressive generation.


By now, we understand the architecture of the Transformer, how it processes sequences, and how we measure its mistakes using cross-entropy loss. But what exactly is the model doing?

If you strip away the hype, a large language model (LLM) does one core thing: it predicts the next token.

This article explains language modeling, autoregressive generation, and how the deceptively simple task of "guessing the next token" can produce broad capabilities.

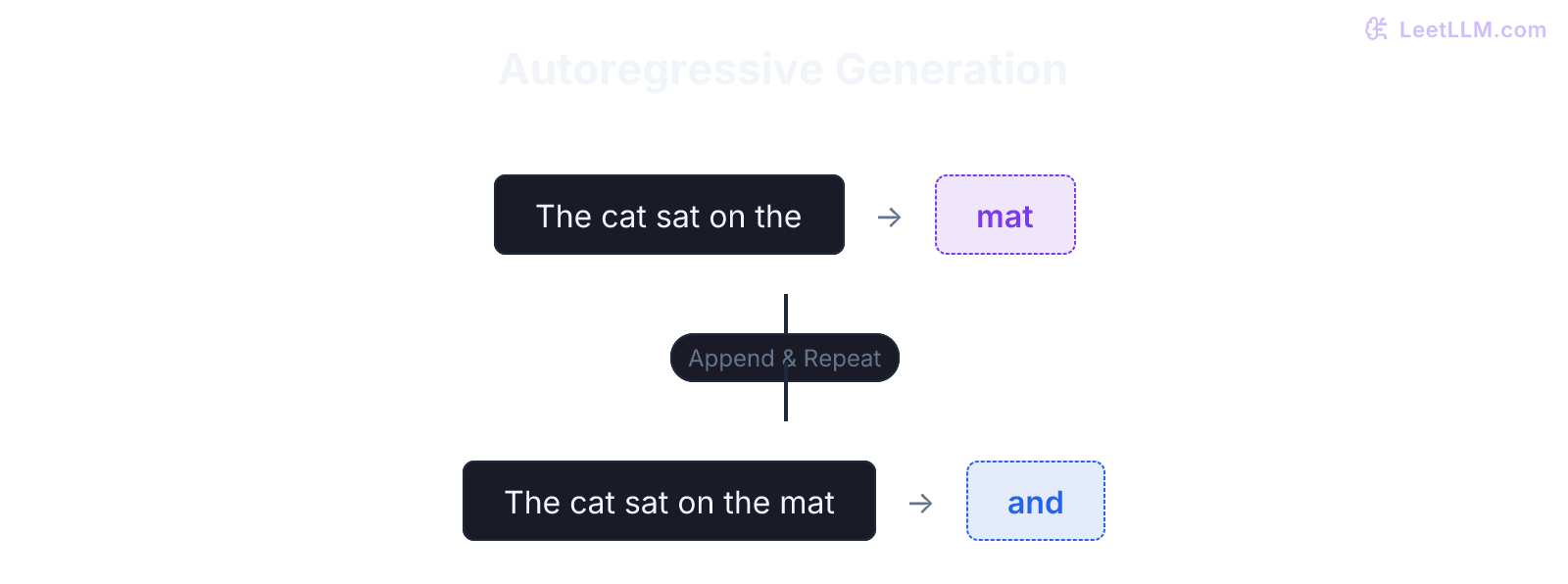

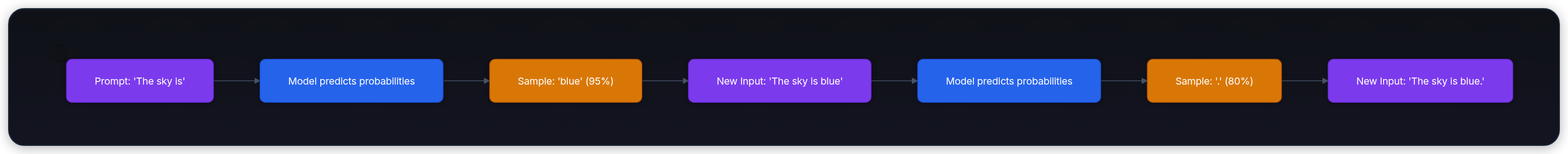

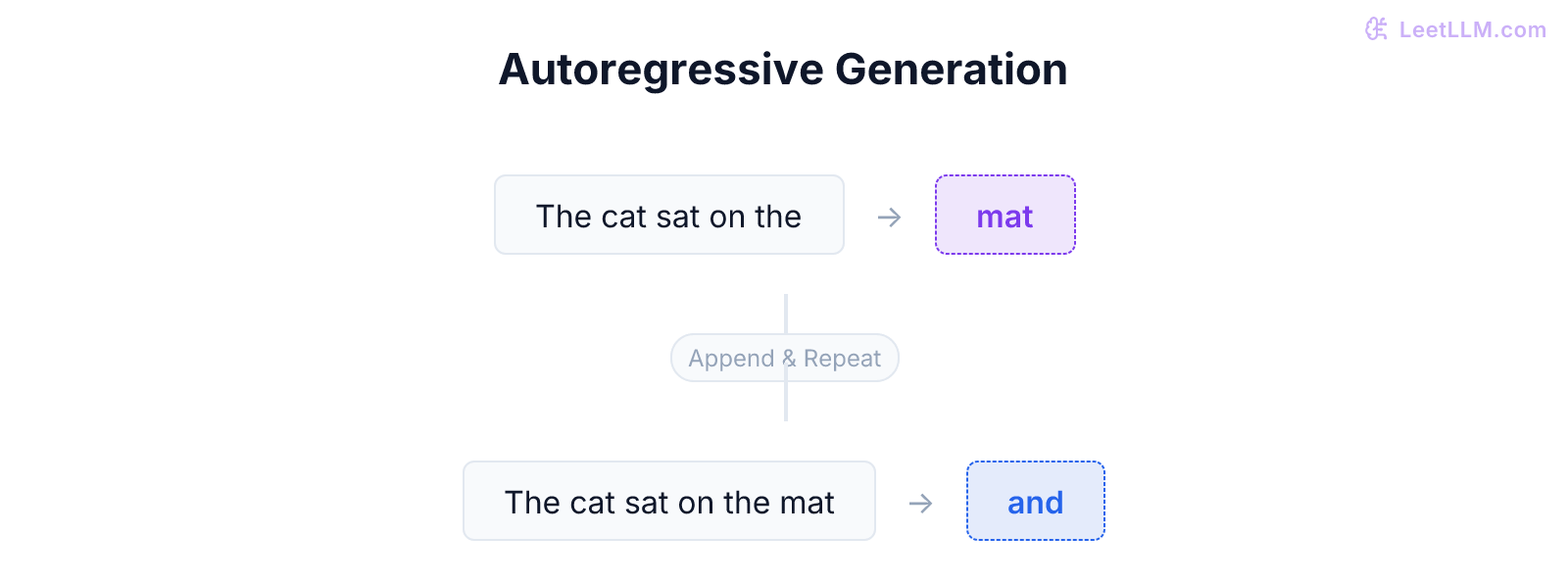

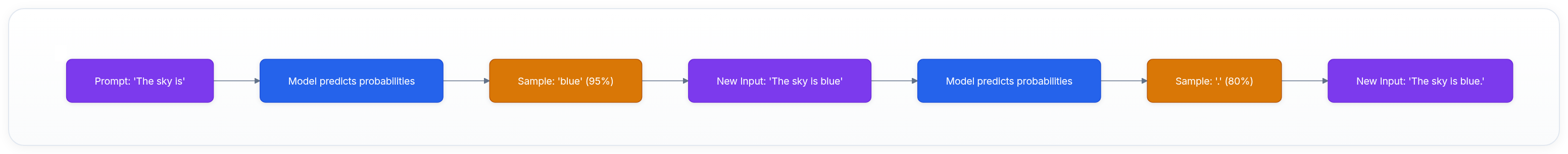

💡 Key insight: An LLM doesn't generate a whole paragraph at once. It predicts a single token, appends it to its input, and runs the process again. This is called autoregressive generation.

What is a language model?

At its core, a language model is a probability engine. Given a sequence of text, it assigns a probability to every token in its vocabulary, indicating how likely each one is to come next.

If you give a language model the prompt:

"The cat sat on the"

It will output a probability distribution over its 50,000+ vocabulary tokens:

mat (85%), floor (10%), couch (3%), apple (0.0001%)

Probability table

| Candidate token | Probability | What to notice |
|---|---|---|
| mat | 85% | Highest probability becomes the default continuation. |
| floor | 10% | Plausible alternatives remain available when sampling. |
| couch | 3% | Lower-ranked words can appear with higher temperature. |
| apple | 0.0001% | Grammatically possible tokens can still be semantically unlikely. |
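To make the table concrete, here is a minimal sketch of how raw model scores (logits) are typically turned into probabilities with a softmax, and how temperature reshapes them. The four-token vocabulary and the logit values are made up for illustration; a real model produces one logit per vocabulary entry.

```python
import math

def softmax(logits, temperature=1.0):
    """Convert raw scores (logits) into probabilities that sum to 1."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical logits for a tiny 4-token vocabulary (illustrative values only).
vocab = ["mat", "floor", "couch", "apple"]
logits = [5.0, 2.9, 1.7, -8.0]

probs = softmax(logits)                 # temperature 1.0: the plain softmax
hot = softmax(logits, temperature=2.0)  # higher temperature flattens the distribution

for token, p, h in zip(vocab, probs, hot):
    print(f"{token:>6}  T=1.0: {p:.4f}  T=2.0: {h:.4f}")
```

Raising the temperature moves probability away from the top token and toward lower-ranked ones, which is why words like "couch" show up more often at higher temperature.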

Causal language modeling

This specific flavor of language modeling, reading from left to right and predicting the future, is called causal language modeling (or autoregressive modeling). GPT-style models use this objective.[1][2]

It's called "causal" because the model is bound by causality: it can only look at the past to predict the future. It can't peek ahead. When predicting the 5th token, an attention mask hides the 5th token itself and everything after it, so the model sees only tokens 1 through 4.

Models like GPT (Generative Pre-trained Transformer) and Llama are Causal Language Models.
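The "no peeking ahead" rule can be sketched as a lower-triangular mask. This is a simplified illustration in plain Python, not any particular library's implementation; `causal_mask` is a hypothetical helper name. Positions are 0-indexed, and the output at position `i` is what the model uses to predict the token at position `i + 1`.

```python
def causal_mask(seq_len):
    """mask[i][j] is True when position i is allowed to attend to position j."""
    return [[j <= i for j in range(seq_len)] for i in range(seq_len)]

mask = causal_mask(7)

# Print the mask: "1" means visible, "." means blocked.
# Each row can see itself and earlier positions, never later ones.
for row in mask:
    print(" ".join("1" if allowed else "." for allowed in row))
```

In a real Transformer the blocked entries are usually set to a very large negative value before the attention softmax, so they receive effectively zero weight.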

| Objective | Can read | Good for | Poor fit |
|---|---|---|---|
| Causal language modeling | Past tokens only | Generating text left to right | Filling a middle blank with future context |
| Masked language modeling | Left and right context | Classification, extraction, sentence understanding | Open-ended generation |

(Note: There is another type called masked language modeling, used by BERT, where a word in the middle of a sentence is hidden and the model looks at both the left and right context to guess the blank. This works well for reading comprehension but poorly for open-ended generation, which is why modern generative LLMs are almost entirely causal.)

The autoregressive loop

When you ask ChatGPT to write a poem, it doesn't plan out the rhyming structure in advance and output the whole poem in one go. It generates the poem exactly one token at a time in a loop.

This is the autoregressive generation loop:

- Input: The model receives the prompt.

- Predict: It calculates the probabilities for the very next token.

- Sample: We pick a token from those probabilities. Greedy decoding always takes the most likely token; sampling with a temperature setting adds controlled randomness.

- Append: That chosen token is glued to the end of the prompt.

- Repeat: The new, longer prompt is fed back into the model to predict the next token.

This explains why generation speed is measured in tokens per second (t/s). The model has to run a forward pass through its parameters for every generated token.
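The five steps above can be sketched in a few lines. Everything here is a toy under stated assumptions: `TOY_MODEL` is a made-up lookup table standing in for a real network (it looks only at the last token, whereas a real LLM conditions on the whole sequence), and `predict`, `sample`, and `generate` are hypothetical helper names.

```python
import random

# Hypothetical stand-in for a real model: maps the last token to a
# next-token distribution. A real LLM conditions on the whole sequence.
TOY_MODEL = {
    "the": {"cat": 0.6, "mat": 0.4},
    "cat": {"sat": 1.0},
    "sat": {"on": 1.0},
    "on": {"the": 1.0},
    "mat": {"<eos>": 1.0},
}

def predict(tokens):
    """Predict: return {token: probability} for the next position."""
    return TOY_MODEL.get(tokens[-1], {"<eos>": 1.0})

def sample(probs, temperature, rng):
    """Sample: greedy when temperature is 0, otherwise a weighted random draw."""
    if temperature == 0:
        return max(probs, key=probs.get)
    candidates = list(probs)
    # p ** (1/T) matches dividing the logits by T before the softmax.
    weights = [probs[t] ** (1.0 / temperature) for t in candidates]
    return rng.choices(candidates, weights=weights, k=1)[0]

def generate(prompt, max_new_tokens=10, temperature=0.0, seed=0):
    rng = random.Random(seed)
    tokens = list(prompt)                       # Input
    for _ in range(max_new_tokens):             # Repeat
        next_token = sample(predict(tokens), temperature, rng)
        if next_token == "<eos>":               # stop token ends generation
            break
        tokens.append(next_token)               # Append
    return tokens

print(" ".join(generate(["the"], max_new_tokens=4)))
```

Each pass through the `for` loop corresponds to one forward pass of the model, so the number of generated tokens directly determines how many passes are needed.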

The context window limit

Because the output is constantly being appended to the input, the text the model has to read keeps growing. If you generate a 1,000-word essay, by the time it writes the last word the model is reading the original prompt plus the 999 words it has already generated.

This growing sequence must fit into the model's context window, its short-term memory limit. If the context window is 8,000 tokens and the prompt plus generated text reaches 8,001 tokens, the model can't continue without dropping earlier context.
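One simple way to handle an overflowing context is a sliding window that drops the oldest tokens. The sketch below assumes that strategy purely for illustration; `fit_to_context` is a hypothetical helper, and real systems may instead stop generating, return an error, or summarize earlier text.

```python
def fit_to_context(tokens, context_window):
    """Keep only the most recent `context_window` tokens (sliding window)."""
    if context_window <= 0:
        raise ValueError("context_window must be positive")
    return tokens[-context_window:]

# Stand-in token IDs: 8,001 tokens against an 8,000-token window.
tokens = list(range(8001))
visible = fit_to_context(tokens, 8000)

print(len(visible), visible[0], visible[-1])
```

With this strategy the very first token (ID 0 here) is no longer visible to the model, which is the "dropping earlier context" described above.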

How does "guessing words" lead to intelligence?

A common critique of LLMs is "it's just a stochastic parrot; it's just autocomplete."

If it's autocomplete, how can it write code, solve logic puzzles, or translate languages?

The answer lies in scale, diversity, and optimization. When you force a neural network to predict the next token across huge mixtures of code, math, dialogue, documentation, and books, simple word association isn't enough to minimize the loss function.
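As a reminder of what "minimizing the loss" means for a single prediction, here is a minimal sketch of the cross-entropy loss for one next-token step: the negative log of the probability the model gave to the token that actually came next. The probabilities reuse the illustrative "The cat sat on the" example, and `next_token_loss` is a hypothetical helper name.

```python
import math

def next_token_loss(probs, target):
    """Cross-entropy for one step: -log of the probability given to the true token."""
    return -math.log(probs[target])

# Illustrative distribution from the "The cat sat on the" example.
probs = {"mat": 0.85, "floor": 0.10, "couch": 0.03}

loss_if_mat = next_token_loss(probs, "mat")      # model was confident and right
loss_if_couch = next_token_loss(probs, "couch")  # model gave the true token little mass

print(f"true token 'mat':   loss = {loss_if_mat:.3f}")
print(f"true token 'couch': loss = {loss_if_couch:.3f}")
```

Training averages this quantity over a very large number of positions, so any internal feature that raises the probability of the correct token across many contexts lowers the loss.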

Worked prompt

Consider this prompt:

"If John has 5 apples and eats 2, he has"

| Model family | Likely shortcut | Better internal feature |
|---|---|---|
| Markov-style autocomplete | Nearby token pattern like 2, 3 | None; it mostly follows local statistics. |
| Large language model | Still predicts one token | A subtraction-like representation helps reduce loss across many examples. |

A simple Markov chain (basic autocomplete) might look at the word "2" and guess "3" because "2, 3" is common. But an LLM can learn that, across many similar math problems, representing subtraction helps it predict the next token more reliably than memorizing every possible combination of numbers.
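To see why local statistics fall short, here is a minimal bigram (first-order Markov) predictor. The tiny corpus is made up for illustration, and `train_bigram` and `bigram_predict` are hypothetical helper names. Because the model looks only at the last token, two prompts with different arithmetic get exactly the same guess.

```python
from collections import Counter, defaultdict

def train_bigram(corpus_tokens):
    """Count how often each token follows each other token."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(corpus_tokens, corpus_tokens[1:]):
        counts[prev][nxt] += 1
    return counts

def bigram_predict(counts, last_token):
    """Return the most frequent follower of `last_token`, or None if unseen."""
    followers = counts.get(last_token)
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Made-up training text: "has" is followed by "3" more often than by "7".
corpus = "he has 3 apples . she has 3 pens . he has 7 books .".split()
counts = train_bigram(corpus)

prompt_a = "If John has 5 apples and eats 2 , he has".split()
prompt_b = "If John has 9 apples and eats 1 , he has".split()

# Only the final token ("has") influences the guess, so both prompts agree.
guess_a = bigram_predict(counts, prompt_a[-1])
guess_b = bigram_predict(counts, prompt_b[-1])
print(guess_a, guess_b)
```

The first guess happens to be right (5 minus 2 is 3) and the second is wrong (9 minus 1 is 8), and the bigram model has no way to tell the difference.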

To predict text from a complex world, the model has pressure to learn useful approximations of that world. Grammar, facts, reasoning patterns, and code structure become helpful internal representations for minimizing next-token prediction loss.

Summary

- Language models output a probability distribution over a vocabulary for the next token.

- Causal language modeling strictly looks at the past to predict the future.

- Autoregressive generation is a loop where the predicted token is appended to the input, and the process repeats.

- The context window is the maximum number of tokens the model can hold in this input loop.

- Reasoning-like behavior can emerge because representing logic and world knowledge is an efficient way to reduce next-token prediction error at massive scale.

Next, continue to From GPT to Modern LLMs, which shows how next-token training grew into the modern model family.

Evaluation Rubric

1. Defines what a language model is in terms of a probability distribution over a vocabulary
2. Explains the autoregressive generation loop step by step
3. Contrasts causal (left-to-right) modeling with masked (fill-in-the-blank) modeling
4. Discusses why predicting the next word naturally forces the model to learn world knowledge

Common Pitfalls

- Assuming LLMs have an internal 'thinking' loop separate from word prediction (the 'thinking' is entirely encoded in the word prediction process)

- Confusing the context window (how much it can read at once) with the vocabulary (the set of valid words it can generate)

- Thinking the model generates whole sentences at once


Key Concepts Tested

- The core objective of a language model: predicting the next token given a sequence of tokens

- Autoregressive generation: appending the predicted token to the input and looping

- Causal language modeling vs masked language modeling (GPT vs BERT)

- Context window limits and why generation slows down over time

- How next-token prediction forces a model to learn grammar, facts, and reasoning

References