
The LLM Lifecycle

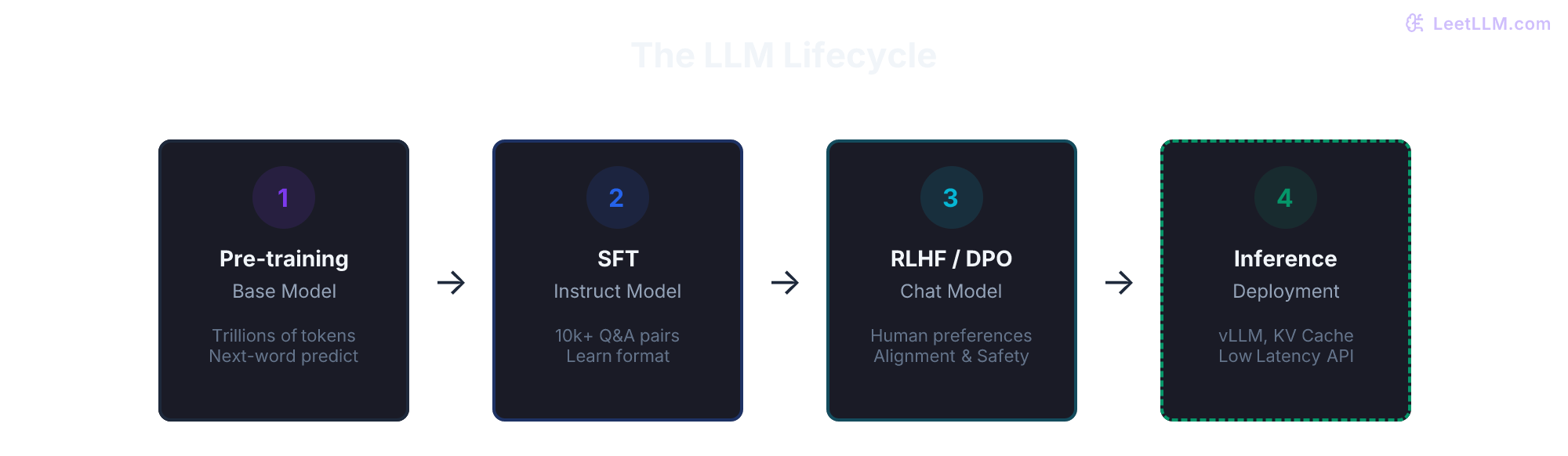

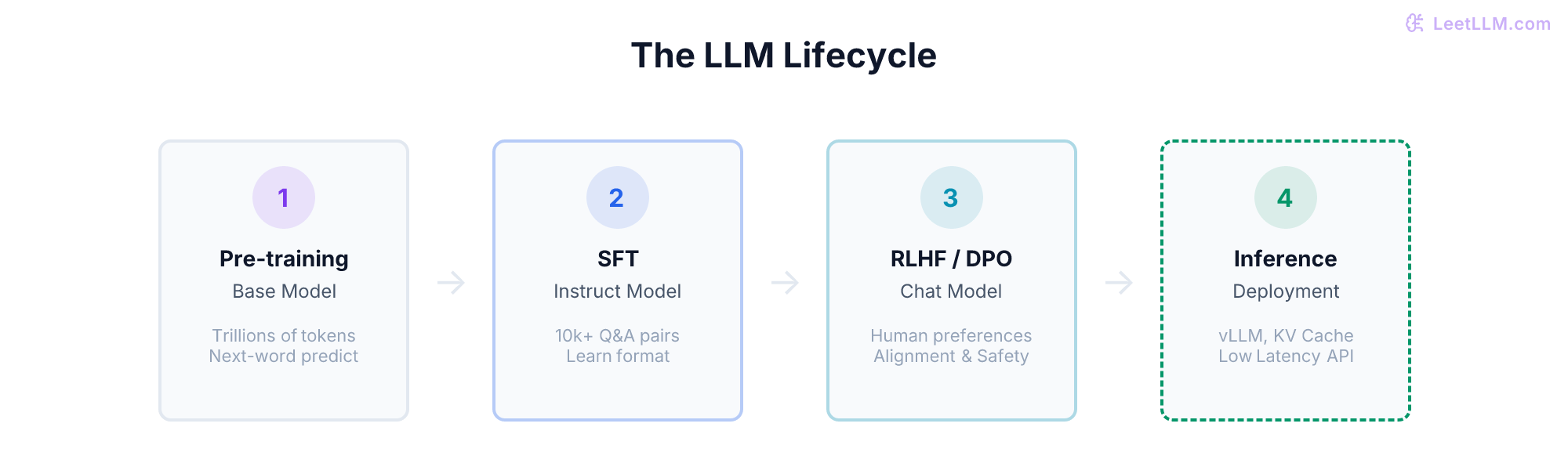

A high-level map of how an AI goes from a pile of empty GPUs to a production API: Pre-training, Fine-Tuning, Alignment, and Deployment.


You've reached the end of the Preparation & Prerequisites section. You now understand what neural networks are, how they learn via backpropagation, how they output probabilities using softmax, how Transformers generate text, and how prompts guide their behavior.

Before you dive into the technical engineering chapters of LeetLLM, it's helpful to have a map of the territory. How is an LLM built?

Creating a frontier-class model follows a multi-stage lifecycle.

💡 Key insight: You don't train a chatbot from scratch. You train a massive text predictor, then shape it into an assistant.

Lifecycle map

| Stage | Input | Output | Engineer question |
|---|---|---|---|
| Pre-training | Web-scale text and code | Base model | Is data quality high enough for the compute spend? |
| SFT | Prompt-response examples | Instruct model | Does the model follow the product format? |
| Preference tuning | Ranked answers | Chat model | Does behavior match human preference and safety goals? |
| Inference | Frozen weights and requests | Served API | Can latency, cost, and reliability meet the product bar? |

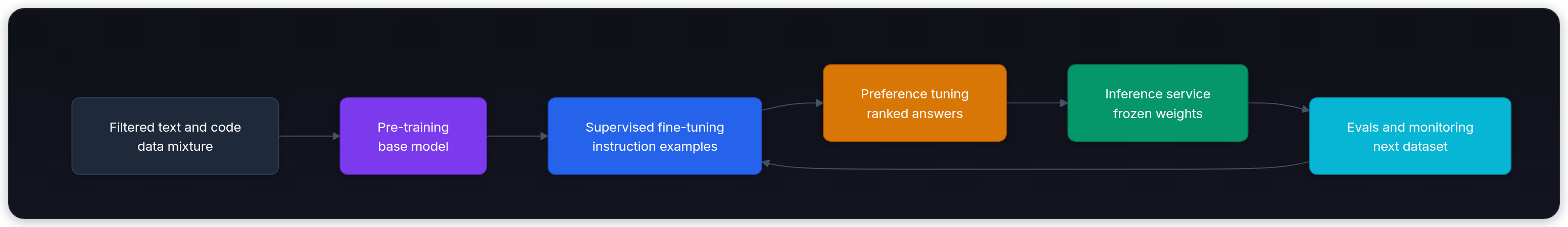

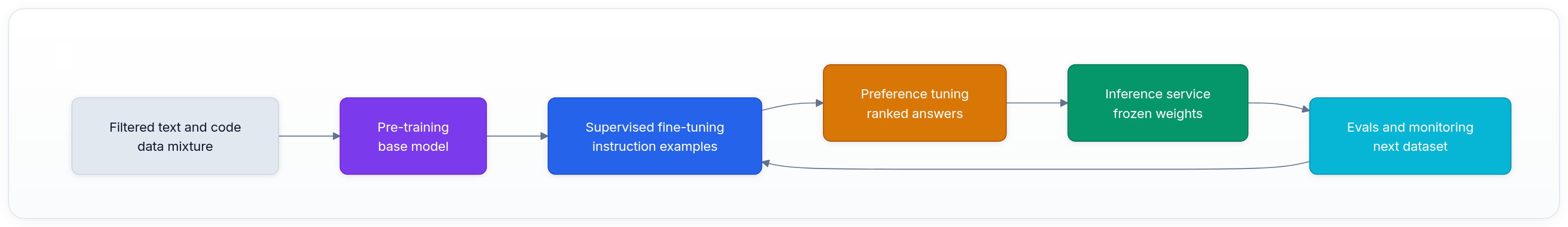

The lifecycle is a feedback loop, not a one-way checklist. Serving the model reveals failures, and those failures feed the next training or tuning run.

Stage 1: pre-training (the foundation)

This is where most of the compute budget goes.

You start with a massive neural network whose parameters are random. You train it on a huge mixture of text and code: web pages, books, papers, documentation, and repositories.

The objective is simple: next-token prediction. The model reads a chunk of text, guesses the next token, calculates cross-entropy loss, and uses backpropagation to update its weights.[1][2]
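To make the loop concrete, here is a minimal sketch of next-token prediction on a toy corpus. It is not how a real LLM is built: the "model" is only a table of bigram logits, the weights start at zero instead of random values, and the names `train_bigram`, `lr`, and `epochs` are assumptions made for illustration. The structure of each step is the same, though: predict, measure cross-entropy, update the weights.

```python
import math

def softmax(logits):
    # Subtract the max for numerical stability before exponentiating.
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def train_bigram(token_ids, vocab_size, lr=0.5, epochs=50):
    # One row of logits per previous token (zeros stand in for random init).
    weights = [[0.0] * vocab_size for _ in range(vocab_size)]
    pairs = list(zip(token_ids[:-1], token_ids[1:]))
    history = []
    for _ in range(epochs):
        total_loss = 0.0
        for prev, nxt in pairs:
            probs = softmax(weights[prev])      # guess the next token
            total_loss += -math.log(probs[nxt])  # cross-entropy loss
            # Gradient of cross-entropy w.r.t. the logits is probs - one_hot(target).
            for j in range(vocab_size):
                grad = probs[j] - (1.0 if j == nxt else 0.0)
                weights[prev][j] -= lr * grad    # gradient descent update
        history.append(total_loss / len(pairs))
    return weights, history

text = "the cat sat on the mat the cat sat on the rug".split()
vocab = sorted(set(text))
token_to_id = {tok: i for i, tok in enumerate(vocab)}
ids = [token_to_id[t] for t in text]

weights, history = train_bigram(ids, len(vocab))
print(f"average loss: {history[0]:.3f} -> {history[-1]:.3f}")

# After training, the most likely token after "cat" should be "sat".
row = weights[token_to_id["cat"]]
print("after 'cat':", vocab[row.index(max(row))])
```

A real pre-training run replaces the lookup table with a Transformer and the hand-written gradient with automatic differentiation, but the objective is unchanged.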

- Data: Trillions of tokens. Unstructured and raw.

- Compute: Thousands of GPUs running for months.

- Output: A "base model".

A base model contains a lot of knowledge, but it's awkward for consumers. If you prompt a base model with "How do I bake a cake?", it might continue with "How do I bake a pie? How do I bake cookies?" because a list of similar questions is a plausible continuation of that text. It predicts what comes next; it hasn't been trained to answer like an assistant.

Stage 2: supervised fine-tuning (SFT)

To turn the base model into an assistant, we need to teach it the Q&A format.

Researchers hire human experts to write thousands of high-quality prompt-and-response pairs.

- Prompt: "How do I bake a cake?"

- Response: "Here is a simple recipe for a vanilla cake: 1. Preheat oven..."

We continue training the model, but on this high-quality dataset.

- Data: 10,000 to 100,000 human-written examples.

- Compute: A few GPUs running for a few days.

- Output: An "Instruct Model".

The model now knows how to follow instructions and act like an assistant.
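As a rough sketch of what one SFT training example can look like, the snippet below joins a prompt and a response into a single token sequence and marks which positions contribute to the loss. Several things here are assumptions, not details from this chapter: the `User:` / `Assistant:` template, the whitespace `ToyTokenizer`, and the choice to compute loss only on response tokens (a common practice, but not the only one). The value `-100` mirrors the default `ignore_index` of PyTorch's cross-entropy loss.

```python
IGNORE_INDEX = -100  # assumed sentinel: positions with this label are skipped by the loss

class ToyTokenizer:
    """Hypothetical whitespace tokenizer that assigns ids on first sight."""

    def __init__(self):
        self.vocab = {}

    def __call__(self, text):
        return [self.vocab.setdefault(word, len(self.vocab)) for word in text.split()]

def build_sft_example(prompt, response, tokenize):
    # Hypothetical chat template; real models each define their own.
    prompt_ids = tokenize(f"User: {prompt}\nAssistant:")
    response_ids = tokenize(response)
    input_ids = prompt_ids + response_ids
    # The model sees the whole sequence, but only response tokens are training targets.
    labels = [IGNORE_INDEX] * len(prompt_ids) + response_ids
    return input_ids, labels

tokenize = ToyTokenizer()
input_ids, labels = build_sft_example(
    "How do I bake a cake?",
    "Here is a simple recipe for a vanilla cake.",
    tokenize,
)
for token_id, label in zip(input_ids, labels):
    print(token_id, "masked" if label == IGNORE_INDEX else "target")
```

The training objective is still next-token prediction. What changes is the data and which tokens are scored.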

Stage 3: alignment and preference tuning

Even after SFT, the model might be toxic, helpful to hackers, or overly verbose. We need to align it with human values (helpful, honest, harmless).

This is often done using RLHF (Reinforcement Learning from Human Feedback) or newer techniques like DPO (Direct Preference Optimization). InstructGPT is the classic RLHF example.[3]

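Human annotators are shown two answers the model generated for the same prompt and pick the better one. In RLHF, these comparisons train a reward model, and the LLM is then optimized with reinforcement learning to produce answers the reward model scores highly. DPO skips the separate reward model and trains directly on the preference pairs, raising the probability of the "winning" answer relative to the losing one.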

- Data: Human preference rankings.

- Compute: Moderate, well below the pre-training budget.

- Output: A "chat model".
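For a feel of how a preference pair becomes a training signal, here is a small sketch of the DPO loss for a single pair. The log-probability values are invented for illustration, and `beta=0.1` is an assumed setting. In practice each log-probability is the sum of token log-probabilities of a full answer under either the model being trained (the policy) or a frozen reference copy.

```python
import math

def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    # How much more (or less) likely each answer is under the policy than under the reference.
    chosen_margin = policy_chosen_logp - ref_chosen_logp
    rejected_margin = policy_rejected_logp - ref_rejected_logp
    x = beta * (chosen_margin - rejected_margin)
    # -log(sigmoid(x)) written as a numerically stable softplus(-x).
    return max(-x, 0.0) + math.log1p(math.exp(-abs(x)))

# Policy identical to the reference: no preference learned yet.
neutral = dpo_loss(-12.0, -11.0, -12.0, -11.0)

# Policy has shifted probability toward the chosen answer: lower loss.
improved = dpo_loss(-10.0, -13.0, -12.0, -11.0)

# Policy has shifted toward the rejected answer: higher loss.
worse = dpo_loss(-14.0, -9.0, -12.0, -11.0)

print(f"neutral={neutral:.3f} improved={improved:.3f} worse={worse:.3f}")
```

Minimizing this loss pushes the model to favor the winning answer more strongly than the reference model does, without training a separate reward model.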

Stage 4: deployment and inference

Once training is done, the weights are frozen. The model is deployed to servers so users can access it. This phase is called inference.

Unlike training, inference doesn't update the weights. But running a large model for millions of users is an extreme engineering challenge.

Inference engineers spend their time optimizing:

- Latency: Time to first token (TTFT).

- Throughput: Tokens per second.

- Memory: LLMs consume massive amounts of VRAM, especially for the KV cache, which stores the attention keys and values of earlier tokens so the model doesn't have to recompute them from scratch on every step.
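The memory pressure from the KV cache can be estimated with simple arithmetic. The sketch below assumes a standard Transformer that stores one key and one value vector per layer, per KV head, per token. The model shape used here (32 layers, head dimension 128, 16-bit values) is a hypothetical configuration chosen for round numbers, not a specific model.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim,
                   seq_len, batch_size, bytes_per_value=2):
    # The leading 2 accounts for storing both keys and values.
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * batch_size * bytes_per_value)

GIB = 1024 ** 3

# Hypothetical model: 32 layers, 32 KV heads of size 128, 16-bit cache.
full_heads = kv_cache_bytes(num_layers=32, num_kv_heads=32, head_dim=128,
                            seq_len=4096, batch_size=1)

# Same model with 8 KV heads, as in grouped-query attention (GQA).
grouped = kv_cache_bytes(num_layers=32, num_kv_heads=8, head_dim=128,
                         seq_len=4096, batch_size=1)

print(f"32 KV heads: {full_heads / GIB:.2f} GiB per 4096-token request")
print(f" 8 KV heads: {grouped / GIB:.2f} GiB per 4096-token request")
```

Under these assumptions a single 4096-token request needs 2 GiB of cache, or 0.5 GiB with 8 KV heads. The cost scales linearly with context length and with the number of concurrent requests, which is why serving many users is memory-bound.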

The LeetLLM Curriculum Map

Now that you know the lifecycle, here is how the rest of the LeetLLM roadmap maps to the work:

- Computing Foundations - Python, NumPy, data structures, and enough engineering muscle to work through examples.

- Math & Statistics - probability, uncertainty, sampling, hypothesis tests, and pass@k.

- Preparation & Prerequisites - vectors, neural networks, backpropagation, softmax, Transformers, next-token prediction, model history, prompting, and this lifecycle map.

- Core LLM Foundations - tokenization, embeddings, perplexity, tool calls, chunking, evaluation limits, and chat templates.

- ML Algorithms & Evaluation - classical ML, leakage, retrieval, decoding, experiments, PyTorch loops, and data pipelines.

- Applied LLM Engineering - RAG, agents, safety, observability, cost, deployment, caching, versioning, and support-bot design.

- Portfolio Capstones - document QA, evaluation dashboards, fine-tuned classifiers, and production agents.

- Transformer Deep Dives - embeddings, attention, positional encoding, normalization, and decoding mechanics.

- Advanced Training & Adaptation - scaling laws, distributed training, LoRA, RLHF, DPO, reward training, distillation, model merging, prompt optimization, and recursive models.

- Advanced Agents & Retrieval - vector indexes, advanced RAG, GraphRAG, RBAC, constrained generation, agent architectures, guardrails, code agents, memory, human review, failure handling, and orchestration.

- Inference & Production Scale - TTFT, TPS, KV cache, GQA, paged attention, FlashAttention, batching, quantization, speculative decoding, long context, MoE, Mamba, reasoning models, GPU serving, and online evals.

- System Design Capstones - end-to-end moderation, code completion, multi-tenant serving, search, multimodal systems, image generation, voice agents, and reasoning-agent design.

Next, start Core LLM Foundations with The Bitter Lesson. That chapter explains why scalable learning and search beat hand-coded AI shortcuts.

Evaluation Rubric

1. Differentiates between Pre-training (unsupervised/self-supervised) and Fine-tuning (supervised)

2. Explains why a base pre-trained model isn't useful as a chatbot without SFT and RLHF

3. Identifies the core challenges in the deployment (inference) phase

Common Pitfalls

- Thinking RLHF teaches the model new facts (it doesn't; it just teaches it *how* to answer)

- Confusing training (updating weights) with inference (running the model to get a response)


Key Concepts Tested

- Pre-training: learning language from the raw internet

- Supervised Fine-Tuning (SFT): teaching the model to be an assistant

- Alignment (RLHF): teaching the model to be safe and helpful

- Deployment and Inference: the mechanics of running the model for users

References