📝EasyNLP Fundamentals

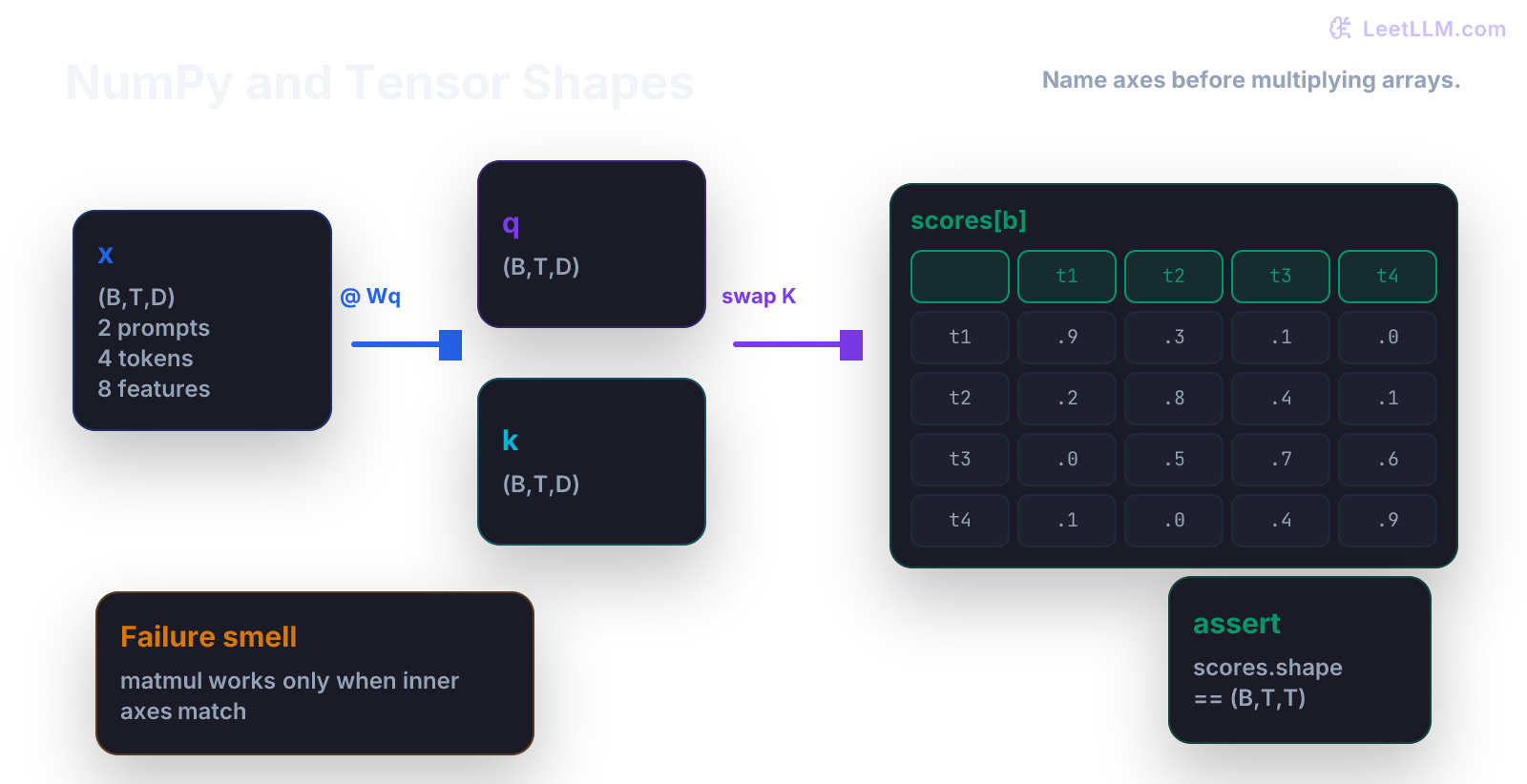

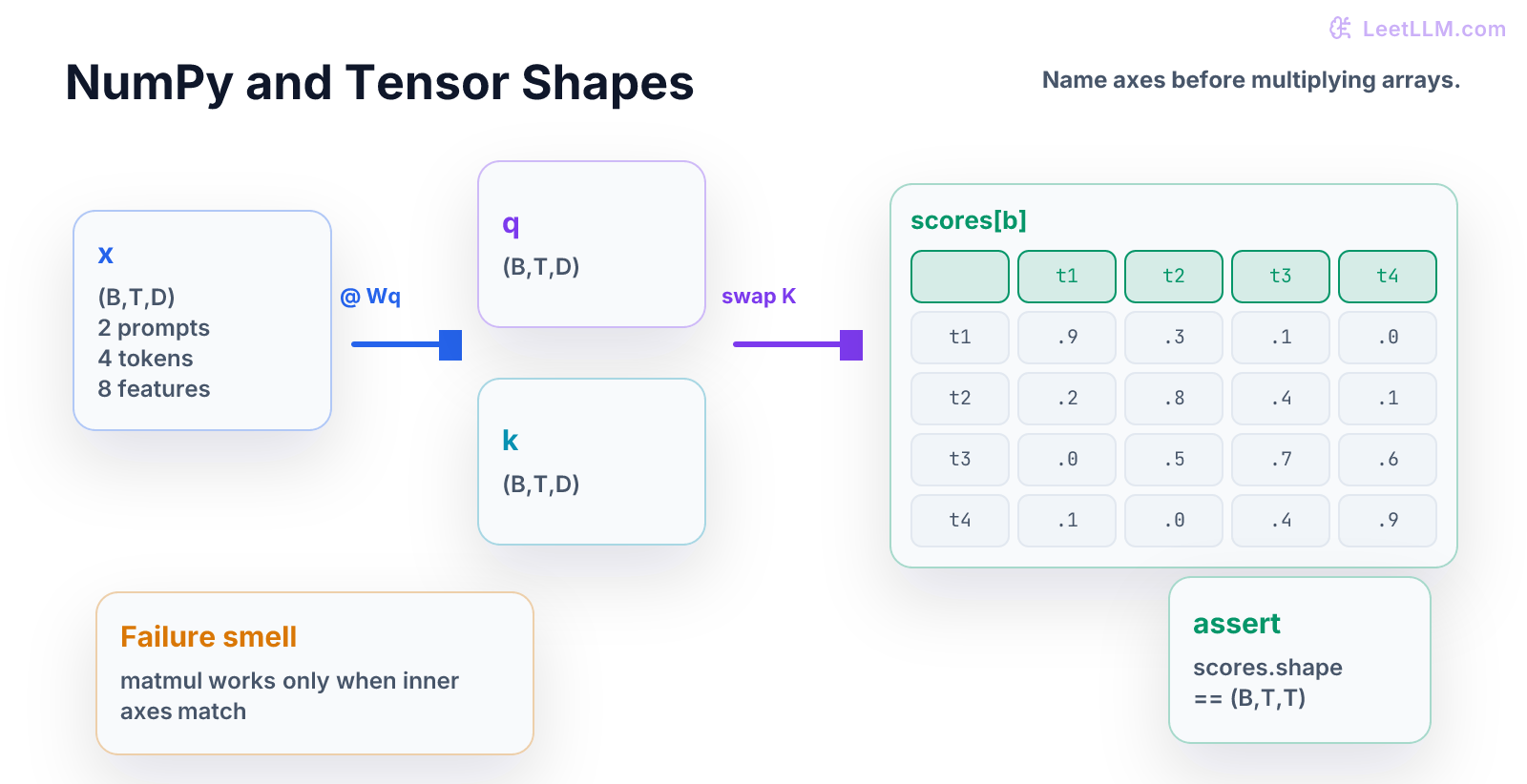

NumPy and Tensor Shapes

A textbook-style NumPy chapter that teaches B/T/D axes, indexing, matrix multiplication, attention score shapes, and shape assertions.


NumPy teaches you to see data as shapes.

Python gave you rows and functions. NumPy adds a new rule: every value has axes, and those axes must mean something.

In LLM work, many bugs look like mysterious math bugs. Often they aren't mysterious. Batch, token, and hidden dimensions got swapped, squeezed, or broadcast in the wrong place.

What You Learn

This chapter teaches the shape habit. By the end, a beginner should be able to look at an array, name each axis, predict the output shape of a small operation, and write assertions that catch wrong shapes before model code runs.[1][2][3]

(B, T, D) becomes query and key tensors, then K flips so attention scores end as (B, T, T).

Step Map

| Step | Question | What you should be able to do |
|---|---|---|
| 1 | What are the axes? | Name B, T, and D in plain English. |
| 2 | What does one slice mean? | Point to one batch item, token, or feature vector. |
| 3 | What operation happens? | Predict shape after indexing or matrix multiply. |
| 4 | What can fail silently? | Spot wrong broadcasting and swapped axes. |
| 5 | What ships? | Add shape assertions around model boundaries. |

Analogy: a tensor is like a set of labeled shelves. If you remove the labels, the boxes may still stack, but nobody knows what the stack means.

Tiny Story

Imagine two short prompts:

| Batch item | Tokens |
|---|---|
| 0 | ["hello", "ai", "world"] |
| 1 | ["debug", "the", "shape"] |

Each token becomes four numbers.

That means the hidden states have shape:

```text
(B, T, D) = (2, 3, 4)
```

Read it slowly:

| Axis | Meaning | Size |
|---|---|---|
| B | batch, how many prompts | 2 |
| T | tokens per prompt | 3 |
| D | numbers per token | 4 |

The shape isn't decoration. It is the contract.

Vocabulary

- array: grid of numbers.

- axis: one direction in an array.

- shape: tuple of axis sizes, such as (2, 3, 4).

- scalar: one number with shape ().

- vector: one axis, such as (4,).

- matrix: two axes, such as (3, 4).

- tensor: array with any number of axes.

- broadcasting: NumPy rule that stretches compatible axes.

Keep the words concrete. If D means hidden size, every vector on that axis should have D numbers.
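The vocabulary can be checked directly in NumPy. This is a minimal sketch, not part of the chapter's code lab; the variable names are illustrative assumptions chosen to match the terms in the list.

```python
import numpy as np

# Illustrative names; each array matches one vocabulary entry.
scalar = np.array(7.0)          # one number, no axes
vector = np.zeros(4)            # one axis
matrix = np.zeros((3, 4))       # two axes
tensor = np.zeros((2, 3, 4))    # three axes, read as (B, T, D)

for name, arr in [("scalar", scalar), ("vector", vector),
                  ("matrix", matrix), ("tensor", tensor)]:
    print(name, "ndim:", arr.ndim, "shape:", arr.shape)

# Broadcasting: the (4,) vector is stretched across the B and T axes.
shifted = tensor + vector
assert shifted.shape == (2, 3, 4)
```

Here `ndim` counts the axes and `shape` lists their sizes, so the two always agree: `len(arr.shape) == arr.ndim`.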

Worked Example: Indexing

Start with shape (2, 3, 4).

| Expression | Meaning | Result shape |
|---|---|---|
| x[0] | first prompt | (3, 4) |
| x[0, 1] | second token in first prompt | (4,) |
| x[:, 1] | second token from every prompt | (2, 4) |
| x[:, :, 0] | first feature from every token | (2, 3) |

Indexing is reading from the shelf.

When you fix one coordinate with an integer, you remove that axis. A slice such as 0:1 selects the same data but keeps the axis with size 1.
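A minimal sketch of how integer indexing and slicing differ, using the same (2, 3, 4) array as the table. The variable names `first_prompt` and `first_prompt_batched` are assumptions made for illustration.

```python
import numpy as np

B, T, D = 2, 3, 4
x = np.arange(B * T * D).reshape(B, T, D)

# An integer index removes the axis it touches.
first_prompt = x[0]
assert first_prompt.shape == (T, D)

# A length-one slice reads the same data but keeps the axis.
first_prompt_batched = x[0:1]
assert first_prompt_batched.shape == (1, T, D)

# The same contrast on the token axis.
assert x[:, 1].shape == (B, D)
assert x[:, 1:2].shape == (B, 1, D)

# Both forms hold the same values.
assert np.array_equal(first_prompt, first_prompt_batched[0])
```

Keeping the axis with a slice is often the safer choice when later code expects a batch dimension.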

Code Lab

Put this in numpy_shapes_demo.py:

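```python
import numpy as np

# Axis sizes: batch, tokens per prompt, numbers per token.
B, T, D = 2, 3, 4
x = np.arange(B * T * D).reshape(B, T, D)

# Print the shape after each indexing step.
print("x", x.shape)
print("first_prompt", x[0].shape)
print("one_token", x[0, 1].shape)
print("all_second_tokens", x[:, 1].shape)

# Fail early if the shapes are not what the names claim.
assert x.shape == (2, 3, 4)
assert x[0, 1].shape == (4,)
```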

Expected output:

```text
x (2, 3, 4)
first_prompt (3, 4)
one_token (4,)
all_second_tokens (2, 4)
```

The values inside x aren't important yet. The shape changes are the lesson.

Second Worked Example: Matrix Multiply

Now transform each token vector from four numbers to five numbers.

Use a weight matrix:

| Object | Shape | Meaning |
|---|---|---|
| x | (2, 3, 4) | two prompts, three tokens, four features |
| W | (4, 5) | map four features to five features |
| y = x @ W | (2, 3, 5) | same prompts and tokens, new feature size |

The last axis of x must match the first axis of W.

Shape rule:

```text
(2, 3, 4) @ (4, 5) -> (2, 3, 5)
```

The 4 disappears because it is the matched dimension. The 5 appears because it is the new feature size.
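The matched-dimension rule can be seen from both sides: a multiply that lines up, and one that does not. This is a hedged sketch; the flag `mismatch_raised` is an illustrative name, and the mismatched case simply reuses the transposed weight matrix to force an error.

```python
import numpy as np

B, T, D, H = 2, 3, 4, 5
x = np.ones((B, T, D))
W = np.ones((D, H))

# Matched inner dimension: (2, 3, 4) @ (4, 5) -> (2, 3, 5)
y = x @ W
assert y.shape == (B, T, H)

# Each output entry sums D products of ones, so every value equals D.
assert np.all(y == D)

# Mismatched inner dimension: (2, 3, 4) @ (5, 4) cannot line up.
mismatch_raised = False
try:
    x @ W.T
except ValueError:
    mismatch_raised = True
assert mismatch_raised
```

A loud `ValueError` is the good case. The dangerous case is when sizes happen to match but the axes mean different things.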

Run The Projection

```python
import numpy as np

# D is the input feature size, H is the output feature size.
B, T, D, H = 2, 3, 4, 5
x = np.ones((B, T, D))
W = np.ones((D, H))
y = x @ W  # (B, T, D) @ (D, H) -> (B, T, H)

print("y", y.shape)
assert y.shape == (B, T, H)
```

Expected output:

```text
y (2, 3, 5)
```

That one rule appears everywhere in neural networks.

Third Worked Example: Attention Scores

Attention compares tokens to tokens.

For one batch item with three tokens, each token needs a score against three tokens. That gives a T x T table.

For a full batch, the score shape should be:

```text
(B, T, T)
```

Shape Checkpoint

Build the smallest version:

```python
import numpy as np

B, T, D = 2, 3, 4
rng = np.random.default_rng(7)  # fixed seed so runs are repeatable
x = rng.normal(size=(B, T, D))
wq = rng.normal(size=(D, D))
wk = rng.normal(size=(D, D))

q = x @ wq  # (B, T, D)
k = x @ wk  # (B, T, D)
# Swap the last two axes of k: (B, T, D) @ (B, D, T) -> (B, T, T)
scores = q @ np.swapaxes(k, -1, -2)

print("q", q.shape)
print("k", k.shape)
print("scores", scores.shape)
assert scores.shape == (B, T, T)
```

Expected output:

```text
q (2, 3, 4)
k (2, 3, 4)
scores (2, 3, 3)
```

Read The Output

This is the first shape checkpoint for Transformer attention. You don't need full attention yet. You only need to know why token-to-token scores have two token axes.
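One way to see why there are two token axes is to rebuild the score table one entry at a time. The sketch below is an illustration under stated assumptions: it draws `q` and `k` directly from a random generator instead of projecting hidden states, and the array name `manual` is hypothetical.

```python
import numpy as np

B, T, D = 2, 3, 4
rng = np.random.default_rng(7)
q = rng.normal(size=(B, T, D))
k = rng.normal(size=(B, T, D))

# Batched version: (B, T, D) @ (B, D, T) -> (B, T, T)
scores = q @ np.swapaxes(k, -1, -2)

# Loop version: one dot product per (query token, key token) pair.
manual = np.zeros((B, T, T))
for b in range(B):
    for i in range(T):        # query token index
        for j in range(T):    # key token index
            manual[b, i, j] = np.dot(q[b, i], k[b, j])

assert scores.shape == (B, T, T)
assert np.allclose(scores, manual)
```

The first T indexes the token asking the question and the second T indexes the token being compared against. The D axis disappears because each dot product sums over it.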

Common Trap

The common trap is trusting NumPy because it returned an array.

NumPy can return a legal array that means the wrong thing.

Example:

| Mistake | Why it is dangerous |
|---|---|
| using (T, B, D) when code expects (B, T, D) | batch and token axes swap silently |
| broadcasting a (T,) mask over the wrong axis | every prompt gets the wrong mask |
| calling reshape without naming axes | values move into a shape that looks valid |
| dropping a batch axis with x[0] | code works for one prompt and breaks later |

The fix isn't more confidence. The fix is shape assertions with names.
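A shape assertion with names might look like the sketch below. The helper `assert_shape` is hypothetical, not a NumPy function; it only wraps a tuple comparison so that the failure message carries the axis names.

```python
import numpy as np

def assert_shape(array, expected, names):
    """Hypothetical helper: fail with axis names when a shape is wrong."""
    if array.shape != tuple(expected):
        raise AssertionError(
            f"expected {names}={tuple(expected)}, got {array.shape}"
        )

B, T, D = 2, 3, 4
x = np.zeros((B, T, D))
assert_shape(x, (B, T, D), "(B, T, D)")  # passes silently

# Swapped batch and token axes: still a legal array, but the contract is broken.
swapped = np.swapaxes(x, 0, 1)  # shape (T, B, D)
message = None
try:
    assert_shape(swapped, (B, T, D), "(B, T, D)")
except AssertionError as err:
    message = str(err)
print(message)
```

Note that a plain shape check cannot catch a swap when B and T happen to be equal, so tiny tests are more useful when every axis has a different size.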

Mini Test

Add tests like these:

```python
import numpy as np


def test_token_projection_shape():
    B, T, D, H = 2, 3, 4, 5
    x = np.ones((B, T, D))
    W = np.ones((D, H))
    y = x @ W
    assert y.shape == (B, T, H)


def test_attention_scores_are_token_by_token():
    B, T, D = 2, 3, 4
    q = np.ones((B, T, D))
    k = np.ones((B, T, D))
    scores = q @ np.swapaxes(k, -1, -2)
    assert scores.shape == (B, T, T)
```

These tests don't prove a model is good. They prove the arrays still mean what their names claim.

Practice

- Change T from 3 to 6.

- Predict every printed shape before running code.

- Change H from 5 to 2.

- Explain why y changes only on the last axis.

- Remove np.swapaxes and read the error.

- Write one sentence that starts: "The scores shape is (B, T, T) because..."

Production Check

At every model boundary, write down:

- input shape

- axis names

- expected output shape

- batch behavior

- sequence behavior

- feature or hidden size

- shape assertion

- example with tiny numbers

For example:

Input hidden states are (B, T, D). Projection matrix is (D, H). Output is (B, T, H). Test uses B=2, T=3, D=4, H=5.

That note prevents the most common beginner mistake: treating arrays as anonymous grids.
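The same note can live in code at the boundary itself. The function `project_tokens` below is a hypothetical example, a sketch of one way to attach the checklist to a projection step, not a prescribed pattern.

```python
import numpy as np

def project_tokens(x, W):
    """Hypothetical boundary function: (B, T, D) @ (D, H) -> (B, T, H)."""
    assert x.ndim == 3, f"x must be (B, T, D), got {x.shape}"
    assert W.ndim == 2, f"W must be (D, H), got {W.shape}"
    B, T, D = x.shape
    assert W.shape[0] == D, f"W first axis {W.shape[0]} must equal D={D}"
    H = W.shape[1]
    y = x @ W
    assert y.shape == (B, T, H)
    return y

# Tiny numbers from the note: B=2, T=3, D=4, H=5.
y = project_tokens(np.ones((2, 3, 4)), np.ones((4, 5)))
print(y.shape)

# A single prompt with the batch axis dropped is rejected at the boundary.
rejected = False
try:
    project_tokens(np.ones((3, 4)), np.ones((4, 5)))
except AssertionError:
    rejected = True
assert rejected
```

Without the `ndim` check, the (3, 4) input would multiply cleanly and return a (3, 5) array, which is exactly the kind of legal but wrong result the checklist is meant to stop.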

Next, continue to Data Structures for AI. NumPy taught you to keep shape meaning attached to arrays. Data structures teach you to keep lookup meaning attached to storage.

Evaluation Rubric

1. Names tensor axes in plain English before doing array operations

2. Predicts and verifies shapes for indexing, projection, and attention-score examples

3. Adds shape assertions that catch swapped axes, wrong broadcasting, and dropped batch dimensions

Common Pitfalls

- Most tensor bugs are silent until a later layer sees the wrong axis. Broadcasting can hide a bug by producing a legal but wrong shape.

- A legal broadcast can still be wrong. Check meaning, not only whether NumPy returned an array.

- Dropping a batch axis can make a one-example demo pass while production batches break.
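The broadcasting pitfall is easiest to see when two axes share a size. The sketch below sets T equal to D on purpose, which is an assumption made for the demonstration; the names `token_mask`, `wrong`, and `right` are illustrative.

```python
import numpy as np

B, T, D = 2, 4, 4                      # T == D on purpose, so the bug stays silent
x = np.ones((B, T, D))
token_mask = np.array([1, 1, 0, 0])    # intent: keep tokens 0-1, zero out tokens 2-3

# Wrong: a (T,) array aligns with the LAST axis, so features get masked.
wrong = x * token_mask

# Right: place the mask on the token axis explicitly, as shape (1, T, 1).
right = x * token_mask[None, :, None]

assert wrong.shape == right.shape == (B, T, D)   # the shapes agree
assert not np.array_equal(wrong, right)          # the meanings differ
assert np.all(right[:, 2:, :] == 0)              # tokens 2-3 are fully zeroed
assert np.all(wrong[:, 2:, :2] == 1)             # tokens 2-3 survive in the wrong version
```

A shape assertion on the result would pass in both cases. Checking the mask's own shape before the multiply, for example requiring (1, T, 1), is what catches this one.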


Key Concepts Tested

- ndarrays

- broadcasting

- matrix multiplication

- batch dimensions

- random seeds

- shape assertions

References