
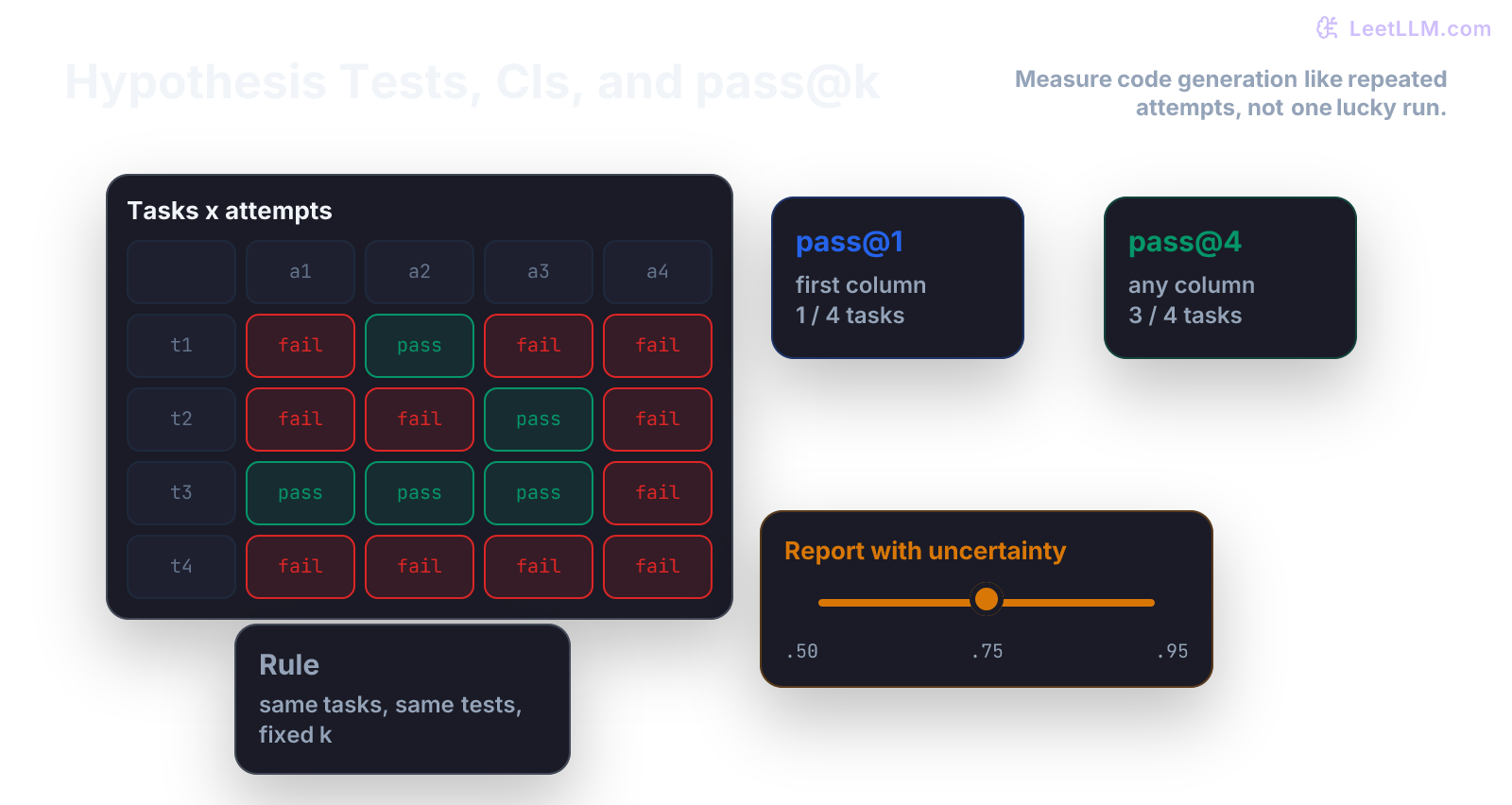

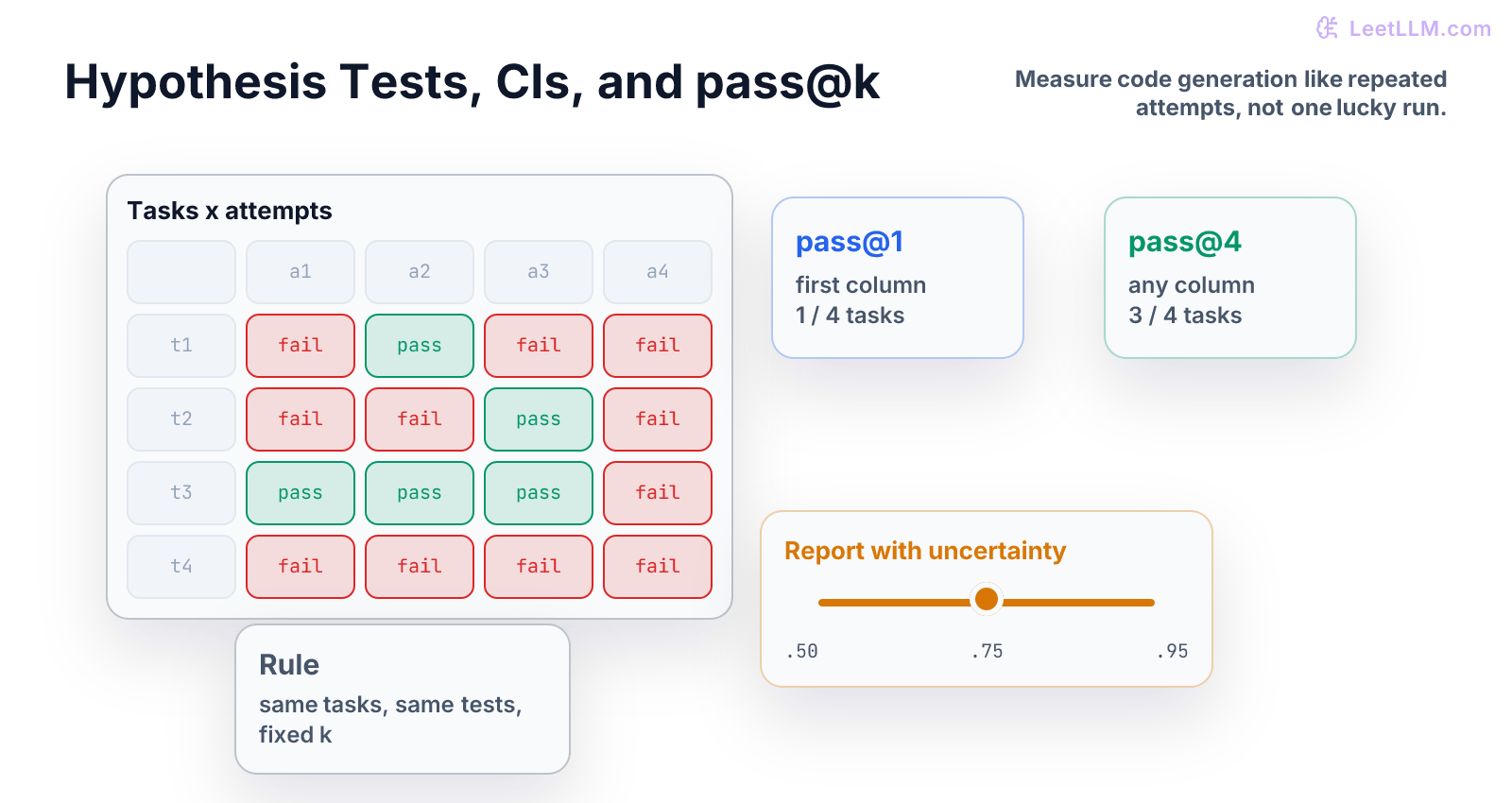

Hypothesis Tests and pass@k

A step-by-step chapter on noisy model comparisons, bootstrap intervals, and pass@k for code-generation benchmarks.


Hypothesis tests ask a practical question:

Did something real happen, or did the sample just wobble?

In ML and LLM work, you will compare models constantly. Model B scored higher than Model A. A new prompt got more correct answers. A coding model passed more unit tests. The hard part is deciding whether the difference is evidence or noise.

What You Learn

This chapter teaches the beginner version: define the default claim, measure uncertainty, and report pass@k without hiding the evaluation protocol.[1][2][3]

pass@k asks whether any attempt in the row succeeds.

Step Map

| Step | Question | What you should be able to do |
|---|---|---|
| 1 | What is the default claim? | State the null hypothesis. |
| 2 | What metric are we measuring? | Define pass rate or pass@k. |
| 3 | How noisy is the metric? | Bootstrap an interval. |
| 4 | What does k mean? | Explain attempts per task. |
| 5 | What should be reported? | Publish n, k, estimator, interval, and protocol. |

You already learned probability, intervals, and sampling. Now we use them to compare model results.

Tiny Story

Suppose two code models are evaluated on programming tasks.

Each task can pass or fail.

| Task | Model A | Model B |
|---|---|---|
| 1 | pass | pass |
| 2 | fail | pass |
| 3 | pass | pass |
| 4 | fail | fail |
| 5 | pass | pass |

Model A passed 3 out of 5. Model B passed 4 out of 5.

Did Model B really improve, or did we just see a lucky five-task sample?

A hypothesis test starts by naming the boring default:

Null hypothesis: Model B isn't truly better than Model A.

The test asks how surprising the observed result would be if that default claim were true.
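As a rough illustration of "how surprising," here is a minimal sketch of an exact one-sided sign test on the five tasks above. The sign test is a choice made for this example; the chapter does not name a specific test. The function name `sign_test_p_value` is hypothetical. It looks only at tasks where the two models disagree and asks how often Model B would win at least that many of them if each disagreement were a fair coin flip.

```python
from math import comb

# Outcomes from the Tiny Story table: 1 = pass, 0 = fail.
model_a = [1, 0, 1, 0, 1]
model_b = [1, 1, 1, 0, 1]


def sign_test_p_value(a, b):
    """One-sided exact sign test (an illustrative choice, not the chapter's method).

    Returns P(B wins at least as many disagreeing tasks as observed)
    if each disagreement were a 50/50 coin flip under the null.
    """
    b_wins = sum(1 for x, y in zip(a, b) if y > x)
    a_wins = sum(1 for x, y in zip(a, b) if x > y)
    disagreements = a_wins + b_wins
    if disagreements == 0:
        return 1.0  # no disagreement means no evidence either way
    tail = sum(comb(disagreements, i) for i in range(b_wins, disagreements + 1))
    return tail / 2 ** disagreements


print(sign_test_p_value(model_a, model_b))  # 0.5
```

The models disagree on only one task, so the p-value is 0.5: a coin flip would produce this "win" half the time. Five tasks can't separate evidence from noise here.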

Vocabulary

- null hypothesis: the default claim, often "no real difference."

- alternative hypothesis: the claim you need evidence for, such as "Model B is better."

- p-value: the probability of seeing a result at least as extreme as the one observed, assuming the null hypothesis is true. It isn't the probability that the null is true.

- confidence interval: a range that shows uncertainty around an estimate.

- bootstrap: resampling observed data to estimate uncertainty.

- power: chance of detecting a real effect when it exists.

- multiple testing: extra false-win risk when you test many metrics or slices.

- pass@k: fraction of tasks where at least one of k attempts passes.

Do not rush to formulas. The real skill is defining the question correctly.

Worked Example: Bootstrap A Pass Rate

Start with one model and one attempt per task.

```text
passes = [1, 0, 1, 1, 0, 1]
```

That means 4 passes out of 6 tasks:

$$\text{pass rate} = \frac{4}{6} \approx 0.667$$

A bootstrap interval asks:

If these six tasks are the sample we have, how much could the pass rate move if we sampled similar tasks again?

The bootstrap does this by resampling the observed tasks with replacement.

| Bootstrap sample | Values | Pass rate |
|---|---|---|
| sample 1 | [1, 1, 1, 0, 1, 1] | 5 / 6 |
| sample 2 | [0, 0, 1, 1, 1, 0] | 3 / 6 |

Each sample gives a pass rate. The spread of those pass rates gives an uncertainty interval.

Code Lab

Put this in hypothesis_passk_demo.py:

```python
import random


def bootstrap_mean(values, rounds=1000, seed=7):
    rng = random.Random(seed)  # fixed seed so the demo is repeatable
    means = []

    for _ in range(rounds):
        # Resample the observed tasks with replacement, same size as the original.
        sample = [rng.choice(values) for _ in values]
        means.append(sum(sample) / len(sample))

    means.sort()
    # With rounds=1000, positions 25 and 975 approximate the 2.5th and 97.5th percentiles.
    return means[25], means[975]


passes = [1, 0, 1, 1, 0, 1]
low, high = bootstrap_mean(passes)

print("pass_rate", round(sum(passes) / len(passes), 3))
print("interval", round(low, 3), round(high, 3))
```

Read this as a teaching tool, not a production-grade statistics library:

- `values` are task outcomes.

- `rng.choice(values)` samples one observed task with replacement.

- `rounds=1000` builds many possible resampled worlds.

- `means[25]` and `means[975]` approximate a 95 percent interval.

The interval will be wide because six tasks is tiny.

That is the lesson.
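To see how much the task count matters, here is a minimal sketch that bootstraps the same pass rate at two sample sizes. The helper `bootstrap_interval` and the 600-task list are assumptions for illustration: the larger list is just the six outcomes repeated 100 times, which real benchmarks would never be. The percentile positions are computed from `rounds` rather than hard-coded.

```python
import random


def bootstrap_interval(values, rounds=1000, seed=7, level=0.95):
    """Percentile bootstrap interval for the mean of 0/1 task outcomes."""
    rng = random.Random(seed)
    means = sorted(
        sum(rng.choices(values, k=len(values))) / len(values)
        for _ in range(rounds)
    )
    tail = (1 - level) / 2
    low_index = int(tail * rounds)
    high_index = min(rounds - 1, int((1 - tail) * rounds))
    return means[low_index], means[high_index]


small = [1, 0, 1, 1, 0, 1]  # the six tasks from the worked example
large = small * 100         # hypothetical: 600 tasks with the same pass rate

for name, data in [("6 tasks", small), ("600 tasks", large)]:
    low, high = bootstrap_interval(data)
    print(name, round(low, 3), round(high, 3), "width", round(high - low, 3))
```

The pass rate is identical in both lists, but the interval for 600 tasks should come out much narrower. More tasks, not more resampling rounds, is what shrinks the uncertainty.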

pass@k, Slowly

For coding benchmarks, a model may get multiple attempts per task.

Here are three tasks with three attempts each:

| Task | Attempt 1 | Attempt 2 | Attempt 3 | pass@1 | pass@3 |
|---|---|---|---|---|---|
| 1 | pass | fail | fail | 1 | 1 |
| 2 | fail | fail | pass | 0 | 1 |
| 3 | fail | fail | fail | 0 | 0 |

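pass@1 checks only the first attempt:

$$\text{pass@1} = \frac{1 + 0 + 0}{3} = \frac{1}{3}$$

pass@3 asks whether any of the first three attempts passed:

$$\text{pass@3} = \frac{1 + 1 + 0}{3} = \frac{2}{3}$$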

So pass@k isn't ordinary accuracy. It changes the question:

If the model gets k tries, how often does at least one try succeed?
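The table can be checked with a few lines of code. This is a minimal sketch that counts directly from the observed attempts; the name `first_k_pass_rate` is hypothetical, and this direct count is not the sampled estimator used by larger benchmarks.

```python
# Attempts from the table above: True = pass, False = fail.
attempts = {
    1: [True, False, False],
    2: [False, False, True],
    3: [False, False, False],
}


def first_k_pass_rate(rows, k):
    """Fraction of tasks where at least one of the first k attempts passed."""
    solved = sum(any(row[:k]) for row in rows.values())
    return solved / len(rows)


print("pass@1", round(first_k_pass_rate(attempts, 1), 3))
print("pass@3", round(first_k_pass_rate(attempts, 3), 3))
```

Task 2 is the one that changes the score: it fails on the first attempt but passes on the third, so it counts for pass@3 and not for pass@1.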

pass@k Estimator

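Many code benchmarks sample n completions per task and count c correct completions. The Codex paper uses this estimator for pass@k:[2]

$$\text{pass@}k = \mathbb{E}_{\text{tasks}}\left[1 - \frac{\binom{n-c}{k}}{\binom{n}{k}}\right]$$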

Plain English:

One minus the chance that all k selected attempts came from the incorrect completions.

Beginner code:

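```python
from math import comb


def pass_at_k(n, c, k):
    # Fewer than k incorrect completions: any k picks must include a correct one.
    if n - c < k:
        return 1.0
    # One minus the chance that all k picks come from the n - c incorrect completions.
    return 1 - comb(n - c, k) / comb(n, k)


print(round(pass_at_k(n=10, c=2, k=3), 3))
```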

This reports the chance that at least one of 3 selected attempts passes when 2 of 10 sampled attempts were correct. Here that is 1 - 56/120, or about 0.533.
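The estimator above scores one task. A benchmark score averages it over tasks. Here is a minimal sketch of that averaging step, assuming hypothetical per-task counts; `task_counts` and `benchmark_pass_at_k` are illustrative names, and the numbers are invented for the example rather than taken from any real benchmark.

```python
from math import comb


def pass_at_k(n, c, k):
    """Per-task estimate: 1 - C(n - c, k) / C(n, k)."""
    if n - c < k:
        return 1.0
    return 1 - comb(n - c, k) / comb(n, k)


def benchmark_pass_at_k(counts, k):
    """Average the per-task estimates over all tasks."""
    return sum(pass_at_k(n, c, k) for n, c in counts) / len(counts)


# Hypothetical (n, c) pairs: samples drawn and correct samples per task.
task_counts = [(10, 2), (10, 0), (10, 10)]

print(round(benchmark_pass_at_k(task_counts, k=3), 3))
```

Averaging per task keeps one easy task with many correct samples from hiding a task where nothing passed.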

Common Trap

The common trap is reporting a bigger number without explaining the bigger budget.

Bad report:

The model gets 67 percent.

Better report:

The model gets pass@3 of about 67 percent (2 of 3 tasks) with 3 attempts per task. pass@1 is about 33 percent (1 of 3 tasks). The sample is tiny, so this is only a teaching example.

In real benchmarks, always say:

- number of tasks

- number of samples per task

- k

- sampling temperature or decoding policy

- test quality

- confidence interval or bootstrap interval

Mini Test

Add these guards:

```python
def test_pass_at_k_increases_with_k():
    assert pass_at_k(10, 2, 1) <= pass_at_k(10, 2, 3)


def test_pass_at_k_is_zero_when_no_completion_passes():
    assert pass_at_k(10, 0, 3) == 0
```

These tests protect interpretation. More attempts shouldn't make pass@k worse, and zero correct attempts should never produce a positive score.

Practice

- Compute pass@1 and pass@3 from the table by hand.

- Run `pass_at_k(10, 2, 3)`.

- Change `c` from `2` to `5` and predict the direction.

- Bootstrap task-level pass@3 values `[1, 1, 0]`.

- Write one sentence that starts: "pass@k isn't ordinary accuracy because..."

Sanity Check

Before trusting a benchmark table, ask two questions:

| Question | Why it matters |
|---|---|
| How many tasks were sampled? | Small task sets create wide intervals. |
| How many attempts did each model get? | pass@k changes when k changes. |

Production Check

A trustworthy eval report should publish:

- task count

- samples per task

- k

- estimator

- confidence interval

- decoding settings

- public, hidden, or generated tests

- whether tasks were inspected after seeing model outputs
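The checklist above can be turned into a small guard that flags an incomplete report before it ships. This is a minimal sketch: the field names in `REQUIRED_FIELDS` and the helper `missing_fields` are hypothetical and not part of any standard. The draft report uses only the three-task teaching example.

```python
# Hypothetical field names mirroring the checklist above.
REQUIRED_FIELDS = [
    "task_count",
    "samples_per_task",
    "k",
    "estimator",
    "confidence_interval",
    "decoding_settings",
    "test_source",  # public, hidden, or generated tests
    "tasks_inspected_after_outputs",
]


def missing_fields(report):
    """Return checklist fields that the report leaves out or sets to None."""
    return [name for name in REQUIRED_FIELDS if report.get(name) is None]


# Draft built from the teaching example: 3 tasks, 3 attempts each, pass@3 = 2/3.
draft = {"task_count": 3, "samples_per_task": 3, "k": 3, "pass_at_k": 2 / 3}

print(missing_fields(draft))
```

The draft has a score but no estimator, interval, decoding settings, or test provenance, so the guard lists those as missing. Checking for `None` rather than truthiness lets `tasks_inspected_after_outputs` be an honest `False`.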

If you test many slices, don't celebrate the best slice without saying how many slices you checked. Multiple testing creates false wins.
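A quick calculation shows how fast false wins pile up. This sketch assumes the slice tests are independent and each uses a 0.05 threshold, which is a simplification because real slices often overlap. The Bonferroni correction shown is one common and conservative adjustment, not the only option, and both function names are hypothetical.

```python
def chance_of_false_win(num_slices, alpha=0.05):
    """P(at least one slice looks significant) when no slice has a real effect.

    Assumes independent tests, which real slices may not satisfy.
    """
    return 1 - (1 - alpha) ** num_slices


def bonferroni_threshold(num_slices, alpha=0.05):
    """Per-slice threshold that keeps the overall false-win rate at or below alpha."""
    return alpha / num_slices


for m in (1, 5, 20):
    print(m, round(chance_of_false_win(m), 3), bonferroni_threshold(m))
```

With 20 slices and no real effect anywhere, the chance of at least one apparent win is roughly 64 percent. That is why the number of slices checked belongs in the report.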

Next, continue to Linear Regression from Scratch. You now know how to reason about uncertain evaluation results. Regression is the first model where we turn that math into learned parameters.

Evaluation Rubric

1. Explains null hypotheses, p-values, intervals, power, and pass@k in plain language before formulas

2. Computes bootstrap intervals and pass@k estimates in runnable Python

3. Reports n, k, sample count, estimator, interval, and test quality for benchmark claims

Common Pitfalls

- pass@k is often misread as ordinary accuracy. It estimates the chance that at least one of k sampled solutions passes tests, so sampling policy and test quality matter.

- A small benchmark can swing by luck. Report uncertainty before calling a model better.

- Testing many slices and reporting only the best slice creates false wins unless you say how many comparisons you made.


Key Concepts Tested

- p-values

- bootstrap

- power

- multiple comparisons

- pass@k

- benchmark uncertainty

References