
Decoding Algorithms

Hands-on chapter for decoding algorithms, with first-principles mechanics, runnable code, failure modes, and production checks.


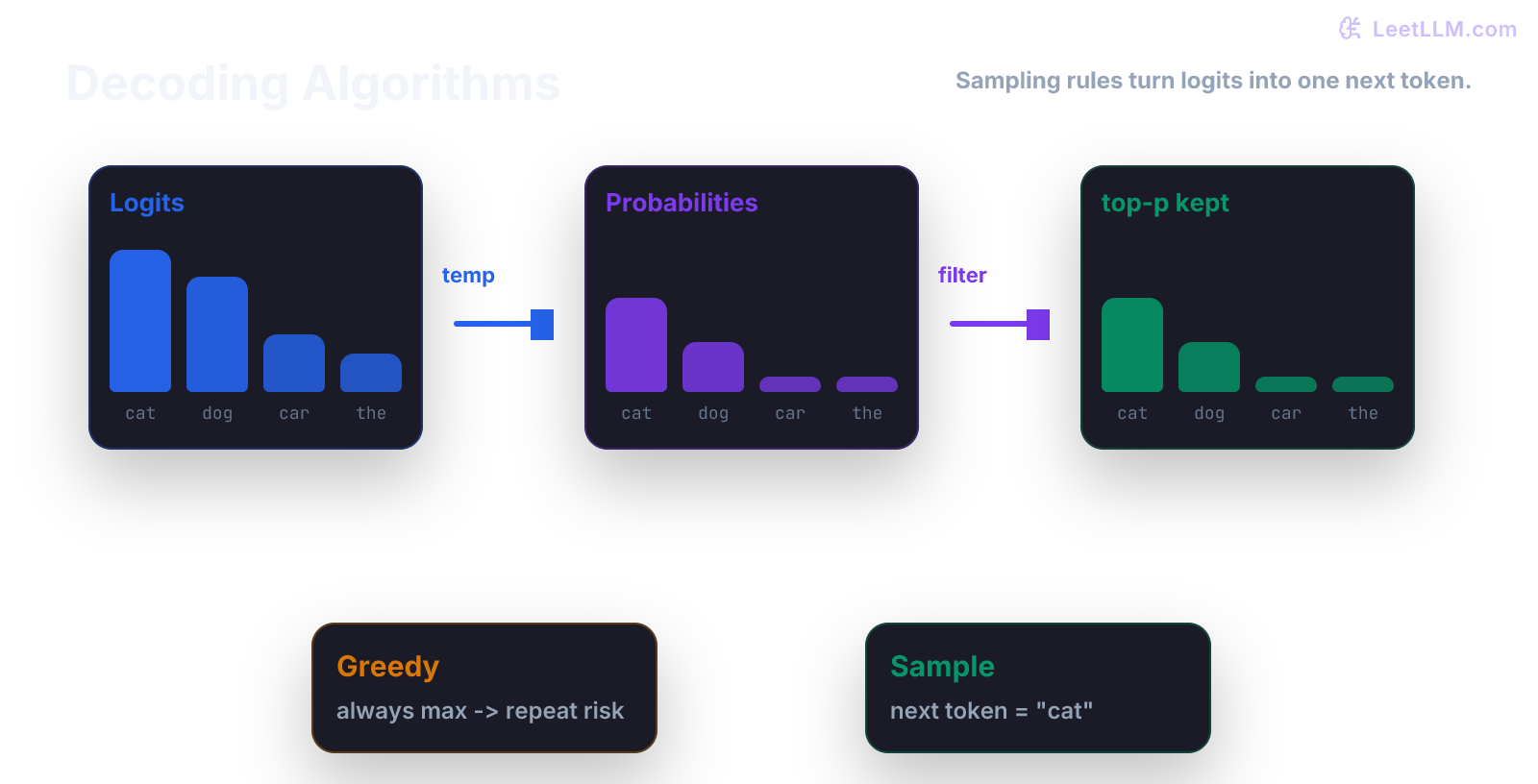

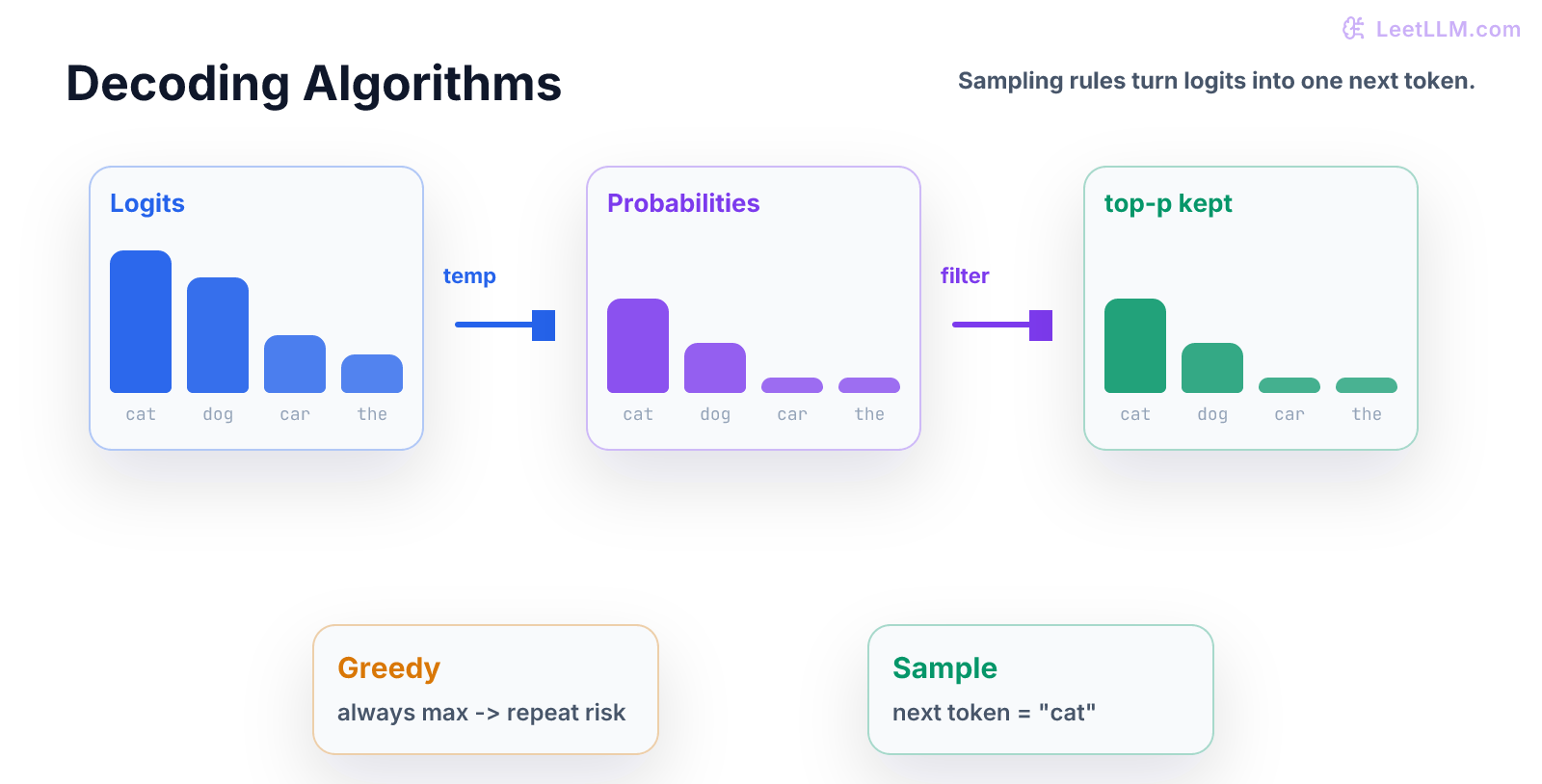

Decoding is how an LLM turns next-token probabilities into actual text. The same model with different decoding settings produces different product behavior. This chapter starts from zero and builds toward one concrete job skill: implementing greedy, temperature, top-k, nucleus, and beam decoding on a toy probability vector. [1][2][3]

Step map

| Stage | Beginner action | Checkpoint |
|---|---|---|
| Concept | Start from logits, then choose one next token. | Reader can say input, operation, and output without naming a library. |
| Build | Implement greedy, temperature, top-k, and top-p choices. | Code prints or asserts one result the reader predicted first. |
| Failure | Fixed seeds prove randomness is controlled during tests. | The common beginner mistake has a visible symptom and guard. |
| Ship | Sampling config and failure cases are visible in eval notes. | Artifact is small enough for another engineer to rerun. |

Start here

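Start with a probability list. Greedy decoding picks the largest value. Top-k keeps only the k most likely choices. Nucleus sampling keeps the smallest set of most-likely choices whose total probability reaches a threshold p. Temperature rescales the logits before they become probabilities: values below 1 sharpen the distribution, and values above 1 flatten it.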
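To make the temperature effect concrete, here is a minimal sketch. It assumes the model hands you raw logits. The logit values and the helper names `softmax_with_temperature` and `greedy` are made up for illustration.

```python
import numpy as np

def softmax_with_temperature(logits, temperature=1.0):
    """Turn logits into probabilities after dividing by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive; use argmax for greedy decoding")
    scaled = np.asarray(logits, dtype=float) / temperature
    scaled = scaled - scaled.max()  # subtract the max for numerical stability
    exp = np.exp(scaled)
    return exp / exp.sum()

def greedy(probs):
    """Greedy decoding: index of the highest-probability token."""
    return int(np.argmax(probs))

logits = [1.0, 2.0, 3.0, 0.5]  # hypothetical logits for four tokens
for t in (0.5, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(t, np.round(probs, 3), "greedy ->", greedy(probs))
```

Temperature changes how much probability the top token holds, but not which token is on top, so the greedy choice stays the same across all three settings.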

Read this chapter once for the idea, then run the demo and change one value. For Decoding Algorithms, progress means you can name the input, explain the operation, and say what result would prove the idea worked.

By the end, you should be able to explain Decoding Algorithms with a worked example, not a library name. Keep one runnable file and one short note with the result you expected before you ran it.

Why this chapter matters

Decoding matters because later LLM work assumes you already understand it. You will use it when you inspect data, debug model behavior, compare evaluations, or explain why a result should be trusted.

The job skill here is: Implement greedy, temperature, top-k, nucleus, and beam decoding on a toy probability vector. Treat the snippet as lab equipment: run it, change one input, and write down what changed before you move on.

Beginner mental model

Imagine four possible next tokens with probabilities [0.1, 0.2, 0.6, 0.1]. Top-k with k=2 keeps the two most likely tokens (0.6 and 0.2) and renormalizes them so they sum to one: 0.6/0.8 = 0.75 and 0.2/0.8 = 0.25.

A useful beginner checklist for Decoding Algorithms:

- What object enters the system?

- What transformation happens to it?

- What evidence says the result is correct?

Keep the answer concrete. If you can't point to the value, shape, row, metric, or test that proves the point, the Decoding Algorithms concept is still fuzzy.

Vocabulary in plain English

- greedy decoding: always choose the highest-probability next token.

- beam search: keep several partial sequences and expand the best ones.

- top-k: sample only from the k most likely tokens.

- nucleus sampling: sample from the smallest token set whose total probability reaches p.

- temperature: a setting that divides the logits before softmax, making probabilities sharper (below 1) or flatter (above 1) before sampling.

- speculative decoding: speedup pattern where a smaller draft model proposes tokens that a larger model verifies.

Use these definitions while reading the demo. Each term should map to a variable, an assertion, or a decision you could explain in review.
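Beam search is the one term above that needs more than a single probability vector, because it scores whole sequences. The sketch below assumes a toy "model" where the next-token distribution depends only on the previous token. The `START` and `TRANSITIONS` tables are invented numbers, not output from any real model.

```python
import numpy as np

# Hypothetical toy model over a 3-token vocabulary.
START = np.array([0.5, 0.4, 0.1])      # P(first token)
TRANSITIONS = np.array([               # row i = P(next token | last token = i)
    [0.4, 0.3, 0.3],
    [0.1, 0.1, 0.8],
    [0.3, 0.3, 0.4],
])

def beam_search(start, transitions, steps, beam_width):
    """Keep the beam_width best partial sequences by total log-probability."""
    beams = [((), 0.0)]  # (token sequence, cumulative log-probability)
    for _ in range(steps):
        candidates = []
        for seq, score in beams:
            probs = start if not seq else transitions[seq[-1]]
            for token, p in enumerate(probs):
                candidates.append((seq + (token,), score + np.log(p)))
        # Sort by score, best first, and keep only the top beam_width.
        candidates.sort(key=lambda c: c[1], reverse=True)
        beams = candidates[:beam_width]
    return beams

for width in (1, 2):
    seq, score = beam_search(START, TRANSITIONS, steps=2, beam_width=width)[0]
    print("beam_width", width, "best sequence", seq, "prob", round(float(np.exp(score)), 3))
```

With `beam_width=1` this behaves like greedy decoding and commits to token 0 first. With `beam_width=2` it keeps token 1 alive long enough to find a sequence with higher total probability.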

Build it

Start with the smallest version that can run from a terminal. The goal for this Decoding Algorithms demo is visibility: one file, one output, and no hidden notebook state.

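```python
import numpy as np

def top_k(probs, k):
    # Indices of the k largest probabilities (argsort is ascending, so take the tail).
    idx = np.argsort(probs)[-k:]
    # Renormalize the kept probabilities so they sum to one.
    kept = probs[idx] / probs[idx].sum()
    return idx, kept

print(top_k(np.array([0.1, 0.2, 0.6, 0.1]), 2))
```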

Read the code in this order:

- `np.argsort(probs)[-k:]` finds indices of the k largest probabilities.

- `probs[idx]` selects only those kept probabilities.

- Dividing by `probs[idx].sum()` makes the kept probabilities sum to one again.

- The returned indices tell you which tokens are still allowed.

After it runs, make three small edits. Add a normal-case test, add an edge-case test, then log the intermediate value a beginner would most likely misunderstand. That turns Decoding Algorithms from a reading exercise into an engineering exercise.
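One possible shape for those three edits is sketched below. It repeats `top_k` from the demo so the file runs on its own. The test function names are illustrative, and the edge case chosen here (k equal to the vocabulary size) is only one of several you could pick.

```python
import numpy as np

def top_k(probs, k):
    # Same function as the demo, repeated so this file is self-contained.
    idx = np.argsort(probs)[-k:]
    kept = probs[idx] / probs[idx].sum()
    return idx, kept

def test_normal_case():
    idx, kept = top_k(np.array([0.1, 0.2, 0.6, 0.1]), 2)
    assert set(idx.tolist()) == {1, 2}             # tokens 1 and 2 survive
    assert np.isclose(kept.sum(), 1.0)             # renormalized
    assert np.allclose(sorted(kept), [0.25, 0.75])

def test_edge_case_k_equals_vocab_size():
    probs = np.array([0.1, 0.2, 0.6, 0.1])
    idx, kept = top_k(probs, len(probs))
    assert len(idx) == len(probs)                  # nothing is filtered out
    assert np.allclose(kept, probs[idx])           # input already summed to one

if __name__ == "__main__":
    # Log the intermediate value that is easy to misread:
    # argsort returns indices in ascending order of probability.
    print("argsort order:", np.argsort(np.array([0.1, 0.2, 0.6, 0.1])))
    test_normal_case()
    test_edge_case_k_equals_vocab_size()
    print("all tests passed")
```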

For Decoding Algorithms, a strong submission includes a runnable command, one test file, and notes for any assumptions. If data, randomness, training, or evaluation appears, save the split rule, seed, config, and metric definition.

Beginner failure case

A beginner may change temperature to make outputs feel better without logging the setting, making eval results impossible to reproduce.

For Decoding Algorithms, make the failure visible before adding the fix. Write the symptom in plain English, then add the smallest guard that would catch it next time.

Good guards for Decoding Algorithms are concrete: assertions, fixture rows, duplicate checks, seed control, metric intervals, or release checks. Pick the guard that makes the hidden assumption executable.
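As one example of seed control, the sketch below returns every sample together with the temperature and seed that produced it, then asserts that the same settings reproduce the same tokens. The function name `sample_with_record` and the logit values are assumptions made for illustration.

```python
import numpy as np

def sample_with_record(logits, temperature, seed, n=10):
    """Sample n token indices and return them with the settings used."""
    rng = np.random.default_rng(seed)              # seeded generator, no global state
    scaled = np.asarray(logits, dtype=float) / temperature
    probs = np.exp(scaled - scaled.max())          # stable softmax
    probs = probs / probs.sum()
    tokens = rng.choice(len(probs), size=n, p=probs).tolist()
    return {"temperature": temperature, "seed": seed, "tokens": tokens}

logits = [1.0, 2.0, 3.0, 0.5]  # hypothetical logits
run_a = sample_with_record(logits, temperature=0.8, seed=0)
run_b = sample_with_record(logits, temperature=0.8, seed=0)

# Guard: identical settings must reproduce identical samples.
assert run_a == run_b, "same seed and temperature should give the same tokens"
print(run_a)
```

Because the settings travel with the output, a later reader of the eval notes can see which temperature was used instead of guessing.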

Practice ladder

- Run the snippet exactly as written and save the output.

- Change one input value and predict the output before running it again.

- Add one assertion that would catch a beginner mistake.

- Add a `top_p` function for nucleus sampling and test it on `[0.5, 0.2, 0.2, 0.1]` with `p=0.8`.

- Write a two-line README: one command to run the demo, one command to run the test.
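If you want something to check your own `top_p` against, here is one possible version. It assumes the boundary convention "keep tokens until the running total is at least p". Other implementations may treat the boundary differently, so treat this as a sketch, not a reference.

```python
import numpy as np

def top_p(probs, p):
    """Keep the smallest set of most-likely tokens whose total probability reaches p."""
    order = np.argsort(probs)[::-1]           # indices, most likely first
    cumulative = np.cumsum(probs[order])      # running total of kept probability
    # First position where the running total is >= p; keep everything up to it.
    cutoff = int(np.searchsorted(cumulative, p)) + 1
    idx = order[:cutoff]
    kept = probs[idx] / probs[idx].sum()      # renormalize to sum to one
    return idx, kept

idx, kept = top_p(np.array([0.5, 0.2, 0.2, 0.1]), 0.8)
print(sorted(idx.tolist()), np.round(kept, 3))
```

On this input, the two most likely tokens cover only 0.7, so a third token is needed to reach 0.8. The kept set has three tokens with total mass 0.9 before renormalizing.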

Keep this ladder small. Decoding Algorithms should feel runnable before it feels impressive. The capstones later reuse the same habit at product scale.

Production check

Log decoding config with every sample, test deterministic and sampled modes separately, and pin defaults per product task.
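A minimal sketch of that check, using only the standard library, might look like this. The `DecodingConfig` fields, the task names, and the `log_sample` helper are all hypothetical and do not come from any particular serving framework.

```python
import json
from dataclasses import dataclass, asdict

@dataclass(frozen=True)
class DecodingConfig:
    # Hypothetical config shape; field names are illustrative, not a library API.
    mode: str                 # "greedy" or "sample"
    temperature: float = 1.0
    top_k: int = 0            # 0 means "no top-k filter" in this sketch
    top_p: float = 1.0        # 1.0 means "no nucleus filter" in this sketch
    seed: int = 0

# Pinned defaults per product task (task names are made up for illustration).
TASK_DEFAULTS = {
    "extraction": DecodingConfig(mode="greedy"),
    "brainstorm": DecodingConfig(mode="sample", temperature=0.9, top_p=0.95),
}

def log_sample(task, text):
    """Return one JSON line that stores the output next to its decoding config."""
    record = {"task": task, "config": asdict(TASK_DEFAULTS[task]), "text": text}
    return json.dumps(record, sort_keys=True)

print(log_sample("extraction", "example output"))
print(log_sample("brainstorm", "example output"))
```

Freezing the dataclass keeps a pinned default from being mutated at runtime, and keeping greedy and sampled tasks as separate entries makes it natural to test the two modes separately.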

A production check for Decoding Algorithms is proof another engineer can trust the result. At foundation level that means a reproducible command and tests. At capstone level it also means a design note, eval evidence, cost or latency notes, and rollback criteria.

Before moving on, answer four Decoding Algorithms questions: What input does this accept? What output or metric proves it worked? What failure would fool you? What test catches that failure?

What to ship

Ship a small Decoding Algorithms folder with code, tests, and notes. Make it boring to run: install dependencies, run tests, run the demo. That boring path is what makes the artifact useful in a portfolio.

Decoding Algorithms feeds later LLM engineering work directly. Retrieval, fine-tuning, agents, evals, and serving all depend on small foundations like this being clear before systems get large.

Evaluation Rubric

1. Explains the core mental model behind Decoding Algorithms without hiding behind library calls

2. Implements the central idea in runnable Python, NumPy, PyTorch, or scikit-learn code

3. Identifies realistic failure modes and adds tests or production checks that catch them

Common Pitfalls

- Forgetting that changing decoding changes product behavior without changing model weights. A safer model can look worse with bad sampling, and a risky model can look better on easy prompts.

- Skipping the from-scratch version and reaching for a library before the mechanics are clear.

- Treating a clean demo as proof that the implementation will survive bad inputs, drift, or scale.

Follow-up Questions to Expect

Key Concepts Tested

greedy decoding, beam search, top-k, nucleus sampling, temperature, speculative decoding

References