
First AI App End-to-End

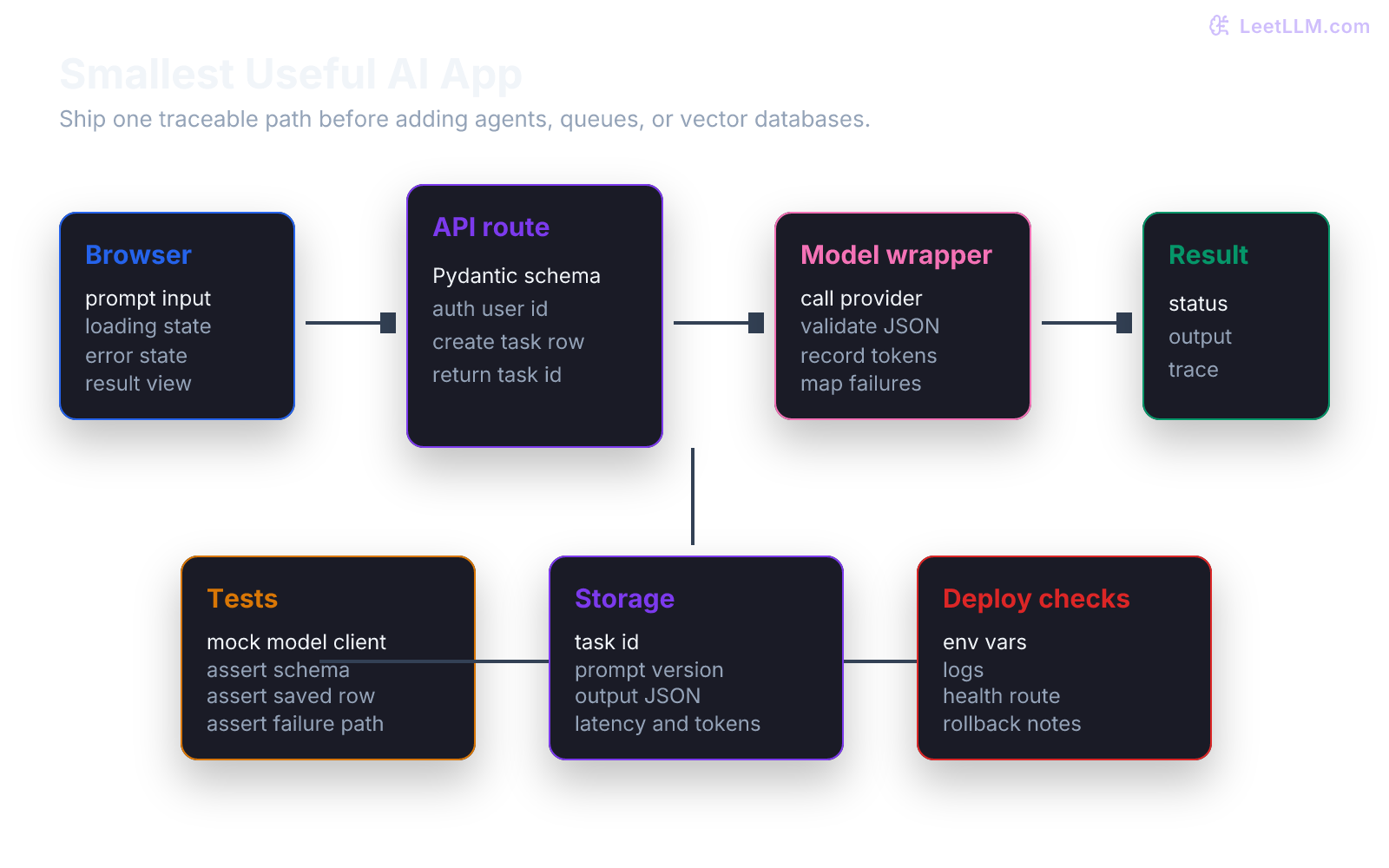

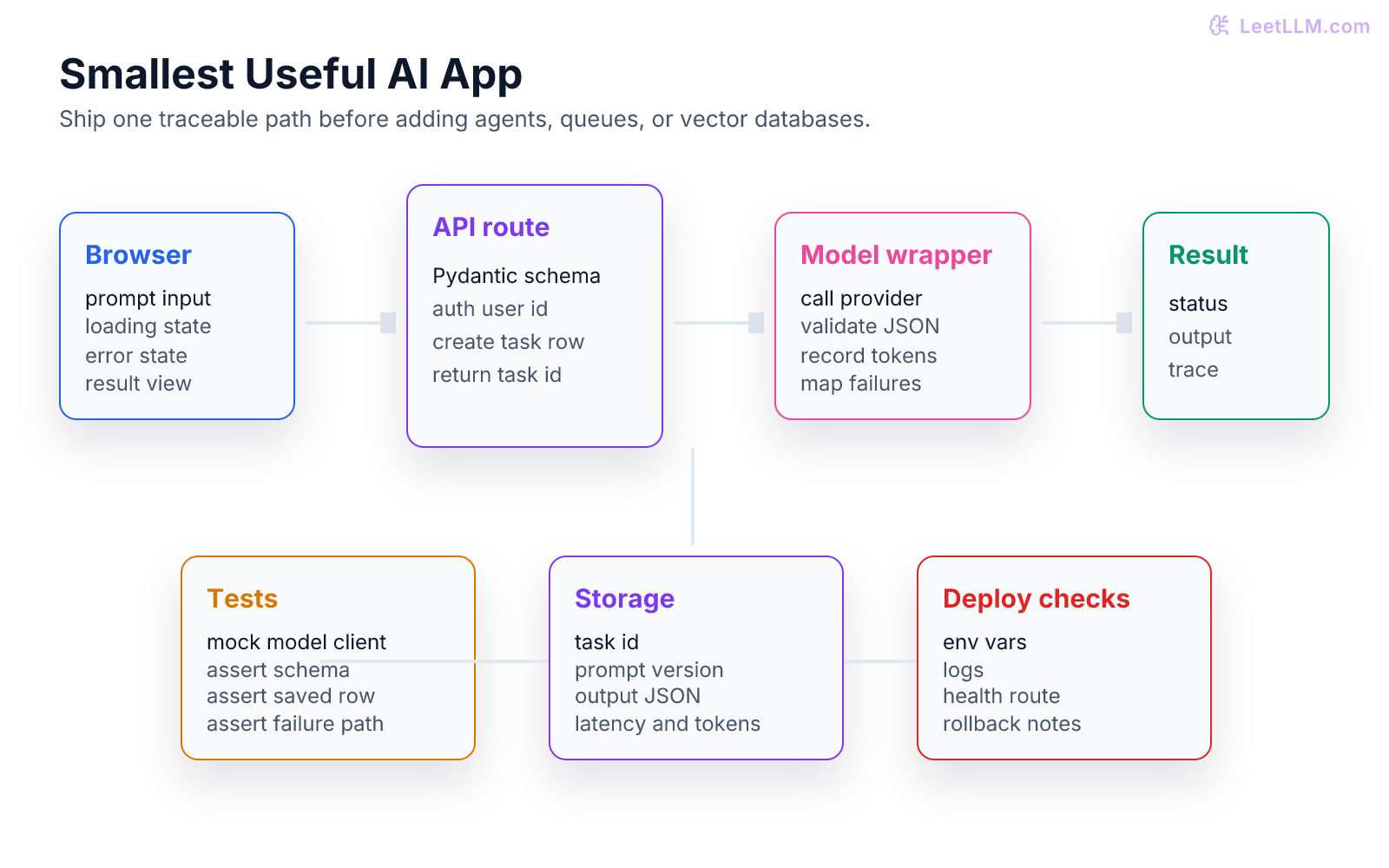

Build the smallest useful AI application from request to response: API route, model call, schema validation, storage, tests, and deployment checklist.


Most beginners try to build an AI app by adding every exciting piece at once. They add a chatbot, agents, a vector database, auth, file upload, queues, dashboards, and deployment before one request works from start to finish. The result is hard to debug because no one knows where the failure lives.

The better path is smaller. Build one useful slice. A user submits text. Your API validates it. Your model wrapper returns a structured answer. The app saves the trace. Tests prove success and failure paths. Then you deploy it.

This chapter builds on Calling LLM APIs in Production. There, you built the model boundary. Here, you'll connect that boundary to a shippable app.

What counts as a first AI app?

A first AI app should be boring in the right places.

It doesn't need a complex agent. It doesn't need a custom model. It doesn't need a fancy dashboard. It needs one workflow that works and can be explained.

Use this target:

A user pastes messy support text. The app extracts a clean ticket with category, priority, and summary. The result is saved and shown back to the user.

That gives you a real product shape:

| Layer | Smallest useful version |
|---|---|
| Frontend | Form, submit button, loading state, result, error. |
| API | Validate input, call model wrapper, save result. |
| Model boundary | Prompt version, schema, timeout, logs. |
| Storage | One task table or JSONL file for local learning. |
| Tests | Mock model success, invalid output, and provider failure. |
| Deployment | Environment variables, health check, logs, rollback note. |

This is enough to show real engineering skill.

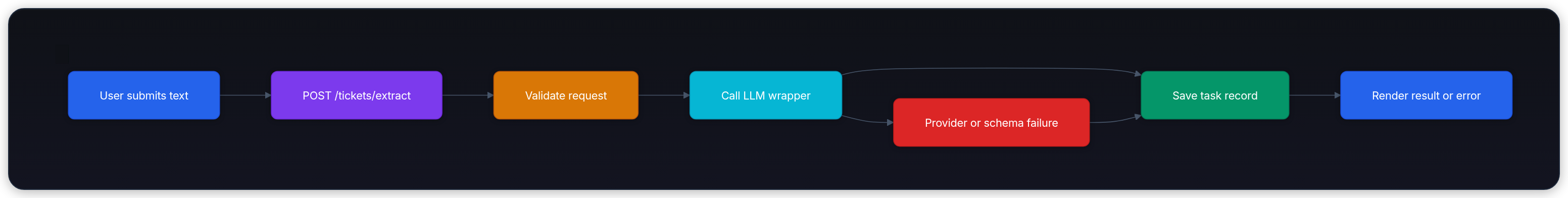

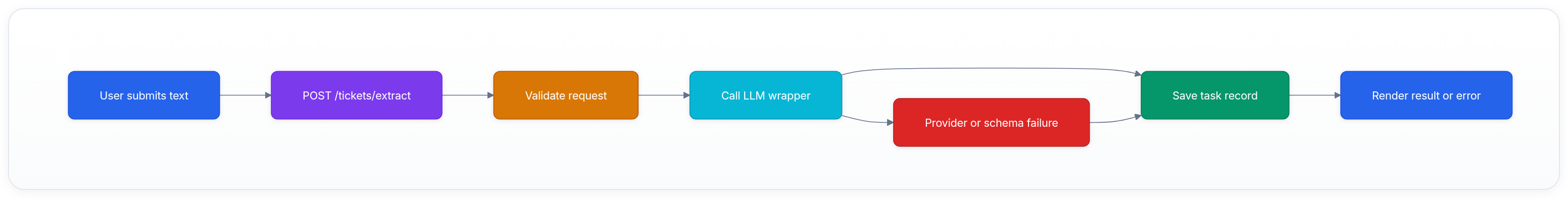

The request path

The full path has six steps.

Each step should be testable by itself.

The frontend can be any framework. The backend example below uses FastAPI because it's small, typed, and common in Python service work.[1] The same structure works in Next.js, Flask, Django, Express, Hono, or any other web stack.

Define the data contract

Start with the records.

If you can't describe the request and response as data, the app isn't ready for a model call.

```python
from pydantic import BaseModel, Field

class ExtractTicketRequest(BaseModel):
    text: str = Field(min_length=10, max_length=4000)

class ExtractedTicket(BaseModel):
    category: str
    priority: str
    summary: str

sample = ExtractTicketRequest(text="Customer paid twice for order A-102 and wants a refund.")
print(sample.model_dump())
assert sample.text.startswith("Customer")
```

Expected output:

```text
{'text': 'Customer paid twice for order A-102 and wants a refund.'}
```

This schema catches empty or oversized inputs before the model sees them.

Store a task record

A task record is the app's memory of what happened.

For a real deployment, use Postgres, SQLite, Supabase, or another durable store. For the smallest local version, an in-memory record is enough to show the shape.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

@dataclass
class TaskRecord:
    task_id: str
    status: str
    input_text: str
    output_json: dict | None
    prompt_version: str
    created_at: str
    error: str | None = None

record = TaskRecord(
    task_id="task_001",
    status="completed",
    input_text="Customer paid twice.",
    output_json={"category": "billing", "priority": "high"},
    prompt_version="ticket_extract@1",
    created_at=datetime.now(timezone.utc).isoformat(),
)

print(record.status)
assert record.prompt_version == "ticket_extract@1"
```

Expected output:

```text
completed
```

The record is more than history. It becomes your debugging tool, eval dataset seed, and portfolio evidence.

Build the API route

The API route should be thin.

It receives input, validates it, calls the model boundary, saves the result, and returns a response. It shouldn't know prompt details or provider SDK internals.

This snippet uses a fake model wrapper so you can inspect the route shape without credentials.

```python
from fastapi import FastAPI
from pydantic import BaseModel, Field

app = FastAPI()
TASKS: dict[str, dict] = {}  # in-memory store; replace with a durable one later

class ExtractTicketRequest(BaseModel):
    text: str = Field(min_length=10, max_length=4000)

class ExtractedTicket(BaseModel):
    category: str
    priority: str
    summary: str

def extract_ticket_with_model(text: str) -> ExtractedTicket:
    # Fake model wrapper: a keyword rule stands in for the provider call.
    if "paid twice" in text.lower():
        return ExtractedTicket(
            category="billing",
            priority="high",
            summary="Customer paid twice and needs refund help.",
        )
    return ExtractedTicket(
        category="general",
        priority="medium",
        summary=text[:80],
    )

@app.post("/tickets/extract")
def extract_ticket(request: ExtractTicketRequest) -> dict:
    task_id = f"task_{len(TASKS) + 1:03d}"
    try:
        ticket = extract_ticket_with_model(request.text)
        TASKS[task_id] = {
            "status": "completed",
            "input_text": request.text,
            "output_json": ticket.model_dump(),
            "prompt_version": "ticket_extract@1",
        }
        return {"task_id": task_id, "status": "completed", "ticket": ticket.model_dump()}
    except Exception as exc:
        # Store the real error for debugging; return a safe message to the user.
        error_message = "Ticket extraction failed. Please try again."
        TASKS[task_id] = {
            "status": "failed",
            "input_text": request.text,
            "output_json": None,
            "prompt_version": "ticket_extract@1",
            "error": str(exc),
        }
        return {"task_id": task_id, "status": "failed", "error": error_message}

if __name__ == "__main__":
    # Call the handler directly to inspect the response without a server.
    response = extract_ticket(ExtractTicketRequest(text="Customer paid twice for order A-102."))
    print(response)
    assert response["status"] == "completed"
```

Expected output:

```text
{'task_id': 'task_001', 'status': 'completed', 'ticket': {'category': 'billing', 'priority': 'high', 'summary': 'Customer paid twice and needs refund help.'}}
```

To run it as a real FastAPI service, save the route code as app.py (the file name the command assumes) and start it with uvicorn:

```bash
uvicorn app:app --reload
```

The route is intentionally small. The app becomes easier to debug because each responsibility has a clear owner.

Add frontend states

The frontend should not assume the model will succeed.

At minimum, the UI needs four states:

| State | What the user sees | What the app stores |
|---|---|---|
| Idle | Text box and submit button. | Nothing yet. |
| Running | Disabled submit, visible progress. | Task created or request in flight. |
| Completed | Ticket fields and copy action. | Output JSON and prompt version. |
| Failed | Error message and retry option. | Failure reason and trace ID. |

Don't hide failure.

If the model returns invalid JSON or times out, the user should see a recoverable state, not a blank result.
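
The four states can be treated as a small state machine. The sketch below is written in Python for consistency with the rest of the chapter, although a real frontend would express the same idea in its own language. The transition map is an assumption: the table names the states but doesn't say which moves are allowed, so retry and reset paths here are illustrative.

```python
# Allowed moves between the four UI states (assumed for this sketch).
ALLOWED = {
    "idle": {"running"},
    "running": {"completed", "failed"},
    "completed": {"idle"},            # user starts a new request
    "failed": {"running", "idle"},    # retry, or go back to the form
}

def next_state(current: str, target: str) -> str:
    """Return the target state, or raise if the UI attempts an invalid jump."""
    if target not in ALLOWED.get(current, set()):
        raise ValueError(f"invalid transition: {current} -> {target}")
    return target

state = "idle"
state = next_state(state, "running")
state = next_state(state, "failed")     # the model call timed out
state = next_state(state, "running")    # the user pressed retry
state = next_state(state, "completed")
print(state)
```

Making invalid jumps an error means a bug such as showing a result while the request never ran surfaces during development instead of in front of a user.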

Frontend contract

The frontend doesn't need model details.

- Success response shape:

```json
{
  "task_id": "task_001",
  "status": "completed",
  "ticket": {
    "category": "billing",
    "priority": "high",
    "summary": "Customer paid twice and needs refund help."
  }
}
```

- Failure response shape:

```json
{
  "task_id": "task_002",
  "status": "failed",
  "error": "The model response did not match the ticket schema."
}
```

That stable shape keeps the product clean even if the provider changes.

Test the app you own

You don't need to test the provider's model in every unit test.

You do need to test your code around it.

| Test | What it proves |
|---|---|
| Valid input returns completed task. | Happy path works. |
| Empty input is rejected. | Request schema protects the app. |
| Model wrapper raises error. | Failure state is saved and returned. |
| Model returns invalid object. | Parser rejects it before display. |
| Prompt version is saved. | Future debugging has context. |

Here's a local test shape without a running server.

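```python
from pydantic import ValidationError

# Assumes ExtractTicketRequest, extract_ticket, and TASKS from the route
# snippet are defined in the same session, and that its __main__ demo
# has already created task_001.

# "too short" is 9 characters, below the 10-character minimum.
try:
    ExtractTicketRequest(text="too short")
except ValidationError as exc:
    print("validation failed")
    assert "String should have at least 10 characters" in str(exc)
else:
    raise AssertionError("expected a ValidationError for short input")

response = extract_ticket(ExtractTicketRequest(text="Customer paid twice for order A-102."))
print(response["task_id"])
assert TASKS[response["task_id"]]["prompt_version"] == "ticket_extract@1"
```

Expected output:

```text
validation failed
task_002
```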

In a real repo, put this in tests/test_extract_ticket.py, mock the model wrapper, and run it in CI.
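
One way to mock the model wrapper is sketched below, using `unittest.mock.Mock` from the standard library. The `handle_extract` function is a simplified, hypothetical stand-in for the route that takes the wrapper as an argument, so the example runs without FastAPI or provider credentials.

```python
from unittest.mock import Mock

def handle_extract(text: str, model_fn, tasks: dict) -> dict:
    """Simplified stand-in for the route: call the wrapper, record the outcome."""
    task_id = f"task_{len(tasks) + 1:03d}"
    try:
        ticket = model_fn(text)
        tasks[task_id] = {"status": "completed", "output_json": ticket, "error": None}
        return {"task_id": task_id, "status": "completed", "ticket": ticket}
    except Exception as exc:
        tasks[task_id] = {"status": "failed", "output_json": None, "error": str(exc)}
        return {"task_id": task_id, "status": "failed",
                "error": "Ticket extraction failed. Please try again."}

def test_provider_failure_is_saved_and_returned():
    tasks: dict = {}
    failing_model = Mock(side_effect=TimeoutError("provider timed out"))
    response = handle_extract("Customer paid twice for order A-102.", failing_model, tasks)
    assert response["status"] == "failed"
    # The internal error stays server-side; the user sees a safe message.
    assert "provider timed out" not in response["error"]
    assert tasks[response["task_id"]]["error"] == "provider timed out"
    failing_model.assert_called_once()

def test_success_path_uses_wrapper_output():
    tasks: dict = {}
    fake_model = Mock(return_value={"category": "billing", "priority": "high",
                                    "summary": "Paid twice."})
    response = handle_extract("Customer paid twice for order A-102.", fake_model, tasks)
    assert response["ticket"]["category"] == "billing"
    assert tasks[response["task_id"]]["status"] == "completed"

# pytest would discover these by name; calling them directly also works.
test_provider_failure_is_saved_and_returned()
test_success_path_uses_wrapper_output()
print("2 tests passed")
```

Passing the wrapper in as a parameter is a design choice made for this sketch. Patching a module-level function with `unittest.mock.patch` reaches the same result when the route imports the wrapper directly.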

Deployment checklist

Docker is a common packaging layer for deploying web services because it pins the runtime environment and startup command.[2] You don't need a complex container to learn the habit.

Your first deployment checklist should cover:

| Check | Question |
|---|---|
| Environment | Are provider keys, database URLs, and secrets outside git? |
| Startup | Does the service boot with one documented command? |
| Health | Does /healthz return 200 without calling the model? |
| Logs | Can you find one task by trace ID? |
| Errors | Are provider failures visible without exposing secrets? |
| Rollback | Can you redeploy the last working image or commit? |
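
The Environment and Health rows can be sketched together in plain Python. The variable names `MODEL_API_KEY` and `DATABASE_URL`, and the `Settings`, `load_settings`, and `healthz` helpers, are hypothetical. In the FastAPI app the `healthz` function would sit behind a `GET /healthz` route.

```python
import os
from collections.abc import Mapping
from dataclasses import dataclass

@dataclass(frozen=True)
class Settings:
    model_api_key: str
    database_url: str

def load_settings(env: Mapping[str, str] = os.environ) -> Settings:
    """Read required settings from the environment and fail fast if any are missing."""
    # Hypothetical variable names for this sketch.
    required = ("MODEL_API_KEY", "DATABASE_URL")
    missing = [name for name in required if not env.get(name)]
    if missing:
        raise RuntimeError(f"missing environment variables: {', '.join(missing)}")
    return Settings(model_api_key=env["MODEL_API_KEY"], database_url=env["DATABASE_URL"])

def healthz(settings: Settings) -> dict:
    """Report liveness from local state only; it never calls the model provider."""
    return {"status": "ok", "model_key_configured": bool(settings.model_api_key)}

# Pass a plain dict here so the demo doesn't depend on the real environment.
settings = load_settings({"MODEL_API_KEY": "test-key", "DATABASE_URL": "sqlite:///local.db"})
health = healthz(settings)
print(health)
```

The health payload reports whether a key is configured but never echoes the key itself, which keeps the check safe to expose to a load balancer or uptime monitor.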

AI products add one more check: model behavior.

Before deploy, run a tiny eval set:

```python
EVALS = [
    ("Customer paid twice for order A-102.", "billing"),
    ("The app crashes when I upload a CSV.", "general"),
]

for text, expected_category in EVALS:
    ticket = extract_ticket_with_model(text)
    print(text[:20].strip(), "=>", ticket.category)
    assert ticket.category == expected_category
```

Expected output:

```text
Customer paid twice => billing
The app crashes when => general
```

This is not a full evaluation platform. It's a smoke test that catches obvious prompt or parser regressions before users see them.
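
A slightly more informative variant collects every failure instead of stopping at the first failed assertion. The sketch below is self-contained: `fake_extract_category` mirrors the keyword rule of the fake wrapper used in this chapter, and the third eval case is an invented example added to show what a failure report looks like.

```python
def fake_extract_category(text: str) -> str:
    """Stand-in for the model wrapper, mirroring the chapter's keyword rule."""
    return "billing" if "paid twice" in text.lower() else "general"

def run_evals(cases, classify) -> dict:
    """Run every case and collect failures instead of stopping at the first one."""
    failures = []
    for text, expected in cases:
        actual = classify(text)
        if actual != expected:
            failures.append({"text": text, "expected": expected, "actual": actual})
    return {"total": len(cases), "passed": len(cases) - len(failures), "failures": failures}

CASES = [
    ("Customer paid twice for order A-102.", "billing"),
    ("The app crashes when I upload a CSV.", "general"),
    ("I was charged twice this month.", "billing"),  # invented case; the fake rule misses it
]

report = run_evals(CASES, fake_extract_category)
print(f"{report['passed']}/{report['total']} passed")
for failure in report["failures"]:
    print("FAILED:", failure)
```

A deploy script could then block on `report["failures"]` being non-empty, or on a pass rate below a threshold the team agrees on.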

ML deployment work is full of hidden coupling between code, data, prompts, models, and infrastructure.[3][4] Small apps should start tracking those links early.
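
One lightweight way to start tracking those links is to store a small manifest with every task. The sketch below is an assumption about what such a manifest could hold, not a prescribed format. The field names, the `example-model` placeholder, and the `abc1234` commit value are all illustrative.

```python
import hashlib
import json
from dataclasses import asdict, dataclass

@dataclass(frozen=True)
class RunManifest:
    prompt_version: str
    prompt_sha256: str   # fingerprint of the exact prompt text
    model_name: str      # placeholder value, not a real model ID
    schema_version: str
    code_version: str    # for example, a git commit hash supplied by the build

def build_manifest(prompt_text: str, prompt_version: str, model_name: str,
                   schema_version: str, code_version: str) -> RunManifest:
    """Bundle the versions that together produced one model response."""
    digest = hashlib.sha256(prompt_text.encode("utf-8")).hexdigest()
    return RunManifest(prompt_version, digest, model_name, schema_version, code_version)

prompt = "Extract category, priority, and summary from the support text."
manifest = build_manifest(prompt, "ticket_extract@1", "example-model", "ticket@1", "abc1234")
# Same version label, different text: the hash exposes the silent edit.
edited = build_manifest(prompt + " Be concise.", "ticket_extract@1",
                        "example-model", "ticket@1", "abc1234")

print(json.dumps(asdict(manifest), indent=2))
print("prompt changed:", manifest.prompt_sha256 != edited.prompt_sha256)
```

The hash catches the case where someone edits the prompt text without bumping its version label, which is one of the quieter ways behavior drifts between deploys.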

Portfolio version

A hire-ready version of this first app should include:

- A live URL or reproducible local demo.

- A clear README with setup, environment variables, and sample request.

- Tests that run without paid model access.

- A tiny eval file with expected outputs.

- A design note explaining the request path.

- A screenshot or short video.

- A section called "Known limits" with honest trade-offs.

This matters because hiring teams need evidence.

Anyone can say they built an AI app. Fewer candidates can show the route, schema, wrapper, tests, traces, failure path, and deployment notes.

Common failure cases

| Symptom | Likely cause | Fix |
|---|---|---|
| App hangs. | Model call has no timeout. | Add timeout and loading state. |
| UI crashes on result. | Frontend assumes fields exist. | Validate response and render failure. |
| Can't reproduce bug. | Prompt version and task input weren't stored. | Save trace record for each request. |
| Tests need paid API calls. | Provider wasn't wrapped. | Mock wrapper in unit tests. |
| Secret appears in bundle. | Key used in frontend code. | Move model call to server. |
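
The first row, a model call with no timeout, can be addressed generically as sketched below with the standard library. HTTP clients and provider SDKs often expose their own timeout setting, which is usually the better place to set it. `call_with_timeout` and the two fake model functions are hypothetical helpers for this example.

```python
import time
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout

def call_with_timeout(fn, *args, timeout_s: float):
    """Run fn in a worker thread and stop waiting after timeout_s seconds."""
    executor = ThreadPoolExecutor(max_workers=1)
    future = executor.submit(fn, *args)
    try:
        return future.result(timeout=timeout_s)
    except FutureTimeout:
        raise TimeoutError(f"model call exceeded {timeout_s}s") from None
    finally:
        # Don't block on the worker; the thread keeps running until fn returns.
        executor.shutdown(wait=False)

def slow_model(text: str) -> str:
    time.sleep(0.5)  # simulates a provider that is slow to respond
    return "done"

def fast_model(text: str) -> str:
    return "billing"

fast_result = call_with_timeout(fast_model, "Customer paid twice.", timeout_s=1.0)

try:
    call_with_timeout(slow_model, "Customer paid twice.", timeout_s=0.05)
    timed_out = False
except TimeoutError:
    timed_out = True

print(fast_result, timed_out)
```

This approach stops the caller from waiting but does not cancel the underlying work, so it is a fallback for code you cannot configure, not a substitute for a real request timeout.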

Self check

Before moving on, answer these without looking:

- What are the four user-visible states your app needs?

- What should be stored for each model request?

- Why should /healthz avoid calling the model provider?

- Which parts should be mocked in unit tests?

- What proof would convince a reviewer that your app is real?

Strong answers should mention loading, failure, trace records, prompt version, provider mocks, and deployment checks.

Key takeaways

- Build one useful slice before adding agents, RAG, or complex background systems.

- Keep the API route thin and push model details into a wrapper.

- Save task records so behavior becomes inspectable.

- Test your schema, status transitions, storage, and failure paths.

- A strong first AI app is a portfolio artifact when it includes code, tests, evals, logs, and a design note.

Next, continue to The LLM Lifecycle. There, you'll zoom out from one app request to the full lifecycle of training, adaptation, evaluation, deployment, and monitoring.

Evaluation Rubric

1. Designs the smallest useful product slice before adding framework complexity.

2. Connects frontend state, API validation, model call, and persistence into one traceable path.

3. Uses tests and fixtures to make model-dependent behavior reproducible.

4. Adds clear user states for idle, running, completed, and failed requests.

5. Prepares the app for deployment with environment variables, logging, and rollback notes.

Common Pitfalls

- Starting with agents, vector databases, and queues before the single request path works.

- Letting the frontend assume the model will always return valid data.

- Skipping tests because model output is probabilistic.

- Putting secrets in frontend code or committed example files.

- Showing a spinner with no status, retry path, or saved result.

Key Concepts Tested

- End-to-end AI app architecture

- FastAPI request and response contracts

- Model call wrapper integration

- Database record for prompts, outputs, and status

- Testable loading, error, and retry states

References

- FastAPI Documentation. FastAPI Project, 2026. Official documentation.

- Docker Documentation. Docker Inc., 2026. Official documentation.

- Structured outputs. OpenAI, 2024.

- Hidden Technical Debt in Machine Learning Systems. Sculley et al., 2015.

- Challenges in Deploying Machine Learning: a Survey of Case Studies. Paleyes, A., Urma, R. G., & Lawrence, N. D., 2022. ACM Computing Surveys.