🔍EasyRAG & Retrieval

File Ingestion for AI

Learn how real AI systems turn PDFs, HTML pages, OCR images, and Markdown files into clean text records ready for chunking and retrieval.

30 min readOpenAI, Anthropic, Google +25 key concepts

Learning path

Step 24 of 108 in the full curriculum

Most RAG failures start before retrieval.

The user uploads a PDF. The app extracts text in the wrong order. The footer appears on every page. A scanned page becomes an empty string. A Markdown code block loses indentation. The retriever then indexes all of that noise, and the model gets blamed when answers are weak.

File ingestion is the process of turning messy source files into clean, traceable text records that later systems can chunk, embed, retrieve, cite, and debug.

This chapter builds on Function Calling & Tool Use. Tools let an LLM reach outside its context window. Ingestion decides what trustworthy context those tools and retrievers can find.

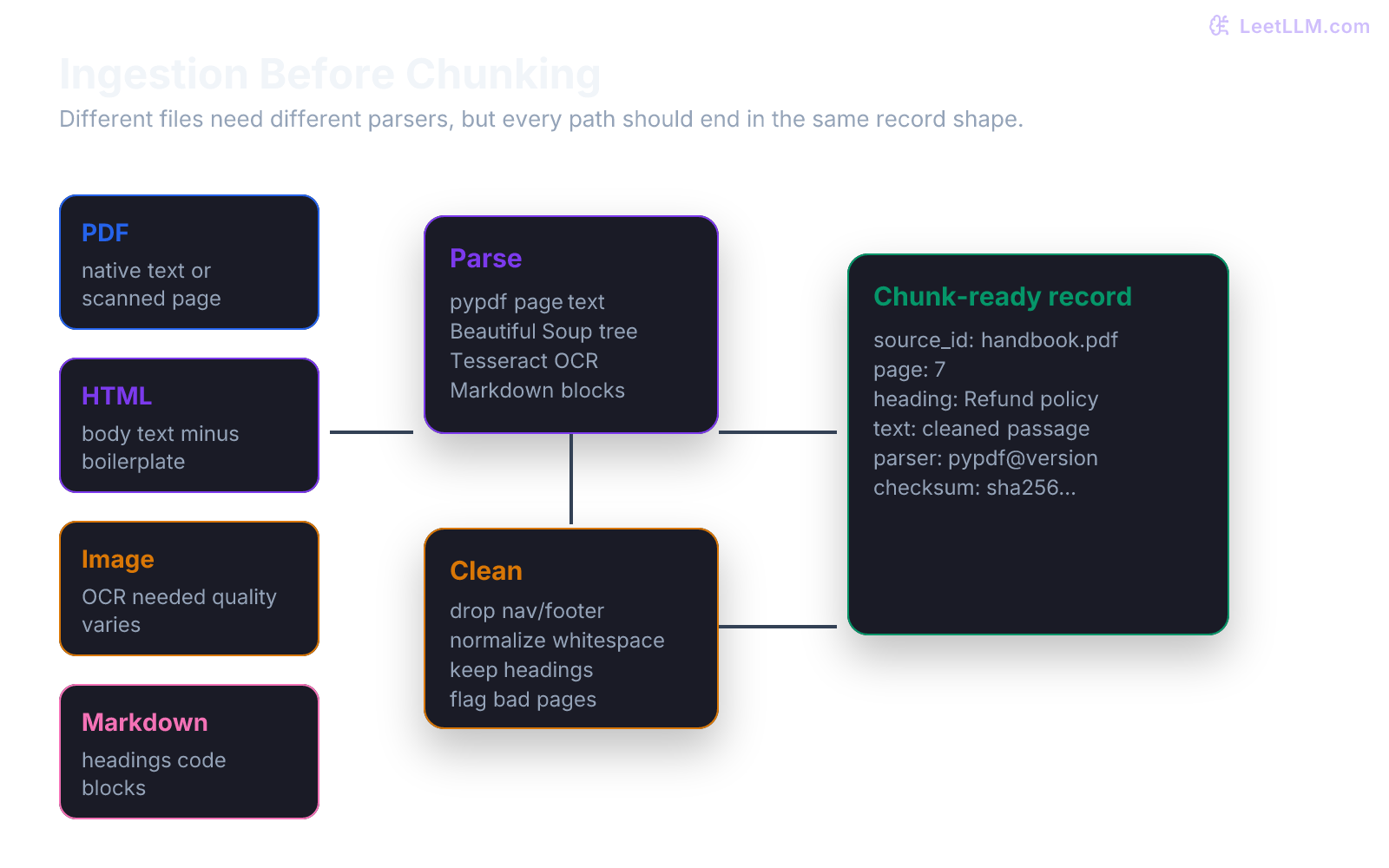

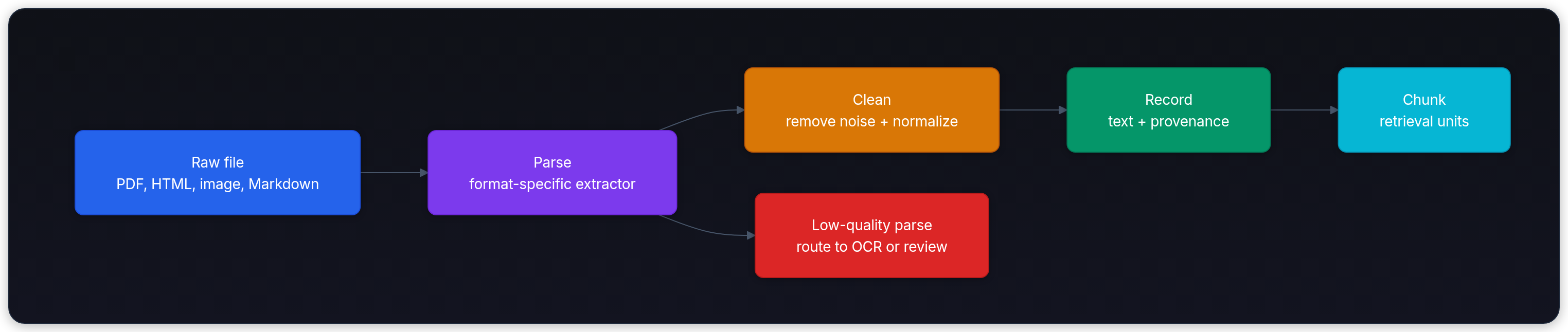

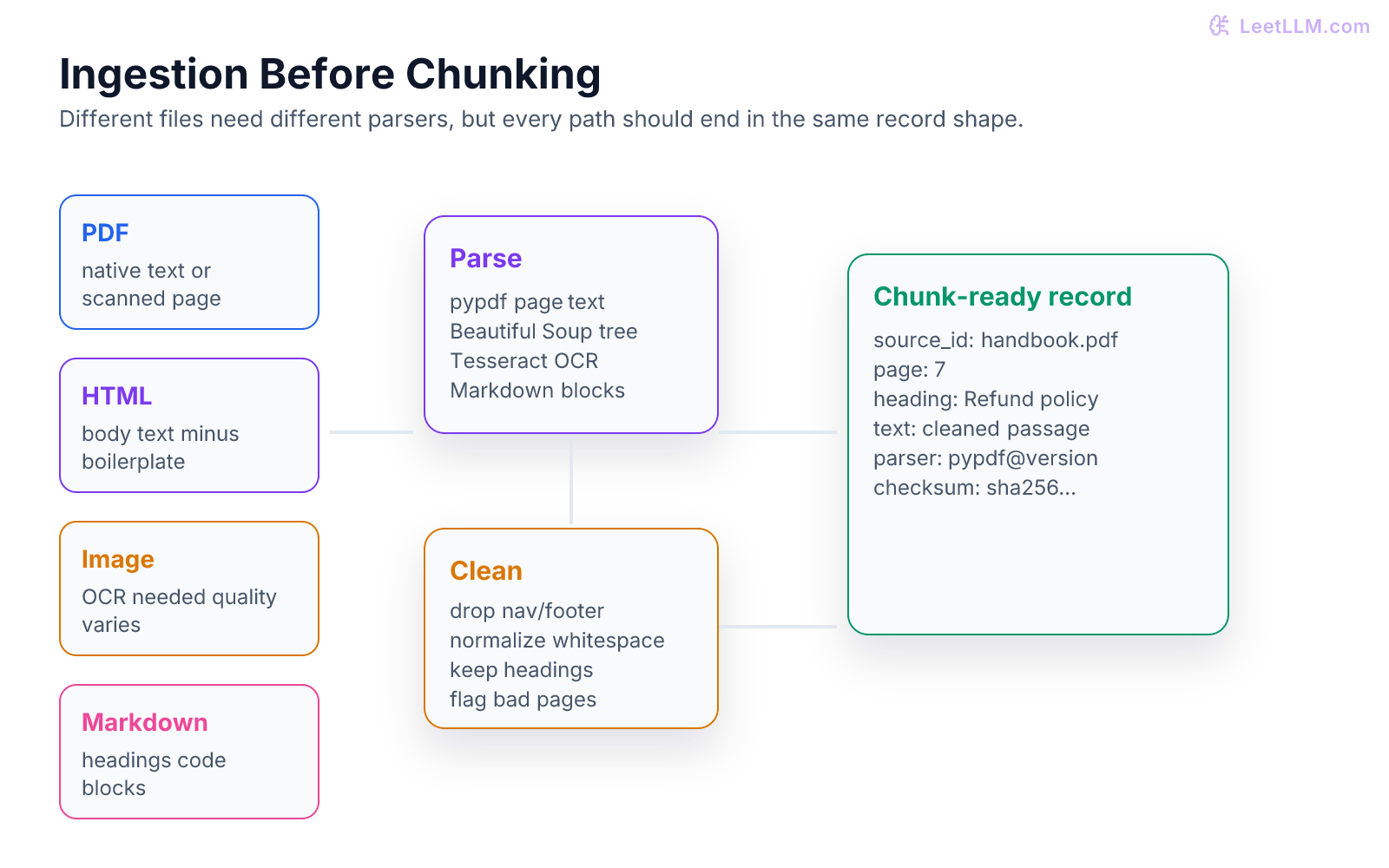

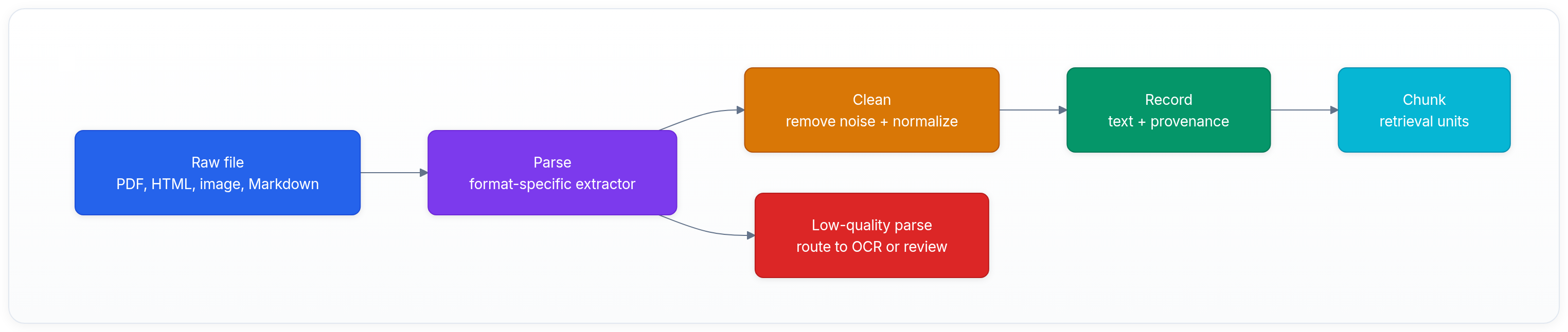

Why ingestion comes before chunking

Chunking splits text into pieces.

But chunking assumes you already have good text.

If the input is dirty, chunking spreads the dirt. A repeated footer becomes hundreds of near-duplicate chunks. A broken PDF table becomes nonsense text. A scanned page becomes a missing answer. A Markdown heading disappears, and the retriever loses a useful section label.

The right order is:

The important output is not "a string."

The output is a record.

| Field | Example | Why it matters |

|---|---|---|

source_id | employee_handbook.pdf | Ties chunks back to a file. |

source_type | pdf | Explains parser path. |

page | 7 | Supports citations. |

heading | Refund policy | Helps retrieval and display. |

text | Employees can... | The content to chunk. |

parser | pypdf | Debugs extraction changes. |

checksum | sha256:... | Detects changed files. |

quality | ok, ocr, review | Keeps bad text visible. |

RAG, short for Retrieval-Augmented Generation, depends on retrieval quality as much as generation quality.[1]

PDFs: native text first

A PDF is a page layout format, not a clean article format.

Some PDFs contain selectable text. Some are scanned images. Some have columns, tables, footnotes, headers, and rotated text. A parser may extract text, but not always in the order a human reads it.

Start with native extraction because it's cheap and preserves text when available. Libraries such as pypdf can read pages and extract text from many PDFs.[2]

python1from dataclasses import dataclass 2 3@dataclass 4class PageText: 5 page: int 6 text: str 7 quality: str 8 9def quality_label(text: str) -> str: 10 cleaned = text.strip() 11 if len(cleaned) < 40: 12 return "needs_ocr_or_review" 13 if "\ufffd" in cleaned: 14 return "bad_encoding" 15 return "ok" 16 17page = PageText( 18 page=7, 19 text="Refund policy\nEmployees can submit refund requests within 14 days.", 20 quality=quality_label("Refund policy\nEmployees can submit refund requests within 14 days."), 21) 22 23print(page) 24assert page.quality == "ok"

Expected output:

text1PageText(page=7, text='Refund policy\nEmployees can submit refund requests within 14 days.', quality='ok')

PDF failure symptoms

| Symptom | Likely cause | Fix |

|---|---|---|

| Empty text | Scanned page or protected file. | Route page to OCR or manual review. |

| Words out of order | Multi-column layout. | Use layout-aware extraction or page image review. |

| Footer appears everywhere | Header/footer not removed. | Detect repeated page text and drop it. |

| Table becomes word salad | Layout table not preserved. | Extract table separately or keep page image evidence. |

| Random replacement characters | Encoding issue. | Flag page for alternate parser. |

Don't index a bad extraction because "some text is better than no text."

Bad text can be worse than missing text because it creates confident retrieval noise.

OCR: when text is an image

OCR means Optical Character Recognition. It turns pixels into text.

Use OCR when native extraction fails or when the source is an image. Tesseract is a widely used open-source OCR engine, and its docs emphasize the importance of image quality and configuration.[3]

OCR should be observable because it can be wrong.

| OCR input issue | Result |

|---|---|

| Low resolution | Missing or confused characters. |

| Skewed scan | Lines merge or split. |

| Complex table | Reading order breaks. |

| Handwriting | Accuracy may drop sharply. |

| Multiple languages | Needs language configuration. |

Store whether text came from OCR. Later, when an answer cites a page, you may want to show lower confidence or let a user open the original image.

OCR routing rule

This small rule catches common cases before chunking.

python1def needs_ocr(native_text: str, page_image_available: bool) -> bool: 2 if not page_image_available: 3 return False 4 cleaned = native_text.strip() 5 if not cleaned: 6 return True 7 if len(cleaned) < 40: 8 return True 9 if cleaned.count("\n") == 0 and len(cleaned) > 500: 10 return True 11 return False 12 13print(needs_ocr("", page_image_available=True)) 14print(needs_ocr("Refund policy with enough readable text on the page.", page_image_available=True)) 15assert needs_ocr("", True) is True

Expected output:

text1True 2False

The exact thresholds will change by product. The habit matters more: route low-quality pages before indexing.

HTML: remove boilerplate

HTML pages include real content and page chrome.

Menus, sidebars, cookie banners, footer links, "related articles," and script text can all enter your parser. If you index them, retrieval will surface generic navigation text instead of the answer.

Beautiful Soup parses HTML into a tree that you can inspect and clean.[4]

python1from bs4 import BeautifulSoup 2 3html = """ 4<html> 5 <body> 6 <nav>Home Pricing Docs</nav> 7 <main> 8 <h1>Refund policy</h1> 9 <p>Annual plans can be refunded within 14 days.</p> 10 </main> 11 <footer>Copyright Example Inc.</footer> 12 </body> 13</html> 14""" 15 16soup = BeautifulSoup(html, "html.parser") 17for tag in soup(["nav", "footer", "script", "style"]): 18 tag.decompose() 19 20text = soup.get_text("\n", strip=True) 21print(text) 22assert "Annual plans" in text 23assert "Pricing" not in text

Expected output:

text1Refund policy 2Annual plans can be refunded within 14 days.

HTML cleaning checklist

| Check | Why |

|---|---|

| Remove nav/footer/script/style. | Cuts repeated boilerplate. |

| Keep headings. | Headings improve retrieval and citations. |

| Preserve links when useful. | URLs can be evidence. |

| Drop hidden text. | Invisible text may be tracking or injection. |

| Normalize whitespace. | Cleaner chunks and previews. |

HTML also creates a security problem: untrusted pages can contain prompt injection text. Don't treat web content as instructions. Treat it as quoted source data.

Markdown: preserve structure

Markdown looks simple, but structure still matters.

Headings, lists, tables, and code fences help readers and retrievers. If your parser flattens everything into one paragraph, you lose boundaries that chunking could use.

Good Markdown ingestion keeps:

- heading hierarchy

- list boundaries

- table rows

- code fences and language tags

- source line ranges when useful

For technical docs, code fences are often the answer.

If a user asks "What command starts the service?", a chunk that preserved uvicorn app:app --reload is more useful than a paragraph that swallowed it.

Markdown record shape

python1markdown = """ 2# Deploy 3 4Run the service: 5 6~~~bash 7uvicorn app:app --reload 8~~~ 9""" 10 11record = { 12 "source_id": "README.md", 13 "heading": "Deploy", 14 "text": markdown.strip(), 15 "contains_code": "~~~" in markdown, 16} 17 18print(record["heading"], record["contains_code"]) 19assert record["contains_code"] is True

Expected output:

text1Deploy True

When a record contains code, retrieval and answer formatting should preserve code blocks. Otherwise the model may paraphrase commands in ways that don't run.

One normalized record

Format-specific parsers are different.

The downstream system should still receive one normalized record shape.

python1from dataclasses import dataclass 2from hashlib import sha256 3 4@dataclass(frozen=True) 5class IngestedRecord: 6 source_id: str 7 source_type: str 8 text: str 9 page: int | None 10 heading: str | None 11 parser: str 12 quality: str 13 checksum: str 14 15def make_record( 16 source_id: str, 17 source_type: str, 18 text: str, 19 parser: str, 20 page: int | None = None, 21 heading: str | None = None, 22) -> IngestedRecord: 23 checksum = sha256(text.encode("utf-8")).hexdigest()[:12] 24 quality = "ok" if len(text.strip()) >= 40 else "review" 25 return IngestedRecord( 26 source_id=source_id, 27 source_type=source_type, 28 text=text.strip(), 29 page=page, 30 heading=heading, 31 parser=parser, 32 quality=quality, 33 checksum=checksum, 34 ) 35 36record = make_record( 37 source_id="handbook.pdf", 38 source_type="pdf", 39 page=7, 40 heading="Refund policy", 41 parser="pypdf", 42 text="Refund policy. Annual plans can be refunded within 14 days.", 43) 44 45print(record.quality, record.checksum) 46assert record.source_id == "handbook.pdf"

Expected output will look like this:

text1ok a5ef3f7fbcda

The checksum lets you detect duplicates and changes. If the same page text appears again, you can skip reprocessing. If it changes, you can re-chunk and re-embed only affected records.

Quality gates before indexing

Add a gate before chunking.

| Gate | Fail when | Action |

|---|---|---|

| Minimum text length | Page has almost no text. | OCR or review. |

| Repeated boilerplate | Same paragraph appears across many pages. | Drop repeated text. |

| Encoding quality | Replacement chars appear often. | Try another parser. |

| Language match | Wrong language detected. | Route to language-specific parser. |

| Source provenance | Missing source ID or page. | Reject record. |

| Security scan | Text contains obvious prompt injection. | Store as source, never instruction. |

Unstructured document libraries can help route many file types through a common partitioning interface, but you still need product-specific quality checks.[5]

Generic parsing is a starting point. Your users' documents define the real bar.

Active learning exercise

Given this extracted page:

text1Home | Pricing | Docs 2Refund policy 3Annual plans can be refunded within 14 days. 4Copyright Example Inc.

Create the chunk-ready record.

Strong answer:

json1{ 2 "source_id": "pricing.html", 3 "source_type": "html", 4 "heading": "Refund policy", 5 "page": null, 6 "text": "Refund policy\nAnnual plans can be refunded within 14 days.", 7 "parser": "beautifulsoup", 8 "quality": "ok", 9 "removed_boilerplate": ["Home | Pricing | Docs", "Copyright Example Inc."] 10}

The key move is not the JSON formatting.

The key move is separating source text from boilerplate and keeping provenance.

Key takeaways

- Ingestion turns messy files into clean, traceable records before chunking.

- PDFs need native extraction first, then OCR or review when extraction quality is low.

- HTML needs boilerplate removal or repeated navigation text will pollute retrieval.

- Markdown ingestion should preserve headings, code fences, and tables.

- Every record should keep source ID, type, page or heading, parser, quality, and checksum.

- Bad extraction should be visible before it reaches embeddings.

Next, continue to Chunking Strategies. There, you'll take clean ingestion records and split them into retrieval units that keep enough context without drowning the model.

Evaluation Rubric

- 1Chooses the right parser path for PDF, HTML, image, or Markdown input

- 2Preserves provenance such as source URL, page number, and parser version

- 3Detects low-quality extraction before indexing bad text

- 4Separates parse, clean, normalize, and chunk-ready stages

- 5Explains when OCR is necessary and why it should be observable

Common Pitfalls

- Treating every file as plain text and losing tables, page numbers, and headings.

- Indexing OCR output without a confidence or quality check.

- Chunking before cleaning, which spreads boilerplate across many retrieval records.

- Throwing away the original file checksum, making reprocessing and deduplication hard.

- Assuming PDF text order always matches visual reading order.

Follow-up Questions to Expect

Key Concepts Tested

Document parsing versus text cleaningPDF text extraction and OCR fallbackHTML boilerplate removalMarkdown structure preservationIngestion records with provenance and parse status

References

pypdf Documentation.

pypdf Contributors. · 2026 · Official documentation

Beautiful Soup Documentation.

Leonard Richardson. · 2026 · Official documentation

Tesseract OCR Documentation.

Tesseract OCR Project. · 2026 · Official documentation

Unstructured: The Ultimate ETL for LLMs

Unstructured.io · 2024

Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks.

Lewis, P., et al. · 2020 · NeurIPS 2020