
Calling LLM APIs in Production

Learn the production contract around LLM API calls: request schemas, retries, timeouts, streaming, validation, cost logs, and failure handling.

You can write a prompt in a notebook and get a good answer in ten seconds. That doesn't make it a production feature.

A production LLM call has to survive slow networks, invalid outputs, model changes, user cancellation, cost limits, private data, and retries. The model is only one dependency in the request path. Your application still needs a contract around it.

This chapter builds on Prompt Engineering Fundamentals. There, you learned how instructions, examples, and schemas shape a model response. Here, you'll turn that model call into something a real product can use.

💡 Key insight: The model call should be behind one small application boundary. Everything else in the app should call your boundary, not a provider SDK directly.

The beginner mental model

Think of an LLM API call like ordering from a kitchen.

You don't shout random instructions through the door. You send an order ticket with a dish, allergy notes, table number, deadline, and maybe a "do not start twice" marker. When food comes back, someone checks that it matches the order before serving it.

An LLM request needs the same idea:

| Kitchen idea | LLM app equivalent | Why it matters |
|---|---|---|
| Order ticket | Request schema | The app knows what it asked for. |
| Table number | User or task ID | Logs can trace the result. |
| Allergy note | Safety or privacy rule | Risk is handled before output is shown. |
| Deadline | Timeout | The UI doesn't hang forever. |
| Duplicate ticket check | Idempotency key | A retry doesn't create duplicate side effects. |
| Plating check | Response validation | Bad output doesn't enter the product. |

The model sees text and tools. Your application sees contracts.

That separation is the first professional habit.

One request contract

Start by naming what your app owns.

A provider request may use messages, tools, response formats, model IDs, and sampling settings. Those fields differ across providers and change over time. Your product should not scatter those details across every route handler.

Instead, define a small internal request.

```python
from dataclasses import dataclass
from typing import Literal

@dataclass(frozen=True)
class LLMTask:
    task: Literal["summarize_policy", "extract_ticket", "draft_reply"]
    user_input: str
    trace_id: str
    timeout_seconds: float = 20.0
    max_retries: int = 2
    idempotency_key: str | None = None

task = LLMTask(
    task="extract_ticket",
    user_input="Refund request. User paid twice. Order A-102.",
    trace_id="trace_001",
    idempotency_key="ticket_extract_A-102",
)

print(task.task)
assert task.max_retries == 2
```

Expected output:

```text
extract_ticket
```

This object doesn't know whether you use OpenAI, Anthropic, a local model, or a gateway.

It describes application intent.
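One way to keep provider details out of the rest of the app is a single adapter function that turns the internal task into a provider request. The sketch below is illustrative only: `to_provider_payload`, the `INSTRUCTIONS` table, the model name, and the payload field names are assumptions made for this example, not any vendor's actual schema.

```python
from dataclasses import dataclass
from typing import Any

@dataclass(frozen=True)
class LLMTask:
    # Trimmed copy of the internal request so this sketch runs on its own.
    task: str
    user_input: str
    trace_id: str

# Hypothetical per-task instructions; real prompts would live in versioned templates.
INSTRUCTIONS = {
    "extract_ticket": "Extract category, summary, and priority as JSON.",
}

def to_provider_payload(task: LLMTask, model: str) -> dict[str, Any]:
    """Translate application intent into one provider's request shape.

    The layout is a generic chat-style payload chosen for illustration.
    """
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": INSTRUCTIONS[task.task]},
            {"role": "user", "content": task.user_input},
        ],
        "metadata": {"trace_id": task.trace_id},
    }

payload = to_provider_payload(
    LLMTask(task="extract_ticket", user_input="User paid twice.", trace_id="trace_001"),
    model="example-model",  # placeholder model ID
)
print(payload["messages"][1]["content"])
```

If the provider changes, only this adapter should need to change. Route handlers keep constructing `LLMTask` objects.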

What belongs in the wrapper

The wrapper should own the parts that must be consistent across the product:

| Concern | Wrapper responsibility |
|---|---|
| Model selection | Choose a model or route based on task. |
| Prompt version | Attach the prompt template version used for this call. |
| Timeout | Stop waiting before the user experience breaks. |
| Retry policy | Retry only safe errors, with a cap. |
| Validation | Parse and validate output before returning it. |
| Telemetry | Log latency, tokens, status, and failure reason. |
| Privacy | Redact or avoid logging sensitive fields. |

If every route handler calls the provider directly, these rules drift.

One route retries five times. Another doesn't retry. One route logs raw prompts. Another logs nothing. One route validates JSON. Another trusts a string.

That drift becomes technical debt fast.
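A small policy table is one way to keep these rules in a single place. The following is a minimal sketch: the `TaskPolicy` class, the `policy_for` helper, the model names, and the numeric values are all hypothetical and only show the shape.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class TaskPolicy:
    model: str
    prompt_version: str
    timeout_seconds: float
    max_retries: int

# Hypothetical policies; real values depend on your product and provider.
POLICIES = {
    "extract_ticket": TaskPolicy("small-model", "ticket_prompt@3", 10.0, 2),
    "draft_reply": TaskPolicy("large-model", "reply_prompt@1", 30.0, 1),
}

def policy_for(task: str) -> TaskPolicy:
    """Return the single source of truth for how a task is called."""
    try:
        return POLICIES[task]
    except KeyError:
        raise ValueError(f"no policy registered for task {task!r}") from None

print(policy_for("extract_ticket").prompt_version)
```

With this shape, a route handler cannot quietly pick its own retry count or timeout. It has to go through the table.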

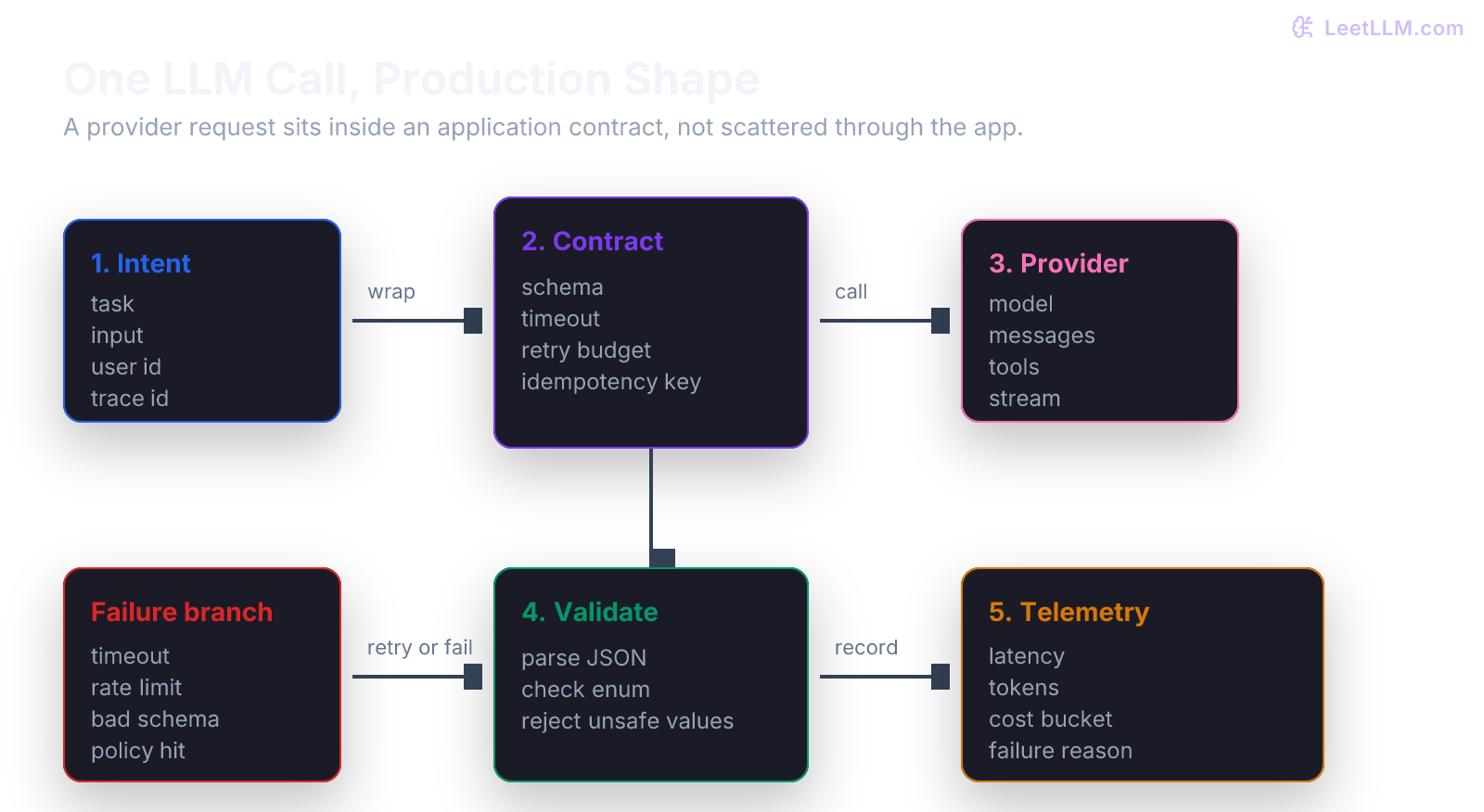

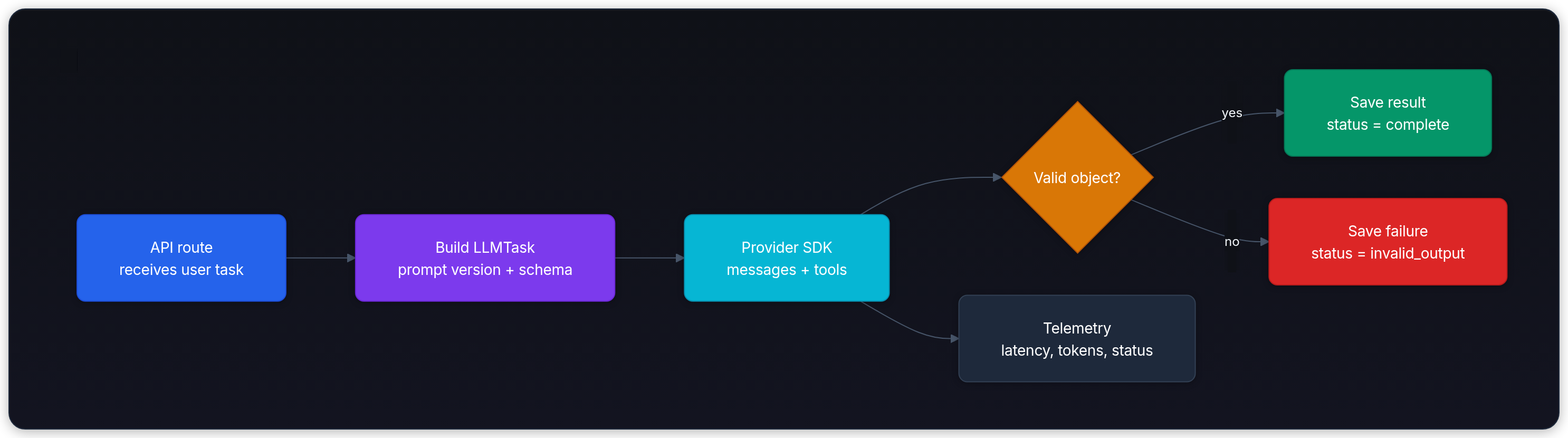

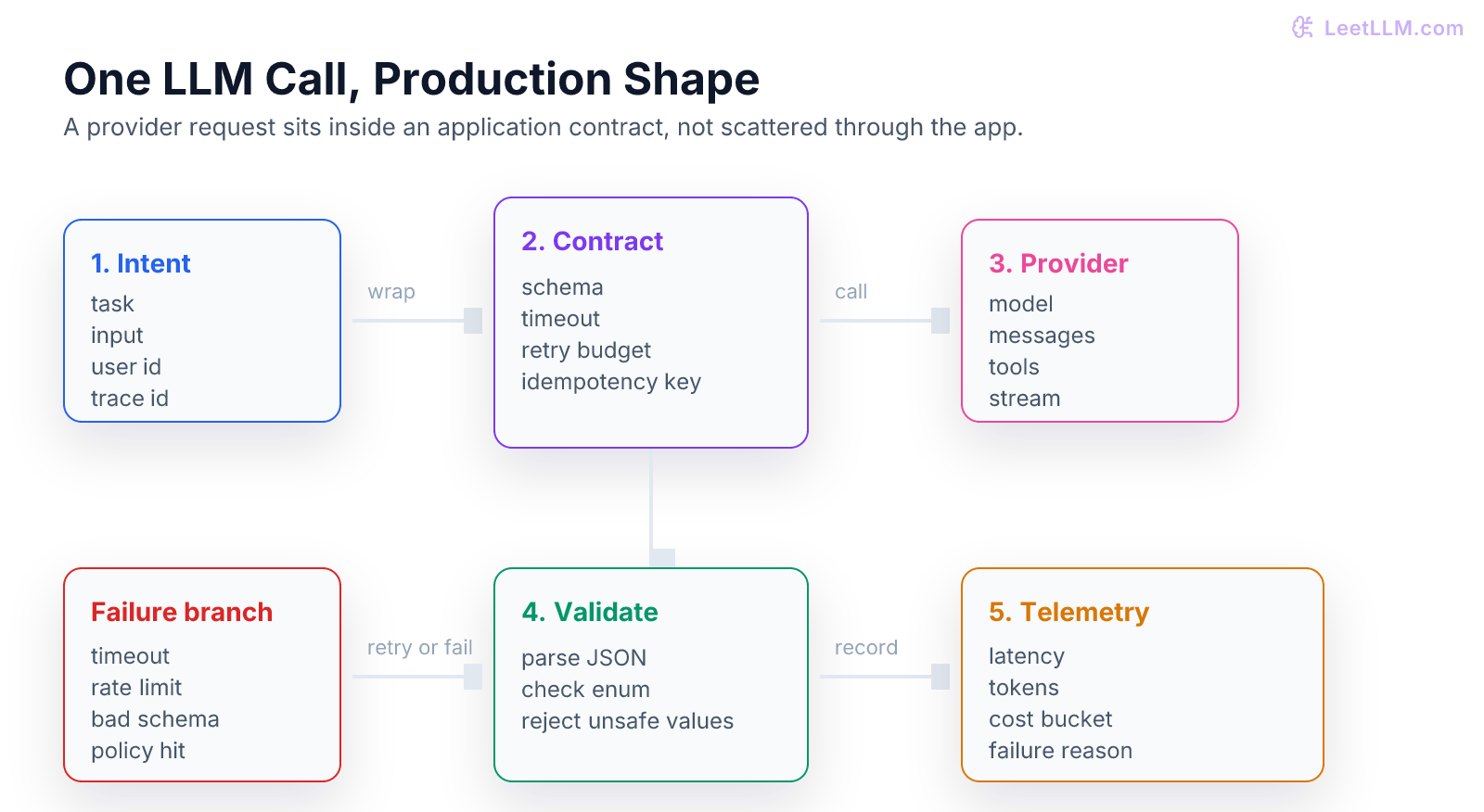

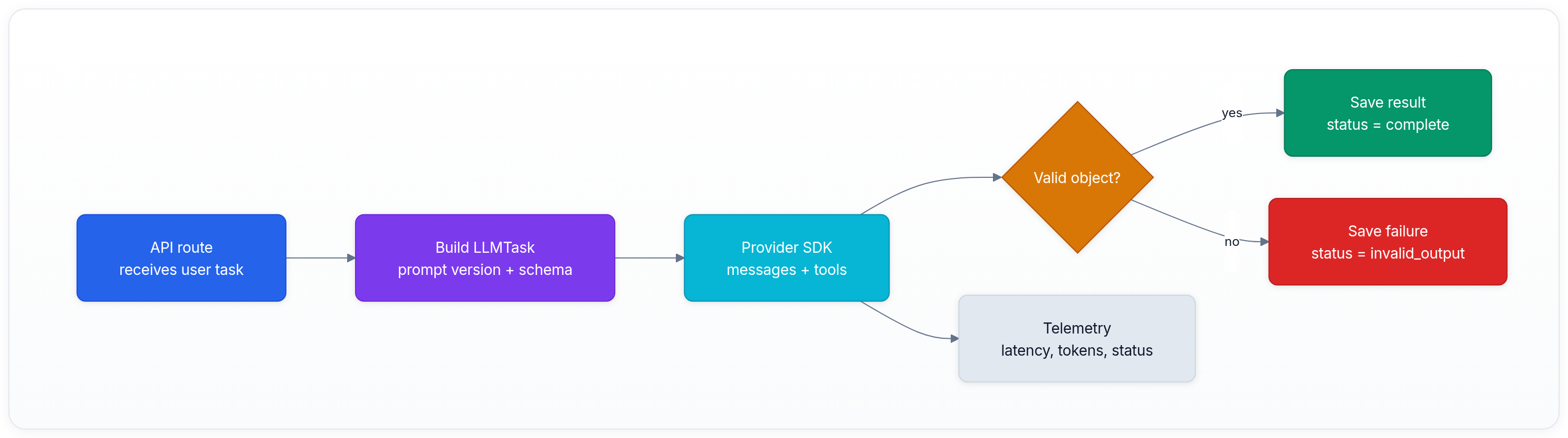

The production call flow

The flow below is provider-agnostic. OpenAI's tool calling and structured output docs describe the same core idea from the provider side: define tools or schemas, send a request, receive structured data, and continue the conversation or validate the answer.[1][2]

Notice the two outputs:

- The user-facing result.

- The operational record.

Production work needs both.

Minimal wrapper

The next snippet uses a fake provider function so you can run the shape without credentials. The point is the control flow: call, parse, validate, log, return.

```python
import json
import time
from dataclasses import dataclass
from typing import Any

@dataclass(frozen=True)
class Ticket:
    category: str
    summary: str
    priority: str

def fake_provider_call(prompt: str) -> str:
    # Stand-in for a real provider call; always returns the same JSON.
    return json.dumps({
        "category": "billing",
        "summary": "User paid twice for order A-102.",
        "priority": "high",
    })

def parse_ticket(raw: str) -> Ticket:
    # The parser owns the response contract: parse, then check allowed values.
    data: dict[str, Any] = json.loads(raw)
    allowed_priorities = {"low", "medium", "high"}

    if data.get("priority") not in allowed_priorities:
        raise ValueError("priority is outside allowed enum")

    return Ticket(
        category=str(data["category"]),
        summary=str(data["summary"]),
        priority=str(data["priority"]),
    )

def call_ticket_extractor(user_input: str, trace_id: str) -> Ticket:
    start = time.perf_counter()
    raw = fake_provider_call(
        "Extract category, summary, and priority from:\n"
        f"{user_input}"
    )
    ticket = parse_ticket(raw)
    latency_ms = (time.perf_counter() - start) * 1000
    # Operational record: trace ID, status, and latency, but no raw prompt.
    print({"trace_id": trace_id, "status": "ok", "latency_ms": round(latency_ms, 2)})
    return ticket

ticket = call_ticket_extractor("Refund request. User paid twice. Order A-102.", "trace_001")
print(ticket)
assert ticket.priority == "high"
```

Expected output will look like this:

```text
{'trace_id': 'trace_001', 'status': 'ok', 'latency_ms': 0.05}
Ticket(category='billing', summary='User paid twice for order A-102.', priority='high')
```

This example is small, but it already has three production habits:

- The parser owns the response contract.

- The wrapper logs a trace ID and status.

- The caller receives a typed object, not raw model text.

Timeouts and retries

Every network call needs a timeout.

Without a timeout, one slow provider request can hold a web worker, keep a browser spinner open, and make the app look broken. A beginner often sets a very long timeout to avoid errors. That hides the problem instead of designing for it.

Use a timeout that matches the product moment:

| Product moment | Timeout shape |
|---|---|
| Autocomplete | Very short, fail quietly. |
| Chat reply | Short enough to keep user trust, maybe stream partial output. |
| Document summary | Longer, but show progress or move to a job. |
| Batch enrichment | Background job with retry and checkpointing. |
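A timeout can be enforced at the call site rather than left to the network stack. This sketch uses `asyncio.wait_for` around a fake slow call. The `slow_provider_call` and `call_with_timeout` names and the delay values are assumptions for illustration.

```python
import asyncio

async def slow_provider_call(prompt: str) -> str:
    # Stand-in for a slow network call; a real client call would go here.
    await asyncio.sleep(0.5)
    return "late answer"

async def call_with_timeout(prompt: str, timeout_seconds: float) -> dict[str, str]:
    try:
        text = await asyncio.wait_for(slow_provider_call(prompt), timeout=timeout_seconds)
        return {"status": "ok", "text": text}
    except asyncio.TimeoutError:
        # Return a status the caller can act on instead of hanging.
        return {"status": "timeout", "text": ""}

result = asyncio.run(call_with_timeout("Summarize this.", timeout_seconds=0.05))
print(result)
```

The caller gets an explicit `timeout` status. For autocomplete it might hide the suggestion. For a document summary it might hand the work to a background job.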

Retries need more care.

Retrying a temporary network error can be safe. Retrying a completed request with side effects can be dangerous. If the request can call tools, send email, create tickets, charge money, or update a database, use idempotency at the tool boundary.
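Idempotency at the tool boundary can be sketched as a lookup keyed by the idempotency key. The in-memory dictionary below stands in for a shared, persistent store, which a real system would need. `run_tool_once` and `create_refund_ticket` are hypothetical names.

```python
from typing import Callable

# In-memory store for illustration; production would need shared, durable storage.
_completed: dict[str, str] = {}

def run_tool_once(idempotency_key: str, action: Callable[[], str]) -> str:
    """Run a side-effecting action at most once per key."""
    if idempotency_key in _completed:
        return _completed[idempotency_key]
    result = action()
    _completed[idempotency_key] = result
    return result

created: list[str] = []

def create_refund_ticket() -> str:
    # Side effect we must not duplicate.
    created.append("ticket")
    return f"ticket_{len(created)}"

first = run_tool_once("ticket_extract_A-102", create_refund_ticket)
second = run_tool_once("ticket_extract_A-102", create_refund_ticket)  # simulated retry
print(first, second, len(created))
```

The second call returns the stored result and creates nothing new, so a retried request does not open a second ticket.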

Retry policy

This local example shows a bounded retry loop. It retries only an explicitly retryable error and stops after the budget is spent.

```python
class RetryableModelError(Exception):
    pass

def flaky_call(attempt: int) -> str:
    # Fails on the first two attempts, then succeeds.
    if attempt < 2:
        raise RetryableModelError("temporary rate limit")
    return "ok"

def call_with_retry(max_retries: int = 2) -> str:
    attempts = 0
    while True:
        try:
            return flaky_call(attempts)
        except RetryableModelError as exc:
            # Only RetryableModelError is retried; other errors propagate.
            if attempts >= max_retries:
                print({"status": "failed", "reason": str(exc)})
                raise
            attempts += 1
            print({"status": "retrying", "attempt": attempts})

result = call_with_retry()
print(result)
assert result == "ok"
```

Expected output:

```text
{'status': 'retrying', 'attempt': 1}
{'status': 'retrying', 'attempt': 2}
ok
```

In a real client, add jittered backoff so many requests don't retry at the same instant.

Also log the final status. A retry that succeeds is still operationally important.

Structured outputs

Free-form text is useful for humans. Applications often need objects.

If the app asks for a support ticket, the downstream code wants fields such as category, summary, and priority. Provider features such as function calling and structured outputs reduce malformed responses by making the requested structure explicit.[2]

They don't remove your responsibility to validate. The schema can guarantee shape. Your business rules still need checks.

| Field | Schema check | Business check |
|---|---|---|
| `priority` | Must be a string enum. | Can only be `high` when refund amount exceeds a threshold or user is blocked. |
| `summary` | Must be a string. | Must not include private card numbers. |
| `category` | Must be one of known labels. | Must match routing team names. |
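Business checks can run as a second pass after the schema check. The sketch below is illustrative: `ROUTING_TEAMS`, the `business_errors` helper, and the card-number pattern are assumptions. The pattern is a rough heuristic, not a complete detector.

```python
import re

ROUTING_TEAMS = {"billing", "shipping", "account"}  # hypothetical team names

# Rough heuristic: 13 to 16 digits, optionally separated by spaces or hyphens.
CARD_PATTERN = re.compile(r"\b(?:\d[ -]?){13,16}\b")

def business_errors(ticket: dict[str, str]) -> list[str]:
    """Return business-rule violations for a ticket that already passed schema checks."""
    errors: list[str] = []
    if ticket["category"] not in ROUTING_TEAMS:
        errors.append("category does not match a routing team")
    if CARD_PATTERN.search(ticket["summary"]):
        errors.append("summary appears to contain a card number")
    return errors

clean = {"category": "billing", "summary": "User paid twice for order A-102."}
risky = {"category": "refunds", "summary": "Card 4111 1111 1111 1111 was charged twice."}
print(business_errors(clean))
print(business_errors(risky))
```

Both tickets would pass a shape-only schema, since every field is a string. Only the business pass catches the unknown team and the leaked card number.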

Common failure

The tempting shortcut is this:

```python
import json

raw = '{"category": "billing", "priority": "urgent", "summary": "Paid twice"}'
data = json.loads(raw)

print(data["priority"])
assert data["priority"] == "urgent"
```

Expected output:

```text
urgent
```

That code parses JSON, but it doesn't validate meaning. `urgent` may not be a valid priority in your product.

The fix is to reject unknown values at the boundary.
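One way to do that is to map the field onto an enum so unknown values fail loudly. This is a minimal sketch. The `Priority` enum and `parse_priority` helper are illustrative names, and the allowed values are assumed to be low, medium, and high.

```python
import json
from enum import Enum

class Priority(str, Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"

def parse_priority(raw: str) -> Priority:
    """Parse model output and reject priorities outside the enum."""
    data = json.loads(raw)
    try:
        return Priority(data["priority"])
    except (KeyError, ValueError) as exc:
        raise ValueError(
            f"invalid priority in model output: {data.get('priority')!r}"
        ) from exc

try:
    parse_priority('{"category": "billing", "priority": "urgent", "summary": "Paid twice"}')
except ValueError as err:
    print("rejected:", err)
```

The wrapper can turn this error into an `invalid_output` status instead of passing `urgent` to downstream routing code.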

Streaming

Streaming sends partial output as it is generated.

Use it when the user is waiting and partial text is useful: chat, drafting, long explanations, code generation, or voice. Don't use it for everything. Extraction jobs and hidden classification tasks often work better as normal requests because the app only needs the final validated object.

| Use streaming | Prefer non-streaming |
|---|---|
| Human reads text as it appears. | App needs one JSON object. |
| Long answer improves perceived latency. | Task is short and hidden. |
| User may stop generation. | Result must validate before display. |
| UI can handle partial tokens. | Caller wants one atomic success or failure. |

Streaming adds state:

- partial content buffer

- cancellation path

- final validation step

- error display after partial output

- logs that mark `started`, `streaming`, `completed`, or `failed`

If streaming output later fails validation, the UI needs a plan. For a draft editor, you may show partial text and warn. For a payment support action, you should hold output until validation passes.
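The state listed above can be sketched with a plain generator in place of a real provider stream. `fake_stream`, `consume_stream`, and the extra `cancelled` status are assumptions added for this example. The validation rule is a placeholder.

```python
from typing import Callable, Iterable, Iterator

def fake_stream() -> Iterator[str]:
    """Stand-in for a provider stream; yields text chunks."""
    yield from ["Thanks ", "for ", "waiting. ", "Refund ", "approved."]

def consume_stream(
    chunks: Iterable[str],
    should_cancel: Callable[[], bool],
    validate: Callable[[str], bool],
) -> dict[str, str]:
    buffer: list[str] = []          # partial content buffer
    status = "started"
    try:
        for chunk in chunks:
            if should_cancel():     # cancellation path
                status = "cancelled"
                break
            buffer.append(chunk)
            status = "streaming"
        else:
            # Final validation step runs only if the stream finished normally.
            status = "completed" if validate("".join(buffer)) else "failed"
    except Exception:
        status = "failed"           # error after partial output
    return {"status": status, "text": "".join(buffer)}

done = consume_stream(fake_stream(), lambda: False, lambda text: text.endswith("."))
print(done["status"])

seen: list[int] = []

def cancel_after_two() -> bool:
    seen.append(1)
    return len(seen) > 2

stopped = consume_stream(fake_stream(), cancel_after_two, lambda text: True)
print(stopped)
```

The returned record always carries both a status and whatever text arrived, so the UI can decide whether to show the partial text, warn, or hide it.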

Cost and privacy logs

You can't manage what you don't measure.

Each call should record enough information to debug, compare, and budget:

| Field | Example | Why it helps |
|---|---|---|
| `trace_id` | `trace_001` | Connects app logs to provider call. |
| `task` | `extract_ticket` | Groups cost by feature. |
| `model` | `provider-model-id` | Finds regressions after model changes. |
| `prompt_version` | `ticket_prompt@3` | Connects output to prompt history. |
| `latency_ms` | `840` | Tracks user experience. |
| `input_tokens` | `921` | Explains cost and context size. |
| `output_tokens` | `84` | Finds verbose outputs. |
| `status` | `ok`, `timeout`, `invalid_output` | Supports dashboards and alerts. |
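With those fields recorded, cost per feature is a small aggregation. The prices in this sketch are made-up placeholders, not any provider's rates, and `cost_by_task` is a hypothetical helper.

```python
from collections import defaultdict

# Hypothetical prices in dollars per one million tokens; check your provider's pricing.
PRICE_PER_MILLION = {"input": 1.00, "output": 4.00}

records = [
    {"task": "extract_ticket", "input_tokens": 921, "output_tokens": 84},
    {"task": "extract_ticket", "input_tokens": 1000, "output_tokens": 100},
    {"task": "draft_reply", "input_tokens": 2000, "output_tokens": 500},
]

def cost_by_task(rows: list[dict]) -> dict[str, float]:
    """Sum estimated cost per task from logged token counts."""
    totals: dict[str, float] = defaultdict(float)
    for row in rows:
        cost = (
            row["input_tokens"] * PRICE_PER_MILLION["input"]
            + row["output_tokens"] * PRICE_PER_MILLION["output"]
        ) / 1_000_000
        totals[row["task"]] += cost
    return dict(totals)

for task_name, dollars in cost_by_task(records).items():
    print(f"{task_name}: ${dollars:.6f}")
```

The same grouping works for `model` or `prompt_version` when you want to compare cost before and after a change.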

Don't log raw prompts by default.

Prompts can contain names, emails, API keys, private documents, or customer messages. The OWASP Top 10 for LLM Applications calls out prompt injection and sensitive information disclosure as serious risk areas for LLM applications.[3] NIST's AI Risk Management Framework also frames AI risk work around governance, mapping, measuring, and managing risk, which maps naturally to traceable logs and controls.[4]

Log summaries, hashes, IDs, and redacted excerpts unless you have a clear retention policy.
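A log record along those lines might look like the sketch below. The `safe_log_record` helper and its field names are assumptions. The email pattern is a simple heuristic and would not catch every kind of sensitive value.

```python
import hashlib
import re

# Simple email heuristic for illustration; real redaction needs broader rules.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")

def safe_log_record(
    trace_id: str, task: str, prompt: str, status: str, max_excerpt: int = 40
) -> dict[str, object]:
    """Build a log record that identifies the prompt without storing it raw."""
    redacted = EMAIL.sub("[email]", prompt)
    return {
        "trace_id": trace_id,
        "task": task,
        "status": status,
        # Truncated hash lets you spot identical prompts without keeping the text.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest()[:16],
        "prompt_chars": len(prompt),
        "excerpt": redacted[:max_excerpt],
    }

record = safe_log_record(
    "trace_001", "extract_ticket", "Refund for ana@example.com, order A-102.", "ok"
)
print(record["excerpt"])
```

The hash supports deduplication and debugging. The redacted excerpt gives a human enough context to recognize the request.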

Batch, cache, or background job?

Once the wrapper works, choose the execution shape.

| Shape | Use when | Watch out for |
|---|---|---|
| Synchronous request | User needs an immediate answer. | Timeout and loading state. |
| Streaming request | User benefits from partial text. | Partial output can fail later. |
| Background job | Task is slow, retryable, or long-running. | Needs status polling and persistence. |
| Batch API | Many offline records can wait. | Results return later and need reconciliation.[5] |
| Prompt caching | Stable prefix repeats across requests. | Cache hit depends on provider rules and prompt stability.[6] |

The beginner mistake is trying to use one shape for all work.

A chat message, nightly document enrichment job, and compliance extraction workflow don't need the same execution pattern.
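The table can be read as a small decision function. The sketch below is one possible encoding. The `choose_shape` name, its parameters, and the 30-second threshold are assumptions for illustration, and prompt caching is left out because it combines with any of the shapes.

```python
def choose_shape(
    user_waiting: bool,
    partial_text_useful: bool,
    expected_seconds: float,
    record_count: int = 1,
) -> str:
    """Pick an execution shape from a few coarse properties of the work."""
    if record_count > 1 and not user_waiting:
        return "batch"              # many offline records can wait
    if expected_seconds > 30:       # hypothetical cutoff for "too slow to block on"
        return "background_job"
    if user_waiting and partial_text_useful:
        return "streaming"
    return "synchronous"

print(choose_shape(user_waiting=True, partial_text_useful=True, expected_seconds=5))
print(choose_shape(user_waiting=False, partial_text_useful=False, expected_seconds=5, record_count=1000))
print(choose_shape(user_waiting=True, partial_text_useful=False, expected_seconds=120))
```

Real routing will weigh more factors, but writing the rule down makes the choice reviewable instead of implicit in each route.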

Self check

Read this bad design and name at least three problems:

```text
The `/reply` route calls the provider SDK directly.
It waits up to 90 seconds.
It retries every error three times.
It returns whatever text the model produced.
It logs the full prompt and answer.
```

Strong answer:

| Problem | Better design |
|---|---|
| SDK call is scattered in route. | Move it behind a wrapper with task contract. |
| Long blocking timeout. | Use shorter timeout or background job with status. |
| Retries every error. | Retry only safe temporary failures with a cap. |
| Trusts raw text. | Validate structured output before returning. |
| Logs private prompt. | Log trace, metadata, status, and redacted excerpts. |

Key takeaways

- A production LLM call needs an application contract around the provider call.

- Put model selection, prompt version, timeout, retry, validation, and telemetry in one wrapper.

- Structured output support helps, but validation remains your responsibility.

- Retrying without idempotency can duplicate work or side effects.

- Streaming is a product choice, not a default.

- Logs should support debugging and budgeting without storing private prompts by default.

Next, continue to First AI App End-to-End. There, you'll connect this model-call boundary to an actual product slice with an API route, storage, tests, and deployment checks.

Evaluation Rubric

1. Separates application intent from provider-specific API fields

2. Adds timeouts, bounded retries, and idempotency to model calls

3. Validates structured responses before trusting model output

4. Logs latency, token usage, model version, and failure reasons for each call

5. Explains when to use streaming, batch work, prompt caching, or background jobs

Common Pitfalls

- Calling a model directly from many parts of the codebase, which makes cost controls and retries inconsistent.

- Using a long timeout with no user-visible loading state, so the app feels frozen instead of busy.

- Parsing JSON with string slicing instead of a schema validator.

- Logging raw prompts that may contain private user data.

- Retrying every error with no cap, no jitter, and no idempotency plan.

Key Concepts Tested

- Provider-agnostic LLM request contract

- Timeouts, retries, and idempotency keys

- Structured outputs and schema validation

- Streaming response handling

- Cost, latency, and error logging

References

1. OpenAI. Function calling and other API updates. OpenAI Blog, 2023.

2. OpenAI. Structured outputs. 2024.

3. OWASP Foundation. OWASP Top 10 for Large Language Model Applications. 2025.

4. National Institute of Standards and Technology. Artificial Intelligence Risk Management Framework (AI RMF 1.0). NIST, 2023.

5. OpenAI. OpenAI Batch API Guide. 2026.

6. OpenAI. Prompt caching. 2026.