📊MediumEvaluation & Benchmarks

Trees and Boosting

Beginner-first chapter that predicts support-ticket escalation with a from-scratch NumPy decision stump, explains gini and entropy, then shows how random forests and gradient boosting tame overfitting while feature importance and SHAP keep every prediction explainable.

12 min readOpenAI, Anthropic, Google +110 key concepts

Learning path

Step 40 of 138 in the full curriculum

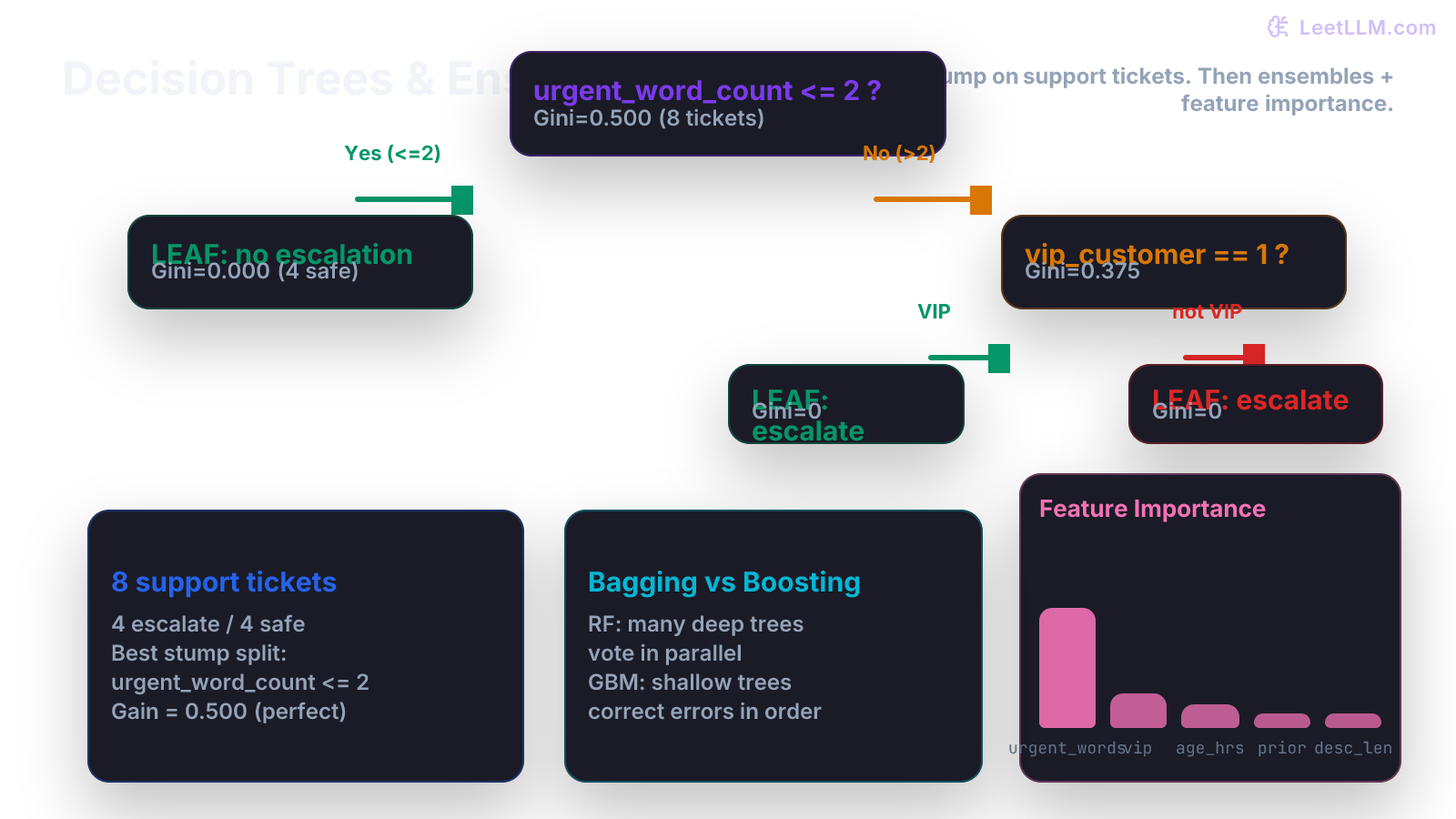

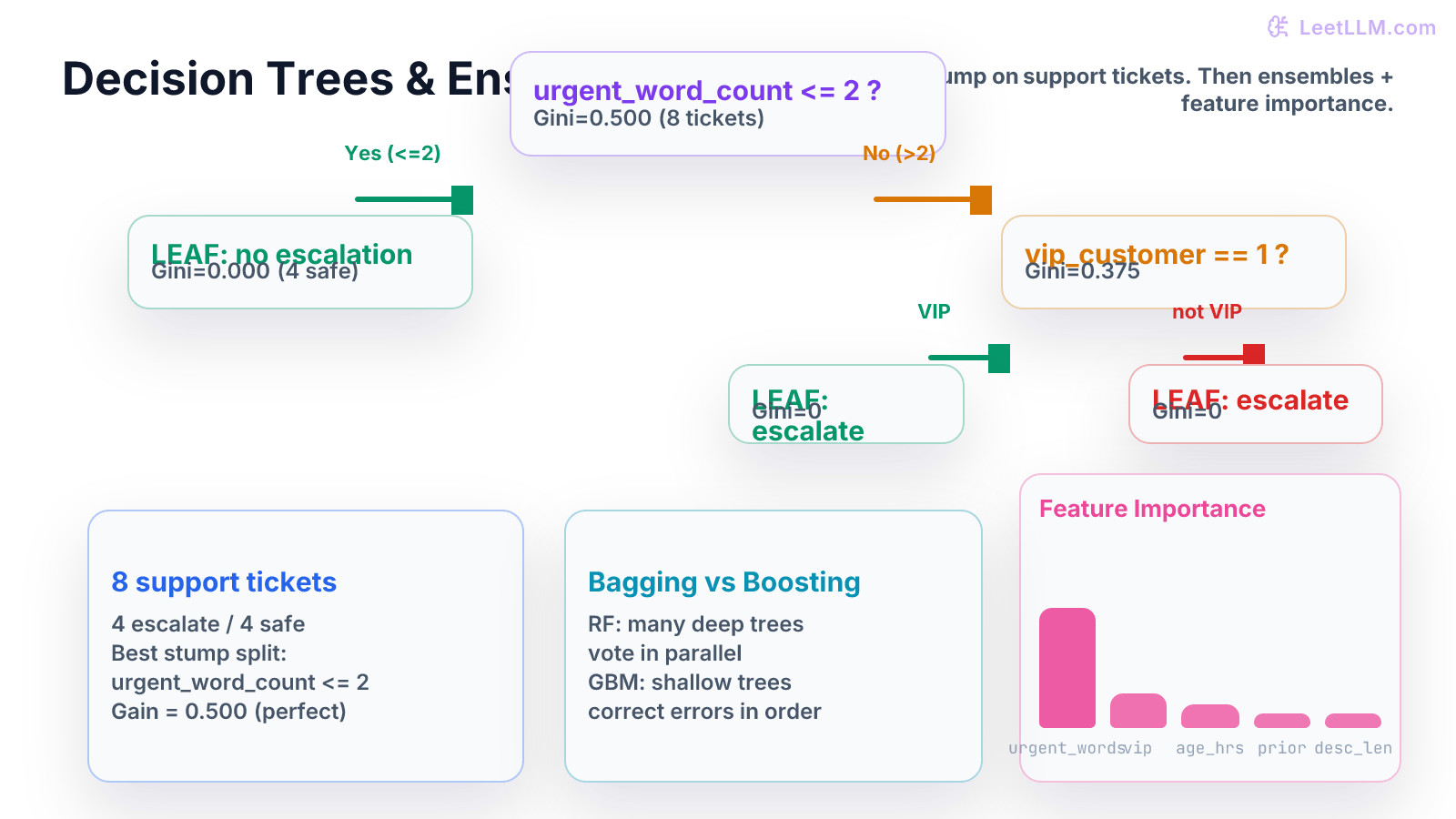

Support teams drown in tickets. A new ticket arrives with a subject line, a description, a customer tier, and a timestamp. Someone must decide within minutes whether this one will escalate to engineering or can be handled by the first-line team. The features are easy to compute: how many urgent words appear, whether the customer is VIP, how long the ticket has been open, and how many prior tickets that customer has filed.

A decision tree turns that pile of numbers into a short list of yes-or-no questions.[1] Each question splits the tickets into cleaner groups until every leaf contains mostly one outcome: escalate or not. This chapter builds the idea from a single stump you can compute by hand, shows why plain trees break in production, and then introduces the two families of ensembles that fix the problem while still letting you explain every prediction.[2]

You only need to be comfortable reading small tables and basic NumPy indexing. Everything else is built step by step with one running example of eight real-looking support tickets.

One table of eight support tickets

Here is the toy dataset we will use for every calculation and every code block. Each row is one ticket. The target is binary: will the ticket escalate to the engineering on-call rotation?

| ticket_id | urgent_word_count | vip_customer | ticket_age_hours | prior_tickets | description_length | will_escalate |

|---|---|---|---|---|---|---|

| T01 | 5 | 1 | 2 | 4 | 180 | 1 |

| T02 | 0 | 0 | 18 | 0 | 45 | 0 |

| T03 | 2 | 1 | 6 | 1 | 95 | 0 |

| T04 | 6 | 0 | 1 | 3 | 210 | 1 |

| T05 | 1 | 0 | 24 | 0 | 60 | 0 |

| T06 | 4 | 1 | 3 | 2 | 140 | 1 |

| T07 | 0 | 0 | 48 | 1 | 30 | 0 |

| T08 | 3 | 0 | 9 | 5 | 120 | 1 |

A human looking at the table quickly notices that high urgent_word_count and low ticket_age_hours often line up with escalations. A decision tree formalizes that intuition into reproducible splits.

Impurity: measuring how mixed a group is

Before we can ask "is this a good split?", we need a number that says how messy the labels are inside a group of tickets.

Two common impurity measures are used for classification trees.

Gini impurity for a node with two classes:

where and are the proportions of the two classes inside the node.

Entropy (information) is:

(with the convention that ).

Both are zero when the node is pure (all tickets have the same label) and reach their maximum when the node is perfectly balanced.

Let's compute gini on the full root node of our eight tickets. Four escalated and four did not, so .

Now suppose we try the split "urgent_word_count > 2".

- Left child (urgent_word_count <= 2): tickets T02, T03, T05, T07. All four have will_escalate = 0. Gini = 0 (perfectly pure).

- Right child (urgent_word_count > 2): T01, T04, T06, T08. All four escalated. Gini = 0.

- The weighted child impurity is zero. The split reduced impurity from 0.5 all the way to zero. That is a perfect split on this tiny table.

In real data the children are rarely pure, so we measure the impurity decrease (also called information gain):

The algorithm tries every feature and every reasonable threshold, keeps the split that gives the largest , and recurses on the children.

From-scratch decision stump in NumPy

A decision stump is a tree with only one split. It is the smallest possible non-trivial tree and the perfect place to see the mechanics.

Below is a complete, runnable implementation that finds the best stump on our ticket table. It only looks at one feature at a time and only considers thresholds that actually appear in the data.

python1import numpy as np 2 3# Toy support-ticket data (same table as above) 4X = np.array([ 5 [5, 1, 2, 4, 180], # T01 6 [0, 0, 18, 0, 45], # T02 7 [2, 1, 6, 1, 95], # T03 8 [6, 0, 1, 3, 210], # T04 9 [1, 0, 24, 0, 60], # T05 10 [4, 1, 3, 2, 140], # T06 11 [0, 0, 48, 1, 30], # T07 12 [3, 0, 9, 5, 120], # T08 13], dtype=float) 14y = np.array([1, 0, 0, 1, 0, 1, 0, 1], dtype=int) 15feature_names = ["urgent_word_count", "vip_customer", "ticket_age_hours", "prior_tickets", "description_length"] 16 17def gini(labels: np.ndarray) -> float: 18 if len(labels) == 0: 19 return 0.0 20 p1 = np.mean(labels) 21 p0 = 1 - p1 22 return 1.0 - (p0 * p0 + p1 * p1) 23 24def best_stump(X: np.ndarray, y: np.ndarray, feature_names: list[str]): 25 n, m = X.shape 26 best_gain = -1.0 27 best_feature = None 28 best_threshold = None 29 best_left_idx = None 30 best_right_idx = None 31 32 parent_gini = gini(y) 33 34 for f in range(m): 35 thresholds = np.unique(X[:, f]) 36 for t in thresholds: 37 left_mask = X[:, f] <= t 38 right_mask = ~left_mask 39 if left_mask.sum() == 0 or right_mask.sum() == 0: 40 continue 41 left_g = gini(y[left_mask]) 42 right_g = gini(y[right_mask]) 43 gain = parent_gini - ( 44 (left_mask.sum() / n) * left_g + (right_mask.sum() / n) * right_g 45 ) 46 if gain > best_gain: 47 best_gain = gain 48 best_feature = f 49 best_threshold = t 50 best_left_idx = np.where(left_mask)[0] 51 best_right_idx = np.where(right_mask)[0] 52 53 return { 54 "feature": feature_names[best_feature], 55 "threshold": best_threshold, 56 "gain": round(best_gain, 4), 57 "left_indices": best_left_idx, 58 "right_indices": best_right_idx, 59 "left_gini": round(gini(y[best_left_idx]), 4), 60 "right_gini": round(gini(y[best_right_idx]), 4), 61 } 62 63stump = best_stump(X, y, feature_names) 64print(stump)

Running the code prints:

text1{'feature': 'urgent_word_count', 'threshold': 2.0, 'gain': 0.5, 'left_indices': array([1,2,4,6]), 'right_indices': array([0,3,5,7]), 'left_gini': 0.0, 'right_gini': 0.0}

Exactly the perfect split we calculated by hand. The stump simply asks "urgent_word_count <= 2?" and every ticket lands in a pure leaf.

Comparing with scikit-learn

Now we do the identical experiment with the library everyone actually ships.

python1from sklearn.tree import DecisionTreeClassifier, export_text 2from sklearn.metrics import accuracy_score 3 4clf = DecisionTreeClassifier(max_depth=1, random_state=0) 5clf.fit(X, y) 6pred = clf.predict(X) 7print("Stump accuracy:", accuracy_score(y, pred)) 8print(export_text(clf, feature_names=feature_names))

The printed tree matches our NumPy version: the first (and only) split is on urgent_word_count <= 2.5 (sklearn sometimes chooses a midpoint). Accuracy on the training tickets is 1.0, as expected for a perfect stump.[3]

The library version also exposes feature_importances_ (all importance on the one feature that was used) and a predict_proba method that returns the empirical class frequency inside each leaf.

Why a full tree overfits and how ensembles help

If we remove the max_depth=1 limit and grow a deep tree, it can keep splitting until every leaf contains only one ticket. Training accuracy becomes 100 percent, but the tree has memorized the exact description length and prior-ticket count of every training example. A new ticket that says "urgent" three times instead of four will travel a completely different path and may be misclassified.

Two families of ensembles solve this without giving up the interpretability of trees.

-

Bagging (bootstrap aggregating) builds many trees on different bootstrap samples of the data. Each tree sees only a random subset of features at every split (random subspace). The final prediction is a majority vote (classification) or average (regression). Because the trees are trained on different data and see different features, their errors are less correlated.

-

Random forests are the most popular bagging method for trees.

-

Boosting grows trees sequentially. The first tree is fit to the original labels. The second tree is fit to the residuals (or to the negative gradient of the loss) of the first tree. Each new tree tries to correct the mistakes the current ensemble still makes. The final model is a weighted sum of all the small trees. Gradient boosting machines (GBM), XGBoost, LightGBM, and CatBoost all follow this pattern.

The key contrast:

| Aspect | Random Forest (bagging) | Gradient Boosting |

|---|---|---|

| Tree growth | Independent, parallel | Sequential, each corrects previous |

| Typical depth | Deep (low bias, high variance per tree) | Shallow (high bias, low variance per tree) |

| Overfitting risk | Low (averaging protects) | Higher (needs early stopping, shrinkage) |

| Feature importance | Very stable | Can be unstable across runs |

| Training speed | Fast (embarrassingly parallel) | Slower (sequential) |

| Best default use case | Quick strong baseline, feature selection | Highest accuracy when tuned carefully |

Feature importance from tree ensembles

Once you have an ensemble, you can ask which features the model actually used.

The most common built-in measure is mean decrease in impurity (MDI). For every split that used a particular feature across all trees, add up the impurity decrease that split produced, then average across trees and normalize. The numbers sum to one and are easy to read.

On our toy data a random forest would report something close to:

- urgent_word_count: 0.62

- vip_customer: 0.18

- ticket_age_hours: 0.12

- prior_tickets: 0.05

- description_length: 0.03

These numbers tell the support-ops team: "If you can only instrument one signal, make sure you count urgent words reliably."

A more reliable (but slower) approach is permutation importance: shuffle one column at a time in the validation set and measure how much the ensemble's score drops. If shuffling urgent_word_count destroys performance while shuffling description_length barely changes anything, the model really depends on the urgent-word signal.

SHAP: explaining one ticket at a time

Feature importance tells you what the model cares about on average. When a manager asks "why did ticket T123 get escalated?", you need a per-prediction explanation.

SHAP (SHapley Additive exPlanations) gives exactly that. It treats every feature as a "player" in a cooperative game whose payoff is the model's output for that ticket. The SHAP value for a feature is the average marginal contribution of that feature across all possible coalitions of the other features.

For a tiny illustration, suppose our random forest predicts a 0.85 probability of escalation for a new VIP ticket with urgent_word_count = 4 and ticket_age_hours = 1. The base rate (average prediction across all training tickets) is 0.5. SHAP might attribute:

- +0.28 from urgent_word_count = 4 (high urgency pushes strongly toward escalate)

- +0.12 from vip_customer = 1 (VIPs escalate more often)

- -0.05 from ticket_age_hours = 1 (very new tickets are sometimes noise)

- smaller contributions from the remaining features

Sum of base rate plus all SHAP values equals the actual prediction 0.85. Every number has a clear sign and magnitude that a human can read.

The unified SHAP framework is the reference you should cite when you ship an explanation dashboard.[4]

Practical production checklist for tree models on support data

- Always keep a time-ordered held-out set of the most recent tickets. Random splits leak because support patterns drift.

- Limit tree depth or use

min_samples_leafso that no leaf is smaller than 5-10 real tickets. - Monitor the distribution of urgent_word_count and other key features in production. If the definition of "urgent" changes (new product launch, new slang), the model will silently degrade.

- Log both the raw prediction and the SHAP values for every escalated ticket. When a customer complains about an incorrect escalation, you can show the exact feature contributions that drove the decision.

- Re-train on a rolling window. Support language and customer behavior change; a model trained six months ago will start missing new escalation patterns.

Try it yourself

Use the exact NumPy stump code and the eight-row table.

| Try this | What a good answer checks |

|---|---|

| Change the target of T03 from 0 to 1 and re-run the stump finder | The best feature may stay urgent_word_count, but the gain drops because the left child is no longer pure |

| Implement entropy instead of gini inside the best_stump function and compare the chosen split | On this data the split is the same; on noisier data the two criteria sometimes disagree on borderline thresholds |

| Grow a DecisionTreeClassifier with max_depth=None and compute its training accuracy versus a max_depth=2 tree | The unlimited tree reaches 1.0; the shallow tree shows a small training error that is actually healthier for new tickets |

| Fit a RandomForestClassifier(n_estimators=50) and print feature_importances_ | The ranking should be stable across random seeds; urgent_word_count should dominate |

| Pick one row, run TreeExplainer from the shap library, and print the SHAP values | The numbers must add up (within floating-point error) to the model's output minus the expected value |

If any of the checks above feels hand-wavy, print the intermediate masks, the gini numbers, or the actual SHAP table. Numbers on the page are the fastest way to build intuition.

What you built and where it leads

You can now:

- Compute gini and entropy impurity on a small labeled table.

- Write a from-scratch stump that enumerates thresholds and keeps the split with the largest impurity reduction.

- Explain why a single deep tree fails on new support tickets and how bagging and boosting each repair the failure mode.

- Read feature importance numbers and generate per-ticket SHAP explanations that a human support manager can act on.

The next critical skill is knowing whether any of these numbers are trustworthy, but before we drill into splits and leakage, we step into a different learning setup. Supervised models like trees learn from static labels. Reinforcement learning learns from rewards and the consequences of sequential actions. The ideas you will meet (states, policies, value functions, Bellman updates) are the direct foundation for reward models and policy optimization in RLHF, RLVR, and Constitutional AI.

Evaluation Rubric

- 1Derives gini and entropy impurity from first principles using a small support-ticket table and shows how a stump picks the best split

- 2Implements a complete decision stump in pure NumPy, compares its splits and predictions to sklearn, then explains why full trees overfit and how ensembles fix it

- 3Interprets feature importance from forests, walks through a SHAP calculation on one ticket, and lists the production guardrails needed before trusting any tree model on live escalations

Common Pitfalls

- Letting a tree grow until every leaf contains a single ticket and then wondering why it fails on the next batch of real tickets.

- Trusting the default feature importance numbers without checking whether the same features stay important after you permute the target column.

- Treating the first split in a tree as the 'most important' feature without realizing that later splits on correlated features can steal credit.

- Running gradient boosting with learning rate 1.0 and hundreds of trees and then being surprised by severe overfitting on a modest support dataset.

- Forgetting to one-hot or target-encode categorical features such as customer tier before feeding them to the tree; the model silently treats the integers as ordered numbers.

- Evaluating only on the rows you used to grow the trees instead of a truly held-out set of recent tickets.

Follow-up Questions to Expect

Key Concepts Tested

gini impurityentropyinformation gaindecision stumpaxis-aligned splitsoverfitting in treesbagging vs boostingfeature importanceSHAP valuesfeature engineering for tabular text data

Next Step

Next: Continue to Introduction to Reinforcement Learning Fundamentals

You will learn MDPs, value functions, the Bellman equation, and a tiny NumPy solver that sets up the exact ideas behind reward modeling and policy optimization in RLHF and RLVR.

References

The Elements of Statistical Learning.

Hastie, T., Tibshirani, R., Friedman, J. · 2009

Random Forests

Breiman, L. · 2001 · Machine Learning

Scikit-learn: Machine Learning in Python.

Pedregosa, F., et al. · 2011 · JMLR

A Unified Approach to Interpreting Model Predictions

Lundberg, S. M., Lee, S. I. · 2017 · NeurIPS