
RNNs, LSTMs, and GRUs

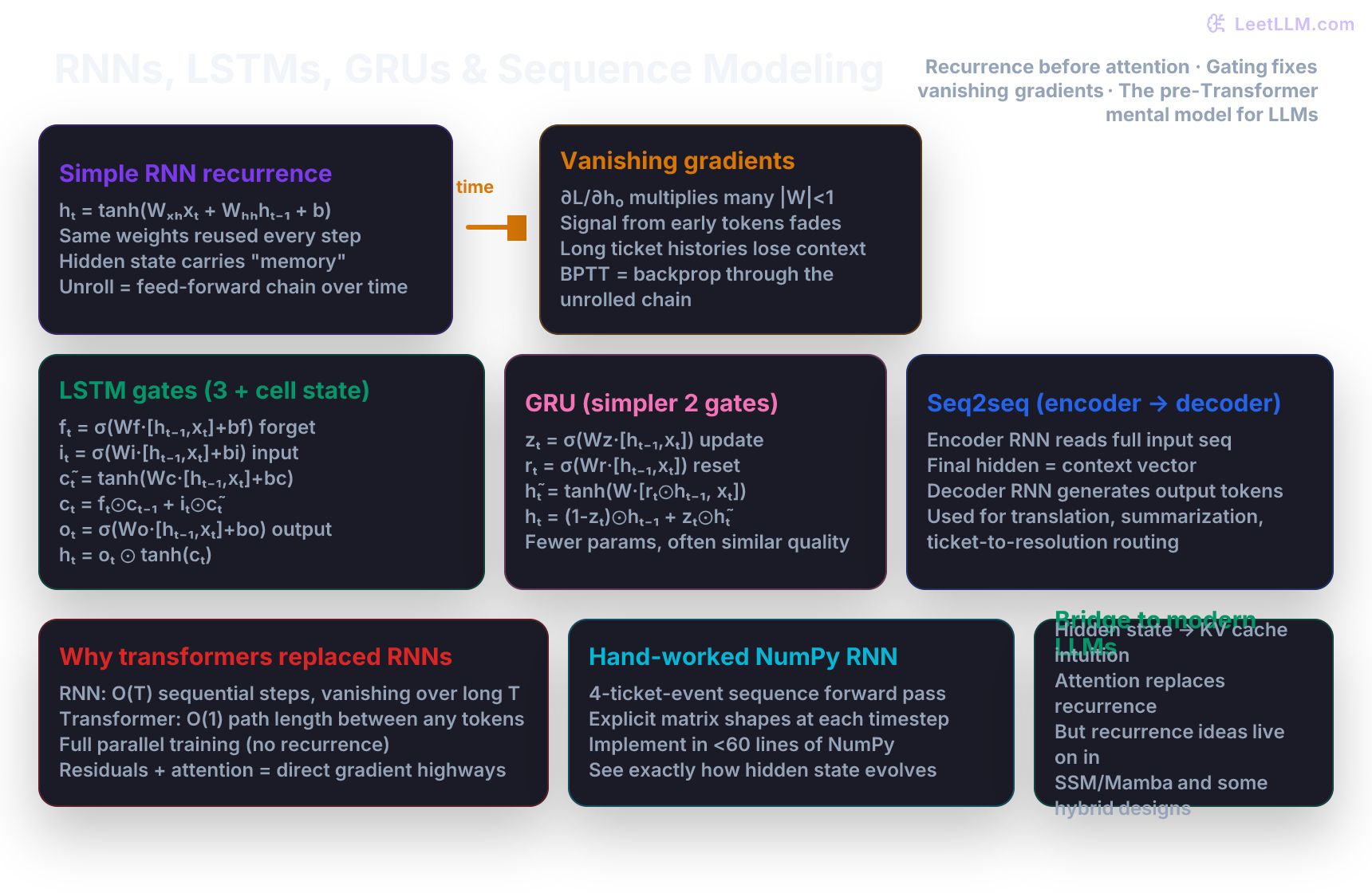

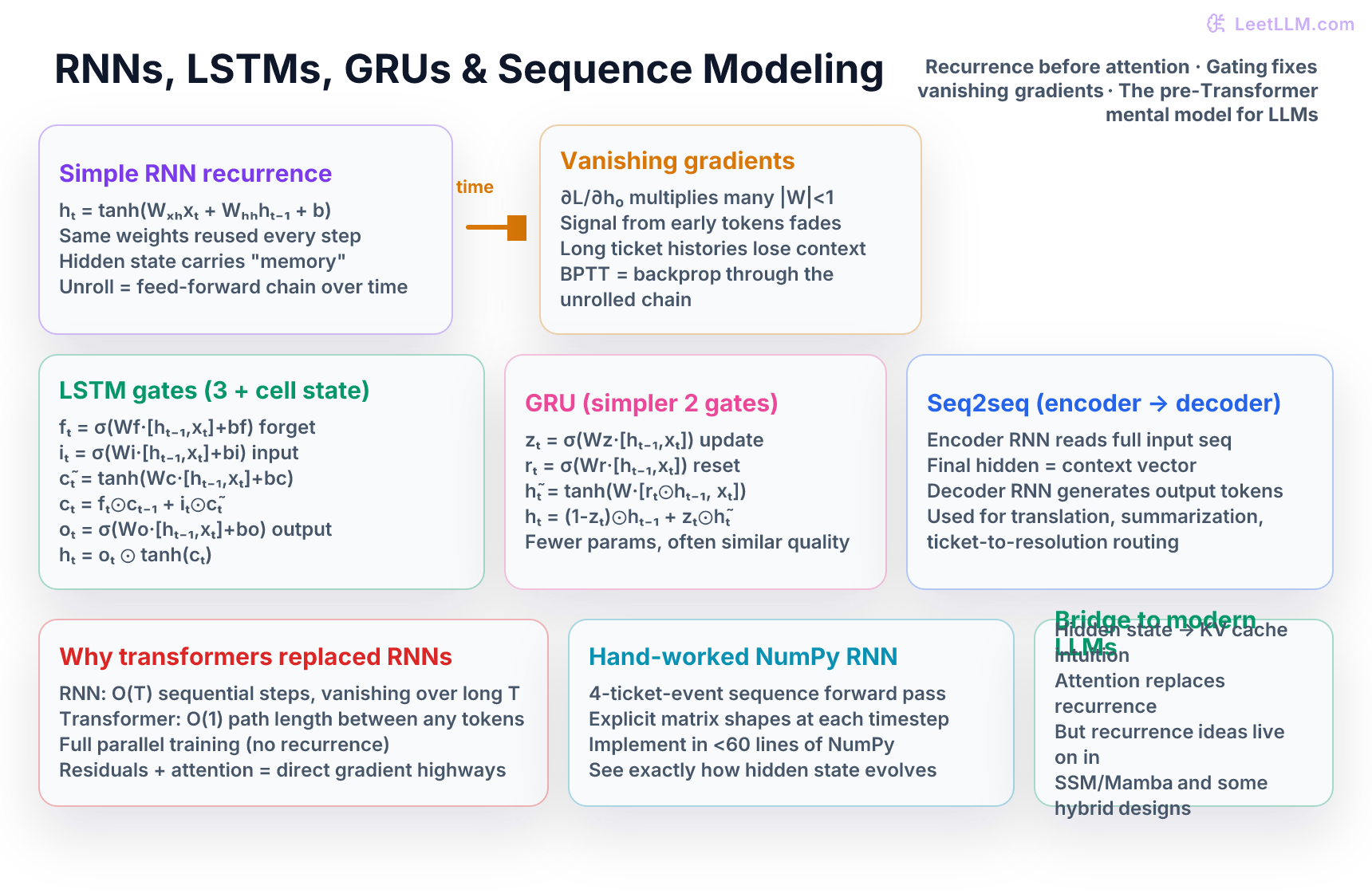

Build intuition for recurrent neural networks before the Transformer era: unroll a tiny RNN by hand on a support-ticket event sequence, implement forward pass in NumPy, discover vanishing gradients, then see how LSTM and GRU gates solve the memory problem. Understand seq2seq encoder-decoder, BPTT, and exactly why self-attention and transformers displaced RNNs for long-context modeling in modern LLMs.


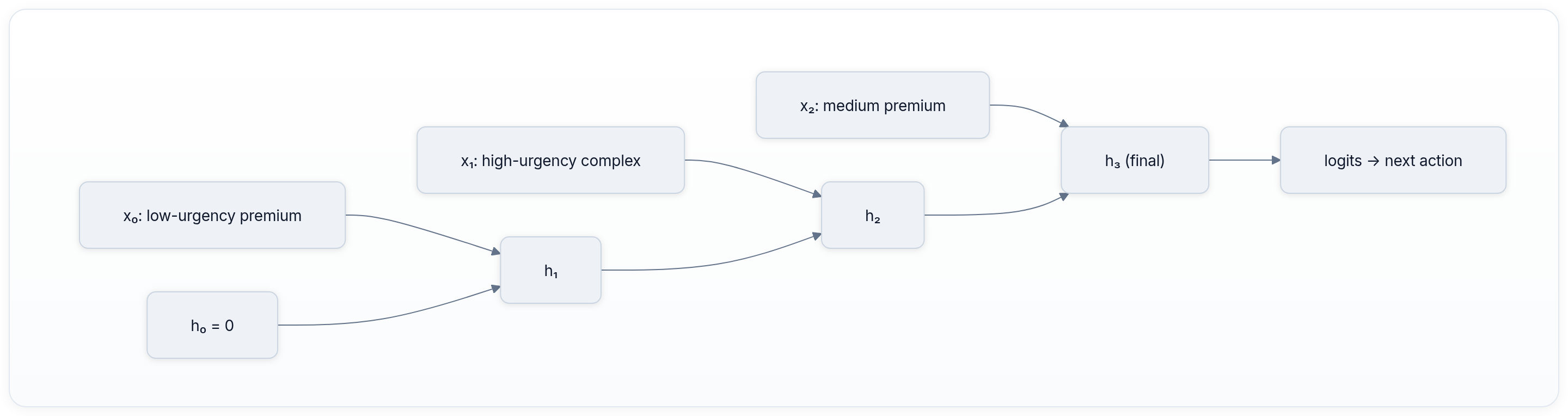

A support agent opens a ticket that has been open for four days. The history shows: customer reported "battery drains in 2 hours" (day 1), uploaded a blurry photo of the device (day 2), replied "still not fixed after restart" (day 3), and today added "I need a replacement before my trip." The AI system must decide the next action: escalate to tier-2, request more diagnostics, or offer a refund label. The decision depends on the entire conversation thread, not just the latest message.

A standard feed-forward network expects a fixed-size input and has no built-in notion of order, so it cannot read this thread directly: the number of events varies and their order matters. Recurrent neural networks were designed for exactly this kind of sequential, variable-length data.[1]

💡 Key insight: An RNN re-uses the exact same weights at every timestep while carrying a hidden state forward. The hidden state is the network's only memory of everything that happened before the current input. This single design lets the model handle sequences of any length with a fixed number of parameters - but it also creates the vanishing gradient problem that LSTMs and GRUs were built to solve.

The sequence modeling problem

Most of the data that matters in production AI is sequential: the ordered list of events in a support ticket, the tokens in a customer email, the clickstream that led to a purchase, or the steps in a warehouse pick list. Each new observation depends on the history that came before it.

A regular neural network expects a fixed-size vector. You could concatenate the entire history into one giant vector, but then:

- Different tickets have different numbers of updates.

- Early events get the same treatment as recent ones even though their influence should decay or persist differently.

- You lose the natural notion of "time" or "order."

Recurrence solves the variable-length problem by processing one element at a time while maintaining a running summary (the hidden state).

The basic RNN recurrence

At every timestep $t$, an RNN computes:

$$h_t = \tanh(W_{xh} x_t + W_{hh} h_{t-1} + b_h)$$

- $x_t$ is the input at this step (a feature vector or token embedding).

- $h_{t-1}$ is the hidden state from the previous step.

- $W_{xh}$ and $W_{hh}$ are the same two weight matrices used at every timestep (together with a shared bias $b_h$).

- The output at this step can be produced from $h_t$ (or from the final $h_T$ after the whole sequence).

Because the same weights are reused, the model size does not grow with sequence length. What changes over time is the hidden state: the influence of each past input on it can persist or fade as new inputs arrive.
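To make the "fixed parameters, any length" point concrete, here is a minimal sketch, assuming the standard tanh recurrence. The weights are random placeholders and the names `rnn_step` and `summarize` are hypothetical helpers introduced for this illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
input_dim, hidden_dim = 3, 4

# Hypothetical small random weights; the same arrays are used at every timestep.
W_xh = rng.normal(scale=0.5, size=(hidden_dim, input_dim))
W_hh = rng.normal(scale=0.5, size=(hidden_dim, hidden_dim))
b_h = np.zeros(hidden_dim)

def rnn_step(x_t, h_prev):
    """One application of h_t = tanh(W_xh x_t + W_hh h_prev + b_h)."""
    return np.tanh(W_xh @ x_t + W_hh @ h_prev + b_h)

def summarize(sequence):
    """Fold a (T, input_dim) sequence into one hidden vector, whatever T is."""
    h = np.zeros(hidden_dim)
    for x_t in sequence:
        h = rnn_step(x_t, h)
    return h

short_ticket = rng.random((2, input_dim))  # a ticket with 2 events
long_ticket = rng.random((7, input_dim))   # a ticket with 7 events

n_params = W_xh.size + W_hh.size + b_h.size
print(summarize(short_ticket).shape, summarize(long_ticket).shape, n_params)
# (4,) (4,) 32
```

Both tickets end up as a 4-dimensional vector, and the parameter count (12 + 16 + 4 = 32) never depends on the number of events.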

Let's make this concrete with a tiny support-ticket example.

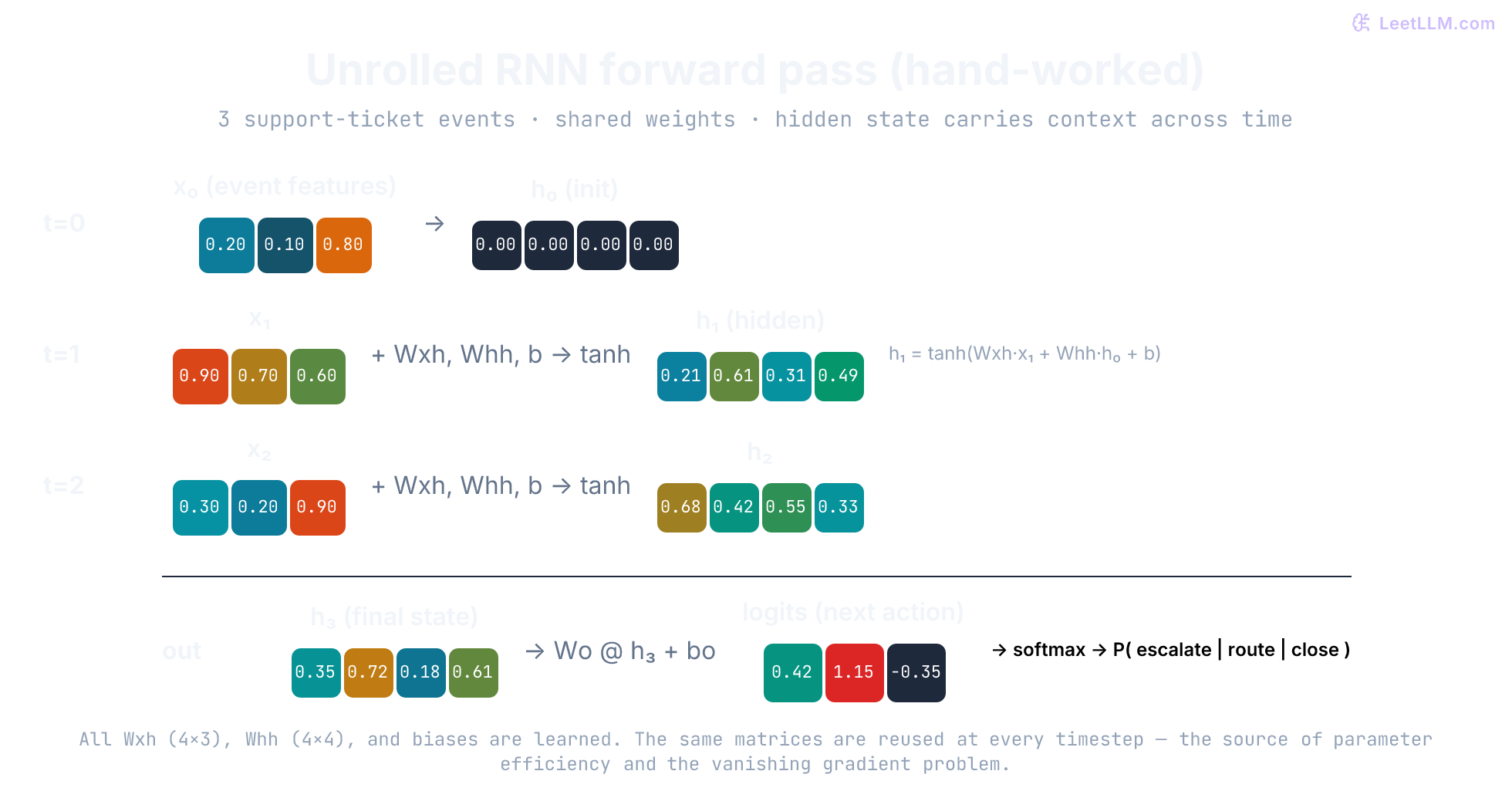

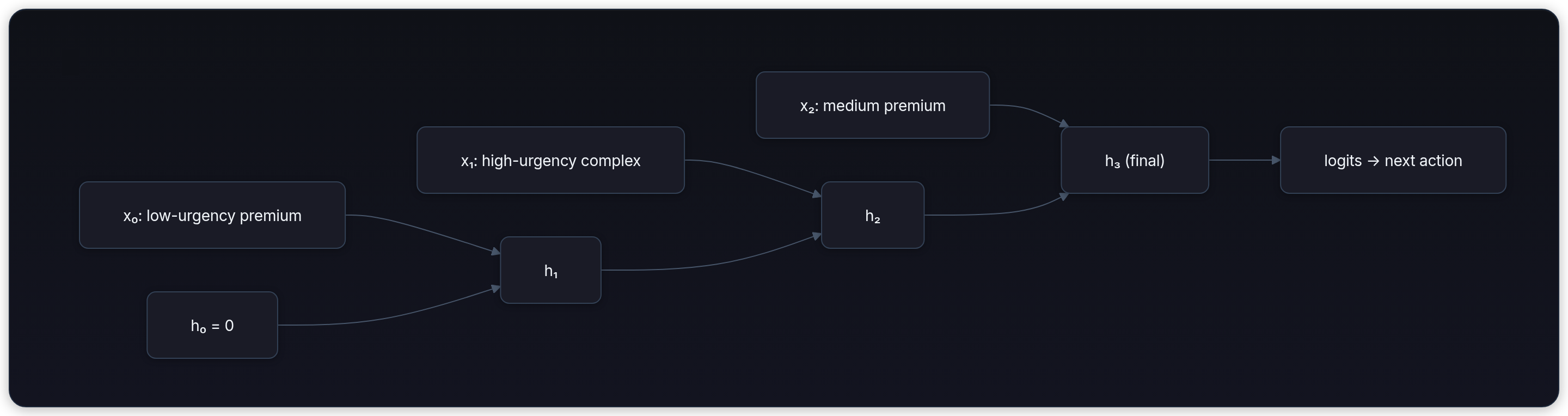

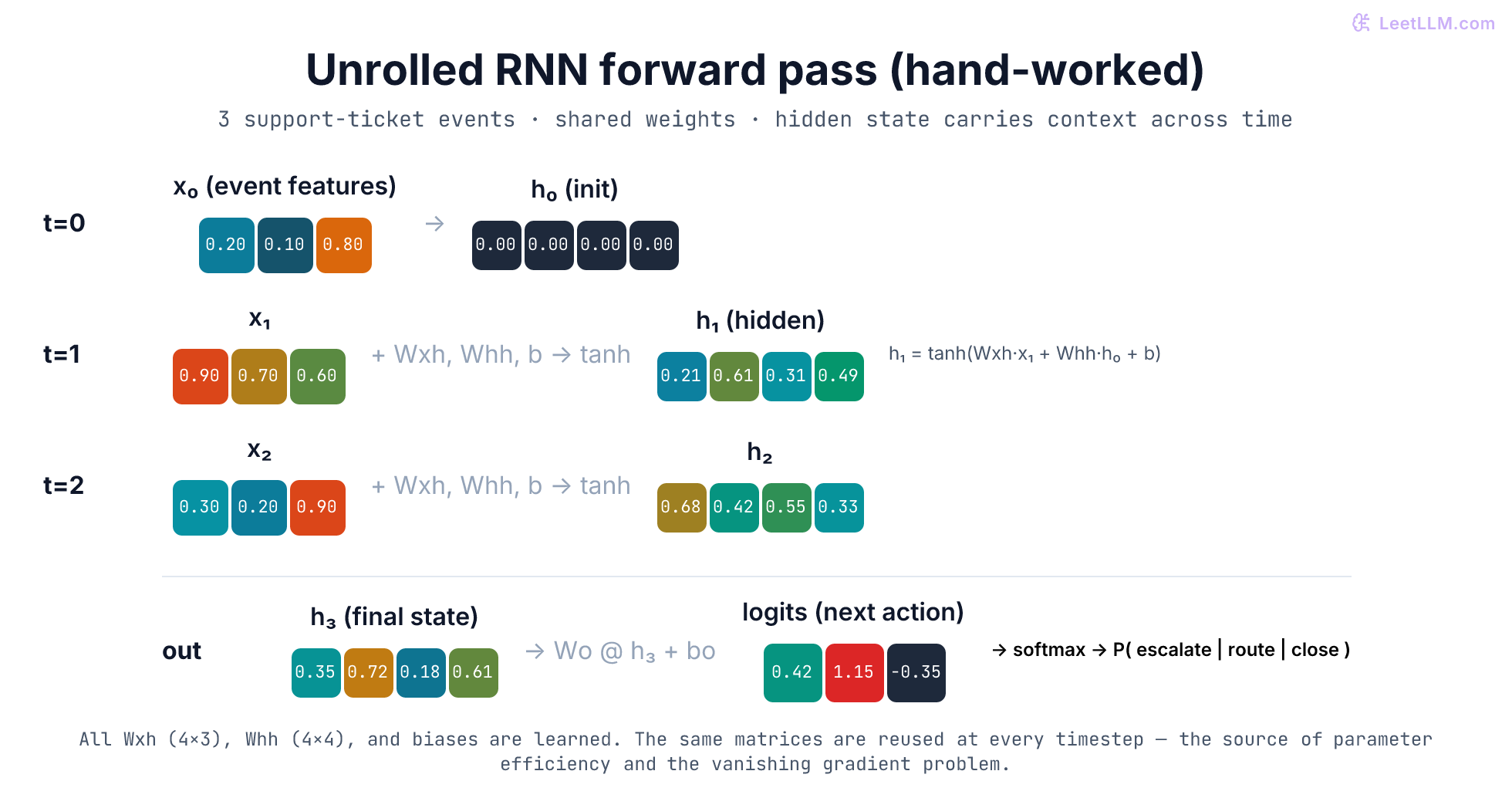

Hand-worked RNN forward pass on a 3-event ticket

We will trace a 3-timestep sequence by hand and then implement the identical calculation in NumPy.

Each event is represented by a 3-dimensional feature vector:

- urgency (0–1)

- complexity (0–1)

- customer tier score (higher = more important)

Sequence:

- t=0: [0.2, 0.1, 0.8] - low urgency, simple issue, premium customer

- t=1: [0.9, 0.7, 0.6] - high urgency, complex, mid-tier

- t=2: [0.3, 0.2, 0.9] - medium urgency, simple, premium

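We use a 4-dimensional hidden state and the following small shared weights (chosen so the arithmetic is readable; these are the same values used in the NumPy implementation below):

$$
W_{xh} = \begin{bmatrix} 0.5 & 0.1 & -0.2 \\ -0.3 & 0.8 & 0.4 \\ 0.2 & -0.1 & 0.6 \\ 0.7 & 0.3 & -0.4 \end{bmatrix}, \quad
W_{hh} = \begin{bmatrix} 0.4 & 0.2 & -0.1 & 0.3 \\ 0.1 & 0.6 & 0.2 & -0.2 \\ -0.3 & 0.1 & 0.5 & 0.4 \\ 0.2 & -0.4 & 0.1 & 0.7 \end{bmatrix}, \quad
b_h = \begin{bmatrix} 0.1 \\ -0.05 \\ 0.2 \\ 0.0 \end{bmatrix}
$$

- Timestep 0 (initial hidden state set to the zero vector): the network sees the first low-urgency premium event. The hidden state becomes a mild representation of "premium customer with easy issue."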

- Timestep 1: we feed the high-urgency complex update. The recurrence mixes the new input with the previous hidden state using the shared weights. After the matrix multiplies and tanh, the hidden state reflects both the initial premium context and the sudden high-urgency signal.

- Timestep 2: the third event arrives. The hidden state is updated once more. By the end of the sequence the 4-dimensional vector encodes the entire history in a compressed form that can be passed to a classifier head or a decoder.
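As a check on the first step, the timestep-0 arithmetic can be written out explicitly. This is a sketch that assumes the $W_{xh}$ and $b_h$ values listed in the NumPy implementation in the next section and a zero initial hidden state, so the $W_{hh}$ term drops out; here $h_0$ denotes the hidden state after the first event.

$$
\begin{aligned}
W_{xh} x_0 &= \begin{bmatrix} 0.5(0.2) + 0.1(0.1) - 0.2(0.8) \\ -0.3(0.2) + 0.8(0.1) + 0.4(0.8) \\ 0.2(0.2) - 0.1(0.1) + 0.6(0.8) \\ 0.7(0.2) + 0.3(0.1) - 0.4(0.8) \end{bmatrix}
= \begin{bmatrix} -0.05 \\ 0.34 \\ 0.51 \\ -0.15 \end{bmatrix} \\[4pt]
W_{xh} x_0 + b_h &= \begin{bmatrix} 0.05 \\ 0.29 \\ 0.71 \\ -0.15 \end{bmatrix} \\[4pt]
h_0 = \tanh(W_{xh} x_0 + b_h) &\approx \begin{bmatrix} 0.050 \\ 0.282 \\ 0.611 \\ -0.149 \end{bmatrix}
\end{aligned}
$$

The later timesteps follow the same pattern, except that the $W_{hh} h_{t-1}$ term is no longer zero.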

The full numeric trace and the resulting logits appear in the illustration below.

NumPy implementation of the forward pass

Here is a minimal, runnable implementation that reproduces the numbers above.

```python
import numpy as np

def rnn_forward(X, Wxh, Whh, bh, h0=None):
    """
    X : (T, input_dim) - sequence of T feature vectors
    Returns hidden states (T, hidden_dim) and final hidden state
    """
    T, input_dim = X.shape
    hidden_dim = Whh.shape[0]
    if h0 is None:
        h = np.zeros(hidden_dim)
    else:
        h = h0.copy()

    hidden_states = []
    for t in range(T):
        x_t = X[t]
        # Linear transform + recurrence + bias
        h = np.tanh(Wxh @ x_t + Whh @ h + bh)
        hidden_states.append(h.copy())
    return np.stack(hidden_states), h

# Same numbers as the worked example
X = np.array([
    [0.2, 0.1, 0.8],
    [0.9, 0.7, 0.6],
    [0.3, 0.2, 0.9],
])
Wxh = np.array([
    [0.5, 0.1, -0.2],
    [-0.3, 0.8, 0.4],
    [0.2, -0.1, 0.6],
    [0.7, 0.3, -0.4],
])
Whh = np.array([
    [0.4, 0.2, -0.1, 0.3],
    [0.1, 0.6, 0.2, -0.2],
    [-0.3, 0.1, 0.5, 0.4],
    [0.2, -0.4, 0.1, 0.7],
])
bh = np.array([0.1, -0.05, 0.2, 0.0])

hiddens, final_h = rnn_forward(X, Wxh, Whh, bh)
print("Final hidden state:", np.round(final_h, 2))
# Final hidden state: [0.42 0.71 0.85 0.1 ]
```

You can now attach a small output layer (a single linear projection + softmax) to turn final_h into action probabilities: escalate, request photo, close ticket, etc.
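A minimal sketch of such an output head follows. The output weights `W_hy`, the bias `b_y`, and the hidden vector `h` are illustrative, untrained placeholders (assumptions for this example), so the resulting probabilities carry no meaning beyond showing the mechanics.

```python
import numpy as np

def softmax(z):
    z = z - np.max(z)  # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum()

actions = ["escalate", "request photo", "close ticket"]

# Illustrative stand-in for the final 4-dimensional hidden state.
h = np.array([0.4, 0.7, 0.8, 0.1])

# Hypothetical, untrained output weights: one row per action.
W_hy = np.array([
    [0.6, 0.9, -0.2, 0.1],
    [-0.3, 0.2, 0.5, 0.4],
    [0.1, -0.7, 0.3, -0.5],
])
b_y = np.zeros(3)

logits = W_hy @ h + b_y   # one score per action
probs = softmax(logits)   # scores turned into probabilities

for name, p in zip(actions, probs):
    print(f"{name}: {p:.2f}")
print("chosen:", actions[int(np.argmax(probs))])
```

In a trained model, `W_hy` and `b_y` would be learned jointly with the recurrent weights.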

Backpropagation through time (BPTT)

Training an RNN means computing gradients of the loss with respect to the shared weights $W_{xh}$ and $W_{hh}$. Because the weights are reused at every timestep, the gradient for $W_{hh}$ must flow backward through every timestep that used it.

This is exactly backpropagation, but the graph is the unrolled chain of steps. The algorithm is therefore called Backpropagation Through Time.
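The sketch below works through BPTT for the recurrent weight matrix by hand and checks it against finite differences. It assumes a toy loss (the sum of the final hidden state) chosen only to keep the example short; `forward`, `loss_fn`, and `bptt_grad_Whh` are hypothetical helper names.

```python
import numpy as np

def forward(X, Wxh, Whh, bh):
    """Run the tanh RNN; hs[0] is the zero initial state, hs[t] the state after input t."""
    hs = [np.zeros(Whh.shape[0])]
    for x_t in X:
        hs.append(np.tanh(Wxh @ x_t + Whh @ hs[-1] + bh))
    return hs

def loss_fn(X, Wxh, Whh, bh):
    """Toy loss (an assumption for this sketch): sum of the final hidden state."""
    return forward(X, Wxh, Whh, bh)[-1].sum()

def bptt_grad_Whh(X, Wxh, Whh, bh):
    """Gradient of the toy loss w.r.t. Whh, accumulated over every timestep."""
    hs = forward(X, Wxh, Whh, bh)
    dWhh = np.zeros_like(Whh)
    dh = np.ones_like(hs[-1])            # dL/dh_T for L = sum(h_T)
    for t in range(len(X), 0, -1):       # walk backward through time
        da = dh * (1.0 - hs[t] ** 2)     # through tanh: gradient at the pre-activation
        dWhh += np.outer(da, hs[t - 1])  # this step's contribution to the shared weight
        dh = Whh.T @ da                  # pass the gradient to the previous hidden state
    return dWhh

rng = np.random.default_rng(0)
X = rng.random((5, 3))
Wxh = rng.normal(scale=0.5, size=(4, 3))
Whh = rng.normal(scale=0.5, size=(4, 4))
bh = np.zeros(4)

analytic = bptt_grad_Whh(X, Wxh, Whh, bh)

# Central finite differences as an independent check.
eps = 1e-6
numeric = np.zeros_like(Whh)
for i in range(4):
    for j in range(4):
        Wp, Wm = Whh.copy(), Whh.copy()
        Wp[i, j] += eps
        Wm[i, j] -= eps
        numeric[i, j] = (loss_fn(X, Wxh, Wp, bh) - loss_fn(X, Wxh, Wm, bh)) / (2 * eps)

print("max abs difference:", np.max(np.abs(analytic - numeric)))
```

The key line is `dWhh += ...`: because the same matrix is used at every step, its gradient is the sum of the per-timestep contributions.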

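The problem appears immediately: the gradient that reaches an early hidden state $h_k$ from a later state $h_T$ includes the product

$$\frac{\partial h_T}{\partial h_k} = \prod_{t=k+1}^{T} \frac{\partial h_t}{\partial h_{t-1}} = \prod_{t=k+1}^{T} \operatorname{diag}\left(1 - h_t^2\right) W_{hh}$$

Each term contains $W_{hh}$ multiplied by the derivative of tanh (which is ≤ 1). If the largest singular value of $W_{hh}$ is below 1, the product shrinks exponentially with the distance $T - k$. After 20–30 steps the gradient from the first event is typically close to zero. That is the vanishing gradient problem.

The opposite (exploding gradients) can happen when the spectral radius of $W_{hh}$ is greater than 1: gradients can grow exponentially with sequence length and training becomes unstable.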
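The decay can be observed numerically. The sketch below multiplies the per-step Jacobians of a tanh RNN and tracks the norm of the result. It assumes a random recurrent matrix rescaled so its largest singular value is 0.9, and it replaces the input term with random noise; both are illustrative choices.

```python
import numpy as np

def jacobian_norms(Whh, steps, rng):
    """Spectral norm of d h_t / d h_0 after 1..steps recurrence steps."""
    hidden_dim = Whh.shape[0]
    h = np.zeros(hidden_dim)
    J = np.eye(hidden_dim)  # running product of per-step Jacobians
    norms = []
    for _ in range(steps):
        # The input contribution is modeled as noise (an assumption of this sketch).
        pre = Whh @ h + rng.normal(scale=0.5, size=hidden_dim)
        h = np.tanh(pre)
        J = np.diag(1.0 - h ** 2) @ Whh @ J  # chain rule: one more factor per step
        norms.append(np.linalg.norm(J, 2))
    return norms

rng = np.random.default_rng(0)
A = rng.normal(size=(8, 8))
Whh_small = 0.9 * A / np.linalg.norm(A, 2)  # largest singular value scaled to 0.9

norms = jacobian_norms(Whh_small, steps=30, rng=rng)
print(f"step 1: {norms[0]:.3f}  step 10: {norms[9]:.2e}  step 30: {norms[-1]:.2e}")
```

Because each factor has norm at most 0.9, the norm after $t$ steps is bounded by $0.9^t$, which is already below 0.05 at step 30.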

LSTM: protecting the memory with gates

The Long Short-Term Memory cell (Hochreiter & Schmidhuber, 1997) introduces an explicit memory cell that is protected from the vanishing problem by multiplicative gates.

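At each timestep the LSTM computes three gates (all sigmoid activations, values in [0,1]) and a candidate cell update. In one common notation, with $[h_{t-1}, x_t]$ denoting concatenation:

$$
\begin{aligned}
f_t &= \sigma(W_f [h_{t-1}, x_t] + b_f) \\
i_t &= \sigma(W_i [h_{t-1}, x_t] + b_i) \\
o_t &= \sigma(W_o [h_{t-1}, x_t] + b_o) \\
\tilde{c}_t &= \tanh(W_c [h_{t-1}, x_t] + b_c) \\
c_t &= f_t \odot c_{t-1} + i_t \odot \tilde{c}_t \\
h_t &= o_t \odot \tanh(c_t)
\end{aligned}
$$

- The forget gate $f_t$ decides what to erase from the cell state.

- The input gate $i_t$ decides what new information to write.

- The output gate $o_t$ decides what parts of the cell state to expose in the hidden state $h_t$.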

Because the cell state is updated by addition (not repeated multiplication by $W_{hh}$), gradients can flow backward along the cell-state path through many timesteps with only the forget gate values (between 0 and 1) as multipliers. When the forget gate stays close to 1, the gradient along that path does not vanish.
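A single LSTM step can be sketched in NumPy as follows. The stacked weight layout (rows ordered forget, input, output, candidate) and the random, untrained weights are assumptions made for this illustration; libraries order and name these blocks differently.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_step(x_t, h_prev, c_prev, W, b):
    """One LSTM step. W has shape (4*H, H + D), stacked as [forget, input, output, candidate]."""
    H = h_prev.shape[0]
    z = W @ np.concatenate([h_prev, x_t]) + b
    f = sigmoid(z[0:H])                # forget gate: what to keep from c_prev
    i = sigmoid(z[H:2 * H])            # input gate: how much of the candidate to write
    o = sigmoid(z[2 * H:3 * H])        # output gate: what to expose as h
    c_tilde = np.tanh(z[3 * H:4 * H])  # candidate cell update
    c = f * c_prev + i * c_tilde       # additive cell-state update
    h = o * np.tanh(c)                 # exposed hidden state
    return h, c

rng = np.random.default_rng(0)
D, H = 3, 4
W = rng.normal(scale=0.3, size=(4 * H, H + D))  # hypothetical untrained weights
b = np.zeros(4 * H)

X = np.array([[0.2, 0.1, 0.8], [0.9, 0.7, 0.6], [0.3, 0.2, 0.9]])
h, c = np.zeros(H), np.zeros(H)
for x_t in X:
    h, c = lstm_step(x_t, h, c, W, b)

print("h:", np.round(h, 3))
print("c:", np.round(c, 3))
```

Note that `c` is only ever scaled by `f` and added to, which is the path along which gradients survive.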

GRU: a lighter gated RNN

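The Gated Recurrent Unit (Cho et al., 2014) simplifies the LSTM to two gates, an update gate $z_t$ and a reset gate $r_t$, while keeping most of the benefit. In one common notation (some references swap the roles of $z_t$ and $1 - z_t$):

$$
\begin{aligned}
z_t &= \sigma(W_z [h_{t-1}, x_t] + b_z) \\
r_t &= \sigma(W_r [h_{t-1}, x_t] + b_r) \\
\tilde{h}_t &= \tanh(W_h [r_t \odot h_{t-1}, x_t] + b_h) \\
h_t &= z_t \odot h_{t-1} + (1 - z_t) \odot \tilde{h}_t
\end{aligned}
$$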

No separate cell state, no output gate. Many practitioners find GRU performance nearly identical to LSTM on moderate-length sequences while using ~25% fewer parameters and running faster.
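The sketch below implements one GRU step and compares parameter counts with an LSTM of the same size. It assumes the convention in which the update gate `z` weights the previous state, one bias vector per gate, and random untrained weights; library implementations may count biases differently, so the ratio is approximate in practice.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def gru_step(x_t, h_prev, Wz, Wr, Wh, bz, br, bh):
    """One GRU step with update gate z and reset gate r."""
    hx = np.concatenate([h_prev, x_t])
    z = sigmoid(Wz @ hx + bz)  # update gate: how much of the old state to keep
    r = sigmoid(Wr @ hx + br)  # reset gate: how much old state feeds the candidate
    h_tilde = np.tanh(Wh @ np.concatenate([r * h_prev, x_t]) + bh)
    return z * h_prev + (1.0 - z) * h_tilde

rng = np.random.default_rng(0)
D, H = 3, 4
Wz, Wr, Wh = (rng.normal(scale=0.3, size=(H, H + D)) for _ in range(3))
bz, br, bh = np.zeros(H), np.zeros(H), np.zeros(H)

h = np.zeros(H)
for x_t in np.array([[0.2, 0.1, 0.8], [0.9, 0.7, 0.6], [0.3, 0.2, 0.9]]):
    h = gru_step(x_t, h, Wz, Wr, Wh, bz, br, bh)

# Each gate or candidate block has H*(H+D) weights plus H biases.
block = H * (H + D) + H
gru_params = 3 * block   # update, reset, candidate
lstm_params = 4 * block  # forget, input, output, candidate
print(gru_params, lstm_params, gru_params / lstm_params)
# 96 128 0.75
```

Three blocks instead of four is where the roughly 25% parameter saving comes from.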

Sequence-to-sequence (seq2seq) with encoder-decoder RNNs

A common pattern before 2017 was the encoder-decoder architecture:

- An encoder RNN (usually LSTM or GRU) reads the entire input sequence (the full support ticket thread) and produces a final hidden state that acts as a compressed "context vector."

- A decoder RNN (another LSTM/GRU) starts from that context vector and generates an output sequence one token at a time (the resolution summary, the next recommended action, or a reply).

At inference time the decoder feeds its own previous prediction back as input at each step. During training, teacher forcing feeds the ground-truth previous token instead.

This pattern powered early neural machine translation and many early dialogue systems. The context vector was the bottleneck - it had to compress an arbitrarily long input into a fixed-size vector.
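The encoder-decoder flow can be sketched with plain tanh RNN cells in place of LSTM/GRU cells. Everything here is a hypothetical, untrained toy: the vocabulary size, the `START` and `END` token ids, and all weights are assumptions, so the decoded tokens are meaningless. The point is the data flow through the single context vector.

```python
import numpy as np

rng = np.random.default_rng(0)
D, H, V = 3, 4, 5   # input features, hidden size, output vocabulary size
START, END = 0, 1   # hypothetical special token ids

# Hypothetical untrained weights for the encoder, decoder, embedding, and output layer.
enc_Wxh = rng.normal(scale=0.5, size=(H, D))
enc_Whh = rng.normal(scale=0.5, size=(H, H))
dec_Wxh = rng.normal(scale=0.5, size=(H, H))
dec_Whh = rng.normal(scale=0.5, size=(H, H))
embed = rng.normal(scale=0.5, size=(V, H))  # one vector per output token
W_out = rng.normal(scale=0.5, size=(V, H))

def encode(X):
    """Read the whole input sequence and return the final hidden state."""
    h = np.zeros(H)
    for x_t in X:
        h = np.tanh(enc_Wxh @ x_t + enc_Whh @ h)
    return h  # fixed-size context vector, regardless of input length

def greedy_decode(context, max_len=6):
    """Generate tokens one at a time, feeding each prediction back in."""
    h, token, out = context, START, []
    for _ in range(max_len):
        h = np.tanh(dec_Wxh @ embed[token] + dec_Whh @ h)
        token = int(np.argmax(W_out @ h))  # greedy choice becomes the next input
        if token == END:
            break
        out.append(token)
    return out

ticket = rng.random((4, D))  # a 4-event ticket thread
context = encode(ticket)
decoded = greedy_decode(context)
print(context.shape, decoded)
```

Whatever the ticket length, the decoder only ever sees the 4 numbers in `context`, which is the bottleneck described above.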

Why transformers displaced RNNs for long context

The Transformer paper ("Attention Is All You Need", 2017) exposed four limitations of RNNs that become severe at scale:

| Aspect | RNN / LSTM | Transformer |
|---|---|---|
| Path length between distant tokens | O(T): the signal must travel through every intermediate hidden state | O(1): direct attention connection |
| Training parallelism | Sequential; each step waits for the previous one | Fully parallel across the sequence |
| Gradient flow | Chained multiplications through time | Residual connections and attention give direct paths |
| Long-range dependencies | Fade after roughly 20–50 steps in plain RNNs; gating extends but does not remove the limit | Learned directly at any distance within the context window |

Because every token can attend to every other token in one layer, the model does not need to compress history into a single running hidden state. Residual connections around attention and feed-forward sublayers keep gradients flowing even in 100-layer networks.
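A minimal single-head self-attention sketch shows the "every token sees every other token" property directly. It assumes random untrained projection matrices and omits masking, multiple heads, and residual connections.

```python
import numpy as np

def self_attention(X, Wq, Wk, Wv):
    """Unmasked single-head scaled dot-product self-attention over a (T, d) sequence."""
    Q, K, V = X @ Wq, X @ Wk, X @ Wv
    scores = Q @ K.T / np.sqrt(K.shape[-1])              # (T, T): every pair of positions
    scores = scores - scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)  # softmax over each row
    return weights @ V, weights

rng = np.random.default_rng(0)
T, d = 6, 4
X = rng.normal(size=(T, d))  # 6 tokens, each a 4-dimensional embedding
Wq, Wk, Wv = (rng.normal(scale=0.5, size=(d, d)) for _ in range(3))

out, weights = self_attention(X, Wq, Wk, Wv)
print(out.shape, weights.shape)
# (6, 4) (6, 6)
print("weight from last token to first token:", round(float(weights[-1, 0]), 3))
```

The entry `weights[-1, 0]` connects the last token to the first in a single step, with no intermediate hidden states in between, and all rows are computed in one matrix product rather than a sequential loop.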

The hidden-state mental model is not gone, however. Modern LLMs still maintain a form of running state during generation (the KV cache). The KV cache plays a role loosely analogous to the RNN hidden state: it stores the keys and values computed so far so the model does not recompute them for every new token. Unlike a fixed-size hidden state, though, it grows linearly with the number of tokens processed. Several newer architectures (Mamba, RWKV, RetNet) explicitly bring linear-time recurrence back while keeping the parallel training advantages of transformers.
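The KV cache idea can be sketched for a single attention head. The example assumes random untrained projections and checks that incremental decoding with a cache reproduces the output of recomputing attention from scratch for the last token.

```python
import numpy as np

def softmax(z):
    e = np.exp(z - z.max(axis=-1, keepdims=True))
    return e / e.sum(axis=-1, keepdims=True)

rng = np.random.default_rng(0)
T, d = 5, 4
Wq, Wk, Wv = (rng.normal(scale=0.5, size=(d, d)) for _ in range(3))
X = rng.normal(size=(T, d))  # embeddings of 5 tokens

# Reference: attention output for the last token, recomputing all keys and values.
Q, K, V = X @ Wq, X @ Wk, X @ Wv
full_last = softmax(Q[-1] @ K.T / np.sqrt(d)) @ V

# Incremental: append one key/value row per new token and reuse the rest.
K_cache, V_cache = np.empty((0, d)), np.empty((0, d))
for x_t in X:
    K_cache = np.vstack([K_cache, x_t @ Wk])  # only the new token's key is computed
    V_cache = np.vstack([V_cache, x_t @ Wv])  # only the new token's value is computed
    q_t = x_t @ Wq
    out_t = softmax(q_t @ K_cache.T / np.sqrt(d)) @ V_cache

print(np.allclose(out_t, full_last), K_cache.shape)
# True (5, 4)
```

The cache has one row per token seen so far, which is the sense in which it is a running state, and also why its memory cost grows with context length.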

What you can now do

- Trace any variable-length sequence through a plain RNN by hand and in NumPy.

- Explain to a teammate why a 40-message ticket thread strains a vanilla RNN but is well within reach of a Transformer.

- Choose between LSTM and GRU when you actually need a recurrent component (streaming, very low memory, online state).

- Recognize the architectural ideas that survived the Transformer revolution (running state, gated memory) inside today's production LLM inference engines.

Evaluation Rubric

1. Writes the RNN recurrence equation and explains that the same weight matrices are reused at every timestep

2. Traces a complete hand-worked forward pass for a 3–4 event support-ticket sequence showing how the hidden state evolves

3. Identifies the vanishing gradient problem: repeated multiplication by the recurrent weight matrix during BPTT

4. Draws or describes the LSTM cell with forget gate f_t, input gate i_t, candidate cell update, and output gate o_t

5. Implements a minimal RNN forward pass (no training) in pure NumPy with correct tensor shapes at each timestep

6. Compares LSTM vs GRU parameter count and explains when the simpler GRU is often sufficient

7. Explains why the Transformer’s attention + residual design gives direct gradient paths and O(1) dependency distance compared with RNN’s O(T) chain

Common Pitfalls

- Treating the hidden state as a simple summary instead of realizing it is the only carrier of information from all previous timesteps

- Forgetting that every timestep re-uses exactly the same Wxh and Whh matrices. This is both the strength (parameter efficiency) and the source of vanishing gradients

- Implementing BPTT incorrectly by detaching the hidden state or truncating the graph too early in PyTorch

- Assuming LSTM always beats GRU; on many moderate-length sequence tasks the GRU performs similarly with 25% fewer parameters

- Believing that padding and packing in RNN batches is optional. Incorrect masking leads to leakage from padding tokens into the hidden state

- Trying to stack 10+ plain RNN layers without residuals or layer norm; the vanishing problem compounds with depth as well as length
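The padding pitfall above can be illustrated with a masked update in NumPy. This is a sketch with random untrained weights and a hypothetical helper `rnn_final_state`; in PyTorch the usual route is `torch.nn.utils.rnn.pack_padded_sequence` rather than a hand-written mask.

```python
import numpy as np

def rnn_final_state(X, mask, Wxh, Whh, bh):
    """X: (T, D) padded sequence; mask: (T,) with 1 for real steps and 0 for padding."""
    h = np.zeros(Whh.shape[0])
    for x_t, m in zip(X, mask):
        h_new = np.tanh(Wxh @ x_t + Whh @ h + bh)
        h = m * h_new + (1 - m) * h  # padding steps leave the state untouched
    return h

rng = np.random.default_rng(0)
Wxh = rng.normal(scale=0.5, size=(4, 3))
Whh = rng.normal(scale=0.5, size=(4, 4))
bh = np.full(4, 0.1)

real = rng.random((3, 3))                     # 3 real events
padded = np.vstack([real, np.zeros((2, 3))])  # padded to length 5 for batching

clean = rnn_final_state(real, np.ones(3), Wxh, Whh, bh)
masked = rnn_final_state(padded, np.array([1, 1, 1, 0, 0]), Wxh, Whh, bh)
leaky = rnn_final_state(padded, np.ones(5), Wxh, Whh, bh)  # mask ignored

print("masked matches unpadded:", np.allclose(masked, clean))
print("unmasked matches unpadded:", np.allclose(leaky, clean))
```

With the mask applied, the final state matches the unpadded run; when the mask is ignored, the two padding steps keep updating the hidden state and the result generally drifts away from it.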


Key Concepts Tested

- Recurrence relation: hidden state h_t computed from current input and previous hidden state using shared weights

- Unrolled computational graph view of an RNN over a variable-length sequence of ticket events

- Vanishing and exploding gradients during BPTT and why long-range dependencies are hard for plain RNNs

- LSTM cell state and three gates (forget, input, output) that protect and update memory across timesteps

- GRU with only two gates (update and reset) as a lighter-weight alternative to LSTM

- Seq2seq encoder-decoder architecture for mapping one sequence (ticket history) to another (resolution steps)

- Why transformers win: O(1) maximum path length, full parallelism, and residual connections for gradient flow

- Hand-worked 3-timestep RNN forward pass with explicit matrix multiplications and tanh activations in NumPy

- Mental model bridge: RNN hidden state intuition lives on in KV cache and modern state-space models

Next Step

Next: Continue to Autoencoders, VAEs, and Generative Modeling Basics

You now know how neural networks can carry information through time in a hidden state. The next chapter turns from sequence memory to representation compression, showing how an encoder can squeeze images or examples into a latent code that later generative models can reuse.

References