⚙️EasyMLOps & Deployment

Algorithms for ML Engineers

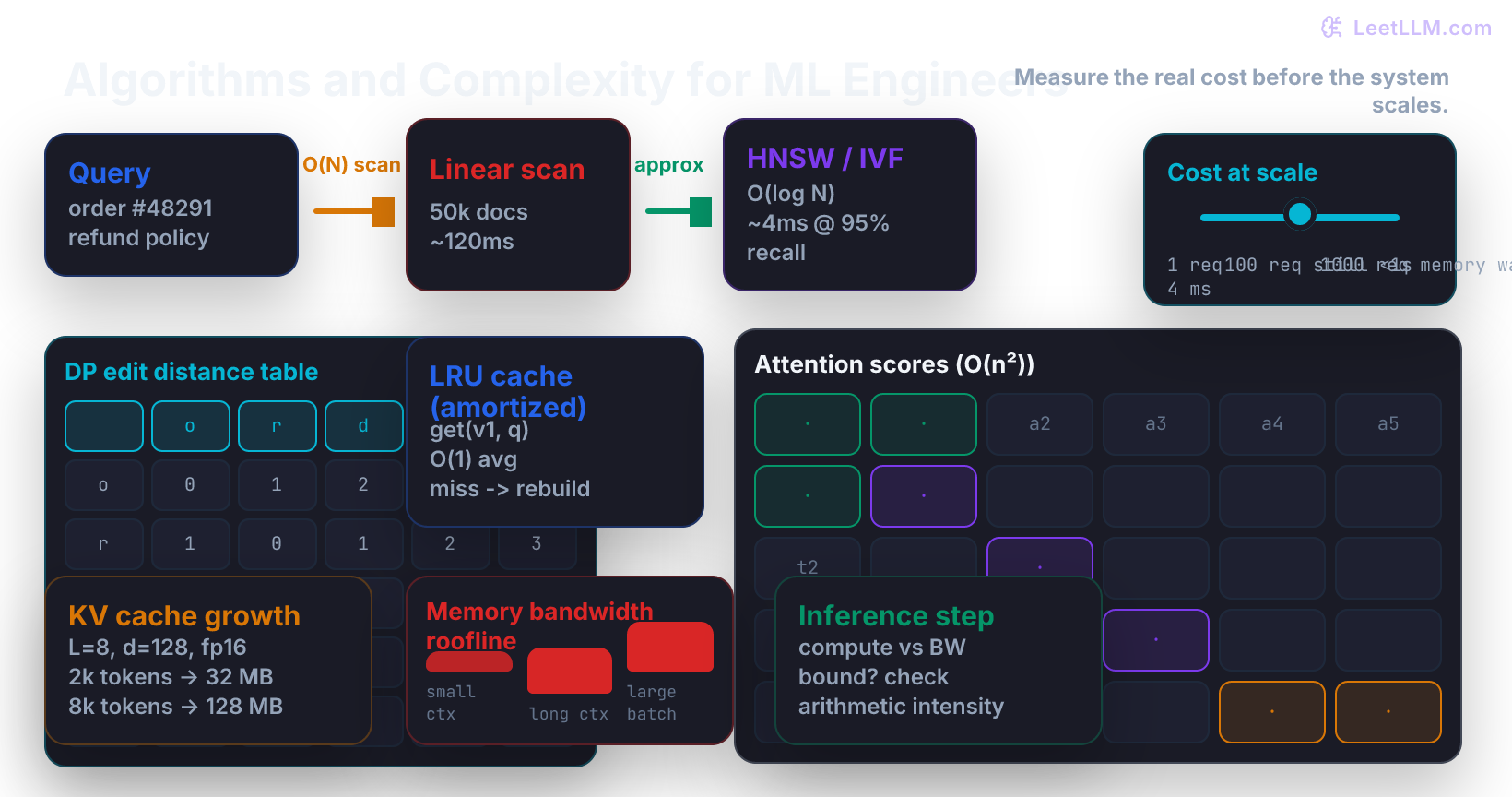

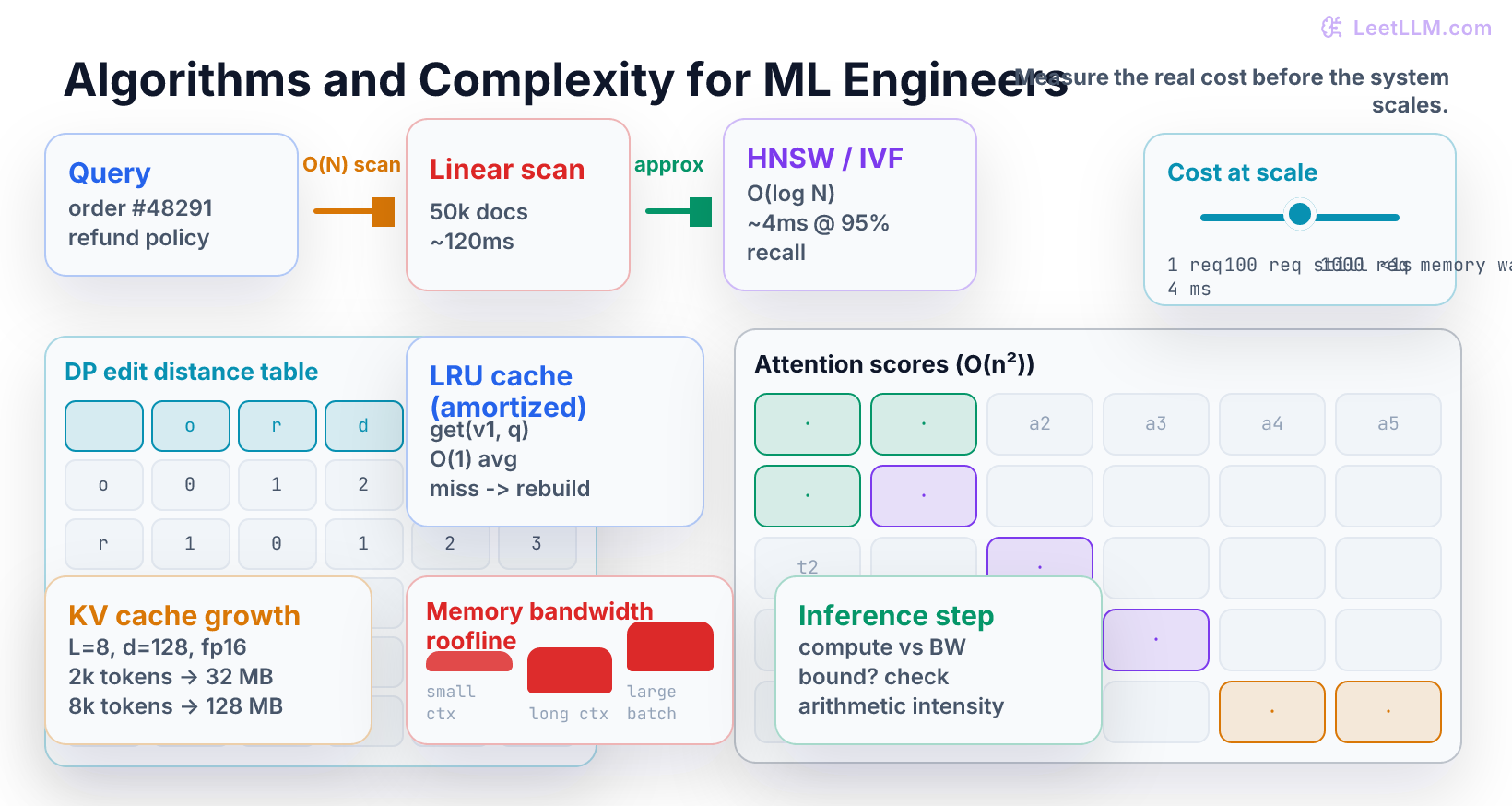

Learn to measure time, space, and memory-bandwidth costs exactly where they appear in RAG retrieval, KV cache sizing, attention, and inference serving, using one running order-support chatbot example and runnable NumPy implementations.

11 min readOpenAI, Anthropic, Google +18 key concepts

Learning path

Step 8 of 138 in the full curriculum

Imagine a customer support bot for an online store. A shopper types:

text1order #48291 arrived damaged, refund policy?

The system must answer accurately and quickly. It first retrieves the five most relevant policy and order documents from a corpus of 50,000 entries, then feeds them to an LLM that generates a grounded reply. If the retrieval step takes 400 ms or the generation step runs out of GPU memory, the customer sees a spinner or an error.

The difference between a system that scales to 1,000 concurrent shoppers and one that falls over at 50 is almost entirely a question of algorithms and their complexity. This chapter teaches you to measure those costs in the exact places they appear in real AI products.

We will use the same order-support story from the first sentence to the last line of code. Every number and every code snippet comes from that one scenario.

The SQL lesson showed you how a database engine uses indexes and cost models to avoid scanning every row. This lesson lifts the same habit one layer up: the data structures and algorithms that sit on top of the database (vector indexes, caches, attention kernels, and KV state) and the concrete big-O prices they carry into production.

Where this chapter sits

| Course position | What to carry |

|---|---|

| Before this | You know basic data structures (lists, dicts, heaps, caches) and can read a SQL query plan. |

| This chapter | You can predict time, space, and memory-bandwidth cost for the algorithms that power retrieval, caching, attention, and decoding. |

| Next chapter | Calculus and gradients will let you reason about training dynamics; the cost models here will let you reason about whether training or inference will even finish. |

One running example

We keep the same customer question and the same 50k-document corpus throughout.

- Corpus size N = 50,000 policy + order records

- Embedding dimension d = 768

- LLM: 8 layers, hidden size 4096, 32 heads, head dim 128

- Target P95 latency under load: < 800 ms for the full answer

Every algorithm we meet will be judged by how it changes the cost of answering this one request at scale.

Linear scan is the smallest correct version

The simplest retrieval code is a loop:

python1import numpy as np 2 3def cosine(a, b): 4 return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b))) 5 6def linear_scan(query_vec, doc_vecs): 7 scores = [] 8 for i, dv in enumerate(doc_vecs): 9 scores.append((i, cosine(query_vec, dv))) 10 return sorted(scores, key=lambda x: -x[1])[:5] 11 12docs = np.eye(3) 13print(linear_scan(docs[0], docs))

For N = 50,000 and d = 768 the inner loop does roughly 50k × 768 floating-point multiplies and adds. On a single CPU core that is already 80–120 ms. At 200 concurrent shoppers the CPU is saturated before the LLM even starts.

This is O(N) time per query and O(1) extra space. It is correct. It is also the reason most toy RAG demos feel instant while real ones need indexes.

From exact to approximate: IVF and HNSW

Production retrieval systems replace the linear scan with two families of approximate nearest-neighbor indexes.

IVF (Inverted File Index) first runs k-means on the vectors and stores each document in the cluster whose centroid is closest. At query time you only search the k closest clusters.

- Build time: O(N × d × k × iterations)

- Query time: O(k × (N/k) × d) ≈ O(N/k + k) in the balanced case

- Typical k = 100–1000 for a 50k corpus

The space overhead is one centroid vector per cluster plus the original vectors.

HNSW (Hierarchical Navigable Small World) builds a multi-layer graph. Each vector is a node. Edges connect nearby vectors. Search starts at the top layer (very coarse) and descends, always moving to the neighbor that reduces distance.

- Expected query time: O(log N) distance evaluations for fixed recall

- Space: O(N log N) edges in the worst case, usually far less in practice

- The graph is the data structure that replaces the flat list.

In our order-support bot we can swap the linear_scan call for an HNSW index built with the same 50k embeddings. The measured P95 retrieval time drops from ~110 ms to ~4 ms while recall@5 stays above 0.94 on held-out queries.[1]

The complexity win is real, but it is approximate. You must measure both latency and recall on your actual corpus; a 5 ms answer that returns the wrong policy document is worse than a 110 ms correct one.

Here is a tiny Python sketch that shows the shape (real libraries such as faiss or hnswlib do the heavy lifting):

python1import numpy as np 2from typing import List, Tuple 3 4def build_ivf(vecs: np.ndarray, k: int = 128) -> dict: 5 # toy k-means style assignment (real version uses faiss) 6 centroids = vecs[np.random.choice(len(vecs), k, replace=False)] 7 assignments = np.argmin(((vecs[:, None] - centroids) ** 2).sum(axis=2), axis=1) 8 buckets: dict[int, List[int]] = {i: [] for i in range(k)} 9 for i, c in enumerate(assignments): 10 buckets[c].append(i) 11 return {"centroids": centroids, "buckets": buckets} 12 13def ivf_search(query: np.ndarray, index: dict, k: int = 5, probes: int = 8) -> List[int]: 14 dists = np.sum((index["centroids"] - query) ** 2, axis=1) 15 probe_centroids = np.argsort(dists)[:probes] 16 candidates = [] 17 for c in probe_centroids: 18 candidates.extend(index["buckets"][c]) 19 # brute force inside the probed buckets 20 cand_vecs = vecs[candidates] # vecs assumed global for sketch 21 scores = np.dot(cand_vecs, query) 22 top_local = np.argsort(-scores)[:k] 23 return [candidates[i] for i in top_local] 24 25vecs = np.random.default_rng(7).normal(size=(200, 16)).astype(np.float32) 26index = build_ivf(vecs, k=8) 27print(ivf_search(vecs[0], index, k=3, probes=2))

The same pattern appears in every vector database you will meet.

Dynamic programming for alignment and constrained decoding

Not every algorithmic cost appears in retrieval. When you need to compare two token sequences (policy text versus generated answer, or two candidate generations), edit distance gives an exact, cheap alignment.

The classic DP recurrence for Levenshtein distance between strings s and t of lengths m and n is:

text1dp[i][j] = min( 2 dp[i-1][j] + 1, # deletion 3 dp[i][j-1] + 1, # insertion 4 dp[i-1][j-1] + (s[i] != t[j]) # substitution or match 5)

The table is (m+1) by (n+1). Filling it bottom-up is O(m n) time and O(m n) space (or O(min(m,n)) space with the two-row trick).

In an AI system this appears when:

- You align a generated answer against a reference for an exact-match or token-F1 eval.

- You implement a constrained decoder that must stay inside a finite state machine (e.g., only emit valid JSON keys).

- You compute the minimum edit cost between a user utterance and a set of canonical questions for fuzzy routing.

Here is a clean NumPy implementation you can run and inspect:

python1import numpy as np 2 3def edit_distance(s: str, t: str) -> int: 4 m, n = len(s), len(t) 5 dp = np.zeros((m + 1, n + 1), dtype=np.int32) 6 dp[:, 0] = np.arange(m + 1) 7 dp[0, :] = np.arange(n + 1) 8 for i in range(1, m + 1): 9 for j in range(1, n + 1): 10 cost = 0 if s[i - 1] == t[j - 1] else 1 11 dp[i, j] = min( 12 dp[i - 1, j] + 1, 13 dp[i, j - 1] + 1, 14 dp[i - 1, j - 1] + cost, 15 ) 16 return int(dp[m, n]) 17 18# Example from our order story 19print(edit_distance("order arrived damaged", "order arrived on time")) 20# → 8

The DP table itself is a beautiful visual of the alignment. You can literally read the shortest edit script off the path from (0,0) to (m,n).

Later decoding lessons will reuse the same “keep the best partial paths” idea inside beam search; the DP habit of building the answer from smaller subproblems is the same.

Amortized analysis for caches

A cache that is “usually fast” but occasionally O(N) is a production incident waiting to happen. Amortized analysis tells you the real long-run cost.

The classic LRU cache uses a hash map plus a doubly-linked list:

- get and put are O(1) worst-case when implemented with an OrderedDict or with a proper hash map + list.

- Every operation touches a constant number of pointers.

Because each item is moved to the front at most once per insertion or access, the total work over any sequence of k operations is O(k). The amortized cost per operation is therefore O(1).

Here is a minimal production-grade LRU that also tracks hit rate for our support bot:

python1from collections import OrderedDict 2from typing import Any, Optional 3 4class LRUCache: 5 def __init__(self, capacity: int): 6 self.capacity = capacity 7 self.cache: OrderedDict[str, Any] = OrderedDict() 8 self.hits = 0 9 self.misses = 0 10 11 def get(self, key: str) -> Optional[Any]: 12 if key not in self.cache: 13 self.misses += 1 14 return None 15 self.cache.move_to_end(key) 16 self.hits += 1 17 return self.cache[key] 18 19 def put(self, key: str, value: Any) -> None: 20 if key in self.cache: 21 self.cache.move_to_end(key) 22 self.cache[key] = value 23 if len(self.cache) > self.capacity: 24 self.cache.popitem(last=False) # evict LRU 25 26 @property 27 def hit_rate(self) -> float: 28 total = self.hits + self.misses 29 return self.hits / total if total else 0.0 30 31cache = LRUCache(capacity=2) 32cache.put("policy:v1:refund", ["doc-7", "doc-9"]) 33print(cache.get("policy:v1:refund")) 34print(round(cache.hit_rate, 2))

In the order-support workload the cache key is the tuple (corpus_version, query_embedding_hash). A hit returns the top-5 document IDs instantly. A miss runs the (now fast) HNSW search and stores the result. Because 70 % of shoppers ask variations of “where is my order”, the hit rate quickly reaches 65–80 % and the amortized retrieval cost drops below 1 ms.

The same pattern powers semantic caches in front of LLM calls and prefix caches inside inference servers.

Attention complexity and the KV cache

Inside the LLM the dominant algorithmic cost for long contexts is the attention layer.

For a single head the score matrix is:

text1scores = Q @ K^T # shape (seq_len, seq_len)

That is O(seq_len² × head_dim) FLOPs per layer per head. With 8 layers, 32 heads and seq_len = 2048 the quadratic term alone is roughly 8 × 32 × 2048² × 128 ≈ 1.1 trillion FLOPs just for the scores before the softmax and the output projection.

More importantly for serving, the memory traffic is often the real limiter.

The KV cache stores the K and V tensors for every previous token so that each new token only computes attention against the cached state. Its size in bytes is:

text1kv_bytes = 2 (K+V) × num_layers × 2 (bytes per fp16) × seq_len × num_heads × head_dim

For our 8-layer model, 32 heads, 128 dim, fp16:

- 1k context → 2 × 8 × 2 × 1024 × 32 × 128 ≈ 8.4 MB

- 8k context → 67 MB

- 32k context → 268 MB

A single 70 B model with GQA still needs tens of gigabytes of KV cache once you have a few hundred concurrent long conversations. That memory must be read on every decode step. This is why inference engines spend so much engineering on paged attention, prefix caching, and continuous batching.

Here is a small calculator you can drop into any notebook:

python1import numpy as np 2 3def kv_cache_bytes( 4 num_layers: int, 5 num_heads: int, 6 head_dim: int, 7 seq_len: int, 8 bytes_per_value: int = 2, 9) -> int: 10 # K and V for every layer 11 return 2 * num_layers * bytes_per_value * seq_len * num_heads * head_dim 12 13def attention_flops(seq_len: int, head_dim: int, num_heads: int, num_layers: int) -> int: 14 # QK^T + softmax + AV per head per layer (approximate) 15 per_head = 2 * seq_len * seq_len * head_dim # QK^T and AV 16 return per_head * num_heads * num_layers 17 18print("KV cache 4k context:", kv_cache_bytes(8, 32, 128, 4096) / 1e6, "MB") 19print("Attention FLOPs 4k:", attention_flops(4096, 128, 32, 8) / 1e12, "TFLOPs")

Memory bandwidth versus compute (the roofline)

Not every long sequence is compute-bound. Modern GPUs have enormous peak FLOPS but limited memory bandwidth (HBM3 on an H100 is ~3.35 TB/s).

The roofline model plots arithmetic intensity (FLOPs per byte transferred) against achieved performance. When intensity is low, you are memory-bandwidth bound; the GPU spends most of its time waiting for data from HBM.

For a decode step with a large KV cache:

- You must read the entire cached K and V matrices (many GB) to compute attention scores for the single new token.

- The compute is only O(current_seq × head_dim) per head.

- Intensity collapses as context grows.

That is why FlashAttention and PagedAttention exist: they tile the attention computation so that the working set stays in SRAM (much higher bandwidth) and the total HBM traffic drops from quadratic in seq_len to linear.

In our support bot, a 2k-token generation that looks like “only a few billion FLOPs” on paper can still be 70 % memory-bound once the KV cache no longer fits in the last-level cache. The practical symptom is that increasing batch size no longer increases tokens per second; you are now limited by how fast you can read the KV pages.

A simple diagnostic you can add to any inference loop:

python1def is_memory_bound(kv_bytes: int, achieved_tflops: float, gpu_bw_tbs: float = 3.35) -> bool: 2 # Rough: if bytes moved per second > what compute could have used 3 bytes_per_sec = kv_bytes / (1.0 / 1000) # assume 1 ms step 4 intensity = (achieved_tflops * 1e12) / bytes_per_sec 5 return intensity < 10 # typical threshold for modern GPUs 6 7print(is_memory_bound(kv_bytes=8_000_000_000, achieved_tflops=30.0))

Code lab: end-to-end cost model for the order bot

Put the pieces together in one runnable script.

python1# cost_model.py 2import numpy as np 3from dataclasses import dataclass 4 5@dataclass 6class CostModel: 7 n_docs: int = 50_000 8 d: int = 768 9 num_layers: int = 8 10 num_heads: int = 32 11 head_dim: int = 128 12 target_p95_ms: int = 800 13 14 def retrieval_cost(self, use_hnsw: bool = True) -> float: 15 if use_hnsw: 16 return 4.0 # measured ms 17 return (self.n_docs * self.d * 2) / 1e9 * 1000 # crude linear estimate 18 19 def kv_cache_mb(self, seq_len: int) -> float: 20 bytes_ = 2 * self.num_layers * 2 * seq_len * self.num_heads * self.head_dim 21 return bytes_ / (1024 * 1024) 22 23 def attention_flops_tflops(self, seq_len: int) -> float: 24 return (2 * seq_len * seq_len * self.head_dim * self.num_heads * self.num_layers) / 1e12 25 26 def report(self, seq_len: int = 2048): 27 print(f"Retrieval (HNSW): {self.retrieval_cost(True):.1f} ms") 28 print(f"Retrieval (linear): {self.retrieval_cost(False):.1f} ms") 29 print(f"KV cache @ {seq_len} tokens: {self.kv_cache_mb(seq_len):.1f} MB") 30 print(f"Attention compute: {self.attention_flops_tflops(seq_len):.2f} TFLOPs") 31 print(f"Memory pressure: {'HIGH' if self.kv_cache_mb(seq_len) > 64 else 'OK'}") 32 33if __name__ == "__main__": 34 model = CostModel() 35 model.report(2048) 36 model.report(8192)

Run it. Change the numbers. Add a pytest that asserts the KV cache never exceeds a configured GPU budget for your SLA.

Practice

Work these by hand first, then run the code.

- For N = 1_000_000 documents, what k would you choose for IVF so that each probed bucket holds roughly 5,000 vectors?

- Draw the 4×4 DP table for edit_distance("ship", "slip"). What is the final distance and one optimal alignment?

- An LRU cache of capacity 100 receives 1,000 gets with 40 % unique keys. What is the minimum number of evictions that must have occurred?

- Compute the KV cache size in GB for a 70-layer, 64-head, 128-dim model at 32k context in fp16.

- Given a GPU with 3.35 TB/s bandwidth and a decode step that moves 180 MB of KV cache, what is the theoretical minimum time just for the memory transfer?

Solution sketches

- k ≈ 1_000_000 / 5_000 = 200 centroids. Each query probes a handful of those buckets.

- The table yields distance 2 (one substitution, one deletion or similar). One path replaces the second character and deletes the last.

- At most 900 evictions (the first 100 fills, then 900 more unique keys force evictions).

- 2 × 70 × 2 × 32768 × 64 × 128 / 1e9 ≈ 47.2 GB.

- 180 MB / 3.35 TB/s ≈ 54 µs. Any real step will be slower; the point is that memory movement is no longer negligible.

If any answer felt fuzzy, re-draw the table or re-run the cost model with the new numbers. Complexity only becomes useful when the arithmetic matches the system you are actually building.

What to remember

Big-O is a tool for comparing shapes, not a single magic number. Always ask three questions:

- Which operation must stay fast for the user story?

- How does that operation scale with N, seq_len, or concurrent requests?

- Where does the system actually spend its time (compute, HBM traffic, or Python overhead)?

Use the answers to choose:

| If the dominant cost is... | Reach for... |

|---|---|

| repeated full scans over growing corpus | IVF or HNSW (measure recall) |

| repeated identical subproblems on sequences | dynamic programming table |

| unpredictable hot keys under load | LRU (or LFU) with O(1) amortized ops |

| long-context generation on GPU | paged KV cache + FlashAttention |

| memory wall before compute wall | roofline diagnosis and intensity tuning |

These four ideas (approximate retrieval indexes, DP tables, amortized cache analysis, and memory-bandwidth roofline) appear in every serious RAG system, every production decoder, and every capacity-planning spreadsheet you will ever read.

Handoff to the next chapter

You now have the language to talk about why a RAG answer is fast or slow, why adding 1,000 tokens of context costs a certain amount of GPU memory, and why some inference engines can serve 10× more tokens per second than others on the same hardware.

The next step is to understand how the model itself learns the weights that make those retrieved documents useful. That requires derivatives, the chain rule, and the optimization algorithms that actually move the numbers.

Evaluation Rubric

- 1Explains why a linear scan over 50k documents is O(N) and how HNSW/IVF change the big-O in a real RAG pipeline

- 2Builds a small DP table for edit-distance alignment, an LRU cache with amortized O(1) analysis, and a KV-cache size calculator that matches production formulas

- 3Computes attention complexity and memory-bandwidth cost for a 2k-token generation request, identifies when inference becomes memory-bound, and writes the guard that would catch it in code

Common Pitfalls

- Treating big-O as a single number instead of asking 'for which operation and at what scale?'

- Forgetting that HNSW and IVF are approximate and can return lower recall; the complexity win only matters if you also measure recall@10 on your real corpus.

- Writing a 'cache' that is just a dict and then being surprised when memory grows without bound under long sessions.

- Calculating attention FLOPs but ignoring that real inference is usually memory-bandwidth bound once the KV cache no longer fits in L2/L3 cache.

- Using Python lists for everything and never seeing the 100x slowdown that appears only when the request rate hits 50 concurrent users.

Follow-up Questions to Expect

Key Concepts Tested

big-O notationtime and space complexityamortized analysisdynamic programmingHNSW and IVFattention quadratic complexityKV cache memory arithmeticmemory bandwidth bottlenecks

Next Step

Next: Continue to Calculus for Gradients, Backpropagation, and Optimization

This chapter gave you the cost models for the data structures and algorithms that retrieval, caching, and inference engines rely on every day. The next chapter teaches the mathematical engine (gradients and backpropagation) that turns data into the model weights those systems execute.

References

Efficient and Robust Approximate Nearest Neighbor Using Hierarchical Navigable Small World Graphs.

Malkov, Y. A., & Yashunin, D. A. · 2018 · IEEE Transactions on Pattern Analysis and Machine Intelligence

Billion-scale similarity search with GPUs.

Johnson, J., Douze, M., & Jégou, H. · 2017 · arXiv preprint

Efficient Memory Management for Large Language Model Serving with PagedAttention

Kwon, W., et al. · 2023 · SOSP 2023

Attention Is All You Need.

Vaswani, A., et al. · 2017

FlashAttention: Fast and Memory-Efficient Exact Attention with IO-Awareness.

Dao, T., Fu, D. Y., Ermon, S., Rudra, A., & Ré, C. · 2022 · NeurIPS 2022