Responsible AI Governance

Master the practical foundations of responsible AI: EU AI Act high-risk rules, model cards, agent audit trails, red-teaming programs, liability allocation, accessibility mandates, and building a living organizational risk register.

Responsible AI, Governance, Ethics, and Compliance Basics

Governance is no longer a legal afterthought. It is the operating system that lets you ship LLM products without destroying user trust, attracting massive fines, or waking up to a 6 a.m. call from your counsel.

Consider ShipFlow, a logistics platform that serves merchants across the EU. The company runs three LLM-powered systems:

- A route optimizer that assigns drivers and sets ETAs for time-sensitive deliveries.

- A customer support agent that approves or denies refunds and handles disputes.

- A financing scorer that decides which merchants receive instant cash advances against their sales.

Each of these touches safety, fundamental rights, or credit decisions. Under the EU AI Act, at least two of them are likely high-risk. If ShipFlow cannot prove it has the right controls, documentation, and oversight, the company can face fines of up to €15 million or 3 % of global annual turnover for breaching high-risk obligations (up to €35 million or 7 % for prohibited practices) and, depending on national law, possible personal exposure for executives.[1]

This article teaches the concrete practices that turn "responsible AI" from a slogan into an engineering and organizational discipline. You will learn the EU AI Act classification rules, how to write and maintain model cards, what audit logs an agent must produce, how to run a real red-teaming program, how liability actually flows, why accessibility belongs in the risk register, and how to build a living organizational AI risk register that connects every technical control to regulatory reality.

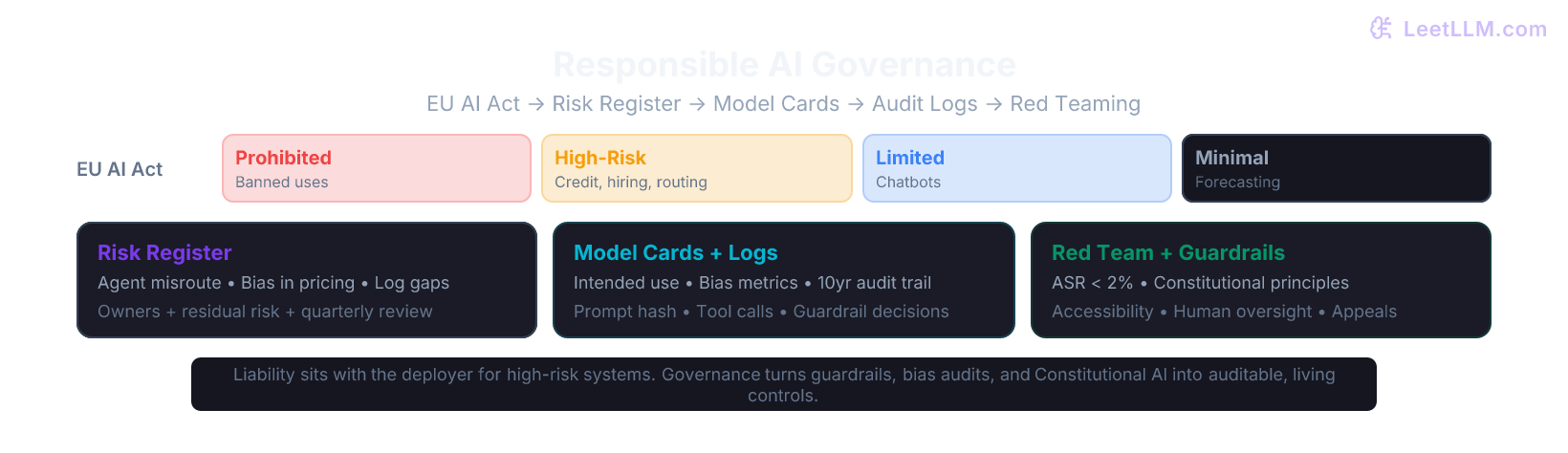

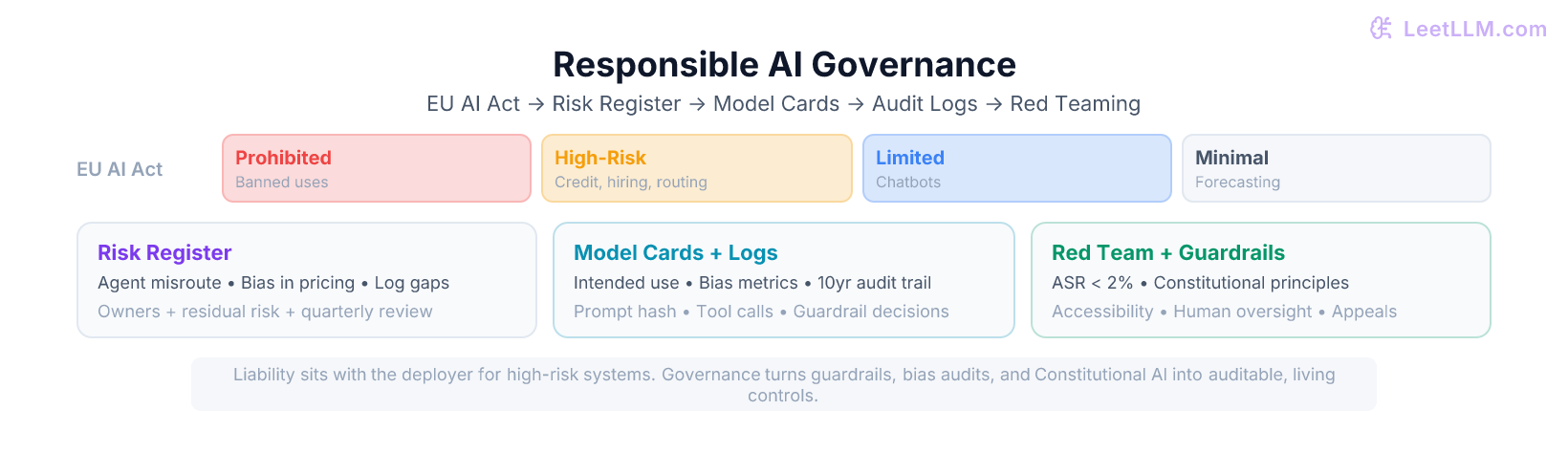

The diagram below shows the full stack in one view.

Why This Matters for Every LLM Engineer

Five years ago, most teams treated safety as prompt engineering and bias as "something the data team handles." Today, the same teams are asked to sign off on model cards that regulators may audit, to produce audit logs and technical documentation on demand long after a decision was made, and to explain in court why a particular agent decision was made.

The shift is driven by three forces that now intersect:

- Regulation with real teeth (EU AI Act, upcoming US state laws, sector rules for finance and healthcare).

- Civil liability and insurance requirements that treat AI systems like medical devices or aircraft software.

- User and customer expectations. Merchants and consumers will not tolerate systems that systematically disadvantage certain groups or make inexplicable decisions.

The good news is that the technical work you already do (guardrails, bias audits, Constitutional AI, red teaming) is exactly the evidence regulators and courts want to see. Governance simply gives that work a home, a cadence, and a paper trail.

EU AI Act: High-Risk Classification in Practice

The EU AI Act uses a risk-based approach with four tiers. Only two of them create heavy obligations.

Prohibited practices (social scoring, most real-time remote biometric identification in public spaces for law enforcement, subliminal manipulation) are banned outright.

High-risk systems are listed in Annex III, alongside AI used as a safety component of products already regulated under the EU legislation in Annex I. The Annex III categories most relevant to LLM products include:

- Employment, worker management, and access to self-employment (resume screening, shift scheduling, performance evaluation).

- Credit scoring and creditworthiness assessment.

- Access to essential public and private services (housing, insurance, benefits).

- Law enforcement and migration.

- Administration of justice.

- Safety-critical systems that can affect physical or mental health (certain medical, transport, or critical infrastructure uses).

Limited risk covers chatbots and emotion recognition systems that must disclose they are AI.

Minimal risk covers most inventory forecasters, product recommendation widgets, and internal routing helpers.

For an LLM engineer the practical rule is: if your system is used in one of the high-risk contexts, even as a component, the high-risk obligations apply to your product.

ShipFlow's route optimizer, which can reroute drivers into dangerous conditions, and its financing scorer, which denies cash advances, should both be treated as high-risk. The customer support agent that only handles refunds is probably limited risk unless the company uses it to make automated decisions about essential services.

Classification checklist for your next project

- Does the output affect a person's access to employment, credit, housing, or essential services?

- Can a wrong output create physical safety risk (wrong route for hazardous goods, incorrect medical advice)?

- Is the system used by or on behalf of a public authority in a domain listed in Annex III?

- Will the system make or substantially influence a decision that has legal effect on a natural person?

If any answer is yes, treat the system as high-risk until legal counsel says otherwise. Document the classification decision and keep it with the model card.
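The checklist above can be encoded as a small helper so the classification decision is recorded the same way for every project. This is a minimal sketch: the class name, field names, and return strings are hypothetical, and the result is only a provisional flag for legal review, not a legal determination.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ClassificationAnswers:
    """Answers to the four checklist questions (hypothetical schema)."""
    affects_access_to_essentials: bool  # employment, credit, housing, essential services
    physical_safety_risk: bool          # a wrong output could endanger people
    public_authority_annex_iii: bool    # used by or for a public authority in an Annex III domain
    legal_effect_on_person: bool        # makes or substantially influences a decision with legal effect


def provisional_risk_tier(answers: ClassificationAnswers) -> str:
    """Flag the system as high-risk if any checklist answer is yes."""
    flags = (
        answers.affects_access_to_essentials,
        answers.physical_safety_risk,
        answers.public_authority_annex_iii,
        answers.legal_effect_on_person,
    )
    if any(flags):
        return "high-risk (provisional, pending legal review)"
    return "not flagged by checklist"


# ShipFlow's financing scorer: affects access to credit and has legal effect.
financing_scorer = ClassificationAnswers(True, False, False, True)
print(provisional_risk_tier(financing_scorer))
```

Storing the answers object next to the model card keeps the reasoning behind the classification, not just the outcome.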

Model Cards and Datasheets

A model card is a living document that travels with the model. The original framework[2] proposes a core set of sections; a card adapted for LLM products should contain at least:

- Model details (architecture, training data summary, version, license)

- Intended use and out-of-scope uses

- Performance and benchmarks, broken down by relevant subgroups

- Bias and fairness evaluation results

- Safety and red-team findings

- Known limitations and failure modes

- Environmental impact and compute used

- Contact and update policy

A datasheet[3] does the same job for the dataset: motivation, composition, collection process, preprocessing, labeling, distribution, maintenance, and recommended uses.

For high-risk systems the EU AI Act requires technical documentation that is essentially a detailed model card plus a risk management file. Keep both the card and the underlying datasheets under version control. Every time you retrain, add a new fine-tune, change the retrieval corpus, or change guardrails, create a new version of the card and note what changed.
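One way to make "a new version of the card with a note of what changed" mechanical is to treat the card as an immutable record and derive each new version from the previous one. The sketch below assumes a simplified, hypothetical `ModelCard` with only a few of the sections listed earlier; a real card would carry all of them and would normally live in version control as a file.

```python
from dataclasses import dataclass, field, replace
from datetime import date


@dataclass(frozen=True)
class ModelCard:
    """Simplified model card; field names are illustrative."""
    model_name: str
    version: int
    intended_use: str
    out_of_scope_uses: tuple[str, ...]
    known_limitations: tuple[str, ...]
    changelog: tuple[str, ...] = ()
    updated_on: date = field(default_factory=date.today)


def new_version(card: ModelCard, change_note: str, **changes) -> ModelCard:
    """Return the next card version; the previous card object is left untouched."""
    next_version = card.version + 1
    return replace(
        card,
        version=next_version,
        changelog=card.changelog + (f"v{next_version}: {change_note}",),
        updated_on=date.today(),
        **changes,
    )


card_v1 = ModelCard(
    model_name="financing-scorer",
    version=1,
    intended_use="Rank merchant cash-advance requests for human review",
    out_of_scope_uses=("Consumer credit decisions",),
    known_limitations=("Sparse data for merchants under six months old",),
)
card_v2 = new_version(
    card_v1,
    "Retrained on newer sales data; guardrail thresholds changed",
    known_limitations=card_v1.known_limitations + ("Not yet re-audited for postal-code bias",),
)
```

Because the dataclass is frozen, an old version cannot be edited in place, which mirrors the requirement that every change produces a new, traceable card.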

The bias and fairness metrics you compute in the dedicated bias lesson become the "Fairness evaluation" section of the card. The red-team results you will see in the Constitutional AI lesson become the "Safety evaluation" section. Governance is the glue that forces these technical artifacts to exist and stay current.

Auditability for Agents

General-purpose chat models are hard enough to audit. Agents that call tools, maintain state across turns, and act in the real world are much harder.

An audit log for a production agent must let a reviewer reconstruct exactly what happened and why. At minimum it must record:

- The exact prompt template and its version hash

- The model checkpoint or API version used

- All retrieved context (document IDs or vector IDs, not just text)

- Every tool call: name, arguments, result, latency, and whether the guardrail allowed it

- Any intermediate reasoning or plan the agent produced

- The final output shown to the user

- All guardrail decisions (input blocked, output rewritten, human review requested)

- User identifier (hashed or tokenized), session ID, and precise timestamp

- The constitutional principles or policy version active at the time

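Store these records in an append-only store with cryptographic integrity (object storage with object lock, or a database with row-level security and immutable history). For high-risk systems you must be able to produce the relevant slice of logs within days of a regulator request. Confirm the retention period with counsel: the Act requires automatically generated logs to be kept for at least six months and technical documentation for 10 years, and other applicable law may demand longer.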

Many teams start with structured JSON logs written to a dedicated topic or table, then add a nightly job that hashes the day's batch and writes the hash to a tamper-evident ledger. This is the minimum that satisfies both incident response and regulatory record-keeping.
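The structured-record-plus-nightly-hash pattern described above might look like the following. This is a sketch under stated assumptions: the field names are hypothetical, the record omits the intermediate reasoning field for brevity, and the digest is chained to the previous day's digest so that altering an old batch changes every later digest. Writing the digest to a tamper-evident ledger is left out.

```python
import hashlib
import json
from datetime import datetime, timezone


def make_audit_record(*, session_id, user_token, prompt_template, model_id,
                      retrieved_ids, tool_calls, guardrail_decisions,
                      final_output, policy_version):
    """Build one structured audit record (illustrative field names)."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "session_id": session_id,
        "user_token": user_token,  # assumed to be hashed or tokenized upstream
        "prompt_template_sha256": hashlib.sha256(
            prompt_template.encode("utf-8")).hexdigest(),
        "model_id": model_id,
        "retrieved_ids": list(retrieved_ids),
        # Each tool call: name, arguments, result, latency_ms, guardrail_allowed
        "tool_calls": list(tool_calls),
        "guardrail_decisions": list(guardrail_decisions),
        "final_output": final_output,
        "policy_version": policy_version,
    }


def batch_digest(records, previous_digest=""):
    """Hash one day's records, chained to the previous day's digest."""
    h = hashlib.sha256(previous_digest.encode("utf-8"))
    for record in records:
        # Canonical JSON so the same record always produces the same bytes.
        canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
        h.update(canonical.encode("utf-8"))
    return h.hexdigest()


record = make_audit_record(
    session_id="s-001",
    user_token="u-7f3a",
    prompt_template="You are ShipFlow's refund assistant.",
    model_id="example-model-2025-01",
    retrieved_ids=["doc-12", "doc-98"],
    tool_calls=[{"name": "issue_refund", "arguments": {"order_id": "A1"},
                 "result": "denied", "latency_ms": 41, "guardrail_allowed": False}],
    guardrail_decisions=["human_review_requested"],
    final_output="Your request has been sent to a human reviewer.",
    policy_version="policy-v3",
)
today_digest = batch_digest([record])
```

A reviewer can later recompute the digest from the stored records and compare it with the ledger entry; any mismatch shows the batch was altered.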

Red-Teaming Programs

Red teaming is the systematic attempt to make the system fail in ways that matter: jailbreaks that bypass safety, biased or toxic outputs on protected groups, agent actions that cause real harm (refund fraud, dangerous routing instructions, data leakage through tool misuse), and goal hijacking across multi-turn conversations.

A production red-teaming program has four parts:

- Scope and threat model. Write down the attacker personas and the assets they want to reach. For ShipFlow this includes a malicious merchant trying to get an inflated cash advance, a disgruntled driver trying to force a dangerous route, and an external attacker trying to extract other merchants' financial data through the support agent.

- Methods. Combine automated adversarial prompt generation (the techniques you will see in the Constitutional AI article), human red teamers, and bug-bounty programs. Always test the full production stack, not just the base model: retrieval, guardrails, tool policies, and human-in-the-loop escalation must all be in the firing line.

- Metrics. Track Attack Success Rate (ASR), the percentage of attacks that produce the bad outcome, and False Refusal Rate (FRR) on benign traffic. Both matter. A system that refuses 30 % of legitimate refund requests to achieve 0 % ASR is not a success.

- Feedback loop. Every successful attack must create or update an entry in the organizational risk register, trigger a guardrail or constitutional principle update, and appear in the next version of the model card.

Red teaming is not a one-off exercise before launch. It is a quarterly (or continuous) program whose results are reviewed by the same governance body that owns the risk register.
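The two metrics from the program above are simple ratios: ASR is successful attacks divided by total attack attempts, and FRR is refused benign requests divided by total benign requests. A minimal sketch follows; the function names and the sample counts are illustrative, not results from a real red-team run.

```python
def attack_success_rate(attack_succeeded):
    """Fraction of attack attempts that produced the bad outcome.

    attack_succeeded: iterable of booleans, True when the attack worked.
    """
    outcomes = list(attack_succeeded)
    if not outcomes:
        raise ValueError("no attack attempts recorded")
    return sum(outcomes) / len(outcomes)


def false_refusal_rate(benign_refused):
    """Fraction of benign requests the system wrongly refused.

    benign_refused: iterable of booleans, True when a legitimate request was refused.
    """
    outcomes = list(benign_refused)
    if not outcomes:
        raise ValueError("no benign requests recorded")
    return sum(outcomes) / len(outcomes)


# Illustrative quarter: 200 attack attempts with 6 successes,
# 1000 benign refund requests with 45 wrongly refused.
attacks = [True] * 6 + [False] * 194
benign = [True] * 45 + [False] * 955

asr = attack_success_rate(attacks)
frr = false_refusal_rate(benign)
print(f"ASR = {asr:.1%}, FRR = {frr:.1%}")
```

Reporting both numbers side by side each quarter makes it visible when a drop in ASR was bought with a rise in FRR.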

Liability and Who Actually Pays

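The EU AI Act places the heaviest obligations on the "provider", the legal person that develops an AI system (or has one developed) and places it on the EU market or puts it into service under its own name or trademark. A "deployer" is the organization that uses the system under its own authority. If ShipFlow fine-tunes an open model or wraps a closed model with its own retrieval and tools and offers the result to EU merchants under its own name, ShipFlow is the provider of that system for the high-risk use cases, and also the deployer where it operates the system itself.

The general-purpose model provider (OpenAI, Anthropic, Meta, etc.) has its own, separate obligations for the underlying model. But when a downstream company puts its name on a high-risk system, substantially modifies it, or uses it for a high-risk purpose outside the model provider's documented intended use, the provider obligations for that system, and much of the liability, shift downstream to that company.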

Key practical consequences:

- You must be able to show the provider's intended-use documentation and prove that your deployment stays inside it or that you added compensating controls.

- Contracts with model providers should allocate responsibility for high-risk documentation and testing.

- Insurance underwriters now ask for model cards, red-team reports, and risk-register excerpts before they will cover AI products.

Documentation is your best defense. If you can produce a current model card, a complete risk register, and the last four quarters of red-team results, you have already won half the argument in any regulatory or liability conversation.

Accessibility Is a Governance Issue

Accessibility requirements appear in both the EU AI Act (transparency and human oversight) and the European Accessibility Act. For LLM systems this means more than making the web interface WCAG compliant.

The generated outputs themselves must be usable by people with disabilities:

- Clear, non-figurative language for users with cognitive disabilities.

- No reliance on color alone or on visual patterns that could trigger seizures.

- Alternative text or structured data when the model describes images or charts.

- Avoidance of stereotypes or lower-quality service for users who disclose disability in the conversation.

Bias against disabled groups is both a fairness failure (see the bias lesson) and an accessibility failure. Add disability-related test cases to your bias audits and red-team scope. Log whether the agent offered appropriate accommodations when a user requested them.

The Organizational AI Risk Register

All of the above only becomes governance when it lives in one place that the company actually reviews on a cadence.

A minimal viable risk register for AI systems contains these columns for each identified risk:

- Risk ID and short title

- Description and affected stakeholder

- Risk category (safety, fairness/bias, privacy, reliability and robustness, compliance, accessibility, reputational)

- Inherent likelihood and impact (before controls)

- Existing controls (guardrails version, red-team coverage, model-card status, human oversight process)

- Residual likelihood and impact

- Risk owner (named person or team)

- Mitigation plan and due date

- Last reviewed and next scheduled review

- Regulatory reference (EU AI Act article, internal policy)

ShipFlow's register might contain entries such as:

- "Route optimizer suggests unsafe roads for hazardous cargo"
  Category: Safety (High inherent risk)
  Mitigations: Guardrails + human dispatcher review + quarterly red team
  Residual risk: Medium
  Owner: Safety engineering
  Next review: 2026-Q3

- "Financing scorer disadvantages merchants in certain postal codes"
  Category: Fairness (High inherent risk)
  Mitigations: Bias audit + model card section + appeal process
  Residual risk: Low
  Owner: Fairness lead
  Next review: After next training run

- "Support agent can be jailbroken into issuing fraudulent refunds"
  Category: Robustness / Compliance (Medium inherent risk)
  Mitigations: Prompt injection defenses + output guardrails + constitutional principles
  Residual risk: Low
  Owner: Trust & safety
  Next review: After next red-team cycle

The register is reviewed by an AI governance board (or the existing risk committee) at least quarterly. New use cases, major model updates, or external incidents trigger ad-hoc reviews. The register is the single source of truth that regulators, auditors, and the board will ask to see first.
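A register entry with inherent and residual likelihood × impact scores can be modeled as a small record. The sketch below makes several assumptions that are not in the text: a 1 to 5 scale for likelihood and impact, band thresholds of 15 and 8, and hypothetical field names. Calibrate the scale and thresholds to your own risk methodology.

```python
from dataclasses import dataclass
from datetime import date


def band(score: int) -> str:
    """Map a likelihood x impact score (1 to 25) to a band; thresholds are assumptions."""
    if score >= 15:
        return "High"
    if score >= 8:
        return "Medium"
    return "Low"


@dataclass
class RiskEntry:
    risk_id: str
    title: str
    category: str
    inherent_likelihood: int  # 1 (rare) to 5 (almost certain), before controls
    inherent_impact: int      # 1 (negligible) to 5 (severe), before controls
    residual_likelihood: int  # after controls
    residual_impact: int      # after controls
    controls: tuple[str, ...]
    owner: str
    next_review: date

    def __post_init__(self):
        for name in ("inherent_likelihood", "inherent_impact",
                     "residual_likelihood", "residual_impact"):
            value = getattr(self, name)
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {value}")

    @property
    def inherent_band(self) -> str:
        return band(self.inherent_likelihood * self.inherent_impact)

    @property
    def residual_band(self) -> str:
        return band(self.residual_likelihood * self.residual_impact)

    def is_overdue(self, today: date) -> bool:
        """True when the scheduled review date has passed."""
        return today > self.next_review


route_risk = RiskEntry(
    risk_id="R-001",
    title="Route optimizer suggests unsafe roads for hazardous cargo",
    category="Safety",
    inherent_likelihood=4, inherent_impact=5,
    residual_likelihood=2, residual_impact=5,
    controls=("Guardrails", "Human dispatcher review", "Quarterly red team"),
    owner="Safety engineering",
    next_review=date(2026, 9, 30),
)
print(route_risk.inherent_band, "->", route_risk.residual_band)
```

With these assumed scores the entry moves from High (4 × 5 = 20) to Medium (2 × 5 = 10), matching the route-optimizer example above. The `is_overdue` check is what a scheduled job would use to surface entries that missed their review.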

Pre-Deployment Governance Checklist

Use this checklist before any LLM system that touches a high-risk or limited-risk use case goes live:

- Risk classification documented and signed by legal + product owner

- Model card (v1) published internally with intended use, bias results, and known limitations

- Datasheet(s) for all training and evaluation data referenced from the card

- Agent audit log schema implemented and tested with sample queries

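- Logs flowing to immutable store with a retention policy that meets the applicable minimum for high-risk systems (at least six months for logs under the Act, 10 years for technical documentation, longer where other law requires)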

- Red-team plan written, first round executed, findings in risk register

- Guardrails + Constitutional AI principles cover the top risks identified in the register

- Human oversight / appeal process defined and staffed for high-risk decisions

- Accessibility test cases run and results in the model card

- Risk register entry created with owner and review date

- Contractual allocation of liability reviewed with legal

- Insurance broker has seen the current model card and red-team summary

If any item is missing, the system is not ready for production in a regulated environment.
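The "any missing item blocks release" rule can be enforced as a simple gate in a release pipeline. This is a sketch only: the item keys are hypothetical short names for the twelve checklist lines above, and how each item gets marked complete (sign-off record, ticket, file in the repository) is left to your process.

```python
# Hypothetical short keys, one per checklist line above, in the same order.
REQUIRED_ITEMS = (
    "risk_classification_signed",
    "model_card_v1_published",
    "datasheets_referenced",
    "audit_log_schema_tested",
    "logs_in_immutable_store",
    "red_team_first_round_done",
    "guardrails_cover_top_risks",
    "human_oversight_staffed",
    "accessibility_tests_run",
    "risk_register_entry_created",
    "liability_allocation_reviewed",
    "insurer_briefed",
)


def missing_items(completed):
    """Return the required items not yet completed, in checklist order."""
    done = set(completed)
    return [item for item in REQUIRED_ITEMS if item not in done]


def ready_for_production(completed):
    """The system is ready only when no required item is missing."""
    return not missing_items(completed)


# Example: everything done except the red-team round and the insurer briefing.
done_so_far = set(REQUIRED_ITEMS) - {"red_team_first_round_done", "insurer_briefed"}
print(missing_items(done_so_far))
print(ready_for_production(done_so_far))
```

Returning the list of missing items, rather than only a yes or no, gives the release owner something concrete to act on.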

Connecting the Pieces

The bias and fairness work you do produces the numbers that go into the model card. The guardrails and prompt-injection defenses you build become the "existing controls" column in the risk register. The Constitutional AI principles and automated red teaming you will study next become the scalable way to keep the safety section of the card and the register up to date without hiring an army of human reviewers for every new model release.

Governance does not replace those technical practices. It makes them visible, repeatable, and defensible.

What You Should Do This Week

- Pick one LLM system your team owns or is building. Run the four-question classification checklist above and write the one-page classification memo.

- Create the first version of its model card using the Mitchell structure. Even if the card is only 60 % complete, the act of writing it will surface gaps in your current testing.

- Add the three highest risks you can think of to a shared risk-register spreadsheet and assign owners.

- Schedule the first quarterly red-team session and invite both the guardrail owners and the people who will have to defend the system in an audit.

These four actions cost almost nothing and immediately move your organization from "we care about responsible AI" to "we can prove we manage it."

Evaluation Rubric

1. Correctly classifies an LLM use case (e.g. delivery routing agent or merchant credit scorer) as high-risk under EU AI Act Annex III criteria

2. Lists the core sections of a model card (intended use, performance, bias/fairness, safety, limitations) and explains why each supports auditability

3. Designs minimal viable audit logs for an agent: every tool call, prompt version hash, model ID, user context, and decision timestamp

4. Outlines a red-teaming program that combines automated adversarial generation with human review and feeds findings into both guardrails and the risk register

5. Explains the difference in liability between a general-purpose LLM provider and a company that fine-tunes or deploys the model in a high-risk domain

6. Builds a living risk register entry that includes likelihood × impact, mitigation owner, residual risk after guardrails + red teaming, and review cadence

7. Connects technical controls (guardrails, Constitutional AI) to regulatory obligations (documentation, human oversight, robustness testing)

Common Pitfalls

- Treating governance as a one-time compliance checkbox instead of a living process with quarterly risk register reviews and red-team cycles

- Building beautiful model cards that are never updated after the initial release or after significant fine-tuning / guardrail changes

- Logging only final outputs while omitting the full agent trace (tool calls, intermediate reasoning, prompt versions), making incident investigation impossible

- Running red-teaming only on the base model and forgetting to test the production stack with guardrails, retrieval, and tool-use policies enabled

- Assuming 'we are not in the EU' removes obligations. The Act also reaches providers and deployers established outside the EU when the system is placed on the EU market or its output is used in the Union

- Ignoring accessibility until after launch: biased or inaccessible outputs for users with disabilities create both ethical and legal exposure

Key Concepts Tested

- EU AI Act risk categories and high-risk classification criteria

- Model cards vs datasheets for transparency and accountability

- Auditability requirements for LLM agents (decision logs, tool traces, prompt hashes)

- Red-teaming program design, metrics (ASR, FRR), and integration with guardrails

- Liability allocation between providers, deployers, and users under EU rules

- Accessibility obligations and disability bias in generative systems

- Organizational AI risk registers and living governance processes

- Connection between technical guardrails, Constitutional AI principles, and compliance documentation

- Record-keeping obligations for high-risk systems (10-year documentation retention, log retention)

- Human oversight and appeal mechanisms for automated decisions

Next Step

Next: Continue to Data Labeling, Human Feedback, and Active Learning Systems

Governance tells you what evidence, provenance, and human oversight must exist. The next chapter applies those requirements to the data pipeline itself, showing how active learning, annotator quality controls, and versioned preference datasets turn human judgment into trustworthy training signal.

References

[1] EU AI Act: Regulation laying down harmonised rules on artificial intelligence
European Parliament and Council of the European Union · 2024

[2] Model Cards for Model Reporting
Mitchell, M., Wu, S., Zaldivar, A., et al. · 2019 · FAT* 2019

[3] Datasheets for Datasets
Gebru, T., Morgenstern, J., Vecchione, B., et al. · 2021 · Communications of the ACM

[4] Artificial Intelligence Risk Management Framework (AI RMF 1.0)
National Institute of Standards and Technology · 2023

[5] Constitutional AI: Harmlessness from AI Feedback
Bai, Y., et al. · 2022 · arXiv preprint

[6] Red Teaming Language Models with Language Models
Perez, E., et al. · 2022 · EMNLP 2022

[7] Training Language Models to Follow Instructions with Human Feedback (InstructGPT)
Ouyang, L., et al. · 2022 · NeurIPS 2022

[8] NeMo Guardrails: A Toolkit for Controllable and Safe LLM Applications with Programmable Rails
Rebedea, T., et al. · 2023 · EMNLP 2023 Demo