Mechanistic Interpretability

Discover how sparse autoencoders turn polysemantic LLM activations into monosemantic, human-interpretable features. Master SAE training, feature circuits, superposition, and use them for activation steering and safety analysis, including a complete NumPy toy SAE on synthetic transformer activations.

Mechanistic Interpretability & Sparse Autoencoders (SAEs) for LLMs

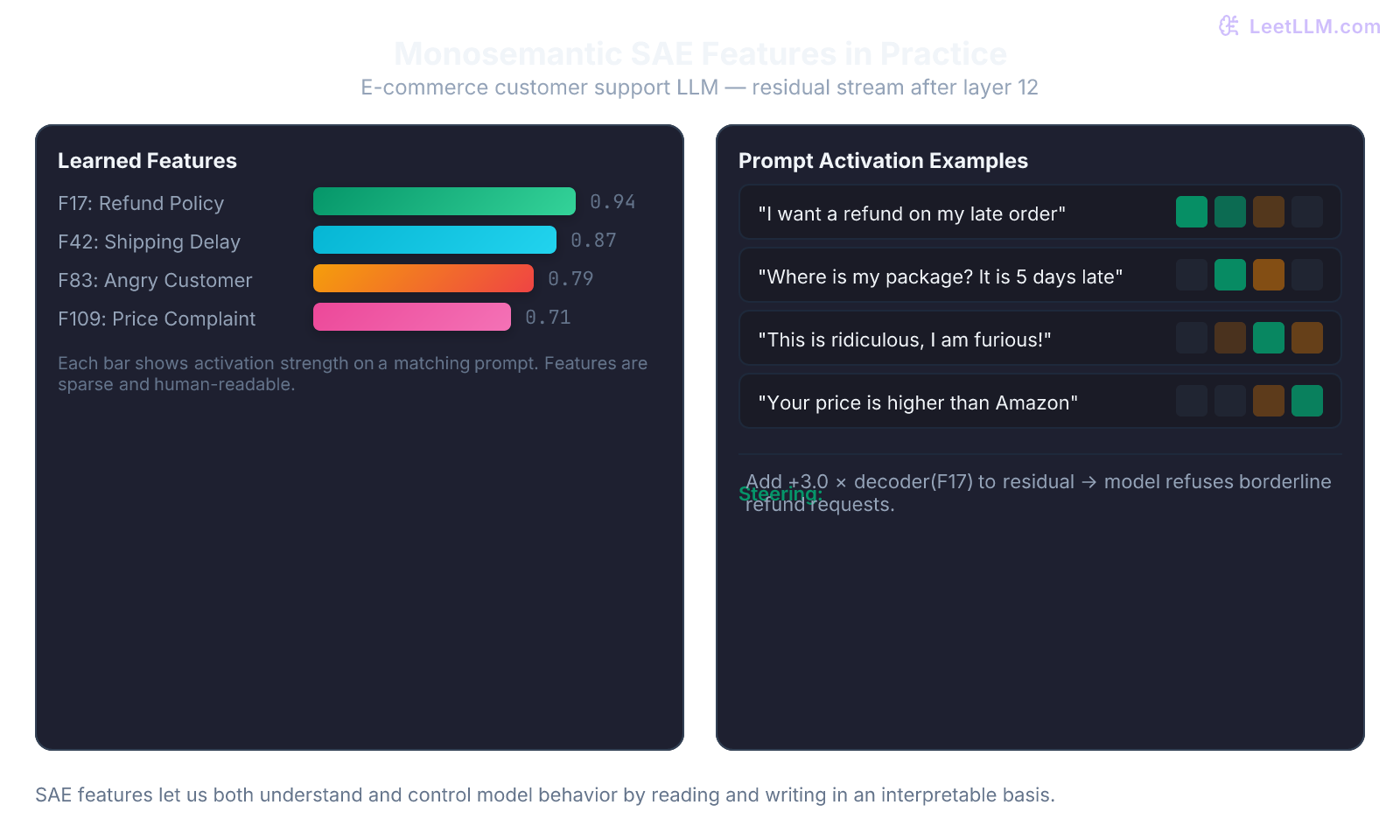

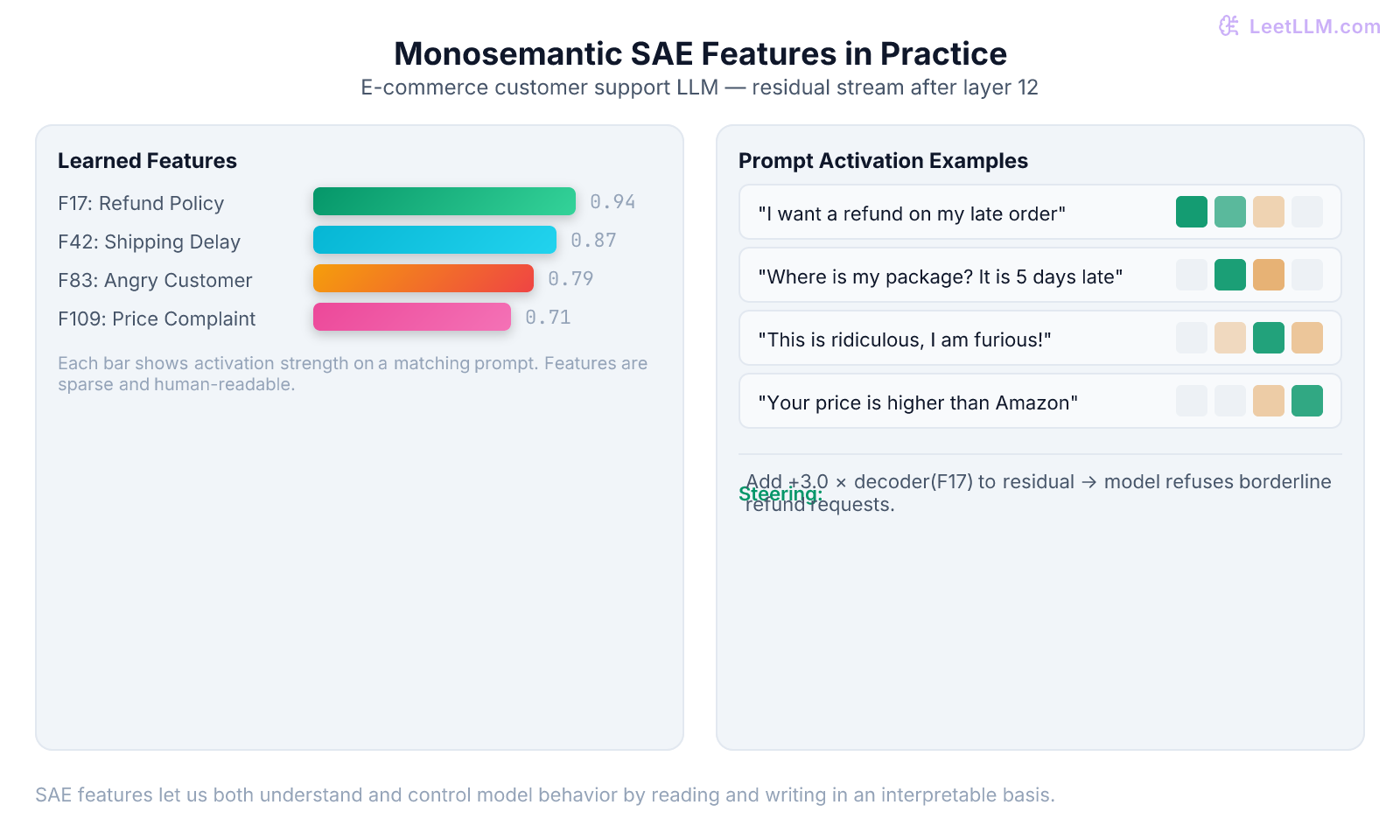

A customer-support LLM at an online retailer keeps refusing legitimate refund requests for delayed packages. When you inspect the attention heads and MLP neurons that fire on those conversations, dozens of neurons light up in confusing combinations. One neuron activates both on "refund requested" and on "angry customer tone" and on "high-value order." Another neuron fires for "shipping delay" and also for "price complaint." You cannot point to a clean "refund policy feature."

This is the core problem of polysemanticity inside large language models. Individual neurons (or directions in activation space) do not correspond to single human concepts. They participate in many unrelated computations at once because the model packs far more features into its hidden state than it has dimensions. The phenomenon is called superposition.

Mechanistic interpretability researchers at Anthropic and elsewhere discovered a practical way to untangle these representations: train a sparse autoencoder (SAE) on the model's activations. The SAE learns an overcomplete dictionary of directions that are far more monosemantic. One recovered feature now fires almost exclusively on refund-policy language, another on shipping-delay mentions, and a third on expressions of customer anger. Once you have those features, you can both read the model's internal "thoughts" and steer them by adding or subtracting the corresponding direction.

This lesson teaches you exactly how SAEs work, why they succeed where raw neurons fail, how to train a minimal version yourself in NumPy, and how the resulting features are already being used for safety analysis and activation steering in production-grade systems.

The Problem: Polysemantic Neurons and Superposition

In a transformer, every residual-stream vector or MLP activation is a linear combination of many underlying concepts. When the number of concepts the model needs to represent exceeds the number of available dimensions, the network is forced to use non-orthogonal directions that interfere with one another. The same neuron can therefore participate in multiple unrelated computations.

The 2022 "Toy Models of Superposition" paper demonstrated this with tiny ReLU networks trained on synthetic sparse data. When the data contained more ground-truth features than hidden units, the network learned to represent the extra features in superposition: each hidden unit became a linear combination of several features, and each feature was represented across several units. The result was exactly the polysemantic neurons observed in real language models.[1]

Direct inspection of weights or neuron activations therefore gives you a hopelessly entangled view. A single direction might be "refund + anger + high-value customer." You cannot tell which part of the mixture the model is actually using at any moment.
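To make the interference concrete, here is a minimal NumPy sketch. It assumes random unit-norm feature directions (an illustrative stand-in, not directions taken from any real model) and shows that when 32 features share 8 dimensions, reading one feature back with a dot product also picks up spurious signal on the others.

```python
import numpy as np

rng = np.random.default_rng(0)
d_model, n_features = 8, 32  # more features than dimensions

# Random unit-norm feature directions (rows). With n_features > d_model
# they cannot all be mutually orthogonal.
features = rng.normal(size=(n_features, d_model))
features /= np.linalg.norm(features, axis=1, keepdims=True)

# Pairwise cosine similarities; off-diagonal entries measure interference.
cos = features @ features.T
off_diag = np.abs(cos[~np.eye(n_features, dtype=bool)])
print(f"mean |cos| between distinct features: {off_diag.mean():.3f}")
print(f"max  |cos| between distinct features: {off_diag.max():.3f}")

# Activate a single feature and read every feature back with a dot product.
x = 1.0 * features[0]
readout = features @ x
print(f"readout of the active feature: {readout[0]:.3f}")
print(f"largest spurious readout:      {np.abs(readout[1:]).max():.3f}")

# A single coordinate ("neuron") receives weight from many features.
neuron0_weights = features[:, 0]
n_contributing = int((np.abs(neuron0_weights) > 0.2).sum())
print(f"features with |weight| > 0.2 on neuron 0: {n_contributing}")
```

The non-zero spurious readouts are the toy analogue of a neuron that seems to respond to several unrelated concepts at once.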

How Sparse Autoencoders Recover Monosemantic Features

A sparse autoencoder attacks the entanglement problem with classic dictionary learning. You treat the model's activation vectors (x \in \mathbb{R}^{d_\text{model}}) as observations that are actually sparse linear combinations of a much larger set of "true" feature directions.

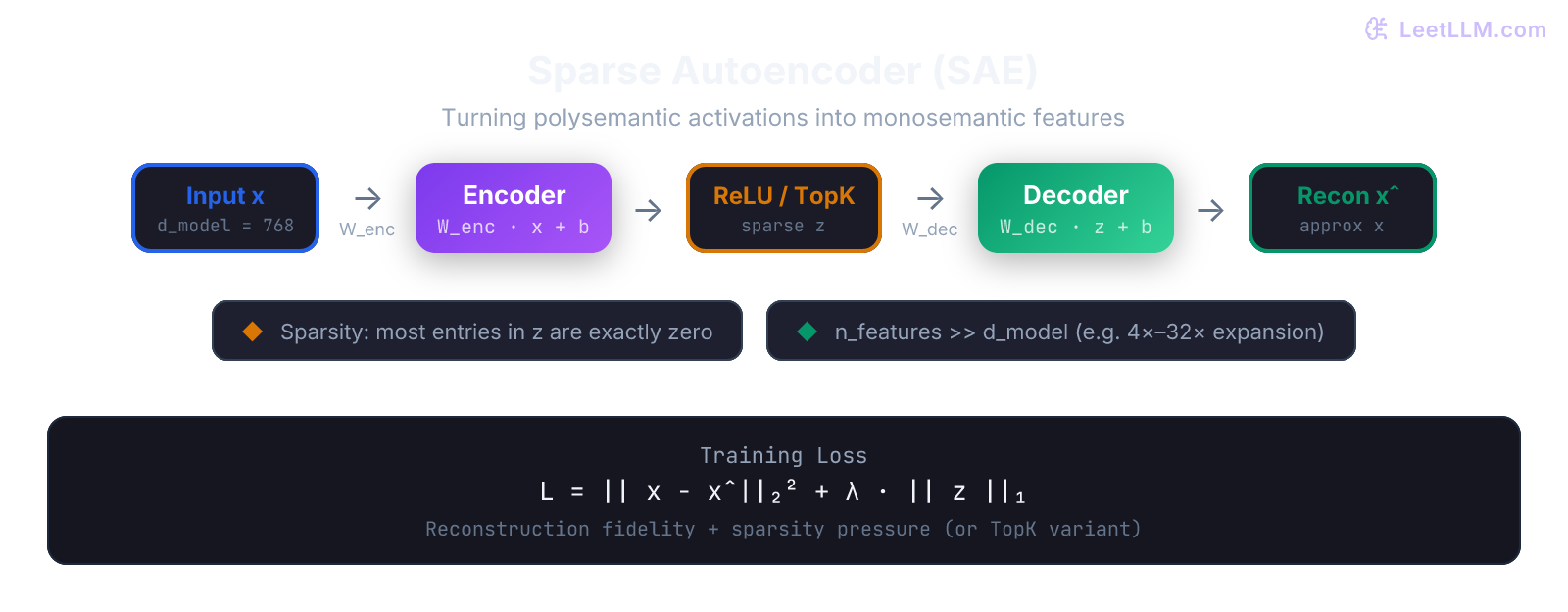

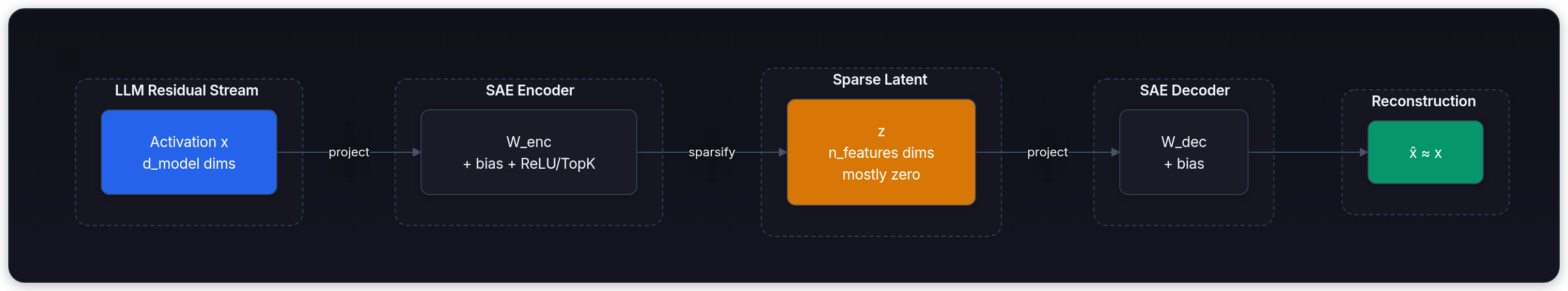

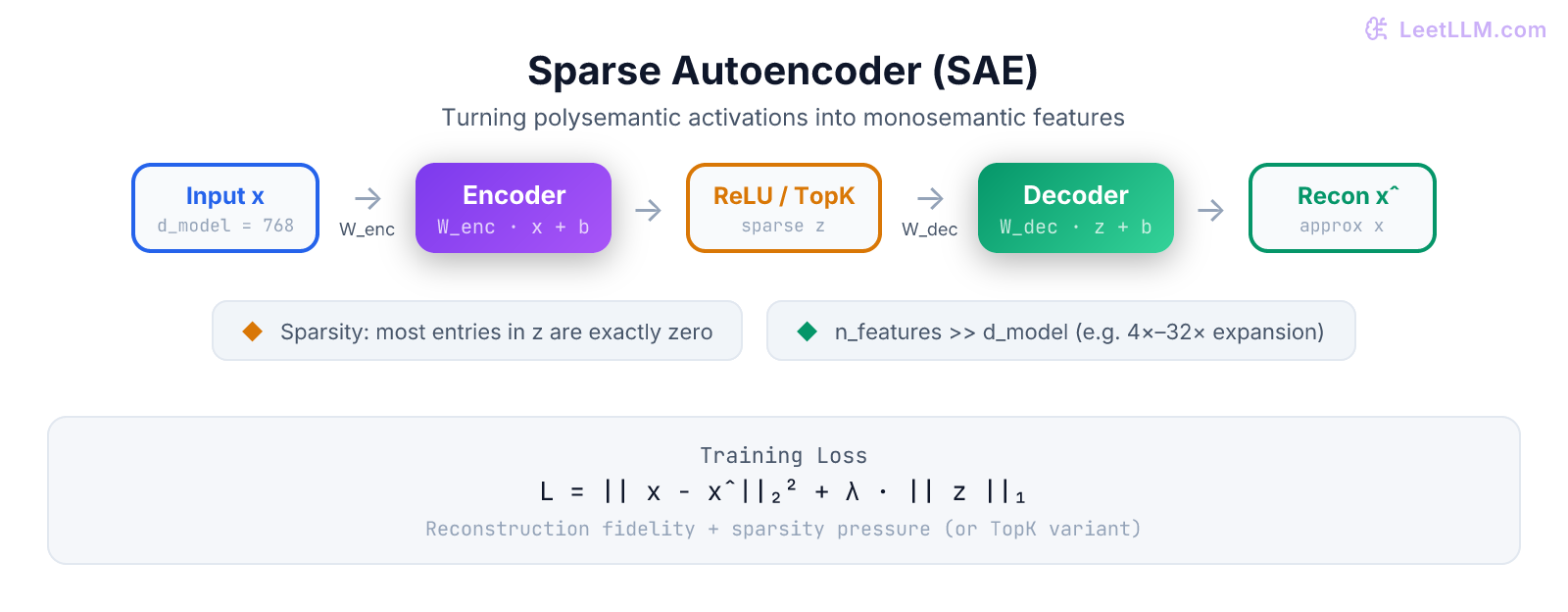

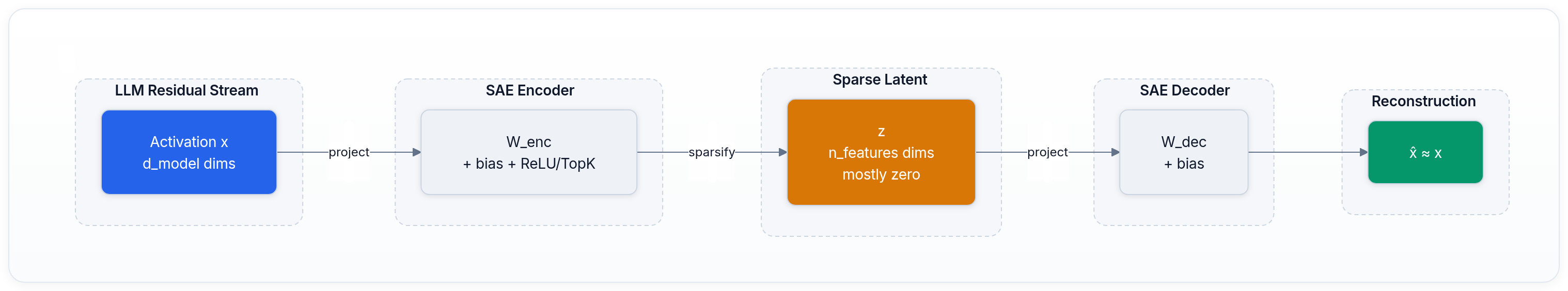

An SAE consists of two linear maps plus a non-linearity:

- Encoder: ( z = f(W_\text{enc} x + b_\text{enc}) ) where ( W_\text{enc} \in \mathbb{R}^{n_\text{features} \times d_\text{model}} ) with ( n_\text{features} \gg d_\text{model} ) (typical expansion factors are 4× to 32×) and ( f ) is ReLU or a TopK operation.

- Decoder: ( \hat{x} = W_\text{dec} z + b_\text{dec} )

Training minimizes a loss that has two competing terms:

( \mathcal{L} = \| x - \hat{x} \|_2^2 + \lambda \| z \|_1 )

(or the TopK variant, which drops the L1 term and instead keeps only the (k) largest entries of (z) and zeros the rest). The reconstruction term pushes the SAE to preserve the information in the original activation. The sparsity term forces the latent code (z) to be sparse, so each active coordinate in (z) must carry as much meaning as possible. When the activations really are sparse combinations of a fixed set of directions, the cheapest way to satisfy both constraints is for the learned columns of (W_\text{dec}) (the feature directions) to align with those underlying directions.
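The encoder, decoder, and reconstruction-plus-sparsity objective described above can be sketched in a few lines of NumPy. This is an illustrative sketch, assuming a squared-error reconstruction term and an L1 penalty on (z) weighted by a coefficient called `lam` here; the function names and the random weights are hypothetical.

```python
import numpy as np

def sae_forward(x, W_enc, b_enc, W_dec, b_dec):
    """ReLU SAE forward pass. x has shape (batch, d_model)."""
    z = np.maximum(0.0, x @ W_enc.T + b_enc)   # (batch, n_features)
    x_hat = z @ W_dec.T + b_dec                # (batch, d_model)
    return z, x_hat

def sae_loss(x, z, x_hat, lam):
    """Batch mean of per-sample squared error plus lam times per-sample L1 norm."""
    recon = np.sum((x - x_hat) ** 2, axis=1).mean()
    sparsity = np.sum(np.abs(z), axis=1).mean()
    return recon + lam * sparsity, recon, sparsity

rng = np.random.default_rng(0)
d_model, n_features, batch = 8, 128, 4
W_enc = rng.normal(0, 0.1, (n_features, d_model))
W_dec = rng.normal(0, 0.1, (d_model, n_features))
b_enc, b_dec = np.zeros(n_features), np.zeros(d_model)
x = rng.normal(size=(batch, d_model))      # stand-in for model activations

z, x_hat = sae_forward(x, W_enc, b_enc, W_dec, b_dec)
total, recon, sparsity = sae_loss(x, z, x_hat, lam=0.03)
print(f"total={total:.4f} recon={recon:.4f} l1={sparsity:.4f}")
```

Raising `lam` trades reconstruction accuracy for fewer active latents; that trade-off is the knob the rest of the lesson keeps returning to.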

Where to Attach SAEs Inside a Transformer

Researchers attach SAEs at several natural "hook points":

- Residual stream immediately after an attention block or after the MLP block.

- The output of the MLP (the "MLP SAE").

- Attention head outputs or key/query/value projections (more experimental).

The residual-stream SAE after mid-to-late layers tends to capture high-level concepts such as "this token is part of a refund request" or "the user is expressing frustration with shipping." MLP SAEs often surface more factual or lexical features. By training separate SAEs at every layer you obtain a layered "feature dictionary" that lets you trace how concepts are refined as information flows through the network.
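To show where those hook points sit, the sketch below runs a single toy transformer block in NumPy and caches the activation at each location. It is deliberately simplified: one attention head, no LayerNorm, random weights. The cache key names (`resid_pre`, `attn_out`, `resid_mid`, `mlp_out`, `resid_post`) are illustrative labels chosen for this example, not the API of any particular library.

```python
import numpy as np

rng = np.random.default_rng(0)
seq_len, d_model, d_mlp = 5, 8, 32

def softmax(a):
    a = a - a.max(axis=-1, keepdims=True)
    e = np.exp(a)
    return e / e.sum(axis=-1, keepdims=True)

# Random weights standing in for a trained block (one head, no LayerNorm).
W_q, W_k, W_v, W_o = (rng.normal(0, 0.3, (d_model, d_model)) for _ in range(4))
W_in = rng.normal(0, 0.3, (d_model, d_mlp))
W_out = rng.normal(0, 0.3, (d_mlp, d_model))

def block_with_cache(resid_pre):
    """Run one toy block and record the activation at each hook point."""
    cache = {"resid_pre": resid_pre}
    q, k, v = resid_pre @ W_q, resid_pre @ W_k, resid_pre @ W_v
    scores = q @ k.T / np.sqrt(d_model)
    future = np.triu(np.ones((seq_len, seq_len), dtype=bool), k=1)
    scores = np.where(future, -np.inf, scores)            # causal mask
    cache["attn_out"] = softmax(scores) @ v @ W_o
    cache["resid_mid"] = resid_pre + cache["attn_out"]    # residual after attention
    cache["mlp_out"] = np.maximum(0, cache["resid_mid"] @ W_in) @ W_out
    cache["resid_post"] = cache["resid_mid"] + cache["mlp_out"]  # residual after MLP
    return cache["resid_post"], cache

tokens_embedded = rng.normal(size=(seq_len, d_model))
_, cache = block_with_cache(tokens_embedded)
for name, act in cache.items():
    print(f"{name:10s} shape={act.shape}")
```

Each cached array is a candidate training set for its own SAE: `resid_mid` and `resid_post` correspond to residual-stream SAEs, and `mlp_out` to an MLP SAE.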

Feature Circuits: From Features to Algorithms

Once you have monosemantic features at multiple layers, you can study feature circuits - the sparse subgraphs that connect features across layers. Because each SAE feature has a decoder vector in the residual stream of its layer and an encoder vector in the next layer, you can compose them and measure which earlier features causally influence later ones.

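Circuit analyses in this research thread, some of which predate SAEs and were first carried out on attention heads and MLPs in small models such as GPT-2 small, have recovered recognizable algorithms that feature-level circuits aim to re-express, such as: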

- The indirect-object identification circuit (used when the model answers "Who did X give the Y to?").

- Greater-than / less-than numerical comparison circuits.

- Refusal and sycophancy circuits that are directly relevant to safety.

These circuits are written in the language of named features rather than opaque neuron indices. That is the promise of mechanistic interpretability: turning the transformer from a black box into a white-box program whose individual instructions you can read, edit, and verify.
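The composition of an upstream decoder vector with a downstream encoder vector can be sketched as a single matrix product. The example below uses random stand-in weights for two hypothetical SAEs and only captures the direct path through the residual stream; it ignores any attention, MLP, or normalization layers in between, so a large entry is a hypothesis to test with an intervention, not proof of a causal link.

```python
import numpy as np

rng = np.random.default_rng(0)
d_model, n_up, n_down = 8, 16, 16

# Hypothetical SAE weights: decoder of an upstream-layer SAE and encoder of a
# downstream-layer SAE, both reading and writing the same residual stream.
W_dec_up = rng.normal(size=(d_model, n_up))
W_dec_up /= np.linalg.norm(W_dec_up, axis=0, keepdims=True)
W_enc_down = rng.normal(size=(n_down, d_model))

# virtual[j, i]: how much one unit of upstream feature i changes the
# pre-activation of downstream feature j along the direct residual path.
virtual = W_enc_down @ W_dec_up          # (n_down, n_up)

# Strongest upstream contributors to one downstream feature.
j = 3
top_upstream = np.argsort(-np.abs(virtual[j]))[:3]
for i in top_upstream:
    print(f"upstream {i:2d} -> downstream {j}: {virtual[j, i]:+.3f}")
```

Keeping only the largest entries of `virtual` gives a sparse candidate graph between the two feature dictionaries, which is the starting point for a feature-circuit analysis.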

Activation Steering with SAE Features

The same feature directions that give you interpretability also give you control. After you have a trained SAE, you can perform activation steering at inference time without touching any weights:

- Run the prompt and cache the residual-stream activations at the layer where your target SAE lives.

- Encode those activations with the SAE encoder to obtain the sparse feature vector (z).

- Add a multiple of the decoder vector of a chosen feature (j): (x_\text{steered} = x + \alpha \cdot W_\text{dec}[:, j]), where (\alpha) sets the steering strength.

- Continue the forward pass with the modified activation.

If feature 17 is the "refund-policy refusal" feature, adding (+2.5 \times W_\text{dec}[:,17]) pushes the model toward refusing a borderline refund, and subtracting the same direction pushes it toward leniency. The effect tends to be fairly clean when the feature direction has little overlap with unrelated concepts, but because features in superposition are not exactly orthogonal, large values of (\alpha) can disturb other behaviors.
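The steering steps above can be sketched on a single activation vector. This toy example assumes an SAE with unit-norm decoder columns and an encoder tied to the decoder transpose (both simplifications made for illustration); the vector `x` is a random stand-in for a cached residual-stream activation, and feature 17 carries no real meaning here.

```python
import numpy as np

rng = np.random.default_rng(0)
d_model, n_features = 8, 32

# Hypothetical trained SAE: unit-norm decoder columns, encoder tied to the
# decoder transpose (an assumption made only to keep the example small).
W_dec = rng.normal(size=(d_model, n_features))
W_dec /= np.linalg.norm(W_dec, axis=0, keepdims=True)
W_enc, b_enc = W_dec.T.copy(), np.zeros(n_features)

def encode(x):
    """Sparse feature vector z for one activation vector x."""
    return np.maximum(0.0, W_enc @ x + b_enc)

def steer(x, feature_idx, alpha):
    """Return x shifted by alpha along one decoder direction."""
    return x + alpha * W_dec[:, feature_idx]

x = rng.normal(size=d_model)          # stand-in for a cached residual vector
feature_idx, alpha = 17, 2.5
x_steered = steer(x, feature_idx, alpha)

z_before, z_after = encode(x), encode(x_steered)
print(f"feature {feature_idx}: {z_before[feature_idx]:.3f} -> {z_after[feature_idx]:.3f}")

# Interference: other features move too because directions are not orthogonal.
others = np.delete(np.abs(z_after - z_before), feature_idx)
print(f"largest change in any other feature: {others.max():.3f}")
```

In a real model the steered vector would be written back into the forward pass at the hooked layer; the second print line is a reminder that steering one feature also nudges its non-orthogonal neighbors.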

This technique is already used in safety research to measure and mitigate specific undesirable behaviors (sycophancy, power-seeking, deception) by steering along the corresponding SAE features.

A Toy NumPy SAE You Can Run Today

The best way to internalize the mechanics is to implement a tiny SAE yourself. The code below trains an SAE on synthetic activations that deliberately contain superposition (32 ground-truth features packed into 8-dimensional activations). After a few thousand gradient steps the SAE should recover directions that align closely with many of the original features.

```python
import numpy as np

# --- Synthetic data with known superposition ---
np.random.seed(42)
d_model = 8
n_true_features = 32  # more features than dimensions
n_samples = 20000

# Ground-truth feature directions (unit vectors)
true_features = np.random.randn(n_true_features, d_model)
true_features /= np.linalg.norm(true_features, axis=1, keepdims=True)

# Each sample activates only 2-4 features (sparse)
activations = np.zeros((n_samples, d_model))
for i in range(n_samples):
    active_idx = np.random.choice(n_true_features, size=np.random.randint(2, 5), replace=False)
    coeffs = np.random.randn(len(active_idx)) * 0.8 + 1.5
    activations[i] = (coeffs[:, None] * true_features[active_idx]).sum(axis=0)

# Add a little noise
activations += 0.05 * np.random.randn(*activations.shape)

# --- Tiny SAE implementation ---
n_features = 128  # 16x expansion
learning_rate = 0.01
lambda_sparsity = 0.03  # L1 coefficient
epochs = 4000

W_enc = np.random.randn(n_features, d_model) * 0.1
b_enc = np.zeros(n_features)
W_dec = np.random.randn(d_model, n_features) * 0.1
b_dec = np.zeros(d_model)

def train_sae(x: np.ndarray):
    global W_enc, b_enc, W_dec, b_dec
    n = x.shape[0]
    for epoch in range(epochs):
        # Forward
        z = np.maximum(0, x @ W_enc.T + b_enc)  # ReLU encoder, (n, n_features)
        x_hat = z @ W_dec.T + b_dec             # linear decoder, (n, d_model)

        # Loss: per-sample squared error and per-sample L1 norm, averaged over samples
        recon_loss = np.mean(np.sum((x - x_hat) ** 2, axis=1))
        sparsity_loss = np.mean(np.sum(np.abs(z), axis=1))
        loss = recon_loss + lambda_sparsity * sparsity_loss

        # Gradients (manual backprop for clarity)
        d_recon = 2 * (x_hat - x) / n           # dL/dx_hat
        dW_dec = z.T @ d_recon                  # gradient w.r.t. W_dec.T
        db_dec = d_recon.sum(axis=0)

        # dL/dz: reconstruction path plus the L1 sub-gradient on the latent code
        dz = d_recon @ W_dec + lambda_sparsity * np.sign(z) / n
        dz[z == 0] = 0                          # ReLU backward
        dW_enc = dz.T @ x
        db_enc = dz.sum(axis=0)

        # Full-batch gradient-descent step
        W_dec -= learning_rate * dW_dec.T
        b_dec -= learning_rate * db_dec
        W_enc -= learning_rate * dW_enc
        b_enc -= learning_rate * db_enc

        if epoch % 500 == 0:
            print(f"Epoch {epoch:4d} | loss={loss:.4f} | recon={recon_loss:.4f} | sparsity={sparsity_loss:.4f}")

train_sae(activations)

# After training, compare the columns of W_dec with true_features
# (e.g., by cosine similarity) to check how many directions were recovered.
print("Training complete.")
```

Run the script and watch the reconstruction loss fall while the sparsity term keeps the number of active features per sample low. To check whether the SAE has "disentangled" the superposition, compute the maximum cosine similarity between each ground-truth feature and the learned decoder columns: values close to 1 indicate a recovered feature. How many features are recovered, and how sparse the code ends up, depends on the random seed, on (\lambda), and on the number of training steps, so treat any single run as one data point.
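The cosine-similarity check and the active-feature count can be computed with two small helpers. This is a sketch: the helper names are hypothetical, it uses a simple best-match score per ground-truth feature (not an optimal one-to-one matching), and the demo decoder below is constructed by hand so that the expected behavior is obvious.

```python
import numpy as np

def max_cosine_per_true_feature(true_features, W_dec):
    """For each true feature (rows), best cosine similarity to any decoder column.

    true_features: (n_true, d_model); W_dec: (d_model, n_learned).
    """
    t = true_features / np.linalg.norm(true_features, axis=1, keepdims=True)
    norms = np.linalg.norm(W_dec, axis=0, keepdims=True)
    d = W_dec / np.maximum(norms, 1e-12)   # guard against all-zero columns
    return (t @ d).max(axis=1)

def mean_l0(z):
    """Average number of active (non-zero) latents per sample."""
    return float((z > 0).sum(axis=1).mean())

rng = np.random.default_rng(0)
true_features = rng.normal(size=(6, 8))
# Hand-built decoder: rescaled copies of the first four true features,
# followed by ten random columns.
W_dec_demo = np.concatenate([2.0 * true_features[:4].T, rng.normal(size=(8, 10))], axis=1)

scores = max_cosine_per_true_feature(true_features, W_dec_demo)
print(np.round(scores, 3))
print("recovered (cos > 0.9):", int((scores > 0.9).sum()), "of", len(scores))

z_demo = np.array([[0.0, 1.2, 0.0, 0.3], [0.5, 0.0, 0.0, 0.0]])
print("mean L0:", mean_l0(z_demo))
```

After running the training script, the same helpers can be applied to its variables: `max_cosine_per_true_feature(true_features, W_dec)` and `mean_l0(np.maximum(0, activations @ W_enc.T + b_enc))`.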

Reading the toy SAE code line by line

- The synthetic data generator deliberately activates only 2–4 of the 32 true features per sample and then sums their scaled direction vectors. Because (d_\text{model}=8), this is textbook superposition.

- The encoder `W_enc` is deliberately initialized small so that early pre-activations, and therefore early reconstructions, stay small.

- The manual gradient for the sparsity term is a sub-gradient of the L1 norm of the latent code, `sign(z)`, which is routed through the ReLU mask into the encoder weights. In a real PyTorch implementation you would let `torch.autograd` handle it.

- The learning rate and (\lambda) value were hand-tuned for this toy problem; on real LLM activations the optimal values are found by sweeping on a held-out set of prompts while monitoring both reconstruction MSE and the fraction of "dead" (never-activated) features.

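- A useful diagnostic after convergence is the average (\ell_0) norm of (z) (the number of non-zero entries). In a well-tuned run it should land in the low single digits, near the ground-truth sparsity of the data-generating process, which activates 2, 3, or 4 features with equal probability (3 on average).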

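This tiny example already contains the core conceptual ingredients used when labs such as Anthropic train SAEs on activations from production models: a reconstruction-plus-sparsity loss, the same encoder/decoder geometry, and the same diagnostic question of "how well do the learned directions recover known structure?" What changes at scale is the amount of data, the dictionary size, and the engineering around them.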

Practical Training Details and Pitfalls

Real SAEs on frontier models use several refinements:

- TopK SAEs replace the soft L1 penalty with an explicit keep-k operation. They often achieve better reconstruction at the same sparsity level.

- Dead feature revival - periodically identify features that have not fired for many tokens and reinitialize or add an auxiliary loss that encourages them to activate on current data.

- Expansion factor and (\lambda) tuning - too small an expansion leaves features entangled; too large wastes capacity and can cause "feature splitting" (one concept split across several nearly duplicate features).

- Layer and token selection - SAEs are usually trained on activations from many different prompts and token positions, often with importance sampling that favors rare or safety-relevant contexts.

Common beginner mistakes include training only on a narrow distribution (the SAE then fails to generalize), setting sparsity so high that almost everything dies, and treating reconstruction loss as a direct measure of interpretability (a low loss can still produce uninterpretable features if the sparsity term is weak).
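Dead-feature detection and revival can be sketched as follows. This is one simple resampling heuristic under stated assumptions, not the procedure of any specific paper: a feature counts as dead if it never fires on the batch, and each dead feature is re-pointed at an input the SAE currently reconstructs poorly. The function names and the 0.1 encoder scale are arbitrary illustrative choices.

```python
import numpy as np

def dead_feature_mask(z):
    """z: (n_tokens, n_features) SAE codes. True where a feature never fired."""
    return ~(z > 0).any(axis=0)

def reinit_dead_features(W_enc, b_enc, W_dec, dead, x, x_hat, rng):
    """Re-point each dead feature at a poorly reconstructed input (in place)."""
    n_dead = int(dead.sum())
    if n_dead == 0:
        return
    err = np.sum((x - x_hat) ** 2, axis=1)
    picks = rng.choice(len(x), size=n_dead, p=err / err.sum())
    directions = x[picks] / np.linalg.norm(x[picks], axis=1, keepdims=True)
    W_dec[:, dead] = directions.T          # unit-norm decoder columns
    W_enc[dead] = 0.1 * directions         # small encoder rows, same direction
    b_enc[dead] = 0.0

rng = np.random.default_rng(0)
n, d_model, n_features = 256, 8, 32
x = rng.normal(size=(n, d_model))          # stand-in for model activations
W_enc = rng.normal(0, 0.1, (n_features, d_model))
b_enc = np.zeros(n_features)
b_enc[:5] = -10.0                          # force five features to be dead
W_dec = rng.normal(0, 0.1, (d_model, n_features))

z = np.maximum(0.0, x @ W_enc.T + b_enc)
dead = dead_feature_mask(z)
print(f"dead before: {int(dead.sum())} / {n_features}")

reinit_dead_features(W_enc, b_enc, W_dec, dead, x, z @ W_dec.T, rng)
z_after = np.maximum(0.0, x @ W_enc.T + b_enc)
dead_after = dead_feature_mask(z_after)
print(f"dead after:  {int(dead_after.sum())} / {n_features}")
```

Tracking the dead fraction alongside reconstruction loss during a (\lambda) sweep makes the "sparsity set too high" failure mode visible early.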

L1 Penalty vs TopK SAEs - a practical comparison

| Aspect | L1 Penalty SAE | TopK SAE |
|---|---|---|
| Sparsity control | Indirect via (\lambda); soft thresholding | Exact (k) non-zeros per token |
| Differentiability | Differentiable almost everywhere (kinks at zero from ReLU and L1) | Gradients flow only through the (k) selected latents |
| Reconstruction quality | Good, but the L1 term shrinks activation magnitudes | Often better at the same average sparsity |
| Dead-feature rate | Often high without resampling or auxiliary losses | Lower when combined with revival heuristics such as an auxiliary loss |
| Typical usage | Simple to implement; used in Anthropic's Claude 3 Sonnet SAEs [3] | Used in OpenAI's large-scale SAE runs on GPT-4 |
| Hyperparameter sensitivity | (\lambda) must be swept carefully | (k) is intuitive (e.g., keep 32 out of 4096) |

In practice many recent SAE training runs use TopK or related variants such as gated and JumpReLU SAEs. The L1 formulation remains valuable for quick experiments and for theoretical analysis because the math stays cleaner.
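The difference in sparsity control is easy to see side by side. The sketch below implements a keep-k activation in NumPy and compares the number of non-zeros it produces with a plain ReLU on the same random pre-activations. It is a minimal illustration: whether negatives are additionally clamped after the top-k selection is an implementation choice not covered here.

```python
import numpy as np

def topk_activation(pre_acts, k):
    """Keep the k largest pre-activations in each row and zero the rest."""
    idx = np.argpartition(pre_acts, -k, axis=1)[:, -k:]
    rows = np.arange(pre_acts.shape[0])[:, None]
    z = np.zeros_like(pre_acts)
    z[rows, idx] = pre_acts[rows, idx]
    return z

def relu_activation(pre_acts):
    return np.maximum(0.0, pre_acts)

rng = np.random.default_rng(0)
pre_acts = rng.normal(size=(4, 16))        # stand-in encoder pre-activations

z_topk = topk_activation(pre_acts, k=3)
z_relu = relu_activation(pre_acts)
print("non-zeros per row, TopK:", (z_topk != 0).sum(axis=1))
print("non-zeros per row, ReLU:", (z_relu != 0).sum(axis=1))

# In the backward pass, gradient reaches only the selected entries.
grad_mask = z_topk != 0
print("entries receiving gradient:", int(grad_mask.sum()))
```

With TopK the per-token sparsity is fixed by construction, so there is no (\lambda) to sweep; with ReLU plus L1 the count varies from row to row and depends on how hard the penalty pushes.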

Applications to Safety and Governance

Because SAEs give researchers a human-readable "vocabulary" of the model's internal concepts, they have become a central tool for alignment and safety work. Safety teams now:

- Scan millions of SAE features for directions that correlate with deception, power-seeking, or sycophancy.

- Use feature steering during red-teaming to force the model into states it would normally avoid.

- Build "feature dashboards" that let auditors see which high-level concepts are active during a particular generation.

The same techniques also help with capability evaluation: if a model has developed a clean "deception feature" that activates only on certain prompts, that is suggestive evidence that the model distinguishes truth from falsehood internally even when its surface output is misleading. Such a reading should still be confirmed with interventions before it is relied on.

In an e-commerce deployment you can begin to answer questions that were previously out of reach. "When the model refuses a refund, which SAE feature is responsible?" becomes a matter of inspecting the most active features and confirming their effect with an ablation, instead of weeks of manual circuit hunting. You can also run controlled experiments: steer the refund feature up or down on a held-out set of 10,000 support tickets and measure the change in refund approval rate, escalation rate, and customer satisfaction scores. Feature-level evidence of this kind is what regulators and internal audit teams can draw on when reviewing high-stakes LLM systems.
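The "which feature is responsible" lookup can be sketched for the simplest case, a linear readout of the SAE reconstruction. Everything here is hypothetical: the decoder is random, the three active features are set by hand, and `readout` stands in for something like the direction of a "refuse" logit. In a real model the downstream computation is nonlinear, so these direct contributions are a first pass that still needs an intervention to confirm.

```python
import numpy as np

rng = np.random.default_rng(0)
d_model, n_features = 8, 32

# Hypothetical SAE decoder and a sparse code for one token.
W_dec = rng.normal(size=(d_model, n_features))
b_dec = 0.1 * rng.normal(size=d_model)
z = np.zeros(n_features)
z[[3, 17, 21]] = [0.7, 2.1, 0.4]           # three active features
x_hat = W_dec @ z + b_dec

# Hypothetical linear readout direction (e.g. a "refuse" logit).
readout = rng.normal(size=d_model)

# Direct contribution of each feature to the readout of x_hat.
contrib = z * (readout @ W_dec)            # (n_features,)
ranking = np.argsort(-np.abs(contrib))
for i in ranking[:3]:
    print(f"feature {i:2d}: activation={z[i]:.2f} contribution={contrib[i]:+.3f}")

# Zero-ablating one feature changes the readout by exactly its contribution.
z_ablate = z.copy()
z_ablate[17] = 0.0
delta = readout @ (W_dec @ z_ablate + b_dec) - readout @ x_hat
print(f"readout change after ablating feature 17: {delta:+.3f}")
```

Because the readout is linear, the contributions plus the bias term sum exactly to the readout value, which makes this decomposition easy to sanity-check before moving to a real model.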

Key Takeaways

- Polysemantic neurons are an inevitable consequence of superposition; you cannot interpret a model by looking at raw neuron activations or raw weight matrices.

- Sparse autoencoders learn an overcomplete sparse basis that aligns far better with human-interpretable concepts than the model's native representation.

- The training objective (reconstruction plus sparsity) is simple but remarkably effective when the expansion factor and regularization strength are chosen correctly.

- Feature circuits let us trace how monosemantic features compose across layers into recognizable algorithms that implement real behaviors such as refusal or numerical comparison.

- SAE features can be read and written at inference time, enabling precise activation steering for both research and production safety interventions.

- A minimal NumPy implementation on toy data with known ground-truth features already demonstrates the core phenomenon and gives you an intuition that transfers directly to large-model work at frontier labs.

- The same toolkit is increasingly used for auditing and red-teaming, and it can supply feature-level evidence for reviews of high-stakes LLM deployments.

- Real progress in this area comes from iterating between toy models, open-source 7B-scale experiments, and the published results from the largest labs.

References & Further Reading

The primary sources are the Transformer Circuits publications:

- Bricken et al. (2023) introduced dictionary learning with SAEs on a one-layer transformer and demonstrated the first clean monosemantic features.[2]

- Elhage et al. (2022) provided the theoretical foundation with toy models that made superposition and polysemanticity precise and measurable.[1]

- Templeton et al. (2024) scaled the technique to Claude 3 Sonnet, extracted millions of features, and identified safety-relevant features at production scale.[3]

Subsequent work in the same thread explores TopK SAEs, automated circuit discovery, and the geometry of feature spaces. The interactive feature browsers published alongside these papers remain the best way to develop an intuitive feel for what the learned features actually represent.

Mastering SAEs gives you one of the most powerful current tools for opening the black box of large language models. The representations you recover are not perfect, but they are already dramatically more legible than anything available from raw weights or neuron-level analysis. That legibility is the foundation of the next generation of interpretability, evaluation, and control techniques.

Going Deeper

If you want to move from the toy example to production-grade work, the natural next projects are:

- Train a small SAE on the residual stream of a 7B to 8B open model (for example Llama-3-8B or Mistral-7B) using the TransformerLens or nnsight library to capture activations. Start with a few hundred thousand tokens from customer-support-style data.

- Implement a TopK SAE trainer in PyTorch and compare reconstruction and interpretability metrics against your L1 version.

- Build a simple feature browser: for each learned decoder direction, collect the top-20 activating tokens across a large corpus and let a human (or an LLM judge) label the emerging concept.

- Reproduce a known circuit (e.g., the greater-than circuit) using SAE features instead of raw attention heads, then attempt a causal intervention by zero-ablating the responsible feature.

Each of these projects directly exercises the concepts in this lesson and produces portfolio artifacts that demonstrate both deep technical understanding and the ability to run real mechanistic-interpretability experiments.
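As a starting point for the feature-browser project, the core lookup is only a few lines. The sketch below assumes you already have a matrix `z` of SAE codes with one row per token and a parallel list of token strings; the tiny corpus and the activations here are made up by hand for illustration, and the function name is hypothetical.

```python
import numpy as np

def top_activating_tokens(z, tokens, feature_idx, k=20):
    """Return up to k (token, activation) pairs where the feature fires most."""
    acts = z[:, feature_idx]
    order = np.argsort(-acts, kind="stable")[:k]
    return [(tokens[i], float(acts[i])) for i in order if acts[i] > 0]

# Hand-made toy corpus and SAE codes (hypothetical values).
tokens = ["my", "refund", "is", "late", "refund", "please", "shipping", "delay"]
z = np.zeros((len(tokens), 4))
z[[1, 4], 0] = [3.0, 2.5]                  # feature 0 fires on "refund"
z[[3, 6, 7], 1] = [0.8, 2.0, 1.7]          # feature 1 fires on delay-related tokens

for feature_idx in range(3):
    print(feature_idx, top_activating_tokens(z, tokens, feature_idx, k=3))
```

In a real browser you would store some surrounding context for each token and pass the top examples to a human or an LLM judge for labeling; features that return an empty list are candidates for the dead-feature check.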

The references below are the canonical starting points. The Transformer Circuits website also hosts interactive demos that let you browse millions of real features extracted from Claude 3 without training anything yourself. Spending a few hours clicking through those browsers is the fastest way to develop an intuition for what "monosemantic" actually feels like in practice. Once you have that intuition, the leap from toy NumPy code to production-scale SAE training on a 70B model becomes a matter of engineering rather than conceptual discovery.

Many practitioners who run these experiments describe the same shift: the model stops looking like a mysterious black box and starts looking more like a legible program written in the language of human concepts. That shift in perspective is the real payoff of mechanistic interpretability work.

The field is moving extremely fast. New SAE variants (TopK, gated, JumpReLU) and automated circuit-finding pipelines appear every few months. The fundamentals you learned here, however, remain stable: superposition, dictionary learning, reconstruction-plus-sparsity, and causal interventions on feature directions. Master those four ideas and you will be able to read the latest papers and reproduce the newest results with confidence.

You are now ready to treat the internal state of any transformer as a rich, steerable, and auditable feature space rather than an opaque vector of numbers.

Evaluation Rubric

1. Explains why individual neurons are polysemantic due to superposition and why SAEs recover monosemantic features

2. Derives the SAE loss: reconstruction + sparsity (L1 or TopK) and describes encoder/decoder structure

3. Shows how to apply SAEs at different hook points (residual stream after attention, post-MLP) in a transformer

4. Implements or describes a minimal NumPy SAE including forward, loss, and gradient update on toy data

5. Describes feature circuits: how interpretable features compose across layers to implement algorithms

6. Explains activation steering: adding a multiple of an SAE feature direction to steer model behavior (e.g., more truthful or cautious)

7. Discusses practical challenges: dead features, feature splitting, choosing expansion factor and sparsity lambda

8. Connects SAE research to safety evaluations and circuit discovery in production-scale models

Common Pitfalls

- Assuming every neuron direction is meaningful; many are polysemantic combinations that only SAEs disentangle

- Setting the sparsity hyperparameter too high, producing many dead features with no reconstruction power

- Training SAEs only on residual stream and forgetting that MLP and attention output SAEs often reveal different circuits

- Treating the learned SAE features as perfectly causal without verifying with interventions (patching or steering experiments)

- Ignoring the expansion factor: too small and features remain entangled; too large and training becomes unstable or features split unnecessarily

- Confusing reconstruction fidelity with interpretability; a low reconstruction loss does not guarantee the features are the ones the model actually uses

Key Concepts Tested

- Polysemanticity and superposition in neural networks

- Sparse autoencoder architecture and training objective

- Dictionary learning on residual stream and MLP activations

- L1 sparsity penalty vs. TopK SAEs

- Monosemantic features vs. polysemantic neurons

- Feature circuits across layers

- Activation steering using SAE features

- Toy models of superposition

- Reconstruction loss and dead feature mitigation

- Applications to model safety and interpretability

- NumPy implementation of a simple SAE trainer

Next Step

Next: Continue to Decoding Strategies: Greedy to Nucleus

Layer normalization and the rich monosemantic features uncovered by SAEs both act on the same residual stream that eventually produces the logits you will decode. Knowing what concepts are active inside the model gives you a much deeper understanding of why greedy, beam, or nucleus sampling produces the outputs it does.

References

[1] Toy Models of Superposition

Elhage, N., Hume, T., Olsson, C., et al. (Anthropic) · 2022 · Transformer Circuits Thread

[2] Towards Monosemanticity: Decomposing Language Models With Dictionary Learning

Bricken, T., Templeton, A., et al. (Anthropic) · 2023 · Transformer Circuits Thread

[3] Scaling Monosemanticity: Extracting Interpretable Features from Claude 3 Sonnet

Templeton, A., Conerly, T., et al. (Anthropic) · 2024 · Transformer Circuits Thread

[4] Attention Is All You Need

Vaswani, A., et al. · 2017

[5] In-context Learning and Induction Heads

Olsson, C., et al. · 2022