
Long-Context Engineering

Master advanced long-context techniques: Ring Attention for distributed scale, context compression and KV eviction, retrieval-plus-long-context hybrids, lost-in-the-middle mitigations at scale, RoPE scaling limits, and production memory, latency, and cost trade-offs.


Imagine a global e-commerce company whose order database now spans eight fulfillment centers across three continents and ten years of history. A customer-support agent needs to answer: "Why was this particular refund delayed in March 2021 for order #48291, and did the same carrier pattern appear for similar high-value electronics returns in 2023?" The relevant facts are scattered across 1.2 million tokens of manifests, carrier scans, warehouse notes, and policy updates. A standard 128K context window forces you to choose: truncate the history, summarize aggressively and lose nuance, or retrieve only the "most relevant" chunks and hope the model can still connect distant events.

The earlier lesson on long-context window management showed how to stretch a single GPU to 128K with RoPE scaling, KV paging, and sliding windows. The next frontier is what happens when even that is not enough: you need reliable reasoning over 500K or 2M tokens, and you must deliver it at production cost and latency. This lesson covers the advanced techniques that make those workloads practical: Ring Attention for true distributed context, modern context compression and KV eviction policies, hybrid retrieval-plus-long-context architectures, production-grade mitigations for the lost-in-the-middle problem, and the hard limits of RoPE scaling. We will also quantify the real memory, latency, and dollar costs so you can make engineering trade-off decisions instead of guessing.

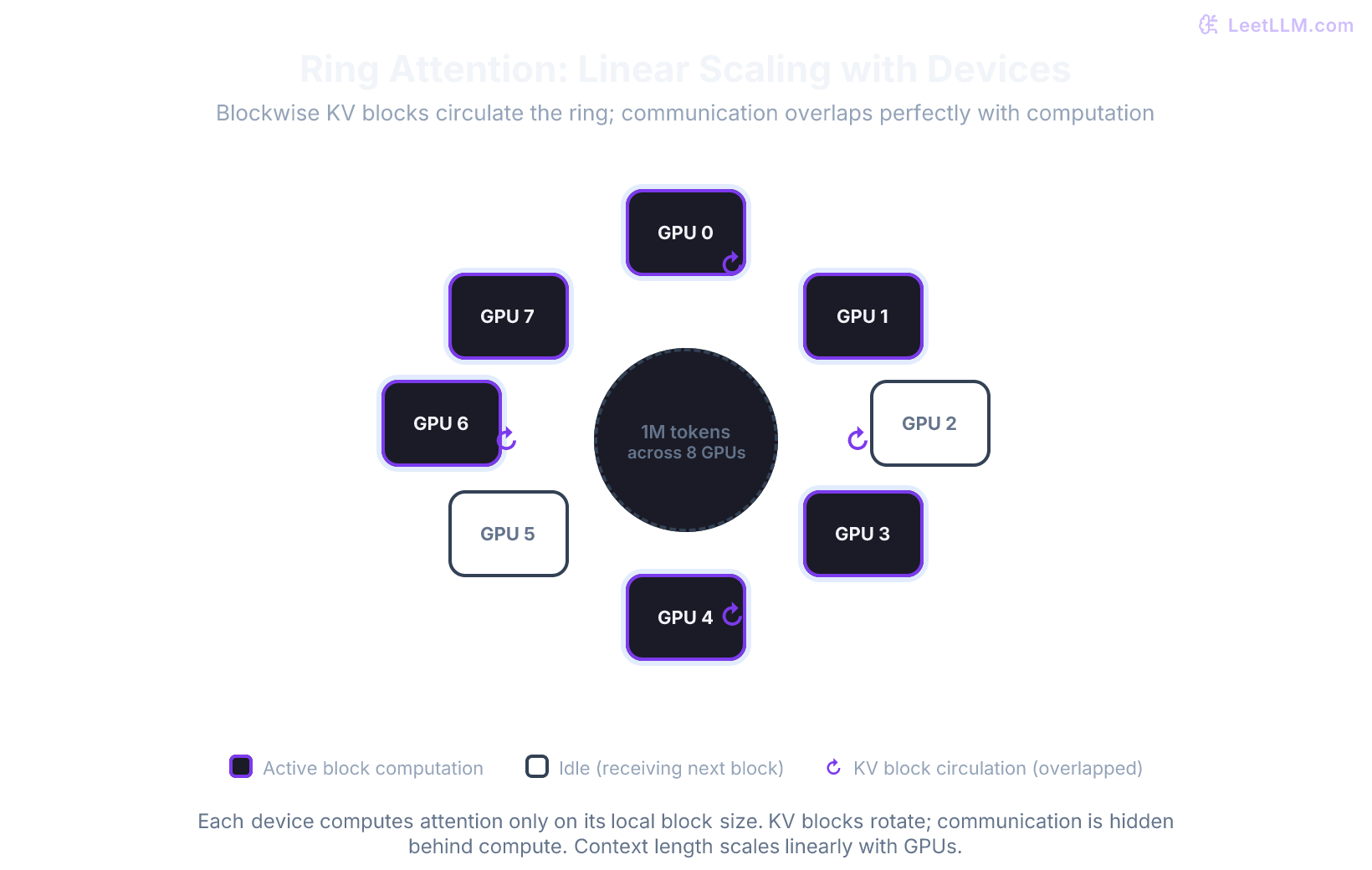

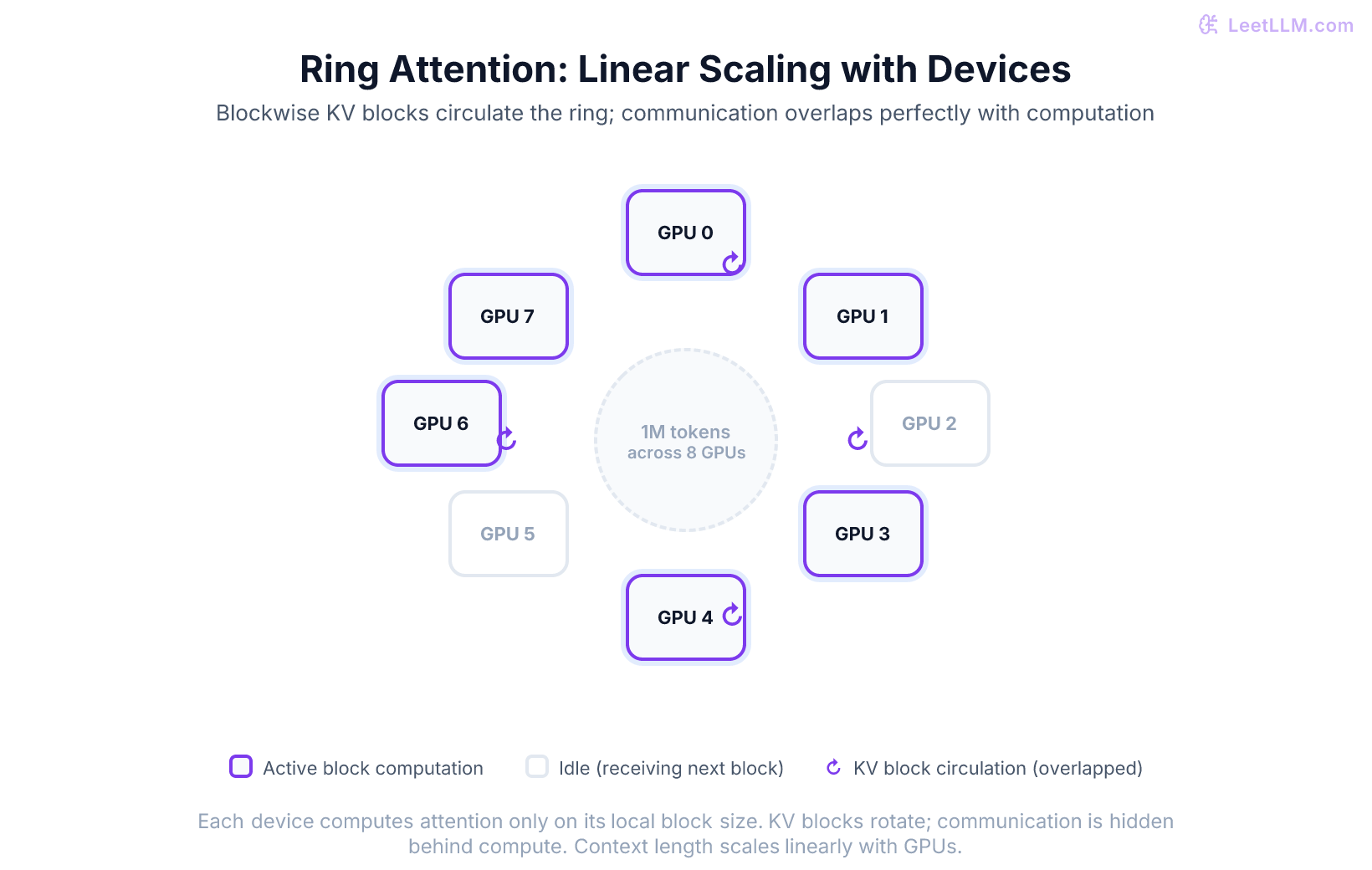

Ring Attention: context length linear in the number of GPUs

The fundamental limit of single-device long context is memory. Even with perfect KV paging and GQA, a 1M-token request on a 70B-class model still requires hundreds of gigabytes for the KV cache at BF16 (roughly 320 GiB for an 80-layer model with 8 KV heads of dimension 128), far more than any one GPU holds. Ring Attention removes that single-device ceiling by distributing the sequence across many GPUs while computing exact attention, not an approximation.[1]

The core idea is blockwise computation plus ring communication. Split the long sequence into fixed-size blocks (for example 8K or 16K tokens). Each GPU holds one or more blocks of the key/value cache for the current layer. Instead of every GPU needing the entire KV cache, the blocks circulate around a logical ring of GPUs. While a GPU computes blockwise attention between its local queries and the KV block it currently holds, it simultaneously sends its own KV block to the next GPU and receives the next one. When the interconnect is fast enough (NVLink inside a node or InfiniBand across nodes) and blocks are large enough, much of the communication time can be hidden behind computation.

The attention workspace per GPU therefore scales with local block size and local sequence slice instead of forcing every device to materialize the whole sequence. Add another GPU and you can handle roughly that much more context, assuming communication bandwidth and scheduling overhead stay under control. The paper demonstrated million-token-class contexts on multi-GPU and TPU-pod setups that would have been impractical on a single device.
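The blockwise-plus-ring idea can be checked on a single host. The sketch below is a NumPy simulation, not a distributed implementation: there is no real communication, attention is non-causal, and the function names `full_attention` and `ring_attention` are illustrative. Each simulated device keeps a running maximum, denominator, and numerator (an online softmax) while KV blocks "arrive" one hop at a time, and the result is compared against ordinary full attention.

```python
import numpy as np

def full_attention(q, k, v):
    """Reference: ordinary softmax attention over the whole sequence."""
    s = q @ k.T / np.sqrt(q.shape[-1])
    p = np.exp(s - s.max(axis=-1, keepdims=True))
    return (p / p.sum(axis=-1, keepdims=True)) @ v

def ring_attention(q_blocks, k_blocks, v_blocks):
    """Simulate a ring of len(q_blocks) devices on one host (non-causal)."""
    n = len(q_blocks)
    d = q_blocks[0].shape[-1]
    outputs = []
    for dev in range(n):
        q = q_blocks[dev]
        m = np.full((q.shape[0], 1), -np.inf)                 # running row max
        l = np.zeros((q.shape[0], 1))                         # running denominator
        acc = np.zeros((q.shape[0], v_blocks[0].shape[-1]))   # running numerator
        for step in range(n):
            src = (dev - step) % n   # KV block held by this device after `step` hops
            s = q @ k_blocks[src].T / np.sqrt(d)
            m_new = np.maximum(m, s.max(axis=-1, keepdims=True))
            rescale = np.exp(m - m_new)   # correct earlier partial sums for the new max
            p = np.exp(s - m_new)
            l = l * rescale + p.sum(axis=-1, keepdims=True)
            acc = acc * rescale + p @ v_blocks[src]
            m = m_new
        outputs.append(acc / l)
    return np.concatenate(outputs)

rng = np.random.default_rng(0)
q, k, v = (rng.standard_normal((64, 8)) for _ in range(3))
blocks = lambda x: np.split(x, 4)   # 4 simulated devices, 16 tokens each
ring_out = ring_attention(blocks(q), blocks(k), blocks(v))
print(np.allclose(ring_out, full_attention(q, k, v)))
```

Each simulated device only ever touches one KV block at a time, which is why the per-device workspace depends on block size rather than total sequence length. In a real system the inner loop's block fetch would be an asynchronous send/receive overlapped with the matrix multiplies.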

In practice this is powerful for workloads that truly need global cross-references: reconciling every line item across a year of order history, tracing a fraud pattern through thousands of related shipments, or building a world model over a long video-plus-text trace. It is overkill, and more expensive, when your queries can be answered from a well-chosen 20K-token evidence set.

Production teams usually combine Ring Attention with other techniques: GQA or MQA to shrink the KV head count, 4-bit or 8-bit KV quantization on the circulating blocks, and prefix caching so that common system prompts and recent conversation turns stay resident without re-circulating.

Context compression and KV cache eviction policies

Even when you can afford the GPUs for Ring Attention, many production workloads benefit from shrinking the effective context before or during inference. Three families of techniques show up repeatedly in 2026 long-context systems.

Attention sinks and StreamingLLM

Many models, even after instruction tuning, exhibit a striking "attention sink" behavior: a few initial tokens (often the very first token or the first few) receive disproportionately high attention scores from almost every later token, even when they carry little semantic content. StreamingLLM (Xiao et al., 2023) exploits this by keeping the KV cache for a small fixed set of sink tokens plus a sliding window of the most recent tokens. The model can then generate indefinitely without ever growing the cache beyond that budget.

For chat-style agents that process ongoing order-status conversations, StreamingLLM lets a session run indefinitely with a fixed-size cache. The model only attends to the sink tokens plus the most recent window, so anything evicted is gone: this is stable streaming, not a longer usable context. It requires no fine-tuning on most base model families, although heavy post-training can sometimes weaken the sink phenomenon.
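The cache policy itself is small enough to sketch. The class below is a toy illustration of "fixed sinks plus sliding window" bookkeeping; the name `SinkWindowCache` and its parameters are hypothetical, the `kv` payload is a placeholder, and the sketch omits the re-indexing of positions inside the cache that a real implementation needs.

```python
from collections import deque

class SinkWindowCache:
    """Keeps the first `num_sinks` entries forever plus the last `window` entries."""

    def __init__(self, num_sinks=4, window=1024):
        self.num_sinks = num_sinks
        self.sinks = []
        self.recent = deque(maxlen=window)   # deque drops the oldest entry when full

    def append(self, position, kv):
        if len(self.sinks) < self.num_sinks:
            self.sinks.append((position, kv))
        else:
            self.recent.append((position, kv))

    def positions(self):
        """Original token positions currently visible to attention."""
        return [p for p, _ in self.sinks] + [p for p, _ in self.recent]

cache = SinkWindowCache(num_sinks=4, window=8)
for pos in range(100):
    cache.append(pos, kv=None)   # kv would hold the per-layer key/value tensors
print(cache.positions())
```

After 100 tokens the cache holds 12 entries: positions 0-3 and 92-99. Everything in between has been evicted, which is why this policy suits chat streams better than document forensics.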

Heavy-hitter eviction (H2O) and SnapKV

For document-centric workloads you can do better than pure recency. H2O (Zhang et al., 2023) tracks the cumulative attention each cached token receives as decoding proceeds and, whenever the cache exceeds its budget, evicts the lowest-scoring tokens while keeping the heavy hitters plus a window of recent tokens. SnapKV (Li et al., 2024) makes the decision before generation starts: it uses the attention that the last few prompt tokens (an observation window) pay to the rest of the prompt to vote for important prefix positions, pools the votes so that neighboring tokens are kept together, and retains only the selected positions. Both methods are reported to reach roughly 4-8x effective compression with single-digit percentage-point accuracy loss on retrieval and reasoning tasks, provided the evaluation is done on the target domain.

The key production lesson is that compression quality is domain-specific. A policy that works on Wikipedia articles can drop 20 points on multi-order forensic questions that hinge on a single rare SKU or carrier exception code that appeared only once in the middle of the history.
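A heavy-hitter selection step can be sketched in a few lines. This is a simplified, single-head illustration in the spirit of H2O, not the paper's algorithm: it scores keys once from a given attention matrix instead of updating greedily at every decode step, and the function name `select_kv_to_keep` and its `budget` and `recent` parameters are hypothetical.

```python
import numpy as np

def select_kv_to_keep(attn, budget, recent):
    """attn: (num_queries, num_keys) attention probabilities observed so far.
    Returns sorted key indices to keep: the `recent` newest keys plus the
    keys with the highest cumulative attention among the rest."""
    num_keys = attn.shape[1]
    if budget >= num_keys:
        return np.arange(num_keys)
    recent = min(recent, budget)
    recent_idx = np.arange(num_keys - recent, num_keys)
    scores = attn[:, : num_keys - recent].sum(axis=0)   # cumulative attention per key
    heavy_idx = np.argsort(scores)[::-1][: budget - recent]
    return np.sort(np.concatenate([heavy_idx, recent_idx]))

rng = np.random.default_rng(0)
attn = rng.random((32, 64)) * 0.01   # synthetic background attention
attn[:, 5] += 1.0                    # key 5 is a strong heavy hitter
attn[:, 17] += 0.5                   # key 17 is a weaker one
keep = select_kv_to_keep(attn, budget=16, recent=8)
print(keep)
```

The failure mode described above is visible in this toy: a key that matters for a later question but has attracted little attention so far has no way to survive the cut, which is why domain-specific needles belong in the evaluation set.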

Quantization of the KV cache itself

Independent of which tokens you keep, you can store the kept keys and values at lower precision. FP8 or INT8 KV caches are supported in several modern serving stacks and can roughly halve KV memory when the model and runtime support them. 4-bit KV quantization (with careful per-channel or per-token scaling) pushes the ratio higher but requires more validation, especially on long-range arithmetic and ordering tasks common in logistics.
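To make the memory-versus-error trade concrete, here is a minimal sketch of symmetric INT8 quantization with one scale per token. It runs on random data rather than real keys, the helper names are illustrative, and production stacks typically use fused kernels and their own scaling schemes.

```python
import numpy as np

def quantize_per_token(x):
    """Symmetric INT8 quantization with one float32 scale per token (row)."""
    scale = np.abs(x).max(axis=-1, keepdims=True) / 127.0
    scale = np.where(scale == 0, 1.0, scale).astype(np.float32)   # avoid divide-by-zero
    q = np.clip(np.round(x / scale), -127, 127).astype(np.int8)
    return q, scale

def dequantize(q, scale):
    return q.astype(np.float32) * scale

rng = np.random.default_rng(0)
keys = rng.standard_normal((1024, 128)).astype(np.float32)   # (tokens, head_dim)
q, scale = quantize_per_token(keys)

max_err = np.abs(dequantize(q, scale) - keys).max()
int8_bytes = q.nbytes + scale.nbytes
bf16_bytes = keys.size * 2   # what the same tensor would occupy at 2 bytes/element
print(f"max abs error {max_err:.4f}, size ratio {int8_bytes / bf16_bytes:.3f}")
```

The rounding error per element is bounded by half the row's scale, and the stored size is a little over half of the 16-bit baseline once the per-token scales are counted. Whether that error is tolerable on long-range arithmetic is exactly what the validation step has to establish.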

Hybrid retrieval plus long-context pipelines

The most effective pattern in real systems is not "use long context" or "use RAG" but a carefully engineered hybrid.

A typical pipeline for the 1.2-million-token order-history scenario:

- Run a high-recall retriever (or a cascade of BM25 + embedding + reranker) over the entire corpus and pull the top 30-50 candidate chunks.

- Apply light compression or clustering inside that candidate set to remove near-duplicates.

- Pack the surviving evidence (usually 12K-20K tokens) together with the full conversation history, system instructions, and any needed few-shot examples into a long-context call (32K or 128K window is plenty).

- Let the model perform cross-document reasoning, multi-hop inference, and final answer generation over that packed evidence.

The hybrid wins because retrieval handles scale and freshness while the long-context model handles the hard synthesis and consistency checks that pure RAG summarization often misses. The packing step must still respect lost-in-the-middle realities: put the most critical retrieved chunks at the very beginning and very end of the evidence block, and duplicate the user's question or the most important constraints at both ends.
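The packing rule in the last paragraph can be sketched as follows. This is one possible ordering scheme under the assumption that chunks arrive sorted best-first by reranker score; the function name `pack_evidence` and the prompt labels are illustrative.

```python
def pack_evidence(question, chunks_by_score):
    """chunks_by_score: chunk texts sorted best-first (for example by reranker score).
    Alternates chunks between head and tail so the strongest evidence sits at
    the edges of the block and the weakest lands in the middle."""
    head, tail = [], []
    for i, chunk in enumerate(chunks_by_score):
        (head if i % 2 == 0 else tail).append(chunk)
    ordered = head + tail[::-1]
    parts = [f"Question: {question}", *ordered, f"Question (repeated): {question}"]
    return "\n\n".join(parts)

chunks = ["c1 (best)", "c2", "c3", "c4", "c5 (worst)"]
prompt = pack_evidence("Why was the refund for order #48291 delayed?", chunks)
print(prompt)
```

With five chunks the resulting order is c1, c3, c5, c4, c2: the best chunk opens the evidence block, the second best closes it, and the question appears at both ends.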

Mitigating lost-in-the-middle at production scale

The original lost-in-the-middle paper (Liu et al., 2023) showed a clear U-shaped retrieval curve: models are strong at the start and end of a long context, weak in the middle. When you move from 128K management to true million-token engineering you must treat this as a systems problem, not just a prompt-engineering tip.

Effective mitigations used in production:

- Re-ranking and position-aware packing: After retrieval, run a strong cross-encoder reranker and place the highest-scoring evidence at the head and tail of the final context. This is cheap and highly effective.

- Multi-pass reasoning: First pass asks the model to extract and list all potentially relevant facts or order IDs from the packed evidence (placed at the ends). Second pass feeds only those extracted facts plus the original question for final reasoning. The middle content is never relied upon for the final answer.

- Instruction anchoring: Duplicate the most critical instructions or the exact question at both the very beginning and the very end of the prompt. Many teams also add a short "summary so far" block that the model itself wrote in a previous turn.

- Hierarchical summarization + long-context verification: Build a tree of summaries over the corpus. Only the leaf chunks that survive retrieval are expanded into the final long context for verification and cross-reference.

Each technique has a cost/accuracy curve. Re-ranking + anchoring is almost free. Multi-pass doubles the number of model calls but often recovers most of the lost accuracy.
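The multi-pass pattern is mostly prompt plumbing, so a short skeleton shows its shape. In the sketch below `generate` is a placeholder for whatever model call you use (prompt in, text out), the prompt wording is illustrative, and `fake_generate` is a stand-in that only exists to make the example runnable.

```python
from typing import Callable

def two_pass_answer(question: str, evidence: str, generate: Callable[[str], str]) -> str:
    """Pass 1 extracts candidate facts; pass 2 reasons over those facts only."""
    extract_prompt = (
        f"Question: {question}\n\n"
        f"Evidence:\n{evidence}\n\n"
        "List every fact, date, and order ID above that could bear on the "
        "question, one per line.\n"
        f"Question (repeated): {question}"
    )
    facts = generate(extract_prompt)
    answer_prompt = (
        f"Question: {question}\n\n"
        f"Extracted facts:\n{facts}\n\n"
        "Answer the question using only the extracted facts."
    )
    return generate(answer_prompt)

# Stand-in model: records prompts and returns canned text.
calls = []
def fake_generate(prompt: str) -> str:
    calls.append(prompt)
    return "order #48291 held by carrier" if len(calls) == 1 else "Delayed by a carrier hold."

result = two_pass_answer("Why was the refund delayed?", "LONG EVIDENCE BLOCK", fake_generate)
print(result)
```

The second call never sees the long evidence block, only the short fact list, which is where both the extra model call and the accuracy recovery come from.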

RoPE scaling limits and when advanced methods are required

All of the context-extension work ultimately rests on being able to place tokens at positions the model never saw during pre-training. Standard YaRN and NTK-aware scaling work well up to roughly 4-8x the original training length with modest continued training. Beyond that, and especially with simple uniform interpolation, the high-frequency dimensions that encode local syntax and ordering get compressed until neighboring positions become hard to tell apart, which hurts code, tables, and logistics line items.
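The difference between uniform interpolation and frequency-aware scaling is easy to see numerically. The sketch below assumes the standard RoPE frequency definition with base 10000 and a commonly used NTK-aware base adjustment; both are assumptions about the formulation, not something this lesson specifies, and YaRN and LongRoPE use more elaborate per-dimension schemes.

```python
import numpy as np

def rope_inv_freq(head_dim, base=10000.0):
    """Per-pair rotation frequencies: base^(-2i/d) for i = 0 .. d/2 - 1."""
    return base ** (-np.arange(0, head_dim, 2) / head_dim)

def position_interpolation(head_dim, scale, base=10000.0):
    """Uniform scaling: every frequency is divided by `scale`."""
    return rope_inv_freq(head_dim, base) / scale

def ntk_aware(head_dim, scale, base=10000.0):
    """Enlarge the base so the lowest frequency is divided by `scale`
    while the highest frequency is left unchanged."""
    new_base = base * scale ** (head_dim / (head_dim - 2))
    return rope_inv_freq(head_dim, new_base)

d, s = 128, 8.0
orig = rope_inv_freq(d)
pi_ratio = position_interpolation(d, s) / orig
ntk_ratio = ntk_aware(d, s) / orig
print("uniform  : highest-freq ratio", pi_ratio[0], "lowest-freq ratio", pi_ratio[-1])
print("NTK-aware: highest-freq ratio", ntk_ratio[0], "lowest-freq ratio", ntk_ratio[-1])
```

Uniform interpolation slows every dimension by the full factor, including the fastest ones that distinguish adjacent tokens. The NTK-aware variant leaves the fastest dimension untouched and applies the full factor only to the slowest, with a smooth ramp in between.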

LongRoPE (Ding et al., 2024) and related progressive search methods address this by treating the rescale factors per RoPE dimension (and for the initial token positions) as searchable hyperparameters. The paper reports context windows beyond 2M tokens, reached progressively with fine-tuning at intermediate lengths, so the resulting model still depends on targeted fine-tuning or continued pre-training on long examples to regain full capability. In practice, teams treat anything beyond 300-400K as requiring explicit long-context training data and evaluation; zero-shot LongRoPE scaling is useful for retrieval and light reasoning but not for precise multi-step arithmetic across distant records.

The practical limit today is therefore a combination of hardware (Ring Attention or large single-context GPUs), compression quality on your domain, and how much long-context fine-tuning budget you are willing to spend.

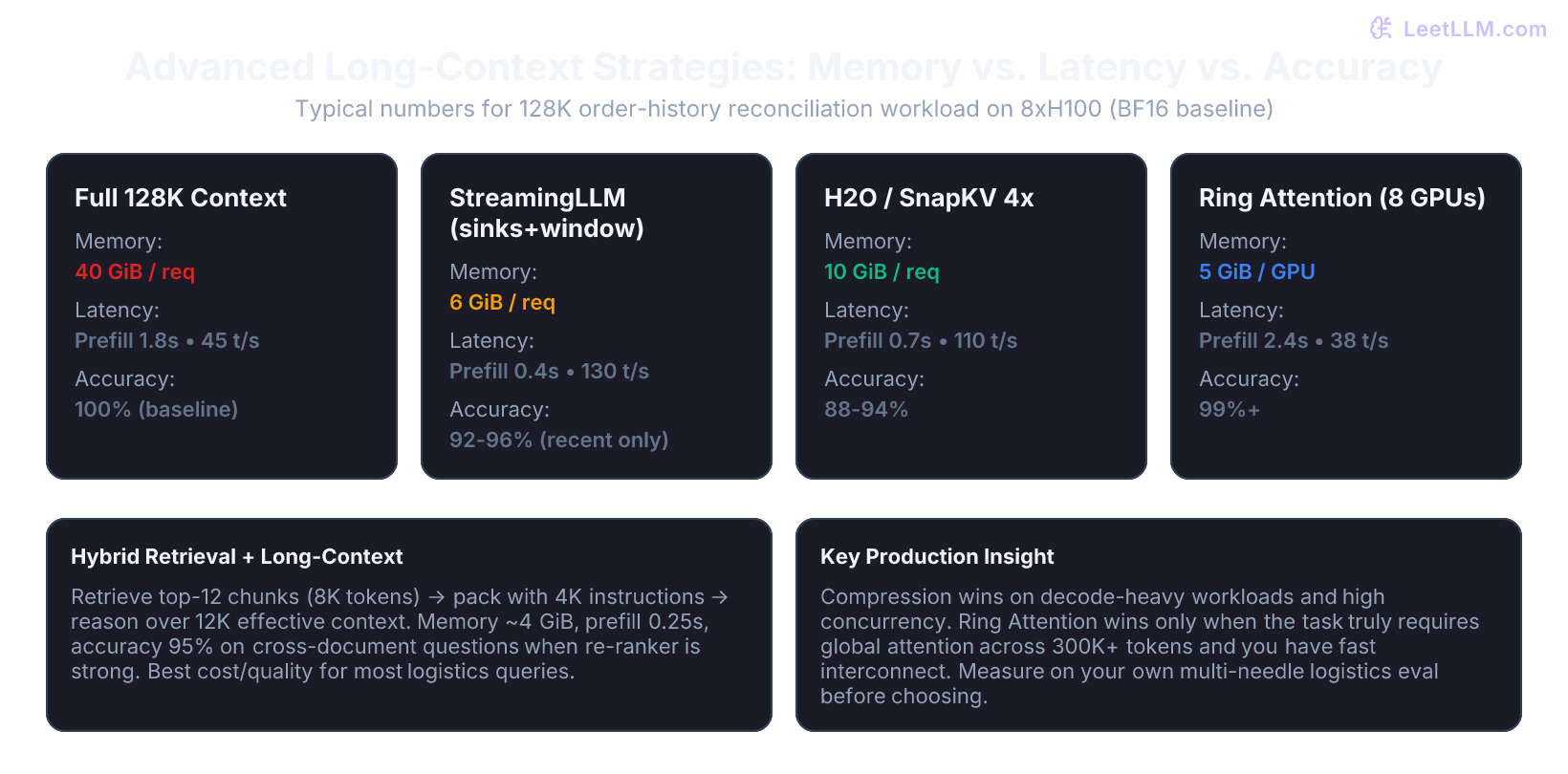

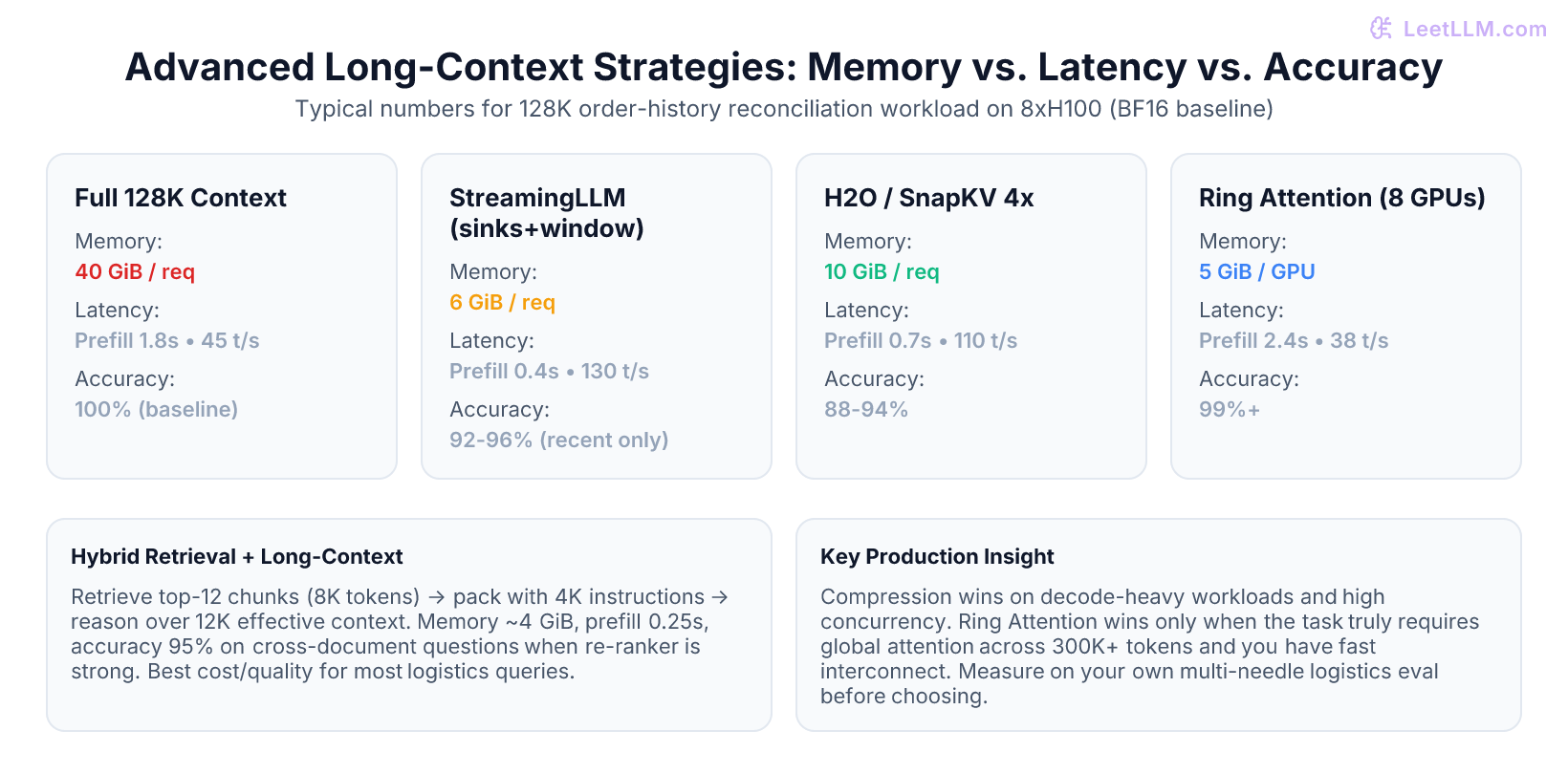

Production cost, latency, and capacity analysis

Theory is useless without numbers. The table below is an illustrative capacity-planning example for an 8xH100 node serving a 70B-class model (GQA, BF16 weights, FP8 or INT8 KV where noted) on a logistics reconciliation workload. Treat it as a sizing worksheet, not a provider benchmark. All times are p50 estimates for a 4K output.

| Strategy | Effective context | KV memory per request | Prefill latency (p50) | Decode speed (tokens/s) | Concurrent requests (80 GB GPU) | Relative accuracy on multi-order tasks |
|---|---|---|---|---|---|---|
| Full 128K | 128K | ~40 GiB | 1.8 s | 45 | 1 | 100% (baseline) |
| StreamingLLM (sinks + 4K window) | Unbounded stream (only sinks + last 4K visible) | ~1.3 GiB | 0.4 s | 130 | 8-10 | 92-96% (recent only) |
| SnapKV / H2O 4x | 128K (compressed) | ~10 GiB | 0.7 s | 110 | 4-5 | 88-94% |
| Ring Attention (8 GPUs) | 1M | ~20 GiB per GPU (FP8 KV) | 2.4 s | 38 | 1 (ring) | 99%+ |
| Hybrid (retrieve 12K) | 12K packed | ~4 GiB | 0.25 s | 140 | 12+ | 94-97% (with strong reranker) |
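The KV memory column can be sanity-checked with a few lines of arithmetic. The sketch below assumes an 80-layer model with 8 KV heads of dimension 128 (a Llama-style 70B shape, which is an assumption rather than something the table states) and 2 bytes per element for BF16; the function name is illustrative.

```python
GIB = 1024 ** 3

def kv_cache_bytes(tokens, layers=80, kv_heads=8, head_dim=128, bytes_per_elem=2):
    """Bytes of KV cache for one request: keys and values for every layer."""
    return 2 * layers * kv_heads * head_dim * bytes_per_elem * tokens

for label, tokens in [("Full 128K", 128 * 1024),
                      ("128K compressed 4x", 32 * 1024),
                      ("Hybrid 12K", 12 * 1024)]:
    print(f"{label:<20} {kv_cache_bytes(tokens) / GIB:6.2f} GiB")
```

Under these assumptions each token costs 320 KiB of KV cache, so 128K tokens come to 40 GiB, a 4x-compressed cache to 10 GiB, and a 12K packed prompt to 3.75 GiB. Passing `bytes_per_elem=1` models an FP8 or INT8 cache.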

Key observations for capacity planning:

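- Decode is almost always memory-bandwidth bound once the KV cache is large. Compression that shrinks the cache raises concurrency roughly in proportion to the memory it frees, and each decode step also gets faster because fewer KV bytes are read per generated token.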

- Prefill remains quadratic in the tokens you actually attend over. SnapKV and H2O still require a full prefill pass on the original prompt before they can decide what to evict; the savings appear only in decode.

- Ring Attention adds communication latency that is only hidden when block size and interconnect are well matched. On a single 8-GPU node with NVLink it is attractive; across racks it often loses to hierarchical retrieval.

- Dollar cost per query is dominated by GPU-seconds. A hybrid approach that serves 12 concurrent short-context requests usually costs far less than one 1M-token Ring Attention request, even before you count the extra GPUs.
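The last observation can be turned into a rough calculation using the illustrative table figures. The sketch below assumes that the table's decode speed is per request, that the hybrid row occupies a single GPU as the concurrency column implies, and that concurrent requests share GPU time evenly. The `usd_per_gpu_hour` value is a placeholder, not a quoted price.

```python
def gpu_seconds_per_request(prefill_s, decode_tok_s, output_tokens, gpus, concurrency):
    """Returns (end-to-end latency, GPU-seconds attributed to one request)."""
    latency = prefill_s + output_tokens / decode_tok_s
    return latency, latency * gpus / concurrency

def usd_per_request(gpu_seconds, usd_per_gpu_hour=2.0):   # placeholder price
    return gpu_seconds * usd_per_gpu_hour / 3600

OUTPUT_TOKENS = 4096   # the table's "4K output"
ring_latency, ring_gpu_s = gpu_seconds_per_request(2.4, 38, OUTPUT_TOKENS, gpus=8, concurrency=1)
hyb_latency, hyb_gpu_s = gpu_seconds_per_request(0.25, 140, OUTPUT_TOKENS, gpus=1, concurrency=12)

for name, lat, gs in [("Ring Attention 1M", ring_latency, ring_gpu_s),
                      ("Hybrid 12K", hyb_latency, hyb_gpu_s)]:
    print(f"{name:<18} latency {lat:7.1f} s  GPU-seconds {gs:8.1f}  ~${usd_per_request(gs):.4f}")
```

With these inputs the Ring Attention request consumes a few hundred times more GPU-seconds than a hybrid request. The exact ratio depends entirely on the assumed figures, so rerun the calculation with measurements from your own traffic.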

Always run your own domain-specific multi-needle and multi-document reasoning benchmark (RULER-style or custom logistics traces) before trusting any of the published compression or scaling numbers.
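A custom multi-needle test does not need much machinery to get started. The sketch below is a minimal harness, not a RULER implementation: the helper names, filler text, and needle sentences are all made up for illustration, and scoring is plain substring matching.

```python
def build_haystack(filler, needles, depths):
    """Insert each needle line at a fractional depth (0.0 = start, 1.0 = end)."""
    lines = list(filler)
    # Insert deepest first so shallower insert positions are not shifted.
    for needle, depth in sorted(zip(needles, depths), key=lambda pair: -pair[1]):
        lines.insert(int(depth * len(filler)), needle)
    return "\n".join(lines)

def needle_recall(answer, expected_values):
    """Fraction of expected values that appear verbatim in the model's answer."""
    found = [v for v in expected_values if v in answer]
    return len(found) / len(expected_values)

filler = [f"Routine scan {i}: no exception." for i in range(100)]
needles = ["Order #48291 was held under carrier code XJ-7.",
           "Refund policy changed on 2021-03-02."]
haystack = build_haystack(filler, needles, depths=[0.1, 0.9])

# A real run would send `haystack` plus a question to the model under test.
print(needle_recall("The hold used code XJ-7.", ["XJ-7", "2021-03-02"]))
```

Sweeping the depths and the number of needles, then repeating the run with each compression setting, gives a domain-specific accuracy curve to set against the published numbers.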

Common mistakes and how to spot them

| Symptom | Likely Cause | Fix |
|---|---|---|
| Ring Attention job runs but decode is slower than single-GPU 128K | Interconnect bandwidth insufficient to hide block transfers | Increase block size, move to intra-node NVLink only, or fall back to compression |
| After applying SnapKV the model misses a critical order ID that appeared once in the middle | Compression policy not tuned on your domain distribution | Add domain-specific needles to the eval set and adjust clustering or heavy-hitter threshold |
| Hybrid pipeline still shows lost-in-the-middle errors inside the packed evidence | Reranker not strong enough or packing ignores position | Upgrade cross-encoder reranker; always place top-3 evidence at both ends of the final context |
| 2M LongRoPE model works on generic text but fails on date arithmetic and SKU cross-references | Insufficient long-context continued training | Collect or synthesize long multi-document logistics traces and run targeted fine-tuning |
| Memory usage looks good in theory but OOMs under load | Ignoring that prefill still materializes full attention scores before compression | Enable FlashAttention + chunked prefill; apply compression earlier in the pipeline |
| StreamingLLM works for chat but collapses on a 200-page PDF forensic query | Sinks + recency cannot capture evidence spread across the whole document | Switch to H2O/SnapKV or hybrid retrieval for document workloads |

What to carry forward

- Ring Attention gives you exact attention at scales that no single device can hold, but only when your interconnect and workload justify the extra GPUs and communication complexity.

- Modern KV eviction (attention sinks, H2O, SnapKV) plus quantization can deliver 4-8x memory reduction; always validate the accuracy impact on your own multi-needle and cross-document reasoning traces.

- A strong production pattern for many large-corpus tasks is hybrid: high-recall retrieval to surface candidates, followed by careful packing and long-context reasoning over a few tens of thousands of tokens.

- Lost-in-the-middle does not disappear at million-token scale. Treat position, re-ranking, and multi-pass extraction as first-class systems concerns.

- RoPE scaling has practical limits. Anything beyond 4-8x the original training length benefits from explicit long-context training data and evaluation.

- Cost and latency numbers matter more than advertised context length. Measure prefill, decode, concurrency, and end-to-end GPU-seconds on your real traffic before committing to an architecture.

Long-context engineering beyond basic window management is where algorithmic insight (blockwise rings, attention-based eviction, progressive RoPE search) meets systems reality (interconnect bandwidth, KV quantization, domain-specific evals, and cost modeling). The engineers who can reason across both sides build the systems that turn terabytes of historical order data into reliable, low-latency answers instead of expensive guesses.

Evaluation Rubric

1. Explain how Ring Attention achieves linear scaling of context length with number of devices while keeping communication overlapped

2. Compare KV cache eviction policies (attention sinks, H2O heavy hitters, SnapKV) and quantify their memory savings versus accuracy trade-offs

3. Design a hybrid retrieval-plus-long-context system for a 500-page order history corpus and justify the chunk size, top-k, and final context budget

4. Describe three practical mitigations for lost-in-the-middle at production scale and when each is cheapest to implement

5. Analyze the RoPE scaling limits and when LongRoPE or progressive rescaling becomes necessary over standard YaRN

6. Calculate the end-to-end cost and latency impact of applying 4x context compression versus adding two more GPUs for Ring Attention on a 1M-token workload

Common Pitfalls

- Assuming Ring Attention gives you infinite context for free: you still need enough aggregate GPU memory for the block size and high-bandwidth fabric, otherwise communication becomes the bottleneck

- Applying aggressive KV eviction (H2O or SnapKV) without domain-specific needle or RULER evaluation: compression that works on generic text often drops 15-30 points on logistics reconciliation tasks that need rare but critical order IDs

- Treating hybrid RAG + long-context as simple 'retrieve then stuff': without careful packing, re-ranking, and position optimization you still hit lost-in-the-middle inside the final long context

- Over-relying on RoPE scaling numbers from papers: LongRoPE and YaRN results are usually reported after some continued training; zero-shot extension often fails earlier than advertised

- Ignoring that compression changes the prefill/decode balance: some methods speed up decode dramatically but still require full quadratic prefill on the original prompt before compression is applied

- Using a single global compression ratio for all requests: order-status queries may tolerate 8x compression while multi-order forensic analysis needs near-full context

- Forgetting that StreamingLLM attention sinks only work well when the initial tokens really act as sinks for your model family; some instruction-tuned models lose the property after heavy post-training

Key Concepts Tested

- Ring Attention blockwise computation and ring communication for linear device scaling

- KV cache compression: attention sinks, heavy-hitter eviction (H2O), SnapKV clustering, quantization

- Hybrid retrieval + long-context pipelines and when they outperform pure RAG or pure long-context

- Lost-in-the-middle mitigations: document re-ranking, position reordering, multi-pass reasoning, instruction anchoring

- RoPE extension limits and advanced scaling methods (LongRoPE, progressive interpolation)

- Production cost and latency: per-GPU memory, interconnect bandwidth requirements, prefill/decode impact of compression

- StreamingLLM for infinite-length inference without fine-tuning

- RULER and multi-needle evaluations for compression and hybrid systems

Next Step

Next: Continue to Mixture of Experts Architecture

There, you will learn how sparse expert routing delivers dramatically better capacity-per-compute than dense models, the serving challenges unique to MoE, and why production teams choose DeepSeek-V2/V3-style designs for high-throughput inference.

References

[1] Liu, H., et al. (2024). Ring Attention with Blockwise Transformers for Near-Infinite Context. arXiv preprint.

[2] Liu, N.F., et al. (2023). Lost in the Middle: How Language Models Use Long Contexts. TACL 2023.

[3] Peng, B., et al. (2023). YaRN: Efficient Context Window Extension of Large Language Models.

[4] Xiao, G., Tian, Y., Chen, B., Han, S., Lewis, M. (2023). Efficient Streaming Language Models with Attention Sinks. ICLR 2024.

[5] Zhang, Z., Sheng, Y., Zhou, T., et al. (2023). H2O: Heavy-Hitter Oracle for Efficient Generative Inference of Large Language Models.

[6] Li, Y., Huang, Y., Yang, L., et al. (2024). SnapKV: LLM Knows What You are Looking for Before Generation.

[7] Ding, Y., Zhang, L., Zhang, C., et al. (2024). LongRoPE: Extending LLM Context Window Beyond 2 Million Tokens.

[8] Hsieh, C.-Y., et al. (2024). RULER: What's the Real Context Size of Your Long-Context Language Models?