🏛️EasyModel Architecture

Autoencoders and VAEs

Master the encoder-decoder bottleneck of autoencoders, the probabilistic latent distributions of VAEs, KL divergence regularization, and the reparameterization trick. Build a minimal NumPy VAE on tiny logistics image patches, then connect the ideas directly to latent diffusion models used for product photo generation and document synthesis.

9 min read · Google, Meta, OpenAI +5 · 12 key concepts

Learning path

Step 22 of 138 in the full curriculum

A customer-support agent uploads a photo of a cracked laptop bezel next to the shipping label. The internal claim classifier needs to decide whether this is a new damage pattern it has never seen before. Storing every past photo at full resolution is expensive. Generating synthetic variations of "similar cracked bezels" would help the classifier train on more examples without waiting for real returns. How can a model compress a photo into a handful of numbers that still let you reconstruct the original faithfully and also sample new, plausible variations?

The answer lies in autoencoders and their probabilistic extension, variational autoencoders (VAEs). These architectures learn to squeeze data through a narrow latent bottleneck and then expand it back out, while the VAE version turns the bottleneck into a well-behaved probability distribution from which you can draw new samples.[1]

💡 Key insight: An autoencoder forces every input through a low-dimensional code (the latent vector). A vanilla autoencoder gives you one fixed code per input and is great for compression and denoising. A VAE instead outputs the parameters of a small Gaussian around that code and regularizes every Gaussian toward the same standard normal prior. The result is a continuous, densely populated latent space where random draws decode into coherent new examples. This single change is the foundation that later enabled latent diffusion models used in Stable Diffusion and product-image generators.

The information bottleneck

A 224 by 224 color product photo contains more than 150 000 numbers. Many of those numbers are redundant: neighboring pixels are highly correlated, edges and textures repeat, and the overall "style" of a laptop bezel or shipping label is shared across thousands of images.

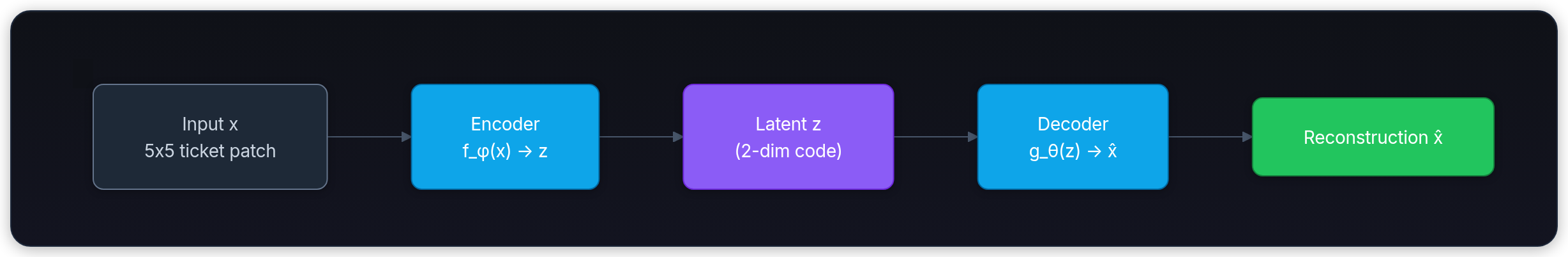

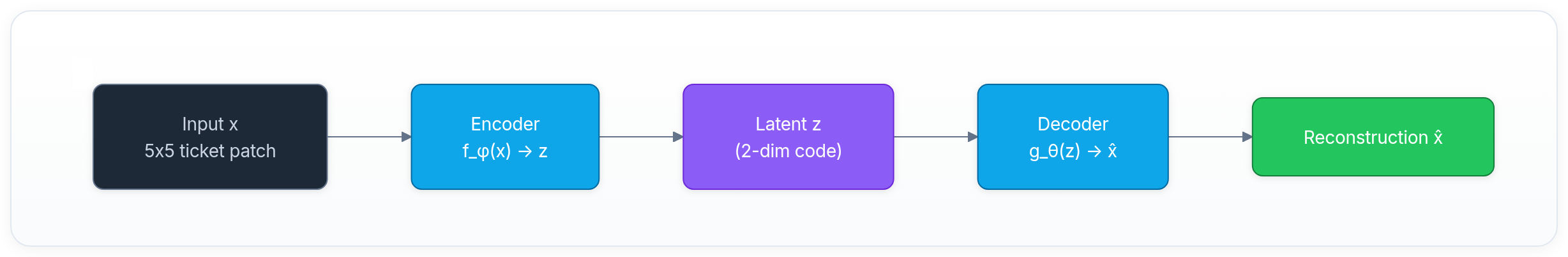

An autoencoder learns to discard the redundancy. It consists of two parts:

- An encoder that maps the high-dimensional input x into a much smaller latent code z.

- A decoder that maps z back to a reconstruction x̂ that should be as close as possible to the original x.

The latent dimension (often 32, 128, or even 2 for visualization) is the bottleneck. Because the decoder only has access to z, the encoder must discover which features matter most for faithful reconstruction.
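To make the size of the bottleneck concrete, the short sketch below counts the input values of a 224 by 224 RGB photo and divides by a few latent sizes. The helper name `compression_ratio` is illustrative only and does not come from any library.

```python
def compression_ratio(height, width, channels, latent_dim):
    """Return (number of input values, input values per latent value)."""
    n_input = height * width * channels
    return n_input, n_input / latent_dim

# Latent sizes mentioned in the text: 2 (visualization), 32, and 128
for latent_dim in (2, 32, 128):
    n_input, ratio = compression_ratio(224, 224, 3, latent_dim)
    print(f"latent_dim={latent_dim:>3}: {n_input} inputs, {ratio:,.0f}x fewer numbers in z")
```

Even the largest of these latent sizes keeps fewer than one number per thousand input values, which is why the encoder has to be selective about what it preserves.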

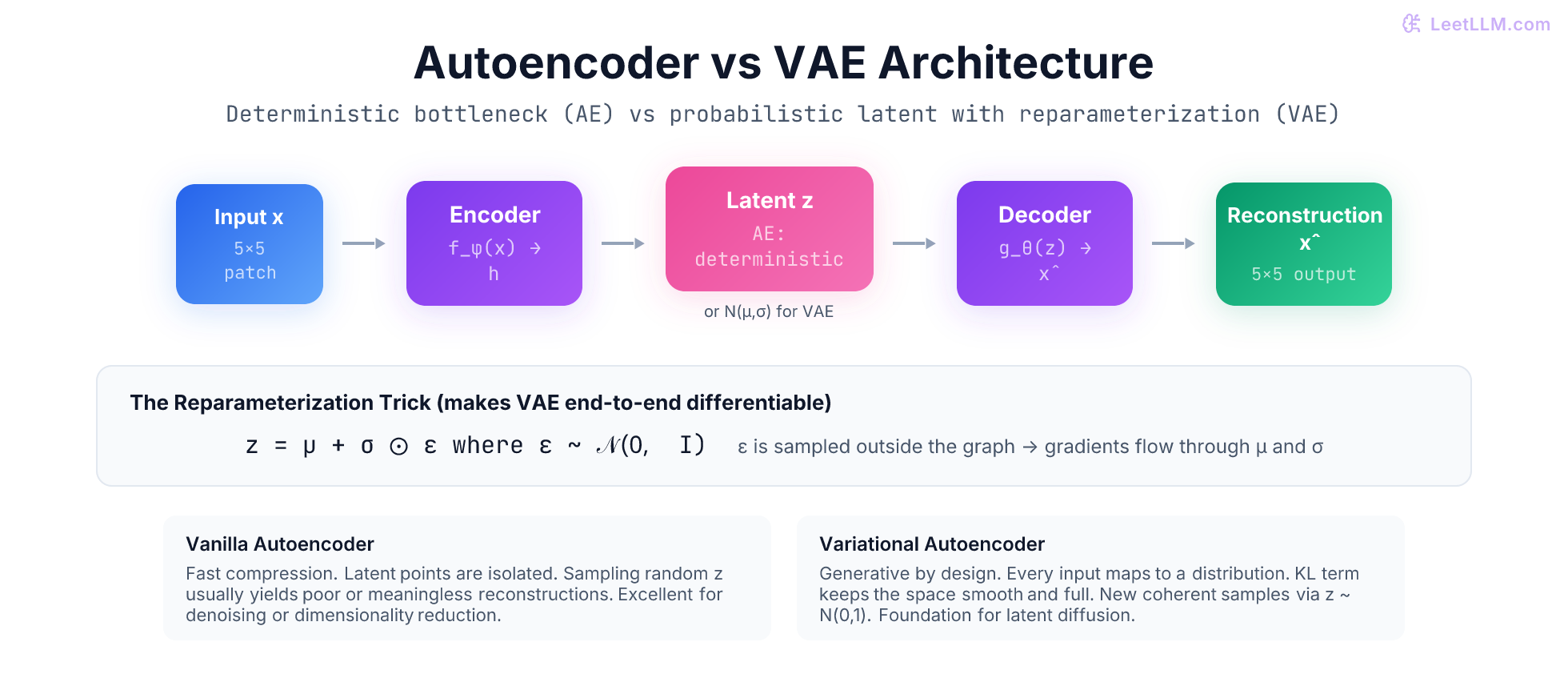

Vanilla autoencoders: deterministic compression

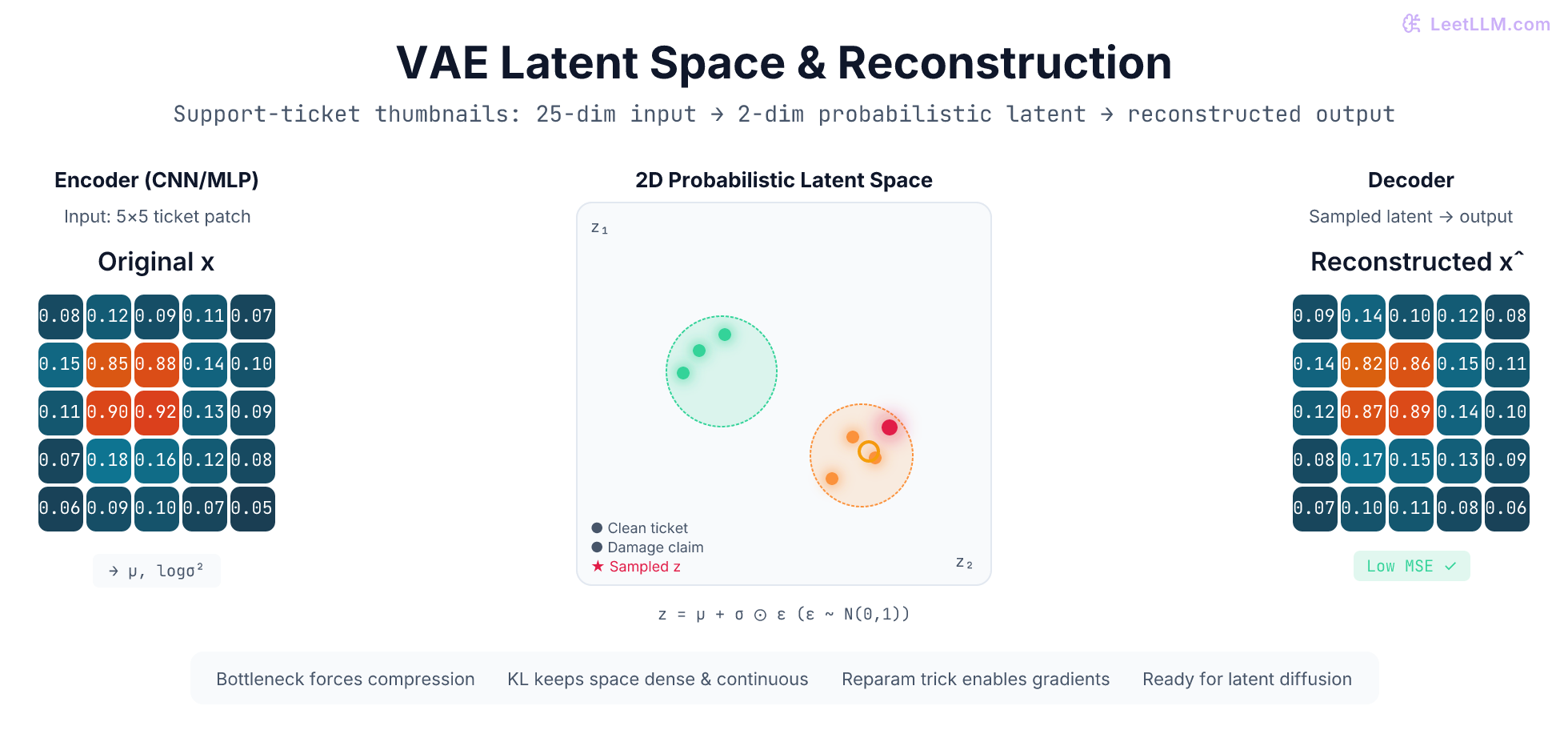

Consider a tiny 5 by 5 grayscale patch taken from a support-ticket photo (values between 0 and 1 represent background paper versus printed ink or physical damage).

A simple encoder could be a fully-connected layer that reduces the 25-pixel vector to a 2-dimensional latent code. The decoder is another layer that expands the 2 numbers back to 25 pixels. Training minimizes the mean-squared error between the original patch and the reconstructed patch.

The reconstruction loss is simply:

```text
L_recon = (1 / N) * sum( (x_i - x̂_i)^2 )
```

Because the latent code is a single deterministic vector for each input, training is straightforward with ordinary backpropagation.

Here is a minimal forward pass expressed in NumPy for illustration (weights would normally be learned):

```python
import numpy as np

def simple_ae_forward(x, W_enc, b_enc, W_dec, b_dec):
    """Tiny deterministic autoencoder on a flattened 5x5 patch."""
    z = np.maximum(0, x @ W_enc + b_enc)  # encoder + ReLU
    x_hat = z @ W_dec + b_dec             # decoder (linear)
    return z, x_hat

# Example 25-dim input (flattened 5x5 ticket patch)
x = np.array([0.08,0.12,0.09,0.11,0.07, 0.15,0.85,0.88,0.14,0.10,
              0.11,0.90,0.92,0.13,0.09, 0.07,0.18,0.16,0.12,0.08,
              0.06,0.09,0.10,0.07,0.05], dtype=np.float32)

# Random small weights for demo (real training learns them)
np.random.seed(42)
W_enc = np.random.randn(25, 2).astype(np.float32) * 0.1
b_enc = np.zeros(2, dtype=np.float32)
W_dec = np.random.randn(2, 25).astype(np.float32) * 0.1
b_dec = np.zeros(25, dtype=np.float32)

z, x_hat = simple_ae_forward(x, W_enc, b_enc, W_dec, b_dec)
print("Latent code z:", z)
print("Reconstruction MSE:", np.mean((x - x_hat)**2))
```

The 2-dimensional z is a compressed summary. Once the weights have been trained, similar patches (two different photos of cracked bezels) tend to produce nearby z vectors, and linear interpolation between two z vectors often yields a plausible intermediate image when decoded. This is the beginning of a useful latent space.
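The interpolation idea can be sketched in a few lines. This is an illustration of the mechanics only: the two latent codes are made-up values, and the decoder weights are random stand-ins rather than trained weights, so the decoded patches carry no visual meaning here.

```python
import numpy as np

def interpolate_latents(z_a, z_b, num_steps=5):
    """Evenly spaced points on the straight line from z_a to z_b (inclusive)."""
    t = np.linspace(0.0, 1.0, num_steps)[:, None]   # shape (num_steps, 1)
    return (1.0 - t) * z_a + t * z_b                # shape (num_steps, latent_dim)

rng = np.random.default_rng(0)
W_dec = rng.normal(scale=0.1, size=(2, 25))  # stand-in decoder weights (untrained)
b_dec = np.zeros(25)

z_a = np.array([0.2, 1.0])   # hypothetical code for one cracked-bezel patch
z_b = np.array([1.4, 0.3])   # hypothetical code for another

z_path = interpolate_latents(z_a, z_b, num_steps=5)
patches = (z_path @ W_dec + b_dec).reshape(-1, 5, 5)  # one 5x5 patch per step
print(z_path)
print(patches.shape)
```

With a trained decoder, the middle rows of `patches` would be the intermediate images that the paragraph above describes.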

Latent space as a manifold

After training, the latent codes of all support-ticket patches concentrate in a structured region of the 2-D plane (in higher-dimensional latent spaces this region is a low-dimensional surface, a manifold). Points that are close in this region decode to visually similar patches. This geometric structure is useful for downstream tasks: nearest-neighbor search in latent space finds visually similar past claims, and small walks along the manifold generate smooth morphs between two product photos.
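Nearest-neighbor retrieval in latent space reduces to a distance computation over stored codes. The sketch below assumes Euclidean distance and uses a small hand-written bank of 2-D codes; both the values and the helper name `nearest_latents` are hypothetical.

```python
import numpy as np

def nearest_latents(query_z, bank_z, k=3):
    """Indices of the k codes in bank_z closest to query_z (Euclidean distance)."""
    dists = np.linalg.norm(bank_z - query_z, axis=1)
    return np.argsort(dists)[:k]

# Hypothetical latent codes of five past claims
bank_z = np.array([[0.1, 0.9],
                   [0.2, 1.1],
                   [1.5, 0.2],
                   [1.4, 0.3],
                   [0.8, 0.6]])
query_z = np.array([0.12, 0.95])   # code of the newly uploaded patch

print(nearest_latents(query_z, bank_z, k=2))
```

For large archives a dedicated approximate nearest-neighbor index would replace the brute-force sort, but the principle is the same.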

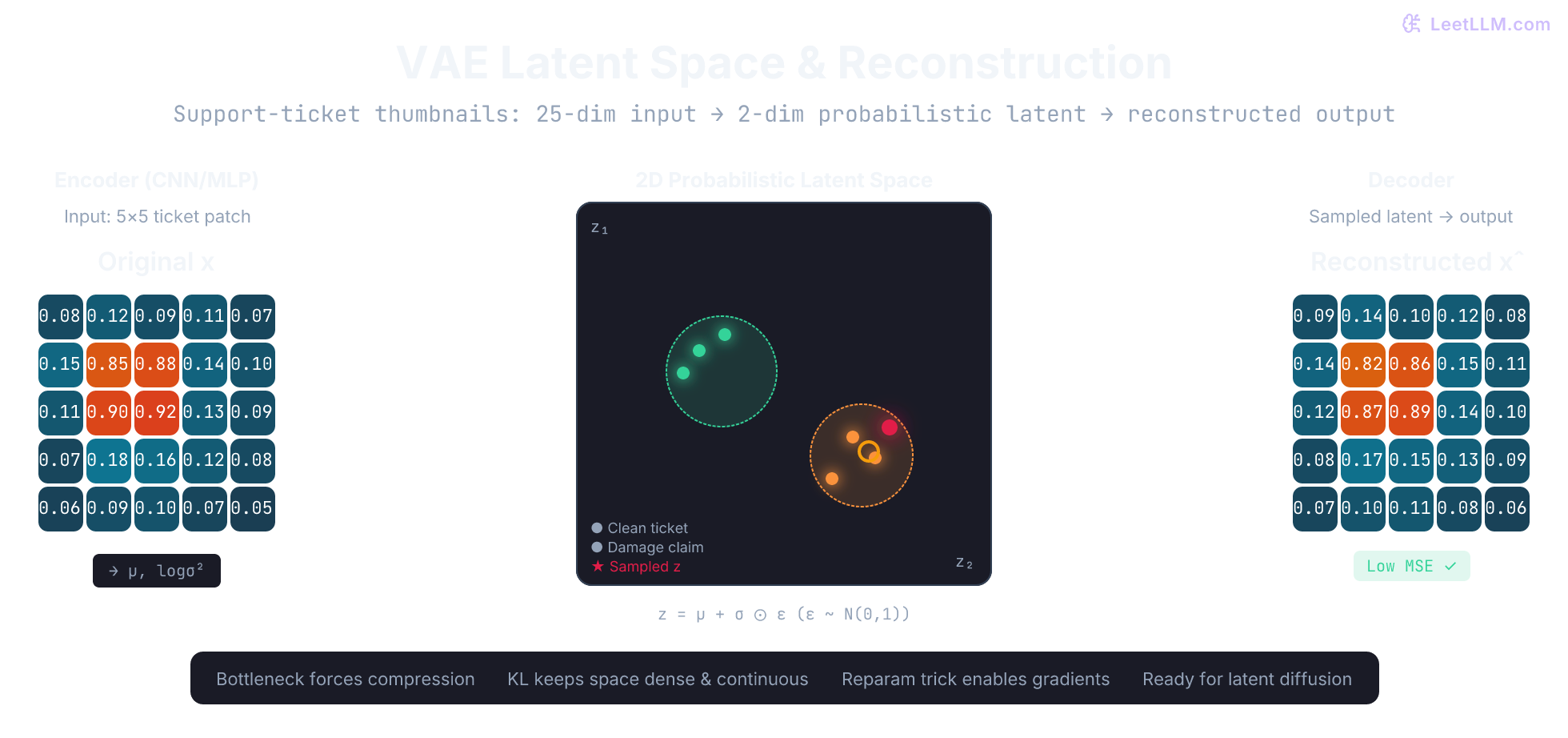

The figure below shows a 2-D latent space populated by many such patches together with the original and reconstructed 5 by 5 thumbnails for one example.

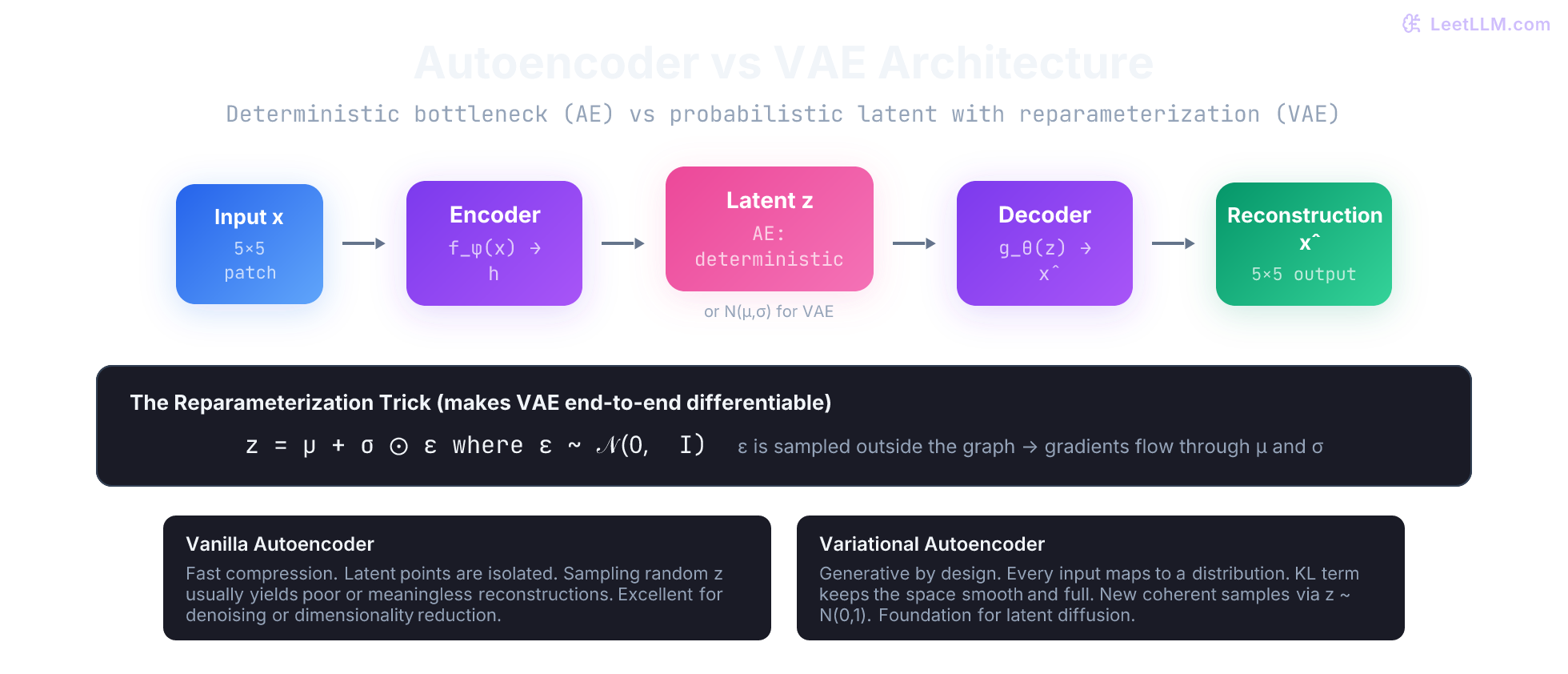

The fatal flaw for generation

A deterministic autoencoder is excellent for compression and retrieval, but terrible for generation. Nothing constrains where in latent space the encoder places its codes or how much empty space it leaves between them, and nothing forces the decoder to know what to do with a point that was never seen during training. Pick a random location in the 2-D plane and decode it: the output is usually noise.

In other words, the latent space contains holes. The decoder only learned to reconstruct points that the encoder actually produced on real data.

Variational autoencoders: probabilistic latents

A variational autoencoder fixes the holes by changing what the encoder outputs. Instead of a single point z, the encoder now outputs the mean and variance of a Gaussian distribution:

```text
encoder(x) → μ, log σ²
```

For every training example we now have a small cloud of possible latent vectors centered at μ with spread σ. During training we sample one z from that cloud and ask the decoder to reconstruct x from it. The loss becomes:

```text
L = L_recon(x, x̂) + β * KL( N(μ, σ²) || N(0, I) )
```

The second term (KL divergence) pushes every per-example Gaussian toward the same unit Gaussian prior. The result is that the entire latent space stays populated and well-behaved. You can now safely draw z ~ N(0, I) and decode; the output will be a coherent new sample because the decoder has seen similar z values for every training point.
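Generation from the prior is mechanically simple: draw z from a standard normal and run only the decoder. The sketch below shows that step with a linear decoder. The weights are random stand-ins, not a trained model, so the outputs only demonstrate shapes and data flow; clipping to [0, 1] is an added assumption to keep values in the pixel range.

```python
import numpy as np

def sample_from_prior(W_dec, b_dec, num_samples, rng):
    """Draw z ~ N(0, I) and decode each draw with a linear decoder."""
    latent_dim = W_dec.shape[0]
    z = rng.standard_normal((num_samples, latent_dim))
    x_new = np.clip(z @ W_dec + b_dec, 0.0, 1.0)   # keep pixel values in [0, 1]
    return z, x_new

rng = np.random.default_rng(0)
W_dec = rng.normal(scale=0.1, size=(2, 25))   # stand-in for trained decoder weights
b_dec = np.full(25, 0.1)                      # stand-in for a learned bias

z, x_new = sample_from_prior(W_dec, b_dec, num_samples=4, rng=rng)
print(z.shape, x_new.reshape(-1, 5, 5).shape)
```

Note that the encoder is not involved at all at generation time; it is only needed during training and for encoding existing images.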

KL divergence: the regularizer that enables generation

KL divergence between two Gaussians has a simple closed form. For our per-example diagonal posterior N(μ, σ²) and the prior N(0, I), summed over the latent dimensions, it equals:

```text
KL = -0.5 * sum( 1 + log σ² - μ² - σ² )
```

When μ is far from zero or σ is far from one, the KL term grows and the optimizer is penalized. The encoder therefore cannot "cheat" by sending different examples to wildly different regions of latent space. Everything is encouraged to live near the origin with unit variance, exactly where the decoder has learned to produce good reconstructions.

The hyperparameter β controls the strength of this pressure. Low β favors faithful reconstruction (the model may still leave holes). Higher β produces a more disentangled, generative latent space at the possible cost of slightly blurrier reconstructions.
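A few hand-picked values show how the closed-form KL term behaves. The function below writes the formula with the sign distributed into the sum, which is algebraically the same expression; the name `kl_to_standard_normal` is illustrative.

```python
import numpy as np

def kl_to_standard_normal(mu, logvar):
    """KL( N(mu, diag(sigma^2)) || N(0, I) ), summed over latent dimensions.

    Same as -0.5 * sum(1 + logvar - mu^2 - exp(logvar)).
    """
    return 0.5 * np.sum(mu**2 + np.exp(logvar) - logvar - 1.0)

kl_prior = kl_to_standard_normal(np.zeros(2), np.zeros(2))             # posterior equals prior
kl_shift = kl_to_standard_normal(np.array([2.0, 0.0]), np.zeros(2))    # mean moved away from 0
kl_narrow = kl_to_standard_normal(np.zeros(2), np.log(np.full(2, 0.01)))  # variance far below 1

print(kl_prior, kl_shift, kl_narrow)
```

The term is zero only when the posterior matches the prior and grows both when the mean drifts from the origin and when the variance shrinks toward a point estimate. In the full loss this value is multiplied by β before being added to the reconstruction term.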

The reparameterization trick

There is one subtle but important implementation detail. If you literally sample z ~ N(μ, σ²) inside the forward pass, the sampling operation is not differentiable with respect to μ and σ. Backpropagation stops there and the encoder never learns.

The reparameterization trick rewrites the sample as a deterministic function of μ and σ plus an external noise source:

```text
z = μ + σ ⊙ ε
where ε ∼ N(0, I) is drawn once and treated as constant during the backward pass
```

Now μ and σ are ordinary differentiable outputs of the encoder, the multiplication and addition are differentiable, and gradients flow cleanly all the way back to the encoder weights. The figure below shows the full flow with the reparameterization step highlighted.
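The claim that gradients flow through the reparameterized sample can be checked numerically. The sketch below, using arbitrary example values for μ and log σ², holds ε fixed and compares the analytic partial derivatives of z against central finite differences.

```python
import numpy as np

def reparameterize(mu, logvar, eps):
    """z = mu + sigma * eps, with sigma = exp(0.5 * logvar)."""
    return mu + np.exp(0.5 * logvar) * eps

mu = np.array([0.3, -0.1])        # arbitrary example values
logvar = np.array([-1.0, 0.5])
eps = np.random.default_rng(0).standard_normal(2)   # drawn once, then held constant

# Analytic partial derivatives of z (elementwise) with eps treated as a constant
dz_dmu = np.ones_like(mu)
dz_dlogvar = 0.5 * np.exp(0.5 * logvar) * eps

# Central finite differences with the same eps
h = 1e-6
fd_mu = (reparameterize(mu + h, logvar, eps) - reparameterize(mu - h, logvar, eps)) / (2 * h)
fd_logvar = (reparameterize(mu, logvar + h, eps) - reparameterize(mu, logvar - h, eps)) / (2 * h)

print(np.allclose(fd_mu, dz_dmu, atol=1e-6), np.allclose(fd_logvar, dz_dlogvar, atol=1e-6))
```

Because each component of z depends only on the matching components of μ and log σ², the elementwise perturbation is enough to recover every partial derivative.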

A minimal VAE in pure NumPy

We now have every piece needed for a VAE forward pass on our 5 by 5 ticket patches. The code below implements a complete (tiny) VAE forward pass, the reparameterization step, and both loss terms, then repeats the forward pass five times over a four-patch toy dataset. No weights are updated in this loop, so the printed values vary only with the sampled noise ε.

```python
import numpy as np

def relu(x):
    return np.maximum(0.0, x)

def vae_forward(x, params):
    """Tiny VAE on flattened 5x5 patches. Returns z, x_hat, mu, logvar, kl, recon."""
    W_enc, b_enc, W_mu, b_mu, W_logvar, b_logvar, W_dec, b_dec = params

    h = relu(x @ W_enc + b_enc)          # shared encoder hidden
    mu = h @ W_mu + b_mu                 # mean
    logvar = h @ W_logvar + b_logvar     # log variance

    # Reparameterization trick
    eps = np.random.randn(*mu.shape).astype(np.float32)
    z = mu + np.exp(0.5 * logvar) * eps  # z ~ N(mu, sigma^2)

    x_hat = z @ W_dec + b_dec            # decoder

    # Losses
    recon = np.mean((x - x_hat) ** 2)
    # Summed over latent dimensions, matching the closed-form KL expression
    kl = -0.5 * np.sum(1 + logvar - mu**2 - np.exp(logvar))
    return z, x_hat, mu, logvar, kl, recon

# Toy dataset: two clean-ish and two damage-ish 5x5 patches (flattened)
X = np.array([
    [0.08,0.12,0.09,0.11,0.07,0.15,0.85,0.88,0.14,0.10,0.11,0.90,0.92,0.13,0.09,0.07,0.18,0.16,0.12,0.08,0.06,0.09,0.10,0.07,0.05],
    [0.07,0.10,0.08,0.09,0.06,0.12,0.82,0.85,0.11,0.08,0.09,0.88,0.90,0.10,0.07,0.06,0.15,0.14,0.09,0.07,0.05,0.08,0.09,0.06,0.04],
    [0.09,0.14,0.12,0.13,0.10,0.18,0.25,0.28,0.20,0.15,0.22,0.30,0.27,0.19,0.16,0.12,0.88,0.85,0.20,0.14,0.10,0.15,0.18,0.12,0.08],
    [0.10,0.16,0.13,0.15,0.11,0.20,0.28,0.25,0.22,0.18,0.25,0.32,0.30,0.21,0.17,0.14,0.90,0.87,0.23,0.15,0.12,0.17,0.20,0.13,0.09],
], dtype=np.float32)

# Tiny parameter set (real code would use proper init + optimizer)
np.random.seed(123)
params = [
    np.random.randn(25, 8).astype(np.float32) * 0.05,  # W_enc
    np.zeros(8, dtype=np.float32),                     # b_enc
    np.random.randn(8, 2).astype(np.float32) * 0.05,   # W_mu
    np.zeros(2, dtype=np.float32),                     # b_mu
    np.random.randn(8, 2).astype(np.float32) * 0.05,   # W_logvar
    np.zeros(2, dtype=np.float32),                     # b_logvar
    np.random.randn(2, 25).astype(np.float32) * 0.05,  # W_dec
    np.zeros(25, dtype=np.float32),                    # b_dec
]

# The weights are never updated here; each pass only redraws eps
print("Average losses over the toy patches (no weight updates):")
for step in range(5):
    total_kl = 0.0
    total_recon = 0.0
    for x in X:
        _, _, _, _, kl, recon = vae_forward(x, params)
        total_kl += kl
        total_recon += recon
    print(f"Pass {step}: recon={total_recon/len(X):.4f} kl={total_kl/len(X):.4f}")
```

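Running the script prints the average reconstruction and KL terms for each pass. Because the weights stay at their random initial values, the reconstruction term does not drop; it only fluctuates with the sampled ε. The KL term starts near zero because the small initial weights put μ and log σ² close to zero, which already matches the prior. Watching the reconstruction term fall requires computing gradients and applying an optimizer step.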
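A training loop needs gradients for every parameter. The sketch below is one way to write them by hand in NumPy, under several simplifying assumptions that are not part of the lesson's code: the encoder is linear (no hidden layer) to keep the backward pass short, the patches are synthetic (a bright blob in one of two positions plus noise), and the learning rate, step count, and β = 0.01 are arbitrary illustrative choices. All function names are hypothetical.

```python
import numpy as np

def make_patches(rng, n_per_class=8):
    """Synthetic 5x5 patches: a bright blob in one of two positions, plus noise."""
    a = np.full((5, 5), 0.1)
    b = np.full((5, 5), 0.1)
    a[1:3, 1:3] = 0.9
    b[3, 1:3] = 0.9
    X = np.repeat(np.stack([a.ravel(), b.ravel()]), n_per_class, axis=0)
    return np.clip(X + rng.normal(scale=0.02, size=X.shape), 0.0, 1.0)

def init_params(rng, d=25, k=2, scale=0.05):
    return {
        "W_mu": rng.normal(scale=scale, size=(d, k)), "b_mu": np.zeros(k),
        "W_lv": rng.normal(scale=scale, size=(d, k)), "b_lv": np.zeros(k),
        "W_dec": rng.normal(scale=scale, size=(k, d)), "b_dec": np.zeros(d),
    }

def loss_and_grads(X, p, eps, beta):
    """Forward pass plus hand-derived gradients of recon + beta * kl."""
    B, D = X.shape
    mu = X @ p["W_mu"] + p["b_mu"]
    logvar = X @ p["W_lv"] + p["b_lv"]
    std = np.exp(0.5 * logvar)
    z = mu + std * eps                          # reparameterized sample
    x_hat = z @ p["W_dec"] + p["b_dec"]

    recon = np.mean((x_hat - X) ** 2)           # mean over batch and pixels
    kl = 0.5 * np.sum(mu**2 + np.exp(logvar) - logvar - 1.0) / B  # mean over batch

    d_xhat = 2.0 * (x_hat - X) / (B * D)        # d recon / d x_hat
    dz = d_xhat @ p["W_dec"].T
    d_mu = dz + beta * mu / B                   # dz/dmu = 1
    d_lv = dz * eps * 0.5 * std + beta * 0.5 * (np.exp(logvar) - 1.0) / B
    grads = {
        "W_dec": z.T @ d_xhat, "b_dec": d_xhat.sum(axis=0),
        "W_mu": X.T @ d_mu, "b_mu": d_mu.sum(axis=0),
        "W_lv": X.T @ d_lv, "b_lv": d_lv.sum(axis=0),
    }
    return recon, kl, grads

def train(X, p, rng, steps=2000, lr=0.2, beta=0.01):
    """Plain full-batch gradient descent; returns (recon, kl) per step."""
    history = []
    for _ in range(steps):
        eps = rng.standard_normal((X.shape[0], p["b_mu"].size))
        recon, kl, grads = loss_and_grads(X, p, eps, beta)
        for name in p:
            p[name] -= lr * grads[name]
        history.append((recon, kl))
    return history

rng = np.random.default_rng(0)
X_toy = make_patches(rng)
toy_params = init_params(rng)
history = train(X_toy, toy_params, rng)

first_recon = history[0][0]
last_recon = float(np.mean([r for r, _ in history[-100:]]))
print(f"recon: first step {first_recon:.4f}, mean of last 100 steps {last_recon:.4f}")
```

In practice an automatic-differentiation framework would replace `loss_and_grads`; writing the gradients out once mainly makes visible that ε enters the backward pass as a plain constant.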

Connection to modern generative models

The VAE latent space is exactly the representation that latent diffusion models exploit. In Stable Diffusion (and the image-generation systems used for synthetic product photos, marketing mock-ups, and data augmentation):

- A pretrained VAE encoder compresses every 512 by 512 training image once into a 64 by 64 by 4 latent tensor (64 times fewer spatial locations).

- The diffusion process (U-Net or DiT) learns to denoise entirely inside this compact latent space.

- Only at the very end is the final clean latent passed through the VAE decoder to obtain pixels.

Because the heavy iterative work happens in the tiny latent space, high-resolution generation becomes practical on consumer GPUs. The VAE is kept frozen during diffusion training; its job is simply to provide a perceptually good compression that the diffusion model can trust.
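The savings can be put in numbers using the shapes quoted above (a 512 by 512 RGB image and a 64 by 64 by 4 latent). The helper below is a plain arithmetic sketch with an illustrative name.

```python
def latent_compression(image_hw=512, image_channels=3, latent_hw=64, latent_channels=4):
    """Return (pixel values, latent values, spatial reduction, value reduction)."""
    pixel_values = image_hw * image_hw * image_channels
    latent_values = latent_hw * latent_hw * latent_channels
    spatial_reduction = (image_hw // latent_hw) ** 2
    return pixel_values, latent_values, spatial_reduction, pixel_values / latent_values

pixel_values, latent_values, spatial_reduction, value_reduction = latent_compression()
print(pixel_values, latent_values, spatial_reduction, value_reduction)
```

Each denoising step therefore touches 64 times fewer spatial locations and 48 times fewer numbers than it would in pixel space, and that saving is repeated at every step of the iterative sampler.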

A related pattern appears in multimodal LLM pipelines: an image of a returned laptop is first passed through a learned image encoder (a VAE-style tokenizer in some systems, a vision transformer in many others) that turns it into "visual tokens", which are then mapped into the embedding space the language model uses for text tokens.

You now understand the two fundamental ideas that power almost every modern generative vision system: the information bottleneck that forces useful compression, and the probabilistic latent space plus reparameterization trick that turns that bottleneck into a generator.

Key takeaways

- An autoencoder learns a compressed latent code by training an encoder-decoder pair to minimize reconstruction error.

- The latent space organizes similar inputs near each other; linear walks often produce meaningful interpolations.

- Vanilla autoencoders produce isolated points, leaving holes that make random sampling useless for generation.

- A VAE models every input as a small Gaussian; the KL term regularizes every Gaussian toward the unit normal prior, filling the space.

- The reparameterization trick z = μ + σ ⊙ ε lets gradients flow through the sampling operation so the whole model trains end-to-end.

- Latent diffusion (Stable Diffusion and its successors) runs the expensive diffusion process in the VAE latent space and decodes only once at the end, which is why high-resolution product and document image generation became practical.

- Learned image encoders, VAE-style tokenizers among them, are the bridge between raw pixels and the token space that multimodal LLMs consume.

With a working mental model and runnable NumPy code for both deterministic and variational autoencoders, you are ready to understand exactly why latent diffusion models work and how to reason about their compression trade-offs in production multimodal systems.

Evaluation Rubric

1. Explains an autoencoder as an encoder that compresses input into a low-dimensional latent code followed by a decoder that reconstructs the input from the code

2. Derives why the reconstruction loss is typically mean-squared error between input and output

3. Describes the latent space as a manifold where similar inputs cluster and linear interpolations can produce meaningful intermediate reconstructions

4. Identifies the core problem with deterministic autoencoders for generation: arbitrary points in latent space often decode to garbage

5. Shows how a VAE models the encoder output as parameters of a Gaussian (mean and variance) rather than a single point

6. Explains the role of the KL divergence term that regularizes each latent posterior toward N(0, I)

7. Derives and applies the reparameterization trick so that sampling from the latent distribution remains differentiable for backpropagation

8. Implements a complete tiny VAE (encoder, reparam sample, decoder) plus loss in pure NumPy on a small synthetic dataset

9. Connects VAE latent compression directly to why latent diffusion (Stable Diffusion) first encodes images to a smaller latent before running the diffusion process

Common Pitfalls

- Treating the VAE encoder output as a single latent vector instead of the parameters of a full distribution (mu and sigma)

- Forgetting to use the reparameterization trick and trying to backprop through a direct sampling call such as np.random.normal(mu, sigma)

- Setting beta (KL weight) to zero or one without understanding the reconstruction vs regularization trade-off

- Assuming any point in latent space can be decoded after training a vanilla autoencoder

- Ignoring that the KL term can collapse the latent space (posterior collapse) when beta is too large

- Confusing the VAE prior (standard normal) with the approximate posterior (encoder output)

- Thinking the VAE decoder is part of the diffusion denoising loop (it only runs once at the end)

- Using pixel-space diffusion without realizing the VAE compression is what made high-resolution diffusion practical

Follow-up Questions to Expect

Key Concepts Tested

- Encoder-decoder architecture with information bottleneck

- Reconstruction loss (MSE) as training objective for autoencoders

- Latent space as a compressed, structured representation of input data

- Vanilla autoencoder limitations: deterministic latents, poor generative sampling

- VAE: modeling latent variables as Gaussian distributions (mu, logvar)

- KL divergence as regularizer that pushes latent posterior toward standard normal prior

- Reparameterization trick: z = mu + sigma * epsilon (epsilon ~ N(0,1)) for differentiable sampling

- Evidence lower bound (ELBO) as VAE training objective

- Beta-VAE for controlling disentanglement vs reconstruction trade-off

- Simple NumPy implementation of encoder, sampler, decoder on small data

- Connection from VAE latent compression to latent diffusion pipelines

- Why Stable Diffusion and modern image generators rely on a pretrained VAE

Next Step

Next: Continue to The Transformer Architecture End-to-End

You now understand how encoders compress inputs into useful representations, whether those inputs are images, patches, or abstract feature vectors. The next chapter applies the same representation mindset to token sequences and shows how attention lets every token build context from every other token.

References