
Gradients and Backprop

Master the calculus behind every training step: partial derivatives, the chain rule with real numbers, gradient vectors, and why Adam and learning-rate schedules exist, using a concrete two-feature delivery-time predictor.


Calculus is the hidden engine under every training run. When you call loss.backward() in PyTorch or watch an Adam optimizer update millions of weights, you are watching the chain rule and partial derivatives in action.[1] This chapter teaches you the exact arithmetic so the later backpropagation lessons stop feeling like magic. We use one running e-commerce example the whole way: predicting how many hours it will take for a package to reach a customer from warehouse distance and package weight.

You only need basic algebra and the ability to read a small table of numbers. We will do every derivative by hand with real digits before we write a single line of NumPy. By the end you will be able to compute a gradient vector for a two-weight model, run gradient descent on paper, spot why a training curve is misbehaving, and understand exactly why Adam exists.

The derivative tells you which way to push a weight

Suppose your model is dead simple. You only have one knob: a weight w that multiplies distance.

For one package:

- distance x = 2 (in hundreds of km, i.e. 200 km)
- real delivery time y = 5.0 hours
- current guess w = 3.0
- prediction ŷ = w · x = 6.0
- squared error loss L = (6.0 - 5.0)² = 1.0

You want to know: if I change w a tiny amount, does loss go up or down, and by how much?

The derivative dL/dw answers exactly that question. It is the slope of the loss curve at the current w.

We can compute it two ways. First, the long way, which shows what a "tiny change" really means.

| Quantity | Value |
|---|---|
| w_new | 3.0 + 0.001 = 3.001 |
| ŷ_new | 3.001 × 2 = 6.002 |
| L_new | (6.002 - 5.0)² = 1.004004 |
| change in loss | about 0.004 |
| slope estimate | 0.004 / 0.001 = 4 |

That is the definition of the derivative: how much loss changes per unit change in the weight.

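Now the fast algebraic way. Write the loss as a function of w:

$$L(w) = (w \cdot x - y)^2$$

The power rule and chain rule give:

$$\frac{dL}{dw} = 2\,(w \cdot x - y) \cdot x$$

Plug in the current values (w = 3.0, x = 2, y = 5.0):

$$\frac{dL}{dw} = 2\,(3.0 \cdot 2 - 5.0) \cdot 2 = 2 \cdot 1.0 \cdot 2 = 4$$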

The number matches. Positive 4 tells us: increasing w increases loss. To reduce loss we must decrease w.

This single number is the entire reason gradient descent works.
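The nudge table and the algebra can be checked side by side in a few lines of plain Python. This is a minimal sketch; `loss` and `analytic_grad` are names we made up for illustration. The central difference (nudging w both up and down) is a standard refinement added here, not something from the text above; for a quadratic loss it matches the analytic slope up to floating-point rounding.

```python
# One-weight model from the text: x = 2 (hundreds of km), y = 5.0 hours, current w = 3.0
x, y, w = 2.0, 5.0, 3.0


def loss(w):
    """Squared-error loss for the one-weight model y_hat = w * x."""
    return (w * x - y) ** 2


def analytic_grad(w):
    """Power rule + chain rule: dL/dw = 2 * (w*x - y) * x."""
    return 2 * (w * x - y) * x


h = 0.001
forward_diff = (loss(w + h) - loss(w)) / h            # the "tiny nudge" estimate from the table
central_diff = (loss(w + h) - loss(w - h)) / (2 * h)  # nudge both ways: more accurate

print(f"forward difference: {forward_diff:.4f}")
print(f"central difference: {central_diff:.4f}")
print(f"analytic gradient:  {analytic_grad(w):.4f}")
```

The forward difference comes out at about 4.004 rather than exactly 4 because the nudge itself is not infinitely small; shrinking h moves it closer to the analytic value.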

Partial derivatives when you have several weights

Real models have many weights. Let's give our delivery predictor two knobs:

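- w1 for distance (hundreds of km)
- w2 for weight (kg)

Current values: w1 = 1.0, w2 = 2.0

One training example:

x1 = 2, x2 = 3, y = 5.0

Forward pass:

$$\hat{y} = w_1 x_1 + w_2 x_2 = 1.0 \cdot 2 + 2.0 \cdot 3 = 8.0$$

Loss:

$$L = (\hat{y} - y)^2 = (8.0 - 5.0)^2 = 9.0$$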

A partial derivative asks: "If I change only this one weight while freezing the other, how does loss change?"

We compute two of them.

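First hold w2 fixed and treat everything as a function of w1 only. It is exactly the scalar case we just did, but the "x" that multiplies w1 is now x1 = 2.

$$\frac{\partial L}{\partial w_1} = 2\,(\hat{y} - y)\, x_1 = 2 \cdot 3.0 \cdot 2 = 12$$

Similarly for w2:

$$\frac{\partial L}{\partial w_2} = 2\,(\hat{y} - y)\, x_2 = 2 \cdot 3.0 \cdot 3 = 18$$

Both partials are positive, so both weights are currently too high for this example. The gradient vector is therefore:

$$\nabla L = \left[\frac{\partial L}{\partial w_1},\ \frac{\partial L}{\partial w_2}\right] = [12,\ 18]$$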

This vector points uphill on the loss surface in the (w1, w2) plane. Gradient descent will move in the opposite direction.
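In NumPy the whole gradient vector comes out of one expression, because every partial has the same form 2(ŷ - y)·xᵢ. The sketch below (variable names are ours) also checks each partial numerically by nudging one weight at a time while freezing the other, which is the definition of a partial derivative given above.

```python
import numpy as np

# Two-feature example from the text
x = np.array([2.0, 3.0])   # [distance in hundreds of km, weight in kg]
y = 5.0                    # true delivery time in hours
w = np.array([1.0, 2.0])   # current [w1, w2]


def loss(w):
    return (x @ w - y) ** 2


# Analytic gradient: all partials at once, 2 * (y_hat - y) * x
y_hat = x @ w
grad = 2 * (y_hat - y) * x

# Numerical partials: change ONE weight while the other stays frozen
h = 1e-5
numeric = np.zeros_like(w)
for i in range(len(w)):
    nudge = np.zeros_like(w)
    nudge[i] = h
    numeric[i] = (loss(w + nudge) - loss(w - nudge)) / (2 * h)

print("y_hat:", y_hat, " loss:", loss(w))
print("analytic gradient:", grad)
print("numeric partials: ", numeric)
```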

The chain rule: the real engine of backpropagation

In the example above the prediction was a direct linear combination. In a real neural net the path from a weight to the loss goes through many layers: linear transform, activation, another layer, softmax, cross-entropy. The chain rule is what lets us push the error signal all the way back through every step.

Let's keep the same delivery numbers but make the path explicit so the multiplications become visible.

We have:

- Compute prediction: ŷ = w1·x1 + w2·x2
- Compute error: e = ŷ - y
- Compute loss: L = e²

At our point: ŷ = 8, e = 3, L = 9

The local derivatives are easy:

- dL/de = 2e = 6
- de/dŷ = 1
- dŷ/dw1 = x1 = 2
- dŷ/dw2 = x2 = 3

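The chain rule says the total derivative for w1 is the product of every local piece along the path:

$$\frac{\partial L}{\partial w_1} = \frac{dL}{de} \cdot \frac{de}{d\hat{y}} \cdot \frac{\partial \hat{y}}{\partial w_1} = 6 \cdot 1 \cdot 2 = 12$$

Exactly what we got earlier. The same multiplication happens for w2: 6 · 1 · 3 = 18.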

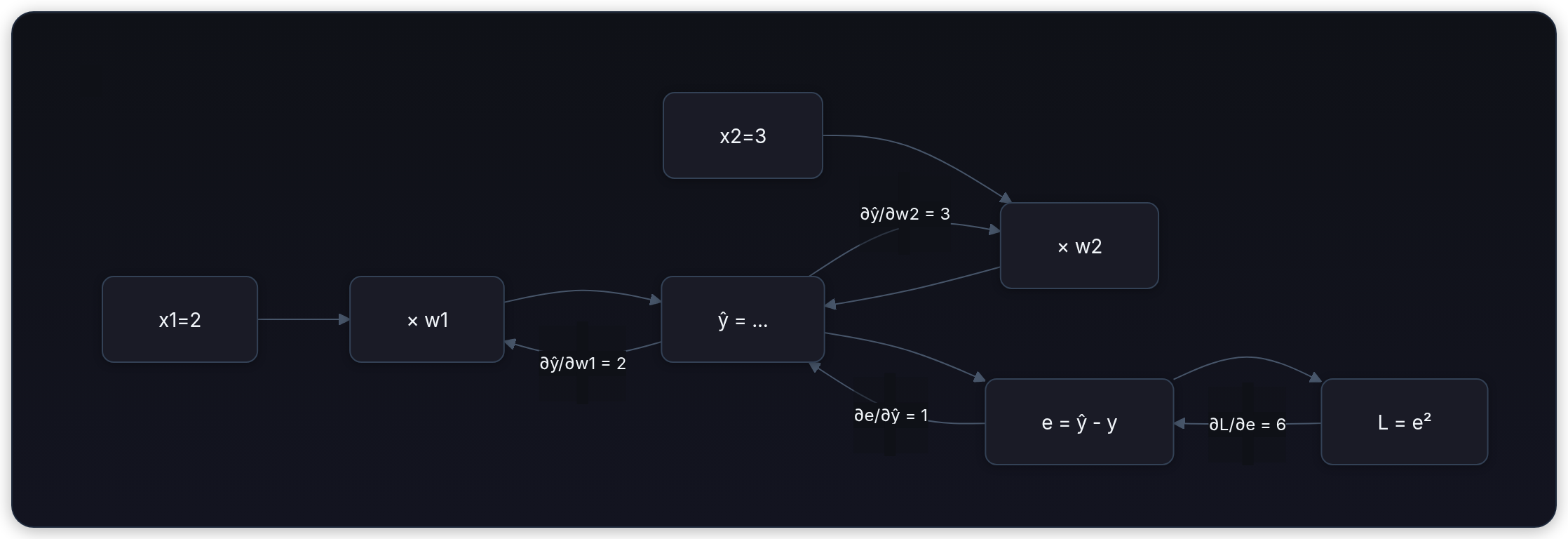

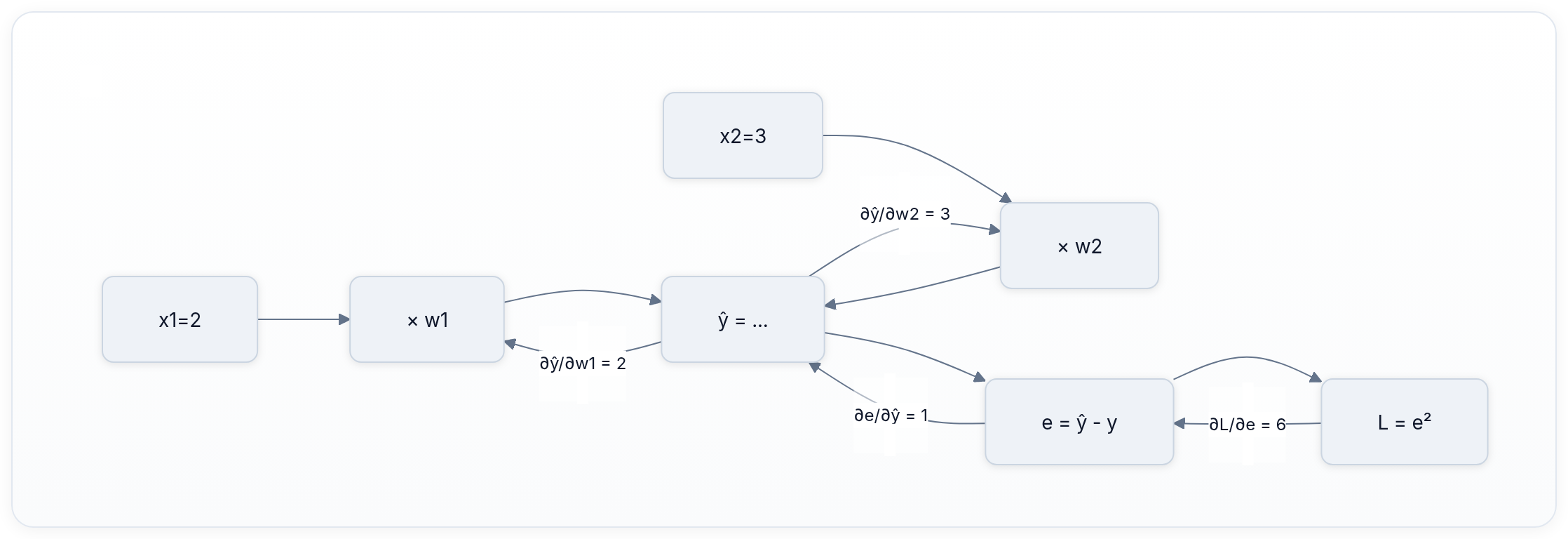

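Here is the same story as a small computation graph (forward left to right, gradients flow right to left):

Forward: w1, w2 (with inputs x1 = 2, x2 = 3) → ŷ = 8 → e = ŷ - y = 3 → L = e² = 9

Backward: ∂L/∂L = 1 → ∂L/∂e = 6 → ∂L/∂ŷ = 6 × 1 = 6 → ∂L/∂w1 = 6 × 2 = 12, ∂L/∂w2 = 6 × 3 = 18

Every arrow on the backward pass carries a number. Multiply the numbers along the path from the loss back to a weight and you have its gradient. That is literally all backpropagation does, just on a graph with thousands of nodes instead of five.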
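The backward pass can be written out in plain Python, one line per arrow, so the order of the multiplications is visible. This is a hand-unrolled sketch of what an autodiff engine does automatically; the variable names are illustrative.

```python
# The graph from the text: y_hat = w1*x1 + w2*x2 -> e = y_hat - y -> L = e**2
x1, x2, y = 2.0, 3.0, 5.0
w1, w2 = 1.0, 2.0

# Forward pass (left to right), keeping every intermediate value
y_hat = w1 * x1 + w2 * x2
e = y_hat - y
L = e ** 2

# Backward pass (right to left): each line multiplies the incoming signal by one local derivative
dL_dL = 1.0                  # seed: the loss with respect to itself
dL_de = dL_dL * (2 * e)      # local derivative of e**2 is 2e
dL_dyhat = dL_de * 1.0       # local derivative of (y_hat - y) with respect to y_hat is 1
dL_dw1 = dL_dyhat * x1       # local derivative of w1*x1 with respect to w1 is x1
dL_dw2 = dL_dyhat * x2       # local derivative of w2*x2 with respect to w2 is x2

print(f"forward:  y_hat={y_hat}, e={e}, L={L}")
print(f"backward: dL/de={dL_de}, dL/dy_hat={dL_dyhat}, dL/dw1={dL_dw1}, dL/dw2={dL_dw2}")
```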

Gradient descent turns the vector into an update

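The update rule is brutally simple: subtract a small multiple of the gradient from the weights.

$$\mathbf{w} \leftarrow \mathbf{w} - \mathrm{lr} \cdot \nabla L$$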

Pick a learning rate lr = 0.05 (a typical starting value for small problems).

- Current weights [1.0, 2.0], gradient [12, 18]
- New w1 = 1.0 - 0.05 × 12 = 1.0 - 0.6 = 0.4
- New w2 = 2.0 - 0.05 × 18 = 2.0 - 0.9 = 1.1

Now run the forward pass again with the new weights on the same example:

- ŷ = 0.4×2 + 1.1×3 = 0.8 + 3.3 = 4.1
- L = (4.1 - 5.0)² = 0.81
- Loss dropped from 9.0 to 0.81 in one step.

Do it again (second step):

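- New gradient at the new point: ∂L/∂w1 = 2×(4.1-5)×2 = -3.6 and ∂L/∂w2 = 2×(4.1-5)×3 = -5.4. Both are now negative because the first step overshot: ŷ went from 8.0 past the target 5.0 down to 4.1.
- w1 = 0.4 - 0.05×(-3.6) = 0.58
- w2 = 1.1 - 0.05×(-5.4) = 1.37

Now ŷ = 0.58×2 + 1.37×3 = 1.16 + 4.11 = 5.27, so the loss is (5.27 - 5.0)² ≈ 0.073. You can keep going: one more step brings it to about 0.0066, already under 0.01.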
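The hand-computed steps translate directly into a loop. A minimal sketch on the same single example, in plain NumPy with no optimizer library:

```python
import numpy as np

x = np.array([2.0, 3.0])   # [distance in hundreds of km, weight in kg]
y = 5.0                    # true delivery time in hours
w = np.array([1.0, 2.0])   # starting [w1, w2]
lr = 0.05

losses = []
for step in range(4):
    error = x @ w - y
    grad = 2 * error * x                   # [dL/dw1, dL/dw2]
    losses.append(error ** 2)
    print(f"step {step}: w={np.round(w, 4)}  loss={error ** 2:.6f}  grad={np.round(grad, 4)}")
    w = w - lr * grad                      # move against the gradient
```

The loss falls 9.0 → 0.81 → 0.0729 → 0.006561. For a single example this pattern is predictable: each step multiplies the error by 1 - 2·lr·(x1² + x2²) = 1 - 2 × 0.05 × 13 = -0.3, so the loss shrinks by a factor of 0.09 per step and the prediction alternates between overshooting and undershooting the target.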

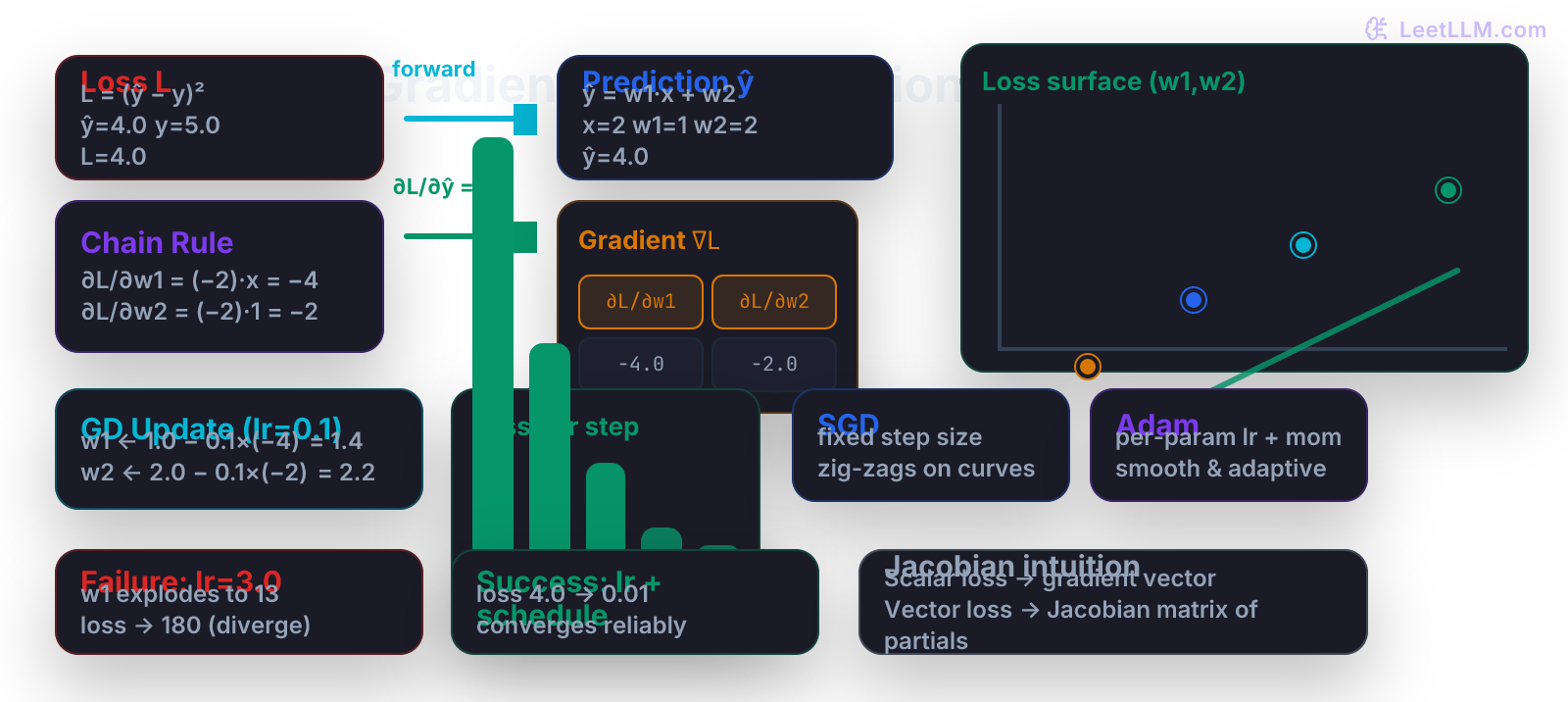

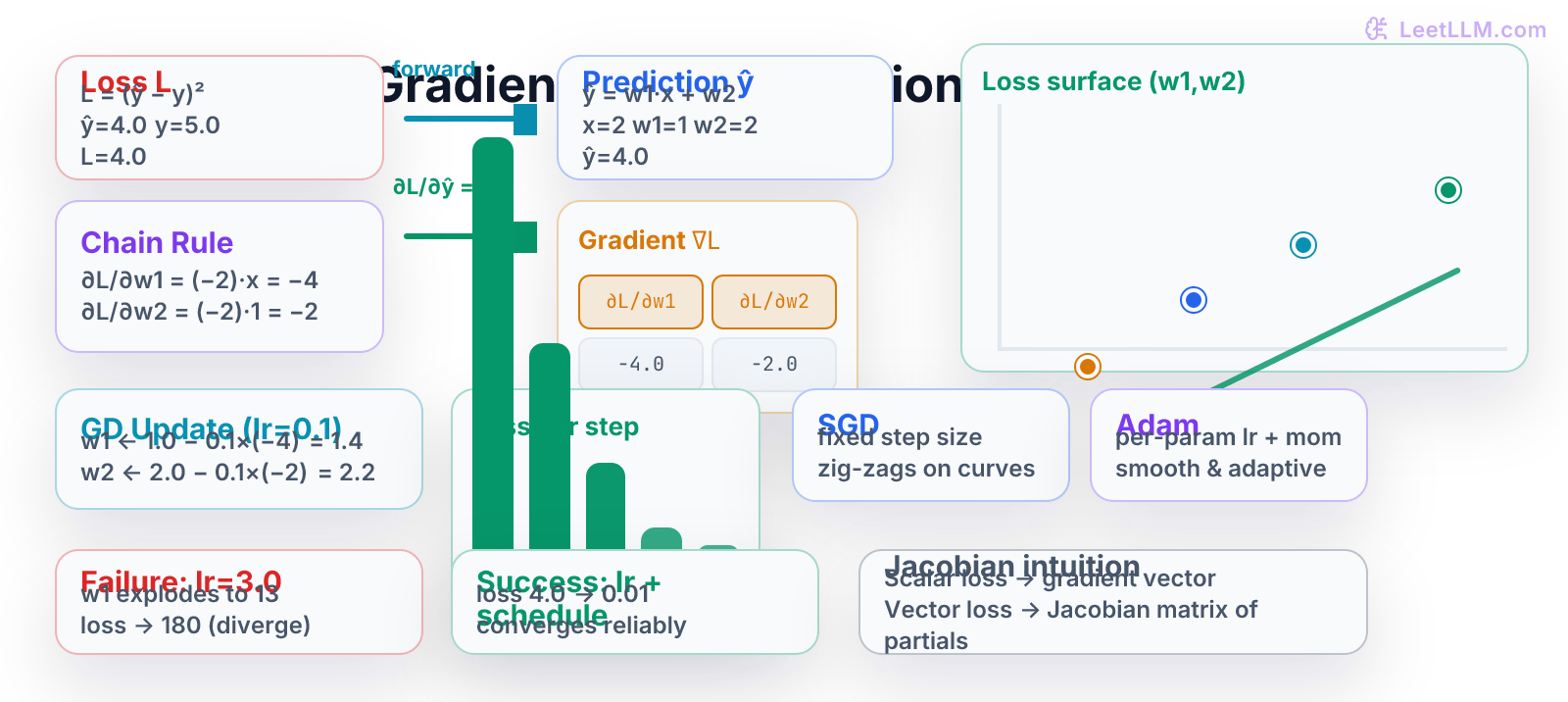

The illustration above tells the same story: four descent steps on the loss surface, with the loss bars dropping from 4.0 to 0.08.

Why plain gradient descent is rarely enough

If every feature had the same scale and every direction on the loss surface were equally curved, vanilla gradient descent would be perfect. In real LLM training neither is true.

- Embedding weights see gradients only on the tokens that actually appear in a batch (sparse). A weight that is rarely updated needs a larger effective step when it does get a signal.

- Some directions in parameter space are steep ravines, others are flat plateaus. A single learning rate either crawls on the plateau or bounces back and forth across the ravine.

- Early in training you want big moves. Late in training you want tiny precise moves so you don't overshoot the minimum.

The first two problems are what the Adam optimizer (and its cousins) were designed to address; the third is the job of a learning-rate schedule.
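The ravine problem can be reproduced on a made-up two-parameter quadratic. The curvatures 100 and 1 are arbitrary illustrative values, not taken from the delivery example. For a quadratic, plain gradient descent is stable only when lr < 2 / (largest curvature), so the steep direction caps the learning rate that the flat direction has to live with.

```python
import numpy as np

# Hypothetical ravine-shaped loss: L(a, b) = 0.5 * (100 * a**2 + 1 * b**2), minimum at (0, 0).
# Curvature is 100 along a (steep walls) and 1 along b (flat floor).
curvature = np.array([100.0, 1.0])


def run_gd(lr, steps=50):
    w = np.array([1.0, 1.0])
    for _ in range(steps):
        grad = curvature * w   # gradient of the quadratic above
        w = w - lr * grad      # each step multiplies a by (1 - 100*lr) and b by (1 - lr)
    return w


for lr in (0.001, 0.019, 0.021):
    a, b = run_gd(lr)
    print(f"lr={lr}: a={a:.3g}  b={b:.3g}")
```

With lr = 0.001 the flat coordinate b barely moves (still about 0.95 after 50 steps). At lr = 0.019, just under the stability limit of 2/100 = 0.02, a converges while flipping sign every step, yet b has only shrunk to about 0.38. At lr = 0.021 the steep coordinate a blows up past 100.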

Adam keeps two running averages for each weight:

- The first moment (like momentum): a smoothed version of the gradient itself.

- The second moment: a smoothed version of the squared gradient. This acts like an adaptive per-weight learning rate.

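Each weight's step is its smoothed gradient divided by the square root of its smoothed squared gradient. A weight whose gradients have been consistently large therefore takes smaller steps, while a weight with small or rarely nonzero gradients takes relatively larger ones. That single trick, plus a pair of bias-correction terms that compensate for both averages starting at zero, is why most production training scripts today use AdamW, the variant of Adam with decoupled weight decay.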
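Written out, the standard Adam update for a gradient g_t at step t, with learning rate η, decay rates β₁ and β₂, and a small ε for numerical safety, is:

$$
\begin{aligned}
m_t &= \beta_1 m_{t-1} + (1-\beta_1)\, g_t \\
v_t &= \beta_2 v_{t-1} + (1-\beta_2)\, g_t^2 \\
\hat{m}_t &= \frac{m_t}{1-\beta_1^{\,t}}, \qquad \hat{v}_t = \frac{v_t}{1-\beta_2^{\,t}} \\
w_t &= w_{t-1} - \eta\,\frac{\hat{m}_t}{\sqrt{\hat{v}_t} + \epsilon}
\end{aligned}
$$

AdamW changes only the last line, adding weight decay with coefficient λ directly to the update instead of folding it into the gradient:

$$
w_t = w_{t-1} - \eta\left(\frac{\hat{m}_t}{\sqrt{\hat{v}_t} + \epsilon} + \lambda\, w_{t-1}\right)
$$

The common defaults β₁ = 0.9, β₂ = 0.999, ε = 10⁻⁸ are also the values used in the code lab below.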

Learning rate schedules finish the job

Even with Adam you usually still decay the learning rate.

A common simple schedule: start at lr = 3e-4, then multiply by 0.1 every time the validation loss stops improving for a while (or use cosine decay that smoothly goes to a tiny final value).

Why it matters:

- High lr early lets you escape bad regions fast.

- Low lr late lets the optimizer settle into a narrow good valley without oscillating or diverging.
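Both schedules fit in a few lines. This is a minimal sketch: `cosine_lr` and `ReduceOnPlateau` are our own hypothetical helpers, and the final lr of 3e-6 and the patience of three evaluations are illustrative choices, not values from the text. PyTorch ships ready-made versions as `torch.optim.lr_scheduler.CosineAnnealingLR` and `torch.optim.lr_scheduler.ReduceLROnPlateau`.

```python
import math


def cosine_lr(step, total_steps, base_lr=3e-4, final_lr=3e-6):
    """Cosine decay: base_lr at step 0, smoothly down to final_lr at total_steps."""
    progress = min(step, total_steps) / total_steps
    return final_lr + 0.5 * (base_lr - final_lr) * (1 + math.cos(math.pi * progress))


class ReduceOnPlateau:
    """Multiply lr by `factor` after `patience` evaluations with no new best validation loss."""

    def __init__(self, lr=3e-4, factor=0.1, patience=3):
        self.lr, self.factor, self.patience = lr, factor, patience
        self.best = float("inf")
        self.bad_evals = 0

    def step(self, val_loss):
        if val_loss < self.best:
            self.best, self.bad_evals = val_loss, 0
        else:
            self.bad_evals += 1
            if self.bad_evals >= self.patience:
                self.lr *= self.factor
                self.bad_evals = 0
        return self.lr


for step in (0, 250, 500, 750, 1000):
    print(f"step {step:4d}: cosine lr = {cosine_lr(step, 1000):.2e}")

sched = ReduceOnPlateau()
for val_loss in (1.0, 0.9, 0.95, 0.93, 0.91):   # improvement stalls after the second evaluation
    lr = sched.step(val_loss)
print(f"lr after a three-evaluation plateau: {lr:.1e}")
```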

In the illustration you can see the failure box: lr=3.0 on the same surface sends the weights flying to huge values and loss explodes to 180. The success box uses a modest lr plus a schedule and reaches 0.01 cleanly.
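The illustration's failure and success boxes use their own numbers, but the same effect shows up on this chapter's two-weight example. A minimal sketch (the helper `final_loss` is hypothetical): for a single example, each step multiplies the error by 1 - 2·lr·(x1² + x2²) = 1 - 26·lr, so plain gradient descent converges only while that factor stays between -1 and 1, that is for 0 < lr < 2/26 ≈ 0.077.

```python
import numpy as np

x = np.array([2.0, 3.0])
y = 5.0


def final_loss(lr, steps=10):
    """Loss after `steps` plain gradient-descent updates from w = [1.0, 2.0]."""
    w = np.array([1.0, 2.0])
    for _ in range(steps):
        error = x @ w - y
        w = w - lr * 2 * error * x
    return (x @ w - y) ** 2


for lr in (0.01, 0.05, 0.07, 0.1, 3.0):
    factor = 1 - 2 * lr * (x @ x)   # per-step multiplier on the error
    print(f"lr={lr:<5} error factor={factor:+.2f}  loss after 10 steps={final_loss(lr):.3g}")
```

lr = 0.05 (factor -0.3) converges fast, lr = 0.07 (factor -0.82) converges while oscillating, and lr = 0.1 (factor -1.6) already diverges, even though it is only twice the working value.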

Code lab: gradient descent from scratch in NumPy

Everything above was hand arithmetic. Now we turn it into code you can run and modify.

First, a minimal scalar example so you can see the loop.

```python
def scalar_gd():
    w = 3.0    # starting weight
    x = 2.0    # distance in hundreds of km
    y = 5.0    # true delivery time in hours
    lr = 0.1   # learning rate
    print("step | w | pred | loss | grad")
    for step in range(6):
        pred = w * x                  # forward pass
        loss = (pred - y) ** 2        # squared error
        grad = 2 * (pred - y) * x     # dL/dw via the chain rule
        print(f"{step:4d} | {w:6.3f} | {pred:6.3f} | {loss:6.3f} | {grad:6.3f}")
        w = w - lr * grad             # step against the gradient


scalar_gd()
```

Typical output:

```text
step | w | pred | loss | grad
   0 |  3.000 |  6.000 |  1.000 |  4.000
   1 |  2.600 |  5.200 |  0.040 |  0.800
   2 |  2.520 |  5.040 |  0.002 |  0.160
   3 |  2.504 |  5.008 |  0.000 |  0.032
...
```

Loss collapses toward zero exactly as the math predicted.

Now the full two-weight delivery predictor with a tiny synthetic dataset.

```python
import numpy as np

# 5 realistic delivery examples: [distance_hundreds_km, weight_kg] -> hours
X = np.array([
    [2.0, 3.0],  # 200km, 3kg
    [1.5, 1.0],
    [4.0, 5.0],
    [0.8, 0.5],
    [3.2, 2.5],
], dtype=np.float32)
y = np.array([5.0, 3.2, 9.5, 2.1, 6.8], dtype=np.float32)

w = np.array([0.8, 1.5], dtype=np.float32)  # starting point
lr = 0.05
print("step | w1 | w2 | avg_loss")
for step in range(12):
    preds = X @ w  # vectorized forward
    errors = preds - y
    loss = np.mean(errors ** 2)
    # manual gradient: dL/dw = mean( 2*error * x for each example )
    grad = (2 * errors[:, None] * X).mean(axis=0)
    print(f"{step:4d} | {w[0]:6.3f} | {w[1]:6.3f} | {loss:8.4f}")
    w = w - lr * grad

print("Final weights:", w)
```

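You should see the average loss start near 0.73, drop quickly over the first couple of steps, then creep down to roughly 0.34 by step 11. The slow tail is expected: distance and weight are strongly correlated in this tiny dataset, so the loss surface is a long, shallow valley, and a learning rate small enough to stay stable across the valley moves slowly along it. Change lr to 1.5 and watch it explode or oscillate. Change it to 0.001 and watch it crawl.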
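To see where the slow tail comes from, a sketch (our own diagnostic, not part of the original lab) can solve for the best weights directly with least squares and measure the curvature of the loss. For average squared error the Hessian is H = (2/n)·XᵀX; gradient descent is stable only for lr < 2/λ_max, and progress along the flattest direction is governed by lr·λ_min.

```python
import numpy as np

X = np.array([[2.0, 3.0], [1.5, 1.0], [4.0, 5.0], [0.8, 0.5], [3.2, 2.5]])
y = np.array([5.0, 3.2, 9.5, 2.1, 6.8])

# Best possible weights for this linear model (no bias), solved directly by least squares
w_star, *_ = np.linalg.lstsq(X, y, rcond=None)
best_loss = np.mean((X @ w_star - y) ** 2)

# Curvature of the average-squared-error loss: Hessian H = (2/n) X^T X
H = 2 / len(y) * X.T @ X
eigvals = np.linalg.eigvalsh(H)   # symmetric matrix, eigenvalues in ascending order

print("w* =", np.round(w_star, 3), " best loss =", round(float(best_loss), 4))
print("Hessian eigenvalues:", np.round(eigvals, 3))
print("largest stable lr ≈", round(float(2 / eigvals[-1]), 4))
print("condition number ≈", round(float(eigvals[-1] / eigvals[0]), 1))
```

On this data it should report w* ≈ [1.83, 0.44], a best achievable loss of about 0.04, and Hessian eigenvalues of roughly 0.47 and 29.4. The largest stable lr is therefore about 0.068, and at lr = 0.05 the error along the flat direction shrinks by only about 2% per step.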

A minimal Adam implementation (so you see why it helps)

```python
import numpy as np

# Same X and y as above; restart from the same initial weights so the comparison with plain GD is fair
w = np.array([0.8, 1.5], dtype=np.float32)
m = np.zeros_like(w)  # first moment: running average of gradients
v = np.zeros_like(w)  # second moment: running average of squared gradients
beta1, beta2 = 0.9, 0.999
eps = 1e-8
lr = 0.05
t = 0

for step in range(20):
    t += 1
    preds = X @ w
    errors = preds - y
    loss = np.mean(errors ** 2)
    grad = (2 * errors[:, None] * X).mean(axis=0)

    m = beta1 * m + (1 - beta1) * grad
    v = beta2 * v + (1 - beta2) * (grad ** 2)

    m_hat = m / (1 - beta1 ** t)   # bias correction: both averages start at zero
    v_hat = v / (1 - beta2 ** t)

    if step % 5 == 0:
        print(f"step {step:2d} loss {loss:8.4f} w {w}")  # w before this step's update, matching loss
    w = w - lr * m_hat / (np.sqrt(v_hat) + eps)
```

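Run both loops and compare the printed losses. On a two-weight toy problem, which optimizer looks better depends on the learning rate and the number of steps, so treat this as a place to experiment rather than a benchmark. Adam's per-weight scaling pays off most when gradient magnitudes differ wildly across parameters, as they do for embedding tables with sparse updates, where the difference can be dramatic.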
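The per-weight scaling is easiest to see on Adam's very first step. A minimal sketch with made-up gradient values: at t = 1 the bias-corrected moments are exactly g and g², so each weight moves by about lr, in the direction opposite its gradient, whatever the gradient's magnitude. Plain gradient descent would instead move the three weights by amounts spanning six orders of magnitude.

```python
import numpy as np


def adam_first_step(grad, lr=0.05, beta1=0.9, beta2=0.999, eps=1e-8):
    """Size of Adam's very first update (t = 1, moments initialized to zero)."""
    m = (1 - beta1) * grad
    v = (1 - beta2) * grad ** 2
    m_hat = m / (1 - beta1)   # bias correction at t = 1 recovers grad exactly
    v_hat = v / (1 - beta2)   # ... and grad**2
    return lr * m_hat / (np.sqrt(v_hat) + eps)


grad = np.array([1000.0, 1.0, 0.001])   # hypothetical gradients of very different sizes
print("plain GD step:", 0.05 * grad)
print("Adam step:    ", adam_first_step(grad))
```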

How all of this becomes backpropagation in a neural net

A neural network is just a long chain (actually a DAG) of the same operations: matrix multiply, add bias, activation, etc. The loss is still a scalar at the end.

Backpropagation walks the graph backward, multiplying the incoming error signal by the local derivative of each operation (exactly the chain-rule arrows in the computation graph above). The main engineering trick is that we never materialize a full Jacobian matrix: each operation only multiplies the incoming gradient by its local derivative (a vector-Jacobian product), and we keep the results only for the parameters we actually store.

Once you can do the two-weight example by hand, reading the backward pass of a transformer block is just "the same idea, 50,000 times, with careful shape bookkeeping."
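The same recipe scales to a real, if tiny, network. The sketch below is a hypothetical 2-input, 3-hidden-unit ReLU network fitted to the first three rows of the delivery data from the code lab; the layer sizes, random seed, and helper names are illustrative assumptions. `backward` applies the chain rule one operation at a time without ever forming a full Jacobian, and a finite-difference check confirms the result, the same kind of check you would use to validate a manual gradient against `.backward()`.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical tiny network: 2 inputs -> 3 ReLU hidden units -> 1 output, mean squared error
X = np.array([[2.0, 3.0], [1.5, 1.0], [4.0, 5.0]])   # first three delivery rows
y = np.array([5.0, 3.2, 9.5])
params = {
    "W1": rng.normal(size=(2, 3)), "b1": np.zeros(3),
    "W2": rng.normal(size=3), "b2": 0.0,
}


def forward(p):
    z = X @ p["W1"] + p["b1"]       # linear layer: (3 examples, 3 hidden units)
    h = np.maximum(z, 0.0)          # ReLU activation
    y_hat = h @ p["W2"] + p["b2"]   # output layer: (3,)
    loss = np.mean((y_hat - y) ** 2)
    return loss, (z, h, y_hat)


def backward(p, cache):
    z, h, y_hat = cache
    d_yhat = 2 * (y_hat - y) / len(y)   # dL/dy_hat for the mean squared error
    grads = {"W2": h.T @ d_yhat, "b2": d_yhat.sum()}
    d_h = np.outer(d_yhat, p["W2"])     # chain rule back through y_hat = h @ W2 + b2
    d_z = d_h * (z > 0)                 # ReLU's local derivative: 1 where z > 0, else 0
    grads["W1"] = X.T @ d_z             # chain rule back through z = X @ W1 + b1
    grads["b1"] = d_z.sum(axis=0)
    return grads


loss, cache = forward(params)
grads = backward(params, cache)

# Finite-difference check: nudge each entry of W1 up and down, holding everything else fixed
eps = 1e-6
numeric = np.zeros_like(params["W1"])
for idx in np.ndindex(*params["W1"].shape):
    original = params["W1"][idx]
    params["W1"][idx] = original + eps
    loss_up, _ = forward(params)
    params["W1"][idx] = original - eps
    loss_down, _ = forward(params)
    params["W1"][idx] = original    # restore the weight
    numeric[idx] = (loss_up - loss_down) / (2 * eps)

print("loss:", round(float(loss), 4))
print("max |analytic - numeric| for W1:", np.abs(grads["W1"] - numeric).max())
```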

Common pitfalls and how to debug them

- Sign error. You added the gradient instead of subtracting it. Loss goes up. Fix: always subtract lr * grad.

- Forgetting the chain multiplier. You only took dL/dŷ and applied it directly to w. Your gradient will be off by a factor of x (or of the activation derivative). Always trace the full path.

- Feature scale mismatch. One column of your design matrix is in the thousands, another around 0.001. The gradient for the large feature dominates. Fix: standardize features, or lean on Adam's per-weight scaling to compensate.

- A learning rate that was good at step 100 can be too large at step 5000: near a minimum, the same step size keeps overshooting and bouncing around the bottom instead of settling in. Use a schedule.

- NaN or Inf in loss. This usually means you took a step that was too large somewhere. Print the max absolute value of the gradient before the update. If it is suddenly huge (say, > 100), your lr is probably too high for that batch.

Print the gradient vector itself during early debugging. If any entry is 0 while the loss is still high, that weight is not receiving any signal (dead ReLU, masked attention, or an input feature that is always zero).
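A minimal NumPy sketch of two of these checks: compute the global gradient norm, stop loudly if it is NaN or Inf, and rescale the gradients when the norm exceeds a threshold. `clip_by_global_norm` is our own hypothetical helper and the gradient values are made up; in PyTorch the equivalent built-in is `torch.nn.utils.clip_grad_norm_`.

```python
import numpy as np


def clip_by_global_norm(grads, max_norm=1.0):
    """Rescale a list of gradient arrays so their combined L2 norm is at most max_norm."""
    total_norm = np.sqrt(sum(float(np.sum(g ** 2)) for g in grads))
    if not np.isfinite(total_norm):
        # NaN or Inf: clipping cannot repair this, so stop and inspect the last step and inputs
        raise FloatingPointError(f"non-finite gradient norm: {total_norm}")
    scale = min(1.0, max_norm / (total_norm + 1e-12))
    return [g * scale for g in grads], total_norm


# Made-up gradients for two parameter tensors
grads = [np.array([12.0, 18.0]), np.array([-3.0])]
clipped, norm = clip_by_global_norm(grads, max_norm=1.0)
print(f"global norm before clipping: {norm:.3f}")
print(f"max |grad| before clipping: {max(np.abs(g).max() for g in grads):.1f}")
print("clipped gradients:", [np.round(g, 4) for g in clipped])
```

Clipping by the global norm keeps the direction of the full gradient vector and only shrinks its length, which is why it is usually preferred over clipping each entry separately.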

Practice: do these with pencil and paper first

- Start with w1=0.5, w2=1.0, x1=3, x2=4, y=6. Compute ŷ, L, both partials, and the gradient vector.

- Take one gradient step with lr=0.1. What are the new weights? What is the new loss on the same example?

- Change only the learning rate to 2.0 and repeat the previous problem. Does loss go up or down? Why?

- Add a third weight w3 that multiplies a bias term (always 1). How does the chain rule change for w3?

Solution sketches appear at the end of the article. The important part is that you can do the arithmetic without running code.

What you can now carry forward

You own the core calculus that every training loop relies on.

When you later read "the gradient of the loss with respect to the embedding table" or "we clipped the gradient norm at 1.0", you will know exactly which numbers are being computed and why the choice of optimizer and schedule changes the shape of the loss curve you see in Weights & Biases.

The same chain-rule arithmetic that let you move two weights on a delivery predictor is what lets a 70-billion-parameter model improve next-token prediction after seeing one more batch.

Solution sketches for the practice problems

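- ŷ = 0.5×3 + 1.0×4 = 5.5, so L = (5.5 - 6)² = 0.25. The partials are ∂L/∂w1 = 2×(-0.5)×3 = -3 and ∂L/∂w2 = 2×(-0.5)×4 = -4, so ∇L = [-3, -4].

- New w1 = 0.5 - 0.1×(-3) = 0.8 and w2 = 1.0 - 0.1×(-4) = 1.4. New ŷ = 0.8×3 + 1.4×4 = 8.0, so the new L = (8.0 - 6)² = 4.0. The loss went up even though the gradient did point downhill: lr = 0.1 is too large for this example, so the step overshot the minimum. On a single example each step multiplies the error by 1 - 2·lr·(x1² + x2²) = 1 - 0.2×25 = -4, taking it from -0.5 to +2.0. Any lr above 0.04 makes this factor larger than 1 in magnitude, so the loss grows.

- With lr=2.0 the step becomes huge: w1 jumps by +6 and w2 by +8, giving w1 = 6.5, w2 = 9.0, ŷ = 55.5, and L = 49.5² ≈ 2450. Classic divergence.

- The bias term has x3 = 1 for every row, so ∂L/∂w3 = 2 × error × 1. The chain rule is identical; the "feature" is just constantly 1.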
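If you want to check these sketches after doing them by hand, the helper below (our own, hypothetically named `one_step`) repeats the arithmetic for a single example with squared-error loss. The starting value w3 = 0 for the bias problem is an assumption, since the exercise does not specify one.

```python
import numpy as np


def one_step(w, x, y, lr):
    """One gradient-descent step on a single example with squared-error loss."""
    error = x @ w - y
    grad = 2 * error * x
    w_new = w - lr * grad
    return grad, w_new, error ** 2, (x @ w_new - y) ** 2


x = np.array([3.0, 4.0])
y = 6.0
w = np.array([0.5, 1.0])

for lr in (0.1, 2.0):
    grad, w_new, loss_before, loss_after = one_step(w, x, y, lr)
    print(f"lr={lr}: grad={grad}, new w={w_new}, loss {loss_before:.2f} -> {loss_after:.2f}")

# Bias problem: w3 multiplies a constant feature of 1 (starting value w3 = 0 is our assumption)
x_bias = np.array([3.0, 4.0, 1.0])
w_bias = np.array([0.5, 1.0, 0.0])
grad_bias, *_ = one_step(w_bias, x_bias, y, lr=0.1)
print("gradient with bias:", grad_bias)   # last entry is 2 * error * 1
```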

Evaluation Rubric

1. Computes partial derivatives and applies the chain rule by hand on a two-weight linear predictor with concrete numbers

2. Implements a complete gradient descent loop in NumPy, tracks loss over steps, and explains the effect of learning rate on the path

3. Diagnoses why plain SGD fails on ill-conditioned loss surfaces and why Adam plus schedules succeed in deep network training

Common Pitfalls

- Treating the gradient as a direction you want to move toward (it is the direction of steepest ascent of loss; you subtract it).

- Using the same learning rate for every parameter when features have wildly different scales; some weights get huge updates while others barely move.

- Forgetting that backpropagation is just the chain rule applied efficiently in reverse on the computation graph. If your manual gradient does not match .backward(), you have a chain-rule error.

- Picking a learning rate that works for step 10 but explodes or stalls at step 1000. Always combine a reasonable base lr with a schedule.

- Ignoring that the loss surface for deep nets is not convex. Good initialization, normalization, and adaptive optimizers like Adam are what help you avoid getting stuck in bad regions.


Key Concepts Tested

- partial derivatives and the gradient vector
- chain rule on scalar computation graphs
- gradient descent update rule with concrete arithmetic
- Jacobian intuition for multi-output cases
- momentum, adaptive learning rates, and Adam
- learning rate schedules and convergence failure modes

Next Step

Next: Continue to Linear Algebra for Machine Learning - Deeper

You now understand exactly how a model measures error and nudges its parameters downhill. The next chapter takes the matrix and vector language you have been using implicitly and makes it precise: you will compute SVD by hand, see why principal components are the natural axes of your data, and learn exactly how rank and condition numbers affect every embedding lookup and training step. Those factorizations are the hidden engine behind the optimizers and schedulers that follow.

References

[1] Goodfellow, I., Bengio, Y., Courville, A. (2016). Deep Learning.

[2] Bishop, C. M. (2006). Pattern Recognition and Machine Learning.

[3] Rumelhart, D. E., Hinton, G. E., Williams, R. J. (1986). Learning Representations by Back-Propagating Errors. Nature, 323.

[4] Murphy, K. P. (2012). Machine Learning: A Probabilistic Perspective.