Advanced MLOps & DevOps for AI

Master advanced MLOps and DevOps patterns for LLM systems: GitOps for prompts and models, feature stores for embedding features, automated rollback on eval regression, shadow traffic, and production-grade model registries.

Learning path

Step 127 of 138 in the full curriculum

Your OrderBuddy package tracker chatbot has been stable for weeks on prompt version [email protected] and LoRA adapter v1.9. A teammate adds a small improvement: the bot now pulls the customer's recent order count and average delivery time from a feature store to give more accurate estimates during peak season. The change passes the offline golden set eval, the safety scanner, and the latency budget. It is promoted through staging, a 2% canary, and finally the production alias.

Forty-eight hours later, customers in the western fulfillment region start receiving delivery promises that are off by a full day. The base model weights never changed. The system prompt text never changed. The LoRA weights are identical. Yet the answers degraded.

The root cause was silent training-serving skew in the embedding features. The pipeline behind "recent_order_count" and the related order-history embeddings used one chunking strategy and normalization in the batch job that built the golden set, but a slightly different preprocessing path in the online feature store that served live traffic. The model registry recorded the adapter and the prompt version, but it had no record of the exact feature computation graph or the timestamped feature values that were present at training time. The basic versioning strategy from the previous lesson was not enough once features and retrieval logic entered the picture.

In Model Versioning & Continuous Deployment, you mastered immutable artifacts, aliases, canary traffic, and automated evaluation gates[1][2]. That foundation is still essential. Advanced MLOps and DevOps for AI systems adds the layers that make those practices scale across teams, prevent skew, and close the feedback loop from production signals back to automatic rollback.

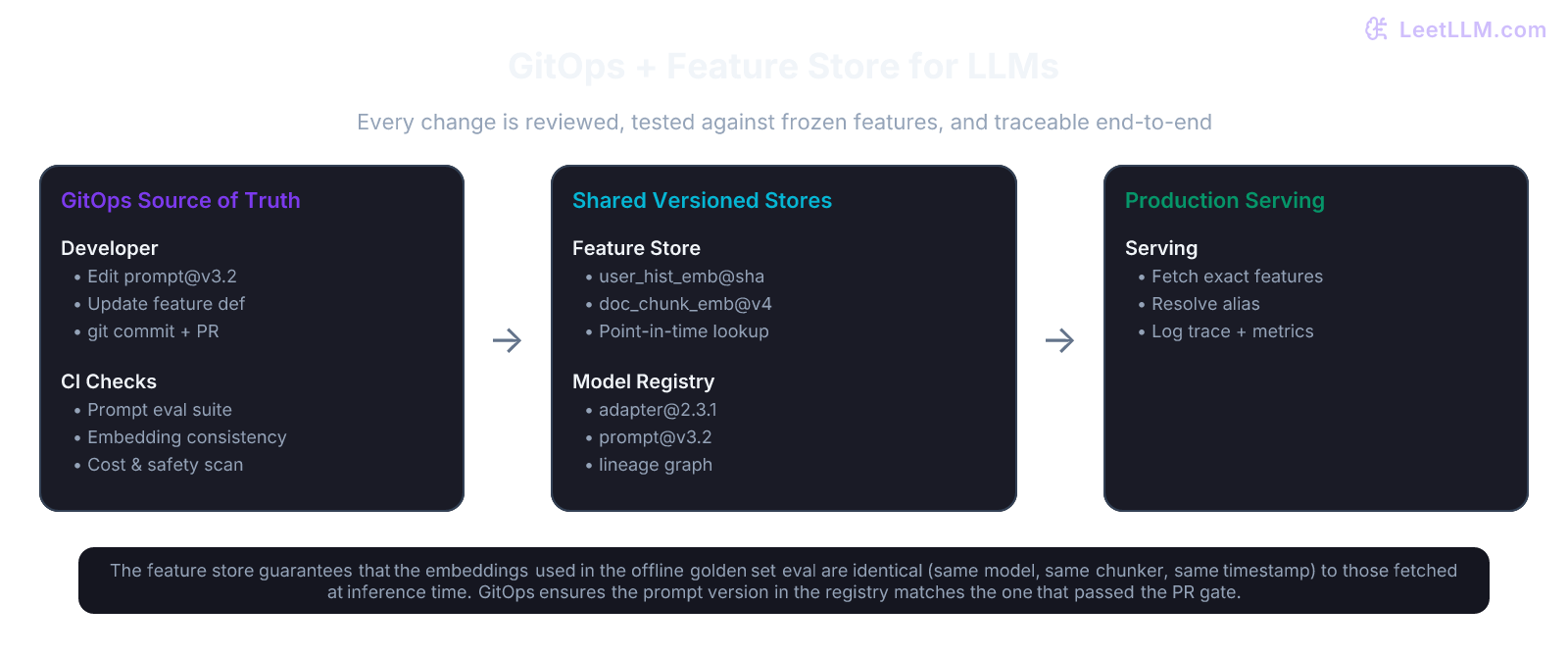

This lesson teaches you how mature LLM teams run GitOps-driven pipelines for prompts and models, use feature stores to keep embeddings consistent, run safe shadow and canary experiments on both prompts and models, and wire observability directly into automated rollback policies.

GitOps for LLM systems

GitOps treats your prompts, feature definitions, model aliases, and deployment configuration exactly like application code[3]. The single source of truth is a git repository. A merge to the main branch (after passing required checks) is the only way production state ever changes.

A typical LLM GitOps repository looks like this:

```text
llm-ops-repo/
├── prompts/
│   ├── support/
│   │   ├── [email protected]
│   │   ├── [email protected]
│   │   └── tests/
│   │       └── support_golden.jsonl
│   └── policy/
│       └── [email protected]
├── features/
│   └── order_history/
│       ├── feature_view.py        # Feast-style definition
│       └── entity_keys.yaml
├── models/
│   └── order-status-bot/
│       ├── [email protected]
│       ├── [email protected]       # points to registry URI + hash
│       └── promotion-policy.yaml
├── deployments/
│   └── production/
│       └── aliases.yaml           # production -> [email protected]
└── .github/workflows/
    └── llm-promote.yml
```

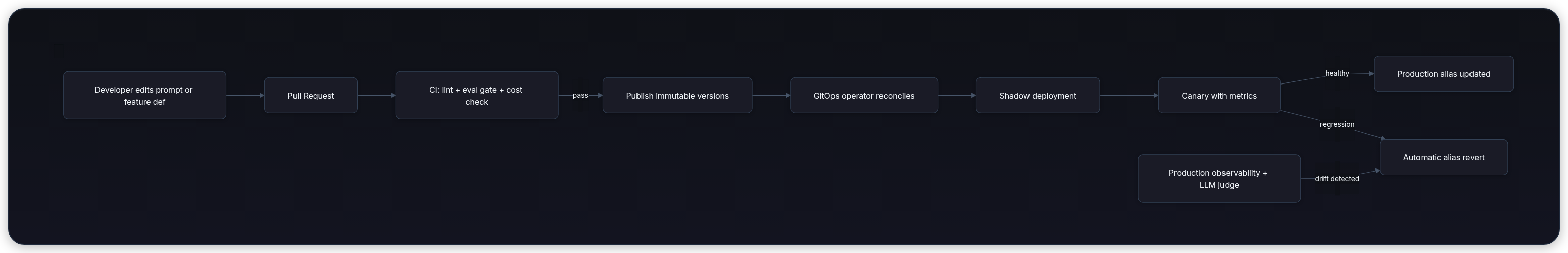

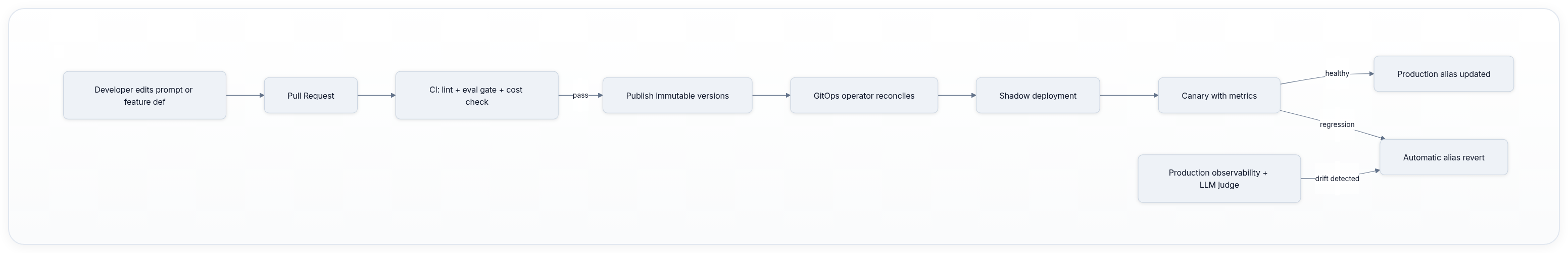

The pipeline on merge does the following:

- Parse changed files and determine what must be re-evaluated.

- Run fast checks (prompt linting, schema validation, cost estimation).

- Run the offline evaluation suite against the exact feature snapshot pinned in the PR.

- If gates pass, publish new immutable versions to the prompt registry and model registry.

- Update the alias file or deployment manifest.

- The GitOps operator (ArgoCD or Flux) detects the change in the repo and applies it to the cluster.
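The first step in the list above, deciding what must be re-evaluated, can be sketched as a lookup from changed paths to check suites. This is a minimal illustration only: the `SUITES_BY_AREA` mapping, the suite names, and `suites_for_changes` are hypothetical and not part of any specific CI product.

```python
from pathlib import PurePosixPath

# Hypothetical mapping from top-level repo directory to the checks it triggers.
SUITES_BY_AREA = {
    "prompts": {"prompt_lint", "golden_set_eval", "cost_estimate"},
    "features": {"schema_validation", "golden_set_eval"},
    "models": {"golden_set_eval", "safety_scan", "latency_budget"},
    "deployments": {"schema_validation"},
}


def suites_for_changes(changed_files):
    """Return the sorted list of check suites required by a set of changed paths."""
    required = set()
    for path in changed_files:
        parts = PurePosixPath(path).parts
        if not parts:
            continue  # ignore empty paths
        # Paths outside the known areas trigger no LLM-specific suites.
        required |= SUITES_BY_AREA.get(parts[0], set())
    return sorted(required)


changed = [
    "prompts/support/tests/support_golden.jsonl",
    "deployments/production/aliases.yaml",
]
print(suites_for_changes(changed))
```

Keeping the mapping in the repository itself means a change to the gating rules goes through the same review as a change to a prompt.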

Because the desired state lives in git, a rollback is simply a revert commit or a manual edit of the alias file followed by a pipeline run. No one logs into a dashboard and clicks "promote." The audit trail is the git history.

💡 Key insight: The most important property of GitOps for LLMs is that evaluation datasets and feature definitions are also versioned in the same repository. When a prompt PR runs the golden set, the feature definitions and the feature snapshot used for that evaluation are pinned to the same commit, so the result can be reproduced later against exactly the same inputs.

Feature stores for embeddings and LLM features

Embeddings are among the most dangerous sources of training-serving skew in modern LLM systems. A RAG pipeline or a personalized prompt may depend on dozens of embedding features: user history summary vectors, document chunk embeddings, category affinity vectors, and time-decayed engagement signals.

A feature store (such as Feast, Tecton, or a custom implementation on top of Redis + S3)[4] solves three problems at once:

- Point-in-time correctness: When you train or evaluate offline, you can request the exact feature values that were available at a historical timestamp. No future data leaks into the training set.

- Consistency: The same feature view definition produces identical vectors whether it runs in a batch Spark job or in the low-latency online path that serves the LLM.

- Lineage: Every embedding vector carries metadata about the exact model checkpoint, chunker version, and preprocessing hash that produced it.
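Point-in-time correctness, the first property above, comes down to an "as of" lookup: return the latest value whose event time is not after the requested timestamp. The sketch below is a toy in-memory version under that assumption; `FeatureHistory` and the sample values are hypothetical and do not reflect how Feast or Tecton store data.

```python
from bisect import bisect_right
from datetime import datetime


class FeatureHistory:
    """Append-only history of (event_time, value) pairs for one entity and feature."""

    def __init__(self):
        self._times = []
        self._values = []

    def write(self, event_time, value):
        # Assumes writes arrive in event-time order; a real store would sort or merge.
        self._times.append(event_time)
        self._values.append(value)

    def as_of(self, ts):
        """Return the latest value with event_time <= ts, or None if none exists yet."""
        idx = bisect_right(self._times, ts)
        return self._values[idx - 1] if idx else None


history = FeatureHistory()
history.write(datetime(2024, 4, 9), 3.1)
history.write(datetime(2024, 4, 11), 2.4)

snapshot_value = history.as_of(datetime(2024, 4, 10))  # what an April 10 eval sees
live_value = history.as_of(datetime(2024, 4, 12))      # what April 12 traffic sees
```

An offline evaluation that asks for the value as of each golden-set example's timestamp never sees the later write, which is how future data is kept out of the evaluation.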

In practice, your feature view for order history might look like this (simplified Feast-style):

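```python
from datetime import timedelta

from feast import Entity, FeatureView, Field
from feast.types import Array, Float32, Int64

# Entity keyed on user_id; every feature below is looked up per user.
order_history = Entity(name="user_id", join_keys=["user_id"])

order_history_view = FeatureView(
    name="order_history_embeddings",
    entities=[order_history],
    ttl=timedelta(days=90),  # ignore feature rows older than 90 days
    schema=[
        Field(name="recent_order_count", dtype=Int64),
        Field(name="avg_delivery_days_30d", dtype=Float32),
        # 768-dimensional vector; Feast's Array type takes only the element type.
        Field(name="order_pattern_embedding", dtype=Array(Float32)),
    ],
    source=...,    # batch or stream source, elided in this simplified example
    online=True,   # materialize to the online store for low-latency serving
    offline=True,  # kept from the simplified sketch; not every Feast version accepts this argument
)
```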

At serving time the LLM gateway calls the feature store with the current user_id and a request timestamp. The store returns the vector that was valid at that exact moment, using the same transformation that was used when the golden set for the current model version was built.

Without a feature store, teams copy embedding code between training notebooks and the serving service. Over time the two copies diverge, and quality silently collapses exactly as in the OrderBuddy story.
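One inexpensive guard against this kind of divergence is a parity check that compares the preprocessing configuration and a sample of feature values between the batch and online paths. The following is a minimal sketch; `transform_fingerprint`, `skewed_features`, and the config keys are hypothetical names chosen for illustration.

```python
import hashlib
import json
import math


def transform_fingerprint(config):
    """Stable short hash of a preprocessing config (chunker, normalization, checkpoint)."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def skewed_features(offline_row, online_row, rel_tol=1e-6):
    """Return the names of features whose offline and online values disagree."""
    mismatches = []
    for name, offline_value in offline_row.items():
        online_value = online_row.get(name)
        if online_value is None or not math.isclose(
            offline_value, online_value, rel_tol=rel_tol
        ):
            mismatches.append(name)
    return mismatches


batch_cfg = {"chunker": "v2", "normalize": "l2"}
online_cfg = {"chunker": "v2", "normalize": "none"}
same_transform = transform_fingerprint(batch_cfg) == transform_fingerprint(online_cfg)

diff = skewed_features(
    {"recent_order_count": 4, "avg_delivery_days_30d": 2.5},
    {"recent_order_count": 4, "avg_delivery_days_30d": 3.5},
)
print(same_transform, diff)
```

Run as a CI gate or a scheduled job, a check like this turns silent skew into a failing build before users notice it.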

CI/CD for prompts and models at scale

Prompts deserve the same treatment as model weights. A one-line change in a system prompt can shift refusal rates, tone, or factual grounding more than a full fine-tune. Therefore, prompts live in their own registry with semantic versions, test suites, and promotion policies.

A prompt registry entry typically contains:

- The exact template text (with variable placeholders)

- Few-shot or chain-of-thought examples pinned by hash

- Associated guardrail policy version

- Evaluation results on multiple golden sets (helpfulness, groundedness, safety, task-specific accuracy)

- Cost and latency characteristics under different generation settings

When a prompt PR merges, the CI pipeline:

- Renders the prompt against the current golden set using the exact model alias declared in the PR.

- Runs the LLM-as-judge and rule-based safety checks.

- Computes estimated token cost for the target traffic volume.

- Only then does it publish [email protected] and update the candidate alias.
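The cost step in the pipeline above is simple arithmetic and can run as a gate. The sketch below assumes per-1k-token pricing and a maximum allowed increase of 10%; the prices, traffic volume, and function names are hypothetical placeholders, not real provider rates.

```python
def estimated_daily_cost(requests_per_day, prompt_tokens, completion_tokens,
                         usd_per_1k_prompt, usd_per_1k_completion):
    """Estimate daily spend from average token counts per request."""
    per_request = (
        (prompt_tokens / 1000) * usd_per_1k_prompt
        + (completion_tokens / 1000) * usd_per_1k_completion
    )
    return requests_per_day * per_request


def passes_cost_gate(baseline_cost, candidate_cost, max_increase=0.10):
    """Allow the candidate only if its cost stays within the allowed increase."""
    return candidate_cost <= baseline_cost * (1 + max_increase)


# Hypothetical prices and traffic, for illustration only.
PRICE_PROMPT, PRICE_COMPLETION = 0.001, 0.002
baseline = estimated_daily_cost(100_000, 800, 200, PRICE_PROMPT, PRICE_COMPLETION)
candidate = estimated_daily_cost(100_000, 944, 236, PRICE_PROMPT, PRICE_COMPLETION)

print(round(baseline, 2), round(candidate, 2), passes_cost_gate(baseline, candidate))
```

Because the estimate uses the target traffic volume rather than canary traffic, a token increase that is invisible at 2% of traffic still fails the gate before promotion.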

The same pipeline supports shadow traffic for prompts. The gateway receives the live request, calls the production prompt version for the user, and in parallel (or on a sampled fraction) calls the candidate prompt version against the same model and features. The shadow responses are logged but never shown to users. After a few thousand shadow requests, the team compares judge scores, latency, and cost. Only then is the candidate promoted to a small sticky canary.

Shadow traffic is especially powerful for prompts because the blast radius of a bad prompt is often larger than that of a bad model weight change, yet the only added compute cost of the shadow is one extra LLM call per sampled request.
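A gateway-side shadow path can be sketched in a few lines. The version below is a simplified, synchronous illustration: `handle_request`, `call_llm`, and the prompt identifiers are hypothetical, and a real gateway would issue the shadow call asynchronously so it never adds latency to the user's request.

```python
import random


def handle_request(request, call_llm, prod_prompt, candidate_prompt,
                   shadow_log, sample_rate=0.1, rng=random.random):
    """Serve the production prompt; mirror a sample to the candidate and only log it."""
    prod_response = call_llm(prod_prompt, request)
    if rng() < sample_rate:
        record = {"request": request, "production": prod_response}
        try:
            record["candidate"] = call_llm(candidate_prompt, request)
        except Exception as exc:
            # A failing shadow call must never affect what the user receives.
            record["candidate_error"] = repr(exc)
        shadow_log.append(record)
    return prod_response  # the candidate response is never returned


def fake_llm(prompt, request):
    """Stand-in for a real model call, used only for this demo."""
    return f"[{prompt}] answer to: {request}"


log = []
reply = handle_request("where is my package?", fake_llm,
                       "prompt-prod", "prompt-candidate", log, sample_rate=1.0)
print(reply)
print(log[0]["candidate"])
```

The logged pairs are what the judge later scores, so each record keeps the production and candidate responses for the same request side by side.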

Automated rollback on evaluation regression

The fastest way to lose user trust is to let a degraded version stay in production while engineers investigate. Once you have reliable online signals (from the observability system you built in the previous lesson), you can close the loop.

A production controller watches:

- Infrastructure metrics (TTFT p95, 5xx rate, queue depth)

- Business metrics (task completion rate, thumbs down rate)

- Quality signals (LLM-as-judge groundedness, refusal rate, toxicity classifier score on a continuous sample)

When any signal crosses a policy threshold for a sustained window, the controller automatically moves the production alias back to the last known good version. The entire operation takes under 30 seconds because only the alias changes; no containers are restarted and no weights are reloaded.
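The reason an alias move is fast is that it only rewrites a pointer. A minimal in-memory sketch of that idea follows; `AliasRegistry` and the version strings are hypothetical, and a real registry would persist the history and serialize concurrent updates.

```python
class AliasRegistry:
    """Toy alias store: each alias keeps an append-only list of versions."""

    def __init__(self):
        self._history = {}  # alias -> list of versions, newest last

    def promote(self, alias, version):
        self._history.setdefault(alias, []).append(version)

    def resolve(self, alias):
        """Return the version the alias currently points to."""
        return self._history[alias][-1]

    def rollback(self, alias, known_good):
        """Repoint the alias to the most recent earlier version marked known good."""
        versions = self._history[alias]
        for version in reversed(versions[:-1]):
            if version in known_good:
                # Record the rollback as a new event so history stays append-only.
                versions.append(version)
                return version
        raise LookupError(f"no known-good version found for alias {alias!r}")


registry = AliasRegistry()
for v in ("v1.8", "v1.9", "v2.0"):
    registry.promote("production", v)

restored = registry.rollback("production", known_good={"v1.8", "v1.9"})
print(restored, registry.resolve("production"))
```

Appending the rollback rather than deleting the bad version keeps the audit trail intact: the history shows both the promotion and the reversal.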

Here is a simplified policy in pseudocode:

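```python
from typing import Dict


class RollbackPolicy:
    def __init__(self):
        # Each rule bounds one metric. "window" names the aggregation window the
        # metrics pipeline is expected to apply before values reach this class.
        self.thresholds = {
            "p95_ttft_ms": {"max": 850, "window": "5m"},
            "groundedness_score": {"min": 0.82, "window": "15m"},
            "toxicity_rate": {"max": 0.004, "window": "10m"},
            "task_completion_rate": {"min": 0.91, "window": "30m"},
        }

    def should_rollback(self, metrics: Dict[str, float], current_alias: str) -> bool:
        # current_alias identifies which deployment the metrics belong to;
        # in this simplified policy the thresholds are the same for every alias.
        for metric, rule in self.thresholds.items():
            value = metrics.get(metric)
            if value is None:
                continue  # missing metric: do not roll back on absent data
            if "max" in rule and value > rule["max"]:
                return True
            if "min" in rule and value < rule["min"]:
                return True
        return False
```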

The controller runs this check every minute against metrics that have already been aggregated over each rule's window for the current alias. Because the registry preserves full history, the rollback target is always available and the operation is fully reproducible[5].
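The "sustained window" condition matters because a single bad reading should not move the alias. One way to express it, sketched here under the assumption that the controller samples each metric once per check, is to fire only when every sample in the last N checks breaches the threshold. `SustainedBreach` is a hypothetical helper, not part of any monitoring library.

```python
from collections import deque


class SustainedBreach:
    """Fire only when every one of the last `window` samples breaches the threshold."""

    def __init__(self, window, min_value=None, max_value=None):
        self.samples = deque(maxlen=window)  # True for each breaching sample
        self.min_value = min_value
        self.max_value = max_value

    def _breached(self, value):
        if self.max_value is not None and value > self.max_value:
            return True
        if self.min_value is not None and value < self.min_value:
            return True
        return False

    def observe(self, value):
        """Record one sample and report whether the breach has been sustained."""
        self.samples.append(self._breached(value))
        return len(self.samples) == self.samples.maxlen and all(self.samples)


groundedness = SustainedBreach(window=3, min_value=0.82)
decisions = [groundedness.observe(v) for v in (0.80, 0.85, 0.80, 0.79, 0.78)]
print(decisions)
```

In this run the isolated dip at the start does not trigger anything; only the three consecutive low readings at the end do, which also reduces flapping on transient spikes.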

⚠️ Common mistake: Rolling back only on error rate or latency while ignoring slow quality regressions. A 3% drop in groundedness over two days will not trigger a 5xx spike, yet it destroys user confidence. LLM judges running on a sampled live stream are one of the best early warning signals for this class of regression.

Putting it all together: lineage and provenance

The ultimate goal of advanced MLOps is complete provenance. Given any production response, an engineer must be able to answer in seconds:

- Which exact prompt version and guardrail policy produced it?

- Which model weights and adapter were active?

- Which feature values (with exact timestamps and computation hashes) were fed into the prompt?

- Which golden set version and judge prompt were used to approve this release?

- What was the commit SHA that last touched any of the above?

All of this information is stored in the model registry entry and cross-linked to the feature store audit log and the git commit that triggered the promotion. When an incident occurs, the first step is never "guess which prompt changed." It is "look up the exact registry entry for the request timestamp and read the immutable lineage."
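The lookup described above can be sketched as an immutable record per release plus a search by request timestamp. Every field name and value below is a hypothetical placeholder; a real registry entry would carry whatever identifiers your prompt registry, model registry, and feature store actually emit.

```python
from bisect import bisect_right
from dataclasses import dataclass


@dataclass(frozen=True)
class ReleaseLineage:
    """Immutable description of everything that was live after one promotion."""
    promoted_at: float          # Unix seconds when the alias moved
    prompt_version: str
    guardrail_policy: str
    model_artifact: str
    feature_view_version: str
    feature_snapshot_ts: str
    golden_set_version: str
    commit_sha: str


def lineage_at(releases, request_ts):
    """Return the release that was live at request_ts.

    Assumes `releases` is sorted by promoted_at in ascending order.
    """
    idx = bisect_right([r.promoted_at for r in releases], request_ts)
    if idx == 0:
        raise LookupError("no release was live at that time")
    return releases[idx - 1]


releases = [
    ReleaseLineage(1000.0, "support-a", "policy-1", "adapter-1", "fv-1",
                   "2024-04-01T00:00:00Z", "golden-1", "abc1234"),
    ReleaseLineage(2000.0, "support-b", "policy-1", "adapter-1", "fv-2",
                   "2024-04-10T00:00:00Z", "golden-2", "def5678"),
]

live = lineage_at(releases, request_ts=2500.0)
print(live.prompt_version, live.feature_view_version, live.commit_sha)
```

Freezing the dataclass mirrors the requirement that lineage is immutable: an incident review reads the record, it never edits it.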

Self-check

Before moving on, make sure you can answer these questions about the OrderBuddy scenario.

Question 1: The new "holiday shipping" feature used a fresh embedding for average delivery days. The offline eval used a snapshot from April 10. Production traffic on April 12 saw different values because the batch feature job had not yet run for the new orders. Which component should have prevented this mismatch?

Answer: The feature store with point-in-time correctness. The offline eval job should have requested features as of the exact timestamp of the golden set examples, and the online path should have used the same view. The registry should have recorded the feature view version and the snapshot timestamp alongside the model and prompt versions.

Question 2: A teammate pushes a prompt change that improves helpfulness on the golden set but increases average token count by 18%. The canary shows stable latency because the traffic is small. After full promotion, costs spike. What should have happened earlier?

Answer: The CI pipeline and the promotion policy must include cost and token budget checks as first-class gates. Cost regression is as serious as quality regression for production LLM systems. The policy should have blocked promotion or required explicit sign-off from the cost owner.

Question 3: During a canary, the LLM judge score for groundedness drops 4% on the canary variant, but the drop is within the statistical noise of the current sample size. The policy does not trigger automatic rollback. What is the correct next action?

Answer: Increase the canary sample size or extend the observation window before deciding. Never promote on marginal signals. At the same time, the team should investigate the specific failure cases the judge flagged to understand whether the regression is real or an artifact of the judge prompt itself.
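To see why sample size decides Question 3, it helps to put an interval around the observed drop. The sketch below treats the judge score as a pass rate (the fraction of sampled responses judged grounded) and uses a normal approximation for the difference of two proportions; both are simplifying assumptions, and the rates and sample sizes are hypothetical.

```python
import math


def difference_interval(p_base, n_base, p_canary, n_canary, z=1.96):
    """Approximate 95% interval for (canary pass rate - baseline pass rate)."""
    diff = p_canary - p_base
    std_err = math.sqrt(
        p_base * (1 - p_base) / n_base + p_canary * (1 - p_canary) / n_canary
    )
    return diff - z * std_err, diff + z * std_err


def is_conclusive(interval):
    """The difference is distinguishable from noise only if the interval excludes zero."""
    low, high = interval
    return high < 0 or low > 0


# Same observed 4-point drop, two different canary sample sizes.
small = difference_interval(0.86, 5000, 0.82, 200)
large = difference_interval(0.86, 5000, 0.82, 2000)

print(is_conclusive(small), is_conclusive(large))
```

With 200 canary samples the interval spans zero, so the drop cannot be separated from noise; with 2,000 samples the same drop becomes a clear regression, which is the case for extending the canary rather than promoting.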

Key takeaways

- GitOps is the operating system for LLM teams. Prompts, features, aliases, and promotion policies must live in git with the same review and test discipline as application code.

- Feature stores close the skew gap. Embeddings and other LLM features must be treated as versioned, point-in-time artifacts, not ad-hoc computation scattered across notebooks and services.

- Shadow and canary apply to prompts as much as to models. The marginal cost of an extra LLM call is small compared with the risk of shipping a prompt that changes user behavior in unexpected ways.

- Observability feeds deployment automation. The same signals you monitor for health (latency, judge scores, safety) become the triggers for automatic rollback policies that protect users within seconds.

- Lineage is non-negotiable. Every production response must be traceable back to the exact combination of prompt version, model artifact, feature snapshot, and evaluation gate that produced it.

With these practices in place, your LLM systems become as reliable and auditable as the best traditional software services, while still moving at the speed that modern AI teams require.

Evaluation Rubric

1. Designs a GitOps pipeline where prompt changes and model promotions are triggered by git merges with full automated test gates

2. Explains how feature stores eliminate training-serving skew for embedding-based retrieval and personalization features

3. Implements shadow traffic and sticky canary routing for safe prompt and model experimentation

4. Defines automated rollback policies that combine latency, cost, safety, and LLM-judge quality signals

5. Builds end-to-end lineage from data snapshot through prompt version, adapter, and serving image to production alias

6. Integrates online monitoring (from observability) directly into deployment decision engines

Common Pitfalls

- Storing prompts only in the application code or environment variables instead of a dedicated registry with promotion history

- Assuming offline indexing and online retrieval use the same embedding model without pinning the exact checkpoint and preprocessing hash

- Running canaries without sticky routing, so users see inconsistent answers across page refreshes

- Triggering rollbacks only on infrastructure metrics while ignoring slow quality regressions caught by LLM judges

- Allowing feature definitions to live in multiple places (notebook, training job, serving code) causing silent skew

- Treating GitOps as 'just ArgoCD' without also versioning the evaluation datasets and golden sets that the pipeline depends on

- Over-automating rollback so that transient traffic spikes cause flapping between versions
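The sticky-routing pitfall in the list above is usually avoided by hashing a stable user identifier into a bucket instead of picking a variant at random per request. A minimal sketch, assuming a string `user_id` and a per-experiment salt (both hypothetical here):

```python
import hashlib


def route_variant(user_id, canary_percent, salt="canary-experiment"):
    """Deterministically assign a user to 'canary' or 'production'."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # bucket in 0..9999
    # canary_percent=2 sends buckets 0..199 (about 2% of users) to the canary.
    return "canary" if bucket < canary_percent * 100 else "production"


first = route_variant("user-42", canary_percent=2)
second = route_variant("user-42", canary_percent=2)
print(first == second)  # the same user always gets the same variant

share = sum(
    route_variant(f"user-{i}", canary_percent=2) == "canary" for i in range(10_000)
)
print(share)
```

Changing the salt reshuffles the assignment for a new experiment, so the same small group of users is not exposed to every canary.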

Key Concepts Tested

- GitOps workflows for declarative prompt, model alias, and config management

- Prompt registries and semantic versioning separate from model weights

- Feature stores for consistent embedding computation and point-in-time correctness

- Shadow traffic and progressive canary deployments for both prompts and models

- Automated rollback triggered by production evaluation regression signals

- Full artifact lineage and provenance tracking across training, retrieval, and serving

- Integration of observability metrics with deployment gates and promotion policies

- Training-serving skew prevention in LLM feature pipelines

Next Step

Next: Continue to GPU Serving & Autoscaling

There, you will learn how to run the versioned models and prompts you now manage with advanced MLOps at massive scale: continuous batching, paged attention, KV cache aware autoscaling, cold-start mitigation, and cost-efficient multi-tenancy on GPU fleets. The MLOps practices you just mastered are what make those serving systems safe to operate in production.

References

Sato, D., Wider, A., & Windheuser, C. (2019). Continuous Delivery for Machine Learning.

Paleyes, A., Urma, R. G., & Lawrence, N. D. (2022). Challenges in Deploying Machine Learning: a Survey of Case Studies. ACM Computing Surveys.

Sculley et al. (2015). Hidden Technical Debt in Machine Learning Systems.

MLflow (2026). Model Registry Workflows | MLflow AI Platform.

Feast Contributors (2024). Feast: Production Feature Store for Machine Learning.

CNCF MLOps Working Group (2025). GitOps for Modern MLOps: Declarative Deployments with ArgoCD and Flux.

LangSmith + PromptLayer + Helicone Teams (2026). Prompt Registries and Versioned PromptOps for Production LLMs.

Sculley et al. + Production ML Case Studies (2024). Automated Rollback Strategies for ML Systems Using Online Evaluation.