
Docker for Reproducible AI

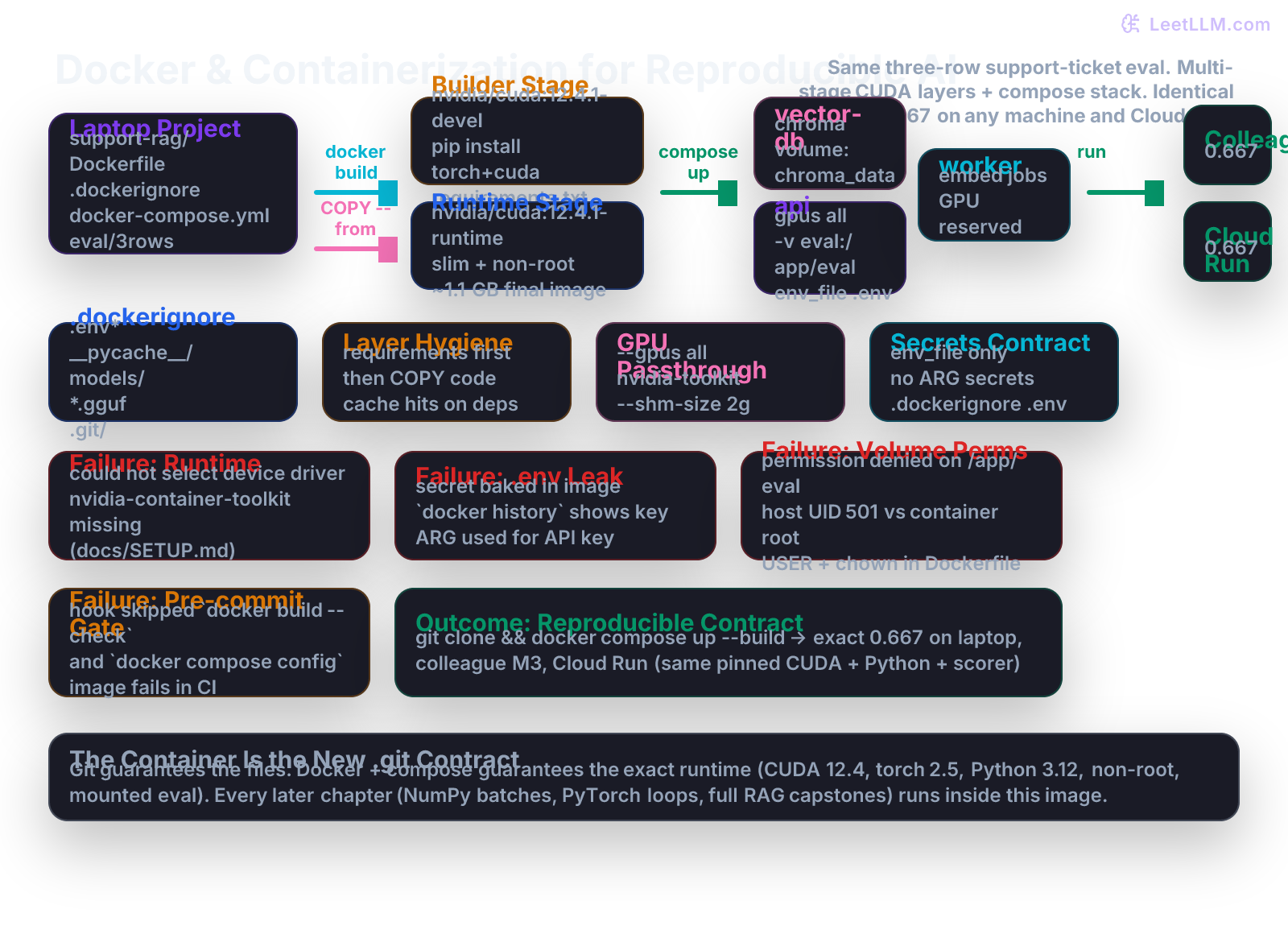

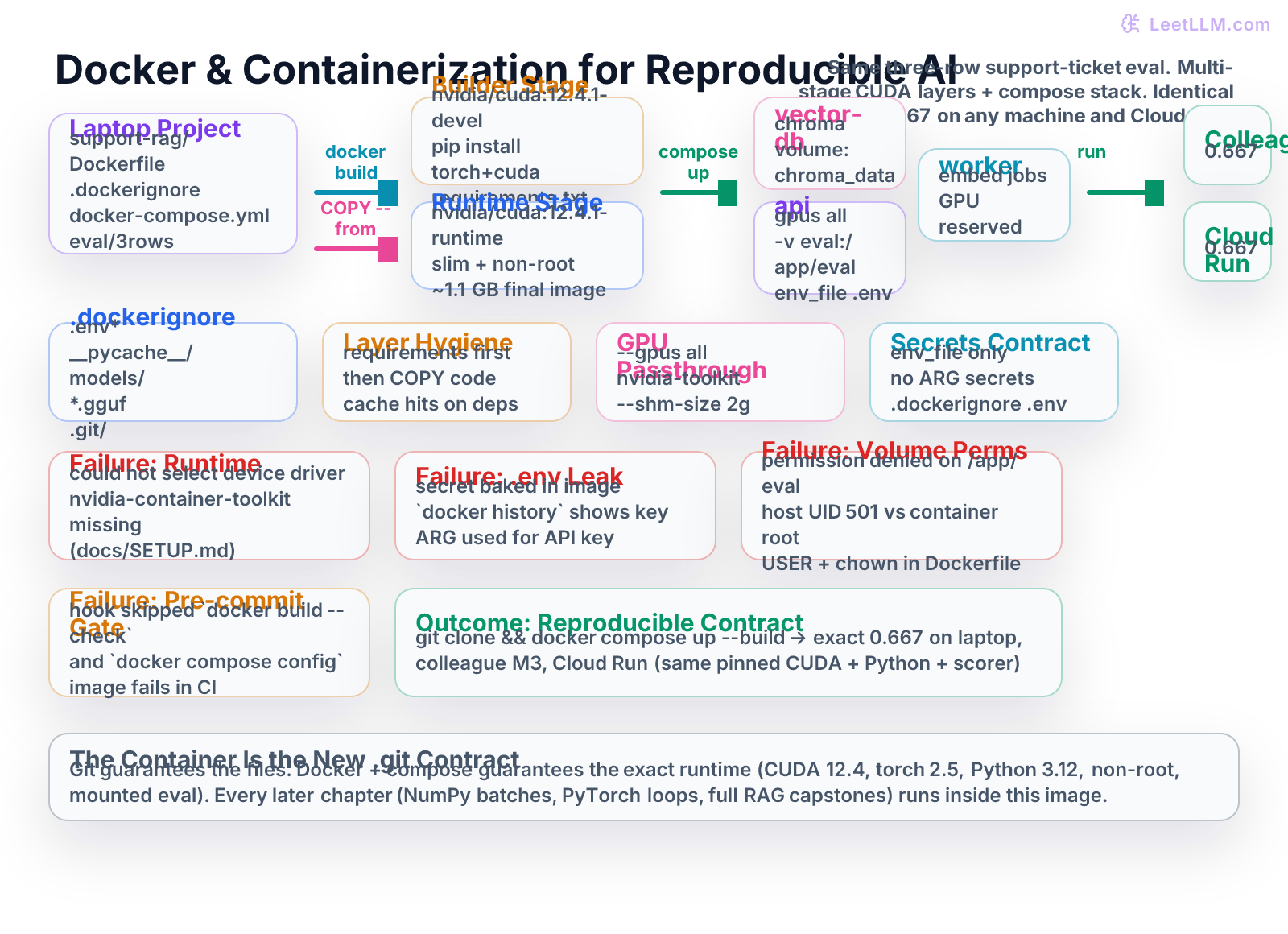

Turn the support-ticket RAG Python project into a portable multi-stage Docker image with CUDA, .dockerignore, volume-mounted datasets and vector indexes, docker-compose local stack (vector DB + API + worker), and secrets hygiene that produces the exact same 0.667 score on a laptop, a colleague's machine, and Cloud Run.



Most AI engineering still fails at the boundary between "my laptop" and "any other machine".

You finished the Python chapter. The three-row support_tickets.jsonl produces a clean 0.667 with your score_examples function and exact_match logic. Git protects the files with a proper .gitignore and LFS rules. The activate.sh script creates a virtualenv and verifies CUDA on your MacBook. Then your colleague clones the repo on their Linux workstation with an RTX 4090, runs python -m scripts.score, and gets one of the classic failures:

- `RuntimeError: CUDA is not available` (PyTorch was installed from the CPU wheel)
- `ModuleNotFoundError: No module named 'chromadb'` (the vector DB client version drifted)
- `PermissionError: [Errno 13] Permission denied: '/app/eval'` (the mounted volume is owned by root inside the container but the host UID is 501)
- The secret that was in `.env` on your laptop is now missing because nobody documented how it travels.

The container is the next contract after Git and Python.

Docker turns the entire support-ticket RAG project (the eval fixture, the typed Python scorer, the future embedding model, the vector DB client) into a single, pinned, portable artifact.[1] A multi-stage Dockerfile with the right CUDA base image, a strict .dockerignore, volume mounts for data, docker-compose for the local stack, and explicit secrets hygiene guarantees that git clone && docker compose up --build produces the identical 0.667 on your laptop, a colleague's machine (Apple Silicon or NVIDIA), the CI runner, and the Cloud Run service that will eventually serve production traffic.

The container contract that survives every handoff

Before you write another line of embedding or retrieval code, you need six guarantees that travel with the repo:

| Boundary | Question the next engineer (or Cloud Run) will ask | What must exist in the repo |
|---|---|---|
| Base image | "Which exact CUDA, cuDNN, Python, and PyTorch versions?" | Pinned `nvidia/cuda:12.4.1-cudnn9-runtime-ubuntu22.04` (or official PyTorch CUDA variant) in a multi-stage Dockerfile |
| Layer cache | "Does changing one Python file force a 12-minute rebuild?" | `COPY requirements.txt` before `COPY .`, plus a `.dockerignore` that excludes everything unnecessary |
| Data & models | "Where do the eval rows and the vector index live at runtime?" | Explicit volume mounts (`-v $(pwd)/eval:/app/eval:ro`) and named volumes for Chroma / FAISS |
| Secrets | "Is the OpenAI key or HF token baked into the image layers?" | `env_file` at runtime only, never `--build-arg` for secrets, `.dockerignore` for `.env*` |
| Local parity | "Can I run the full RAG (vector DB + API + worker) locally exactly as it will run in prod?" | `docker-compose.yml` with GPU reservations, service dependencies, healthchecks, and the same image |
| Gate | "How do we know the container still works after a teammate's change?" | Pre-commit hook that runs `docker build --check` and `docker compose config --quiet` |

If any one is missing, the 0.667 that the Python chapter produced on the author's machine will become an error, a different number, a leaked secret, or a 4 GB image that CI refuses to accept.
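The layer-cache row is the least intuitive of the six, so here is a toy model of it. This is only a sketch: it assumes each layer's cache key depends on the previous layer, the instruction, and the content it copies, which is a simplification of Docker's real cache logic. The function names and sample steps are hypothetical.

```python
import hashlib


def layer_keys(steps):
    """Return one cache key per step; each key depends on every earlier step."""
    keys = []
    parent = ""
    for instruction, content in steps:
        digest = hashlib.sha256(f"{parent}|{instruction}|{content}".encode()).hexdigest()
        keys.append(digest)
        parent = digest
    return keys


def reused_layers(before, after):
    """Count the leading layers whose keys are unchanged between two builds."""
    count = 0
    for old, new in zip(layer_keys(before), layer_keys(after)):
        if old != new:
            break
        count += 1
    return count


# Requirements copied first: a code change only invalidates the final COPY.
good_v1 = [("COPY requirements.txt", "pins"), ("RUN pip install", ""), ("COPY .", "code v1")]
good_v2 = [("COPY requirements.txt", "pins"), ("RUN pip install", ""), ("COPY .", "code v2")]

# Whole tree copied first: the same code change invalidates the pip layer too.
bad_v1 = [("COPY .", "code v1"), ("RUN pip install", "")]
bad_v2 = [("COPY .", "code v2"), ("RUN pip install", "")]

print(reused_layers(good_v1, good_v2))  # 2
print(reused_layers(bad_v1, bad_v2))    # 0
```

In the first ordering the expensive `pip install` layer is reused after a code edit; in the second it is rebuilt every time.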

Start with .dockerignore (protect the context)

Create .dockerignore at the root of support-rag/ right next to .gitignore:

```gitignore
# .dockerignore - keep the build context tiny and secret-free
.env
.env.*
*.pem
secrets/
.git/
.github/
.venv/
__pycache__/
*.py[cod]
*.egg-info/
node_modules/
models/
*.gguf
*.safetensors
*.bin
chroma/
faiss_index/
*.db
runs/
eval_cache/
wandb/
mlruns/
.DS_Store
.idea/
.vscode/
*.swp
```

The Docker client reads this file before it sends the build context to the builder. Without it, a `COPY .` copies your .env (and your OpenAI key) into an image layer, and the build context grows by several gigabytes because of cached model files and virtualenvs.
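One way to keep this file honest is a small check that the secret-related patterns are still present. The sketch below is illustrative only: `missing_patterns` and the `REQUIRED` set are hypothetical names, and it compares lines literally rather than evaluating Docker's actual pattern matching.

```python
REQUIRED = {".env", ".env.*", "*.pem", "secrets/"}


def missing_patterns(dockerignore_text, required=REQUIRED):
    """Return the required patterns that do not appear as lines in the file."""
    present = set()
    for line in dockerignore_text.splitlines():
        line = line.strip()
        # Skip blank lines and comments, as .dockerignore itself does.
        if line and not line.startswith("#"):
            present.add(line)
    return sorted(set(required) - present)


sample = "# keep the context small\n.env\n.env.*\n.git/\n"
print(missing_patterns(sample))  # ['*.pem', 'secrets/']
```

A test or hook could call this on the real `.dockerignore` and fail when the returned list is not empty.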

Commit it together with the Dockerfile.

The multi-stage Dockerfile for LLM inference

Here is a production-grade, runnable multi-stage Dockerfile for the support-ticket RAG project. It uses the official NVIDIA CUDA images, separates the heavy development tools from the slim runtime, installs PyTorch with CUDA support in the builder only, runs as a non-root user, and exposes a simple CLI entrypoint that reproduces the 0.667.

```dockerfile
# syntax=docker/dockerfile:1
FROM nvidia/cuda:12.4.1-cudnn9-devel-ubuntu22.04 AS builder

ENV DEBIAN_FRONTEND=noninteractive \
    PYTHONUNBUFFERED=1 \
    PYTHONDONTWRITEBYTECODE=1 \
    PIP_NO_CACHE_DIR=1 \
    PIP_DISABLE_PIP_VERSION_CHECK=1

# Ubuntu 22.04 ships Python 3.10 by default. Assumption: the deadsnakes PPA
# is an acceptable source for python3.12 in this project.
RUN apt-get update && apt-get install -y software-properties-common \
    && add-apt-repository -y ppa:deadsnakes/ppa \
    && apt-get update && apt-get install -y --no-install-recommends \
        python3.12 python3.12-venv python3-pip \
        build-essential \
    && rm -rf /var/lib/apt/lists/*

# Create venv in a known location
RUN python3.12 -m venv /opt/venv
ENV PATH="/opt/venv/bin:$PATH"

# Layer cache friendly: requirements first
COPY requirements.txt /tmp/requirements.txt
RUN pip install --upgrade pip setuptools wheel && \
    pip install --no-cache-dir -r /tmp/requirements.txt

# ---- Runtime stage (much smaller) ----
FROM nvidia/cuda:12.4.1-cudnn9-runtime-ubuntu22.04 AS runtime

ENV DEBIAN_FRONTEND=noninteractive \
    PYTHONUNBUFFERED=1 \
    PYTHONDONTWRITEBYTECODE=1 \
    PATH="/opt/venv/bin:$PATH" \
    HF_HOME=/app/.cache/huggingface

# The venv's interpreter links to the system python3.12, so the runtime
# stage needs the same interpreter (same PPA assumption as above).
RUN apt-get update && apt-get install -y software-properties-common \
    && add-apt-repository -y ppa:deadsnakes/ppa \
    && apt-get update && apt-get install -y --no-install-recommends \
        python3.12 \
    && rm -rf /var/lib/apt/lists/* \
    && useradd -m -u 1000 -s /bin/bash appuser

# Bring the venv from the builder (only the installed packages)
COPY --from=builder /opt/venv /opt/venv

WORKDIR /app

# Copy only what the application needs (after .dockerignore has done its job)
COPY . /app

# Make sure the non-root user can write to cache and any mounted volumes
RUN mkdir -p /app/.cache/huggingface /app/eval && chown -R appuser:appuser /app

USER appuser

# Healthcheck (read by Docker and Compose); fails only if torch cannot be imported
HEALTHCHECK \
    CMD python -c "import torch; print('CUDA:' if torch.cuda.is_available() else 'CPU only'); exit(0)" || exit 1

# Default command reproduces the exact eval from the Python chapter
ENTRYPOINT ["python", "-m", "scripts.score"]
CMD ["eval/support_tickets.jsonl"]
```

The two-stage pattern is critical:

- The `builder` stage uses the heavy `devel` image that contains compilers and headers. This is where the PyTorch CUDA wheels (often 1.5–2 GB) are installed and any source-only dependencies are compiled.
- The final `runtime` stage starts from the much smaller `runtime` image (no compilers) and only copies the `/opt/venv` directory. The final image is typically 1.0–1.8 GB instead of 4+ GB.

Build it and see the layers

From the project root:

```bash
docker build -t leetllm/support-rag:local .
```

You will see output similar to (abridged):

```text
[+] Building 48.3s (18/18) FINISHED
 => [internal] load build definition from Dockerfile
 => [builder 1/6] FROM nvidia/cuda:12.4.1-cudnn9-devel-ubuntu22.04
 => [builder 4/6] RUN pip install -r /tmp/requirements.txt
 => [runtime 3/6] COPY --from=builder /opt/venv /opt/venv
 => [runtime 6/6] USER appuser
 => exporting to image
 => => naming to docker.io/leetllm/support-rag:local
```

Inspect the final image size and history:

```bash
docker images | grep support-rag
# leetllm/support-rag   local   1.12GB ...

docker history leetllm/support-rag:local --no-trunc | head -12
```

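You will see the layer IDs and sizes. The compilers and headers from the devel image stay behind in the builder stage; the final image carries only the runtime base plus the copied /opt/venv, which still contains the torch install.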

Run the container with volume mounts and GPU

The eval file and any future vector indexes must come from the host via a read-only volume mount. Never bake data into the image.

```bash
docker run --rm \
  --gpus all \
  --shm-size=2g \
  -v "$(pwd)/eval:/app/eval:ro" \
  -v "$(pwd)/.env:/app/.env:ro" \
  -e CUDA_VISIBLE_DEVICES=0 \
  leetllm/support-rag:local
```

Expected output (exact same as the Python chapter):

```text
Loading 3 evaluation rows from eval/support_tickets.jsonl
Row 1: exact match
Row 2: mismatch (expected delayed, got delivered)
Row 3: exact match
Exact-match accuracy: 0.667 (2/3)
Gate passed.
```
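For readers who skipped the Python chapter, the arithmetic behind that number can be sketched as follows. This is not the project's actual `scripts.score` module: the row fields (`expected`, `predicted`), the `load_rows` helper, and the first and third sample rows are assumptions made for illustration. Only the function names `exact_match` and `score_examples` and the delayed/delivered mismatch come from the text above.

```python
import json


def exact_match(expected, predicted):
    """True when the two labels are identical after trimming and lowercasing."""
    return expected.strip().lower() == predicted.strip().lower()


def score_examples(rows):
    """Fraction of rows whose prediction exactly matches the expected label."""
    if not rows:
        return 0.0
    hits = sum(exact_match(row["expected"], row["predicted"]) for row in rows)
    return hits / len(rows)


def load_rows(jsonl_text):
    """Parse one JSON object per non-empty line."""
    return [json.loads(line) for line in jsonl_text.splitlines() if line.strip()]


# Hypothetical stand-in for eval/support_tickets.jsonl (three rows, one mismatch).
sample = "\n".join([
    json.dumps({"expected": "refund", "predicted": "refund"}),
    json.dumps({"expected": "delayed", "predicted": "delivered"}),
    json.dumps({"expected": "shipped", "predicted": "shipped"}),
])

rows = load_rows(sample)
print(f"Exact-match accuracy: {score_examples(rows):.3f}")  # Exact-match accuracy: 0.667
```

Two matches out of three rows gives 2/3, which rounds to the 0.667 that every environment is expected to reproduce.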

If you omit --gpus all on an NVIDIA machine you will see the CPU fallback warning or the healthcheck will report "CPU only".
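The healthcheck in the Dockerfile only reports the device and never fails on a CPU fallback. If a service must have a GPU, a stricter check could look like the sketch below. The function names and the `require_gpu` switch are hypothetical; `torch.cuda.is_available()` is the same call the Dockerfile healthcheck uses.

```python
def describe_device():
    """Return 'cuda', 'cpu', or 'torch missing' for the current environment."""
    try:
        import torch  # imported lazily so the module loads without torch installed
    except ImportError:
        return "torch missing"
    return "cuda" if torch.cuda.is_available() else "cpu"


def healthcheck_exit_code(device, require_gpu):
    """Map a device description to a process exit code (0 = healthy)."""
    if device == "torch missing":
        return 1
    if require_gpu and device != "cuda":
        return 1
    return 0


if __name__ == "__main__":
    device = describe_device()
    print(f"device: {device}")
    raise SystemExit(healthcheck_exit_code(device, require_gpu=True))
```

Separating detection from the exit-code decision keeps the policy testable on machines that have no GPU at all.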

docker-compose for the full local RAG stack

For real development you need the vector database running alongside the API and background worker. docker-compose.yml gives you that with one command and the exact same image contract.

```yaml
services:
  vector-db:
    image: chromadb/chroma:0.5.5
    container_name: support-rag-chroma
    volumes:
      - chroma_data:/chroma/chroma
    ports:
      - "8001:8000"
    healthcheck:
      test: ["CMD", "curl", "-f", "http://localhost:8000/api/v1/heartbeat"]
      interval: 10s
      timeout: 5s
      retries: 5

  api:
    build:
      context: .
      dockerfile: Dockerfile
    container_name: support-rag-api
    deploy:
      resources:
        reservations:
          devices:
            - driver: nvidia
              count: 1
              capabilities: [gpu]
    volumes:
      - .:/app              # live-edit mount; shadows the code baked into the image
      - ./eval:/app/eval:ro
    env_file:
      - .env
    environment:
      - VECTOR_DB_HOST=vector-db
      - VECTOR_DB_PORT=8000
    ports:
      - "8000:8000"
    depends_on:
      vector-db:
        condition: service_healthy
    # entrypoint (not command) because the image's ENTRYPOINT is the scorer;
    # a command would only be appended to it as arguments.
    entrypoint: ["uvicorn", "app.main:app", "--host", "0.0.0.0", "--port", "8000"]
    healthcheck:
      # The runtime image does not install curl, so probe with Python instead.
      test: ["CMD", "python", "-c", "import urllib.request; urllib.request.urlopen('http://localhost:8000/health', timeout=3)"]
      interval: 15s

  worker:
    build:
      context: .
      dockerfile: Dockerfile
    container_name: support-rag-worker
    deploy:
      resources:
        reservations:
          devices:
            - driver: nvidia
              count: 1
              capabilities: [gpu]
    volumes:
      - .:/app
      - ./eval:/app/eval:ro
    env_file:
      - .env
    environment:
      - VECTOR_DB_HOST=vector-db
    depends_on:
      - vector-db
    entrypoint: ["python", "-m", "worker.embed_job"]
    profiles: ["worker"]  # optional: docker compose --profile worker up

volumes:
  chroma_data:
```

Run the entire stack:

```bash
docker compose up --build
```

In another terminal you can exec into the api container and run the scorer against the mounted eval:

```bash
docker compose exec api python -m scripts.score eval/support_tickets.jsonl
# Exact-match accuracy: 0.667 (2/3)
```

The same docker-compose.yml works on a colleague's NVIDIA machine (they only need Docker and the NVIDIA Container Toolkit) and builds the same image that will later run on Cloud Run; only the orchestrator changes.
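Inside the compose network the API reaches the vector DB through the `VECTOR_DB_HOST` and `VECTOR_DB_PORT` variables set above. A minimal sketch of how application code might turn them into a URL is shown below. The function name is hypothetical, and the defaults assume you are calling from the host through the `8001:8000` port mapping; the heartbeat path is the one used in the compose healthcheck.

```python
import os


def vector_db_heartbeat_url(env=None):
    """Build the heartbeat URL from environment-style settings."""
    env = os.environ if env is None else env
    # Defaults assume a process on the host using the published port 8001.
    host = env.get("VECTOR_DB_HOST", "localhost")
    port = int(env.get("VECTOR_DB_PORT", "8001"))
    return f"http://{host}:{port}/api/v1/heartbeat"


# Values the api service receives from docker-compose.yml.
compose_env = {"VECTOR_DB_HOST": "vector-db", "VECTOR_DB_PORT": "8000"}
print(vector_db_heartbeat_url(compose_env))  # http://vector-db:8000/api/v1/heartbeat
print(vector_db_heartbeat_url({}))           # http://localhost:8001/api/v1/heartbeat
```

Reading the host and port from the environment is what lets the same code run unchanged inside the container and on the host.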

Secrets management done right

Never use ARG for real secrets:

```dockerfile
# BAD - the value is visible in `docker history` and every layer
ARG OPENAI_API_KEY
RUN echo $OPENAI_API_KEY > /tmp/key.txt
```

Correct pattern (already in the compose file above):

- Store the key in `.env` on the host (already gitignored).
- Reference it with `env_file: - .env` in compose or `-v $(pwd)/.env:/app/.env:ro` in plain `docker run`.
- The key is only present at runtime in the container's environment; it never exists in any image layer.
- Add `.env*` to `.dockerignore` so a stray `COPY .` cannot leak it even by accident.

For Cloud Run, you will later use Secret Manager + --set-secrets or the equivalent in the service definition - the Dockerfile itself never contains the value.
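On the application side, the runtime-only pattern means the code should read the key from the environment and fail fast when it is absent. The following is a sketch under that assumption; `require_env`, `MissingSecretError`, and `redact` are hypothetical helpers, not part of the project described here.

```python
import os


class MissingSecretError(RuntimeError):
    """Raised when a required runtime secret is not present."""


def require_env(name, env=None):
    """Return the named secret from the environment or raise a clear error."""
    env = os.environ if env is None else env
    value = env.get(name, "").strip()
    if not value:
        raise MissingSecretError(
            f"{name} is not set; pass it at runtime via env_file or -e"
        )
    return value


def redact(value, keep=4):
    """Mask all but the last `keep` characters so logs never show the secret."""
    if len(value) <= keep:
        return "*" * len(value)
    return "*" * (len(value) - keep) + value[-keep:]


# Fake value for illustration only.
key = require_env("OPENAI_API_KEY", {"OPENAI_API_KEY": "sk-test-1234"})
print(redact(key))  # ********1234
```

Failing at startup with a named variable turns the "missing secret" handoff problem into a one-line error message instead of a later authentication failure.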

The pre-commit gate that protects the container contract

Add a check in .git/hooks/pre-commit (or via the pre-commit framework) so that no broken Dockerfile ever reaches main:

```bash
#!/usr/bin/env bash
set -euo pipefail

echo "Running Docker contract gate..."
docker build --check -t support-rag:check .
docker compose config --quiet
echo "Docker contract gate passed."
```

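`docker build --check` evaluates Docker's build checks against the Dockerfile without running the build, and `docker compose config --quiet` validates the compose file. If either command fails, for example on a Dockerfile syntax error or an invalid compose definition, the commit is rejected before it can break CI or a teammate.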
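The gate can also look for secrets declared at build time. The sketch below scans Dockerfile text for `ARG` or `ENV` lines whose names look secret-like. It is a heuristic only: the name pattern and the `suspicious_lines` function are assumptions, and it does not handle line continuations or inspect `docker history`.

```python
import re

# Hypothetical heuristic: names containing these words are treated as secrets.
SECRET_NAME = re.compile(r"(KEY|TOKEN|SECRET|PASSWORD)", re.IGNORECASE)


def suspicious_lines(dockerfile_text):
    """Return (line_number, line) pairs for ARG/ENV lines with secret-like names."""
    findings = []
    for number, line in enumerate(dockerfile_text.splitlines(), start=1):
        stripped = line.strip()
        if not stripped or stripped.startswith("#"):
            continue
        parts = stripped.split(None, 1)
        if (
            len(parts) == 2
            and parts[0].upper() in {"ARG", "ENV"}
            and SECRET_NAME.search(parts[1])
        ):
            findings.append((number, stripped))
    return findings


sample = "FROM python:3.12-slim\nARG OPENAI_API_KEY\nENV PYTHONUNBUFFERED=1\n"
print(suspicious_lines(sample))  # [(2, 'ARG OPENAI_API_KEY')]
```

A hook could run this over the repository's Dockerfile and exit non-zero when the list is not empty.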

Common Docker + GPU failure modes (the table you will consult for years)

| Symptom | Most common cause | Fix that belongs in the repo |
|---|---|---|
| `could not select device driver with capabilities: [[gpu]]` | NVIDIA Container Toolkit not installed on the host | `docs/SETUP.md` with the exact `distribution=$(. /etc/os-release;echo $ID$VERSION_ID)` one-liner + `sudo apt-get install -y nvidia-container-toolkit` |
| `CUDA out of memory` inside container but host `nvidia-smi` shows free memory | Missing `--shm-size` or no `--gpus all` | Always run with `--shm-size=2g` (or larger) and document it in the README and compose file |
| `Permission denied` when the container tries to read `/app/eval/support_tickets.jsonl` | Container runs as root (or UID 1000) while host files are `501:staff` | `useradd -u 1000`, `chown -R appuser:appuser /app` in Dockerfile, `USER appuser`, and `chmod -R a+rX eval/` on host |
| Secret key appears in `docker history` or `docker inspect` | Used `--build-arg SECRET=...` or `ARG` + `RUN` | Remove every secret from Dockerfile; only use `env_file` or runtime `-e`; add a `docker history` check to the pre-commit gate |
| Image is 4.8 GB and every code change takes 9 minutes to rebuild | `COPY . /app` appears before `pip install` in the Dockerfile | Re-order: copy only `requirements.txt` + `pyproject.toml`, run pip, then `COPY .` |
| `torch.cuda.is_available()` is False even with `--gpus all` | Wrong base image tag (CPU-only PyTorch wheel was installed) or driver/CUDA mismatch | Pin exact `nvidia/cuda:12.4.1-cudnn9-runtime-ubuntu22.04` + matching torch index URL in requirements; document the driver version requirement |

Each of these has cost AI teams days of debugging. The difference between a junior engineer and a senior one is whether the repo itself contains the diagnosis and the prevention (comments in the Dockerfile, SETUP.md, and the pre-commit gate).
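The permission row is the easiest one to diagnose from inside the container. A minimal sketch, assuming a POSIX system and a hypothetical `mount_report` helper, compares the owner of a mounted path with the UID of the running process:

```python
import os
import tempfile


def mount_report(path):
    """Summarise ownership and access for a mounted path (POSIX only)."""
    info = os.stat(path)
    return {
        "path": path,
        "owner_uid": info.st_uid,
        "process_uid": os.getuid(),
        "readable": os.access(path, os.R_OK),
        "writable": os.access(path, os.W_OK),
    }


# Demonstrated on a temporary directory; in the container you would pass /app/eval.
with tempfile.TemporaryDirectory() as tmp:
    report = mount_report(tmp)
    print(report["owner_uid"] == report["process_uid"], report["readable"])
```

When `owner_uid` and `process_uid` differ and `readable` is False, the fix is the host-side `chmod` or the UID alignment listed in the table, not a change to the application code.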

What this unlocks

You now have the second real engineering contract:

- `git clone` brings the code, the three-row eval, the Python scorer, the Dockerfile, `.dockerignore`, and `docker-compose.yml`.
- `docker compose up --build` (on any machine that has the NVIDIA Container Toolkit) produces a running vector DB + API + worker stack using the exact same pinned CUDA 12.4, Python 3.12, and torch 2.5+cu124 that the author used.
- The eval still reports 0.667 because the data path (`/app/eval`) and the Python environment inside the container are identical.
- The same image can be pushed to Artifact Registry and deployed to Cloud Run (or Vertex, or a Kubernetes cluster with GPU nodes) with only orchestration changes.

Every later chapter - NumPy tensor experiments, PyTorch training loops, full RAG ingestion pipelines, agent capstones - will be developed and tested inside this container contract. The "it worked on my laptop" problem has been solved at the runtime boundary.

References

1. Official Docker documentation for multi-stage builds, `.dockerignore`, and Compose GPU device reservations.
2. NVIDIA CUDA Docker images and best practices for reproducible PyTorch/LLM inference containers (the exact base image tags and cuDNN pairing used in production serving stacks).
3. Cloud Run container deployment patterns and the transition from local `docker compose` parity to managed serverless GPU workloads.
4. Common MLOps runbooks for volume permission hygiene, non-root containers, and secret management in containerized LLM systems.

Evaluation Rubric

1. Writes a correct multi-stage Dockerfile and .dockerignore that builds a <2 GB CUDA runtime image containing the three-row eval scorer and produces 0.667 when run with the proper volume mount
2. Authors a docker-compose.yml with vector-db, api, and worker services, GPU device reservation, env_file for secrets, and named volumes so `docker compose up` reproduces the full local RAG stack
3. Diagnoses and prevents the five most common 'container worked on my laptop' failures (missing nvidia runtime, volume permission denied, secret in build context, layer cache thrash, CUDA version skew) with explicit checks and docs in the repo

Common Pitfalls

- Using a single-stage Dockerfile that leaves apt, gcc, and 1.2 GB of build tools in the final image. The container is 4× larger than necessary and leaks the entire build context.

- COPY . /app before installing requirements.txt so every code change invalidates the pip layer cache; teammates wait 8 minutes for every rebuild instead of 30 seconds.

- Passing secrets with --build-arg OPENAI_API_KEY or baking .env into the image; the key now lives in every layer and in the registry, and `docker history` exposes it.

- Running the container as root and mounting host directories; the volume files are owned by root on the host or permission denied errors appear on colleague machines with different UIDs.

- Assuming the host has the NVIDIA Container Toolkit installed; `docker run --gpus all` fails with 'could not select device driver' until the one-line setup script is documented in the repo.


Key Concepts Tested

- multi-stage Dockerfile (builder vs runtime) for slim CUDA images
- .dockerignore patterns for AI projects
- nvidia/cuda and official PyTorch CUDA base images
- volume mounts (-v) and bind mounts for eval fixtures and model caches
- docker-compose with GPU reservations and service dependencies
- secrets management (env_file, runtime env, never ARG or baked)
- non-root users, healthchecks, and layer cache optimization
- reproducible build across Docker Desktop, Cloud Run, and CI
- common GPU container failures (toolkit, permissions, driver mismatch)

Next Step

Next: Continue to Python for AI Engineering

You now have a complete, portable Docker environment that guarantees the three-row eval contract and all future code will behave identically on any machine. The next chapter turns that environment into the first reusable, testable Python evaluation loop - the scorer your tests and containers are already protecting.
