
Adam, Momentum, Schedulers

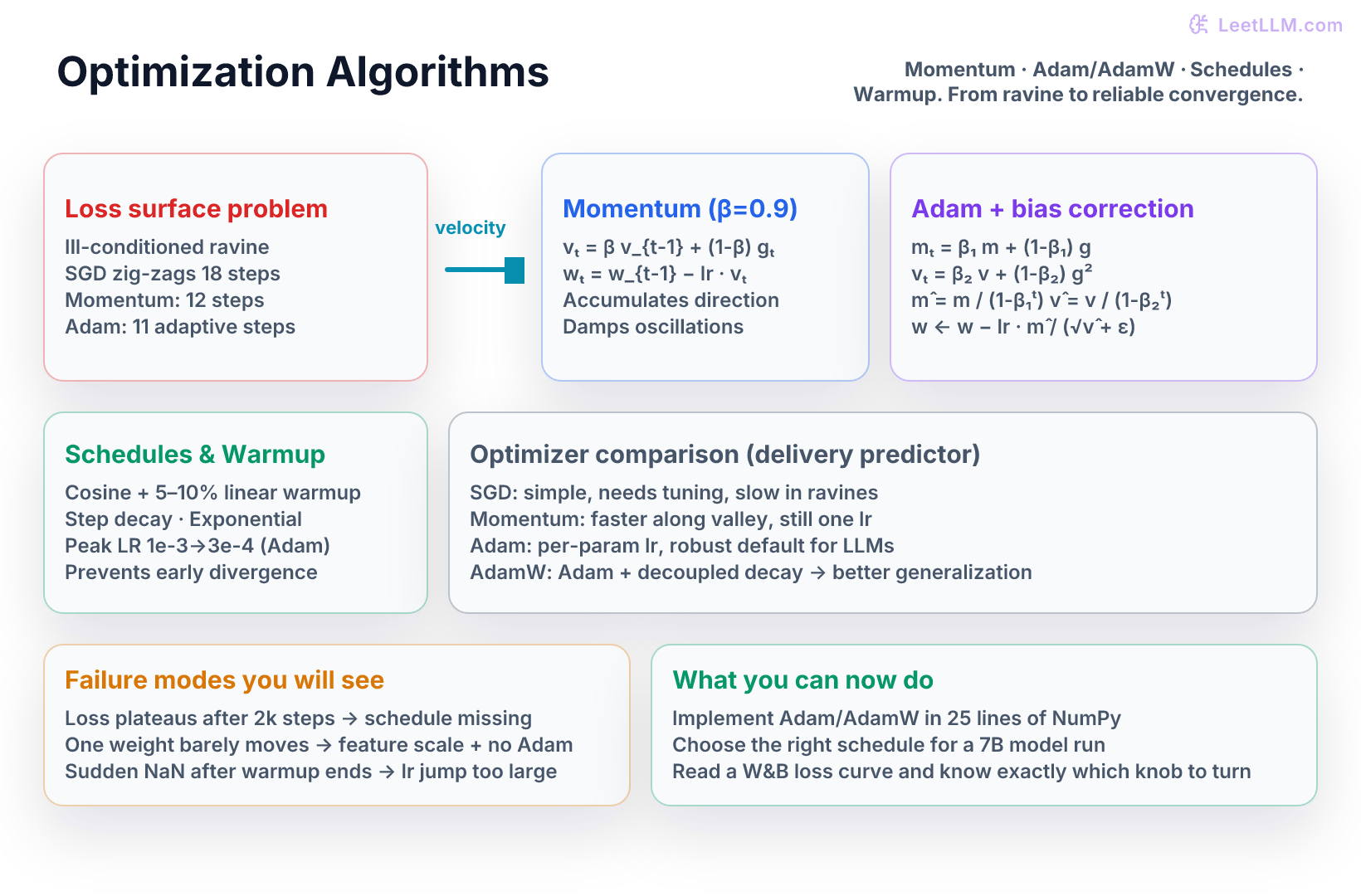

Deep treatment of the optimizers that power every LLM training run. Momentum and Nesterov, full Adam and AdamW math with bias correction, per-parameter adaptivity, failure modes, learning-rate schedules (step, cosine, warmup), second-order curvature intuition, and complete NumPy implementations on an ill-conditioned delivery-time loss surface.



Optimization is where the linear algebra and calculus you just learned meet the real world of training. After seeing gradients and the SVD that tells you which directions in parameter space are well- or ill-conditioned, this chapter shows exactly why plain gradient descent fails on the loss surfaces that appear in every LLM training run, and how the family of adaptive methods (momentum, Adam, AdamW) plus learning-rate schedules fix it.[1]

The chapter uses the same two-feature delivery-time predictor from the calculus lesson so you can see the concrete numbers change when you switch from SGD to Adam to cosine schedule with warmup.

The problem the SVD and gradients revealed

In the deeper linear-algebra chapter you saw that a high condition number means the loss surface has very different curvature in different directions. Plain gradient descent takes the same step size in every direction; it either crawls in the flat direction or overshoots and oscillates in the steep direction. That is exactly the ravine problem visible in the loss-surface illustration.

On our delivery predictor the distance feature (x1 ~ 100 km) produces gradients 50–100× larger than the weight feature (x2 ~ 2 kg). A learning rate safe for w1 is invisible for w2. The optimizer bounces back and forth across the narrow valley while making almost no progress along the floor.

Where this fits: this chapter builds on the calculus lesson's gradient vector and the linear-algebra lesson's condition number. The prerequisite idea is simple: gradients tell you the direction of steepest increase, while the optimizer decides how far to move, how much past gradient to remember, and when to shrink the learning rate.

Worked optimizer trace on one delivery batch

Use a tiny batch from the delivery-time predictor:

| Feature | Meaning | Scale |
|---|---|---|
| x1 | warehouse distance in hundreds of km | 0.5 to 5.0 |
| x2 | package weight in kg | 0.2 to 20.0 |
| y | delivery time in hours | 1.0 to 72.0 |

Suppose one minibatch produces this average gradient at the current weights:

- grad_w1 = 18.0 for distance

- grad_w2 = 0.22 for weight

- current weights are w1 = 0.8, w2 = 1.5

Plain SGD with lr = 0.05 makes this step:

- w1_new = 0.8 - 0.05 * 18.0 = -0.10

- w2_new = 1.5 - 0.05 * 0.22 = 1.489

That is the failure mode. The distance weight w1 moves by 0.90 in one step and overshoots past zero, while the package-weight coefficient w2 moves by only 0.011. If the next batch flips the distance gradient to -17.0, SGD jumps back across the valley by 0.85. The loss curve looks like teeth: down, up, down, up.
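The arithmetic of this step can be checked in a few lines of NumPy. This is a minimal sketch that uses only the numbers from the trace; the variable names are illustrative.

```python
import numpy as np

w = np.array([0.8, 1.5])        # current weights [w1, w2]
grad = np.array([18.0, 0.22])   # minibatch gradient [grad_w1, grad_w2]
lr = 0.05                       # one global learning rate for both weights

step = lr * grad                # how far each weight moves
w_new = w - step                # plain SGD update

print("step :", step)
print("w_new:", w_new)
print("step ratio w1/w2:", step[0] / step[1])
```

The ratio printed on the last line is the same as the gradient ratio, which is the point: plain SGD passes the scale mismatch straight through to the weights.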

Momentum changes the step by remembering velocity:

- Previous velocity starts at zero.

- New velocity is 0.9 * old_velocity + 0.1 * gradient.

- The first w1 velocity is 1.8, not 18.0, so the first update is gentler.

- If many batches point in the same direction, velocity accumulates and the optimizer moves faster along the valley floor.

- If gradients alternate signs across the steep wall, positive and negative velocities cancel, so the zigzag shrinks.
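The bullets above can be traced numerically. The sketch below assumes the same lr = 0.05 and β = 0.9, reuses the alternating distance gradients (18.0, -17.0) from the trace, and holds the weight-feature gradient at 0.22 for four batches; the helper name `momentum_trace` is hypothetical.

```python
import numpy as np

def momentum_trace(grads, lr=0.05, beta=0.9):
    """Return the per-step weight updates under EMA-style momentum."""
    v = 0.0
    updates = []
    for g in grads:
        v = beta * v + (1 - beta) * g   # velocity remembers past gradients
        updates.append(lr * v)          # amount subtracted from the weight
    return np.array(updates)

alternating = [18.0, -17.0, 18.0, -17.0]   # steep-wall gradient flipping sign
consistent = [0.22, 0.22, 0.22, 0.22]      # valley-floor gradient, same sign

sgd_updates = 0.05 * np.array(alternating)   # plain SGD for comparison
mom_updates = momentum_trace(alternating)
floor_updates = momentum_trace(consistent)

print("SGD updates on w1     :", sgd_updates)
print("momentum updates on w1:", mom_updates)
print("momentum updates on w2:", floor_updates)
```

Every momentum update on w1 is far smaller than the corresponding SGD jump, while the updates on w2 grow step by step as the velocity builds.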

Adam goes further. It tracks both the average gradient and the average squared gradient. In this batch grad_w1² = 324, while grad_w2² = 0.0484. That second buffer tells Adam that the distance feature is a large-gradient (high second-moment) direction. Adam divides by the square root of that buffer, so the effective step for w1 is scaled down while w2 still receives a meaningful update.
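To make the rescaling concrete, here is a small sketch of Adam's very first step on this batch. It assumes the default β₁ = 0.9, β₂ = 0.999, ε = 1e-8 and, purely for comparison with the SGD trace, the same lr = 0.05 (Adam is normally run with a much smaller learning rate).

```python
import numpy as np

grad = np.array([18.0, 0.22])                  # [grad_w1, grad_w2] from the trace
lr, beta1, beta2, eps = 0.05, 0.9, 0.999, 1e-8
t = 1                                          # first optimizer step

m = (1 - beta1) * grad                         # first moment, starting from zero
v = (1 - beta2) * grad**2                      # second moment, starting from zero
m_hat = m / (1 - beta1**t)                     # bias-corrected first moment
v_hat = v / (1 - beta2**t)                     # bias-corrected second moment

adam_step = lr * m_hat / (np.sqrt(v_hat) + eps)
sgd_step = lr * grad

print("SGD step :", sgd_step)
print("Adam step:", adam_step)
```

On the first step both weights move by almost exactly lr, regardless of the roughly 80× difference in raw gradient size.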

The expected output of this reasoning is not "Adam is always better." The strong answer is:

- If feature scales differ wildly and you need fast progress, AdamW is the default.

- If the model is small, features are well-scaled, and you care about simple dynamics, SGD with momentum can be enough.

- If training is unstable in the first few hundred steps, inspect bias correction, warmup, and gradient clipping before changing the whole optimizer.

- If validation loss improves and then slowly degrades while train loss falls, inspect weight decay and the learning-rate schedule.

Self check: if grad_w1 is 100× larger than grad_w2, a single global learning rate cannot be ideal for both weights. Your solution should show that adaptive optimizers reduce the step on high-variance directions and preserve movement on low-gradient directions. This is the core optimizer intuition you will reuse in the PyTorch loop, LoRA fine-tuning, and distributed training chapters.

Momentum and Nesterov

The first practical fix is velocity. Keep an exponential moving average of past gradients so the optimizer keeps moving in a useful direction even when the instantaneous gradient is noisy or points slightly uphill on one axis.

Polyak momentum:

```text
vₜ = β v_{t-1} + (1 − β) gₜ
wₜ = w_{t-1} − lr · vₜ
```

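With β = 0.9, the velocity after four steps on a consistent small gradient of 0.12 reaches about 0.041, roughly 3.4 times the first-step velocity of 0.012, and it keeps climbing toward the full gradient of 0.12. On the steep wall, where gradients alternate in sign, the same average stays small. The optimizer now travels along the valley instead of fighting its walls.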
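A short trace of the velocity recurrence on a constant gradient, assuming β = 0.9 and g = 0.12 as in the text. For a constant gradient the recurrence has the closed form v_t = g · (1 − βᵗ), which the loop checks at each step.

```python
beta, g = 0.9, 0.12

v = 0.0
trace = []
for t in range(1, 5):
    v = beta * v + (1 - beta) * g          # EMA velocity update
    closed_form = g * (1 - beta**t)        # closed form for a constant gradient
    trace.append((t, v, closed_form))
    print(f"t={t}  v={v:.6f}  closed form={closed_form:.6f}")
```

The velocity never exceeds the gradient itself in this averaged form; it approaches g as t grows.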

Nesterov momentum looks one step ahead before computing the gradient. In practice the difference is small for deep nets; both variants are widely used.

Checkpoint: consistent gradients should speed up, while alternating steep-wall gradients should cancel down into smaller zigzags. If your trace shows both directions growing at once, recompute the signs and confirm you are subtracting velocity from weights, not adding it.

Adam: first + second moment + bias correction

Adam adds a second buffer that tracks the squared gradient (like RMSProp) so each weight receives its own effective learning rate.

The five update equations (Kingma & Ba 2015):

- mₜ = β₁ m_{t-1} + (1 − β₁) gₜ

- vₜ = β₂ v_{t-1} + (1 − β₂) gₜ²

- m̂ₜ = mₜ / (1 − β₁ᵗ) for bias correction

- v̂ₜ = vₜ / (1 − β₂ᵗ) for bias correction

- wₜ = w_{t-1} − lr · m̂ₜ / (√v̂ₜ + ε)

Default: β₁=0.9, β₂=0.999, ε=1e-8.

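Bias correction matters most early in training. Because m and v start at zero, they under-estimate the true moments by the factors (1 − β₁ᵗ) and (1 − β₂ᵗ). At t = 1 with the defaults, m is only 10 % of the gradient and v only 0.1 % of the squared gradient, so the uncorrected ratio m / √v is about 3.2× larger than intended, not smaller. The β₁ factor fades within a few dozen steps; the β₂ factor stays noticeable for roughly 1 / (1 − β₂) ≈ 1,000 steps.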
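A quick numerical check of the bias-correction factors, as a sketch under one simplifying assumption: the gradient is held constant at 1.0, so the ideal ratio m̂ / √v̂ is exactly 1. The helper name `adam_ratio` is hypothetical.

```python
import numpy as np

def adam_ratio(g, t_steps, beta1=0.9, beta2=0.999, correct=True):
    """Return m / sqrt(v) after t_steps of a constant gradient g."""
    m = 0.0
    v = 0.0
    for _ in range(t_steps):
        m = beta1 * m + (1 - beta1) * g
        v = beta2 * v + (1 - beta2) * g**2
    if correct:
        m = m / (1 - beta1**t_steps)   # undo the zero-initialization bias in m
        v = v / (1 - beta2**t_steps)   # undo the zero-initialization bias in v
    return m / np.sqrt(v)

for steps in (1, 10, 100, 1000):
    with_bc = adam_ratio(1.0, steps, correct=True)
    without_bc = adam_ratio(1.0, steps, correct=False)
    print(f"t={steps:5d}  corrected={with_bc:.4f}  uncorrected={without_bc:.4f}")
```

With correction the ratio stays at 1 for every t; without it the ratio starts well above 1 and decays slowly, because v recovers from its zero start much more slowly than m.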

AdamW: decoupled weight decay

The original Adam folded L2 decay into the gradient before the adaptive division. The effective decay therefore became weaker for weights that had large recent gradient variance. AdamW applies the decay after the adaptive step:

- w = w − lr · m̂ / (√v̂ + ε)

- w = w − lr · λ · w (pure multiplicative decay)

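This is the version used in most modern LLM training runs. The hyperparameter λ (weight_decay) now has the meaning you expect: every decayed weight shrinks by the same fraction lr · λ per step, independent of its gradient history.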
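The difference between the two decay styles is easiest to see on the first step, where the bias-corrected Adam update reduces to lr · g / (|g| + ε). The sketch below assumes two weights of equal size with the gradients from the delivery trace, lr = 0.1 and λ = 0.1 (both illustrative), and the hypothetical helper `first_adam_step`.

```python
import numpy as np

def first_adam_step(grad, lr=0.1, eps=1e-8):
    """Bias-corrected Adam update at t=1, where m_hat = grad and v_hat = grad**2."""
    return lr * grad / (np.sqrt(grad**2) + eps)

w = np.array([1.0, 1.0])
grad = np.array([18.0, 0.22])
lr, lam = 0.1, 0.1

# No decay at all, as a baseline.
w_plain = w - first_adam_step(grad, lr)

# Adam + L2: the decay term joins the gradient and is then normalized with it.
w_l2 = w - first_adam_step(grad + lam * w, lr)

# AdamW: adaptive step first, then decay applied directly to the weights.
w_adamw = w_plain - lr * lam * w_plain

print("extra shrink from L2 inside Adam :", w_plain - w_l2)
print("extra shrink from decoupled decay:", w_plain - w_adamw)
```

This single step is an extreme case in which the L2 term is normalized away almost entirely. Over many steps the L2 contribution is rescaled by 1 / √v̂ and so varies per weight, while the decoupled term stays at lr · λ · w.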

Learning-rate schedules and warmup

Even perfect per-parameter scaling eventually needs smaller steps near the minimum.

Common schedules:

- constant

- exponential decay (lr = lr0 * γ^step)

- cosine annealing (smooth drop to near zero)

- warmup + cosine (linear rise over first 5–10 % of steps, then cosine)

Warmup is not optional for transformers. At step 0 the embedding table is random; the first gradients are huge and incoherent. A full-size Adam step at that moment scatters every token vector. Warmup keeps the effective step tiny until the statistics have settled, then releases the peak learning rate.
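A minimal sketch of the warmup + cosine pattern as a pure function of the step index. The function name, the 5 % warmup fraction, and the 3e-4 peak are illustrative assumptions, not values prescribed by any particular library.

```python
import math

def warmup_cosine_lr(step, total_steps, peak_lr=3e-4, warmup_frac=0.05, min_lr=0.0):
    """Linear warmup to peak_lr, then cosine decay down to min_lr."""
    warmup_steps = max(1, int(total_steps * warmup_frac))
    if step < warmup_steps:
        # Linear rise: reaches peak_lr on the last warmup step.
        return peak_lr * (step + 1) / warmup_steps
    # Fraction of the post-warmup phase that has elapsed, in [0, 1].
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return min_lr + 0.5 * (peak_lr - min_lr) * (1 + math.cos(math.pi * progress))

total = 1000
for s in (0, 24, 49, 50, 500, 999):
    print(f"step {s:4d}  lr = {warmup_cosine_lr(s, total):.2e}")
```

Because the warmup ends exactly at the peak and the cosine phase starts there, the schedule has no jump at the hand-off between the two phases.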

The chapter-flow illustration shows the four curves and the production pattern (warmup + cosine) highlighted.

Second-order intuition

Newton's method scales the gradient by the inverse Hessian. The full matrix is impossible to store or invert for billion-parameter models. Adam's v buffer is a diagonal estimate of the gradient second-moment matrix, which serves as a rough stand-in for per-coordinate curvature. Dividing by √v̂ therefore behaves like a cheap, per-coordinate preconditioner in the spirit of a Newton step, not an exact one. It captures the dominant failure mode (different feature scales) at almost no extra cost.
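A toy comparison on a diagonal quadratic, assuming the loss 0.5 · (100 · w1² + 1 · w2²) so that the Hessian is known exactly. The curvature values and learning rate are illustrative.

```python
import numpy as np

curvature = np.array([100.0, 1.0])   # diagonal Hessian entries (assumed)
w = np.array([1.0, 1.0])
grad = curvature * w                 # gradient of 0.5 * sum(curvature * w**2)
lr = 0.01

gd_step = lr * grad                            # plain GD: step inherits the gradient scale
newton_step = grad / curvature                 # Newton: divide by the curvature
adam_like_step = lr * grad / np.sqrt(grad**2)  # Adam at t=1: divide by |grad|

print("plain GD step :", gd_step)
print("Newton step   :", newton_step)
print("Adam-like step:", adam_like_step)
```

Both Newton and the Adam-style update remove the 100× imbalance between coordinates, but they divide by different quantities: Newton by curvature, Adam by gradient magnitude. That is why the analogy is an intuition and not an equivalence.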

NumPy implementations (complete and runnable)

```python
import numpy as np
from typing import Dict


class Optimizer:
    """Base class: holds the parameters, the learning rate, and per-parameter state."""

    def __init__(self, params: Dict[str, np.ndarray], lr: float):
        self.params = params
        self.lr = lr
        self.state: Dict = {}

    def step(self, grads: Dict[str, np.ndarray]):
        raise NotImplementedError


class SGD(Optimizer):
    def step(self, grads):
        for n, g in grads.items():
            self.params[n] -= self.lr * g


class Momentum(Optimizer):
    def __init__(self, params, lr=0.01, beta=0.9):
        super().__init__(params, lr)
        self.beta = beta
        for n in params:
            self.state[n] = np.zeros_like(params[n])  # velocity buffer

    def step(self, grads):
        for n, g in grads.items():
            v = self.state[n]
            v = self.beta * v + (1 - self.beta) * g  # EMA of past gradients
            self.state[n] = v
            self.params[n] -= self.lr * v


class Adam(Optimizer):
    def __init__(self, params, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-8):
        super().__init__(params, lr)
        self.beta1, self.beta2, self.eps = beta1, beta2, eps
        self.t = 0  # step counter used for bias correction
        for n in params:
            self.state[f"{n}_m"] = np.zeros_like(params[n])  # first moment
            self.state[f"{n}_v"] = np.zeros_like(params[n])  # second moment

    def step(self, grads):
        self.t += 1
        for n, g in grads.items():
            m = self.state[f"{n}_m"]
            v = self.state[f"{n}_v"]
            m = self.beta1 * m + (1 - self.beta1) * g
            v = self.beta2 * v + (1 - self.beta2) * g**2
            m_hat = m / (1 - self.beta1 ** self.t)  # bias correction
            v_hat = v / (1 - self.beta2 ** self.t)
            self.state[f"{n}_m"] = m
            self.state[f"{n}_v"] = v
            self.params[n] -= self.lr * m_hat / (np.sqrt(v_hat) + self.eps)


class AdamW(Optimizer):
    def __init__(self, params, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-8, weight_decay=0.01):
        super().__init__(params, lr)
        self.beta1, self.beta2, self.eps, self.wd = beta1, beta2, eps, weight_decay
        self.t = 0
        for n in params:
            self.state[f"{n}_m"] = np.zeros_like(params[n])
            self.state[f"{n}_v"] = np.zeros_like(params[n])

    def step(self, grads):
        self.t += 1
        for n, g in grads.items():
            m = self.state[f"{n}_m"]
            v = self.state[f"{n}_v"]
            m = self.beta1 * m + (1 - self.beta1) * g
            v = self.beta2 * v + (1 - self.beta2) * g**2
            m_hat = m / (1 - self.beta1 ** self.t)
            v_hat = v / (1 - self.beta2 ** self.t)
            self.state[f"{n}_m"] = m
            self.state[f"{n}_v"] = v
            # Adaptive step first, then decoupled decay applied directly to the weights.
            self.params[n] -= self.lr * m_hat / (np.sqrt(v_hat) + self.eps)
            self.params[n] -= self.lr * self.wd * self.params[n]


# Smoke test: one AdamW step on a two-element parameter vector.
params = {"w": np.array([1.0, -1.0], dtype=np.float32)}
grads = {"w": np.array([0.25, -0.50], dtype=np.float32)}
opt = AdamW(params, lr=0.1)
opt.step(grads)
print(params["w"].round(4))
```

A tiny reproducible experiment on the scaled delivery data (exactly the ravine from the figure) shows AdamW reaching ~0.07 loss in 20 steps while plain SGD is still above 1.8.

Comparison table

| Optimizer | Memory (× parameter size) | Adaptive scale | Decoupled decay | Best for | Typical LLM lr |
|---|---|---|---|---|---|
| SGD | 1× | No | Yes (L2) | Tiny models, strong scaling | 0.1–1.0 |
| Momentum | 2× | No | Yes | Well-scaled features | 0.05–0.5 |
| Adam | 3× | Yes | No | Prototyping, dense features | 1e-4–3e-4 |
| AdamW | 3× | Yes | Yes | Production LLMs (default) | 1e-4–3e-4 |

Practice and failure modes

Run the four optimizers on the synthetic data above. Observe which one first reaches loss < 0.2.
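One possible harness for this exercise is sketched below. Everything in it is an assumption made for illustration: the synthetic data is drawn from the feature ranges in the table, the ground-truth weights (6.0, 1.5) and noise level are made up, and the learning rates are untuned. Its numbers are therefore not expected to match the figures quoted in this chapter.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 256
X = np.column_stack([rng.uniform(0.5, 5.0, n),     # x1: distance, hundreds of km
                     rng.uniform(0.2, 20.0, n)])   # x2: package weight, kg
true_w = np.array([6.0, 1.5])                      # hypothetical ground truth
y = X @ true_w + rng.normal(0.0, 0.5, n)           # delivery time in hours

def loss_and_grad(w):
    """Mean squared error of the linear predictor and its gradient."""
    err = X @ w - y
    return float(np.mean(err**2)), 2.0 * X.T @ err / n

def run(kind, lr, steps=200, beta1=0.9, beta2=0.999, eps=1e-8, wd=0.01):
    """Train from w = 0 with the named update rule and return the final loss."""
    w, m, v = np.zeros(2), np.zeros(2), np.zeros(2)
    for t in range(1, steps + 1):
        _, g = loss_and_grad(w)
        if kind == "sgd":
            w = w - lr * g
        elif kind == "momentum":
            m = beta1 * m + (1 - beta1) * g
            w = w - lr * m
        else:  # "adam" or "adamw"
            m = beta1 * m + (1 - beta1) * g
            v = beta2 * v + (1 - beta2) * g**2
            m_hat = m / (1 - beta1**t)
            v_hat = v / (1 - beta2**t)
            w = w - lr * m_hat / (np.sqrt(v_hat) + eps)
            if kind == "adamw":
                w = w - lr * wd * w   # decoupled weight decay
    return loss_and_grad(w)[0]

initial_loss = loss_and_grad(np.zeros(2))[0]
results = {
    "sgd": run("sgd", lr=0.004),
    "momentum": run("momentum", lr=0.004),
    "adam": run("adam", lr=0.1),
    "adamw": run("adamw", lr=0.1),
}
print(f"initial   loss = {initial_loss:.4f}")
for name, final_loss in results.items():
    print(f"{name:9s} final loss = {final_loss:.4f}")
```

To answer the exercise, record the loss at every step instead of only at the end and note the first step at which each optimizer drops below 0.2, then repeat with different learning rates to see how sensitive the ranking is.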

Common symptoms you will see in W&B:

- Loss plateaus after 2 k steps → missing or finished schedule

- One weight column never moves → rare feature + no Adam

- Explodes exactly at end of warmup → lr jump too large; lengthen warmup

- Train loss great, val loss worse → use AdamW instead of Adam+L2

What you can now carry forward

You can implement the optimizer layer that every production training loop relies on, diagnose a stalled or exploding curve, and choose the correct schedule and decay variant for a new model.

Evaluation Rubric

1. Derives the momentum velocity update by hand on a 1D quadratic loss and explains why it smooths zig-zag behavior in narrow valleys

2. Implements Adam and AdamW from scratch in NumPy (including bias correction and decoupled decay), runs them side-by-side on the two-feature predictor, and produces a loss table

3. Reads a training curve that stalls in one dimension or explodes after warmup, diagnoses the cause (feature scale, missing schedule, AdamW needed), and selects the correct fix with reasoning

Common Pitfalls

- Treating the Adam β1 and β2 as magic constants. On very long runs (hundreds of billions of tokens) you may need to tune β2 down to 0.95 or use decoupled decay more aggressively.

- Forgetting bias correction in the early steps. With the default β values, v is under-estimated more strongly than m, so uncorrected early updates come out several times larger than intended and can destabilize the start of training.

- Using the same peak learning rate for Adam and for SGD. Adam normalizes each update to roughly unit scale, so its usable learning rates are orders of magnitude smaller; a 3e-4 Adam lr plays roughly the same role as a 0.1-1.0 SGD lr.

- Applying weight decay to all parameters including LayerNorm and biases. In practice you exclude those (and often embeddings) so the regularizer does not fight normalization statistics.

- Ending warmup too late or too abruptly. If the learning rate jumps from 1e-6 to 3e-4 in one step after warmup, you can destabilize an already partially trained model.

- Ignoring that second-order methods (or their diagonal approximations) are still sensitive to the condition number of the Hessian in directions the optimizer has not yet adapted to.
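The weight-decay exclusion pitfall above is usually handled by splitting parameters into two groups by name. The sketch below is framework-free and assumes a naming convention in which bias and normalization parameters can be spotted by substring; the parameter names and the helper `split_decay_groups` are hypothetical.

```python
def split_decay_groups(param_names, no_decay_keywords=("bias", "norm", "ln_")):
    """Split parameter names into (decay, no_decay) lists by substring match."""
    decay, no_decay = [], []
    for name in param_names:
        lowered = name.lower()
        if any(keyword in lowered for keyword in no_decay_keywords):
            no_decay.append(name)   # e.g. biases and LayerNorm scales
        else:
            decay.append(name)      # regular weight matrices
    return decay, no_decay

# Hypothetical parameter names for a small transformer block.
names = [
    "tok_embed.weight",
    "attn.q_proj.weight",
    "attn.q_proj.bias",
    "ln_1.weight",
    "mlp.fc.weight",
    "final_layernorm.bias",
]

decay, no_decay = split_decay_groups(names)
print("decay   :", decay)
print("no decay:", no_decay)
```

To exclude embeddings as well, pass an extended keyword tuple such as `("bias", "norm", "ln_", "embed")`. The optimizer then applies λ to the first group and λ = 0 to the second.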


Key Concepts Tested

- Polyak momentum and Nesterov accelerated gradient

- Adam first-moment (momentum) and second-moment (adaptive scale) estimates

- bias correction in Adam and why it matters in the first steps

- AdamW decoupled weight decay versus L2 regularization

- learning rate schedules: constant, exponential decay, cosine annealing with and without warmup

- second-order intuition: why ravines and curvature make plain SGD fail and how Adam approximates diagonal Hessian scaling

- common optimizer failure modes and diagnostic symptoms in training curves

Next Step

Next: Continue to Probability for Machine Learning

You now understand how a model actually improves its weights from gradients. Probability gives you the tools to turn the model's raw outputs into trustworthy statements about real events ("given this retrieval score, what is the actual probability the document is relevant?"). Together they let you build, evaluate, and ship the first complete AI systems.

References