Computer-Use Agents

Learn how frontier models control real desktops and browsers through screenshots, mouse movements, clicks, and keystrokes. Covers the observe-act architecture, Anthropic and Gemini computer-use APIs, evaluation benchmarks, sandboxing challenges, and safety boundaries.

Computer-Use Agents: GUI & Browser Control

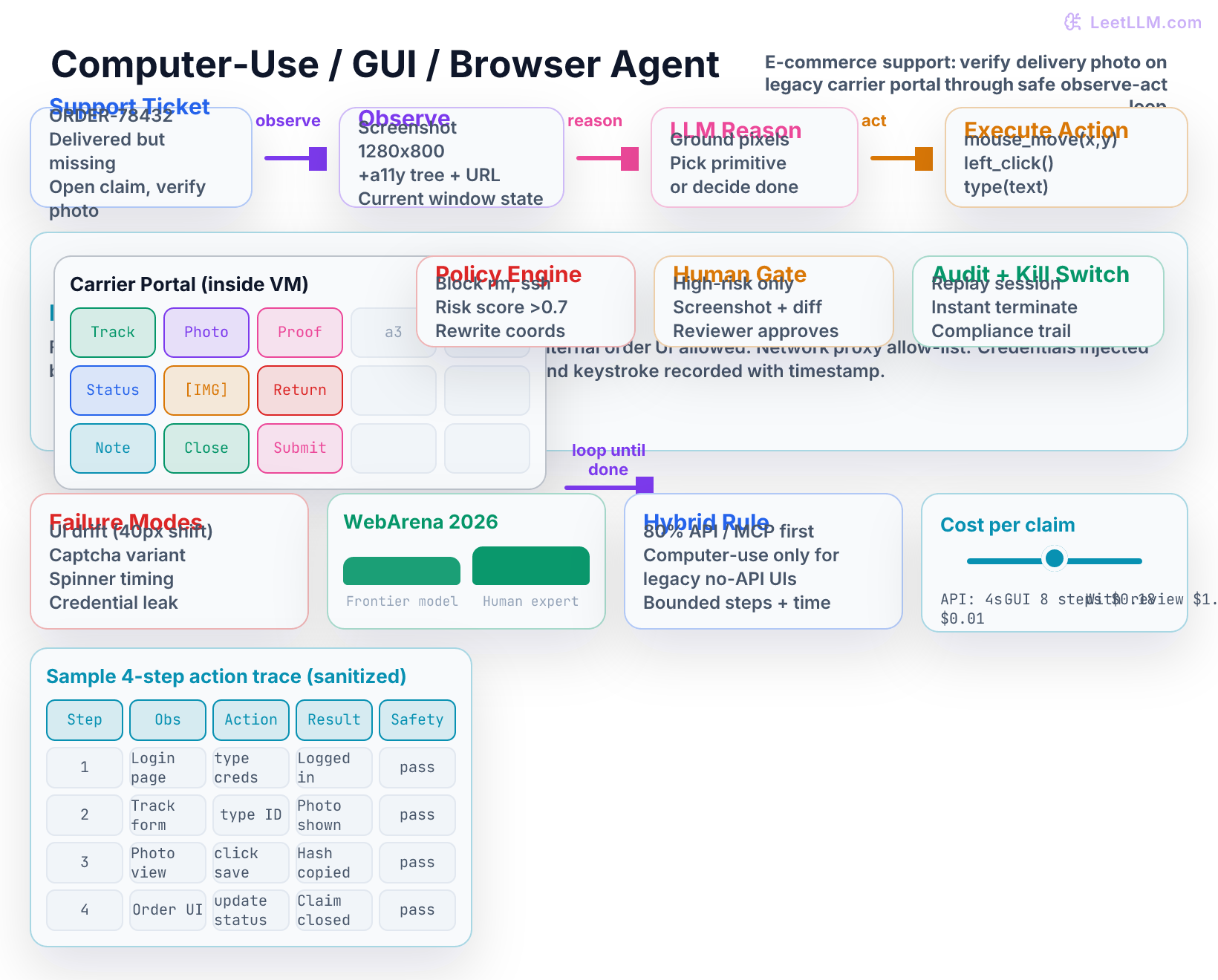

A merchant support agent at an e-commerce company receives a ticket: "My package shows delivered but I never received it." The internal order system has no API for the photos the carrier uploaded to their own portal. The only way to see the delivery proof photo, read the timestamp, and update the claim status is to log into the carrier's web portal, work through three different screens, locate the image, and copy a reference number into the warehouse GUI.

Writing a traditional scraper is not the right abstraction. The site uses aggressive bot detection, JavaScript-heavy layouts, and authenticated sessions whose cookies and tokens rotate weekly. The photos sit behind login walls that change with every A/B test. A workable path is an agent that can open a real browser, see the page exactly as a human operator would see it, move the mouse to the right buttons, read the images with its own vision, and perform the updates.

This is the world of computer-use agents. They do not call clean JSON endpoints. They receive screenshots (and often an accessibility tree), reason over pixels and text, and emit low-level input events: move the virtual mouse to coordinates (1240, 380), left-click, wait 800 ms, type the string "ORDER-78432".[1]
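
The input events listed above can be modelled as a small discriminated union. This is a minimal sketch for illustration: the type name `Primitive` and its field names are assumptions made here, not any provider's schema.

```typescript
// Hypothetical shapes for illustration; real provider schemas use different names.
type Primitive =
  | { kind: "mouse_move"; x: number; y: number }
  | { kind: "left_click" }
  | { kind: "wait"; ms: number }
  | { kind: "type"; text: string };

// Render one primitive as a human-readable line, e.g. for an audit log.
function describe(p: Primitive): string {
  switch (p.kind) {
    case "mouse_move":
      return `move mouse to (${p.x}, ${p.y})`;
    case "left_click":
      return "left-click";
    case "wait":
      return `wait ${p.ms} ms`;
    case "type":
      return `type ${JSON.stringify(p.text)}`;
  }
}

// The sequence described in the paragraph above.
const steps: Primitive[] = [
  { kind: "mouse_move", x: 1240, y: 380 },
  { kind: "left_click" },
  { kind: "wait", ms: 800 },
  { kind: "type", text: "ORDER-78432" },
];
const log: string[] = steps.map(describe);
```

Keeping the set of primitives closed lets the host exhaustively check every proposed action before anything touches the virtual input devices.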

The payoff is enormous. The agent can now operate any software a human can reach: legacy internal tools with no APIs, carrier portals, spreadsheet desktop applications, design software, and sites that deliberately block automation scripts. The cost is equally large. Every action carries real risk of clicking the wrong element, exposing credentials in a recorded log, triggering irreversible side effects, or escaping the intended environment.

From curated tools to full computer control

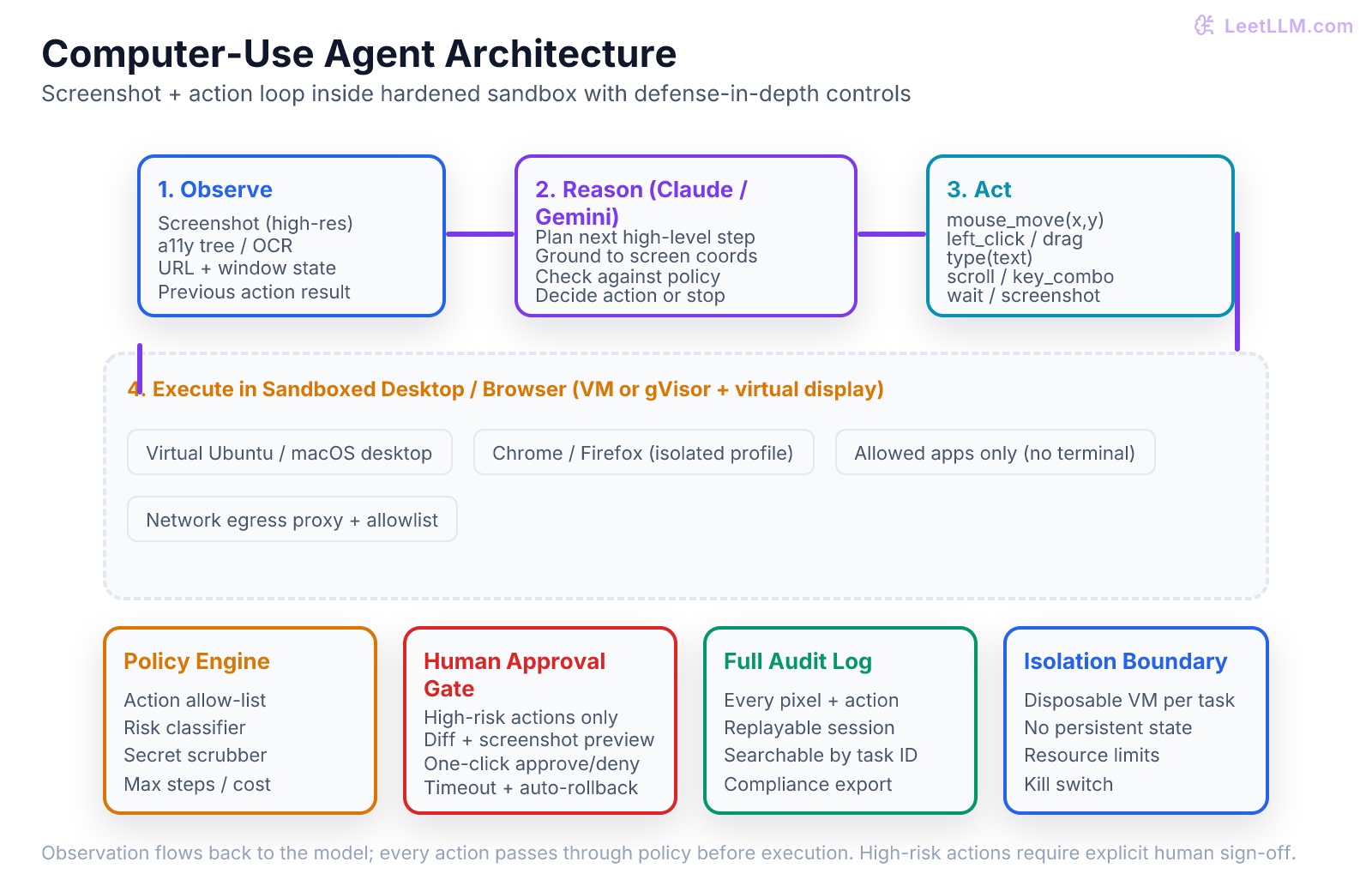

Ordinary function calling and tool use give the model a curated list of safe, typed operations that the developer wrote in advance. The model simply chooses from a JSON schema. Computer use removes that curated guardrail. The model receives a raw screenshot (sometimes augmented with an accessibility tree or OCR output) and must decide the next primitive input event on its own.

The shift changes the threat model completely. A function-calling agent can only call the tools you exposed. A computer-use agent can, in principle, open any application visible on the virtual desktop, click any pixel, and type into any field. That power is exactly what makes the pattern valuable for the long tail of workflows that have no API, and exactly why every production deployment must wrap the agent in multiple independent layers of control.

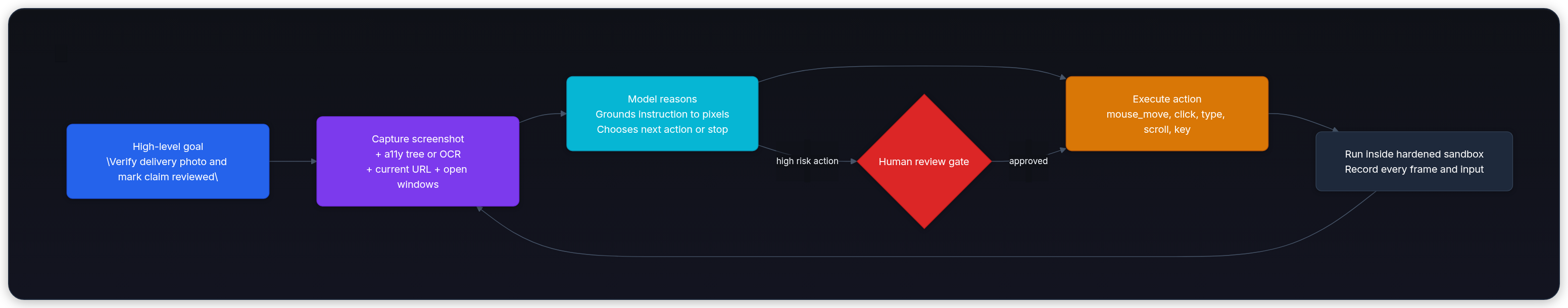

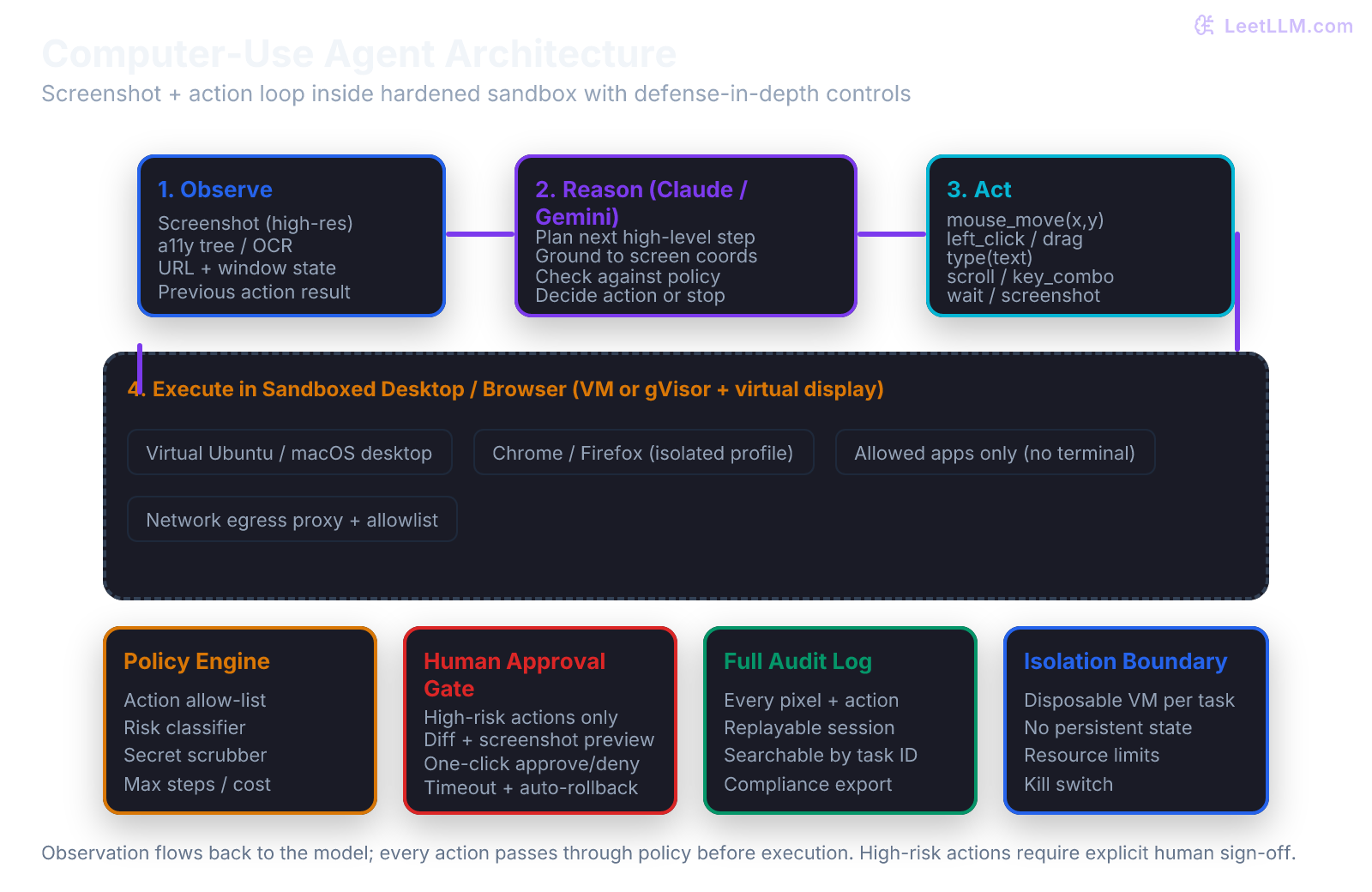

The observe-act loop

Computer-use agents follow a tight sense-plan-act cycle that is more powerful and more fragile than the ReAct tool-calling pattern you saw in earlier lessons.

The loop repeats until the model declares the task complete or a safety boundary intervenes. Each iteration costs vision tokens and latency. A fifteen-step task can easily consume many thousands of vision tokens and several seconds of wall-clock time per step.
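
A rough budget for the loop can be worked out with simple arithmetic. The sketch below is only an estimate under stated assumptions: `tokensPerScreenshot` and `secondsPerStep` are placeholder inputs you would measure yourself, and the `resendHistory` flag models a host that keeps every earlier screenshot in context on each call.

```typescript
interface LoopEstimate {
  visionTokens: number;
  seconds: number;
}

// Estimate token and latency cost of an observe-act trajectory.
function estimateLoop(
  steps: number,
  tokensPerScreenshot: number, // assumed input, not a measured value
  secondsPerStep: number, // assumed input, not a measured value
  resendHistory = false,
): LoopEstimate {
  if (!Number.isInteger(steps) || steps < 0) {
    throw new RangeError("steps must be a non-negative integer");
  }
  // If every call resends all earlier screenshots, call i bills i screenshots,
  // so the total is 1 + 2 + ... + steps = steps * (steps + 1) / 2.
  const screenshotsBilled = resendHistory ? (steps * (steps + 1)) / 2 : steps;
  return {
    visionTokens: screenshotsBilled * tokensPerScreenshot,
    seconds: steps * secondsPerStep,
  };
}

// Placeholder numbers: 15 steps, 1500 tokens per screenshot, 4 s per step.
const trimmed = estimateLoop(15, 1500, 4); // 22500 tokens, 60 s
const untrimmed = estimateLoop(15, 1500, 4, true); // 180000 tokens, 60 s
```

The gap between the two results is one reason hosts often drop or downsample older screenshots instead of replaying the full history.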

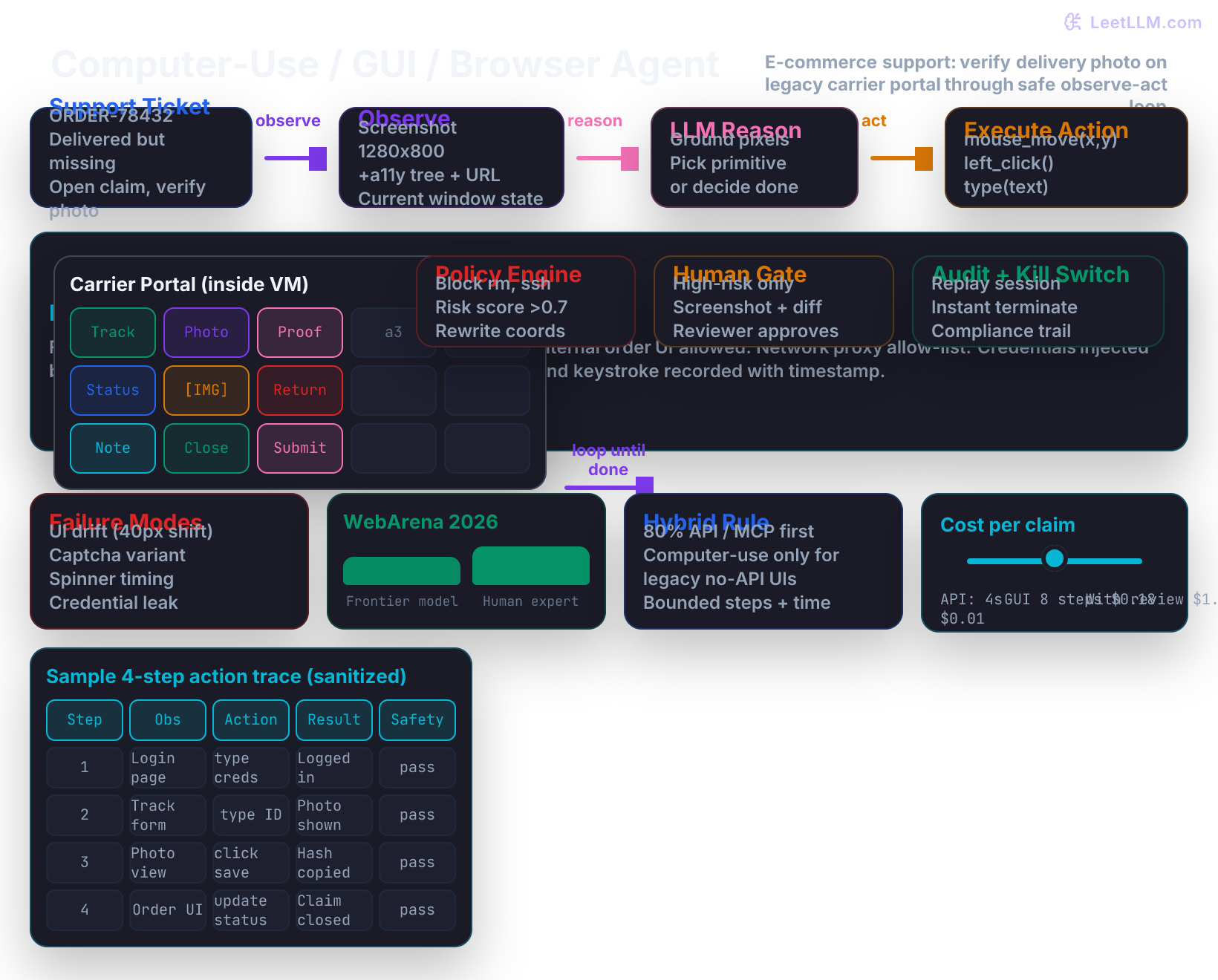

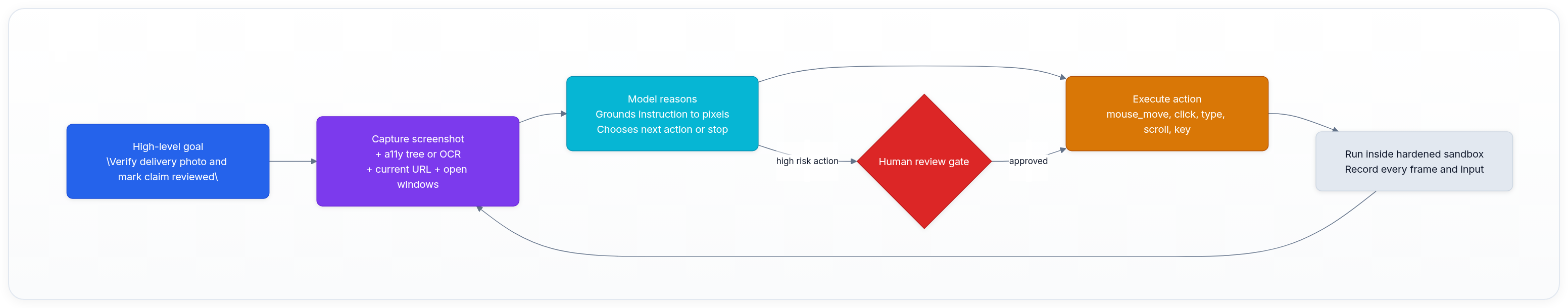

The main illustration below shows the full architecture for our running e-commerce return-claim example. The support ticket enters at the top. The agent captures an observation, the model reasons over it, an action is proposed, and the entire cycle runs inside a disposable sandbox. Four independent safety layers (policy engine, human gate, audit logging, and the VM boundary itself) surround the loop.

Study the diagram carefully. Notice how the carrier portal lives inside the sandbox VM as a realistic browser window (the grid of UI elements). The policy engine sits between the action proposal and execution. High-risk proposals are routed to a human reviewer who sees both the screenshot the model saw and a clear diff of the intended change. Everything is recorded so the session can be replayed exactly.

The same pattern becomes easier to reason about when you strip away the story details and look at the reusable production architecture.

A concrete five-step trace for ORDER-78432

To make the loop tangible, walk through the delivery-photo verification task step by step using the exact primitives a frontier model would emit.

Step 1. The host opens a fresh browser profile inside the VM and navigates to the carrier login page. It captures a 1280 by 800 screenshot and the accessibility tree. The model receives the image plus the task description. It outputs a mouse_move to the username field coordinates followed by a type action that carries only a credential placeholder. The host substitutes the real support-agent credential through the automation driver, so the model never sees the real password.

Step 2. After the login succeeds, the host captures the next screenshot showing the tracking search form. The model outputs type("ORDER-78432") into the tracking field and a left_click on the search button.

Step 3. The results page loads with the delivery photo visible. The model sees the image region in the screenshot, outputs a mouse_move over the photo area, a left_click to enlarge it, and then a scroll action to bring the timestamp into view. The host captures the new screenshot and returns it.

Step 4. The model reads the visible timestamp and delivery location from the enlarged photo (via its vision), decides the claim is legitimate, and outputs the actions needed to copy the photo hash or reference to the clipboard (or simply reports the reference in its response). It then proposes navigation to the internal order system tab.

Step 5. Inside the internal order GUI (also inside the same VM), the model updates the claim status to "photo verified, return approved" and closes the ticket with a short note containing the photo reference. The host verifies that the order record now shows the correct status and that no other customer accounts were touched. Only then does the host signal task complete and tear down the VM.

At each step the policy engine inspected the proposed primitive. The human gate was not triggered because the risk score stayed below the refund threshold. Every coordinate, every screenshot hash, and every model decision was written to the immutable audit log.

Grounding pixels to intent

The hardest part of the observe step is turning raw pixels into reliable intent. A screenshot is just a grid of colored pixels. The model must map regions of that grid to UI elements, understand which element corresponds to "the delivery photo for this tracking number," and decide the next physical action that will advance the goal.

Pure coordinate-based clicking is extremely brittle. If the carrier site runs an A/B test that moves the "View Photo" button twenty pixels down, or if the sandbox browser runs at a different screen resolution, or if a responsive layout kicks in on a slightly narrower window, the coordinates that worked on the previous run become invalid. The model then clicks empty space and the loop stalls.

That is why production systems almost always augment the screenshot with an accessibility tree (the same a11y information a screen reader would receive) or with OCR plus element detection. The model can then refer to stable identifiers such as role, name, or visible text instead of fragile (x, y) pairs. The best systems let the model output both a high-level intent ("click the photo for this order") and a fallback grounding strategy when the stable identifier is missing.
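
The grounding strategy described above can be sketched as a lookup by role and name with a coordinate fallback. The `A11yNode`, `Target`, and `Resolution` shapes are hypothetical, chosen only for this example; a real accessibility tree is nested and carries many more attributes.

```typescript
// Hypothetical flat view of an accessibility tree.
interface A11yNode {
  role: string;
  name: string;
  x: number;
  y: number;
  width: number;
  height: number;
}

interface Target {
  role: string;
  name: string;
  fallback?: { x: number; y: number }; // last-known pixel position, if any
}

type Resolution =
  | { via: "a11y" | "fallback"; x: number; y: number }
  | { via: "unresolved" };

function resolveTarget(tree: A11yNode[], target: Target): Resolution {
  const wanted = target.name.trim().toLowerCase();
  const matches = tree.filter(
    (n) => n.role === target.role && n.name.trim().toLowerCase() === wanted,
  );
  const [only] = matches;
  if (matches.length === 1 && only) {
    // Click the centre of the element wherever the layout placed it.
    return {
      via: "a11y",
      x: Math.round(only.x + only.width / 2),
      y: Math.round(only.y + only.height / 2),
    };
  }
  if (matches.length === 0 && target.fallback) {
    return { via: "fallback", x: target.fallback.x, y: target.fallback.y };
  }
  // Several identical candidates: refuse rather than guess.
  return { via: "unresolved" };
}
```

Returning `unresolved` for ambiguous matches is a design choice in this sketch: it hands the decision back to the model or a human instead of clicking one of two identical-looking buttons.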

How frontier providers expose computer use

Anthropic exposes computer use through a special beta tool in the Messages API. Current docs show the computer-use-2025-11-24 beta flag for the newer computer_20251124 tool version, with computer-use-2025-01-24 still used for the earlier tool version.[2] The model returns a tool-use block whose input describes one of a small set of primitive actions: mouse_move with x and y, the various click types, left_click_drag, type with text, key combinations, scroll, wait, or an explicit screenshot request.

Your client code is responsible for executing the primitive inside the controlled environment, capturing the next observation, and returning the result in the next tool_result message. The model itself never touches real input devices.

Google exposes a Gemini 2.5 Computer Use Preview model and tool for browser-control agents. The model analyzes the user request plus screenshots and returns UI-action function calls such as clicking coordinates or typing text; your client code still executes the action, captures the next screenshot, and sends it back. The API can also return a safety_decision for proposed actions.[3]

In both cases the developer still owns the execution environment. The model only decides. Your code must execute safely, enforce boundaries, and decide when to stop or escalate to a human.
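
The division of labour (the model proposes, the host executes and observes) can be sketched as a single turn handler. Everything named here (`ProposedAction`, `InputDriver`, `ToolResult`, `executeAndObserve`) is a hypothetical shape for illustration and does not reproduce either provider's request or response format.

```typescript
// Hypothetical, provider-neutral action shape.
type ProposedAction =
  | { kind: "click"; x: number; y: number }
  | { kind: "type"; text: string }
  | { kind: "screenshot" };

// The host's own automation driver inside the sandbox (assumed interface).
interface InputDriver {
  click(x: number, y: number): Promise<void>;
  typeText(text: string): Promise<void>;
  screenshot(): Promise<string>; // base64-encoded image
}

interface ToolResult {
  ok: boolean;
  screenshot?: string;
  error?: string;
}

// Execute one proposed action, then capture the observation to send back.
async function executeAndObserve(
  action: ProposedAction,
  driver: InputDriver,
): Promise<ToolResult> {
  try {
    switch (action.kind) {
      case "click":
        await driver.click(action.x, action.y);
        break;
      case "type":
        await driver.typeText(action.text);
        break;
      case "screenshot":
        break; // nothing to execute; only observe
    }
    return { ok: true, screenshot: await driver.screenshot() };
  } catch (err) {
    // Report the failure to the model instead of crashing the loop.
    return { ok: false, error: err instanceof Error ? err.message : String(err) };
  }
}
```

Because the driver is injected, the same handler can run against a real browser in the VM or against a fake driver in tests.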

Browser agents versus full desktop agents

Many real production workloads stay inside the browser. When that is true, you have lighter options.

You can use Playwright or Selenium directly when the target site tolerates automation and exposes stable selectors. You can adopt a specialized browser-agent harness such as BrowserGym or the WebArena evaluation environment. These frameworks give the model a cleaned accessibility tree plus screenshot and let it click by stable element ID rather than fragile pixel coordinates.

You fall back to full computer-use primitives only when the site intentionally blocks automation, when the task requires interacting with the browser's own chrome (extensions, downloads, multiple profiles), or when the workflow crosses from browser into a native desktop application.
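
The selection rule in the last three paragraphs can be written down as a small decision function. The `Workload` fields and the `Path` labels are names invented for this sketch.

```typescript
type Path = "playwright_selectors" | "browser_agent_harness" | "full_computer_use";

// Hypothetical description of a workload, using the criteria named above.
interface Workload {
  blocksAutomation: boolean; // site intentionally blocks automation
  needsBrowserChrome: boolean; // extensions, downloads, multiple profiles
  usesNativeApp: boolean; // workflow crosses into a desktop application
  hasStableSelectors: boolean; // site exposes selectors that rarely change
}

function choosePath(w: Workload): Path {
  if (w.blocksAutomation || w.needsBrowserChrome || w.usesNativeApp) {
    return "full_computer_use";
  }
  return w.hasStableSelectors ? "playwright_selectors" : "browser_agent_harness";
}

// The carrier portal from the running example blocks known automation.
const carrierPortal = choosePath({
  blocksAutomation: true,
  needsBrowserChrome: false,
  usesNativeApp: false,
  hasStableSelectors: false,
}); // "full_computer_use"
```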

Full desktop computer use, in the style of OSWorld, becomes necessary when the job spans a browser window plus a thick client that has no web equivalent. The sandbox requirements grow because you must now present a believable desktop to both the browser and the native application while still keeping every input observable and revocable by the host.

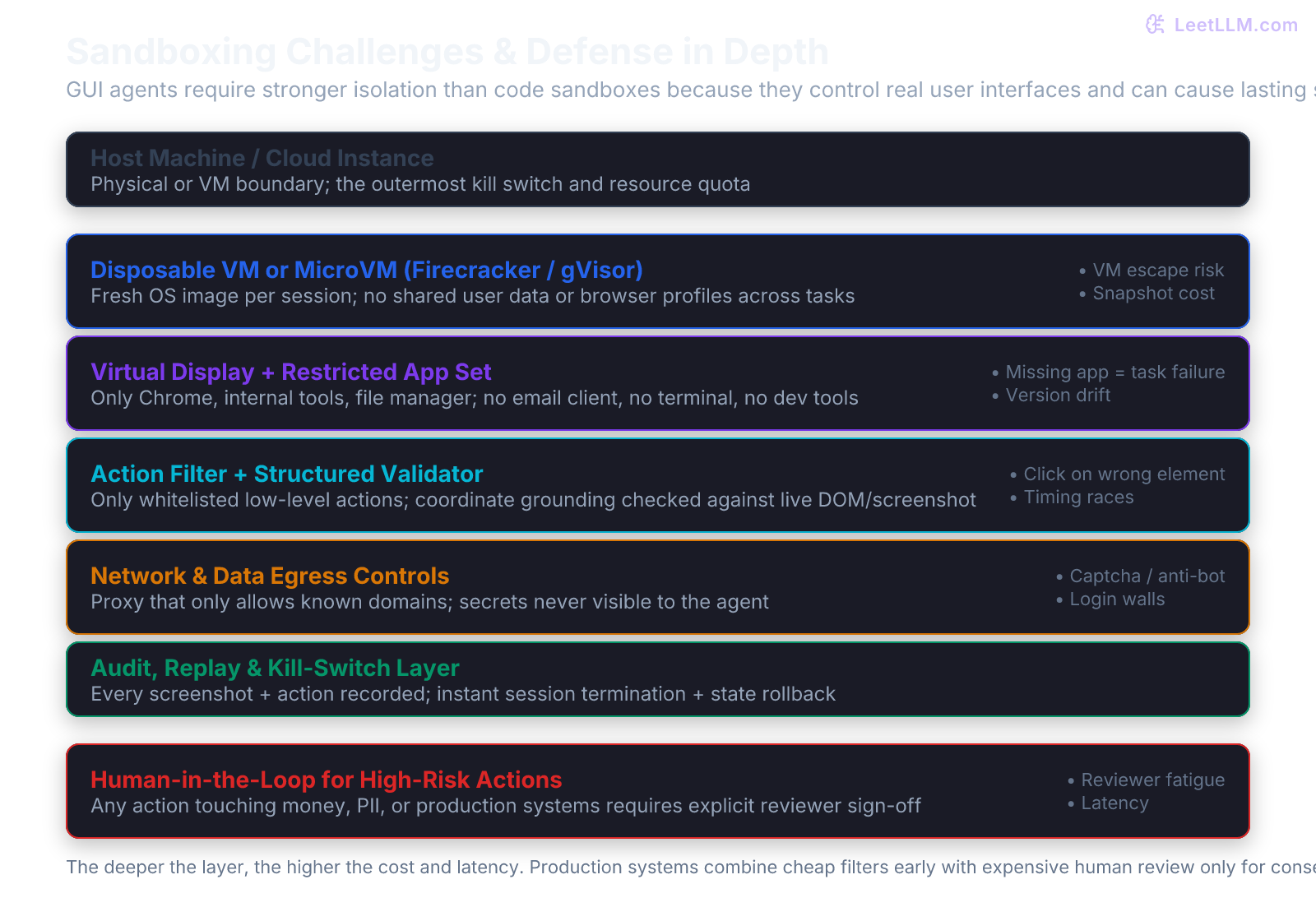

Sandboxing and safety boundaries

The most important engineering decision is not the prompt you give the model. It is the environment in which the actions actually run.

A production computer-use system uses defense in depth. Every session begins in a fresh, disposable microVM or container with a virtual display (Xvfb or equivalent, optionally exposed to human reviewers through noVNC). The VM contains only the minimum applications required for the task. There is no email client, no terminal that can reach production databases, and no ability for the agent to install new software.

Network traffic is forced through an egress proxy that permits only an explicit allow-list of domains. Credentials are injected by the host through the automation driver; the model never sees or types a real password. An action validator inspects every proposed primitive before execution and rejects or rewrites obviously dangerous commands such as rm -rf, navigation to internal admin panels, or access to ~/.ssh.
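
A minimal sketch of the allow-list check and the action validator might look like the following. The `Proposed` and `Verdict` shapes, the pattern list, and the example domain are assumptions for illustration; a real deployment would enforce the allow-list at the egress proxy as well, since pattern lists like this are easy to bypass on their own.

```typescript
// Hypothetical action and verdict shapes for this sketch.
type Proposed =
  | { kind: "navigate"; hostname: string }
  | { kind: "type"; text: string };

type Verdict = { allowed: true } | { allowed: false; reason: string };

// Illustrative patterns taken from the examples in the text.
const DANGEROUS_TEXT: readonly RegExp[] = [/\brm\s+-rf\b/i, /~\/\.ssh/];

// Exact match or true subdomain match. A plain suffix test would wrongly
// accept "evilcarrier.example" for the entry "carrier.example".
function isHostAllowed(hostname: string, allowList: readonly string[]): boolean {
  const host = hostname.toLowerCase();
  return allowList.some((domain) => host === domain || host.endsWith("." + domain));
}

function validate(action: Proposed, allowList: readonly string[]): Verdict {
  if (action.kind === "navigate") {
    return isHostAllowed(action.hostname, allowList)
      ? { allowed: true }
      : { allowed: false, reason: `host not on allow-list: ${action.hostname}` };
  }
  const hit = DANGEROUS_TEXT.find((re) => re.test(action.text));
  return hit
    ? { allowed: false, reason: `typed text matches ${hit.source}` }
    : { allowed: true };
}

const allowList = ["carrier.example"]; // entries are assumed to be lowercase
const okNav = validate({ kind: "navigate", hostname: "portal.carrier.example" }, allowList);
const badNav = validate({ kind: "navigate", hostname: "evilcarrier.example" }, allowList);
const badText = validate({ kind: "type", text: "rm -rf /tmp" }, allowList);
```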

High-risk actions (those that can move money, alter customer records, or touch production systems) are held for explicit human review. The reviewer is shown the exact screenshot the model saw together with a proposed diff of the state change. The entire session (every screenshot, every mouse coordinate, every keystroke) is recorded with timestamps so you can replay the sequence for audits, debugging, or regulatory compliance.

Common pitfalls that break these boundaries include reusing a persistent browser profile across unrelated customer tasks (the agent inherits the previous customer's logged-in state), letting the model alone decide when the task is finished (the host must independently verify that the observable application state matches the acceptance criteria), and running against live production sites without rate limits or a kill switch (one runaway agent can generate thousands of requests or trigger fraud detection).
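
Independent host verification can be sketched as a comparison of record snapshots taken before and after the session. The `OrderRecord` and `Criteria` shapes, and the idea that the host can read such snapshots from the order system, are assumptions made for this example.

```typescript
interface OrderRecord {
  orderId: string;
  status: string;
  note: string;
}

interface Criteria {
  orderId: string;
  expectedStatus: string;
  noteMustContain: string;
}

// Returns a list of failures; an empty list means the criteria are met.
function verifyOutcome(
  before: OrderRecord[],
  after: OrderRecord[],
  c: Criteria,
): string[] {
  const failures: string[] = [];
  const prior = new Map<string, OrderRecord>();
  for (const r of before) prior.set(r.orderId, r);

  let sawTarget = false;
  for (const r of after) {
    if (r.orderId === c.orderId) {
      sawTarget = true;
      if (r.status !== c.expectedStatus) failures.push(`status is "${r.status}"`);
      if (!r.note.includes(c.noteMustContain)) failures.push("note is missing the photo reference");
      continue;
    }
    // Any other record must be byte-for-byte what it was before the session.
    const old = prior.get(r.orderId);
    if (!old || old.status !== r.status || old.note !== r.note) {
      failures.push(`unrelated order ${r.orderId} changed`);
    }
  }
  if (!sawTarget) failures.push("target order not found");
  if (after.length !== before.length) failures.push("record count changed");
  return failures;
}
```

The host would mark the task complete only when this list is empty, regardless of what the model reports.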

Sandboxing a GUI agent is strictly harder than sandboxing a code-generation agent. A code sandbox can present a clean container with strict filesystem and network limits and no persistent user state. A GUI agent must render a realistic desktop or browser that survives timing-dependent animations, anti-bot challenges, and cookie-based sessions, while still preventing the agent from reaching the host's real email client, password manager, or production dashboards. A single escaped click or typed command can have permanent side effects outside the VM.

Evaluation of computer-use agents

Traditional accuracy numbers are not enough. You need a portfolio of measures that reflect both functional success and operational safety.

Task success rate asks whether the final observable state of the system matches the goal. WebArena and OSWorld supply programmatic validators for this. Step efficiency measures how many actions and model calls were required; long trajectories burn tokens and raise the probability of eventual failure. Safety violation rate counts how often the agent attempted a blocked action or required human intervention. Grounding accuracy compares proposed click coordinates against ground-truth element locations. For open-ended tasks, blind human raters compare two agent trajectories on the same goal and express preference.
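
Several of these measures can be computed from logged episodes. The sketch below uses a hypothetical `Episode` record and picks one possible definition for each metric; in particular, the safety violation rate here is the share of episodes with at least one blocked action, and grounding accuracy is the share of clicks that land inside the ground-truth bounding box.

```typescript
interface Box { x: number; y: number; width: number; height: number }
interface Click { x: number; y: number; target: Box }

// Hypothetical per-episode log record.
interface Episode {
  success: boolean; // final state matched the goal
  steps: number; // actions taken
  blockedActions: number; // proposals rejected by the policy engine
  clicks: Click[];
}

interface Summary {
  successRate: number;
  meanSteps: number;
  violationRate: number;
  groundingAccuracy: number; // NaN when no clicks were logged
}

function isInside(c: Click): boolean {
  const b = c.target;
  return c.x >= b.x && c.x < b.x + b.width && c.y >= b.y && c.y < b.y + b.height;
}

function summarize(episodes: Episode[]): Summary {
  if (episodes.length === 0) throw new RangeError("need at least one episode");
  let successes = 0, steps = 0, violating = 0, clicks = 0, hits = 0;
  for (const e of episodes) {
    if (e.success) successes += 1;
    steps += e.steps;
    if (e.blockedActions > 0) violating += 1;
    for (const c of e.clicks) {
      clicks += 1;
      if (isInside(c)) hits += 1;
    }
  }
  const n = episodes.length;
  return {
    successRate: successes / n,
    meanSteps: steps / n,
    violationRate: violating / n,
    groundingAccuracy: clicks === 0 ? NaN : hits / clicks,
  };
}
```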

The benchmarks that matter in 2026 are WebArena (and its visual and infinite extensions) for browser realism, OSWorld for real desktop applications across operating systems, Mind2Web for large-scale element grounding across more than a hundred real-world websites, GAIA for general assistant tasks that mix web, code, and file work, and AgentDojo plus its successors for measuring resistance to prompt injection and harmful tool use.

Recent public benchmark snapshots show the same pattern across browser and desktop tasks: best systems can solve a meaningful share of browser workflows, but hard OSWorld-style desktop tasks remain much lower and far below human expert reliability. Treat any exact leaderboard number as a moving target, especially for new computer-use model releases.

Failure modes specific to GUI agents

Computer-use agents fail in characteristic ways that pure API agents rarely encounter.

UI drift occurs when an A/B test, font change, or responsive layout moves an element by forty pixels; the model clicks empty space. Visual ambiguity appears when two buttons look identical at the screenshot resolution the model received; it chooses the wrong one because downscaling or compression blurred the label. Timing and animation problems cause clicks before a menu finishes expanding or before a loading spinner disappears. Captcha and anti-bot systems are deliberately designed to defeat exactly this class of agent. Credential leakage happens when the model is asked to log in and types a real password into a visible field that ends up in the audit log. Scope creep occurs when the model decides "while I am here I should also clean up the user's profile" and edits fields that were never part of the original task.

The best teams treat every computer-use run as a security-sensitive operation. They log the exact prompt, the model version, every observation, and every action taken. They maintain a kill switch that can terminate all active sessions in seconds.

Production patterns that actually work

A strong production pattern is hybrid, not pure computer use.

Use ordinary tool calling, MCP servers, or direct APIs for eighty to ninety percent of the workflow. Only invoke the computer-use loop for the narrow slice that truly requires visual reasoning or legacy GUI access. Keep every computer-use session short and bounded by both maximum step count and wall-clock time. Require human sign-off for any action whose blast radius includes money, PII, or production infrastructure. Record every session and feed the successful trajectories back into prompt optimization or fine-tuning of a smaller, cheaper grounding model.
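
Bounding a session by both step count and wall-clock time can be sketched with a small budget object. The class name `SessionBudget` and the injected clock are choices made for this example; the limits themselves are placeholders.

```typescript
type StopReason = "step_limit" | "time_limit";

class SessionBudget {
  private steps = 0;
  private readonly startedAt: number;
  private readonly maxSteps: number;
  private readonly maxMs: number;
  private readonly now: () => number;

  // The clock is injectable so the budget can be tested without waiting.
  constructor(maxSteps: number, maxMs: number, now: () => number = () => Date.now()) {
    this.maxSteps = maxSteps;
    this.maxMs = maxMs;
    this.now = now;
    this.startedAt = now();
  }

  // Returns null if another step may run, otherwise the reason to stop.
  nextStep(): StopReason | null {
    if (this.steps >= this.maxSteps) return "step_limit";
    if (this.now() - this.startedAt >= this.maxMs) return "time_limit";
    this.steps += 1;
    return null;
  }
}

// Example: at most 20 steps or 2 minutes, whichever comes first.
const budget = new SessionBudget(20, 120_000);
const firstStep = budget.nextStep(); // null, so the first step may run
```

The host checks the budget before every model call, so a stalled or looping agent ends on its own rather than waiting for someone to notice.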

This is the same engineering discipline you saw applied to AI coding agents. Speed and generality are valuable only when they remain inside explicit boundaries and human accountability.

A minimal host executor sketch

Here is the shape of the host loop you would implement (in TypeScript or Python with Playwright or a similar driver inside the VM).

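```ts
// Sketch only: captureScreenshotAndA11y, model, policy, riskClassifier,
// hostVerifier and the other helpers are placeholders the host must supply.
let stepCount = 0;
let taskComplete = false;
try {
  while (!taskComplete && stepCount < MAX_STEPS) {
    stepCount++; // count every iteration so rejected actions cannot loop forever
    const obs = await captureScreenshotAndA11y();
    const action = await model.decide(task, obs);
    if (!policy.allows(action)) {
      logViolation();
      continue;
    }
    if (riskClassifier.score(action) > HUMAN_THRESHOLD) {
      await waitForHumanApproval(obs, action); // assumed to throw if the reviewer declines
    }
    await executePrimitive(action); // mouse, type, etc.
    logStep(obs, action);
    // Verify against a fresh observation taken after the action ran.
    const after = await captureScreenshotAndA11y();
    taskComplete = await hostVerifier.matchesAcceptanceCriteria(task, after);
  }
} finally {
  await teardownVM(); // always destroy the sandbox, even after an error
}
```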

The host, not the model, owns the stop condition, the policy checks, the human gate, the audit log, and the final verification.

Risk classification table for e-commerce support actions

In the carrier portal and internal order system example, not every action carries the same blast radius. A production policy engine classifies proposed actions into risk tiers before any execution happens.

| Risk Tier | Example Actions in Carrier Portal | Example Actions in Internal Order UI | Human Gate? | Typical Policy Response |
|---|---|---|---|---|
| Low | View tracking page, scroll, read photo metadata | View order record, read claim notes | No | Execute immediately, log only |
| Medium | Click to enlarge delivery photo, copy reference text, download image | Copy photo hash into claim, add internal note | Optional (configurable) | Execute with extra logging; escalate if repeated |
| High | Navigate to admin panel, change delivery address, initiate refund flow | Update order status to "returned", issue credit, delete customer record | Yes | Pause, present screenshot + diff to reviewer, wait for explicit approval |

The table makes the defense-in-depth concrete. Low-risk navigation and reading stay fast. High-risk money or data mutation steps always pause for a human who can see exactly what the model saw and what the state change would be.
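
The tiers in the table could be encoded as a lookup that the policy engine consults before execution. The action labels below are hypothetical identifiers derived from the table rows, and treating unknown actions as high risk is a fail-closed choice made for this sketch.

```typescript
type Tier = "low" | "medium" | "high";
type PolicyResponse = "execute" | "execute_with_extra_logging" | "pause_for_human";

// Hypothetical action labels mapped to the tiers from the table.
const TIER_BY_ACTION = new Map<string, Tier>([
  ["view_tracking_page", "low"],
  ["scroll", "low"],
  ["read_photo_metadata", "low"],
  ["view_order_record", "low"],
  ["read_claim_notes", "low"],
  ["enlarge_delivery_photo", "medium"],
  ["copy_reference_text", "medium"],
  ["download_image", "medium"],
  ["copy_photo_hash_into_claim", "medium"],
  ["add_internal_note", "medium"],
  ["navigate_to_admin_panel", "high"],
  ["change_delivery_address", "high"],
  ["initiate_refund_flow", "high"],
  ["update_order_status", "high"],
  ["issue_credit", "high"],
  ["delete_customer_record", "high"],
]);

// Anything the table does not list is treated as high risk.
function classify(action: string): Tier {
  return TIER_BY_ACTION.get(action) ?? "high";
}

function responseFor(tier: Tier): PolicyResponse {
  switch (tier) {
    case "low":
      return "execute";
    case "medium":
      return "execute_with_extra_logging";
    case "high":
      return "pause_for_human";
  }
}

const refundResponse = responseFor(classify("initiate_refund_flow")); // "pause_for_human"
```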

Cost and latency realities

A pure API call to check order status might take a few milliseconds and cost a fraction of a cent. A computer-use trajectory for the same information often requires eight to fifteen steps, multiple vision calls, and human review in twelve percent of cases. The per-claim cost can easily rise from a fraction of a cent to twenty cents or more when review is required.

Teams therefore reserve the heavy path for the cases that truly need it: carriers that have no API, photos that must be visually inspected for fraud signals, or legacy warehouse systems whose only interface is a thick client. The hybrid rule of thumb (eighty percent API or MCP, twenty percent computer-use) keeps the economics reasonable while still covering the long tail.
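
The economics can be checked with a short expected-cost calculation. All dollar figures below are placeholder inputs chosen for illustration, not measurements; only the twelve percent review rate and the eighty/twenty split come from the text.

```typescript
interface ComputerUseCost {
  steps: number;
  costPerStepUsd: number; // assumed model cost per observe-act step
  reviewRate: number; // share of claims that need human review
  reviewCostUsd: number; // assumed cost of one human review
}

// Expected cost of one claim handled through the computer-use loop.
function computerUseCostPerClaim(c: ComputerUseCost): number {
  return c.steps * c.costPerStepUsd + c.reviewRate * c.reviewCostUsd;
}

// Expected cost per claim when only part of the traffic takes the heavy path.
function blendedCostPerClaim(
  apiShare: number,
  apiCostUsd: number,
  computerUseCostUsd: number,
): number {
  if (apiShare < 0 || apiShare > 1) throw new RangeError("apiShare must be in [0, 1]");
  return apiShare * apiCostUsd + (1 - apiShare) * computerUseCostUsd;
}

// Placeholder inputs: 10 steps at $0.01 each, 12% review rate, $0.50 per review.
const heavy = computerUseCostPerClaim({
  steps: 10,
  costPerStepUsd: 0.01,
  reviewRate: 0.12,
  reviewCostUsd: 0.5,
}); // about $0.16

// 80% of claims via an API call assumed to cost $0.001, 20% via computer use.
const blended = blendedCostPerClaim(0.8, 0.001, heavy); // about $0.033
```

Under these assumed inputs the heavy path dominates the blended cost even at a twenty percent share, which is why shrinking that share matters more than shaving the API cost.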

How to start a minimal pilot with one carrier

Begin with a single carrier partner whose portal is stable and whose support volume is high enough to justify the investment. Limit the initial scope to read-only photo verification and status lookup. Do not allow any write actions until you have thirty days of clean audit logs and zero safety violations.

Run every session in a fresh VM. Log every prompt, every observation hash, every action, and the final host verification result. After two weeks, review the failure cases with the actual support team. Only then decide whether to expand the action space or add a second carrier.

Common implementation gotchas with Playwright inside VMs

Playwright works well inside Linux VMs with Xvfb, but you must handle several details. The virtual display must be large enough (at least 1280 by 800) and must match the resolution the model expects. Font rendering and anti-aliasing must be consistent between development screenshots and production runs. You must disable all browser extensions and password managers. You must force every page to reach the networkidle load state and pass an explicit stability check before capturing the screenshot the model will see. Missing any of these details produces screenshots that look different to the model than they did during development, and grounding accuracy collapses.
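
One way to implement the explicit stability check is to capture repeatedly until two consecutive frames are identical. The sketch below is driver-agnostic: `capture` and `sleep` are injected, and with Playwright `capture` could be something like `() => page.screenshot()`. Comparing raw bytes assumes deterministic image encoding, and a blinking cursor or looping animation will keep the page from ever settling, so the caller must handle the `null` result.

```typescript
type Capture = () => Promise<Uint8Array>;
type Sleep = (ms: number) => Promise<void>;

function sameBytes(a: Uint8Array, b: Uint8Array): boolean {
  if (a.length !== b.length) return false;
  for (let i = 0; i < a.length; i++) {
    if (a[i] !== b[i]) return false;
  }
  return true;
}

// Returns the first frame that matches its predecessor, or null if the
// page never settles within maxAttempts captures.
async function captureWhenStable(
  capture: Capture,
  sleep: Sleep,
  opts: { intervalMs: number; maxAttempts: number } = { intervalMs: 250, maxAttempts: 8 },
): Promise<Uint8Array | null> {
  let previous = await capture();
  for (let attempt = 1; attempt < opts.maxAttempts; attempt++) {
    await sleep(opts.intervalMs);
    const current = await capture();
    if (sameBytes(previous, current)) return current;
    previous = current;
  }
  return null; // treat as a failed observation rather than guessing
}
```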

Trajectories as future training data

Every successful computer-use session is a high-quality demonstration of how a human would have solved the same GUI task. The best teams export the clean trajectories (prompt, observation sequence, action sequence, final verification) and use them to fine-tune a smaller, faster grounding model or to improve the prompt and few-shot examples for the frontier model. Over time the expensive frontier calls become fewer and the success rate on the long-tail carriers rises.
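
Exporting clean trajectories could be as simple as filtering on the host verification result and writing one JSON object per line. The `Trajectory` shape and the JSONL layout are assumptions for this sketch; a real export would also need whatever redaction your data policy requires.

```typescript
interface TrajectoryStep {
  observationHash: string; // hash of the screenshot the model saw
  action: string; // serialized primitive that was executed
}

interface Trajectory {
  prompt: string;
  steps: TrajectoryStep[];
  verified: boolean; // result of the final host verification
}

// Keep only host-verified sessions and emit one JSON object per line.
function toJsonl(trajectories: Trajectory[]): string {
  return trajectories
    .filter((t) => t.verified)
    .map((t) => JSON.stringify({ prompt: t.prompt, steps: t.steps }))
    .join("\n");
}
```

Filtering on the host's verdict rather than the model's own claim of success keeps unverified runs out of the training set.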

Practice: design a safe refund photo verifier

Your e-commerce platform needs an agent that can open the carrier portal for a given tracking number, locate the delivery photo, compare the visible details against the customer claim, update the internal order system status, and close the ticket with a short note.

The carrier portal has no API and blocks known automation.

Complete these tasks:

- Write the exact policy rules the action validator must enforce before any mouse movement is allowed. Cover navigation limits, credential handling, and which UI elements are off-limits.

- Decide which actions require human approval and exactly what evidence (screenshot, proposed diff, order ID) the reviewer must see before approving.

- Specify the acceptance criteria the host must check after the agent claims "done". The criteria should include order status changed correctly, ticket note contains the photo hash, and no other customer accounts were touched.

- List three failure modes you realistically expect in the first week of production and the concrete guardrails that would catch each one.

- Produce a one-page reviewer checklist that a human can read and act on in under ninety seconds.

Write the policy and checklist as documents another engineer could hand to a support-team reviewer on day one.

Key takeaways

- Computer-use turns the model into a general GUI operator, but every pixel-level action expands the attack surface dramatically compared with curated function calling.

- The core architecture is an observe-act loop wrapped by four independent layers: policy engine, human approval gate for high-risk steps, full session recording, and disposable sandbox isolation.

- Strong evaluation requires both functional success rate and safety metrics on realistic benchmarks such as WebArena and OSWorld. Step efficiency and grounding accuracy matter as much as final outcome.

- The practical path in production is hybrid. Use deterministic automation and clean APIs for the great majority of work and reserve computer use for the long tail that truly needs eyes and hands on a legacy interface.

- Sandboxing GUI agents is harder than sandboxing code because the environment must look and behave like a real desktop to the target sites while remaining fully observable, revocable, and killable by the host at any moment.

- Every production computer-use deployment must apply the same operational discipline we give to privileged human operators: least privilege, complete audit trail, and a human ready to hit stop.

Computer-use agents are powerful precisely because they can do anything a human operator can do on a screen. That is also why every production system must treat them with the same caution we give any privileged human operator: least privilege, full audit, and a human ready to intervene.

Evaluation Rubric

- Distinguishes computer-use (pixel + input device) from ordinary function calling or browser tool APIs

- Designs an observe-act loop that feeds high-quality screenshots and structured observations back to the model

- Chooses appropriate sandbox boundaries (disposable VM, restricted apps, egress proxy) for a given risk level

- Evaluates a computer-use agent using WebArena or OSWorld success rate, step efficiency, and safety violation metrics

- Builds a production policy engine that routes only high-risk GUI actions through human review while keeping low-risk flows fast

Common Pitfalls

- Giving the agent full access to a real user desktop or browser profile instead of a disposable, heavily restricted VM.

- Relying solely on screenshot + coordinate actions without accessibility trees or element grounding, leading to brittle clicks after every UI change.

- Allowing the agent to type or paste credentials directly instead of using secure credential injection or OAuth flows.

- Skipping the human approval gate for actions that touch money, customer data, or production systems because "the model sounded confident."

- Evaluating only final-task success rate while ignoring step count, safety violations, and cost per successful task.

- Running computer-use agents against live production websites without rate limits, monitoring, or easy kill switches.

- Treating every GUI task the same: using a heavyweight computer-use loop for something a simple API call or MCP tool could have solved faster and safer.

Key Concepts Tested

- Computer-use tool schema and observe-act loop

- Screenshot + a11y tree grounding for GUI actions

- Anthropic computer-use beta tool versions and Gemini 2.5 Computer Use Preview

- Sandbox isolation techniques for GUI agents (VM, virtual display, action allow-lists)

- WebArena, OSWorld, Mind2Web, and GAIA benchmarks

- Defense-in-depth safety: policy engines, human approval gates, audit logging

- Unique failure modes: UI drift, timing races, credential exposure, and escape risks

- Hybrid agent design: prefer APIs, fall back to computer use only when necessary

Next Step

Next: Continue to Human-in-the-Loop Agent Architecture

Long-running computer-use tasks that span multiple sites and applications need durable memory across steps plus explicit human checkpoints when the agent reaches a policy boundary. The selective-supervision and approval UI patterns you will study next give these powerful GUI agents the persistence and controlled escalation they require to remain safe and effective over many turns and many customer tickets.

References

[1] Anthropic. Introducing computer use, a new Claude 3.5 Sonnet capability. 2024.

[2] Anthropic. Computer Use Tool. 2026.

[3] Google. Computer Use. 2026.

[4] Zhou, S., et al. WebArena: A Realistic Web Environment for Building Autonomous Agents. arXiv preprint, 2023.

[5] Xie, T., et al. OSWorld: Benchmarking Multimodal Agents for Open-Ended Tasks in Real Computer Environments. NeurIPS 2024.

[6] Deng, X., et al. Mind2Web: Towards a Generalist Agent for the Web. arXiv preprint, 2023.

[7] ServiceNow Research + Community. BrowserGym: A Unified Environment and Benchmark for Browser Agents. 2024.

[8] Debenedetti, E., et al. AgentDojo: A Dynamic Environment to Evaluate Prompt Injection Attacks and Defenses for LLM Agents. arXiv preprint, 2024.

[9] Mialon, G., et al. GAIA: A Benchmark for General AI Assistants. arXiv preprint, 2023.

[10] Anthropic. Introducing the Model Context Protocol. 2024.

[11] Yao, S., Zhao, J., Yu, D., Du, N., Shafran, I., Narasimhan, K., & Cao, Y. ReAct: Synergizing Reasoning and Acting in Language Models. ICLR 2023.