CNNs from Scratch

Build a complete CNN from first principles: understand convolution as sliding kernels, pooling for invariance, hand-compute feature maps on tiny support-ticket images, implement forward and backward passes in NumPy, then compare with PyTorch. Bridge to Vision Transformers.

A customer support agent receives a ticket: "My laptop arrived with a cracked screen, see photo." Attached is a phone snapshot of the damaged device next to the shipping label. The text says "cracked," but the photo shows exactly where the damage is and whether it matches the model in the order. How does an AI system actually look at that photo and decide "this is a screen-damage claim that needs the refurbishment team" versus "this is just a blurry box photo, route to general returns"?

The answer is not a giant fully-connected neural network that flattens every pixel. It is a convolutional neural network that respects the two-dimensional layout of the image from the first layer.[1]

💡 Key insight: A convolutional layer does not connect every input pixel to every neuron. Instead, it slides a small set of learned weights (a kernel) across the image. Each position in the output feature map is a local pattern detector. The same detector is used everywhere in the image. This single design choice reduces parameters by orders of magnitude and lets the network discover edges, textures, and shapes that are meaningful for classifying forms, screenshots, and product photos.

The problem with treating images as flat vectors

Recall from the neural network article that a dense (fully-connected) layer multiplies an input vector by a weight matrix. If your input is a 28 by 28 grayscale image of a simple form field, flattening it gives 784 numbers. A hidden layer of 256 units then needs 784 times 256 weights. Manageable for a toy problem.

Now scale to a realistic support-ticket photo: 224 by 224 pixels with three color channels. That is 150,528 input values. The same 256-unit hidden layer suddenly requires more than 38 million weights. Worse, every single weight is independent. The network has no built-in idea that the pixel two positions to the right is related to the pixel you just looked at. It must re-learn the concept of "horizontal line" or "corner of a screen" at every possible location in the image. That is both statistically and computationally wasteful.

Convolution solves the parameter explosion and the spatial blindness at the same time.
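To put numbers on the comparison, the sketch below counts parameters for the two dense layers described above. For contrast it also counts a convolutional layer; the choice of 256 filters of size 3 by 3 is an illustrative assumption, not a figure from the text. Both helper functions are hypothetical names and include bias terms, so the dense totals are 256 larger than the weight-only products quoted above.

```python
def dense_params(n_inputs, n_units):
    """Weights plus biases of a fully-connected layer."""
    return n_inputs * n_units + n_units

def conv_params(in_channels, out_channels, kernel_size):
    """Weights plus biases of a conv layer; independent of the image size."""
    return out_channels * in_channels * kernel_size * kernel_size + out_channels

small_dense = dense_params(28 * 28, 256)        # 28x28 grayscale form field
large_dense = dense_params(224 * 224 * 3, 256)  # 224x224 RGB photo
conv_layer = conv_params(3, 256, 3)             # assumed: 256 filters of 3x3 over RGB

print(f"dense on 28x28x1:    {small_dense:,}")   # 200,960
print(f"dense on 224x224x3:  {large_dense:,}")   # 38,535,424
print(f"conv 3x3, 256 maps:  {conv_layer:,}")    # 7,168
```

The convolutional count does not change when the photo gets larger, because the same small kernels are reused at every position.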

Convolution: the sliding kernel

A kernel (also called a filter or weight template) is a small matrix of numbers, typically 3 by 3 or 5 by 5. During the forward pass the kernel is placed on top of the image, the element-wise products with the underlying patch are summed, and the result is written to the corresponding location in the output feature map. The kernel then moves one pixel to the right or down (controlled by the stride), and the process repeats until the entire image has been covered.

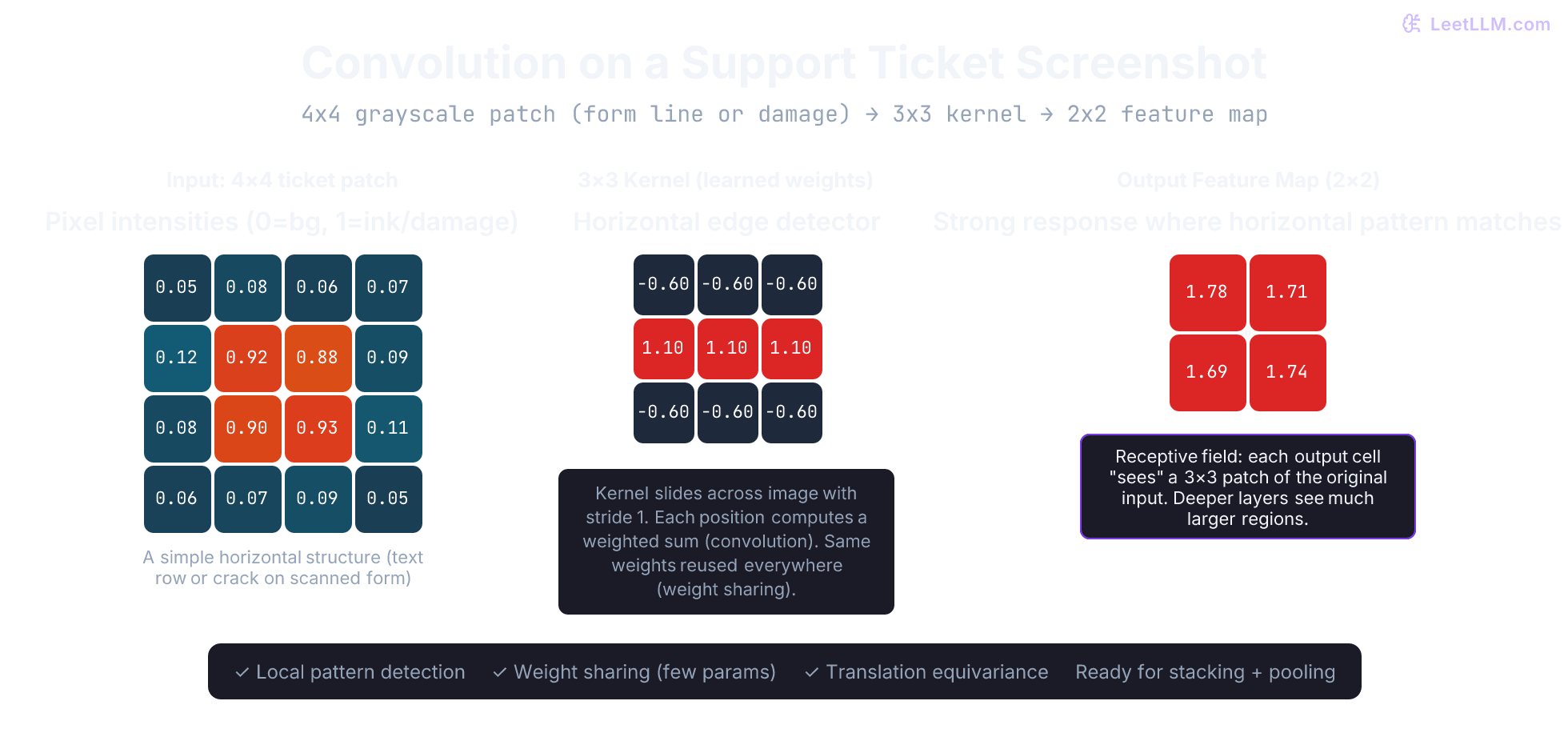

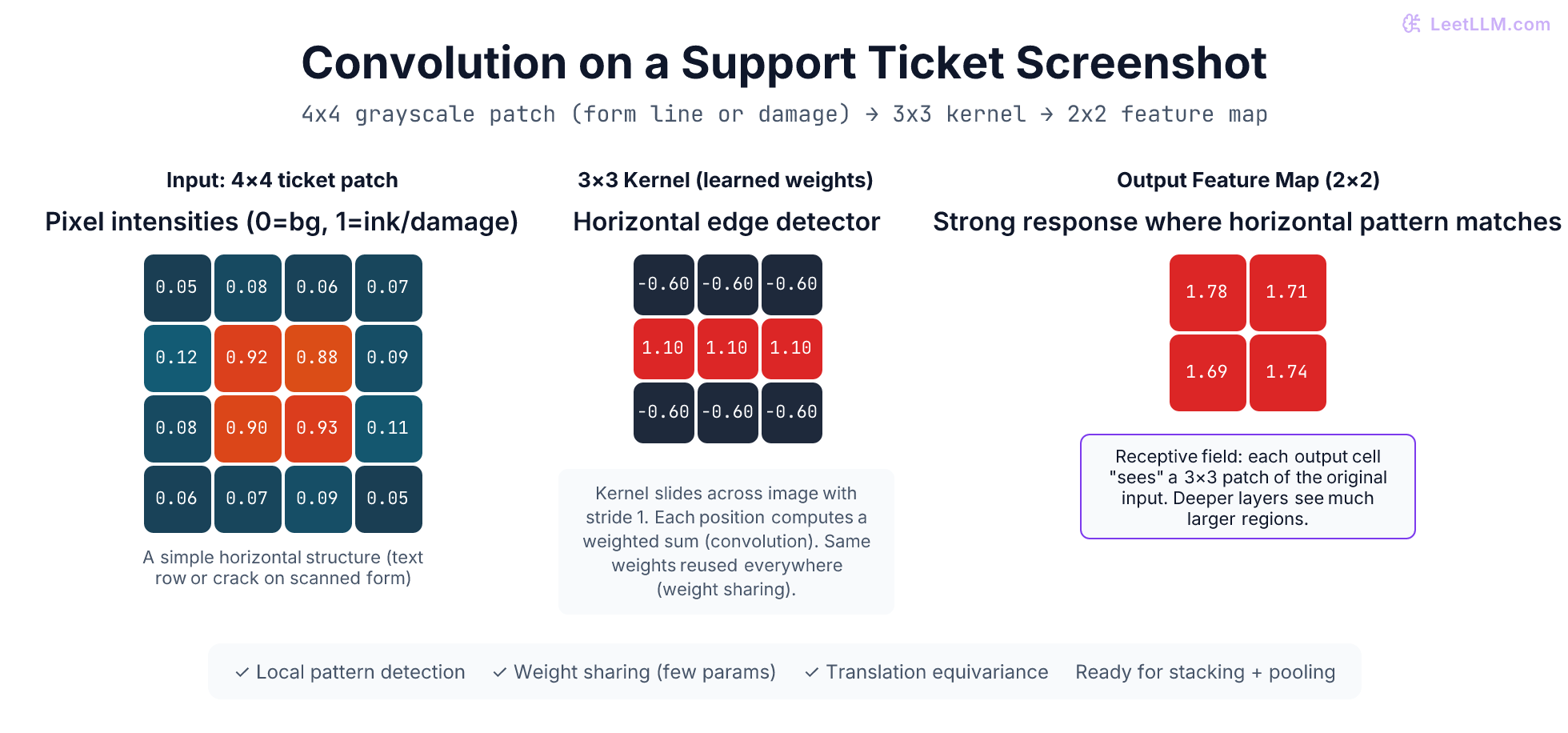

Let's compute a full example by hand on a 4 by 4 grayscale patch taken from a support ticket screenshot. Low values represent background paper; high values represent printed text or a physical damage line on the product.

Input 4 by 4 patch:

| | col 0 | col 1 | col 2 | col 3 |
|---|---|---|---|---|
| row 0 | 0.05 | 0.08 | 0.06 | 0.07 |
| row 1 | 0.12 | 0.92 | 0.88 | 0.09 |
| row 2 | 0.08 | 0.90 | 0.93 | 0.11 |
| row 3 | 0.06 | 0.07 | 0.09 | 0.05 |

The horizontal bar in rows 1-2 is exactly the kind of local pattern that appears in a form field or a crack on a laptop bezel.

A 3 by 3 kernel that responds to thin, bright horizontal structure (one of many kernels a network might learn):

| | c0 | c1 | c2 |
|---|---|---|---|
| r0 | -0.6 | -0.6 | -0.6 |
| r1 | 1.1 | 1.1 | 1.1 |
| r2 | -0.6 | -0.6 | -0.6 |

With stride 1 and no padding the output feature map will be 2 by 2. We compute each position.

- Position (0,0): kernel top-left corner aligned with input (0,0).

- Sum of element-wise products: (0.05 * -0.6) + (0.08 * -0.6) + (0.06 * -0.6) + (0.12 * 1.1) + (0.92 * 1.1) + (0.88 * 1.1) + (0.08 * -0.6) + (0.90 * -0.6) + (0.93 * -0.6)

- Numeric result: -0.030 - 0.048 - 0.036 + 0.132 + 1.012 + 0.968 - 0.048 - 0.540 - 0.558 = 0.852

The other three positions are computed identically by sliding the kernel. The resulting feature map (rounded to two decimals) is:

| | col 0 | col 1 |
|---|---|---|
| row 0 | 0.85 | 0.79 |
| row 1 | 0.82 | 0.87 |

Every output value is clearly positive. The kernel responded wherever the horizontal high-intensity structure was present. The responses are moderate rather than large because the bar is two rows thick, so every window places one bright row under the positive kernel row and the other under a negative row. Positive responses across the map are still the desired behavior for a "damage line" or "form text row" detector.
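Hand arithmetic is easy to get wrong, so it is worth recomputing the feature map numerically. The sketch below is one way to do that, assuming NumPy 1.20 or newer for `sliding_window_view`; the variable names are illustrative.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

patch = np.array([[0.05, 0.08, 0.06, 0.07],
                  [0.12, 0.92, 0.88, 0.09],
                  [0.08, 0.90, 0.93, 0.11],
                  [0.06, 0.07, 0.09, 0.05]])
kernel = np.array([[-0.6, -0.6, -0.6],
                   [ 1.1,  1.1,  1.1],
                   [-0.6, -0.6, -0.6]])

# windows[i, j] is the 3x3 input patch under the kernel at output cell (i, j)
windows = sliding_window_view(patch, kernel.shape)        # shape (2, 2, 3, 3)

# Multiply each window element-wise by the kernel and sum (stride 1, no padding)
feature_map = np.einsum("ijkl,kl->ij", windows, kernel)   # shape (2, 2)
print(np.round(feature_map, 3))
```

Comparing the printed values with the hand-worked table is a quick check that each position used the right input window.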

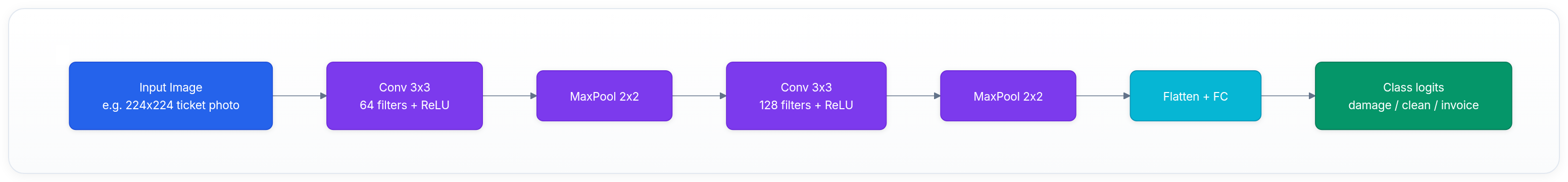

A real CNN learns dozens or hundreds of such kernels in the first layer. One might specialize in vertical edges, another in diagonal textures, another in small corner patterns typical of button icons on a UI screenshot. Each produces its own feature map (channel). The next layer receives the full stack of channels as a 3D volume.
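The idea of several kernels producing a stack of channels can be sketched on the same 4 by 4 patch. The three kernels below are hand-set for illustration (a horizontal-line detector, a vertical-line detector, and a local average); a trained network would learn its own. `sliding_window_view` assumes NumPy 1.20 or newer.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

patch = np.array([[0.05, 0.08, 0.06, 0.07],
                  [0.12, 0.92, 0.88, 0.09],
                  [0.08, 0.90, 0.93, 0.11],
                  [0.06, 0.07, 0.09, 0.05]])

# Illustrative, hand-set kernels stacked along the first axis: shape (3, 3, 3)
kernels = np.array([
    [[-0.6, -0.6, -0.6], [1.1, 1.1, 1.1], [-0.6, -0.6, -0.6]],   # horizontal line
    [[-0.6, 1.1, -0.6], [-0.6, 1.1, -0.6], [-0.6, 1.1, -0.6]],   # vertical line
    [[1 / 9] * 3] * 3,                                           # local average
])

windows = sliding_window_view(patch, (3, 3))                  # (2, 2, 3, 3)
# One 2x2 feature map per kernel; c indexes the output channel
feature_maps = np.einsum("ijkl,ckl->cij", windows, kernels)   # (3, 2, 2)
print(feature_maps.shape)
```

The leading axis of `feature_maps` is the channel dimension that the next layer would consume as its input volume.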

Output size formula

For an input spatial size H by W, kernel size K by K, stride S, and padding P on every side the output size is:

output height = floor( (H + 2P - K) / S ) + 1

The formula is identical for width. In the worked example, P = 0, S = 1, H = 4, K = 3 gives (4 - 3) / 1 + 1 = 2, which matches our manual calculation.
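The formula translates directly into a small helper. The function name is hypothetical, and the two extra calls use common layer settings chosen here purely as examples.

```python
def conv_output_size(size, kernel, stride=1, padding=0):
    """Output length along one spatial axis: floor((size + 2P - K) / S) + 1."""
    if size + 2 * padding < kernel:
        raise ValueError("kernel does not fit inside the padded input")
    return (size + 2 * padding - kernel) // stride + 1

print(conv_output_size(4, 3))                         # 2, the worked example
print(conv_output_size(224, 3, stride=1, padding=1))  # 224, size-preserving 3x3
print(conv_output_size(224, 7, stride=2, padding=3))  # 112, strided 7x7
```

Integer division implements the floor, which matters whenever the stride does not divide the padded extent evenly.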

Receptive fields and why depth creates abstraction

A neuron in the first convolutional layer has a receptive field of exactly the kernel size (3 by 3 in our example). It only "sees" a 3 by 3 neighborhood of the original pixels.

When we add a second convolutional layer on top, each of its neurons looks at a 3 by 3 neighborhood of the first layer's feature maps. Because each first-layer cell already aggregated a 3 by 3 region of the input, the effective receptive field in the original image grows to 5 by 5. Add pooling or more layers and the receptive field grows rapidly.

In a deep network that interleaves convolutions with pooling or strided layers, a single activation can depend on a 50 by 50 or even 100 by 100 region of the original photo. Early layers respond to edges and small textures. Middle layers respond to object parts (screen bezel, keyboard grid, label corner). Deep layers respond to whole concepts (laptop model with visible damage, complete return form layout). This progressive growth of context is the reason CNNs succeeded at image understanding long before attention-based models.
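Receptive-field growth can be tracked with a standard recurrence: each layer adds (kernel - 1) times the current spacing between neighboring units, and each stride multiplies that spacing. The sketch below applies it; the function name and the conv-plus-pool stacks are assumptions chosen for illustration.

```python
def receptive_field(layers):
    """layers: list of (kernel_size, stride) pairs, ordered from input to output."""
    rf, jump = 1, 1   # jump = spacing, in input pixels, between adjacent units
    for kernel, stride in layers:
        rf += (kernel - 1) * jump
        jump *= stride
    return rf

conv_pool = [(3, 1), (2, 2)]   # assumed block: 3x3 conv, then 2x2 pool with stride 2

print(receptive_field([(3, 1)]))          # 3
print(receptive_field([(3, 1), (3, 1)]))  # 5
print(receptive_field(conv_pool * 3))     # 22
print(receptive_field(conv_pool * 5))     # 94
```

Under these assumptions, two stacked 3 by 3 convolutions give the 5 by 5 field described above, and five conv-plus-pool blocks already cover a region of roughly 100 by 100 pixels.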

Pooling layers for downsampling and invariance

Convolution preserves spatial resolution (when stride is 1 and padding is appropriate). For efficiency and for robustness to small shifts in the input, we insert pooling layers that reduce the height and width.

The most common operation is 2 by 2 max pooling with stride 2. For every non-overlapping 2 by 2 block we keep only the maximum value and throw away the other three. Spatial dimensions are halved; the number of channels is unchanged.

Here is a concrete 4 by 4 feature map (values chosen for the example) before pooling:

| | 0 | 1 | 2 | 3 |
|---|---|---|---|---|
| 0 | 2.1 | 0.4 | 1.8 | 0.9 |
| 1 | 1.5 | 3.2 | 0.7 | 2.4 |
| 2 | 0.8 | 1.1 | 4.0 | 0.3 |
| 3 | 2.6 | 0.5 | 1.2 | 3.1 |

After 2 by 2 max pooling the 2 by 2 result is:

| | 0 | 1 |
|---|---|---|
| 0 | 3.2 | 2.4 |
| 1 | 2.6 | 4.0 |

The top-left 2 by 2 block had a maximum of 3.2 (row 1, col 1). That single value represents the entire block in the downsampled map.

During backpropagation the gradient arriving at the pooled cell is routed entirely to the winning location inside the 2 by 2 window. The other three locations receive zero gradient for that example. This "winner-take-all" routing is the only special rule max pooling adds to backpropagation; the layer has no weights of its own to update.

Average pooling (taking the mean of the window) is the other frequent choice and distributes the gradient uniformly.
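Both pooling rules can be sketched on the 4 by 4 feature map from the table above. The upstream gradient values are made up for the example, and the max-pooling mask assumes no ties inside a window (a tie would send the gradient to every tied position).

```python
import numpy as np

fmap = np.array([[2.1, 0.4, 1.8, 0.9],
                 [1.5, 3.2, 0.7, 2.4],
                 [0.8, 1.1, 4.0, 0.3],
                 [2.6, 0.5, 1.2, 3.1]])

# blocks[i, j] is the 2x2 window that feeds pooled cell (i, j)
blocks = fmap.reshape(2, 2, 2, 2).transpose(0, 2, 1, 3)
pooled = blocks.max(axis=(2, 3))
print(pooled)

# Assumed upstream gradient, one value per pooled cell
grad_pooled = np.array([[1.0, 2.0],
                        [3.0, 4.0]])

# Max pooling backward: the whole gradient goes to the winning position
winner = blocks == pooled[:, :, None, None]
grad_blocks = winner * grad_pooled[:, :, None, None]
grad_max = grad_blocks.transpose(0, 2, 1, 3).reshape(4, 4)
print(grad_max)

# Average pooling backward: each of the four inputs gets a quarter
grad_avg = np.repeat(np.repeat(grad_pooled, 2, axis=0), 2, axis=1) / 4.0
print(grad_avg)
```

In `grad_max` only four entries are non-zero, one per window, while `grad_avg` spreads the same total gradient evenly across each window.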

A minimal CNN forward pass implemented in NumPy

We now have every primitive needed for a working convolutional classifier. The code below implements a complete (if tiny) CNN that could decide whether a 4 by 4 support-ticket thumbnail contains a damage line or is a clean form scan.

```python
import numpy as np

def conv2d_forward(x, W, b, stride=1):
    """Naive convolution (cross-correlation) with valid padding.

    x: (C_in, H, W), W: (C_out, C_in, kH, kW), b: (C_out,)
    """
    C_out, C_in, kH, kW = W.shape
    H, W_in = x.shape[-2:]
    outH = (H - kH) // stride + 1
    outW = (W_in - kW) // stride + 1
    out = np.zeros((C_out, outH, outW))
    for oc in range(C_out):
        for oh in range(outH):
            for ow in range(outW):
                hs, ws = oh * stride, ow * stride
                patch = x[:, hs:hs + kH, ws:ws + kW]
                out[oc, oh, ow] = np.sum(W[oc] * patch) + b[oc]
    return out

def relu(x):
    return np.maximum(0.0, x)

def maxpool2d(x, k=2, stride=2):
    C, H, W = x.shape
    outH = (H - k) // stride + 1
    outW = (W - k) // stride + 1
    out = np.zeros((C, outH, outW))
    for c in range(C):
        for oh in range(outH):
            for ow in range(outW):
                hs, ws = oh * stride, ow * stride
                patch = x[c, hs:hs + k, ws:ws + k]
                out[c, oh, ow] = np.max(patch)
    return out

# 4x4 grayscale "screenshot" patch (horizontal bar pattern), shape (1, 4, 4)
x = np.array([[[0.05, 0.08, 0.06, 0.07],
               [0.12, 0.92, 0.88, 0.09],
               [0.08, 0.90, 0.93, 0.11],
               [0.06, 0.07, 0.09, 0.05]]], dtype=np.float32)

# Hand-set 3x3 kernel that matches the horizontal bar (shape: out_c, in_c, kH, kW)
W = np.array([[[[-0.6, -0.6, -0.6],
                [ 1.1,  1.1,  1.1],
                [-0.6, -0.6, -0.6]]]], dtype=np.float32)
b = np.array([0.0], dtype=np.float32)

conv = conv2d_forward(x, W, b)           # shape (1, 2, 2): ~0.85, 0.79, 0.82, 0.87
act = relu(conv)                         # unchanged here, all values are positive
pooled = maxpool2d(act, k=2, stride=2)   # shape (1, 1, 1): ~0.87

print("Conv+ReLU feature map:\n", act)
print("After 2x2 max pool:\n", pooled)

# Tiny linear classifier on the single pooled value (row 0: damage, row 1: clean)
W_fc = np.array([[1.5], [-1.2]], dtype=np.float32)
b_fc = np.array([0.1, -0.3], dtype=np.float32)
flat = pooled.reshape(1, -1)
logits = flat @ W_fc.T + b_fc
print("Class logits (damage vs clean):", logits)
```

Running the script produces a feature map with positive responses between roughly 0.79 and 0.87, a pooled value of about 0.87 that preserves the strongest signal, and logits of about 1.41 for "damage" versus about -1.35 for "clean", which push the example toward the "damage" class. Every number is traceable by hand.

The same architecture in PyTorch

The identical forward pass expressed with PyTorch primitives looks like this (weights would normally be learned, not hand-set):

```python
import torch
import torch.nn as nn

model = nn.Sequential(
    nn.Conv2d(in_channels=1, out_channels=1, kernel_size=3, stride=1, padding=0),
    nn.ReLU(),
    nn.MaxPool2d(kernel_size=2, stride=2),
    nn.Flatten(),
    nn.Linear(1, 2)
)

# Same 4x4 input as NumPy example, shape (batch=1, channels=1, 4, 4)
x_torch = torch.tensor([[[[0.05, 0.08, 0.06, 0.07],
                          [0.12, 0.92, 0.88, 0.09],
                          [0.08, 0.90, 0.93, 0.11],
                          [0.06, 0.07, 0.09, 0.05]]]], dtype=torch.float32)

# For illustration we can copy the hand-set weights
with torch.no_grad():
    model[0].weight.copy_(torch.tensor([[[[-0.6, -0.6, -0.6],
                                          [ 1.1,  1.1,  1.1],
                                          [-0.6, -0.6, -0.6]]]]))
    model[0].bias.copy_(torch.tensor([0.0]))
    model[4].weight.copy_(torch.tensor([[1.5], [-1.2]]))
    model[4].bias.copy_(torch.tensor([0.1, -0.3]))

logits_torch = model(x_torch)
print("PyTorch logits:", logits_torch)
```

The numbers match the pure NumPy version (within floating-point tolerance). In real training you would never set weights by hand; you would let torch.optim and nn.CrossEntropyLoss discover them via backpropagation. The important point is that the high-level building blocks (Conv2d, ReLU, MaxPool2d, Linear) are thin wrappers around the same mathematics you just implemented from scratch.

Backpropagation through the special layers

The full story of how gradients are computed for every weight appears in the next article. The two operations we added have simple but important gradient rules.

For max pooling the forward pass records which of the four positions supplied the maximum. On the backward pass the entire incoming gradient for that pooled cell is copied to the winning position; the other three positions receive zero. It is literally a switch that only the active path can use to update earlier weights.

For convolution we need gradients with respect to both the kernel and the input image.

- Gradient with respect to each kernel weight: sum, over every spatial location the kernel was used, the product of the upstream gradient at that output location and the input value that was under the kernel weight. Because the kernel was reused everywhere, its gradient accumulates evidence from the whole image.

- Gradient with respect to the input: the upstream gradient map is convolved with a spatially flipped version of the kernel (with appropriate padding). This is the mathematical adjoint of the forward convolution.

These two rules are exactly what every deep-learning framework implements in its autograd engine for Conv2d and MaxPool2d. Once you understand them, reading the backward code or deriving the chain rule for a full CNN becomes straightforward.
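The two convolution rules above can be sketched for the simplest case: one channel, stride 1, no padding. This is an illustrative NumPy version, not the code any framework actually runs. The function names are hypothetical and the all-ones upstream gradient is an assumption for the example.

```python
import numpy as np

def correlate_valid(x, k):
    """Single-channel cross-correlation, stride 1, no padding."""
    kh, kw = k.shape
    out = np.zeros((x.shape[0] - kh + 1, x.shape[1] - kw + 1))
    for i in range(out.shape[0]):
        for j in range(out.shape[1]):
            out[i, j] = np.sum(x[i:i + kh, j:j + kw] * k)
    return out

def conv_backward(x, k, grad_out):
    """Gradients of a scalar loss w.r.t. kernel and input, given dL/d(output)."""
    kh, kw = k.shape
    # Kernel rule: each weight sums (upstream gradient * input value under it)
    grad_k = correlate_valid(x, grad_out)
    # Input rule: zero-pad the upstream map by K-1, then slide the flipped kernel
    padded = np.pad(grad_out, ((kh - 1, kh - 1), (kw - 1, kw - 1)))
    grad_x = correlate_valid(padded, k[::-1, ::-1])
    return grad_k, grad_x

x = np.array([[0.05, 0.08, 0.06, 0.07],
              [0.12, 0.92, 0.88, 0.09],
              [0.08, 0.90, 0.93, 0.11],
              [0.06, 0.07, 0.09, 0.05]])
k = np.array([[-0.6, -0.6, -0.6],
              [ 1.1,  1.1,  1.1],
              [-0.6, -0.6, -0.6]])
grad_out = np.ones((2, 2))   # assumed upstream gradient, one value per output cell

grad_k, grad_x = conv_backward(x, k, grad_out)
print(grad_k.shape, grad_x.shape)   # (3, 3) (4, 4)
```

The gradient shapes match the kernel and the input respectively, and a finite-difference check on small random arrays is a reasonable way to confirm both rules.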

Modern CNN families

The ideas we just built by hand appeared in production systems decades ago and have been refined ever since.

- LeNet-5 (LeCun et al., 1998) was designed for document recognition: reading handwritten digits on bank checks and forms. It already contained the conv-pool-conv-pool-dense pattern we implemented.

- AlexNet (2012) scaled the same pattern to natural images, replaced saturating activations with ReLU, added dropout, and trained on two GPUs.

- VGG (2014) showed that stacking many small 3 by 3 kernels in a deeper network beats shallower networks with large kernels.

- ResNet (2015) solved the "deeper is harder to train" problem by adding skip connections around groups of layers.

- Later work kept refining the recipe: EfficientNet (2019) scaled depth, width, and input resolution together, and ConvNeXt (2022) applied design ideas borrowed from transformers to a pure convolutional architecture.

CNNs and Vision Transformers: the direct bridge

A Vision Transformer divides an image into non-overlapping patches (commonly 16 by 16), linearly embeds each patch, adds positional information, and processes the sequence with ordinary transformer encoder blocks. There are no convolutions in the original ViT.
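The patch-and-embed step can be sketched in NumPy. This is only the tokenization front end under stated assumptions: the image is random data, the embedding width of 64 is a hypothetical small value, the projection and position vectors are random stand-ins for learned parameters, and the class token and the encoder blocks are left out.

```python
import numpy as np

rng = np.random.default_rng(0)
image = rng.random((224, 224, 3))   # stand-in for an RGB photo, values in [0, 1)
patch = 16
embed_dim = 64                      # hypothetical; chosen small for the example

grid = image.shape[0] // patch      # 14 patches per side
# (14, 16, 14, 16, 3) -> (14, 14, 16, 16, 3) -> (196, 768)
patches = (image.reshape(grid, patch, grid, patch, 3)
                .transpose(0, 2, 1, 3, 4)
                .reshape(grid * grid, patch * patch * 3))

# Random, untrained stand-ins for the learned projection and position embeddings
W_embed = rng.normal(scale=0.02, size=(patch * patch * 3, embed_dim))
pos_embed = rng.normal(scale=0.02, size=(grid * grid, embed_dim))

tokens = patches @ W_embed + pos_embed
print(patches.shape, tokens.shape)  # (196, 768) (196, 64)
```

Each row of `tokens` corresponds to one 16 by 16 patch, and the resulting sequence of 196 rows is what the transformer encoder would process.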

CNNs bake three strong assumptions (inductive biases) into the architecture:

- Locality: a neuron only needs to look at a small neighborhood.

- Weight sharing: the same detector is useful at every location.

- Translation equivariance: a shift in the input produces a predictable shift in the feature map.

Vision Transformers must discover equivalent behavior from data. With enough images and compute they often win on accuracy. With limited data or strict latency constraints the CNN biases remain extremely valuable.
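The translation-equivariance property listed above is easy to check numerically. In the sketch below, a made-up 6 by 6 image containing a short bright bar is shifted one pixel to the right, and the feature map shifts by the same amount. The helper name and the test image are illustrative assumptions.

```python
import numpy as np

def correlate_valid(x, k):
    """Single-channel cross-correlation, stride 1, no padding."""
    kh, kw = k.shape
    out = np.zeros((x.shape[0] - kh + 1, x.shape[1] - kw + 1))
    for i in range(out.shape[0]):
        for j in range(out.shape[1]):
            out[i, j] = np.sum(x[i:i + kh, j:j + kw] * k)
    return out

kernel = np.array([[-0.6, -0.6, -0.6],
                   [ 1.1,  1.1,  1.1],
                   [-0.6, -0.6, -0.6]])

image = np.zeros((6, 6))
image[2, 1:4] = 1.0                          # short horizontal bar
shifted = np.roll(image, shift=1, axis=1)    # same bar, one pixel to the right

out = correlate_valid(image, kernel)
out_shifted = correlate_valid(shifted, kernel)

# Away from the left border, the new map is the old map moved one column right
print(np.allclose(out_shifted[:, 1:], out[:, :-1]))   # True
```

No training was involved: the property follows from reusing the same kernel at every position, which is exactly the behavior a Vision Transformer has to learn from data.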

In today's LLM-centric multimodal systems you will encounter both. The image encoder that turns a customer-uploaded photo into tokens the language model can attend to is frequently a Vision Transformer or a hybrid CNN-ViT backbone. The lessons about kernels, feature maps, receptive fields, and gradient flow through conv and pool layers remain the foundation for understanding why those encoders succeed or fail on support-ticket photos, invoices, and product images.

Key takeaways

- Convolution replaces a dense matrix multiply with a small, shared, sliding template. The dramatic reduction in parameter count is what makes image-scale models feasible.

- Each filter produces a feature map; stacking filters gives the next layer a multi-channel volume to work with.

- Pooling downsamples while adding limited translation invariance and a simple "winner-take-all" gradient rule.

- Receptive fields grow with depth, turning local edge detectors into detectors for entire objects or document regions.

- The same backpropagation engine works for both dense and convolutional layers; only the local gradient rules for conv and pool change.

- CNNs and Vision Transformers represent two different bets on what structure should be hard-coded versus learned. Both families appear in real production multimodal LLM stacks.

You now possess working mental models and runnable code for the two fundamental layer types that appear in almost every modern vision or multimodal system: the fully-connected layer and the convolutional layer. The natural next question is how any collection of these layers discovers the right values for all of its kernels and weights from labeled examples of support tickets, forms, and product photos.

Evaluation Rubric

1. Explains convolution as a small shared-weight kernel sliding over an image to produce a feature map

2. Computes output size given input size, kernel size, stride, and padding

3. Traces a complete hand-worked convolution and max-pool on a tiny numeric image grid

4. Describes how receptive fields expand with depth and why that enables hierarchical visual features (edges → textures → objects)

5. Implements a minimal Conv2D + ReLU + Pool + Linear forward pass in pure NumPy and explains each step

6. Shows how gradients flow backward through pooling (only to the argmax) and through convolution (flipped kernel)

7. Contrasts CNN inductive biases (locality, weight sharing, translation equivariance) with Vision Transformers and explains when each wins

Common Pitfalls

- Forgetting that convolution output size depends on padding and stride (many people assume same size as input)

- Treating pooling as "just downsampling" without realizing it also routes gradients only through the max location

- Using large kernels (5×5 or 7×7) everywhere instead of stacking small 3×3 kernels (VGG showed the latter is more efficient and expressive)

- Assuming deeper always means better without residuals or proper initialization (plain 20+ layer CNNs often fail to train)

- Flattening the entire feature volume too early and losing all spatial structure before the final classifier

- Ignoring that modern "CNNs" like ConvNeXt have borrowed LayerNorm, GELU, and large kernels from transformers

Key Concepts Tested

- Convolution as cross-correlation: kernel sliding, stride, padding, output size formula

- Feature maps and multi-channel outputs (filters detect different patterns)

- Receptive field growth through stacked conv layers

- Max pooling and average pooling for spatial downsampling and invariance

- Parameter efficiency of CNNs vs fully-connected layers on images

- Hand-worked forward pass on a 4x4 image with 3x3 kernel

- Backward pass through conv and pool (gradient routing)

- Modern CNN milestones (LeNet, AlexNet, VGG, ResNet) and inductive biases

- Inductive bias comparison: CNN local+shared vs Vision Transformer global attention

Next Step

Next: Continue to Training & Backpropagation

With concrete forward passes through both dense layers and convolutional layers (including on actual support-ticket image patches) now in hand, backpropagation shows exactly how to compute the gradient for every single kernel weight and connection so the model can improve after each misclassified photo or misrouted document.

References