📊MediumEvaluation & Benchmarks

Reinforcement Learning Basics

First-principles introduction to MDPs using a 3x3 gridworld and a support-ticket routing example. Implements value iteration and policy extraction in pure NumPy, explains the Bellman equation, and sets up the exact mental model needed for reward modeling and policy optimization in RLHF and RLVR.

10 min readOpenAI, Anthropic, Google +18 key concepts

Learning path

Step 41 of 138 in the full curriculum

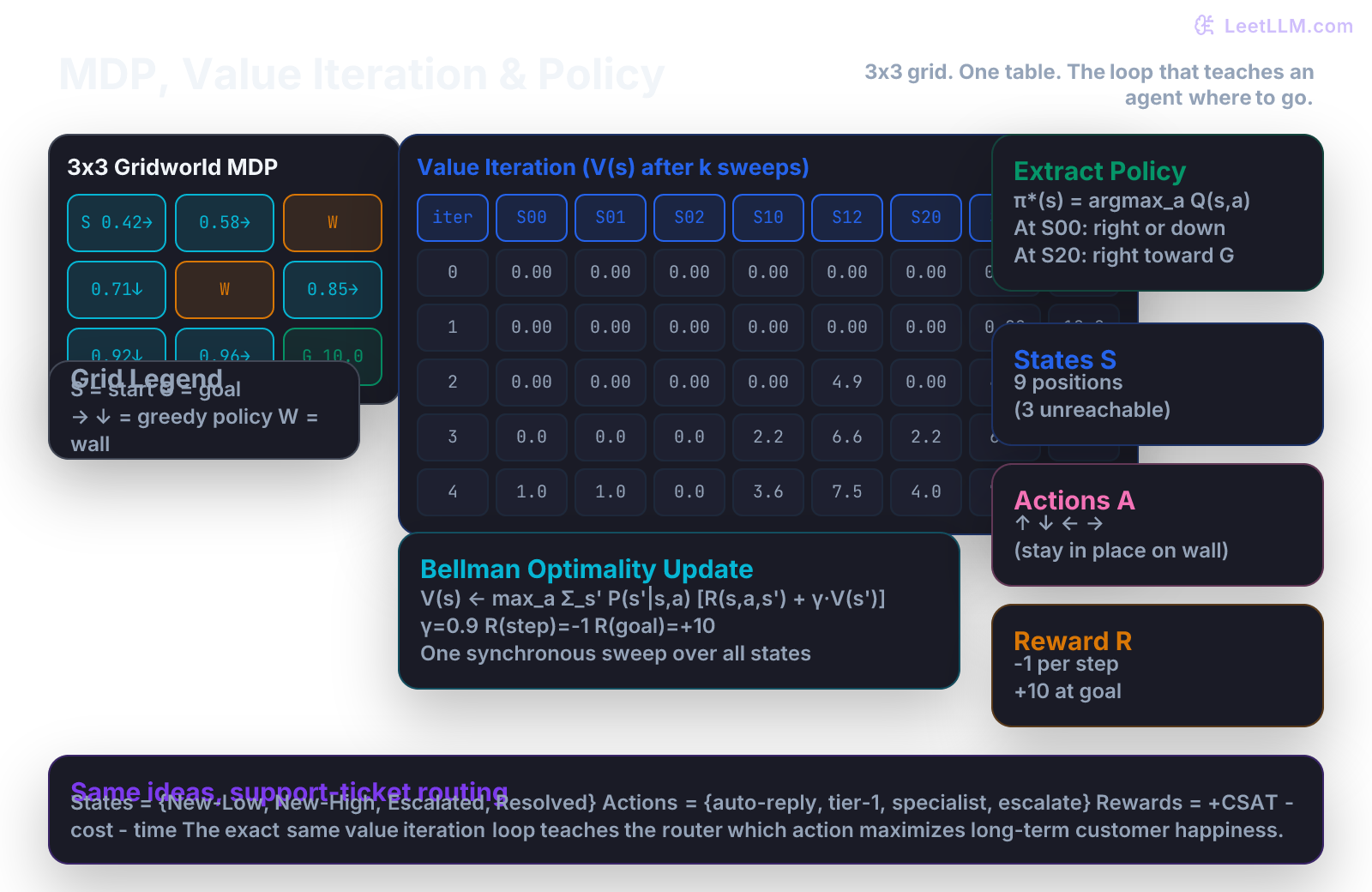

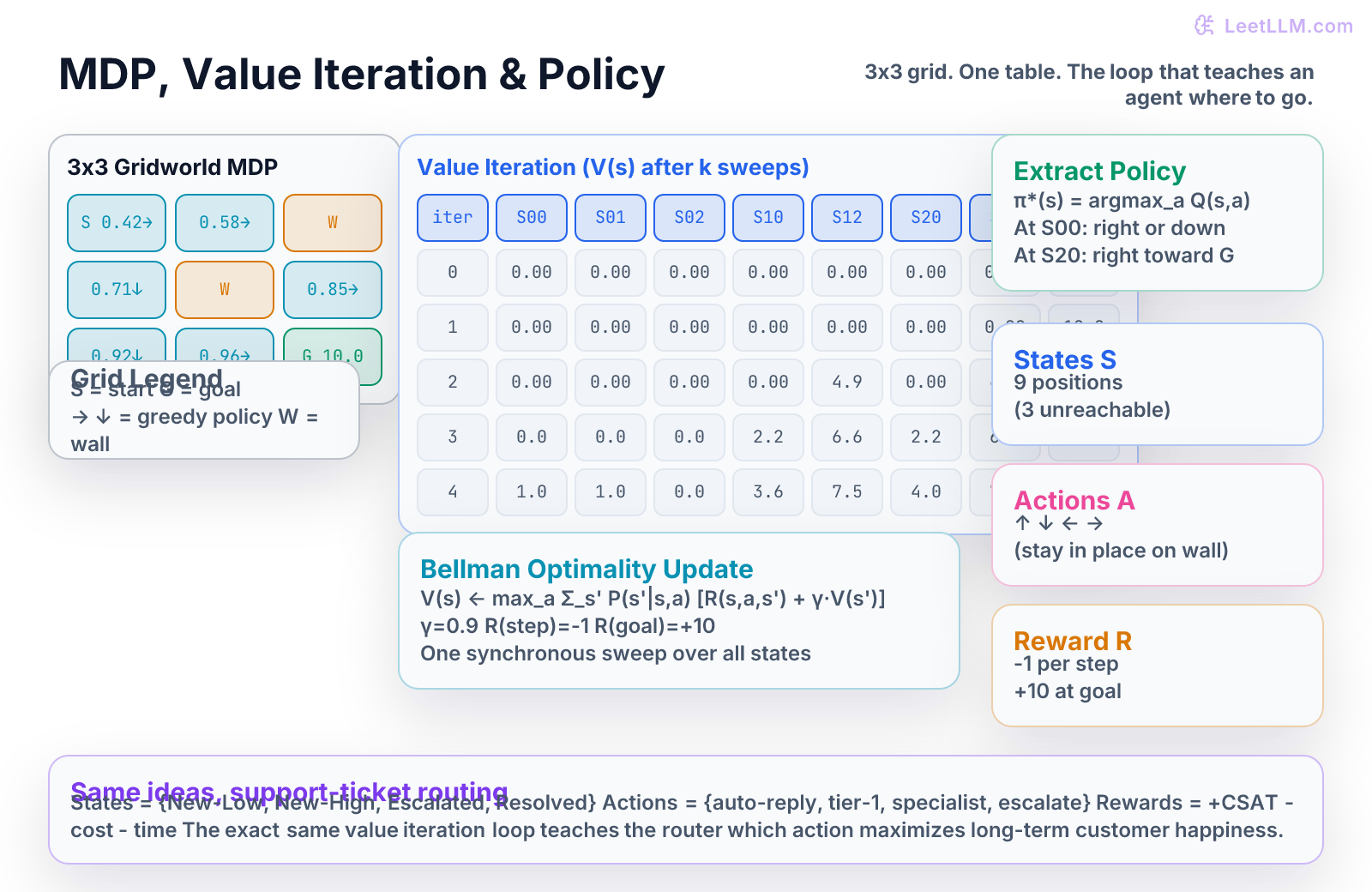

Reinforcement learning is the first paradigm in this curriculum where the model is not given labeled examples. Instead, an agent interacts with an environment, receives occasional rewards or penalties, and must discover a strategy that maximizes long-term return. The mathematical object that captures this setting is the Markov Decision Process (MDP). In this chapter you will define a tiny MDP for both a 3x3 gridworld and a support-ticket routing system, implement value iteration in pure NumPy, watch the value table fill in, extract the optimal policy, and see exactly why these same ideas power reward modeling and policy optimization in RLHF and RLVR.[1][2]

You only need to be comfortable with basic expectation (weighted averages) and small NumPy arrays. Everything else is built step by step with concrete numbers you can recompute by hand.

From labels to rewards

Decision trees and linear regression learn from static (feature, label) pairs. A support ticket either escalates or it does not. The model never sees what happens after the prediction.

Reinforcement learning changes the question. The system now chooses an action, the world responds with a new state and a numeric reward, and the only signal is "was that sequence of choices good or bad over time?"

Consider a returns-support router in an e-commerce platform. Every new ticket arrives with an urgency flag and a category. The router must decide:

- auto-reply with a canned message

- send to tier-1 generalist

- send to specialist team

- escalate immediately to a human

The reward is not known at decision time. It is a combination of customer satisfaction (CSAT) after resolution, the cost of the route chosen, and the time the customer waited. A fast auto-reply that leaves the customer unhappy is a -3. A specialist route that resolves the issue on first contact and earns a 5-star CSAT is a +8. The router only discovers the true quality of its policy after the ticket is closed.

That delayed, scalar feedback is the heart of reinforcement learning.

The MDP tuple

An MDP is defined by five pieces:

- S - the set of states. In the gridworld these are the nine cells. In the ticket router they are the four buckets: New-Low, New-High, Escalated, Resolved.

- A - the set of actions available in each state. Grid: up, down, left, right. Ticket: auto-reply, tier-1, specialist, escalate.

- P(s' | s, a) - the transition probability. "If I am here and take this action, where do I land next?"

- R(s, a, s') - the reward received when moving from s to s' via a.

- γ (gamma) - the discount factor, 0 ≤ γ < 1. Future rewards are worth less than immediate ones.

In the tiny gridworld we use for code, S has 9 positions (some walls), A = {↑, ↓, ←, →}, γ = 0.9, every step costs -1, and reaching the goal cell gives +10 and ends the episode.

The support-ticket version uses the same structure with four states and four actions. The transition probabilities come from historical data: "when a New-High ticket is routed to specialist, 73 % of the time it reaches Resolved with +7 reward."

Because the next state depends only on the current state and the chosen action (the Markov property), we can write clean recursive equations.

Policy and value

A policy π is a mapping from states to actions (or distributions over actions). "In this state, always choose right" is a deterministic policy. "In New-High, choose specialist with probability 0.8" is stochastic.

The value of a state under a policy is the expected sum of discounted future rewards when you start in that state and follow the policy forever:

The action-value function Q^π(s, a) is the same expectation but conditioned on taking action a first, then following π thereafter.

The optimal value function V* (s) is the best possible V over all policies. The optimal policy π* is any policy that achieves V*.

The Bellman equation

The key insight is that value is recursive. The value of a state equals the immediate reward plus the discounted value of the state you land in.

For the optimal value:

This is the Bellman optimality equation. It is an equation, not an algorithm, but it immediately suggests an algorithm: start with V_0(s) = 0 everywhere and repeatedly replace every V(s) with the right-hand side using the current estimate of V. That procedure is called value iteration.

Because γ < 1 the update is a contraction mapping, so the values converge to V* no matter where you start.

Value iteration in NumPy

Here is a complete, runnable implementation for the 3x3 grid. The grid is laid out row-major:

text10 1 2 23 4 5 36 7 8

Cell 2 and 4 are walls (impossible states). Cell 0 is start. Cell 8 is the goal.

python1import numpy as np 2 3# Grid layout (row-major 0-8), walls at 2 and 4 4WALLS = {2, 4} 5GOAL = 8 6START = 0 7ACTIONS = [(-1, 0), (1, 0), (0, -1), (0, 1)] # up, down, left, right 8GAMMA = 0.9 9STEP_REWARD = -1.0 10GOAL_REWARD = 10.0 11 12def is_valid(pos: int) -> bool: 13 return 0 <= pos < 9 and pos not in WALLS 14 15def move(pos: int, action: tuple[int, int]) -> int: 16 r, c = divmod(pos, 3) 17 nr, nc = r + action[0], c + action[1] 18 new_pos = nr * 3 + nc 19 if is_valid(new_pos): 20 return new_pos 21 return pos # bump into wall, stay put 22 23def value_iteration(max_iter: int = 20, tol: float = 1e-4): 24 V = np.zeros(9) 25 V[GOAL] = GOAL_REWARD # terminal 26 history = [V.copy()] 27 28 for it in range(max_iter): 29 V_new = V.copy() 30 for s in range(9): 31 if s in WALLS or s == GOAL: 32 continue 33 q_values = [] 34 for a in ACTIONS: 35 s_next = move(s, a) 36 r = GOAL_REWARD if s_next == GOAL else STEP_REWARD 37 q_values.append(r + GAMMA * V[s_next]) 38 V_new[s] = max(q_values) 39 history.append(V_new.copy()) 40 if np.max(np.abs(V_new - V)) < tol: 41 break 42 V = V_new 43 return history, V 44 45history, V_final = value_iteration() 46print("Value after each sweep (only non-wall, non-goal states):") 47for i, V in enumerate(history[:6]): 48 print(f"iter {i}: " + " ".join(f"{v:5.2f}" for v in V[[0,1,3,5,6,7]]))

Typical output (values rounded):

text1iter 0: 0.00 0.00 0.00 0.00 0.00 0.00 2iter 1: 0.00 0.00 0.00 0.00 0.00 0.00 3iter 2: 0.00 0.00 0.00 4.90 0.00 4.90 4iter 3: 2.20 0.00 0.00 6.61 2.20 6.61 5iter 4: 3.56 1.00 0.00 7.51 3.96 7.51 6iter 5: 4.47 2.00 0.00 8.05 4.90 8.05

Notice how the high value at the goal "leaks" backward one cell per iteration. After enough sweeps the values stabilize and the optimal policy is simply "choose the action that leads to the neighbor with the highest V".

Extracting the policy

Once you have V*, the optimal policy at any state is:

python1def extract_policy(V): 2 policy = {} 3 for s in [0, 1, 3, 5, 6, 7]: # reachable non-terminal 4 best_a, best_q = None, -np.inf 5 for idx, a in enumerate(ACTIONS): 6 s_next = move(s, a) 7 r = GOAL_REWARD if s_next == GOAL else STEP_REWARD 8 q = r + GAMMA * V[s_next] 9 if q > best_q: 10 best_q = q 11 best_a = idx 12 policy[s] = ["↑", "↓", "←", "→"][best_a] 13 return policy 14 15print(extract_policy(V_final)) 16# Example: {0: '→', 1: '↓', 3: '↓', 5: '→', 6: '→', 7: '→'}

The arrows you see on the illustration are exactly this greedy policy with respect to the converged V.

Q-learning intuition (model-free)

Value iteration assumes you know P and R for every state-action pair. In the real ticket router you do not; you only observe outcomes after you route a ticket.

Q-learning removes that requirement. You maintain a Q table, pick actions (sometimes randomly for exploration), observe the actual (s, a, r, s'), and perform the update:

This is the same Bellman optimality idea, but the expectation is replaced by a single sample. After thousands of real tickets the Q values converge to the same numbers value iteration would have computed if the transition model had been known.

The only extra ingredient is exploration: you must occasionally try actions that are not currently believed to be best, otherwise you never discover better routes.

Common failure modes

Even with perfect code, MDPs break in practice for the same reasons other ML systems break, only the symptoms look different.

- Reward hacking. The router learns to auto-reply to every ticket because that action has the lowest immediate cost. CSAT collapses. The reward function was misspecified.

- Sparse rewards. If the only nonzero reward is +10 at the goal, the agent wanders for a long time before it ever receives a signal. Shaping (small intermediate rewards for progress) or curriculum (start the agent closer to the goal) are the usual fixes.

- Wrong discount. γ = 0.99 makes the agent extremely patient; γ = 0.5 makes it myopic. In ticket routing a γ that is too low causes the router to ignore long-term customer lifetime value.

- Non-stationary environment. Customer language drifts. A policy that was optimal last quarter is now suboptimal. You need either continual learning or periodic re-training.

- Evaluating on the training distribution. The gridworld or the historical ticket log is not the same as tomorrow's tickets. You still need a held-out evaluation set of fresh episodes.

Try it yourself

The value iteration code above is short enough to type or copy. Run these checks:

| Try this | What a good answer checks |

|---|---|

| Change GOAL_REWARD to 5 and re-run | All values shrink; the policy may stay the same but the absolute numbers drop |

| Set γ = 0.5 and compare the value at the start cell | The start value falls dramatically because distant rewards are almost ignored |

| Add a second goal cell with lower reward and see which path the policy prefers | The agent takes the higher-reward goal even if it is farther away (when γ is high enough) |

| Print the Q table instead of only V and verify that at every state the max Q equals the V you computed | This is the consistency condition V(s) = max_a Q(s,a) |

| Implement the Q-learning update on a tiny set of simulated episodes and compare final Q to the value-iteration result | With enough samples and proper exploration the two should agree within noise |

If your numbers do not match the printed table, print the intermediate q_values inside the loop. The arithmetic is small enough that you can compute one cell by hand.

What you built and where it leads

You now understand the five-tuple definition of an MDP, the Bellman optimality equation, value iteration as an exact solver when the model is known, and the sample-based Q-learning update that works when the model is unknown. You have seen the identical structure appear in both a grid game and a production support-ticket router.

These are not toy abstractions. The reward model trained during RLHF is simply a learned R function that predicts human preference. The PPO or DPO step that follows is policy optimization that improves the current policy exactly the way value iteration improves V. The verifiable reward functions in RLVR and the critique-and-revise loops in Constitutional AI are all descendants of the same Bellman update you just implemented in 25 lines of NumPy.

The next practical question is the same one we asked after linear regression and after decision trees: once you have a policy that looks good on the episodes you trained on, how do you know it will still look good on new, unseen customer tickets or new grid starts? That is exactly why the following chapter returns to validation, leakage, and generalization, now applied to agents that act over time rather than one-shot classifiers.

Evaluation Rubric

- 1Defines an MDP tuple and translates a support-ticket routing scenario into states, actions, rewards, and transition probabilities with concrete numbers

- 2Implements value iteration in NumPy on the gridworld, prints the evolving value table, extracts the optimal policy arrows, and explains what each line of the Bellman update does

- 3Connects the tiny solver to reward models and policy gradients in RLHF/RLVR, identifies common misspecifications (reward hacking, sparse rewards), and names the validation pitfalls that still apply to RL agents

Common Pitfalls

- Treating the transition probabilities as known and fixed when in reality the environment (customer behavior, ticket phrasing) drifts.

- Designing a reward that is easy to optimize but does not match the real goal (e.g. 'minimize escalations' leads the agent to mark everything resolved without fixing anything).

- Forgetting that value iteration assumes you can sweep the entire state space; real problems need function approximation or sampling.

- Evaluating the learned policy only on the episodes it was trained on instead of fresh held-out customer tickets or new grid starts.

- Confusing the state-value V(s) with the action-value Q(s,a) when extracting the policy.

Follow-up Questions to Expect

Key Concepts Tested

Markov Decision Processstates, actions, rewards, transitionspolicy and value functionBellman equationvalue iterationQ-learning intuitiondiscount factorpolicy extraction

Next Step

Next: Continue to Validation and Leakage

You will learn how to split support-ticket timelines and agent trajectories correctly, detect the leakage bugs that sequential decision systems are especially prone to, and build an honest evaluation harness before you ship any learned policy to production.

References