
Data Labeling and Feedback

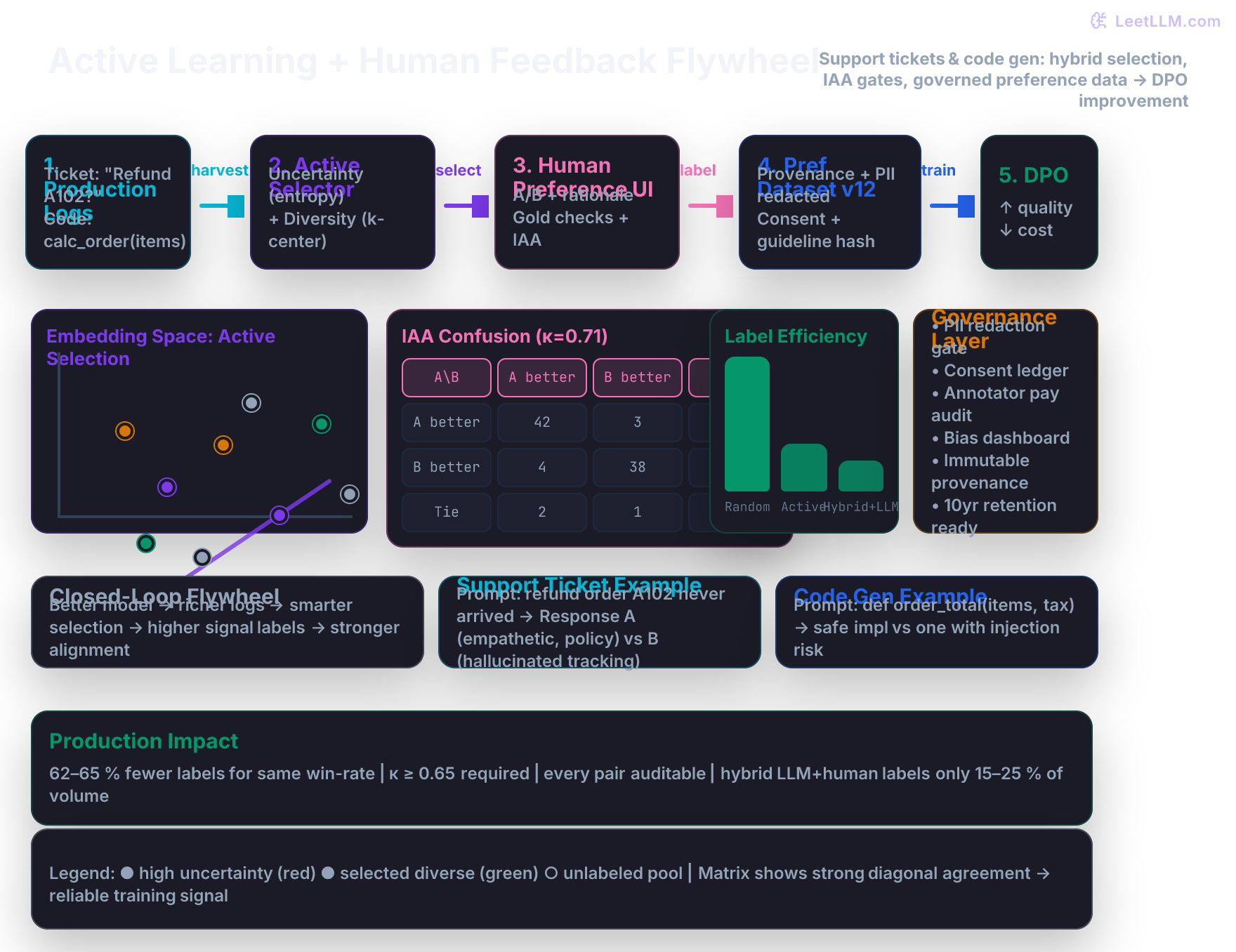

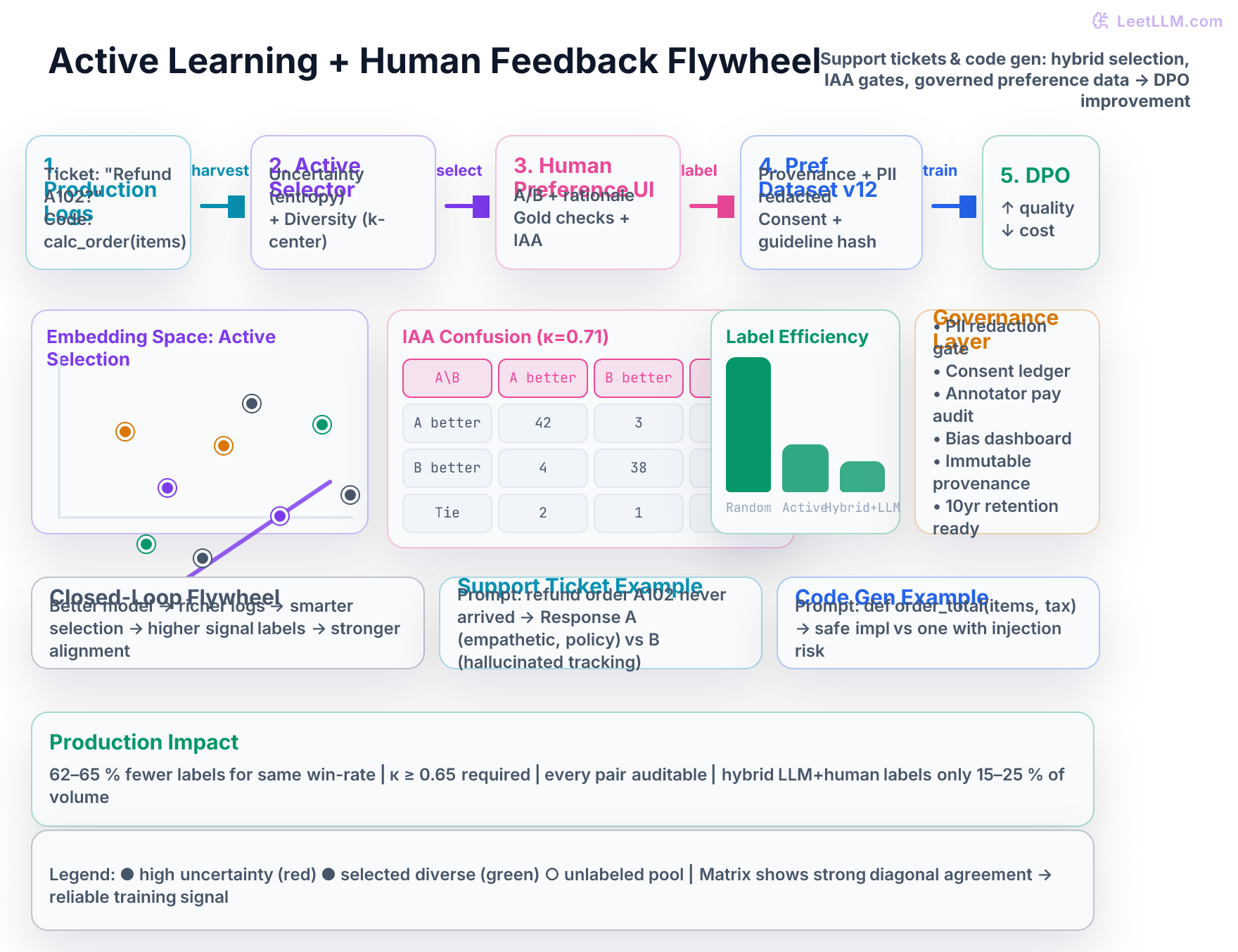

Design production-grade data labeling and human feedback systems for LLMs. Master active learning (uncertainty sampling, embedding diversity, hybrid strategies), inter-annotator agreement (Cohen's kappa, Krippendorff's alpha), RLHF/DPO preference collection interfaces with rigorous quality control, closed-loop data flywheels, and the governance, privacy, cost, and audit layers required for sustainable, compliant data operations.



Data Labeling, Human Feedback, and Active Learning Systems

Production LLM systems that answer support tickets or generate backend code for an e-commerce platform improve fastest when they receive a steady stream of high-signal human feedback. The bottleneck is rarely model architecture - it is the cost, quality, governance, and selection of the data that tells the model what “good” actually looks like.

Consider ShopForge, an e-commerce company whose AI assistant handles two critical workloads: customer support tickets (refund requests for order A102, shipping disputes, account lock explanations) and code generation tasks (safe Python functions that compute order totals with tax, discounts, and fraud checks; inventory sync scripts for merchants). Early versions were fine-tuned on synthetic data generated by the pipelines from the previous lesson. They performed adequately on common cases. Then the long tail started to matter: angry merchants disputing $40k in fraud, customers whose accounts were locked mid-holiday rush, and edge-case code requests that required secure, policy-compliant implementations, where the model still hallucinated or produced unsafe code.

ShopForge realized they needed real human preference data at scale for both the support and code domains. Labeling every production conversation and code request randomly would cost six figures per quarter and still produce noisy training signals because most examples were already handled well by the current model. The solution was a governed, active-learning-powered data flywheel that selects the right 15–25 % of logs for human review, routes them through a carefully instrumented preference interface, enforces inter-annotator agreement (IAA), and feeds the resulting preference pairs directly into DPO and reward-model training loops.[1]

This article teaches you how to build exactly that system, continuing directly from the synthetic data generation work you completed in the prior lesson.

Why Random Labeling Is Too Expensive - Especially for Support + Code Workloads

A single high-quality pairwise preference annotation (two model responses for the same prompt, plus the chosen/rejected decision and optional rationale) typically costs $0.60–$1.20 fully loaded when you include rater wages, platform fees, quality control overhead, management, and re-labels. At 50,000 examples that is already $30k–$60k before you even start training.

Worse, most of those labels are low value. The model already knows how to answer “What is my current balance?” or “Write a simple order_total function” correctly. The labels worth paying for are the ambiguous, high-stakes, or distribution-shifting cases that the current policy still gets wrong: angry customers whose refund request hinges on a nuance of policy, or code that must handle fraud flags without introducing injection vectors.

Active learning changes the economics dramatically. Instead of labeling uniformly at random, you score every unlabeled production example by how much it is expected to improve the model, then label only the top slice. Teams that run this well commonly report reaching the same final win-rate or reward-model accuracy with roughly 40–70 % fewer human labels.

💡 Key insight: The synthetic data pipelines from the previous lesson gave you volume from a small human seed. Active learning + human feedback gives you precision. The two techniques are complementary: use synthetic data for the broad base, then let production traffic + active selection surface the exact tail cases that need human judgment.

Labeling Platforms Landscape

You have three realistic choices for the human side of the flywheel:

| Platform / Approach | Best For | Pricing Model | Active Learning Integration | Governance Features | When to Choose |
|---|---|---|---|---|---|
| Scale AI, Surge AI, Appen | High volume, domain-expert raters (legal, medical, code review), fast ramp | $0.80–$3.50 per task + platform fee | API hooks for priority queues | Strong audit exports, rater demographics, SLA quality | First 100k labels or highly specialized domains (secure code review) |
| Label Studio (self-hosted) + Prolific / Surge | Full control, sensitive customer data, custom UI | Infrastructure + $0.30–$1.00 per annotation | You implement the selector yourself | Full provenance, VPC deployment, custom PII redaction | When support tickets contain PII or proprietary code that cannot leave your environment |
| Internal + Amazon MTurk / Prolific | Lowest cost, high control over UI and guidelines | Lowest raw wages + your engineering time | Full ownership of selection logic | You build everything | Mature teams with a dedicated data platform and steady volume |

A common pattern is to start with a managed provider (such as Scale or Surge) to validate the flywheel end-to-end on support tickets and code tasks, then bring high-volume or regulated workloads in-house once the selection, QC, and audit pipelines are solid.

Inter-Annotator Agreement (IAA) - Measuring When Humans Actually Agree

Human feedback is only useful if the humans mostly agree on what “better” means. Before any preference pair enters your training set you must measure and enforce agreement.

Common metrics:

- Percent agreement - simple but overestimates when classes are imbalanced (most tickets are “A is clearly better”).

- Cohen’s kappa (κ) - measures agreement beyond what chance alone would produce, for two annotators assigning categorical labels (e.g., A better / B better / Tie). κ > 0.6 is usually the minimum for production; 0.7+ is comfortable.

- Fleiss’ kappa - generalization for >2 annotators.

- Krippendorff’s alpha - handles any number of annotators, missing labels, and nominal, ordinal, or interval scales; often preferred for production preference work.
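Krippendorff's alpha is the least intuitive of these metrics, so here is a minimal from-scratch sketch for nominal labels, treating A better / B better / Tie as unordered categories. It builds the coincidence matrix from every item with at least two labels, which is how missing annotations are tolerated. The function name and the input layout (one list of labels per item, `None` for a missing label) are illustrative assumptions; cross-check the result against an established implementation before gating real batches on it.

```python
from collections import Counter
from itertools import permutations
from typing import Optional, Sequence


def krippendorff_alpha_nominal(units: Sequence[Sequence[Optional[str]]]) -> float:
    """Nominal Krippendorff's alpha.

    `units` holds one list per item; each entry is one annotator's label,
    or None when that annotator did not label the item (hypothetical layout).
    """
    # Coincidence matrix: every ordered pair of labels from different annotators
    # on the same item, each weighted by 1 / (m_u - 1).
    coincidence: Counter = Counter()
    for labels in units:
        values = [v for v in labels if v is not None]
        m = len(values)
        if m < 2:
            continue  # items with fewer than two labels cannot be paired
        for a, b in permutations(values, 2):
            coincidence[(a, b)] += 1.0 / (m - 1)

    # Marginal total per category and total number of pairable values.
    n_c: Counter = Counter()
    for (a, _b), weight in coincidence.items():
        n_c[a] += weight
    n = sum(n_c.values())

    observed = sum(w for (a, b), w in coincidence.items() if a != b)
    expected = sum(n_c[a] * n_c[b] for a in n_c for b in n_c if a != b)
    if expected == 0:
        return float("nan")  # only one category was ever used: alpha is undefined
    # alpha = 1 - D_o / D_e with D_o = observed / n and D_e = expected / (n (n - 1))
    return 1.0 - (n - 1) * observed / expected


# Three annotators on four items; None marks a missing label.
batch = [
    ["A", "A", None],
    ["B", "B", "B"],
    ["A", "B", "A"],
    ["tie", "tie", None],
]
print(round(krippendorff_alpha_nominal(batch), 3))
```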

Production rule of thumb: Require average pairwise kappa or Krippendorff’s alpha ≥ 0.65 on a gold subset for every batch. If a rater’s personal kappa with the majority falls below 0.5 on gold items, pause their work and retrain them on the guideline.
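The per-rater rule can be sketched with scikit-learn's `cohen_kappa_score`. This version compares each rater with the majority label of the *other* raters on each gold item (a leave-one-out majority, which is our reading of the rule) and skips items where the others tie. `raters_to_pause` and its dictionary input are hypothetical names, not part of any platform API.

```python
from collections import Counter
from typing import Dict, List

from sklearn.metrics import cohen_kappa_score

LABELS = ["A", "B", "tie"]


def raters_to_pause(gold_labels: Dict[str, List[str]], threshold: float = 0.5) -> Dict[str, float]:
    """Return {rater: kappa} for raters whose kappa with the majority is below threshold.

    gold_labels maps each rater to their labels on the same gold items, in the same order.
    """
    raters = list(gold_labels)
    n_items = len(gold_labels[raters[0]])
    paused = {}
    for rater in raters:
        own, majority = [], []
        for i in range(n_items):
            votes = Counter(gold_labels[other][i] for other in raters if other != rater)
            (top, top_count), *rest = votes.most_common()
            if rest and rest[0][1] == top_count:
                continue  # no clear majority among the other raters
            own.append(gold_labels[rater][i])
            majority.append(top)
        if not own:
            continue  # nothing to compare against
        kappa = cohen_kappa_score(own, majority, labels=LABELS)
        if kappa < threshold:
            paused[rater] = kappa
    return paused


# Hypothetical gold batch: r4 disagrees with the consensus on every item.
gold = {
    "r1": ["A", "B", "tie", "A", "B", "A", "tie", "B"],
    "r2": ["A", "B", "tie", "A", "B", "A", "tie", "B"],
    "r3": ["A", "B", "tie", "A", "B", "A", "tie", "B"],
    "r4": ["B", "A", "A", "tie", "tie", "B", "B", "A"],
}
print(raters_to_pause(gold))
```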

Low IAA is almost always a guideline or task-design problem, not a rater problem. Clarify the decision criteria, add more gold examples that illustrate the hard boundary cases (e.g., “polite but evasive” vs “direct and helpful” on a support ticket, or “secure but verbose” vs “concise but has a subtle timing side-channel” on a code task), and publish the exact policy version that every rater sees.

Worked Example: Computing Cohen’s Kappa on Support + Code Items

Suppose three annotators each label the same 120 items, a mix of support tickets and code requests. You build a confusion matrix between Annotator 1 (rows) and Annotator 2 (columns) on the three-way decision (A better, B better, Tie):

| Annotator 1 (rows) / Annotator 2 (columns) | A better | B better | Tie |
|---|---|---|---|
| A better | 42 | 3 | 1 |
| B better | 4 | 38 | 2 |
| Tie | 2 | 1 | 27 |

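Observed agreement: \( p_o = (42 + 38 + 27) / 120 = 107/120 \approx 0.892 \)

Chance agreement \( p_e \) comes from the marginals. Annotator 1's row totals are 46, 44, and 30; Annotator 2's column totals are 48, 42, and 30. So

\[ p_e = \frac{46 \cdot 48 + 44 \cdot 42 + 30 \cdot 30}{120^2} = \frac{4956}{14400} \approx 0.344, \qquad \kappa = \frac{p_o - p_e}{1 - p_e} = \frac{0.892 - 0.344}{1 - 0.344} \approx 0.83 \]

This passes the 0.65 threshold. You would still investigate the 13 off-diagonal disagreements; many turn out to be support-ticket items where one rater valued “empathy” more than the other. You update the guideline with a clarifying sentence and a new gold example.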
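To check the arithmetic, or to recompute it for every rater pair in a nightly job, here is a minimal NumPy sketch of \( \kappa = (p_o - p_e)/(1 - p_e) \), where \( p_e \) is the dot product of the two annotators' marginal label rates. The function name is illustrative. Run on the matrix from this example, it gives κ ≈ 0.835.

```python
import numpy as np


def cohen_kappa_from_confusion(matrix) -> float:
    """Cohen's kappa from a square confusion matrix (rows: annotator 1, cols: annotator 2)."""
    m = np.asarray(matrix, dtype=float)
    total = m.sum()
    p_o = np.trace(m) / total            # observed agreement (diagonal share)
    row_marg = m.sum(axis=1) / total     # annotator 1 label rates
    col_marg = m.sum(axis=0) / total     # annotator 2 label rates
    p_e = float(row_marg @ col_marg)     # agreement expected by chance
    return (p_o - p_e) / (1 - p_e)


# Rows and columns: A better, B better, Tie (counts from the worked example)
confusion = [
    [42, 3, 1],
    [4, 38, 2],
    [2, 1, 27],
]
kappa = cohen_kappa_from_confusion(confusion)
print(f"kappa = {kappa:.3f}")  # kappa = 0.835
```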

Active Learning Strategies That Actually Work for LLMs (Support + Code Domains)

Three families are a useful starting set in production labeling systems. All of them work better when support-ticket text and code (via AST or code-embedding features) share one embedding space, so a single selector can compare examples from both domains.

1. Uncertainty Sampling

Pick examples where the current model (or reward model) is least confident.

For a reward model head you can use:

- Least confident: \( 1 - \max_y p(y) \)

- Margin: \( p(\text{top1}) - p(\text{top2}) \) (a small margin means high uncertainty, so rank by \( 1 - \text{margin} \))

- Entropy: \( -\sum_y p(y) \log p(y) \)

For generative models without a calibrated head you approximate uncertainty with average token log-probability of the continuation, disagreement across multiple samples (self-consistency variance), or an LLM-as-judge confidence score.
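The three formulas translate directly to NumPy for a classifier-style head that outputs one probability vector per item. The function names and the 3-way (A better / B better / Tie) head are illustrative assumptions; `self_consistency_disagreement` is one simple way to implement the sampling-variance approximation for generative models.

```python
from collections import Counter
from typing import Sequence

import numpy as np


def least_confident(probs: np.ndarray) -> np.ndarray:
    """1 - max_y p(y); higher = more uncertain. probs has shape (N, C)."""
    return 1.0 - probs.max(axis=1)


def margin(probs: np.ndarray) -> np.ndarray:
    """p(top1) - p(top2); LOWER = more uncertain, so rank by 1 - margin."""
    top2 = np.sort(probs, axis=1)[:, -2:]
    return top2[:, 1] - top2[:, 0]


def entropy(probs: np.ndarray, eps: float = 1e-12) -> np.ndarray:
    """-sum_y p(y) log p(y); higher = more uncertain."""
    return -(probs * np.log(probs + eps)).sum(axis=1)


def self_consistency_disagreement(sampled_answers: Sequence[str]) -> float:
    """Share of sampled answers that differ from the most common one.

    Compare normalized answers (e.g. the support action taken, or a test
    pass/fail outcome for code), since raw generations rarely match verbatim.
    """
    counts = Counter(sampled_answers)
    return 1.0 - counts.most_common(1)[0][1] / len(sampled_answers)


# Two items scored by a hypothetical 3-way reward-model head (A better, B better, Tie).
probs = np.array([[0.90, 0.05, 0.05],
                  [0.40, 0.35, 0.25]])
print(least_confident(probs), 1 - margin(probs), entropy(probs))
print(self_consistency_disagreement(["refund", "refund", "escalate", "refund"]))
```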

2. Diversity Sampling

Uncertainty alone tends to return clusters of very similar hard examples (all angry refund tickets about the same carrier). Diversity methods ensure coverage of the input space.

- k-center greedy (core-set): iteratively pick the point whose distance (in embedding space) to its nearest already-selected point is largest.

- k-means++ seeding followed by one representative per cluster.

- Submodular maximization when you have a coverage function.
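The first two diversity strategies are short enough to sketch directly. `k_center_greedy` keeps, for every point, the distance to its nearest selected point and repeatedly takes the maximum; `kmeans_representatives` uses scikit-learn's `KMeans` with k-means++ initialization and returns the example nearest each centroid. Function names, the seed choice, and the toy three-cluster data are assumptions for illustration.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.metrics import pairwise_distances_argmin_min


def k_center_greedy(embeddings: np.ndarray, k: int, seed_idx: int = 0) -> np.ndarray:
    """Core-set selection: repeatedly add the point farthest from its nearest selected point."""
    selected = [seed_idx]
    # Distance from every point to its nearest selected point so far.
    min_dist = np.linalg.norm(embeddings - embeddings[seed_idx], axis=1)
    for _ in range(1, min(k, len(embeddings))):
        nxt = int(np.argmax(min_dist))
        selected.append(nxt)
        min_dist = np.minimum(min_dist, np.linalg.norm(embeddings - embeddings[nxt], axis=1))
    return np.array(selected)


def kmeans_representatives(embeddings: np.ndarray, k: int, random_state: int = 0) -> np.ndarray:
    """Cluster with k-means++ initialization, then take the example nearest each centroid."""
    km = KMeans(n_clusters=k, init="k-means++", n_init=10, random_state=random_state)
    km.fit(embeddings)
    nearest, _ = pairwise_distances_argmin_min(km.cluster_centers_, embeddings)
    return np.unique(nearest)  # two centroids could, in principle, share a nearest point


# Toy 2-D embeddings: three tight clusters of five points each.
offsets = np.array([[0.0, 0.0], [0.1, 0.0], [0.0, 0.1], [0.1, 0.1], [0.05, 0.05]])
centers = np.array([[0.0, 0.0], [10.0, 0.0], [0.0, 10.0]])
X = np.vstack([c + offsets for c in centers])
cluster_of = np.repeat([0, 1, 2], 5)

print(cluster_of[k_center_greedy(X, 3)], cluster_of[kmeans_representatives(X, 3)])
```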

3. Hybrid Uncertainty + Diversity (Common Production Default)

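A common approach is a greedy, weighted combination of normalized uncertainty and normalized distance to the already-selected set. Here is a minimal NumPy + scikit-learn implementation you can adapt for a nightly job: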

```python
import numpy as np
from sklearn.metrics.pairwise import euclidean_distances


def hybrid_active_select(
    embeddings: np.ndarray,     # (N, D) from your current embedder
    uncertainties: np.ndarray,  # (N,) entropy or 1 - margin from reward model
    k: int = 1200,
    w_uncertainty: float = 0.6,
) -> np.ndarray:
    """Return indices of up to k examples for human labeling.

    Greedy: each step picks the remaining example with the highest weighted sum of
    normalized uncertainty and normalized distance to its nearest selected example.
    """
    # Scale to [0, 1]. np.ptp works on NumPy 1.x and 2.x (ndarray.ptp() was removed in 2.0).
    unc = (uncertainties - uncertainties.min()) / (np.ptp(uncertainties) + 1e-9)

    n = len(embeddings)
    selected = [int(np.argmax(unc))]  # seed with the most uncertain example
    remaining = np.ones(n, dtype=bool)
    remaining[selected[0]] = False
    # Distance from every example to its nearest selected example, updated incrementally
    # instead of recomputing distances to the whole selected set every round.
    min_dist = euclidean_distances(embeddings, embeddings[selected]).ravel()

    for _ in range(1, min(k, n)):
        rem_idx = np.flatnonzero(remaining)
        d = min_dist[rem_idx]
        d = (d - d.min()) / (np.ptp(d) + 1e-9)  # diversity term in [0, 1]
        hybrid = w_uncertainty * unc[rem_idx] + (1 - w_uncertainty) * d
        next_idx = int(rem_idx[np.argmax(hybrid)])
        selected.append(next_idx)
        remaining[next_idx] = False
        new_d = euclidean_distances(embeddings, embeddings[[next_idx]]).ravel()
        min_dist = np.minimum(min_dist, new_d)

    return np.array(selected)
```

You embed every production prompt + response pair (support ticket text or code snippet + docstring), compute uncertainty from the latest reward or policy model, then run the hybrid selector. Batch size is typically 500–2000 examples per active round. Retrain (or run DPO) every 1–2 weeks so the selector stays current with the improving model.

🎯 Production tip: For code generation tasks, concatenate the natural language prompt with a lightweight AST or syntax embedding (or just the first 512 tokens of the generated code). This prevents the selector from over-focusing on natural language tickets while ignoring the code distribution.

Human Feedback Collection Interfaces for RLHF and DPO

The interface itself strongly influences label quality and rater fatigue.

Minimal viable preference record (what you must log for every decision):

- Prompt (exact text or canonical hash + template version)

- Response A (full text + generation metadata: model ID, temperature, retrieval IDs, guardrail decisions, code sandbox result if applicable)

- Response B (same)

- Chosen / Rejected (or Likert 1–5 for both)

- Tie flag + optional free-text rationale (required on “both bad” for safety tickets or insecure code)

- Annotator pseudonym + demographics bucket (for bias monitoring)

- Exact start and end timestamps, time-on-task

- Guideline document version + SHA

- Gold-question result (pass/fail)

- Final dataset version the record was accepted into
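The record above maps naturally onto a typed schema. A minimal sketch, assuming a Python pipeline: field names are illustrative, all example values are hypothetical, and the `prompt`/`chosen`/`rejected` output shape mirrors a convention common in DPO training libraries (check the format your trainer actually expects).

```python
from dataclasses import dataclass, field
from typing import Any, Dict, Optional

DECISIONS = {"A", "B", "tie", "both_bad"}


@dataclass
class PreferenceRecord:
    prompt: str
    template_version: str
    response_a: str
    response_b: str
    decision: str                        # "A", "B", "tie" or "both_bad"
    annotator_pseudonym: str
    demographics_bucket: str
    started_at: str                      # ISO-8601 timestamps
    ended_at: str
    time_on_task_s: float
    guideline_version: str
    guideline_sha: str
    gold_passed: Optional[bool] = None   # None when the item was not a gold question
    rationale: Optional[str] = None
    # Per-response generation metadata: model ID, temperature, retrieval IDs,
    # guardrail decisions, sandbox result (for code tasks).
    meta_a: Dict[str, Any] = field(default_factory=dict)
    meta_b: Dict[str, Any] = field(default_factory=dict)
    dataset_version: Optional[str] = None  # set when the record is accepted

    def validate(self) -> None:
        if self.decision not in DECISIONS:
            raise ValueError(f"unknown decision {self.decision!r}")
        if self.decision == "both_bad" and not self.rationale:
            raise ValueError("'both_bad' requires a rationale")

    def to_dpo_row(self) -> Optional[Dict[str, str]]:
        """Convert to a prompt/chosen/rejected row; ties and 'both bad' are not DPO pairs."""
        self.validate()
        if self.decision == "A":
            return {"prompt": self.prompt, "chosen": self.response_a, "rejected": self.response_b}
        if self.decision == "B":
            return {"prompt": self.prompt, "chosen": self.response_b, "rejected": self.response_a}
        return None


rec = PreferenceRecord(
    prompt="Refund for order <ORDER_ID> that never arrived",
    template_version="support-v3",  # hypothetical values throughout
    response_a="Orders can take up to 30 days to arrive.",
    response_b="I have opened a refund; it should post in 3-5 business days.",
    decision="B",
    annotator_pseudonym="rater-017",
    demographics_bucket="bucket-07",
    started_at="2024-05-01T10:00:00Z",
    ended_at="2024-05-01T10:01:30Z",
    time_on_task_s=90.0,
    guideline_version="v12",
    guideline_sha="<sha256-of-guideline-doc>",
)
print(rec.to_dpo_row())
```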

The UI should make the decision obvious. Side-by-side or stacked cards with clear “A is better”, “B is better”, “Tie”, “Both bad + why” buttons work well. For support tickets add a required “policy reference” field on difficult cases. For code tasks surface the sandbox execution result (pass/fail + any security linter output) next to each completion.

Many teams also collect Likert + critique (rate helpfulness 1–5 for support tone or code correctness, then write one-sentence critique). The critique becomes excellent seed data for later Constitutional AI or critique-revision loops.

Quality Control That Prevents Garbage In, Garbage Out

Never trust raw crowd labels.

Production pipelines use layered defenses:

- Gold / honeypot questions (5–15 % of every batch). Raters who fail more than one gold item in a batch are auto-paused. Gold items should include both clear support cases and tricky code security cases.

- Attention checks and “impossible” trick questions.

- Time filters: discard annotations faster than a human could possibly read both responses (or review the code diff).

- IAA monitoring per rater and per batch. Auto-reject or re-label items where the group disagrees.

- Expert review tier: any item marked “both bad” or that triggers a safety policy (or insecure code flag) routes to your internal specialists.

- Red-team the labeling guidelines themselves - have domain experts (support leads + security engineers) try to game the interface.

All of these controls must be logged. A later auditor must be able to see exactly which gold items a particular rater saw and whether they passed.
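The gold, time, and pause rules can be combined into one batch filter that also emits the gold-check audit trail. A minimal sketch: the `Annotation` fields, the 600 words-per-minute reading ceiling, and the pause threshold are assumptions to tune, not established constants.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Optional, Set, Tuple

MAX_WORDS_PER_MINUTE = 600  # assumed reading-speed ceiling; calibrate per task type


@dataclass
class Annotation:
    rater: str
    item_id: str
    label: str
    seconds: float                     # time on task
    words_to_read: int                 # both responses (or the code diff)
    gold_answer: Optional[str] = None  # set only for gold / honeypot items


def apply_qc(batch: List[Annotation], max_gold_failures: int = 1
             ) -> Tuple[List[Annotation], Set[str], List[Tuple[str, str, bool]]]:
    """Return (kept annotations, paused raters, gold-check audit log)."""
    gold_log: List[Tuple[str, str, bool]] = []
    failures: Dict[str, int] = defaultdict(int)
    for a in batch:
        if a.gold_answer is not None:
            passed = a.label == a.gold_answer
            gold_log.append((a.rater, a.item_id, passed))  # persist this for auditors
            if not passed:
                failures[a.rater] += 1
    paused = {r for r, n in failures.items() if n > max_gold_failures}

    kept = []
    for a in batch:
        if a.gold_answer is not None:
            continue  # gold items measure raters; they are not training data
        min_seconds = a.words_to_read / MAX_WORDS_PER_MINUTE * 60
        if a.rater in paused or a.seconds < min_seconds:
            continue  # paused rater, or faster than anyone could read both responses
        kept.append(a)
    return kept, paused, gold_log


batch = [
    Annotation("r1", "g1", "A", 40, 200, gold_answer="A"),
    Annotation("r1", "t1", "B", 30, 200),
    Annotation("r2", "g1", "B", 40, 200, gold_answer="A"),
    Annotation("r2", "g2", "tie", 40, 200, gold_answer="B"),
    Annotation("r2", "t2", "A", 30, 200),
    Annotation("r3", "t3", "A", 5, 200),  # 5 s for 200 words: too fast
]
kept, paused, gold_log = apply_qc(batch)
print([a.item_id for a in kept], paused, gold_log)
```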

Governance, Privacy, and Ethical Labeling

Human feedback data is one of the highest-risk datasets you will ever create - especially when it contains real customer support conversations and code that may touch production systems.

Required controls before any production log row reaches a rater:

- PII redaction gateway that runs deterministically before export. Replace names, emails, order numbers, account IDs, addresses, and any secrets in code snippets with stable tokens. Store the reversible mapping only in an access-controlled vault.

- Consent ledger: every user conversation or code-generation request that may be used for labeling must carry an explicit consent flag or legal basis.

- Annotator welfare: use platforms that pay living wages, provide clear guidelines, and allow raters to skip disturbing content (angry support threads). Audit your provider’s pay and working-condition reports quarterly.

- Demographic balancing and bias dashboards: track rater geography, language, and education level. Monitor whether preference distributions differ systematically by rater cohort (for example, whether raters from one region consistently prefer a blunter tone on support tickets while raters elsewhere favor more politeness).

- Immutable provenance store: every accepted preference pair is written to an append-only, tamper-evident log. Include the exact model checkpoint, retrieval index version (if any), code sandbox version, and guideline hash that produced the pair.
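A minimal sketch of the redaction gateway's core idea: replace each sensitive value with a stable token derived from a keyed HMAC, so the same email or order number always maps to the same token across rows, while the token-to-value mapping goes to a separate store. The regex patterns (including the assumed ShopForge order-ID format) are hypothetical and far from complete; a production gateway would add many more detectors, and the plain dict here only stands in for an access-controlled vault.

```python
import hashlib
import hmac
import re
from typing import Dict

# Hypothetical detectors, applied in this order; real gateways need many more.
PATTERNS = {
    # Quoted secret values assigned in code, e.g. api_key = "..."
    "SECRET": re.compile(r"(?i)\b(?:api_key|secret|password|token)\s*=\s*['\"](?P<v>[^'\"]+)['\"]"),
    "EMAIL": re.compile(r"(?P<v>[\w.+-]+@[\w-]+(?:\.[\w-]+)+)"),
    # Assumed order-ID format: one capital letter followed by 3+ digits, like A102.
    "ORDER_ID": re.compile(r"\b(?P<v>[A-Z]\d{3,})\b"),
}


def redact(text: str, key: bytes, vault: Dict[str, str]) -> str:
    """Replace sensitive values with stable keyed tokens; record token -> value in `vault`."""
    for kind, pattern in PATTERNS.items():
        def to_token(m, kind=kind):
            value = m.group("v")
            digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()[:12]
            token = f"<{kind}_{digest}>"
            vault[token] = value  # stand-in for an access-controlled vault
            # Keep the surrounding match (e.g. 'api_key = "') and swap only the value.
            start, end = m.start("v") - m.start(), m.end("v") - m.start()
            return m.group(0)[:start] + token + m.group(0)[end:]
        text = pattern.sub(to_token, text)
    return text


def restore(text: str, vault: Dict[str, str]) -> str:
    """Reverse the mapping (only for authorized re-identification)."""
    for token, value in vault.items():
        text = text.replace(token, value)
    return text


vault: Dict[str, str] = {}
key = b"demo-key"  # in practice, fetched from a secrets manager
original = "Customer jane.doe@example.com wants a refund for order A102."
print(redact(original, key, vault))
```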

These controls are not optional for any company that may someday face an EU AI Act audit or a plaintiff’s discovery request.
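For the immutable provenance store, one common tamper-evidence technique is a hash chain: each entry's hash covers the previous entry's hash plus the canonical JSON of the record. The in-memory class below is a sketch of that idea only; in production you would write to append-only (WORM) storage and anchor the latest hash somewhere independent so that truncation is detectable too. Class and field names are hypothetical.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64


class ProvenanceLog:
    """In-memory, hash-chained, append-only log (tamper-evident, not tamper-proof)."""

    def __init__(self) -> None:
        self._entries: List[Dict[str, Any]] = []

    @staticmethod
    def _digest(prev_hash: str, body: str) -> str:
        return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()

    def append(self, record: Dict[str, Any]) -> str:
        body = json.dumps(record, sort_keys=True)  # canonical serialization
        prev = self._entries[-1]["hash"] if self._entries else GENESIS
        # Store a copy so later edits to the caller's dict cannot leak into the log.
        entry = {"record": json.loads(body), "prev": prev, "hash": self._digest(prev, body)}
        self._entries.append(entry)
        return entry["hash"]

    def verify(self) -> bool:
        """Editing, reordering, or deleting a non-final entry breaks the chain."""
        prev = GENESIS
        for e in self._entries:
            body = json.dumps(e["record"], sort_keys=True)
            if e["prev"] != prev or self._digest(prev, body) != e["hash"]:
                return False
            prev = e["hash"]
        return True


log = ProvenanceLog()
log.append({"pair_id": "p-001", "model_checkpoint": "ckpt-a", "guideline_sha": "<sha>", "decision": "B"})
log.append({"pair_id": "p-002", "model_checkpoint": "ckpt-a", "guideline_sha": "<sha>", "decision": "A"})
print(log.verify())
```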

Cost Engineering and the Economics of the Flywheel

Track three numbers religiously:

- Cost per accepted high-quality preference pair (including all QC overhead and re-labels).

- Labels per 1 % win-rate improvement (or per 0.01 increase in reward model accuracy) separately for support and code tasks.

- Labeling spend as percentage of total post-training budget.

Illustrative numbers from a mature flywheel on mixed support + code workloads:

- Random sampling baseline: ~48k labels to reach target performance.

- Active hybrid (uncertainty + diversity): ~16–18k labels for the same performance → roughly 62–67 % reduction.

- At $0.85 fully loaded per pair: 30k–32k fewer labels, or roughly $25.5k–$27.2k saved per training cycle.

- The selector itself (embedding + scoring job) usually costs <$300 per round.

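- Putting a strong LLM judge in front of human raters (hybrid human + LLM labeling) can cut human labels further, to only 15–25 % of the volume, with humans reviewing the items where the judge is uncertain or the stakes are high, while the model still improves.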
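The savings arithmetic is easy to keep honest in code. A minimal sketch; the function name and the optional `selector_cost` parameter are illustrative, and the inputs are the example figures from the list above.

```python
def active_learning_savings(random_labels: int, active_labels: int,
                            cost_per_pair: float, selector_cost: float = 0.0) -> dict:
    """Compare label counts and spend for random vs active selection at equal target quality."""
    saved = random_labels - active_labels
    return {
        "reduction_pct": 100.0 * saved / random_labels,
        "random_spend": random_labels * cost_per_pair,
        "active_spend": active_labels * cost_per_pair + selector_cost,
        "net_savings": saved * cost_per_pair - selector_cost,
    }


for active in (16_000, 18_000):
    r = active_learning_savings(48_000, active, cost_per_pair=0.85)
    print(f"{active:,} labels: {r['reduction_pct']:.1f} % fewer, ${r['net_savings']:,.0f} saved")
```

With 16k or 18k active labels this reports a 66.7 % or 62.5 % reduction and $27,200 or $25,500 saved; pass the summed per-round selector cost as `selector_cost` to get the net figure.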

Hands-On: Build a Minimal Governed Active Learning Selector

Here is a small, runnable toy example you can execute locally. It uses NumPy and scikit-learn (run with pytest) on a tiny synthetic set of support-ticket and code-generation examples.

```python
# active_selector_lab.py
import numpy as np
from sklearn.metrics.pairwise import euclidean_distances

# Toy dataset: 12 examples (support tickets + code tasks)
prompts = [
    "Refund for order A102 that never arrived",
    "Write order_total(items, tax_rate, discount) safely",
    # ... 10 more mixing support and code
]

# Hand-placed 2-D stand-ins for Sentence-BERT embeddings, fixed so the tests are
# deterministic. The five most uncertain items (0, 2, 4, 6, 10) form one tight
# cluster, like near-duplicate refund tickets about the same carrier.
embeddings = np.array([
    [0.00, 0.00], [10.0, 0.00], [0.00, 0.10], [0.00, 10.0],
    [0.05, 0.05], [-10.0, 0.00], [0.10, 0.00], [0.00, -10.0],
    [7.00, 7.00], [-7.00, 7.00], [0.10, 0.10], [7.00, -7.00],
], dtype=np.float32)
uncertainties = np.array([0.92, 0.31, 0.85, 0.44, 0.78, 0.29, 0.91, 0.55, 0.67, 0.38, 0.82, 0.41])


def hybrid_active_select(embeddings, uncertainties, k=5, w=0.6):
    """Implementation from the earlier section, slightly adapted for the lab."""
    unc = (uncertainties - uncertainties.min()) / (np.ptp(uncertainties) + 1e-9)
    selected = [int(np.argmax(unc))]
    remaining = set(range(len(embeddings))) - {selected[0]}
    for _ in range(1, k):
        if not remaining:
            break
        rem_idx = np.array(sorted(remaining))
        # Diversity: distance to the nearest already-selected example, scaled to [0, 1]
        dists = euclidean_distances(embeddings[rem_idx], embeddings[selected]).min(axis=1)
        dists = (dists - dists.min()) / (np.ptp(dists) + 1e-9)
        hybrid = w * unc[rem_idx] + (1 - w) * dists
        next_i = int(rem_idx[np.argmax(hybrid)])
        selected.append(next_i)
        remaining.remove(next_i)
    return np.array(selected)


def spread(idx):
    """Largest pairwise embedding distance among the selected examples."""
    return euclidean_distances(embeddings[idx]).max()


def test_hybrid_selects_diverse_points():
    sel = hybrid_active_select(embeddings, uncertainties, k=5)
    assert len(set(sel.tolist())) == 5  # five distinct examples
    top5_unc = np.argsort(uncertainties)[-5:]
    # Hybrid trades a little uncertainty for coverage, so it leaves the cluster.
    assert len(set(sel.tolist()) & set(top5_unc.tolist())) < 5


def test_failure_without_diversity():
    # Pure uncertainty spends the whole batch on one near-duplicate cluster...
    pure_unc = np.argsort(uncertainties)[-5:]
    assert spread(pure_unc) < 1.0
    # ...while the hybrid selection covers far more of the embedding space.
    sel = hybrid_active_select(embeddings, uncertainties, k=5)
    assert spread(sel) > spread(pure_unc)
```

Run with python -m pytest active_selector_lab.py -q. The test demonstrates both success of the hybrid approach and the failure mode of pure uncertainty sampling.

Practical Checklist Before You Ship the Next Preference Batch

- Every log row has been PII-scrubbed and carries a consent flag (support tickets and any code containing customer data).

- The active selector is using the latest reward/policy model, not a stale one.

- Gold questions cover the exact edge cases your policy is currently weak on (both support policy nuance and code security).

- IAA dashboard shows batch kappa ≥ 0.65 and no rater below 0.5.

- The preference dataset manifest records model version, guideline version, selector commit, and sandbox version.

- You have a one-page bias and demographic summary for the raters who labeled this batch.

- Labeling cost and label-efficiency metrics (separately for tickets vs code) are logged in the same experiment tracker you use for training runs.

Key Takeaways

- Active learning (hybrid uncertainty + diversity) often saves roughly 40–70 % of the labeling budget for the same or better final model quality.

- IAA (Cohen’s kappa or Krippendorff’s alpha ≥ 0.65) is non-negotiable before any preference pair enters DPO or reward model training.

- Every preference record must carry full provenance (model snapshot, guideline hash, redaction version, gold check result) for auditability and reproducibility.

- Governance (PII redaction, consent, annotator welfare, bias monitoring) must be engineered into the pipeline before the first production log reaches a human.

- The data flywheel (logs → active selection → human labeling with QC → versioned dataset → DPO → better model → richer logs) is the highest-impact post-training infrastructure you will build.

- Support-ticket tone and code security have different failure modes; your selector, guidelines, and gold sets must explicitly cover both domains.

Evaluation Rubric

1. Explains the difference between uncertainty sampling and diversity sampling and why a hybrid strategy usually outperforms either alone for preference data

2. Computes Cohen's kappa or Krippendorff's alpha from a small confusion matrix of annotator decisions and states whether the result meets production thresholds (kappa > 0.6–0.7)

3. Designs a complete preference collection record (prompt, two completions, chosen/rejected, annotator ID, timestamp, guideline version, time spent, gold check result) that supports both DPO training and regulatory audit

4. Builds a simple active learning selector in Python that ranks unlabeled examples by a hybrid score (normalized entropy + embedding distance to nearest labeled) and explains the batch size / retrain cadence trade-off

5. Calculates the labeling budget impact of switching from random to active sampling for a 50k-example preference dataset and states realistic cost savings and quality risks

6. Specifies the full governance controls (PII filter before export, consent ledger, annotator pay audit, bias dashboard, immutable label store) required before any production human feedback data touches an RLHF pipeline

7. Traces a complete data flywheel iteration from a production inference log row through active selection, human preference labeling, dataset version bump, and DPO fine-tune back to improved model metrics

Common Pitfalls

- Using random sampling for the entire preference dataset instead of active selection, wasting 50 %+ of the labeling budget on examples the model already handles well

- Treating a single annotator's preference as ground truth without IAA monitoring or redundant labeling on a validation subset, allowing noisy or biased labelers to poison the reward model

- Exporting raw production logs containing PII, customer names, or internal identifiers directly to crowd platforms without a redaction gateway and consent ledger

- Ignoring annotator demographics and cultural bias in the guidelines; a US-centric rater pool can systematically down-rank responses whose politeness or indirectness is expected in other cultures

- Failing to version the labeling guidelines and the exact prompt templates used at selection time, making it impossible to explain why a particular preference pair was collected six months ago

- Building a beautiful labeling UI but forgetting to log the full provenance (model versions, retrieval context, guardrail decisions) that produced the two responses being compared


Key Concepts Tested

- Active learning query strategies: uncertainty sampling (least-confident, margin, entropy) and diversity sampling (core-set, k-center greedy in embedding space)

- Inter-annotator agreement metrics: percent agreement, Cohen's kappa, Fleiss' kappa, Krippendorff's alpha and interpretation thresholds

- Pairwise preference collection interfaces for RLHF and DPO: A/B response ranking, tie handling, rationale capture, and multi-turn variants

- Quality control in labeling pipelines: gold/honeypot questions, attention checks, time-based filtering, IAA monitoring per annotator and batch

- Data flywheel architecture: production log harvesting, active selection, human labeling, preference dataset versioning, model retraining loop

- Privacy and governance controls before labeling: PII redaction, consent flags, annotator demographics balancing, fair compensation verification

- Label provenance and reproducibility: immutable audit logs of every annotation decision, guideline version, model snapshot at selection time

- Cost engineering for labeling: cost per label, label efficiency of active learning vs random sampling, break-even analysis for internal platform vs managed services

- Hybrid human-LLM labeling workflows: weak supervision with LLM judges followed by targeted human review on uncertain or high-stakes items

- Bias and drift monitoring in labeled data: annotator bias detection, temporal drift in preference distributions, subgroup performance gaps

Next Step

Next: Continue to AI Agent Evaluation and Benchmarking

You now know how to collect high-signal human feedback and keep it governed. The next chapter turns that same discipline toward agents: task success, tool-use safety, adversarial robustness, and benchmark design that prove an agent is improving rather than merely looking fluent.

References

[1] Ouyang, L., et al. (2022). Training Language Models to Follow Instructions with Human Feedback (InstructGPT). NeurIPS 2022.

[2] Rafailov, R., et al. (2023). Direct Preference Optimization: Your Language Model is Secretly a Reward Model.

[3] Settles, B. (2012). Active Learning. Synthesis Lectures on Artificial Intelligence and Machine Learning.

[4] Krippendorff, K. (2004). Content Analysis: An Introduction to Its Methodology.

[5] Cohen, J. (1960). A Coefficient of Agreement for Nominal Scales. Educational and Psychological Measurement.

[6] Bai, Y., et al. (2022). Constitutional AI: Harmlessness from AI Feedback. arXiv preprint.