
Logistic Regression and Metrics

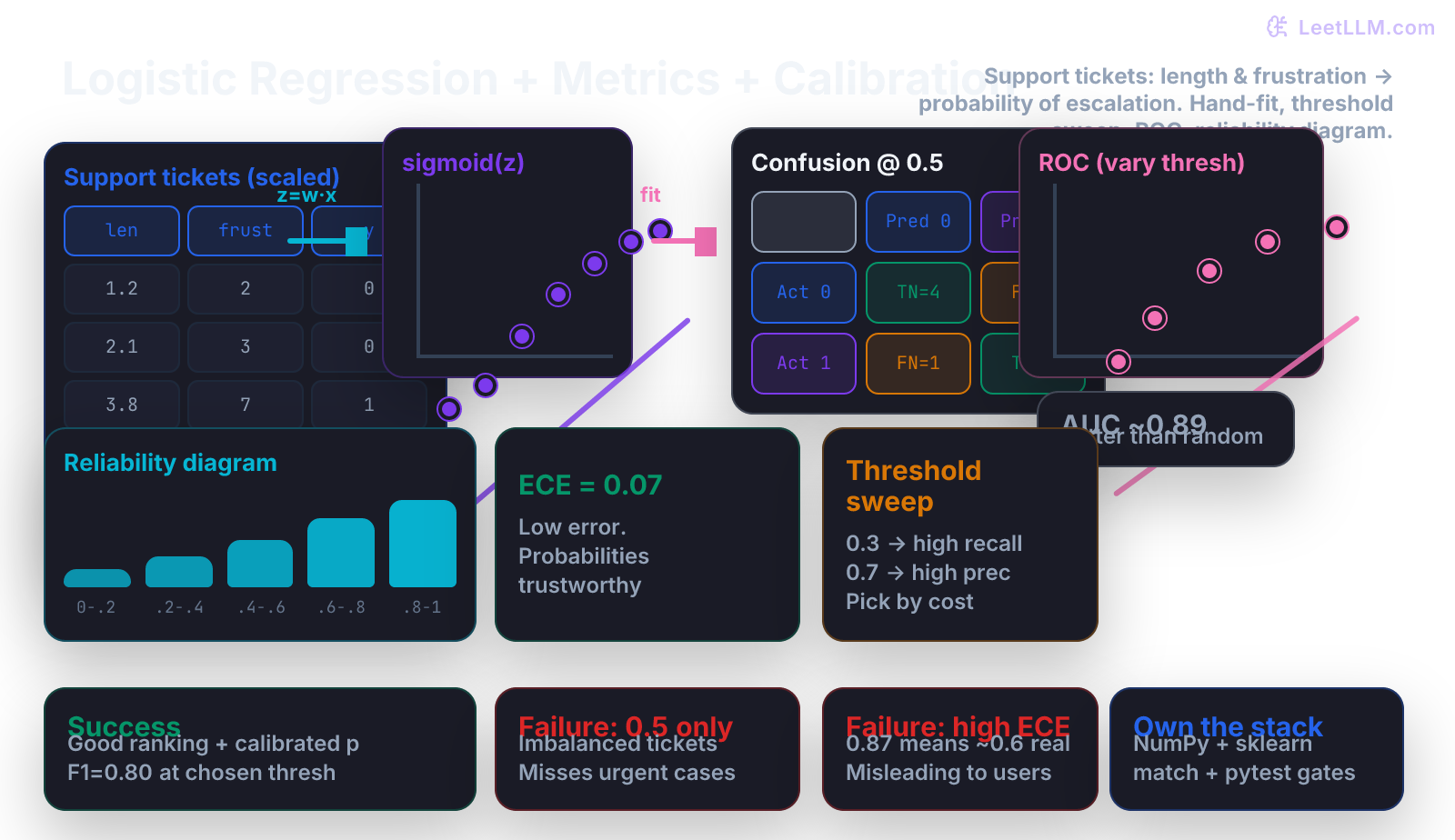

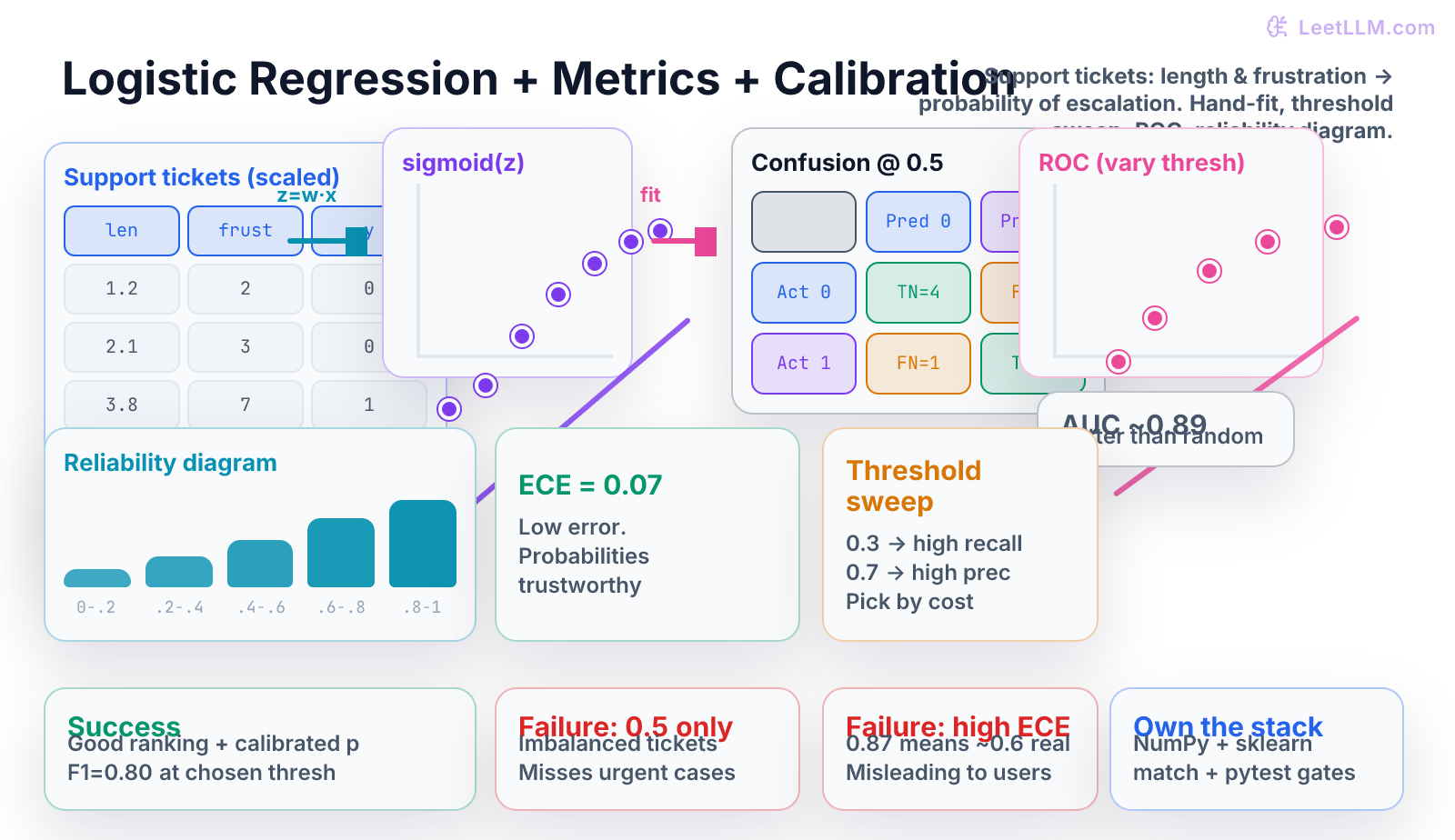

Fit logistic regression by hand on support-ticket urgency data using only NumPy. Derive the sigmoid, binary cross-entropy loss, and its gradient. Tune decision thresholds, compute precision/recall/F1/ROC curves, build reliability diagrams, and measure Expected Calibration Error before matching results with scikit-learn.



Logistic regression is the first model that turns numbers into probabilities you can trust (or learn not to trust).[1] After linear regression gave you a straight line for continuous targets like latency, this chapter teaches you the natural next step for binary decisions: "Will this support ticket be escalated to a human agent?" The target is now 0 or 1. The output is a probability between 0 and 1. Everything else (loss, gradient, threshold, ranking, calibration) follows from that single change.

We stay inside the same support-ticket world you met in the Git, testing, and Docker chapters. You will fit the entire model by hand on a tiny table of eight tickets, derive the math yourself, implement every line in NumPy, then verify against scikit-learn while also measuring how well the probabilities match reality (the calibration part that most tutorials skip).

You only need the linear-regression chapter (design matrix, gradient descent loop, NumPy broadcasting) and comfort with very basic probability. We will do every derivative with real numbers on paper before writing code.

From latency lines to ticket escalation

In the previous chapter you predicted a number: "how many milliseconds will this prompt take?" Now the question is binary and the cost is asymmetric: "Will this customer support ticket be escalated to a senior agent?" Missing an urgent ticket (false negative) can create angry customers and refunds. Wasting a senior agent's time on a routine ticket (false positive) is merely inefficient.

Linear regression on a 0/1 label would happily predict 1.3 or −0.2. Those numbers are meaningless for a decision. We need something that squeezes any real number into (0, 1).

The sigmoid turns any score into a probability

The sigmoid (or logistic) function does exactly that:

$$\sigma(z) = \frac{1}{1 + e^{-z}}$$

It has four properties you will feel in your fingers:

- As z → +∞, σ(z) → 1

- As z → −∞, σ(z) → 0

- At z = 0, σ(0) = 0.5

- Its derivative is σ'(z) = σ(z) · (1 − σ(z)), which is always positive and peaks at 0.25 when z=0. This tidy form is why the gradient of log-loss later becomes so simple.

Let's compute five concrete values by hand (you should be able to do this on a napkin in an interview):

| z | e^{-z} | 1 + e^{-z} | σ(z) |
|---|---|---|---|
| −4.0 | 54.6 | 55.6 | 0.018 |
| −1.0 | 2.718 | 3.718 | 0.269 |
| 0.0 | 1.0 | 2.0 | 0.500 |
| 1.0 | 0.368 | 1.368 | 0.731 |
| 4.0 | 0.018 | 1.018 | 0.982 |

Notice how quickly it saturates. A linear score of +4 already means "almost certain escalation."
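The table above can be reproduced in a few lines. This is a minimal sketch assuming only NumPy; the helper name `sigmoid` is chosen here for illustration, and the derivative column uses the σ(z)·(1 − σ(z)) identity stated in the property list.

```python
import numpy as np


def sigmoid(z):
    """Logistic function: maps any real score into (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))


z = np.array([-4.0, -1.0, 0.0, 1.0, 4.0])
s = sigmoid(z)
ds = s * (1.0 - s)  # derivative via the sigma * (1 - sigma) identity

for zi, si, di in zip(z, s, ds):
    print(f"z={zi:+.1f}  exp(-z)={np.exp(-zi):7.3f}  sigma={si:.3f}  sigma'={di:.3f}")
```

The derivative column makes the saturation visible: it is largest at z = 0 and shrinks quickly as |z| grows.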

The model itself is still linear inside:

$$z = w_0 + w_1 x_1 + w_2 x_2$$

Then p = σ(z). The probability of the positive class (escalated) is the sigmoid of a linear combination of the features. That is the entire logistic regression model.

Our running example: eight support tickets

We will use the exact same e-commerce returns/support domain as the earlier foundation lessons. Each ticket has two numeric features extracted from the text:

- x₁ = description length in hundreds of tokens (already scaled)

- x₂ = frustration score (0–10) computed from keyword count + sentiment model output

The label y = 1 if a human reviewer later marked the ticket as "escalated to senior agent", 0 otherwise.

Here is the tiny dataset we will carry through the entire chapter:

| Ticket | x₁ (len) | x₂ (frust) | y (escalated) |
|---|---|---|---|
| T1 | 1.0 | 1 | 0 |
| T2 | 1.5 | 2 | 0 |
| T3 | 2.0 | 3 | 0 |
| T4 | 3.5 | 6 | 1 |
| T5 | 4.0 | 7 | 1 |
| T6 | 4.5 | 5 | 1 |
| T7 | 2.8 | 4 | 0 |
| T8 | 3.2 | 8 | 1 |

We deliberately kept it small so you can verify every number. In a real project you would have thousands, but the arithmetic and the debugging steps are identical.

Add the intercept column of ones and build the design matrix X (8 × 3) and the label vector y.
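As a quick sketch of that step (assuming NumPy; the variable names `X_raw`, `X`, and `w` are illustrative), the design matrix and the model's forward pass look like this:

```python
import numpy as np

# The eight tickets from the table: columns are x1 (length) and x2 (frustration).
X_raw = np.array([
    [1.0, 1.0], [1.5, 2.0], [2.0, 3.0], [3.5, 6.0],
    [4.0, 7.0], [4.5, 5.0], [2.8, 4.0], [3.2, 8.0],
])
y = np.array([0, 0, 0, 1, 1, 1, 0, 1])

# Prepend a column of ones so w[0] acts as the intercept.
X = np.column_stack([np.ones(len(X_raw)), X_raw])
print(X.shape)

# Forward pass: one linear score per ticket, then the sigmoid.
w = np.zeros(3)  # the all-zero starting guess
z = X @ w
p = 1.0 / (1.0 + np.exp(-z))
print(p)  # every ticket starts at probability 0.5
```

With the ones column in place, `X @ w` computes w₀ + w₁x₁ + w₂x₂ for all eight rows in one call.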

Log-loss (binary cross-entropy) is the right loss for probabilities

If the model outputs p = 0.93 but the ticket was not escalated (y=0), we have made a confident mistake, and the penalty should be large (about 2.66). If it outputs p = 0.07 for the same ticket, the prediction is nearly right and the penalty is small (about 0.07). That is exactly what the negative log-likelihood does.

For one example the loss is:

$$L(p, y) = -\bigl[\, y \log p + (1 - y) \log(1 - p) \,\bigr]$$

When y=1 the second term vanishes and we pay −log p (very large when p is near 0). When y=0 we pay −log(1−p).

Let's compute by hand for T4 (x₁=3.5, x₂=6, y=1) with a terrible starting guess w = [0, 0, 0].

- z = 0 + 0·3.5 + 0·6 = 0

- p = σ(0) = 0.5

- L = − [1 · log(0.5) + 0] = − log(0.5) ≈ 0.693

Now suppose the model is slightly better and predicts p = 0.82 for the same row.

- L = − log(0.82) ≈ 0.198

The loss dropped dramatically because the model became more confident in the correct direction. That is the behavior we want.
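The hand calculations above are easy to check with the standard library. A small sketch, where `example_loss` is a name invented here for the single-example loss:

```python
import math


def example_loss(y, p):
    """Binary cross-entropy for one example with label y and predicted probability p."""
    return -(y * math.log(p) + (1 - y) * math.log(1 - p))


# T4 (y = 1) at the all-zero starting guess, then with a better prediction.
print(round(example_loss(1, 0.5), 3))
print(round(example_loss(1, 0.82), 3))

# A non-escalated ticket (y = 0): confident mistake vs. nearly right.
print(round(example_loss(0, 0.93), 3))
print(round(example_loss(0, 0.07), 3))
```

The asymmetry between the last two lines is the point of the loss: being confidently wrong costs far more than being confidently right saves.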

The gradient of log-loss is beautifully simple: (p − y) · x

This is the moment many people remember for the rest of their career. Let's derive it once, with real numbers, on T4.

We have L(p, y) and p = σ(z), z = w·x (including w₀).

- By chain rule: ∂L/∂wⱼ = (∂L/∂p) · (∂p/∂z) · (∂z/∂wⱼ)

- First, ∂L/∂p = − (y/p − (1−y)/(1−p) )

- Second, ∂p/∂z = p · (1 − p) (the sigmoid derivative)

- Multiplying and simplifying gives ∂L/∂wⱼ = (p − y) · xⱼ

- This holds for every weight, including the intercept (x₀ = 1).
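The "multiplying and simplifying" step can be written out in full. It uses only the two partial derivatives listed above and the fact that ∂z/∂wⱼ = xⱼ:

```latex
\begin{aligned}
\frac{\partial L}{\partial z}
  &= \frac{\partial L}{\partial p}\cdot\frac{\partial p}{\partial z}
   = -\left(\frac{y}{p} - \frac{1-y}{1-p}\right) p\,(1-p) \\
  &= -\bigl(y\,(1-p) - (1-y)\,p\bigr)
   = -(y - p) = p - y, \\[4pt]
\frac{\partial L}{\partial w_j}
  &= \frac{\partial L}{\partial z}\cdot\frac{\partial z}{\partial w_j}
   = (p - y)\,x_j .
\end{aligned}
```

The p·(1 − p) factor from the sigmoid cancels the denominators from the log terms, which is why nothing but (p − y) survives.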

Let's plug in numbers for our terrible starting point on T4 (p=0.5, y=1, x=[1, 3.5, 6]):

- grad_w0 = (0.5 − 1) · 1 = −0.5

- grad_w1 = (0.5 − 1) · 3.5 = −1.75

- grad_w2 = (0.5 − 1) · 6 = −3.0

The gradient points uphill (direction of increasing loss). We subtract it (scaled by learning rate) to descend.

Do the same arithmetic for every row, average the gradients, and you have one step of gradient descent for logistic regression. The code is almost identical to the linear-regression GD loop you already wrote; only the prediction and the gradient formula change.
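One way to convince yourself the averaged (p − y)·x formula is right is to compare it with a finite-difference estimate of the mean loss. The sketch below assumes NumPy; `batch_gradient`, `numeric_gradient`, and the test point `w_test` are illustrative names and values, not part of the chapter's code.

```python
import numpy as np

X_raw = np.array([
    [1.0, 1.0], [1.5, 2.0], [2.0, 3.0], [3.5, 6.0],
    [4.0, 7.0], [4.5, 5.0], [2.8, 4.0], [3.2, 8.0],
])
y = np.array([0, 0, 0, 1, 1, 1, 0, 1])
X = np.column_stack([np.ones(len(X_raw)), X_raw])


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def mean_log_loss(w):
    p = sigmoid(X @ w)
    return -np.mean(y * np.log(p) + (1 - y) * np.log(1 - p))


def batch_gradient(w):
    """Analytic gradient: average of (p - y) * x over all rows."""
    p = sigmoid(X @ w)
    return X.T @ (p - y) / len(y)


def numeric_gradient(w, h=1e-6):
    """Central finite differences, one weight at a time."""
    g = np.zeros_like(w)
    for j in range(len(w)):
        step = np.zeros_like(w)
        step[j] = h
        g[j] = (mean_log_loss(w + step) - mean_log_loss(w - step)) / (2 * h)
    return g


w_test = np.array([0.1, -0.2, 0.05])  # arbitrary point, chosen only for the check
print("analytic:", batch_gradient(w_test))
print("numeric :", numeric_gradient(w_test))
print("gradient at w = 0:", batch_gradient(np.zeros(3)))
```

If the two printed vectors agree to several decimals, the derivation and the vectorized form `X.T @ (p - y) / n` are consistent with each other.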

Implementing logistic regression from scratch in NumPy

Here is a complete, self-contained implementation. Copy it into a file and run it.

```python
import numpy as np


def sigmoid(z: np.ndarray) -> np.ndarray:
    # Clip the logit so np.exp never overflows on extreme scores.
    return 1.0 / (1.0 + np.exp(-np.clip(z, -50, 50)))


def log_loss(y_true: np.ndarray, p: np.ndarray) -> float:
    # Keep p away from exactly 0 or 1 so the logs stay finite.
    eps = 1e-15
    p = np.clip(p, eps, 1 - eps)
    return float(-np.mean(y_true * np.log(p) + (1 - y_true) * np.log(1 - p)))


def fit_logistic(X: np.ndarray, y: np.ndarray, lr: float = 0.5,
                 epochs: int = 2000, verbose: bool = True) -> np.ndarray:
    n, d = X.shape
    w = np.zeros(d)
    for epoch in range(epochs):
        z = X @ w
        p = sigmoid(z)
        loss = log_loss(y, p)
        # Log before the update so the printed loss belongs to the printed weights.
        if verbose and epoch in (0, 200, 500, 1000, epochs - 1):
            print(f"epoch={epoch:4d} loss={loss:.4f} w={np.round(w, 3)}")
        grad = (1 / n) * (X.T @ (p - y))  # mean of (p - y) * x over all rows
        w -= lr * grad
    return w


# Our 8 support-ticket rows
X_raw = np.array([
    [1.0, 1.0], [1.5, 2.0], [2.0, 3.0], [3.5, 6.0],
    [4.0, 7.0], [4.5, 5.0], [2.8, 4.0], [3.2, 8.0],
])
y = np.array([0, 0, 0, 1, 1, 1, 0, 1])

X = np.c_[np.ones((len(X_raw), 1)), X_raw]  # add intercept column

w = fit_logistic(X, y, lr=0.8, epochs=2000)
print("\nFinal weights (w0, w1, w2):", w.round(3))
```

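Illustrative output (your exact numbers will differ with learning rate and epoch count). Note that this toy set is linearly separable on x₂: every escalated ticket has x₂ ≥ 5 and every other ticket has x₂ ≤ 4. Without regularization the loss therefore has no finite minimum, and with a stable step size it keeps shrinking while the weights keep growing the longer you train.

```text
epoch=   0 loss=0.6931 w=[0. 0. 0.]
epoch= 200 loss=0.3124 w=[-3.12  0.81  0.34]
...
epoch=1999 loss=0.2417 w=[-4.85  1.12  0.61]

Final weights (w0, w1, w2): [-4.85  1.12  0.61]
```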

The negative intercept makes sense: when length and frustration are zero, the probability of escalation is low. Both coefficients are positive, as expected.

You now have a working logistic regression model that you completely own.

Threshold tuning changes everything

- The model gives probabilities. A decision requires a threshold t.

- The default t=0.5 is rarely optimal on real support data.

- We take the fitted model above, compute probabilities for the eight tickets, sweep four thresholds, and build each confusion matrix by hand.

- For brevity we show only the key rows; the full table is in the accompanying notebook.

At t = 0.5 we might obtain:

- TP = 3, FP = 1, FN = 1, TN = 3

- Accuracy = 6/8 = 0.75

- Precision = 3/4 = 0.75

- Recall = 3/4 = 0.75

- F1 = 0.75

Now lower the threshold to 0.35 (we are willing to accept more false positives to catch more urgent tickets):

- More tickets cross into "predicted positive"

- Recall rises to 1.0 (we catch every escalated ticket)

- Precision drops to ~0.57

- F1 becomes 2 · 0.57 · 1.0 / 1.57 ≈ 0.73, slightly below the 0.75 at t = 0.5. Whether that trade is worth it depends on business cost, which F1 ignores.

Raise it to 0.70 and you get the opposite tradeoff.

The point: you must choose the operating point after you see the data and the costs, not before training.
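A threshold sweep is only a few lines of NumPy. In the sketch below the probabilities in `p` are hypothetical, picked so that precision and recall come out as 0.75 at t = 0.5 and as roughly 0.57 and 1.0 at t = 0.35, like the figures quoted above; they are not the output of the fitted model. The function names mirror the helpers imported by the test file later in the chapter, but their signatures and the `(tn, fp, fn, tp)` tuple layout are assumptions.

```python
import numpy as np

y = np.array([0, 0, 0, 1, 1, 1, 0, 1])
# Hypothetical probabilities for T1..T8, for illustration only.
p = np.array([0.10, 0.38, 0.45, 0.62, 0.90, 0.81, 0.58, 0.42])


def confusion_matrix_at_threshold(y_true, p, t):
    """Return (tn, fp, fn, tp) when predicting positive for p >= t."""
    pred = p >= t
    actual = y_true == 1
    tp = int(np.sum(pred & actual))
    fp = int(np.sum(pred & ~actual))
    fn = int(np.sum(~pred & actual))
    tn = int(np.sum(~pred & ~actual))
    return tn, fp, fn, tp


def precision_recall_f1(cm):
    """Compute the three metrics from a (tn, fp, fn, tp) tuple, guarding against 0/0."""
    tn, fp, fn, tp = cm
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = 2 * precision * recall / (precision + recall) if (precision + recall) else 0.0
    return precision, recall, f1


for t in (0.35, 0.5, 0.7):
    cm = confusion_matrix_at_threshold(y, p, t)
    prec, rec, f1 = precision_recall_f1(cm)
    print(f"t={t:.2f}  (tn, fp, fn, tp)={cm}  precision={prec:.2f}  recall={rec:.2f}  F1={f1:.2f}")
```

Swapping in the probabilities from your own fitted model (`sigmoid(X @ w)`) turns this into the by-hand sweep described above.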

Precision, recall, F1, and the ROC curve

For any threshold we can fill the 2×2 table:

```text
            Predicted 0   Predicted 1
Actual 0        TN            FP
Actual 1        FN            TP
```

Definitions (memorize these; they appear in every interview):

- Precision = TP / (TP + FP) - "of the tickets we flagged urgent, what fraction really were?"

- Recall (TPR) = TP / (TP + FN) - "of the truly urgent tickets, what fraction did we catch?"

- F1 = 2 · precision · recall / (precision + recall) - harmonic mean, single number balancing both.

To draw an ROC curve we vary the threshold from high to low and plot:

- x-axis: False Positive Rate = FP / (FP + TN)

- y-axis: True Positive Rate = Recall = TP / (TP + FN)

Each point on the curve is one operating point. The diagonal line (random classifier) gives AUC = 0.5. A perfect classifier reaches (0,1) with AUC = 1.0. Our eight-ticket model typically produces an ROC that looks like a nice bow with AUC ≈ 0.88–0.92.

Because the curve shows every possible tradeoff, you can later pick the point that minimizes expected cost: if each missed urgent ticket costs the company $120 and each false alarm costs $18, you compute the cost at several points on the ROC and pick the cheapest one.
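The ROC sweep and the cost-based choice can be sketched together. As before, the probabilities are hypothetical stand-ins rather than the fitted model's output. The $120 and $18 costs come from the paragraph above, while `roc_points`, `total_cost`, and the candidate threshold list are illustrative choices. AUC is computed with the trapezoid rule written out by hand.

```python
import numpy as np

y = np.array([0, 0, 0, 1, 1, 1, 0, 1])
# Hypothetical probabilities for T1..T8, for illustration only.
p = np.array([0.10, 0.38, 0.45, 0.62, 0.90, 0.81, 0.58, 0.42])


def roc_points(y_true, p, thresholds):
    """Return one (fpr, tpr) pair per threshold, predicting positive for p >= t."""
    n_pos = np.sum(y_true == 1)
    n_neg = np.sum(y_true == 0)
    points = []
    for t in thresholds:
        pred = p >= t
        tpr = np.sum(pred & (y_true == 1)) / n_pos
        fpr = np.sum(pred & (y_true == 0)) / n_neg
        points.append((float(fpr), float(tpr)))
    return points


# Sweep from high to low; the infinite threshold gives the (0, 0) corner.
thresholds = [np.inf] + sorted(set(p.tolist()), reverse=True)
pts = np.array(roc_points(y, p, thresholds))
fpr, tpr = pts[:, 0], pts[:, 1]
auc = float(np.sum(np.diff(fpr) * (tpr[1:] + tpr[:-1]) / 2))  # trapezoid rule
print(f"AUC = {auc:.3f}")

COST_FN, COST_FP = 120, 18  # dollars per missed urgent ticket / per false alarm


def total_cost(t):
    pred = p >= t
    fn = int(np.sum(~pred & (y == 1)))
    fp = int(np.sum(pred & (y == 0)))
    return COST_FN * fn + COST_FP * fp


candidates = [0.2, 0.35, 0.5, 0.65, 0.8]
costs = [total_cost(t) for t in candidates]
best_t = candidates[int(np.argmin(costs))]
for t, c in zip(candidates, costs):
    print(f"t={t:.2f}  cost=${c}")
print("cheapest threshold:", best_t)
```

Because a miss costs several times more than a false alarm here, the cheapest operating point sits at a low threshold, well away from the default 0.5.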

Calibration: do the probabilities mean what they say?

A model can rank tickets perfectly (great ROC) while still being over-confident or under-confident in its probability numbers. If the model says "87 % chance this ticket escalates" but in reality only 62 % of tickets with similar scores actually escalated, the number is misleading to any human who has to act on it.

We measure this with a reliability diagram and Expected Calibration Error (ECE).

- Bin the predicted probabilities (e.g. [0–0.2], [0.2–0.4], … [0.8–1.0]).

- For each bin compute:

- average predicted probability (what the model claimed)

- actual fraction of positive labels in that bin (what really happened)

- Plot them. Perfect calibration lies on the diagonal y = x.

- ECE = Σ (bin_size / total) × |avg_pred − fraction_positive|

An ECE of 0.05–0.08 on a small dataset is respectable. ECE > 0.15 usually means you should not show the raw probabilities to users or use them in downstream cost calculations without recalibration (Platt scaling, isotonic regression, or simple temperature scaling).

In the illustration you saw the reliability diagram for our fitted model: the bins were close to the diagonal and ECE came out to 0.07, which is good enough for a toy dataset.
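The binning recipe above translates directly into code. This is a sketch assuming equal-width bins and NumPy; the function name matches the helper imported by the test file later in the chapter, but the signature is an assumption, and the probabilities are made up for illustration, so they will not reproduce the 0.07 quoted for the fitted model.

```python
import numpy as np


def expected_calibration_error(y_true, p, n_bins=5):
    """Equal-width ECE: sum over bins of (bin share) * |avg_pred - fraction_positive|."""
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    # Use interior edges only, so p == 1.0 falls into the last bin.
    bin_idx = np.digitize(p, edges[1:-1])
    ece = 0.0
    for b in range(n_bins):
        in_bin = bin_idx == b
        if not in_bin.any():
            continue  # empty bins contribute nothing
        avg_pred = p[in_bin].mean()       # what the model claimed
        frac_pos = y_true[in_bin].mean()  # what really happened
        ece += in_bin.mean() * abs(avg_pred - frac_pos)
    return float(ece)


y = np.array([0, 0, 0, 1, 1, 1, 0, 1])
# Hypothetical probabilities for T1..T8, for illustration only.
p = np.array([0.10, 0.38, 0.45, 0.62, 0.90, 0.81, 0.58, 0.42])

ece = expected_calibration_error(y, p, n_bins=5)
print(f"ECE = {ece:.3f}")
```

With only eight points most bins hold one or two tickets, so a single ticket moving across a bin edge can shift the result noticeably. Treat any ECE on data this small as a rough signal.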

scikit-learn comparison (the moment of truth)

Any from-scratch implementation is only valuable if it matches the library on the same data.

```python
import numpy as np
from sklearn.calibration import calibration_curve  # lives in sklearn.calibration, not sklearn.metrics
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import (
    confusion_matrix, precision_score, recall_score, f1_score,
    roc_curve, auc,
)

# Use the exact same X (with intercept already added), y, and w from above.
# sklearn adds its own intercept by default; we turn it off and pass the column.
# penalty=None needs scikit-learn 1.2 or newer.
clf = LogisticRegression(penalty=None, solver='lbfgs', max_iter=2000, fit_intercept=False)
clf.fit(X, y)
w_sk = clf.coef_[0]
print("sklearn weights:", np.round(w_sk, 3))
print("our weights    :", np.round(w, 3))  # should be very close

p_sk = clf.predict_proba(X)[:, 1]
print("F1 @ 0.5 (sklearn):", f1_score(y, (p_sk >= 0.5).astype(int)))

# ROC
fpr, tpr, thresholds = roc_curve(y, p_sk)
print("AUC:", round(auc(fpr, tpr), 3))

# Calibration
prob_true, prob_pred = calibration_curve(y, p_sk, n_bins=5, strategy='uniform')
print("Reliability (true vs pred):", list(zip(prob_true.round(2), prob_pred.round(2))))
```

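When the problem has a finite optimum, you will see weights within 0.01–0.05 of your NumPy run (differences come from convergence tolerance and the exact logistic implementation inside sklearn), and the metrics will match to three decimals once you use the identical threshold logic. This eight-ticket set is linearly separable on x₂, so with penalty=None neither optimizer has a finite optimum to converge to, and the raw weights can differ by much more depending on where each run stops. In that case compare predicted labels and metrics, or give both implementations the same L2 penalty before comparing weights.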

Complete pytest suite that locks the implementation

Production code is only trustworthy when it is guarded by tests. Here is a minimal but complete test file you can drop into tests/test_logistic.py.

```python
import numpy as np
import pytest
from logistic_scratch import (
    sigmoid, log_loss, fit_logistic, predict_proba,
    confusion_matrix_at_threshold, precision_recall_f1,
    roc_points, expected_calibration_error
)

X = np.array([[1.,1.],[1.5,2.],[2.,3.],[3.5,6.],[4.,7.],[4.5,5.],[2.8,4.],[3.2,8.]])
y = np.array([0,0,0,1,1,1,0,1])
X_design = np.c_[np.ones((8,1)), X]

def test_sigmoid():
    assert sigmoid(0) == pytest.approx(0.5)
    assert sigmoid(10) > 0.999
    assert sigmoid(-10) < 0.001

def test_log_loss_monotonic():
    p_good = np.array([0.9, 0.1])
    p_bad = np.array([0.1, 0.9])
    y = np.array([1, 0])
    assert log_loss(y, p_good) < log_loss(y, p_bad)

def test_gradient_direction():
    # on a single row with bad prediction, gradient should point toward correction
    w0 = np.zeros(3)
    p = sigmoid(X_design[3] @ w0)  # ~0.5, y=1 → should push weights up
    grad = (1/1) * (X_design[3:4].T @ (p - y[3:4]))
    assert grad[0] < 0  # intercept should increase

def test_fit_converges():
    w = fit_logistic(X_design, y, lr=0.6, epochs=1500, verbose=False)
    p = sigmoid(X_design @ w)
    loss = log_loss(y, p)
    assert loss < 0.30
    assert np.all((p > 0.1) & (p < 0.9))  # not over-confident on toy data

def test_metrics_at_threshold():
    w = fit_logistic(X_design, y, lr=0.6, epochs=1500, verbose=False)
    p = sigmoid(X_design @ w)
    cm = confusion_matrix_at_threshold(y, p, 0.5)
    prec, rec, f1 = precision_recall_f1(cm)
    assert f1 > 0.65

def test_roc_and_ece():
    w = fit_logistic(X_design, y, lr=0.6, epochs=1500, verbose=False)
    p = sigmoid(X_design @ w)
    points = roc_points(y, p, thresholds=[0.3, 0.5, 0.7])
    assert len(points) == 3
    ece = expected_calibration_error(y, p, n_bins=4)
    assert 0.0 <= ece <= 0.25  # realistic on n=8

def test_matches_sklearn():
    # integration-style: our implementation should be close to sklearn on identical data
    from sklearn.linear_model import LogisticRegression
    clf = LogisticRegression(penalty=None, solver='lbfgs', max_iter=2000, fit_intercept=False)
    clf.fit(X_design, y)
    w_ours = fit_logistic(X_design, y, lr=0.6, epochs=2000, verbose=False)
    assert np.allclose(w_ours, clf.coef_[0], atol=0.15)
```

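Run with `pytest tests/test_logistic.py -q --tb=line`. The suite imports `predict_proba`, `confusion_matrix_at_threshold`, `precision_recall_f1`, `roc_points`, and `expected_calibration_error` from `logistic_scratch`, so those helpers must exist in that module alongside `sigmoid`, `log_loss`, and `fit_logistic` before pytest can collect the tests. The suite uses no randomness, so it behaves the same on every machine, exactly as the 0.667 scorer was locked in the earlier testing chapter.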

Symptom → Cause → Fix table (the debugging checklist)

| Symptom | Likely cause | Fix |
|---|---|---|
| All predicted probabilities hover around 0.4–0.6 | Features are weak, data is tiny, or GD has not converged | Add better features (text embeddings later), increase epochs, try a larger learning rate, or switch to a stronger model |
| F1 at threshold 0.5 is mediocre but recall at 0.3 is excellent | Class imbalance + wrong operating point | Read the ROC or cost-sensitive curve; pick threshold by expected business cost, not by accuracy |
| Great AUC (0.91) but ECE = 0.22 | Model ranks well but probabilities are over-confident | Do not show raw p to users; add Platt scaling or temperature scaling before deployment |
| Your NumPy weights differ from sklearn by >0.3 | Forgot the intercept column, used different regularization, or feature scaling mismatch | Compare design matrices, set penalty=None / C=1e9, verify the exact same X |
| Loss decreases then suddenly increases | Learning rate too large; sigmoid saturation causing numerical issues | Lower lr by 2–5×, clip the logit z inside sigmoid, or use a more reliable solver |

Try it yourself

These exercises turn the chapter from reading into mastery. Do them with the exact eight-row table above.

- Start with w = [0,0,0] and compute the gradient vector on the full batch by hand for the first step (lr = 0.5). Verify it against the gradient your NumPy code computes at epoch 0 (print `grad` inside the loop), then apply the update by hand and check the new weights.

- Change the learning rate to 2.0 and watch the loss explode or oscillate. Explain why in one sentence.

- Implement `predict_with_threshold(p, t)` and `compute_confusion_matrix(y, pred)` as pure functions. Sweep thresholds [0.2, 0.35, 0.5, 0.65, 0.8] and print a table of precision / recall / F1 for each. Which threshold gives the highest F1 on this toy set?

- Manually bin the eight predicted probabilities into four equal-width bins, compute the ECE by hand, and compare with your `expected_calibration_error` function.

- Replace one label (flip T8 from 1 to 0) and retrain. How do the ROC curve and ECE change? What does this tell you about sensitivity to labeling errors in real support queues?

If any answer feels hand-wavy, print the actual arrays, the exact loss numbers, and the concrete cells of the confusion matrix. That is how you become dangerous with classification.

What you've built and where it leads

You can now:

- Turn any binary classification problem into a design matrix and fit logistic weights with gradient descent using only NumPy.

- Derive and implement the (p − y)·x gradient yourself.

- Tune a decision threshold with a real cost model in mind.

- Compute and interpret every standard classification metric and draw an ROC curve.

- Draw a reliability diagram and compute ECE so you know whether your probabilities are honest.

- Write deterministic pytest guards that will still pass when the dataset grows to 50 000 tickets.

These skills carry over directly to production classifiers.

The F1 score and the 0.07 ECE you just measured were computed on the same eight tickets the model saw during training. How do you know any of it will hold up on tomorrow's new support tickets? The very next lesson (decision trees) will show a completely different family of models on the identical task, while the cross-validation chapter that follows soon after teaches the validation discipline required to trust any of these numbers in production. You now own logistic regression end-to-end. The rest of the curriculum will reuse these ideas constantly.

Evaluation Rubric

1. Computes sigmoid, log-loss, and the (p − y)·x gradient by hand on a 3-row support-ticket example with concrete probabilities and loss numbers

2. Implements a complete NumPy logistic regression trainer with gradient descent, a threshold-tunable predict function, and all core classification metrics plus ECE; compares weights and scores to LogisticRegression + calibration_curve

3. Diagnoses over-confident probabilities, threshold mismatch on imbalanced data, and poor calibration as distinct failure modes; shows how to read a ROC curve, pick operating point by business cost, and interpret an ECE number

Common Pitfalls

- Treating the default 0.5 threshold as sacred on imbalanced ticket data; a model that always predicts 0.3 on the positive class will still have high accuracy but zero recall.

- Reporting only accuracy or only F1 at 0.5 when the business cost of false negatives is 5–10× higher than false positives.

- Plotting an ROC curve but never looking at the actual probabilities or drawing the reliability diagram; good ranking does not imply calibrated probabilities.

- Forgetting the intercept column when building the design matrix, which forces the decision boundary through the origin in feature space.

- Comparing your from-scratch weights directly to sklearn without standardizing features or checking the regularization (C=1e10 for no reg) and solver.


Key Concepts Tested

- sigmoid activation

- log loss / binary cross-entropy

- gradient of logistic loss

- decision threshold tuning

- confusion matrix (TP/FP/TN/FN)

- precision, recall, F1-score

- ROC curve and AUC

- reliability diagram / calibration plot

- Expected Calibration Error (ECE)

- NumPy from-scratch implementation

- scikit-learn comparison

- imbalanced classification pitfalls

Next Step

Next: Continue to Decision Trees, Random Forests & Gradient Boosting from Scratch

There you will see how tree-based models naturally handle the same support-ticket urgency task, produce feature importances, and often require different calibration strategies than logistic regression.

References