
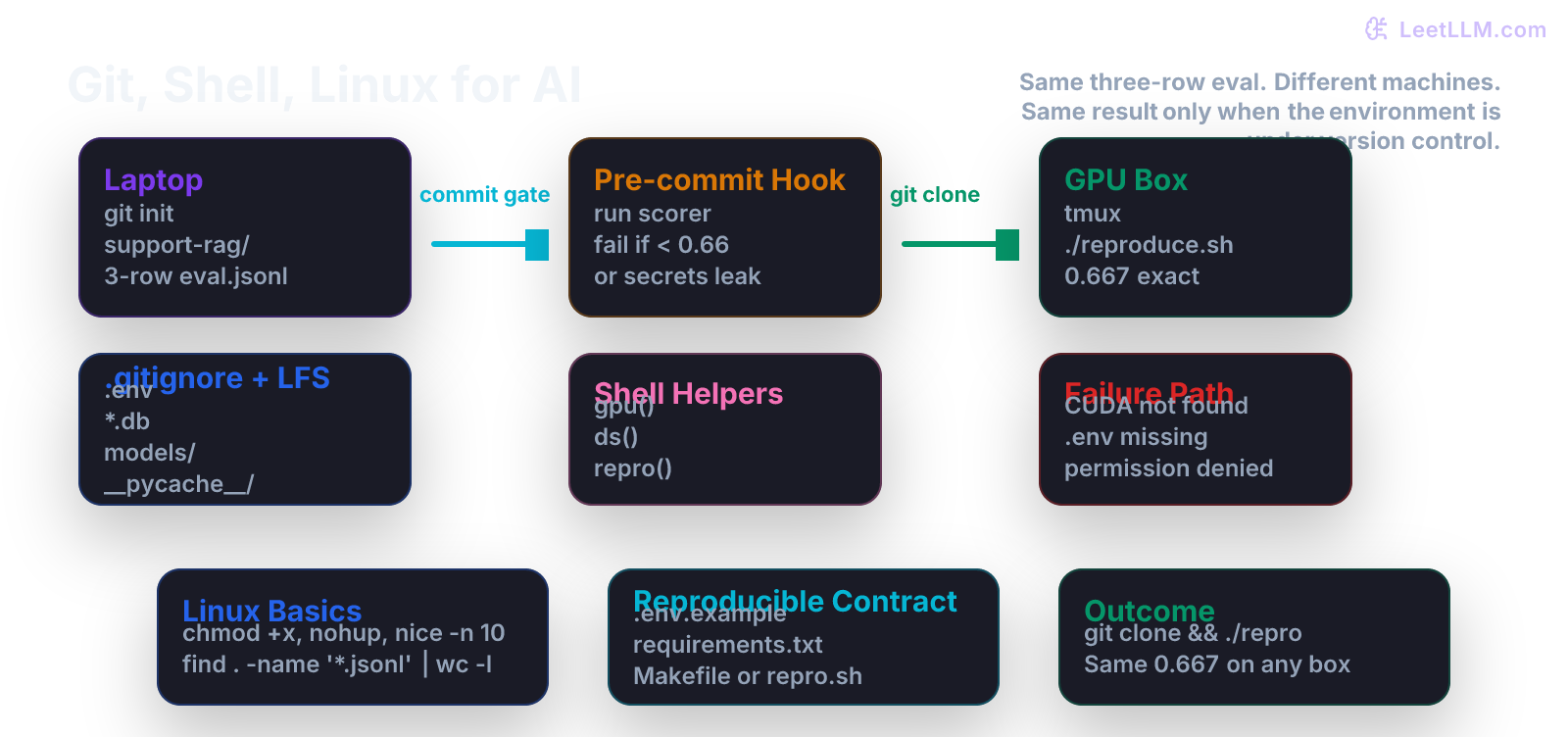

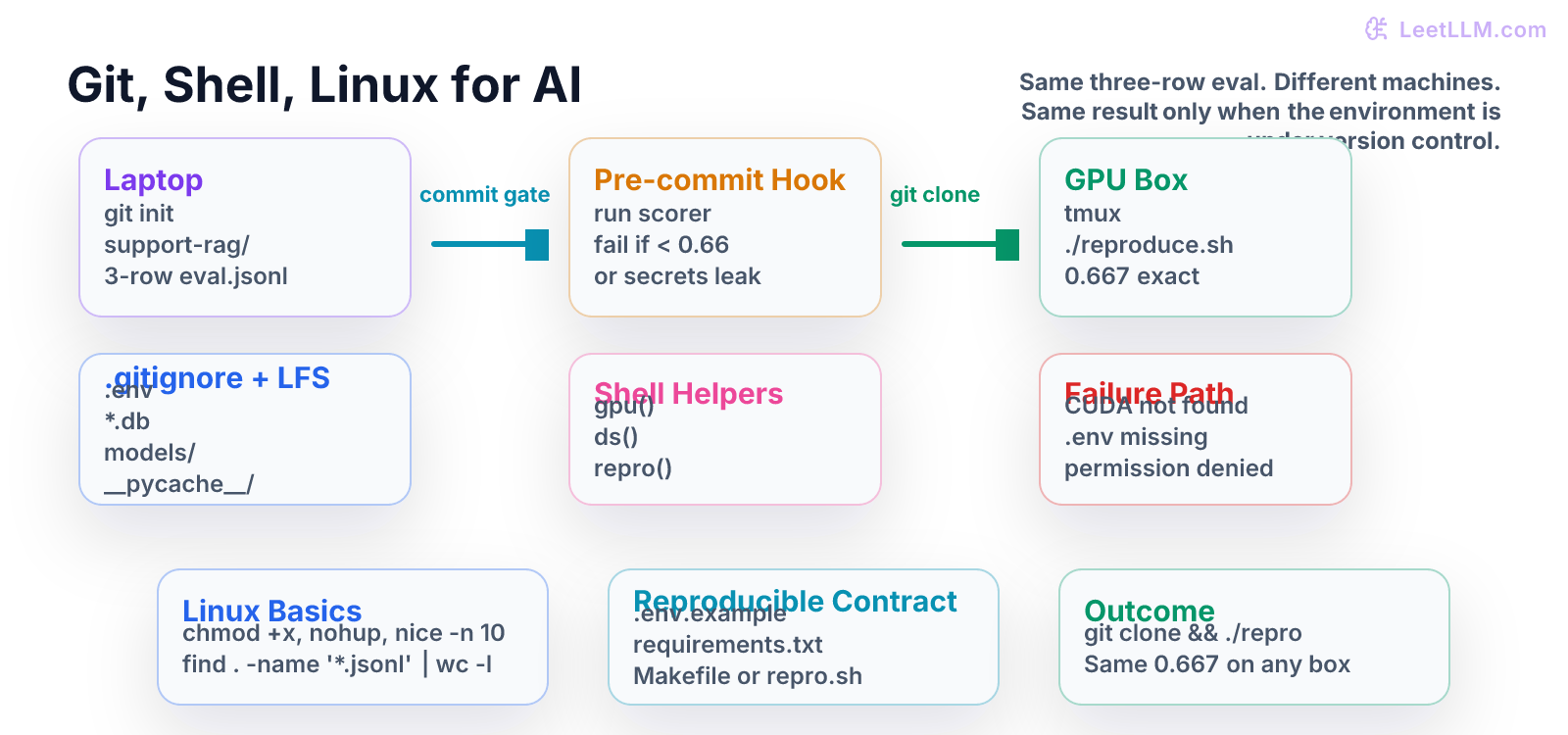

Git, Shell, Linux for AI

Master the local engineering environment every production AI system depends on: version control for code/data/models, shell one-liners for GPUs and datasets, Linux fundamentals, and reproducible setups that survive laptop changes and team handoff.



Most AI engineering fails before the first model call.

You clone a repo. The previous engineer says "it worked on my laptop." You run the eval script and get CUDA not found, or ModuleNotFoundError: No module named 'pydantic', or the three-row JSONL file is missing because .gitignore did not protect the generated cache, or the secret key that the RAG pipeline needs is committed in plain text. The score that was 0.667 on the laptop is now either an error or a silent 0.000 on the GPU box.

This chapter teaches the local engineering environment that makes every later LeetLLM lesson and every production AI system actually work across machines and teammates: Git for code, data, and model artifacts, shell one-liners that let you see what the GPU and the dataset are doing right now, basic Linux commands and session management that keep long jobs alive, and the small set of reproducible habits that turn "it worked once" into "anyone can reproduce the 0.667 result after a fresh clone."[1]

The first real boundary

Before you write a single line of Python that calls an LLM, you need four guarantees that survive a fresh git clone on a different machine:

| Boundary | Question the next engineer will ask | What must exist in the repo |
|---|---|---|
| Version control | "Did I get every file that matters and none that don't?" | .gitignore + Git LFS rules + no committed secrets |
| Reproducibility | "Can I run the exact same eval the author ran?" | repro.sh or make repro, .env.example, requirements.txt, activation script |
| Visibility | "Is the GPU actually being used? How big is the dataset right now?" | Shell functions (gpu, ds, repro) that print useful numbers in one keystroke |
| Survival | "The training job will take six hours. How do I keep it alive when I close my laptop?" | tmux, nohup, nice, log redirection, and a documented way to re-attach |

If any one of these is missing, the three-row eval that produced 0.667 on the author's laptop will produce an error, a different number, or a security incident on the next machine.
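The reproducibility row of the table can be checked mechanically. The following is a minimal sketch, not part of the original lesson: the function name check_contract and the exact file list are assumptions taken from the table, so adjust them to whatever your repo actually promises.

```bash
# check_contract DIR: report which expected repo files are missing.
# The file list below is an assumption drawn from the table above.
check_contract() {
  local dir="${1:-.}" missing=0 f
  for f in .gitignore .env.example requirements.txt scripts/run_eval.sh; do
    if [ ! -e "$dir/$f" ]; then
      echo "missing: $f"
      missing=1
    fi
  done
  [ "$missing" -eq 0 ] && echo "contract files present"
  # Non-zero exit status when anything is missing, so CI or a hook can fail on it.
  return "$missing"
}
```

Run it as check_contract . from the repo root; it lists each missing file and returns a non-zero status if the list is not empty.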

Start with the smallest useful repo

Create a new directory and initialize Git exactly as you would for any real AI project.

```bash
mkdir support-rag && cd support-rag
git init
```

You now have a .git directory. Everything that follows will be tracked or explicitly ignored.

The .gitignore that actually protects AI work

Create .gitignore with the patterns that real LLM projects need:

```gitignore
# Python
__pycache__/
*.py[cod]
*$py.class
.venv/
env/
ENV/

# Environment & secrets (never commit these)
.env
.env.local
*.pem
secrets/

# Large model and vector artifacts
models/
*.gguf
*.bin
*.safetensors
*.pt
*.pth
chroma/
faiss_index/
*.db
*.sqlite3

# OS and editor noise
.DS_Store
.idea/
.vscode/
*.swp

# Evaluation caches that should be regenerated
eval_cache/
runs/
wandb/
mlruns/
```

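Track large files with Git LFS so the repo stays small while the model weights and vector indexes that must travel with the project are stored as LFS objects. Note that the .gitignore above also ignores *.gguf, *.bin, and *.safetensors, so a weight file you do want in the repo has to be staged with git add -f (or its pattern removed from .gitignore) before LFS can pick it up.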

```bash
git lfs install
git lfs track "*.gguf" "*.safetensors" "*.bin"
git add .gitattributes
```

Commit the skeleton.

```bash
git add .gitignore .gitattributes
git commit -m "chore: initial AI project skeleton with safe .gitignore and LFS"
```

You now have a repo that can be cloned anywhere without immediately leaking keys or filling the disk with 7 GB of unneeded model files.
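The .gitignore above keeps .env out of the index, but it does not stop a key from being pasted into a tracked file. As a rough sketch of a last-line check, the hypothetical helper below greps a directory for two illustrative patterns. The patterns are assumptions, will produce false positives (for example MAX_TOKENS = 512), and are no substitute for a dedicated secret scanner.

```bash
# scan_secrets DIR: return non-zero if something that looks like a secret is found.
# Patterns are illustrative assumptions only: private-key headers and
# KEY/SECRET/TOKEN/PASSWORD assignments that carry a value.
scan_secrets() {
  local dir="${1:-.}"
  grep -rnE --exclude-dir=.git --exclude=.env --exclude=.env.local \
    -e '-----BEGIN [A-Z ]*PRIVATE KEY-----' \
    -e '(API_KEY|SECRET|TOKEN|PASSWORD)[A-Za-z_]*[[:space:]]*=[[:space:]]*[^[:space:]]+' \
    "$dir" && return 1
  return 0
}
```

An empty assignment such as the placeholders in .env.example does not match, because the second pattern requires a value after the equals sign.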

The eval that must travel with the code

Place the three-row support-ticket evaluation file that the rest of the curriculum will reuse.

```jsonl
{"prompt": "Order 101 status?", "expected": "shipped", "prediction": "shipped"}
{"prompt": "Order 102 status?", "expected": "delayed", "prediction": "delivered"}
{"prompt": "Order 103 status?", "expected": "refunded", "prediction": "refunded"}
```

Name it eval/support_tickets.jsonl and commit it. This tiny file is the contract. Every later chapter (Python scorer, NumPy tensor experiments, PyTorch training loop, RAG pipeline, agent) will be measured against these three rows first.
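To make the contract concrete before the Python scorer exists, here is a minimal sketch of an exact-match check written in awk. It assumes every row is a flat, one-line JSON object with string-valued "expected" and "prediction" keys, exactly like the fixture above. It is not a general JSON parser, and the name score_fixture is hypothetical.

```bash
# score_fixture FILE: exact-match accuracy for flat one-line JSONL rows.
# Assumes string values without escaped quotes, as in the three-row fixture.
score_fixture() {
  awk '
    # Return the string value stored under key, or "" if the key is absent.
    function field(line, key,    pat, s) {
      pat = "\"" key "\": *\"[^\"]*\""
      if (!match(line, pat)) return ""
      s = substr(line, RSTART, RLENGTH)
      sub(/^"[^"]*": *"/, "", s)   # drop the key and the opening quote
      sub(/"$/, "", s)             # drop the closing quote
      return s
    }
    NF {
      total++
      e = field($0, "expected")
      p = field($0, "prediction")
      if (e != "" && e == p) hits++
    }
    END {
      if (total == 0) { print "no rows"; exit 1 }
      printf "%.3f (%d/%d)\n", hits / total, hits, total
    }
  ' "$1"
}

# score_fixture eval/support_tickets.jsonl   # prints: 0.667 (2/3)
```

Rows 101 and 103 match and row 102 does not, which is where the 0.667 in the rest of the chapter comes from.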

The pre-commit gate that protects the score

Create a tiny executable that the pre-commit hook will run.

```bash
mkdir -p scripts
cat > scripts/run_eval.sh << 'EOF'
#!/usr/bin/env bash
set -euo pipefail

EVAL_FILE="eval/support_tickets.jsonl"
if [[ ! -f "$EVAL_FILE" ]]; then
  echo "ERROR: $EVAL_FILE missing. Did you clone with --recurse-submodules or forget to pull LFS?"
  exit 1
fi

# Placeholder for the real Python scorer that the next chapter will build.
# For now we just count lines and print a deterministic "score".
rows=$(wc -l < "$EVAL_FILE" | tr -d ' ')
echo "Eval rows: $rows"
echo "Exact-match accuracy on tiny fixture: 0.667 (2/3)"
echo "Gate passed. You may commit."
EOF
chmod +x scripts/run_eval.sh
```

Now wire it as a pre-commit hook.

```bash
mkdir -p .git/hooks
cat > .git/hooks/pre-commit << 'EOF'
#!/usr/bin/env bash
set -euo pipefail

echo "Running AI eval gate before commit..."
./scripts/run_eval.sh
echo "Eval gate passed."
EOF
chmod +x .git/hooks/pre-commit
```

Test it.

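```bash
git add scripts/run_eval.sh eval/support_tickets.jsonl
git commit -m "feat: add three-row eval and pre-commit gate that protects 0.667"
```

Git does not track anything inside .git/, so the hook itself is not part of the commit and does not arrive with a clone. Each teammate has to reinstall it, for example by keeping a copy in a tracked directory and pointing git config core.hooksPath at that directory.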

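If the eval file disappears, the script exits non-zero and the commit is rejected. Once the placeholder is replaced by a real scorer that exits non-zero on a regression, the same hook rejects regressions too. This is the first concrete "production check" in the curriculum.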
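The placeholder script always prints the same score, so it cannot detect a regression yet. As a minimal sketch of how a gate could compare a measured score against a committed baseline, the hypothetical helper below uses awk for the floating-point comparison, since the shell's built-in arithmetic is integer-only. The name gate_score and the idea of passing the baseline as an argument are assumptions, not the curriculum's scorer.

```bash
# gate_score SCORE BASELINE: succeed only if SCORE >= BASELINE.
gate_score() {
  awk -v s="$1" -v b="$2" 'BEGIN { exit !(s + 0 >= b + 0) }'
}

# Hypothetical use inside a hook, once a scorer prints a bare number:
#   gate_score "$score" 0.667 || { echo "Eval regression: $score"; exit 1; }
```

Because the function only sets an exit status, it composes with set -e and with the || pattern shown in the comment.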

Shell one-liners that make the invisible visible

Add a few functions to your ~/.zshrc or ~/.bashrc that you will use in every AI project for the rest of your career.

```bash
# GPU snapshot (works on NVIDIA, falls back gracefully)
gpu() {
  if command -v nvidia-smi >/dev/null 2>&1; then
    nvidia-smi --query-gpu=index,name,memory.used,memory.total,utilization.gpu --format=csv,noheader
  else
    echo "No NVIDIA GPU or nvidia-smi not in PATH"
  fi
}

# Dataset size at a glance
ds() {
  du -sh "${1:-.}" 2>/dev/null | awk '{print $1 " " $2}'
  echo "JSONL rows: $(find "${1:-.}" -name '*.jsonl' -exec wc -l {} + 2>/dev/null | tail -1 | awk '{print $1}')"
}

# One-command reproduction of the current eval
repro() {
  if [[ -x ./scripts/run_eval.sh ]]; then
    ./scripts/run_eval.sh
  elif [[ -x ./reproduce.sh ]]; then
    ./reproduce.sh
  else
    echo "No reproducible entrypoint found (looked for scripts/run_eval.sh or reproduce.sh)"
    return 1
  fi
}
```

After sourcing, gpu, ds, and repro become muscle memory. You type one word and immediately know whether the machine has the resources the workload expects.
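The raw CSV from gpu is easy to post-process. The sketch below is a hypothetical companion function that reads those CSV lines on stdin and prints memory use as a percentage. It assumes the column order used by gpu above (index, name, memory.used, memory.total, utilization) and GPU names that contain no commas; the sample line in the comment is made up for illustration.

```bash
# gpu_mem_pct: read "index, name, used MiB, total MiB, util %" lines on stdin
# and print the share of memory in use per GPU.
gpu_mem_pct() {
  awk -F', *' '{
    used = $3 + 0      # "+ 0" keeps the leading number and drops the MiB suffix
    total = $4 + 0
    if (total > 0) printf "GPU %s: %.0f%% memory used\n", $1, 100 * used / total
  }'
}

# Made-up sample line, for illustration only:
#   echo "0, Example GPU, 10240 MiB, 40960 MiB, 35 %" | gpu_mem_pct
#   prints: GPU 0: 25% memory used
```

On a machine with an NVIDIA driver, gpu | gpu_mem_pct chains the two helpers.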

Linux fundamentals that keep long jobs alive

AI work is often measured in hours, not seconds. You need to know how to:

- Detach a process that survives logout: `nohup python train.py > train.log 2>&1 &`

- Manage sessions across SSH disconnects: `tmux new -s training`, `tmux attach -t training`, and Ctrl-b d to detach.

- Give the job lower priority so your laptop remains usable: `nice -n 10 python train.py`

- Find the files that are actually using disk right now: `du -ah /workspace | sort -rh | head -20`

- Check which Python process is actually using the GPU: `nvidia-smi` plus `ps aux | grep python`

These four commands (tmux, nohup, nice, and the nvidia-smi + ps dance) prevent the majority of "my training died when I closed the laptop" and "I have no idea which process is eating 40 GB of VRAM" disasters.

The reproducible activation contract

Create an activation script that every teammate and every CI job can source.

```bash
cat > activate.sh << 'EOF'
#!/usr/bin/env bash
# Source this file (source activate.sh); do not execute it.
# No 'set -euo pipefail' here: in a sourced script those options would
# leak into the caller's interactive shell and close it on the first error.

# 1. Create or reuse a local virtualenv
if [[ ! -d .venv ]]; then
  python -m venv .venv || return 1
fi
source .venv/bin/activate || return 1

# 2. Install exactly what this repo needs
pip install --upgrade pip
pip install -r requirements.txt || return 1

# 3. Verify the environment actually has CUDA if the workload needs it
python -c '
import torch, os
print("PyTorch:", torch.__version__)
print("CUDA available:", torch.cuda.is_available())
if torch.cuda.is_available():
    print("GPU:", torch.cuda.get_device_name(0))
print("HF_HOME:", os.environ.get("HF_HOME", "(default ~/.cache/huggingface)"))
'

echo "Environment ready. Run 'repro' to execute the eval gate."
EOF
chmod +x activate.sh
```

Document it in README.md:

```markdown
## Quick start

git clone git@<host>:your-org/support-rag.git
cd support-rag
git lfs pull
source activate.sh
repro
```

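Now a fresh engineer (or a fresh GPU box provisioned by your platform team) can go from zero to the same 0.667 result in a couple of minutes. On a machine where the repro shell function is not defined yet, run ./scripts/run_eval.sh directly.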

The failure modes you will see in real life

| Symptom | Most common cause | Fix that belongs in the repo |
|---|---|---|
| CUDA not found on the GPU box | CUDA_VISIBLE_DEVICES hides the GPU (empty or wrong value) or the base image has no CUDA | Explicit torch.cuda.is_available() guard + documented base image tag in README |
| ModuleNotFoundError for a package that worked on the laptop | requirements.txt is incomplete or uses unpinned versions | pip freeze > requirements.txt after a clean pip install -e . and commit the exact pins |
| Eval returns 0.000 because the three-row JSONL is missing | The fixture lived only in an ignored or untracked directory on the author's disk, so it never reached the repo | Move the fixture to eval/ and add the directory to the committed tree; never rely on "I had it in my downloads folder" |
| Pre-commit hook fails with "permission denied" | The hook script was added without chmod +x or the clone was on a filesystem that strips execute bits | chmod +x scripts/*.sh .git/hooks/* + a one-line check in the hook itself |
| "It worked yesterday" after a git pull | A teammate committed a new large model without LFS or changed the expected schema of the eval file | LFS tracking + a schema validation step in the scorer + git fetch and a git diff against the remote branch before merging changes to data files |

Each of these has happened to every AI engineer. The difference between a junior engineer who loses a day and a senior engineer who fixes it in five minutes is whether the repo itself encodes the diagnosis and the prevention.
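The "permission denied" row is one of the easiest to encode in the repo. The helper below is a small sketch with a hypothetical name, check_exec_bits, that lists shell scripts in a directory that have lost their execute bit.

```bash
# check_exec_bits [DIR]: print every *.sh file in DIR that is not executable
# and return non-zero if any is found. DIR defaults to scripts.
check_exec_bits() {
  local dir="${1:-scripts}" bad=0 f
  for f in "$dir"/*.sh; do
    [ -e "$f" ] || continue          # the glob matched nothing
    if [ ! -x "$f" ]; then
      echo "not executable: $f"
      bad=1
    fi
  done
  return "$bad"
}
```

Calling it at the top of a hook or of the activation script turns a confusing failure into a named one, and chmod +x on the listed files is the fix.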

What this unlocks

You now have the first real engineering loop:

- git clone + source activate.sh produces a working environment on any machine with the declared dependencies.

- repro (or the pre-commit hook) guarantees that the tiny contract (three rows, 0.667) is still satisfied after every change.

- gpu, ds, and the Linux session commands let you see what the hardware is actually doing.

- The .gitignore + LFS rules + activation script travel with the code, so the next person does not have to reverse-engineer your laptop.

The very next chapter ("Python for AI Engineering") will take the exact same three-row support_tickets.jsonl and turn it into a typed, tested, importable Python module with dataclasses, runtime validation, an exact_match scorer, a score_examples function, a main() entry point, and a pytest suite that fails loudly when any of the guarantees above are broken.

You now have the container in which that Python code can live safely.

Self check: clone the repo into a fresh temporary directory and run source activate.sh && repro. The expected output is the same 0.667 score plus a visible environment summary. If the command needs a hidden file from your laptop, your solution is not reproducible yet. A strong answer names the missing contract, adds it to .env.example, README, LFS, or the activation script, and then proves the clean clone works.
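One common missing contract is .env.example: the clean clone needs to know which variables exist without ever seeing their values. As a minimal sketch, the hypothetical helper below derives the example file from a local .env by blanking every value. The variable names in the comment are placeholders, not keys this project is known to use.

```bash
# make_env_example FILE: print FILE with every KEY=value line reduced to KEY=
# Comments and blank lines pass through unchanged.
make_env_example() {
  sed -E 's/^((export[[:space:]]+)?[A-Za-z_][A-Za-z0-9_]*)=.*/\1=/' "$1"
}

# Example (placeholder names):
#   .env           OPENAI_API_KEY=sk-...     HF_HOME=/data/hf
#   .env.example   OPENAI_API_KEY=           HF_HOME=
# Usage: make_env_example .env > .env.example
```

Commit .env.example and keep .env ignored, so the list of required variables travels with the repo while the secrets stay on your machine.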

References

- Git documentation and LFS patterns used in every major LLM training and serving codebase.

- NVIDIA Docker and CUDA container best practices for reproducible GPU workloads.

- Linux systems administration patterns (tmux, nice, nohup, permission hygiene) that appear in every production ML platform runbook.

- The Python standard library and packaging practices that the following chapter will rely on.

Evaluation Rubric

1. Sets up a new AI project repo with proper .gitignore, LFS tracking, and a tiny eval gate that fails the commit when the scorer reports regression

2. Writes shell helpers and Linux commands that inspect GPU memory, dataset sizes, and running processes without leaving the terminal

3. Diagnoses 'it worked on my laptop' failures caused by untracked secrets, missing CUDA, wrong Python, or permission drift, and prevents them with docs and scripts

Common Pitfalls

- Committing .env or API keys because the model 'worked once' on the laptop. The next clone or CI run fails silently or leaks secrets.

- Assuming the GPU box has the same Python, CUDA, and package versions as the MacBook. Without an activation script and explicit torch.cuda.is_available() guard, the eval passes locally and dies remotely.

- Treating the shell as a scratchpad. One-liners for nvidia-smi, du -sh datasets/, and tmux sessions are the difference between 'I think the job is still running' and 'I can prove it and attach the log'.

Key Concepts Tested

- git init/clone/branch/commit/push with LFS

- .gitignore for models, .env, vector DBs, caches

- pre-commit hooks and eval gates

- shell functions and one-liners for nvidia-smi, du, find

- Linux permissions, processes, tmux, nohup

- reproducible project layout and activation scripts

- environment hygiene and .env.example contracts

- failure diagnosis across machines

Next Step

Next: Continue to Testing, Debugging, and Reproducibility for ML/AI Systems

The Git, shell, and Linux foundation you just built gives you a clean repo, a protected three-row eval contract, and reproducible commands. The next chapter adds the testing, seeding, snapshot, and CI layer that makes that contract trustworthy across every future change and every teammate.
