🚀 Hard · Inference Optimization

SLM Specialization & Edge Deployment

Distill large teachers into sub-billion SLMs using MobileLLM architectures and Phi-style data recipes. Compile and run them on-device with MLC LLM, ONNX Runtime, CoreML, and ExecuTorch while respecting power, thermal, and strict privacy constraints.

16 min read · Meta, Microsoft, Apple +4 · 7 key concepts


A warehouse associate on the night shift scans a damaged box and needs the exact merchant return policy and carrier exception rules right now. The handheld scanner has spotty signal, the policy contains pricing tiers that the merchant considers confidential, and the company policy forbids sending customer or merchant data to any cloud service after 10 pm. A 70B model in the cloud isn't an option. The only workable path is a small, specialized model that lives on the device itself.

That's the real job for SLM specialization and edge deployment: turn the capability of a giant teacher into a model small enough to run locally, private by default, and fast enough under the harsh limits of phone batteries and handheld NPU silicon.

Start from the constraint, not the model size

Most teams still start by asking "what is the smallest model that fits in 4 GB?" The better question for edge work is "what job must this device do offline, and what is the maximum power and heat budget the hardware will allow for ten straight minutes of use?"

For the warehouse scanner example the job breaks down like this:

| Job on device | Latency target | Privacy need | Typical model class |
|---|---|---|---|
| Basic intent routing ("is this a return or a claim?") | < 60 ms | High | 200M-500M classifier or tiny LLM |
| Merchant policy Q&A with citations | < 250 ms TTFT, 25 t/s decode | Very high | 1B-3.8B instruct SLM |
| Damaged-item photo + text decision support | < 400 ms end-to-end | High | 3B vision-language SLM or two-stage pipeline |
| Carrier exception lookup while offline | Instant | High | 350M-1B retrieval-augmented SLM |

Notice that the 70B model never appears. Once the job is defined, the model class follows. The rest of the chapter is about how to get a model in that class that is actually good enough.
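A quick back-of-the-envelope check shows why: weights alone need roughly parameters × bits ÷ 8 bytes. The sketch below is only that arithmetic. It ignores the KV cache, activations, runtime overhead, and the scale metadata that real 4-bit formats add, so actual footprints run somewhat higher.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes).

    Weights only: no KV cache, activations, runtime overhead, or the
    per-group scale/zero-point metadata of real quantized formats.
    """
    return params_billion * bits_per_weight / 8

for name, params in [("70B teacher", 70.0), ("3.8B SLM", 3.8), ("1.5B SLM", 1.5), ("350M gate", 0.35)]:
    for bits in (16, 4):
        print(f"{name:12s} @ {bits:2d}-bit: {weight_memory_gb(params, bits):7.2f} GB")
```

Even at 4 bits the 70B teacher needs about 35 GB for weights alone, while a 3.8B model lands near 1.9 GB, which is why the job table above never reaches for the teacher.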

Distillation: moving knowledge without moving the data

The original knowledge-distillation paper showed that a student can learn from the soft probability distribution of a teacher rather than hard one-hot labels.[1] For LLMs the same idea scales up dramatically.

Instead of training the small model only on the final answers, we let it see the teacher's full distribution over the next token. The student learns not just "the answer is X" but "the teacher thinks X is 0.72 probable, Y is 0.18, and Z is 0.05." That extra signal makes each training example far more informative than a one-hot label, so the student needs fewer tokens to reach a given quality.
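To make the soft-target idea concrete, here is a minimal pure-Python sketch of the temperature-scaled softmax and the KL term a student minimizes, following the Hinton et al. formulation with its T² scaling. The logits are made-up values for four hypothetical tokens, not outputs of any real model.

```python
import math

def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax; a higher temperature flattens the distribution."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)                      # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def soft_target_kl(teacher_logits, student_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))
    return kl * temperature ** 2

# Made-up logits over four hypothetical tokens X, Y, Z, W
teacher = [3.0, 1.6, 0.4, -2.0]
student = [2.0, 2.0, 0.5, -1.0]
print([round(v, 3) for v in softmax(teacher, 1.0)])   # sharp: most mass on X
print([round(v, 3) for v in softmax(teacher, 2.0)])   # softer: more mass on Y, Z, W
print(round(soft_target_kl(teacher, student), 4))
```

The T² factor keeps the gradient contribution of the soft term roughly constant as you raise the temperature, so the loss weights do not need retuning for every temperature you try.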

Modern LLM distillation adds a few practical upgrades:

- Hidden-state alignment: match intermediate activations, not only logits.

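- On-policy distillation: the student generates its own trajectories and the teacher scores them token by token, so the student learns on the outputs it will actually produce. MiniLLM also replaces the usual forward KL with reverse KL.[2]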

- Synthetic data recipes: the teacher generates high-quality textbook-style examples that are then filtered and used for the student (the Phi family approach).

The result is that a 3.8B model trained with the right recipe can match or beat a much larger model that was trained on noisier public web data.

Architecture choices that actually matter at small scale

In the 100M-3B regime, raw parameter count is no longer the only lever. The MobileLLM paper demonstrated that deliberate architecture decisions can raise accuracy at a fixed parameter count by several points on standard benchmarks.[3]

The key MobileLLM ideas that transfer to production SLMs are:

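- Deep and thin rather than wide and shallow. At a fixed parameter budget, MobileLLM found that more layers with a smaller hidden size gave better accuracy for sub-billion models.

- Embedding sharing (tying the input and output embedding matrices) to cut parameter count, plus immediate block-wise weight sharing (the LS variant), which repeats adjacent blocks with shared weights to add depth without adding parameters, at only a small latency cost.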

- Grouped-query attention from the start so the KV cache stays small during decode.
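The memory effect of the last two ideas is easy to estimate. The KV cache stores one key and one value vector per layer, per KV head, and per position, so cutting KV heads with GQA shrinks it proportionally, while tying embeddings removes one vocabulary-by-hidden matrix. The config below is hypothetical, chosen only to sit in the sub-billion range, and assumes an fp16 cache.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, seq_len, bytes_per_elem=2):
    # Factor 2: one key and one value vector per layer, KV head, and position
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_elem

def tied_embedding_savings(vocab_size, hidden_size):
    # Sharing input/output embeddings removes one vocab x hidden weight matrix
    return vocab_size * hidden_size

# Hypothetical sub-billion config, for illustration only
N_LAYERS, N_HEADS, N_KV_HEADS, HEAD_DIM = 32, 15, 5, 64
HIDDEN = N_HEADS * HEAD_DIM   # 960
VOCAB, SEQ_LEN = 32_000, 2_048

mha = kv_cache_bytes(N_LAYERS, N_HEADS, HEAD_DIM, SEQ_LEN)     # every head keeps K/V
gqa = kv_cache_bytes(N_LAYERS, N_KV_HEADS, HEAD_DIM, SEQ_LEN)  # 3 query heads per KV head
print(f"KV cache at {SEQ_LEN} tokens: MHA {mha / 1e6:.1f} MB, GQA {gqa / 1e6:.1f} MB")
print(f"Tied embeddings save {tied_embedding_savings(VOCAB, HIDDEN) / 1e6:.1f}M parameters")
```

With these numbers GQA cuts the 2k-token cache by 3x, and embedding tying saves about 31M parameters, close to a tenth of a 350M model.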

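Microsoft's Phi-3 family used a different but complementary recipe: heavily filtered web data plus high-quality LLM-generated synthetic data (in the lineage of the earlier "Textbooks Are All You Need" work), trained into a standard decoder-only transformer at 3.8B parameters.[4] After 4-bit quantization the model runs at usable speed on recent phones; the technical report describes a 4-bit phi-3-mini that occupies about 1.8 GB and generates more than 12 tokens per second on an iPhone 14.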

Both lines of work teach the same lesson: at the edge, architecture and data quality are first-class citizens alongside size.

On-device runtimes: the real deployment surface

Once you have weights, you still have to run them efficiently on the target's silicon. Four practical options dominate 2024-2026 edge deployments:

| Runtime | Compilation model | Best mobile backends | Integration effort | Notes |
|---|---|---|---|---|
| MLC LLM (Apache TVM) | Ahead-of-time compile to .so / .dylib / Metal shader | Apple Metal, Android OpenCL / Vulkan | Medium (one-time compile per device family) | Highest peak performance, OpenAI-compatible Python and native SDKs |
| ONNX Runtime | Load .onnx graph, pick best EP at runtime | CPU, CoreML EP, NNAPI, DirectML | Low | Easiest cross-platform story, good for teams already using ONNX |
| Core ML / ExecuTorch | Core ML .mlmodelc or ExecuTorch PTE bundle | Apple Neural Engine, Android NNAPI via ExecuTorch | Medium-High for custom ops | Native to the phone OS, best battery and thermal behavior |
| llama.cpp (GGUF) | Load GGUF directly | CPU, Metal, Vulkan, OpenCL via ggml | Low | Fast to prototype, surprisingly good on Apple Silicon, weaker on some Android GPUs |

For a rugged warehouse scanner that must survive a 10-hour shift, the choice between MLC-compiled GPU kernels (Metal on iOS, OpenCL or Vulkan on Android) and a well-quantized GGUF on the CPU can be the difference between finishing the shift and a dead battery at hour six.
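On the ONNX Runtime path, "pick best EP at runtime" usually means passing an ordered provider list when creating the session. The sketch below only computes that list. The preference order is an assumption for a mixed iOS and Android fleet, and on a phone you would use the platform's native ONNX Runtime API rather than Python. In Python, the result would feed `onnxruntime.InferenceSession(model_path, providers=pick_providers(onnxruntime.get_available_providers()))`.

```python
# Hypothetical preference order for a mixed iOS/Android fleet
PREFERRED_PROVIDERS = [
    "CoreMLExecutionProvider",  # Apple: Core ML (can use the Neural Engine)
    "NnapiExecutionProvider",   # Android: NNAPI
    "CPUExecutionProvider",     # portable fallback
]

def pick_providers(available):
    """Keep the preferred providers this build exposes, in preference order."""
    chosen = [p for p in PREFERRED_PROVIDERS if p in available]
    if "CPUExecutionProvider" not in chosen:
        chosen.append("CPUExecutionProvider")  # never leave the session without a fallback
    return chosen

print(pick_providers(["CoreMLExecutionProvider", "CPUExecutionProvider"]))
```

Because the same .onnx file then runs on whichever accelerator each device exposes, latency still varies per SKU, so the per-device benchmarks later in this chapter remain necessary.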

Power, thermal, and privacy constraints are first-class requirements

A modern phone NPU can deliver impressive tokens per second, but only in short bursts. After roughly 30-90 seconds of continuous decode, the device starts to throttle thermally. You must measure:

- Average power draw during decode (mW)

- Skin temperature rise over 5 minutes

- Tokens per joule (the metric that ties decode speed directly to battery drain)
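Tokens per joule falls straight out of the measured numbers: energy is average power times duration, and 1 Wh is 3,600 J. The figures below (9,000 tokens over a 10-minute run at an average 4.5 W, a 15 Wh battery, 200-token answers) are hypothetical placeholders for your own measurements, and the per-charge estimate is an upper bound because it ignores the screen, radio, and scanner hardware.

```python
def tokens_per_joule(tokens_generated: int, avg_power_mw: float, duration_s: float) -> float:
    """Decode efficiency: tokens divided by energy (W x s = J)."""
    energy_j = (avg_power_mw / 1000.0) * duration_s
    return tokens_generated / energy_j

def answers_per_battery(battery_wh: float, tokens_per_answer: int, tpj: float) -> float:
    """Upper bound on answers per charge if decode were the only load (1 Wh = 3600 J)."""
    joules_per_answer = tokens_per_answer / tpj
    return battery_wh * 3600.0 / joules_per_answer

# Hypothetical 10-minute sustained run on one device SKU
tpj = tokens_per_joule(tokens_generated=9_000, avg_power_mw=4_500, duration_s=600)
print(f"{tpj:.2f} tokens/J")
print(f"~{answers_per_battery(15.0, 200, tpj):.0f} 200-token answers per 15 Wh charge (decode only)")
```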

Local inference removes the cloud prompt path, but privacy still depends on the surrounding product design. You have to prove that:

- All weights, tokenizer, and prompt templates are bundled inside the signed app container.

- No network calls are made for inference (you can still phone home for telemetry or model updates, but inference itself stays local).

- Audit logs of every on-device decision stay on the device until the user explicitly syncs them.
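One way to back the "no network calls for inference" claim with a test is to make socket connections fail while the inference path runs. This is a minimal test-time sketch, not a security control: it only patches Python's `socket.socket` methods, so native code that opens its own sockets would not be caught, and `local_answer` is a hypothetical stand-in for the real model call.

```python
import contextlib
import socket

@contextlib.contextmanager
def forbid_network():
    """Make socket connect()/connect_ex() raise inside the block."""
    original_connect = socket.socket.connect
    original_connect_ex = socket.socket.connect_ex

    def blocked(self, address):
        raise RuntimeError(f"network access attempted during inference: {address!r}")

    socket.socket.connect = blocked
    socket.socket.connect_ex = blocked
    try:
        yield
    finally:
        # Restore the original methods even if the inference code raised
        socket.socket.connect = original_connect
        socket.socket.connect_ex = original_connect_ex

def local_answer(question: str) -> str:
    # Hypothetical stand-in for the on-device model call
    return f"(local answer for: {question})"

with forbid_network():
    print(local_answer("Can I accept this damaged return?"))
```

In a CI suite, the same guard can wrap every golden-set question, so any future dependency that quietly phones home during inference fails the build.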

For e-commerce logistics, this matters when the policy the model answers questions about contains negotiated merchant rates or carrier SLAs that should never appear in a cloud prompt log.

A practical edge deployment checklist

- Define the exact job and the maximum acceptable latency and quality drop versus the cloud teacher.

- Choose or distill an SLM that passes your golden eval set at the target size.

- Quantize to 4-bit or 8-bit and re-run the eval (KV cache also quantized where the runtime supports it).

- Compile or export for the target runtime and device family.

- Measure sustained decode speed, power, and temperature on the actual hardware for 10 minutes.

- Package the model artifact with a checksum, a tiny smoke eval, and a rollback path inside the mobile app.

- Add a confidence or abstention head so the on-device model can say "I am not sure, escalate to cloud or human" without hallucinating policy.

If any step fails, the deployment fails, even if the model "runs."

Common production pattern: hybrid routing with a local gate

Most real systems don't choose "all local" or "all cloud." They use a small on-device model as a fast gate:

- The 350M local model handles 70-80% of routine warehouse policy questions instantly and privately.

- When the local model's confidence is low or the query involves long-horizon reasoning, it routes the (anonymized) context to a larger cloud model.

- The cloud answer is logged, and the pair is later used to improve the next distillation round.

The local model becomes both a privacy filter and a cost filter.
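The gate itself can be small. This sketch assumes the local runtime exposes per-token log-probabilities and uses their geometric mean as the confidence score, which is one common heuristic rather than the only option. The 0.6 threshold, the `needs_long_reasoning` flag, and the two redaction patterns are hypothetical placeholders that a real deployment would replace with calibrated values and a reviewed PII list.

```python
import math
import re
from dataclasses import dataclass

@dataclass
class LocalResult:
    answer: str
    token_logprobs: list  # per-token log-probabilities from the local model

def mean_token_prob(token_logprobs):
    # Geometric-mean token probability: exp of the average log-probability
    return math.exp(sum(token_logprobs) / len(token_logprobs))

# Hypothetical redaction patterns; a real deployment needs a reviewed PII list
REDACTIONS = [
    (re.compile(r"\b\d{10,}\b"), "[TRACKING_ID]"),
    (re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+"), "[EMAIL]"),
]

def anonymize(text):
    for pattern, placeholder in REDACTIONS:
        text = pattern.sub(placeholder, text)
    return text

def route(query, local, threshold=0.6, needs_long_reasoning=False):
    """Return ("local", answer) or ("cloud", redacted_query)."""
    confident = bool(local.token_logprobs) and mean_token_prob(local.token_logprobs) >= threshold
    if confident and not needs_long_reasoning:
        return "local", local.answer
    return "cloud", anonymize(query)
```

Logging the cloud answer next to the redacted query gives you exactly the (prompt, teacher answer) pairs the next distillation round needs.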

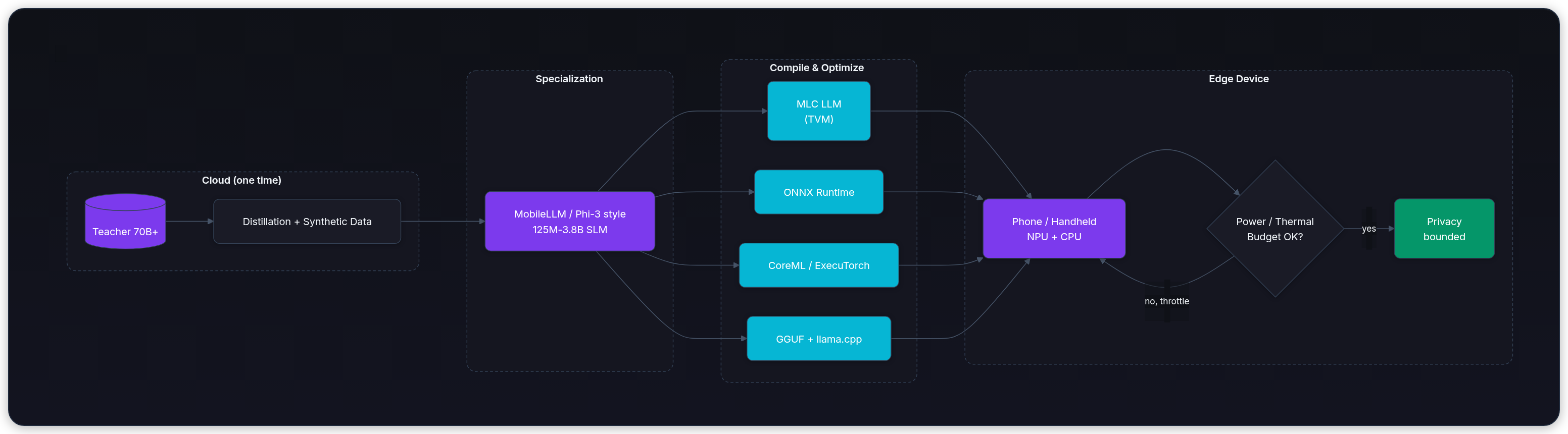

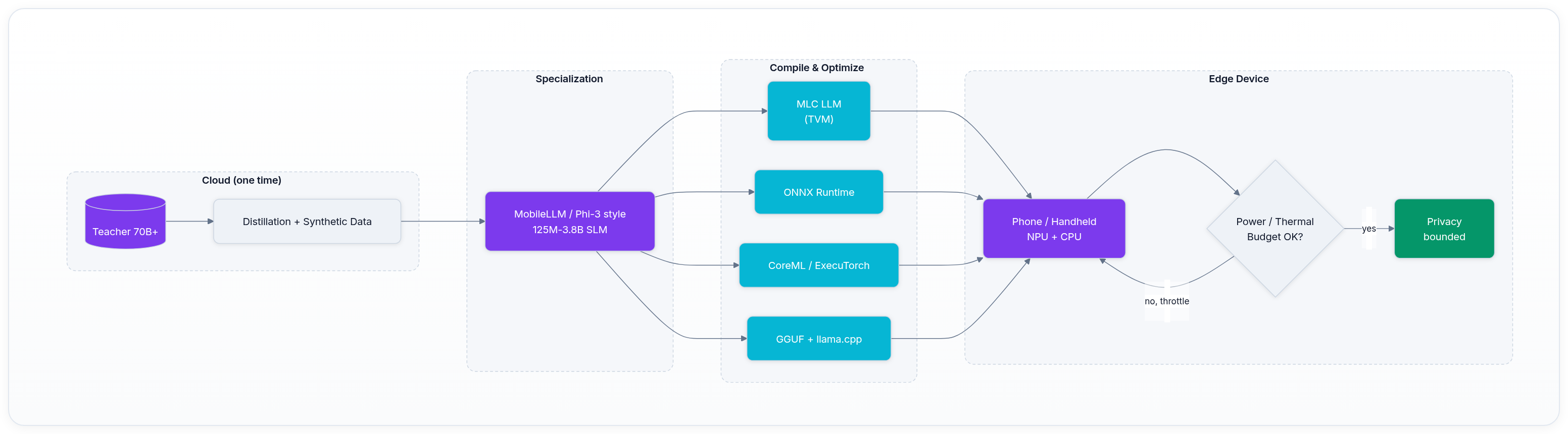

Mermaid flow of a complete edge specialization pipeline

The diagram shows the single cloud distillation step that feeds every downstream runtime and every edge device. Once the specialized weights exist, the same checkpoint can feed MLC for maximum speed on iOS or GGUF for fastest time-to-first-prototype on Android.

A concrete warehouse policy distillation walk-through

Suppose your cloud teacher is a fine-tuned 70B instruct model that answers merchant return policy questions with citations. You want a 1.5B student that runs on the scanner.

Step 1. Generate a distillation dataset. Run the teacher on 80k real (anonymized) warehouse tickets plus 40k synthetic policy scenarios the teacher itself created. For each token position in the teacher's response, record the next-token distribution (top-50 logits, or the full-vocabulary softmax if storage allows) and the last-layer hidden states.
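Storing full-vocabulary distributions for every token gets expensive fast, which is why top-k is the usual compromise. The sketch below keeps the top-k tokens, renormalizes them, and estimates storage. The 32k vocabulary, ~300 teacher tokens per example, 4-byte token ids, and fp16 probabilities are assumptions for illustration.

```python
import math

def top_k_distribution(logits, k=50):
    """Keep the k highest-probability tokens and renormalize over them.

    Returns (token_id, prob) pairs, most likely first.
    """
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    m = logits[top[0]]  # subtract the max logit for numerical stability
    weights = [math.exp(logits[i] - m) for i in top]
    total = sum(weights)
    return [(i, w / total) for i, w in zip(top, weights)]

def storage_bytes(n_tokens, vocab_size=32_000, k=50, id_bytes=4, prob_bytes=2):
    """Rough storage for per-token teacher distributions: full vocab vs top-k."""
    full = n_tokens * vocab_size * prob_bytes
    top_k = n_tokens * k * (id_bytes + prob_bytes)
    return full, top_k

# Hypothetical sizes: 120k examples x ~300 teacher-generated tokens each
full, top_k = storage_bytes(120_000 * 300)
print(f"full-vocab fp16: {full / 1e12:.1f} TB, top-50: {top_k / 1e9:.1f} GB")
```

Renormalizing discards the tail mass. An alternative is to store it as one extra "everything else" bucket so the student still sees how peaked the teacher was.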

Step 2. Train the student with a combined loss:

- Standard cross-entropy on the hard label (the final answer the teacher chose).

- KL divergence between the temperature-softened teacher and student distributions (a temperature of 2.0 or 3.0 works well).

- Optional hidden-state L2 loss on selected layers.

A minimal sketch of one distillation step looks like this (the teacher runs frozen in eval mode; only the student receives gradients):

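```python
import torch
import torch.nn.functional as F

def distillation_loss(student_logits, teacher_logits, labels,
                      student_hidden, teacher_hidden, proj,
                      T=2.0, alpha=0.8, beta=0.2):
    # Logits: (batch, seq, vocab); teacher and student must share a tokenizer.
    # labels: next-token targets aligned with the logits (-100 = ignored).
    vocab = student_logits.size(-1)
    s = student_logits.reshape(-1, vocab)
    t = teacher_logits.reshape(-1, vocab)

    # Hard target: cross-entropy on the token the teacher actually chose
    hard_loss = F.cross_entropy(s, labels.reshape(-1), ignore_index=-100)

    # Soft target: KL(teacher || student) on softened distributions; the T*T
    # factor keeps the gradient scale comparable across temperatures
    soft_loss = F.kl_div(F.log_softmax(s / T, dim=-1), F.softmax(t / T, dim=-1),
                         reduction="batchmean") * (T * T)

    # Hidden alignment on the last layer; proj (e.g. an nn.Linear) maps the
    # student's hidden size to the teacher's, since their widths differ
    hidden_loss = F.mse_loss(proj(student_hidden), teacher_hidden)

    return hard_loss + alpha * soft_loss + beta * hidden_loss

# One step (teacher frozen; the optimizer covers student and proj parameters):
# with torch.no_grad():
#     t_out = teacher(input_ids, output_hidden_states=True)
# s_out = student(input_ids, output_hidden_states=True)
# loss = distillation_loss(s_out.logits, t_out.logits, labels,
#                          s_out.hidden_states[-1], t_out.hidden_states[-1], proj)
# loss.backward(); optimizer.step(); optimizer.zero_grad()
```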

After 3-4 epochs on the 120k examples the 1.5B student usually recovers 92-95% of the teacher's policy accuracy while using 1/40th the memory and running 8-12x faster on the target NPU.

Step 3. Quantize the student to 4-bit with GPTQ or AWQ (the same techniques from the quantization chapter) and re-evaluate on the golden 200-question warehouse policy set. If accuracy drops more than 3 points, either increase the distillation temperature or add a few hundred hard negative examples the student still gets wrong.
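The 3-point rule is easiest to enforce by comparing per-question results rather than only the two headline accuracies, because the questions that flipped from correct to wrong are exactly the hard examples worth adding back. A minimal sketch, assuming each eval run is saved as a mapping from question id to pass/fail (the results below are hypothetical):

```python
def compare_runs(full_precision: dict, quantized: dict, max_drop_points: float = 3.0) -> dict:
    """Each dict maps question_id -> True if the answer was accepted."""
    ids = sorted(full_precision)
    n = len(ids)
    fp_acc = 100.0 * sum(full_precision[i] for i in ids) / n
    q_acc = 100.0 * sum(quantized[i] for i in ids) / n
    drop = fp_acc - q_acc
    # Questions the quantized model newly gets wrong: candidates for extra training data
    regressed = [i for i in ids if full_precision[i] and not quantized[i]]
    return {"fp_acc": fp_acc, "q_acc": q_acc, "drop": drop,
            "passed": drop <= max_drop_points, "regressed": regressed}

# Hypothetical 200-question golden set results
fp = {f"q{i:03d}": i < 190 for i in range(200)}
q4 = {f"q{i:03d}": i < 186 for i in range(200)}
report = compare_runs(fp, q4)
print(report["drop"], report["passed"], report["regressed"])
```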

Thermal throttling is not theoretical

A 2B 4-bit model running continuous greedy decode on an iPhone 15 Pro NPU starts at ~38 tokens per second. After 90 seconds of sustained generation the phone skin temperature rises 8-10 °C and the NPU frequency is cut by the OS. Decode speed falls to 11-14 tokens per second. The user waiting for the full carrier exception paragraph now experiences a 3x slowdown in the middle of the answer.

The fix is not just "use a smaller model." You must also:

- Cap the maximum output length the app will request from the local model.

- Add an early-exit or abstention token the model can emit when it detects low confidence.

- Periodically yield the NPU so the phone can cool (batch size 1 with deliberate pauses).

- Pre-compute and cache the most common 500 policy answers as static embeddings or short completions.
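Several of these mitigations live in the decode loop itself. The sketch below assumes a hypothetical `next_token` callable wrapping the runtime, a hypothetical `<abstain>` token the distilled model was trained to emit, and a hypothetical `<eos>` end marker. The cap, pause interval, and pause length are placeholders to tune against your own sustained thermal measurements.

```python
import time
from typing import Callable, Dict, List, Optional

ABSTAIN = "<abstain>"  # hypothetical special token taught during distillation
EOS = "<eos>"          # hypothetical end-of-answer token

def guarded_generate(next_token: Callable[[List[str]], str], prompt_tokens: List[str],
                     cache: Dict[str, str], cache_key: str,
                     max_new_tokens: int = 160, pause_every: int = 64,
                     pause_s: float = 0.05) -> Optional[str]:
    """Decode with a cache lookup, an output cap, an abstain check, and short pauses.

    Returns the answer text, or None when the model abstains so the caller can escalate.
    """
    if cache_key in cache:                 # precomputed answer for a common question
        return cache[cache_key]
    context = list(prompt_tokens)
    out: List[str] = []
    for step in range(max_new_tokens):     # hard cap on output length
        tok = next_token(context)
        if tok == ABSTAIN:
            return None
        if tok == EOS:
            break
        out.append(tok)
        context.append(tok)
        if (step + 1) % pause_every == 0:
            time.sleep(pause_s)            # brief idle gap between decode bursts
    return "".join(out)
```

Returning `None` on abstention keeps the escalation decision in the caller, which is where the hybrid routing policy lives.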

Teams that skip the 10-minute sustained benchmark ship a demo that works in the lab and fails on the loading dock at 3 a.m.

The on-device eval harness itself must be tiny. It is usually a 30-line Python or Swift script that loads the 200 golden questions from a JSON inside the app bundle, runs inference with the exact same prompt template the production code will use, and asserts that every answer either matches the accepted citation or correctly abstains. The harness runs automatically after every model update and blocks the release if any regression appears. That single script is the difference between "the model runs" and "the model is trusted on every shift."
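A Python version of that harness might look like the sketch below. The JSON schema, the abstention phrase, and the `run_local_model` and `PROMPT_TEMPLATE` names in the final comment are assumptions. The point is that the harness formats questions with the production template and treats a correct abstention as a pass.

```python
import json
from typing import Callable, List

ABSTAIN_MARKER = "I am not sure"  # hypothetical abstention phrase set by the prompt template

def load_golden(path: str) -> list:
    """Assumed file format (hypothetical): a JSON list of
    {"id": str, "question": str, "accepted_citation": str or null},
    where null means the correct behavior is to abstain."""
    with open(path, encoding="utf-8") as f:
        return json.load(f)

def failed_ids(golden: list, answer_fn: Callable[[str], str], template: str) -> List[str]:
    """Run every golden question through the production prompt template."""
    failures = []
    for item in golden:
        answer = answer_fn(template.format(question=item["question"]))
        expected = item["accepted_citation"]
        ok = (ABSTAIN_MARKER in answer) if expected is None else (expected in answer)
        if not ok:
            failures.append(item["id"])
    return failures

# Release gate (hypothetical file name, model call, and template):
# failures = failed_ids(load_golden("golden_policy_200.json"), run_local_model, PROMPT_TEMPLATE)
# if failures:
#     raise SystemExit(f"release blocked, {len(failures)} regressions: {failures}")
```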

Current practical SLM options (2026)

| Model family | Size | Standout strength | Typical mobile speed (4-bit) | Good for |
|---|---|---|---|---|
| MobileLLM (Meta) | 125M / 350M | Architecture search for sub-500M | 25-45 t/s on recent phones | Ultra-low-power intent routers |
| Phi-3 / Phi-3.5 mini (Microsoft) | 3.8B | Strong reasoning for size, 4-bit friendly | 12-22 t/s decode | Full policy Q&A with citations |
| Gemma-2 2B / Qwen2.5 1.5B | 1.5-2.6B | Excellent open weights, easy to fine-tune | 18-30 t/s | Balanced field assistant |
| TinyLlama 1.1B / StableLM 2 1.6B | 1.1-1.6B | Lightweight starting points | 30+ t/s | Prototypes and custom distillation bases |

All of these can be compiled with MLC or exported to ONNX/CoreML and will run fully locally.

The only way to know which one wins for your scanner fleet is to compile two candidates, put the same 200-question golden set on three different device SKUs, and run the full 10-minute sustained workload while logging power and skin temperature. The numbers decide the winner, not the marketing slides.
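Once the sustained runs are logged, the decision can be made mechanical. This sketch assumes each run is summarized as one record per model and device SKU, requires a candidate to clear every gate on every SKU, and then ranks qualifiers by their worst-case tokens per joule. The gate values are hypothetical defaults, not recommendations.

```python
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class BenchResult:
    model: str
    device_sku: str
    golden_accuracy: float     # percent of golden questions passed
    sustained_tps: float       # tokens/s averaged over the 10-minute run
    tokens_per_joule: float
    peak_skin_delta_c: float   # skin temperature rise, degrees C

def pick_winner(results: List[BenchResult], min_accuracy: float = 95.0,
                min_tps: float = 12.0, max_skin_delta_c: float = 8.0) -> Optional[str]:
    """Qualify models that pass every gate on every SKU, then maximize worst-case tokens/J."""
    by_model = {}
    for r in results:
        by_model.setdefault(r.model, []).append(r)
    qualified = {}
    for model, runs in by_model.items():
        if all(r.golden_accuracy >= min_accuracy and r.sustained_tps >= min_tps
               and r.peak_skin_delta_c <= max_skin_delta_c for r in runs):
            qualified[model] = min(r.tokens_per_joule for r in runs)
    return max(qualified, key=qualified.get) if qualified else None
```

Ranking by the worst SKU rather than the average keeps one hot-running device model from being hidden by two cool ones.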

Real fleets usually standardize on two SLM checkpoints: one ultra-small gate (MobileLLM-350M) and one capable policy model (Phi-3.5-mini or equivalent 3-4B). The same distillation pipeline and eval harness serve both.

Expanded production checklist for edge SLM rollouts

- Golden eval set lives inside the app bundle and runs automatically after every model update.

- Model artifact includes a manifest with sha256, min runtime version, and supported NPU families.

- On-device telemetry records TTFT, tokens per second, power draw (where the OS exposes it), and user "was this answer useful?" thumbs without uploading the prompt text.

- Rollback: the app keeps the previous two model versions and can switch in <2 seconds if the smoke eval fails after an over-the-air update.

- Legal review: the model card for the SLM version that ships on devices must list training data summary, known limitations, and the exact red-team cases that were run on the final checkpoint.
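The manifest and rollback items can be checked with very little code. In this sketch the manifest field names, the dotted numeric version format, and the NPU family labels are assumptions, and `passes` stands in for whatever combination of checksum check and smoke eval your app runs after an update.

```python
import hashlib
from typing import Callable, List, Optional

def sha256_file(path: str, chunk_size: int = 1 << 20) -> str:
    """Stream the weights file so large artifacts never load fully into memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def parse_version(version: str) -> tuple:
    # "0.19.2" -> (0, 19, 2); assumes purely numeric dotted versions
    return tuple(int(part) for part in version.split("."))

def verify_manifest(manifest: dict, actual_sha256: str,
                    runtime_version: str, npu_family: str) -> List[str]:
    """Return a list of problems; an empty list means the artifact may load."""
    problems = []
    if actual_sha256 != manifest["sha256"]:
        problems.append("checksum mismatch")
    if parse_version(runtime_version) < parse_version(manifest["min_runtime_version"]):
        problems.append("runtime too old")
    if npu_family not in manifest["supported_npu_families"]:
        problems.append("unsupported NPU family")
    return problems

def choose_active(installed_newest_first: List[str],
                  passes: Callable[[str], bool]) -> Optional[str]:
    """Pick the newest of the current and previous two versions that passes;
    falling through to an older entry is the automatic rollback."""
    for version in installed_newest_first[:3]:
        if passes(version):
            return version
    return None
```

On device, the first argument would come from `sha256_file(weights_path)` run against the freshly downloaded artifact before it replaces the active version.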

When the checklist is complete, the merchant policy assistant on the scanner is a real production system, not a research demo.

What you can build today

After finishing this lesson you should be able to:

- Take a public teacher (or your own fine-tuned 70B) and run a distillation loop that produces a usable 1-3B student.

- Pick the right architecture tweaks (GQA, embedding sharing, layer depth) for your target NPU.

- Choose and benchmark at least two on-device runtimes against real power and thermal numbers on target hardware.

- Design the hybrid routing policy and the on-device eval harness that protects both user experience and merchant data privacy.

- Instrument a real device for tokens-per-joule and thermal curves so you can defend the deployment decision with numbers instead of hope.

- Package the final SLM with its own tiny golden-eval harness that runs on the target hardware before every field rollout.

Key takeaways

- SLM quality at the edge is won by distillation recipe and architecture, not by parameter count alone. A 350M model with the right data and sharing tricks can outperform a naively quantized 7B model on the exact tasks that matter in the field.

- The runtime and the hardware constraints (power, heat, memory bandwidth) are part of the model selection process, not an afterthought. You choose the model after you have measured sustained tokens per joule on the actual scanner hardware.

- Privacy is a first-class product requirement that local inference can satisfy only if telemetry, sync, crash reporting, and model-update paths are controlled too. Any cloud round-trip creates a copy of the merchant policy question that must be accounted for in your data processing addendum.

- A strong pattern for logistics and e-commerce field applications is hybrid: a fast, private SLM on the device that knows when to escalate. The local model acts as both accelerator and privacy boundary.

Local deployment (the previous chapter) taught you how to size and containerize a model for a fixed server. This chapter teaches you how to push the same ideas all the way to a battery-powered, thermally constrained, privacy-first device that the merchant or driver actually carries. If you can ship a reliable SLM policy assistant on a handheld scanner, you have solved one of the hardest real-world constraints in production LLM engineering. The next chapters in the inference sequence show you additional levers (speculative decoding, long-context techniques, MoE) that you can apply on top of the specialized edge model you just learned to build and ship.

Evaluation Rubric

1. Describes how distillation transfers distribution knowledge from a large teacher to a compact student without requiring the original training data

2. Lists the three main MobileLLM architecture optimizations and why they improve quality per parameter on mobile silicon

3. Compares MLC LLM, ONNX Runtime, CoreML, and ExecuTorch on compilation model, supported backends, and ease of mobile integration

4. Calculates rough power and thermal budget for a 2B 4-bit model running continuous decode on a modern phone NPU

5. Designs a hybrid routing policy that keeps sensitive merchant or customer data on-device while falling back to cloud for hard cases

6. Identifies when a 350M MobileLLM beats a quantized 7B model on a battery-powered warehouse scanner

Common Pitfalls

- Assuming any small model is automatically fast and cool on a phone; without NPU-aware compilation the CPU fallback can drain the battery faster than a cloud call

- Shipping the same GGUF or ONNX file to every phone model and expecting consistent latency

- Treating distillation as just 'train a small model on the teacher's outputs' without also using the teacher's intermediate representations or on-policy sampling

- Ignoring thermal throttling: a model that runs at 40 tokens/s for 10 seconds may drop to 8 tokens/s after two minutes of continuous use on a handheld scanner

- Forgetting that even on-device models still need versioned weights, checksums, rollback, and golden eval sets on the device itself


Key Concepts Tested

- Knowledge distillation for LLMs (logit matching, hidden-state alignment, on-policy)

- MobileLLM design choices: deep-and-thin, embedding sharing, grouped-query attention

- Phi-3 data-centric recipe: synthetic textbooks and filtered web data at small scale

- On-device runtimes and compilation: MLC (TVM), ONNX Runtime, CoreML, ExecuTorch, GGUF

- Edge constraints: NPU utilization, thermal throttling, battery impact, memory bandwidth

- Privacy guarantees of fully local inference versus cloud round-trips

- Quantization and weight sharing impact on mobile decode speed and quality

Next Step

Next: Continue to Speculative Decoding

You now know how to create and run a specialized small model on edge hardware. Speculative decoding is an orthogonal inference technique that can often speed up decoding by around 2x on that same small model (or any model) without changing its weights, provided a suitable draft model or drafting mechanism is available.

References

[1] Hinton, G., Vinyals, O., & Dean, J. (2015). Distilling the Knowledge in a Neural Network.

[2] Gu, Y., et al. (2024). MiniLLM: Knowledge Distillation of Large Language Models.

[3] Liu, Z., Zhao, C., Iandola, F., et al. (2024). MobileLLM: Optimizing Sub-billion Parameter Language Models for On-Device Use Cases. ICML 2024.

[4] Abdin, M., et al. (2024). Phi-3 Technical Report: A Highly Capable Language Model Locally on Your Phone. arXiv preprint.

[5] MLC AI Team (2024). MLC LLM: Universal LLM Deployment Engine for On-Device Inference.