⚙️EasyMLOps & Deployment

SQL and Data Modeling

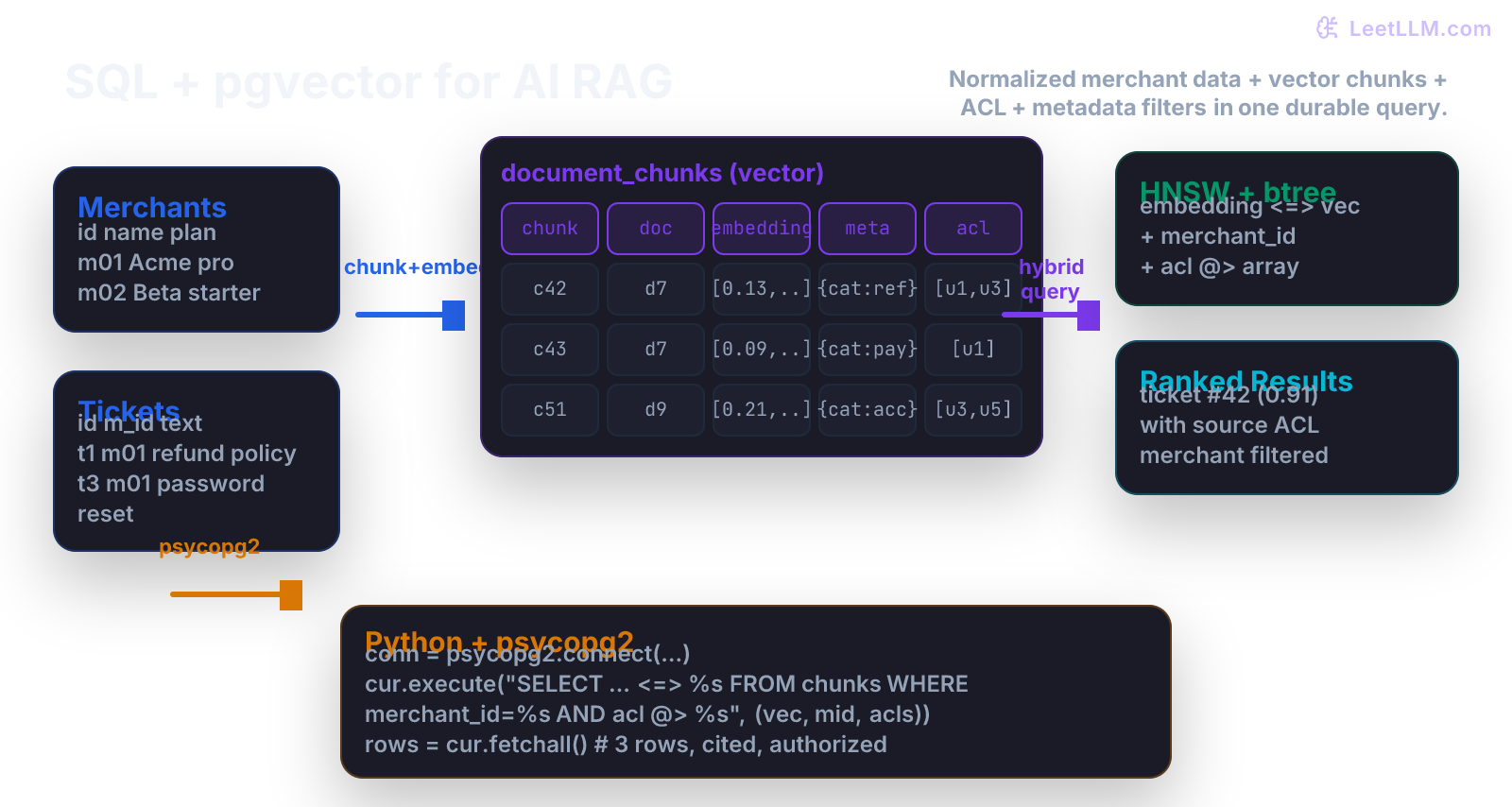

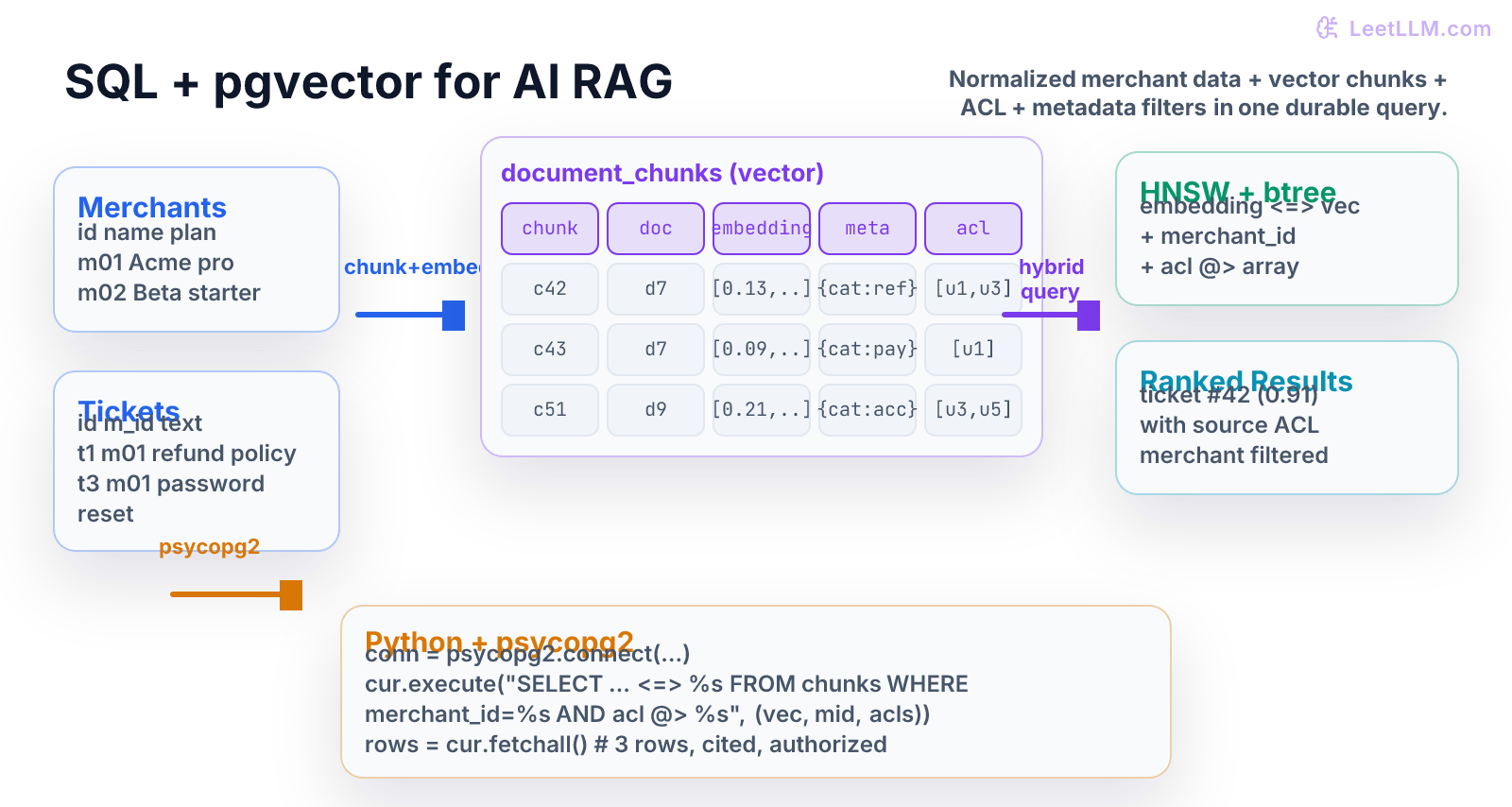

Build a production-grade support-ticket RAG system on Postgres + pgvector. Design schemas that mix normalized merchant data with denormalized chunks and embeddings, enforce ACLs and metadata filters, choose the right indexes, and run hybrid SQL + vector queries from Python.

9 min readOpenAI, Anthropic, Google +28 key concepts

Learning path

Step 7 of 138 in the full curriculum

Imagine a support agent at Acme Co. (merchant ID m01) receives a live chat: "I ordered the blue widget on Tuesday and the refund never arrived. Order #4821."

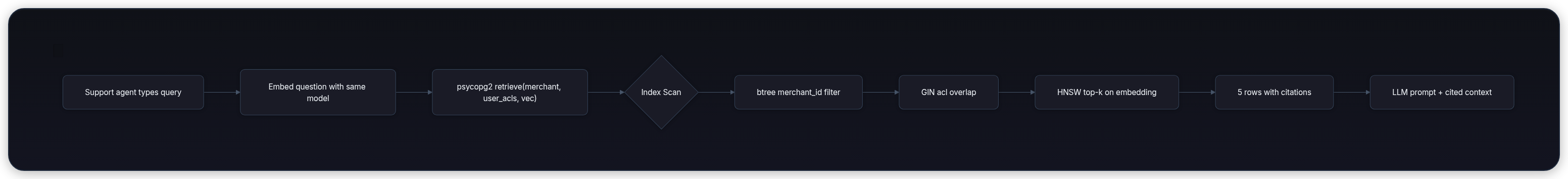

The agent needs the merchant's exact refund policy, the specific ticket history for that customer, the order status from the transactional system, and only the documents and tickets that this agent (or the end customer) is allowed to read. A naive LIKE '%refund%' scan across every row in a 4-million-ticket corpus takes 800 ms and returns garbage. The LLM that will draft the reply needs the right three paragraphs in the right order, with citations it can trust, in under 120 ms.

That is the job of a carefully designed SQL schema with vector search.

Modern AI applications are hybrid systems. The merchant's orders, payments, and user accounts must remain strongly consistent (ACID). The retrieval layer that feeds the LLM (RAG) must be fast, filterable by tenant, and permission-aware. The data model has to satisfy both workloads at the same time.[1]

This chapter teaches you exactly how to do it with Postgres + pgvector, the combination that powers the majority of production RAG systems in 2026.

Where this chapter sits

| Course position | What to carry |

|---|---|

| Before this | You understand in-memory data structures (lists, dicts of posting lists, heaps, caches) from the previous lesson and can write simple Python that talks to a database. |

| This chapter | You will design durable tables that mix normalized merchant records with denormalized chunks + 1536-dimensional embeddings, enforce ACLs, pick the right indexes, and query them from Python. |

| Next chapter | Algorithms & Complexity for ML Engineers teaches you how to reason about the time, space, and memory-bandwidth costs of the retrieval and inference code that will sit on top of the tables you just designed. |

One running example: Acme Merchant support RAG

We will use a single merchant (m01 = Acme) with two source documents and three historical support tickets. All numbers are tiny so you can compute everything by hand before we scale to production.

Source documents (the knowledge base the merchant uploads)

| doc_id | title | full_text (abbreviated) |

|---|---|---|

| d7 | Refund Policy | "Refunds are granted within 30 days for unused items... Email [email protected] with order number..." |

| d9 | Account & Password Help | "To reset your password visit /account/reset. Two-factor is required for Pro plans..." |

Support tickets (the conversational history)

| ticket_id | merchant_id | customer_id | text | status |

|---|---|---|---|---|

| t1 | m01 | u42 | "Where is my refund for order 4821?" | open |

| t3 | m01 | u7 | "Password reset link expired again" | resolved |

| t5 | m01 | u19 | "Can I get a refund on the blue widget?" | open |

Two agents:

- Agent Alice (

u1) works for Acme and can see everything. - Agent Bob (

u3) is a contractor who can only see refund-related tickets (ACL restricted).

We will build a schema that lets Alice ask "show me recent refund questions" and get three perfectly cited chunks in < 15 ms, while Bob asking the same question only sees the chunks he is allowed to read.

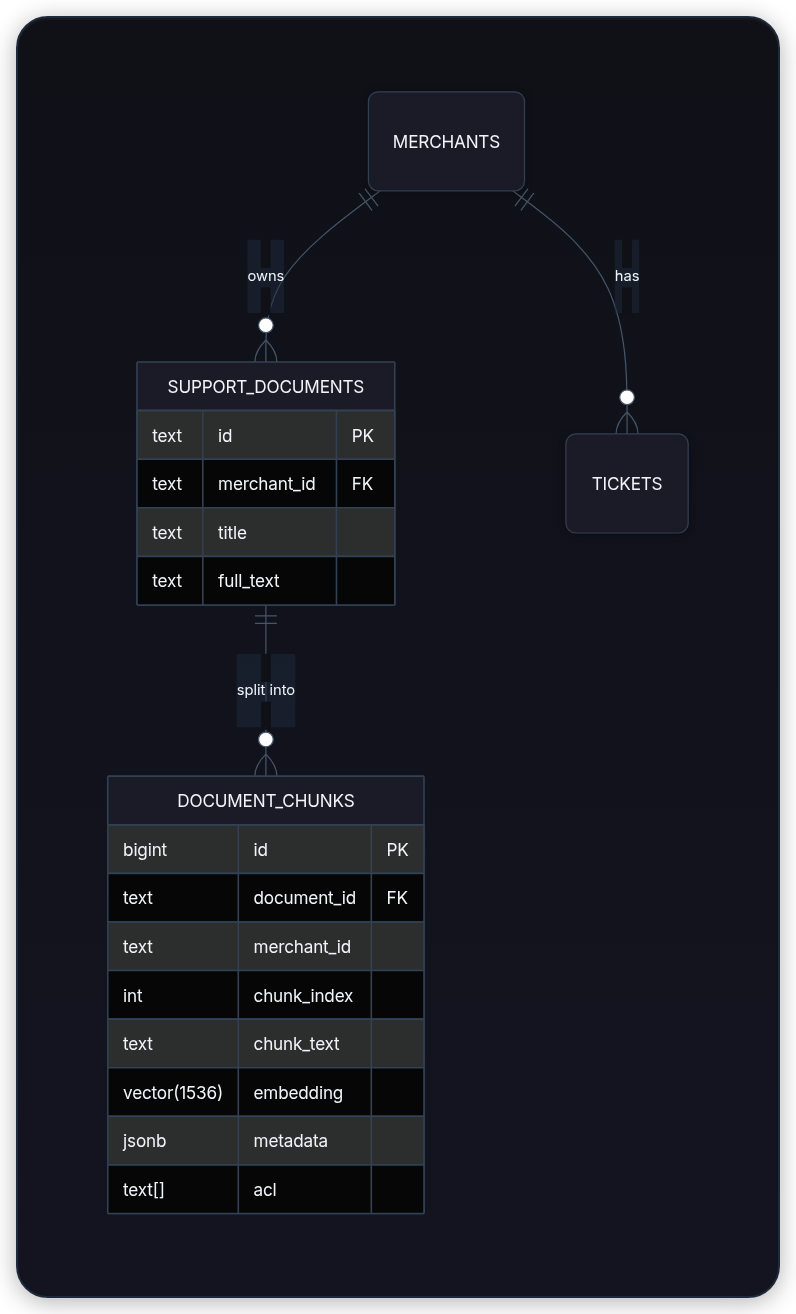

Relational modeling for the merchant side (the normalized core)

Start with the tables that must be correct for billing, compliance, and order integrity.

sql1CREATE TABLE merchants ( 2 id TEXT PRIMARY KEY, 3 name TEXT NOT NULL, 4 plan TEXT NOT NULL CHECK (plan IN ('starter','pro','enterprise')), 5 created_at TIMESTAMPTZ DEFAULT now() 6); 7 8CREATE TABLE support_documents ( 9 id TEXT PRIMARY KEY, 10 merchant_id TEXT REFERENCES merchants(id) ON DELETE CASCADE, 11 title TEXT NOT NULL, 12 full_text TEXT NOT NULL, 13 updated_at TIMESTAMPTZ DEFAULT now() 14); 15 16CREATE TABLE tickets ( 17 id TEXT PRIMARY KEY, 18 merchant_id TEXT REFERENCES merchants(id), 19 customer_id TEXT NOT NULL, 20 text TEXT NOT NULL, 21 status TEXT NOT NULL, 22 created_at TIMESTAMPTZ DEFAULT now() 23);

This is classic third-normal form. If Acme changes its name, one UPDATE fixes it for every ticket and document. If a merchant is deleted, its tickets and documents disappear (or are archived) automatically. The transactional side of the business is happy.

Why we still need a vector table: the RAG side

An LLM cannot read a 12-page refund policy in one shot and the policy is only useful when the right paragraph is retrieved. We therefore split documents into chunks (typically 200–800 tokens) and store an embedding for each chunk.

We could have put the embedding column on support_documents, but then we would be forced to re-embed the entire document every time any sentence changes, and we would lose the ability to cite the exact paragraph that answered the question.

Hence the document_chunks table.

The hybrid RAG schema (the one you will actually ship)

sql1CREATE EXTENSION IF NOT EXISTS vector; -- pgvector 2 3CREATE TABLE document_chunks ( 4 id BIGSERIAL PRIMARY KEY, 5 document_id TEXT REFERENCES support_documents(id) ON DELETE CASCADE, 6 merchant_id TEXT NOT NULL, -- denormalized for fast filter 7 chunk_index INT NOT NULL, 8 chunk_text TEXT NOT NULL, 9 embedding VECTOR(1536) NOT NULL, -- OpenAI text-embedding-3-small size 10 metadata JSONB NOT NULL DEFAULT '{}', -- { "category": "refund", "source": "policy", "last_embedded": "..." } 11 acl TEXT[] NOT NULL DEFAULT '{}', -- user IDs or role strings that may read this chunk 12 created_at TIMESTAMPTZ DEFAULT now() 13); 14 15-- Critical indexes (we explain each in the next section) 16CREATE INDEX idx_chunks_merchant ON document_chunks(merchant_id); 17CREATE INDEX idx_chunks_acl_gin ON document_chunks USING GIN (acl); 18 19-- HNSW is a strong default for high-recall, low-latency vector search 20CREATE INDEX idx_chunks_embedding ON document_chunks 21 USING hnsw (embedding vector_cosine_ops);

Key modeling decisions:

-

merchant_idis duplicated (denormalized) into the chunks table. A tenant-scoped vector query usually wants "only this tenant's data". A join at query time can be much slower and may prevent the planner from using the vector index well. -

metadatais JSONB. You can addcategory,language,version,page_number, or any future field withoutALTER TABLE. -

aclis a text array. Postgres GIN index on arrays gives you fastacl @> ARRAY['u1']checks. -

embeddingis the realvector(1536)type from pgvector. You get the distance operators for free.

This single table now serves both the RAG retrieval path and the permission system.

Normalization vs denormalization tradeoffs for AI workloads

| Workload | Normalized (join) | Denormalized (dupe merchant_id + metadata) | Winner for AI |

|---|---|---|---|

| Update merchant name | One row | Must update N chunk rows or use trigger | Normalized |

| "Give me all refund chunks for merchant m01" | Requires join + filter | Direct btree filter on merchant_id | Denormalized hot path, usually easier to optimize |

| Change ACL on a policy paragraph | Update one document row, trigger updates chunks | Same, but you control the trigger | Either |

| Vector similarity query + tenant filter + ACL filter | Planner may choose bad join order | All filters on the same table; HNSW + btree used together | Denormalized |

| Storage | Lower | ~15–25 % higher | Normalized |

Rule of thumb for serious RAG systems:

- Keep the source of truth normalized (documents, tickets, merchants).

- Denormalize the hot retrieval columns (

merchant_id,acl,category,last_updated) into the chunks table. - Accept the duplication and protect it with a transactional loader or a materialized view + trigger.

Indexing for fast retrieval

A vector index without the surrounding filters is useless in multi-tenant systems.

The combination that works:

- B-tree (or BRIN) on

merchant_id- filters 99 % of the corpus in a single merchant before the vector index even runs. - GIN on

acl- fast array containment. - HNSW on the embedding (cosine) - approximate nearest neighbors with > 0.95 recall at 5–15 ms.

sql1-- You can also combine them in one composite index in newer Postgres + pgvector 2-- but separate indexes + bitmap AND in the planner is often clearer.

Never create only the vector index. The planner will fall back to a sequential scan on any query that also filters by merchant_id or acl.

ACID versus eventual consistency for embeddings

When a new refund policy document arrives:

- The loader must insert the document row, split it into chunks, generate embeddings, and insert the chunk rows.

- If the embedding service is down or returns garbage, the entire batch must roll back. Otherwise the support agent will cite a policy paragraph that was never committed.

sql1BEGIN; 2INSERT INTO support_documents ...; 3INSERT INTO document_chunks (document_id, merchant_id, chunk_index, chunk_text, embedding, metadata, acl) 4VALUES (...), (...), ...; 5COMMIT;

Generate embeddings before opening the transaction if the external call is slow or flaky, then write the document row and chunk rows in one database transaction. Postgres + pgvector then guarantee that either the whole policy (with its vectors) is visible or none of it is.

For the "re-embed everything because we switched to a better embedding model" job you can relax:

- Add a

corpus_versioncolumn. - The background worker writes new chunks with

corpus_version = 2. - A nightly job deletes the old version rows after the new ones are verified.

- Retrieval always adds

AND corpus_version = (SELECT max FROM ...)or uses a view.

This is the classic "strong consistency on the write path, eventual on the refresh path" pattern.

Querying from Python with psycopg2

Install the official pgvector helper (it registers the vector type correctly):

bash1pip install "psycopg2-binary>=2.9" pgvector

Python loader sketch (the exact script you will adapt in your repo):

python1import psycopg2 2from pgvector.psycopg2 import register_vector 3import numpy as np 4import os 5 6conn = psycopg2.connect( 7 host="localhost", dbname="acme_rag", user="rag_app", password=os.environ["DB_PASSWORD"] 8) 9register_vector(conn) 10 11def upsert_chunks(chunks: list[dict]): 12 """chunks = [{"doc_id": "d7", "merchant_id": "m01", "chunk_index": 0, 13 "text": "...", "embedding": np.array([...], dtype=np.float32), 14 "metadata": {"category": "refund"}, "acl": ["u1", "u3"]}, ...]""" 15 with conn.cursor() as cur: 16 cur.execute("BEGIN") 17 for c in chunks: 18 cur.execute(""" 19 INSERT INTO document_chunks 20 (document_id, merchant_id, chunk_index, chunk_text, embedding, metadata, acl) 21 VALUES (%s, %s, %s, %s, %s, %s, %s) 22 ON CONFLICT (document_id, chunk_index) DO UPDATE 23 SET embedding = EXCLUDED.embedding, 24 metadata = EXCLUDED.metadata, 25 acl = EXCLUDED.acl 26 """, (c["doc_id"], c["merchant_id"], c["chunk_index"], c["text"], 27 c["embedding"], c["metadata"], c["acl"])) 28 conn.commit() 29 30def retrieve(merchant_id: str, user_acls: list[str], query_vec: np.ndarray, k: int = 5): 31 with conn.cursor() as cur: 32 cur.execute(""" 33 SELECT chunk_text, 34 metadata, 35 document_id, 36 chunk_index, 37 1 - (embedding <=> %s) AS cosine_sim 38 FROM document_chunks 39 WHERE merchant_id = %s 40 AND acl && %s -- overlap: at least one ACL matches 41 ORDER BY embedding <=> %s 42 LIMIT %s 43 """, (query_vec, merchant_id, user_acls, query_vec, k)) 44 return cur.fetchall() 45 46print("document_chunks loader and retrieval helpers are ready")

The <=> operator is cosine distance (1 − cosine similarity). The && operator on arrays is the fast overlap check that uses the GIN index.

The query returns the exact text, the metadata you stored, and the source document + chunk index so the LLM can emit citations like [Policy §2, Ticket t1].

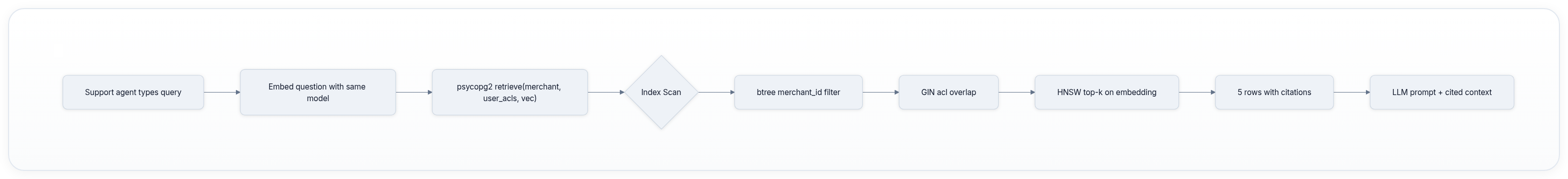

Reading the query plan

Run EXPLAIN (ANALYZE, BUFFERS, FORMAT TEXT) on your retrieve query. You should see:

text1Limit (cost=12.34..45.67 rows=5 width=...) 2 -> Index Scan using idx_chunks_embedding on document_chunks 3 Index Cond: (embedding <=> $1) 4 Filter: (merchant_id = $2) AND (acl && $3) 5 Rows Removed by Filter: 124

If you see Seq Scan instead of Index Scan, you are missing one of the three indexes or the planner statistics are stale (ANALYZE document_chunks;).

A good plan uses the btree/GIN filter first to reduce the candidate set to a few thousand rows, then the HNSW index only on those candidates.

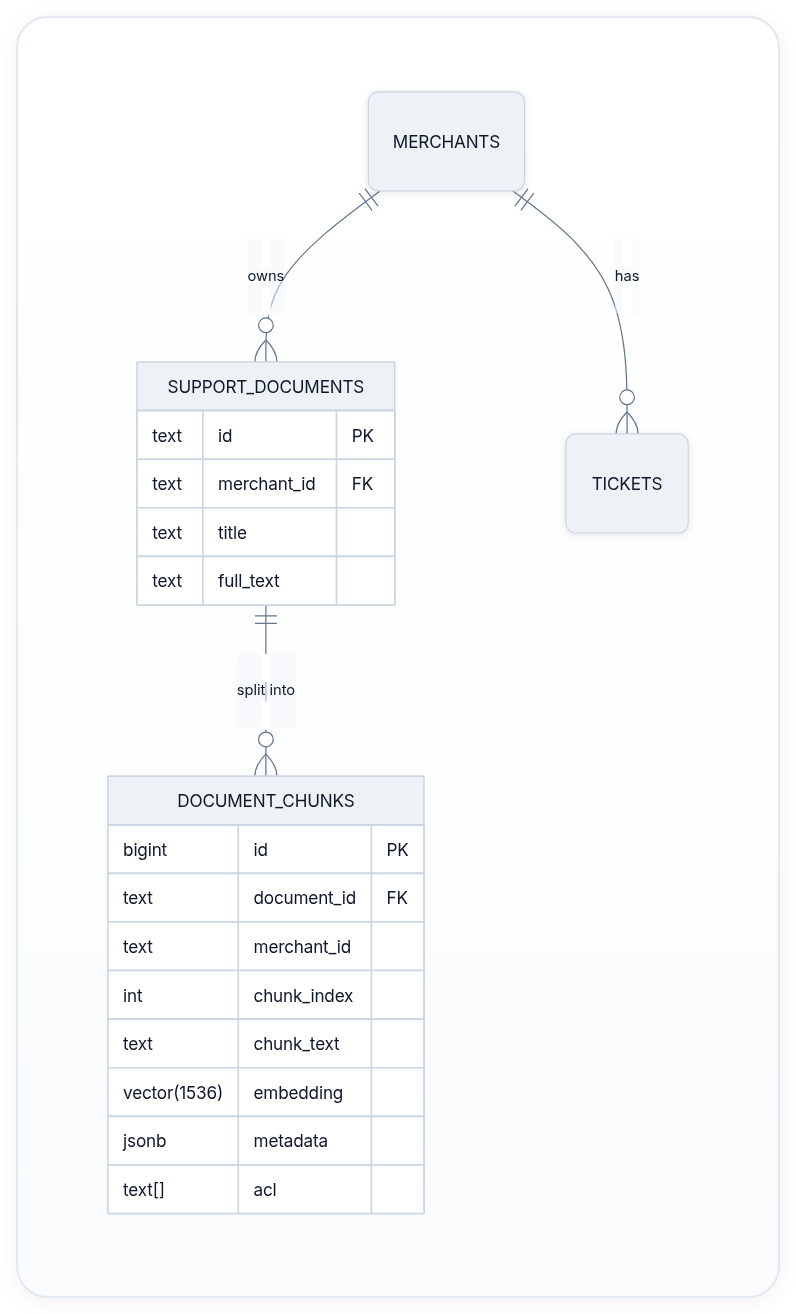

Visual anchor

Schema diagram (Mermaid)

Query execution flow (Mermaid)

Build it yourself (the exercise that makes it stick)

In your local repo (the same one you created in the Git, Docker, and Python foundation chapters):

- Add

pgvectorto yourdocker-compose.ymlPostgres service. - Write a

migrations/003_rag_schema.sqlfile with theCREATE EXTENSIONand the three tables + indexes above. - Create

scripts/load_support_rag.pythat:- Reads three small

.mdfiles fromfixtures/support_docs/ - Uses a 200-token chunker (simple sentence splitter is fine)

- Calls a mock embedder (or

sentence-transformersif you have it) that returns 1536-dim vectors - Calls

upsert_chunks

- Reads three small

- Write

scripts/query_rag.pythat takes--merchant m01 --user u1 "where is my refund"and prints the top-3 chunks with similarity and source. - Run the whole thing with

docker compose upand show that Bob (u3) cannot see the password-reset chunk while Alice can.

Expected output: Alice sees three refund or policy chunks with citation IDs, while Bob sees zero chunks for the restricted password-reset document. If Bob sees it, the solution failed the ACL overlap check. If Alice sees no rows, inspect the merchant filter, vector dimension, and register_vector(conn) call before blaming the LLM.

When scripts/query_rag.py returns the correct three paragraphs with citations and the ACL test passes for both agents, you have built the exact data layer that every later RAG, agent, and evaluation chapter depends on.

Common pitfalls (and the exact error messages you will see)

- Forgetting

register_vector(conn)→can't adapt type 'numpy.ndarray' - Using

float[]column instead ofvector(1536)→ distance operator falls back to Python and is 1000× slower. - Only indexing the embedding column → any query with

WHERE merchant_id = ...becomes a sequential scan. - Updating embeddings in a second transaction after the document commit → a crash leaves "ghost" chunks that have never been seen by the embedding model.

- Storing ACLs as a comma-separated string instead of a native

text[]→ GIN index doesn't help and@>becomes a slowLIKE.

Summary: the mental model you now own

- Use normalized tables for anything that must be correct on write (orders, billing, user identity).

- Use a denormalized

document_chunkstable withmerchant_id,acl[],metadata jsonb, andvectorfor the read-heavy retrieval path. - Protect the duplication with transactions in the loader.

- Index the filter columns first, then the vector column.

- Query with one statement that does vector distance + metadata filters + ACL overlap.

You can now look at any AI product and say: "Show me the schema for the retrieval layer" and immediately know whether it will scale, whether it will leak data between tenants, and whether a failed embedding job will corrupt answers.

What you can explain and build

- Draw the four-table hybrid schema on a whiteboard and defend the denormalization decisions.

- Write the exact

psycopg2+pgvectorquery that returns authorized, ranked chunks for a given merchant and user. - Read an

EXPLAIN ANALYZEand point to the three indexes that must exist. - Implement a transactional loader that either commits a whole document plus its vectors or rolls everything back.

Evaluation Rubric

- 1Designs a correct hybrid schema: normalized merchants/tickets tables + a denormalized document_chunks table with vector(1536), metadata JSONB, and ACL array, plus proper foreign keys and unique constraints

- 2Writes Python + psycopg2 code that inserts chunks with embeddings, runs a hybrid query (vector similarity + metadata filter + ACL check) using the <=> operator, and returns ranked support answers with citations

- 3Chooses the right index (btree on merchant_id + hnsw on embedding), reads an EXPLAIN ANALYZE plan, explains the normalization/denormalization tradeoff for a 10M-ticket merchant corpus, and lists three failure modes when embeddings are updated outside a transaction

Common Pitfalls

- Storing the embedding vector as a float[] or text instead of the pgvector vector type; distance operators then fall back to slow table scans and you lose all index acceleration.

- Putting merchant_id only in the documents table and not duplicating it (or a merchant_id filter) into the chunks table; a vector query without the merchant filter returns cross-tenant results and leaks data.

- Updating an embedding in a separate UPDATE after the chunk row is inserted; a crash leaves a stale embedding and the next retrieval returns wrong answers with no transaction to roll back.

- Using a single btree index on (merchant_id) and hoping the vector index will be used for ACL filters; the planner picks the wrong index and latency jumps from 8 ms to 800 ms on a 5-million-row corpus.

- Treating the vector DB as 'eventually consistent by default' for embeddings while keeping strict ACID on orders; a support agent answers a ticket with an embedding that was never committed, then the ticket status changes and the answer is now factually wrong.

Follow-up Questions to Expect

Key Concepts Tested

relational schemas vs document + vector tablespgvector extension and distance operatorsRAG schema: documents, chunks, embeddings, metadata JSONB, ACLsnormalization vs denormalization tradeoffs for AI read pathsbtree + ivfflat/hnsw indexes for filtered vector searchACID transactions for embeddings vs relaxed consistencypsycopg2 connection, prepared statements, and vector queriesEXPLAIN plans and index selection for retrieval

Next Step

Next: Continue to Algorithms and Complexity for ML Engineers

You now understand the durable, permissioned, vector-augmented data layer that stores every training example, every RAG corpus, and every evaluation label your models will ever see. The next chapter teaches you how to measure the exact time, space, and memory-bandwidth cost of every query, every cache, and every forward pass that will run against those tables. Together they let you build retrieval and inference code that is both correct and efficient.