Run Gemma 4 Locally with Ollama



Google DeepMind's Gemma 4 is the most capable open-weight model family ever released. This guide walks you through running the 26B MoE and 31B Dense models on your own GPU using Ollama, with hardware-specific performance expectations for everything from a Raspberry Pi to an RTX 5090.

LeetLLM Team · April 2, 2026 · 40 min read

Run Gemma 4 Locally on Your GPU with Ollama

Google DeepMind just released its most intelligent open model family, and you can run it on your own hardware today. Gemma 4, built from the same research and technology as Google's flagship Gemini 3, delivers frontier-level intelligence in four sizes — from a 2-billion-parameter edge model that runs on a Raspberry Pi to a 31-billion-parameter dense model that rivals closed-source giants on reasoning, coding, and math. The entire family ships under the Apache 2.0 license.[1]

This guide walks you through running Gemma 4 locally using Ollama, covers which model to pick for your specific hardware, and gives real-world performance expectations so you know exactly what to expect before downloading.

What Is Gemma 4?

Gemma 4 is Google DeepMind's latest generation of open-weight multimodal models, released in April 2026.[1] The family includes four models — E2B (2.3B effective parameters), E4B (4.5B effective), 26B A4B (Mixture-of-Experts), and 31B (Dense) — all available for free download under the Apache 2.0 license. This is a meaningful shift: previous Gemma releases used restrictive "permissive but controlled" terms. Apache 2.0 means full commercial use, modification, redistribution — no royalties, no strings attached.

What makes Gemma 4 remarkable isn't just the license. The jump in capability from Gemma 3 is the largest generational leap in the open-weight space:

Benchmark leap over Gemma 3

The numbers tell the story. Gemma 4 31B scores 89.2% on AIME 2026 (math), up from 20.8% on Gemma 3 27B. On LiveCodeBench v6 (competitive coding), it hits 80.0% versus 29.1%. GPQA Diamond (scientific knowledge) jumps from 42.4% to 84.3%. And on τ2-bench (agentic tool use, averaged across three domains), it scores 76.9% — compared to just 16.2% for Gemma 3. These aren't incremental improvements. They represent a complete transformation of what an open model can do.

💡 Key insight: Gemma 4 31B currently ranks #3 among open models on the Arena AI text leaderboard, on par with models like Kimi-K2.5 and Qwen-3.5-397B that are 10-20x larger. Intelligence per parameter is the metric that matters for local inference, and Gemma 4 leads on it.

Dual architecture strategy

Gemma 4 ships in both Dense and Mixture-of-Experts (MoE) variants. The 31B Dense model activates every parameter for every token — maximum quality, but requires more memory and compute. The 26B A4B MoE model has 25.2 billion total parameters but only activates 3.8 billion per token via a routing mechanism that selects 8 of 128 total experts per layer. The result: 26B-level intelligence at roughly 4B-level speed. For the full technical story on how expert routing, load balancing, and sparse activation work, see our Mixture of Experts article.
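To make the routing idea concrete, here is a minimal sketch of top-k expert selection in plain Python. It is not Gemma 4's actual router: the random logits, the softmax over the selected experts, and the name `route_token` are illustrative assumptions. Only the 8-of-128 figure comes from the text above.

```python
import math
import random

NUM_EXPERTS = 128  # experts per layer, per the figure above
TOP_K = 8          # experts activated per token, per the figure above


def route_token(router_logits, k=TOP_K):
    """Pick the k highest-scoring experts and normalize their weights.

    Assumption: weights are a softmax over the selected logits only;
    a real router may normalize differently.
    """
    # Indices of the k largest logits
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    # Numerically stable softmax over the selected logits
    peak = max(router_logits[i] for i in top)
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


rng = random.Random(0)
logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]  # stand-in for a learned router
selected = route_token(logits)
print(f"{len(selected)} of {NUM_EXPERTS} experts active for this token")
```

Only the selected experts' feed-forward weights are used for the token, which is why compute per token tracks the active parameter count rather than the total.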

Multimodal architecture

All four models handle text and images with variable aspect ratio and resolution support via dedicated vision encoders (~150M parameters on E2B/E4B, ~550M on 26B/31B). The smaller E2B and E4B models add a native audio encoder (~300M parameters) for speech recognition and translation. While the vision and audio components are separate encoder modules, they're tightly integrated during training, producing strong cross-modal reasoning.

Context and capabilities

The edge models (E2B, E4B) feature 128K context windows, while the workstation models (26B, 31B) support 256K tokens. All models include native function calling for agentic workflows, native system prompt support (the system role is first-class in Gemma 4), configurable thinking modes for step-by-step reasoning, and support for 140+ languages.

Choosing the right model for your hardware

GPU memory (VRAM) is the hard constraint for local inference. You need the entire model to fit in VRAM for fast generation; models that spill into system RAM drop to a fraction of the speed.

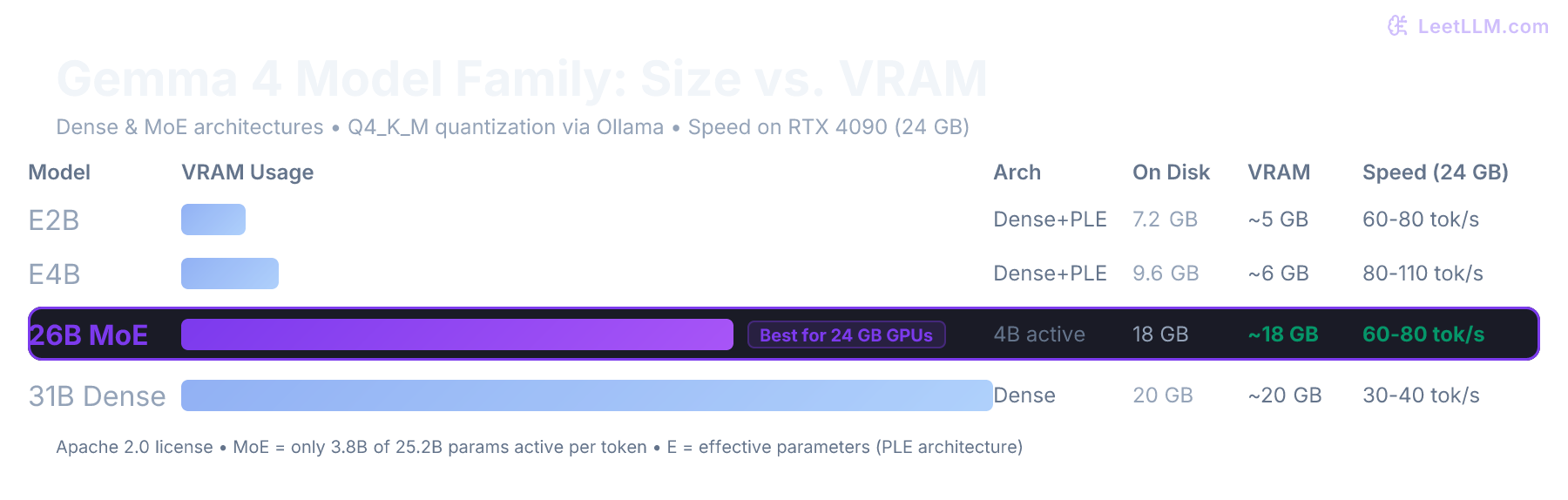

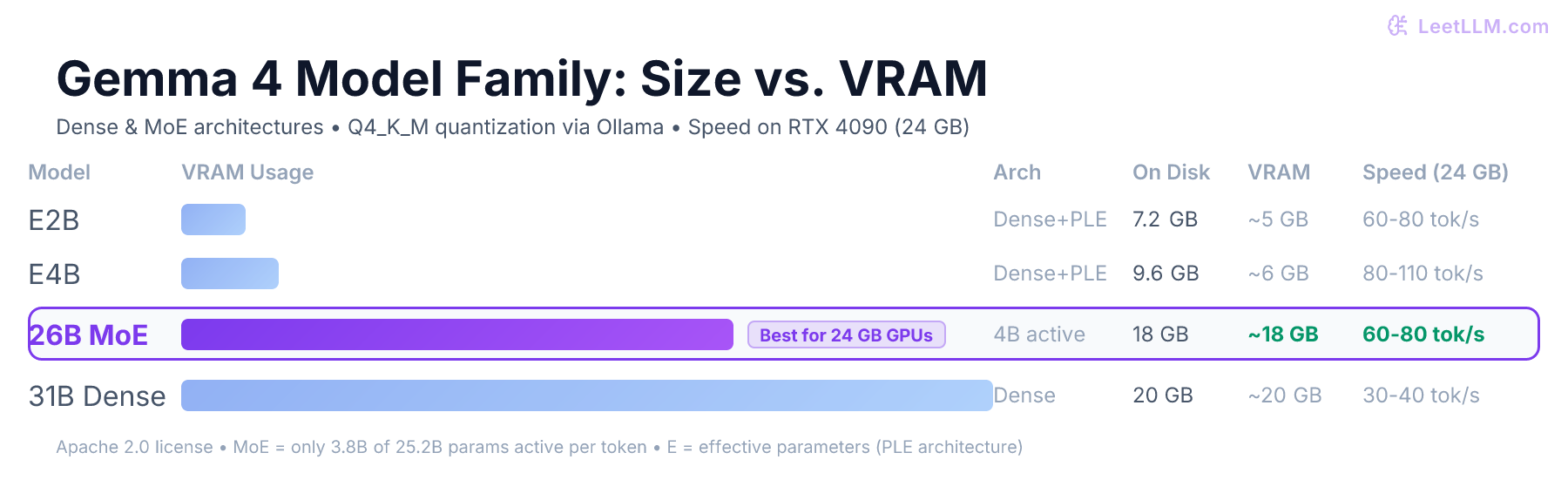

Here's what's available via Ollama and what each variant requires:

| Model | Size on Disk | VRAM Needed | Architecture | Who It's For |
|---|---|---|---|---|
| gemma4:e2b | 7.2 GB | ~4 GB (Q4) | Dense + PLE | Phones, Raspberry Pi, IoT devices |
| gemma4:e4b | 9.6 GB | ~6 GB (Q4) | Dense + PLE | Laptops, 8+ GB VRAM cards |
| gemma4:26b | 18 GB | ~18 GB (Q4) | MoE (4B active) | Sweet spot: cards with 24 GB or more (RTX 3090, 4090, 5090) |
| gemma4:31b | 20 GB | ~20 GB (Q4) | Dense | 24+ GB cards, maximum quality |

💡 Key insight: The default `gemma4` tag on Ollama points to the E4B model (9.6 GB). For workstation use, specify `gemma4:26b` or `gemma4:31b` explicitly. The 26B MoE model is the recommended pick for most developers: it delivers near-31B quality at dramatically faster inference speed because only about 4B parameters are active per token.
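As a rough way to apply the table, the sketch below lists which tags fit a given amount of VRAM. The VRAM figures are copied from the table. The 2 GB headroom for KV cache and runtime overhead is an assumption, and `models_that_fit` is a hypothetical helper name.

```python
# Approximate Q4 VRAM needs in GB, copied from the table above
VRAM_NEEDED_GB = {
    "gemma4:e2b": 4,
    "gemma4:e4b": 6,
    "gemma4:26b": 18,
    "gemma4:31b": 20,
}


def models_that_fit(vram_gb, headroom_gb=2.0):
    """Return tags whose weights plus headroom fit in the given VRAM.

    headroom_gb is an assumed allowance for KV cache and runtime overhead.
    """
    return [tag for tag, need in VRAM_NEEDED_GB.items()
            if need + headroom_gb <= vram_gb]


for vram in (8, 16, 24):
    print(vram, "GB:", models_that_fit(vram))
```

Long contexts need more headroom than 2 GB, so treat a borderline fit as a reason to pick the smaller model.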

24 GB and larger cards: RTX 3090 / 4090 / 5090

The sweet spot. Both gemma4:26b (18 GB) and gemma4:31b (20 GB) fit entirely in VRAM with room for a generous context window. The 26B MoE model will be significantly faster because it only activates 3.8B parameters per token, while the 31B Dense model activates all 30.7B. Choose MoE for speed, Dense for maximum quality.

16 GB cards: RTX 4070 Ti Super / 4080 / 5070 Ti

The E4B model (9.6 GB) fits comfortably and gives excellent all-around performance with vision and audio support. The 26B model at 18 GB will partially spill into system RAM, dropping to CPU-offloaded speeds for some layers. You can run it with reduced quality using aggressive quantization (Q3 or IQ4_XS), but the E4B is the better choice here.

12 GB cards: RTX 3060 / 4070

Run gemma4:e4b or gemma4:e2b. The E4B at ~6 GB in Q4 quantization fits well and offers strong reasoning with multimodal support. Community quantizations like REAP are targeting 12 GB cards for the 26B model with aggressive compression.

8 GB cards and Apple Silicon

The E2B (~4 GB Q4) fits in 8 GB VRAM with room to spare. On Apple Silicon Macs with unified memory, you have more flexibility — a Mac with 32 GB unified memory can run the 26B model, and a 48 GB+ Mac can run the 31B comfortably, since macOS shares memory between CPU and GPU.

⚠️ Common mistake: Trying to run `gemma4:31b` on a 16 GB card. When VRAM overflows, Ollama offloads transformer layers to CPU, creating a massive GPU-CPU data transfer bottleneck on every token. The result: 5-10x slower generation than a model that fits completely in VRAM.

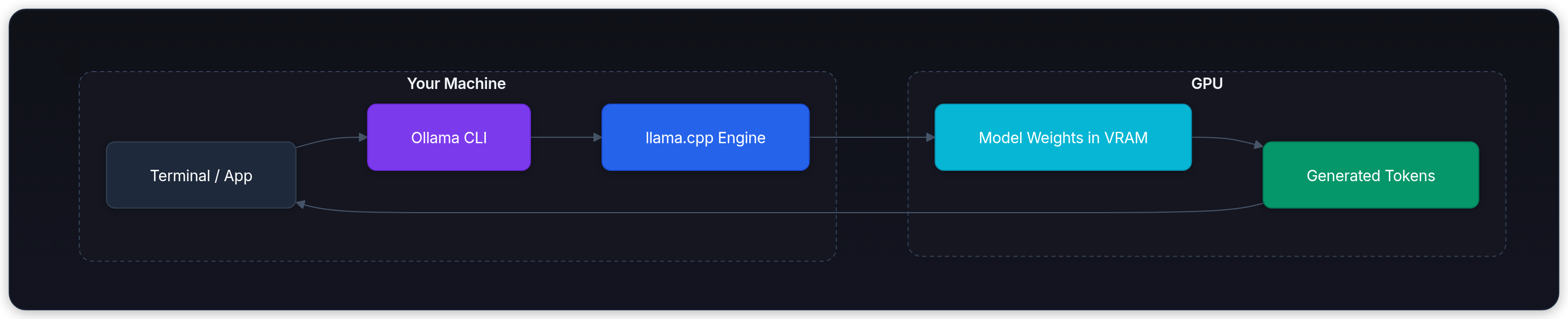

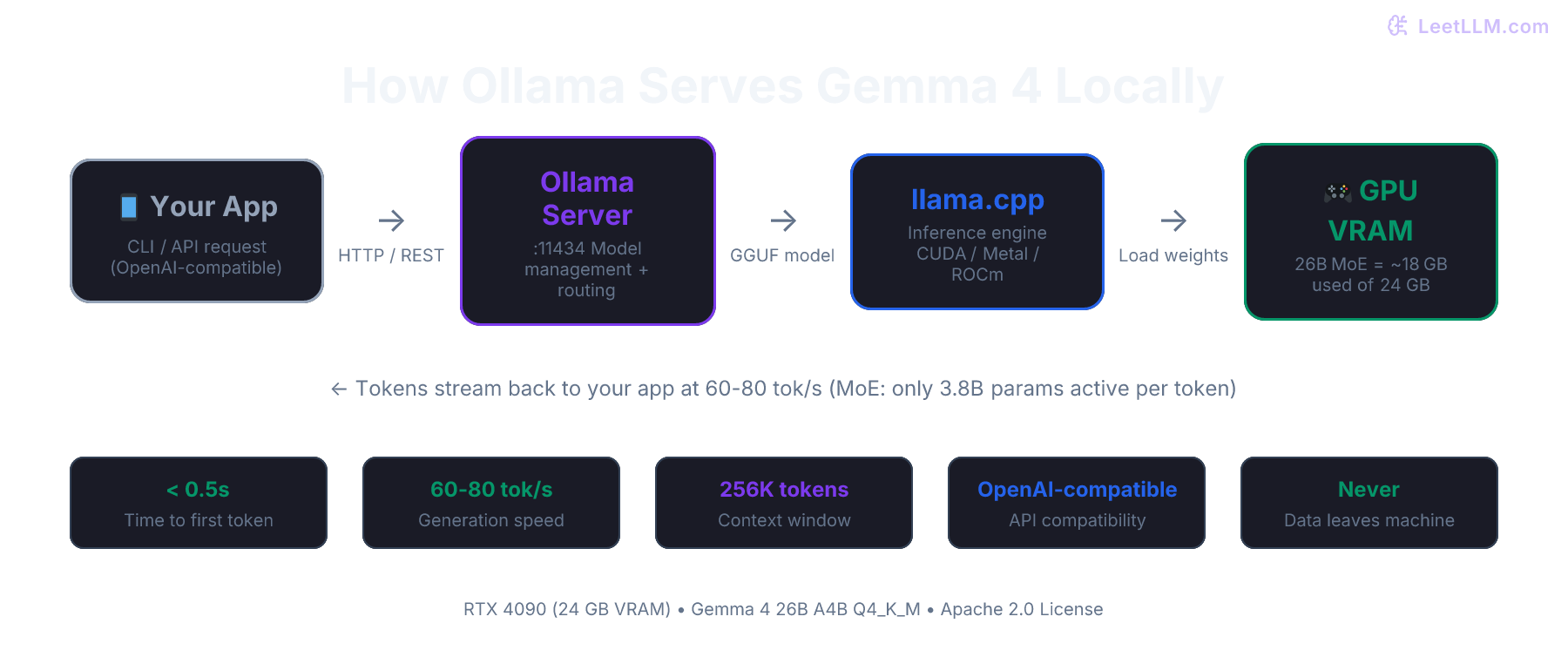

Why Ollama?

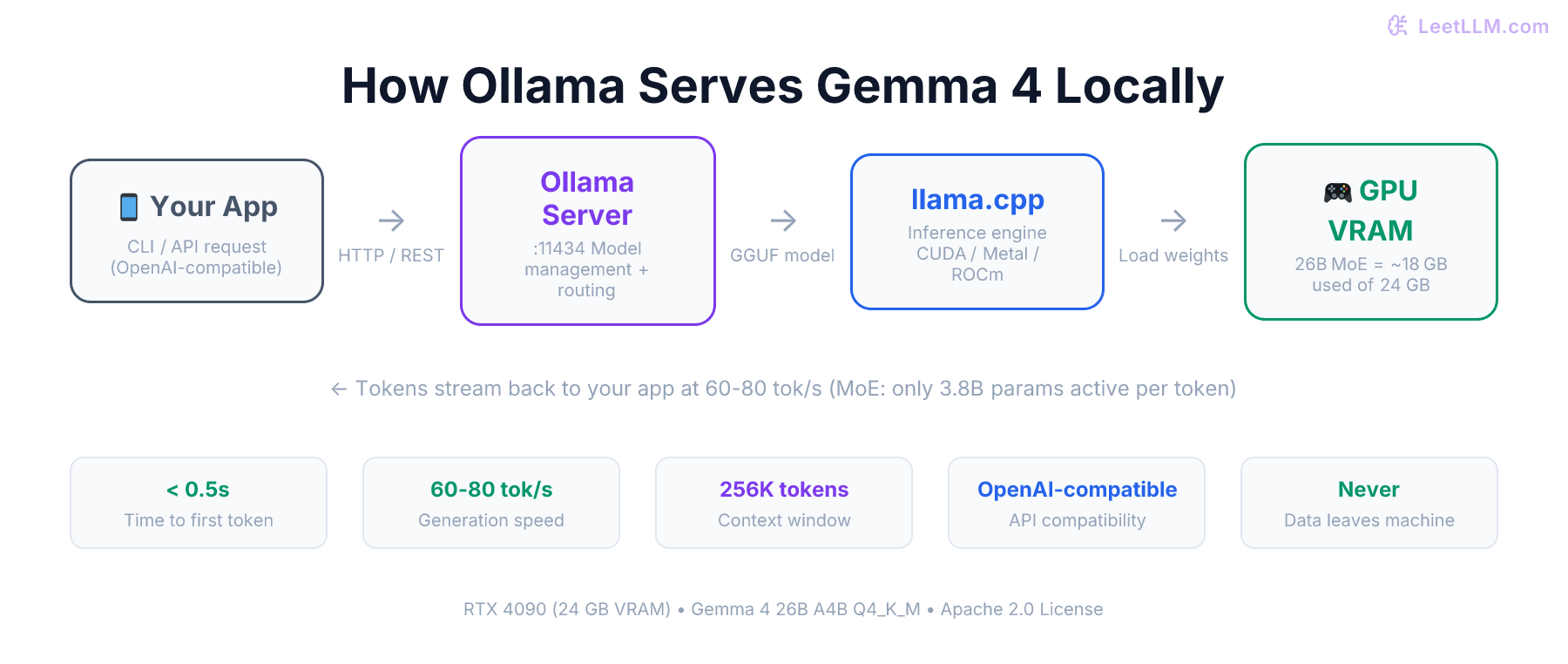

The local inference field has several good tools: llama.cpp (the underlying engine)[2], LM Studio (a GUI), vLLM (production serving), and Unsloth (training + inference). Ollama wraps llama.cpp with a clean CLI, a REST API, and automatic model management.[3]

Here's why it's the right starting point:

- One-command model management. `ollama run gemma4:26b` downloads the pre-quantized model, starts the server, and drops you into a chat.
- OpenAI-compatible API. Tools that work with the OpenAI API can work with Ollama by pointing them at `http://localhost:11434/v1`. This includes Claude Code, Codex, OpenCode, and hundreds of libraries.
- Cross-platform. Works on Linux, macOS (including Apple Silicon via Metal), and Windows (natively or inside WSL2).
- Gemma 4 day-one support. Ollama v0.20+ ships with native Gemma 4 support, including vision, thinking mode, and function calling.

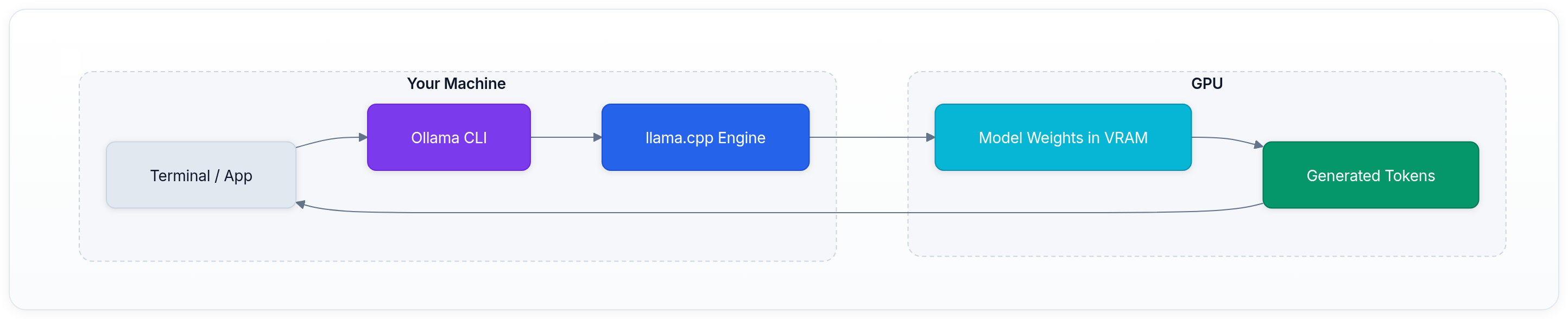

Here's a visual representation of how Ollama processes requests and manages local model inference:

No cloud endpoint, no API key, no data leaving your machine.

Setting up Ollama

Install Ollama

Linux

Download and run the official install script. This detects your GPU (NVIDIA, AMD, or Intel) and installs the appropriate runtime:

```bash
curl -fsSL https://ollama.com/install.sh | sh
```

Gemma 4 requires Ollama v0.20+. If you have an older version, re-running the install script will upgrade in place.

macOS

Download the app from ollama.com and drag it into Applications. Ollama auto-detects Apple Silicon and uses Metal for GPU acceleration.

Windows

Download the installer from ollama.com. Current Windows builds run natively, so WSL2 is optional. If you prefer to run the Linux build inside WSL2, install WSL first (wsl --install in an admin PowerShell).

Verify the installation:

```bash
ollama --version
# Should print: ollama version 0.20.x or later
```
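If you want to script this check, a minimal sketch follows. It parses a version string and compares it against the 0.20 minimum stated in this guide. The two sample strings are hypothetical; in practice you would pass in the output of `ollama --version`.

```python
import re


def parse_version(text):
    """Extract (major, minor, patch) from a string containing a dotted version."""
    match = re.search(r"(\d+)\.(\d+)(?:\.(\d+))?", text)
    if match is None:
        raise ValueError(f"no version number found in {text!r}")
    major, minor, patch = match.groups()
    return int(major), int(minor), int(patch or 0)


def supports_gemma4(version_text, minimum=(0, 20, 0)):
    """True if the reported version is at least the minimum stated in this guide."""
    return parse_version(version_text) >= minimum


# Hypothetical version strings for illustration
print(supports_gemma4("ollama version is 0.20.1"))
print(supports_gemma4("ollama version is 0.19.4"))
```

Comparing tuples of integers avoids the string-comparison trap where "0.9" sorts after "0.20".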

Running your first Gemma 4 chat

One command pulls the model and starts an interactive session:

```bash
ollama run gemma4:26b
```

The first run downloads the 26B MoE model (about 18 GB), which takes a few minutes on a fast connection. Once downloaded, the model loads into VRAM and you'll see the interactive prompt:

```text
>>> Send a message (/? for help)
```

Try asking it something technical:

```text
>>> Explain the difference between Dense and MoE architectures in three sentences.
```

You should see tokens streaming as soon as the model finishes loading. On a 24 GB GPU, the 26B MoE model generates fast enough for conversations to feel real-time. If speed is below 10 tok/s, the model may be running on CPU; see "Model running on CPU instead of GPU" under Troubleshooting.

To exit, type /bye or press Ctrl+D. The model stays loaded in VRAM for the keep-alive period (default: 5 minutes).
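The keep-alive period can also be set per request through the API's `keep_alive` field. The sketch below builds requests that pin a model in memory or unload it without generating any text. It assumes a server on the default port, and `keep_alive_request` and `send` are hypothetical helper names.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"


def keep_alive_request(model, keep_alive):
    """Build a request that loads (or unloads) a model without generating text.

    keep_alive takes a duration string such as "30m"; 0 unloads immediately.
    """
    body = json.dumps(
        {"model": model, "keep_alive": keep_alive, "stream": False}
    ).encode()
    return urllib.request.Request(
        OLLAMA_URL, data=body, headers={"Content-Type": "application/json"}
    )


def send(request):
    """Send the request to a running Ollama server and return the parsed reply."""
    with urllib.request.urlopen(request, timeout=180) as reply:
        return json.loads(reply.read())


pin = keep_alive_request("gemma4:26b", "30m")  # keep loaded for 30 minutes
unload = keep_alive_request("gemma4:26b", 0)   # free VRAM right away
print(pin.full_url, pin.data.decode())
```

Calling `send(pin)` against a live server loads the model and resets its timer; `send(unload)` releases the VRAM.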

Running the smaller edge models

For hardware with less VRAM, try the E4B (default) or E2B variants:

```bash
# Default tag: the E4B model
ollama run gemma4

# Explicitly run the smallest variant
ollama run gemma4:e2b
```

These models include native audio support, which the larger 26B and 31B models don't have. They're the right choice if you need speech recognition or audio processing locally.

The REST API

The real power of Ollama is the REST API, which lets you integrate local AI into any application:

```bash
curl http://localhost:11434/api/chat \
  -d '{
    "model": "gemma4:26b",
    "messages": [{"role": "user", "content": "What is a transformer?"}]
  }'
```

For tools expecting an OpenAI API:

```bash
curl http://localhost:11434/v1/chat/completions \
  -H "Content-Type: application/json" \
  -d '{
    "model": "gemma4:26b",
    "messages": [{"role": "user", "content": "What is a transformer?"}]
  }'
```

For Python applications:

```python
from ollama import chat

response = chat(
    model='gemma4:26b',
    messages=[{'role': 'user', 'content': 'Explain RLHF in simple terms.'}],
)
print(response.message.content)
```

Or using the openai package with Ollama as a drop-in replacement:

```python
from openai import OpenAI

client = OpenAI(
    base_url='http://localhost:11434/v1',
    api_key='ollama',
)

response = client.chat.completions.create(
    model='gemma4:26b',
    messages=[{'role': 'user', 'content': 'Explain RLHF in simple terms.'}],
)
print(response.choices[0].message.content)
```
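The native `/api/chat` endpoint streams newline-delimited JSON by default, one chunk per line, unless you pass `"stream": false`. Here is a minimal sketch of stitching those chunks back into a full reply. The sample chunks are hypothetical stand-ins for a live response, and `collect_stream` is an illustrative name.

```python
import json


def collect_stream(lines):
    """Concatenate message content from newline-delimited JSON chunks."""
    parts = []
    for raw in lines:
        if not raw.strip():
            continue  # skip blank lines
        chunk = json.loads(raw)
        parts.append(chunk.get("message", {}).get("content", ""))
        if chunk.get("done"):
            break  # the final chunk carries timing stats and done=true
    return "".join(parts)


# Hypothetical chunks in the shape /api/chat streams; a live response object
# from urllib.request.urlopen can be iterated line by line the same way.
sample = [
    b'{"message": {"role": "assistant", "content": "A transformer "}, "done": false}',
    b'{"message": {"role": "assistant", "content": "is a neural network."}, "done": false}',
    b'{"message": {"role": "assistant", "content": ""}, "done": true, "eval_count": 7}',
]
print(collect_stream(sample))
```

In an interactive application you would print each chunk's content as it arrives instead of collecting it, which is what makes the chat feel responsive.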

Understanding quantization

The models you download via Ollama are already quantized and stored in GGUF format. At unquantized 16-bit precision (BF16), the 26B model would need about 52 GB of memory, far beyond consumer card capacity. Quantization represents each weight with fewer bits, trading a small amount of quality for a large reduction in memory.[4]

Here's what the quantization levels look like for Gemma 4:

| Model | BF16 | Q8 | Q4 (Default) |
|---|---|---|---|
| E2B (5.1B total) | ~10 GB | 5-8 GB | ~4 GB |
| E4B | ~16 GB | 9-12 GB | ~6 GB |
| 26B A4B | ~52 GB | 28-30 GB | 16-18 GB |
| 31B | ~62 GB | 34-38 GB | 17-20 GB |

Ollama defaults to Q4_K_M quantization: each weight is stored in approximately 4 bits with a grouped scaling strategy. For most conversational and coding tasks, Q4_K_M is nearly indistinguishable from the full-precision model — perplexity degradation is typically under 1%.
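The arithmetic behind these numbers is simple: parameters times bits per weight, divided by 8 bits per byte. The sketch below applies it to the two workstation models using the parameter counts quoted earlier in this guide. The 4.5 bits-per-weight average for a 4-bit K-quant is an assumption, and the result covers weights only.

```python
def weight_memory_gb(params_billions, bits_per_weight):
    """Weight-only footprint in GB: parameters x bits / 8 bits per byte."""
    return params_billions * bits_per_weight / 8


# Total parameter counts quoted earlier in this guide
MODELS = {"26B A4B": 25.2, "31B": 30.7}

for name, params in MODELS.items():
    bf16 = weight_memory_gb(params, 16)
    q4 = weight_memory_gb(params, 4.5)  # assumed average bits per weight for a 4-bit K-quant
    print(f"{name}: BF16 ~{bf16:.1f} GB, ~4.5-bit ~{q4:.1f} GB (weights only)")
```

The 4-bit estimates land below the table's figures because they leave out the KV cache, activations, and any tensors kept at higher precision.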

💡 Key insight: Quantization isn't a hack; it's how local LLMs work in practice. The quality tradeoff at Q4 is small enough that you won't notice it in normal use, but you get roughly a 3x reduction in memory versus BF16 (about 52 GB down to 16-18 GB for the 26B model). See our guide to Model Quantization for the full technical story on GPTQ, AWQ, and GGUF.

Unsloth provides Dynamic 4-bit (UD-Q4_K_XL) quantizations for all Gemma 4 models on Hugging Face, which use variable bit-width per layer to preserve quality in the most critical layers. These can be used with llama.cpp directly or imported into Ollama via a Modelfile.

The thinking mode

Gemma 4 features a built-in reasoning mode where the model thinks step-by-step before answering. Unlike Gemma 3's thinking, Gemma 4 uses explicit control tokens that Ollama handles for you.

When thinking is enabled (the default for complex queries), the model outputs internal reasoning followed by the final answer. You'll see the model generate with visible <|channel>thought blocks before the response.

To get more direct, faster answers for simple tasks:

```bash
ollama run gemma4:26b
>>> /set system "You are a concise assistant. Answer directly without extended reasoning."
```

🎯 Production tip: Thinking mode consumes extra tokens and adds latency, but it dramatically improves accuracy on math, logic, and multi-step problems. For simple Q&A and chat, disable it for snappier responses. For coding and reasoning tasks, leave it on — the extra tokens are doing real work.

For best results, Google recommends these sampling parameters across all use cases:

- `temperature=1.0`
- `top_p=0.95`
- `top_k=64`
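Through the API, these sampling values go in the request's `options` object. The sketch below also sets a top-level `think` flag to toggle reasoning per request. Whether Gemma 4 honors that flag in your Ollama version is an assumption to verify, and `build_chat_body` is a hypothetical helper name.

```python
import json

# Sampling values recommended in the list above
RECOMMENDED_OPTIONS = {"temperature": 1.0, "top_p": 0.95, "top_k": 64}


def build_chat_body(prompt, think, model="gemma4:26b"):
    """Build a JSON body for POST /api/chat with explicit sampling options.

    Assumption: the server honors a top-level "think" flag for this model.
    """
    return json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "think": think,
        "stream": False,
        "options": dict(RECOMMENDED_OPTIONS),
    })


quick = build_chat_body("What is the capital of France?", think=False)
careful = build_chat_body("Prove that the square root of 2 is irrational.", think=True)
print(quick)
```

Either body can be posted to `http://localhost:11434/api/chat` with a `Content-Type: application/json` header.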

Checking what's running

```bash
# List all downloaded models
ollama list

# Show which models are currently loaded in VRAM
ollama ps

# Pull a specific variant
ollama pull gemma4:31b

# Remove a model to free disk space
ollama rm gemma4:e2b
```

To verify GPU utilization:

```bash
nvidia-smi -l 1
```

You should see GPU Memory Used jump to about 18 GB when gemma4:26b loads, and GPU Utilization spike to 90-100% while tokens are generating.
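To run the same check from a script, `nvidia-smi` can emit machine-readable CSV. The sketch below parses memory use and utilization per GPU. The sample row is hypothetical, and `read_gpu_stats` only works on a machine with an NVIDIA driver installed.

```python
import subprocess

QUERY = [
    "nvidia-smi",
    "--query-gpu=memory.used,utilization.gpu",
    "--format=csv,noheader,nounits",
]


def parse_gpu_stats(csv_text):
    """Parse 'memory_used_mib, utilization_pct' rows, one per GPU."""
    stats = []
    for line in csv_text.strip().splitlines():
        used_mib, util_pct = (field.strip() for field in line.split(","))
        stats.append({
            "memory_used_gb": int(used_mib) / 1024,
            "utilization_pct": int(util_pct),
        })
    return stats


def read_gpu_stats():
    """Run nvidia-smi once and return parsed stats (requires an NVIDIA driver)."""
    result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_gpu_stats(result.stdout)


# Hypothetical sample row: 18432 MiB used, 97% utilization
print(parse_gpu_stats("18432, 97\n"))
```

Call `read_gpu_stats()` while a prompt is generating to confirm that both memory use and utilization rise.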

Performance numbers: what to expect

These are estimated performance numbers based on architecture analysis and early community benchmarks. The 26B MoE model is particularly fast because only 3.8B parameters are active per token.

NVIDIA GPUs

| GPU | VRAM | Best Model | VRAM Usage | Generation Speed (est.) |
|---|---|---|---|---|
| RTX 5090 | 32 GB | gemma4:31b | ~20 GB | 80-100+ tok/s |
| RTX 4090 | 24 GB | gemma4:26b | ~18 GB | 60-80+ tok/s |
| RTX 4090 | 24 GB | gemma4:31b | ~20 GB | 30-40 tok/s |
| RTX 3090 | 24 GB | gemma4:26b | ~18 GB | 40-60 tok/s |
| RTX 5070 Ti | 16 GB | gemma4:e4b | ~10 GB | 100-140 tok/s |
| RTX 4070 Ti Super | 16 GB | gemma4:e4b | ~10 GB | 80-110 tok/s |
| RTX 4060 | 8 GB | gemma4:e2b | ~4 GB | 60-80 tok/s |

Apple Silicon

| Mac | Memory | Best Model | Generation Speed (est.) |
|---|---|---|---|
| M4 Max (128 GB) | Unified | gemma4:31b | 25-35 tok/s |
| M4 Pro (48 GB) | Unified | gemma4:26b | 20-30 tok/s |
| M4 (24 GB) | Unified | gemma4:e4b | 30-45 tok/s |
| M4 (16 GB) | Unified | gemma4:e2b | 40-60 tok/s |

Edge devices

| Device | Memory | Model | Generation Speed (est.) |
|---|---|---|---|
| Raspberry Pi 5 (8 GB) | 8 GB | gemma4:e2b (Q4) | 3-5 tok/s |
| Jetson Nano | 8 GB | gemma4:e2b (Q4) | 5-8 tok/s |
| Pixel 9 Pro | 12 GB | gemma4:e4b (Q4) | 10-20 tok/s |

🔬 Research insight: The 26B MoE model's speed advantage comes from its sparse activation pattern. Even though the full 25.2B parameter set must reside in VRAM, only 3.8B parameters (8 of 128 experts plus a shared expert) are read per token. This means memory bandwidth — the bottleneck for local inference — is used as efficiently as a 4B dense model.
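The bandwidth argument can be turned into a back-of-the-envelope ceiling: if every active weight is read once per token, tokens per second cannot exceed memory bandwidth divided by the bytes read. The sketch below assumes about 4.5 bits per weight and the RTX 4090's published peak bandwidth of 1008 GB/s. It ignores attention, KV-cache reads, and kernel overhead, so real speeds sit well below these ceilings.

```python
def decode_ceiling_tok_s(active_params_billions, bandwidth_gb_s, bits_per_weight=4.5):
    """Upper bound on tokens/s if every active weight is read once per token.

    bits_per_weight=4.5 is an assumed average for a 4-bit quantization.
    """
    gb_read_per_token = active_params_billions * bits_per_weight / 8
    return bandwidth_gb_s / gb_read_per_token


RTX_4090_BANDWIDTH_GB_S = 1008  # published peak memory bandwidth

moe = decode_ceiling_tok_s(3.8, RTX_4090_BANDWIDTH_GB_S)     # 26B A4B: 3.8B active
dense = decode_ceiling_tok_s(30.7, RTX_4090_BANDWIDTH_GB_S)  # 31B: all 30.7B active
print(f"MoE ceiling ~{moe:.0f} tok/s, dense ceiling ~{dense:.0f} tok/s")
```

The ratio between the two ceilings depends only on the active parameter counts, which is the core of the MoE speed advantage. The absolute numbers are only loose upper bounds.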

Platform notes

Linux (NVIDIA) — recommended

Linux gives the best performance and most control. Ollama installs as a systemd service:

```bash
sudo systemctl status ollama
sudo journalctl -u ollama -f

# Enable flash attention for better performance
sudo systemctl edit ollama
# Add: [Service]
# Environment="OLLAMA_FLASH_ATTENTION=1"
```

Mac (Apple Silicon)

Install via the macOS app. Apple Silicon uses Metal for GPU acceleration with unified memory — a key advantage for large models. The 26B model needs 18 GB, which fits well on a 24 GB or 32 GB Mac:

```bash
# M4 Pro or Max with 32+ GB:
ollama run gemma4:26b

# M4 base with 16 GB:
ollama run gemma4
```

Windows (WSL2)

Performance is comparable to Linux via WSL2. Ensure CUDA passthrough is enabled and install the NVIDIA drivers for WSL2 from NVIDIA's website. A common mistake is installing nvidia-cuda-toolkit inside WSL2, which can shadow the Windows driver and break GPU access.

Multimodal capabilities

Gemma 4 is natively multimodal. All four models process images, and the E2B and E4B models add audio.

Image understanding

Drag-and-drop an image into the Ollama terminal, or use the API with base64:

```text
>>> Describe what's in this image and identify any technical diagrams.
```

Gemma 4 supports variable image resolution through configurable visual token budgets (70, 140, 280, 560, 1120 tokens). Lower budgets are faster; higher budgets capture more detail for tasks like OCR and document parsing.
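The base64 route mentioned above can be sketched as follows: the native chat API accepts an `images` list of base64 strings on a message. The byte string here is a stand-in rather than a real image, and `image_chat_body` is a hypothetical helper name. How the visual token budget is exposed through Ollama is not covered here.

```python
import base64
import json


def image_chat_body(image_bytes, prompt, model="gemma4:26b"):
    """Build a JSON body for POST /api/chat with one base64-encoded image attached."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt, "images": [encoded]}],
        "stream": False,
    })


# Stand-in bytes for illustration; in practice read them from a file,
# e.g. open("diagram.png", "rb").read() (hypothetical filename).
fake_image = b"\x89PNG\r\n\x1a\n"
body = image_chat_body(fake_image, "Describe what's in this image.")
print(body[:80])
```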

Audio (E2B and E4B only)

```text
>>> Transcribe the following speech segment in English into English text.
```

Audio supports a maximum length of 30 seconds. For longer audio, split it into segments.
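Splitting a long recording comes down to computing segment boundaries. This minimal sketch returns start and end times in seconds for fixed-length windows. It is an illustration only: cutting at fixed offsets can split a word in half, so a real pipeline might cut at silences instead.

```python
def split_segments(total_seconds, max_seconds=30.0):
    """Return (start, end) pairs covering the clip, each at most max_seconds long."""
    total_seconds = float(total_seconds)
    if total_seconds <= 0:
        return []
    segments = []
    start = 0.0
    while start < total_seconds:
        end = min(start + max_seconds, total_seconds)
        segments.append((start, end))
        start = end
    return segments


print(split_segments(75))
# [(0.0, 30.0), (30.0, 60.0), (60.0, 75.0)]
```

Each pair can then be passed to whatever audio tool you use to cut the file before sending the pieces to the model one at a time.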

Function calling

Gemma 4 has native function-calling support for agentic workflows:

```python
from ollama import chat

response = chat(
    model='gemma4:26b',
    messages=[{'role': 'user', 'content': 'What is the weather in San Francisco?'}],
    tools=[{
        'type': 'function',
        'function': {
            'name': 'get_weather',
            'description': 'Get the current weather for a location',
            'parameters': {
                'type': 'object',
                'properties': {
                    'location': {'type': 'string', 'description': 'City name'},
                },
                'required': ['location'],
            },
        },
    }],
)
print(response.message.tool_calls)
```
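The model only requests a tool call; your code still has to run it and send the result back. Here is a minimal dispatch sketch that works on plain dictionaries in the shape the REST API returns. The placeholder `get_weather`, the `TOOLS` registry, and `run_tool_calls` are all hypothetical names for illustration.

```python
def get_weather(location):
    """Placeholder implementation; a real version would query a weather service."""
    return f"Weather lookup for {location} is not implemented in this sketch."


# Maps tool names declared in the request to local Python callables
TOOLS = {"get_weather": get_weather}


def run_tool_calls(tool_calls):
    """Execute each requested tool and return 'tool' role messages with the results."""
    results = []
    for call in tool_calls or []:
        name = call["function"]["name"]
        arguments = call["function"]["arguments"]
        handler = TOOLS.get(name)
        if handler is None:
            content = f"Unknown tool: {name}"
        else:
            content = handler(**arguments)
        results.append({"role": "tool", "content": str(content)})
    return results


# Hypothetical tool call in the shape the chat API returns
example_calls = [
    {"function": {"name": "get_weather", "arguments": {"location": "San Francisco"}}}
]
print(run_tool_calls(example_calls))
```

Appending the returned messages to the conversation and calling the chat endpoint again lets the model turn the tool output into a final answer.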

💡 Key insight: Gemma 4's τ2-bench score of 76.9% average across three domains (vs. 16.2% for Gemma 3) means function calling and tool use work reliably now. This is the difference between a demo and a production-grade tool-use model.

Integrating with coding tools

Several tools work with Ollama out of the box:

Claude Code / Codex / OpenCode

Ollama v0.20+ includes built-in support for coding assistants:

```bash
# Launch Claude Code with Gemma 4
ollama launch claude --model gemma4:26b

# Launch Codex
ollama launch codex --model gemma4:26b

# Launch OpenCode
ollama launch opencode --model gemma4:26b
```

Any OpenAI-compatible tool

Point your tool at Ollama's API:

- API Endpoint: `http://localhost:11434/v1`
- Model: `gemma4:26b`
- API Key: any value (Ollama ignores it)

Open WebUI

For a browser-based ChatGPT-like interface:

```bash
docker run -d \
  -p 3000:8080 \
  --add-host=host.docker.internal:host-gateway \
  -v open-webui:/app/backend/data \
  --name open-webui \
  ghcr.io/open-webui/open-webui:main
```

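Then visit http://localhost:3000. If the UI does not find Ollama automatically, set the Ollama URL to http://host.docker.internal:11434; inside the container, localhost refers to the container itself, not your host machine.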

Validating your setup

This script benchmarks your setup using the Ollama API:

```python
import json
import urllib.request


def chat_api(messages):
    """Send a non-streaming chat request to the local Ollama server."""
    body = json.dumps({
        "model": "gemma4:26b",
        "messages": messages,
        "stream": False,
        "options": {"temperature": 1.0, "top_p": 0.95, "top_k": 64, "num_predict": 300},
    }).encode()
    req = urllib.request.Request(
        "http://localhost:11434/api/chat",
        data=body, headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=180) as r:
        return json.loads(r.read())


# Warm-up (loads model into VRAM on first call)
print("Loading model into VRAM...")
chat_api([{"role": "user", "content": "Say: ready"}])

# Benchmark
print("Benchmarking generation speed...")
res = chat_api([{
    "role": "user",
    "content": "List the numbers 1 through 50, one per line. Numbers only.",
}])

# eval_duration is reported in nanoseconds
eval_count = res.get("eval_count", 0)
eval_duration_s = res.get("eval_duration", 1) / 1e9
tok_s = eval_count / eval_duration_s

print(f"Generated {eval_count} tokens in {eval_duration_s:.1f}s")
print(f"Rate: {tok_s:.0f} tok/s")

if tok_s < 20:
    print("WARNING: Speed looks low. Check GPU is in use: nvidia-smi")
elif tok_s > 40:
    print("GPU acceleration confirmed.")
else:
    # Previously the 20-40 tok/s range printed nothing
    print("Speed is moderate. Check for partial CPU offload: ollama ps")
```

Save as validate_gemma4.py and run:

```bash
python3 validate_gemma4.py
```

Expected output on an RTX 4090:

```text
Loading model into VRAM...
Benchmarking generation speed...
Generated 200 tokens in 2.8s
Rate: 71 tok/s
GPU acceleration confirmed.
```

Troubleshooting

"pull model manifest: 412" — Ollama version too old

Gemma 4 requires Ollama v0.20+. If you see this error:

```text
Error: pull model manifest: 412:
The model you are attempting to pull requires a newer version of Ollama.
```

Reinstall using the official script:

```bash
curl -fsSL https://ollama.com/install.sh | sh
sudo systemctl restart ollama
ollama --version  # should show 0.20.x or later
```

Model running on CPU instead of GPU

Symptom

Generation speed is 5-10 tok/s instead of 40+. ollama ps shows 100% CPU.

Fix

Check the logs for GPU detection:

```bash
sudo journalctl -u ollama -n 50 | grep "inference compute"
```

If GPU acceleration is working:

```text
inference compute ... library=CUDA compute=8.9 description="NVIDIA GeForce RTX 4090"
```

If it shows library=cpu, upgrade Ollama. If you installed via Homebrew (brew install ollama), the binary likely doesn't include CUDA support:

```bash
brew uninstall ollama
curl -fsSL https://ollama.com/install.sh | sh
```

⚠️ Common mistake: Using `brew install ollama` on Linux often leads to CPU-only execution. Always use the official curl script to ensure the CUDA runtime is bundled.
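If you check the "inference compute" log line often, the lookup can be automated. This minimal sketch pulls the key=value fields out of a line shaped like the sample shown earlier and reports whether a GPU library was detected. The log format is taken from that sample and may differ between Ollama versions; the function names are hypothetical.

```python
import re


def parse_inference_compute(log_line):
    """Extract key=value fields (quoted or bare) from an 'inference compute' log line."""
    fields = {}
    for key, quoted, bare in re.findall(r'(\w+)=(?:"([^"]*)"|(\S+))', log_line):
        fields[key] = quoted if quoted else bare
    return fields


def uses_gpu(log_line):
    """True when the reported library is anything other than cpu."""
    library = parse_inference_compute(log_line).get("library", "cpu")
    return library.lower() != "cpu"


line = 'inference compute ... library=CUDA compute=8.9 description="NVIDIA GeForce RTX 4090"'
print(parse_inference_compute(line), uses_gpu(line))
```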

Inference is slow and nvidia-smi shows 0% GPU utilization

Check where the model is loaded:

```bash
ollama ps
```

If the PROCESSOR column says 100% CPU, GPU offload didn't happen. Common causes:

| Log message | Meaning |
|---|---|
| no compatible GPUs were discovered | Driver issue or WSL2 passthrough problem |
| CPU.0.LLAMAFILE=1 | Ollama loaded only CPU backend (Homebrew/Snap install) |
| offloaded 0/N layers to GPU | All layers stayed on CPU |
| insufficient VRAM | Another process is using GPU memory |

Speed drops after a few exchanges

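Context length is growing. As the conversation gets longer, the KV cache consumes more VRAM and can push layers off the GPU. Cap the context window to keep memory use bounded. The variable is read by the Ollama server, so set it where the server starts (on a systemd install, add it with sudo systemctl edit ollama, the same way as OLLAMA_FLASH_ATTENTION):

```bash
OLLAMA_CONTEXT_LENGTH=32768 ollama serve
```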

Or bake it into a Modelfile:

```text
FROM gemma4:26b
PARAMETER num_ctx 32768
```

```bash
ollama create gemma4-26b-32k -f Modelfile
ollama run gemma4-26b-32k
```

Stopping the model and freeing VRAM

```bash
# Stop a specific model
ollama stop gemma4:26b

# Stop the Ollama service entirely (Linux)
sudo systemctl stop ollama

# macOS: quit the menu bar app, or:
pkill ollama
```

How Gemma 4 compares to Gemma 3

The generational leap from Gemma 3 27B to Gemma 4 is the most dramatic improvement in the open-weight space:

| Benchmark | Gemma 4 31B | Gemma 4 26B A4B | Gemma 4 E4B | Gemma 3 27B |
|---|---|---|---|---|
| MMLU Pro | 85.2% | 82.6% | 69.4% | 67.6% |
| AIME 2026 (no tools) | 89.2% | 88.3% | 42.5% | 20.8% |
| LiveCodeBench v6 | 80.0% | 77.1% | 52.0% | 29.1% |
| Codeforces ELO | 2150 | 1718 | 940 | 110 |
| GPQA Diamond | 84.3% | 82.3% | 58.6% | 42.4% |
| τ2-bench (avg) | 76.9% | 68.2% | 42.2% | 16.2% |
| MMMU Pro (vision) | 76.9% | 73.8% | 52.6% | 49.7% |
| MATH-Vision | 85.6% | 82.4% | 59.5% | 46.0% |

Gemma 4 31B currently ranks #3 among open models on the Arena AI text leaderboard. The 26B MoE model achieves near-identical benchmark scores to the 31B Dense on reasoning tasks while running at a fraction of the compute cost — making it the best intelligence-per-watt option in the open-weight space.

Key takeaways

- Gemma 4 is the most capable open-weight model family ever released, with the 31B model ranking #3 among open models on Arena AI and scoring 89.2% on AIME 2026, up from 20.8% on Gemma 3.
- The 26B MoE model is the practical sweet spot: 26B-level intelligence running at 4B-level speed because only 3.8B of 25.2B parameters activate per token.
- For GPUs with 24 GB or more VRAM (RTX 3090/4090/5090), run `gemma4:26b`. For 16 GB cards, run `gemma4:e4b`. For edge devices, run `gemma4:e2b`.
- Apache 2.0 license means full commercial freedom: use, modify, redistribute, and fine-tune with no royalties.
- Ollama v0.20+ is required. One command installs the runtime, another downloads the model and starts chatting.
- The E2B and E4B models include native audio support and run on devices as small as a Raspberry Pi, enabling genuine offline edge AI.
- Native function calling with 76.9% on τ2-bench (averaged across domains, up from 16.2% on Gemma 3) makes Gemma 4 a viable foundation for production agentic workflows.

References