vLLM vs SGLang vs TensorRT-LLM vs Ollama: Choosing an Inference Engine in 2026

Raw throughput is only half the inference-engine decision. This guide teaches PagedAttention with worked memory math, analyzes an H100 benchmark snapshot, then explains how workload shape, prefix reuse, and deployment friction matter as much as tok/s.

LeetLLM Team · April 1, 2026 · Updated May 15, 2026 · 14 min read

You just deployed a support chatbot for your SaaS app. You picked a strong model. On launch day, fifty support staff open the chat at once to check billing status. The first ten get instant replies. The rest stare at a loading spinner. Your GPU dashboard shows only 60% utilization.

The model isn't the bottleneck. The inference engine is.

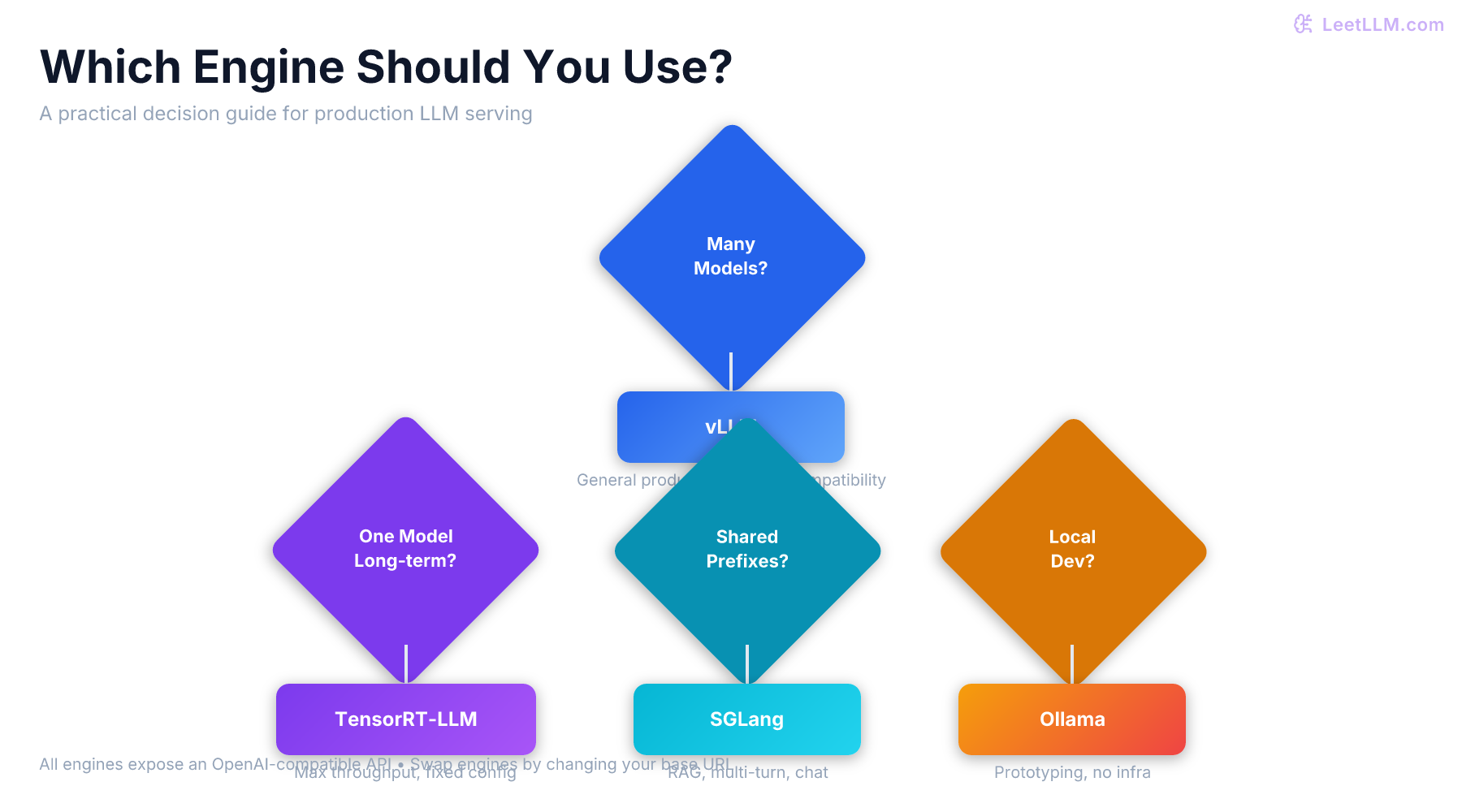

Choosing between vLLM, SGLang, TensorRT-LLM, and Ollama isn't about finding the "fastest" one on a blog chart. It's about matching the engine's strengths to your workload shape, your team's operational budget, and your hardware constraints.

This article walks through one real benchmark snapshot, explains what makes each engine behave differently under the hood, and gives you a decision framework you can use on an actual engineering floor.

What the engines are actually competing over

When an LLM generates text, it predicts one token at a time. To predict the 10th token, it needs the previous 9. Recomputing the attention state for those 9 tokens at every step would waste most of the GPU's effort, so the engine stores their intermediate results (the attention keys and values) in a KV cache.

Think of it like a shared work queue. Instead of recomputing the same setup for every task, the system stores completed setup state once and reuses it. The KV cache is that reusable state.

The problem is that every request has a different context size. If you reserve a giant memory block for a request that only needs a short answer, most of the block sits empty while another request waits because there's no free space. That's memory fragmentation. Every engine in this article solves that fragmentation problem differently.
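To put rough numbers on "different context sizes", here is a minimal sizing sketch. The architecture values (80 layers, 8 KV heads, head dimension 128) are an assumption for a Llama-3-style 70B model with grouped-query attention, and the 1-byte element size assumes an FP8 KV cache. Neither comes from the benchmark write-up, so check your model's config before relying on them.

```python
def kv_bytes_per_token(layers: int, kv_heads: int, head_dim: int, bytes_per_elem: int) -> int:
    # Each layer stores one key vector and one value vector per KV head.
    return 2 * layers * kv_heads * head_dim * bytes_per_elem

# Assumed shape for a Llama-3-style 70B model (not taken from the article).
per_token = kv_bytes_per_token(layers=80, kv_heads=8, head_dim=128, bytes_per_elem=1)

for context_tokens in (768, 8_192, 32_768):
    mib = per_token * context_tokens / 2**20
    print(f"{context_tokens:>6} tokens -> {mib:,.0f} MiB of KV cache")
```

Under those assumptions a 768-token request needs about 120 MiB of cache while a 32K-token request needs about 5 GiB, which is why reserving the worst case for every request wastes so much memory.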

Read the benchmark correctly

Spheron's test is a useful snapshot, but it's still a snapshot.

The benchmark setup was:

- 1x H100 SXM5 80 GB

- Llama 3.3 70B Instruct

- FP8 precision

- 512 input tokens

- 256 output tokens

- concurrency levels of 1, 10, 50, and 100[1]

Those details matter because inference-engine results are workload-shaped. The "winner" can change if you switch hardware, model family, prompt sharing pattern, or latency target.

Spheron also notes that its vLLM row predates vLLM's MRV2 path. That means the published vLLM numbers are a point-in-time configuration, not a permanent ceiling for the project.[1]

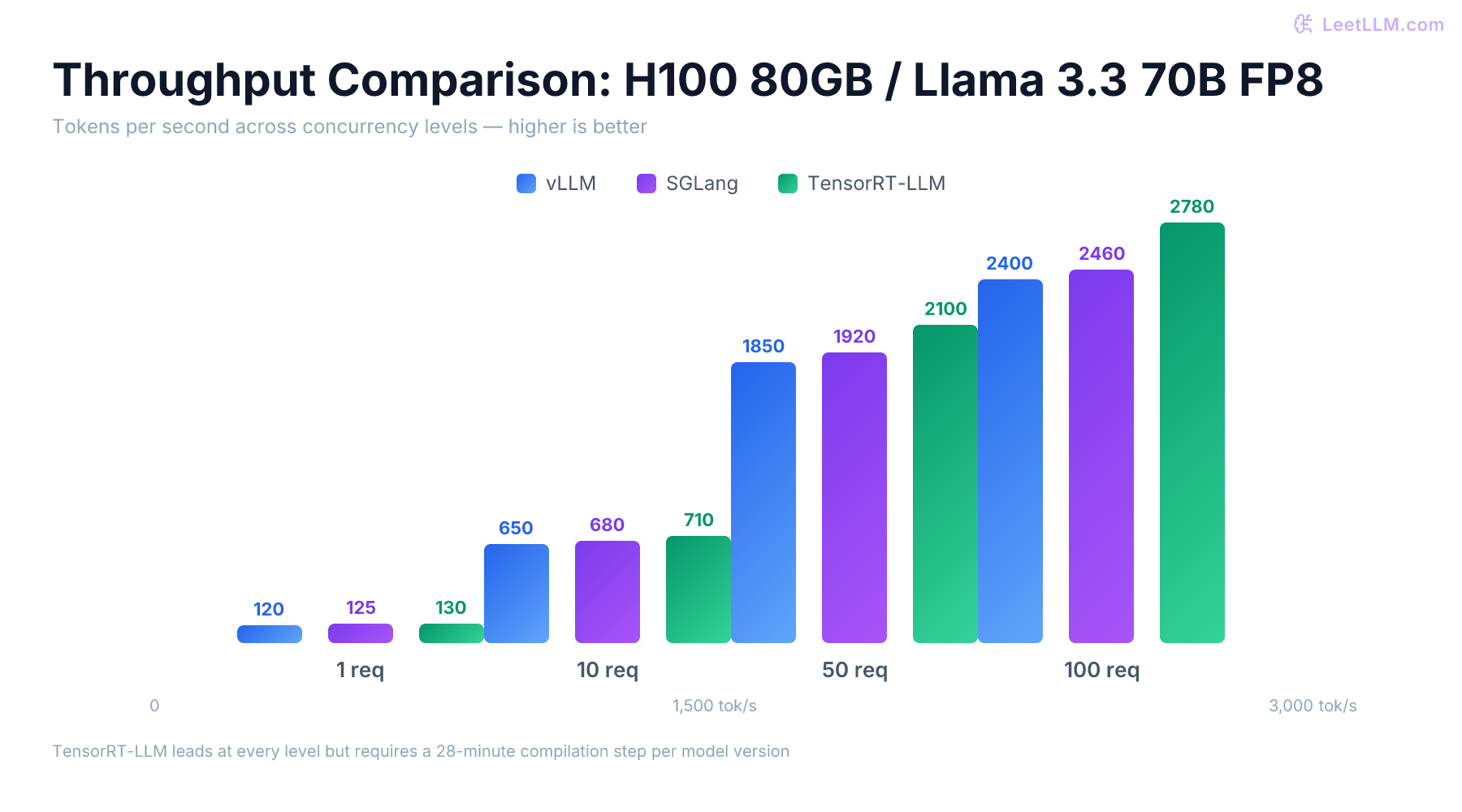

The throughput snapshot

With that caveat in place, here is the Spheron throughput table for unique prompts on that setup:[1]

| Concurrency | vLLM | SGLang | TensorRT-LLM |
|---|---|---|---|
| 1 | 120 tok/s | 125 tok/s | 130 tok/s |
| 10 | 650 tok/s | 680 tok/s | 710 tok/s |
| 50 | 1,850 tok/s | 1,920 tok/s | 2,100 tok/s |
| 100 | 2,400 tok/s | 2,460 tok/s | 2,780 tok/s |

The immediate read is straightforward:

- TensorRT-LLM leads on raw throughput in this specific benchmark

- SGLang sits between TensorRT-LLM and vLLM on the unique-prompt workload

- vLLM remains competitive enough that simplicity still matters

The wrong read would be "TensorRT-LLM wins, case closed."

The right read is:

On this server-class H100 setup, for this model and prompt shape, TensorRT-LLM delivered the highest output throughput. That doesn't automatically make it the best operational choice for every team.

The key lesson: inference benchmarks are workload descriptions. If the prompt length, cache hit rate, hardware, or model churn differs from your system, rerun the comparison before changing runtimes.
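One way to read the table beyond the headline numbers is to derive per-stream speed and the relative lead at each concurrency level. The sketch below uses only the figures from the table. The helper names are hypothetical, and dividing aggregate throughput evenly across requests is a simplifying assumption.

```python
# Output throughput (tok/s) copied from the table above.
throughput = {
    "vLLM": {1: 120, 10: 650, 50: 1850, 100: 2400},
    "SGLang": {1: 125, 10: 680, 50: 1920, 100: 2460},
    "TensorRT-LLM": {1: 130, 10: 710, 50: 2100, 100: 2780},
}

def per_stream(engine: str, concurrency: int) -> float:
    # Aggregate throughput divided evenly across concurrent requests (an approximation).
    return throughput[engine][concurrency] / concurrency

def lead_over(engine: str, baseline: str, concurrency: int) -> float:
    # Relative throughput advantage of `engine` over `baseline`, in percent.
    a = throughput[engine][concurrency]
    b = throughput[baseline][concurrency]
    return (a / b - 1) * 100

for c in (1, 10, 50, 100):
    print(
        f"c={c:>3}: vLLM {per_stream('vLLM', c):6.1f} tok/s per stream, "
        f"TensorRT-LLM lead {lead_over('TensorRT-LLM', 'vLLM', c):4.1f}%"
    )
```

In this snapshot the TensorRT-LLM lead over vLLM grows from roughly 8% at concurrency 1 to roughly 16% at concurrency 100, while each vLLM stream slows from 120 tok/s to about 24 tok/s. Both trends are worth knowing before you decide which number your product actually cares about.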

Throughput wasn't the only spread. On the same benchmark, median (p50) time to first token (TTFT) at 10 concurrent requests was 120 ms for vLLM, 112 ms for SGLang, and 105 ms for TensorRT-LLM. Cold start was about 62 seconds for vLLM, 58 seconds for SGLang, and 28 minutes for the compiled TensorRT-LLM path.[1] That compile tax matters if you rotate models often. TensorRT-LLM also has a PyTorch-oriented path that lowers setup friction, but then you're no longer comparing the exact runtime path that produced the peak-throughput numbers in the table.[1][2]
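A quick way to weigh the compile tax is a break-even estimate: how long must a deployment run before the higher-throughput engine has produced more tokens in total, despite starting later? This is a toy model. It assumes both engines run saturated at their concurrency-100 rates from the moment they come up, and the function name is hypothetical.

```python
def break_even_seconds(fast_tps: float, fast_cold_s: float,
                       slow_tps: float, slow_cold_s: float) -> float:
    # Solve fast_tps * (t - fast_cold_s) == slow_tps * (t - slow_cold_s) for t.
    if fast_tps <= slow_tps:
        raise ValueError("the slower-starting engine must have higher throughput")
    return (fast_tps * fast_cold_s - slow_tps * slow_cold_s) / (fast_tps - slow_tps)

t = break_even_seconds(
    fast_tps=2780, fast_cold_s=28 * 60,  # TensorRT-LLM compiled path
    slow_tps=2400, slow_cold_s=62,       # vLLM
)
print(f"break-even after {t / 3600:.1f} hours of saturated traffic")
```

With the snapshot's numbers the break-even lands at about 3.3 hours per deployment. That is trivial for a model pinned for months and significant if you redeploy several times a day.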

How PagedAttention changes the memory math

vLLM's key contribution is PagedAttention, which attacks KV-cache fragmentation by allocating cache in fixed-size blocks instead of one large contiguous segment per request.[3] That sounds like an implementation detail, but it changed production serving.

Here is the math with concrete numbers. Imagine a GPU with 24 GB of VRAM. Your model weights consume 18 GB. That leaves 6 GB for the KV cache and concurrent requests.

With traditional contiguous allocation, you might reserve 1 GB per customer slot up front, just in case someone sends a long message. You can fit six slots. But most customers only use 400 MB worth of cache. That means 600 MB per slot sits wasted. You are paying for six full slots and using only 40% of each one.

PagedAttention breaks the cache into small fixed-size blocks, the way an operating system hands out memory in standard pages instead of one custom-sized region per process. If a customer's conversation needs 400 MB, it gets about 400 MB of blocks (rounded up to the next block), not a 1 GB reservation. Now 6 GB divided by 0.4 GB gives you fifteen concurrent slots, not six.

That difference, roughly 2.5x more concurrent users on the same hardware in this toy example, is why vLLM became a common production default.
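To make "fixed-size blocks" concrete, here is a toy allocator sketch. It is not vLLM's implementation: the class, its method names, and the 16-token block size are assumptions chosen for illustration. It only shows the bookkeeping idea of a per-request block table backed by a shared free pool.

```python
import math

class BlockAllocator:
    """Toy paged KV-cache allocator: fixed-size blocks handed out on demand."""

    def __init__(self, total_blocks: int, block_tokens: int = 16):
        self.block_tokens = block_tokens
        self.free_blocks = list(range(total_blocks))
        self.block_tables = {}   # request id -> list of physical block ids
        self.token_counts = {}   # request id -> tokens stored so far

    def grow(self, request_id: str, new_tokens: int) -> bool:
        """Reserve blocks for `new_tokens` more tokens; return False if out of memory."""
        table = self.block_tables.get(request_id, [])
        total = self.token_counts.get(request_id, 0) + new_tokens
        needed = math.ceil(total / self.block_tokens) - len(table)
        if needed > len(self.free_blocks):
            return False
        table.extend(self.free_blocks.pop() for _ in range(needed))
        self.block_tables[request_id] = table
        self.token_counts[request_id] = total
        return True

    def release(self, request_id: str) -> None:
        """Return a finished request's blocks to the free pool."""
        self.free_blocks.extend(self.block_tables.pop(request_id, []))
        self.token_counts.pop(request_id, None)

alloc = BlockAllocator(total_blocks=8)
alloc.grow("a", 20)   # 20 tokens -> 2 blocks, with 12 unused token slots in the last one
alloc.grow("b", 70)   # 70 tokens -> 5 blocks
print(len(alloc.free_blocks))  # 1
alloc.release("a")
print(len(alloc.free_blocks))  # 3
```

The only waste is the partially filled last block of each request, which is bounded by the block size rather than by the longest possible conversation.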

You can verify the slot math with a tiny script. It doesn't model the whole runtime, but it makes the allocator intuition concrete:

```python
def slots(total_cache_mb: int, per_request_mb: int) -> int:
    # Whole requests that fit in the cache budget.
    return total_cache_mb // per_request_mb

cache_budget_mb = 6_000
traditional_slots = slots(cache_budget_mb, per_request_mb=1_000)
paged_slots = slots(cache_budget_mb, per_request_mb=400)

print(f"traditional slots: {traditional_slots}")
print(f"paged slots: {paged_slots}")
print(f"slot multiplier: {paged_slots / traditional_slots:.1f}x")
```

Expected output:

```text
traditional slots: 6
paged slots: 15
slot multiplier: 2.5x
```

vLLM: the broad-default runtime

On top of PagedAttention, the vLLM project has become the practical compatibility layer for a huge range of open models.[4] vLLM v1 also supports automatic prefix caching, so shared-prefix reuse isn't exclusive to SGLang.[5]

That breadth is part of why most teams still start here, and it's also why the MRV2 note matters. You shouldn't read one benchmark row as the upper bound on current vLLM performance.[1]
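The general idea behind block-level prefix caching can be sketched in a few lines: hash each full block of tokens together with the hash of everything before it, and reuse any block whose hash has been seen. This is a simplified illustration, not vLLM's code. The 4-token block size, the in-memory `cache` set, and the function names are all assumptions.

```python
import hashlib

BLOCK_TOKENS = 4  # tiny block size so the example is easy to trace

def block_hashes(token_ids: list[int]) -> list[str]:
    """Hash each full block together with the hash of everything before it."""
    hashes, parent = [], ""
    full = len(token_ids) - len(token_ids) % BLOCK_TOKENS
    for start in range(0, full, BLOCK_TOKENS):
        block = token_ids[start:start + BLOCK_TOKENS]
        parent = hashlib.sha256(f"{parent}|{block}".encode()).hexdigest()
        hashes.append(parent)
    return hashes

cache = set()  # hashes of blocks whose KV state is (hypothetically) still resident

def cached_prefix_tokens(token_ids: list[int]) -> int:
    """Return how many leading tokens hit the cache, then register this request's blocks."""
    hashes = block_hashes(token_ids)
    hits = 0
    for h in hashes:
        if h not in cache:
            break
        hits += 1
    cache.update(hashes)
    return hits * BLOCK_TOKENS

system_prompt = list(range(100, 110))  # 10 shared tokens
print(cached_prefix_tokens(system_prompt + [1, 2, 3]))  # 0: cold cache
print(cached_prefix_tokens(system_prompt + [7, 8, 9]))  # 8: two full shared blocks
```

Because each hash covers the whole prefix, a block is only reused when everything before it matches too. Reuse also happens at block granularity, so the last two shared tokens in the example are recomputed.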

Use vLLM when you care most about:

- broad model coverage

- predictable deployment

- a large ecosystem

- not over-optimizing too early

SGLang: the prefix-reuse specialist

SGLang isn't just "vLLM but different." vLLM can also reuse prefixes, but SGLang's advantage is most visible when cache hits dominate the workload, such as:[5][6]

- multi-turn chat with a long system prompt

- RAG over repeated document context

- few-shot prompts with shared exemplars

- agent loops that repeatedly revisit similar state

Picture an internal helpdesk where every request starts with the same 500-token system prompt that explains the warehouse schema, permissions, and tool format. Only the final account ID changes. SGLang's RadixAttention is designed for workloads where reusable prompt structure can stay cached, so the engine doesn't keep recomputing the same prefix.

The Spheron benchmark used unique prompts, so it doesn't fully capture SGLang's best-case workload. That's exactly why reading only the headline throughput bar chart isn't enough.
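The helpdesk scenario above can be sketched as a longest-prefix lookup over token sequences. This is a deliberately simplified stand-in for RadixAttention: a plain trie rather than a compressed radix tree, with no eviction policy and no real KV tensors. The class names and token IDs are hypothetical.

```python
class PrefixNode:
    def __init__(self):
        self.children = {}  # token id -> PrefixNode

class PrefixTree:
    """Toy token trie; a real radix tree compresses chains and evicts old entries."""

    def __init__(self):
        self.root = PrefixNode()

    def match_and_insert(self, token_ids: list[int]) -> int:
        """Return the length of the longest cached prefix, then cache the full sequence."""
        node, matched = self.root, 0
        for tok in token_ids:
            child = node.children.get(tok)
            if child is None:
                # New branch: nothing below this point has been cached yet.
                child = node.children[tok] = PrefixNode()
            else:
                matched += 1
            node = child
        return matched

tree = PrefixTree()
system = list(range(500))  # stand-in for the 500-token system prompt
print(tree.match_and_insert(system + [9001, 7429]))  # 0: first request computes everything
print(tree.match_and_insert(system + [9001, 8113]))  # 501: only the final token is new
```

After the first request, every later request that shares the system prompt only needs prefill for its differing tail. A unique-prompt benchmark never exercises that behavior.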

Use SGLang when:

- shared prefixes dominate the request mix

- structured generation matters

- your traffic pattern rewards cache reuse

TensorRT-LLM: the throughput-maximizing NVIDIA path

TensorRT-LLM is the most performance-obsessed option in this group. It's designed to squeeze the most out of NVIDIA hardware through compiled engines, optimized kernels, and deployment-time specialization.[2]

That's why it often wins peak-throughput benchmarks.

One important serving feature is in-flight batching. Instead of waiting for every request in a batch to finish before admitting new work, the runtime can remove completed sequences and insert fresh requests while generation is still running. That keeps the GPU busier when users produce different output lengths, which is common in chat and agent workloads.

It also explains the main operational trade:

- stronger peak serving numbers on stable NVIDIA deployments

- more build and artifact management on the compiled path

- a PyTorch-oriented path that lowers setup friction for experiments and shorter-lived deployments, while the compiled engine path remains the one to evaluate when maximum NVIDIA throughput is the point[1][2]
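The benefit of in-flight batching described above shows up even in a toy step counter. The sketch below counts decode steps only. It ignores prefill, memory limits, and real scheduler policy, and the function names are hypothetical rather than TensorRT-LLM APIs.

```python
from collections import deque

def static_batching_steps(output_lens: list[int], slots: int) -> int:
    """Each batch runs until its longest request finishes."""
    return sum(max(output_lens[i:i + slots]) for i in range(0, len(output_lens), slots))

def inflight_batching_steps(output_lens: list[int], slots: int) -> int:
    """Finished requests leave immediately and waiting requests take their slot."""
    waiting = deque(output_lens)
    running = []  # remaining tokens for each active request
    steps = 0
    while waiting or running:
        while waiting and len(running) < slots:
            running.append(waiting.popleft())
        steps += 1  # one decode step yields one token for every running request
        running = [remaining - 1 for remaining in running if remaining > 1]
    return steps

lens = [10, 200, 10, 200]  # mixed short and long answers, in tokens
print(static_batching_steps(lens, slots=2))    # 400
print(inflight_batching_steps(lens, slots=2))  # 220
```

With two slots, static batching spends 400 steps because each short request waits on a long neighbor. Refilling freed slots finishes the same work in 220 steps.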

Use TensorRT-LLM when:

- you serve a stable model for a long time

- you're NVIDIA-only

- enough traffic exists to justify runtime optimization work

If your model mix changes constantly, or you care more about flexibility than absolute tokens per second, the benchmark lead may not be worth the operational cost.

Ollama: the local-development runtime

Ollama isn't trying to win the H100 datacenter benchmark. It's trying to make local LLM usage boring in the best possible way.

It gives you:

- one local daemon

- published model tags

- simple CLI flows

- a clean local API

Ollama also exposes OpenAI-compatible local endpoints now, which makes it easier to reuse client code during prototyping even if you later move the serving layer elsewhere.[7]

Under the hood, Ollama can run local models through backends such as llama.cpp, and its import path supports GGUF model files, which is why it's so useful on Macs, laptops, and consumer GPUs.[8][9][10]
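As a sketch of what the OpenAI-compatible endpoint means for client code, the snippet below builds a chat completion request using only the standard library. It assumes a local Ollama daemon on its default port (11434), and `llama3.2` is a placeholder model tag, not something the article prescribes.

```python
import json
import urllib.request

# Assumes a local Ollama daemon on its default port; adjust if yours differs.
OLLAMA_BASE_URL = "http://localhost:11434/v1"

def build_chat_request(model: str, user_message: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request without sending it."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        f"{OLLAMA_BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def send(request: urllib.request.Request) -> str:
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]

request = build_chat_request("llama3.2", "Summarize PagedAttention in one sentence.")
print(request.full_url)
# print(send(request))  # uncomment once the model runs locally
```

Because the request shape follows the OpenAI chat format, moving this client to another OpenAI-compatible server later should mostly be a matter of changing the base URL and model name.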

Use Ollama when:

- you're prototyping locally

- your primary target is a workstation or laptop

- you want the shortest path from "download model" to "send request"

Quick comparison table

| Engine | Primary Strength | Memory / Scheduling Idea | Best Fit | Watch Out For |
|---|---|---|---|---|
| vLLM | Broad production default | PagedAttention and prefix caching | Stable APIs with mixed open models | Benchmark rows can lag current vLLM paths |
| SGLang | Prefix-heavy agent and chat workloads | RadixAttention and structured execution | Reused system prompts, RAG contexts, agent loops | Unique-prompt benchmarks understate its best case |
| TensorRT-LLM | NVIDIA peak throughput | Compiled engines, optimized kernels, in-flight batching | Stable high-traffic NVIDIA deployments | Build artifacts and compile time |
| Ollama | Local development speed | llama.cpp, GGUF, local daemon | Laptops, prototypes, internal demos | Not built for large multi-tenant serving |

How to actually choose

Benchmark tables are only useful if they change a deployment decision. Pick a runtime only after your workload shape points to it.

Choose vLLM when you want the safest production starting point

For many teams, vLLM is the safest place to start because it balances:

- maturity

- model support

- deployment simplicity

- strong enough performance

If you don't have clear evidence that your workload is prefix-heavy or that raw NVIDIA throughput is the dominant constraint, start here.

Choose SGLang when prefix reuse dominates the workload

If you serve:

- chat products with long shared system prompts

- RAG systems with repeated retrieved context

- agentic systems that repeatedly reuse similar prompt frames

then SGLang deserves a real benchmark in your own environment. vLLM can already reuse prefixes, so SGLang usually earns its keep when shared-prefix hit rate is high enough that its cache design and scheduling move the curve in your workload, not just in theory.[5][6]

Choose TensorRT-LLM when the model is stable and traffic is large

TensorRT-LLM makes the most sense when:

- the model will stay pinned for a while

- you run on NVIDIA hardware only

- a throughput gain translates directly into meaningful GPU savings

This is the engine for organizations that already think in terms of inference artifacts, deployment pipelines, and aggressive hardware tuning.

Choose Ollama when optimizing for developer time

Ollama is the right answer surprisingly often because developer time is expensive too.

If the question is "how do we test this locally today?" the answer is rarely "stand up a TensorRT-LLM artifact pipeline."

The answer is usually:

```bash
ollama pull <model>
ollama run <model>
```

Then move up the stack only when the product and traffic justify it.
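The four rules in this section can be written down as a small helper that names which engine to benchmark first. It is a sketch of this article's heuristics, not a universal policy. The `Workload` fields, the ordering of the checks, and the 0.5 prefix threshold are assumptions you should replace with your own measurements.

```python
from dataclasses import dataclass

@dataclass
class Workload:
    local_only: bool        # laptop or workstation prototype
    nvidia_only: bool       # every serving GPU is NVIDIA
    model_stable: bool      # the model stays pinned for a long time
    high_traffic: bool      # a throughput gain means real GPU savings
    prefix_hit_rate: float  # measured share of requests reusing a shared prefix

def first_engine_to_benchmark(w: Workload, prefix_threshold: float = 0.5) -> str:
    # The threshold is a hypothetical cutoff, not a number from the benchmark.
    if w.local_only:
        return "Ollama"
    if w.prefix_hit_rate >= prefix_threshold:
        return "SGLang"
    if w.nvidia_only and w.model_stable and w.high_traffic:
        return "TensorRT-LLM"
    return "vLLM"

helpdesk = Workload(local_only=False, nvidia_only=True, model_stable=True,
                    high_traffic=False, prefix_hit_rate=0.85)
print(first_engine_to_benchmark(helpdesk))  # SGLang
```

The function returns a starting point for a benchmark, not a verdict. The result still has to survive a test against your own traffic.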

Benchmark checklist before you switch engines

Before replacing a serving runtime, run a benchmark that looks like production traffic. Synthetic throughput is useful, but only if it matches the request mix you will actually serve.

Track these inputs first:

| Dimension | Why it changes the winner |
|---|---|
| Prompt length distribution | Long prompts increase prefill cost and KV-cache pressure |
| Output length distribution | Long generations make decode throughput dominate |
| Shared-prefix hit rate | High reuse can make prefix caching more valuable than raw unique-prompt throughput |
| Concurrency shape | Burst traffic and steady traffic stress schedulers differently |
| Model churn | Frequent model swaps punish runtimes with heavy compile or artifact steps |
| Hardware target | TensorRT-LLM's advantage is tied to NVIDIA specialization |

Then collect at least four metrics:

- TTFT (time to first token): how long the user waits before seeing output.

- TPOT (time per output token): how fast the stream continues after the first token.

- Throughput: total generated tokens per second at target concurrency.

- Operational cost: deployment time, rollback complexity, memory headroom, and on-call failure modes.
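The first three metrics fall out of per-token timestamps, which most streaming clients can record. The sketch below assumes you have logged a send time and the arrival time of each output token for every request. The function names and the two sample requests are hypothetical.

```python
def request_metrics(sent_at: float, token_times: list[float]) -> dict[str, float]:
    """Compute TTFT and TPOT (both in seconds) from one request's token arrival times."""
    ttft = token_times[0] - sent_at
    # TPOT averages the gaps between tokens after the first one.
    gaps = len(token_times) - 1
    tpot = (token_times[-1] - token_times[0]) / gaps if gaps else 0.0
    return {"ttft": ttft, "tpot": tpot}

def aggregate_throughput(requests: list[tuple[float, list[float]]]) -> float:
    """Total generated tokens divided by the wall-clock span of the whole run."""
    start = min(sent for sent, _ in requests)
    end = max(times[-1] for _, times in requests)
    tokens = sum(len(times) for _, times in requests)
    return tokens / (end - start)

# Two hypothetical requests: (send time, arrival time of each output token).
run = [
    (0.0, [0.5, 0.75, 1.0, 1.25, 1.5]),
    (0.0, [1.0, 1.25, 1.5, 1.75, 2.0]),
]
for sent, times in run:
    print(request_metrics(sent, times))
print(aggregate_throughput(run))  # 10 tokens over 2.0 s -> 5.0 tok/s
```

Both sample requests stream at the same TPOT, yet the second user waited twice as long for the first token. Aggregate throughput alone would hide that difference.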

One practical rule: benchmark two workloads, not one. Use a unique-prompt workload to test raw serving efficiency, then use a shared-prefix workload to test chat, RAG, and agent traffic. If those two results point at different engines, your router may need to split traffic rather than standardize on one runtime.
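A minimal way to build the two workloads is to generate one set of fully independent prompts and one set that shares a long prefix. The sketch below uses random token IDs as stand-ins for real text, and the 32,000-entry vocabulary and helper names are assumptions. A real benchmark would sample prompts from production logs instead.

```python
import random

VOCAB_SIZE = 32_000  # assumed vocabulary size; token ids here are placeholders

def unique_prompts(n: int, length: int, seed: int = 0) -> list[list[int]]:
    """Prompts with no intentional overlap, for raw serving efficiency."""
    rng = random.Random(seed)
    return [[rng.randrange(VOCAB_SIZE) for _ in range(length)] for _ in range(n)]

def shared_prefix_prompts(n: int, prefix_len: int, suffix_len: int, seed: int = 0) -> list[list[int]]:
    """Prompts that all start with the same prefix, for cache-reuse behavior."""
    rng = random.Random(seed)
    prefix = [rng.randrange(VOCAB_SIZE) for _ in range(prefix_len)]
    return [prefix + [rng.randrange(VOCAB_SIZE) for _ in range(suffix_len)] for _ in range(n)]

def common_prefix_len(prompts: list[list[int]]) -> int:
    """Number of leading positions where every prompt has the same token."""
    length = 0
    for column in zip(*prompts):
        if len(set(column)) != 1:
            break
        length += 1
    return length

unique = unique_prompts(n=8, length=512)
shared = shared_prefix_prompts(n=8, prefix_len=500, suffix_len=12)
print(common_prefix_len(unique))  # expected to be 0 or very close to it
print(common_prefix_len(shared))  # at least 500
```

Both sets use 512-token prompts to mirror the benchmark's input length, so any difference between the two runs comes from prefix reuse rather than prompt size.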

Common mistake: migrating because one chart has higher tok/s, then discovering rollback, model compilation, or prompt-cache behavior is the real production bottleneck.

Three mistakes teams make when choosing an engine

Mistake 1: Using TensorRT-LLM for a prototype

Symptom: It's been two days and the team still hasn't served a single request. The build pipeline keeps failing on engine compilation.

Cause: TensorRT-LLM's compiled path can take 20 minutes or more per configuration change. In prototyping, you change batch size, sequence length, or model weights constantly.

Fix: Start with Ollama locally. Move to vLLM for staging when you need multi-user testing. Evaluate TensorRT-LLM only when the model is frozen and the traffic justifies the ops cost.

Mistake 2: Confusing throughput with latency

Symptom: The dashboard shows 2,000 tok/s but users complain the chat feels sluggish.

Cause: Throughput is total tokens across all users. Latency is one user's wait time. An engine can have high throughput while individual users wait seconds for the first token.

Fix: Track TTFT and TPOT separately. If your product is interactive, a 50 ms improvement in TTFT can matter more to user experience than a 200 tok/s improvement in aggregate throughput.

Mistake 3: Reading one benchmark and declaring a winner

Symptom: You switched to SGLang because it won a benchmark, but your GPU costs went up.

Cause: The benchmark used a different prompt shape from production. Your workload is mostly unique prompts, but you assumed the benchmark's cache behavior would transfer. Without your own cache-hit data, you can't know whether SGLang's prefix reuse or vLLM's broader ecosystem is the better fit.

Fix: Benchmark two workloads: a unique-prompt set and a shared-prefix set. If they point at different engines, your traffic split, not the engine choice, is the real problem.

Practice: pick an engine for a support chatbot

A team is building an account-query chatbot for support staff. Every query starts with a 500-token system prompt that describes the company's account schema. During peak shifts, forty workers use it simultaneously. In the prompt mix, 90% of queries are that system prompt followed by a specific account lookup like "Find record 7429 in region B."

The team is currently using vLLM and considering a switch.

Question 1: What workload characteristic should they measure first?

Question 2: If the shared-prefix hit rate is 85%, which engine should they benchmark first?

Question 3: If they only serve five workers on a Mac Mini during off-peak hours, what's the fastest way to prototype?

Solution guidance:

- Measure the shared-prefix hit rate and the distribution of output lengths. High prefix reuse points toward prefix-caching benefits; long outputs point toward decode throughput.

- At 85% prefix reuse, SGLang deserves a direct comparison. vLLM also caches prefixes, so the test should compare actual tokens per dollar in your environment, not just theoretical cache efficiency.

- For five workers on a Mac Mini, use Ollama. The two-command setup (`ollama pull` and `ollama run`) gets you to a working prototype in minutes, not hours.
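To see why the hit rate is worth measuring first, estimate how much prefill work prefix caching could remove in this scenario. The sketch treats the hit rate as the share of requests whose 500-token system prompt is already cached, and the 20-token lookup suffix is a hypothetical value. Real savings depend on eviction and cache granularity.

```python
def prefill_tokens_per_request(prefix_tokens: int, suffix_tokens: int, hit_rate: float) -> float:
    """Expected prompt tokens the GPU must actually process for one request."""
    # On a cache hit only the suffix is computed; on a miss the whole prompt is.
    return hit_rate * suffix_tokens + (1 - hit_rate) * (prefix_tokens + suffix_tokens)

baseline = prefill_tokens_per_request(500, 20, hit_rate=0.0)
cached = prefill_tokens_per_request(500, 20, hit_rate=0.85)

print(f"no caching:   {baseline:.0f} prefill tokens per request")
print(f"85% hit rate: {cached:.0f} prefill tokens per request")
print(f"prefill work removed: {1 - cached / baseline:.0%}")
```

Under these assumptions prefill drops from 520 to about 95 tokens per request, roughly an 82% reduction. That saving applies to any engine with effective prefix caching, so the comparison still has to be measured rather than assumed.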

What this benchmark doesn't tell you

Even a good benchmark leaves important questions open:

- How does the engine behave on your model family?

- How does it behave under your exact prompt length mix?

- What shared-prefix hit rate do you actually get in production?

- How much do schema-constrained or grammar-constrained outputs matter for your requests?

- How painful is rollout and rollback?

- How much operational work does the performance gain buy you?

That's why "best engine" content usually disappoints. The correct answer is rarely a single noun. It's usually a pairing of workload and team maturity.

Moving on: from engine choice to serving architecture

By now you should be able to:

- Explain why a 60% GPU utilization figure can still mean customers are waiting

- Describe how PagedAttention turns wasted memory into extra concurrent slots

- Read a benchmark table and list the workload dimensions that could flip the ranking

- Pick a starting engine for a new project without over-engineering the first deployment

The next step in the inference path is understanding how batching strategies and quantization change the same numbers. Once you know why the engine matters, you can tune how it packs work onto the hardware.

References

- SGLang: Efficient Execution of Structured Language Model Programs. Zheng, L., Yin, L., Xie, Z., et al. · 2023 · arXiv:2312.07104

- Efficient Memory Management for Large Language Model Serving with PagedAttention. Kwon, W., et al. · 2023 · SOSP 2023

- vLLM: Easy, Fast, and Cheap LLM Serving with PagedAttention. vLLM Team · 2024

- Automatic Prefix Caching. vLLM · 2026

- TensorRT-LLM: A High-Performance Inference Framework for LLMs. NVIDIA · 2024

- Ollama GitHub Repository. Ollama Team · 2026

- OpenAI compatibility - Ollama. Ollama · 2026

- Importing a Model - Ollama. Ollama · 2026

- llama.cpp: Inference of LLaMA model in pure C/C++. Gerganov, G. · 2023

- vLLM vs TensorRT-LLM vs SGLang: H100 Benchmarks (2026). Spheron · 2026