DeepSeek V4 and the US AI Lab Squeeze

DeepSeek V4 pairs open weights, 1M context, and aggressive pricing with serious agentic coding results. The bigger story is what that does to closed API economics and US lab positioning.

LeetLLM Team · April 27, 2026 · 15 min read

DeepSeek V4 is important for two reasons, and the parameter count is only one of them.

The obvious story is scale. DeepSeek-V4-Pro is a 1.6T-parameter mixture-of-experts model with 49B active parameters per token. DeepSeek-V4-Flash is the smaller 284B-parameter version with 13B active parameters. Both ship with 1M context as the standard window, not a special enterprise toggle.[1][2]

The less obvious story is pressure. DeepSeek is pushing frontier-style capability into an MIT-licensed, open-weight release while also offering a very cheap hosted API. That combination puts stress on the business model US labs have been defending: closed weights, premium API margins, and long-context pricing as a paid advantage.

This doesn't mean every company should self-host V4 tomorrow. It means the default assumption changed again. Open-weight models are no longer just the fallback when you can't afford a closed model. In a growing number of workloads, they're the thing you benchmark first.

What DeepSeek Released

DeepSeek calls this a preview release, but the artifacts are real enough to evaluate today: open weights on Hugging Face, hosted API endpoints, OpenAI-compatible Chat Completions, Anthropic-compatible API access, and explicit setup guides for coding agents.[1][3]

| Model | Total parameters | Active parameters | Context | Intended role |
|---|---|---|---|---|
| DeepSeek-V4-Pro | 1.6T | 49B | 1M | Hard reasoning, agentic coding, long-context work |
| DeepSeek-V4-Flash | 284B | 13B | 1M | Fast chat, routing, summaries, cheaper agents |

The model card says the instruct checkpoints use mixed precision: MoE expert weights are stored in FP4, while most other parameters use FP8. Base models are FP8 mixed.[2] That detail matters because V4 isn't a simple dense model where parameter count translates directly into active compute. Like other MoE systems, it has a large pool of capacity but routes each token through only a subset of experts.

💡 Key insight: Total parameters describe the full model's storage footprint, while active parameters describe the work done per token. V4-Pro is huge to store, but it doesn't run like a dense 1.6T model on every forward pass.

For a first-principles refresher, this is the core idea behind mixture-of-experts architecture: a router sends each token to a small subset of experts, so per-token compute tracks active parameters, not total parameters.
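The ratio is easy to compute from the figures quoted above. The following is a minimal sketch; the helper name is illustrative, and the parameter counts are the ones reported in the release materials.

```python
# Parameter counts as quoted in the release materials (in billions).
MODELS = {
    "DeepSeek-V4-Pro": {"total_b": 1600, "active_b": 49},
    "DeepSeek-V4-Flash": {"total_b": 284, "active_b": 13},
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Share of the stored parameters that participate in one token's forward pass."""
    return active_b / total_b


for name, p in MODELS.items():
    frac = active_fraction(p["total_b"], p["active_b"])
    print(f"{name}: {frac:.1%} of parameters active per token")
# DeepSeek-V4-Pro: 3.1% of parameters active per token
# DeepSeek-V4-Flash: 4.6% of parameters active per token
```

Both models touch only a few percent of their weights per token, which is why storage cost and per-token compute have to be budgeted separately.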

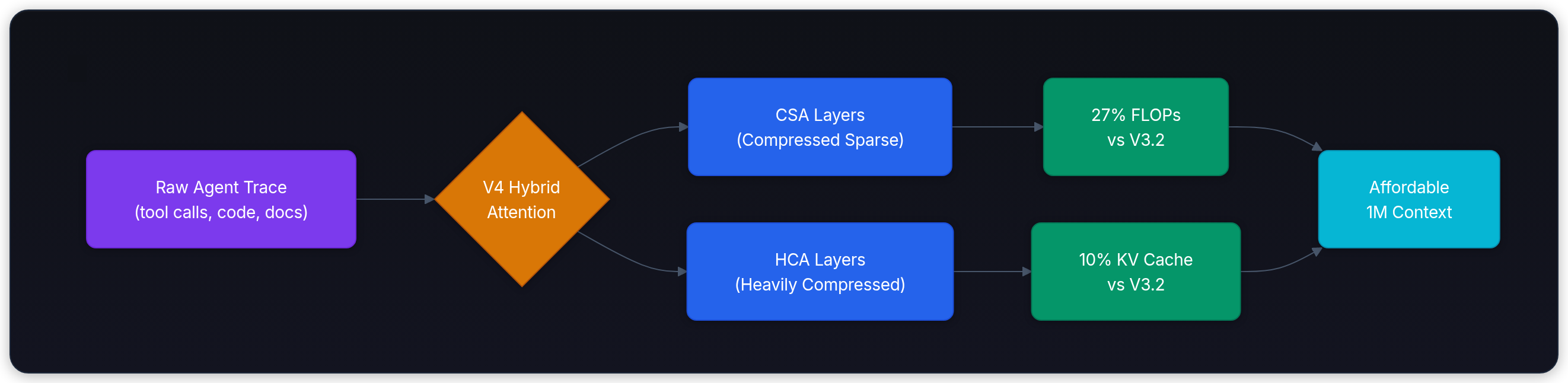

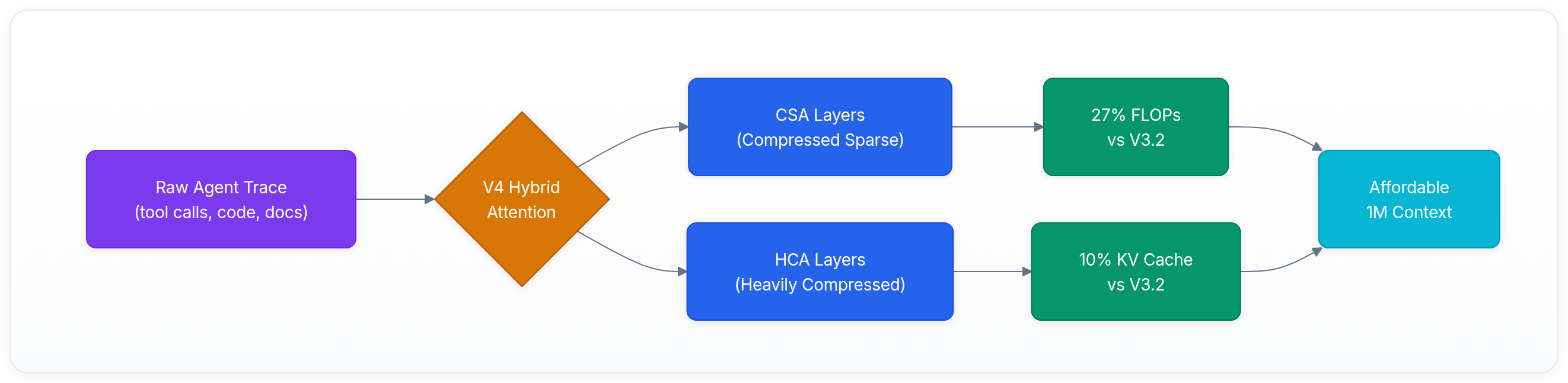

The Architecture Bet: Make 1M Context Affordable

A million-token context window is only useful if the model can afford to use it.

Standard attention gets painful because every new token has to attend over a growing history, and the server has to keep a KV cache for that history. In long-running agent work, that history can include instructions, code files, command outputs, tool results, stack traces, retrieved documents, and previous reasoning traces. The context window may be 1M tokens, but the cost of filling and reusing it can dominate the system.
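To see why the cache can dominate, here is a rough sizing sketch for a conventional, uncompressed attention cache. The layer count, KV head count, and head dimension are hypothetical placeholders chosen for illustration. They are not V4's configuration, and V4's compressed attention design would not follow this formula.

```python
def kv_cache_bytes(
    tokens: int,
    layers: int,
    kv_heads: int,
    head_dim: int,
    bytes_per_value: int = 2,  # 2 bytes for FP16/BF16
) -> int:
    """Bytes needed to cache keys and values for one sequence.

    Standard attention stores one key and one value vector per token,
    per layer, per KV head, hence the leading factor of 2.
    """
    return 2 * tokens * layers * kv_heads * head_dim * bytes_per_value


# Hypothetical model shape, chosen only for illustration.
LAYERS, KV_HEADS, HEAD_DIM = 60, 8, 128

for tokens in (32_000, 384_000, 1_000_000):
    gib = kv_cache_bytes(tokens, LAYERS, KV_HEADS, HEAD_DIM) / 2**30
    print(f"{tokens:>9,} tokens -> {gib:,.1f} GiB per sequence")
```

The cache grows linearly with history length, so under this formula a sequence at 1M tokens needs about 31 times the memory of the same sequence at 32K, before any batching across users.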

DeepSeek V4 attacks that cost directly. The release note describes the approach as token-wise compression plus DeepSeek Sparse Attention, while the model card describes a broader hybrid attention design built from Compressed Sparse Attention (CSA) and Heavily Compressed Attention (HCA). The headline claim is that V4-Pro at 1M context uses 27% of DeepSeek-V3.2's single-token inference FLOPs and 10% of its KV cache.[1][2]

That's the technical center of the release.

The world already had long-context models. The hard part is making long context cheap enough for agents that keep running. A code agent can burn hundreds of tool turns. A research agent can keep appending documents and intermediate notes. A support agent can carry a long account history. If KV cache memory is the bottleneck, the product falls back to truncation, summarization, retrieval, or expensive hosted context.

V4's pitch is different: compress the history enough that a larger part of the raw working trace can stay in the model's context.

Agentic Coding Is the Showcase

DeepSeek is aiming V4 directly at coding agents. The official release claims open-source state of the art in agentic coding benchmarks and lists integrations with Claude Code, OpenClaw, and OpenCode.[1][3]

The model card gives a more useful view because it breaks out the benchmark table. V4-Pro-Max reports 80.6% on SWE Verified, 67.9 on Terminal Bench 2.0, and 73.6% on MCPAtlas Public. V4-Flash-Max is close on several agent tasks, with 79.0% on SWE Verified and 69.0% on MCPAtlas.[2]

Here's how V4-Pro-Max stacks up against closed frontier models on the key agentic benchmarks from the model card:

| Benchmark | Opus 4.6 Max | GPT-5.4 xHigh | Gemini 3.1 Pro | DS-V4-Pro Max |
|---|---|---|---|---|
| SWE Verified (%) | 80.8 | - | 80.6 | 80.6 |
| Terminal Bench 2.0 | 65.4 | 75.1 | 68.5 | 67.9 |
| MCPAtlas Public (%) | 73.8 | 67.2 | 69.2 | 73.6 |
| SWE-Pro (%) | 57.3 | 57.7 | 54.2 | 55.4 |
| LiveCodeBench (%) | 88.8 | - | 91.7 | 93.5 |

Read those numbers carefully. They come from the release materials, so they should be treated as claims to reproduce in your own harness, not as permanent truth. Still, the direction is hard to ignore: the open-weight model is now close enough that the evaluation question becomes workload-specific.

⚠️ Common mistake: Don't take self-reported benchmarks at face value. Different evaluation harnesses, agent scaffolding, and retry strategies can shift results significantly. Always validate against your own workload before making routing decisions.

For a coding platform, the practical question is no longer only:

Which closed model is best?

It's now:

Which requests need the expensive closed model, which can go to V4-Pro, and which should be routed to V4-Flash?

That routing question is where cost engineering starts to look like product architecture.

The API Migration Is Simple, but the Deadline Is Real

DeepSeek's hosted API is available now. The release says users can keep the same base_url and switch the model name to deepseek-v4-pro or deepseek-v4-flash. It also supports OpenAI Chat Completions and Anthropic API formats.[1]
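As a minimal sketch of what that switch looks like, the snippet below builds an OpenAI-style Chat Completions request using only the Python standard library. The base URL and the `/chat/completions` path are assumptions based on DeepSeek's existing OpenAI-compatible API, and the `DEEPSEEK_API_KEY` environment variable name is hypothetical. Check the current API docs before relying on any of them.

```python
import json
import os
import urllib.request

# Assumption: DeepSeek's OpenAI-compatible base URL. Verify against the API docs.
BASE_URL = "https://api.deepseek.com"


def build_chat_request(model: str, prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style Chat Completions request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> dict:
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request, timeout=60) as response:
        return json.load(response)


if __name__ == "__main__":
    key = os.environ.get("DEEPSEEK_API_KEY")  # hypothetical env var name
    if key:
        req = build_chat_request("deepseek-v4-flash", "Say hello.", key)
        reply = send(req)
        print(reply["choices"][0]["message"]["content"])
```

Because only the `model` field changes between lanes, the same request builder can serve both deepseek-v4-pro and deepseek-v4-flash.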

There's one date to put on the migration calendar: deepseek-chat and deepseek-reasoner retire after July 24, 2026 at 15:59 UTC. DeepSeek says those names currently route to V4-Flash non-thinking and thinking modes, but they won't remain accessible after the retirement date.[1]

For teams already using DeepSeek as a cheap reasoning lane, this isn't a cosmetic rename. Update model IDs, retest thinking mode behavior, and check any client code that assumes deepseek-reasoner is a stable model string.

🎯 Production tip: Pin your model IDs explicitly (e.g. deepseek-v4-flash instead of deepseek-chat). Alias-based routing that depends on generic names will break silently when the deprecation hits.
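One way to audit a codebase for this is a small helper that flags legacy names and counts down to the cutoff. This is a sketch, not an official migration tool: the function names are illustrative, and it does not cover how thinking mode is requested on the new model ID, which the API docs should confirm.

```python
from datetime import datetime, timezone

# Retirement cutoff quoted in the release note.
LEGACY_RETIREMENT = datetime(2026, 7, 24, 15, 59, tzinfo=timezone.utc)

# Per the release note, both legacy names currently route to V4-Flash
# (non-thinking and thinking modes respectively). Selecting thinking mode
# on the pinned ID is out of scope here; check the API docs.
LEGACY_TO_PINNED = {
    "deepseek-chat": "deepseek-v4-flash",
    "deepseek-reasoner": "deepseek-v4-flash",
}


def pin_model_id(model: str) -> str:
    """Return an explicit V4 model ID, translating legacy aliases."""
    return LEGACY_TO_PINNED.get(model, model)


def find_legacy_ids(configured: list[str]) -> list[str]:
    """List configured model IDs that will stop working after the cutoff."""
    return [m for m in configured if m in LEGACY_TO_PINNED]


def days_until_retirement(now: datetime) -> int:
    """Whole days left before the legacy names retire (negative once past)."""
    return (LEGACY_RETIREMENT - now).days
```

Running `find_legacy_ids` over the model strings in a service's config gives a concrete list to fix, and `days_until_retirement` can feed a CI warning as the date approaches.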

Why US Labs Should Care

V4 lands in a sensitive spot for US AI labs because it hits four pressure points at once.

1. The dominance story keeps getting weaker

The old story was simple: US labs had the frontier models, while open models were useful but clearly behind. DeepSeek keeps making that story harder to defend.

V4-Pro's official benchmark table places it near closed frontier systems on several coding and agentic tasks, and its long-context design isn't just a copied feature checklist.[2] The release is also openly framed around cost-effective 1M context, which is exactly where closed APIs have been able to charge a premium.

This doesn't mean DeepSeek beats every US model. It doesn't. The model card shows cases where Gemini, Claude, OpenAI, Kimi, or GLM lead. The important point is narrower: a non-US lab is repeatedly producing open-weight releases that force frontier comparisons. That changes the negotiation between developers and API vendors.

2. Open weights pressure closed pricing

Closed models still have real advantages: hosted reliability, safety work, multimodal product polish, enterprise controls, and fast access to the newest frontier releases. But open weights put a ceiling on what customers will tolerate for ordinary workloads.

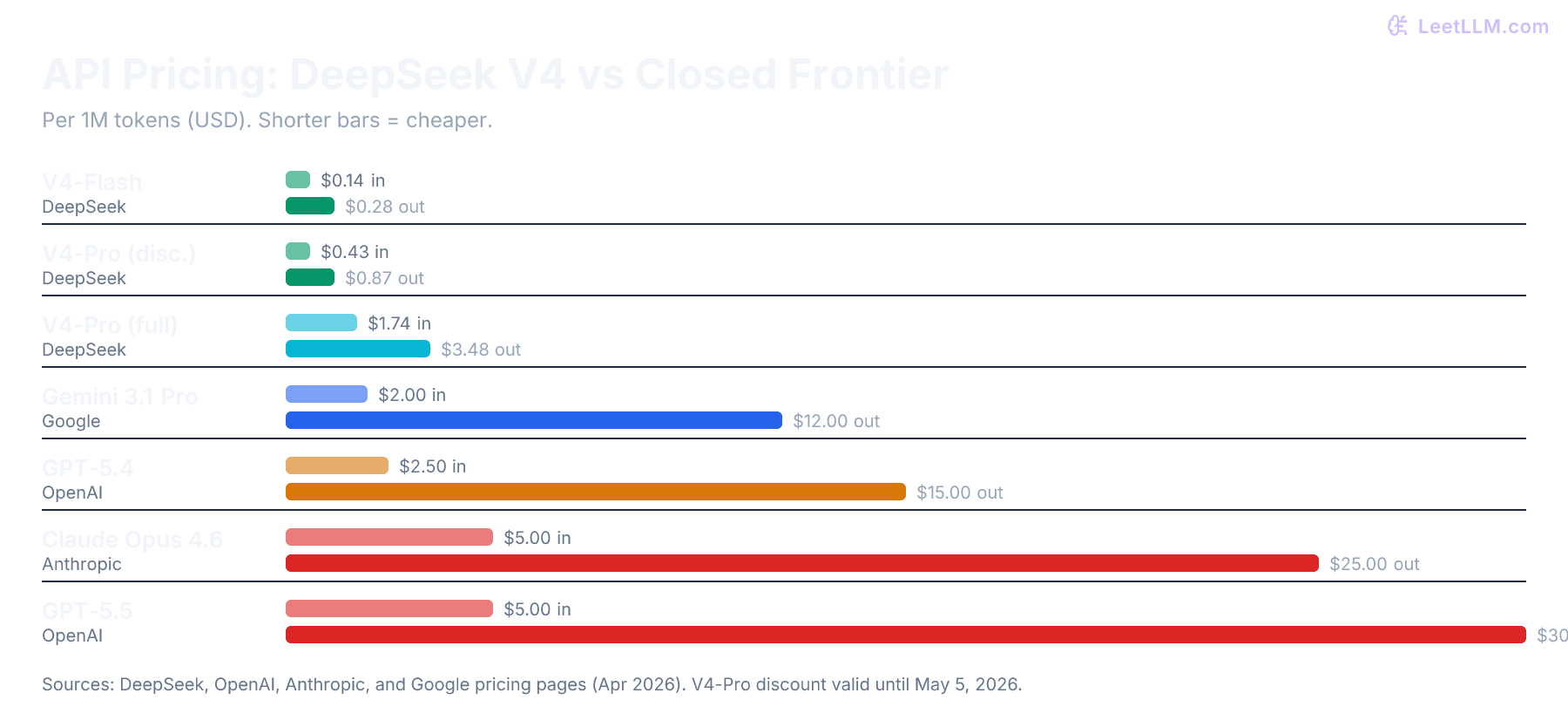

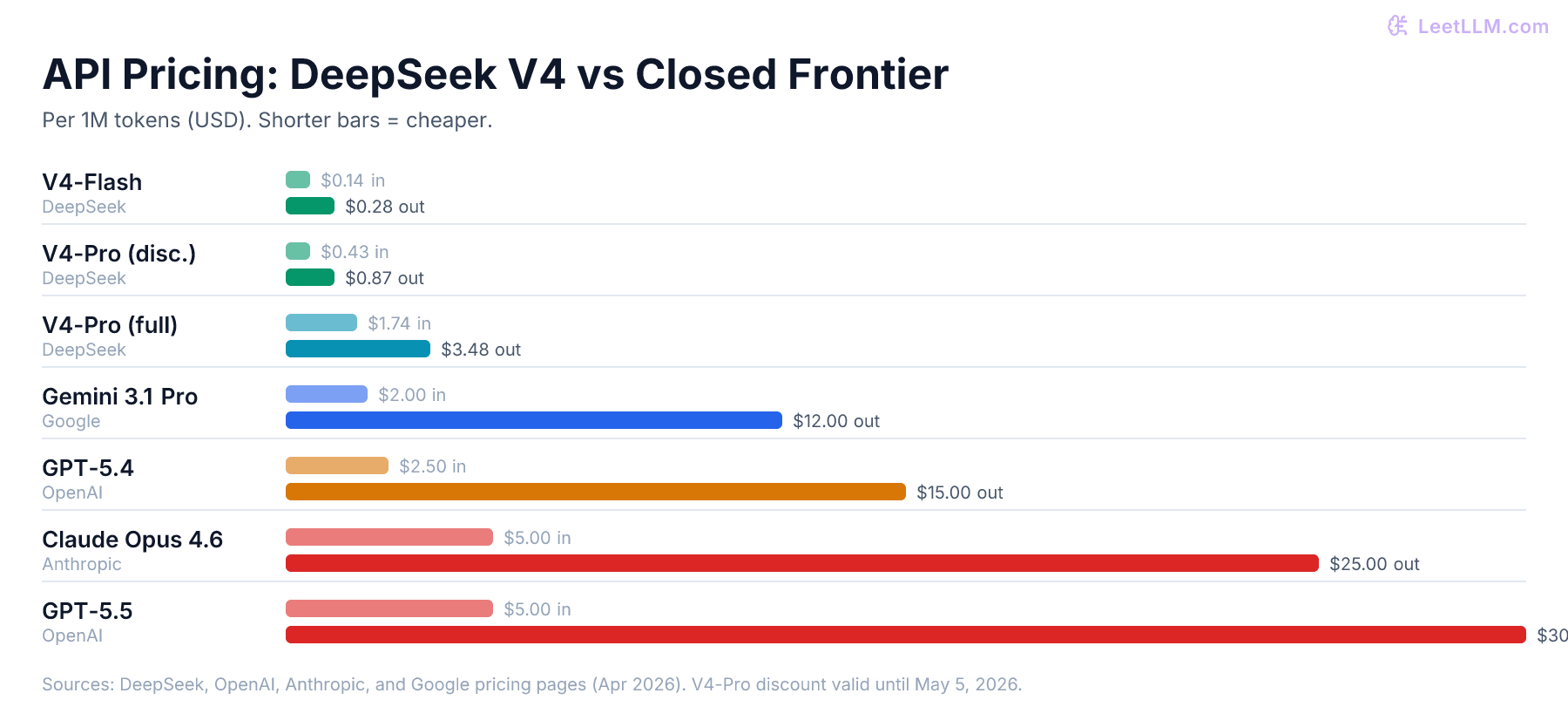

DeepSeek's API page lists V4-Flash at $0.14 per 1M cache-miss input tokens and $0.28 per 1M output tokens. V4-Pro is discounted at launch to $0.435 input and $0.87 output per 1M tokens, with a stated non-discount price of $1.74 input and $3.48 output. Cache-hit input is far cheaper for both models.[4]

Compare that with current premium hosted pricing. OpenAI lists GPT-5.4 standard pricing at $2.50 input and $15.00 output per 1M tokens, while GPT-5.5 standard pricing is $5.00 input and $30.00 output.[5] Anthropic lists Claude Opus 4.6 at $5 input and $25 output per 1M tokens.[6] Google lists Gemini 3.1 Pro paid input at $2.00 to $4.00 per 1M tokens depending on prompt size, with output at $12.00 to $18.00.[7]

| Provider | Model | Input / 1M tokens | Output / 1M tokens |
|---|---|---|---|
| DeepSeek | V4-Flash | $0.14 | $0.28 |
| DeepSeek | V4-Pro (discounted) | $0.435 | $0.87 |
| DeepSeek | V4-Pro (full price) | $1.74 | $3.48 |
| Google | Gemini 3.1 Pro (≤200K) | $2.00 | $12.00 |
| Google | Gemini 3.1 Pro (>200K) | $4.00 | $18.00 |
| OpenAI | GPT-5.4 | $2.50 | $15.00 |
| Anthropic | Claude Opus 4.6 | $5.00 | $25.00 |
| OpenAI | GPT-5.5 | $5.00 | $30.00 |

That isn't an apples-to-apples quality comparison. It's a margin comparison. For teams that still think in GPT-4o-era defaults, the lesson is to reprice the workload against today's premium API lanes rather than assume the old managed-model baseline still makes sense. If V4-Flash is good enough for routing, summarization, codebase Q&A, or low-risk agent steps, the expensive model needs to justify each escalation.
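To reprice a workload, multiply token volumes by the list prices. The sketch below uses the prices from the table above; the monthly token volumes are a hypothetical example, and the calculation ignores cache-hit discounts, batch pricing, and long-prompt tiers.

```python
# USD per 1M tokens (input, output), copied from the pricing table.
# DeepSeek input prices are cache-miss rates; Gemini uses the <=200K tier.
PRICES = {
    "V4-Flash": (0.14, 0.28),
    "V4-Pro (discounted)": (0.435, 0.87),
    "V4-Pro (full price)": (1.74, 3.48),
    "Gemini 3.1 Pro": (2.00, 12.00),
    "GPT-5.4": (2.50, 15.00),
    "Claude Opus 4.6": (5.00, 25.00),
    "GPT-5.5": (5.00, 30.00),
}


def workload_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Dollar cost of a workload at list price, ignoring caching and batch discounts."""
    in_price, out_price = PRICES[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


# Hypothetical monthly workload: 2B input tokens, 200M output tokens.
INPUT_TOKENS, OUTPUT_TOKENS = 2_000_000_000, 200_000_000

for model in PRICES:
    cost = workload_cost(model, INPUT_TOKENS, OUTPUT_TOKENS)
    print(f"{model:<22} ${cost:>10,.2f}")
```

Swapping in your own token counts turns the table into a per-workload bill, which is the number an escalation to a premium model has to justify.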

3. Cheap 1M context changes product design

Long context used to be something you saved for premium flows because it was expensive and operationally awkward. V4 makes a different design pattern more plausible:

- Put the whole repository, transcript, or document set into context when the task benefits from locality.

- Use retrieval and compaction as optimization tools, not as mandatory workarounds.

- Route routine steps to Flash and escalate hard steps to Pro or a closed model.

That matters for AI engineering because context policy becomes a product decision. You can ask whether a user should pay for a more complete working set, whether cached context should be reused across sessions, and whether the agent should carry raw tool traces instead of summarizing them too aggressively.
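A context policy can start as a simple budget check. The sketch below is one possible shape, under loud assumptions: the 4-characters-per-token ratio is a rough heuristic and not a tokenizer measurement, the reserved headroom is a hypothetical default, and the names are illustrative.

```python
from dataclasses import dataclass

CONTEXT_WINDOW = 1_000_000   # V4 context window, in tokens
CHARS_PER_TOKEN = 4          # rough heuristic, not a tokenizer measurement


@dataclass
class ContextPlan:
    strategy: str          # "full_context" or "retrieve"
    estimated_tokens: int


def plan_context(
    file_sizes_chars: list[int],
    reserved_tokens: int = 100_000,  # hypothetical headroom for instructions, tool output, and the reply
) -> ContextPlan:
    """Choose between loading the whole working set and falling back to retrieval."""
    estimated = sum(file_sizes_chars) // CHARS_PER_TOKEN
    budget = CONTEXT_WINDOW - reserved_tokens
    strategy = "full_context" if estimated <= budget else "retrieve"
    return ContextPlan(strategy=strategy, estimated_tokens=estimated)
```

For example, `plan_context([400_000, 1_200_000])` estimates 400,000 tokens and chooses `full_context`, while a 4,000,000-character working set exceeds the budget and falls back to `retrieve`. A production version would count tokens with the model's real tokenizer and weigh cost as well as fit.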

The LeetLLM deep dive on million-token context windows covers the broader point: context length isn't just a number in a model card. It changes memory, latency, evaluation, and cost.

4. Hardware efficiency is a strategic weapon

US labs still have massive training clusters. That matters. But V4 points at a different kind of advantage: getting more useful inference out of the same serving fleet.

NVIDIA summarizes V4's architecture as reducing per-token inference FLOPs and KV cache memory burden relative to DeepSeek-V3.2, and reports early GB200 NVL72 tests for V4-Pro at more than 150 tokens per second per user.[8] SGLang and vLLM both published Day 0 recipes for serving V4 on Hopper and Blackwell-class systems.[9][10]

This is why sparse attention matters commercially. If a lab can reduce memory pressure and keep long-context agents on fewer GPUs, it can sell lower prices, serve more users, or spend the same budget on harder tasks.

What It Takes to Run DeepSeek V4 Yourself

The open-weight part is real, but it doesn't make V4-Pro a laptop model.

There are two separate hardware questions:

- Can you load the weights? Total parameters drive storage and VRAM pressure.

- Can you serve useful traffic? Active parameters, KV cache, batching, networking, and the context length drive throughput and latency.

The official Hugging Face page lists V4-Pro instruct with a model size of 862B in the file metadata, and V4-Flash instruct with 158B. The model card says the instruct weights use FP4 for MoE experts and FP8 for most other parameters.[2] That explains why the storage footprint is much lower than a naive FP16 calculation, but it's still large.
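The arithmetic behind that comparison can be sketched by bounding the weight footprint at uniform precisions. This is only a bound: the real checkpoint mixes FP4 and FP8, and the split between expert and non-expert parameters is not stated here, so the actual size should fall roughly between the all-FP4 and all-FP8 rows. Quantization scales, KV cache, and activations are excluded.

```python
BYTES_PER_PARAM = {"FP16": 2.0, "FP8": 1.0, "FP4": 0.5}


def weight_footprint_tb(total_params: float, precision: str) -> float:
    """Weight storage in terabytes (10**12 bytes) if every parameter used one precision."""
    return total_params * BYTES_PER_PARAM[precision] / 1e12


V4_PRO_PARAMS = 1.6e12    # total parameters, from the model card
V4_FLASH_PARAMS = 284e9

for name, params in (("V4-Pro", V4_PRO_PARAMS), ("V4-Flash", V4_FLASH_PARAMS)):
    for precision in ("FP16", "FP8", "FP4"):
        tb = weight_footprint_tb(params, precision)
        print(f"{name:<9} all-{precision:<5} {tb:.2f} TB")
```

For V4-Pro, a naive FP16 copy would be 3.2 TB, against 1.6 TB at all-FP8 and 0.8 TB at all-FP4. Even the lower bound is far beyond a single GPU's memory.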

| Deployment target | Practical read |
|---|---|
| V4-Flash experimentation | Datacenter workstation or single server class, not a 24 GB gaming GPU |
| V4-Flash production | SGLang lists single-node serving on 4 GPUs for B200, GB300, or H200 platforms[9] |
| V4-Pro experimentation | Multi-GPU datacenter hardware with fast interconnect |
| V4-Pro production | SGLang lists B200 8 GPU, GB300 4 GPU, or H200 16 GPU across 2 nodes[9] |
| V4-Pro with vLLM | vLLM lists B300 8 GPU, H200 8 GPU with context capped at 800K, or two GB200 NVL4 trays for 8 GPUs total[10] |

The exact recipe depends on runtime, checkpoint layout, context length, and parallelism strategy. The important point is that V4-Flash is the realistic self-hosting candidate for many infrastructure teams. V4-Pro is a serious cluster deployment.

This is also where V4 differs from the Llama comparison people usually make. Llama 4 Scout is 109B total, 17B active, and NVIDIA says an INT4-optimized Scout can run on a single H100. Llama 4 Maverick is 400B total, 17B active, with a 1M context window.[11] Llama 3.1 405B is a dense 405B model with 128K context.[12]

DeepSeek-V4-Flash is larger than Scout in total parameters but has only 13B active parameters. V4-Pro is in another storage class entirely. Its advantage isn't that it's easy to self-host. Its advantage is that it gives you open weights and frontier-adjacent agent results if you have the infrastructure to run it.

For closed models, the comparison is different. You don't get a self-hosting plan. You get an API bill, rate limits, enterprise terms, and whatever context and caching behavior the vendor exposes. That's often the right tradeoff, especially for teams without inference engineers. But V4 gives larger teams another option.

Quantization Helps, but It Doesn't Change the Category

V4 already ships in an aggressive mixed-precision format for instruct models. The model card describes FP4 MoE expert weights and FP8 for most other parameters, while the local inference README says you can switch experts to FP8 by changing config and conversion options.[2]

That means the usual local-model intuition needs care. With a Llama 70B dense model, moving from FP16 to 4-bit can be the difference between a server GPU and a high-end desktop. With V4, the official instruct checkpoint has already taken a big precision step. Community GGUF or lower-bit conversions may appear, but quality, tool-calling behavior, and 1M-context performance need validation before production use.

A good operator stance is:

- Use DeepSeek's API first to evaluate quality and routing value.

- Use V4-Flash for self-hosting pilots if your workload has steady volume.

- Treat V4-Pro self-hosting as a cluster project, not a developer workstation project.

- Benchmark at the context length you actually need. A model that fits at 32K may not fit your 384K or 1M agent workload.

For serving fundamentals, KV cache and PagedAttention, continuous batching, and LLM cost engineering are the concepts to understand before you buy GPUs.

Inference Cost Is Not Training Cost

One common mistake is to mix training economics and inference economics.

Training creates the model. Inference runs the model for users. The capital requirements, optimization targets, and accounting are different.

DeepSeek says V4 was pretrained on more than 32T tokens.[2] That's a lab-scale training project. Meta's Llama 3.1 model card reported 30.84M GPU hours for the 405B model's training run, which gives a useful sense of how expensive frontier-scale pretraining can get.[12]

Most companies aren't choosing whether to train DeepSeek V4. They're choosing whether to:

- call DeepSeek's hosted API

- self-host V4-Flash

- self-host V4-Pro

- keep using closed US frontier APIs

- route across all of the above

That's an inference decision. The cost model should focus on tokens, cache hit rate, utilization, latency target, staff time, and GPU reservations.

🔬 Research insight: DeepSeek's V4-Pro launch discount (75% off, valid until May 5, 2026) makes the hosted API extremely cheap for evaluation. At $0.435/M input, teams can run serious agentic benchmarks against their own codebases before committing to infrastructure.

For low or bursty traffic, the API is usually the rational starting point. For steady, high-volume traffic with privacy or customization needs, self-hosting can make sense. The break-even point depends less on the model's benchmark score and more on whether your GPUs stay busy.
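The utilization effect can be made concrete with a back-of-the-envelope calculation. Every input below is a hypothetical placeholder, not a measured figure: the GPU rental price, GPU count, and aggregate throughput should be replaced with your own numbers. The sketch also counts only generated tokens and ignores staff time, input processing, and networking.

```python
def self_host_cost_per_million(
    gpu_hourly_usd: float,
    gpus: int,
    tokens_per_second: float,
    utilization: float,
) -> float:
    """Effective USD per 1M generated tokens for a self-hosted deployment.

    tokens_per_second is aggregate throughput at full load; utilization is
    the fraction of each hour the fleet spends serving real traffic.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    hourly_cost = gpu_hourly_usd * gpus
    tokens_per_hour = tokens_per_second * 3600 * utilization
    return hourly_cost / tokens_per_hour * 1_000_000


# All inputs below are hypothetical placeholders, not measured figures.
GPU_HOURLY_USD = 3.00      # rental price per GPU-hour
GPUS = 4
TOKENS_PER_SECOND = 5_000  # aggregate output throughput at full load
API_OUTPUT_PRICE = 0.28    # V4-Flash output price per 1M tokens, from the pricing table

for utilization in (0.05, 0.25, 0.75):
    cost = self_host_cost_per_million(GPU_HOURLY_USD, GPUS, TOKENS_PER_SECOND, utilization)
    verdict = "cheaper than API" if cost < API_OUTPUT_PRICE else "API is cheaper"
    print(f"utilization {utilization:.0%}: ${cost:.2f} per 1M tokens ({verdict})")
```

The shape matters more than the placeholder values: cost per token scales inversely with utilization, so halving how busy the GPUs are doubles the effective price.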

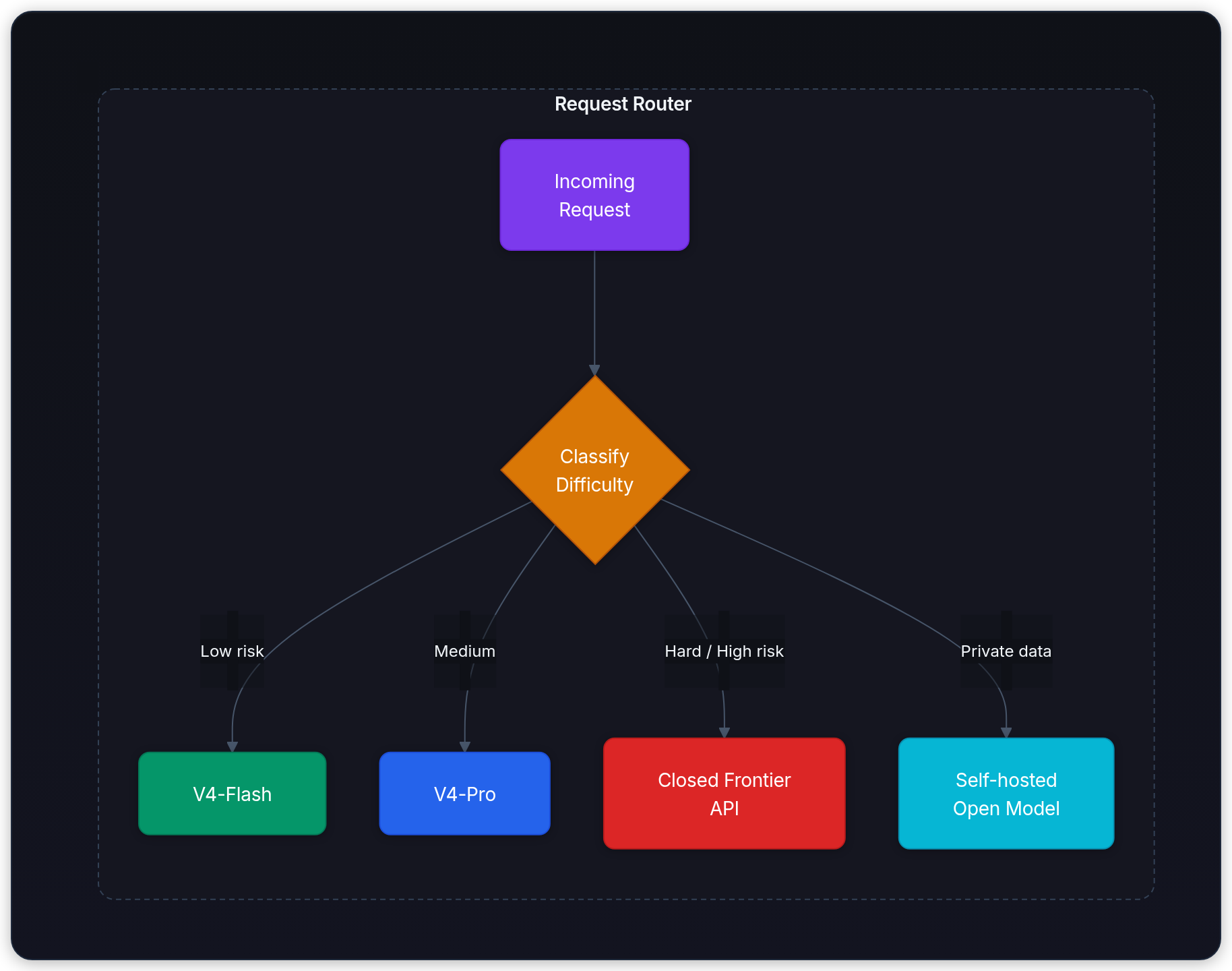

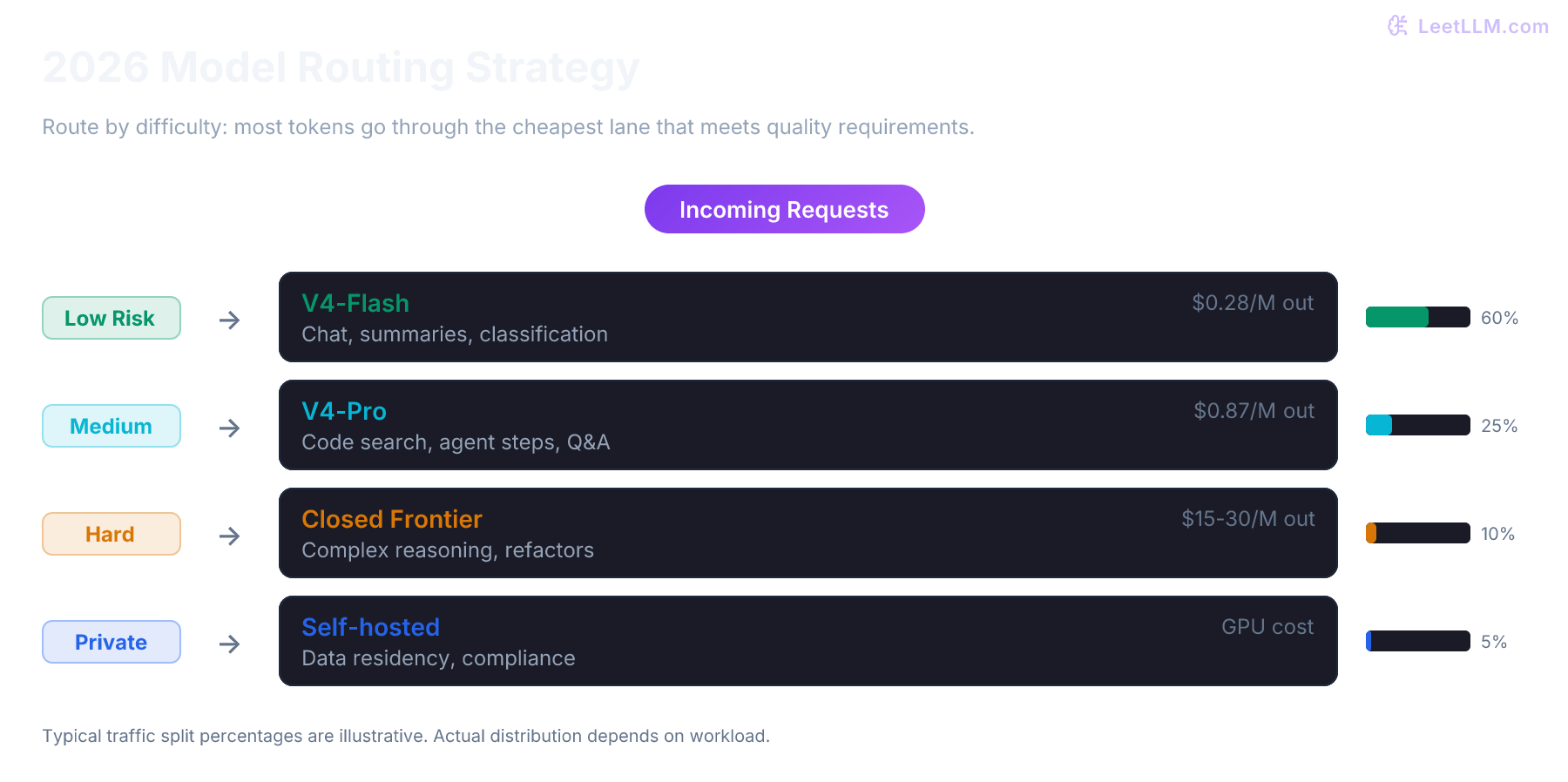

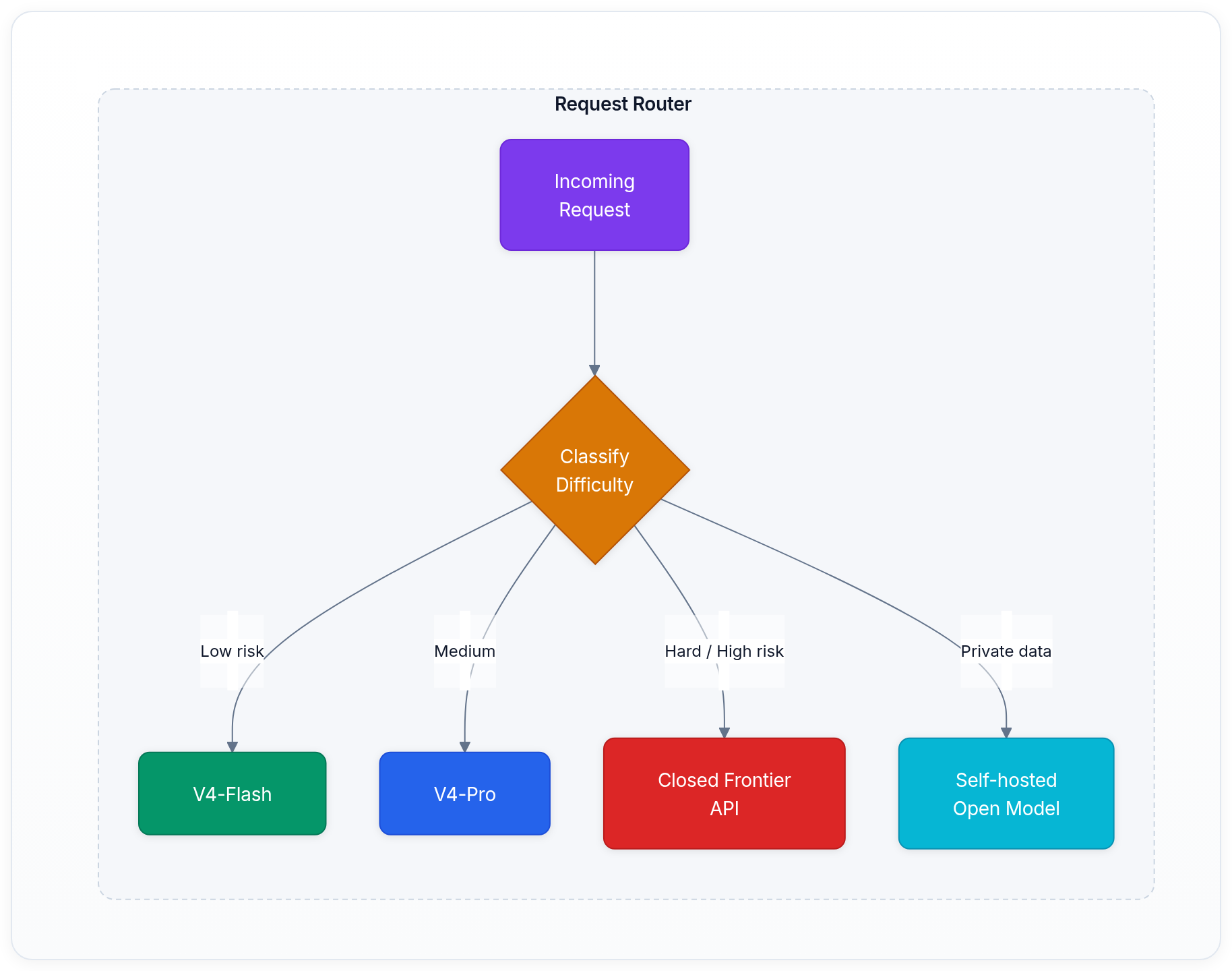

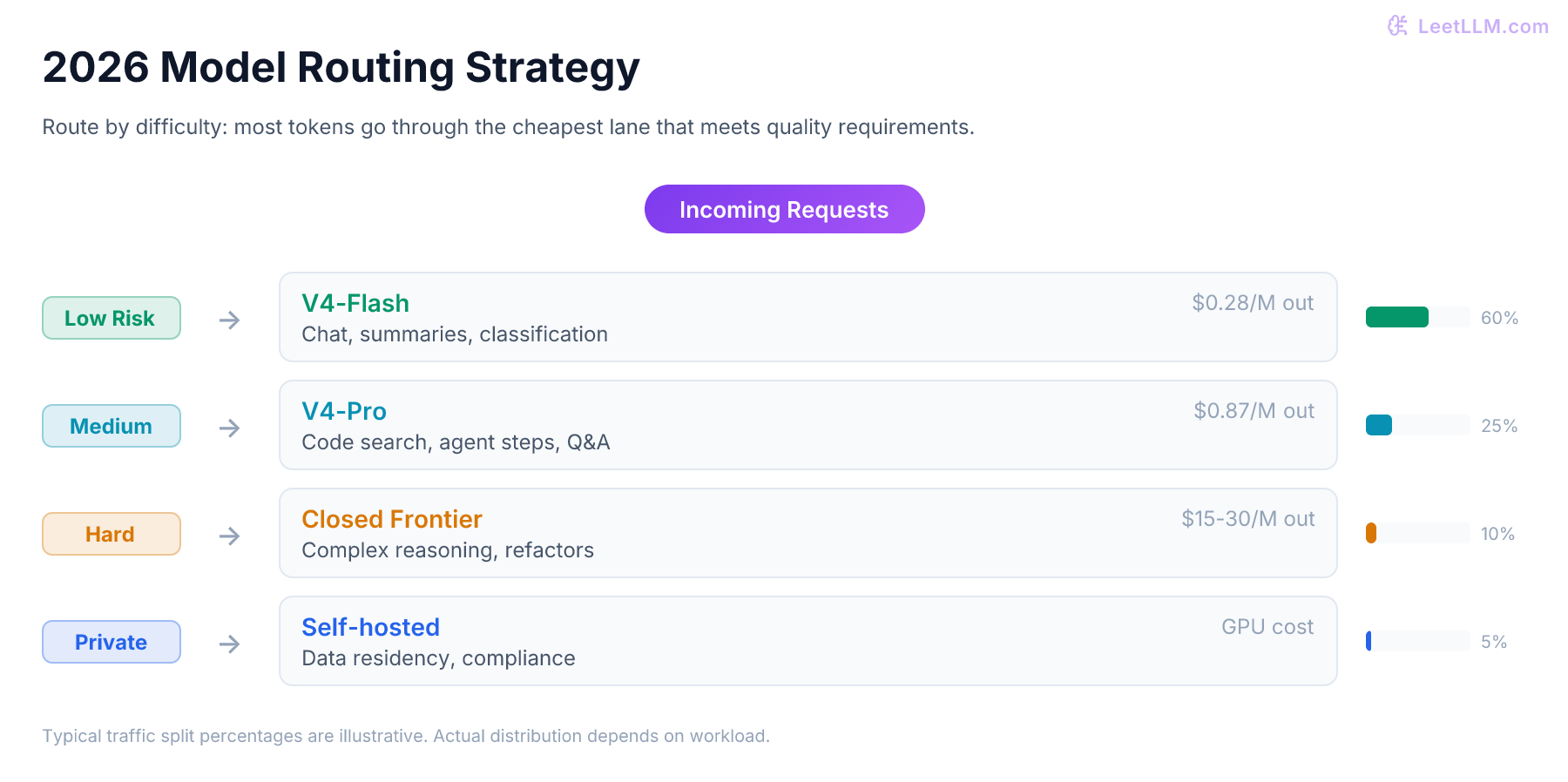

The Real Impact: Routing Becomes the Default

The strongest production response to DeepSeek V4 isn't ideological.

It's routing.

A practical 2026 model router might look like this:

| Request type | Default model lane |
|---|---|
| Low-risk chat, summaries, classification | V4-Flash or another efficient open model |
| Codebase search and medium agent steps | V4-Flash with long context |
| Hard coding tasks, large refactors, multi-step agents | V4-Pro |
| Highest-risk reasoning, multimodal, or enterprise-policy flows | Closed frontier API |
| Private data residency workloads | Self-hosted open-weight model |
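The lanes in the table can be expressed as a small rule-based router. This is a sketch under assumptions: the request categories, field names, and the two placeholder lane strings are illustrative, and a real router would likely add cost budgets, fallbacks, and evaluation data.

```python
from dataclasses import dataclass
from enum import Enum


class Lane(Enum):
    FLASH = "deepseek-v4-flash"
    PRO = "deepseek-v4-pro"
    CLOSED = "closed-frontier-api"      # placeholder, not a real model ID
    SELF_HOSTED = "self-hosted-open"    # placeholder, not a real model ID


@dataclass
class Request:
    kind: str                  # e.g. "chat", "summary", "code_search", "refactor"
    high_risk: bool = False
    multimodal: bool = False
    data_must_stay_private: bool = False


EASY = {"chat", "summary", "classification", "code_search", "agent_step"}
HARD = {"hard_coding", "refactor", "multi_step_agent"}


def route(req: Request) -> Lane:
    """Pick a default lane, checking the most restrictive constraints first."""
    if req.data_must_stay_private:
        return Lane.SELF_HOSTED
    if req.high_risk or req.multimodal:
        return Lane.CLOSED
    if req.kind in HARD:
        return Lane.PRO
    if req.kind in EASY:
        return Lane.FLASH
    return Lane.PRO  # unknown request types get the stronger open model
```

Checking data residency before anything else is a deliberate ordering: a privacy constraint should override a quality preference, not the other way around.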

That's bad news for any lab whose business assumes every token should go through a premium closed model. The best closed models will still command premium pricing for hard tasks. But V4 makes it easier to avoid paying premium prices for easy and medium tasks.

Bottom Line

DeepSeek V4 isn't the end of US AI leadership. It's a sharp reminder that leadership is no longer measured only by who has the biggest closed model.

The important pieces are practical:

- V4-Pro gives open-weight users a serious agentic coding model with 1M context.

- V4-Flash gives builders a cheaper long-context lane that can cover many routine agent steps.

- The API is available now and works with OpenAI and Anthropic-style clients.

- The old deepseek-chat and deepseek-reasoner names retire after July 24, 2026.

- Self-hosting V4-Pro is a datacenter project, while V4-Flash is the more realistic first deployment target.

For US labs, the warning is simple: if a competitor can release open weights, support 1M context, integrate with coding agents, and sell the hosted version at a fraction of premium API pricing, closed-source models need to win on more than habit. They need to win on capability, reliability, product polish, enterprise trust, and total cost for the specific workload.

That's a much harder market than the one closed labs enjoyed two years ago.

References

[1] DeepSeek V4 Preview Release. DeepSeek · 2026

[2] DeepSeek-V4: Towards Highly Efficient Million-Token Context Intelligence. DeepSeek-AI · 2026

[3] Integrate with AI Tools. DeepSeek · 2026

[4] Models and Pricing. DeepSeek · 2026

[5] OpenAI API Pricing. OpenAI · 2026

[6] Anthropic Model Pricing. Anthropic · 2026

[7] Gemini API Pricing. Google · 2026

[8] Build with DeepSeek V4 Using NVIDIA Blackwell and GPU-Accelerated Endpoints. NVIDIA · 2026

[9] DeepSeek-V4. SGLang · 2026

[10] DeepSeek-V4-Pro vLLM Recipe. vLLM · 2026

[11] NVIDIA Accelerates Inference on Meta Llama 4 Scout and Maverick. NVIDIA · 2025

[12] The Llama 3 Herd of Models. Dubey, A., et al. · 2024 · arXiv preprint