The Million-Token Era: What 1M Context Windows Actually Change

Frontier APIs now expose seven-figure context windows, from roughly 1.0M to 2.0M tokens. This guide explains what fits, what breaks, how to evaluate effective context length, and when the economics actually justify using it.

LeetLLM Team · March 14, 2026 · 18 min read

Imagine trying to write a report on a book when you can only read one page at a time. You'd constantly be flipping back and forth, struggling to remember details from earlier chapters. For a long time, that's how AI models had to work with large documents. As of April 2026, frontier APIs expose 1M- to 2M-class context windows, which changes the architecture of code assistants, document analysis systems, and long-running agents.[1][2][3][4][5]

But here's the question nobody seems to be asking: what does any of this actually mean? How much text fits in a million tokens? Does the model actually use all that context, or does information decay the further back you go? And if you're building production systems, should you even care?

This post answers all of that. We'll make the numbers tangible, explore what goes right and wrong with massive context windows, and examine the benchmarks that separate marketing claims from real capability.

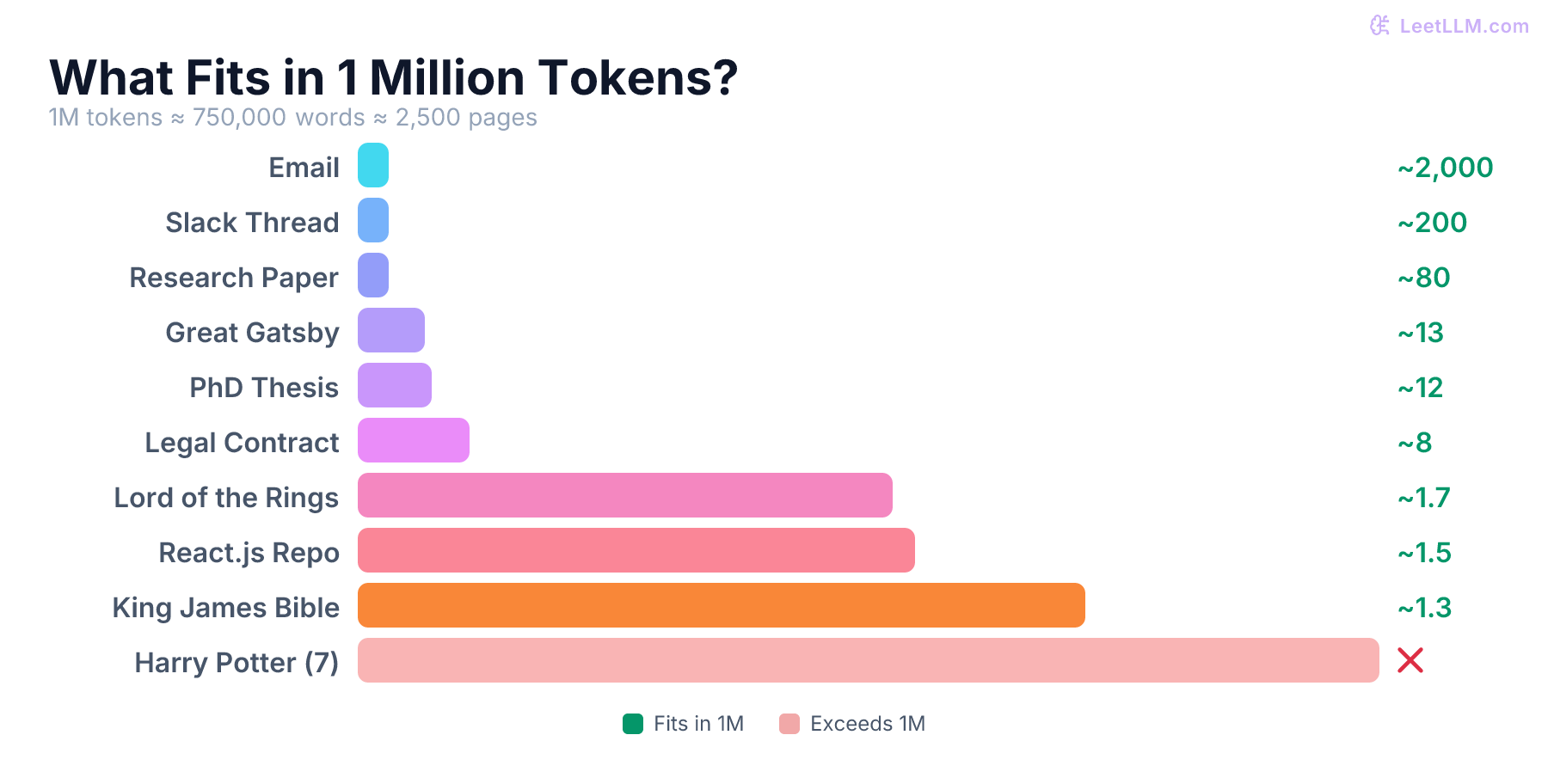

How big is a million tokens, really?

Token counts are abstract. Nobody thinks in tokens. So let's translate. (If you're not sure what a token even is, our guide to tokenization covers BPE, WordPiece, and SentencePiece in detail.)

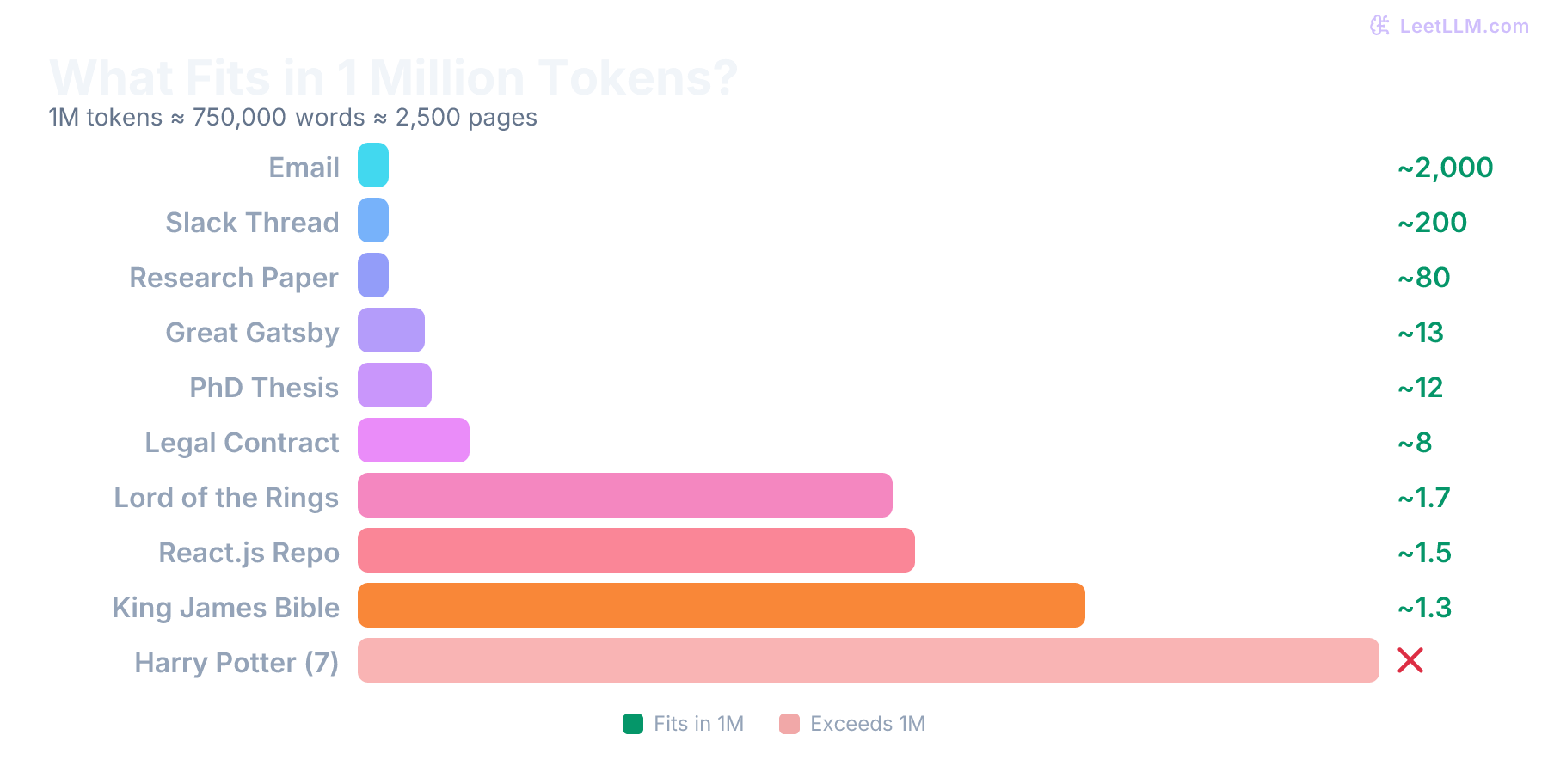

A rough rule of thumb: 1 token ≈ 0.75 English words ≈ 4 characters. That means 1 million tokens is roughly 750,000 words. To put that in perspective:

| What you're fitting | Approximate size | Fits in 1M tokens? |
|---|---|---|
| A typical email | 200-500 tokens | ✅ ~2,000-5,000 emails |
| A single Slack thread (50 messages) | ~5,000 tokens | ✅ ~200 threads |
| The Great Gatsby | ~72,000 tokens | ✅ ~13 copies |
| A PhD dissertation | ~80,000 tokens | ✅ ~12 dissertations |
| A 400-page legal contract | ~120,000 tokens | ✅ ~8 full contracts |
| 10 hours of meeting transcripts | ~150,000 tokens | ✅ ~66 hours total |
| The Lord of the Rings (trilogy) | ~576,000 tokens | ✅ ~1.7 copies |
| Mid-sized production service (20K LOC) | ~100,000-200,000 tokens | ✅ ~5-10 services |
| King James Bible | ~783,000 tokens | ✅ ~1.3 copies |
| Harry Potter (all 7 books) | ~1,100,000 tokens | ❌ Just barely exceeds |
| Linux kernel (core, no drivers) | ~5,000,000 tokens | ❌ Way too large |

💡 Key insight: 1M tokens isn't infinite. It's roughly the size of a multi-volume novel series, or a small-to-medium production codebase. Enough to hold an entire project's source code in memory, but not enough for a mature open-source project like the Linux kernel. The practical question isn't "can I fit everything?" but "can I fit enough?"
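The rule of thumb behind the table is easy to turn into a quick estimator. The sketch below is only an illustration: it assumes the 0.75 words-per-token ratio for English, and the function names are made up for this example. Real counts should come from your model's tokenizer.

```python
WORDS_PER_TOKEN = 0.75  # rule-of-thumb ratio for English text; an approximation


def estimate_tokens_from_words(word_count: int) -> int:
    """Estimate a token count from an English word count."""
    return round(word_count / WORDS_PER_TOKEN)


def copies_that_fit(doc_tokens: int, window_tokens: int = 1_000_000) -> float:
    """How many copies of a document of `doc_tokens` fit in the window."""
    return window_tokens / doc_tokens


print(estimate_tokens_from_words(750_000))  # 1000000
print(round(copies_that_fit(576_000), 1))   # 1.7 (the trilogy row in the table)
```

An estimator like this is fine for capacity planning, but it should not replace a real token count before you send a request.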

For engineers, the most exciting comparison might be codebases. A medium-sized production service (50-100 files, 20K lines of code) translates to roughly 100,000-200,000 tokens. That means you could load 5-10 complete microservices into a single context window. That's a fundamentally different capability than what we had two years ago. OpenAI's GPT-4.1 launch post describes 1M tokens as "more than eight copies of the entire React codebase," which is useful as a vendor-supplied repo-scale analogy rather than a universal constant for every repository.[6]

⚠️ Warning: Language matters. These estimates assume English text. BPE (Byte-Pair Encoding) vocabularies are typically learned from English-heavy corpora, so non-Latin scripts use significantly more tokens per word. Chinese, Japanese, Korean, Arabic, and Hindi text can consume 1.5-3x more tokens for the same semantic content. A 1M context window that holds a 750K-word English document might hold only the equivalent of 250K-500K English words once the same content is written in Chinese. If you're building multilingual systems, always measure your actual token consumption. Don't assume the English ratios hold. For a deeper understanding of why this happens, see our article on tokenization algorithms.
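One way to measure this is to tokenize parallel samples (the same content in each language) and compare counts against an English baseline. The sketch below is a minimal illustration: `token_inflation` is a hypothetical helper, and the UTF-8 byte encoder is only a stand-in so the example runs without dependencies. It is not a real BPE tokenizer, so pass your model's own `encode` function to get meaningful numbers.

```python
from collections.abc import Callable


def token_inflation(
    samples: dict[str, str],
    encode: Callable[[str], list],
    baseline: str = "en",
) -> dict[str, float]:
    """Token count of each sample relative to the baseline language."""
    base_count = len(encode(samples[baseline]))
    return {lang: len(encode(text)) / base_count for lang, text in samples.items()}


def byte_level_encode(text: str) -> list[int]:
    """Stand-in encoder: one 'token' per UTF-8 byte (NOT a real tokenizer)."""
    return list(text.encode("utf-8"))


# Parallel samples with roughly the same meaning.
samples = {"en": "Hello, how are you?", "zh": "你好，你好吗？"}
ratios = token_inflation(samples, byte_level_encode)
print(ratios)
```

Run it over a representative slice of your real corpus, not a single sentence, before you set context budgets.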

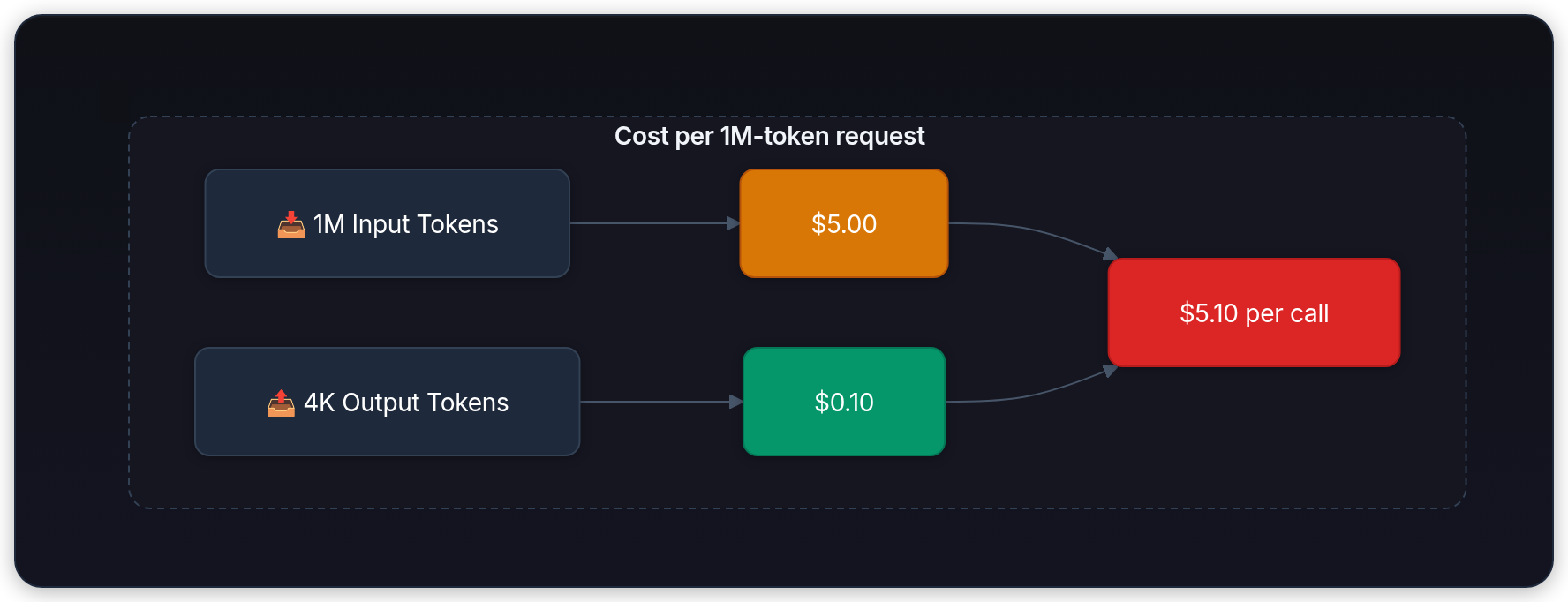

The economic picture

Context window size is only half the story. The economics of using that context matter just as much.

The official model and pricing pages from Anthropic, OpenAI, Google, and xAI all make the same architectural point in different ways: once you move from tens of thousands of tokens to hundreds of thousands, long-context work becomes a systems-budget decision, not a trivial API call.[1][7][2][3][8][5]

What's changed is not that long context became free. It's that vendors now expose it as a normal model capability, with clearer pricing and caching behavior:

| Provider docs | What the official pages emphasize | Practical implication |
|---|---|---|
| Anthropic[1][7] | Anthropic's current docs list 1M-token windows for Claude Opus 4.7, Claude Opus 4.6, and Claude Sonnet 4.6, while pricing docs call out premium long-context pricing once supported requests exceed the provider's threshold | Seven-figure context is available, but you still need to check model-specific availability, access, and billing instead of assuming "1M" is the default tier |
| OpenAI[2][3] | GPT-4.1 and GPT-5.5 expose roughly 1.05M-token windows, but GPT-5.5 pricing steps up above 272K input tokens | Long context is a model-selection decision, and threshold pricing can matter more than the headline window size |
| Google[4][8] | Gemini 3-family text models and Gemini 2.5 Pro/Flash expose 1M windows; Google pricing often steps up above 200K tokens on the larger models | Large prompts are supported, but they still meaningfully change unit economics |
| xAI[5] | Grok 4.20 lists a 2,000,000-token window and cached-token pricing in the model catalog | Massive windows don't remove the need to budget prompt size carefully |
| Open-weight models | No vendor surcharge, but your serving bill becomes the bottleneck | You trade API markup for GPU memory pressure, serving complexity, and KV-cache cost |

🎯 Production tip: The wrong question is "does this model support 1M tokens?" The right question is "how often will my production path actually send 200K, 500K, or 900K tokens?"
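To answer that question with data, look at the prompt-size distribution from your own logs and price it against the provider's threshold. The sketch below is a rough illustration with made-up traffic and placeholder prices. It also assumes the whole request is billed at the long-context rate once it crosses the threshold, which you should confirm on your provider's pricing page.

```python
def request_cost_usd(
    input_tokens: int,
    base_price_per_mtok: float,
    long_price_per_mtok: float,
    threshold_tokens: int,
) -> float:
    """Input cost, assuming the entire request is billed at the long-context
    rate once it exceeds the threshold (an assumption; check your provider)."""
    price = long_price_per_mtok if input_tokens > threshold_tokens else base_price_per_mtok
    return input_tokens / 1_000_000 * price


def share_above(prompt_sizes: list[int], threshold_tokens: int) -> float:
    """Fraction of requests whose prompt exceeds the threshold."""
    if not prompt_sizes:
        return 0.0
    return sum(size > threshold_tokens for size in prompt_sizes) / len(prompt_sizes)


# Hypothetical traffic sample and placeholder prices, for illustration only.
sizes = [40_000, 150_000, 250_000, 900_000]
print(share_above(sizes, 200_000))  # 0.5
total = sum(request_cost_usd(s, 2.0, 4.0, 200_000) for s in sizes)
print(round(total, 2))  # 4.98
```

In this toy sample, half the requests cross the threshold, and those two requests account for most of the spend.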

What actually gets better with longer context

Bigger context windows unlock use cases that were genuinely impossible before. Here are the most impactful:

Whole-codebase reasoning

Instead of carefully selecting which files to include in your prompt, you can load an entire project. The model sees the full dependency graph, all the configuration files, the test suite, and the README. When you ask "why does this API endpoint return 500 when I pass an empty array?", the model doesn't need to guess which files matter. It can trace the call path from router to handler to database layer in a single pass.

The gain is not mystical. It is operational. You stop throwing away potentially relevant files just to stay under the window.

Long-running agent memory

Agentic systems that run for hours accumulate massive context: tool calls, observations, intermediate reasoning, error logs. Before 1M context, agents needed compaction (summarizing earlier parts of the conversation to free up space). Compaction loses detail. With 1M tokens, agents can hold 5-10x more history before needing to compress, and some conversations never need compaction at all.
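A minimal sketch of late compaction, assuming you supply your own token counter and summarizer: the history is kept verbatim until it exceeds a budget, and only then is the oldest part folded into a summary. `AgentHistory` and its fields are hypothetical names for this example, not part of any agent framework.

```python
from collections.abc import Callable
from dataclasses import dataclass, field


@dataclass
class AgentHistory:
    budget_tokens: int
    count_tokens: Callable[[str], int]    # plug in your tokenizer here
    summarize: Callable[[list[str]], str]  # plug in your summarizer here
    entries: list[str] = field(default_factory=list)

    def total_tokens(self) -> int:
        return sum(self.count_tokens(entry) for entry in self.entries)

    def append(self, entry: str) -> None:
        """Add an entry; compact only if the history is now over budget."""
        self.entries.append(entry)
        while self.total_tokens() > self.budget_tokens and len(self.entries) > 1:
            # Fold the oldest half (at least two entries) into one summary,
            # so the list shrinks on every pass and the loop terminates.
            half = max(2, len(self.entries) // 2)
            summary = self.summarize(self.entries[:half])
            self.entries = [summary] + self.entries[half:]


# Toy usage: count words as "tokens" and use a fixed placeholder summary.
history = AgentHistory(
    budget_tokens=10,
    count_tokens=lambda text: len(text.split()),
    summarize=lambda old: "summary",
)
for _ in range(3):
    history.append("a b c d")
print(history.entries)  # ['summary', 'a b c d']
```

A larger window simply raises `budget_tokens`, which delays the first lossy summarization step.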

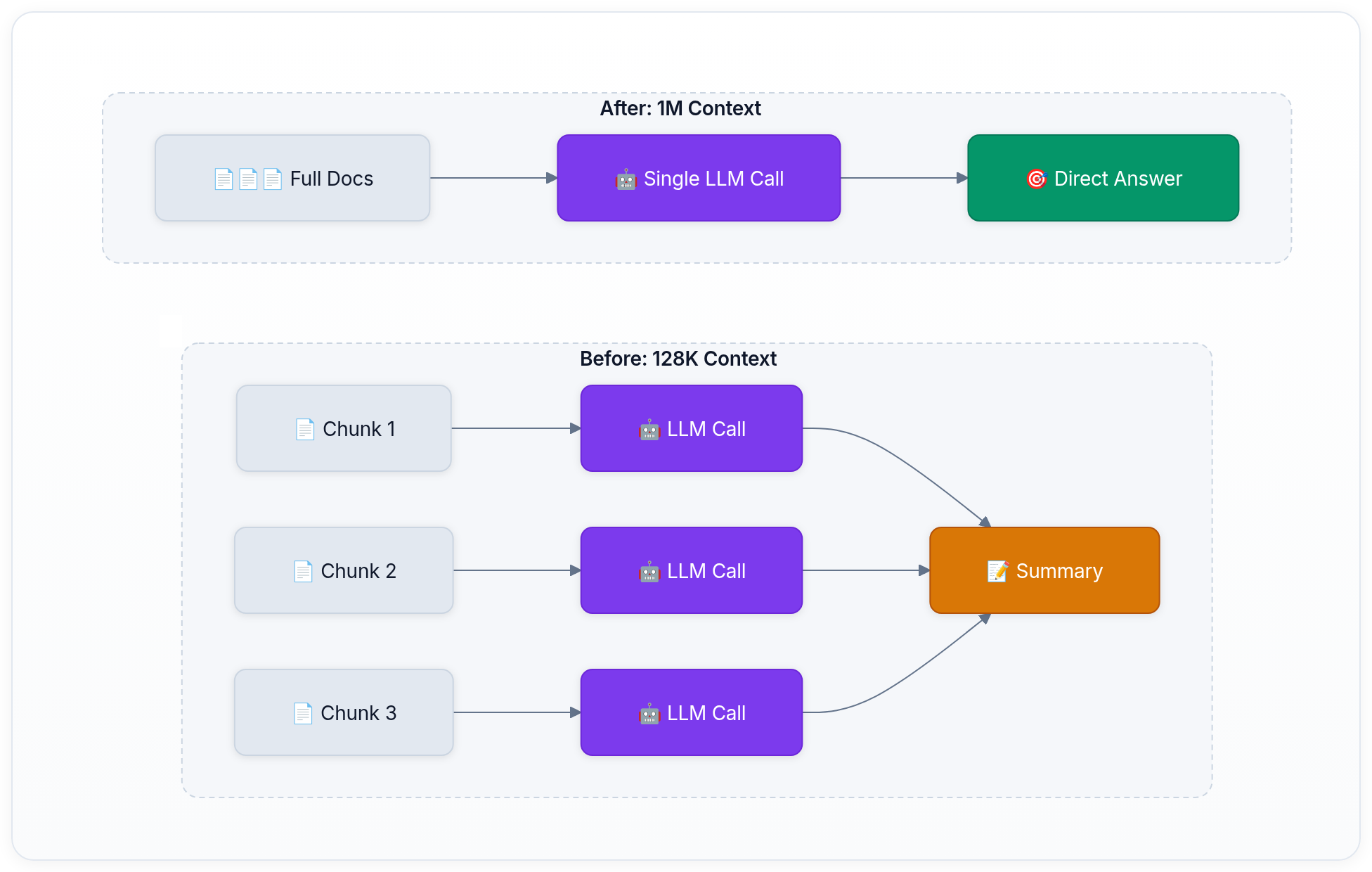

Document-scale analysis

Legal teams can load five complete contracts into a single prompt and ask "what are the inconsistencies in the termination clauses across these agreements?" Medical researchers can load dozens of papers and ask for a synthesis. Financial analysts can include an entire year of quarterly reports plus earnings call transcripts.

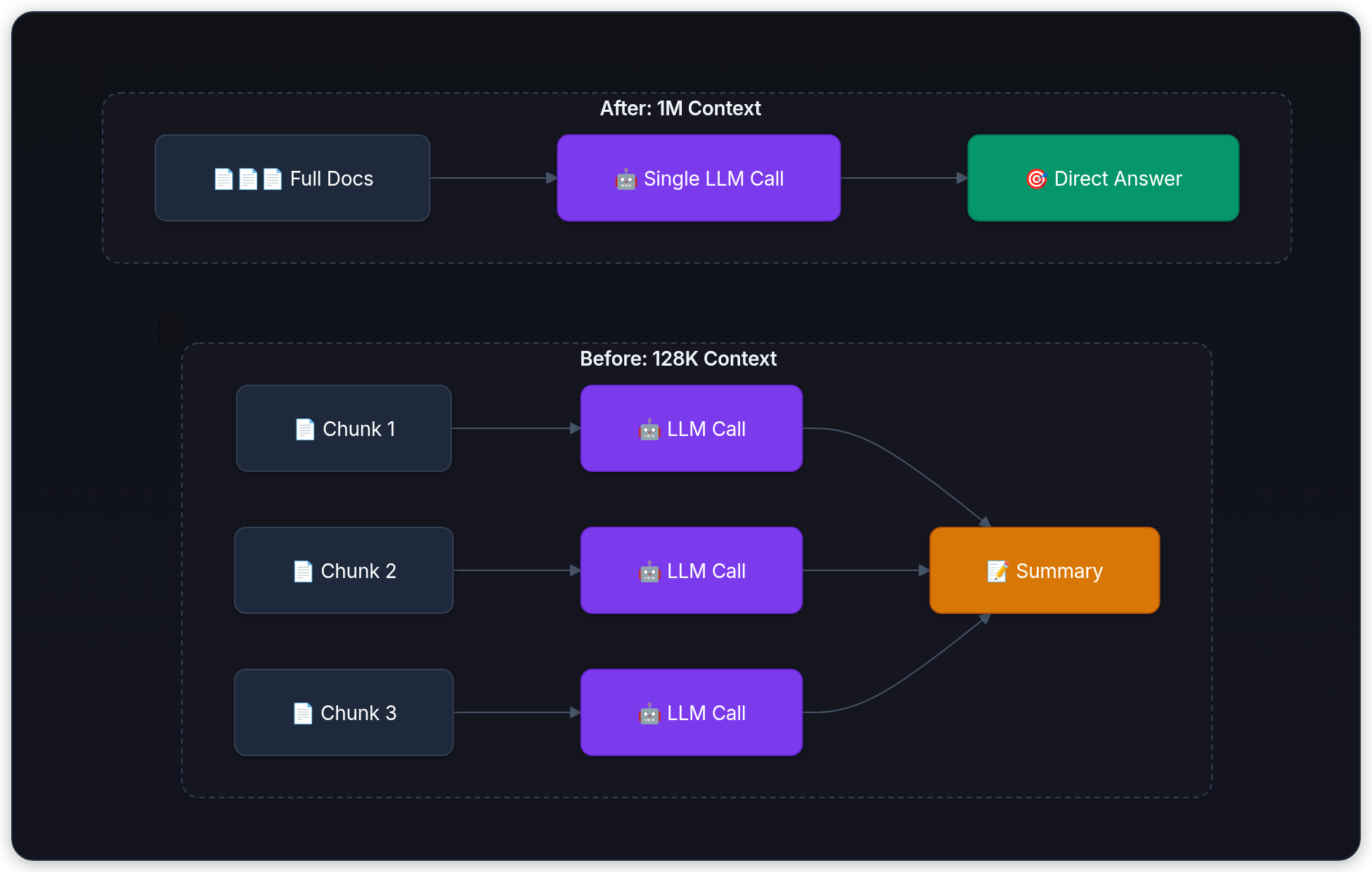

In workflow terms, the shift is from chunked processing across many calls to a single model call with expanded context.

Few-shot learning at scale

With 1M tokens, you can include far more examples in your prompt. Instead of 5-10 few-shot examples, you can provide dozens or even hundreds when the task truly benefits from it. That can help on pattern-heavy tasks like classification, extraction, or structured output generation, but the gains are not monotonic forever. Once examples become redundant, off-distribution, or poorly ordered, they can dilute the signal instead of improving it. The context window is no longer the main cap; example quality and budget are.

What gets worse (the hidden costs)

More context isn't free, even when the pricing claims it's free. There are real trade-offs that most announcements gloss over.

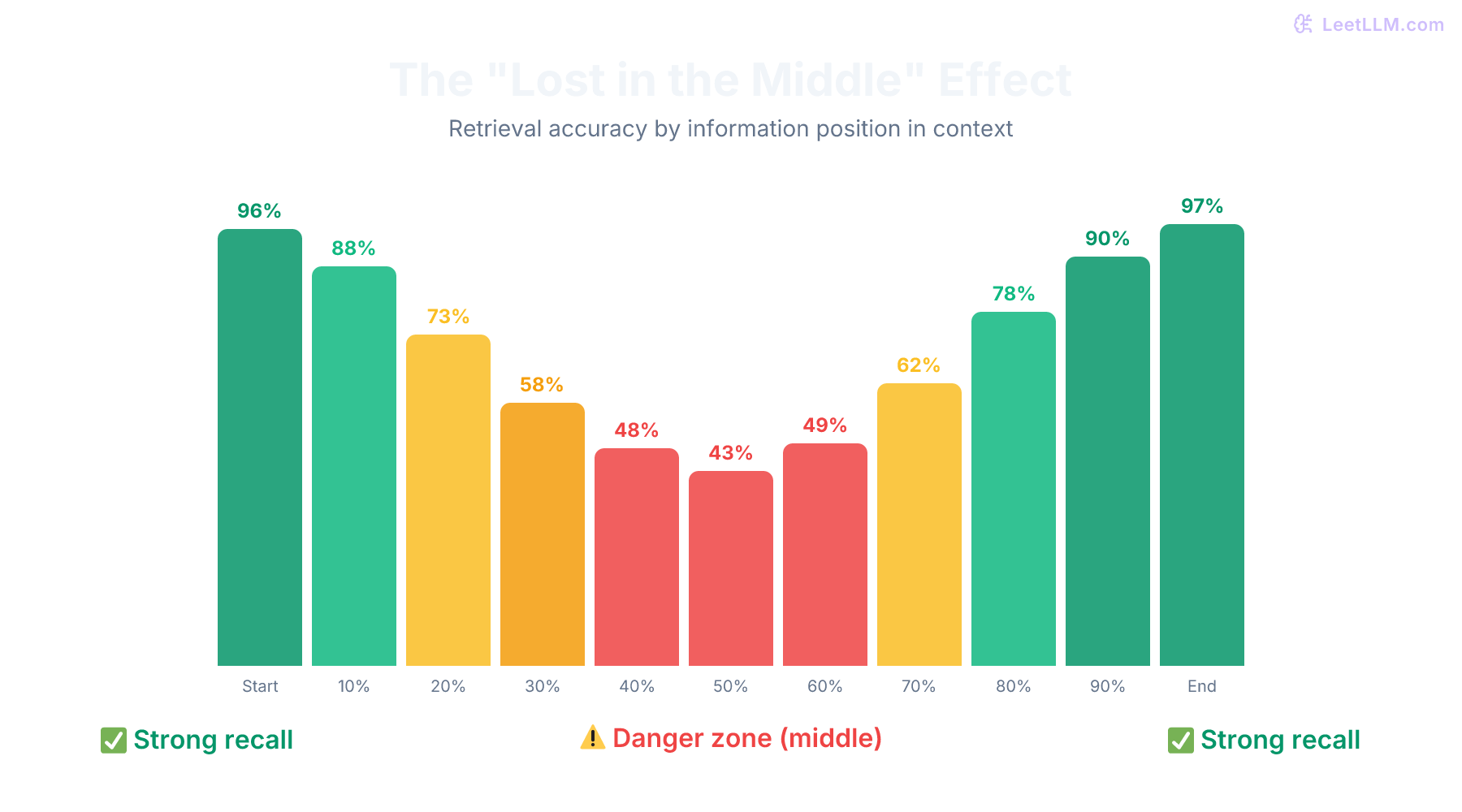

The "Lost in the Middle" problem

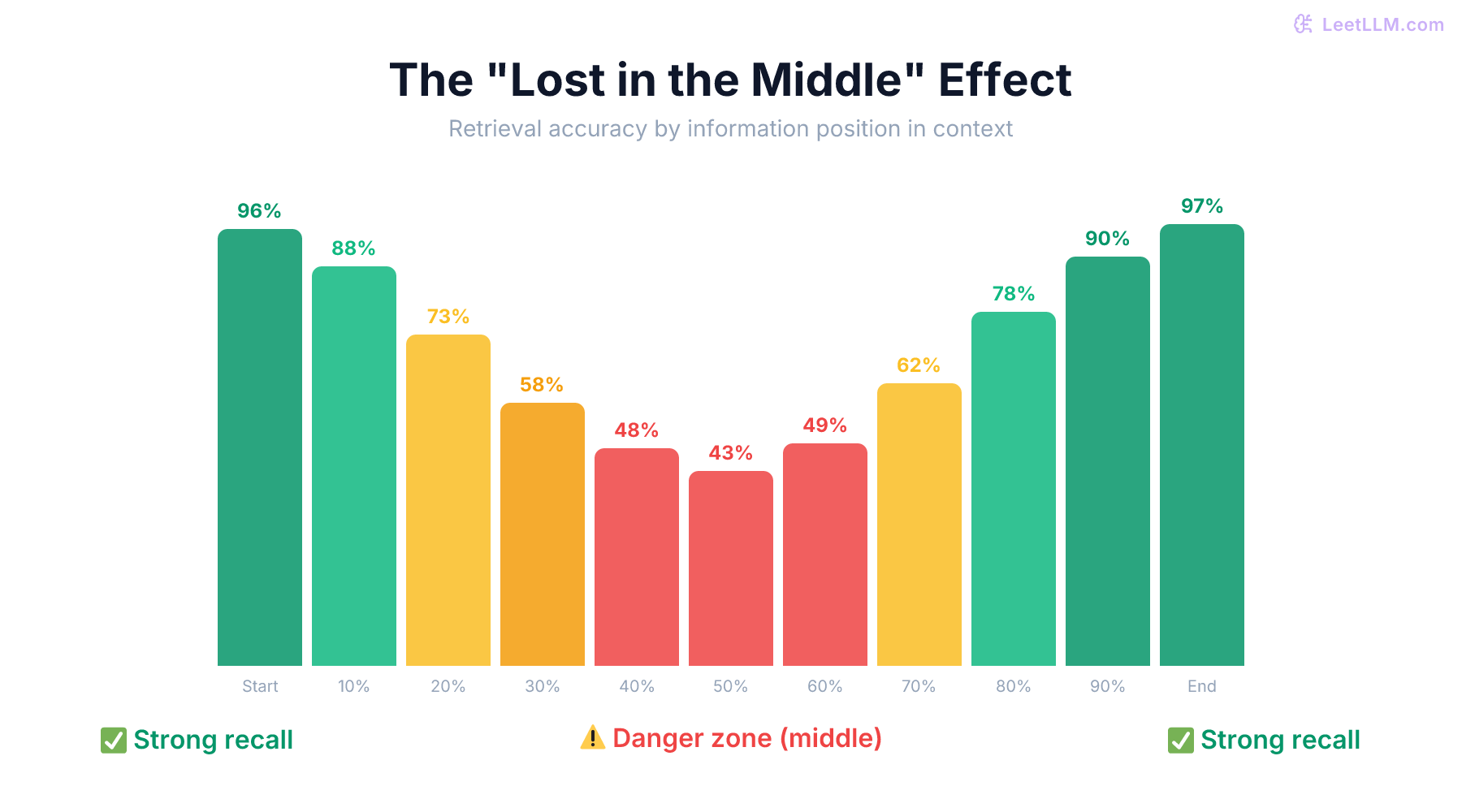

One of the most important findings in long-context research: models don't pay equal attention to all parts of a long prompt. Information placed in the middle of a very long context is significantly harder for the model to recall than information at the beginning or end.[9]

This creates a U-shaped retrieval curve. If you bury a critical fact on page 200 of a 400-page prompt, the model's more likely to miss it than if you put it on page 1 or page 400. The practical implication: order matters, even with a 1M context window.

⚠️ Common mistake: Assuming "more context = better answers." If you dump an entire codebase into the prompt without structuring it, the model may perform worse than if you carefully selected the 5 most relevant files. Long context is a capability, not a strategy.
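One simple way to act on the U-shaped curve is to reorder retrieved documents so the highest-ranked ones sit at the start and end of the prompt. The sketch below is one possible heuristic, not a method taken from the cited paper, and `order_for_edges` is a hypothetical name.

```python
def order_for_edges(ranked_docs: list[str]) -> list[str]:
    """Place the highest-ranked documents at the start and end of the prompt,
    pushing the lowest-ranked ones toward the middle."""
    front: list[str] = []
    back: list[str] = []
    for i, doc in enumerate(ranked_docs):
        # Alternate: rank 1 to the front, rank 2 to the back, and so on.
        (front if i % 2 == 0 else back).append(doc)
    return front + back[::-1]


print(order_for_edges(["d1", "d2", "d3", "d4", "d5"]))
# ['d1', 'd3', 'd5', 'd4', 'd2']
```

Whether this helps depends on the model and the task, so validate it against your own evals before relying on it.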

Latency explosion

Filling a 1M token context window is slow. Self-attention in the standard Transformer architecture has O(n²) complexity with respect to sequence length n.[10] That means longer prompts push both compute and memory hard, even when modern kernels such as FlashAttention reduce the practical pain.[11]

Most of the pain shows up in prefill, the one-shot pass that ingests the full prompt before the first output token can appear. Only after prefill finishes does decode start. Decode is still one token at a time, and it's usually memory-bandwidth-bound because each new token rereads the KV cache.

The operational consequence is simple: near-full-window prompts belong much more naturally in batch processing, document analysis, and long-running agent loops than in latency-sensitive chat. If the user expects an answer in a second or two, "just stuff everything into context" is usually the wrong design.
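A back-of-the-envelope calculation shows why prefill dominates. The sketch below counts only the two large attention matmuls (scores and weighted sum), each roughly 2·n²·d FLOPs per layer. It ignores causal masking, projections, and MLP blocks, and the model dimensions are hypothetical.

```python
def attention_flops(seq_len: int, d_model: int, n_layers: int) -> int:
    """Approximate FLOPs for the QK^T and attention-times-V matmuls during
    prefill: about 2 * n^2 * d each, per layer. A rough estimate only."""
    return 4 * seq_len**2 * d_model * n_layers


# Hypothetical model shape: d_model=4096, 32 layers.
short = attention_flops(100_000, d_model=4096, n_layers=32)
long = attention_flops(1_000_000, d_model=4096, n_layers=32)
print(long // short)  # 100
```

A 10x longer prompt costs about 100x more attention compute, which is why time to first token grows much faster than prompt length.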

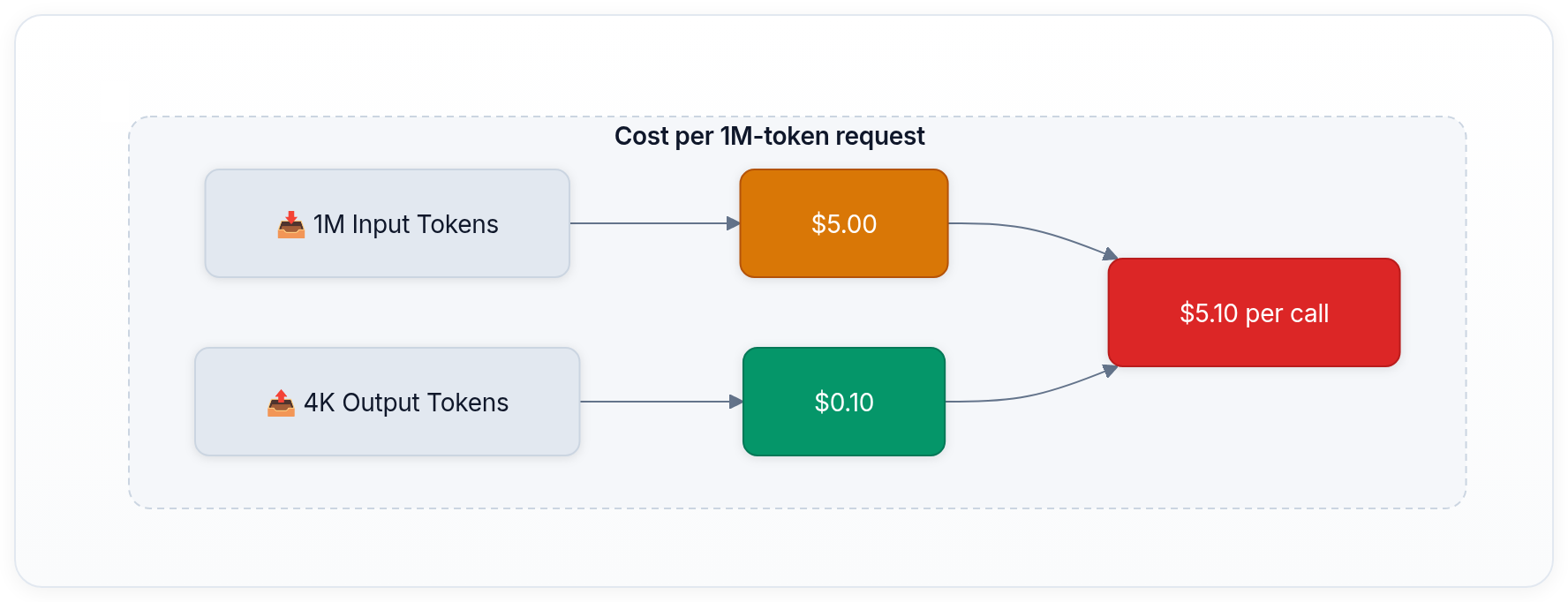

Cost multiplication

Even when pricing is easier to explain than it used to be, the arithmetic is still brutal. Once prompts become very large, each iteration becomes materially more expensive. In long-running agents that repeatedly resend large working sets, total spend compounds fast.[7][2][8][5]
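The compounding is easy to see with a little arithmetic. This sketch assumes a hypothetical agent that resends its full history on every step while the history grows by a fixed amount. The numbers are illustrative, and caching discounts are ignored.

```python
def cumulative_input_tokens(base_tokens: int, growth_per_step: int, steps: int) -> int:
    """Total input tokens billed when each step resends the full, growing history."""
    return sum(base_tokens + i * growth_per_step for i in range(steps))


# Hypothetical agent: 200K-token working set, +5K tokens of new history per step.
print(cumulative_input_tokens(200_000, 5_000, 50))  # 16125000
```

Fifty steps over a 200K working set bill more than 16M input tokens, even though no single request comes close to the 1M window.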

🎯 Production tip: Don't default to filling the full context window. Use retrieval, ranking, or pre-filtering first, then spend long-context budget only on the documents that genuinely need joint reasoning.

Context rot and attention decay

Even models that score well on benchmarks show degraded reasoning quality as context grows. Anthropic's current context-window docs explicitly call this failure mode "context rot," and public long-context studies report the same broad pattern on harder reasoning tasks.[1][12]

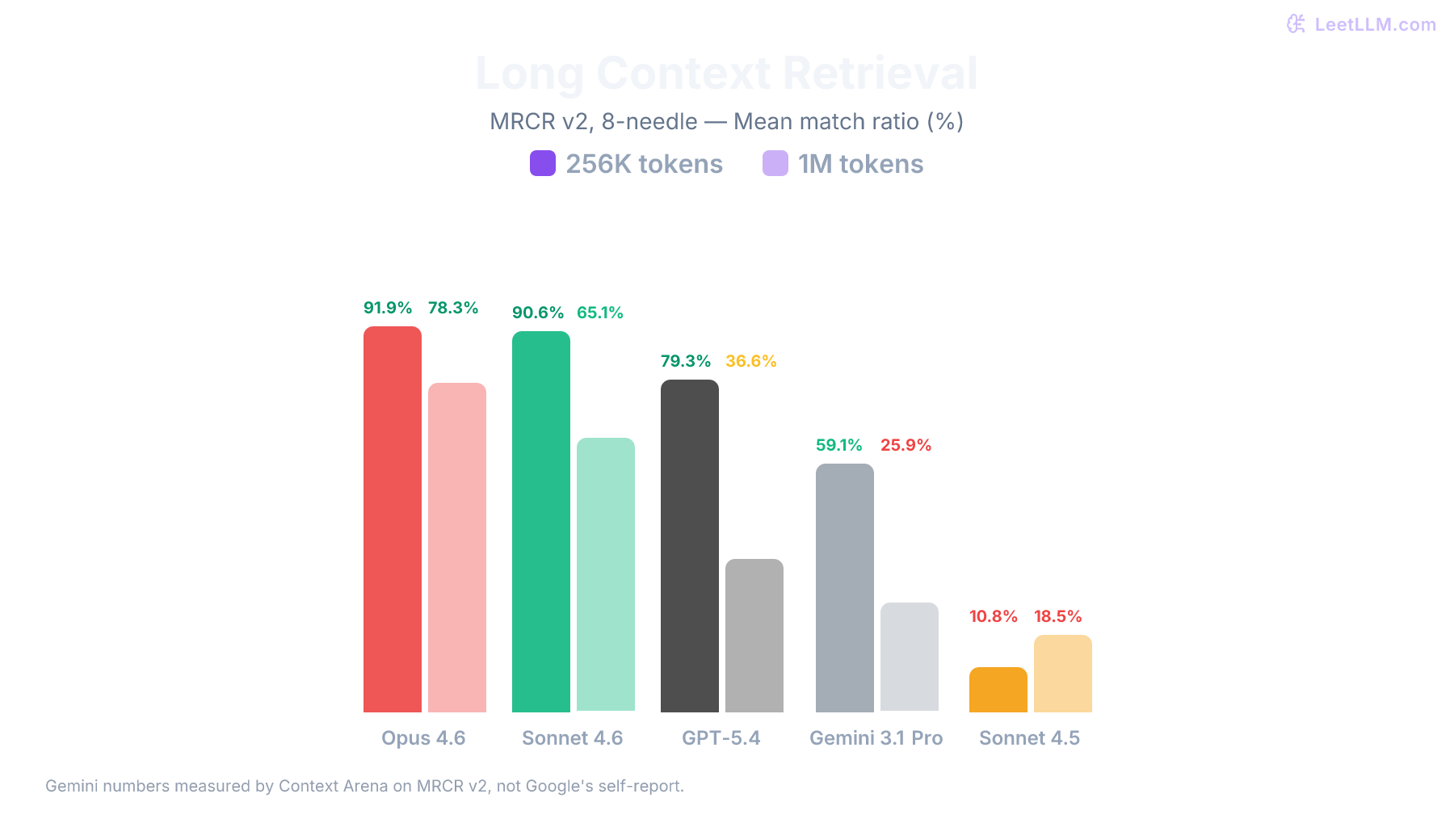

How to measure: the benchmarks that matter

When a provider claims "1M context window," the natural question is: does the model actually use all those tokens effectively? Three families of benchmarks try to answer this question, each testing a different dimension.

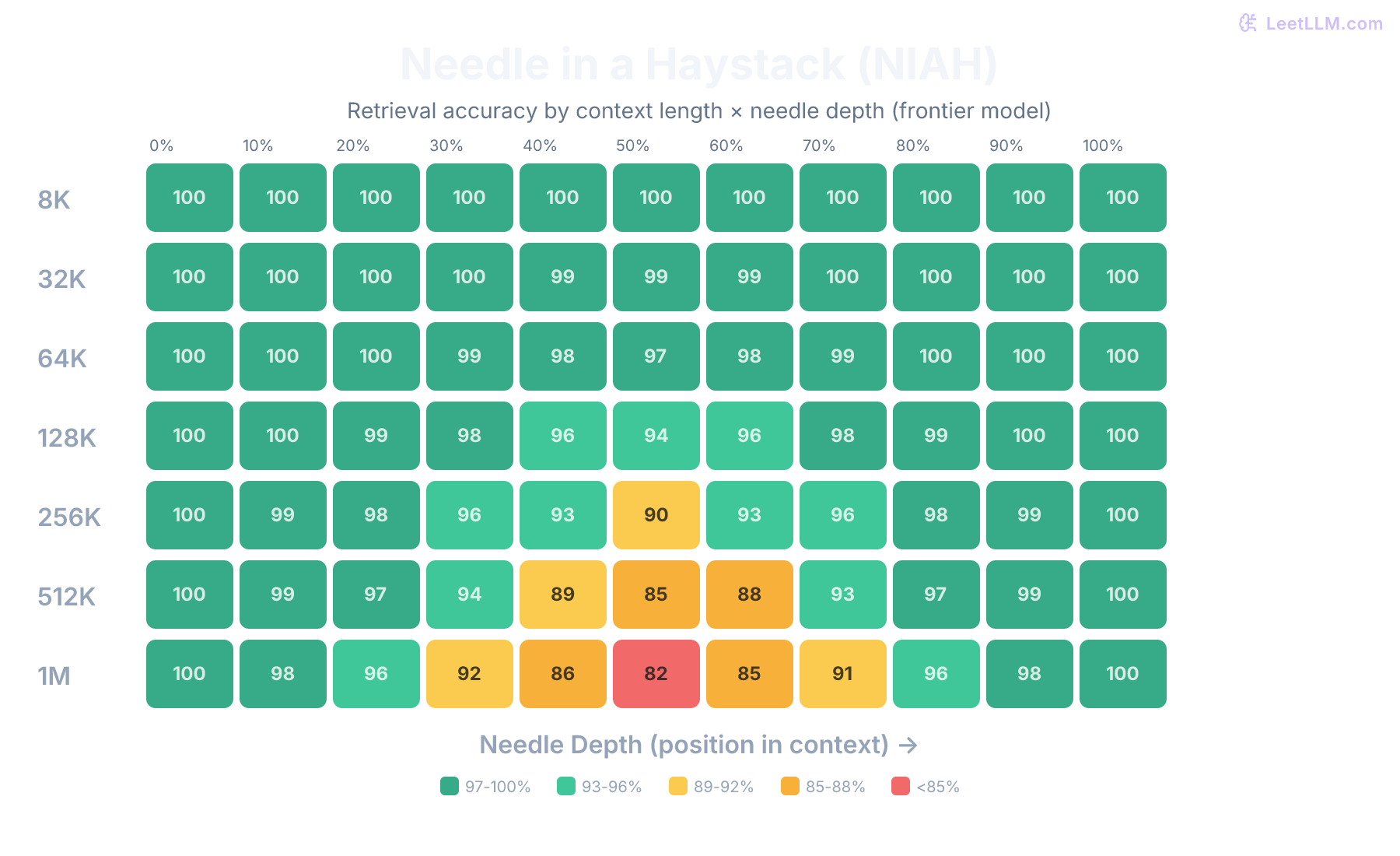

Needle in a Haystack (NIAH)

The simplest and most intuitive benchmark. You insert a specific fact (the "needle") at a random position within a large block of irrelevant text (the "haystack"), then ask the model to retrieve that fact.[13]

How it works

- Generate a haystack of distractor text (usually essays or Wikipedia paragraphs) at a target length (e.g., 500K tokens)

- Insert a short fact at a specific depth (e.g., 25%, 50%, 75% through the text)

- Ask the model to retrieve the fact

- Repeat across multiple depths and haystack sizes

- Plot retrieval success rate as a heatmap (x-axis: depth, y-axis: context length)
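The steps above can be sketched as a small harness. This is a simplified illustration, not the original benchmark code: it measures length in characters rather than tokens, inserts the needle at a raw character offset rather than a sentence boundary, and `ask_model` is a placeholder for whatever client call you use.

```python
from collections.abc import Callable


def build_haystack(filler: str, needle: str, total_chars: int, depth: float) -> str:
    """Repeat filler text up to total_chars and insert the needle at a
    fractional depth (0.0 = start, 1.0 = end)."""
    repeats = total_chars // len(filler) + 1
    haystack = (filler * repeats)[:total_chars]
    cut = int(len(haystack) * depth)
    return haystack[:cut] + " " + needle + " " + haystack[cut:]


def run_niah(
    ask_model: Callable[[str, str], str],  # (prompt, question) -> answer
    filler: str,
    needle: str,
    question: str,
    expected: str,
    lengths: list[int],
    depths: list[float],
) -> dict[tuple[int, float], bool]:
    """Return retrieval success for every (length, depth) cell of the grid."""
    results: dict[tuple[int, float], bool] = {}
    for length in lengths:
        for depth in depths:
            prompt = build_haystack(filler, needle, length, depth)
            answer = ask_model(prompt, question)
            results[(length, depth)] = expected.lower() in answer.lower()
    return results
```

The resulting dictionary maps directly onto the heatmap cells. Averaging several trials per cell gives a smoother picture than a single pass.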

What it tells you

NIAH tests recall: can the model find a specific piece of information regardless of where it sits? A perfect score means the model has functional access to the entire context window. Frontier vendor evals now often report near-perfect single-needle retrieval at long context lengths, which is exactly why NIAH alone is no longer enough.[6][14]

What it misses

NIAH is deliberately simple. It tests retrieval, not reasoning. A model that scores 100% on NIAH might still fail to synthesize information from different parts of a long context. Retrieving a phone number from page 200 is easy. Comparing two contradictory statements on page 50 and page 350 is hard. NIAH doesn't test the latter.

🔬 Research insight: Single-needle NIAH is increasingly a floor, not a ceiling. Multi-needle, multi-hop, and aggregation-heavy variants are more revealing because real workloads rarely ask for one isolated fact.

RULER: What's the real context size?

RULER expands on NIAH by testing four categories of capabilities across varying context lengths.[15]

| Category | What it tests | Example task |
|---|---|---|
| Retrieval | Finding specific information | Multi-key NIAH (retrieve multiple needles) |
| Multi-hop tracing | Following chains of references | "X is Y. Y is Z. What is X?" across 500K tokens |
| Aggregation | Counting or summarizing patterns across the full context | "How many times does entity X appear?" |
| Question answering | Answering questions requiring multi-document reasoning | Synthesizing information across documents |

Why RULER matters

RULER revealed a critical gap in the industry: many models that claim large context windows show significant performance degradation well before reaching their stated limit. A model might have a 128K token window but only effectively use 64K tokens before accuracy starts dropping. RULER measures the "effective context length," not just the "maximum context length."
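If you run your own length sweep, one simple way to summarize it is the largest tested length at which accuracy is still above a chosen threshold. The sketch below is a simplification with made-up scores. The threshold is your choice here, whereas the RULER paper defines its own baseline.

```python
def effective_context_length(accuracy_by_length: dict[int, float], threshold: float) -> int:
    """Largest tested length such that accuracy stays at or above the
    threshold at that length and at every shorter tested length."""
    effective = 0
    for length in sorted(accuracy_by_length):
        if accuracy_by_length[length] < threshold:
            break
        effective = length
    return effective


# Hypothetical scores for a model with a 128K stated window.
scores = {4_000: 0.96, 16_000: 0.94, 32_000: 0.91, 64_000: 0.87, 128_000: 0.71}
print(effective_context_length(scores, threshold=0.85))  # 64000
```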

💡 Key insight: The gap between stated and effective context length is the single most important thing to understand about context window claims. A model that reasons reliably across its full 200K window can be more useful than a model with a 1M stated context whose accuracy degrades well before 200K, at least for tasks that require reasoning across the full window.

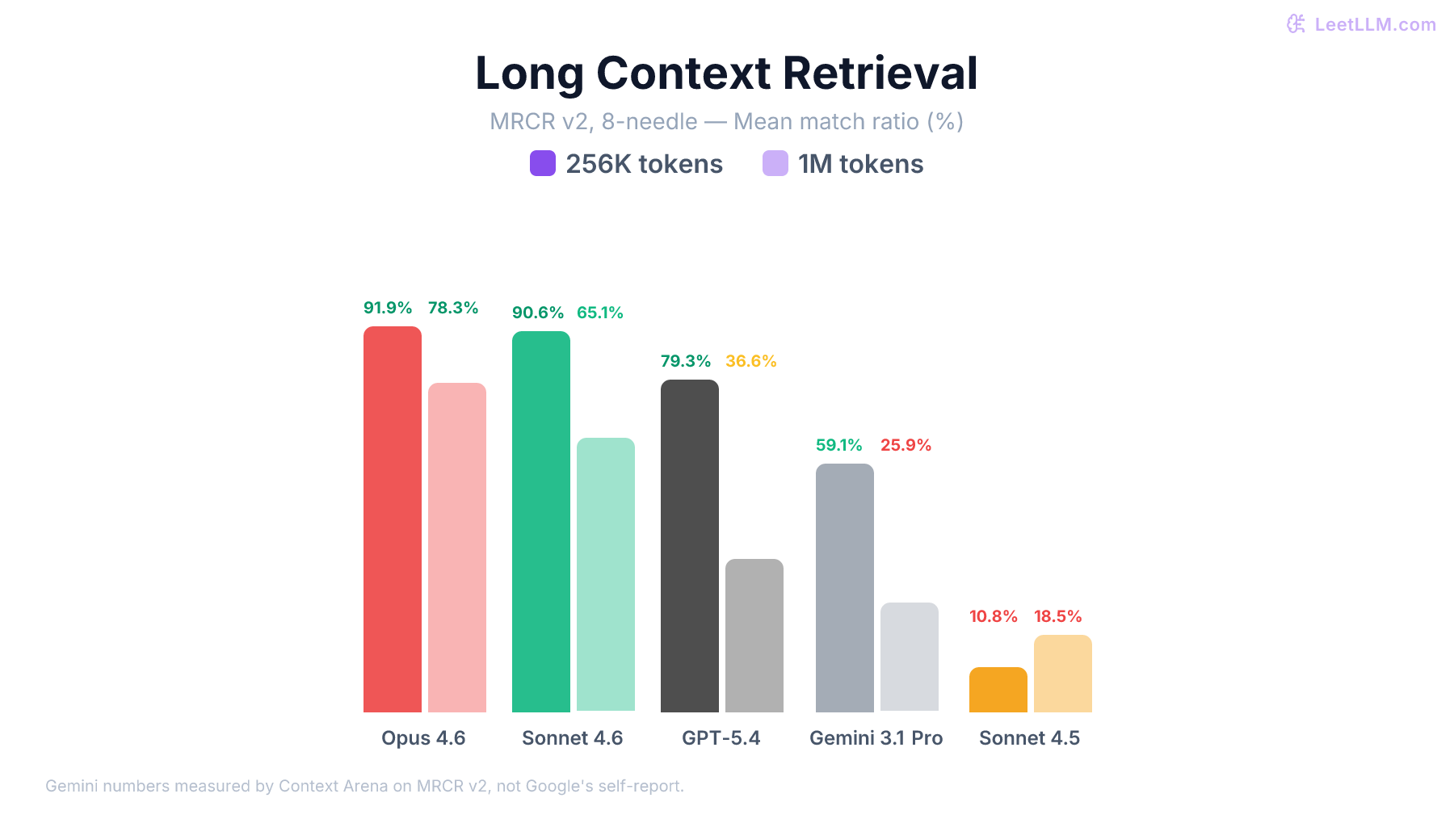

MRCR: Multi-Round Coreference Resolution

MRCR is useful because it targets a real long-context failure mode: tracking entities and references across many turns of conversation. Anthropic's context-window docs call out MRCR and GraphWalks alongside long-context retrieval when describing quality at scale.[1]

The important caveat is that MRCR is best treated as product evidence, not a universal leaderboard. Vendor-defined evals can be informative, but they are not the same thing as a neutral public benchmark.

The practical way to read this benchmark mix is:

| Benchmark family | What it mainly tests | Why it matters | Limitation |
|---|---|---|---|
| NIAH[13] | Point retrieval | Verifies that the model can find a fact in a long prompt | Often too easy for modern frontier models |
| RULER[15] | Retrieval, tracing, aggregation, QA | Better proxy for effective context length | Still synthetic compared with production workloads |
| MRCR / vendor long-conversation evals[1] | Entity tracking across turns | Closer to long-running agent memory | Usually not apples-to-apples across providers |

⚠️ Common mistake: Treating all context window benchmarks as equivalent. NIAH, RULER, and vendor conversation evals answer different questions.

The technique stack: how models handle long context

Supporting a 1M token context window isn't just about training on longer sequences. There's a deep stack of architectural innovations that make it work.

Distributed execution

At 1M tokens, attention math is only half the problem. You also have to decide where the sequence lives during training or serving. Ring Attention is one concrete answer: shard the sequence across accelerators, circulate KV blocks around the ring, and compute attention blockwise so no single device has to hold the whole prompt at once.[16] FlashAttention-style kernels attack a different bottleneck inside each device by reducing high-bandwidth-memory traffic during attention itself.[11][17]
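Blockwise attention works because softmax can be accumulated incrementally: you keep a running maximum, a running normalizer, and a weighted sum, and rescale them whenever a new block raises the maximum. The sketch below shows that trick for a single query in pure Python. It is a toy illustration of the numerical idea only, not how Ring Attention or FlashAttention are actually implemented on accelerators.

```python
import math


def _scores(q, keys):
    """Scaled dot-product scores of one query against a list of keys."""
    scale = 1.0 / math.sqrt(len(q))
    return [scale * sum(a * b for a, b in zip(q, k)) for k in keys]


def attention_full(q, keys, values):
    """Reference: softmax over all keys at once, then a weighted sum of values."""
    scores = _scores(q, keys)
    top = max(scores)
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    return [sum(w * v[j] for w, v in zip(weights, values)) / total
            for j in range(len(values[0]))]


def attention_blockwise(q, keys, values, block_size):
    """Same result, visiting keys/values one block at a time."""
    running_max = -math.inf
    normalizer = 0.0
    acc = [0.0] * len(values[0])
    for start in range(0, len(keys), block_size):
        block_scores = _scores(q, keys[start:start + block_size])
        block_values = values[start:start + block_size]
        new_max = max(running_max, max(block_scores))
        # Rescale everything accumulated under the previous maximum.
        correction = math.exp(running_max - new_max)
        normalizer *= correction
        acc = [a * correction for a in acc]
        for s, v in zip(block_scores, block_values):
            w = math.exp(s - new_max)
            normalizer += w
            acc = [a + w * x for a, x in zip(acc, v)]
        running_max = new_max
    return [a / normalizer for a in acc]


q = [0.3, -1.2, 0.7, 2.0]
keys = [[0.1 * i, -0.2 * i, 0.05 * i * i, 1.0 - i] for i in range(7)]
values = [[float(i), float(i * i)] for i in range(7)]
print(attention_full(q, keys, values))
print(attention_blockwise(q, keys, values, block_size=3))
```

The two printed vectors should agree up to floating-point rounding. Because each block needs only the running state, the blocks can live on different devices or be streamed through fast on-chip memory.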

Positional encoding for long sequences

Standard absolute positional encodings break down beyond the training length. Two techniques dominate:

- RoPE (Rotary Position Embeddings) encodes relative position using rotation matrices, which naturally extends to longer sequences.[18] Most modern open-weight long-context models use RoPE or a close variant.

- ALiBi (Attention with Linear Biases) adds a simple linear penalty to attention scores based on distance, allowing the model to extrapolate to longer sequences than it was trained on.[19]

Both allow models trained on shorter contexts to extend to longer ones, though performance typically degrades without fine-tuning on longer data. Positional interpolation techniques (like YaRN and NTK-aware scaling) address this by scaling the position encodings to fit more positions into the same range.[20][21]
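A small sketch makes two of these ideas concrete: RoPE's rotation makes the query-key dot product depend only on relative offset, and linear position interpolation rescales positions so a longer sequence maps into the trained range. This shows only the simplest linear variant (YaRN and NTK-aware scaling adjust frequencies in more refined ways), and the function names are made up for this example.

```python
import math


def rope_rotate(vec: list[float], position: float, base: float = 10000.0) -> list[float]:
    """Rotate consecutive pairs of `vec` by position-dependent angles (RoPE)."""
    dim = len(vec)
    out: list[float] = []
    for i in range(0, dim, 2):
        theta = position * base ** (-i / dim)  # lower frequency for later pairs
        x, y = vec[i], vec[i + 1]
        out += [x * math.cos(theta) - y * math.sin(theta),
                x * math.sin(theta) + y * math.cos(theta)]
    return out


def interpolated_position(position: int, train_len: int, target_len: int) -> float:
    """Linear position interpolation: squeeze target positions into the trained range."""
    return position * train_len / target_len


def dot(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


q, k = [1.0, 2.0, 3.0, 4.0], [0.5, -1.0, 2.0, 0.0]
near = dot(rope_rotate(q, 5), rope_rotate(k, 3))
far = dot(rope_rotate(q, 105), rope_rotate(k, 103))
print(abs(near - far) < 1e-9)  # True: only the offset (2) matters

# Position 32,768 in a 32K target maps to 4,096 for a model trained at 4K.
print(interpolated_position(32_768, 4_096, 32_768))  # 4096.0
```

Interpolated positions are fractional, so the model sees angles it was never trained on exactly. That is one reason fine-tuning on longer data still helps.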

KV cache optimization

In autoregressive generation, the Key-Value (KV) cache stores computed attention states for all previous tokens. Its size grows linearly with sequence length, layer count, KV head count, head dimension, and bytes per value. A good back-of-the-envelope estimate is KV bytes ≈ 2 × layers × tokens × kv_heads × head_dim × bytes_per_value, where the factor of 2 accounts for keys and values. On a 32-layer decoder with 8 KV heads, head dimension 128, and bf16 cache entries (2 bytes), a 1M-token prompt needs about 131 GB of KV memory for a single sequence, or roughly 122 GiB if you think in binary units. Larger models push that much higher. Several optimizations make this tractable, each reducing KV cache memory overhead:

- GQA (Grouped-Query Attention) and related shared-KV attention variants: Reduce the number of KV heads relative to query heads, shrinking cache size roughly in proportion to the Q:KV head ratio.[22]

- Paged Attention: Manages KV cache like virtual memory pages, sharply reducing fragmentation during serving.[23]

- KV Cache Compression: Quantizes or selectively evicts less important keys and values, trading a small accuracy hit for major memory savings.
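The back-of-the-envelope estimate above is simple enough to keep as a helper. The sketch below reproduces the 32-layer example from the text and adds a hypothetical comparison with 32 KV heads to show the kind of saving GQA provides.

```python
def kv_cache_bytes(
    layers: int, tokens: int, kv_heads: int, head_dim: int, bytes_per_value: int
) -> int:
    """KV bytes = 2 (keys and values) x layers x tokens x kv_heads x head_dim x bytes_per_value."""
    return 2 * layers * tokens * kv_heads * head_dim * bytes_per_value


gqa = kv_cache_bytes(layers=32, tokens=1_000_000, kv_heads=8, head_dim=128, bytes_per_value=2)
print(gqa / 1e9)              # 131.072 (GB)
print(round(gqa / 2**30, 2))  # 122.07 (GiB)

# Hypothetical comparison: the same shape with 32 KV heads (no KV sharing).
mha = kv_cache_bytes(layers=32, tokens=1_000_000, kv_heads=32, head_dim=128, bytes_per_value=2)
print(mha // gqa)             # 4
```

This is per sequence, so multiply by the number of concurrent sequences when you size a serving deployment.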

FlashAttention and IO-aware computing

FlashAttention reformulates the attention computation to minimize reads and writes between GPU HBM (High Bandwidth Memory) and SRAM (on-chip fast memory).[11] Later work such as FlashAttention-3 keeps pushing the same IO-aware idea further.[17] None of this changes the computational complexity, but it does dramatically reduce wall-clock time by avoiding memory bottlenecks. Without these kernels, 1M token context windows would be impractical on current hardware.

When to use (and when to avoid) full context

Here's a practical decision framework for production systems:

| Scenario | Strategy | Why |
|---|---|---|
| Single-document analysis (legal, medical) | ✅ Full context | The document is your context. No retrieval needed. |
| Codebase Q&A | ✅ Full context (if it fits) | Models reason better with full dependency graphs visible. |
| Long-running agents | ✅ Full context + late compaction | Avoid lossy summarization as long as possible. |
| Searching across many documents | ⚠️ RAG (Retrieval-Augmented Generation) first, then context | Use embeddings to retrieve top-K, then feed into context. 1M tokens of irrelevant text hurts accuracy. |
| Real-time chat (latency-sensitive) | ❌ Avoid filling the window | Multi-second or worse time to first token (TTFT) is hard to hide in interactive UX. Use sliding windows, retrieval, or caching. |
| High-volume batch processing | ⚠️ Cost-benefit analysis | Even low single-digit dollars per MTok compounds quickly when every job resends hundreds of thousands of tokens. |

💡 Key insight: Long context and RAG aren't competing strategies. They're complementary. Use RAG to filter down to the most relevant content, then use the large context window to include all the relevant content without truncation. The combination often outperforms either approach alone.

To implement this practically, you might use a pattern like the following. This version uses OpenAI's Responses API with gpt-4.1, but the retrieval-and-budgeting pattern is the important part. The helper functions are placeholders for your own embedding and tokenizer stack.

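```python
from collections.abc import Iterable

from openai import OpenAI


def estimate_tokens(text: str) -> int:
    """Placeholder: rough estimate at ~4 characters per token. Swap in a real tokenizer."""
    return max(1, len(text) // 4)


def retrieve_relevant_docs(query: str, docs: Iterable[str], top_k: int) -> list[str]:
    """Placeholder: rank by naive keyword overlap. Swap in your embedding search."""
    terms = set(query.lower().split())
    ranked = sorted(docs, key=lambda d: len(terms & set(d.lower().split())), reverse=True)
    return ranked[:top_k]


def query_with_filtered_context(
    query: str,
    all_docs: Iterable[str],
    model: str = "gpt-4.1",
    context_budget_tokens: int = 200_000,
) -> str:
    """
    Retrieve relevant documents, then query a long-context model with a hard budget.
    """
    ranked_docs = retrieve_relevant_docs(query, all_docs, top_k=50)

    context_parts: list[str] = []
    used_tokens = 0

    # Add documents in rank order until the next one would exceed the budget.
    for doc in ranked_docs:
        doc_tokens = estimate_tokens(doc)
        if used_tokens + doc_tokens > context_budget_tokens:
            break

        context_parts.append(f"<document>\n{doc}\n</document>")
        used_tokens += doc_tokens

    prompt = "\n\n".join(context_parts)

    client = OpenAI()  # reads OPENAI_API_KEY from the environment
    response = client.responses.create(
        model=model,
        max_output_tokens=1024,
        input=f"Context:\n{prompt}\n\nQuestion: {query}",
    )
    return response.output_text
```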

If many requests share the same large prefix, use the provider's caching feature instead of resending the same corpus every time. Anthropic supports prompt caching, OpenAI prompt caching is automatic with optional retention policies, Google exposes both implicit and explicit caching, and xAI automatically caches matching prompt prefixes.[7][24][25][26]
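To see roughly what caching is worth, compare input cost with and without a cached prefix. The sketch below uses a deliberately simplified billing model: the first call pays full price, every later call hits the cache for the entire prefix, and cache-write surcharges and expiry are ignored. The prices are placeholders, not any provider's actual rates.

```python
def shared_prefix_cost_usd(
    prefix_tokens: int,
    question_tokens: int,
    requests: int,
    price_per_mtok: float,
    cached_price_per_mtok: float,
) -> float:
    """Input cost for `requests` calls that share one large prefix, under the
    simplified assumptions described above."""
    if requests <= 0:
        return 0.0
    first = (prefix_tokens + question_tokens) * price_per_mtok
    later = (requests - 1) * (
        prefix_tokens * cached_price_per_mtok + question_tokens * price_per_mtok
    )
    return (first + later) / 1_000_000


# Hypothetical: 500K-token corpus, 1K-token questions, 10 requests.
with_cache = shared_prefix_cost_usd(500_000, 1_000, 10, 2.0, 0.5)
no_cache = shared_prefix_cost_usd(500_000, 1_000, 10, 2.0, 2.0)
print(round(with_cache, 2), round(no_cache, 2))  # 3.27 10.02
```

Caches generally match on prompt prefixes, so keep the stable corpus at the front of the prompt and put the per-request question at the end.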

When designing your architecture, usually start with the smallest context window that reliably solves your problem. You can scale up when you hit a ceiling, but starting with a massive context window will often inflate your latency and API costs for little gain.

Key takeaways

- 1M tokens ≈ 750K words ≈ 2,500 pages. Enough for an entire codebase, but not for everything. Think "project-scale," not "enterprise-scale."

- Stated context length ≠ effective context length. Always check benchmark results to understand how much of the window the model actually uses well.

- The "Lost in the Middle" problem is real. Information placement matters. Put critical content at the beginning or end, not buried in the middle.

- Long context is easier to buy than it used to be, and 2M-class windows now exist, but it's still not cheap. The main cost driver is how many tokens your architecture resends on every step.

- Latency is the hidden cost. Long prompts mean slow time to first token. Design your architecture around this: batch processing is fine, real-time chat isn't.

- Long context + RAG > either alone. Use retrieval to filter, then context to reason. Don't dump everything into the prompt and hope for the best.

What comes next

The next phase is not just "bigger windows." We already have 2M-class models. The next phase is better use of the window you already bought.

As the "Lost in the Middle" research shows,[9] we're still far from perfect recall and reasoning across very long prompts. Architectural innovations such as better memory management, hierarchical context construction, and retrieval-augmented reasoning will matter more than raw headline window size. Keep watching the benchmarks, not the press releases.

Ultimately, context length may become less of a headline feature and more of a background systems constraint for many workflows. But it's not heading toward irrelevance. Retrieval quality, prompt budgeting, and workload-specific validation will still matter even if raw window sizes keep growing. In the meantime, you have to measure, validate, and build defensively.

References

- Context windows. Anthropic · 2026
- Anthropic Model Pricing. Anthropic · 2026
- GPT-4.1 Model. OpenAI · 2025
- GPT-4.1 Model. OpenAI · 2025
- GPT-5.5 Model. OpenAI · 2026
- Prompt caching. OpenAI · 2026
- Gemini 3 Developer Guide. Google · 2026
- Gemini API Pricing. Google · 2026
- Context caching. Google · 2026
- Long context. Google · 2026
- xAI Models and Pricing. xAI · 2026
- Prompt Caching. xAI · 2026
- Ring Attention with Blockwise Transformers for Near-Infinite Context. Liu, H., et al. · 2024 · arXiv preprint
- RULER: What's the Real Context Size of Your Long-Context Language Models? Hsieh, C.-Y., et al. · 2024
- Lost in the Middle: How Language Models Use Long Contexts. Liu, N.F., et al. · 2023 · TACL 2023
- Needle In A Haystack: Pressure Testing LLMs. Kamradt, G. · 2023
- GQA: Training Generalized Multi-Query Transformer Models from Multi-Head Checkpoints. Ainslie, J., et al. · 2023 · EMNLP 2023
- Extending Context Window of Large Language Models via Positional Interpolation. Chen, S., et al. · 2023
- YaRN: Efficient Context Window Extension of Large Language Models. Peng, B., et al. · 2023
- FlashAttention: Fast and Memory-Efficient Exact Attention with IO-Awareness. Dao, T., Fu, D. Y., Ermon, S., Rudra, A., & Ré, C. · 2022 · NeurIPS 2022
- FlashAttention-3: Fast and Accurate Attention with Asynchrony and Low-precision. Shah, J., Bikshandi, G., Zhang, Y., Thakkar, V., Ramani, P., & Dao, T. · 2024
- Efficient Memory Management for Large Language Model Serving with PagedAttention. Kwon, W., et al. · 2023 · SOSP 2023
- Attention Is All You Need. Vaswani, A., et al. · 2017
- RoFormer: Enhanced Transformer with Rotary Position Embedding. Su, J., et al. · 2021
- Train Short, Test Long: Attention with Linear Biases Enables Input Length Generalization. Press, O., Smith, N. A., & Lewis, M. · 2022 · ICLR 2022
- Long-context LLMs Struggle with Long In-context Learning. Li, T., et al. · 2024