Tags: OpenClaw, AI Coding Plans, Cost Optimization, Agentic AI, API Pricing, Kimi K2.6, Qwen Cloud, Comparison

Best AI Plan for OpenClaw in 2026: 5 Providers Compared

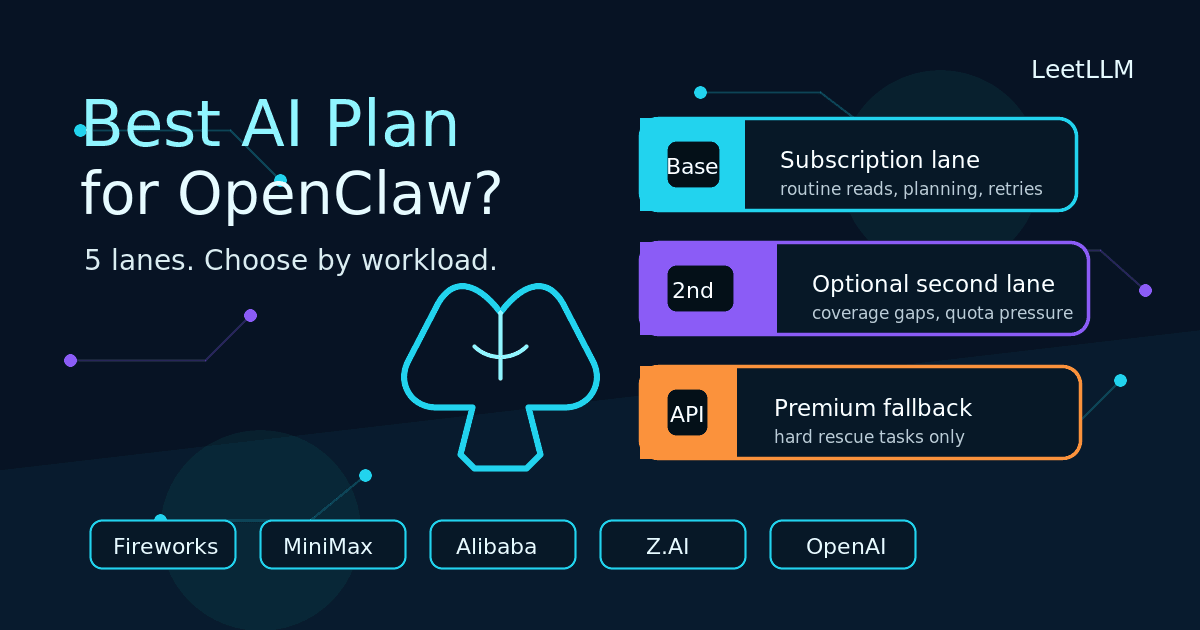

OpenClaw plan selection is mostly a routing and quota problem. This guide compares current Fireworks Fire Pass, MiniMax Token Plan, Z.AI, Alibaba Cloud Coding Plan, and OpenAI routes using official docs and the actual OpenClaw provider paths.

LeetLLM Team · April 4, 2026 · Updated May 21, 2026 · 21 min read

Imagine you shipped an OpenClaw agent that monitors an internal knowledge base, reads supplier lead-time APIs, and writes restock orders to a shared spreadsheet. You let it run for one afternoon. It reads 120 files, calls 23 external endpoints, retries 8 failed tool calls, and keeps a running summary of every inventory field it touched. At 6 PM you check the bill and realize you routed every single turn through a metered premium API. The agent works for the test case. Your wallet does not.

That scenario is why this article exists. OpenClaw is not a chat tab. It is an open-source agent framework that executes code, reads files, and calls APIs over long-running sessions. It holds large contexts, retries tool calls when they fail, fans out into many small requests, and sometimes stays in the loop for minutes. That workload shape turns plan selection into a routing and quota problem, not a model preference problem.

This article uses official vendor docs and official OpenClaw provider docs current on May 21, 2026. It only counts routes the docs describe for OpenClaw or supported coding tools. That matters because the commercial plan and the OpenClaw provider surface aren't always named the same way. Fireworks is the current sharp edge: Fireworks' Fire Pass page now points to Kimi K2.6 Turbo at $49/month, while OpenClaw's Fireworks provider page can still show the older Kimi K2.5 Turbo Fire Pass catalog row. MiniMax has a similar naming split: OpenClaw documents the OAuth route that lands on minimax-portal/*, while MiniMax's own docs have moved from Coding Plan naming to Token Plan naming. OpenAI can be usage-based API or Codex subscription, but the numbers below use the openai/gpt-5.4 direct API path that the current OpenClaw provider docs describe because that's still the clearest premium fallback to budget.[1][2][3][4][5][6][7]

Why OpenClaw Burns Tokens Differently

A single OpenClaw task can generate dozens of LLM calls. The agent reads a file (one call), decides what to do next (one call), executes a tool (one call), and reads the result (one call). A retry doubles the count. A long context window filled with previous file contents multiplies the token cost per call. By the end of an hour, a busy agent can easily consume more tokens than a human would type in a week of chat sessions.

The implication is simple: the cheapest plan for occasional chat is not necessarily the cheapest plan for an agent that runs all afternoon. You need to match the provider to the task class, not only to the model name.
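
A rough way to see why context growth dominates is to sum input tokens across calls when each call resends the accumulated history. The sketch below is illustrative only: the function name and every token figure are assumptions, not measurements from OpenClaw.

```python
def cumulative_input_tokens(num_calls: int, base_prompt: int, added_per_call: int) -> int:
    """Total input tokens when every call resends the full history so far.

    Call k (0-indexed) sends the base prompt plus whatever the k previous
    calls appended to the history. All figures are hypothetical.
    """
    total = 0
    for k in range(num_calls):
        total += base_prompt + k * added_per_call
    return total


# Hypothetical session: 40 calls, a 1,000-token base prompt, and 500 tokens
# of tool output appended to the history after each call.
growing = cumulative_input_tokens(40, base_prompt=1_000, added_per_call=500)
flat = 40 * 1_000  # the same 40 calls if the history never grew

print(f"growing context: {growing:,} input tokens")
print(f"flat context:    {flat:,} input tokens")
```

Under these assumed numbers the growing-context session sends roughly ten times the input tokens of the flat one, which is why trimming or summarizing history matters as much as the per-token price.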

What Actually Matters for OpenClaw

For this workflow, five questions matter more than leaderboard drama:

- Is there a documented OpenClaw route? A plan that looks cheap on paper is not useful if the setup path is fuzzy.

- What gets counted? Tokens, prompts, requests, and model calls are not interchangeable units.

- When do limits reset? A 5-hour window, a weekly quota, and a monthly quota create different failure modes.

- What is the usage scope? Several of these plans are explicitly personal-use or coding-tool-only.

- Where does metered pricing begin? Your expensive lane should be the exception path, not the default.

The model ref is the practical detail people forget. These are the clean starting routes from the vendor and OpenClaw docs:[1][2][4][8][5][6]

| Option | OpenClaw route | Why it matters |
|---|---|---|
| Fireworks Fire Pass | fireworks/accounts/fireworks/routers/kimi-k2p6-turbo | Fireworks' current Fire Pass setup uses this Kimi K2.6 Turbo router, but you should confirm your installed OpenClaw catalog because older docs can still show K2.5 |
| MiniMax Token Plan | minimax-portal/MiniMax-M2.7 | OpenClaw still documents this OAuth path even as MiniMax shifts public docs toward Token Plan naming |
| Z.AI GLM Coding Plan | zai/glm-5.1 | This is the default bundled GLM route |
| Alibaba Coding Plan | qwen/qwen3.5-plus | OpenClaw routes Alibaba Coding Plan through the bundled qwen provider |
| OpenAI API | openai/gpt-5.4 | Current documented OpenClaw direct API route for OpenAI budgeting; verify your installed OpenClaw catalog before deploying |
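
The routes above all read as a provider id followed by a model path. As a minimal sketch, assuming the provider is everything before the first slash (a convention inferred from these routes, not taken from OpenClaw's own parser), a helper like the hypothetical `split_model_ref` can group routes by provider before you check them against the installed catalog:

```python
from typing import Tuple


def split_model_ref(ref: str) -> Tuple[str, str]:
    """Split 'provider/model-path' on the first slash only.

    Assumption: the provider id never contains a slash, while the model
    path may (as in the Fireworks router path).
    """
    provider, sep, model_path = ref.partition("/")
    if not sep or not provider or not model_path:
        raise ValueError(f"expected 'provider/model', got {ref!r}")
    return provider, model_path


ROUTES = [
    "fireworks/accounts/fireworks/routers/kimi-k2p6-turbo",
    "minimax-portal/MiniMax-M2.7",
    "zai/glm-5.1",
    "qwen/qwen3.5-plus",
    "openai/gpt-5.4",
]

for route in ROUTES:
    provider, model_path = split_model_ref(route)
    print(f"{provider:15s} {model_path}")
```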

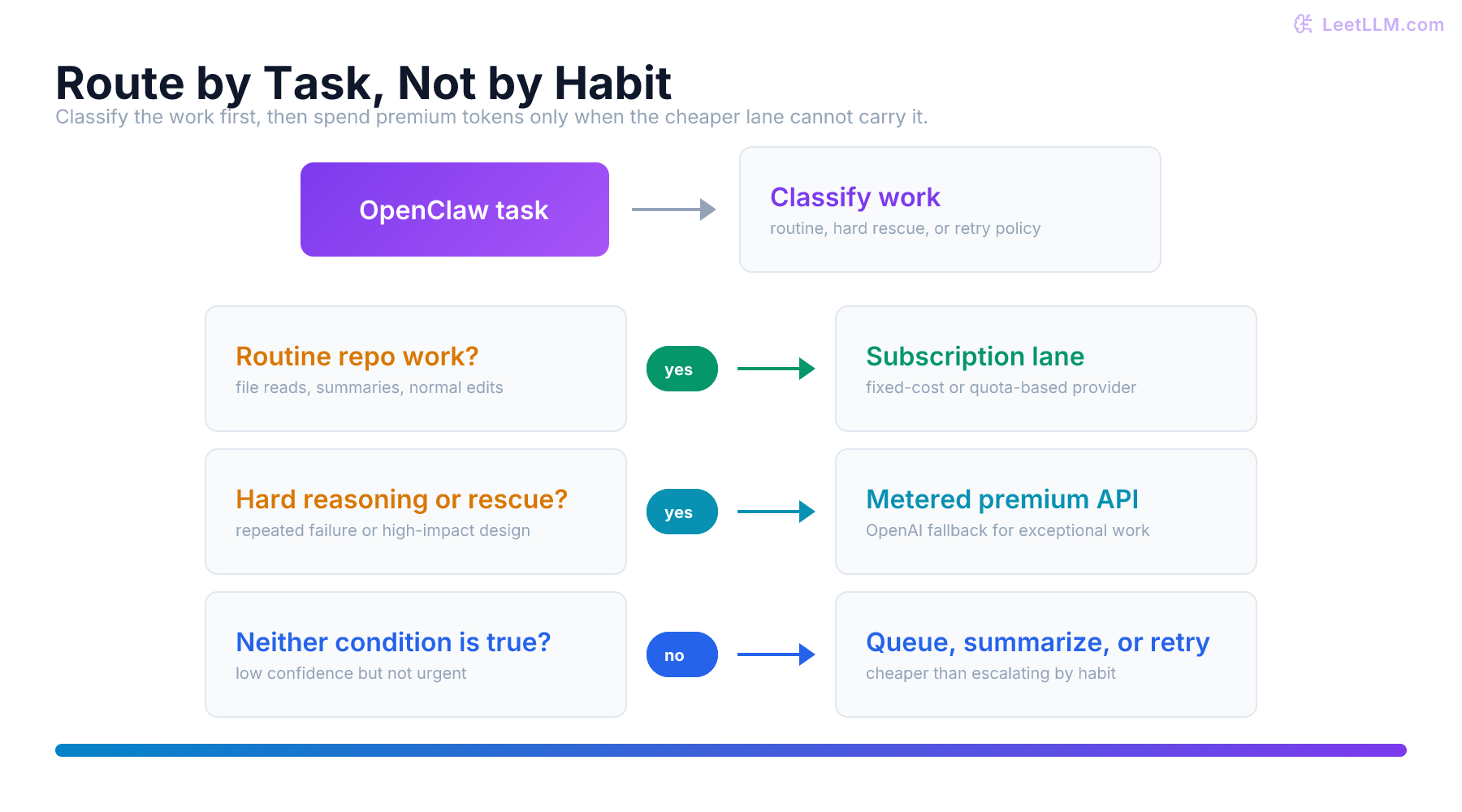

The decision flow looks like this:

That immediately rules out automatic buying. "Best" depends on whether you want the lowest monthly entry point, a fixed-cost Kimi lane, the largest M2.7 ceiling, the broadest bundled model menu, or the safest premium escape hatch.

The Five Credible Options

1. Fireworks Fire Pass

Fire Pass is now a fixed-cost Kimi lane, not the cheapest fixed plan in this set. Fireworks prices the current Early Access pass at $49 per month. The pass covers Kimi K2.6 Turbo only, gives zero per-token costs for that model, uses a dedicated Fire Pass API key, and documents a 256K context window for the router Fireworks tells OpenClaw users to configure.[1][2]

That matters because Fire Pass is not a benchmark story. It's a billing story. If most of your OpenClaw traffic is routine planning, repo search, file edits, and normal retry loops, the marginal cost of "one more Kimi turn" stays at zero while the pass is active.

In the inventory agent example, Fireworks Fire Pass would handle routine file reads, spreadsheet updates, and planning turns without adding per-token cost, as long as Kimi K2.6 Turbo is the right model for those tasks. The fixed monthly fee absorbs call volume that would otherwise burn metered tokens.

Buy it when: you specifically want a Kimi K2.6 Turbo fixed-cost lane for personal OpenClaw use.

Watch out for: it is explicitly personal-use only, it prohibits production workloads and team or shared usage, it only covers one model id, it requires the dedicated Fire Pass key, and Fireworks labels Fire Pass as an early-access experimental product whose availability and pricing can change.[1]

2. MiniMax Token Plan

MiniMax is now the messiest option to read correctly because the docs are mid-migration. The old coding-plan/* documentation now redirects into token-plan/*, while OpenClaw's provider docs still recommend the OAuth path that resolves to minimax-portal/MiniMax-M2.7 or minimax-portal/MiniMax-M2.7-highspeed.[3][4]

The stable part is the spend shape. MiniMax still has entry tiers at $10, $20, and $50 per month, and the cited Token Plan material treats that subscription as a broader package around the M2.7 family instead of the older coding-only naming.[9][3]

The operational details matter more than the label:

- text usage is governed by a 5-hour rolling window

- non-text models use daily quotas

- for purchases from March 23, 2026 onward, MiniMax applies a weekly usage quota equal to 10x the 5-hour quota

- it can also apply dynamic rate limiting during peak traffic

- separate high-speed variants exist when you specifically want MiniMax-M2.7-highspeed instead of the standard lane[3][4]
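
A rolling window behaves differently from a quota that resets on a fixed clock: capacity returns gradually as old requests age out. The sketch below is a generic client-side tracker, not MiniMax's accounting. The class name, the request-count unit, and the limit of 3 are assumptions chosen to keep the example small.

```python
from collections import deque


class RollingWindowQuota:
    """Count requests inside a trailing time window (generic sketch)."""

    def __init__(self, limit: int, window_seconds: float) -> None:
        self.limit = limit
        self.window_seconds = window_seconds
        self._events = deque()  # timestamps of accepted requests, oldest first

    def _evict(self, now: float) -> None:
        # Drop requests that have aged out of the trailing window.
        while self._events and now - self._events[0] >= self.window_seconds:
            self._events.popleft()

    def try_consume(self, now: float) -> bool:
        """Record one request at time `now` if the window still has room."""
        self._evict(now)
        if len(self._events) >= self.limit:
            return False
        self._events.append(now)
        return True

    def remaining(self, now: float) -> int:
        self._evict(now)
        return self.limit - len(self._events)


# Hypothetical limit of 3 requests per 5-hour window, times in seconds.
quota = RollingWindowQuota(limit=3, window_seconds=5 * 3600)
for t in (0, 60, 120, 180):
    print(t, quota.try_consume(t))
print(5 * 3600, quota.try_consume(5 * 3600))  # the request from t=0 has aged out
```

Tracking this locally lets a router back off before the provider starts rejecting or shaping traffic, although the provider's own counters remain the source of truth.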

That makes MiniMax useful when you already want the M2.7 family and you like a fixed monthly ceiling, but the doc surface is noisier than Fireworks or Alibaba right now. For the inventory agent, MiniMax would carry the bulk of the reasoning and supplier API parsing under a predictable monthly bill.

Buy it when: you want M2.7, optional high-speed variants, and a fixed monthly ceiling.

Watch out for: the naming is in transition, and the operational limits are rolling-window quota plus traffic shaping, not clean "unlimited" access.[3]

3. Z.AI GLM Coding Plan

Z.AI is still the most explicit about OpenClaw itself. The official OpenClaw guide says OpenClaw tasks use secondary scheduling and best-effort delivery, with coding-agent tasks getting priority under load. Under heavy usage, OpenClaw traffic can hit dynamic queuing and fair-use rate limits.[8]

The broader GLM Coding Plan docs also make the quota model unusually clear. All plans support GLM-5.1, GLM-5-Turbo, GLM-4.7, and GLM-4.5-Air. Published quotas are approximately:

| Plan | Approx. prompts / 5 hours | Approx. prompts / week |
|---|---|---|
| Lite | 80 | 400 |
| Pro | 400 | 2000 |
| Max | 1600 | 8000 |

There's a second catch: Z.AI says GLM-5.1 and GLM-5-Turbo consume quota faster than the baseline models. The cited docs say they're normally billed at 3x during peak hours and 2x during off-peak hours, with a limited-time benefit that keeps off-peak usage at 1x through the end of June.[10][11]
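
To see what the multipliers do to the published quotas, the sketch below divides each 5-hour quota by the burn multiplier. It assumes that "3x" means each GLM-5.1 prompt consumes three prompts of quota, which is one plausible reading of the docs rather than a confirmed accounting rule. The function name is hypothetical.

```python
# Approximate published prompts per 5-hour window, from the table above.
FIVE_HOUR_QUOTA = {"Lite": 80, "Pro": 400, "Max": 1600}


def effective_prompts(plan: str, multiplier: int) -> int:
    """Whole prompts that fit in one 5-hour window at a given burn multiplier.

    Assumption: a multiplier of N means each prompt costs N units of quota.
    """
    return FIVE_HOUR_QUOTA[plan] // multiplier


for plan in FIVE_HOUR_QUOTA:
    peak = effective_prompts(plan, 3)      # GLM-5.1 during peak hours
    off_peak = effective_prompts(plan, 2)  # GLM-5.1 off-peak, without the promotion
    print(f"{plan:4s} peak={peak:4d} off-peak={off_peak:4d}")
```

Under that reading, a Lite plan supports about 26 GLM-5.1 prompts per 5-hour window at peak, which is why routing routine turns to a baseline model matters.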

That makes Z.AI useful when you specifically want a GLM-centric lane and you're willing to route routine work to cheaper GLM tiers while reserving glm-5.1 for hard tasks. In the inventory example, Z.AI could handle the daily restock summaries on GLM-4.5-Air while escalating a complex supplier integration failure to GLM-5.1.

Buy it when: you want the most explicit OpenClaw-specific documentation and you prefer the GLM family.

Watch out for: this isn't a pure unlimited lane. It's a quota-shaped plan with best-effort scheduling and model-weighted burn rates.[8][10]

4. Alibaba Cloud Model Studio Coding Plan

Alibaba is the biggest correction in this article. Model Studio does now belong in the fixed-plan conversation.

The official English Coding Plan overview lists Pro at $50 per month with 6,000 requests per 5 hours, 45,000 requests per week, and 90,000 requests per month. It also says the Lite plan stopped accepting new subscriptions on March 20, 2026, while existing Lite users keep their renewal and upgrade rights.[12]
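
With three overlapping windows, it helps to know which one binds first for an agent that runs steadily. The sketch below converts each Pro quota into an average daily ceiling. The 30-day month and the even-spread assumption are mine, not Alibaba's.

```python
# Published Pro quotas as (requests, window length in hours).
# The 30-day month is an assumption for illustration.
PRO_QUOTAS = {
    "5-hour": (6_000, 5),
    "weekly": (45_000, 7 * 24),
    "monthly": (90_000, 30 * 24),
}


def daily_ceiling(requests: int, window_hours: int) -> float:
    """Average requests per day if the window's quota is spread evenly."""
    return requests * 24 / window_hours


ceilings = {name: daily_ceiling(*quota) for name, quota in PRO_QUOTAS.items()}
binding = min(ceilings, key=ceilings.get)

for name, per_day in ceilings.items():
    print(f"{name:8s} ~{per_day:,.0f} requests/day")
print("binding window for steady use:", binding)
```

For steady daily use the monthly quota is the tightest at about 3,000 requests per day, while the 5-hour window mainly constrains bursts.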

The OpenClaw side is cleaner than the older Model Studio naming suggested. OpenClaw now routes Alibaba's text models through the bundled qwen provider, with separate auth choices for Coding Plan versus Standard pay-as-you-go endpoints. For Coding Plan, the global auth choice is qwen-api-key, the endpoint is https://coding-intl.dashscope.aliyuncs.com/v1, and the default example model is qwen/qwen3.5-plus.[5][12]

The bundled qwen catalog includes qwen/qwen3.5-plus, qwen/MiniMax-M2.5, qwen/glm-5, and qwen/kimi-k2.5 on supported endpoints.[5] Taken together, that makes Alibaba the broadest single-provider subscription surface in this comparison if you want multiple model families behind one provider surface instead of buying separate subscriptions everywhere. For the inventory agent, that means you could route routine tasks to qwen3.5-plus, experiment with MiniMax-M2.5 for a new supplier parser, and keep everything under one plan-specific key.

OpenClaw's cited Qwen docs also list qwen/qwen3.6-plus, but they explicitly steer that model toward Standard pay-as-you-go endpoints rather than treating it as the default Coding Plan route.[5] That's why the budget comparison here still centers on qwen/qwen3.5-plus.

Two operational details matter:

- Coding Plan requires a plan-specific key in the sk-sp-... format and the coding base URL, not the normal pay-as-you-go key and endpoint.[12][13]

- When quota runs out, Alibaba says calls fail. It does not silently spill over into pay-as-you-go billing.[13]

Buy it when: you want the broadest bundled model menu behind one OpenClaw provider route and a fixed monthly ceiling.

Watch out for: the plan-specific key and endpoint rules are easy to get wrong, and quota exhaustion is a hard stop, not a billing fallback.[13]

5. OpenAI API

OpenAI is still the premium escape hatch, not the lane you want handling every filesystem read.

OpenClaw's current OpenAI provider docs point direct API usage at openai/gpt-5.4, even though OpenAI's own docs now surface GPT-5.5 as the latest API model. For the documented OpenClaw path used here, GPT-5.4 standard text pricing is $2.50 / 1M input tokens, $0.25 / 1M cached input tokens, and $15.00 / 1M output tokens.[7][14] If your installed OpenClaw catalog exposes provider-specific aliases beyond that provider page, verify the exact route and pricing before budgeting it.[6]
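
The quoted prices translate into a per-call cost as sketched below. The function name is hypothetical, and the sketch assumes cached tokens are a subset of the input tokens and are billed at the cached rate instead of the standard input rate.

```python
# GPT-5.4 standard text prices quoted above, in USD per 1M tokens.
INPUT_PER_M = 2.50
CACHED_INPUT_PER_M = 0.25
OUTPUT_PER_M = 15.00


def call_cost_usd(input_tokens: int, output_tokens: int, cached_input_tokens: int = 0) -> float:
    """Cost of one call; cached_input_tokens is the cached share of input_tokens."""
    if not 0 <= cached_input_tokens <= input_tokens:
        raise ValueError("cached_input_tokens must be between 0 and input_tokens")
    fresh = input_tokens - cached_input_tokens
    micro_usd = (
        fresh * INPUT_PER_M
        + cached_input_tokens * CACHED_INPUT_PER_M
        + output_tokens * OUTPUT_PER_M
    )
    return micro_usd / 1_000_000


# A 15,000-token prompt with a 3,000-token answer, with and without caching.
print(f"uncached:        ${call_cost_usd(15_000, 3_000):.4f}")
print(f"10k tokens cached: ${call_cost_usd(15_000, 3_000, cached_input_tokens=10_000):.4f}")
```

Output tokens dominate this example, so caching helps with long prompts but does little for output-heavy runs.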

That's why OpenAI works well as the premium lane in an OpenClaw stack. It's easy to justify for architectural debugging, high-stakes reasoning, or hard codegen. It's much harder to justify as the default path for every routine turn. In the inventory example, you would reserve OpenAI for the one complex architectural decision, like refactoring the agent's retry logic when a supplier API changes its schema, not for the hundredth file read.

If you want flat-cost OpenAI access inside OpenClaw, that's the separate Codex subscription route under openai-codex/*. For cost planning, the direct openai/* API path is still the cleanest premium fallback because the pricing is explicit.[6]

Buy it when: you want the highest-confidence fallback for the hardest tasks.

Watch out for: long-context sessions and output-heavy runs make this the fastest-growing line item in the stack.[7][14]

The Head-to-Head Table

| Option | Spend shape | OpenClaw route | Best role in the stack | Main downside |
|---|---|---|---|---|
| Fireworks Fire Pass | Monthly fixed fee | fireworks/accounts/fireworks/routers/kimi-k2p6-turbo | Kimi fixed-cost daily driver | One model, personal-use only, early access |
| MiniMax Token Plan | Monthly fixed fee with rolling-window text quotas | minimax-portal/MiniMax-M2.7 | M2.7-first monthly ceiling | Naming transition and peak-hour shaping |
| Z.AI GLM Coding Plan | Fixed subscription with model-weighted quota burn | zai/glm-5.1 | GLM-focused primary lane | Best-effort scheduling under load |
| Alibaba Coding Plan | Monthly fixed fee with request quotas | qwen/qwen3.5-plus | Broadest single-provider subscription lane | Hard stop at quota, easy to misconfigure |
| OpenAI API | Pure metered API | openai/gpt-5.4 | Premium escape hatch | Metered; verify route-specific pricing before use |

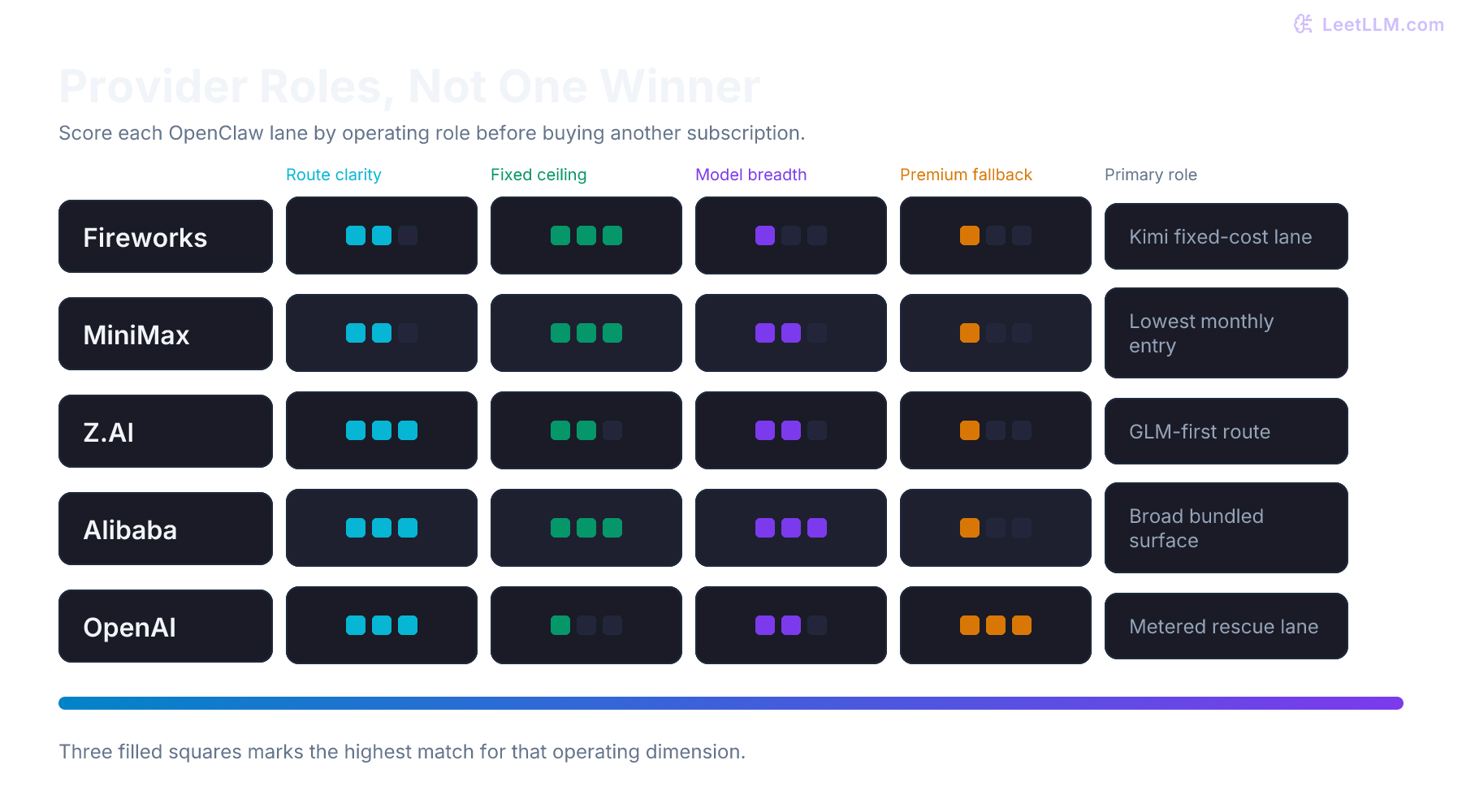

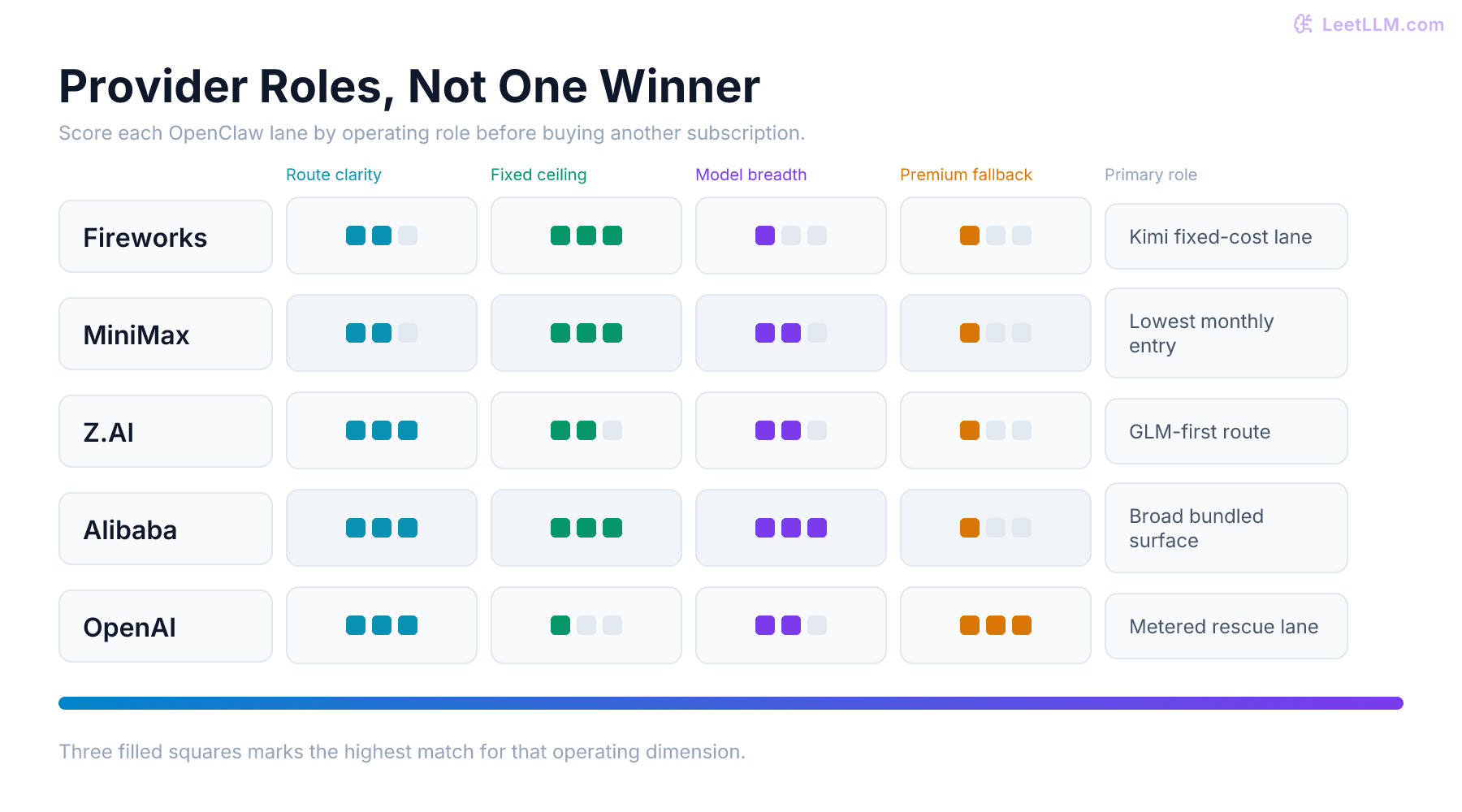

The useful pattern is simple:

- Fireworks wins when you specifically want a fixed-cost Kimi K2.6 lane.

- MiniMax wins on the lowest monthly entry point if M2.7 fits your workload.

- Z.AI wins if you specifically want GLM.

- Alibaba wins on breadth inside one bundled OpenClaw provider surface.

- OpenAI wins when you need a premium fallback, not when you need a budget default.

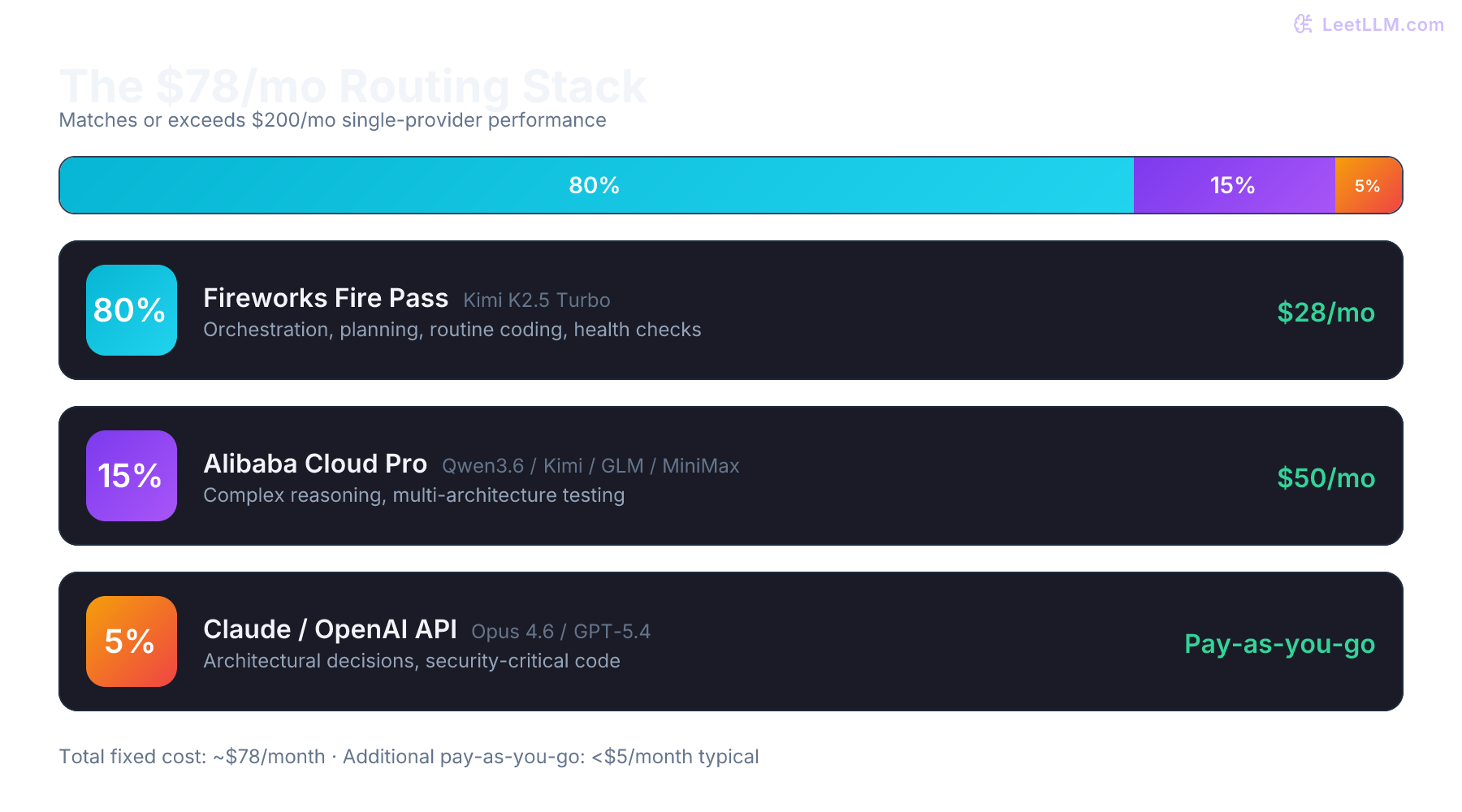

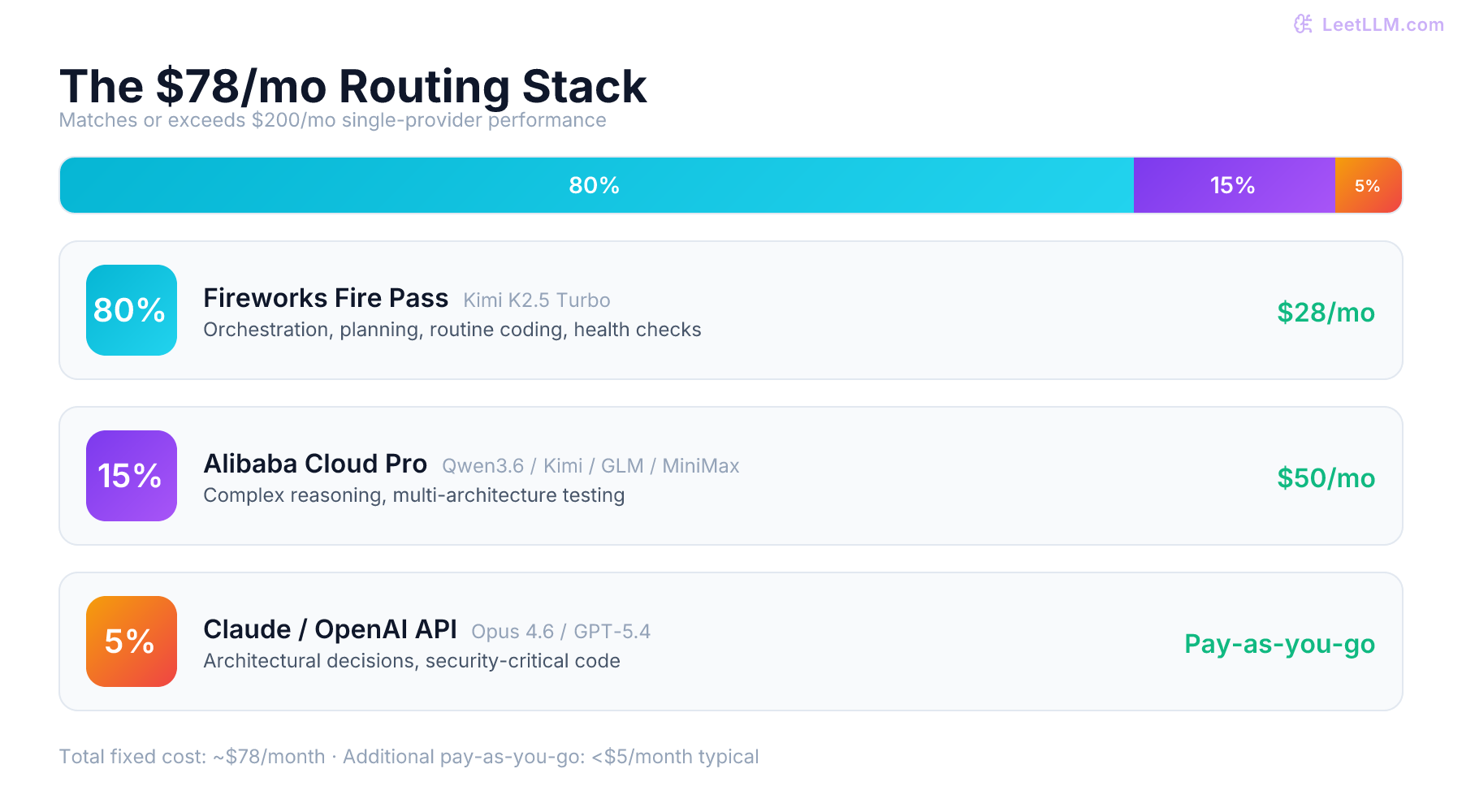

A Worked Cost Example

Let's put real numbers on the inventory agent from the opening. Assume one afternoon produces:

- 120 file reads at ~400 input tokens and ~100 output tokens each

- 23 supplier API calls at ~800 input tokens and ~200 output tokens each

- 8 retry loops after failed tool calls, each adding one extra call

- 1 complex architectural decision that needs deeper reasoning at ~15,000 input tokens and ~3,000 output tokens

Total for the afternoon, before counting the token cost of the eight retries: roughly 81,000 input tokens and 20,000 output tokens, spread across about 150 model calls.

Here's how that afternoon bills on three representative stacks before cached-input discounts, priority pricing, or a session that crosses the long-context threshold:

| Stack | Primary lane | Premium lane | Estimated model cost for that afternoon |
|---|---|---|---|
| Fireworks + OpenAI fallback | Fire Pass ($49/month, Kimi K2.6 Turbo) | One GPT-5.4 call for the hard decision (~$0.08) | ~$0.08 marginal after the pass is active |
| MiniMax + OpenAI fallback | Token Plan ($20/month example, covers M2.7 quota) | One GPT-5.4 call for the hard decision (~$0.08) | ~$0.08 marginal after the plan is active |
| OpenAI only | Every call hits metered API | Same lane for everything | ~$0.50 on GPT-5.4 |

The lesson is not that OpenAI is inherently expensive. For one small afternoon, direct API usage can look cheap. The problem appears when the same agent runs every day, keeps more context, retries more often, or crosses long-context pricing. A subscription lane absorbs repeated routine turns so the premium lane stays an exception instead of becoming the default.
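
The afternoon's arithmetic can be reproduced with a short script. This sketch sums the listed line items directly and leaves out the eight retries because their token sizes are not given, so its totals can differ somewhat from the rounded figures in the prose. The `LineItem` type and function names are illustrative.

```python
from dataclasses import dataclass

# GPT-5.4 standard text prices used in this article, USD per 1M tokens.
INPUT_USD_PER_M = 2.50
OUTPUT_USD_PER_M = 15.00


@dataclass(frozen=True)
class LineItem:
    name: str
    calls: int
    input_tokens: int   # per call
    output_tokens: int  # per call


ITEMS = [
    LineItem("file reads", 120, 400, 100),
    LineItem("supplier API calls", 23, 800, 200),
    LineItem("hard decision", 1, 15_000, 3_000),
]


def totals(items):
    """Return (input_tokens, output_tokens) summed over all calls."""
    total_in = sum(i.calls * i.input_tokens for i in items)
    total_out = sum(i.calls * i.output_tokens for i in items)
    return total_in, total_out


def metered_cost(input_tokens: int, output_tokens: int) -> float:
    return (input_tokens * INPUT_USD_PER_M + output_tokens * OUTPUT_USD_PER_M) / 1_000_000


all_in, all_out = totals(ITEMS)
hard_in, hard_out = totals([i for i in ITEMS if i.name == "hard decision"])

print(f"tokens: {all_in:,} in / {all_out:,} out")
print(f"OpenAI only:           ${metered_cost(all_in, all_out):.2f}")
print(f"subscription + 1 call: ${metered_cost(hard_in, hard_out):.2f}")
```

Multiplying the per-afternoon figures by the number of days the agent runs, and by the context growth you expect, is what turns a small difference into a meaningful monthly one.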

The Practical Routing Strategy

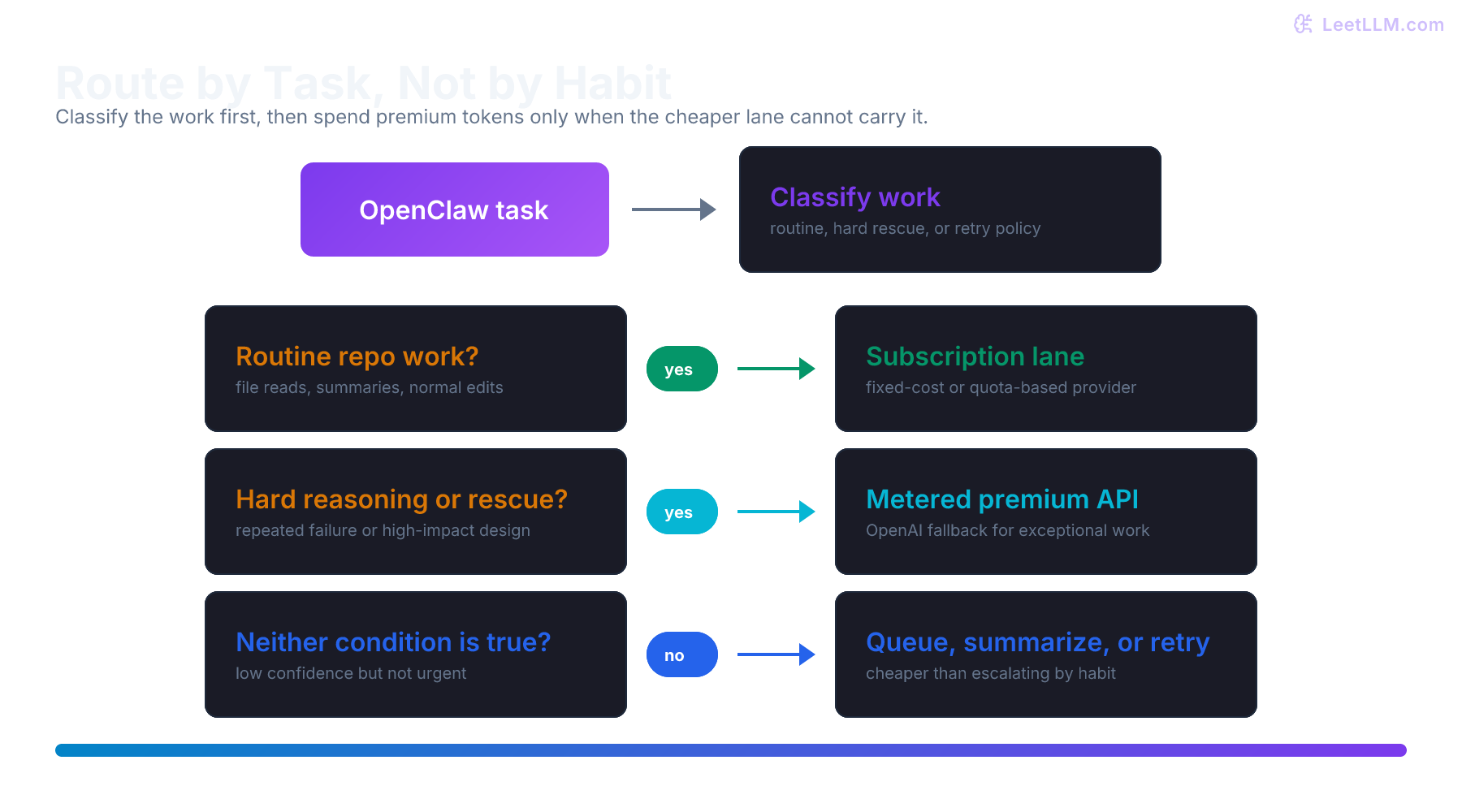

The better default isn't "buy everything." It's this:

- One primary subscription lane. Usually choose one of Fireworks, MiniMax, Z.AI, or Alibaba as the lane that handles routine traffic.

- One optional second lane. Add this only if you can name the exact failure mode you're solving: a second model family, a second region, or a fallback when the first plan hits queueing or fair-use limits.

- One metered premium lane. Put OpenAI last. That keeps premium pricing attached to the hardest work instead of every turn.

This pattern is better than trying to force one plan to do everything, and it's also better than buying three subscriptions on day one. Most people need one plan plus one escape hatch. The second subscription only pays for itself when you know why it's there.

Buyer Profiles

Lowest monthly entry point

- MiniMax Starter or Plus as the primary lane, depending on how much M2.7 quota you need

- OpenAI only for manual or policy-driven escalation

This is the simplest setup if you want a low fixed monthly bill and M2.7 is a good fit for your routine tasks.

Kimi fixed-cost lane

- Fireworks Fire Pass as the primary lane

- OpenAI only for manual or policy-driven escalation

This is the cleanest setup if you specifically want Kimi K2.6 Turbo and you're fine with the personal-use restriction.

Best monthly ceiling

- MiniMax Plus or Max as the primary lane

- OpenAI as the premium fallback

This is the cleanest fit if your main requirement is a predictable monthly bill and you specifically want the MiniMax M2.7 family.

Broadest one-plan setup

- Alibaba Coding Plan Pro as the primary lane

- Route through the bundled qwen provider

This is the right fit if you want Qwen, GLM, Kimi, and MiniMax-family options exposed behind one provider surface, and you're willing to respect the plan-specific key and endpoint rules.

GLM-first setup

- Z.AI as the primary lane

- OpenAI as the premium fallback

This is a good fit if you want GLM-5.1 or GLM-4.7 to carry most of the work and you can tolerate best-effort scheduling on OpenClaw traffic.

A Config Shape That Actually Makes Sense

The auth step differs by provider, but the actual OpenClaw routing object is small:

```json
{
  "agents": {
    "defaults": {
      "model": {
        "primary": "fireworks/accounts/fireworks/routers/kimi-k2p6-turbo",
        "fallbacks": [
          "qwen/qwen3.5-plus",
          "openai/gpt-5.4"
        ]
      }
    }
  }
}
```

That's a real OpenClaw-style shape, not pseudo-config: OpenClaw reads agents.defaults.model.primary and agents.defaults.model.fallbacks when it builds the candidate chain.[15] Before you paste a route into production config, verify that the installed provider catalog exposes it:

```shell
openclaw models list --provider fireworks
openclaw models list --provider qwen
openclaw models list --provider openai
```

This check matters because vendor plan pages can move faster than the bundled OpenClaw provider docs.[1][2][5][6]

If your Fireworks provider list still exposes fireworks/accounts/fireworks/routers/kimi-k2p5-turbo instead of the current K2.6 route, use the route your installed OpenClaw build reports until the provider catalog catches up.

The routing rule is the important part:

- fixed-cost or quota-based lane first

- optional second subscription lane second

- metered API last

If you're MiniMax-first, swap the primary model to minimax-portal/MiniMax-M2.7 after Coding Plan OAuth setup. If you're GLM-first, swap it to zai/glm-5.1.[4][8]
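
The ordering rule can be pictured as walking a candidate chain until one lane answers. The sketch below is not OpenClaw's failover implementation. `LaneUnavailable`, `run_with_fallbacks`, and `fake_call` are hypothetical names, and the fake call simply pretends the primary lane is out of quota.

```python
from typing import Callable, List, Sequence, Tuple


class LaneUnavailable(Exception):
    """Hypothetical error for a lane blocked by quota, queueing, or rate limits."""


def run_with_fallbacks(
    primary: str,
    fallbacks: Sequence[str],
    call: Callable[[str], str],
) -> Tuple[str, str]:
    """Try the primary ref, then each fallback in order.

    Returns (model_ref_used, result). Raises RuntimeError if every lane fails.
    """
    failures: List[str] = []
    for ref in [primary, *fallbacks]:
        try:
            return ref, call(ref)
        except LaneUnavailable as exc:
            failures.append(f"{ref}: {exc}")
    raise RuntimeError("all lanes failed: " + "; ".join(failures))


def fake_call(ref: str) -> str:
    # Stand-in for a real model call: pretend the primary lane is out of quota.
    if ref.startswith("fireworks/"):
        raise LaneUnavailable("quota exhausted")
    return f"ok from {ref}"


used, result = run_with_fallbacks(
    "fireworks/accounts/fireworks/routers/kimi-k2p6-turbo",
    ["qwen/qwen3.5-plus", "openai/gpt-5.4"],
    fake_call,
)
print(used, "->", result)
```

Because the metered route sits last in the list, it is reached only after both subscription lanes have failed.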

Guardrails for Budget and Reliability

OpenClaw routing should include policy, not only model ids. Without policy, retries can quietly turn a cheap plan into an expensive run.

Use four guardrails:

| Guardrail | What to enforce | Why it matters |

|---|---|---|

| Task class | Route routine edits, search, and summaries to the subscription lane | Keeps metered models away from low-value turns |

| Context cap | Escalate only after summarizing or trimming context | Prevents long-context pricing from becoming the default |

| Retry budget | Limit automatic retries per task class | Agent loops can multiply quota burn quickly |

| Failover reason | Log why a fallback model was used | Makes cost spikes debuggable after the run |

For fixed-plan providers, also track remaining quota in the UI or logs. Fireworks has the simplest model-specific pass, while MiniMax, Z.AI, and Alibaba each have quota shapes that can fail differently. MiniMax can slow down through rolling-window and dynamic limits. Z.AI can queue OpenClaw work under load. Alibaba can hard-fail when request quota is exhausted.[3][8][13]

For OpenAI, the key control is escalation discipline. A good rule is: call the premium lane only when the cheaper lane produced a low-confidence plan, hit a repeated tool failure, or needs help with a high-impact architectural decision. Don't use it for every directory listing, file read, or low-risk copy edit.
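
The retry budget and the escalation rule can be written down as a small policy. This is a sketch under stated assumptions: the task classes, the budget numbers, the threshold of two tool failures, and all names are hypothetical, and OpenClaw does not necessarily expose hooks shaped like this.

```python
from dataclasses import dataclass

# Hypothetical automatic-retry budgets per task class.
RETRY_BUDGET = {"routine": 1, "integration": 2, "architecture": 3}


@dataclass
class TaskState:
    task_class: str
    retries_used: int = 0
    low_confidence_plan: bool = False
    tool_failures: int = 0
    high_impact: bool = False


def may_retry(state: TaskState) -> bool:
    """Allow another automatic retry only while the class budget has room."""
    return state.retries_used < RETRY_BUDGET.get(state.task_class, 0)


def escalation_reason(state: TaskState) -> str:
    """Return a reason to log if the premium lane is justified, else ''."""
    if state.low_confidence_plan:
        return "low-confidence plan from cheaper lane"
    if state.tool_failures >= 2:
        return "repeated tool failure"
    if state.high_impact and state.task_class == "architecture":
        return "high-impact architectural decision"
    return ""


file_read = TaskState("routine", retries_used=1)
schema_change = TaskState("architecture", high_impact=True)

print(may_retry(file_read), repr(escalation_reason(file_read)))
print(may_retry(schema_change), repr(escalation_reason(schema_change)))
```

Returning the reason as a string doubles as the failover log entry, so a cost spike can be traced back to the rule that triggered it.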

A provider stack is only cheaper if the router knows which work is cheap.

The common mistake is treating "unlimited" as a capacity promise. These plans still have scopes, queues, quota windows, weighted burn rates, or model-specific coverage. Read the operating limit before routing agent loops through it.

Practical Buying Order

Starting from scratch:

- Start with MiniMax Starter or Plus if the goal is the lowest monthly fixed entry point and you want the M2.7 family.[9][3][4]

- Choose Fireworks Fire Pass instead if you specifically want Kimi K2.6 Turbo with no per-token charge while the pass is active.[1][2]

- Choose Alibaba Pro if you want the broadest bundled model surface behind one OpenClaw provider route.[12][5]

- Choose Z.AI if you specifically want GLM and you're comfortable working around best-effort scheduling on OpenClaw traffic.[8][10]

- Keep OpenAI out of the default lane and reserve it for the expensive edge cases where the premium model is worth paying for.[7][14]

That's the practical answer. Most OpenClaw users don't need five active providers. They need one good subscription lane, an optional second lane only if a real gap appears, and one premium API escape hatch.

Self-Check: Pick a Stack for Your Agent

You are building a new agent that processes customer account requests for a SaaS support team. It reads billing policies, checks account status, drafts CRM updates, and escalates exceptions to a human queue. The team expects 200-400 requests per day. Each request triggers 3-5 file reads, 1-2 API calls, and occasional retry loops.

Which provider stack do you pick, and why?

Answer sketch

- A MiniMax Starter or Plus primary lane is the lowest monthly starting point if the billing-policy and account reads are routine and M2.7 is good enough for the workload.

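- A Fireworks Fire Pass primary lane is a poor fit for the team deployment itself, because the pass is personal-use only and prohibits production workloads and team or shared usage. It only makes sense for a single developer prototyping the agent on Kimi K2.6 Turbo.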

- An Alibaba Pro primary lane makes sense if you want to experiment with multiple model families (Qwen for policy parsing, MiniMax-M2.5 for ticket summarization) behind one subscription.

- OpenAI should only enter the stack for the edge case where a customer dispute requires deep reasoning about policy exceptions or contract terms.

- A second subscription lane is unnecessary on day one. Add it only when you can name the exact failure mode it solves, such as the primary plan hitting quota during a launch-week support surge.
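
Before picking a plan for this scenario, it is worth sizing the daily call volume. The sketch below assumes one model call per file read or API call and ignores retries and planning turns, so it is a lower bound. The function name is hypothetical.

```python
def daily_calls(requests: int, file_reads: int, api_calls: int) -> int:
    """Calls per day before retries, assuming one model call per tool step."""
    return requests * (file_reads + api_calls)


low = daily_calls(200, file_reads=3, api_calls=1)
high = daily_calls(400, file_reads=5, api_calls=2)

print(f"{low:,} to {high:,} calls/day")
print(f"{low * 30:,} to {high * 30:,} calls per 30-day month")
```

If each call counts as one plan request, the high end lands near 84,000 requests per month, close to the 90,000 monthly ceiling published for Alibaba Pro, so retry-heavy weeks could exhaust that quota.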

Key Takeaways

- Check the OpenClaw route, not only the vendor name. The plan you buy and the provider id you configure are sometimes different surfaces.

- MiniMax is the lowest monthly entry point if you specifically want M2.7. The bigger correction is that the docs are in a Coding Plan to Token Plan transition, so the quota rules matter more than the marketing label.[9][3][4]

- Fireworks is the Kimi fixed-cost lane, not the generic cheapest lane. Fire Pass is useful when Kimi K2.6 Turbo is your intended daily driver and the personal-use limits fit your use case.[1]

- Z.AI is the GLM lane with good OpenClaw-specific docs. It isn't the right fit if you need hard service levels under load.[8]

- Alibaba is now a real coding-plan option, not only a pay-as-you-go API. Through OpenClaw's qwen provider, it's the broadest single subscription lane in this comparison.[12][5]

- OpenAI is still the premium fallback lane. Use it selectively, because long-context and output-heavy sessions get expensive fast.[7][14]

After you pick a plan, the next skill is teaching your agent to use tools safely and efficiently. The routing stack protects your budget, but the agent's reasoning loop determines whether it wastes tokens on useless retries. A well-routed agent with poor prompt design will still burn through quota. A well-prompted agent with poor routing will still empty your wallet. You need both, and the curriculum's agent engineering sections show how to build the reasoning side once the infrastructure side is in place.

References

- Fireworks Fire Pass. Fireworks AI, 2026.
- MiniMax Token Plan Pricing. MiniMax, 2026.
- FAQs. MiniMax, 2026.
- Fireworks. OpenClaw, 2026.
- Model failover. OpenClaw, 2026.
- MiniMax. OpenClaw, 2026.
- GLM Coding Plan Overview. Z.AI, 2026.
- GLM Coding Plan FAQ. Z.AI, 2026.
- OpenClaw - Overview. Z.AI, 2026.
- Alibaba Cloud Model Studio: Coding Plan overview. Alibaba Cloud, 2026.
- Alibaba Cloud Model Studio: Coding Plan FAQ. Alibaba Cloud, 2026.
- Qwen. OpenClaw, 2026.
- OpenAI API Pricing. OpenAI, 2026.
- GPT-5.4 Model. OpenAI, 2026.
- OpenAI. OpenClaw, 2026.