
Run Qwen3.6 Locally with Unsloth GGUF

Qwen3.6 adds newer open-weight 35B-A3B and 27B models focused on coding and agent work. This guide shows how to run the Unsloth GGUF builds with llama.cpp, choose the right quant, and expose a local OpenAI-compatible endpoint.

LeetLLM Team · May 13, 2026 · 38 min read


Imagine you're building a coding assistant for a private inventory system. You want it to read local files, explain a failing test, and suggest a patch, but you don't want to send source code, customer names, order histories, or warehouse rules to a hosted model. Running Qwen3.6 locally gives you a private inference loop on your own machine.

The old Qwen3.5 local guide on LeetLLM focused on Ollama because that was the cleanest beginner path at the time. Qwen3.6 changes the recommendation. The newer Qwen3.6 open-weight releases are aimed at coding and agent workflows, and the Unsloth GGUF builds give you direct, exact model tags for llama.cpp and Unsloth Studio workflows.[1][2][3]

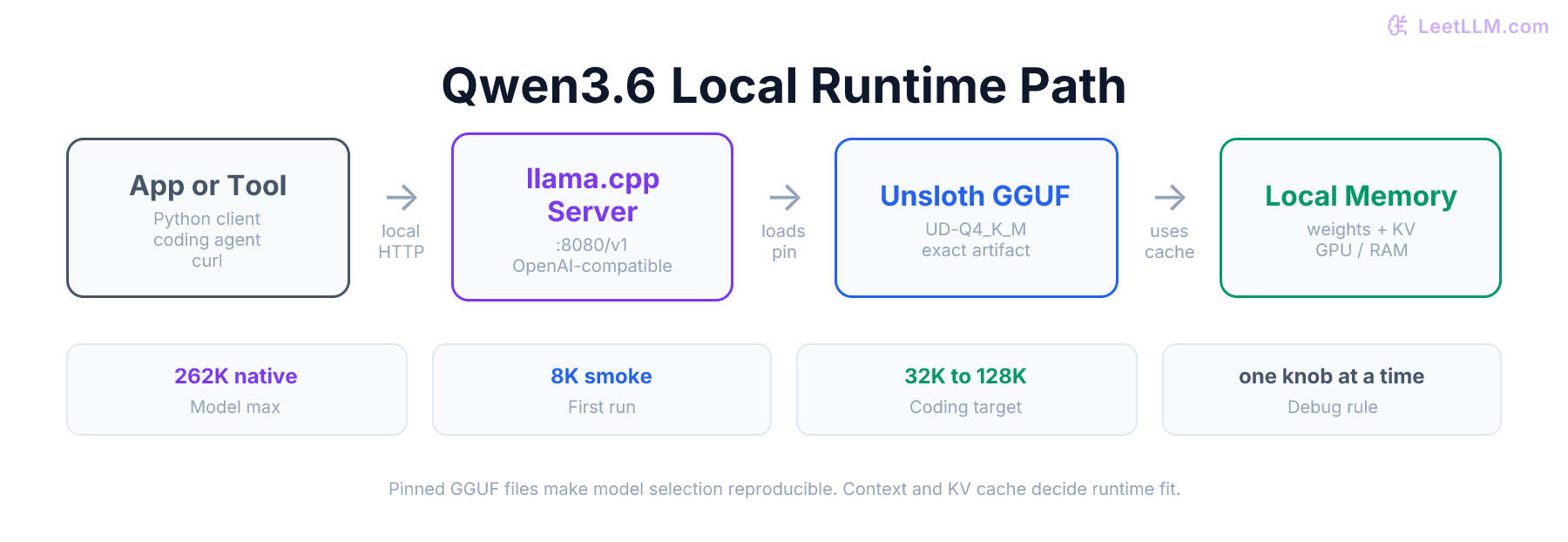

This guide uses llama.cpp as the main runtime and Unsloth GGUF as the model source. That path gives you three practical advantages: exact quant selection, clear hardware debugging, and a local OpenAI-compatible endpoint you can point coding tools or Python scripts at.

💡 Newer-model note: If you already use the older Qwen3.5 Ollama guide, keep it. It still teaches Ollama tags, Modelfiles, context sizing, and local API basics. If you're choosing a fresh model today, start here with Qwen3.6 and Unsloth GGUF.

What Changed in Qwen3.6

Qwen3.6 is the newer Qwen family release after Qwen3.5. The official Qwen repository says Qwen3.6 prioritizes stability, real-world utility, agentic coding, and a feature called thinking preservation, where reasoning context can carry across conversation history.[1]

Two open-weight models matter most for local users:

| Model | Shape | Why you would choose it |
|---|---|---|
| `Qwen3.6-35B-A3B` | 35B total parameters, 3B activated | Sparse Mixture-of-Experts model for coding and agent work |
| `Qwen3.6-27B` | 27B dense parameters | Simpler dense model with strong coding scores and fewer MoE-specific tuning knobs |

A dense model runs every parameter path for every token. A Mixture-of-Experts (MoE) model stores many expert blocks but activates only a subset for each token. Qwen's 35B-A3B model has 35B total parameters, but only 3B are activated per token. Its model card lists 256 experts, with 8 routed experts plus 1 shared expert active during inference.[4]

That distinction explains why the local fit question is subtle. The 35B-A3B model doesn't compute like a dense 35B model for every token, but you still need enough memory to store the quantized weights, the key-value cache, runtime buffers, and any image projection file you load.
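To make the storage-versus-compute split concrete, here is a small arithmetic sketch that uses only the figures quoted above (35B total, 3B activated, 8 routed experts out of 256). The variable and function names are illustrative, not taken from any Qwen or llama.cpp API.

```python
# Figures quoted from the model card discussion above.
TOTAL_PARAMS = 35e9      # parameters that must be stored
ACTIVE_PARAMS = 3e9      # parameters used for each token
ROUTED_EXPERTS = 256     # routed experts listed on the model card
ROUTED_ACTIVE = 8        # routed experts selected per token


def share(part: float, whole: float) -> float:
    """Return part/whole as a fraction between 0 and 1."""
    return part / whole


param_share = share(ACTIVE_PARAMS, TOTAL_PARAMS)
routed_share = share(ROUTED_ACTIVE, ROUTED_EXPERTS)

# Memory planning follows TOTAL_PARAMS; per-token compute follows ACTIVE_PARAMS.
print(f"Parameters touched per token: {param_share:.1%} of the stored weights")
print(f"Routed experts selected per token: {routed_share:.1%} of {ROUTED_EXPERTS}")
```

The takeaway is that less than a tenth of the stored weights do work on any single token, yet all of them still have to live somewhere in memory.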

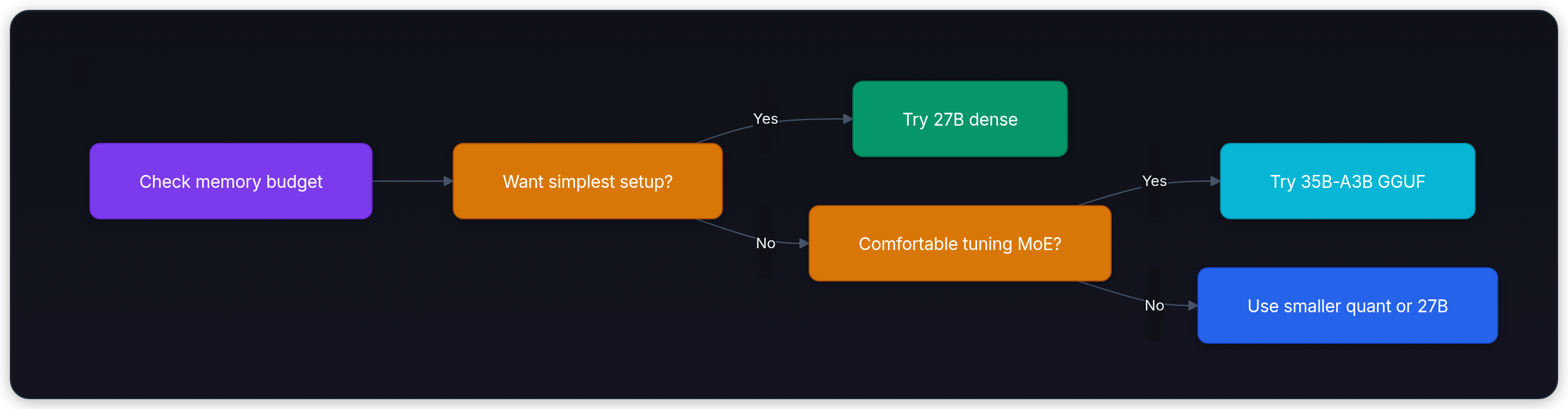

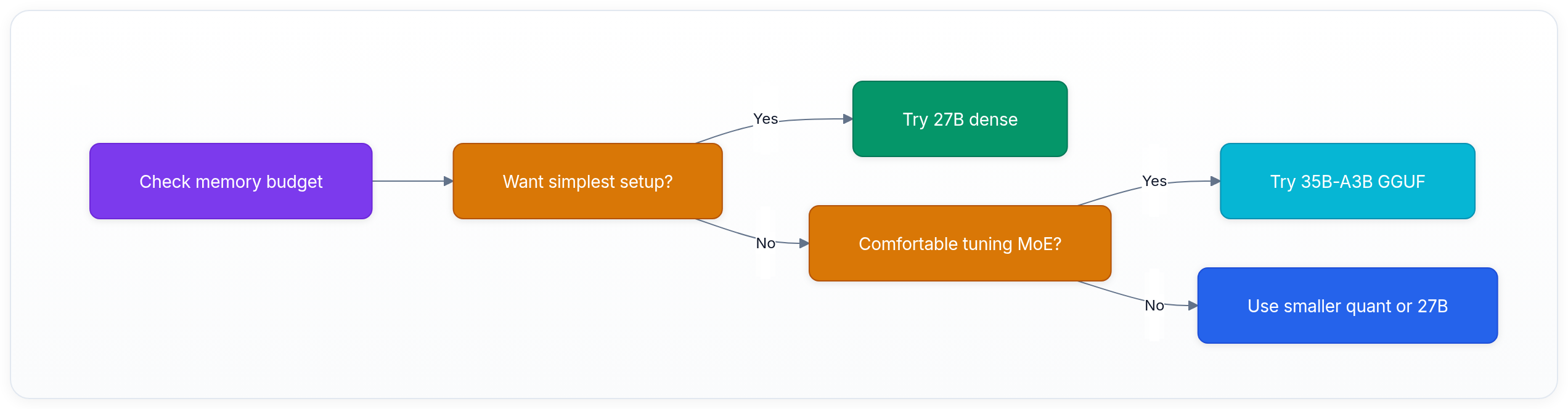

Use this decision flow before you download anything:

Why This Guide Starts with Unsloth GGUF

GGUF is a model file format used by llama.cpp and many local model apps. It packages quantized weights in a form that can run on CPUs, GPUs, and unified-memory systems without a full Python serving stack.

Unsloth publishes Qwen3.6 GGUF repositories and documentation with direct commands for llama.cpp, Unsloth Studio, Pi, Hermes Agent, and other local tools.[2][5][3] It also documents Dynamic 2.0 GGUF quantization, which uses model-specific quantization choices rather than applying one uniform recipe to every layer.[6]

That doesn't mean every Unsloth quant is automatically best for every machine. It means this path is better for a tutorial because you can pin a precise artifact:

```text
unsloth/Qwen3.6-35B-A3B-GGUF:UD-Q4_K_M
```

That string tells you the source organization, model family, file format, and quantization. Compare that with a moving `latest` alias. Exact pins make local debugging far less mysterious.
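If you keep pins in scripts or config files, a tiny parser can reject tags that omit the quant. The sketch below assumes the `org/repo:quant` layout shown above; `ModelPin` and `parse_pin` are hypothetical helper names, not part of llama.cpp or Hugging Face tooling.

```python
from typing import NamedTuple


class ModelPin(NamedTuple):
    org: str
    repo: str
    quant: str


def parse_pin(tag: str) -> ModelPin:
    """Split 'org/repo:quant' into parts and reject tags with no explicit quant."""
    repo_path, colon, quant = tag.partition(":")
    if not colon or not quant:
        raise ValueError(f"unpinned model tag (no quant after ':'): {tag!r}")
    org, slash, repo = repo_path.partition("/")
    if not slash or not org or not repo:
        raise ValueError(f"expected 'org/repo:quant', got {tag!r}")
    return ModelPin(org, repo, quant)


pin = parse_pin("unsloth/Qwen3.6-35B-A3B-GGUF:UD-Q4_K_M")
print(pin.org, pin.repo, pin.quant)
```

Calling `parse_pin` on a tag without a `:quant` suffix raises `ValueError`, which is a cheap way to stop an unpinned model from slipping into a benchmark script.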

🎯 Production tip: Treat model selection like dependency management. Use convenient aliases for experiments, then pin the exact model and quant in scripts, coding-agent config, and benchmark notes.

Read the Quant Name Without Guessing

The hardest beginner step is not the install command. It is looking at a filename like this and knowing whether it belongs on your machine:

```text
Qwen3.6-35B-A3B-UD-Q4_K_M.gguf
```

Read it from left to right:

| Piece | Meaning | Beginner translation |
|---|---|---|
| `Qwen3.6` | Model family | Which model release you are running |
| `35B-A3B` | Model shape | 35B total parameters, about 3B active per token |
| `UD` | Unsloth Dynamic | Unsloth chose quantization per layer instead of using one blunt setting everywhere |
| `Q4` | About 4-bit weights | Smaller than 8-bit or BF16, usually the best first quality-size balance |
| `K_M` | llama.cpp quant tier | A middle 4-bit variant, smaller than XL-style variants |
| `.gguf` | File format | The file llama.cpp loads |
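The same left-to-right reading can be expressed as a regular expression. This is a minimal sketch that assumes filenames follow the pattern of the example above; the `parse_gguf_name` helper is hypothetical, and files with a different naming scheme (such as the `mmproj` projector) will not match.

```python
import re

# family - shape - optional "UD-" - quant kind + bits - optional tier - ".gguf"
FILENAME_RE = re.compile(
    r"^(?P<family>Qwen[\d.]+)-(?P<shape>.+?)-(?P<dynamic>UD-)?"
    r"(?P<kind>I?Q)(?P<bits>\d+)(?:_(?P<tier>\w+))?\.gguf$"
)


def parse_gguf_name(filename: str) -> dict:
    """Break a quantized GGUF filename into the pieces from the table above."""
    match = FILENAME_RE.match(filename)
    if match is None:
        raise ValueError(f"unrecognized GGUF filename: {filename!r}")
    return {
        "family": match["family"],
        "shape": match["shape"],
        "unsloth_dynamic": match["dynamic"] is not None,
        "nominal_bits": int(match["bits"]),
        "tier": match["tier"],
    }


info = parse_gguf_name("Qwen3.6-35B-A3B-UD-Q4_K_M.gguf")
print(info)
```

`nominal_bits` is only the number in the label. The average bits stored per weight is usually a little higher, so treat it as a rough size class rather than an exact figure.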

Quantization means storing model weights with fewer bits. Think of it like choosing how much detail to keep on a warehouse scan. A full-resolution scan is easiest to read but expensive to store. A compressed scan is smaller and faster to move around, but if you compress too hard, you start losing details that matter.

For local LLMs, the trade-off is:

| Quant level | What it means | Use it when | Avoid it when |
|---|---|---|---|
| `IQ2` or roughly 2-bit | Very small | You only need a smoke test or have tight memory | You care about final coding quality |
| `Q3` or 3-bit | Small | You want to see if the model family can fit at all | You can fit a 4-bit model |
| `Q4` or 4-bit | Default starting point | You want useful local coding quality without huge memory | You are doing careful quality comparisons |
| `Q5` or `Q6` | Higher quality | You have extra memory and want fewer quantization artifacts | Memory is tight |
| `Q8` or 8-bit | Much larger | You are comparing quality and have plenty of memory | You are on a 24 GB or 32 GB setup |
| `BF16` | Near source precision | You have server-class memory | You want a casual local setup |

Unsloth's Qwen3.6 guide gives a practical total-memory ladder for the family. Total memory means VRAM plus system RAM, or unified memory on Apple Silicon. Their table lists these planning numbers:[3]

| Model | 3-bit | 4-bit | 6-bit | 8-bit | BF16 |
|---|---|---|---|---|---|
| `Qwen3.6-27B` | 15 GB | 18 GB | 24 GB | 30 GB | 55 GB |
| `Qwen3.6-35B-A3B` | 17 GB | 23 GB | 30 GB | 38 GB | 70 GB |

Those numbers are useful, but keep one rule in mind: "can run" and "feels good" are different. If the model spills to slow storage or system memory, llama.cpp may still produce tokens, but the experience can become painfully slow. For a friendly first setup, leave headroom.
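One way to apply the "leave headroom" rule is to subtract a safety margin before consulting the ladder. The sketch below copies the planning numbers from the table above; the 4 GB default headroom is an assumption chosen for illustration, not a figure from Unsloth, and `quants_that_fit` is a hypothetical helper.

```python
# Planning numbers in GB of total memory, copied from the table above.
MEMORY_LADDER_GB = {
    "Qwen3.6-27B": {"3-bit": 15, "4-bit": 18, "6-bit": 24, "8-bit": 30, "BF16": 55},
    "Qwen3.6-35B-A3B": {"3-bit": 17, "4-bit": 23, "6-bit": 30, "8-bit": 38, "BF16": 70},
}


def quants_that_fit(model: str, total_memory_gb: float, headroom_gb: float = 4.0) -> list:
    """List quant levels whose planning number fits after reserving headroom.

    headroom_gb is an assumed margin for the OS, other apps, and KV cache growth.
    """
    budget = total_memory_gb - headroom_gb
    return [quant for quant, need in MEMORY_LADDER_GB[model].items() if need <= budget]


for model, memory in [("Qwen3.6-27B", 24), ("Qwen3.6-35B-A3B", 32), ("Qwen3.6-27B", 16)]:
    print(f"{model} with {memory} GB: {quants_that_fit(model, memory) or 'nothing fits comfortably'}")
```

With this margin, a 16 GB machine gets an empty list for the 27B model, which matches the advice to treat that tier as a test-only setup.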

If you want the shortest possible answer:

| Your machine | Pick this first | Why |
|---|---|---|
| Around 16 GB total memory | Try 27B with a smaller quant only as a test | Qwen3.6 is big for this tier |
| Around 24 GB total memory | `Qwen3.6-27B-GGUF:UD-Q4_K_XL` | Strong 4-bit dense starting point |
| Around 32 GB total memory | `Qwen3.6-35B-A3B-GGUF:UD-Q4_K_M` | Best first MoE coding setup |
| 48 GB or more | Try higher quants or longer context | You have room for quality and KV cache |

💡 Key insight: Pick the quant for the machine you have, not the model you wish you had. A stable 4-bit model that stays in fast memory usually beats a larger or higher-precision setup that constantly spills.

Pick 35B-A3B or 27B

Qwen's model cards describe both Qwen3.6 open-weight models as causal language models with vision encoders, Apache 2.0 licensing, and native 262,144-token context windows.[4][7] For local text-only coding, you usually care about three questions first:

- Does it fit without thrashing system memory?

- Can it sustain enough tokens per second to feel usable?

- Does the setup stay reproducible when you restart it tomorrow?

Here is a beginner-friendly way to choose:

| Machine budget | Safer first model | Why |
|---|---|---|
| 16 GB unified memory / VRAM | `Qwen3.6-27B-GGUF` at a smaller quant, or step down to another local model | Qwen3.6 is large; don't start with a 22 GB file on a tight machine |
| 24 GB unified memory / VRAM | `Qwen3.6-27B-GGUF:UD-Q4_K_XL` | Dense model, fewer MoE offload surprises, still strong coding fit |
| 32 GB+ unified memory / VRAM | `Qwen3.6-35B-A3B-GGUF:UD-Q4_K_M` | Good first 35B-A3B target if you want the MoE model |
| 48 GB+ | 35B-A3B with larger context and higher quants | More room for KV cache, longer coding sessions, and vision projector |

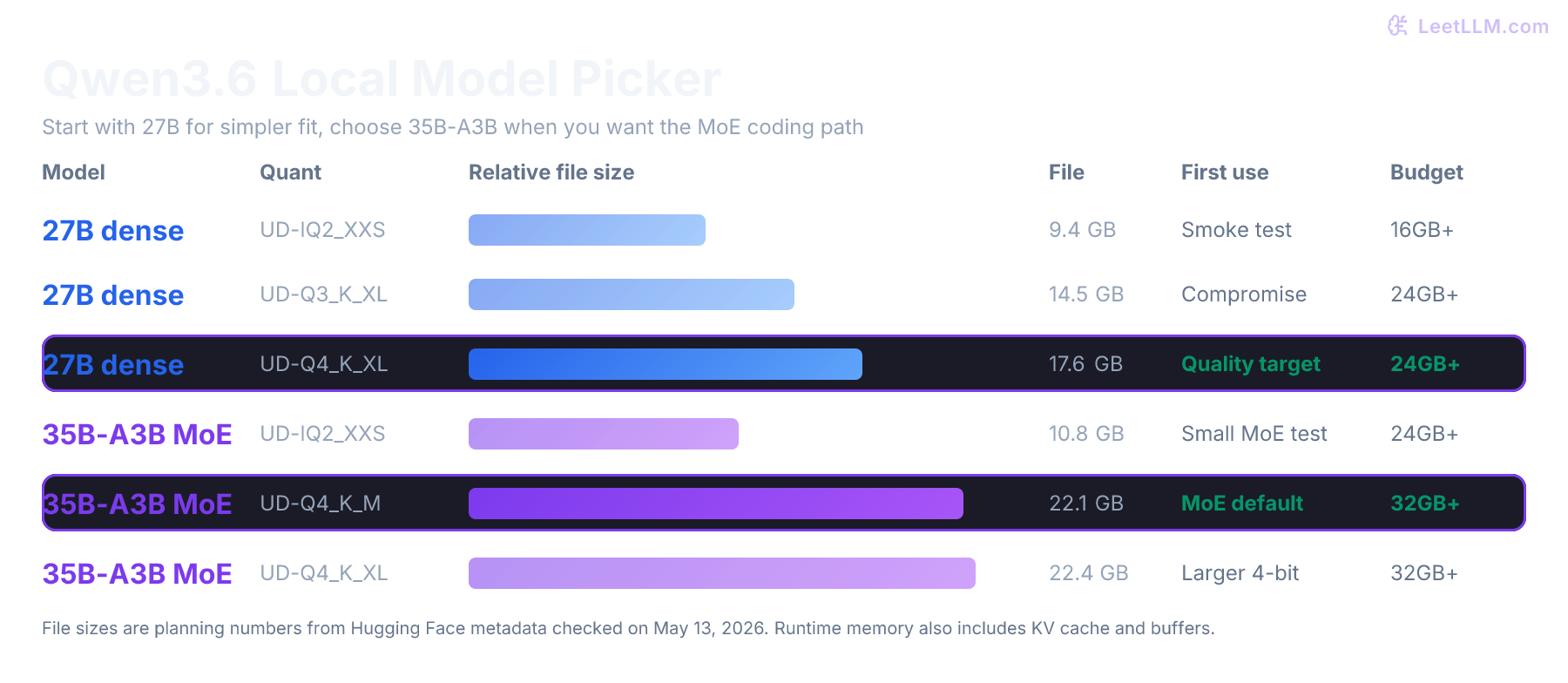

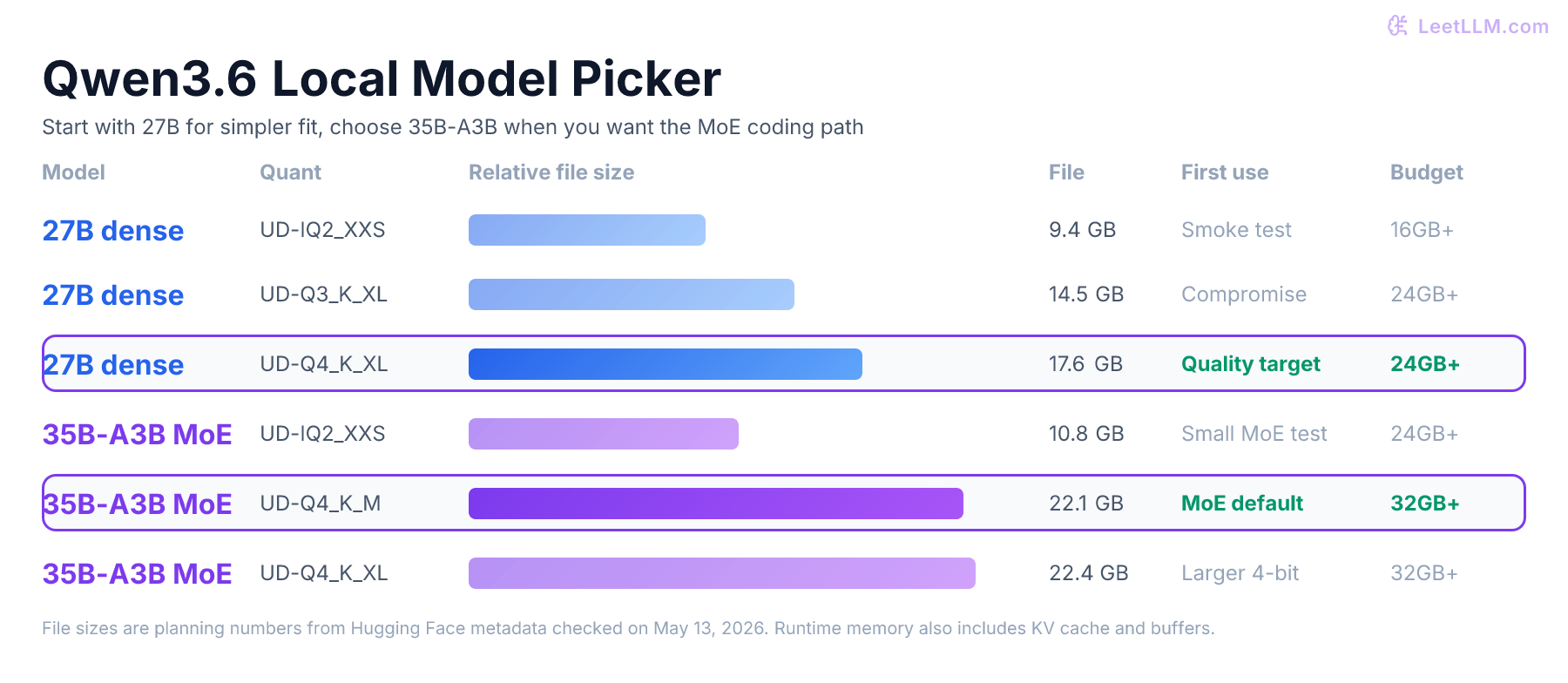

The file sizes below came from Hugging Face model file metadata checked on May 13, 2026. Treat them as planning numbers, not a promise that runtime memory equals file size.

| Unsloth repo | Useful file | Approx file size | First use case |
|---|---|---|---|
| `unsloth/Qwen3.6-27B-GGUF` | `UD-IQ2_XXS` | 9.4 GB | Tight-memory smoke test |
| `unsloth/Qwen3.6-27B-GGUF` | `UD-Q3_K_XL` | 14.5 GB | Midrange compromise |
| `unsloth/Qwen3.6-27B-GGUF` | `UD-Q4_K_XL` | 17.6 GB | Good 27B quality target |
| `unsloth/Qwen3.6-35B-A3B-GGUF` | `UD-IQ2_XXS` | 10.8 GB | Smallest 35B-A3B experiment |
| `unsloth/Qwen3.6-35B-A3B-GGUF` | `UD-Q4_K_M` | 22.1 GB | Practical 35B-A3B default |
| `unsloth/Qwen3.6-35B-A3B-GGUF` | `UD-Q4_K_XL` | 22.4 GB | Slightly larger 4-bit target |
| either repo | `mmproj-BF16.gguf` | about 0.9 GB | Required for image input |

The image shows the core trade-off: 27B is easier to reason about, while 35B-A3B is the interesting coding-focused MoE path. If you don't know which one to start with, start with 27B on 24 GB-class hardware and 35B-A3B on 32 GB-class hardware.
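The file sizes in the table also show why a "4-bit" label is only a rough size class. The sketch below divides file size by nominal parameter count to get average bits per weight. It assumes 1 GB means 10^9 bytes and uses the rounded 27B and 35B parameter counts, so the results are approximations.

```python
# (model, quant, nominal parameter count, file size in GB) from the table above.
FILES = [
    ("Qwen3.6-27B", "UD-IQ2_XXS", 27e9, 9.4),
    ("Qwen3.6-27B", "UD-Q4_K_XL", 27e9, 17.6),
    ("Qwen3.6-35B-A3B", "UD-IQ2_XXS", 35e9, 10.8),
    ("Qwen3.6-35B-A3B", "UD-Q4_K_M", 35e9, 22.1),
]


def effective_bits_per_weight(file_size_gb: float, total_params: float) -> float:
    """Average stored bits per parameter, assuming 1 GB = 1e9 bytes."""
    return file_size_gb * 1e9 * 8 / total_params


for model, quant, params, size_gb in FILES:
    bits = effective_bits_per_weight(size_gb, params)
    print(f"{model} {quant}: about {bits:.2f} bits per weight")
```

Both 4-bit files land near 5 bits per weight, likely because some tensors are kept at higher precision than the label suggests. That is one more reason to plan from file size rather than from the number in the quant name.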

Install llama.cpp

llama.cpp is the local inference engine used by many GGUF workflows. The Unsloth model cards show direct `llama-server -hf ...` and `llama-cli -hf ...` commands, so use a recent llama.cpp build with Hugging Face download support.[8][2]

On macOS, Homebrew is the fastest path:

```bash
brew install llama.cpp
llama-server --version
```

On Linux with an NVIDIA GPU, build from source so you can enable CUDA and remote Hugging Face downloads:

```bash
git clone https://github.com/ggml-org/llama.cpp

# Configure with CUDA and curl-based Hugging Face downloads enabled
cmake -S llama.cpp -B llama.cpp/build \
  -DGGML_CUDA=ON \
  -DLLAMA_CURL=ON

# Build only the server and CLI binaries
cmake --build llama.cpp/build \
  --config Release \
  -j \
  --target llama-server llama-cli

./llama.cpp/build/bin/llama-server --version
```

If you don't have an NVIDIA GPU, remove `-DGGML_CUDA=ON` and use CPU or platform-specific acceleration instead. Apple Silicon users should also test MLX later, because Qwen's official README lists MLX as a supported local path for Qwen3.6.[1]

First Smoke Test

Start with a short terminal test before you run a server. A short test proves that the binary can download the model, parse the GGUF, and generate tokens.

For the 27B dense model:

```bash
llama-cli \
  -hf unsloth/Qwen3.6-27B-GGUF:UD-Q4_K_XL \
  --ctx-size 8192 \
  --n-gpu-layers 99 \
  -p "Write a Python function that validates an order status enum."
```

For the 35B-A3B MoE model:

```bash
llama-cli \
  -hf unsloth/Qwen3.6-35B-A3B-GGUF:UD-Q4_K_M \
  --ctx-size 8192 \
  --n-gpu-layers 99 \
  -p "Write a Python function that validates an order status enum."
```

The --ctx-size 8192 value is intentionally modest. Qwen3.6 supports much longer native context, but your first run should test the model, not your maximum KV cache budget. Once the small run works, increase context in stages.

Expected behavior:

```text
The first run downloads model files from Hugging Face.
The prompt starts slower than later prompts because the model is loading.
The answer should be coherent Python, not repeated symbols or random tokens.
```

If the output is nonsense, update llama.cpp before blaming the model. Qwen3.6 is new enough that old local runtimes are a common hidden variable.
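If you script the smoke test, a crude check can flag the "repeated symbols" failure mode automatically. The heuristic below is an illustrative sketch, not a llama.cpp feature: it only looks for very short output or one whitespace-separated token dominating the text, and the 0.5 threshold is an arbitrary assumption.

```python
from collections import Counter


def looks_degenerate(text: str, max_repeat_share: float = 0.5) -> bool:
    """Heuristic: flag output that is nearly empty or dominated by one token."""
    tokens = text.split()
    if len(tokens) < 5:
        return True  # too little text to count as a real answer
    most_common_count = Counter(tokens).most_common(1)[0][1]
    return most_common_count / len(tokens) > max_repeat_share


good = "def is_valid(status): return status in {'new', 'paid', 'shipped'}  # simple check"
bad = "@@ " * 50

print(looks_degenerate(good), looks_degenerate(bad))
```

A check like this will not catch subtly wrong code. It only separates "the runtime is producing text" from "the runtime is producing junk", which is the question the smoke test is meant to answer.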

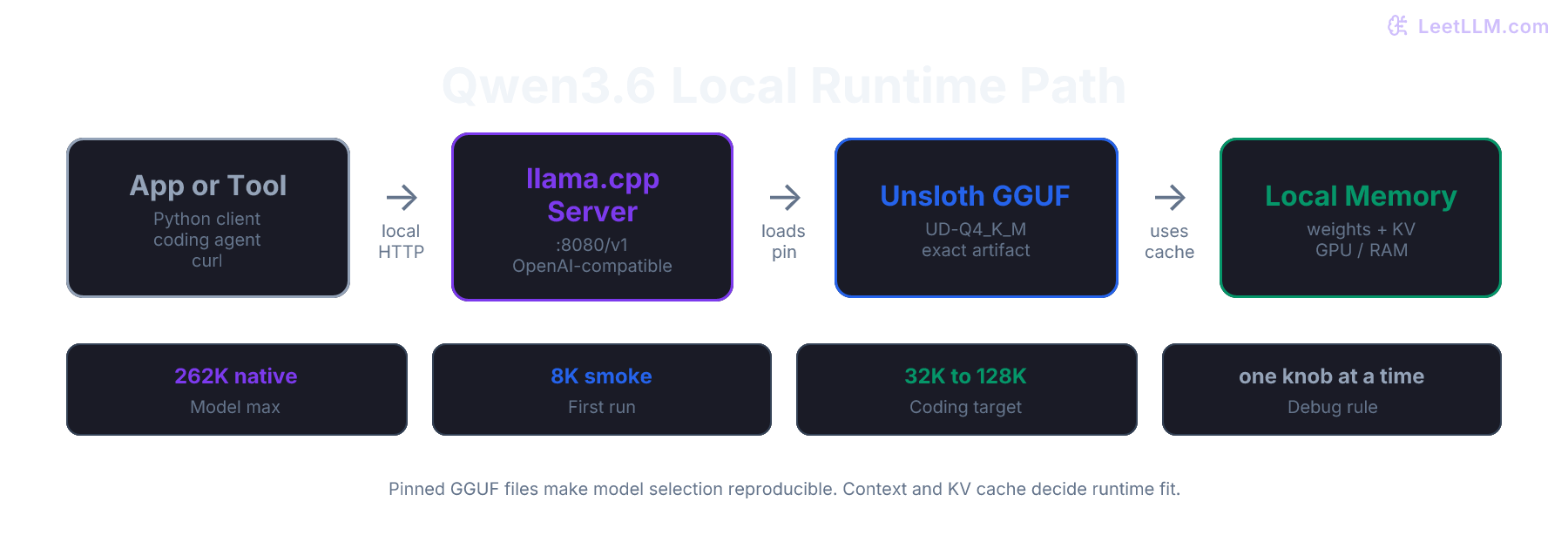

Run a Local OpenAI-Compatible Server

Once the smoke test works, expose a local HTTP endpoint. This is the useful mode for coding tools, small apps, and benchmarks.

The 27B server command:

```bash
llama-server \
  -hf unsloth/Qwen3.6-27B-GGUF:UD-Q4_K_XL \
  --ctx-size 32768 \
  --n-gpu-layers 99 \
  --host 127.0.0.1 \
  --port 8080
```

The 35B-A3B server command:

```bash
llama-server \
  -hf unsloth/Qwen3.6-35B-A3B-GGUF:UD-Q4_K_M \
  --ctx-size 32768 \
  --n-gpu-layers 99 \
  --host 127.0.0.1 \
  --port 8080
```

The request path now looks like this:

The important detail is that model storage and context storage are separate. The GGUF file stores weights. The KV cache grows with context length and concurrency.

Test the server with curl:

```bash
curl http://127.0.0.1:8080/v1/chat/completions \
  -H "Content-Type: application/json" \
  -d '{
    "model": "qwen3.6-local",
    "messages": [
      {
        "role": "system",
        "content": "You are a precise coding assistant. Return concise Markdown."
      },
      {
        "role": "user",
        "content": "Explain how to make a retry loop safe for duplicate order updates."
      }
    ],
    "temperature": 0.3,
    "max_tokens": 500
  }'
```

For app code, point the OpenAI SDK at your local server:

```python
from openai import OpenAI

# Point the SDK at the local llama.cpp server instead of a hosted provider
client = OpenAI(
    base_url="http://127.0.0.1:8080/v1",
    api_key="local",
)

response = client.chat.completions.create(
    model="qwen3.6-local",
    messages=[
        {
            "role": "system",
            "content": "You are a precise coding assistant for logistics software.",
        },
        {
            "role": "user",
            "content": "Write a pytest for an idempotent refund webhook handler.",
        },
    ],
    temperature=0.2,
    max_tokens=700,
)

print(response.choices[0].message.content)
```

The api_key is a placeholder because the SDK expects one. The local llama.cpp server doesn't require a hosted-provider key.
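Because large models take a while to load, scripts often need to wait until the server is ready before sending the first request. The sketch below uses only the standard library and assumes your build exposes the `/health` route described in the llama.cpp server documentation; `server_is_ready` is a hypothetical helper name.

```python
import urllib.error
import urllib.request


def server_is_ready(base_url: str = "http://127.0.0.1:8080", timeout: float = 2.0) -> bool:
    """Return True only if GET {base_url}/health answers with HTTP 200."""
    try:
        with urllib.request.urlopen(f"{base_url}/health", timeout=timeout) as resp:
            return resp.status == 200
    except (urllib.error.URLError, OSError):
        # Connection refused, timeout, or a non-2xx status such as "still loading"
        return False
```

Call `server_is_ready()` in a short polling loop with a sleep between attempts, and only start your benchmark or coding tool once it returns `True`.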

Choose Context Size Carefully

Qwen3.6's official model cards list a native context length of 262,144 tokens and an extended path up to 1,010,000 tokens with RoPE scaling techniques such as YaRN.[4][7] That is a model capability, not a recommendation to start every laptop session at 262K.

Context costs memory through the KV cache. The longer the prompt and chat history, the more key-value state the runtime keeps. For local coding agents, the cache can become the main reason a model that seemed to fit suddenly slows down or fails.
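A back-of-the-envelope estimate shows how quickly the cache grows. The sketch below uses the standard formula for a plain attention KV cache with 16-bit entries and a single sequence. The layer count, KV head count, and head dimension are hypothetical placeholders, not Qwen3.6's real configuration, so read the output as a scaling illustration only.

```python
def kv_cache_bytes(
    n_ctx: int,
    n_attn_layers: int,
    n_kv_heads: int,
    head_dim: int,
    bytes_per_elem: int = 2,  # 16-bit cache entries
) -> int:
    """Estimate KV cache size for one sequence with standard attention layers."""
    # Factor 2: one key tensor and one value tensor per attention layer
    return 2 * n_attn_layers * n_kv_heads * head_dim * n_ctx * bytes_per_elem


# Hypothetical architecture values for illustration, NOT taken from a model card.
EXAMPLE = {"n_attn_layers": 32, "n_kv_heads": 8, "head_dim": 128}

for n_ctx in (8192, 32768, 131072, 262144):
    gib = kv_cache_bytes(n_ctx, **EXAMPLE) / 2**30
    print(f"{n_ctx:>7} tokens -> {gib:.1f} GiB of KV cache")
```

With these placeholder values the estimate is 1 GiB at 8K and 32 GiB at 262K. The real numbers depend on the model's actual attention layout and any cache quantization, but the linear growth with context length holds either way.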

Use this context ladder:

| Stage | Context | Why |
|---|---|---|
| Smoke test | 8K | Proves the model and runtime work |
| Normal local chat | 16K to 32K | Enough for many code snippets and short files |
| Coding agent | 64K to 128K | Enough for larger diffs, traces, and tool output |
| Long-context experiment | 262K | Only after you know memory placement is stable |

Qwen's 27B model card says Qwen3.6 uses extended context for complex tasks and advises keeping at least 128K when possible to preserve thinking capabilities, while still recommending reducing context if you hit out-of-memory errors.[7] For a beginner setup, read that as a target to grow toward, not the first command to run.

⚠️ Common mistake: Starting with maximum context, then deciding the model is bad because it feels slow. First prove the model at 8K. Then increase context and watch memory behavior.

What About Ollama?

This tutorial uses llama.cpp directly because it gives the clearest view of the GGUF file and runtime knobs. It also avoids a current Qwen3.6-specific Ollama problem.

Unsloth's Qwen3.6 local guide says current Qwen3.6 GGUFs don't work in Ollama because the models use separate mmproj vision files, and it recommends llama.cpp-compatible backends instead.[3] That is why this page is not a simple refresh of the older Qwen3.5/Ollama tutorial.

If you want to learn Ollama fundamentals, keep using the Qwen3.5 Ollama guide. If you want Qwen3.6 today, use llama.cpp or Unsloth Studio first.

Ollama is still a good beginner tool in general. For this specific model family, lower-level llama.cpp commands make it easier to see exactly which GGUF file, context setting, mmproj file, and offload behavior you're testing.

What About Images?

The official Qwen3.6 model cards describe both 35B-A3B and 27B as language models with vision encoders.[4][7] The Unsloth GGUF repositories also include mmproj files, which are the multimodal projection files local runtimes need for image input.

For your first run, stay text-only. Once text generation is stable, add the matching mmproj file and check current llama.cpp vision syntax. Vision adds another file, more memory pressure, and more chances to confuse a model-loading problem with an image-prompting problem.

Use this order:

- Run text-only `llama-cli`
- Run text-only `llama-server`
- Test the OpenAI-compatible endpoint
- Add `mmproj` only after the endpoint is stable

That order keeps debugging clean.

Tune for Coding

Local coding models are easiest to evaluate when you give them narrow, checkable tasks. Don't start with "refactor this whole repo." Start with one function, one test, or one file.

A good coding setup uses:

| Knob | Beginner value | Why |
|---|---|---|
| `temperature` | 0.2 to 0.4 | Keeps code less random |
| `max_tokens` | 500 to 1500 | Prevents runaway answers |
| context | 16K to 64K | Enough for files without crushing memory |
| model pin | exact Unsloth tag | Makes test runs repeatable |

Use prompts with visible acceptance criteria:

```text
You are editing a TypeScript order service.

Task:
- Write one pure function named normalizeCarrierStatus.
- Inputs: "picked_up", "in_transit", "delivered", "failed_delivery".
- Output: "active", "complete", or "needs_review".
- Return only TypeScript code plus one short explanation.
```

Then run the output through a test. Local LLMs are valuable because you can iterate cheaply, but cheap iteration still needs a verifier. For code, the verifier is your test suite.
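The verifier idea can be sketched as a table-driven check. The example is written in Python to match the other scripts in this guide, even though the prompt asks for TypeScript. The input-to-output mapping in `EXPECTED` is an assumption made for illustration, since the prompt lists allowed inputs and outputs but not which maps to which, and `candidate` stands in for a hand-ported version of the model's answer.

```python
# Assumed mapping for illustration; the prompt above does not spell it out.
EXPECTED = {
    "picked_up": "active",
    "in_transit": "active",
    "delivered": "complete",
    "failed_delivery": "needs_review",
}


def check_candidate(fn) -> list:
    """Run fn on every known input and return human-readable failures."""
    failures = []
    for status, want in EXPECTED.items():
        try:
            got = fn(status)
        except Exception as exc:  # a crash counts as a failed case
            failures.append(f"{status!r}: raised {exc!r}")
            continue
        if got != want:
            failures.append(f"{status!r}: expected {want!r}, got {got!r}")
    return failures


def candidate(status: str) -> str:
    """Stand-in for model-generated code under review."""
    if status in ("picked_up", "in_transit"):
        return "active"
    return "complete" if status == "delivered" else "needs_review"


print(check_candidate(candidate) or "all cases pass")
```

In a real TypeScript project the same table would live in your existing test runner. The point is that the acceptance criteria become executable, so the failure list can go straight back into the next prompt.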

When Things Go Wrong

Symptom: The model downloads but generation is painfully slow

Cause: The model or KV cache doesn't fit cleanly in fast memory.

Fix: Lower context first. If that doesn't help, use a smaller quant or switch from 35B-A3B to 27B. Don't tune five flags at once.

Symptom: The server crashes when the first prompt arrives

Cause: The weights loaded, but the first real KV cache allocation pushed the process over the limit.

Fix: Restart with --ctx-size 8192. After that works, try 16K, then 32K.

Symptom: Output repeats, breaks formatting, or turns into junk

Cause: The runtime may be too old for this model family, or the chat template path may be wrong.

Fix: Rebuild llama.cpp from a current commit. Then rerun the same prompt at low temperature before changing model files.

Symptom: Image input doesn't work

Cause: Text weights are loaded, but the multimodal projector is missing or mismatched.

Fix: Prove text-only first. Then add the matching mmproj file from the same Unsloth repository.

Symptom: Tool-calling output is almost right but fails JSON parsing

Cause: Local models often need narrower schemas and stronger validation loops than hosted frontier models.

Fix: Reduce temperature, ask for one tool call at a time, validate JSON externally, and retry with the validation error. Unsloth's Qwen3.6 GGUF card says its upload includes tool-calling improvements for nested object parsing, but you should still keep a validator in the loop.[2]
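The validate-and-retry loop from this fix can be sketched as follows. The tool-call shape (`name` plus `arguments`) and the `get_order_status` tool are hypothetical, and `generate` is any callable you supply that sends a prompt to the model and returns its raw text reply.

```python
import json
from typing import Callable

# Hypothetical tool-call shape: {"name": <str>, "arguments": <object>}
REQUIRED_FIELDS = {"name": str, "arguments": dict}


def validate_tool_call(raw: str):
    """Return (call, None) on success or (None, error_message) on failure."""
    try:
        call = json.loads(raw)
    except json.JSONDecodeError as exc:
        return None, f"invalid JSON: {exc.msg} at position {exc.pos}"
    if not isinstance(call, dict):
        return None, "top-level value must be a JSON object"
    for field, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(call.get(field), expected_type):
            return None, f"field {field!r} must be of type {expected_type.__name__}"
    return call, None


def call_with_retries(generate: Callable[[str], str], prompt: str, max_attempts: int = 3) -> dict:
    """Ask for a tool call, feeding each validation error back into the next try."""
    message = prompt
    for _ in range(max_attempts):
        call, error = validate_tool_call(generate(message))
        if call is not None:
            return call
        message = (
            f"{prompt}\n\nYour previous reply was rejected: {error}. "
            "Reply with one JSON object only."
        )
    raise RuntimeError(f"no valid tool call after {max_attempts} attempts")
```

To try it without a model, pass a fake `generate` that returns a reply with a trailing comma first and a valid object such as `{"name": "get_order_status", "arguments": {"order_id": 7}}` second. The loop should succeed on the second attempt.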

Practice: Pick a Setup

Suppose you have a workstation with 32 GB of VRAM and 64 GB of system RAM. You want a private coding assistant for a logistics monorepo. It needs to read diffs, summarize failing tests, and write focused patches. You don't need image input on day one.

Pick one:

1. `unsloth/Qwen3.6-27B-GGUF:UD-Q4_K_XL` with `--ctx-size 32768`
2. `unsloth/Qwen3.6-35B-A3B-GGUF:UD-Q4_K_M` with `--ctx-size 32768`
3. `unsloth/Qwen3.6-35B-A3B-GGUF:UD-Q4_K_M` with `--ctx-size 262144`

The best first choice is option 2. You have enough memory to try the 35B-A3B MoE model, and 32K context keeps the first server run realistic. Option 1 is safer but leaves the main Qwen3.6 MoE path untested. Option 3 jumps straight to maximum context, so any failure could be model fit, KV cache pressure, runtime age, or offload behavior.

After option 2 works, run the same prompt at 64K and compare latency. Only then try 128K or higher.
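To compare latency across context settings, time the same request under each one and convert it to tokens per second. This is a minimal sketch: `measure` is a hypothetical helper, and `run` is any callable you supply that performs one generation and returns the number of completion tokens.

```python
import time
from typing import Callable


def measure(run: Callable[[], int]) -> tuple:
    """Time one generation and return (elapsed_seconds, tokens_per_second)."""
    start = time.perf_counter()
    completion_tokens = run()
    elapsed = time.perf_counter() - start
    tokens_per_second = completion_tokens / elapsed if elapsed > 0 else 0.0
    return elapsed, tokens_per_second


def fake_run() -> int:
    """Stand-in for a real request: pretend 10 tokens took about 50 ms."""
    time.sleep(0.05)
    return 10


elapsed, tps = measure(fake_run)
print(f"{elapsed:.2f} s, {tps:.0f} tokens/s")
```

With the OpenAI SDK client shown earlier, `run` could send the chat request and return `response.usage.completion_tokens`, assuming your server build fills in the `usage` field. Record the numbers next to the exact model pin and `--ctx-size` so the comparison stays reproducible.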

Where This Leads Next

By now you should be able to:

- Explain why Qwen3.6-35B-A3B is not the same local fit problem as a dense 35B model

- Choose between 27B dense and 35B-A3B MoE based on hardware and debugging tolerance

- Run a pinned Unsloth GGUF with llama.cpp

- Expose Qwen3.6 through a local OpenAI-compatible endpoint

- Increase context size without confusing model quality with memory pressure

For most developers, the strongest default path is:

```bash
llama-server \
  -hf unsloth/Qwen3.6-35B-A3B-GGUF:UD-Q4_K_M \
  --ctx-size 32768 \
  --n-gpu-layers 99 \
  --host 127.0.0.1 \
  --port 8080
```

If that doesn't fit, use the 27B dense model or a smaller 35B-A3B quant. If it does fit, connect your coding tool or Python script to http://127.0.0.1:8080/v1 and start testing with small, verifiable tasks.

References