How to Become an AI Engineer from Zero in 2026

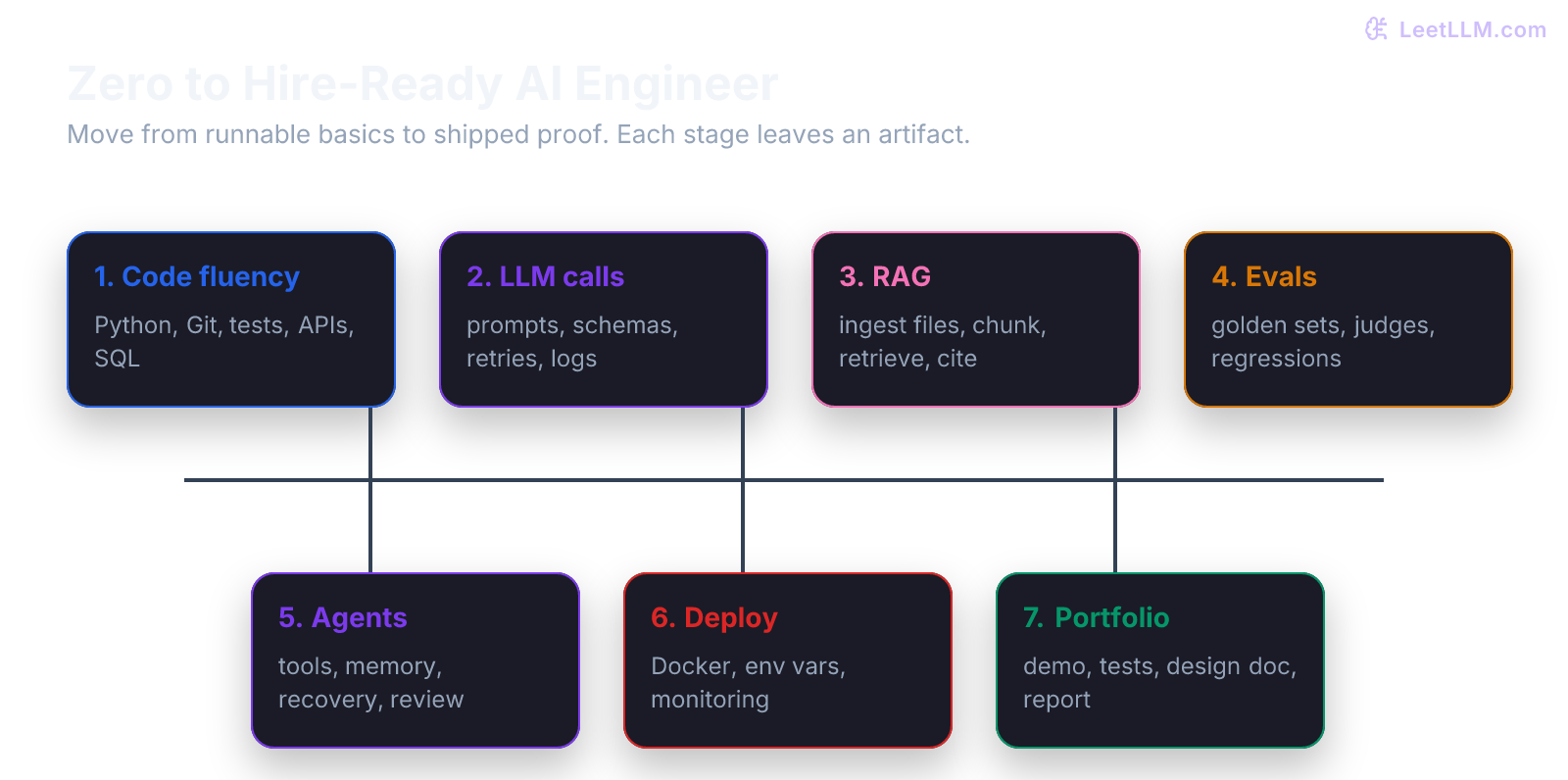

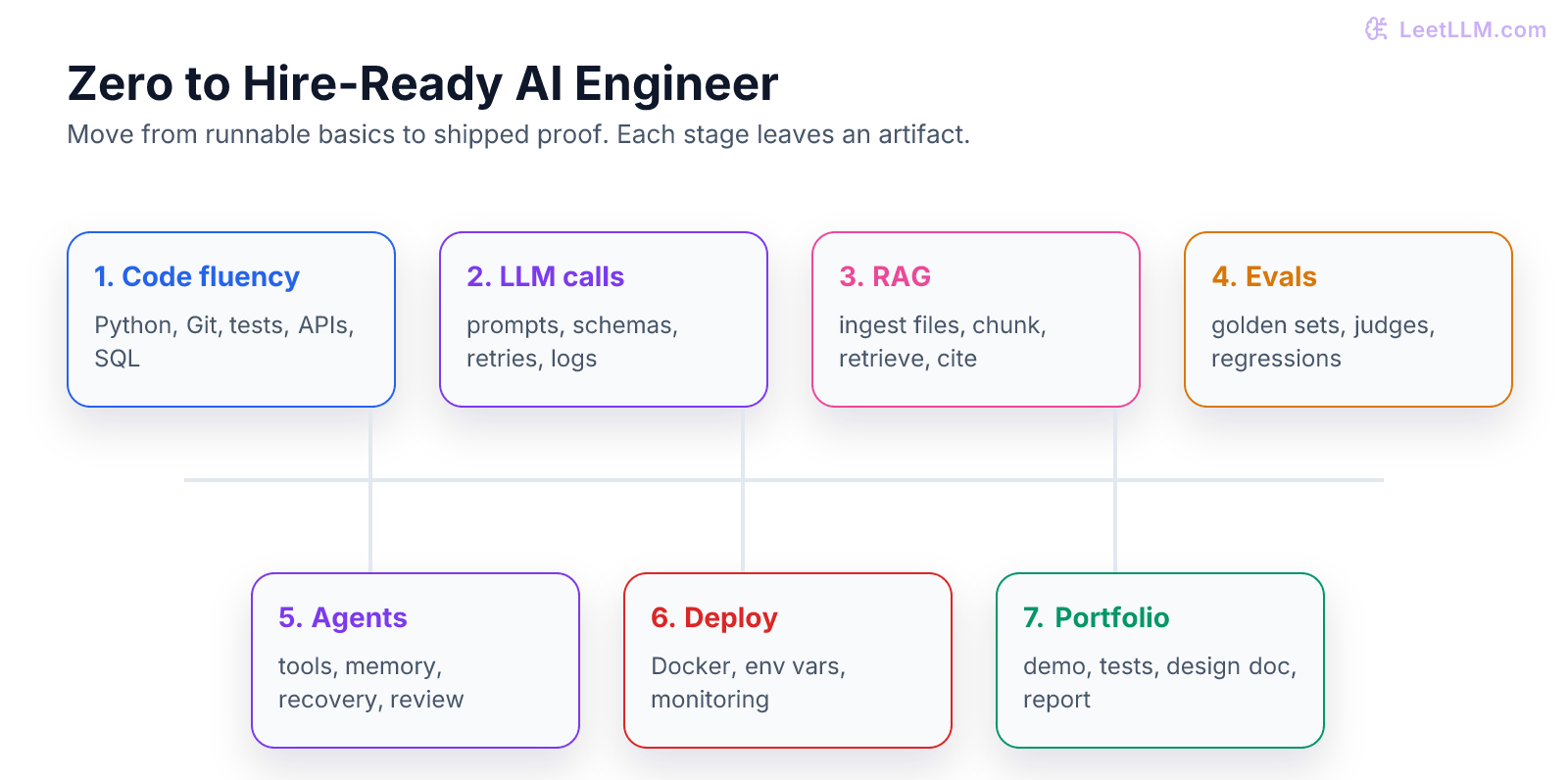

A practical path from beginner to hire-ready AI engineer: programming basics, LLM APIs, RAG, evals, agents, deployment, and portfolio proof.

LeetLLM Team · May 9, 2026 · 18 min read

If you are starting from zero, "learn AI" is too vague to be useful.

AI engineering is not one skill. It's a stack of skills: write reliable software, call models safely, prepare data, retrieve context, evaluate outputs, use agents, deploy services, and explain trade-offs.

The good news is that you don't need to start with research papers or train a foundation model.

You need a path that turns small working artifacts into bigger ones.

The job in plain English

An AI engineer builds software products that use models.

That may include:

- calling an LLM API from a backend service

- extracting structured data from messy text

- building a RAG system over documents

- creating evals that catch regressions

- wiring tools into an agent

- deploying a service with logs and cost controls

- reviewing model failures and improving the system

The model matters, but the product around the model matters more.

| Skill | What it proves |
|---|---|
| Python and tests | You can build reliable backend code. |
| APIs and JSON | You can connect products to model providers. |
| Data ingestion | You can turn messy files into useful context. |
| RAG | You can answer with evidence. |
| Evals | You can measure quality instead of guessing. |
| Agents | You can give models tools safely. |
| Deployment | You can ship and debug real systems. |
| Portfolio | You can show proof, not claims. |

Stage 1: become useful with code

Start with Python, Git, terminal basics, tests, and HTTP APIs.

You don't need to master all of computer science before touching AI. You do need enough software skill to build and debug a small service. Python's official tutorial is still a good baseline for the language itself.[1]

Build this first artifact:

```text
Project: Support ticket cleaner

Input:
User pastes a messy support message.

Output:
JSON with category, summary, priority, and confidence notes.

Proof:
- `pytest` tests for normal and invalid inputs
- README with setup commands
- sample input/output file
```

This project teaches the shape of AI engineering before you add a model.

You learn data contracts, error handling, and reproducibility.
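
As a rough illustration of that shape, here is a minimal sketch of the ticket cleaner with no model involved. The keyword rules, the `CleanTicket` fields, and the 80-character summary limit are assumptions made for this example, not a prescribed design. The two `test_` functions are written so `pytest` can collect them.

```python
import json
from dataclasses import asdict, dataclass

# Hypothetical keyword rules; a real project would refine these.
CATEGORY_KEYWORDS = {
    "billing": ("refund", "charged", "invoice", "paid"),
    "technical": ("crash", "error", "bug", "upload"),
}


@dataclass
class CleanTicket:
    category: str
    summary: str
    priority: str
    confidence_notes: str


def clean_ticket(message: str) -> CleanTicket:
    """Turn a messy support message into a structured ticket."""
    text = " ".join(message.split())  # collapse whitespace and newlines
    if not text:
        raise ValueError("message is empty")

    lowered = text.lower()
    category = "other"
    for name, keywords in CATEGORY_KEYWORDS.items():
        if any(word in lowered for word in keywords):
            category = name
            break

    priority = "high" if "urgent" in lowered or "asap" in lowered else "medium"
    notes = "keyword match" if category != "other" else "no keyword matched"
    return CleanTicket(category, text[:80], priority, notes)


def to_json(ticket: CleanTicket) -> str:
    """Serialize the ticket so the output contract is plain JSON."""
    return json.dumps(asdict(ticket))


def test_billing_message():
    ticket = clean_ticket("  I was charged twice,\n URGENT  ")
    assert ticket.category == "billing"
    assert ticket.priority == "high"
    assert ticket.summary == "I was charged twice, URGENT"


def test_empty_message_is_rejected():
    try:
        clean_ticket("   ")
    except ValueError:
        return
    raise AssertionError("expected ValueError")
```

The point of the sketch is the contract: one typed output, one explicit failure mode, and tests for both. A model can later replace the keyword rules without changing that contract.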

Stage 2: call LLM APIs like an engineer

Prompting is only the start.

A production model call needs a wrapper with:

- timeout

- retry policy

- model and prompt version

- response schema

- validation

- trace logging

- cost and latency record

- privacy rules

Provider docs for structured outputs show how schemas can constrain model responses.[2] That feature is useful, but your application still needs validation and business rules.
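
One way such a wrapper might look is sketched below. It assumes a hypothetical `call_model(prompt, timeout_s)` callable standing in for whichever provider SDK you use, so nothing here is a real provider API. The required fields, the `PROMPT_VERSION` label, and the linear backoff are also illustrative choices.

```python
import json
import logging
import time
from typing import Callable

logger = logging.getLogger("model_wrapper")

PROMPT_VERSION = "ticket-extract-v1"  # hypothetical version label
REQUIRED_FIELDS = {"category", "summary", "priority"}


class InvalidModelOutput(Exception):
    """Raised when no attempt produced a valid reply."""


def validate_ticket(raw: str) -> dict:
    """Parse the reply and enforce the application's own rules."""
    data = json.loads(raw)  # JSONDecodeError is a ValueError subclass
    if not isinstance(data, dict):
        raise ValueError("reply is not a JSON object")
    missing = REQUIRED_FIELDS - set(data)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    if data["priority"] not in {"low", "medium", "high"}:
        raise ValueError(f"unknown priority: {data['priority']!r}")
    return data


def extract_ticket(
    message: str,
    call_model: Callable[[str, float], str],  # stand-in for a provider call
    timeout_s: float = 10.0,
    max_attempts: int = 3,
    backoff_s: float = 0.5,
) -> dict:
    """Call the model, retry on timeout or invalid output, and log each attempt."""
    prompt = f"Extract a support ticket as JSON from:\n{message}"
    last_error = None
    for attempt in range(1, max_attempts + 1):
        started = time.perf_counter()
        try:
            ticket = validate_ticket(call_model(prompt, timeout_s))
        except (TimeoutError, ValueError) as exc:
            last_error = exc
            logger.warning("attempt=%d prompt=%s error=%s", attempt, PROMPT_VERSION, exc)
            if attempt < max_attempts:
                time.sleep(backoff_s * attempt)  # simple linear backoff
            continue
        latency_ms = (time.perf_counter() - started) * 1000
        logger.info("attempt=%d prompt=%s latency_ms=%.1f", attempt, PROMPT_VERSION, latency_ms)
        return ticket
    raise InvalidModelOutput(f"gave up after {max_attempts} attempts: {last_error}")
```

Because the model call is injected, tests can pass a fake function that returns canned strings or raises `TimeoutError`, which exercises the retry and validation paths without network access.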

Build this second artifact:

```text
Project: Ticket extractor API

Endpoint:
POST /tickets/extract

Input:
Customer message

Output:
Validated JSON ticket

Proof:
- mocked model tests
- invalid-output test
- latency and token log example
- one prompt version file
```

If you can build this cleanly, you are already ahead of many beginners.

Stage 3: build one full AI app

Now connect the pieces.

Use a small backend framework such as FastAPI, which gives you typed request and response models and straightforward route definitions.[3]

Your first app should have:

| Layer | Requirement |
|---|---|
| Frontend | form, loading state, result state, error state |
| Backend | API route, schema validation, model wrapper |
| Storage | saved task, prompt version, output JSON |
| Tests | mocked provider, failure path, schema checks |
| Deploy | environment variables, health route, logs |

Don't start with a complex agent.

Start with one request path that works every time.
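
The storage layer of that request path can stay very small. The sketch below uses Python's built-in `sqlite3` module to save the task, prompt version, and output JSON. The table name `runs` and its columns are assumptions for illustration; any database would serve the same purpose.

```python
import json
import sqlite3


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open the database and create the (hypothetical) runs table if needed."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS runs (
               id INTEGER PRIMARY KEY AUTOINCREMENT,
               task TEXT NOT NULL,
               prompt_version TEXT NOT NULL,
               output_json TEXT NOT NULL,
               created_at TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP
           )"""
    )
    return conn


def save_run(conn: sqlite3.Connection, task: str, prompt_version: str, output: dict) -> int:
    """Store one request and return its row id."""
    cur = conn.execute(
        "INSERT INTO runs (task, prompt_version, output_json) VALUES (?, ?, ?)",
        (task, prompt_version, json.dumps(output)),
    )
    conn.commit()
    return cur.lastrowid


def load_run(conn: sqlite3.Connection, run_id: int) -> dict:
    """Fetch a stored run so a bad output can be inspected later."""
    row = conn.execute(
        "SELECT task, prompt_version, output_json FROM runs WHERE id = ?",
        (run_id,),
    ).fetchone()
    if row is None:
        raise KeyError(run_id)
    return {"task": row[0], "prompt_version": row[1], "output": json.loads(row[2])}
```

Saving the prompt version next to each output is what later lets you tell whether a bad answer came from the old prompt or the new one.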

Stage 4: learn RAG and file ingestion

RAG means Retrieval-Augmented Generation. The model answers using retrieved context instead of only its training data.

The beginner mistake is jumping straight to a vector database.

Before vectors, learn ingestion:

- parse PDFs

- clean HTML

- handle scanned pages with OCR

- preserve Markdown structure

- store source IDs and page numbers

- remove boilerplate

- track parser quality
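
To make a few of these steps concrete, here is a small sketch that cleans HTML with the standard library's `html.parser`, drops boilerplate tags, and keeps a source ID with the extracted text. The list of tags to skip and the `char_count` quality signal are assumptions chosen for the example. PDFs and OCR need other tools and are not covered here.

```python
from dataclasses import dataclass
from html.parser import HTMLParser

# Tags treated as boilerplate in this sketch; adjust per site.
SKIP_TAGS = {"script", "style", "nav", "footer", "header"}


class _TextExtractor(HTMLParser):
    """Collect visible text while ignoring content inside SKIP_TAGS."""

    def __init__(self) -> None:
        super().__init__()
        self.parts: list[str] = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in SKIP_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in SKIP_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.parts.append(" ".join(data.split()))


@dataclass
class ParsedDoc:
    source_id: str
    text: str
    char_count: int  # crude quality signal: near zero suggests a failed parse


def parse_html(source_id: str, html: str) -> ParsedDoc:
    """Extract text from one HTML document and keep its source ID."""
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    text = "\n".join(parser.parts)
    return ParsedDoc(source_id, text, len(text))
```

Logging `char_count` per file is a cheap first parser-quality check: a document that yields almost no text usually needs a different parser or OCR.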

Then learn chunking, embeddings, retrieval, reranking, and citations.
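
The sketch below shows chunking with overlap and a retrieval step that returns chunks carrying their source ID and page number, which is what makes citations possible. To stay dependency-free it scores chunks with bag-of-words cosine similarity, which is only a stand-in for real embeddings. The chunk sizes and the `Chunk` fields are assumptions.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Chunk:
    source_id: str
    page: int
    text: str


def chunk_page(source_id: str, page: int, text: str,
               max_words: int = 50, overlap: int = 10) -> list[Chunk]:
    """Split one page into word windows that overlap by `overlap` words."""
    if overlap >= max_words:
        raise ValueError("overlap must be smaller than max_words")
    words = text.split()
    chunks = []
    for start in range(0, len(words), max_words - overlap):
        chunks.append(Chunk(source_id, page, " ".join(words[start:start + max_words])))
        if start + max_words >= len(words):
            break  # the last window already reached the end of the page
    return chunks


def _vector(text: str) -> Counter:
    """Bag-of-words term counts; a toy substitute for an embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[Chunk], k: int = 3) -> list[Chunk]:
    """Return up to k chunks with a non-zero similarity to the query, best first."""
    q = _vector(query)
    scored = [(_cosine(q, _vector(c.text)), c) for c in chunks]
    scored = [pair for pair in scored if pair[0] > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [c for _, c in scored[:k]]


def cite(chunk: Chunk) -> str:
    """Format a citation from the metadata stored at ingestion time."""
    return f"{chunk.source_id}, p. {chunk.page}"
```

Swapping `_vector` and `_cosine` for an embedding model changes the scoring, but the surrounding structure (chunks that remember where they came from) stays the same.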

Build this artifact:

```text
Project: Document QA app

Input:
Small folder of PDFs or Markdown docs

Output:
Answer with citations

Proof:
- source parser logs
- chunk preview page
- eval set with 20 questions
- failure analysis for bad answers
```

The evidence matters. A portfolio reviewer should see not just "RAG app," but how you ingested files, how retrieval worked, and where it failed.

Stage 5: learn evals

Evals are how you stop guessing.

An eval set can be as small as a JSONL file with inputs, expected properties, and grading rules.

Example:

```json
{"input": "I paid twice", "expected_category": "billing", "must_mention": "refund"}
{"input": "The app crashes on upload", "expected_category": "technical", "must_mention": "upload"}
```

Start with deterministic checks:

- valid JSON

- required fields

- exact label match

- citation present

- refusal for unsafe request
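
A few of these checks can be sketched as a tiny runner over the JSONL cases from the example. The output fields `category` and `summary`, the check names, and the `system` callable (whatever function produces your app's raw output) are assumptions for illustration. Citation and refusal checks would follow the same pattern.

```python
import json

EVAL_SET = """\
{"input": "I paid twice", "expected_category": "billing", "must_mention": "refund"}
{"input": "The app crashes on upload", "expected_category": "technical", "must_mention": "upload"}
"""


def check_case(case: dict, raw_output: str) -> list[str]:
    """Return the names of failed checks; an empty list means the case passed."""
    try:
        output = json.loads(raw_output)
    except json.JSONDecodeError:
        return ["valid_json"]
    if not isinstance(output, dict):
        return ["valid_json"]

    failures = []
    for field in ("category", "summary"):
        if field not in output:
            failures.append(f"required_field:{field}")
    if output.get("category") != case["expected_category"]:
        failures.append("exact_label_match")
    if case["must_mention"].lower() not in str(output.get("summary", "")).lower():
        failures.append("must_mention")
    return failures


def run_evals(jsonl_text: str, system) -> dict:
    """Run every case through `system(input) -> raw output string` and summarize."""
    results = {}
    for line in jsonl_text.splitlines():
        if line.strip():
            case = json.loads(line)
            results[case["input"]] = check_case(case, system(case["input"]))
    passed = sum(1 for failed in results.values() if not failed)
    return {
        "passed": passed,
        "total": len(results),
        "failures": {k: v for k, v in results.items() if v},
    }
```

Returning the names of the failed checks, rather than a single pass/fail flag, makes the report useful for failure analysis.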

Then add judge-based evals for cases that need language judgment.

The key is versioning. Save model version, prompt version, dataset version, and judge rubric version. Otherwise you won't know why a score changed.
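
One lightweight way to do this is to store a small record per eval run and compare records when a score moves. The field names below and the idea of fingerprinting the dataset with SHA-256 are assumptions for this sketch, not a standard format.

```python
import hashlib
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class EvalRun:
    model_version: str
    prompt_version: str
    dataset_sha256: str
    judge_rubric_version: str
    score: float


def dataset_fingerprint(jsonl_text: str) -> str:
    """Hash the eval file so any edit to the dataset changes its version."""
    return hashlib.sha256(jsonl_text.encode("utf-8")).hexdigest()


def changed_inputs(before: EvalRun, after: EvalRun) -> list[str]:
    """List which versioned inputs differ between two runs (score excluded)."""
    a, b = asdict(before), asdict(after)
    return [name for name in a if name != "score" and a[name] != b[name]]
```

If `changed_inputs` returns more than one name, the score change cannot be attributed to a single cause, which is a good reason to change one thing per run.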

Stage 6: add agents carefully

Agents are useful when the model needs to use tools, inspect state, or run multiple steps.

They are not a shortcut around product design.

Start with a simple tool loop:

| Tool | Example |
|---|---|
| Search | Find relevant docs. |
| Calculator | Compute totals. |
| Database lookup | Fetch account status. |
| File reader | Inspect uploaded content. |
| Ticket creator | Draft or create a support ticket. |

Then add guardrails:

- loop limits

- tool input schemas

- tool output logs

- human approval for side effects

- retry and fallback path

- prompt injection checks
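
A minimal sketch of a tool loop with several of these guardrails follows. The model is represented by a hypothetical `next_action(history)` callable that returns either a tool request or a final answer, so no real provider or agent framework is implied. The two tools and the action format are assumptions; fallback paths and prompt injection checks are left out.

```python
from typing import Callable


def calculator(args: dict) -> dict:
    return {"total": sum(args["numbers"])}


def create_ticket(args: dict) -> dict:
    return {"ticket_id": "T-1", "title": args["title"]}  # placeholder side effect


# Each tool declares its required arguments and whether it has side effects.
TOOLS = {
    "calculator": {"fn": calculator, "required": {"numbers"}, "side_effect": False},
    "create_ticket": {"fn": create_ticket, "required": {"title"}, "side_effect": True},
}


def run_agent(
    next_action: Callable[[list], dict],  # stand-in for the model's decision
    approve: Callable[[dict], bool],      # human approval hook
    max_steps: int = 5,
) -> tuple[str, list]:
    """Run the loop until the model gives a final answer or the step limit is hit."""
    history = []
    for _ in range(max_steps):  # loop limit
        action = next_action(history)
        if "final" in action:
            return action["final"], history

        tool = TOOLS.get(action.get("tool"))
        args = action.get("args", {})
        if tool is None:
            result = {"error": "unknown tool"}
        elif not isinstance(args, dict) or not tool["required"].issubset(args):
            result = {"error": "invalid arguments"}  # tool input schema check
        elif tool["side_effect"] and not approve(action):
            result = {"error": "not approved"}  # human approval for side effects
        else:
            result = tool["fn"](args)
        history.append({"action": action, "result": result})  # tool output log
    return "stopped: step limit reached", history
```

Errors are fed back into `history` instead of raising, so the model (or a test script) can see why a call was refused and choose a different step.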

OWASP's LLM security guidance is worth reading early because prompt injection and sensitive information disclosure show up quickly once tools and documents enter the system.[4]

Stage 7: deploy and operate

A hire-ready AI engineer can ship.

Docker is one common way to package an app so the runtime is reproducible across machines and deployment targets.[5]

Your deploy checklist should include:

| Check | What to show |
|---|---|
| Env vars | No secrets in git. |
| Health route | Service can be checked without model call. |
| Logs | Trace ID connects request to model call. |
| Errors | Timeouts and invalid outputs are visible. |
| Cost | Token usage grouped by feature. |
| Rollback | Known working commit or image. |

This is where many AI demos fail.

They work locally once, but they don't have logs, tests, or a path to debug production failures.
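
Three items from the checklist (health route, trace IDs, and cost grouped by feature) can be sketched with the standard library alone. The function names, the log format, and the per-token price passed to `estimated_cost_usd` are hypothetical; real prices come from your provider's pricing page.

```python
import logging
import uuid
from collections import defaultdict

logger = logging.getLogger("ai_app")


def new_trace_id() -> str:
    """Create one ID per request and pass it to every log line and model call."""
    return uuid.uuid4().hex


def health() -> dict:
    """Health check body: reports liveness without calling the model."""
    return {"status": "ok"}


class UsageLedger:
    """Accumulate token usage per feature so cost can be reported by feature."""

    def __init__(self) -> None:
        self._tokens = defaultdict(int)

    def record(self, feature: str, trace_id: str,
               input_tokens: int, output_tokens: int, latency_ms: float) -> None:
        self._tokens[feature] += input_tokens + output_tokens
        logger.info(
            "trace_id=%s feature=%s input_tokens=%d output_tokens=%d latency_ms=%.0f",
            trace_id, feature, input_tokens, output_tokens, latency_ms,
        )

    def tokens_by_feature(self) -> dict:
        return dict(self._tokens)

    def estimated_cost_usd(self, usd_per_1k_tokens: float) -> dict:
        """Rough estimate using one flat, caller-supplied price per 1,000 tokens."""
        return {f: t / 1000 * usd_per_1k_tokens for f, t in self._tokens.items()}
```

In a web framework, `health` would back the health route and `new_trace_id` would run once per incoming request.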

What to study in order

Here is the shortest useful path:

- Python, Git, terminal, tests.

- HTTP, JSON, APIs, and backend routes.

- Prompting and structured outputs.

- Production model calls with retries and validation.

- One full AI app.

- File ingestion and chunking.

- Embeddings, retrieval, reranking, and citations.

- Evals and regression tracking.

- Agents and tool use.

- Deployment, observability, privacy, and security.

- Portfolio projects with design docs.

- Interview practice from your own projects.

Don't rush the early layers.

Every later AI system depends on the same boring foundations: parse input, validate output, save state, test behavior, and debug failure.

What "hire-ready" means

Hire-ready doesn't mean you know every paper.

It means you can build a useful AI system and explain it.

You should be able to show:

- a working app

- a clean repository

- tests

- an eval report

- deployment notes

- a cost estimate

- a failure analysis

- design trade-offs

- next steps

You should also be able to answer practical questions:

- Why did you choose RAG instead of fine-tuning?

- How do you know the model improved?

- What happens when the provider times out?

- Where could prompt injection enter?

- What data do you log?

- How would you roll back a bad prompt?

Those questions come from real work.

Common traps

| Trap | Better move |
|---|---|
| Starting with model news. | Build one working request path. |
| Copying agent frameworks without understanding the loop. | Implement a small tool loop yourself. |
| Building RAG before ingestion quality. | Parse and inspect documents first. |
| Trusting model output because it looks right. | Validate and evaluate. |
| Shipping a demo with no tests. | Mock the model and test your app code. |
| Hiding limitations. | Write a real failure analysis. |

The LeetLLM path

LeetLLM is built to support this exact journey:

- beginner programming and math foundations

- prompting and LLM lifecycle

- API production patterns

- first app build

- file ingestion and chunking

- RAG and agents

- evals and monitoring

- capstone projects

- hard system design

The goal is not to memorize terms.

The goal is to become the person who can take an unclear product need, design the AI path, build the first version, measure it, and improve it.

That is the job.

References

[1] The Python Tutorial. Python Software Foundation · 2026 · Python Documentation

[2] Structured outputs. OpenAI · 2024

[3] FastAPI Documentation. FastAPI Project · 2026 · Official documentation

[4] OWASP Top 10 for Large Language Model Applications. OWASP Foundation · 2025

[5] Docker Documentation. Docker Inc. · 2026 · Official documentation

[6] Artificial Intelligence Risk Management Framework (AI RMF 1.0). National Institute of Standards and Technology · 2023 · NIST