AI Engineer Salary Guide 2026

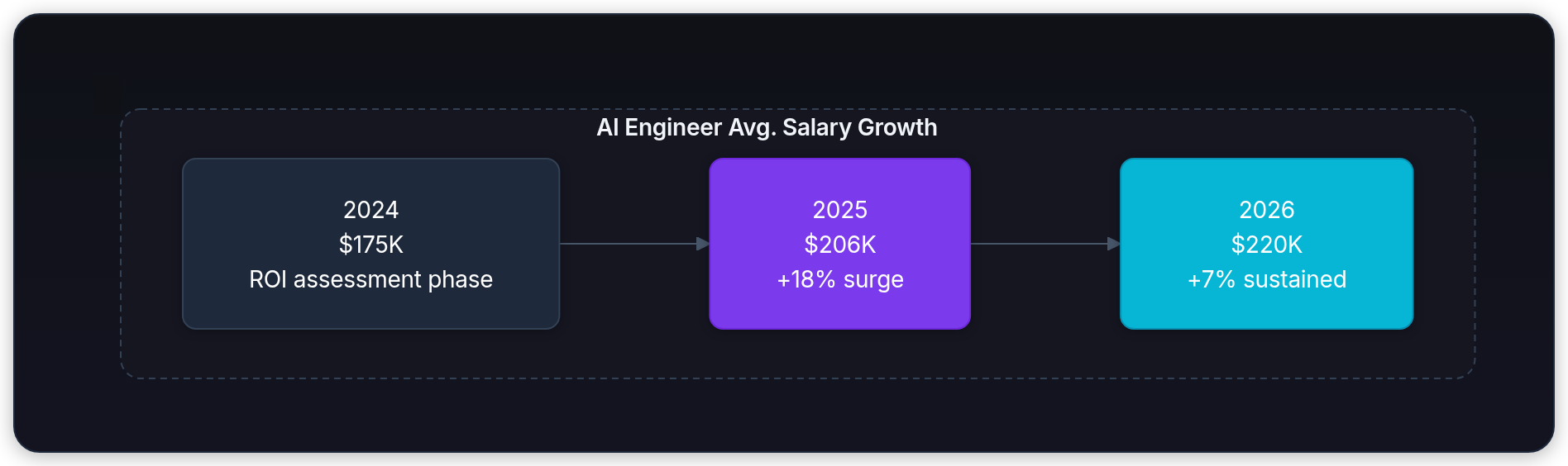

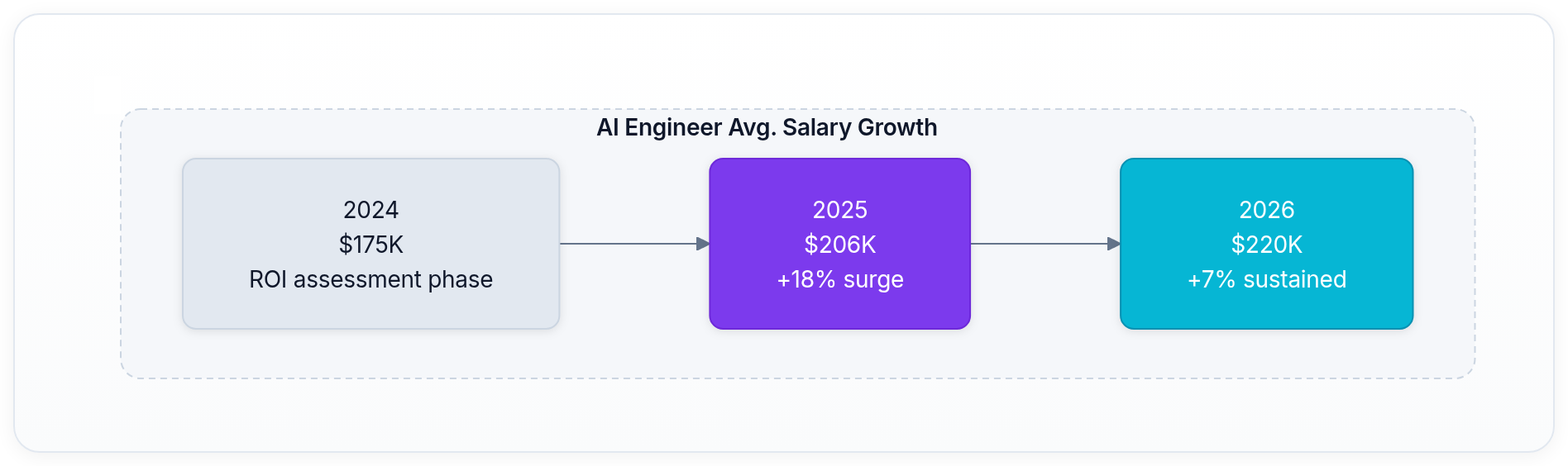

AI engineering pay is not one market. This guide uses public 2026 job postings, Levels.fyi's verified self-reported compensation data, and H-1B salary records to benchmark offers by level, company tier, location policy, and technical scope.

LeetLLM Team · March 16, 2026 · Updated April 22, 2026 · 15 min read

Generic salary sites flatten the market too much. They treat "AI engineer" like a standard title, when in practice 2026 compensation depends far more on scope, systems depth, and company tier than on the words in the job title.

Public 2026 listings make that spread obvious. Google's AI/ML early-career software engineer posting lists a US base range of $141K-$202K plus bonus and equity. OpenAI's public Research Engineer, AI for Science role lists $295K-$445K plus equity in San Francisco. Anthropic's currently posted ML and research engineering roles span $350K-$500K base, and some systems-heavy RL or agent roles land at $500K-$850K base.[1] [2] [3] [4] [5]

Levels.fyi's verified, self-reported compensation data tells the same story. It currently shows median total compensation of about $290K for Google machine learning engineers, about $430K for Meta machine learning engineers, and $555K for OpenAI software engineers in the US.[6] [7] [8]

Key idea: If you're still mapping the role itself, read "What Does an AI Engineer Actually Do?" first. Compensation makes much more sense once you separate product ML, research engineering, agent systems, and ML infrastructure work.

How to benchmark AI compensation in 2026

Three rules make salary data much less confusing:

- Benchmark the job family, not the title. "AI engineer" is too broad to be useful on its own. Research engineer, ML systems engineer, machine learning engineer, and applied AI engineer can sit on very different pay curves.

- Separate base salary from total compensation. Public job postings and H-1B records mostly show base pay. Senior compensation often swings much harder on equity, sign-on, and refreshers.

- Treat frontier-lab numbers as ceiling anchors, not defaults. Anthropic's published salary bands and OpenAI's high-end compensation data are real, but they are not the whole market.[4] [5] [8]

Here's a fast calibration point using live 2026 public data:

Those numbers already tell you something important: once you move into competitive ML tracks, equity matters a lot.

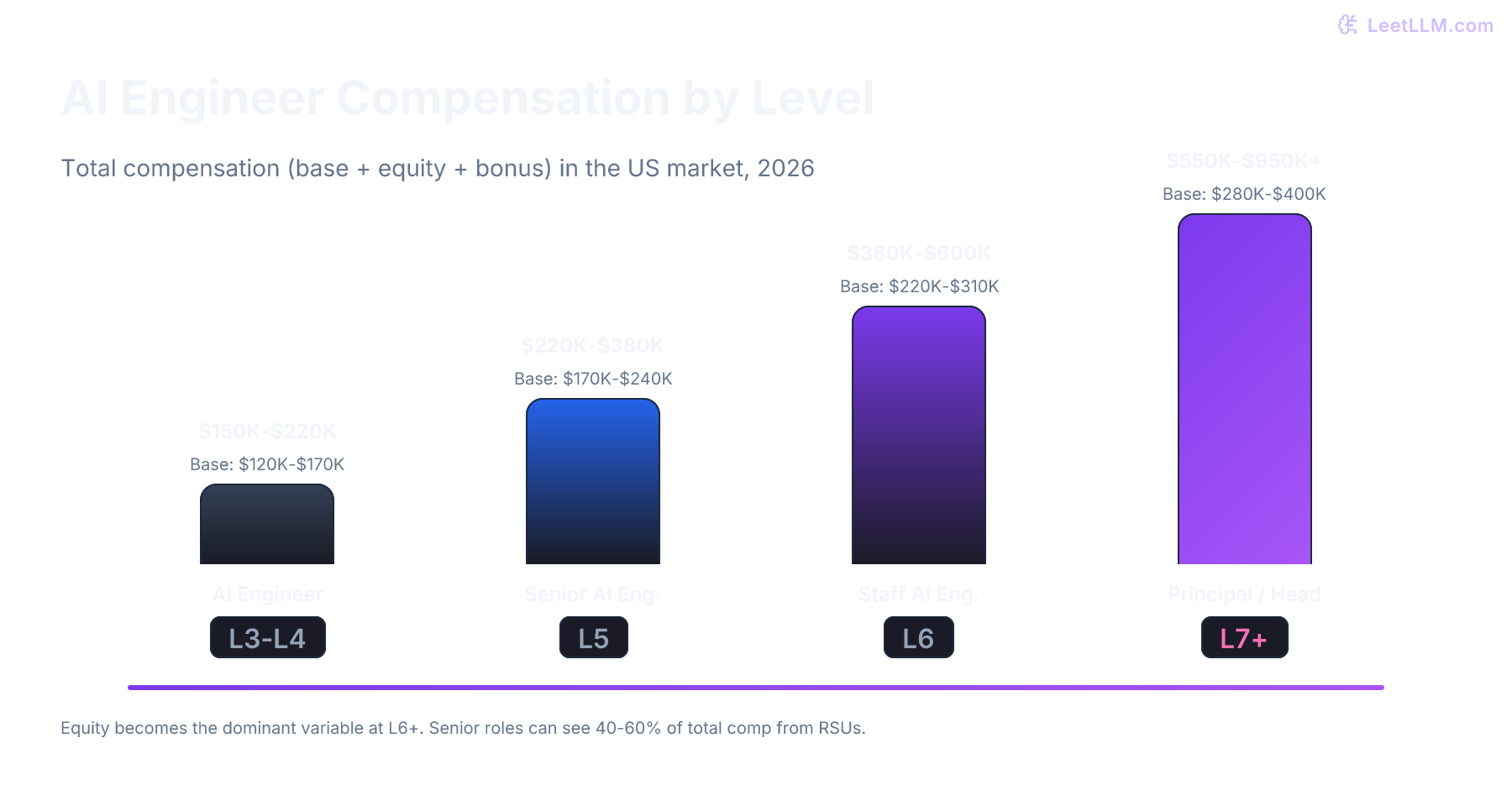

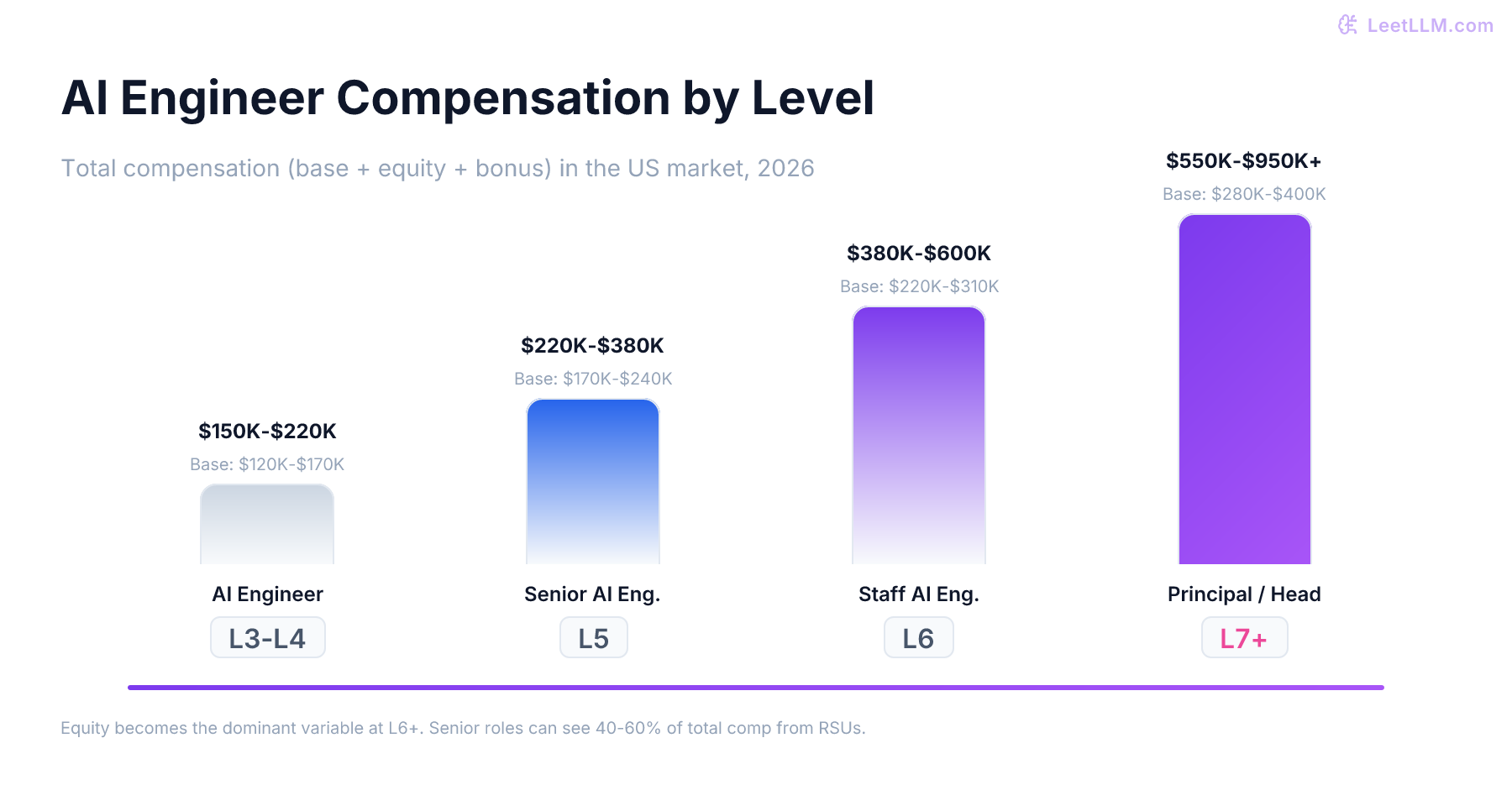

Salary by level

The table below is a practical US benchmark for technical AI roles in 2026. It synthesizes public job postings, Levels.fyi's verified self-reported compensation data, and H-1B base salary disclosures. It is not a single national pay band, and it is not meant to cover every non-technical "AI" title.[1] [6] [7] [8] [9]

| Level | Base Salary | Practical Total Comp | What Usually Gets You There |
|---|---|---|---|
| Early career (0-2 years) | $140K-$200K | $180K-$300K | Strong fundamentals, production coding, one real ML or LLM system shipped |
| Mid-level (3-5 years) | $180K-$250K | $250K-$400K | Owning a model-backed service end to end, shipping evals, debugging production failures |
| Senior (6-9 years) | $220K-$320K | $350K-$650K | Leading architecture, inference cost reduction, reliability work, multi-team delivery |
| Staff / Principal | $260K-$500K+ | $500K-$1.2M+ | Organization-level technical leadership, infra ownership, frontier-model or large-scale ML scope |

A few patterns matter more than the exact row boundaries:

- Base salary rises steadily, but equity widens much faster. That is why senior and staff packages spread out so much.

- The biggest comp jump is usually from mid-level to senior. Going from "I can build a feature" to "I can own the system and defend its trade-offs" changes the offer more than another year of experience alone.

- Staff-plus compensation is a different market. At that point companies are paying for technical leadership, systems judgment, and cross-team impact, not just implementation speed.
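One way to use the benchmark table is to check which rows an offer's total compensation falls into and how far up a base band it sits. The sketch below is illustrative only: the `Band` class and both helper functions are hypothetical names, the figures are copied from the table in thousands of USD, and the open-ended "+" on the Staff / Principal row is treated as the printed number rather than a real ceiling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Band:
    level: str
    base_low: int   # USD thousands
    base_high: int
    tc_low: int
    tc_high: int


# Figures copied from the benchmark table (USD thousands).
# Staff / Principal is open-ended in the table; the upper bounds here
# are only the printed numbers, not hard ceilings.
BANDS = [
    Band("Early career", 140, 200, 180, 300),
    Band("Mid-level", 180, 250, 250, 400),
    Band("Senior", 220, 320, 350, 650),
    Band("Staff / Principal", 260, 500, 500, 1200),
]


def position_in_band(value: float, low: float, high: float) -> float:
    """Fraction of the way from low to high (below 0 or above 1 means outside)."""
    return (value - low) / (high - low)


def matching_levels(total_comp_k: float) -> list[str]:
    """Levels whose practical total-comp range contains the offer."""
    return [b.level for b in BANDS if b.tc_low <= total_comp_k <= b.tc_high]


if __name__ == "__main__":
    # Hypothetical offer: $230K base, $380K total compensation.
    print(matching_levels(380))
    mid = BANDS[1]
    print(f"{position_in_band(230, mid.base_low, mid.base_high):.0%} of the mid-level base band")
```

Because the total-comp ranges overlap between adjacent rows, a single offer can match two levels. That overlap is a cue to ask the recruiter which level the offer is actually mapped to.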

Company tier and live market anchors

Frontier labs

This is the top of the published market right now.

- OpenAI: its public Research Engineer, AI for Science role lists $295K-$445K plus equity, is posted in San Francisco, and uses a hybrid model of three in-office days per week.[2]

- Anthropic: its ML/Research Engineer, Safeguards role lists $350K-$500K base. Its Research Engineer, Agents role lists $500K-$850K base. Its Machine Learning Systems Engineer, RL Engineering role also lists $500K-$850K base.[3] [4] [5]

- Verified comp: Levels.fyi's verified, self-reported data currently shows OpenAI software engineers at a $555K median US total compensation, with L4 averaging $569K and L5 averaging about $1.2M.[8]

The catch is equally real: these companies hire against a much narrower funnel. Distributed systems work, frontier-scale model development, agent evaluation, and ML systems performance all show up more often than generic app-layer AI work.

Big tech

Big tech is usually the cleanest place to benchmark because the levels are more legible and the equity is liquid.

- Google: Levels.fyi currently shows machine learning engineer compensation at $199K for L3, $295K for L4, $398K for L5, and $593K for L6, with about a $290K median across the role.[6]

- Meta: Levels.fyi currently shows machine learning engineer compensation at $187K for E3, $326K for E4, $506K for E5, and $786K for E6, with about a $430K median across the role.[7]

- Base-only reality check: Meta's 2024 H-1B filings for the specific title "Machine Learning Engineer" show a $209,720 median base salary across 43 records. That gap between $209,720 base and roughly $430K verified total comp is exactly why base-only datasets understate the real market.[9] [7]

Reality check: When a comp source omits stock and bonus, you're not looking at the whole market. For senior AI roles, that missing piece can easily be six figures a year.
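The arithmetic behind that gap can be made explicit. The sketch below uses only the two Meta figures quoted above. The function name is hypothetical, and the comparison is rough by construction: the H-1B median is from 2024 filings and the Levels.fyi median is a current figure, so the two samples do not cover the same people or the same year.

```python
def base_share(base: float, total_comp: float) -> float:
    """Fraction of total compensation that is base salary."""
    return base / total_comp


# Figures quoted in this guide (USD).
META_H1B_MEDIAN_BASE = 209_720    # 2024 H-1B filings, 43 records
META_LEVELS_MEDIAN_TC = 430_000   # approximate Levels.fyi median total comp

gap = META_LEVELS_MEDIAN_TC - META_H1B_MEDIAN_BASE
share = base_share(META_H1B_MEDIAN_BASE, META_LEVELS_MEDIAN_TC)

if __name__ == "__main__":
    print(f"Gap not visible in base-only data: ${gap:,}")
    print(f"Base as a share of total comp: {share:.0%}")
```

On these inputs, base pay accounts for a bit under half of the reported total, so a base-only dataset leaves the larger half of the package out of view.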

If you want a stable anchor for negotiation, big tech numbers are often the most useful. They are easier to level-map, easier to compare, and easier to convert into expected liquid compensation over four years.
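A naive way to do that four-year conversion is sketched below. It is a simplification under stated assumptions: base and bonus stay flat, there are no refreshers or raises, and the stock grant is scaled by a single hypothetical `stock_multiplier` that stands in for share-price movement. The function name and parameters are illustrative, and the example inputs are the Google L6 figures from Levels.fyi cited later in this guide.

```python
def four_year_value(
    base: float,
    stock_per_year: float,
    bonus: float,
    sign_on: float = 0.0,
    stock_multiplier: float = 1.0,
    years: int = 4,
) -> float:
    """Cumulative compensation over `years`, assuming flat pay and no refreshers.

    stock_multiplier scales the equity portion only, e.g. 0.7 models a
    30% lower share price over the whole period.
    """
    cash = years * (base + bonus) + sign_on
    equity = years * stock_per_year * stock_multiplier
    return cash + equity


if __name__ == "__main__":
    # Google L6 ML Engineer figures quoted in this guide (USD).
    flat = four_year_value(270_000, 296_000, 26_700)
    down = four_year_value(270_000, 296_000, 26_700, stock_multiplier=0.7)
    print(f"Flat share price: ${flat:,.0f}")
    print(f"Share price down 30%: ${down:,.0f}")
```

Running the same function with a few different multipliers shows how much of a four-year number depends on the equity assumption rather than on the salary line.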

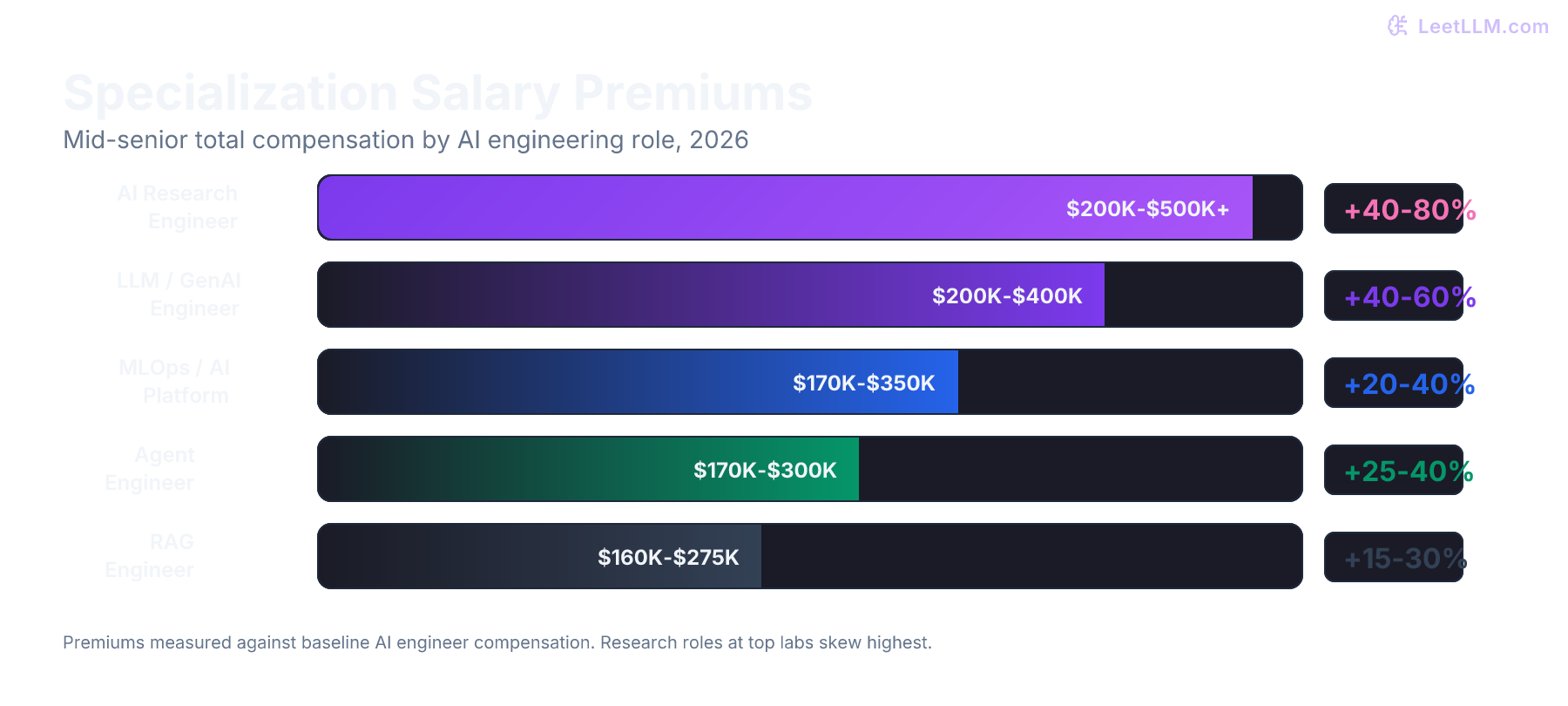

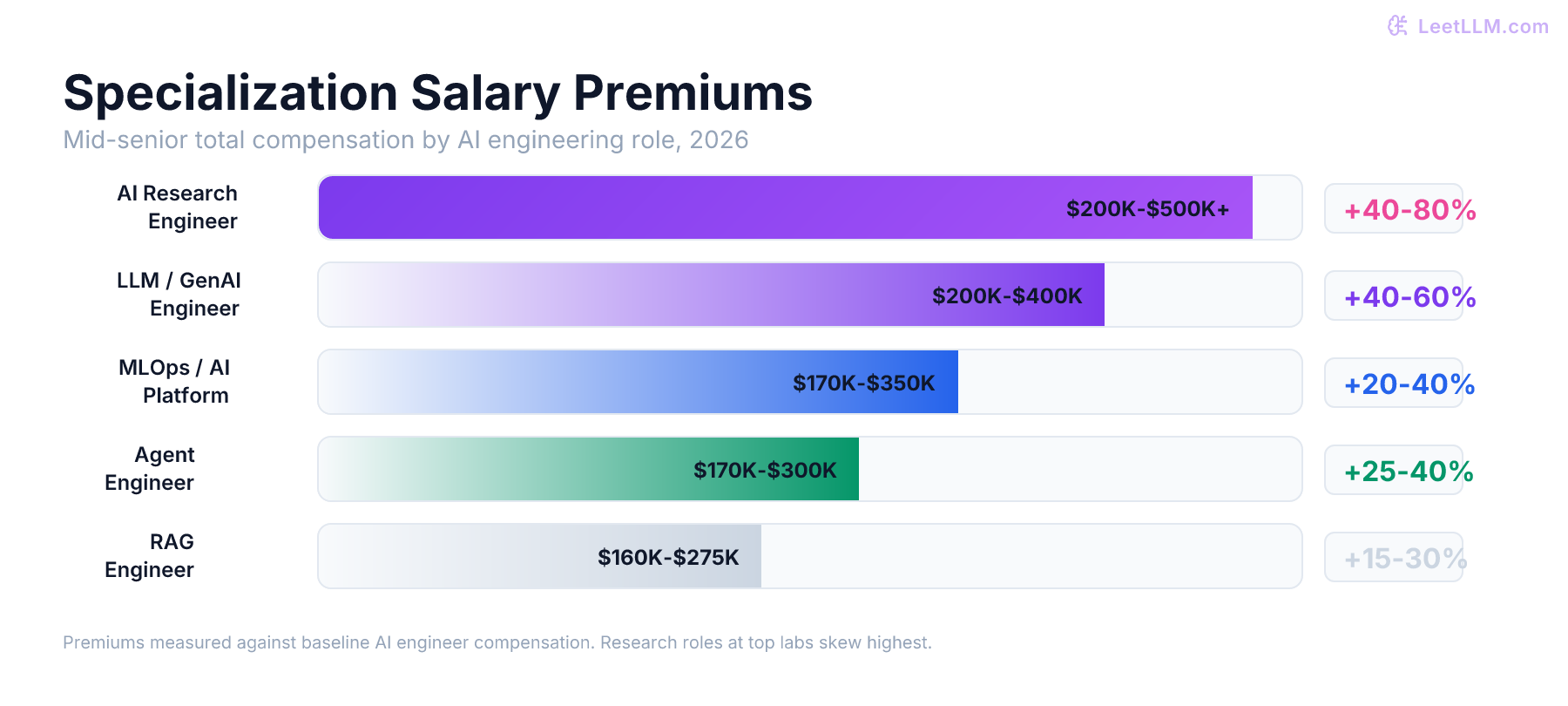

The skills that stretch the band

The public high end is not evenly distributed across AI work. It clusters around technical bottlenecks that are hard to hire for.

| Skill Cluster | Why It Pays | Public 2026 Anchor |
|---|---|---|
| ML systems and RL infrastructure | These engineers keep clusters productive, training runs stable, and expensive hardware busy. | Anthropic's ML Systems Engineer, RL Engineering role lists $500K-$850K base.[5] |
| Agent systems and evaluation | Companies now pay for engineers who can make agent workflows measurable, debuggable, and reliable. | Anthropic's Research Engineer, Agents role lists $500K-$850K base.[4] |
| Safety and safeguards ML | Production misuse detection, classifiers, adversarial robustness, and red-teaming sit close to deployment risk. | Anthropic's ML/Research Engineer, Safeguards role lists $350K-$500K base.[3] |
| Production ML at scale | This is the broad big-tech track: training, deployment, ranking, retrieval, and serving in large products. | Google ML engineer median TC is about $290K. Meta ML engineer median TC is about $430K.[6] [7] |

Notice what is mostly missing from the public top end: vague "prompt engineer" work with no systems ownership. The strongest compensation clusters around the places where model quality, infra reliability, evaluation, and compute efficiency meet.

If you're studying toward the next compensation band, the durable technical areas are still the same: KV cache mechanics, distributed training, agentic architectures, and evaluation frameworks.

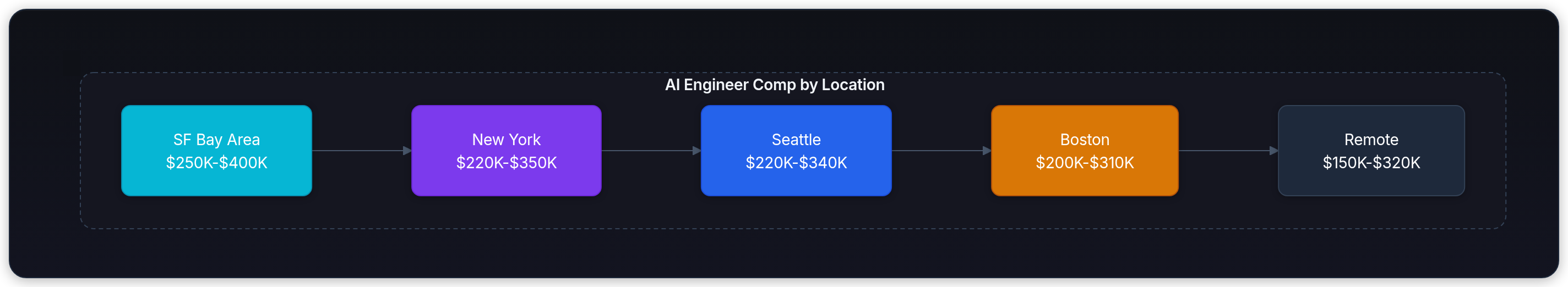

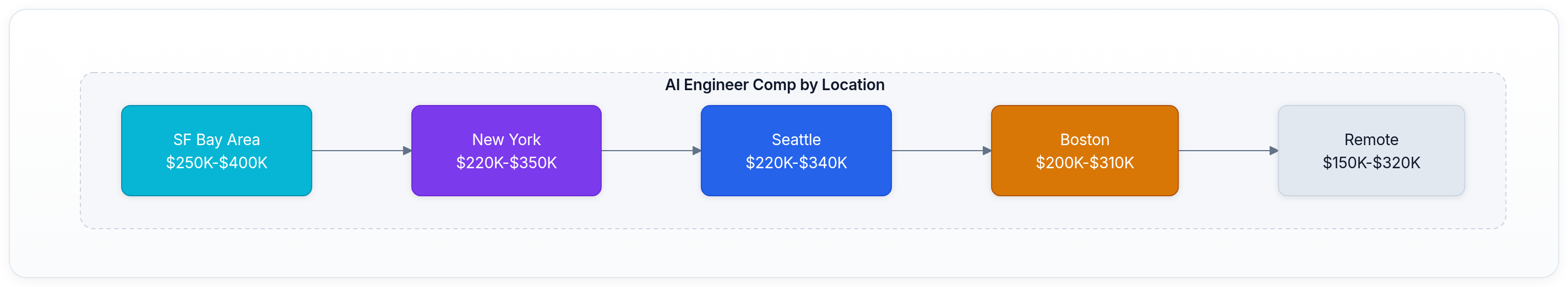

Location still changes the package

Remote work changed the conversation, but it did not erase geography.

The practical 2026 rules are:

- Google says explicitly that pay within the posted band depends on work location, skills, and experience.[1]

- Anthropic currently states on the listed ML and research roles that staff are expected to be in an office at least 25% of the time.[4] [5] [3]

- OpenAI publishes the AI for Science role used in this article with a $295K-$445K cash band, as a San Francisco position, and says employees are expected in the office three days per week unless a role is designated remote.[2]

So yes, location still matters. The highest public cash bands remain concentrated around top US tech hubs and employers that are comfortable paying for scarce ML systems talent there. In negotiation, ask whether the salary band, sign-on, and refreshers are anchored to a specific office, a state, or a national band.

Base salary is only half the story

This is where many technical candidates misread the market.

| Example | Base | Stock / Year | Bonus | Total |
|---|---|---|---|---|
| Google L6 ML Engineer | $270K | $296K | $26.7K | $593K |
| Meta E5 ML Engineer | $229K | $242K | $34.7K | $506K |
| OpenAI L4 Software Engineer | $255K | $315K | $0 | $569K |

All three examples come from current Levels.fyi pages for 2026.[6] [7] [8]

That has two direct consequences:

- Base-only datasets are useful, but incomplete. H-1B filings are good for sanity-checking cash salary floors and medians. They are bad proxies for total compensation in public tech and frontier AI.

- Equity policy matters almost as much as salary. Vesting schedule, refresher cadence, grant size, and company liquidity can move the package by six figures a year at senior levels.
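The three example rows above can be decomposed to show how much of each package is base versus equity. This is a minimal sketch: the figures are copied from the table in thousands of USD, the `breakdown` helper is a hypothetical name, and the component sums differ from the listed totals by up to about $1K because the published numbers are rounded.

```python
# (label, base, stock per year, bonus, listed total), all in USD thousands,
# copied from the example table in this guide.
ROWS = [
    ("Google L6 ML Engineer", 270.0, 296.0, 26.7, 593.0),
    ("Meta E5 ML Engineer", 229.0, 242.0, 34.7, 506.0),
    ("OpenAI L4 Software Engineer", 255.0, 315.0, 0.0, 569.0),
]


def breakdown(base: float, stock: float, bonus: float) -> dict[str, float]:
    """Sum the components and report base and equity as fractions of the sum."""
    total = base + stock + bonus
    return {
        "total": total,
        "base_share": base / total,
        "equity_share": stock / total,
    }


if __name__ == "__main__":
    for label, base, stock, bonus, listed in ROWS:
        b = breakdown(base, stock, bonus)
        print(
            f"{label}: components sum to ${b['total']:.1f}K "
            f"(listed ${listed:.0f}K), base {b['base_share']:.0%}, "
            f"equity {b['equity_share']:.0%}"
        )
```

In all three rows, base salary comes out below half of the summed package, which is the pattern a base-only dataset cannot show.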

Negotiation tactics for technical candidates

If you're interviewing for serious AI roles, treat compensation prep like system design prep.

- Benchmark the real peer set. Compare yourself against machine learning engineers, research engineers, or ML systems engineers, whichever is closest to the actual scope.

- Quantify technical impact in business terms. "Reduced p95 latency by 28%" is good. "Reduced p95 latency by 28%, which cut GPU cost per request by 19%" is far better.

- Ask reverse-interview questions. Ask what actually hurts today: inference cost, eval reliability, training throughput, safety review, data freshness, or agent failure recovery.

- Negotiate the whole package. Base, sign-on, annual bonus target, equity grant, vesting schedule, refresher policy, and location adjustments all matter.

- Refresh your anchor numbers before the offer call. Salary pages and public job listings move. Bring current data, not screenshots from last year.

For a technical reader, the best negotiation asset is still a strong impact log. Keep a record of throughput gains, cost reductions, eval lifts, incident reductions, and deployment scale. Those are the metrics that travel across companies.

Key takeaways

- AI compensation in 2026 is not one market. Product ML, research engineering, and ML systems work sit on different curves.

- Public job postings currently span from Google's $141K-$202K base for an early-career AI/ML software engineer role to Anthropic's $500K-$850K systems-heavy agent and RL roles, with OpenAI's public AI for Science role listing $295K-$445K plus equity in San Francisco.[1] [4] [5] [2]

- Levels.fyi's verified, self-reported total compensation data is already much higher than base salary alone: Google ML median is about $290K, Meta ML median is about $430K, and OpenAI software engineer median is $555K.[6] [7] [8]

- The biggest pay jumps come from systems ownership: training infrastructure, inference efficiency, agent evaluation, and measurable production impact.

- H-1B and public pay bands are useful inputs, but they systematically miss the part of the package that gets largest at senior levels.

Where the numbers come from

This guide uses public job postings, Levels.fyi's verified self-reported compensation pages, and H-1B salary records viewed on April 22, 2026. These pages change frequently, so you should re-check the live listing before you use any single number in a negotiation.

References

[1] Software Engineer, PhD, Early Career, AI/Machine Learning, 2026 Start. Google Careers, 2026.

[2] Research Engineer, AI for Science. OpenAI Careers, 2026.

[3] ML/Research Engineer, Safeguards. Anthropic Careers, 2026.

[4] Research Engineer, Agents. Anthropic Careers, 2026.

[5] Machine Learning Systems Engineer, RL Engineering. Anthropic Careers, 2026.

[6] Google Machine Learning Engineer Salary. Levels.fyi, 2026.

[7] Meta Machine Learning Engineer Salary. Levels.fyi, 2026.

[8] OpenAI Software Engineer Salary. Levels.fyi, 2026.

[9] Machine Learning Engineer @ Meta Platforms's H-1B Salary 2024. H1B Salary Database, 2024.