BlogAI Engineer Portfolio Projects That Get Interviews

🏷️ Career🏷️ Portfolio🏷️ Projects

AI Engineer Portfolio Projects That Get Interviews

Five portfolio projects that prove real AI engineering skill: shipped demos, eval reports, traces, cost notes, tests, and design docs.

LeetLLM TeamMay 9, 202617 min read

Most AI portfolios fail because they show a demo and nothing else.

The app may look good, but a hiring team can't tell whether the candidate understands evaluation, failure modes, cost, deployment, data quality, or security. A wrapper around an LLM API is not enough proof.

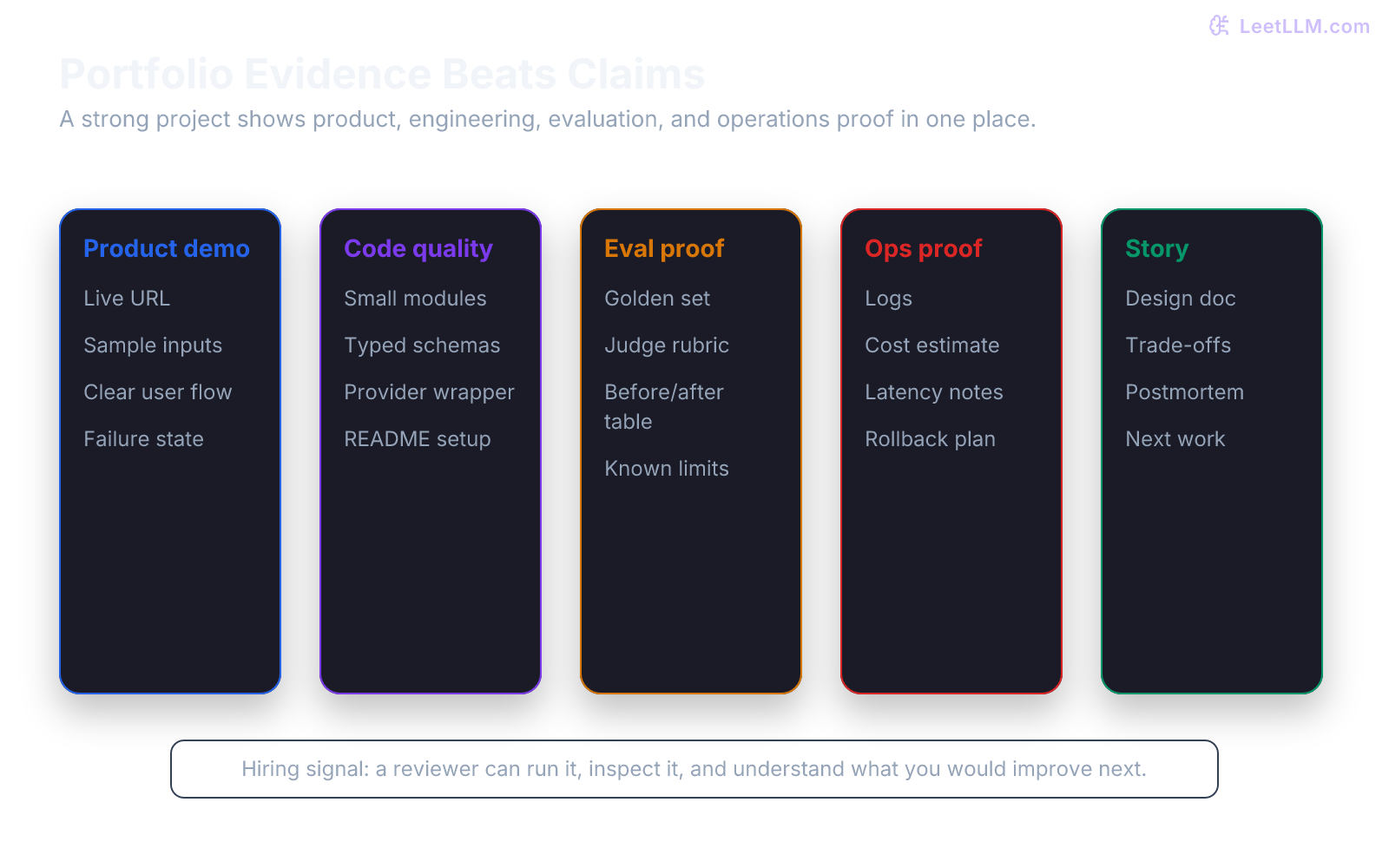

A strong AI engineering portfolio shows evidence.

It lets a reviewer run the product, inspect the code, read the design trade-offs, see eval results, and understand what still needs work.

What hiring teams want to see

Hiring teams don't need another "chat with your PDF" clone with no explanation.

They want signals that transfer to real work:

| Signal | Proof |

|---|---|

| Product judgment | The app solves a clear user problem. |

| Engineering skill | The repo has tests, setup docs, typed schemas, and small modules. |

| AI judgment | The model path has evals, prompts, failure analysis, and citations. |

| Operations | The app has logs, cost notes, timeouts, and deployment notes. |

| Security | The design handles private data and prompt injection risk. |

| Communication | The README explains trade-offs without hype. |

Your portfolio should answer one question:

Would this person be useful on a team building AI software?

Project 1: Document QA with ingestion proof

This is the classic RAG project, but the bar is higher than uploading a PDF and asking questions.

Build a document QA app that shows the ingestion path.

Required proof:

- file parser logs

- chunk preview page

- source citations

- eval set with questions and expected sources

- failure analysis for bad answers

- design doc explaining parser choices

Use a small corpus, such as:

- your own project docs

- public handbook

- small policy set

- open documentation folder

- course notes

Don't use private documents in a public demo.

What makes it strong

Weak version:

text1Upload PDF. Ask question. Get answer.

Strong version:

text1Upload PDF. 2Inspect extracted pages. 3Remove repeated headers and footers. 4Preview chunks. 5Ask question. 6Show answer with citations. 7Run evals. 8Explain failed cases.

This proves you understand that RAG quality starts before the model call.

Project 2: Eval dashboard

An eval dashboard is one of the strongest portfolio projects because it proves measurement.

Build a small app that compares prompt or model versions over a fixed dataset.

Dataset row:

json1{ 2 "id": "refund_001", 3 "input": "I paid twice for order A-102.", 4 "expected_category": "billing", 5 "must_mention": "refund" 6}

Dashboard columns:

| Column | Why it matters |

|---|---|

| Dataset version | Prevents hidden test drift. |

| Prompt version | Connects score to prompt history. |

| Model version | Explains model changes. |

| Judge rubric version | Makes judge changes visible. |

| Pass/fail | Gives a quick quality signal. |

| Failure reason | Turns score into action. |

| Latency and tokens | Connects quality to cost. |

ML systems often accumulate hidden technical debt when data, code, models, and evaluation criteria drift independently.[1] A dashboard that tracks versions shows you understand that problem.

What makes it strong

Include:

- JSONL eval dataset

- deterministic checks

- optional LLM judge with rubric

- side-by-side version comparison

- CSV export

- short report: "What changed and why"

This project can be small and still impressive.

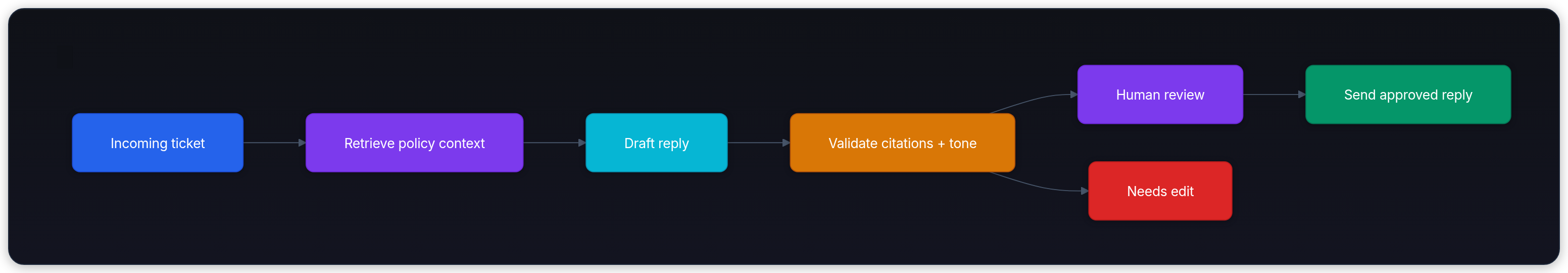

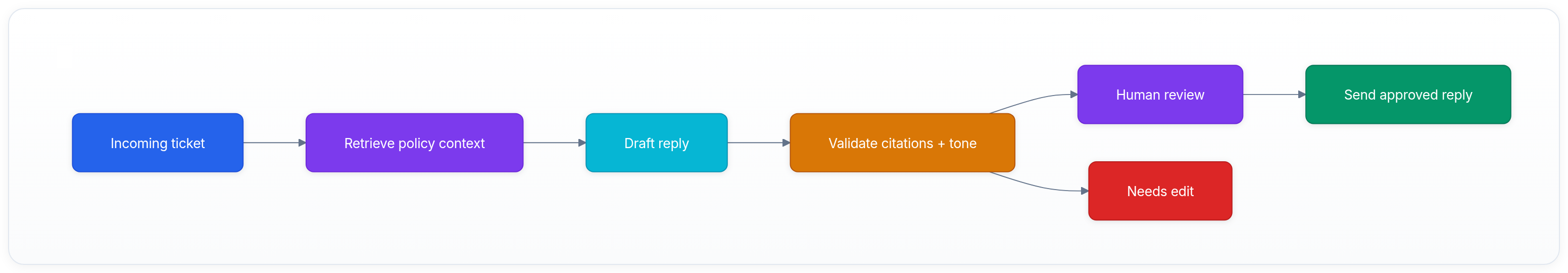

Project 3: Support copilot with human review

A support copilot drafts replies, but a human approves them.

This is more realistic than a fully autonomous support agent.

Workflow:

Required proof:

- policy source citations

- editable draft UI

- approval state

- rejection reason

- prompt version

- sample audit log

- tests for unsafe or unsupported answer

This project shows product maturity because it doesn't pretend the model should send everything on its own.

Project 4: AI coding assistant for one repo

Build a small repo assistant that answers questions about a codebase or drafts safe changes.

Keep it scoped.

Good scope:

text1Assistant reads one repository. 2It answers architecture questions with file citations. 3It suggests test locations. 4It drafts a patch only for one requested file. 5It never runs shell commands without explicit approval.

Bad scope:

text1Autonomous engineer that can fix anything.

Use code-search, file reading, and maybe a small agent loop. If you include code editing, show a diff review step.

SWE-bench exists because real repository tasks are harder than isolated code questions.[2] Your project should show that you respect repository context, tests, and review.

Required proof:

- file citations

- task scope

- generated diff

- review checklist

- tests or dry-run mode

- prompt injection note for repository text

Project 5: Cost and latency optimizer

Many teams care about AI cost and user experience.

Build a tool that compares model-call strategies:

- direct call

- shorter prompt

- prompt caching

- batch processing

- smaller model for simple cases

- retrieval before generation

Show:

| Metric | Example |

|---|---|

| p50 latency | 720 ms |

| p95 latency | 2.4 s |

| input tokens | 1,240 |

| output tokens | 180 |

| estimated cost | per 1,000 requests |

| error rate | timeout or invalid response |

| quality score | eval pass rate |

Don't invent precision you don't have. If costs depend on provider pricing, say when the estimate was made and link the pricing source or config.

This project proves that you understand trade-offs, not just model prompts.

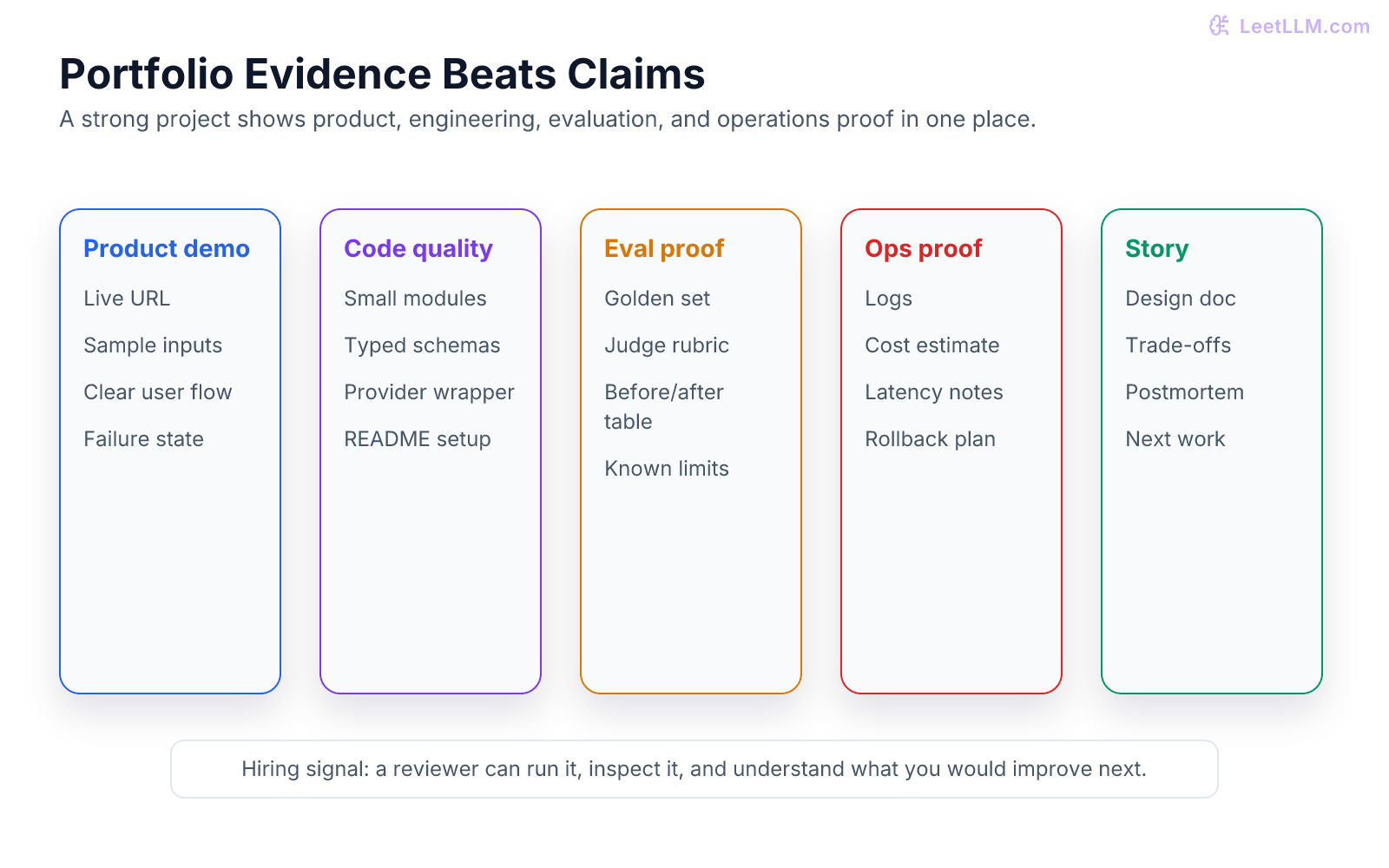

The minimum repo bar

Every portfolio project should have:

| Artifact | What it proves |

|---|---|

| README | A reviewer can run it. |

.env.example | Secrets aren't committed. |

| Tests | Behavior can be checked. |

| Eval data | Quality can be measured. |

| Design doc | You can explain trade-offs. |

| Screenshots or video | The product exists. |

| Known limits | You understand failure modes. |

| Deployment notes | You can ship. |

Use Docker only when it helps the reviewer run the app or understand deployment. Docker's docs are the right source for container basics and image practices.[3]

If a project requires paid model access, include a mocked mode so tests and demos still work.

What to write in the README

Use this structure:

markdown1# Project name 2 3## What it does 4One paragraph user story. 5 6## Demo 7Link or screenshots. 8 9## Architecture 10Short diagram and component list. 11 12## Setup 13Commands and environment variables. 14 15## Evaluation 16Dataset, metrics, and latest result table. 17 18## Failure modes 19Known misses and how you would improve them. 20 21## Security and privacy 22What data is logged, stored, and redacted. 23 24## Trade-offs 25Why this design, not other options.

This turns a project from a demo into evidence.

What not to build

Avoid projects that are hard to review:

| Weak project | Why it underperforms |

|---|---|

| Generic chatbot | No clear product problem. |

| PDF chat with no citations | Can't verify answers. |

| Agent that can do anything | Scope is too broad to trust. |

| Prompt collection | Not enough engineering evidence. |

| Local model benchmark with no method | Numbers aren't meaningful. |

| App with no tests | Reviewer can't tell if it works. |

You can still build these for learning.

For hiring, turn them into proof:

- add an eval set

- add citations

- add logs

- add tests

- add a design doc

- add failure analysis

Interview talking points

A good portfolio gives you stories for interviews.

For each project, prepare answers to:

- What user problem did you choose?

- What did the model do well?

- Where did it fail?

- How did you measure quality?

- What did you log?

- How did you handle invalid output?

- How would you reduce cost?

- How would you handle private data?

- What would you rebuild after another month?

If you can't answer those, the project isn't done.

A simple ranking

If you can only build two projects, build these:

- Document QA with ingestion proof.

- Eval dashboard.

If you can build four, add:

- Support copilot with human review.

- AI coding assistant for one repo.

If you want one operations-heavy project, add:

- Cost and latency optimizer.

This mix proves product work, retrieval, evaluation, agents, and operations.

Final advice

Don't build huge.

Build inspectable.

A small app with tests, evals, traces, and an honest failure report beats a broad demo that nobody can verify.

The best portfolio projects feel like a pull request from someone you would trust on a real AI engineering team.

References

FastAPI Documentation.

FastAPI Project. · 2026 · Official documentation

Docker Documentation.

Docker Inc. · 2026 · Official documentation

Structured outputs

OpenAI · 2024

SWE-bench: Can Language Models Resolve Real-World GitHub Issues?

Jimenez et al. · 2024 · ICLR 2024

OWASP Top 10 for Large Language Model Applications

OWASP Foundation · 2025

Hidden Technical Debt in Machine Learning Systems.

Sculley et al. · 2015